Abstract

Pairwise and network meta-analysis (NMA) are traditionally used retrospectively to assess existing evidence. However, the current evidence often undergoes several updates as new studies become available. At each update, recommendations about the conclusiveness of the evidence and the need for future studies have to be made. In the context of prospective meta-analysis, future studies are planned as part of the accumulation of the evidence. In this setting, multiple testing issues need to be taken into account when the meta-analysis results are interpreted. We extend ideas of sequential monitoring of meta-analysis to provide a methodological framework for updating NMAs. Based on the z-score for each network estimate (the ratio of effect size to its standard error) and the respective information gained after each study enters the NMA, we construct efficacy and futility stopping boundaries. A NMA treatment effect is considered conclusive when it crosses an appended stopping boundary. The methods are illustrated using a recently published NMA, where we show that evidence about a particular comparison can become conclusive via indirect evidence even if no further trials address this comparison.

Keywords

1 Introduction

In 1898, George Gould, the first president of the Association of Medical Librarians, presented his vision regarding the optimal use of existing evidence. He was looking forward to a situation where “a puzzled worker in any part of the civilized world shall in an hour be able to gain a knowledge pertaining to a subject of the experience of every other man in the world”. 1 Highlighting the increasing information overload and the pivotal role of systematic reviews in health care, 2 Mike Clarke updated Gould’s vision in 2004, hoping for a system in which decision makers “would be able, in 15 minutes, to obtain up-to-date, reliable evidence of the effects of interventions they might choose, based on all the relevant research.” 3

In essence Gould and Clarke call for cumulative (network) meta-analyses of randomized trials of health care interventions.4–7 Ideally, cumulative meta-analyses are prospectively planned: investigators establish a collaboration before the design of their trials is finalized, so that study procedures, interventions and outcomes can be harmonized and analyses can be done as soon as the results become available.6,7 Prospectively planned meta-analyses have the potential to reduce bias because key decisions on inclusion criteria, outcome definition and other procedures are made a priori. 7 Several prospective meta-analyses have been conducted in recent years, for example in cardiology8,9 or oncology.10,11

However, the vast majority of meta-analyses are not prospectively planned. Reviewers tend to update their meta-analysis when relevant studies are published but have no direct influence over the planning of future studies. Nevertheless, after each update they need to characterize the evidence (for a particular treatment comparison and outcome) as conclusive or not, decide whether future updates of the evidence are needed and recommend the realization of further studies or not. The Cochrane Collaboration has a policy about when a systematic review should be updated. 12 Updating meta-analysis (either because it is prospectively planned or because its result would be used to form a decision about conclusiveness) involves multiple tests as evidence accumulates and effect sizes are recalculated at each step, resulting in an inflated type I error.13–15 Sequential methods for standard pairwise meta-analysis have been developed to account for multiple testing and adjust nominal significance.5,16–19

For many conditions, several treatment options exist and data on their comparative effectiveness are of primary interest to clinicians. At present comparisons of one treatment with no treatment, or with placebo, continue to dominate clinical research, and head-to-head comparisons remain uncommon. Network meta-analysis (NMA) addresses this situation. Under the condition that studies are similar with respect to the variables that might modify the treatment effects, NMA can synthesize evidence from trials that form a network of interventions in a single analysis. Summary estimates of comparative effectiveness for all treatment options are thus obtained, including treatments that have not been compared directly in head-to-head comparisons.20,21 In line with recent calls for comparative evidence at the time of market authorization,22,23 Naci and O’Connor suggested the use of prospective, cumulative NMA in the regulatory setting. 19 Evidence on relative effects of treatments can become conclusive even if there are no new trials that directly compare them because of new studies contributing indirect evidence.

In this article we extend ideas of sequential monitoring of trials to provide methods for updating NMAs. We argue that sequential methods are relevant in any setting where a decision is to be made based on the results of an updated meta-analysis; when future studies are to be planned based on existing meta-analytic results (prospective meta-analysis) or when decisions are made about the necessity of future updates. We introduce cumulative NMA, discuss ways to adjust for multiple testing and recommend graphical representations of the sequential NMA process. We then discuss how important outputs of NMA can be monitored when updating a NMA.

2 Illustrative example: Coronary revascularization in diabetic patients

To illustrate the methodology we use a recently published NMA evaluating the optimal revascularization technique in diabetic patients. 24 The primary outcome examined is a composite of all-cause mortality, non-fatal myocardial infarction and stroke, measured using the odds ratio (OR). The authors combined 15 studies examining the effectiveness of three interventions: percutaneous coronary intervention with bare metal stents (BMS) or drug eluting stents (DES), and coronary artery bypass grafting (CABG). For illustration purposes we assume that the NMA has been undertaken sequentially: each study is included in the data as soon as it is published and the systematic reviewers have to decide, after each update, whether future updates of the NMA are necessary to provide a conclusive answer. This particular NMA was chosen because it examines few treatments and includes a substantial number of studies, so that the methods can be easily exemplified and the sequential process conveniently presented. Throughout we assume that comparability between trial populations and characteristics that may act as potential effect modifiers is justified, so that the synthesis of the planned trials in a NMA model is sensible. The data set comprises 12 two-arm studies and three three-arm studies. NMA suggests that the best treatment is CABG, which is significantly better than BMS (OR 0.59; 95% confidence interval 0.44 to 0.78) and marginally better than DES (OR 0.73; 95% confidence interval 0.54 to 0.98). Studies were published between 2007 and 2013 and it would be interesting to see whether significance is sustained after correcting for multiple testing and, if yes, at which point in time the accumulated evidence became conclusive. Note that when updating NMA the comparison ‘BMS versus CABG’ can become statistically significant via indirect comparison even when the newly published studies compare ‘BMS versus DES’.
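As a rough illustration of the z-score that is monitored throughout, the reported summary ORs can be converted back to the log OR scale. The sketch below assumes that the published 95% confidence intervals are symmetric Wald intervals on the log scale; the function name `z_from_or` is hypothetical and not part of the original analysis.

```python
import math
from statistics import NormalDist

def z_from_or(or_point, ci_low, ci_high, level=0.95):
    """Back-calculate log OR, standard error and z-score from an OR and its CI.

    Assumes a symmetric Wald interval on the log OR scale (an assumption,
    not something stated in the source).
    """
    crit = NormalDist().inv_cdf(0.5 + level / 2)   # about 1.96 for 95%
    log_or = math.log(or_point)
    se = (math.log(ci_high) - math.log(ci_low)) / (2 * crit)
    return log_or, se, log_or / se

# Final NMA results quoted in the text (OR < 1 favours CABG).
reported = {
    "CABG vs BMS": (0.59, 0.44, 0.78),
    "CABG vs DES": (0.73, 0.54, 0.98),
}

for label, (or_point, low, high) in reported.items():
    log_or, se, z = z_from_or(or_point, low, high)
    p_unadjusted = 2 * (1 - NormalDist().cdf(abs(z)))
    print(f"{label}: logOR={log_or:.3f}, SE={se:.3f}, z={z:.2f}, "
          f"unadjusted p={p_unadjusted:.4f}")
```

These are unadjusted quantities from the final update only; whether they remain conclusive after accounting for repeated looks is exactly what the stopping boundaries are meant to address.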

In order to undertake a sequential analysis, one needs to specify type I and type II errors, as well as the alternative hypothesis. The specification of the effect size to be detected is of crucial importance as the alternative hypothesis should express a clinically important effect reflecting the perspectives, needs and preferences of different individuals.15,25–28 However, determination of an effect that reflects patient perceptions is very challenging when the primary outcome is a composite endpoint. 24

For illustrative reasons, in the remainder of the paper we will use arbitrarily (yet clinically plausible) log ORs for the three comparisons to be

3 Methods

3.1 Cumulative NMA

Consider a network of n trials forming a set

Let

3.2 Assumptions underlying the updating of NMA

The justification of similarity in effect modifiers is important to ensure the plausibility of the transitivity assumption after each update of the network.19,20,32 Throughout, we assume that the transitivity assumption is epidemiologically evaluated and deemed reasonable. The consistency assumption is the statistical manifestation of transitivity and relies on the statistical agreement between different sources of evidence. 33 A statistical test for inconsistency can be monitored as soon as its evaluation is possible, that is, when a closed loop (not composed only of multi-arm trials) is formed. Large amounts of inconsistency should prohibit a joint synthesis of the data and prompt an exploration of the differences between the various sources of evidence. However, the power of inconsistency tests might be low even after the inclusion of several studies in NMA.34,35 In collaborative prospective NMAs inconsistency is likely to be avoided through the efforts of the researchers to ensure the comparability of the studies and maximize the chances of transitivity.

We adopt a random effects NMA model and we assume a network specific heterogeneity variance

3.3 Z-score and relevant information of cumulative network estimates

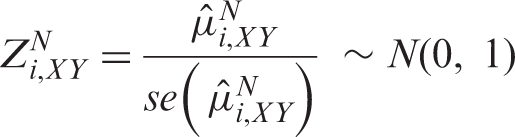

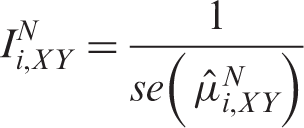

The cumulative network estimate

Several approaches have been suggested for measuring information in pairwise meta-analysis; we adopt an approach which is directly related to the precision of the meta-analytic estimates and consequently to the amount of evidence accumulated.5,15,40,41 According to that approach the information contained within each comparison in the network can be measured as

3.4 Construction of efficacy stopping boundaries

Several methods have been proposed to control type I error in clinical trials when multiple looks at the data are taken through the construction of stopping boundaries for deciding whether or not to reject the null hypothesis. These methods include the Haybittle-Peto method, the Pocock boundaries and the O’Brien-Fleming monotone decreasing boundaries. 42

Application of different stopping boundaries can lead to different conclusions regarding early stopping of a clinical trial in an interim analysis. It has been suggested that the O’Brien-Fleming method is closer to the behavior of data monitoring committees, who require a great beneficial effect to stop a trial at an early stage. 43

An important problem associated with standard sequential methods is the necessity to define the number of interim analyses at the beginning and the requirement of equally spaced interim analyses. These problems are handled by the introduction of alpha spending functions which extend group sequential designs to allow flexibility in the number and timing of interim analyses. 44
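To make the idea of an alpha spending function concrete, the following sketch implements the two commonly used Lan-DeMets spending functions, one of O’Brien-Fleming type and one of Pocock type. It assumes a two-sided overall type I error of 0.05 and hypothetical information fractions; all function names are illustrative, and the sketch does not compute the boundary values themselves, which would require numerical integration over the joint distribution of the sequential statistics.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()

def obf_type_alpha_spent(t, alpha=0.05):
    """Cumulative two-sided alpha spent at information fraction t (O'Brien-Fleming type)."""
    if not 0.0 < t <= 1.0:
        raise ValueError("information fraction must lie in (0, 1]")
    z = _STD_NORMAL.inv_cdf(1.0 - alpha / 2.0)
    return 2.0 * (1.0 - _STD_NORMAL.cdf(z / math.sqrt(t)))

def pocock_type_alpha_spent(t, alpha=0.05):
    """Cumulative alpha spent at information fraction t (Pocock type)."""
    if not 0.0 < t <= 1.0:
        raise ValueError("information fraction must lie in (0, 1]")
    return alpha * math.log(1.0 + (math.e - 1.0) * t)

def alpha_increments(fractions, spend=obf_type_alpha_spent, alpha=0.05):
    """Portion of alpha allocated to each look, given increasing information fractions."""
    increments, spent_so_far = [], 0.0
    for t in fractions:
        cumulative = spend(t, alpha)
        increments.append(cumulative - spent_so_far)
        spent_so_far = cumulative
    return increments

# Hypothetical, unequally spaced looks; the last look uses all planned information.
fractions = [0.2, 0.5, 0.8, 1.0]
for t, inc in zip(fractions, alpha_increments(fractions)):
    print(f"t={t:.1f}: alpha allocated at this look = {inc:.5f}")
```

The O’Brien-Fleming-type function spends very little alpha at early looks, which mirrors the conservative early behavior described above, whereas the Pocock-type function spends it more evenly.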

An alpha spending function

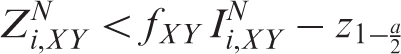

Appending efficacy boundaries to the

As the total amount of information that will be employed is unknown, the specification of

The alpha spending function is used to allocate a portion of the total a to each

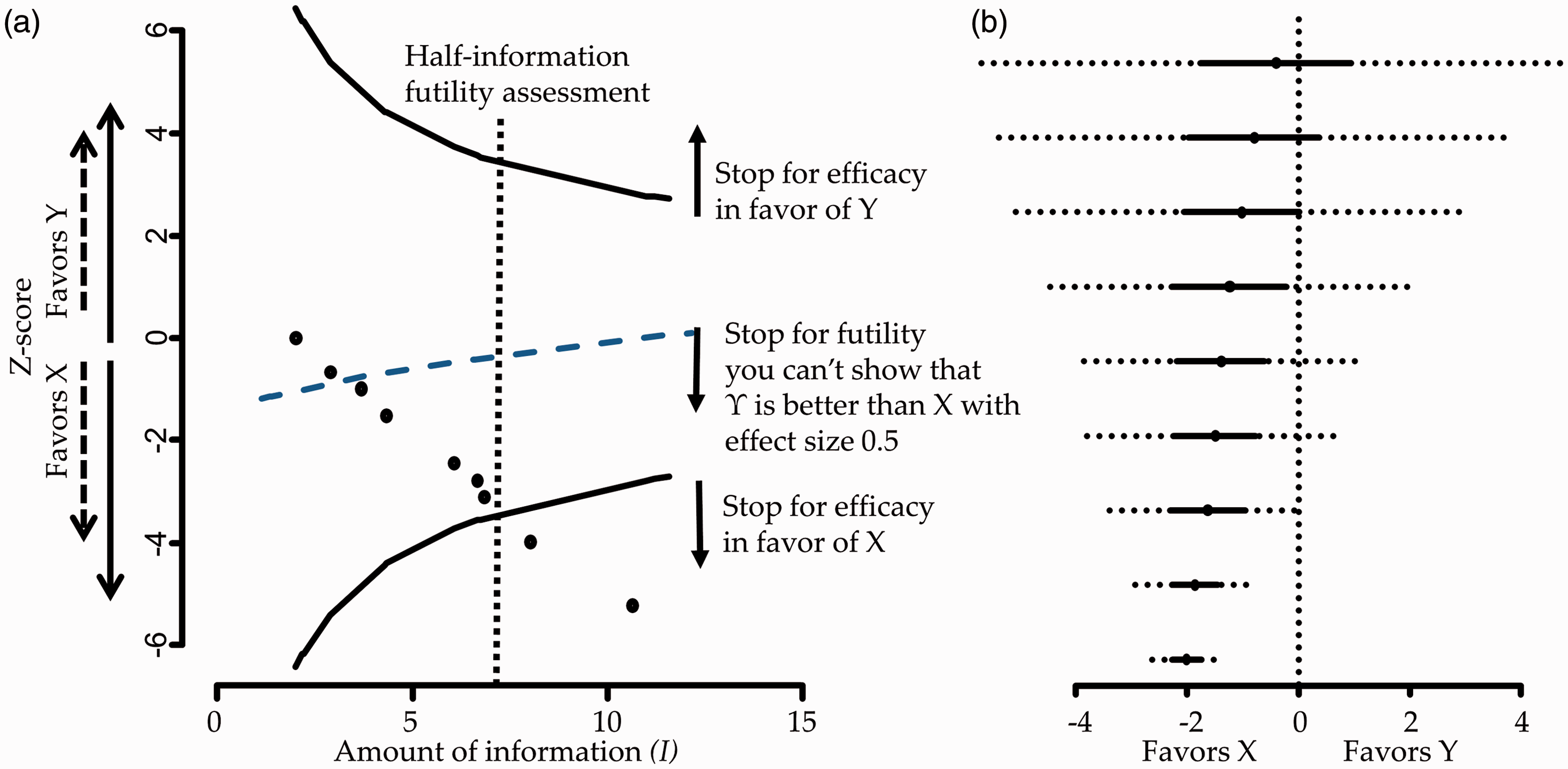

Figure 1. Panel a: Hypothetical stopping framework for efficacy and futility. Futility here means that Y will not be shown better than X by more than 0.5 effect size. Panel b: Hypothetical forest plot with repeated confidence intervals (dotted lines).

3.5 Construction of futility stopping boundaries

Future updates of NMA can be considered unnecessary when there are early signs of efficacy or because it is considered unlikely that the relative superiority of a treatment will be shown in subsequent steps of analysis. Such decisions in clinical trials are known as stopping for futility. 46

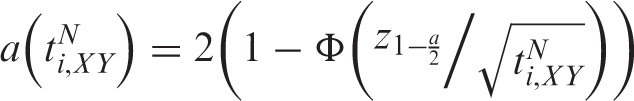

Roughly, there are four major methods used to stop further experiments for futility: conditional power; predictive power, which is the analogue of conditional power in Bayesian analysis; construction of triangular regions, also known as sequential probability ratio tests; and beta spending functions.46–48 We choose to transfer the latter method for stopping for futility to NMA because of its analogy to the alpha spending functions and its convenient visualization along with the efficacy boundaries in the

We adopt a method described by Lachin to determine futility boundaries. 50

Without loss of generality we assume that positive values of

The value

It has been shown that under the alternative hypothesis

3.6 Other network characteristics to be monitored

Monitoring changes in the conclusions from NMA should be accompanied by an evaluation of changes in the inconsistency and heterogeneity (if re-estimated at each update) so as to put results into context. Investigators planning NMA should make sure that the inclusion criteria of the studies ensure their comparability and maximize the chances of transitivity and that the distribution of effect modifiers is comparable across treatment comparisons. However, even after careful planning, there is always the possibility of inconsistency in the assembled data.20,52 Thus, we consider that in each update of NMA an estimation of inconsistency is included; here, we consider the cumulative performance of the loop specific approach. 53 Taking into account the low power of tests for inconsistency, we do not recommend adjusting for multiple testing.34,35 Any signs of inconsistency in interim stages should be explored and the inclusion of new evidence should be carefully reconsidered.
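The loop specific approach can be sketched for a single triangular loop as follows. The sketch assumes the loop is informed by two-arm trials only, so that the direct and indirect estimates are independent, and it uses made-up log OR values rather than the data of the example; the function names are hypothetical. In line with the recommendation above, no adjustment for multiple testing is applied.

```python
import math
from statistics import NormalDist

def indirect_estimate(d_ab, se_ab, d_bc, se_bc):
    """Indirect A-vs-C estimate through the common comparator B.

    Effects are on an additive scale (e.g. log OR), with d_xy meaning x versus y.
    """
    return d_ab + d_bc, math.sqrt(se_ab ** 2 + se_bc ** 2)

def inconsistency_factor(direct, se_direct, indirect, se_indirect, level=0.95):
    """Absolute difference between direct and indirect evidence, with a z-test."""
    diff = direct - indirect
    se = math.sqrt(se_direct ** 2 + se_indirect ** 2)
    crit = NormalDist().inv_cdf(0.5 + level / 2)
    z = diff / se
    return {
        "IF": abs(diff),
        "se": se,
        "ci_signed_diff": (diff - crit * se, diff + crit * se),
        "p": 2 * (1 - NormalDist().cdf(abs(z))),
    }

# Hypothetical log ORs for one interim update (not taken from the example data).
ind, se_ind = indirect_estimate(d_ab=-0.20, se_ab=0.10, d_bc=-0.30, se_bc=0.20)
result = inconsistency_factor(direct=-0.40, se_direct=0.15,
                              indirect=ind, se_indirect=se_ind)
print(result)
```

Repeating this calculation at each update, with the data available at that time, would give the cumulative inconsistency factors and their confidence intervals.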

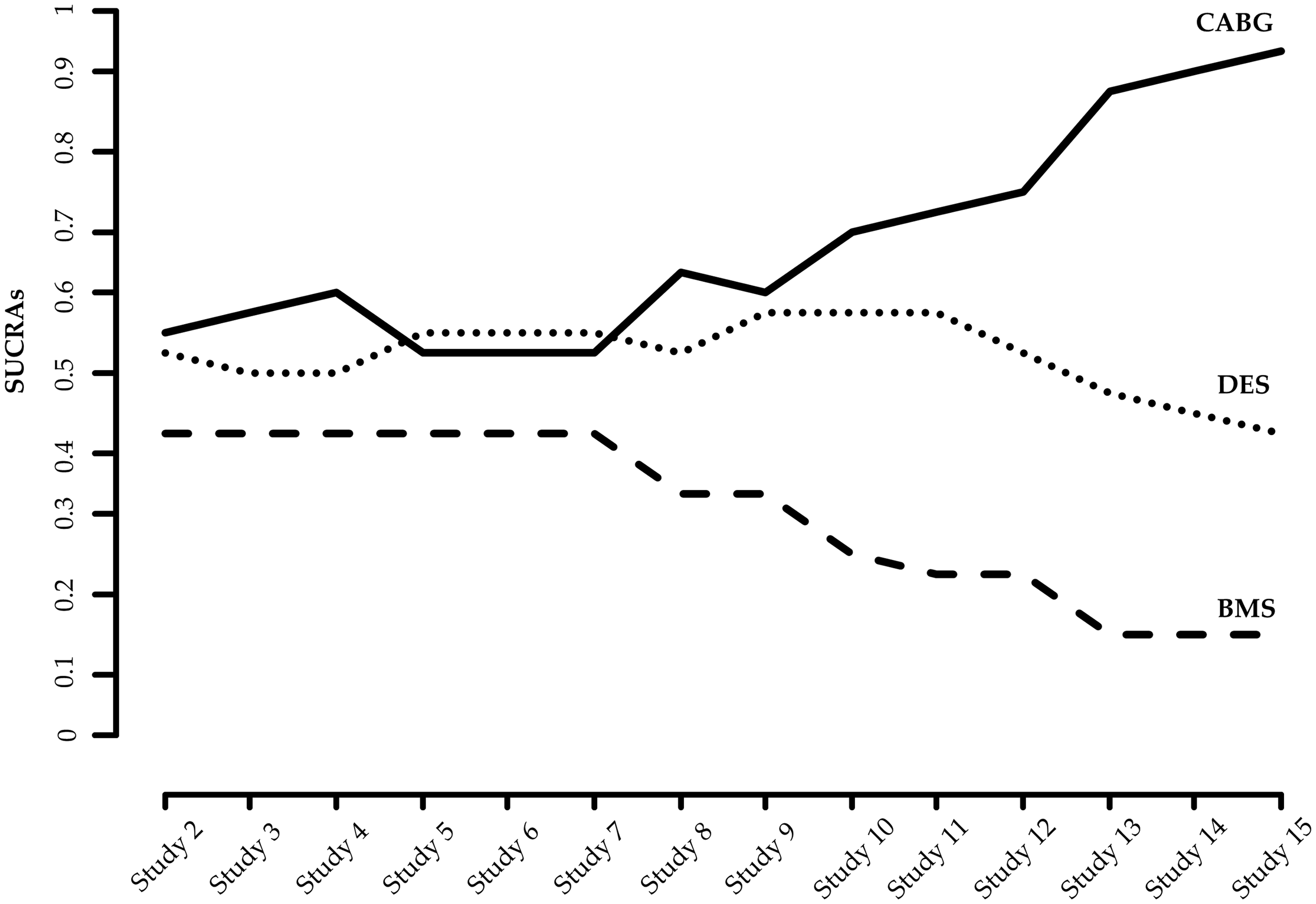

Monitoring changes in the treatment ranking might also be useful in particular in large networks where many treatments are compared. Probabilities for each treatment being at each possible rank can be obtained and the surface under the cumulative ranking probabilities (SUCRAs) and their equivalent P-scores or mean ranks can be illustrated in graphs.54,55 As these measures are based on the estimated summary effects at each update, their uncertainty should be expressed by the repeated confidence intervals while P-scores could be based on the adjusted p values.
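As an illustration of how P-scores are derived from the summary effects, the sketch below computes them from pairwise log ORs and their standard errors, taking the P-score of a treatment to be the mean, over all competitors, of the one-sided normal probability that it is the better of the pair. The input values are hypothetical, smaller log ORs are assumed to be beneficial, and unadjusted standard errors are used; basing the calculation on adjusted quantities, as suggested above, is not shown.

```python
from statistics import NormalDist

def p_scores(effects, ses, smaller_is_better=True):
    """P-score per treatment from pairwise effects.

    effects[(a, b)] is the effect of a versus b (e.g. log OR) and ses[(a, b)]
    its standard error; each pair needs to be supplied in one direction only.
    """
    phi = NormalDist().cdf
    treatments = sorted({t for pair in effects for t in pair})
    scores = {}
    for a in treatments:
        probs = []
        for b in treatments:
            if a == b:
                continue
            if (a, b) in effects:
                d, s = effects[(a, b)], ses[(a, b)]
            else:  # reverse the direction of the stored contrast
                d, s = -effects[(b, a)], ses[(b, a)]
            z = d / s
            probs.append(phi(-z) if smaller_is_better else phi(z))
        scores[a] = sum(probs) / len(probs)
    return scores

# Hypothetical network estimates on the log OR scale (illustration only).
effects = {("CABG", "BMS"): -0.50, ("CABG", "DES"): -0.30, ("DES", "BMS"): -0.20}
ses = {("CABG", "BMS"): 0.15, ("CABG", "DES"): 0.15, ("DES", "BMS"): 0.15}

for treatment, score in sorted(p_scores(effects, ses).items(),
                               key=lambda kv: -kv[1]):
    print(f"{treatment}: P-score = {score:.3f}")
```

Recomputing the scores after each update, with the estimates available at that time, gives the kind of cumulative ranking curve that can be plotted against calendar time or study number.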

4 Application

We apply our methodology to the network of trials for coronary revascularization in diabetic patients. 24

Arm level data for the 15 studies along with the year of publication and the respective ORs are given in Appendix Table 1. For a ‘non-pharmacological versus any’ intervention comparison type and a semi-objective outcome a log-normal distribution for heterogeneity

4.1 Description of the accumulation of evidence

When evidence is updated regularly, researchers perform both pairwise and NMA and evaluate the criteria of stopping early for efficacy or futility for both procedures. Appendix Figure 5 shows the cumulative pairwise and NMA effect estimates along with their confidence and predictive intervals after the inclusion of each study.

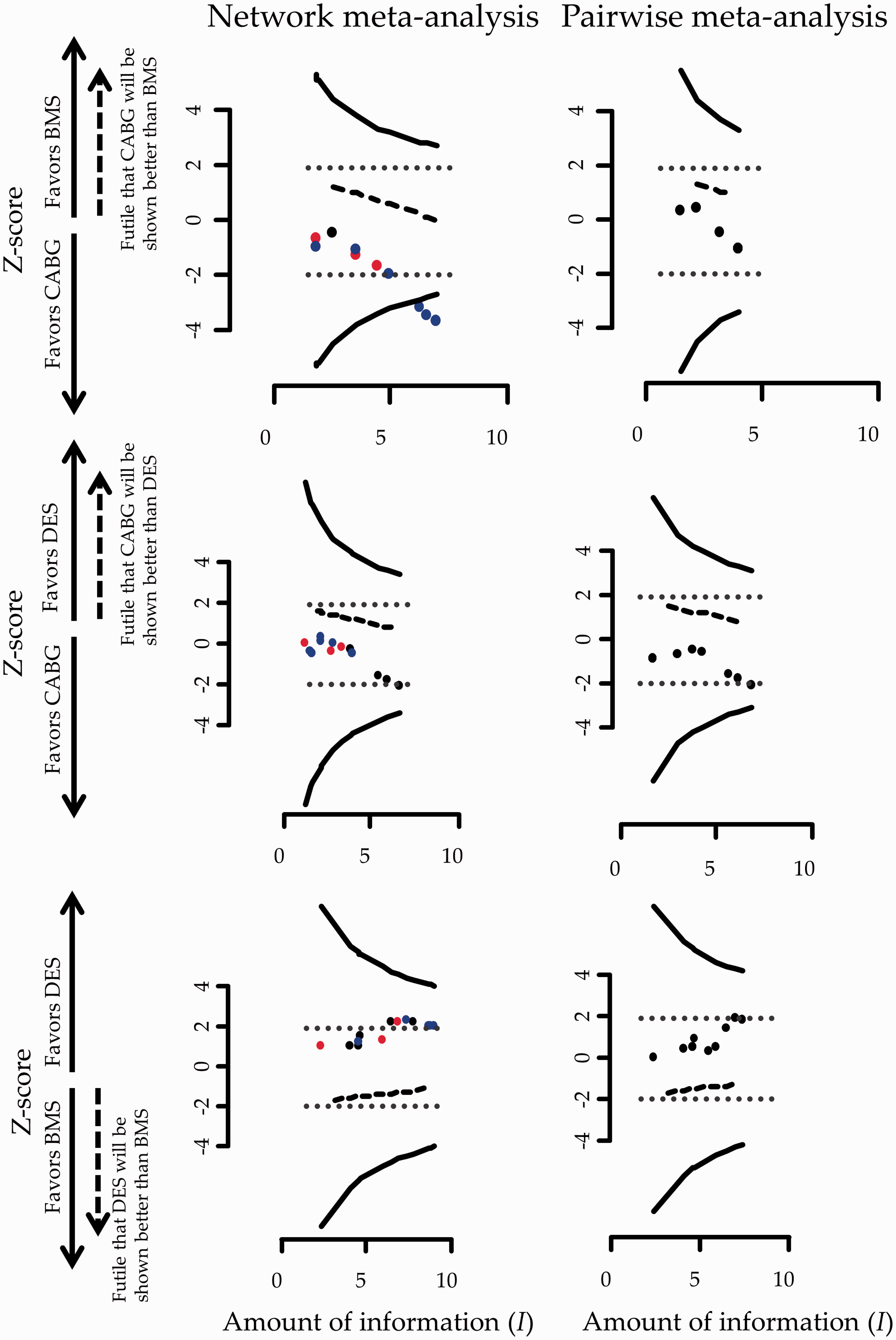

Figure 2 shows the stopping framework for the three evaluated comparisons in the network assuming a heterogeneity standard deviation equal to the median of the predictive distribution,

While inference regarding the comparison ‘BMS versus CABG’ is inconclusive using evidence only from the four trials providing direct evidence, this is not the case for the accumulated evidence from NMA. More specifically, the 13th study was conducted in December of 2012 and examined the relative effectiveness of DES compared to CABG. This study informs the comparison ‘BMS versus CABG’ indirectly, leading to the conclusion that further research is not needed for that particular comparison. Note that the comparison ‘BMS versus CABG’ would have become marginally significant after the inclusion of the 12th study in an unadjusted cumulative NMA, as the respective ‘z-score’ lies on the dotted boundary which represents conventional stopping. The inclusion of nearly half of the included studies rendered the ‘DES versus CABG’ comparison statistically significant in favor of CABG in an unadjusted cumulative NMA; after adjusting for multiple testing, though, both the ‘DES versus BMS’ and ‘DES versus CABG’ comparisons remain inconclusive using either pairwise meta-analysis or NMA (Figure 2).

Figure 2. Stopping framework for efficacy (solid lines) and futility (dashed lines) for the network of coronary revascularization in diabetic patients. Maximum information is not displayed in the graphs as it is everywhere larger than 10. Heterogeneity standard deviation is assumed to be equal to the median of the predictive distribution,

For all three comparisons, the accumulated data do not cross the futility boundaries so no decision over stopping for futility is being made throughout the updating process of NMA or pairwise meta-analysis.

During the updating process, the inclusion of the 13th study would lead investigators to reach conclusive results about the relative effectiveness of one out of the three evaluated comparisons, indicating that CABG is better than BMS. After the inclusion of 15 studies, DES would appear to have a non-significant advantage over BMS, and CABG a non-statistically significant benefit over DES. As

The information regarding stopping for efficacy using results from pairwise meta-analysis or NMA given in Figure 2 can also be visualized in the form of repeated confidence intervals (Appendix Figure 6).
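A repeated confidence interval can be sketched as the usual Wald interval with the conventional critical value replaced by the efficacy boundary value that applies at the current update. In the sketch below the boundary value, the estimate and its standard error are hypothetical numbers chosen for illustration, and the function names are not part of the original analysis.

```python
from statistics import NormalDist

def conventional_ci(estimate, se, level=0.95):
    """Unadjusted Wald interval for an effect on an additive scale (e.g. log OR)."""
    crit = NormalDist().inv_cdf(0.5 + level / 2)
    return estimate - crit * se, estimate + crit * se

def repeated_ci(estimate, se, boundary_z):
    """Interval using the sequential boundary value at this update as critical value."""
    return estimate - boundary_z * se, estimate + boundary_z * se

# Hypothetical interim update: log OR, its standard error, and an assumed
# efficacy boundary on the z-scale for this amount of information.
estimate, se, boundary_z = -0.45, 0.18, 2.80

low_c, high_c = conventional_ci(estimate, se)
low_r, high_r = repeated_ci(estimate, se, boundary_z)
print(f"conventional 95% CI: ({low_c:.3f}, {high_c:.3f})")
print(f"repeated CI:         ({low_r:.3f}, {high_r:.3f})")

# The effect is conclusive at this update only if the repeated interval excludes zero,
# which is equivalent to |z| exceeding the boundary value.
conclusive = not (low_r <= 0.0 <= high_r)
print("conclusive at this update:", conclusive)
```

With these illustrative numbers the conventional interval excludes zero while the repeated interval does not, which is the situation of a comparison that looks significant in an unadjusted cumulative analysis but remains inconclusive after adjustment.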

Assuming a 25th and 75th quantile of the predictive distribution for heterogeneity instead of the median in our calculations does not markedly change the conclusions of the stopping framework (Appendix Figure 7 and Appendix Figure 8). The influence of the 13th study continues to be pronounced in the stopping decisions. In general, greater values of heterogeneity render the repeated confidence intervals larger and consequently stopping for efficacy is less likely to occur.

Appendix Figure 9 shows the cumulative estimates of the inconsistency factor for the loop ‘DES-BMS-CABG’. It suggests that the initial inconsistency factor of 1.34 (on a logOR scale) in 2007 was decreased to 0.84 in 2009 and finally to a relatively small inconsistency factor of 0.26 in 2013. The confidence intervals become smaller as more studies are included and, although the method is underpowered, initial concerns that the network might be inconsistent are challenged.

We calculate the SUCRAs of the three treatments in each interim analysis allowing for uncertainty expressed by the repeated confidence intervals. Cumulative estimation of SUCRAs is illustrated in Figure 3. Repeated SUCRAs are relatively close to each other in the first years of the sequential NMA while their distinction is growing as evidence is accumulated.

Figure 3. Accumulated SUCRAs for the network of coronary revascularization in diabetic patients. Heterogeneity standard deviation is assumed to be equal to the median of the predictive distribution,

It is important to note that the assumptions feeding into the analysis (values for heterogeneity, type I and type II error, alternative effect sizes) may not be universally acceptable to all health-care professionals and patients. Thus, results from a sequential NMA should be interpreted in the light of such decisions. Moreover, firm recommendations on the need of further studies should take into account that new studies might be useful for the examination of a secondary outcome; indeed Tu et al. point out that although CABG seems to be better than BMS and DES in terms of the primary outcome, it is associated with an increased risk of stroke and might not be preferred for patients at high risk of such an event. 24 In that case, it is even more important to avoid undertaking further trials that involve CABG because its superiority has been established and further experimentation might be deemed unethical. Instead, indirect evidence, e.g. by planning more ‘BMS versus DES’ studies, should be sought for all comparisons of interest. In general, clinical judgment considering several outcomes that might be of interest to patients is necessary to evaluate which intervention is appropriate to which patient group.

5 Discussion

We suggest formal statistical monitoring when decisions need to be made every time a NMA is updated. The outlined method is adapted from the respective methodologies developed for clinical trials and pairwise meta-analyses. We consider two situations in which our methodology can be appropriate; in both situations the analyses of studies are performed as their results become available. The first one is the prospective design of a NMA at the time of market entry of a new drug, as suggested by Naci and O’Connor. 19 In contrast to the current practice, in which drug approval often relies on the evaluation of each drug in placebo-controlled trials, such a procedure would feed regulatory agencies with the optimal level of evidence regarding the comparative efficacy and safety of the new drug. The establishment of prospective NMAs in the regulatory setting may be challenged by the potential reluctance of manufacturers to compare their treatments with all competing alternatives, which might lead to selective inclusion of pieces of evidence. Moreover, efforts to reduce the cost of performing a series of trials might lead to postponing the design of a prospective NMA until a competing company has collected enough relevant evidence. Informing policy decision-making by health technology assessments could also include an evaluation of the sufficiency of included evidence using methods described in this paper.

The second context in which our method can be used is the regular update of systematic reviews that contain multiple treatments when new trials become available. Application of the statistical monitoring is of particular interest to organizations that produce and maintain systematic reviews, such as the Cochrane Collaboration. As the main aim of the Cochrane Collaboration is to provide the best available and most up-to-date evidence, authors not only prepare systematic reviews but are also committed to updating them. This commitment aims to minimize the risk of reviews becoming out-of-date and potentially misleading. Frequent updates of systematic reviews, however, can result in an inflated type I error, in a similar manner as in a genuinely prospective NMA.

Appending a stopping rule to the meta-analysis context has received considerable criticism.15,56 In particular, expressed concerns highlight the lack of direct control over the process of collecting and synthesizing studies in the sense that the meta-analyst is not in a position to decide whether more trials are to be conducted or not. We consider the formal statistical monitoring to be relevant for situations in which a researcher can have control—or at least provide recommendations—over future updates of the meta-analysis.

Our methods are similar to those proposed by Whitehead and Higgins et al. for pairwise meta-analyses, extended to the case where multiple treatments are competing.5,15 Whitehead has developed a sequential method for meta-analysis using the triangular test in a series of concurrent clinical trials and Higgins et al. focused on the restricted procedure of Whitehead, equivalent to an O’Brien and Fleming boundary.5,15 Wetterslev et al. have developed an alternative sequential method for pairwise meta-analysis 41; they have also created software (www.ctu.dk/tsa) which has been widely applied in practice and they argue that their methodology should be adopted by Cochrane authors. 25 Their approach has technical and conceptual similarities and dissimilarities with that proposed by Higgins et al. 15,25 For instance, Wetterslev et al. adjust the required sample size by a factor that depends on the estimated heterogeneity. As estimation of heterogeneity is difficult at the beginning of the sequential process, the estimate at the final update is employed. This is feasible only in a retrospective cumulative meta-analysis. Higgins et al. explore several ways to handle heterogeneity in a sequential random-effects meta-analysis approach, including incorporating a prior distribution for the between-studies variance parameter. They argue in the discussion that “further empirical research is needed to characterize the degree of heterogeneity that can be anticipated in a meta-analysis with particular clinical and methodological features, so that realistic informative prior distributions can be formulated.” 15 As such empirical research has been conducted since then,38,39 we here employ informative priors for the heterogeneity variance.

Whitehead suggests that the sequential procedure in meta-analysis may be more justifiable for safety outcomes while Higgins et al. propose the area of adverse effects of pharmacological interventions as a potential application of sequential methods.5,15 Whether the proposed methods work well in the context of rare events is an issue that remains to be investigated. It has been argued that when a major adverse event is rare it might be inappropriate to control for an inflated type I error, as even a small signal could be sufficient for the meta-analysis to ‘stop’. 5 In any case, the practice of accumulating evidence in a formal way becomes even more imperative in the context of rare events.

6 Concluding remarks

The evolution of technology can decisively contribute to the realization of living systematic reviews (high quality, up-to-date online summaries, updated as new research becomes available) by providing semi-automation to the production process. The inclusion of all available treatment options in such ‘real-time’ syntheses has been termed “live cumulative network meta-analysis” and can further facilitate informed research prioritization and decision-making. 57 Development, refinement, and evaluation of appropriate statistical methodology as well as guidance over the optimal update of systematic reviews can aid the attempt of living systematic reviews and live cumulative NMA to bridge the gap between research evidence and health care practice. 4

Methodology described in this paper should ideally be viewed as part of a holistic framework for strengthening existing evidence by judging when evidence summaries provide conclusive answers,28,58 planning new studies when needed27,49,58,59 and subsequently updating meta-analysis to include the—assumed justified—future studies. While methodological developments regarding parts of this process have appeared in the literature, they are rarely used in practice. In order to shift the paradigm to evidence-based research planning, methodology needs to be refined and summarized in a comprehensive global framework while its properties need to be evaluated in real world examples. The development of user-friendly software routines along with educational material could also contribute to the usefulness and applicability of the methodology.

Footnotes

Acknowledgements

The authors thank the reviewers for their helpful comments, which greatly improved this paper.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: GS received funding from a Horizon 2020 Marie-Curie Individual Fellowship (Grant no. 703254). AN, DM and ME received no financial support for this article.