Abstract

How information literate are students in higher education, and how accurate is their metacognition related to that ability? Are students’ perceived needs to learn more and their level of interest in becoming information literate related to their pursuit of information literacy (IL) skill development? First-year undergraduates, master’s, and PhD students (N = 760) took an objective IL test and estimated their scores both before and after the test. IL ability, as well as students’ estimation of their IL ability, increased with higher education experience and IL test experience, though also varied notably within groups. Low-performers tended to overestimate their abilities, while high-performers tended to underestimate them—both evidence of the Dunning-Kruger effect. Furthermore, gender comparisons revealed that men tended to estimate higher, and more accurate, scores than women. Finally, PhD students reported greater interest in becoming information literate than undergraduates. Although undergraduates felt a greater need to learn more, PhD students were more inclined to pursue IL growth. For both groups, interest in becoming information literate correlated far more with their likelihood to invest effort into developing IL competencies than their perceived need to know more. What implications might these findings have for how we conceptualize the teaching of IL?

Introduction

On January 6, 2021, the United States Capitol was stormed by individuals who believed claims that the results of the US presidential election were fraudulent, despite ample and reliable media coverage of evidence to the contrary. The dissenting information sources motivating the angry skeptics were neither reliable nor credible, yet people were uncritically convinced by this misinformation, and the result was stupefying.

When such events occur, many questions arise. Do we know how to evaluate the quality of information sources well enough to be credibly informed citizens? Are we able to find reliable sources and use them appropriately when we produce information? Equally important, are we aware of when our abilities to evaluate, find, and use information are insufficient? Disquieting findings from recent research imply that the answer to these questions is a resounding no: 53% of American adults rely on social media as their regular source of news, with Facebook topping the list of platforms (Shearer and Mitchell, 2021); 61% of Facebook users trust misinformation that they find there (Al-Zaman, 2021); and popular “fake news” stories are shared more extensively on Facebook than top stories on the same topic from more trustworthy, mainstream media (Silverman, 2016).

The goal of this article is to theoretically and empirically frame issues related to students’ information competencies and metacognitive awareness thereof. Specifically addressed are the competencies students in higher education (HE) have for finding, evaluating, and using information sources. At the same time, we explore the relevance of student interest in possessing these skills, their felt need to develop them further, and their effort intentions related to that.

In order to examine these questions, the article begins with a presentation of the interrelated concepts of information literacy, metacognition, the Dunning-Kruger effect, and interest. Empirical evidence addressing the research questions is then analyzed and discussed. Are students metacognitively aware of their information literacy levels, and are there differences between high- and low-performers? Are there links between these initial findings and factors that could influence their motivation to learn more about information literacy?

Information literacy

In order to best help students responsibly engage in information-based work at higher levels of education, as well as in daily life, librarians and teaching staff in colleges and universities have long endeavored to teach students to recognize when they have a need for information, to find and critically evaluate information sources, and to ethically and legally employ these sources in their writing. Such knowledge, skills, and dispositions are part of a diverse set of competencies known as information literacy (IL).

The definition and scope of IL have gradually evolved as technology has advanced and the creation and dissemination of information have changed character. An analysis of various definitions and frameworks (e.g. Association of College and Research Libraries [ACRL] 2000, 2015; Bruce et al., 2006; Bundy, 2004; Coonan and Secker, 2011; Secker, 2018) shows that in most, the IL construct includes a set of competencies needed to find, evaluate, and use information (Nierenberg et al., 2021). These three source-related facets of IL have been extensively examined over the past 30 years, including in studies designated as, for example, “digital literacy” (e.g. Eshet, 2004), “media literacy” (e.g. Negi, 2018), “information problem solving” (e.g. Brand-Gruwel et al., 2009), “new literacies” (e.g. Leu et al., 2004), “multiple document comprehension” (e.g. Rouet and Britt, 2011), “civic online reasoning” (e.g. McGrew et al., 2018), and “metaliteracy” (e.g. Mackey and Jacobson, 2011).

With an abundance of information sources and access methods, individuals are continually faced with information choices. The abilities to find, evaluate, and use information are important competencies when navigating these choices; they are crucial both when processing and creating information and for tempering our susceptibility to misinformation. Inherent in IL is the ability to think critically, enabling us to find and make reasoned judgments about the stream of (mis)information from its myriad of sources, whether online or offline. IL also involves the ability to cite these sources correctly when we create information in order to signal credibility and avoid plagiarism or other misuse. Effective information seeking, another core component of IL, enables us to find reliable and relevant sources. For these reasons, IL is claimed to be a vital competency—not only in education, but also in the workplace, in everyday life, in health, and for citizenship (Secker, 2018).

Because IL is a multi-faceted construct with varying definitions, there is little consensus on a standard measure for assessing IL levels. The choice of assessment tools depends not only on the chosen definition, but also on the context of the research. The current study uses the definition of IL provided by Nierenberg et al. (2021: 79): “Information literacy encompasses the knowledge, skills and attitudes needed to be able to discover, evaluate and use information sources effectively and appropriately in order to answer questions, solve problems, create knowledge and learn.” In terms of context, the study focuses on HE students’ knowledge and self-assessments regarding finding, evaluating, and using information. Related to this, we hypothesize, is how interested a student is in being or becoming an information literate person, as well as the degree to which they feel a need to learn more IL skills and their actual intention to do so.

Finding, evaluating and using information are important competencies, and many IL researchers and practitioners recognize a need to better assess these aspects of IL (e.g. Secker and Coonan, 2011). Interestingly, most IL assessment studies rely on measures that have not been controlled for reliability and validity (Mahmood, 2017a; Walsh, 2009). Even for those that are, the tools’ reliability is often measured with Cronbach’s alpha (internal consistency; Mahmood, 2017b), which is not appropriate for multidimensional constructs such as IL (Nierenberg et al., 2021). The assessment tools employed in the current study, described in more detail in the Methods section, have evidence of both reliability and validity. With these tools, this study attempts to assess IL in HE students and to determine students’ calibration accuracy.

Metacognition

Metacognition, the overall ability to reflect upon our own thoughts, includes the ability to assess what we know (Double et al., 2018). Metacomprehension, our ability to accurately judge what we have read, is a particular aspect of metacognition (Dunlosky and Lipko, 2007; Glenberg and Epstein, 1985). In a seminal study of metacognitive awareness, Kruger and Dunning (1999) state that metacognition provides people with “...the ability to know how well one is performing, when one is likely to be accurate in judgment, and when one is likely to be in error” (p. 1121). Both metacognition and metacomprehension are therefore important for effective learning. However, they can be faulty (Kruger and Dunning, 1999).

Believing that we understand something, when we, in fact, do not, can (and commonly does) lead to serious errors (Martinez, 2006; Mok et al., 2006; Norman et al., 2019). Low performers often lack these metacognitive skills (Kruger and Dunning, 1999: 1122). This miscalibration becomes problematic by limiting awareness of and attention to important learning needs.

Indeed, students’ understanding of their abilities often differs from the abilities they actually exhibit in a college environment (Salisbury and Karasmanis, 2011), and new students tend to overestimate their IL competencies (Guise et al., 2008; Nierenberg and Fjeldbu, 2015; Øvern, 2018). In one study that touches on the relationship between metacognition and information seeking, students evaluated their own skills at finding information online to be adequate, even before beginning higher education, and therefore seldom sought librarian assistance (Guise et al., 2008). However, the study also found that when students experienced discrepancies between their perceived skills and their actual skills, they quickly lost confidence.

Dunning-Kruger effect

Individuals with low abilities tend to overestimate their abilities and those with high abilities tend to underestimate their abilities (Kruger and Dunning, 1999). These biases are now referred to as the Dunning-Kruger effect. Kruger and Dunning postulated that the difference in miscalibration between low- and high-performers likely stems from erroneous judgments about oneself and beliefs about others, respectively. Researchers in multiple disciplines, including IL, have replicated Kruger and Dunning’s results (Mahmood, 2016).

Händel and Fritzsche (2016) studied undergraduate students’ ability to accurately estimate their performance (metacognitive monitoring ability) by asking how confident they were about their answers on a test. Low-performers were found to be “unskilled and unaware,” as Kruger and Dunning argued in their 1999 article. Low-performers both overestimated their abilities, and were less accurate in their estimates than high-performers (Händel and Fritzsche, 2016). However, the low-performers were less confident in their actual test answers than high-performers. This could possibly increase low-performers’ motivation to invest in needed learning, despite their overall overconfidence in ability, which could conceivably decrease their motivation. From a motivational perspective, then, the low-performing students might not be “doomed to remain unaware,” despite their poorer performance estimation accuracy (Händel and Fritzsche, 2016).

People falling victim to the Dunning-Kruger effect regarding misinformation, and those who are unmotivated to attempt to evaluate information otherwise, are at a disadvantage (Rosman et al., 2015). Their convictions can lead to dangerous outcomes, for example in the current pandemic. Belief in misinformation about vaccines can result in increased COVID-19 deaths, as this false information may be trusted and shared, seemingly uncritically, among misinformed individuals (Bangani, 2021).

In a larger, systematic review of empirical IL studies assessing people’s estimated and actual IL abilities, Mahmood (2016) claims to have found convincing evidence of the Dunning-Kruger effect in 92% of relevant studies. Mahmood bases this statistic on the “overall evidence of inconsistency” between estimated and actual ability (p. 205). Mahmood suggests that these claims can be more convincingly nuanced by comparing different HE levels, and low- and high-performing groups. Mahmood, however, does not specify whether the studies included in his review measured students’ self-assessments before or after taking an objective IL test, an important distinction when interpreting results. Test-taking provides respondents with a better basis for judging their own competencies, as they then have a better idea of what the topic (in this case IL) involves (Rosman et al., 2015). However, when examining the order of testing in five randomly chosen studies from Mahmood’s review, two did not specify the order of the measurements (Jackson, 2013; Oliver, 2008), two measured students’ self-assessments prior to testing (Tepe and Tepe, 2015; Vickery and Cooper, 2003), and one measured their self-assessments both before and after testing (Gross and Latham, 2012).

Reliable assessment tools may be valuable for student learning of IL—including information seeking, evaluation, and use—because they can be used to increase students’ metacognitive awareness of their abilities and needs. Such tools are also useful in the teaching of IL, allowing IL practitioners to more accurately assess students’ competencies, as self-assessments without (or prior to) testing are particularly prone to the biases described by the Dunning-Kruger effect (Rosman et al., 2015).

Interest

Though students may become more aware of their level of IL competence through testing, is accurately identifying a need to know more enough to motivate actual learning? Research on interest suggests that need paired with interest may be more motivating than just the identification of need alone (Rotgans and Schmidt, 2014; Tapola et al., 2013). In addition, recent research by Smarandache et al. (2021) has shown that students devote more time and effort to learning when they find the material interesting, and that this results in deeper learning. This is an important part of learning in a society with a multitude of information sources. Generating interest can therefore be fundamental for sparking a desire to take advantage of provided opportunities to develop their IL skills, and the drive to continue IL learning on their own.

According to interest researchers Renninger and Hidi (2019), interest increases students’ motivation to work with subject matter over time, as it has beneficial effects on attention, memory, and engagement. They have found that interest leads to meaningful learning and increased cognitive performance, as individuals with interest are more likely to expend effort in learning, developing, and employing strategies to realize their learning goals. Interest also enables the integration of information with previously acquired knowledge, allowing the formation of connections across disparate information sources (Hidi and Renninger, 2006; Kintsch, 1980), which is important work for IL competence.

Knowing the difference between the nature of less and more developed interest could matter for how the teaching of IL skills is approached. Our understanding of student interest therefore has relevance for teaching and learning in general, and is likely useful for IL learning in particular. In this study, then, we explore connections between IL interest and its relationship with student awareness of their IL competence, their felt need to learn more, and their intention to actually pursue that.

Research questions and hypotheses

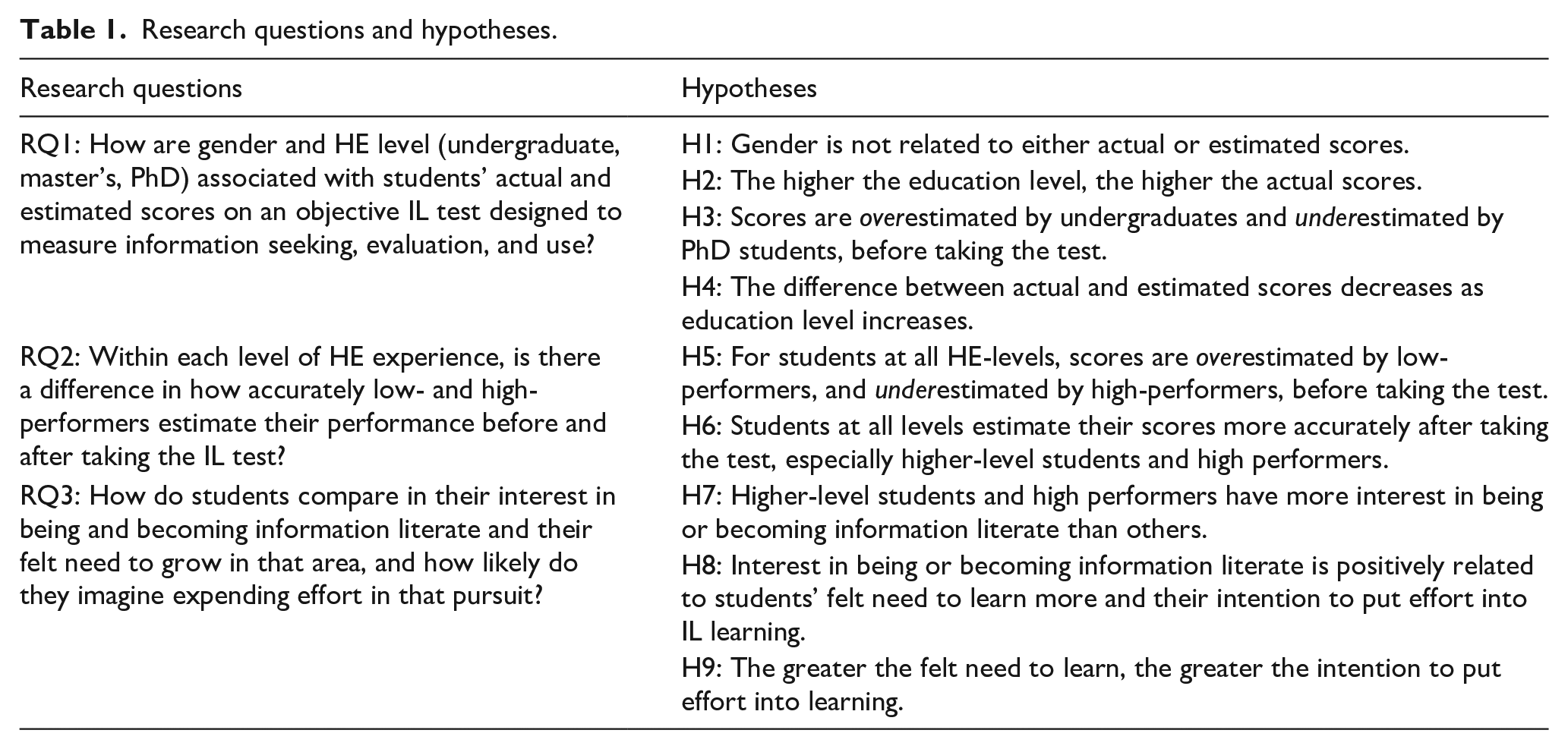

In order to determine how metacognitively aware students are of their IL levels, and to find possible links between these findings and variables that may influence their motivation to develop their IL further, we formulated three main research questions and nine corresponding hypotheses (see Table 1). The variables we chose to measure were interest, gender, and education level. As far as we know there are no empirical studies linking interest to IL, and we believe that this previously unexplored link is relevant, as interest is a factor that increases motivation and learning. Gender is an important variable to explore in all educational contexts, and no recent studies of IL and metacognition focus on this variable. Education level can have an association with both actual and estimated scores, and is therefore also relevant to explore.

Table 1. Research questions and hypotheses.

Methods

Participants

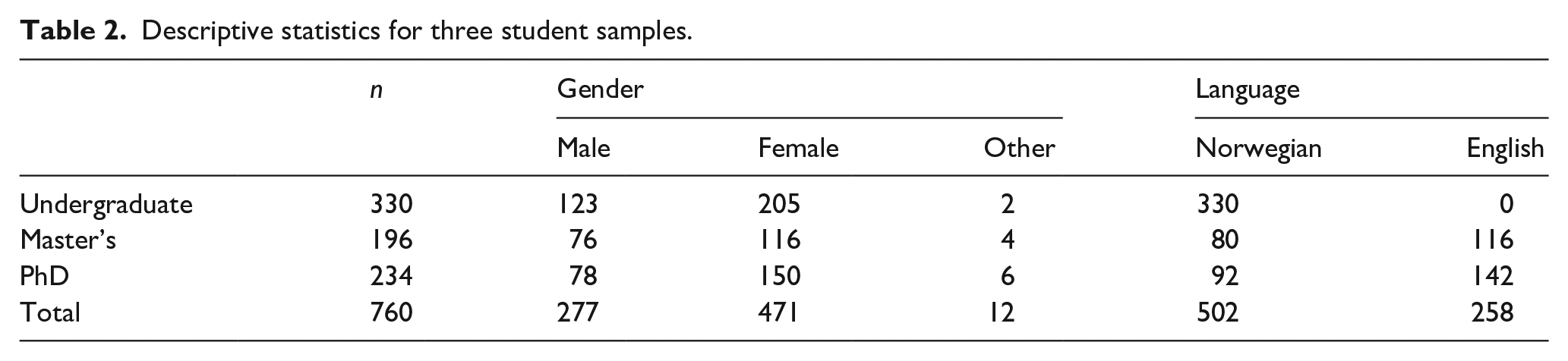

Data were collected from three student groups representing three levels of higher education: undergraduate, master’s, and PhD (see Table 2). The undergraduate sample consisted of students in the first semester of their current study program, recruited from three universities and one university of applied sciences (UAS) across Norway. These institutions are small to medium-sized (5200–18,000 students), and offer a range of academic disciplines and professional studies. Participants came from various fields of study, including psychology, teacher education, biology, and history. Students were recruited via their learning management system (LMS) or in the classroom, and offered a small reward for their participation.

Table 2. Descriptive statistics for three student samples.

Master’s and PhD students from universities in nine countries, including Norway, participated in the study. Representing a wide variety of disciplines, these students were recruited via social media (Facebook, Twitter, and Reddit) and e-mail. International graduate students were recruited when the survey was translated from Norwegian to English. Students could choose between the two language versions.

The distribution of genders for the undergraduate and master’s samples is similar to that of Norway as a whole, where 66.8% and 58.4% of students are female, respectively. In the PhD sample, however, females are overrepresented by 11% compared to national levels, where 52.8% are female (Database for Statistics on Higher Education, 2019).

Materials

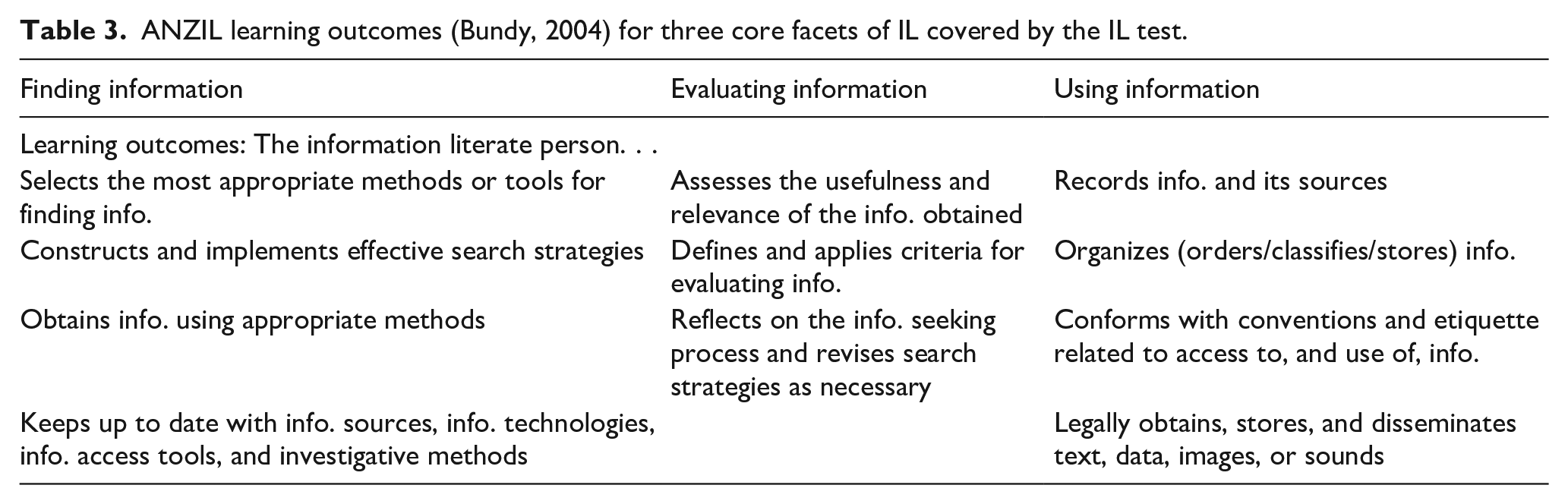

Data for this study were collected with an online survey containing a self-created, psychometrically robust IL knowledge test (available at our IL assessment tools website). The test consists of 21 multiple-choice items, seven for each of the three source-focused, core components of IL described in its various definitions and frameworks: finding, evaluating, and using information. For a detailed description of test development, including evidence of reliability and validity, see Nierenberg et al. (2021). Items were designed to test learning outcomes from the Australian and New Zealand Information Literacy Framework (ANZIL; Bundy, 2004) for the three aforementioned facets (see Table 3). The ANZIL framework is based on the previous gold standard for assessing IL, the Information Literacy Competency Standards for Higher Education (ACRL, 2000). The ACRL Standards, which guided instruction and facilitated assessment of IL by providing explicit learning outcomes, were replaced in 2015 by the more conceptual Framework for Information Literacy for Higher Education (ACRL, 2015). The ANZIL and ACRL standards do not conflict with more recent frameworks, but detail concrete learning outcomes that aid in teaching and measuring students’ developmental journeys toward greater IL, including its other facets.

Table 3. ANZIL learning outcomes (Bundy, 2004) for three core facets of IL covered by the IL test.

Each item in the IL knowledge test has four randomly ordered alternative answers, one of which is most correct. There is no option for “I do not know,” and each item must be answered in order to proceed to the next. This example item, with the correct answer in italics, relates to the use of sources: “What is the most important reason to use sources when writing a paper? (a) To support arguments; (b) To avoid plagiarism; (c) To show that you’ve read the sources; (d) To satisfy the requirements of the assignment.”

Several methods were used to evaluate the IL knowledge test for validity and reliability, as described in Nierenberg et al. (2021). Expert evaluations and think-aloud protocols ensured the validity of test items. After four IL experts evaluated the items for their clarity, content accuracy, and objectivity, think-aloud protocols with students in the target group were held to check items for readability and comprehension. The IL test demonstrated high test-retest reliability, a better measure of reliability than internal consistency for tests/indexes of multidimensional constructs such as IL, as argued in the aforementioned article. For a more detailed description of the development of the IL knowledge test, including item selection criteria and evidence for its reliability and validity, see Nierenberg et al. (2021).

Procedure

In order to measure participants’ metacognition of their IL knowledge, all respondents estimated how many of the questions they believed they had answered correctly, after having taken the test. After data collection had begun, we realized that it would be useful to also have students estimate their scores prior to taking the IL test, based on the working definition of IL provided at the beginning of the survey. A total of 308 respondents (70 undergraduates, 122 master’s students, 116 PhD students) were therefore given the opportunity in a revised version of the questionnaire to also estimate, before the test, how many of the 21 questions they predicted they would answer correctly. In addition, 70 undergraduate and 64 PhD students were asked to rate two statements before the test on a scale from 1 (not at all true for me) to 6 (very true for me): “I am interested in being or becoming an information literate person” (IL interest) and “I need more skills in information literacy” (IL need). After taking the test and receiving their final score, they rated the same two statements again, as well as a third statement, “Knowing myself, I will make the effort to develop stronger information literacy skills” (IL effort; 1 = not likely at all, 6 = highly likely).

Data were collected from August 2019 to December 2020. Test results were analyzed using IBM SPSS Statistics 26 (2019).

To determine whether there are differences within each educational level for students scoring low and high on the IL test, results for each level were split into two subgroups—those scoring below the median and those scoring above the median (the median being different for each HE level).
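The median split described above can be sketched as follows. This is a minimal illustration, not the authors' SPSS procedure: the function name and the scores are hypothetical, and the handling of students who score exactly at the median is an assumption (here they are left out of both groups, since the text only mentions scores below and above the median).

```python
from statistics import median


def median_split(scores_by_level):
    """Split each HE level's scores into low/high groups around that level's own median.

    Assumption: scores equal to the median are placed in neither group.
    """
    groups = {}
    for level, scores in scores_by_level.items():
        cut = median(scores)  # the median is computed separately for each HE level
        groups[level] = {
            "median": cut,
            "low": [s for s in scores if s < cut],
            "high": [s for s in scores if s > cut],
        }
    return groups


# Hypothetical test scores (0-21 points); these are not data from the study.
example_scores = {
    "undergraduate": [8, 10, 11, 12, 15],
    "phd": [14, 16, 17, 18, 20],
}
split = median_split(example_scores)
```

Because the cut point is recomputed per level, a score that counts as high-performing among undergraduates can count as low-performing among PhD students.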

Results

Results are arranged in the order of the three research questions.

RQ1. IL levels and score estimation accuracies by gender and HE level

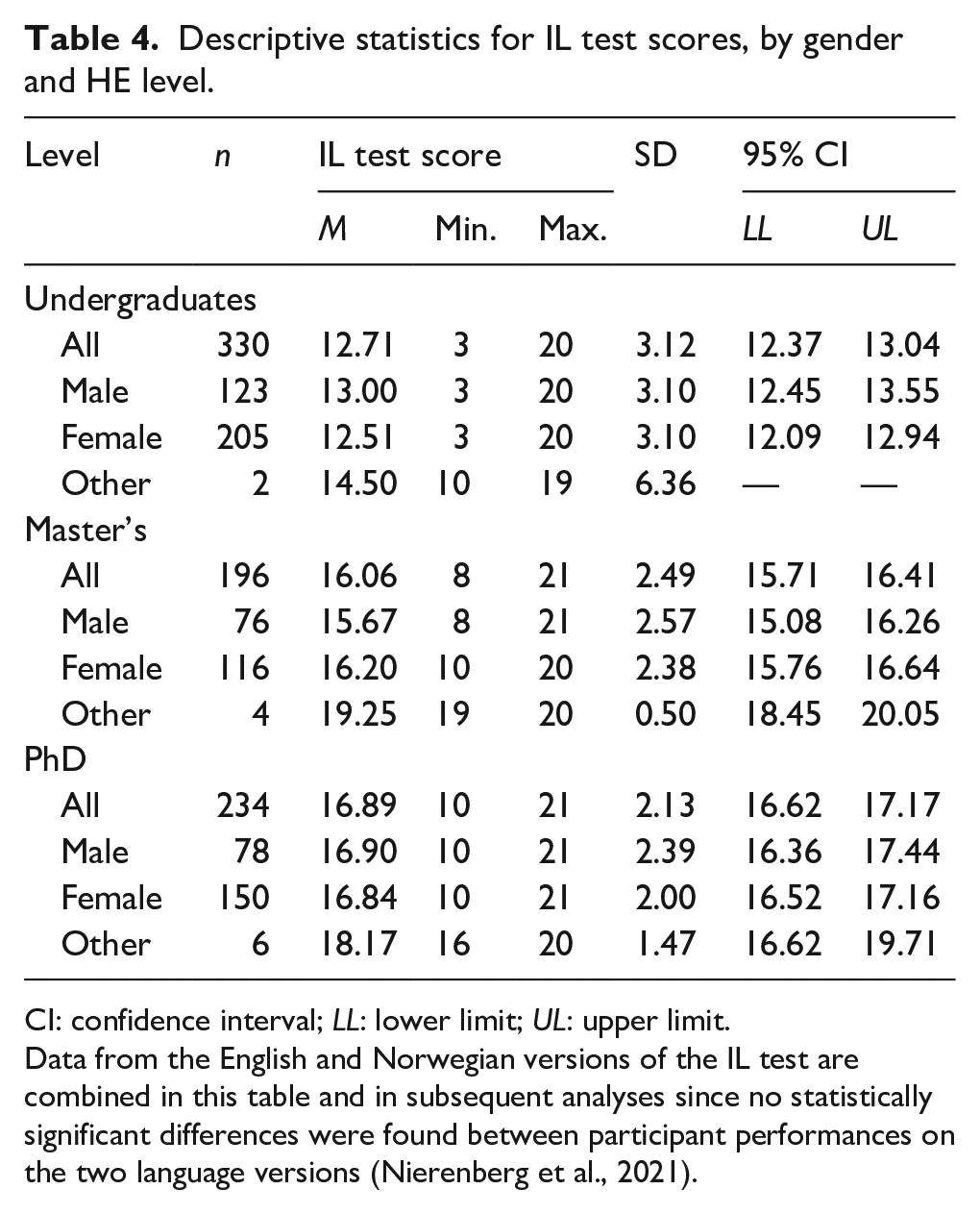

Table 4 provides descriptive statistics for IL test scores for the three samples, by gender. The maximum possible score on the IL test is 21 points.

Table 4. Descriptive statistics for IL test scores, by gender and HE level.

CI: confidence interval; LL: lower limit; UL: upper limit.

Data from the English and Norwegian versions of the IL test are combined in this table and in subsequent analyses since no statistically significant differences were found between participant performances on the two language versions (Nierenberg et al., 2021).

Gender and HE level comparisons

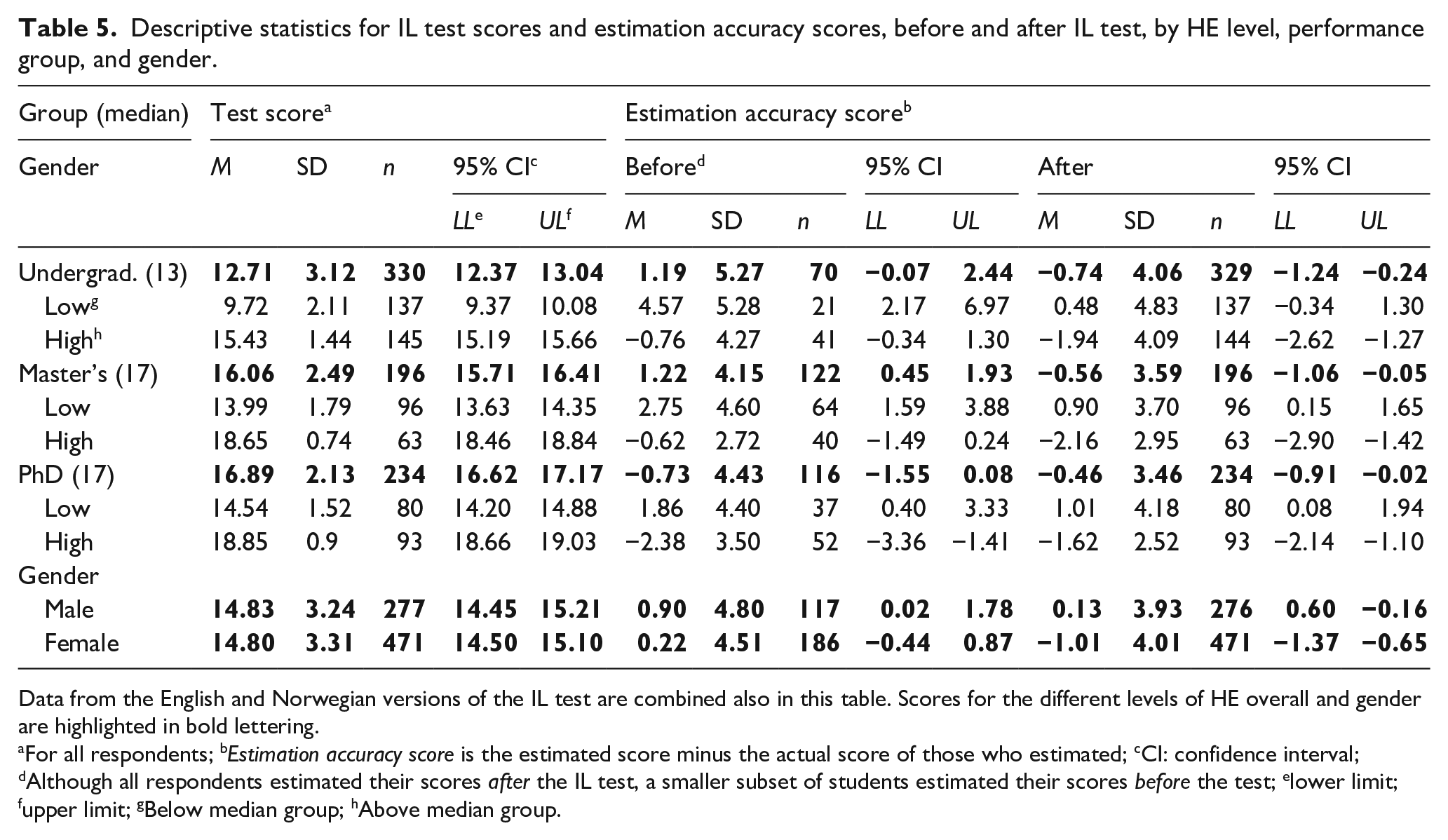

Table 5 shows actual IL test scores and the difference between actual and estimated scores (estimation accuracy scores) before and after the test. Positive estimation accuracy mean values indicate overestimated scores, while negative mean values indicate underestimated scores. The closer the value to zero, the better the student’s metacognition. Students scoring below or above the median score were divided into low- and high-performing groups for each HE level. (For additional descriptive statistics and analyses of students’ estimated scores, see Supplemental Material 1, available at our IL assessment tools website, https://site.uit.no/troils/.)

Table 5. Descriptive statistics for IL test scores and estimation accuracy scores, before and after IL test, by HE level, performance group, and gender.

Data from the English and Norwegian versions of the IL test are also combined in this table. Scores for the different levels of HE overall and gender are highlighted in bold lettering.

(a) For all respondents; (b) Estimation accuracy score is the estimated score minus the actual score of those who estimated; (c) CI: confidence interval; (d) Although all respondents estimated their scores after the IL test, a smaller subset of students estimated their scores before the test; (e) Lower limit; (f) Upper limit; (g) Below median group; (h) Above median group.

IL test score comparison

When excluding the small gender group “other,” a two-way ANOVA of IL test scores by HE level (undergraduate, master’s, and PhD) and gender (male, female) showed a main effect for HE level, F(2, 745) = 187.47, p < 0.001, η² = 0.34. Mean IL test scores increased with HE level, as could be expected. Bonferroni post hoc tests showed that each successive HE level scored significantly higher than the level below it (p < 0.01 for all comparisons). No main effect was found for gender, and the interaction between gender and HE level was not statistically significant.

Estimation accuracy comparison

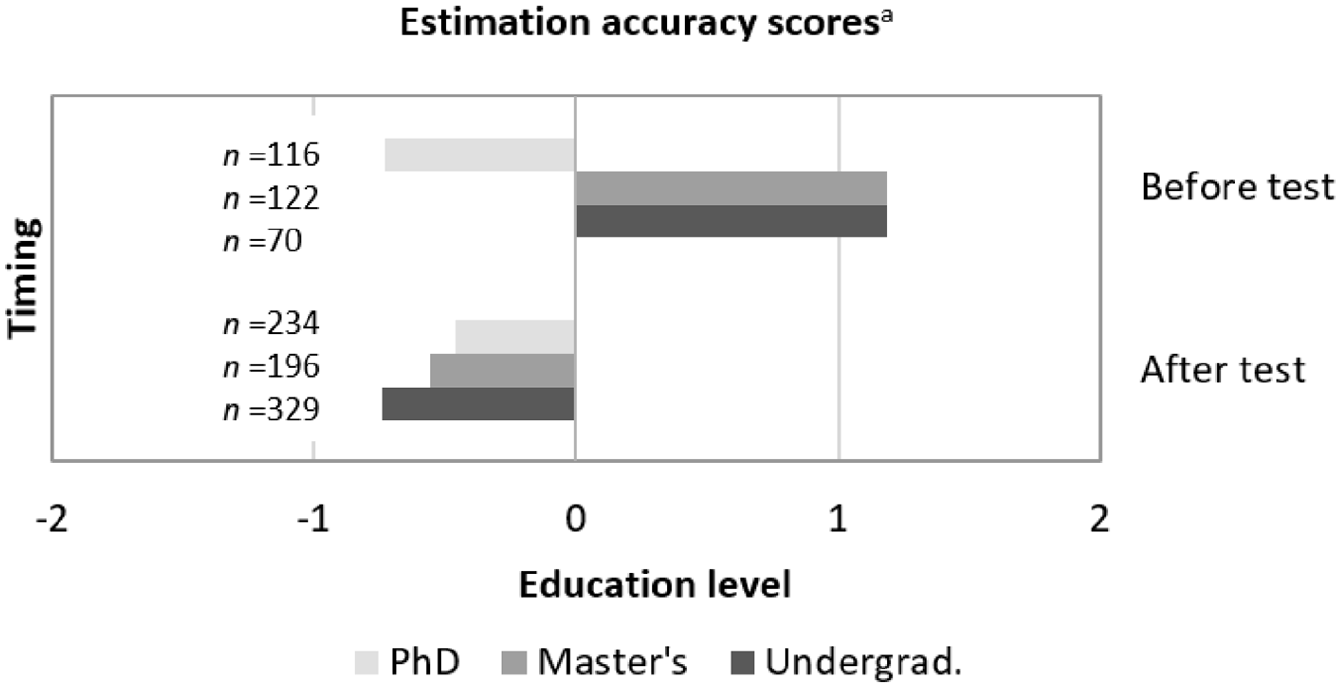

Student estimation accuracy was calculated by subtracting each individual’s actual IL test score from their estimated IL test score, both before and after taking the test. Figure 1 illustrates overall estimation accuracy scores for those students who made test score estimates. Overestimated scores are on the right side of zero and the underestimated scores are on the left. The closer the value to zero, the more accurate the estimate.

Figure 1. Estimation accuracy scores, before and after test.
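The estimation accuracy score defined above (estimated score minus actual score) is simple to compute. The sketch below illustrates the sign convention: positive values are overestimates, negative values are underestimates, and zero is a perfectly calibrated estimate. The function names and the score pairs are hypothetical and are not taken from the study data.

```python
from statistics import mean


def estimation_accuracy(estimated, actual):
    """Estimated score minus actual score; positive means the student overestimated."""
    return estimated - actual


def classify(accuracy):
    """Label an accuracy score according to its sign."""
    if accuracy > 0:
        return "overestimate"
    if accuracy < 0:
        return "underestimate"
    return "exact"


# Hypothetical (estimated, actual) pairs on the 21-point test; not study data.
pairs = [(15, 11), (12, 14), (17, 17)]
accuracies = [estimation_accuracy(est, act) for est, act in pairs]
labels = [classify(a) for a in accuracies]
mean_accuracy = mean(accuracies)  # group-level accuracy, as reported per group in Table 5
```

Note that a group mean near zero can hide large individual over- and underestimates that cancel out, which is one reason the low- and high-performing groups are examined separately.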

Accuracy differences by HE level and gender (male and female) were tested with two-way ANOVAs for before- and after-test accuracy scores. Effect size was measured with eta squared (η²). A two-way ANOVA of before-test accuracy scores by HE level and gender showed a main effect for HE level, F(2, 297) = 3.82, p < 0.05, η² = 0.03, but not for gender. Bonferroni post hoc tests showed that undergraduate and master’s student estimation accuracies were not significantly different, while PhD student estimation accuracy was significantly higher than undergraduate (p < 0.01) and master’s student estimation accuracy (p < 0.05).
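As a reminder of what eta squared expresses, it is the share of the total variance in the outcome that is attributable to an effect, that is, the effect's sum of squares divided by the total sum of squares. The sketch below computes it for the simpler one-way case with a single grouping factor. It is an illustration under that simplifying assumption, not a reproduction of the two-way analyses run in SPSS, and the data are hypothetical.

```python
from statistics import mean


def eta_squared(groups):
    """One-way eta squared: between-group sum of squares divided by total sum of squares."""
    all_values = [x for group in groups for x in group]
    grand_mean = mean(all_values)
    ss_total = sum((x - grand_mean) ** 2 for x in all_values)
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    return ss_between / ss_total


# Hypothetical accuracy scores for two groups; not study data.
eta2 = eta_squared([[1, 2, 3], [4, 5, 6]])
```

Read this way, η² = 0.03 for HE level means that roughly 3% of the variance in before-test accuracy scores is associated with HE level.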

A two-way ANOVA of after-test accuracy scores by HE level and gender showed no main effect for HE level, but a main effect for gender, F(1, 741) = 10.44, p = 0.001, η² = 0.01. The HE level by gender interaction was statistically significant, F(2, 741) = 4.38, p < 0.05, η² = 0.01. Three independent sample t-tests comparing men’s and women’s scores, with effect size measured with Cohen’s d (d), showed that undergraduate men made significantly more accurate estimations than undergraduate women, t(325) = 4.04, p < 0.001, d = 0.15. There were no significant gender differences found for the master’s and PhD students.
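Cohen's d for two independent samples is commonly computed as the difference between the group means divided by the pooled standard deviation. The sketch below uses that pooled-variance form. Whether SPSS or the authors used exactly this variant is an assumption, and the data are hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(sample_a, sample_b):
    """Cohen's d using the pooled standard deviation of two independent samples."""
    n_a, n_b = len(sample_a), len(sample_b)
    # variance() returns the sample variance (n - 1 in the denominator).
    pooled_var = ((n_a - 1) * variance(sample_a) + (n_b - 1) * variance(sample_b)) / (
        n_a + n_b - 2
    )
    return (mean(sample_a) - mean(sample_b)) / sqrt(pooled_var)


# Hypothetical accuracy scores for two groups; not study data.
d = cohens_d([2, 4, 6], [1, 3, 5])
```

In this toy example both groups have a sample variance of 4, so the pooled standard deviation is 2 and a one-point difference in means gives d = 0.5.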

Together, these findings show that students at higher HE levels have higher estimates of their IL competency, and that they are better at accurately estimating their IL test scores compared to students at lower HE levels. However, once they have had a chance to calibrate their understanding with the IL test itself, that difference more or less disappears among all HE levels. Furthermore, though men make more accurate after-test estimates than women at the undergraduate level, women’s estimates become equally accurate with more HE experience.

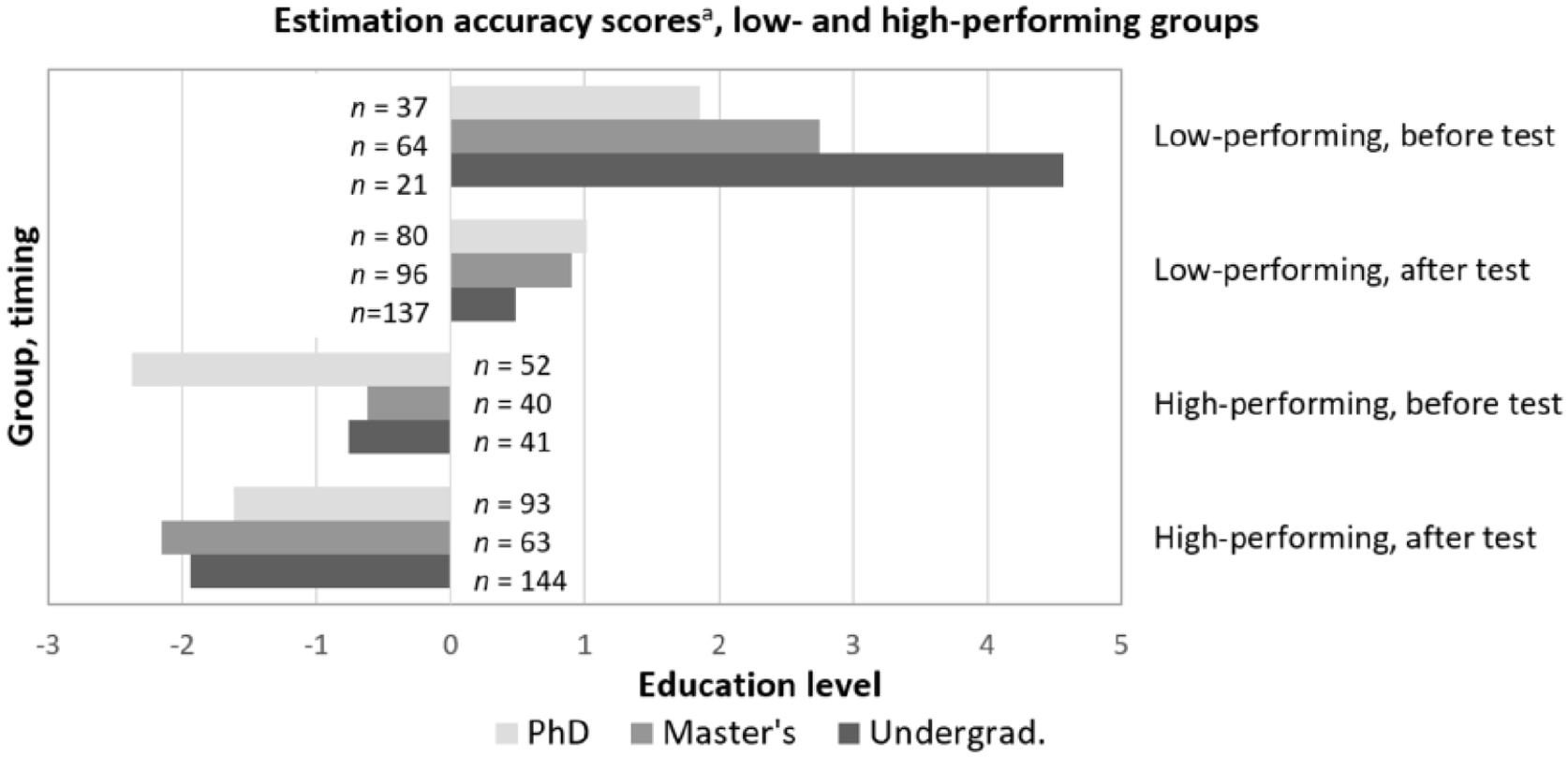

RQ2. Score estimation accuracies: Comparison of low- and high-performing groups by HE level

There may be more difference within HE levels between low- and high-performers on the IL test than these overall analyses capture, particularly in terms of how much students under- or overestimate their IL test score—both before and after taking the IL test—and in terms of their desire and commitment to developing those competencies. To test this, students were divided into a low- or high-performing group by median split for each of the three HE levels (the median varied by HE level as noted in Table 5). Students who scored below the median in each HE level were put in a low-performing group, and those who scored above the median in each HE level were put in a high-performing group. Estimation accuracy scores for the low- and high-performing groups at different HE levels are illustrated in Figure 2.

Figure 2. Estimation accuracy scores, low- and high-performing groups, before and after IL test.

As for estimation accuracy scores, two-way ANOVAs by HE level and performance level group indicated a main effect for HE level in before-test accuracy, F(1, 254) = 5.05, p < 0.01, η² = 0.04, but not in after-test accuracy. For before-test accuracy, a Bonferroni post hoc test showed significant differences between master’s and PhD students, p < 0.005, and between undergraduate and PhD students, p < 0.05. The difference between undergraduate and master’s students, however, was not significant. A main effect was also found for performance level group. Differences between low- and high-performing groups were significant both before, F(1, 254) = 62.04, p < 0.001, η² = 0.20, and after, F(1, 612) = 66.87, p < 0.001, η² = 0.10, taking the test. This indicates that the low and high groups differ significantly in their ability to accurately estimate their scores.

Taken together, results indicate that students have a diffuse sense of their IL skill before taking the IL test, while after taking it, most become better at calibrating their score estimates. Interestingly, although lower-performing students tend to overestimate their skill rather than underestimate it like their higher-performing peers, low-performers are more accurate in making after-test estimations than high-performers. These metacognitive differences may have differential relevance for the students’ felt need or intent to develop their IL competencies.

RQ3. Interest, need, and effort: Comparisons by HE level and performance level

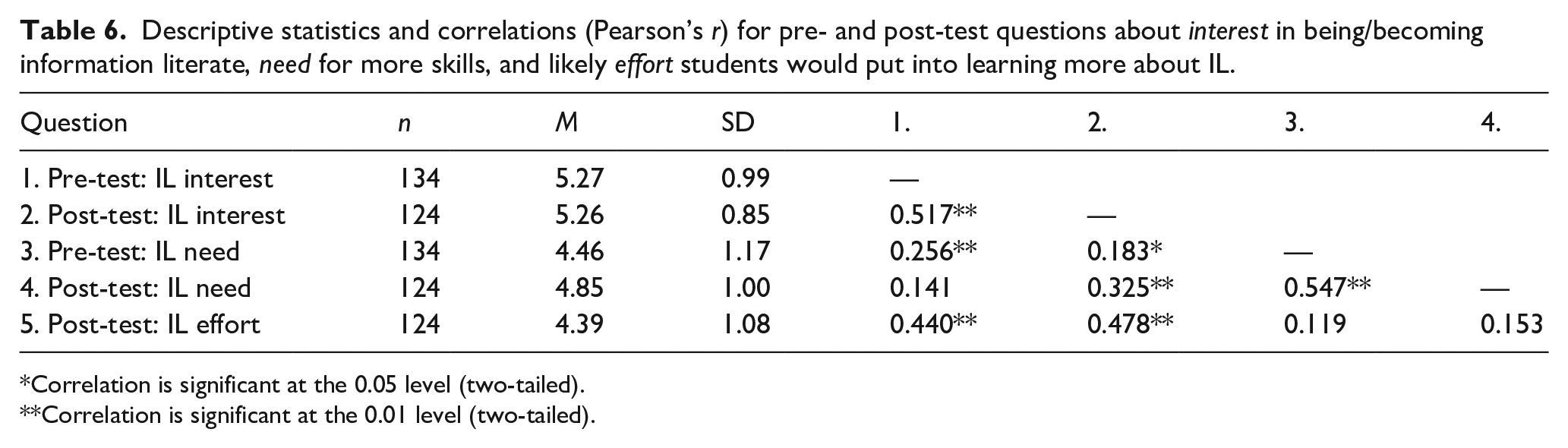

In order to determine whether taking the IL test influenced students’ interest in becoming information literate, a selection of undergraduate (n = 70) and PhD (n = 64) students were asked two questions both before and after the test, and a third question after the test.

The first question (“interest”) asked about the student’s interest in being or becoming information literate, and the second (“need”) asked whether they believe that they need more skills in order to do this. The third question (“effort”) asked whether, knowing themselves, they would put the effort into obtaining additional IL knowledge and skills. Before- and after-test answers to the interest and need questions correlated highly with each other (p < 0.001), indicating that the act of taking the test did not significantly change answers regarding interest or the perceived need to know more (see Table 6). Their likelihood to do anything about that (effort), however, was significantly correlated only with their interest in being/becoming an information literate person (both before and after the test), and not with their felt need for more skill. Furthermore, their perceived need to grow, and what they believed they would actually do about that, were hardly correlated at all. (For additional descriptive statistics and analyses related to interest, need and effort, see Supplemental Material 2, available at our IL assessment tools website, https://site.uit.no/troils/.)

Table 6. Descriptive statistics and correlations (Pearson’s r) for pre- and post-test questions about interest in being/becoming information literate, need for more skills, and likely effort students would put into learning more about IL.

Correlation is significant at the 0.05 level (two-tailed).

Correlation is significant at the 0.01 level (two-tailed).

A two-way repeated-measures MANOVA was performed with HE group (undergraduate, PhD) by performance level group (low, high), comparing the two dependent variables (student IL interest and perceived skill need) before and after the test. Between groups, main effects were found for HE level, F(2, 101) = 9.41, p < 0.001, η² = 0.16, and for performance level, F(2, 101) = 4.05, p < 0.05, η² = 0.07. PhD students reported significantly greater interest than undergraduates both before and after the test. Nevertheless, undergraduates reported a significantly greater need for skills than PhD students both before and after the test.
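As a rough consistency check on effect sizes of this kind, partial eta squared can be recovered from an F statistic and its degrees of freedom. The sketch below assumes that the reported effect sizes are partial eta squared (the text does not state which variant was used); the function name `partial_eta_squared` is introduced here purely for illustration.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared implied by an F statistic.

    Uses the identity eta_p^2 = (F * df_effect) / (F * df_effect + df_error).
    Assumption: the reported effect sizes are *partial* eta squared.
    """
    if f_value < 0 or df_effect <= 0 or df_error <= 0:
        raise ValueError("F must be non-negative and degrees of freedom positive")
    explained = f_value * df_effect
    return explained / (explained + df_error)


# F values and degrees of freedom as reported for the between-group effects.
he_level = partial_eta_squared(9.41, 2, 101)
performance_level = partial_eta_squared(4.05, 2, 101)

print(round(he_level, 2), round(performance_level, 2))  # prints: 0.16 0.07
```

Under that assumption, both recomputed values agree with the reported effect sizes to two decimals.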

Within groups, an interaction was found between time (before and after the test) and performance level on interest in being/becoming an information literate person, F(2, 101) = 3.21, p < 0.05, η² = 0.06. While the high-performing undergraduates were more interested than their lower-performing peers both before and after the test, there was a tendency for higher-performing PhD students to be less interested than their lower-performing peers before the test, but more interested than those peers after the test (p = 0.057).

In sum, between groups, PhD students reported a greater interest in being or becoming information literate than the undergraduates, while undergraduates reported recognizing a greater need for more IL skill than the PhD students. Within groups, the high-performing undergraduate student interest was greater than that of their lower-performing peers and that relationship was relatively stable across time. Meanwhile, though the lower-performing PhD students were more interested than their high-performing peers before the test, that relationship switched after the test.

Likelihood to make the effort to develop stronger information literacy skills

After taking the test, the same selection of undergraduates and PhD students rated the likelihood that they would make an effort to develop stronger IL skills. Although a two-way ANOVA by HE level and performance level indicated no significant difference between low- and high-performers, it did show a main effect for HE level, F(1, 105) = 12.21, p = 0.001, η² = 0.11. PhD students reported being more likely to make the effort to develop their IL skills than undergraduates were.

The correlation between interest in being or becoming information literate and the likelihood of making an effort to develop IL skills is significant for both HE levels tested, and is higher for PhD students (r = 0.51) than for undergraduates (r = 0.42).
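For readers less familiar with the statistic, the following minimal sketch shows how a Pearson correlation such as those above is computed. The ratings are made-up illustrative values, not data from this study, and the names `pearson_r`, `interest`, `need`, and `effort` are hypothetical.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length with at least 2 values")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sum of cross-products and sums of squares of deviations from the means.
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when a variable is constant")
    return sxy / sqrt(sxx * syy)


# Hypothetical 5-point ratings from five students (illustration only).
interest = [1, 2, 3, 4, 5]
need = [4, 2, 5, 1, 3]
effort = [2, 1, 4, 3, 5]

r_interest_effort = pearson_r(interest, effort)  # 0.8 for these toy values
r_need_effort = pearson_r(need, effort)          # 0.3 for these toy values
```

In this toy example, effort tracks interest much more closely than it tracks need, which mirrors the direction of the pattern reported above but says nothing about its size in the actual sample.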

Discussion

A main goal of this study was to determine whether levels of IL, based on results of an objective 21-item IL test, correspond to student metacognitive awareness of that ability. Unlike similar research, though, we specifically analyzed associations to students’ gender, HE level, and performance level. Furthermore, we investigated whether students’ IL performance is related to (1) their interest in being or becoming an information literate person, (2) their perceived need for increasing their proficiency in IL, and (3) how likely they imagine putting effort into pursuing that need.

Our study, as far as we know, is the first to address gender differences in self-assessments of IL ability and their accuracy. We found that although men and women had nearly identical mean test scores, as predicted in H1, men’s estimated scores were both higher and more accurate than women’s after the test—an unexpected result. These differences were statistically significant for undergraduates’ and master’s students’ after-test estimates and accuracy. Though several other IL studies have shown that men tend to have higher self-efficacy than women (e.g. Punter et al., 2017; Taylor and Dalal, 2017), our results suggest that men additionally tend to have better IL metacognition.

Overall, we found that student IL knowledge levels generally improve with HE experience, as hypothesized in H2. When estimating their test scores, students at all HE levels vary both in the estimates and in their accuracy, which gives an indication of their metacognitive awareness. Before the test, undergraduate and master’s students tended to overestimate their skills, while PhD students tended to underestimate their skills. These findings are in accordance with H3. In addition, PhD students had higher estimation accuracies than lower level students, as predicted in H4. After taking the test, all HE levels underestimated their skills, though their estimation accuracy improved (see Figure 1). One could even say that their estimation accuracy, and thereby their metacognitive monitoring ability, was remarkably good. H6 predicted this higher accuracy in scores estimated after the test, but we had not foreseen that all HE levels would underestimate their actual scores after the test. Nevertheless, the higher the HE level, the more accurate the after-test estimate, as other IL researchers also have found (Mahmood, 2016). The overall improvement in calibration is most likely a result of students anchoring their estimations based on the test content; they were primed to become metacognitively aware of their ability by seeing the questions. This was an important effect of the measure itself.

Performance-level comparisons revealed that low-performers on the IL test tended to overestimate their skills, while high-performers tended to underestimate their skills, both before and after the test, as predicted in H5 and H6. In addition, the low-performers estimated their scores more accurately after taking the test than before taking the test, a healthy sign of knowledge calibration.

Our findings thereby illustrate a typical example of the Dunning-Kruger effect. The lower the student’s HE level and/or performance level, the more likely they were to overestimate their abilities (Kruger and Dunning, 1999; Mahmood, 2016). Explained simply, they cannot know what they do not know. Conversely, those with the most HE experience and/or a high performance level tended to underestimate their scores. Kruger and Dunning (1999) might attribute this miscalibration to a comparative error associated with beliefs about others’ performance. In other words, they are aware of what they do not know, and believe that others may have this knowledge.

The inability to recognize incompetence has many implications. One consequence of overestimating performance, as observed by Guise et al. (2008), is that students may avoid seeking assistance from librarians or teachers, believing instead—perhaps mistakenly—that they can find reliable information sources on their own. However, without the competencies necessary to find and evaluate sources, a student may end up trusting conspiracy theories or other misinformation found with a simple Google search. Recognizing the need for improvement is essential for voluntary self-improvement (Pennycook et al., 2017), and an important motivation for learning.

Contrary to H6 and to prior research on the Dunning-Kruger effect, we did find an unexpected result. While high-performing students at all HE levels tended to underestimate their scores after the test, undergraduate and master’s students’ after-test estimates deviated surprisingly more from their actual scores than their before-test estimates. This may indicate that the high-performing undergraduate and master’s students, more than the PhD students, are still calibrating their understanding of their IL proficiency even after the test, though it is intriguing that they are poorer at this than their low-performing peers. Other studies show more accurate calibrations in after-test estimates (cf. Händel and Fritzsche, 2016).

This fits with the Gross and Latham (2007) argument, however, that more skilled individuals may adjust their estimates by considering how their performance might compare with that of other experts. Likewise, “imposter syndrome,” where people fear they lack the knowledge or skills they have been ascribed, has been noted among high-achieving students in other research as well (see, e.g. Craddock et al., 2011; Gibson-Beverly and Schwartz, 2008).

Meanwhile, some researchers, for example Krueger and Mueller (2002), argue that what others report as evidence of the Dunning-Kruger effect may in some cases actually be a statistical artifact, caused by regression to the mean (RTM) and/or the better-than-average effect (BTA). RTM can occur when unusually small or large measurements are followed by measurements closer to the mean. If RTM had been a factor in our study, estimation accuracy scores would have been more evenly distributed around zero in Figures 1 and 2. Our distributions therefore suggest real variation as opposed to statistical artifacts. Meanwhile, BTA refers to the observation that the majority of people predict higher-than-average scores on cognitive tests, such as the IQ test, when the mean score is known. However, students in our study were unaware of the IL test group mean scores, rendering the BTA irrelevant. Finally, in research on the Dunning-Kruger effect, it is common to compare participants in the lowest and highest quartiles. We instead used a median split to distinguish low- and high-performers in each HE group, a less extreme contrast, which makes the statistical differences we found all the more notable.
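To make the regression-to-the-mean argument concrete, the following small simulation sketches how measurement noise alone can produce apparent overestimation among low scorers and underestimation among high scorers. It is not an analysis from this study: the noise model, the sample size, the seed, and the name `simulate_rtm` are all assumptions chosen for illustration.

```python
import random
from statistics import mean, median


def simulate_rtm(n_students=2000, seed=1):
    """Return mean signed estimation error for the low and high halves.

    Assumed model: each simulated student has a true skill; the test score
    and the self-estimate are both the true skill plus independent noise.
    No student is biased, yet a split on the noisy score yields a pattern
    of over- and underestimation.
    """
    rng = random.Random(seed)
    records = []
    for _ in range(n_students):
        skill = rng.gauss(0, 1)
        score = skill + rng.gauss(0, 1)     # noisy measurement of skill
        estimate = skill + rng.gauss(0, 1)  # noisy but unbiased self-estimate
        records.append((score, estimate))

    cutoff = median([score for score, _ in records])  # median split on score
    low = [est - score for score, est in records if score <= cutoff]
    high = [est - score for score, est in records if score > cutoff]
    return mean(low), mean(high)


low_error, high_error = simulate_rtm()
# A positive value means the group overestimates; negative, underestimates.
print(f"low half: {low_error:+.2f}, high half: {high_error:+.2f}")
```

In this artificial setting the two halves err by roughly equal amounts in opposite directions, which is the kind of symmetric pattern around zero that a pure artifact would be expected to produce.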

Finally, a unique contribution to the field is our investigation of additional factors related to student and metacognitive competence that may affect how students go about addressing gaps in their IL competencies. Indeed, as hypothesized in H7, interest in being or becoming information literate was higher among the high performers in general and among PhD students more than undergraduates, though the high-performing PhD students reported being more interested after the test than before. Interest was positively related to students’ felt need to learn more and their intention to put effort into IL learning, as predicted in H8. Importantly, student interest is more correlated with their felt need to learn after they have taken the test, than it is before—though PhD students reported being significantly more likely to act on that need than the undergraduates. However, the greatest correlation was between student interest in being or becoming information literate and their likelihood to invest effort in developing IL skills—both before and after taking the test. In that sense, arousing IL interest may be more effective for inspiring action than identifying a learning need, particularly among lower-performing students. This result deviated from H9, which predicted that the felt need to learn more about IL would have a higher correlation to students’ intended effort to learn. These findings are correlational, though, and should therefore be interpreted with that in mind, providing grounds for further study of these relations.

Limitations

In order to make bolder claims about gender comparisons, sampling is important. We strove to complement existing research with a sample that had gender distributions representative for Norwegian HE. We were successful in this for the undergraduate and master’s levels, but not for the PhD level, where we had an overrepresentation of women. This limitation of the study may explain why men in the PhD group did not show metacognitive and test-estimate accuracy advantages, though more research is required to test this.

Another limitation of this study is a cultural difference between samples that may have affected results; while all undergraduate participants took the Norwegian version of the IL test, one-third of graduate students took the English version. In addition, four questions were added to the survey after data collection had begun, and were therefore not answered by all respondents.

Implications and future research

Of methodological interest, student estimates of their IL knowledge were often inaccurate, especially when predicting scores before taking the IL test. This implies that “cold,” or before-test, predictions are not a reliable measure of student IL competence and should be interpreted with caution in other research as well. This study found, however, that estimation accuracy improves when students estimate their scores after the test. This implies that taking a reliable IL test has value in helping students develop a metacognitive awareness of their IL ability. Taking the IL test in this study also led to an increased correlation between interest and the felt need to learn more.

Accurate score estimates are also helpful for those who teach IL, regarding who to help (prioritize low-performers and low HE levels), and how to help (testing contributes to skill awareness). Our study also shows that taking an IL test helps students calibrate their understanding of what they know. With increased metacognition, undergraduates grow most in understanding their need to learn more. In this way, in addition to affirming the value of the IL test per se, the test scores also clarify for students the relevance of IL instruction for them, personally.

This discrepancy—between what students initially believe about their competence and how they perceive it after testing—is important for IL research that bases its findings on self-reports (Gross and Latham, 2012). Conclusions from studies based on pre-test self-assessments may be less accurate than those based on post-test self-assessments (Rosman et al., 2015). For example, despite being provided with a definition of IL upon which to base their pre-test competence judgment in this study, students’ post-test judgments aligned significantly better with their actual demonstrated competence.

Low-performers may discover or reinforce a need to improve. By their own account, however, whether they act on that need is more closely associated with their interest in being or becoming an information literate person than with the need they have identified. In addition, in terms of HE level, results indicate that interest in being or becoming information literate, and the likelihood of making an effort to develop IL skills, are correlated and increase with each level. The role that interest plays, both in the HE trajectory of IL learning and in helping students identify their skill levels, is therefore worthy of further investigation in future research.

Though helping lower-performing students in particular to develop an interest in being or becoming information literate may be valuable for improving student IL learning, it may be equally valuable to counteract student loss of interest when they do not perform well, by simultaneously developing feedback opportunities to support positive growth in IL self-efficacy (Guise et al., 2008). Interventions designed with this in mind for students with low IL performance and/or interest levels may be especially worthwhile, and a focus of future research.

Conclusion

Differences in IL competencies and metacognition related to those competencies in students of different genders and HE levels—both understudied to date—have been explored in this article. In addition to confirming results from previous studies on the existence of the Dunning-Kruger effect in the IL domain, this study makes several new contributions to the field. Findings indicate that (1) in general, IL metacognition increases with HE level and performance level, (2) in some groups, men tend to have better metacognitive awareness of their IL competency than women, and (3) interest in being or becoming information literate is highly associated with the likelihood of putting effort into learning more, more so than need, implying that getting students interested in IL may be as relevant for IL instruction as the teaching of the competencies themselves.

It is in everyone’s best interest to use the platform of academia to help students develop the knowledge and skills they need to become intelligent information consumers and contributors. It is relevant not only for academic performance, but also for informed social engagement, and it is especially important in today’s “post-truth era,” characterized by a growing disregard for facts and distrust in mainstream media.

Because of the larger, more diverse sample than that used in previous research on the Dunning-Kruger effect, this study identifies nuances in that effect related to HE experience, gender and performance level. Intriguing connections between student interest, need, and likely effort in attending to their IL growth have also been highlighted. These findings can be used in future research and practice in order to design even better approaches to student IL development in all levels of higher education, and, just as importantly, to help counteract people’s vulnerability to false or misleading information that can have truly dangerous consequences.

Footnotes

Acknowledgements

The authors would like to thank bachelor student Mina Taaje Sønsterud (UiT The Arctic University of Norway) for her valuable contributions to this article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.