Abstract

Universities are increasingly offering support services for bibliometrics, often based in the library. This paper describes work done to produce a competency model for those supporting bibliometrics. The results of a questionnaire in which current practitioners rated bibliometric tasks as entry level, core or specialist are reported. Entry level competencies identified were explaining bibliometric concepts, doing basic calculations and some professional skills. Activities identified by participants as core are outlined. The items considered in scope but specialist show that there was less stress on evaluating scholars, working at a strategic level, working with data outside proprietary bibliometric tools and consultancy-type services as opposed to training for disintermediated use. A competency model is presented as an appendix.

Introduction

Bibliometrics – the statistical analysis of publications – has been practised since the 1920s (Gingras, 2016). However, bibliometric activity grew significantly with the emergence of new citation mapping tools starting with the ISI’s citation indices in the 1960s (De Bellis, 2009; Thelwall, 2008). Since the turn of the century there has been a proliferation of bibliometric tools and indicators from the bibliographic database suppliers and academic researchers working in this field. In addition, the use of altmetrics has grown in an attempt to use the social web to measure the impact of research in new ways. Such quantitative approaches to research evaluation have attracted increasing interest and controversy. Researchers are, of course, interested in evaluating their own performance. Higher education (HE) institutions also want to use such calculations for management purposes. Further, an interest in measuring the value and impact of publicly funded research is a legitimate public and governmental concern. However, such measurement could be interpreted as an aspect of the rise of an audit culture in HE, a symptom of wider trends towards the New Public Management and ‘neo-liberalisation’ (Burrows, 2012; Fanghanel, 2012; Thornton, 2009). Metrics can be seen as a challenge to academic freedom and to the university’s traditional role as a centre in society of critical and independent thinking, since they imply managing academics through quantifiable, even objective and universal evaluations of research quality. It is argued that they can have potentially harmful effects both on researchers and on research (Coulthard and Keller, 2016; De Rijcke et al., 2015).

More immediately, concern has been prompted by the understanding that, if applied without awareness of such factors as differing disciplinary cultures and publishing practices, quantitative metrics lack validity. Uncritical reliance on certain metrics such as the Journal Impact Factor and h-index has been strongly criticized (e.g. Barnes, 2014; Curry, 2012; Lariviere et al., 2016). Such concerns have been solidified in the last few years by a number of important publications. Thus, the 2014 Declaration on Research Assessment (DORA) (http://www.ascb.org/dora/) critiqued the use of journal metrics, in particular the Journal Impact Factor, for measuring individual researchers. The 2015 Leiden Manifesto (http://www.leidenmanifesto.org/) set out 10 principles for using bibliometrics in research assessment, with an emphasis on responsible use. The Metric Tide Report (Wilsdon et al., 2015), which advised against the use of bibliometrics as an alternative to peer review in the UK Research Excellence Framework (REF), also called for all stakeholders to use metrics responsibly. In this context, the importance of professionally conducted and supported research evaluation, recognizing the principles of responsible use, is clear.

Gumpenberger et al. (2012: 174) go so far as to label bibliometric work as ‘a perfect fit for academic libraries’. Indeed, there is growing evidence that university libraries are offering or planning to offer research evaluation services, aligned to the library’s increased support for research and scholarly communication (Corrall et al., 2013). Yet these are services that research administrators and HE planners could be equally well positioned to provide. Indeed, Gadd (2017) argues that there are roles for both groups in supporting bibliometric activities. However, there is evidence that a lack of skills and confidence can be a barrier to entry to bibliometric work (Corrall et al., 2013). As professional services begin to develop bibliometric offerings it is important for them to have a clear idea of what competencies are required in order to recruit and train staff appropriately. Professional learning and training providers, such as information schools, need to develop a clear conception of what entry level and core competencies are needed.

In this context, the aim of the study was to develop a community-supported set of bibliometric competencies for those working in libraries as well as in other related services, such as research offices. In order to achieve this aim the specific objectives were:

To identify the tasks that practitioners working with bibliometrics currently undertake;

To identify which of these tasks they perceive as entry level, core and specialist;

To explore variations in these perceptions, for example, between the UK and other countries and between those based in libraries and those in other units, such as the research office;

To produce a model of bibliometric competencies and validate it with the community.

The paper is based on data from a project commissioned by the LIS-Bibliometrics forum and Elsevier’s Research Intelligence Division.

The paper begins by exploring what we already know about why and how librarians and other practitioners are supporting the use of bibliometrics. It also considers the practices of job analysis and competency modelling as ways of analysing job roles. The methodology then positions the work within this continuum, and explains in detail how the current research was conducted. The findings of a questionnaire in which members of the bibliometrics community rated a list of bibliometric activities are then reported. The discussion reflects on the results and explains how a competency model was developed from this. The conclusion summarizes the contribution in clarifying our understanding of the competencies for bibliometrics. A current version of the competency model is offered as an appendix.

Literature review

Corrall et al. (2013) found that the majority of academic libraries they surveyed in Australia, New Zealand, Ireland and the United Kingdom offered bibliometric services. The main services were training of staff, production of citation reports and measurement of research impact. Grant application support was also strong in Australia and Ireland. The UK appeared to be lagging behind with only about half of respondents currently offering bibliometric training, and only another 20% planning it. In contrast, this was a service offered or planned by close to 100% of institutions in the other three countries. More patchy development in the UK was seen as reflecting uncertainties around how metrics might be involved in the national research evaluation. Nevertheless, the findings strongly suggest that bibliometrics is becoming a mainstream service in academic libraries. Case studies of a number of other countries support this (Åström and Hansson, 2013; Bladek, 2014; Dennie, 2010; Gumpenberger et al., 2012; Mamtora and Haddow, 2015), although not all evidence points to on-going growth (Richter, 2011). The increasing interest in bibliometrics for research evaluation, and the rise of altmetrics, suggest that this trend is only likely to have intensified, though there is little data from which to draw firm conclusions.

A number of authors have presented arguments for why bibliometrics would be an appropriate new area of activity for librarians. The science of bibliometrics was developed partly as a sub-discipline of library and information science (De Bellis, 2009) and from the 1970s it was extensively used in collection management (Åström and Hansson, 2013). In a survey of Swedish academic libraries, Åström and Hansson (2013) found that participants thought that librarians were the right people to offer support for bibliometrics because of their competencies with bibliographic tools and metadata and because they could take a neutral position towards the evaluation of academic work. Their respondents saw the benefit to the library in increased institutional visibility through bibliometric work.

Gumpenberger et al. (2012) give four reasons why bibliometric services are a ‘perfect fit’ for academic libraries:

Librarians already use major bibliographic databases;

They have experience of data gathering, cleaning and analysis;

Librarians offer services for researchers;

Librarians have the opportunity to participate in a global bibliometric research community.

Not all these arguments are equally convincing. It is true that there is an evident connection between bibliometrics and library licensing of, and support for the use of, bibliographic databases. Yet librarianship has traditionally attracted people trained in the humanities and relatively few library roles involve data manipulation and analysis. Also, while a focus on information literacy implies a professional interest in guiding students to identify quality in research publication, librarianship typically positions itself as a service profession; the element of evaluating academics’ research quality fits uneasily with this. Such concerns were evident in Åström and Hansson’s (2013) study, which found that while many Swedish libraries were developing bibliometric services, major issues were: competency in advanced statistical analysis, unease about evaluating scholars and the risk of being associated with identifying under-performing departments.

Thus, libraries may see bibliometrics as a natural area of work, and given the pressure on their traditional core roles might feel the need to expand into such new areas (Cox and Corrall, 2013). At the same time there are also barriers, especially in terms of skills. Further, libraries are not the only professional services in universities that might have a role in using or supporting the use of bibliometrics. In so far as bibliometrics is useful to evaluate departmental or institutional performance or support grant capture, then it would be relevant to research administrators in their roles. University planning offices that support major initiatives such as returns to national research assessment exercises might also be involved in using bibliometrics. Anecdotally it is clear that this happens, but we have no systematic data about how the role is performed. The growing literature on research management, for example, does not yet discuss roles in bibliometrics (Green and Langley, 2009; Langley, 2012; Shelley, 2010). Similarly, ARMA’s (the UK professional Association for Research Managers and Administrators, https://www.arma.ac.uk/) professional development framework does not mention bibliometrics as such (https://www.arma.ac.uk/professional-development/PDF/explore-the-PDF).

In this context, there has been relatively little work to understand what knowledge librarians or others working in this field need. Among the skills for UK liaison librarians identified by Auckland (2012) were:

Understanding of the national and local research assessment processes, and the requirements of the REF;

Understanding of research impact factors and performance indicators and how they will be used in the REF, and ability to advise on citation analysis, bibliometrics, etc.

However, this is a very high-level summary. There are also some useful practitioner descriptions of typical activities (Delasalle, 2011).

The most substantial study in this area was produced by Petersohn (2016), who considers the cognitive and social claims made for jurisdiction over bibliometrics by librarians in the UK and Germany. She argues that if ultimately the purpose of bibliometrics is measuring the quality of science, librarians tend to interpret this from within their existing knowledge base, as an information problem. Thus, she finds that their main ways of doing bibliometrics revolve around ‘empowering users, consulting without strategic implications, and, especially the use of commodities’ (i.e. software products) (Petersohn, 2016: 187). Librarians therefore focus on training users to empower them to improve their own publication performance and impact by improving their ‘bibliometric literacy’ as an aspect of information literacy. Such training typically explains bibliometric measures, and their limitations, but avoids technicalities. They offer advice, but avoid more strategic aspects. They work with proprietary systems, rather than exploring manipulation of data or abstract concepts. They perceive bibliometrics as rather abstract, in line with the profession’s stress on practicality. Their academic knowledge base stems mostly from their library qualification, but more important in bibliometric service delivery is their professional knowledge derived from day to day library practice, and various forms of informal learning, for example from blogs.

This is a convincing account of how bibliometrics is interpreted by librarians through their existing knowledge, values and practices. Implicit is the notion that professionals from a different background (e.g. based in research administration or university planning) would define bibliometrics in very different ways, in line with their own expertise.

Job analysis and competency modelling

In order to gain a clearer picture of what the current professional practice of bibliometrics actually involves there is a need for a process of job analysis or competency modelling. This is an area where there has been much work in the library field in the last few years. For example, there have been two editions of a Competency Index for the Library Field (Gutsche and Howe, 2009, 2014). However, neither mentions bibliometrics or altmetrics. There have also been projects to develop competency models in a number of critical or highly dynamic areas such as leadership (Ammons-Stephens, 2009), electronic resource librarianship (NASIG, 2016), digital curation (DigiCurV), data science (Edison, http://edison-project.eu/), and linked data (Linked Data for Professional Educators, http://explore.dublincore.net/theory/briefing-papers/ld4peoverview/).

Job analysis and competency modelling are related practices. Both broadly seek to define the combination of knowledge, skills, abilities and other individual characteristics (KSAOs) needed to perform a particular role (Campion et al., 2011). Traditional practices of job analysis were based on asking those performing a role to identify the major tasks at the current time. Competency modelling tends to be different in a number of ways (Campion et al., 2011; Sanchez and Levine, 2009; Stevens, 2012):

It has an orientation towards identifying the abilities that underlie exceptional performance, rather than typical performance. Thus, those consulted in producing such a model are often executives with a responsibility for the job and high performers in the role.

Rather than compiling an exhaustive listing of activities, competency modelling focuses on a refined list of qualities of individuals who can perform the task well.

It often includes descriptions of how competencies progress at different levels.

It is future orientated, reflecting the dynamic character of most modern jobs.

It has a strategic purpose, seeking to link the role to organizational objectives. As such it can be seen as a management intervention and often takes a more deductive approach, rather than building up inductively from a study of current activities. A competency model may also be designed to serve the purpose of defining attributes across roles, even for the whole organization, rather than focusing on the specifics of a particular job.

An emphasis is placed on presentation to make the competency model easier and more likely to be used.

Competency modelling has more persuasive power and strategic value, but it may be less rigorous than job analysis (Shippmann, 2000). Certainly, there is agreement that both techniques can be usefully combined (Campion et al., 2011; Sanchez and Levine, 2009).

Method

The approach taken to job analysis/competency modelling in this study can be seen as hybrid. In a foundational study it was considered desirable to consult broadly and build up a detailed picture of all the tasks involved in bibliometrics work, in a way more akin to job analysis. The emphasis was on discovering what people do, rather than linking through to organizational purposes. How this fits into the evolution of wider professional competencies is another piece of work. Some elements of competency modelling were employed, however. A central aspect was distinguishing entry level tasks from core and specialist activities. There was a strong element of seeking views on how the bibliometrics role will develop in the future, recognizing the dynamic character of modern roles. Presenting the final model in an easy to understand way, and with an emphasis on the main points, rather than exhaustive coverage, was also considered a priority.

Thus, the bibliometrics competency model was developed in three phases. In Phase 1, participants at a LIS-Bibliometrics workshop in June 2016 were asked to list as many bibliometric tasks as possible that they have been asked to do, and record them on post-its. Post-its were then ordered by participants on a flip chart based on whether they were considered to require low, medium or high levels of knowledge or skill. These data were analysed to identify a comprehensive list of tasks that those working in bibliometrics undertake and place them in broad categories (Objective 1). This was achieved by combining the data from the workshop with data from the literature, job postings and interview and documentary data from Petersohn’s doctoral research.

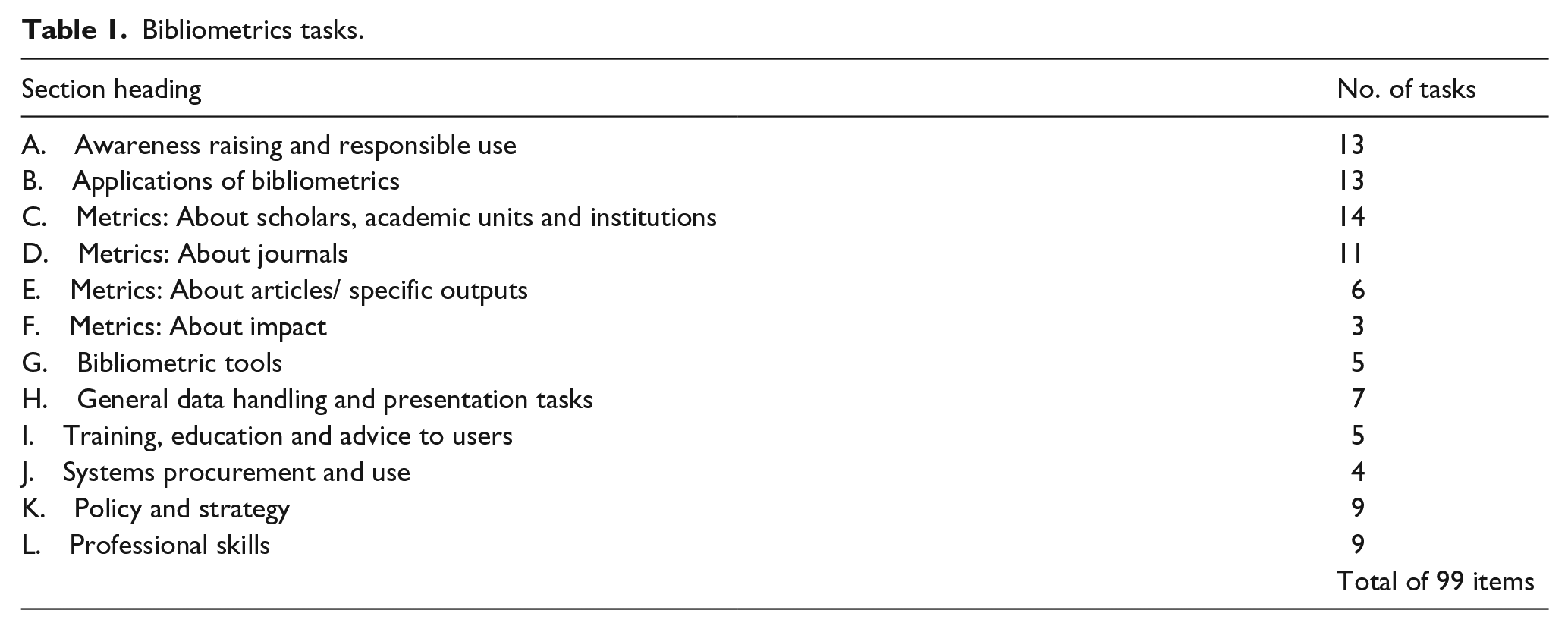

The analysis produced a list of 99 activities under 12 headings (see Table 1).

Table 1. Bibliometrics tasks.

The headings are largely self-explanatory, but whereas most sections covered specific tasks, ‘Section B: Applications of bibliometrics’ was a question about the different purposes for which the tasks could be applied, e.g. to evaluate scholars, to evaluate a collection, etc. Conceptually there were some challenges in differentiating tasks. For example, in theory, one should differentiate calculating metrics within specific tools from calculating them manually. Similarly, the use of specific tools to calculate metrics could have been probed, but to do so would have been repetitive and added to the length of the questionnaire.

This list formed the foundation for Phase 2, a questionnaire designed to explore how those who performed bibliometrics perceive different tasks (Objectives 2 and 3). The questionnaire was piloted with the LIS-Bibliometrics committee, and this led to some changes in wording of task descriptions. A major change was the final choice of how to articulate different levels of competence. The final wording as used in the questionnaire was:

Entry level – a basic task of bibliometrics, one that a newly qualified professional should be able to perform;

Core – a core task of bibliometrics, one that an established professional with a responsibility for bibliometrics performs beyond entry level tasks;

Advanced/Specialist – a task involving very specialist knowledge and evaluative skills;

Out of scope of the role.

Other approaches were possible. It was decided to differentiate these levels rather than degree of difficulty because a major objective of the project was to identify entry and core level tasks to shape training programmes. However, the concepts are clearly quite subjective. The distinction between entry level and core is different from level of difficulty: a task could be difficult but still required of a new entrant, or it may be conceptually hard but become easy if a tool exists to calculate it. Another way of asking the questions would have been to ask people whether they did the tasks frequently, rarely or never, but this would have captured the skills people used rather than those perceived to be needed. Many actual activities listed in the workshop involved multiple tasks as listed. Given the length of the list of tasks in some areas it was not possible to differentiate subtly different roles in relation to a particular measure, e.g. between advising on policy and setting policy, without further adding to the length of the questionnaire.

In addition to rating tasks against these levels of competence (required fields) respondents were given the option to identify tasks in the section that they thought would grow in importance in the next five years. They were also asked for some contextual information such as the name of their institution, about their role and job title, the staffing of bibliometrics at their institution and sources of training.

The questionnaire was distributed in January 2017 to LIS-Bibliometrics (a network of bibliometric practitioners mostly based in the UK that uses JISCMail and community events to share knowledge), sigmetrics (the ACM’s (Association for Computing Machinery) special interest group on performance evaluation, https://www.sigmetrics.org/) and the Metrics Special Interest Group list for ARMA (https://www.arma.ac.uk/). Such listservs remain a primary means of communication in professional library work. Attendees at a recent LIS-Bibliometrics event were also directly targeted.

A total of 92 complete responses were received. Of these, 48 were from UK institutions; about half from the Russell Group of universities. There were seven UK institutions for which more than one person responded, thus a total of around 40 UK institutions are represented in the results. It was considered that multiple responses from one institution were valid because (a) institutions often have staff working in multiple services performing bibliometrics tasks, and (b) differences of view are of legitimate interest. Of the non-UK respondents, there were 13 from the Americas, 11 from Europe, five from Australia and a number of others, including some who did not declare their national base.

Most respondents (55) were based in the library. However, 20 were in research administration, four in planning and 12 in other places including academic departments. A few people were based in more than one unit. The low number of respondents partly reflects the low development of bibliometric services across HE. With a large number of items, the questionnaire was time consuming to complete, but there were few partially completed surveys. The low response was therefore due more to people not starting the survey than to their giving up part way through.

The full list of tasks and the figures for all responses can be accessed from the ORDA repository (DOI: 10.15131/shef.data.5271697).

Phase 3 built on the task list to articulate required competencies and develop an effective structure within which to present them. Iterations of this were developed by the project team, with input from the LIS-Bibliometrics committee.

Results of the survey

Task groupings

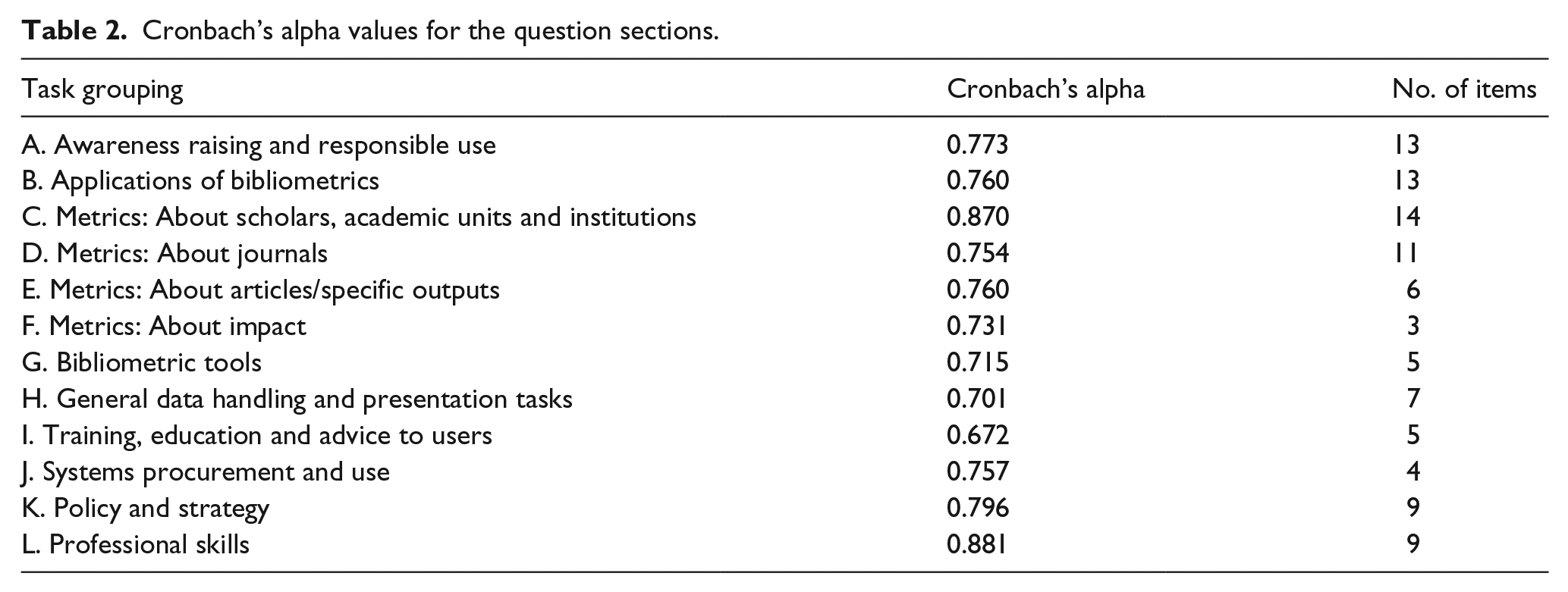

Cronbach’s alpha is a test of internal consistency that determines the degree to which answers are consistent in a multi-item scale. It can be used to assess how consistently people respond to a particular set of questions. A value of 0.7 or above is conventionally taken as acceptable (Tabachnick and Fidell, 2007). Table 2 reports the results for the questions in each section of the questionnaire.
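As a minimal sketch of the calculation, assume that each respondent's ratings within a section have been coded numerically. The coding used for this test is not stated here, so the function name `cronbach_alpha` and the sample values below are hypothetical. For a section with k items, alpha is k/(k-1) multiplied by one minus the ratio of the summed item variances to the variance of respondents' total scores.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for one questionnaire section.

    rows: one list of numeric item scores per respondent (complete cases only).
    """
    if len(rows) < 2 or len(rows[0]) < 2:
        raise ValueError("need at least two respondents and two items")
    k = len(rows[0])  # number of items in the section
    # Sample variance of each item across respondents.
    item_vars = [variance([row[i] for row in rows]) for i in range(k)]
    # Sample variance of each respondent's total score.
    total_var = variance([sum(row) for row in rows])
    if total_var == 0:
        raise ValueError("total scores do not vary; alpha is undefined")
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical coded ratings for a three-item section (not survey data).
example_rows = [[3, 3, 2], [2, 2, 2], [1, 2, 1], [3, 2, 3]]
alpha = cronbach_alpha(example_rows)
meets_threshold = alpha >= 0.7  # conventional threshold cited in the text
```

The sketch uses sample variances throughout; a statistics package may differ in how it handles missing answers, which are simply assumed absent here.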

Table 2. Cronbach’s alpha values for the question sections.

The results support the idea that the grouping of tasks in the questionnaire made sense to respondents, although more weakly for Sections I and H. The result for Section I might relate to the inclusion of consultancy alongside different types of training. The designers saw consultancy as at the far end of a spectrum of types of support to users, but it is possible respondents saw consultancy as different in kind.

Section H included specialist tasks such as manipulating data, programming, running statistical tests. However, it also included more obvious practices (that everyone would think of as entry level or core) such as presenting data effectively.

Entry level tasks

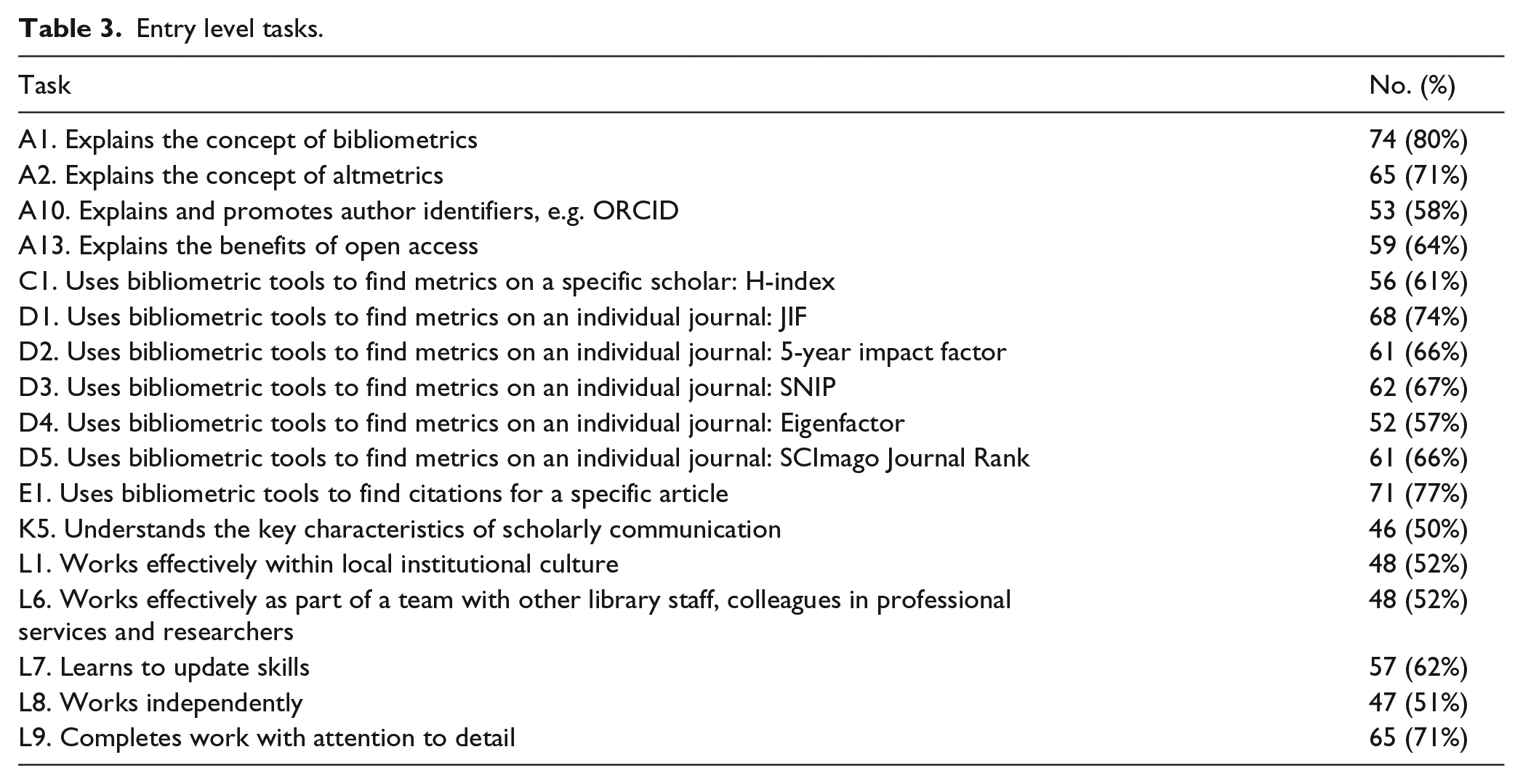

Table 3 presents items that 50% or more of all respondents identified as entry level tasks.

Table 3. Entry level tasks.

Of the 99 items offered in the questionnaire, 17 were considered to be entry level. Four from section A reflected the need to explain basic concepts such as bibliometrics itself and altmetrics. An ability to use a bibliometric tool to calculate some basic metrics was also seen as important (C1, D1-D5, E1). Interestingly, most of these were journal metrics, implying less focus on evaluating scholars/institutions or individual works, more on identifying places to publish for impact. This is doubly interesting because the use of such metrics is quite controversial. However, they may have been identified as entry-level skills as their use is still commonplace in the sector. Five items were drawn from the listing of professional skills, namely, the need to work effectively with other colleagues as well as independently, and at a high level of attention to detail. Such skills may form the basis of many library and data analysis jobs, but are certainly important to bibliometric roles. The emphasis on the need to keep skills up-to-date reflects the fast-moving nature of this area. Full understanding of these responses would require a comparison to respondents in other areas of library/professional work, since they could be simply generic requirements for entry level professionals.

It seems that the current expectation for a new professional is not that they have advanced skills, simply a basic understanding of key concepts sufficient to explain them to others, the ability to use basic bibliometric tools and the soft skills to operate effectively in the workplace.

Core tasks

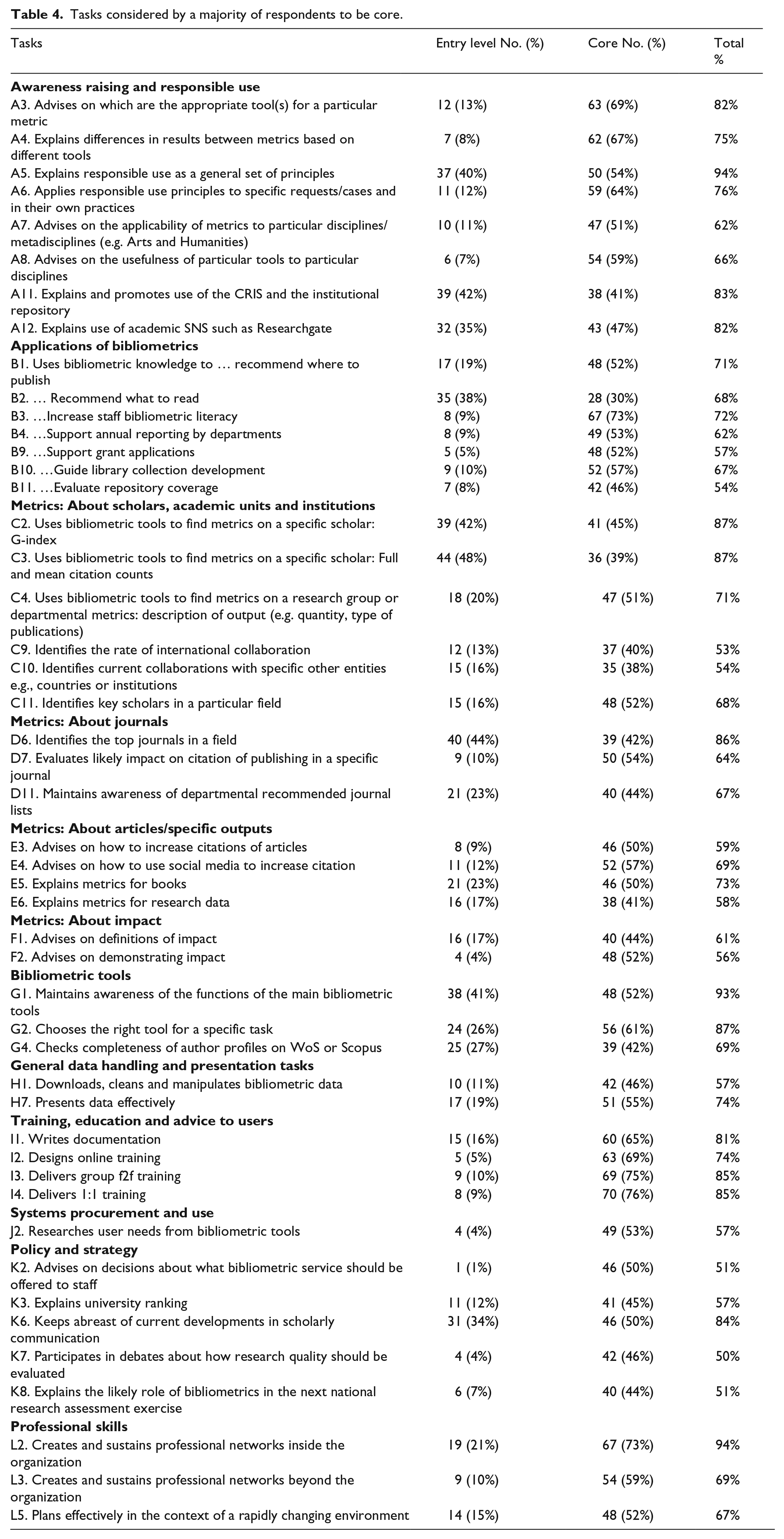

There were 32 tasks that more than 50% of respondents saw as Core. In addition there were another 16 items that scored above 50% when combining entry level and core – excluding those that were already in the list of items that were above 50% for entry level alone. They are all listed in Table 4.

Table 4. Tasks considered by a majority of respondents to be core.

A large part of Section A was seen as core. Advising on appropriate tools and explaining the differences between results from different tools were rated as core by many respondents. Interestingly, 94% of respondents rated explaining the principles of responsible use of bibliometrics as core. Raising academics’ ‘bibliometric literacy’ also received strong agreement, triangulated by a strong agreement that different aspects of training such as writing documentation and delivering training were core tasks. Under professional skills, networking inside the organization seemed to be important.
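The majority rules used to sort tasks into levels can be sketched as follows. This is an illustration under stated assumptions rather than the procedure actually used: the function name `classify_task` is hypothetical, the counts in the usage lines are invented, and the denominator is assumed to be all respondents, including those who rated the task out of scope.

```python
def classify_task(entry, core, specialist, out_of_scope):
    """Assign a task to a level from the number of respondents choosing each rating."""
    total = entry + core + specialist + out_of_scope
    if total == 0:
        raise ValueError("no ratings supplied")
    # Entry level: 50% or more of all respondents.
    if 2 * entry >= total:
        return "entry level"
    # Core: more than 50% of all respondents.
    if 2 * core > total:
        return "core"
    # Advanced/Specialist: more than 50% of all respondents.
    if 2 * specialist > total:
        return "specialist"
    # Core by combination: entry level plus core together exceed 50%.
    if 2 * (entry + core) > total:
        return "core (entry level and core combined)"
    return "no majority"


# Hypothetical counts for 92 respondents (not survey data).
levels = [
    classify_task(50, 30, 10, 2),
    classify_task(20, 60, 10, 2),
    classify_task(30, 25, 30, 7),
    classify_task(10, 20, 55, 7),
]
```

Integer comparisons are used instead of floating-point percentages so that a task sitting exactly on the 50% boundary is handled predictably.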

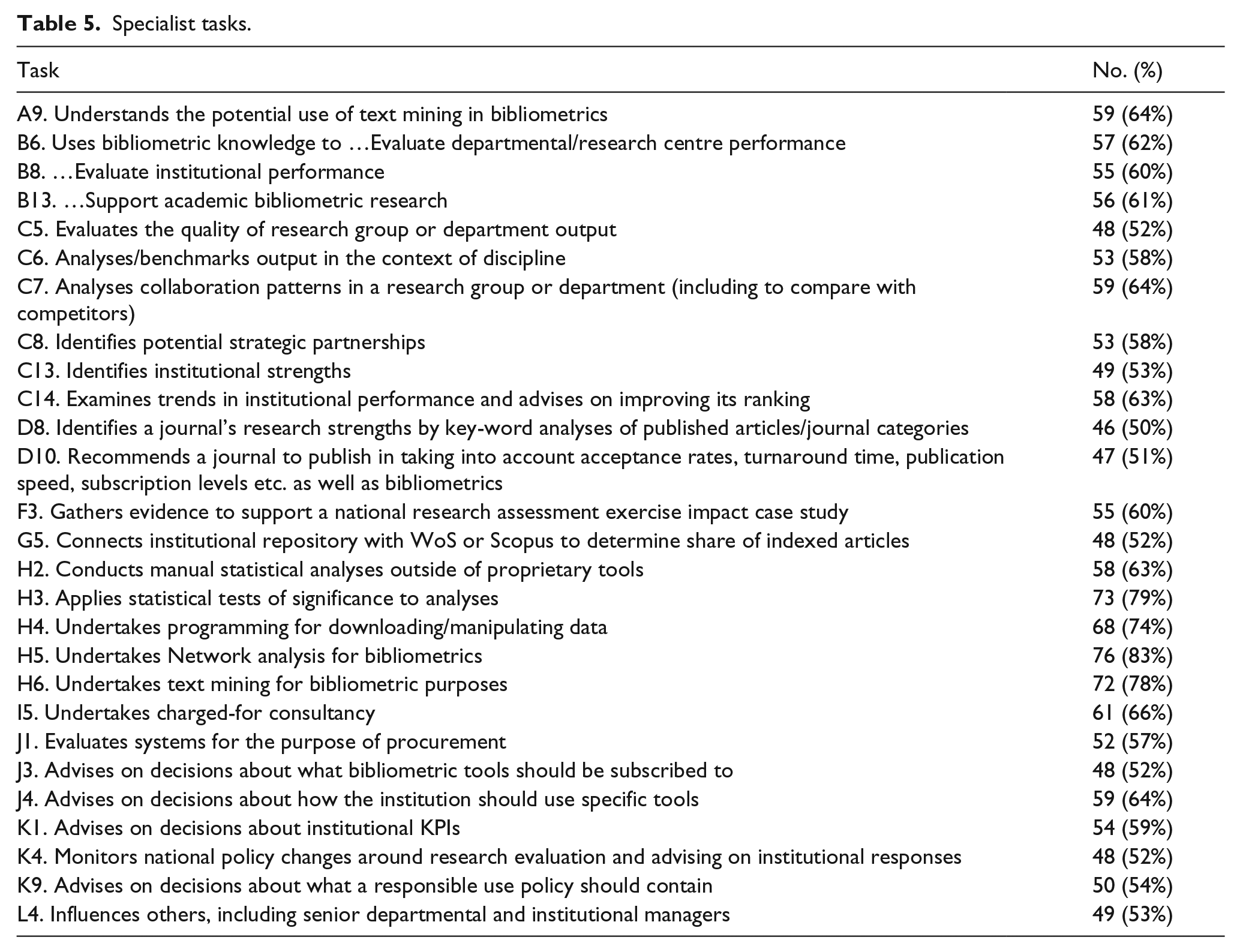

Specialist tasks

Table 5 lists tasks that more than 50% of participants rated as ‘Advanced/Specialist’.

Table 5. Specialist tasks.

Just over a quarter of all tasks – 27 – were seen as specialist or advanced. By analysing these responses, we can observe that aspects that are seen as more specialist include:

Activities that relate to the managerial use of bibliometrics to evaluate scholars (B6, B8, C5-C8, K1);

More technical activities, including working outside proprietary bibliometric tools (A9, H2-H6) as well as keeping suppliers up-to-date with data and also system evaluation and choice (J1, J3);

Consultancy based bibliometrics as opposed to training users (I5);

Work at the policy level, e.g. monitoring wider policy change (K4), advising senior managers on responsible use (K9) or influencing senior managers (L4), which did not seem to be core.

A considerable number of common bibliometric activities were considered ‘specialist’. It would be outside the usual work of librarians, for example, to be involved in the evaluation of academics’ work or in high-level policy making. Librarians might also be reluctant to engage in more technical roles or paid-for consultancy. The majority of respondents saw the use of suppliers’ bibliometric tools as a core activity, but working outside of those tools as more specialist.

Tasks rated as out of scope

No items were identified by more than 50% of all respondents as being out of scope of the role. However, a few items were seen as out of scope by over 20% of participants, namely:

B5. Uses bibliometric knowledge to … Promote/employ staff – 30 responses (33%)

B7. …Allocate funding to departments – 43 responses (47%)

C5. Evaluates the quality of research group or department output – 20 responses (22%)

D9. Recommends a journal to publish in purely through bibliometrics – 27 responses (29%)

E2. Evaluates quality of specific article – 33 responses (36%)

I5. Undertakes charged-for consultancy – 22 responses (24%)

The rating of evaluating the quality of a specific article (E2) was interesting because roughly the same numbers rated it as core as rated it out of scope. It could be argued that rather than merely out of scope some of these items were considered something that should not, or could not, be done (which was not an option available for respondents). For example, many would argue that recommending a journal to publish in purely through bibliometrics (D9) is bad practice.

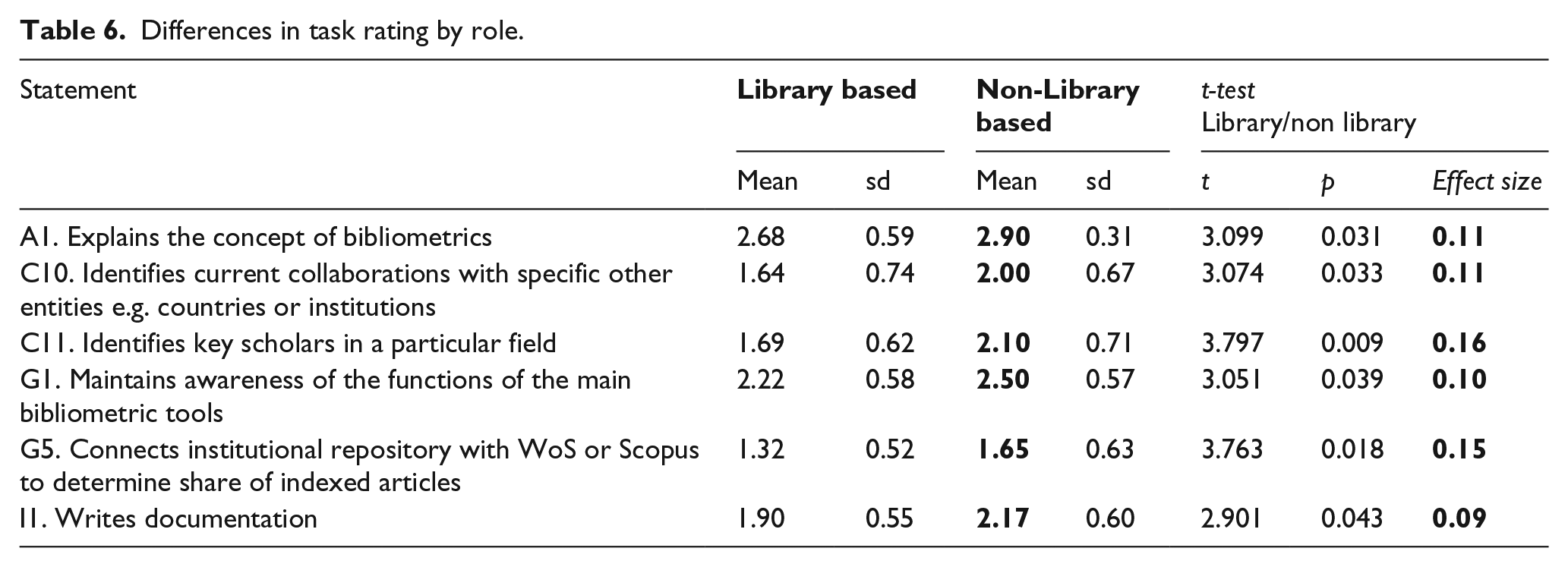

Differences in response between librarians and ‘others’

One objective of the study was to compare the views of librarians and others undertaking bibliometrics. Unfortunately, the numbers of non-librarians responding limited our ability to undertake this analysis in any depth. However, a comparison of library-based respondents (55 individuals) with those who said they were based elsewhere (excluding those based partly in the library) (34 individuals) revealed some statistically significant differences. These are listed in Table 6. Means were calculated by treating 1=Advanced/Specialist; 2=Core; 3=Entry level. Thus, a higher score arises where the task is seen as more of an entry level or core activity than a specialist one. Higher scores are highlighted in bold.

Table 6. Differences in task rating by role.

For all these tasks it was less likely that librarian respondents would see them as a usual practice. It is hard to fully explain these results. It does seem reasonable that librarians might have less need to identify collaborators (C10) and key scholars in a field (C11), but there are many other uses of bibliometrics listed in Section B with which librarians may not be expected to engage and for which there was no statistically significant finding. It is a little surprising that librarians would see explaining bibliometrics as a less core part of their role than others. Although there seems to be ample theoretical reason to expect marked differences in how different groups involved in bibliometrics might view the task (Petersohn, 2016), this was not really confirmed by the data, at least when looking for statistically significant differences.
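The scoring behind the group means can be illustrated with a short sketch. The coding (1=Advanced/Specialist, 2=Core, 3=Entry level) is taken from the text; how ‘Out of scope’ ratings were handled is not stated, so excluding them is an assumption made here. The function name `mean_rating` and the sample ratings are hypothetical, and no significance test is shown because the test used is not named.

```python
from statistics import mean

# Coding described in the text; a higher mean indicates a task seen as
# closer to entry level or core than to specialist.
RATING_SCORES = {"Advanced/Specialist": 1, "Core": 2, "Entry level": 3}


def mean_rating(ratings):
    """Mean coded score for one task within one group of respondents."""
    # Assumption: ratings outside the three coded levels (e.g. "Out of scope")
    # are left out of the mean.
    scores = [RATING_SCORES[r] for r in ratings if r in RATING_SCORES]
    if not scores:
        raise ValueError("no ratings on the three coded levels")
    return mean(scores)


# Hypothetical ratings of a single task by two groups (not survey data).
library_ratings = ["Advanced/Specialist", "Core", "Core", "Out of scope"]
other_ratings = ["Core", "Entry level", "Entry level", "Core"]
library_mean = mean_rating(library_ratings)
other_mean = mean_rating(other_ratings)
others_rate_as_more_basic = other_mean > library_mean
```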

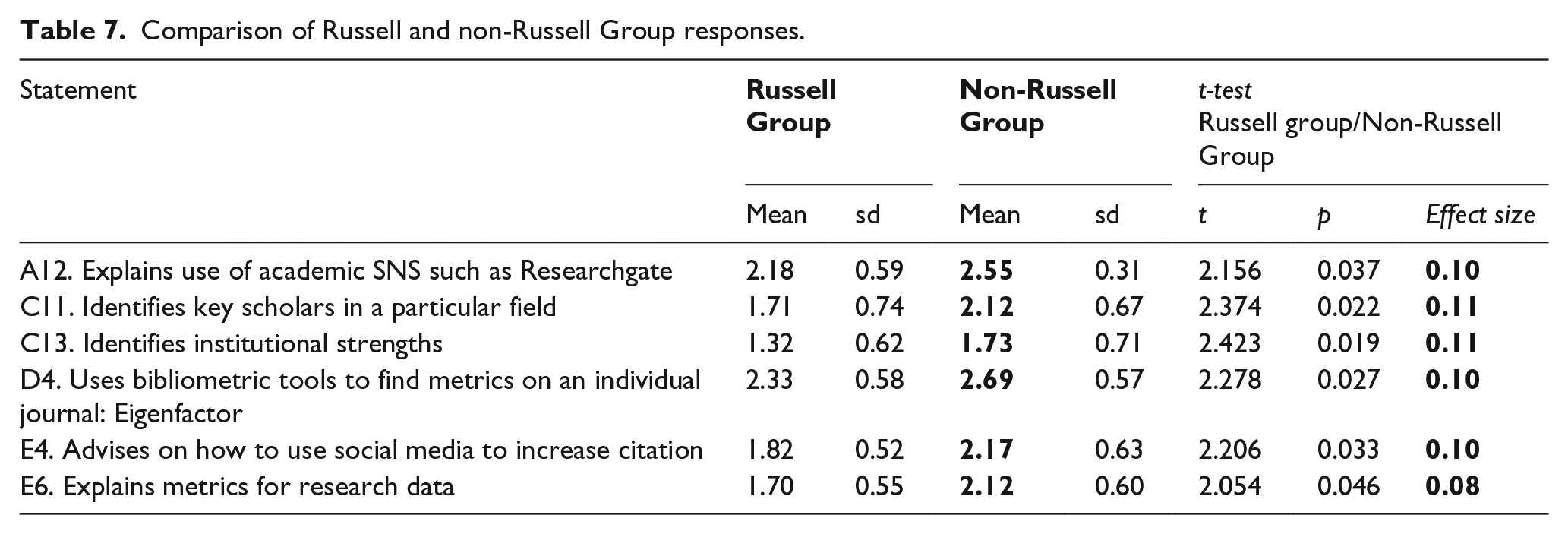

Russell Group and non-Russell Group comparison

Research-intensive institutions might be expected to use bibliometrics a little differently from non-research-intensive universities. For example, research-intensive institutions, with their institutionally powerful bodies of researchers, might be more able to resist the imposition of metrics for evaluation, whereas non-research-intensive institutions might take a more managerial approach. A small number of significant differences were found between Russell Group (research-intensive) and non-Russell Group based UK respondents. Table 7 sets these out.

Table 7. Comparison of Russell and non-Russell Group responses.

These comparisons suggest non-Russell Group universities are slightly more likely to focus on academic SNS (social networking sites) and advise on social media use. Yet the data does not suggest a very marked difference of use between the two sets of institutions.

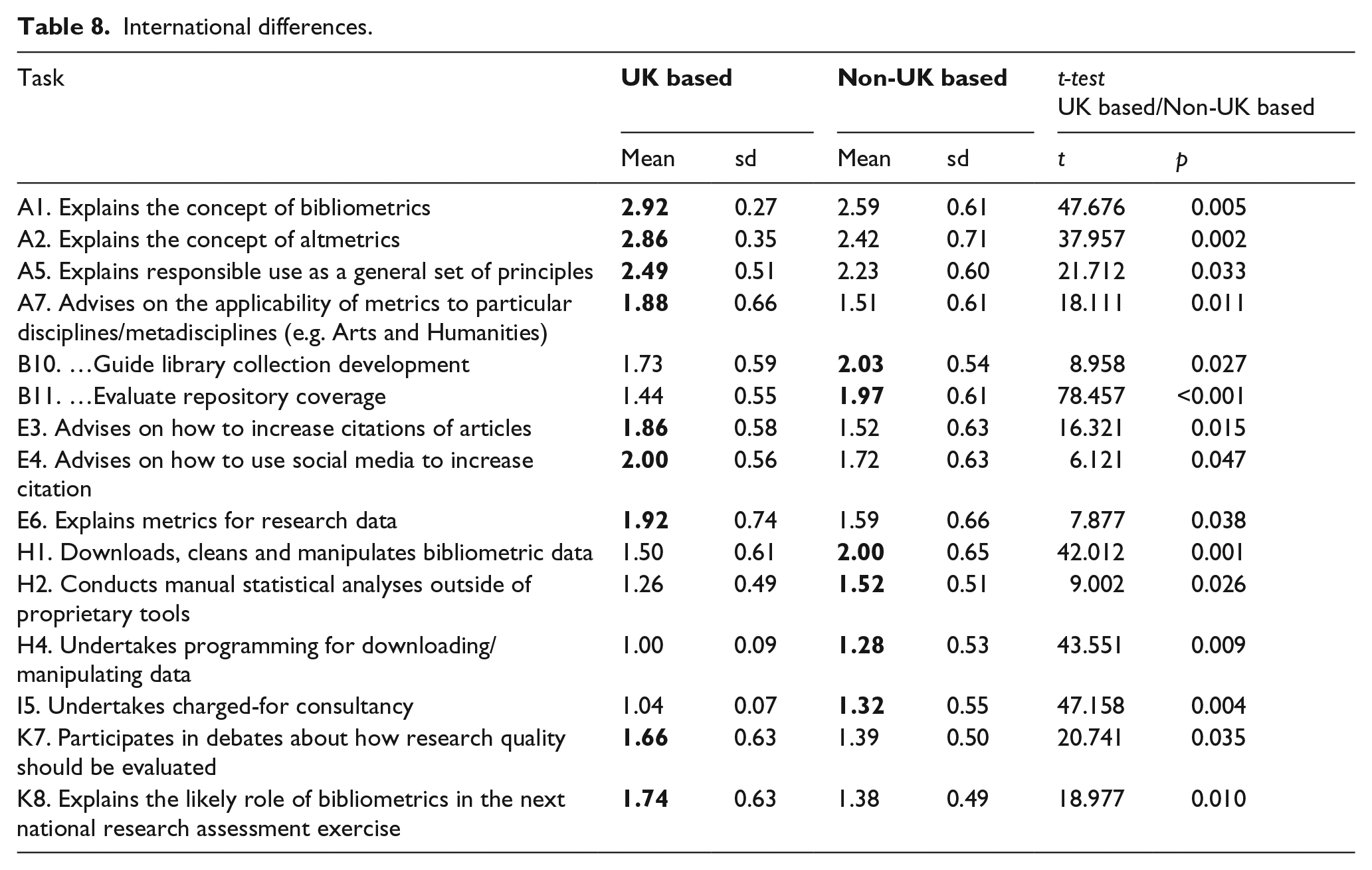

International differences in response

To understand whether there was a difference in bibliometric practices between UK and non-UK respondents, responses from the 48 UK respondents were compared with all others (44). Table 8 identifies the 15 tasks (about 15% of all the items) for which there was a significant difference between UK and non-UK answers.

Table 8. International differences.

The results suggest that UK bibliometrics practitioners see it as more central to their role to explain basic concepts, such as bibliometrics or altmetrics, and responsible use. They also see it as more central to advise on increasing citation in different ways (E3, E4, E6). This could be interpreted as reflecting the impact of the UK’s national research evaluation process, since such national research assessment exercises do not exist in every country. They also see it as more core to explain how metrics for research data might operate and to participate in debates about research quality. In contrast, UK-based respondents were less likely to see it as core to use bibliometrics to evaluate the library collections and to map repository coverage. They are also less likely to rate more technical tasks, such as downloading or manipulating data, as part of the role. It seems they are also less likely to do charged-for consultancy. The results do suggest that bibliometrics in the UK has developed in a slightly different direction from other countries.

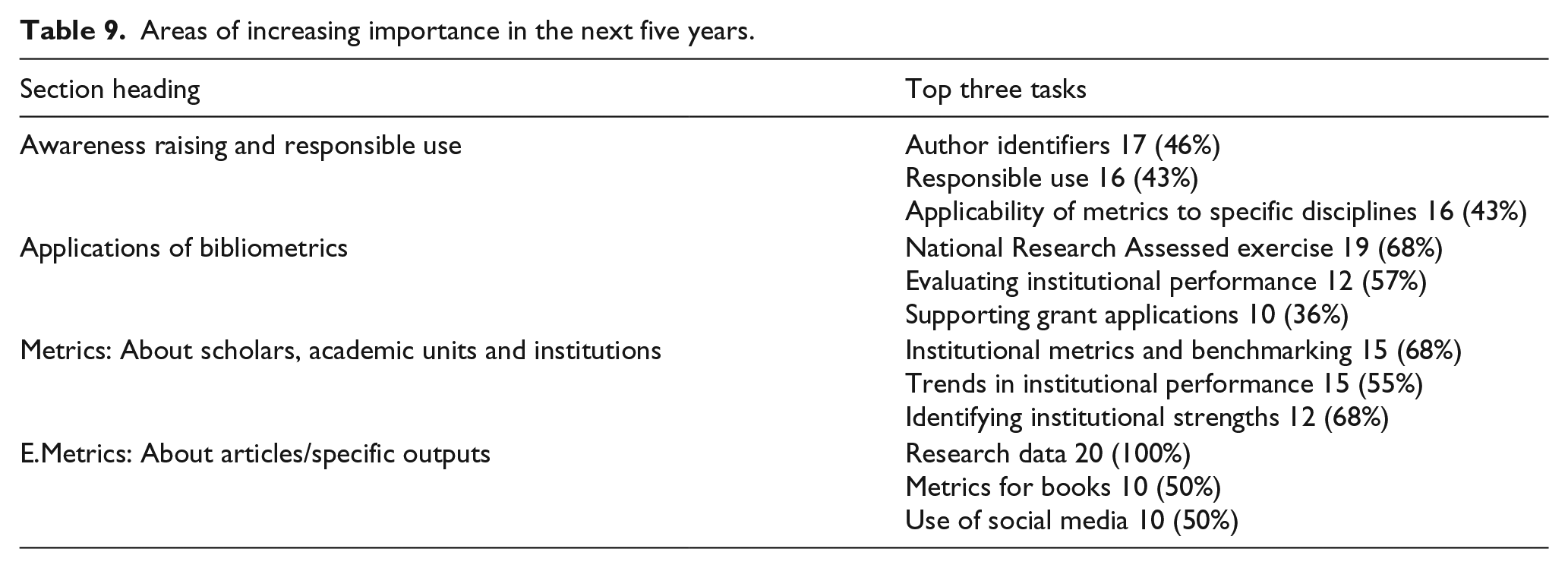

Growth areas

At the end of each of the 12 sections there was an open text box to allow respondents to ‘identify any items in this section you think will be of increased importance in the next 5 years’. This question sought to gather views of the direction of bibliometric practices. It was an optional question, but respondents could select as many items as they wanted. Most respondents did not give a reply, so percentages are calculated against the total number giving any reply. Predictably the number responding fell in later sections, so figures are only given for the earlier items where a reasonable number of people did give a response. Table 9 lists the top three tasks, as identified by participants who did respond, for the four sections where 20 or more people responded in total.
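The reporting rule described above (percentages calculated against those who gave any reply, sections reported only where 20 or more people responded, top three items listed) can be sketched as follows. This is an illustrative sketch under stated assumptions: the function name `top_growth_items`, the item codes and the sample replies are all hypothetical, and ties are broken alphabetically purely to keep the output deterministic.

```python
from collections import Counter

MIN_RESPONDENTS = 20  # threshold stated in the text
TOP_N = 3             # number of items listed per section


def top_growth_items(replies, min_respondents=MIN_RESPONDENTS, top_n=TOP_N):
    """Summarise one section's replies (hypothetical helper).

    `replies` holds one list of item codes per respondent. Respondents who
    left the box blank are represented by an empty list and are excluded
    from the denominator.
    """
    answered = [set(r) for r in replies if r]
    if len(answered) < min_respondents:
        return None  # too few replies to report for this section
    counts = Counter(item for r in answered for item in r)
    # Sort by count (descending), then by item code to break ties.
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(item, n, round(100 * n / len(answered))) for item, n in ranked[:top_n]]


# Hypothetical section: 20 respondents replied, 5 left the box blank.
replies = [["A1", "A2"]] * 12 + [["A1"]] * 5 + [["A3", "A2"]] * 3 + [[]] * 5
print(top_growth_items(replies))
# [('A1', 17, 85), ('A2', 15, 75), ('A3', 3, 15)]
```

Note that the denominator here is 20, not 25, which is why percentages for sparsely answered sections can look high.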

Table 9. Areas of increasing importance in the next five years.

Interestingly the use of author identifiers was the most important trend selected in Section A. It was followed by responsible use and developing metrics specific to disciplines. Text mining was the fourth most important item; explaining open access was also selected by 14 participants.

One of the patterns that seemed to emerge from the data was a growing expectation that using bibliometrics to assess institutional performance would be of greater importance in the next few years. This could be simply linked to current consultations in the UK around the form of the next REF. This was apparent in the growth areas for metrics about scholars, but also in the response on applications of bibliometrics. Growing areas of application of bibliometrics were national research assessment (not surprising) but also supporting grant applications. As regards new metrics, everyone who replied (20 people) mentioned citation of research data as an important trend.

About bibliometric work

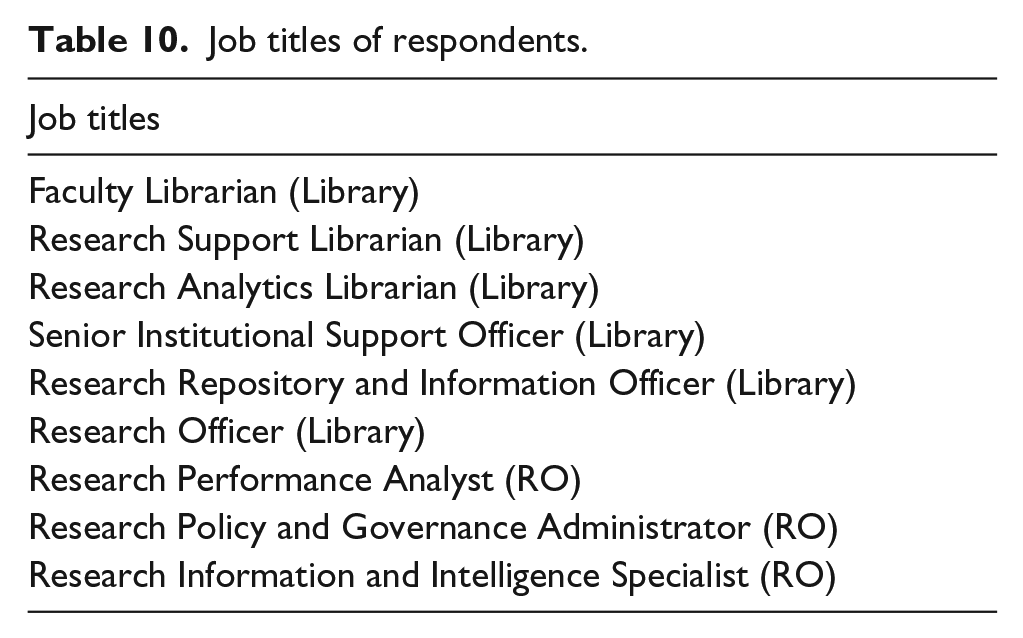

Job titles and locations

Predictably – since this is a pattern across professional roles across the sector – there was considerable variation in the job titles reported by respondents. Table 10 lists some of the job titles recorded. The lack of standardization in terminology and local institutional job title practices presumably explain this. The variation probably also reflects genuine differences in role, especially as bibliometric services vary in level and some individuals combine supporting bibliometrics with other tasks.

Table 10. Job titles of respondents.

Training in bibliometrics

Of the 55 respondents who gave an answer to the question: ‘If you have a Library/Information Studies qualification, did it cover bibliometrics?’ 37 (65%) said that their library qualification had not included bibliometrics. Only 16 (29%) said it had; three could not remember. Thus it seems that library training is often not the basis for professional practice. The next question was: ‘Apart from on an LIS course, have you received training in bibliometrics? If so please give brief details’. Answers included courses run by commercial companies such as Elsevier and by CWTS (at Leiden University), as well as individual seminars and webinars and reading the literature. A few were highly qualified with a Master’s or PhD in bibliometrics.

Discussion

The survey confirmed that the items in the list of 99 tasks developed from the workshops are all considered to be part of the bibliometric practice of respondents. None of the items were rated as out of scope by a majority of respondents. The categories within which items were organized in the survey also seemed to make sense to participants: both the task categories and the notion of entry level and core categories. It does not follow that the list is comprehensive; indeed, an important point raised by participants in a dissemination event was that the ethical aspects of bibliometrics extend beyond responsible use: all aspects of the conduct of the practice should be ethical.

The data identified a rather narrow entry level set of competencies (17/99 tasks). These were about explaining basic concepts, calculating key metrics (especially journal metrics), and some aspects of professional behaviour. Forty-eight tasks were identified as core, meaning that 65/99 items were rated as either core or entry level, together representing the main part of the role. Such tasks included providing basic explanations about relevant concepts and applying responsible use principles. Increasing staff bibliometric literacy and different forms of training were also commonly rated as core, which may be an effect of the large proportion of library-based respondents. The data suggests that a considerable proportion (27/99) of the bibliometric tasks were seen as specialist/advanced, as opposed to core. Specialist tasks included more managerial elements of evaluating scholars and more technical activities, such as working outside bibliometric tools, as well as influencing senior managers.

Reflecting on the difference between what was seen as core, and what specialist, the picture is largely consistent with Petersohn (2016), whilst providing a lot more detail. Respondents mainly saw bibliometrics as about empowering academics through information and training. There is an emphasis on responsible use. They see evaluation of academics’ and institutional performance as a more specialist role (though not out of scope). Influencing senior managers and policy is also specialist. Their skills are in using proprietary tools, rather than advanced manipulation of data or calculations outside of them. While this picture is consistent with Petersohn (2016) in terms of how the role is defined, her explanation that this arises from the character of librarians’ professional knowledge base does not seem to be supported. Comparing those who located themselves in the library only and those who did not report themselves to be based in the library, even partly, there were only a small number of statistically significant differences in perception. These do not suggest a fundamental difference of view about what bibliometrics is. An Abbottonian analysis as developed by Petersohn (2016) would expect there to be a greater difference, reflecting competing professions’ attempts to define the practice in ways consistent with their own knowledge base. The lack of such a pattern may be due to the small dataset. It could also possibly reflect the current dominance of librarians in interpreting what bibliometrics means. Librarianship is a well-organized profession that works collaboratively across the sector to define its role. Research administration is a newer, less formally defined group (Green and Langley, 2009; Langley, 2012; Shelley, 2010). Nevertheless, the differences are perhaps less than expected.

Similarly, we would expect differences between very different institutional contexts, such as between Russell Group and post-1992 universities in the UK, and between the UK and other countries. The data did point to a small number of statistically significant differences; however, because the non-UK data came from a range of countries, including the USA, Australia and countries in Europe, with varying evaluation systems in use, these findings should be treated with caution. For example, not all countries employ national frameworks.

There was some interesting data on how people saw the practice of bibliometrics developing over the next five years. Areas of growth included author identifiers, responsible use, metrics for data, and the application of metrics for institutional benchmarking and to support funding applications. Reporting the results at professional workshops for LIS-bibliometrics and the UKSG conference produced some informal feedback that strongly supported the growing emphasis on responsible use. These discussions also suggested a widening range of bibliometrics uses. It followed that keeping up to date is a professional priority.

Finally, the evidence suggested that the majority of staff currently working in bibliometrics did not receive any formal training during their LIS qualification. People used a wide range of sources to develop their knowledge and keep themselves up-to-date.

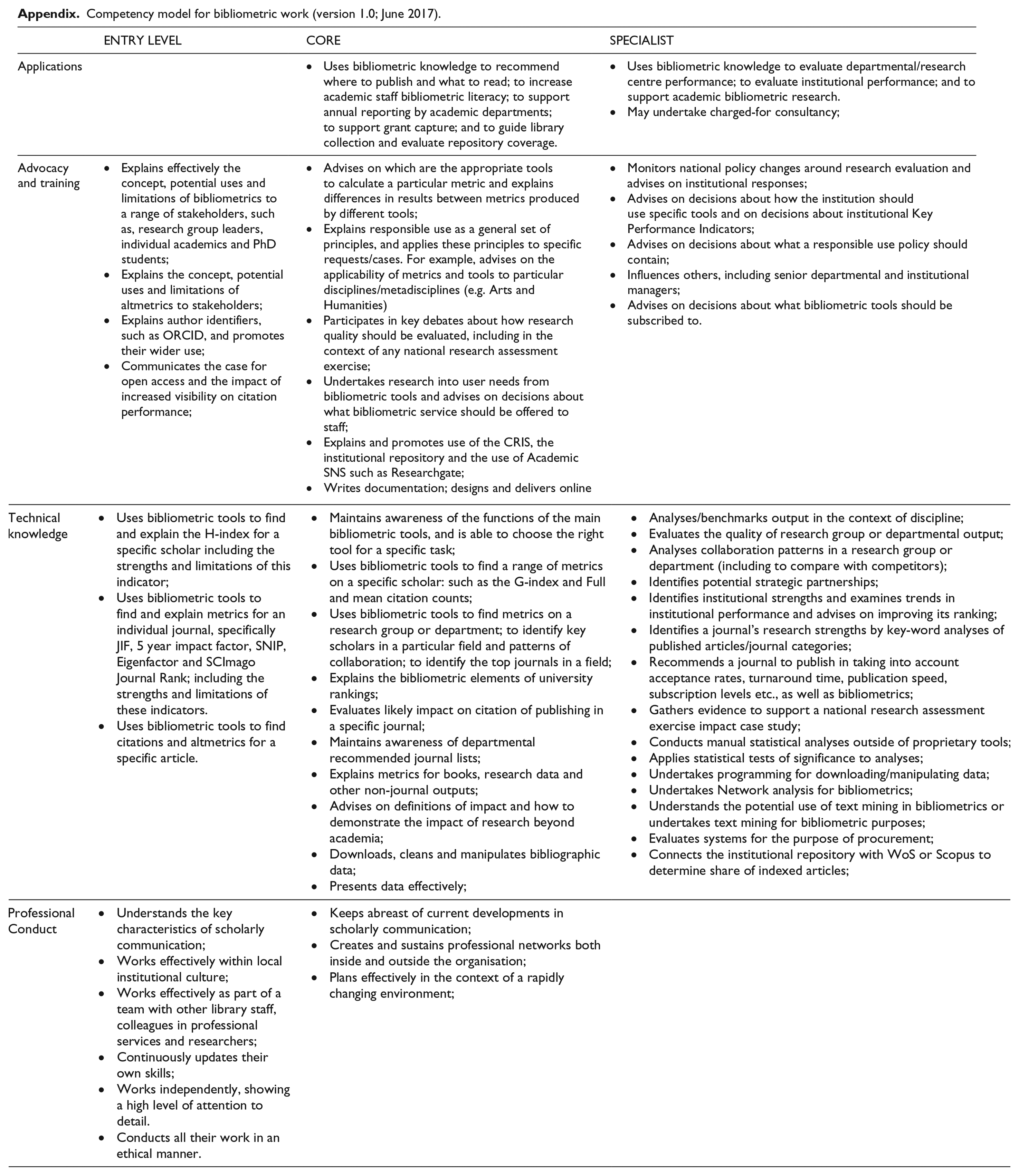

In phase 3 of the project, a competency model was developed on the basis of the questionnaire results (see Appendix). The entry level competencies chosen were those which over 50% of all participants rated as entry level. Core competencies were all those that scored over 50% for the sum of entry and core level ratings, removing any that were included in the entry level listing (48 original items). Specialist tasks listed are those rated as specialist by over 50% of all respondents. In line with practices of competency modelling, the listing was simplified by merging closely related items and organized under four headings. This involves an element of interpretation. What the representation does suggest is that entry level tasks are centred around advocacy, basic technical tasks and professionalism. Core tasks include training and more technical tasks. Specialist competencies are technical and strategic.
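The 50% thresholds described above can be expressed as a simple decision rule. The sketch below is one reading of that rule, not the authors' own procedure: the function name `classify_task` and the example percentages are hypothetical, and the later step of merging closely related items is not captured.

```python
def classify_task(pct_entry, pct_core, pct_specialist):
    """Assign a task to a level from the percentage of respondents
    choosing each rating (hypothetical helper)."""
    if pct_entry > 50:
        return "entry"
    # Entry level tasks have already been returned above, so they are
    # excluded from the core listing.
    if pct_entry + pct_core > 50:
        return "core"
    if pct_specialist > 50:
        return "specialist"
    return None  # no level reaches the threshold


# Hypothetical percentages (entry, core, specialist); any remainder would
# correspond to respondents who rated the task as out of scope.
print(classify_task(60, 30, 10))  # entry
print(classify_task(30, 40, 30))  # core
print(classify_task(10, 30, 60))  # specialist
print(classify_task(20, 30, 45))  # None
```

Checking the entry level condition first is what implements the removal of entry level items from the core list.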

Conclusion

Bibliometrics, especially citation analysis, and altmetrics have an increasingly significant place in the governance of research at international, national and institutional levels. Governments have a growing interest in seeking to measure the return on public investment in research and the performance of institutions. It is a particular concern in the UK with the evolving definition of the national research assessment exercise, the REF. However, as the Leiden Manifesto eloquently points out, the use of bibliometrics in research assessment is fraught with challenges. Many specific measures seem to be significantly flawed, but remain widely used. As a result, the responsible, professional use of research metrics is important for the health of research and wellbeing of researchers.

In this context, support of bibliometrics and altmetrics has become an important area of new work for librarians and other professionals. Yet published research on their role in research metrics has huge gaps. This is the first study to examine systematically the competencies necessary to undertake bibliometric work. The study took a rigorous approach to analysing data from practitioners to produce the first listing of bibliometric competencies which differentiated entry level, core and specialist tasks. It also identified beliefs about likely growth areas. This is a significant contribution to the understanding of professional roles in supporting bibliometrics. The listing of competencies can inform institutions in recruiting and training staff, and professionals in planning their own self-development. It can also help organizations involved in the training of staff to develop appropriate curricula, particularly in the context of competency-based education (ACRL, 2017).

Although it is clear that it is not just librarians who are undertaking bibliometric work, the study also sheds further light on the nature and direction of development of librarianship as a profession. It reinforces our understanding of librarianship as a service profession that focuses on empowering users through increased training, rather than building technical expertise or offering consultancy-type expert services. Eschewing a more evaluative role in academic performance, librarians (and all doing bibliometrics) emphasize empowering users through information and training. This may also be seen to somewhat preclude alignment with the more ambitious hopes of Herther (2009) that librarians play a strong strategic role in developing new, more reliable metrics and better tools. Yet in the light of the question marks over the validity of many bibliometric measures and the broader sense of a growing audit culture in HE, this is a judicious, even compassionate posture.

In a fast-moving field, there is a need to keep the competency model up-to-date. For this reason, the list has been shared with the community under a CC-BY-NC licence, and can be downloaded from the Bibliomagician blog (https://thebibliomagician.wordpress.com/). The current study is only a temporally limited snapshot of views. Earlier research (Corrall et al., 2013) suggested that the UK was a little out of line with other comparable countries in its bibliometric practices. Therefore, since most of the questionnaire responses were from the UK, further research would be useful to explore international differences in how the professional support of bibliometrics is organized. Work linking bibliometric competencies to those developing in other dynamic areas of library practice would help us understand how the profession is developing as a whole, and how the various LIS curricula need to respond. Given the growth of metric work in librarianship, whether library analytics (Showers, 2015), library (data) carpentry (Baker et al., 2016) or bibliometrics, it may be that more quantitative data handling and statistical skills need to be made core to professional knowledge. This would have significant implications for curricula in LIS schools. There is also an opportunity to develop an understanding of how these competencies might be rated differently among research administrators or for publishers and intermediaries, who are themselves also users of bibliometrics.

Appendix

Competency model for bibliometric work (version 1.0; June 2017).

| | ENTRY LEVEL | CORE | SPECIALIST |
|---|---|---|---|
| Applications | | • Uses bibliometric knowledge to recommend where to publish and what to read; to increase academic staff bibliometric literacy; to support annual reporting by academic departments; to support grant capture; and to guide library collection and evaluate repository coverage. | • Uses bibliometric knowledge to evaluate departmental/research centre performance; to evaluate institutional performance; and to support academic bibliometric research. |
| Advocacy and training | • Explains effectively the concept, potential uses and limitations of bibliometrics to a range of stakeholders, such as, research group leaders, individual academics and PhD students; | • Advises on which are the appropriate tools to calculate a particular metric and explains differences in results between metrics produced by different tools; | • Monitors national policy changes around research evaluation and advises on institutional responses; |
| Technical knowledge | • Uses bibliometric tools to find and explain the H-index for a specific scholar including the strengths and limitations of this indicator; | • Maintains awareness of the functions of the main bibliometric tools, and is able to choose the right tool for a specific task; | • Analyses/benchmarks output in the context of discipline; |
| Professional Conduct | • Understands the key characteristics of scholarly communication; | • Keeps abreast of current developments in scholarly communication; | |

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.