Abstract

Understanding variation in cognitive abilities is critical to understanding both the evolution and development of cognition. In this study, we examined the stability, structure, and predictability of individual differences in cognitive abilities in great apes across a broad range of domains, including social cognition, reasoning about quantities, executive function, and inferential reasoning. We administered six tasks to 48 individuals from four species, spanning 10 sessions over 1.5 years. Task performance was most strongly predicted by stable, individual-specific characteristics rather than transient or group-level variables. Using additional data from the same individuals in other tasks, we found substantial positive correlations between nonsocial tasks. In contrast, tasks measuring social cognition were not correlated either with each other or with nonsocial measures. Future studies should work toward mechanistic models of great apes’ cognitive processes to build an understanding of the evolution of cognition based on process-level commonalities across species.

Variation fuels evolution. Individual differences in cognitive abilities lead to differences in behavior that ultimately influence the fitness of the individual on which selection pressures act (Thornton & Lukas, 2012; Völter et al., 2018). Investigating these differences can also shed light on the broader structure of the cognitive architecture by identifying relationships between different cognitive abilities. Moreover, such investigations help identify the socioecological factors that shape cognition during both ontogeny and phylogeny. Broadly speaking, great ape cognition is marked by substantial individual variability across functional domains, such as tool use, communication, social cognition, causal reasoning, and reasoning about quantities. Cognitive variability has been observed in both captive and wild settings (Berdugo et al., 2025; Bohn et al., 2023; Fröhlich et al., 2019; Herrmann et al., 2010; Watson et al., 2018).

Despite the prevalence and importance of individual differences in cognition, few studies have explored their broader structure in great apes. Most work has focused on finding something akin to general intelligence or a g factor (reviewed in Burkart et al., 2017; Deaner et al., 2006; Reader et al., 2011). Using the primate cognition test battery (PCTB; Herrmann et al., 2007), Herrmann et al. (2010) found no evidence for a single g factor in chimpanzees. Instead, they observed a bifactorial structure, with one factor linked to spatial tasks and the other to social and physical tasks. Similar findings have been reported for other primates (Fichtel et al., 2020; Schmitt et al., 2012). By contrast, Hopkins et al. (2014) used the PCTB to test a different sample of chimpanzees and identified a g factor that was later found to relate to measures of self-control (Beran & Hopkins, 2018). However, this study did not test whether the proposed structure (a single g factor) fit the data well. In a subsequent reanalysis, Kaufman et al. (2019) combined data sets collected with the PCTB and found the single g-factor model inadequate. Only multidimensional models accurately described the data. Beyond general cognitive abilities, Völter, Reindl, et al. (2022) investigated the structure of executive function in chimpanzees using a multitrait, multimethod approach. Their results showed limited evidence for the structure proposed for executive function in humans.

The existence of individual differences raises questions about their origins. Most theories about the factors influencing the emergence of complex cognitive abilities operate on a species level (Dunbar & Shultz, 2017; Henke-von der Malsburg et al., 2020; Rosati, 2017). Empirical studies in this tradition often compare closely related species with differing social structures or ecological pressures (Amici et al., 2008; Joly et al., 2017; Kaigaishi et al., 2019; Rosati & Santos, 2017). Alternatively, researchers aggregate data across studies to compare species on a larger scale (Deaner et al., 2006; Piantadosi & Kidd, 2016). The aggregation approach, however, faces challenges in comparability because data are often collected using inconsistent methods (Schubiger et al., 2020). An exception is ManyPrimates et al. (2022; see also ManyPrimates et al., 2019), which used standardized methods to collect a large data set on short-term memory and test species-level hypotheses. However, their results were surprising: No single socioecological predictor explained cognitive variation beyond phylogenetic relatedness.

In contrast, much less research has focused on the individual level (Sih et al., 2019). Early work focused on the effects of enculturation—raising great apes in a human environment. Most of these studies, however, involved only one individual, making it difficult to identify the relevant aspects of experience that led to the observed changes in cognition compared with nonenculturated great apes (for a recent summary, see Berio & Moore, 2023). Few studies with larger samples exist: Watson et al. (2018) found that human-reared chimpanzees are more likely to use social information; Bard et al. (2014) showed that human-reared chimpanzees excel at social cognition. Another line of research focused on personality traits (Altschul et al., 2017). For example, human-rated dominance and openness to experience correlated with problem-solving abilities (Hopper et al., 2014), and extraversion and agreeableness correlated with sensitivity to inequity (Brosnan et al., 2015; Carter et al., 2014). Yet personality is itself a latent psychological variable, and the experiences that shape differences in personality in great apes remain unclear.

To summarize, studies on individual differences in great apes are promising but rare. One reason for this shortage is the difficulty of precise individual-level measurement (Boogert et al., 2018; Matzel & Sauce, 2017). Cognitive abilities are, by definition, latent variables that cannot be directly observed but need to be inferred from behavioral tasks using psychometric models (Borsboom, 2006). To explore cognitive structures or link abilities to external variables, high-quality tasks yielding reliable measures are essential. Nevertheless, reliability is rarely assessed in primate cognition research (Griffin et al., 2015). For instance, the reliability of the widely used PCTB has yet to be systematically evaluated.

An exception is the work by Bohn et al. (2023), who combined several approaches to studying individual differences while simultaneously assessing measurement quality. Over 2 years, they tested individuals from four great ape species on a variety of cognitive tasks (for more details, see the Structure section below). They found that most—but not all—tasks reliably measured individual differences. Stable cognitive differences were linked to long-term differences in experiences. However, because of the small number of tasks, this study offered only limited insights into the structure of individual differences.

The current study builds on Bohn et al. (2023) by addressing two key gaps. First, we broadened the range of cognitive domains studied, including social cognition, reasoning about quantities, executive function, and inferential reasoning. Increasing the breadth of covered domains allowed us to test whether their findings—stable cognitive traits predicted by stable individual-level characteristics—replicate and generalize to others. Second, by pooling data from both studies, we explored the correlations between cognitive traits within and across domains, providing a deeper analysis of the structure of great ape cognition.

Research Transparency Statement

General disclosures

Study disclosures

Method

Participants

A total of 48 great apes participated in at least one task at one or more time points (for a detailed breakdown, see Supplementary Fig. 1 in the Supplemental Material available online). The sample included 12 bonobos (Pan paniscus; four females; age range = 3.60–40.70 years), 24 chimpanzees (Pan troglodytes; 17 females; age range = 3.80–57.80 years), six gorillas (Gorilla gorilla; four females; age range = 4.40–24.40 years), and six orangutans (Pongo abelii; five females; age range = 4.70–43.10 years). The total sample size at the different time points ranged from 34 to 45 (for details, see the Supplemental Material). All apes participated in cognitive research on a regular basis. Apes were housed in groups, with one group per species and two chimpanzee groups (Groups A and B). The research was noninvasive and strictly adhered to legal requirements. Animal husbandry and research complied with the European Association of Zoos and Aquaria Minimum Standards for the Accommodation and Care of Animals in Zoos and Aquaria as well as the World Association of Zoos and Aquariums Ethical Guidelines for the Conduct of Research on Animals by Zoos and Aquariums. Participation was voluntary, all food was given in addition to the daily diet, and water was available ad libitum throughout the study.

Procedure

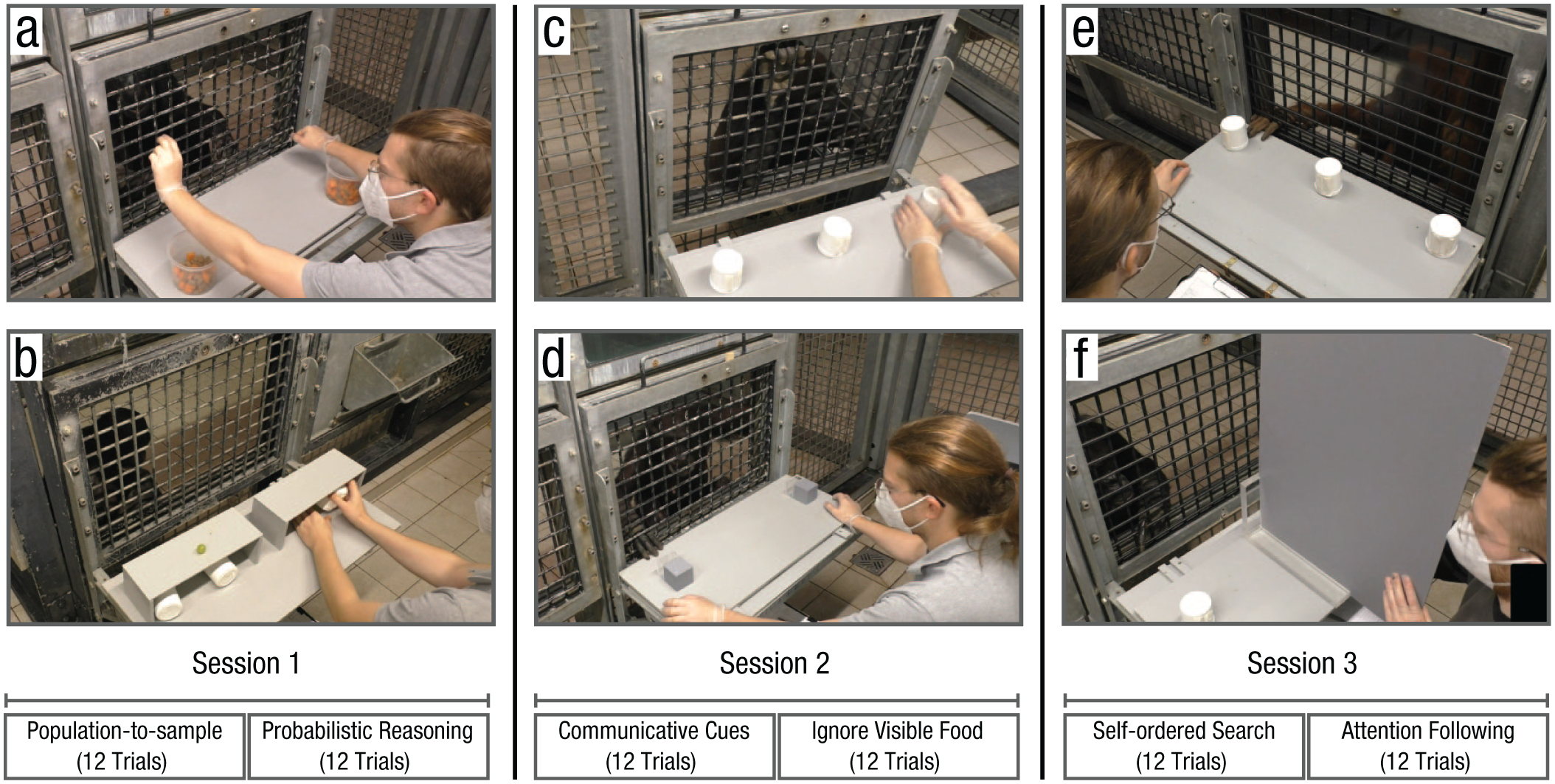

Apes were tested in familiar sleeping or observation rooms by a single experimenter. The basic setup comprised a sliding table positioned in front of a mesh or a clear Plexiglas panel. The experimenter sat on a small stool and used an occluder to cover the table (Fig. 1).

Fig. 1. Setup used for the six tasks: (a) population-to-sample, (b) probabilistic-reasoning, (c) communicative-cues, (d) ignore-visible-food, (e) self-ordered-search, and (f) attention-following. Text at the bottom shows the order of task presentation and trial numbers.

The study involved six cognitive tasks based on published procedures in comparative psychology. The original publications often included control conditions to rule out alternative, cognitively less demanding ways to solve the tasks. We did not include such controls here and ran only the experimental conditions. For each task, we refer readers to the original articles for the control conditions and a detailed discussion of the presumed underlying cognitive mechanisms. Example videos for each task can be found in the associated online repository (https://doi.org/10.5281/zenodo.18384250). A second coder, unaware of the purpose of the study, coded 20% of all time points for all tasks. Interrater reliability was excellent. Agreement was highest for the population-to-sample task (κ = .99) and lowest for the ignore-visible-food task (κ = .97). Additional details can be found in the Supplemental Material.
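The κ values above are Cohen's kappa coefficients, which correct raw agreement between two coders for the agreement expected by chance. The following is a minimal sketch of that computation; the function name and the toy codes are illustrative assumptions, not the authors' analysis code.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two equally long sequences of categorical codes."""
    if len(coder_a) != len(coder_b) or len(coder_a) == 0:
        raise ValueError("need two non-empty sequences of equal length")
    n = len(coder_a)
    # Observed agreement: share of trials on which both coders gave the same code.
    p_observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of the coders' marginal proportions, summed over codes.
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    p_chance = sum(counts_a[code] * counts_b[code] for code in counts_a) / (n * n)
    if p_chance == 1.0:
        return 1.0  # both coders used one identical code throughout
    return (p_observed - p_chance) / (1 - p_chance)


# Hypothetical codes for 12 trials (1 = correct choice, 0 = incorrect choice).
first_coder = [1, 1, 0, 0, 1, 0, 1, 1, 0, 0, 1, 0]
second_coder = [1, 1, 0, 0, 1, 0, 1, 1, 0, 0, 1, 1]
kappa = cohens_kappa(first_coder, second_coder)
```

In this toy example the coders disagree on one of 12 trials, so raw agreement is 11/12 while kappa is lower because half of the agreement would be expected by chance.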

Attention following

The attention-following task was loosely modeled after Kaminski et al. (2004). The setup consisted of two identical cups placed on the sliding table and a large opaque screen that was longer than the width of the sliding table (Fig. 1f). The experimenter placed both cups on the table and showed the ape that they were empty. The experimenter then baited both cups in view of the ape and placed the opaque screen in the center between the two cups, perpendicular to the mesh. Next, the experimenter moved to one side and looked at the cup in front of them. The experimenter then pushed the sliding table forward, and the ape was allowed to choose one of the cups by pointing at it. If the ape chose the cup that the experimenter was looking at, they received the food item. If they chose the other cup, they did not. We coded whether the ape chose the side the experimenter was looking at (correct choice) or not. Apes participated in 12 trials. The side at which the experimenter looked was counterbalanced with the same number of looks to each side and looks to the same side not more than two times in a row. We assumed that apes followed the experimenter’s focus of attention to determine whether or not their request could be seen and thus be successful.
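The counterbalancing rule described here (each option equally often, never more than two identical trials in a row) recurs in most of the tasks. As a rough illustration of how such an order could be produced, the sketch below uses simple rejection sampling. This is an assumption for illustration only: the study used one fixed order for all subjects, and the text does not say how that order was generated. All names are hypothetical.

```python
import random


def longest_run(sequence):
    """Length of the longest streak of identical consecutive items."""
    longest = current = 0
    previous = object()  # sentinel that equals no real item
    for item in sequence:
        current = current + 1 if item == previous else 1
        previous = item
        longest = max(longest, current)
    return longest


def counterbalanced_order(options, n_trials, max_run=2, seed=None, max_tries=10_000):
    """Shuffle until every option appears equally often and no streak exceeds max_run."""
    if n_trials % len(options) != 0:
        raise ValueError("n_trials must be a multiple of the number of options")
    rng = random.Random(seed)
    order = list(options) * (n_trials // len(options))
    for _ in range(max_tries):
        rng.shuffle(order)
        if longest_run(order) <= max_run:
            return list(order)
    raise RuntimeError("no valid order found; relax max_run or raise max_tries")


# Example: 12 attention-following trials with two sides.
sides = counterbalanced_order(["left", "right"], n_trials=12, seed=1)
# Example: 12 communicative-cues trials with three cup positions.
cups = counterbalanced_order(["left", "middle", "right"], n_trials=12, seed=1)
```

Rejection sampling is adequate at this scale because a sizeable fraction of all balanced 12-trial orders already satisfies the streak limit.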

Communicative cues

The communicative-cues task was modeled after Schmid et al. (2017). Three identical cups were placed equidistantly on a sliding table directly in front of the ape (Fig. 1c). At the beginning of a trial, the experimenter showed the ape that all cups were empty. After placing an occluder between the subject and the cups, the experimenter held up one food item and moved it behind the occluder, visiting all three cups but baiting only one. Next, the occluder was lifted and the experimenter looked at the ape (ostensive cue), called the ape’s name, and looked at one of the cups while holding on to it with one hand and tapping it with the other (continuous looking, three times tapping). Last, the experimenter pushed the sliding table forward for the ape to make a choice. If the ape chose the baited cup, they received the reward—if not, they did not receive the reward. If the ape chose the indicated cup, we coded it as the correct choice. Apes participated in 12 trials. The location of the indicated cup was counterbalanced, with each cup being the target equally often and the same target not more than two times in a row. We assumed that apes used the experimenter’s communicative cues to determine where the food was hidden.

Ignore visible food

The ignore-visible-food task was modeled after Völter, Tinklenberg, et al. (2022). The task involved two opaque cups with an additional sealed but transparent compartment attached to the front of each cup (facing the ape). For one cup, the compartment contained a preferred food item that was clearly visible; for the other cup, the compartment was empty (Fig. 1d). At the beginning of the trial, the two cups were placed upside down on the sliding table so that the ape could see that the opaque compartments of both cups were empty. Next, the experimenter baited one of the cups in full view of the subject. In nonconflict trials, the baited cup was the cup with the food item in the transparent compartment. In conflict trials, the baited cup was the cup with the empty compartment. After baiting, the experimenter pushed the sliding table forward, and the ape could choose by pointing. If the baited cup was chosen, the ape received the food. Apes participated in 14 trials—12 conflict trials and two nonconflict trials (first and eighth trial). Only conflict trials were analyzed. The location of the cup with the baited compartment was counterbalanced, with the cup not being in the same location more than two times in a row. We assumed that apes inhibited selecting the visible food item and instead used their short-term memory to remember where the food was hidden.

Probabilistic reasoning

The probabilistic-reasoning task was modeled after Hanus and Call (2014). Three identical cups were presented side by side on a sliding table, with the cup in the middle sometimes positioned close to the left cup and sometimes close to the right (Fig. 1b). Two half-open boxes served as occluders to block the ape’s view when shuffling the cups. Each trial started by showing the ape that all three cups (one on one side of the table, two on the other) were empty. After placing the occluders over both sides of the table, thereby covering two cups on one side and one cup on the other, the experimenter put one piece of food on top of each occluder. Next, the experimenter hid each piece of food under the cup(s) behind the occluders. In the case of the occluder with the two cups, the food was randomly placed under one of the two cups while both cups were visited and even shuffled. Last, both occluders were lifted, and the table was pushed forward, allowing the ape to choose one of the three cups, from which they then received the content. We coded whether the ape chose the certain cup (i.e., the cup from the side of the table with only one cup). Apes participated in 12 trials. The side with one cup was counterbalanced, with the same constellation appearing not more than two times in a row on the same side. We assumed that apes would infer that the cup from the tray with only one cup certainly contained food, whereas the other cups contained food only in 50% of cases.

Population to sample

The population-to-sample task was modeled after Rakoczy et al. (2014; see also Eckert et al., 2018). During the test, apes saw two transparent buckets filled with pellets and carrot pieces (the carrot pieces had roughly the same size and shape as the pellets). Each bucket contained 80 food items. The distribution of pellets to carrot pieces was 4:1 in Bucket A and 1:4 in Bucket B. Pellets are preferred food items compared with carrots. The experimenter placed both buckets on a table—one on the left side and one on the right (Fig. 1a). At the beginning of a trial, the experimenter picked up the bucket on the right side, tilted it forward so the ape could see inside, placed it back on the table, and turned it around 360°. The same procedure was repeated with the other bucket. Next, the experimenter looked at the ceiling, inserted each hand in the bucket in front of it, and drew one item from the bucket without the ape seeing which type (the experimenter always picked from the majority bucket). The food items remained hidden in the experimenter’s fists. Next, the experimenter extended the arms (in parallel) toward the ape, who was then allowed to make a choice by pointing to one of the fists. The ape received the chosen sample. In half of the trials, the experimenter crossed arms when moving the fists toward the ape to ensure that the apes made a choice between samples and not just the side on which the favorable population (bucket) was still visible. In between trials, the buckets were refilled to restore the original distributions. Apes participated in 12 trials. We coded whether the ape chose the sample from the population with the higher number of preferred food items. The location of the buckets (left and right) was counterbalanced, with the buckets in the same location no more than two times in a row. The crossing of the hands was also counterbalanced, with no more than two crossings in a row. We assumed that apes reasoned about the probability of the sample being a preferred item on the basis of observing the ratio in the population.

Self-ordered search

The self-ordered-search task was modeled after Völter et al. (2019). Three identical cups were placed equidistantly on a sliding table directly in front of the ape (Fig. 1e). The experimenter baited all three cups in full view of the ape. Next, the experimenter pushed the sliding table forward for the ape to choose one of the cups by pointing. After the choice, the table was pulled back, and the ape received the food. After a 3-s pause, the table was pushed forward again for a second choice. This procedure was repeated for a third choice. If the ape chose a baited cup, they received the food; if not, they did not. We coded the number of times the ape chose an empty cup (i.e., a cup they had already chosen earlier in the trial). Note that this outcome variable differed from those of the other tasks in two ways: First, possible values were 0, 1, and 2 (instead of just 0 and 1), and second, a lower score indicated better performance. Apes participated in 12 trials. No counterbalancing was needed. We assumed that apes used their working memory abilities to remember where they had already searched and which cups still contained food.
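Because the error score in this task ranges from 0 to 2, its chance level is less obvious than in the binary tasks. The sketch below enumerates all possible choice sequences under one simple assumption, which is ours and not stated in the text: an ape choosing at random picks each of the three cups with equal probability at every choice, independently of earlier choices.

```python
from fractions import Fraction
from itertools import product


def errors_in_trial(choices):
    """Count choices that revisit a cup already chosen earlier in the trial."""
    visited = set()
    errors = 0
    for cup in choices:
        if cup in visited:
            errors += 1
        visited.add(cup)
    return errors


def error_distribution(n_cups=3, n_choices=3):
    """Exact distribution of the error score under uniform random choosing."""
    sequences = list(product(range(n_cups), repeat=n_choices))
    weight = Fraction(1, len(sequences))
    distribution = {}
    for sequence in sequences:
        score = errors_in_trial(sequence)
        distribution[score] = distribution.get(score, 0) + weight
    return distribution


distribution = error_distribution()
expected_errors = sum(score * prob for score, prob in distribution.items())
```

Under this assumption, an error-free trial occurs with probability 2/9 and the expected score is 8/9 errors per trial. A different model of random behavior (for example, a side bias) would give a different baseline.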

Predictor variables

In addition to the data from the cognitive tasks, we collected a range of predictor variables to predict individual differences in task performance. Predictors could vary with the individual (stable individual characteristics: group, age, sex, rearing history, and time spent in research), the individual and time point (variable individual characteristics: rank, sickness, and sociality), group membership (group life: time spent outdoors, disturbances, and life events), or the testing arrangements and thus with the individual, time point, and session (testing arrangements: presence of an observer, participation in other studies on the same day and since the last time point). Predictors were collected from the zoo handbook with demographic information about the apes, via a diary that the animal caretakers filled out daily, or via proximity scans of the whole group. A detailed description of these variables is provided in the Supplemental Material.

Data collection

Data collection started on April 28, 2022, lasted until October 7, 2023, and included 10 time points. One time point meant running all tasks with all participants. Within each time point, the tasks were organized in three sessions (Fig. 1) that usually took place on three consecutive days. Session 1 included the population-to-sample and probabilistic-reasoning tasks, Session 2 included the communicative-cues and ignore-visible-food tasks, and Session 3 included the self-ordered-search and attention-following tasks.

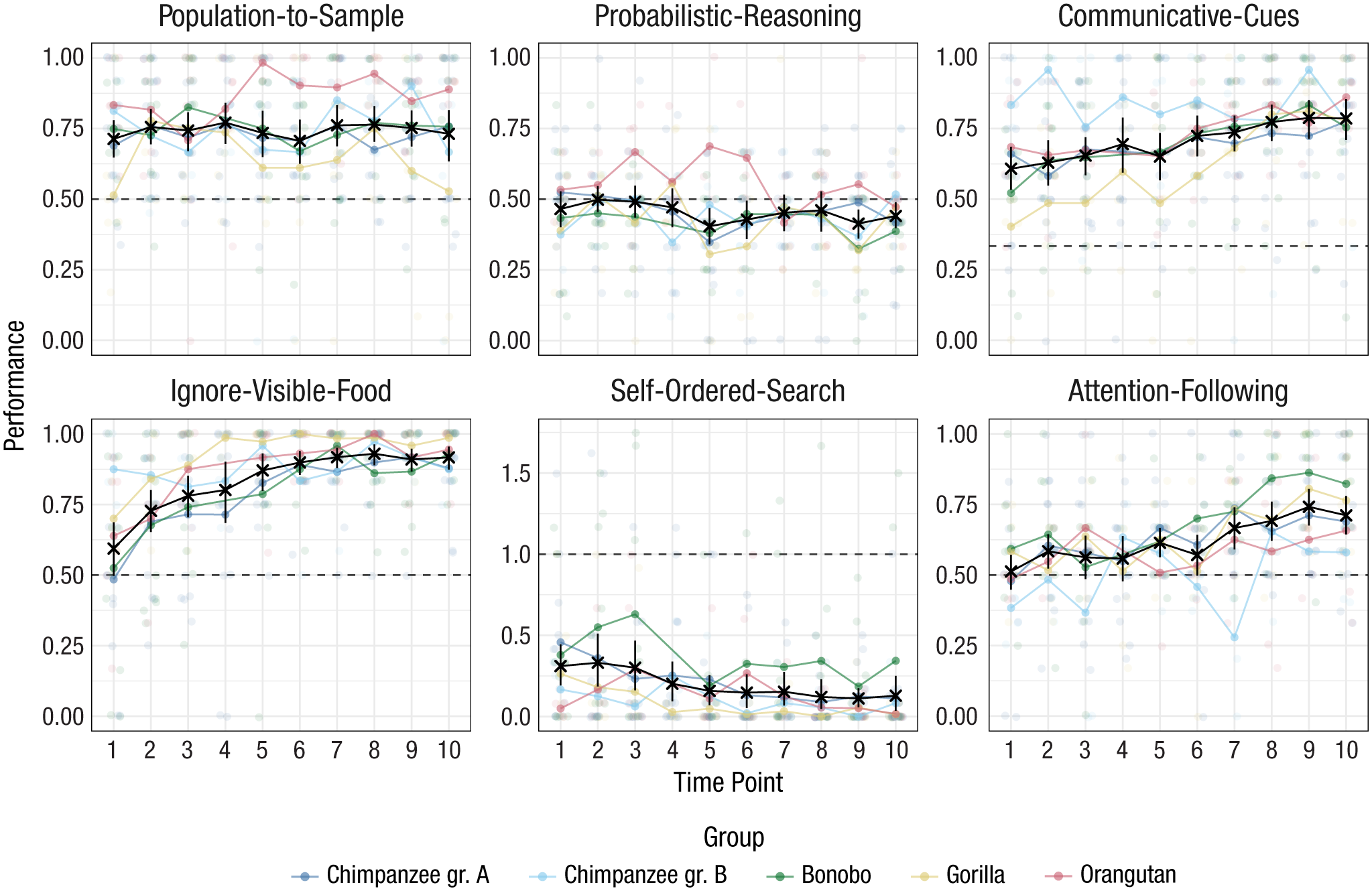

Fig. 2. Results from the six cognitive tasks across time points. Black crosses show mean performance at each time point across species (with 95% CIs). The sample size varied between time points and can be found in Supplementary Figure 1 in the Supplemental Material. Colored dots show mean performance by species. Dashed lines show chance-level performance. CI = confidence interval.

The interval between two time points was planned to be 8 weeks. However, it was not always possible to follow this schedule, so some intervals were slightly longer or shorter (for details, see the Supplemental Material). The order of tasks and the counterbalancing within each task were the same for all subjects. This exact procedure was repeated at each time point so that the results would be comparable across participants and time points.
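As a quick plausibility check on the schedule, the sketch below compares the planned 8-week spacing with the reported start and end dates. It assumes, for illustration only, that each planned time point starts exactly 8 weeks after the previous one; the actual start dates of the individual time points are given in the Supplemental Material and are not reproduced here.

```python
from datetime import date, timedelta

START = date(2022, 4, 28)   # reported start of data collection
END = date(2023, 10, 7)     # reported end of data collection
N_TIME_POINTS = 10
PLANNED_INTERVAL = timedelta(weeks=8)

# Planned start date of each time point under strict 8-week spacing.
planned_starts = [START + i * PLANNED_INTERVAL for i in range(N_TIME_POINTS)]

# Total duration of data collection and the implied average spacing.
total_days = (END - START).days
mean_interval_weeks = total_days / (N_TIME_POINTS - 1) / 7
```

Under strict spacing, the tenth time point would have started in mid-September 2023. The reported end date is about three weeks later, in line with the statement that some intervals were slightly longer than planned. Note that the end date marks the last session, not the start of the last time point, so the implied average interval is only an upper bound.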

Analysis and Results

To get an overview of the results, we first visualized the data (Fig. 2). Group-level performance was consistently above chance in the communicative-cues, ignore-visible-food, and population-to-sample tasks. For attention following, this was the case only from Time Point 7 onward; for probabilistic reasoning, performance was, if anything, below chance. For the self-ordered-search task, performance was below chance (lower values on this task reflected better performance, i.e., systematic avoidance of the already searched location). For the attention-following, ignore-visible-food, communicative-cues, and self-ordered-search tasks, performance improved steadily over time.

Figure 2 also shows that there was substantial variation between individuals of the same species. Furthermore, species formed largely overlapping distributions in that for every task there were high- and low-performing individuals from each species, which warranted an individual-differences analysis of the data.

We linked performance in the tasks across time points to latent variables representing cognitive abilities. That is, we saw the observable performance as a function of (a) an individual’s cognitive ability, (b) situational factors, and (c) measurement noise. The statistical models reported below teased these components apart and allowed us to address questions about the nature of individual differences. We first asked how stable cognitive abilities were over time and how reliably they were measured. Next, we studied the correlations between different abilities to explore the internal structure of great ape cognition. Last, we linked performance in the tasks to external predictors to shed light on the sources of individual differences in abilities. Different statistical techniques were used for each analysis.

Stability and reliability

We first asked how stable performance was on a task level, how stable individual differences were, and how reliable the measures were. We used structural equation modeling (SEM; Bollen, 1989) to address these questions. For each task, we fit two types of models that addressed different questions. A detailed mathematical description of the models is provided in the Supplemental Material. An alternative analytical approach that used a Rasch (1PL) model on the individual trial data is also reported in the Supplemental Material. The results did not differ substantively between the two approaches.

We started with a latent state (LS) model. The goal of this model was to estimate for each time point a measurement-error-free LS representing an individual’s cognitive ability. We divided the trials from one time point into two test halves (odd vs. even trials). Roughly speaking, the correlation between these two test halves indicated measurement precision and was used to estimate measurement error (and reliability). Mean changes in task-level performance could be assessed by comparing the means of LSs across subjects for the different time points. The stability of individual differences could be assessed by correlating LSs across different time points.
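The odd-even split can be illustrated with a classical split-half calculation. The sketch below is a simplified analogue and not the latent state model itself: it correlates the two half scores across subjects at one time point and applies the Spearman-Brown correction for full test length. The function names and toy data are assumptions for illustration.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equally long lists of numbers."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a half score has no variance")
    return cov / sqrt(var_x * var_y)


def spearman_brown(r_half):
    """Step a half-test correlation up to the reliability of the full test."""
    return 2 * r_half / (1 + r_half)


def split_half(trials_by_subject):
    """Return (half-test correlation, corrected reliability) for one time point."""
    odd_scores = [sum(trials[0::2]) for trials in trials_by_subject]   # trials 1, 3, 5, ...
    even_scores = [sum(trials[1::2]) for trials in trials_by_subject]  # trials 2, 4, 6, ...
    r_half = pearson(odd_scores, even_scores)
    return r_half, spearman_brown(r_half)


# Hypothetical data: three subjects, four trials each (1 = correct, 0 = incorrect).
toy_data = [[1, 1, 1, 1], [0, 0, 0, 0], [1, 1, 0, 0]]
r_half, reliability = split_half(toy_data)
```

The SEM approach used in the study goes further than this classical version because it estimates error-free latent states and pools information across time points.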

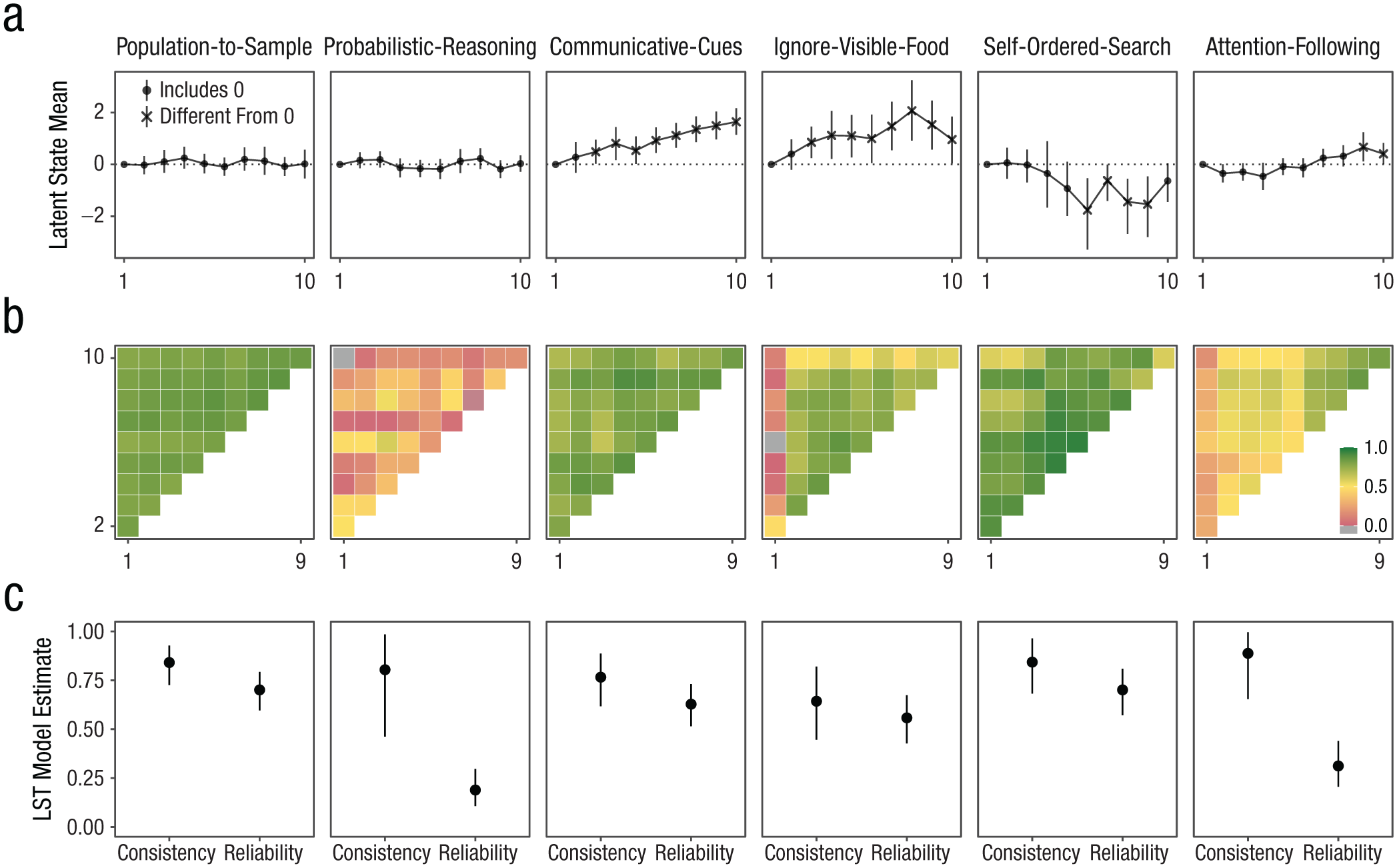

The temporal pattern of LS means varied across tasks (Fig. 3a). In the attention-following task, means increased over time and were significantly different from zero at later time points (Time Points 9 and 10). The communicative-cues and ignore-visible-food tasks exhibited steady increases, although the ignore-visible-food task saw a late-stage decline, with the latent mean at Time Point 10 still significantly different from Time Point 1. The self-ordered-search task showed a decrease (reduction in errors) from Time Point 6 onward, whereas latent means for the probabilistic-reasoning and population-to-sample tasks remained stable throughout the study.

Fig. 3. Results from structural equation models. Panel (a) shows latent mean estimates for each time point by task based on the latent state model. Means at Time Point 1 are set to zero. The shape denotes whether the 95% CrI included zero (dashed line). The sample size varied between time points and can be found in Supplementary Figure 1 in the Supplemental Material. Panel (b) shows correlations between subject-level latent state estimates for the different time points by task. Panel (c) shows coefficients estimated from latent state-trait models with varying means with 95% CrI. “Consistency” refers to the proportion of (measurement-error-free) variance in performance explained by stable trait differences. “Reliability” refers to the proportion of true score variance to total variance. CrI = credible interval.

Correlations between LSs illustrated varying degrees of stability of individual differences across tasks (Fig. 3b). The attention-following task displayed low-to-moderate correlations at early time points (before Time Point 7), increasing substantially thereafter. The communicative-cues, ignore-visible-food, and self-ordered-search tasks generally showed high correlations between LSs (with Time Point 1 of the ignore-visible-food task being an exception). Population-to-sample correlations were consistently high, whereas correlations for probabilistic reasoning were generally low, sometimes even negative, suggesting no stability across time points.

Next, we fit latent state-trait (LST) models. Compared with LS models, LST models assume that there is a single latent trait that represents an individual’s stable cognitive ability and that is the same across time points. Accordingly, we partitioned variation in performance at a given time point into variance due to the trait (consistency), occasion specificity (1 − consistency), and measurement error (used to estimate reliability). Like the LSs in the LS model, the trait and state residual variables in the LST model are assumed to be free from measurement error (Geiser, 2020; Steyer et al., 2015). Classic LST models assume that the absolute trait values do not change over time. After inspecting the data, we decided to relax this assumption to account for mean change in performance over time. Thus, we fit LST models that allowed the absolute trait values to change over time. This analysis was not preregistered. This change, however, is modeled as identical for all individuals. The stability of individual differences is reflected in the proportion of variance explained by the trait (consistency).
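The variance partition can be written out explicitly. The block below is a simplified sketch in standard LST notation, assuming unit loadings; the symbols are our own labels, and the full model specification is the one given in the Supplemental Material. Here $Y_{it}$ is the score on test half $i$ at time point $t$, $\alpha_t$ is a time-specific mean shared by all individuals, $T$ is the latent trait, $O_t$ is the occasion-specific state residual, and $\varepsilon_{it}$ is measurement error.

```latex
\begin{align}
Y_{it} &= \alpha_t + T + O_t + \varepsilon_{it} \\
\operatorname{Var}(Y_{it}) &= \operatorname{Var}(T) + \operatorname{Var}(O_t) + \operatorname{Var}(\varepsilon_{it}) \\
\text{consistency} &= \frac{\operatorname{Var}(T)}{\operatorname{Var}(T) + \operatorname{Var}(O_t)} \\
\text{occasion specificity} &= \frac{\operatorname{Var}(O_t)}{\operatorname{Var}(T) + \operatorname{Var}(O_t)} = 1 - \text{consistency} \\
\text{reliability} &= \frac{\operatorname{Var}(T) + \operatorname{Var}(O_t)}{\operatorname{Var}(Y_{it})}
\end{align}
```

Consistency and occasion specificity are defined relative to the error-free variance only, which is why a task can combine high consistency with low reliability.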

Consistency estimates varied across tasks (Fig. 3c). The consistency coefficient for the attention-following task was estimated to be .89, 95% credible interval (CrI) = [.65, .996], suggesting that almost 90% of true interindividual differences was attributable to stable traits. However, given the low reliability of measurement (see below), this result should be interpreted with caution because the variability in responses largely reflects measurement error and only to a small extent stable differences between individuals. For the communicative-cues task, consistency was estimated to be .77, 95% CrI = [.62, .89]; that is, 77% of the variance was explained by trait differences between individuals. For the ignore-visible-food task, consistency was estimated to be .64, 95% CrI = [.45, .82]. The probabilistic-reasoning task showed a similar pattern to the attention-following task: Consistency was estimated to be high (.80), 95% CrI = [.46, .98], but reliability was low, so the same restrictions for interpretation apply. The self-ordered-search and population-to-sample tasks had high consistency estimates—self-ordered-search task: .84, 95% CrI = [.68, .96]; population-to-sample task: .84, 95% CrI = [.73, .93].

Measurement reliability also varied substantially across tasks on the basis of the LST models (Fig. 3c). Reliability was initially low for the attention-following task (.31), 95% CrI = [.21, .44], but was substantially higher when considering only Time Point 7 onward (.66), 95% CrI = [.52, .79]. Reliability for the communicative-cues (.63), 95% CrI = [.52, .73], and ignore-visible-food (.56), 95% CrI = [.43, .67], tasks was moderate. As mentioned above, the reliability for the probabilistic-reasoning task was very low (.19), 95% CrI = [.11, .30]. The reliability for the population-to-sample (.70), 95% CrI = [.60, .79], and self-ordered-search (.70), 95% CrI = [.57, .81], tasks was acceptable.
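The caution about tasks with high consistency but low reliability can be made concrete. Because consistency is the trait's share of error-free variance and reliability is the error-free share of total variance, their product approximates the trait's share of total observed variance. The sketch below applies this to the point estimates reported above. Multiplying point estimates ignores posterior uncertainty, so the results are rough illustrations and not estimates reported by the study.

```python
# (consistency, reliability) point estimates as reported in the text.
# The attention-following reliability is the whole-study value (.31).
ESTIMATES = {
    "attention-following": (0.89, 0.31),
    "communicative-cues": (0.77, 0.63),
    "ignore-visible-food": (0.64, 0.56),
    "probabilistic-reasoning": (0.80, 0.19),
    "self-ordered-search": (0.84, 0.70),
    "population-to-sample": (0.84, 0.70),
}


def trait_share_of_total(consistency, reliability):
    """Approximate share of total observed variance attributable to the stable trait."""
    return consistency * reliability


shares = {task: trait_share_of_total(*values) for task, values in ESTIMATES.items()}
lowest_task = min(shares, key=shares.get)
```

On this rough calculation, stable trait differences account for well under a third of the observed variance in the attention-following and probabilistic-reasoning tasks, compared with more than half in the self-ordered-search and population-to-sample tasks.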

To summarize the SEM results, we saw that the six tasks differed substantially in what they revealed about group- and individual-level variation. What stands out is the widespread change in performance over time. We observed an improvement in performance over time for all tasks except the population-to-sample and probabilistic-reasoning tasks. This group-level change, however, has different individual-level interpretations for the different tasks. For the communicative-cues, ignore-visible-food, and self-ordered-search tasks, individual differences remained relatively stable despite the group-level change, suggesting stable individual differences combined with a systematic learning effect across individuals. In contrast, for the attention-following task, there was little stability in individual differences at earlier time points, and only toward the end did a more stable ordering of individuals emerge. In combination with the low reliability at earlier time points, the change in stability of individual differences suggests that at least some individuals changed their response strategy over the course of the study. The combination of low reliability, chance-level performance, and the low correlation of LSs for the probabilistic-reasoning task suggests that this task is not suited for assessing individual differences in probabilistic-reasoning abilities in great apes.

It is also noteworthy that the reliability estimates across tasks were on average lower compared with those from a previous study that tested the same individuals on different tasks (Bohn et al., 2023). One explanation might be the increase in performance over time, which was not observed by Bohn et al. (2023). At the beginning of the study, more individuals might have chosen randomly instead of using the available information provided in the task setup and the demonstrations. By definition, random variation is not reliable. With time, more and more individuals started using the available information; thus, interindividual differences in how well these individuals used this information could be detected.

Structure

To explore the structure of great ape cognition, we correlated latent trait estimates for each task. In contrast to raw performance scores, these estimates take into account measurement reliability and are considered to be error-free. The method for computing correlations between trait estimates for the different tasks was not preregistered. Bohn et al. (2023) tested the same individuals, and we therefore also included their data to investigate relations between a broader set of abilities. We did not include the switching task from this previous study because it did not produce meaningful results either on a group or an individual level. A more detailed description of the additional tasks can be found in the Supplemental Material. The list below provides a brief overview of these tasks:

Gaze following: In this task, the experimenter intermittently looked up after offering food, and we coded whether apes followed the experimenter’s gaze; this assessed sensitivity to others’ attentional focus.

Direct causal inference: Apes chose between two closed cups, one of which was baited, after the experimenter shook the baited one, producing a rattling sound; success required causal reasoning (the effect of the solid food on the container while shaking).

Inference by exclusion: Using the same setup as in the previous task, the experimenter now shook the empty cup (producing no sound). To find the food, apes had to discard the shaken cup, requiring inference-by-exclusion abilities.

Quantity discrimination: Apes chose between two plates containing different amounts of food (five vs. seven); correct choices reflected discrete quantity estimation and comparison.

Delay of gratification: Apes decided between an immediate smaller reward (one piece of food) and a delayed larger one (two pieces of food); success required inhibitory control.

All of these tasks showed at least acceptable reliability in the study by Bohn et al. (2023).

Even though the data in the two studies were collected at different time points, we analyzed them jointly because it is unlikely that changes in cognitive abilities (over and above task-specific training effects that apply to all individuals) occur over this time span (for implementation details, see the Supplemental Material). The probabilistic-reasoning task was excluded from this analysis because of its poor psychometric properties (as reported above).

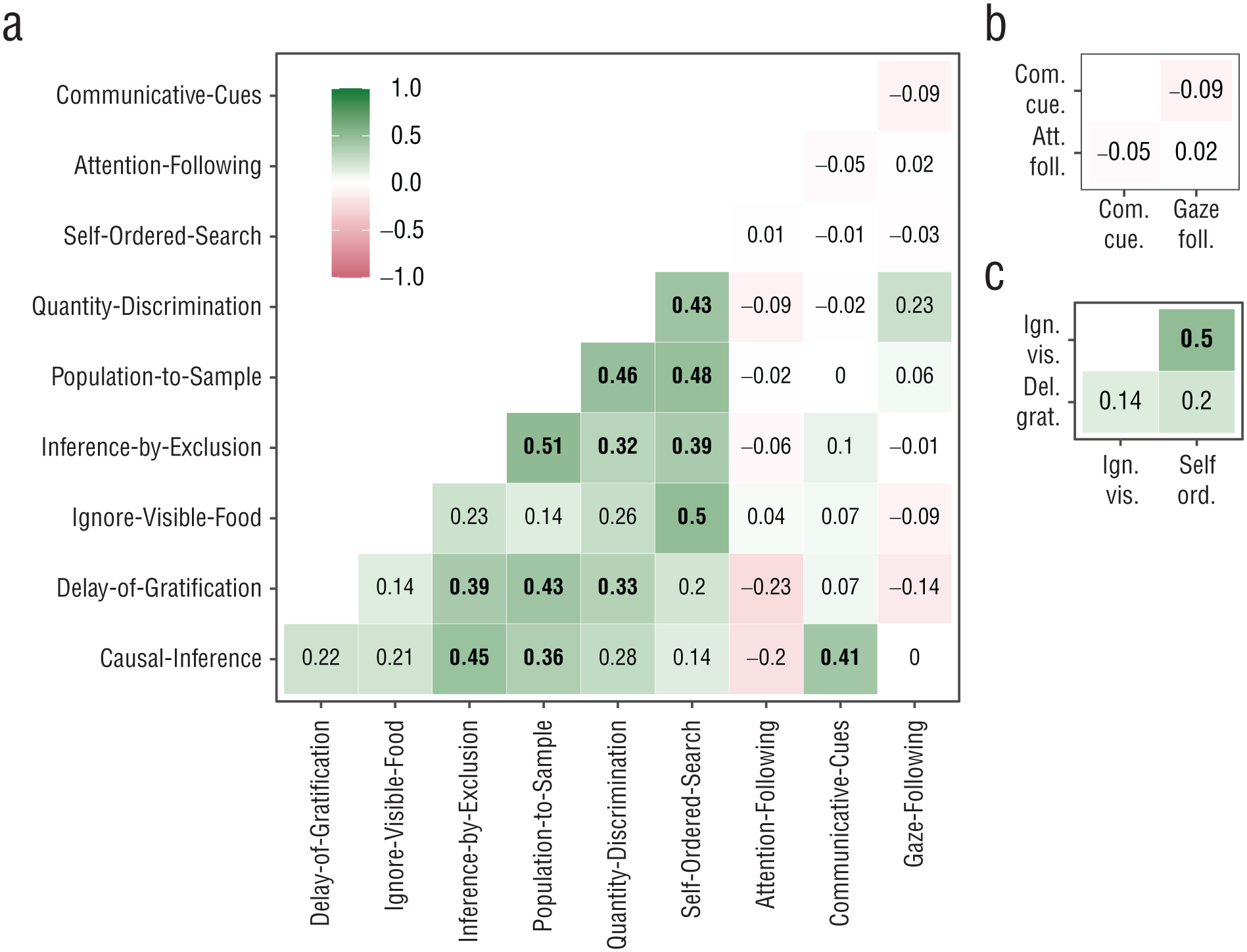

Figure 4 shows the correlations between trait estimates for the different tasks. Overall, most correlations were not significantly different from zero (i.e., the 95% CrI included zero). Because of this low average level of correlations, we decided not to explore models with higher order factors and only interpreted specific qualitative patterns. In the Supplemental Material, we also report an individual-differences analysis of the learning rate over time, that is, to what extent the rate of improvement (i.e., slope for time point) for each individual was correlated across tasks.

Fig. 4. Correlations between trait estimates. Bold correlations have 95% CrIs that do not overlap with zero. Panels show correlations between (a) all, (b) social-cognition, and (c) executive-function tasks; the correlation between the two inferential-reasoning tasks is not shown in a separate panel. Correlations involving the self-ordered-search task (coded as the number of errors) were multiplied by −1, so higher values can be interpreted as better traits for all tasks. CrI = credible interval.
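The interval criterion and the sign flip described for Figure 4 can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation (which is described in the Supplemental Material): it assumes hypothetical posterior draws of one trait estimate per individual for two tasks, computes a Pearson correlation per draw, multiplies it by −1 when one task is coded as errors, and summarizes the draws with a mean and a 95% percentile interval. The simulated data and all names are invented for illustration.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation between two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def percentile(sorted_values, q):
    """Linear-interpolation percentile (q in [0, 1]) of a sorted list."""
    pos = q * (len(sorted_values) - 1)
    lo, hi = math.floor(pos), math.ceil(pos)
    frac = pos - lo
    return sorted_values[lo] * (1 - frac) + sorted_values[hi] * frac


def correlation_summary(draws_a, draws_b, flip_b=False):
    """Correlate trait estimates draw by draw; return mean and 95% interval.

    draws_a, draws_b: lists of draws, each holding one value per individual.
    flip_b: multiply correlations by -1 when task B is coded as errors.
    """
    sign = -1.0 if flip_b else 1.0
    rs = sorted(sign * pearson(a, b) for a, b in zip(draws_a, draws_b))
    mean_r = sum(rs) / len(rs)
    lower, upper = percentile(rs, 0.025), percentile(rs, 0.975)
    excludes_zero = lower > 0 or upper < 0
    return mean_r, lower, upper, excludes_zero


# Hypothetical data: 48 individuals, 200 draws; task B counts errors,
# so a better trait shows up as a lower value before the sign flip.
rng = random.Random(1)
true_trait = [rng.gauss(0, 1) for _ in range(48)]
draws_a = [[t + rng.gauss(0, 0.3) for t in true_trait] for _ in range(200)]
draws_b = [[-t + rng.gauss(0, 0.3) for t in true_trait] for _ in range(200)]

mean_r, lower, upper, excludes_zero = correlation_summary(
    draws_a, draws_b, flip_b=True
)
print(f"r = {mean_r:.2f}, 95% interval [{lower:.2f}, {upper:.2f}]")
```

A correlation would be printed in bold in a figure of this kind when `excludes_zero` is true, that is, when the whole interval lies on one side of zero.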

Conceptually, the tasks can be clustered in the following broader domains: social cognition (attention-following, gaze-following, and communicative-cues tasks), reasoning about quantities (quantity-discrimination and population-to-sample tasks), executive function (delay-of-gratification, self-ordered-search, and ignore-visible-food tasks), and inferential reasoning (causal-inference and inference-by-exclusion tasks). As a first step, we evaluated the evidence for such a clustering in the data. We found no significant correlation between any of the social-cognition tasks. Furthermore, the attention-following and gaze-following tasks did not correlate significantly with any of the other tasks, and the communicative-cues task correlated only with the causal-inference task, a result discussed in more detail below. Thus, we found no evidence for shared cognitive processes in tasks measuring different aspects of social cognition, in line with previous work (Herrmann et al., 2010).

We found that the tasks measuring reasoning about quantities (i.e., the quantity-discrimination and population-to-sample tasks) were significantly correlated. Both tasks require discriminating between different quantities, directly in the case of quantity discrimination and as part of the decision-making process in the case of the population-to-sample task. Deciding between the samples from the two populations requires discriminating between the relative quantities within each bucket from which the samples were drawn.

Within the executive-function measures, the self-ordered-search and ignore-visible-food tasks were significantly correlated, but neither task correlated with the delay-of-gratification task. The significant correlation can be explained by the need to inhibit a premature response (selecting visible food or a cup that was previously rewarded) in both tasks. It has been argued that delay of gratification requires self-control (tolerating a longer waiting time to gain a more valuable reward) over and above behavioral inhibition (Beran, 2015). From this point of view, individual differences in the delay-of-gratification task might be due to differences in self-control and less to differences in inhibition.

We found a correlation between the two inferential-reasoning measures: inference by exclusion and causal inference. This correlation likely arose because both tasks require inferring the location of food by reasoning about its physical properties.

Next, we turned to the correlations across domains. Perhaps the most surprising finding was the correlation between the causal-inference and communicative-cues tasks. The origin may have been task impurity because there were two ways to solve the causal-inference task: The first way, as hypothesized, was by using the rattling sound to infer the location of the food, and the second way was by interpreting the experimenter’s shaking of the cup as a communicative cue, which was very similar to the communicative-cues task. Thus, we suspect that at least some individuals solved the task via the second route.

Last, a notable cluster including all nonsocial tasks that reliably measured individual differences emerged. Of 21 correlations, 12 were significant. All others were positive and numerically close to the significant ones. One might further consider that the self-ordered-search and ignore-visible-food tasks had limited variation because of ceiling effects, which might have led to an underestimation of the correlations involving these tasks (six of nine nonsignificant correlations).
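The counts in this paragraph follow from simple pair arithmetic, sketched below. The list of seven nonsocial tasks is an assumption inferred from the domain clustering given earlier (with the probabilistic-reasoning task excluded); the number of significant correlations is taken from the text.

```python
from itertools import combinations

# Assumed membership of the nonsocial cluster (inferred from the domain listing).
nonsocial_tasks = [
    "quantity-discrimination",
    "population-to-sample",
    "delay-of-gratification",
    "self-ordered-search",
    "ignore-visible-food",
    "causal-inference",
    "inference-by-exclusion",
]

pairs = list(combinations(nonsocial_tasks, 2))
n_pairs = len(pairs)                         # 7 * 6 / 2 pairwise correlations
n_significant = 12                           # reported in the text
n_nonsignificant = n_pairs - n_significant   # remaining correlations

# Pairs that involve at least one of the two tasks with ceiling effects.
ceiling_tasks = {"self-ordered-search", "ignore-visible-food"}
pairs_with_ceiling = [p for p in pairs if ceiling_tasks & set(p)]

print(n_pairs, n_nonsignificant, len(pairs_with_ceiling))
```

Under this assumed task list, 11 of the 21 pairs involve a ceiling-prone task, which puts the reported six of nine nonsignificant correlations into context.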

Predictability

To evaluate predictability, we analyzed which external variables accounted for inter- and intraindividual differences in task performance. That is, we asked which of the predictor variables described above predicted performance in the different tasks. Given the large number of predictor variables (14), this question translated to a variable selection problem: selecting a subset of variables from a larger pool. We used the projection predictive inference approach (Piironen et al., 2020) because it is a state-of-the-art procedure that provides an excellent tradeoff between model complexity and accuracy (Pavone et al., 2023). The projection predictive approach is a two-step process. The first step consists of building the best predictive model possible, that is, the “reference model.” In our case, the reference model was a Bayesian multilevel regression model, fit via brms (Bürkner, 2017), that included all available predictors (Catalina et al., 2022). In the second step, the goal is to replace the posterior distribution of the reference model with a simpler distribution containing fewer predictors. The importance of a predictor is assessed by inspecting the expected log-predictive density (elpd) and root-mean-square error (rmse) of the models containing the predictor compared with the models that lack it.

The output of the procedure is a ranking of the different predictors. That is, for each task, we get a ranking of how important a predictor is for constructing the simpler replacement distribution. We can also qualitatively assess whether or not a predictor is relevant. Beyond this global assessment, we inspected the projected posterior distribution of the predictors classified as relevant to see how they influenced performance. A detailed description of the procedure that includes how the different variables were handled and how the importance of each predictor was assessed is provided in the Supplemental Material.
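The two-step logic and the resulting ranking can be illustrated with a deliberately simplified sketch. It is not the procedure used in the study: the reference model here is an ordinary least-squares fit rather than a Bayesian multilevel model, the projection is a least-squares fit of each submodel to the reference model's fitted values rather than a projection of posterior draws, and no cross-validation is performed. Predictor names, effect sizes, and data are hypothetical.

```python
import math
import random


def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def fitted_values(columns, target):
    """Least-squares fit of target on an intercept plus the given columns."""
    design = [[1.0] * len(target)] + columns
    gram = [[sum(u * v for u, v in zip(ci, cj)) for cj in design] for ci in design]
    moment = [sum(u * t for u, t in zip(ci, target)) for ci in design]
    beta = solve(gram, moment)
    return [sum(b * col[i] for b, col in zip(beta, design))
            for i in range(len(target))]


def rmse(pred, obs):
    """Root-mean-square error between predictions and observations."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(pred, obs)) / len(obs))


def forward_search(predictors, y):
    """Rank predictors by how well growing submodels reproduce the reference fit."""
    names = list(predictors)
    # Step 1: reference model with all predictors.
    reference = fitted_values([predictors[k] for k in names], y)
    selected, path = [], []
    while len(selected) < len(names):
        # Step 2: add the predictor whose submodel is closest to the reference.
        def distance(candidate):
            cols = [predictors[k] for k in selected + [candidate]]
            return rmse(fitted_values(cols, reference), reference)

        best = min((k for k in names if k not in selected), key=distance)
        selected.append(best)
        projected = fitted_values([predictors[k] for k in selected], reference)
        path.append((best, rmse(projected, y)))
    return path


# Hypothetical data: one strong, one weak, and one irrelevant predictor.
rng = random.Random(0)
n_obs = 200
predictors = {name: [rng.gauss(0, 1) for _ in range(n_obs)]
              for name in ("time_point", "age", "rank")}
y = [1.0 * t + 0.3 * a + rng.gauss(0, 0.5)
     for t, a in zip(predictors["time_point"], predictors["age"])]

ranking = forward_search(predictors, y)
for name, error in ranking:
    print(f"{name}: rmse after adding = {error:.3f}")
```

A predictor would be judged relevant in a scheme like this when adding it brings the submodel's predictive performance noticeably closer to that of the reference model; predictors added later change the error very little.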

In addition to the external predictors, the models also included a random-intercept term for subject, written as (1 | subject) in brms notation. This term was always considered last because it would otherwise have soaked up most of the variance before the other predictors had a chance to explain it.

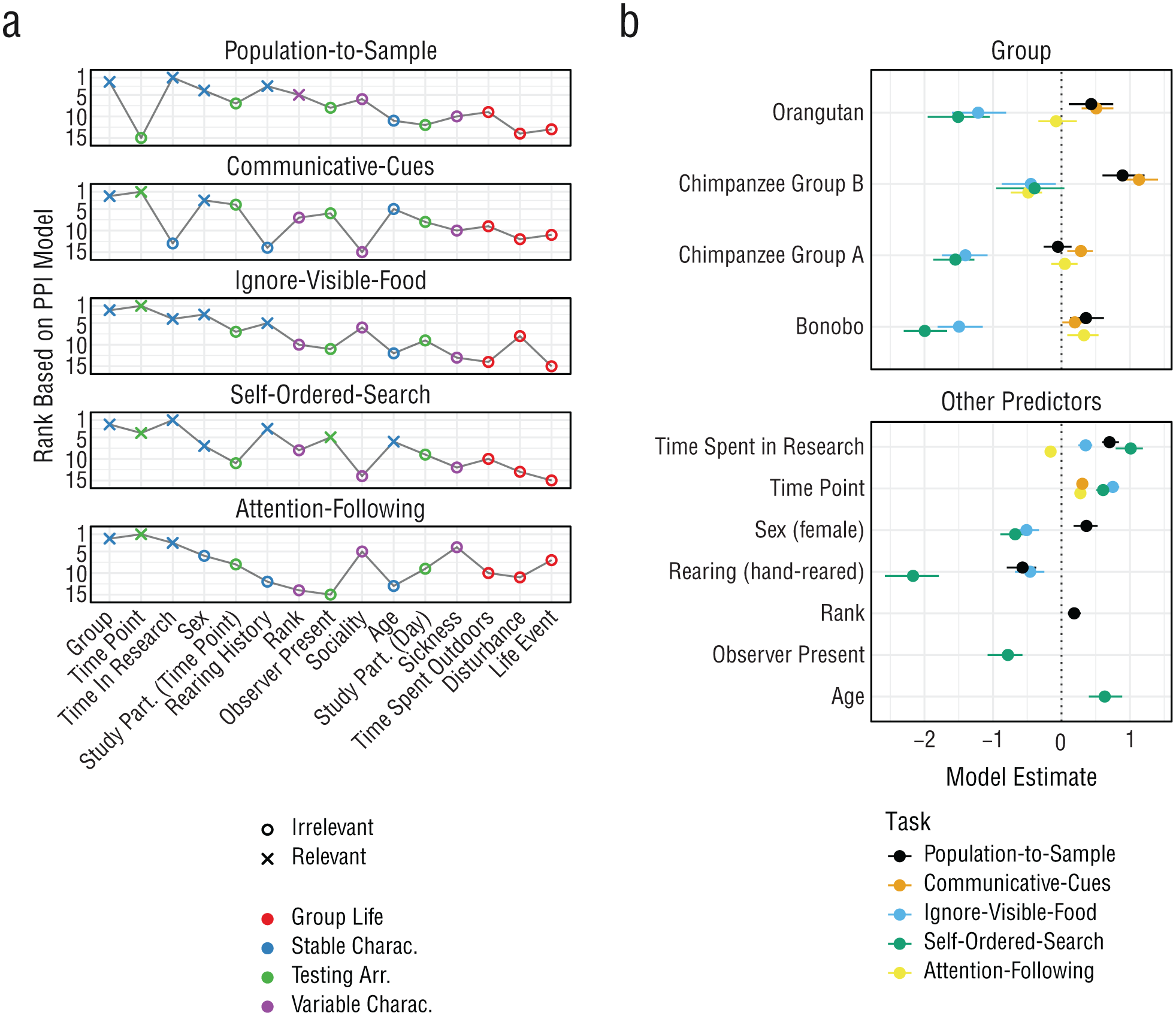

Figure 5a summarizes the selected predictors across tasks. For all tasks, the random-intercept term improved model fit the most (not shown in Fig. 5a). In line with results reported by Bohn et al. (2023), the predictive importance of individual identity suggests that genetic predispositions and/or idiosyncratic developmental processes, which operate on timescales longer than what we captured in our study, accounted for a substantial portion of the variance in cognitive abilities between individuals.

Fig. 5. Predicting cognitive performance. Panel (a) shows predictor ranking and selection based on PPI models. Crosses indicate the predictors that were selected to be relevant on the basis of the PPI models. Colors indicate the broader category each predictor belongs to. The x-axis is sorted by the average rank across tasks. Panel (b) shows posterior model estimates for the selected predictors for each task based on the data. Points indicate means with 95% CrIs. Colors denote the task. For categorical predictors, the estimate gives the difference compared with the reference level (gorilla for group). PPI = projection predictive inference; CrI = credible interval.

However, for two tasks, other predictors had a comparable explanatory power—something that was not observed in Bohn et al. (2023). For the population-to-sample task, time spent in research improved the model fit even more than adding the random intercept at the end. Performance in this task could be interpreted as strongly depending on having learned to pay attention to stimuli and the human experimenter. For the ignore-visible-food task, the time point had an influence exceeding that of the random-intercept term.

For the remaining predictors, the most highly ranked and frequently selected ones came from the group of stable individual characteristics. The time point, which was ranked second across tasks, was the notable exception. The high ranking of stable individual characteristics aligns with the SEM results, in which most of the variance in performance could be traced back to stable trait differences between individuals. Mean changes in task performance were largely due to improvement over time, most likely reflecting task-specific learning processes. The remaining time-varying predictors did not account for much variation.

The predictor selected most often was the group. It was the only predictor that was selected as relevant for all tasks. However, differences between groups were variable in that the ranking of the groups changed from task to task (Fig. 5b). For example, gorillas performed best in the ignore-visible-food and self-ordered-search tasks, Group B of the chimpanzees performed best in the communicative-cues and population-to-sample tasks, and the bonobos performed best in the attention-following task. Variable differences between groups speak against clear species or group differences in general cognitive performance. Again, the most likely explanation for group differences is an interaction between species-specific dispositions and individual-level/task-level developmental processes.

The predictors that were selected more than once influenced performance in variable ways (Fig. 5b). As mentioned above, the time point always had a positive effect because performance increased with time. Whenever rearing was selected to be relevant, mother-reared individuals outperformed others. The time spent on research had a positive effect, suggesting that more experience with research (or researchers; see Damerius et al., 2017) leads to better performance, except for the attention-following task. The effect of sex was variable in that females outperformed males in the population-to-sample task, but males outperformed females in the self-ordered-search and ignore-visible-food tasks.

Discussion

In the current study, we investigated the stability, structure, and predictability of great ape cognition across a broad range of domains, including social cognition, reasoning about quantities, executive function, and inferential reasoning. We repeatedly administered six tasks to a comparatively large sample of great apes a total of 10 times over a period of 1.5 years. Group-level results varied by task: Whereas some tasks demonstrated substantial changes over time, others remained relatively stable. The tasks also differed in how reliably they measured individual differences, ranging from very poor (probabilistic-reasoning task) to very good (population-to-sample and self-ordered-search tasks). A significant portion of the observed variance in performance could be attributed to stable differences in cognitive abilities between individuals. However, these individual differences were not strongly associated across all tasks; instead, most nonsocial tasks were correlated, whereas social tasks correlated neither with each other nor with other tasks. Last, individual differences in cognitive performance were better predicted by stable, individual-specific characteristics compared with transient aspects of everyday experience.

The tasks varied substantially in their quality of measurement, which emphasizes the importance of rigorously assessing measurement properties before including tasks in cognitive test batteries or collecting data from large samples with the goal of assessing individual differences (see also Cauchoix et al., 2018; Soha et al., 2019). The reliability of measurement has profound implications for the conclusions that can be drawn (Fried & Flake, 2018). For instance, the probabilistic-reasoning task showed no meaningful correlations with other tasks, which might suggest that probabilistic reasoning is an isolated cognitive ability. However, the lack of correlation—paired with chance-level performance—was more likely because of the task failing to measure anything reliably, with variation in performance being predominantly noise. The communicative-cues task, on the other hand, reliably measured individual differences but did not correlate with any of the other social-cognition tasks, suggesting that it does not share cognitive processes with them.

The overall low correlations between tasks speak against the idea that variation in a higher order (g) factor explains any meaningful differences between individuals. In the absence of shared variance, there is nothing that such a factor could explain. This pattern aligns with previous work and animal cognition research more broadly (Poirier et al., 2020). For example, Herrmann et al. (2010) found that only 10 of the 105 bivariate correlations between tasks of the PCTB were significant. Likewise, Völter, Reindl, et al. (2022) found that only three of 36 bivariate correlations between executive-function tasks were significantly different from zero. Compared with samples from work with human participants and from earlier ape studies (Herrmann et al., 2010; Hopkins et al., 2014; Völter, Reindl, et al., 2022), the sample we tested could be considered small. However, we collected a large number of data points for each individual, and our analytical approach explicitly accounted for measurement reliability. We therefore believe that the dominance of low correlations reflects a genuine finding rather than noise.

We did find a cluster of substantial correlations between the nonsocial tasks, which points to shared cognitive processes among these tasks. We think the best way to explicate this idea would be to adopt a process-level perspective and build computational models that specify the processes involved. A potential alternative, less cognitive explanation for individual differences could be associative learning: All tasks (except for the gaze-following task) involved differential reinforcement, and most tasks involved a reliable predictive cue signaling the reward location. On this account, individuals differed in performance because they differed in associative learning speed. This explanation predicts (a) improvement in performance in all tasks because individuals learned the predictive relations over time and (b) correlations of individual differences in learning rates across all tasks because some individuals learned faster than others. However, we did not find these predicted patterns: Some, but not all, tasks showed a group-level increase in performance over time (see Fig. 2; see also Bohn et al., 2023, Fig. 3), and individual-level learning rates were not broadly correlated across tasks (see Supplementary Fig. 15). In addition, for some of these tasks, earlier work also showed that associative learning alone is insufficient to explain apes’ performance (e.g., Call, 2004; Call & Tomasello, 1998). However, these arguments should not be taken to mean that associative learning plays no role in explaining performance in the tasks; they suggest only that performance is not reducible to it. Additional candidate subprocesses would be working memory or attention shifting.

A computational modeling approach would also allow for dealing with task impurity (i.e., multiple processes contributing to any task performance) and varying task strategies (including cognitive offloading strategies, which we observed in the self-ordered-search task with increasing linear search patterns over time). Models could be validated by testing predictions about which (yet to be designed) tasks should correlate because they are modeled to share a common set of processes.

Last, this study, alongside findings from Bohn et al. (2023), highlights that the origins of individual differences in great ape cognitive abilities most likely lie deeply embedded in the ontogenetic (and perhaps genetic) history of individuals. Efforts to explain these differences by using easily measurable variables, such as age, sex, or rank, proved unproductive. Of such easy-to-measure variables, only the housing group emerged as a relevant predictor across all tasks. Notably, in this study, “group” is not synonymous with “species”: The two chimpanzee groups differed substantially across tasks. The independent variability of groups from the same species underscores the importance of studying within-species variation rather than focusing solely on between-species differences (Van Leeuwen et al., 2018).

The study reported here underscores the presence of systematic and stable cognitive differences between individuals from the four great ape species. Such individual differences are systematically linked across domains but cannot be reduced to a single g factor. There is substantial variation between individuals from the same species, the origins of which are linked to stable characteristics shaped by ontogeny and/or genetics. Thus, these findings highlight the need for longitudinal studies that begin as early in life as possible to truly understand the developmental roots of individual differences in great ape cognition.

Supplemental Material

Supplemental material, sj-pdf-1-pss-10.1177_09567976261434817 for Individual Differences in Great Ape Cognition Across Time and Domains: Stability, Structure, and Predictability by Manuel Bohn, Christoph J. Völter, Daniel Hanus, Nico Eisbrenner, Johanna Eckert, Jana Holtmann and Daniel Haun in Psychological Science

Footnotes

Transparency

Action Editor: Tom Beckers

Editor: Simine Vazire

Author Contributions