Abstract

Conscious experiences appear to play a central role in human behavior, yet most neural processing occurs outside of consciousness. Understanding how the mind prioritizes information for consciousness is, therefore, crucial for theories of cognition. Prior research has largely focused on vision, but generalization is tenuous given the vastly different characteristics of the senses—particularly for audition, which lacks foveation and cannot be intentionally stopped. We examine the affective domain, for which prioritization is not well understood. In three experiments (two preregistered), 101 Hebrew-speaking adults completed a visual task with a stream of auditory pseudowords in the background. Occasionally a meaningful word appeared, and participants were asked about its presence. Using objective and subjective awareness measures, we found that neutral words were prioritized over negative words, regardless of task difficulty, intelligibility, and low-level features. These findings challenge theorizing and modal intuitions, and we discuss ways in which those can be reconciled.

Introduction

Our sensory environment is filled with sounds, yet given the limited capacity of conscious processing (Kahneman, 1973), much of the auditory input we encounter is managed by nonconscious processes we cannot access (Bargh & Morsella, 2008; Dehaene et al., 2006). In addition to effects like nonconscious priming, in which the processed information implicitly affects behavior (e.g., Draine & Greenwald, 1998; Kouider & Dupoux, 2005), nonconscious mental processes also contribute to the prioritization of what constitutes the contents of conscious awareness (for a review, see Sklar et al., 2021). This prioritization process is crucial, as it essentially shapes the contents that influence our thoughts, feelings, and behavior. Here, we examine how emotionally meaningful sensory information, known to serve as a key signal for the cognitive system (Pessoa, 2008; Pratto & John, 1991; Vuilleumier, 2005), is prioritized. Specifically, we ask whether the emotional valence of spoken words affects their probability of reaching conscious awareness and whether it is prioritized or inhibited compared with neutral words.

Although prioritization for consciousness has been previously studied in vision (for reviews, see Gayet et al., 2014; Sklar et al., 2021), much less is known about other modalities in general and about audition specifically (Dykstra et al., 2017; Faivre et al., 2017). Notably, unlike vision, audition lacks a foveation mechanism; humans cannot avert their “gaze” nor shut their ears, leaving the entire acoustic input always available for processing. Thus, audition requires efficient prioritization even more than other senses. It therefore captures a common but understudied case in which the cognitive system selects information.

Understanding the basic principles of auditory prioritization will also advance the theoretical discourse on nonconscious processing capacities (Kouider & Dehaene, 2007; Mudrik & Deouell, 2022). Some maintain that nonconscious processes are largely limited to low-level information, precluding the possibility that word meaning or valence will affect prioritization (Holender, 1986; Loftus & Klinger, 1992; Perruchet & Vinter, 2002). In contrast, other views suggest that many high-level processes can take place nonconsciously, allowing affect-based prioritization (Dehaene et al., 2006; Hassin, 2013; Merikle et al., 2001). Moreover, accounts of nonconscious processing depict it as short-lived, with limited ability for temporal integration—a prediction that could be directly tested with speech processing (Mudrik et al., 2014; Van Gaal et al., 2014).

Emotional influences on prioritization and postperceptual processing

Valence is a dimension central to human lives, for which the principles of prioritization are not well understood. One account may hold that detecting emotional information, especially negative, is important for adaptive behavior, and therefore such information is prioritized (Pratto & John, 1991; Vuilleumier, 2005). However, adaptive behavior could also benefit from filtering out irrelevant negative information—information that might engage executive networks and interfere with performance (Cohen et al., 2010).

The prioritized negativity account is supported by studies showing the impact of valence on visual detection (Gaillard et al., 2006) and performance in Stroop-like tasks, even when irrelevant (Algom et al., 2004). In the auditory domain, negative words disrupt performance in Stroop-like tasks (Bertels et al., 2011), cause attention-shifting (Bertels et al., 2010), and are associated with faster recognition times (Gao et al., 2022; Goh et al., 2016).

However, these effects of valence may reflect postperceptual influences occurring only after awareness is reached, rather than nonconscious selection. In studies addressing nonconscious processing, negative printed words and images were found to break suppression later than neutral ones, supporting the avoidance-of-interference position (Doswell et al., 2025; Prioli & Kahan, 2015; Yang & Yeh, 2011; but see Sklar et al., 2012). Yet even in such studies, postperceptual influences may affect detection time (Gayet et al., 2014; Lanfranco et al., 2023; Stein & Peelen, 2021). It is thus unclear whether nonconscious processes prioritize or deprioritize emotional contents.

Testing spoken-word prioritization

To understand prioritization of emotional contents, one needs to establish conditions in which speech stimuli often go unnoticed, thus allowing nonconscious processing, and then test which stimuli are more likely to reach awareness. Most auditory paradigms creating such conditions involve either simultaneous speech streams (e.g., dichotic listening; Broadbent, 1958; Cherry, 1953) or degradation of the acoustic quality of the stimuli to a (sub)liminal level (Kouider & Dupoux, 2005; Lamy et al., 2008). Generally speaking, such approaches have not, so far, provided strong evidence that high-level speech features can be processed before selection (Holender, 1986; Lachter et al., 2004). However, within-modality competition or stimulus degradation diminishes the available information to be processed, thus limiting conclusions to rather weak inputs.

An alternative way is to examine supraliminal and nondegraded stimuli that participants fail to notice, as in the well-documented inattentional-blindness phenomenon (Mack & Rock, 1998; Most et al., 2005). Notably, for us, a parallel

To understand whether and how emotional stimuli are prioritized to consciousness, we present a paradigm that allows for multiple occurrences of inattentional deafness without degradation or masking. In this paradigm, participants perform a visual one-back task while random pronounceable pseudowords are played, completely audible and undegraded. In rare target trials, a pseudoword is replaced with a real, meaningful word, varying in valence, and participants are asked whether they heard it. Prioritization in this context is measured by the ability to consciously detect words. To preview our results, across three experiments (two preregistered), we found a consistent tendency to detect negative words less often, which supports the avoidance-of-interference account and suggests that processing of spoken-word valence can precede conscious awareness.

Research Transparency Statement

General disclosures

Study 1 disclosures

Study 2 disclosures

Study 3 disclosures

Experiment 1

Method

Participants

Twenty-nine Hebrew University students (

Stimuli

The experiment was programmed using PsychoPy Builder (Peirce et al., 2019). Visual stimuli were displayed on a 24-in. screen with a refresh rate of 144 Hz (BenQ XL2411P), and auditory stimuli were played via two loudspeakers at angles of 45° and 315° from the location of the participant and a distance of roughly 90 cm.

Visual stimuli

Eighty images of purple Greeble figurines (Fig. 1; Gauthier & Tarr, 1997) were used for the visual one-back task. Greeble images (6.2 × 7 cm) were presented against a colored background. In the middle of the screen, a gray square occluded the Greeble after its presentation ended (Fig. 1). The backgrounds depicted different environments (e.g., desert, city, forest) to make the visual experience more engaging for participants.

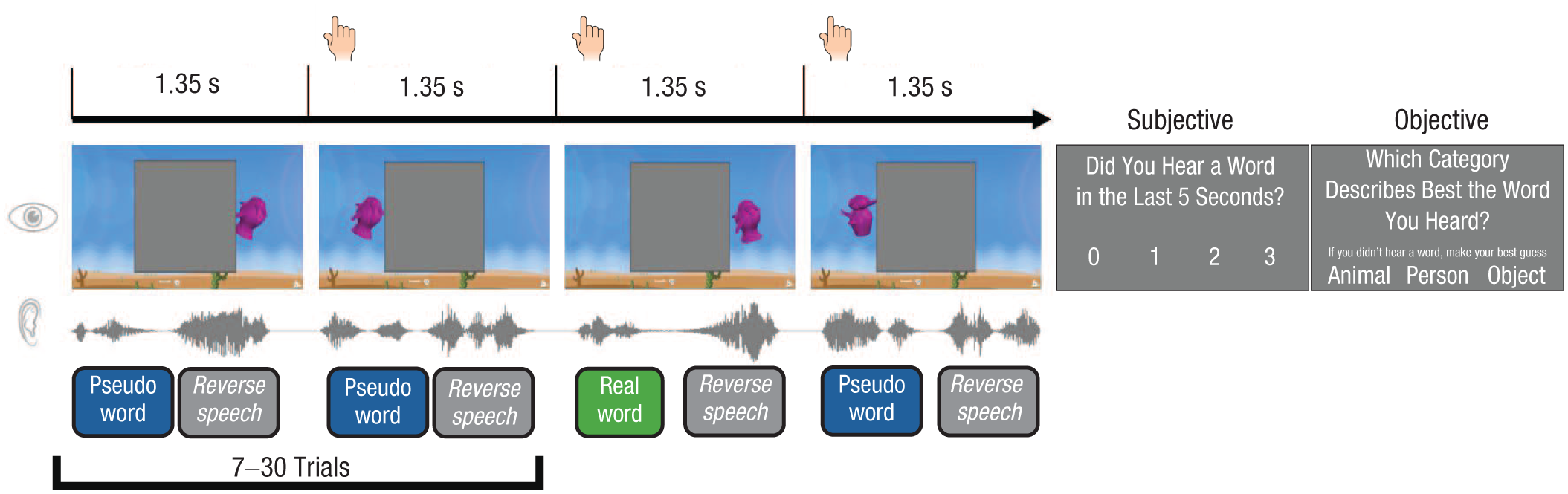

Description of the dual task used in all experiments. Participants performed a visual one-back task (same/different decision) on moving purple Greeble figurines while a stream of pseudowords was played. After a random number of trials (at least seven and no more than 30), a word was played instead of a pseudoword, and participants completed subjective and objective awareness tests regarding its presence (the actual display was in Hebrew, with the meaning of the 0–3 labels presented; see the Method section in Experiment 1). Greebles courtesy of Michael J. Tarr, http://www.tarrlab.org/.

Spoken stimuli

We selected 72 Hebrew words from a sample of words with known valence ratings (Armony-Sivan et al., 2013). Words were chosen to form a bimodal distribution with 36 neutral words (e.g.,

To validate word valence and to get measures of pronunciation intelligibility, we ran an online sample (

Pseudoword stimuli

Pseudowords were 238 random Hebrew nouns for which two consonants were replaced to create nonexistent yet pronounceable nonwords (for example, the word

Procedure

Upon arriving in the lab, participants signed a consent form and sat at a distance of 60 cm from the screen in a quiet chamber. They were informed that the experiment was aimed at “understanding visual processing under noisy conditions.” This was done to ensure that participants perceived the visual task as the primary task.

Visual (one-back) task

During each trial of this task, a moving Greeble appeared on one side of the screen (left on odd trials and right on even trials), overlaid on a colored background (Fig. 1). The Greeble moved out from behind the gray square and back in over 900 ms in the first two training blocks and over 500 ms in the last training block and all following trials. The interval between Greeble onsets was 1,500 ms. On each trial, the Greeble had a 50% chance of being identical to the previous Greeble and a 50% chance of being a newly sampled Greeble. The task was to indicate with a button press whether the Greeble had changed or remained identical to the previous one.

The experiment started with three training blocks of 18 trials to ensure proper understanding of the tasks. The first training block included no sound. From the second training block onward, a randomly sampled pseudoword was played starting 0 to 250 ms after Greeble onset (sampled from a uniform distribution with 251 steps), with no instructions about the auditory stimuli. One hundred ms after the pseudoword ended, a randomly sampled reversed pseudoword was played to dampen echoic memory effects.
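The auditory timing within a trial can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' PsychoPy implementation: the function name `sample_trial_timing` and the pseudoword duration passed to it are hypothetical, and only the 0 to 250 ms jitter and the 100-ms gap come from the text.

```python
import random

MAX_JITTER_MS = 250     # pseudoword starts 0 to 250 ms after Greeble onset (from the text)
REVERSED_GAP_MS = 100   # reversed pseudoword starts 100 ms after pseudoword offset (from the text)


def sample_trial_timing(pseudoword_duration_ms, rng=random):
    """Return auditory event times (ms, relative to Greeble onset) for one trial.

    `pseudoword_duration_ms` is a hypothetical input; real durations
    depend on the recorded stimuli.
    """
    # randint is inclusive at both ends, giving 251 equally likely onsets
    pseudoword_onset = rng.randint(0, MAX_JITTER_MS)
    pseudoword_offset = pseudoword_onset + pseudoword_duration_ms
    reversed_onset = pseudoword_offset + REVERSED_GAP_MS
    return {
        "pseudoword_onset": pseudoword_onset,
        "pseudoword_offset": pseudoword_offset,
        "reversed_onset": reversed_onset,
    }
```

For example, `sample_trial_timing(600, random.Random(1))` returns one set of onsets for a hypothetical 600-ms pseudoword; passing a seeded generator keeps the schedule reproducible.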

A counter of the number of correct one-back responses was presented in the middle of the screen. The counter font color indicated whether the last press was correct or not (red for mistake, green for correct). Each training block ended with the overall one-back success rate presented to participants, to encourage them to engage more with the visual task. If participants failed to reach 50% accuracy in the second training block, the training block was repeated.

Auditory (word-detection) task

Following the three training blocks, participants were informed of the auditory task with the following instruction: “We also want to understand how you experience the sounds you hear. For that, the experiment will stop from time to time, and we will ask you whether you heard a meaningful word in the last 5 seconds.” Meaningful words were defined as Hebrew words that are not first names and have at least two syllables. Participants were informed that a question may follow a nonword probe, to ensure they do not think a probe follows only words, and they were asked to always report awareness. Participants were not given any feedback for their objective or subjective responses.

Participants then performed six blocks of the one-back task that included the speech sounds, which were occasionally interrupted with an awareness probe. Each block included 210 trials. Eighteen of the trials (probe trials) were followed by awareness tests—12 followed meaningful words, and six followed pseudowords (catch trials). Thus, across all blocks, 72 awareness probes followed a target word and 36 followed only pseudowords.
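The probe counts follow directly from the block structure. The short sketch below reproduces that arithmetic; the helper name `probe_totals` is hypothetical and is used only for illustration.

```python
def probe_totals(n_blocks, trials_per_block, targets_per_block, catch_per_block):
    """Summarize the number of probes implied by a block design."""
    probes_per_block = targets_per_block + catch_per_block
    return {
        "probes_per_block": probes_per_block,
        "total_trials": n_blocks * trials_per_block,
        "target_probes": n_blocks * targets_per_block,
        "catch_probes": n_blocks * catch_per_block,
        # share of one-back trials that are followed by an awareness probe
        "probe_rate": probes_per_block / trials_per_block,
    }


# Experiment 1 values as stated in the text: 6 blocks, 210 trials, 12 targets, 6 catch
exp1 = probe_totals(n_blocks=6, trials_per_block=210,
                    targets_per_block=12, catch_per_block=6)
```

With these inputs, `exp1["target_probes"]` is 72 and `exp1["catch_probes"]` is 36, matching the totals reported above.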

Meaningful words appeared after a quasirandom number of trials sampled from an exponential distribution with a minimum of seven and a maximum of 30. After another one-back trial (2,220–2,730 ms from word offset), participants were probed for subjective and then objective awareness of the presented word. The temporal lag between the word and the probe question was required because stopping immediately after the word would allow participants to retrieve it from echoic memory without being aware of it initially (we return to this issue in the General Discussion).
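One way to draw such gaps is rejection sampling from a shifted exponential distribution, sketched below. The bounds of seven and 30 trials come from the text; the scale parameter `mean_extra` and the function name `sample_gap` are assumptions made for illustration, because the exact parameters of the distribution are not given here.

```python
import random

MIN_GAP = 7    # minimum number of trials before a target word (from the text)
MAX_GAP = 30   # maximum number of trials before a target word (from the text)


def sample_gap(mean_extra=5.0, rng=random):
    """Draw the number of one-back trials preceding the next target word.

    `mean_extra` is a hypothetical scale (mean number of trials beyond the
    minimum before truncation); it is not taken from the source.
    """
    while True:
        # expovariate takes the rate, i.e. 1 / mean
        gap = MIN_GAP + int(rng.expovariate(1.0 / mean_extra))
        if gap <= MAX_GAP:
            return gap  # reject and redraw anything beyond the upper bound
```

An exponential gap distribution keeps the hazard of a target roughly flat across trials, so participants cannot easily anticipate when a word will occur; the truncation trades some of that unpredictability for a bounded block length.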

For the subjective awareness test, we used a modified version of the visual Perceptual Awareness Scale (PAS; Sandberg & Overgaard, 2015). This scale included four options that were described by the experimenter and appeared on the screen with the Hebrew definitions every time the probe was presented: 0 (“I only heard meaningless syllables”), 1 (“I’m not sure if I heard a meaningful word, and I can’t repeat it”), 2 (“I think I heard a meaningful word, but unsure I can repeat it”) and 3 (“I heard a meaningful word and I can repeat it”). The objective test included a three-alternative forced-choice decision (3AFC) between the word category and two foil categories, which were predetermined so that each of the possible answers appeared one third of the time as a correct answer and two thirds of the time as a wrong answer.
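The balancing constraint on the 3AFC options can be met in several ways. The sketch below shows one possibility, a cyclic rotation of foils, and is not necessarily the scheme used in the study. The category labels are placeholders, and the sketch assumes an equal number of target words per category.

```python
from collections import Counter


def build_3afc_options(categories):
    """Assign each target category two foils by cyclic rotation.

    Returns a dict mapping the correct category to its three response options.
    """
    k = len(categories)
    if k < 3:
        raise ValueError("at least three categories are needed for a 3AFC")
    return {
        cat: [cat, categories[(i + 1) % k], categories[(i + 2) % k]]
        for i, cat in enumerate(categories)
    }


def appearance_counts(options):
    """Count how often each category is the correct answer and how often it is shown."""
    correct, shown = Counter(), Counter()
    for target, opts in options.items():
        correct[target] += 1
        shown.update(opts)
    return correct, shown


# Placeholder labels; the real word categories are not listed in this section
options = build_3afc_options(["cat_A", "cat_B", "cat_C", "cat_D", "cat_E"])
correct, shown = appearance_counts(options)
```

Under this rotation every category is shown three times for each time it is correct, so each answer is correct on one third of its appearances and a foil on the remaining two thirds. The on-screen order of the three options would still need to be shuffled separately.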

Data preparation and analysis approach

To determine whether hearing words was more likely after a word was presented than after a pseudoword, we performed an ordinal regression for every subject, with the subjective response as the dependent variable and the trial type (catch or target) as the independent variable. We calculated

To test whether the subjective reports indeed reflected objective knowledge of word identity, we used a mixed logistic regression to predict the response accuracy (correct or incorrect) in the objective 3AFC with the subjective report as a single predictor, alongside a random by-subject intercept. A linear contrast was conducted on the model to examine whether objective accuracy increased with PAS score.

For the analysis of the valence effect, we analyzed the trial-level data with a mixed ordinal regression with the subjective response as the dependent variable and the word-valence rating obtained in Preliminary Experiment 1 as the independent variable, with a random by-subject intercept and random slope for valence. As variations in intelligibility could influence detection, we used the mean subjective intelligibility ratings from Preliminary Experiment 1 (see the Supplemental Material) as a covariate, alongside other measures that might influence conscious detection: word-arousal ratings and the number of syllables (two or three). Valence, arousal, and intelligibility were continuous and

A small minority of trials (3.98%) that were responded to with “3” (

To report the influence of specific predictors in the ordinal regression, we used odds ratios (

Results

General task performance

Before proceeding to our main hypothesis regarding word valence, we examined the general performance in both visual and auditory tasks. Participants reached a mean accuracy of 78.2% (

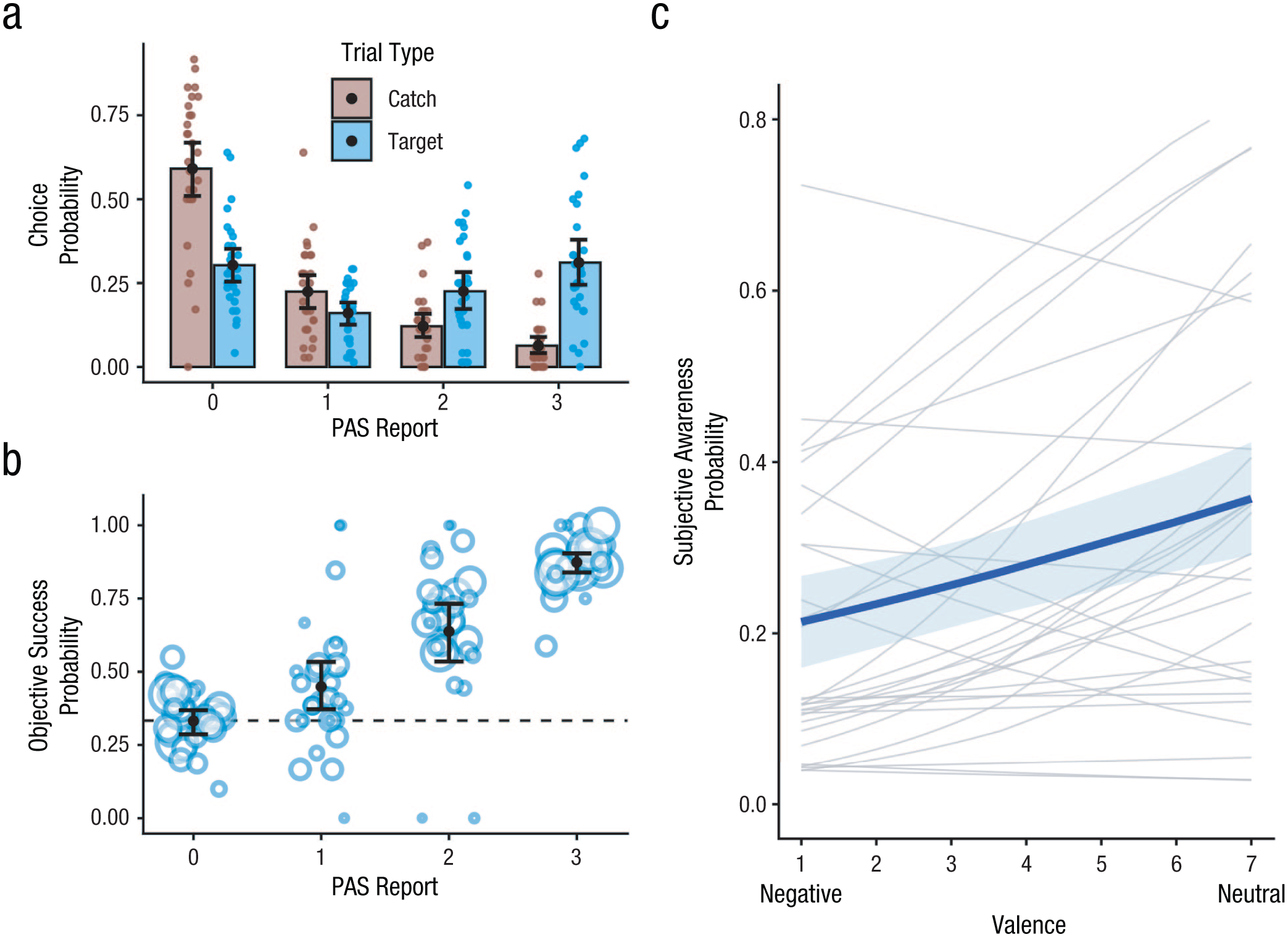

Experiment 1 results. Shown in (a) is the probability of choosing each response in the Perceptual Awareness Scale (PAS) after hearing a target word or catch pseudoword. Each point represents the response rate of 1 participant, and the means are represented by bars that sum up to 1 within each trial type. Shown in (b) is the objective success in the 3AFC test (category identification) per subjective PAS report for targets. Each dot represents 1 subject, and dot size reflects the number of responses with a given option (0, 1, 2, or 3) for that subject; larger dots mean that the success rate was based on more trials. In (c) we show the predicted rate of responding “3” in the PAS (“I heard a meaningful word and can repeat it”; blue bold line) for different levels of word valence based on mixed ordinal regression. Note that this regression was based on all 0 to 3 observations, and we present the predictions for one level (3) for clarity. The gray lines represent regression lines derived from single-subject ordinal regressions with the same covariates used in the group mixed ordinal regression. All error bars and shaded areas represent 95% confidence intervals. The predictions are plotted across the observed range of valence scores.

The success rate in the objective task was at chance level when participants pressed “0” (0.333, 95% confidence interval [CI] = [0.290, 0.376]) and was very high when they pressed “3” (0.875, 95% CI = [0.840, 0.909]), with accuracy increasing monotonically and significantly with the subjective PAS rating (linear contrast:

Main valence analysis

The model with valence and arousal yielded an Akaike information criterion (AIC) of 5,115.1, which was significantly lower (i.e., better) than a null model including only intelligibility and number of syllables (AIC = 5,125.7, χ2(2) = 14.58,
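The reported AIC values and the likelihood-ratio statistic can be checked against each other. Because AIC = 2k − 2 log L, the AIC difference between nested models equals the likelihood-ratio χ2 minus twice the number of added parameters. The sketch below applies this identity to the values quoted above; the function names are hypothetical, and the tail probability it computes is a derived quantity, not a figure quoted from the source.

```python
import math


def lrt_from_aic(aic_null, aic_full, extra_params):
    """Recover the likelihood-ratio chi-square from the AICs of two nested models.

    AIC_null - AIC_full = chi2 - 2 * extra_params, so chi2 is the AIC
    difference plus twice the number of added parameters.
    """
    return (aic_null - aic_full) + 2 * extra_params


def chi2_sf_df2(x):
    """Upper-tail probability of a chi-square variable with exactly 2 df.

    For 2 df the survival function has the closed form exp(-x / 2).
    """
    return math.exp(-x / 2.0)


# Values quoted in the text: null AIC = 5,125.7, full AIC = 5,115.1, 2 added predictors
chi2_from_aic = lrt_from_aic(5125.7, 5115.1, extra_params=2)
p_from_reported_chi2 = chi2_sf_df2(14.58)
```

The statistic recovered from the AICs agrees with the reported χ2(2) = 14.58 to within the rounding of the AIC values, which is a quick consistency check on the model comparison.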

Crucially, the coefficient (fixed effect) of valence was positive, indicating that participants were more likely to consciously detect neutral words compared with negative ones ( (

Exploratory control analyses

To examine the robustness of the results to specific analysis choices, we repeated the analysis with different sets of covariates that may influence the conscious detection rate. These included the time elapsed since the previous probe, the root-mean-square of the sound amplitude, log frequency in language, word category, and block number. We also tested different ways of formulating the dependent and independent variables—as continuous rather than ordinal, without the middle levels of awareness (that is, only 0 and 3 ratings). Finally, to ensure that response bias is not behind the effect, we used the objective success (

Experiment 2

Experiment 1 suggested that neutral words are more likely to be consciously recognized than negatively valenced words while participants attended a demanding visual task. The second experiment served as a preregistered replication and extension of Experiment 1 with additional words while also testing the intelligibility and valence ratings of a new and larger sample, with additional analyses to control for possible low-level influences.

Method

Participants

Participants (

Stimuli

The list of words was identical to Experiment 1, with the following changes. We excluded 18 words that were rarely identified in Experiment 1 and included 21 new words with ratings falling between neutral and negative. This resulted in a distribution that encompasses the entire range between negative and neutral. In total, we had 75 words that were used in this experiment. All previously reported matching procedures were conducted here as well, and we used the average intelligibility and valence rating from a new independent online sample (

As in Experiment 1, no significant relationships were found between word valence and language frequency, number of syllables, intelligibility measures, or duration. We did, however, observe a marginally significant negative relationship between word valence and intelligibility, which ran in the opposite direction to the effect on conscious detection found in Experiment 1. See Table S2 in the Supplemental Material for full details on the words and the relationships between the different factors.

Procedure

The experiment was conducted and analyzed exactly as in Experiment 1. Participants performed six blocks, each including 215 one-back trials, 12 to 13 target words (75 in total), and 6 to 7 catch probes (39 total).

Data preparation

Preregistered inclusion criteria were as follows. We looked for higher subjective awareness of words in target trials compared with catch trials, which did not include meaningful words before the probe (using the same by-subject regression from Experiment 1). All participants passed this criterion (

Analysis of low-level auditory artifacts

In this experiment, we tested models with multiple phonetic and acoustic covariates that could have provided an alternative explanation for the correlation between PAS ratings and valence. These covariates may not, however, exhaust the full space of possible auditory confounds.

Because of the complexity of auditory signals, we took a computational approach to testing for possible confounds by relying on an artificial neural network model of speech representations, wav2vec 2.0 (Baevski et al., 2020; Hebrew version used: https://huggingface.co/imvladikon/wav2vec2-xls-r-300m-hebrew). Wav2vec is used for speech-to-text tasks and encodes speech audio in latent spaces. We used a fine-tuned Hebrew version of wav2vec, allowing us to extract vectors representing low- and high-level linguistic information. The first layer of wav2vec is tuned mostly to acoustic features (i.e., it correlates with features of the spectrogram), and later layers also encode phonetic, linguistic, and identity-related features (Pasad et al., 2021).

We used principal component analysis (PCA) to reduce the dimensionality of each layer to 10 components, which together explained over 60% of the variance in the representation. Because the combination of many components may be meaningful, we entered the first 10 components representing each layer into one regression model, each time predicting intelligibility or valence (a total of four regressions). Beyond demonstrating no correlation between valence and speech representations, we also tested whether the main analysis held while we statistically controlled for the first 20 PCA features (in one model for components 1–10, and another for components 11–20, because of convergence issues). This was done for both high-level and low-level features.

Results

General task performance

Participants reached a mean accuracy of 76.1% (

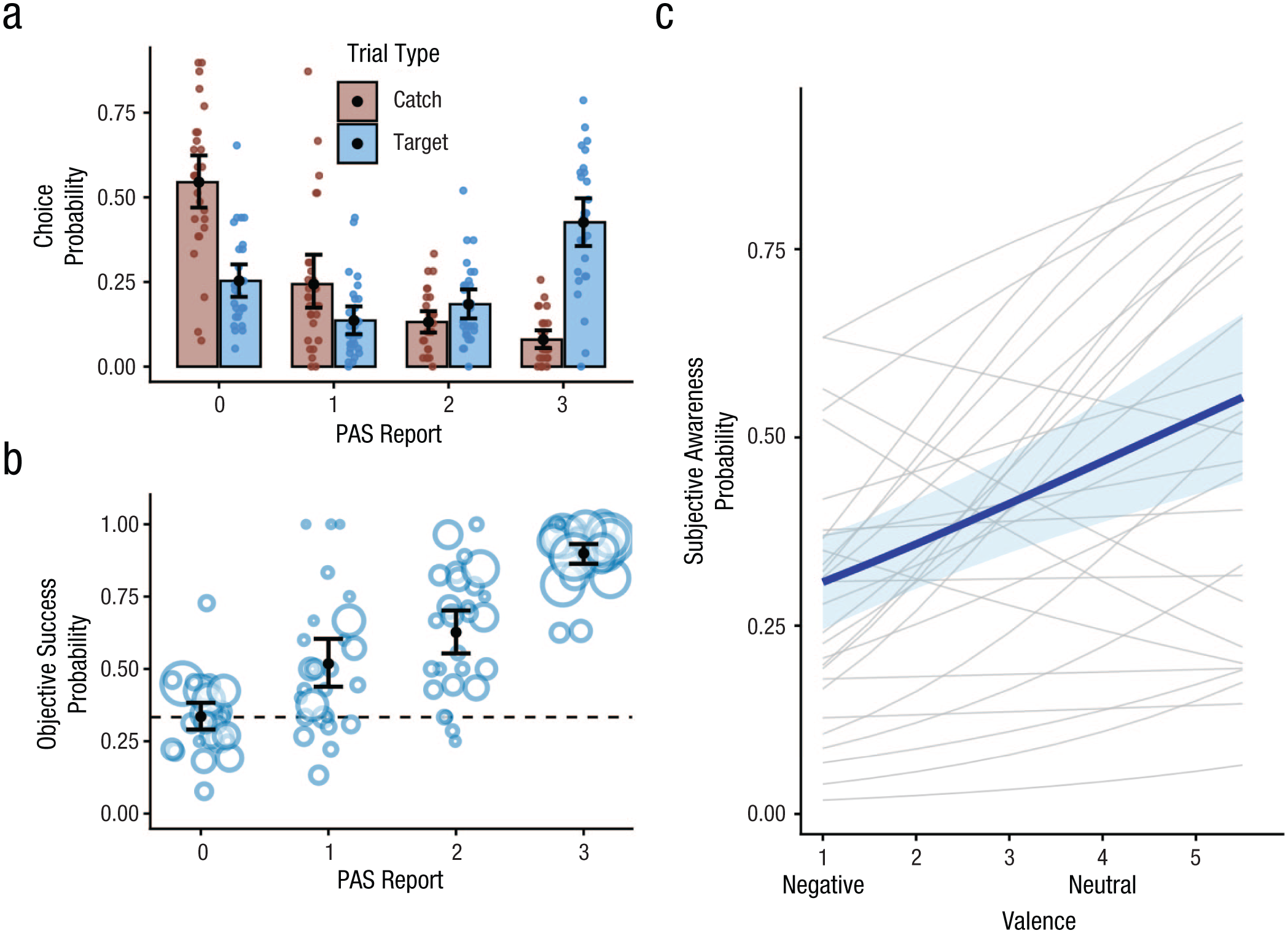

Experiment 2 results. The rate of choosing each response in the Perceptual Awareness Scale (PAS) after hearing a target word or catch pseudoword is shown in (a). Each point represents the response rate of one participant, and the means are represented by bars that add up to 1 within each trial type. In (b) we show objective success in the 3AFC test (category identification) per subjective response for targets. Each dot represents 1 participant, and dot size reflects the number of responses, so the success rate for larger dots was based on more trials. In (c), the blue (bold) line represents the predicted rate of responding “3” in the PAS (“I heard a meaningful word and can repeat it”) for different levels of word valence. The regression was based on all 0 through 3 observations, and we present the predictions for one level (3) for clarity. The gray lines represent regression lines derived from single-subject ordinal regressions with the same covariates used in the group mixed ordinal regression. Error bars and shaded areas reflect 95% confidence intervals. Note that the

Main valence analysis

To examine our main question, we ran the same model from Experiment 1. The model with valence and arousal (AIC = 4,995.1) was superior to the null model without these factors (AIC = 5,011.8, likelihood-ratio test: χ2(2) = 20.76,

We then separately tested whether the individual model-estimated odds of the subjective report were higher given an increase in valence or arousal. The coefficient of valence replicated Experiment 1, demonstrating an overall tendency to consciously detect neutral words more than negative words (

Exploratory control analyses

To ensure robustness to different analysis choices, we reran all the alternative models tested in Experiment 1 and replicated the results, including the use of objective performance as a dependent variable (see Table S6a–i in the Supplemental Material for full details). Additionally, we ran the same analysis with half the words each time, to inspect whether the effect persists within each valence level (only negative, or only neutral). The slope of valence was positive when using only negative words and also when using only neutral words (both

Additional control analyses were conducted to exclude the influence of different phonetic features in the words used, like the number of bilabial, alveolar, fricative, and plosive consonants (each separately), and the overall number of all vowels in the word. The model yielded the same significant influence of word valence (

Next, we examined whether the valence effect was specific to the words used in Experiment 1 (“old”), or whether it occurred for the 21 newly added words. We used the same model but coded each word as

The last analysis tested the correlation between word valence and high- or low-level factors derived by lower and higher layers of wav2vec-based representations of the stimuli (see the Method section). When we tested word-intelligibility ratings, as a sanity check, we found that it was significantly associated with both low- and high-level features of words (as would be expected), as encoded by wav2vec—low level:

As a complementary approach, we tested single correlations between each feature and valence, this time using PCA with 50 features, which explained over 95% of the variance. None of the 50 components in either the high- or the low-level layers was strongly correlated with word valence (all |

Most important, the effect of valence remained strong when we controlled for high-level and low-level representations (all

Interim discussion

Experiments 1 and 2 showed that negative spoken words reach awareness less often when one is performing a demanding task, a pattern that seems somewhat incongruent with previous studies showing increased attention to negative stimuli (e.g., Bertels et al., 2010; Gao et al., 2022; Vuilleumier, 2005). This pattern may reflect the fact that the concurrent visual task was demanding, rather than a property of nonconscious cognition. If so, it should disappear or even reverse when cognitive load is reduced. Alternatively, nonconscious prioritization processes might be fixed and unaffected by load.

Experiment 3

In this experiment, we contrasted the one-back primary task of the previous experiments with a simple perceptual task, testing for a load-by-valence interaction. Participants completed the same auditory-detection task, with an identical design and stimuli to those used in Experiment 2.

Method

Participants

Fifty-five participants performed this study (

Stimuli and procedure

The stimuli, number of trials, and procedure were identical to those used in Experiment 2, with one exception: In the first or second (counterbalanced between participants) half of the experiment, a different task was employed instead of the one-back task. The Greeble identity did not matter for this task, and participants were instructed to identify whether the Greeble was facing upward or inverted (which occurred with a 50% probability). The one-back task was defined as

Data preparation

For each participant, we conducted an ordinal regression with the subjective response as the dependent variable and the trial type (catch or target) as the independent variable. Consistent with our preregistration, we excluded 11 participants who had nonsignificant (

For the analysis of load and valence effects, we tested a model that included word-valence rating (from Experiment 2), load condition (high or low, within-subject), and their interaction (whether task load interacted with the valence effect). Additional preregistered covariates included word frequency, intelligibility, number of syllables (two or three), block number, and a between-subject factor with the identity of the first load condition (high or low), because the order of tasks may affect the results.

Results

General task performance

The overall accuracy was 66.1% (

In the auditory task, participants consciously detected 35.3% of the words (

Main valence analysis

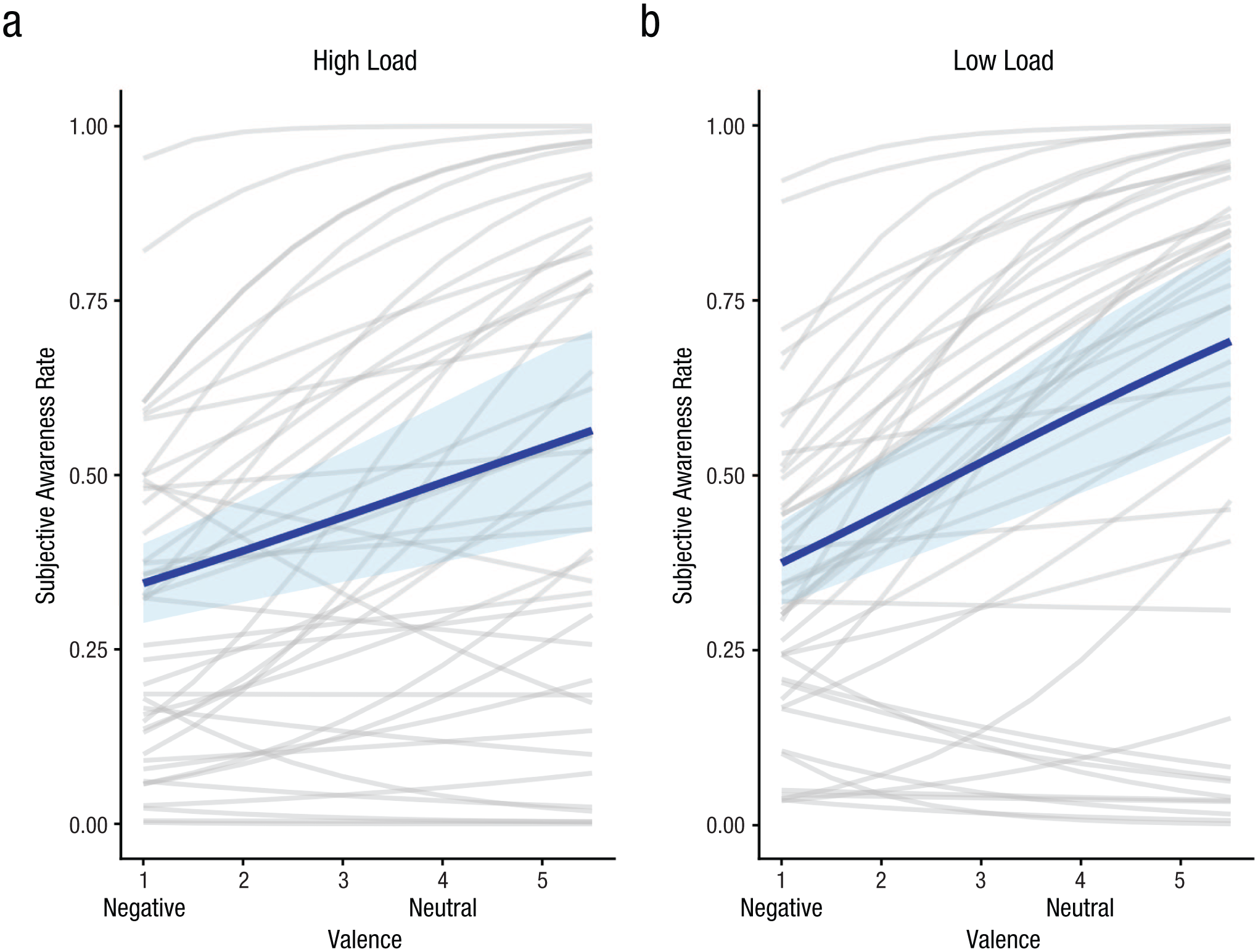

The coefficient of word valence in the model (reflecting a main effect across load conditions) was significant and positive (

Experiment 3 results. The blue (bold) line is the predicted rate of responding “3” in the Perceptual Awareness Scale (PAS; “I heard a meaningful word and can repeat it”) for different levels of word valence, divided by experimental conditions (the shaded area represents 95% confidence intervals). In (a) we depict the predictions for the high-load condition (one-back task), and in (b) we depict the predictions for the low-load condition (upright or inverted decision). Individual gray lines are derived from within-subject ordinal regressions with the covariates used in the group mixed ordinal regression, to illustrate individual differences.

Exploratory control analyses

Although the issue was orthogonal to our main question, we also tested and found no main effect of load condition on the conscious detection rate, suggesting that even an easy task may be distracting enough to prevent awareness of otherwise audible stimuli (low vs. high load;

As an additional (not preregistered) control analysis, we also tested the effect while controlling for other semantic dimensions that may influence awareness: category used (categorical with 9 levels), animacy (dichotomous), concreteness (continuous), and danger (dichotomous). The effect of valence remained significant even when we accounted for other semantic categories (

General Discussion

Our daily lives are filled with sounds presented in vibrant visual environments that prevent us from noticing everything we hear. Here, we tested whether the valence of completely audible and highly intelligible Hebrew words affects prioritization for consciousness. Across three experiments, negative words were detected less often than neutral words during the execution of another task. These results were similar for objective and subjective measures of awareness, and they held with and without controlling for various lower-level word properties. The effect is also not an “ease of identification” effect (Lanfranco et al., 2023) because negative words were not associated with lower pronunciation intelligibility. In addition, within the range of task difficulties manipulated here, there was no evidence that the valence effect depended strongly on the task-load manipulation we used. Thus, at least in the context of performing another task, we nonconsciously prioritize negative information less often, compared with neutral information.

The prioritization of neutral over negative spoken words may seem incompatible with studies showing increased attention and faster auditory-recognition times for emotional stimuli (Bertels et al., 2010; Gao et al., 2022; Vuilleumier, 2005). One possible explanation, which can be investigated further, is that consciously experiencing negative information is costly, and the cognitive system sometimes opts not to pay this price. This hypothesis is inspired by the observation that negative stimuli impair performance even when goal irrelevant (Algom et al., 2004; Pratto & John, 1991). Negative information has indeed been shown to take longer to break visual suppression (Doswell et al., 2025; Prioli & Kahan, 2015; Yang & Yeh, 2011). For example, Prioli and Kahan (2015) have shown that when negative words are initially suppressed using binocular rivalry, they are detected later than neutral words. Interestingly, supraliminal presentation resulted in faster RTs to negative words, supporting a dissociation between postperceptual and prioritization mechanisms.

Although we have provided initial insights into speech prioritization, we cannot yet conclude whether the effect involves active suppression of negativity, facilitation of neutral contents, or another mediating mechanism, and this is a topic for future investigations. Regardless of directionality, the fact that valence was associated with the likelihood of conscious detection suggests that spoken-word meaning is represented before awareness is reached, consistent with semantic-speech processing without awareness. Moreover, because nonconscious speech processing requires the system to integrate linguistic and acoustic units over time, our results suggest a time frame of (at least) a few hundred milliseconds (Dupoux et al., 2008; Mudrik et al., 2014; Van Gaal et al., 2014).

Potential limitations and alternative explanations

One common caveat in prioritization studies is the need to control for confounding features that may correlate with valence. Here, we provide a useful approach for future speech studies to address such issues. Alongside controlling for semantic, phonetic, and acoustic variables of choice, we also tested a deep-network representation of all acoustic-phonetic features, avoiding a priori feature selection.

Another possible limitation concerns the timing of awareness probes, which occurred one trial after the word to prevent retrieval from echoic memory. Valence might therefore have influenced memory rather than awareness. This would mean that participants heard the words consciously yet selectively and immediately forgot their presence despite their relevance. In the words of Most et al. (2005) in their seminal inattentional-blindness study, this is “conceivable, but such an explanation obscures the meaning of conscious perception” (p. 238). Regardless, even more skeptical readers should find the results intriguing, as they indicate an effect of valence on reportability merely 2 s after presentation.

Our choice to focus here on negative and neutral words also introduces a limitation. Specifically, our data contain no information about very positive words, nor do we have information on taboo words (Bertels et al., 2011), both of which might deviate from the linear effect we found. Investigating even more diverse semantic categories is an important avenue for our future understanding of prioritization. It is also important to note that these findings are based on relatively young Hebrew speakers, and the generalizability of the findings to other populations remains to be tested. Last, an important avenue for future work on nonconscious speech prioritization is understanding the role of task demands and task sets in prioritization beyond the relatively demanding tasks used here.

Conclusion

Despite the importance of speech as a source of information, the factors that promote awareness of speech are not well understood, especially compared with factors affecting visual awareness. We have here documented evidence for semantic and affective speech processing that influences access to awareness well beyond the mere intelligibility and acoustic features of the word. Thus, when one is performing another task, nonconscious mechanisms may rely on affective valence to determine conscious access to speech. The reduced detection of negative contents calls for reconsideration of the way emotional valence shapes the boundary between nonconscious and conscious perception and suggests that factors affecting conscious and nonconscious processing may diverge.

Supplemental Material

Supplemental material, sj-docx-1-pss-10.1177_09567976261434113 for Conscious Detection of Spoken Words Depends on Their Valence by Gal R. Chen, Zaheera Maswadeh, Leon Deouell and Ran R. Hassin in Psychological Science

Footnotes

Transparency

Leon Deouell and Ran R. Hassin contributed equally to this manuscript.
