Abstract

It is important for people to feel listened to in professional and personal communications, and yet they can feel unheard even when others have listened well. We propose that this feeling may arise because speakers conflate agreement with listening quality. In 11 studies (N = 3,396 adults), we held constant or manipulated a listener’s objective listening behaviors, manipulating only after the conversation whether the listener agreed with the speaker. Across various topics, mediums (e.g., video, chat), and cues of objective listening quality, speakers consistently perceived disagreeing listeners as worse listeners. This effect persisted after controlling for other positive impressions of the listener (e.g., likability). This effect seemed to emerge because speakers believe their views are correct, leading them to infer that a disagreeing listener must not have been listening very well. Indeed, it may be prohibitively difficult for someone to simultaneously convey that they disagree and that they were listening.

In organizational or group decision-making contexts, people want to be heard, to feel that others have listened to and understood their views (Lloyd et al., 2015; Skarlicki & Folger, 1997). Feeling listened to fosters trust, social connection, and collaborative decision-making (Bergeron & Laroche, 2009; Curhan et al., 2006; Itzchakov et al., 2017; Stine et al., 1995), whereas feeling unheard makes people frustrated, angry, and unmotivated to resolve issues (David & Roberts, 2017; Kriz et al., 2021; Levinson et al., 1997; Lloyd et al., 2015). Not surprisingly, both scholars and practitioners have sought to identify the factors that promote perceived listening, defined as a speaker’s judgment of how well or poorly someone listened to them (Collins, 2022; Fitzgerald, 2021; Kluger & Itzchakov, 2022; Yip & Fisher, 2022).

Scholars tend to agree that people feel listened to when listeners focus on them (Itzchakov et al., 2018), are open and receptive (Yeomans et al., 2020), and try hard to understand the speaker’s views rather than asserting their own (Kluger & Mizrahi, 2023). However, although good listeners may not emphasize their own views during a conversation, speakers may know, infer, or learn listeners’ views. For example, speakers may know listeners’ views before talking to them, may infer those views from listeners’ echoes of agreement, or may learn about them after a conversation, as would often be the case when people must reach consensus, make a decision, or vote on a policy.

In this article, we are interested in how speakers’ inferences about a listener’s views affect perceived listening. Existing research has not addressed this question directly. Indeed, a listener’s views on a topic appear nowhere in the literature’s lists of factors that affect perceived listening (Lipetz et al., 2020). Moreover, scholars have suggested that good listening can compensate for interpersonal and intergroup differences (Bruneau & Saxe, 2012; Santoro & Markus, 2023), implying that whether a listener agrees or disagrees with a speaker is independent of how well they can signal that they have listened. But is this the case? We contend that it is not. Drawing on the theory of naive realism, we suggest that speakers may struggle to feel that someone has listened to them when they learn that the person disagrees with them. Consequently, all else equal, speakers judge a disagreeing listener as a worse listener.

Research on naive realism finds that people often experience their views as objective and correct (Robinson et al., 1995; Ross & Ward, 1996). They believe that they are reasonable and rational and that they process the world in an unmediated way. Consequently, people assume that if they explain their views on a topic to other reasonable and rational people, then these people will agree with them (Cheek et al., 2021; Dorison et al., 2019; Dorison & Minson, 2022; Minson & Dorison, 2022; Pronin et al., 2004). Extending this logic, speakers may take signs of disagreement as evidence that the listener was not listening. In other words, people may think that listeners who agree with them are better listeners than those who disagree, even if their objective listening behaviors are the same.

We tested this prediction in 11 experiments, six reported in the main text and five in the supplement (see Table S1 in the Supplemental Material for a description of each study). In each study, participants shared their views on a topic with a listener. We held constant or manipulated the listener’s objective listening behaviors, revealing only after the conversation the listener’s views on the topic. Across various topics and mediums (e.g., video, text), speakers consistently perceived better listening when the listener agreed with them than when they did not. Mediation and moderation analyses supported our naive-realism explanation while also showing that the effect arises separately from a mere “halo effect,” or simply favoring others who share one’s own views (Nisbett & Wilson, 1977).

Our work advances understanding of how speakers judge listeners, suggesting that when someone exclaims “You’re not listening!” they might mean “You’re not agreeing!” Furthermore, our findings indicate that agreeing listeners convey listening with ease, whereas disagreeing listeners face an uphill battle. Indeed, even if disagreeing listeners objectively listen better than agreeing listeners, speakers may still perceive them as listening worse or no better than agreeing listeners. Finally, we found that using certain interpersonal markers of perceived good listening, such as acknowledging and affirming the speaker’s views, can help a disagreeing listener to be seen as a better listener. However, we also observed that using these markers led speakers to think the listener agreed with them more.

Overall, this work reveals that to understand how speakers judge the quality of a listener’s listening, one must consider speakers’ inferences about whether the listener agrees with what they are saying. In many cases, perceived listening and perceived agreement may be impossible to disentangle.

Statement of Relevance

Imagine listening closely to someone sharing their views on a topic. You are attentive and engaged and understand the speaker’s point. But you ultimately disagree with the speaker’s conclusion. The present findings suggest that although you listened well, the speaker may not think so. We find that speakers rely on whether someone agrees with them as a signal of how well they listened, suggesting that when speakers lament “You are not listening to me!” what they may mean is “You are not agreeing with me!” This conflation of agreement with perceived listening quality has important implications. For example, in both personal and professional communications, disagreeing listeners may find it prohibitively difficult to convey that they are properly listening, whereas agreeing others may do so with ease, even when distracted by their phones. Overall, agreeing with someone may be one of the best ways to convince them that you are listening.

Study 1

Method

Participants and procedure

Undergraduate students (N = 116) from a private university on the East Coast of the United States completed the study. We told participants that we would assign them to be the speaker or the listener during a live video chat. We actually assigned everyone to be the speaker and had actors play the listener.

After receiving their speaker role assignment, participants selected which of four possible campus-related topics (e.g., how to address free speech in the classroom) was the most personally relevant. Participants then shared their views on their selected topic with the listener during a virtual meeting. During this video call, the actors who played the listeners were trained to listen similarly to all speakers. They made eye contact with the speaker, nodded their head occasionally, and gave short feedback, such as “Makes sense” and “OK.” The Supplemental Material contains a link to an example video. The actors were blind to condition and to the study’s purpose.

Manipulation of the listener’s views on the topic

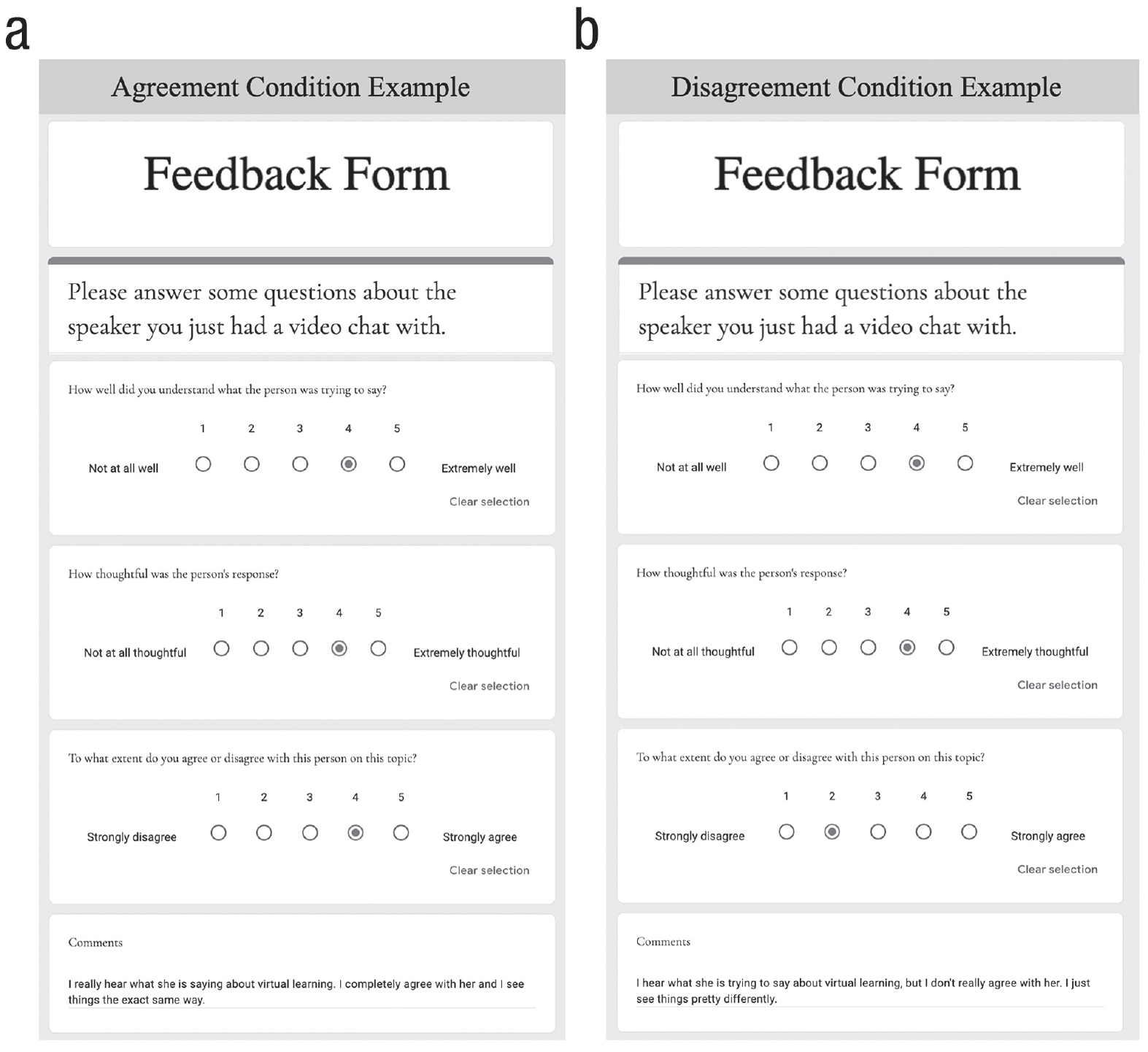

After the conversation, participants clicked a link that took them to a feedback form that the listener supposedly completed. This was a prefilled Google Form that we generated (see Fig. 1). The feedback form always indicated that the listener understood the speaker and thought the speaker was thoughtful. However, we varied across conditions how the listener responded to the question, “To what extent do you agree or disagree with this person on this topic?” In the disagreement condition, the listener selected either 1 or 2 on a 5-point scale, ranging from 1 = strongly disagree to 5 = strongly agree. In the agreement condition, the listener selected 4 or 5 on this scale. We varied the strength of (dis)agreement for stimulus sampling purposes and collapsed across this variation in the main analysis (see the Supplemental Material, Section 2, for the results by strength of [dis]agreement).

Fig. 1. Example of the feedback participants received from the listener (Study 1). This shows the feedback that a female participant who selected the topic of the merits or drawbacks of virtual learning would have received in each condition.

We also used the written comments to further signal whether the listener agreed with the speaker. In the disagreement condition, the listener commented, “I hear what [speaker] is trying to say about [topic the participant selected], but I don’t really agree with [speaker]. I just see things pretty differently.” In the agreement condition, the listener commented, “I really hear what [speaker] is saying about [topic the participant selected]. I completely agree with [speaker], and I see things the exact same way.” We matched the bracketed content to the participant’s gender identity and topic choice.
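To make the manipulation concrete, here is a minimal sketch of how the feedback content for one speaker could be generated. It is a hypothetical reconstruction, not the authors’ materials: the comment wording is quoted from the text, and the agreement rating is drawn from {1, 2} or {4, 5} as described for the feedback form. The pronoun fields `subj` and `obj` are our assumption about how “[speaker]” was matched to gender identity, and the helper name `make_feedback` is illustrative.

```python
import random

# Comment templates quoted from the text. The pronoun fields are an
# ASSUMPTION about how the bracketed "[speaker]" slots were filled.
DISAGREE_COMMENT = ("I hear what {subj} is trying to say about {topic}, but I don't "
                    "really agree with {obj}. I just see things pretty differently.")
AGREE_COMMENT = ("I really hear what {subj} is saying about {topic}. I completely "
                 "agree with {obj}, and I see things the exact same way.")

# Agreement item on the form: 1 = strongly disagree ... 5 = strongly agree.
RATINGS = {"disagree": (1, 2), "agree": (4, 5)}


def make_feedback(condition, subj, obj, topic, rng):
    """Return (agreement rating, written comment) for one speaker.

    The strength of (dis)agreement is drawn at random within the condition,
    mirroring the stimulus sampling described in the text.
    """
    rating = rng.choice(RATINGS[condition])
    template = AGREE_COMMENT if condition == "agree" else DISAGREE_COMMENT
    return rating, template.format(subj=subj, obj=obj, topic=topic)


rng = random.Random(0)
rating, comment = make_feedback("disagree", "she", "her", "virtual learning", rng)
print(rating, comment)
```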

Measures

After reviewing the feedback, participants answered one question about their impressions of the listener’s listening ability: “Do you think this person is an attentive listener?” (1 = strongly disagree; 5 = strongly agree).

Participants concluded the study by answering a manipulation check question, “Based on the feedback you received from the other participant, to what extent do you think this person agrees or disagrees with you about [topic the participant selected]?” Participants answered the question on a sliding scale ranging from 0 = strongly disagree to 100 = strongly agree. The slider was positioned at 50 by default.

We debriefed participants after the study and told them the listener was an actor and that the feedback was fake.

Results

Manipulation check

Participants thought that an agreeing listener agreed with them more (M = 87.20, SD = 15.86) than a disagreeing listener did (M = 22.09, SD = 19.20), t(106.91) = 19.83, p < .001, Cohen’s d = 3.70.¹ The manipulation checks also confirmed the success of our manipulations in the subsequent studies (see the Supplemental Material, Table S4).

Main analysis

In general, participants thought the listener listened well. This makes sense given that all actors were trained to demonstrate attentive listening. However, as predicted, participants thought the listener listened better when they agreed with them (M = 4.32, SD = 0.68) than when they disagreed with them (M = 4.00, SD = 0.87), t(103.52) = 2.17, p = .032, Cohen’s d = 0.41. We replicated the same effect in a similar study in which participants audio recorded their viewpoints and sent the recording to the listener (see the Supplemental Material, Section 3).
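The fractional degrees of freedom in these tests (e.g., t(103.52)) indicate Welch’s t test for unequal variances, which the Table 1 note states explicitly for Study 2. The sketch below shows the two quantities involved. We assume Cohen’s d was computed as the mean difference divided by the root mean square of the two SDs; that assumption reproduces the reported d = 3.70 and d = 0.41 to two decimals from the Study 1 means and SDs. Per-condition sample sizes are not reported here, so `welch_t` is shown for completeness and is not applied to the reported tests. The function names are illustrative.

```python
import math


def cohens_d(m1, sd1, m2, sd2):
    """Mean difference standardized by the root mean square of the two SDs."""
    return (m1 - m2) / math.sqrt((sd1 ** 2 + sd2 ** 2) / 2)


def welch_t(m1, sd1, n1, m2, sd2, n2):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    v1, v2 = sd1 ** 2 / n1, sd2 ** 2 / n2
    t = (m1 - m2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


# Study 1 summary statistics from the text (agreement vs. disagreement).
print(round(cohens_d(87.20, 15.86, 22.09, 19.20), 2))  # manipulation check
print(round(cohens_d(4.32, 0.68, 4.00, 0.87), 2))      # perceived listening
```

In a statistics package, the same test is usually requested by turning off the equal-variance assumption rather than by computing it by hand.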

Study 2

Study 2 tested whether the effect of (dis)agreement on perceived listening generalized to novel, less socially charged topics.

Method

Participants and procedure

We recruited participants from Prolific Academic for a hiring simulation in which they would have a live chat with another participant using the Smartriqs chat platform (Molnar, 2019). We randomly assigned participants to one of two roles: a human resource (HR) manager who would recommend a job candidate to a supervisor or a supervisor who would listen to an HR manager’s recommendation. Participants assigned to the HR manager role were the speakers; those assigned to the supervisor role were the listeners. The listeners acted as confederates in the study. We gave them specific instructions about how to interact with the speakers. Thus, as preregistered, we focus our analysis on the speakers (N = 388).

The speakers (the HR manager role)

Participants assigned to the HR manager role in the hiring simulation were the speakers. They reviewed information about two job candidates and decided whom they would hire. They then had a live, 2-min chat with another participant, who was playing their supervisor, during which they explained their recommendation. We told the speakers that the participant playing their supervisor received the same information about the candidates as they did and would render a final hiring decision after the chat. In reality, participants assigned to the supervisor role acted instead as confederates to the study and received no information about the candidates.

The listeners (the supervisor role)

Participants assigned to the supervisor role were the listeners. They acted as confederates in the study. We told them we wanted to create a specific listening experience for the participants assigned to the HR manager role. We told all supervisors what to say during the conversation and provided them with phrases to use during the chat. Examples of the phrases include “OK, I see,” and “Anything else you want to mention about the candidates?” Section 4 of the Supplemental Material shows the full list of phrases and instructions the supervisors received. We did not provide participants assigned to the supervisor role with information about the candidates so that their opinions about the candidates would not affect how they listened.

Manipulation of the listener’s views on the topic

After the conversation, for each dyad, we varied whether the supervisor (the listener) supposedly agreed or disagreed with the HR manager’s (the speaker’s) hiring recommendation. We again used a feedback form to relay this information. The HR manager received a link that opened a Google Form that the supervisor supposedly had completed. The form was like the one shown in Figure 1, with minor changes to match the narrative context of the study (see Figure S1 in the Supplemental Material for an example).

Measures

After the HR managers (the speakers) reviewed this form, they rated their supervisor’s listening ability in the same way as they did in Study 1. They also rated the supervisor’s engagement during the chat (“How disengaged/engaged was the other participant [the supervisor]?”), whether the supervisor understood them well (“Do you think the other participant [the supervisor] understood you well?”), and agreement with them (as a manipulation check).

Results

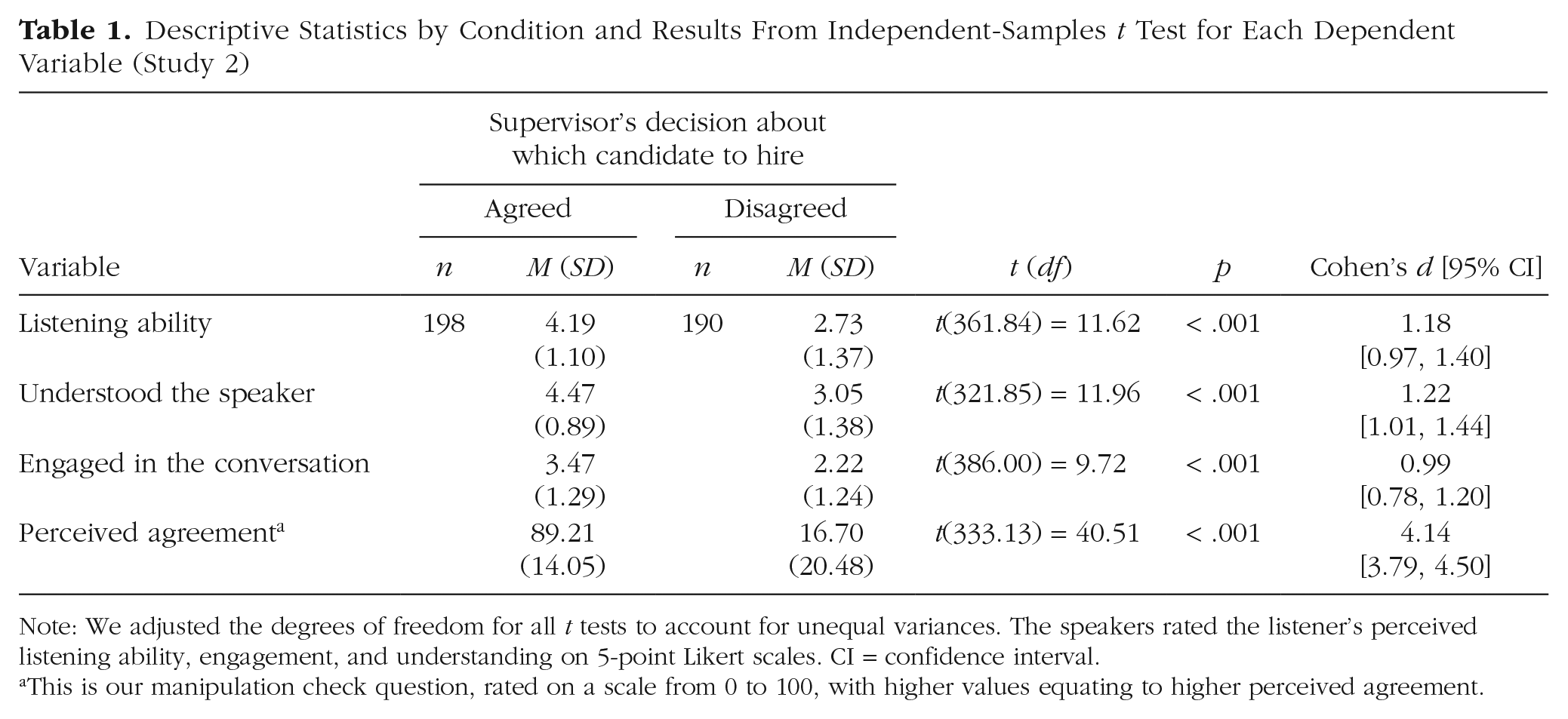

Table 1 shows the results. As predicted, and replicating Study 1, participants assigned to the HR manager role (the speakers) thought their supervisor listened better, was more engaged, and understood them better when the supervisor agreed with their recommendation about whom to hire than when the supervisor disagreed with it. These effects are fairly large, ranging from d = 0.56 to d = 0.90.

Table 1. Descriptive Statistics by Condition and Results From Independent-Samples t Test for Each Dependent Variable (Study 2)

Note: We adjusted the degrees of freedom for all t tests to account for unequal variances. The speakers rated the listener’s perceived listening ability, engagement, and understanding on 5-point Likert scales. CI = confidence interval.

This is our manipulation check question, rated on a scale from 0 to 100, with higher values equating to higher perceived agreement.

Study 3

We reason, in line with a naive-realism account, that speakers think listeners who agree with them are better listeners because speakers see agreeing listeners as more willing and able to process information objectively. We tested this proposed mechanism in Study 3. We also tested an alternative mechanism: that the effect of (dis)agreeing with a speaker on perceived listening emerges because people simply form positive impressions of those who agree with them (i.e., a halo-effect explanation; Thorndike, 1920). Finally, we included a no-information (control) condition to determine whether agreement, disagreement, or both drive the effects.

Method

Participants and procedure

The recruitment and procedure were the same as in Study 2 except that we included a control condition in which the HR managers (the speakers) did not learn whether their supervisor (the listener) agreed or disagreed with them. As in Study 2, our analysis focuses on the speakers (N = 335) because the listeners acted as confederates.

In addition to answering the question about their supervisor’s (the listener’s) listening ability, the HR managers (the speakers) answered six questions about the supervisor’s ability and willingness to process information in rational or unbiased ways (e.g., “They [the supervisor] had an unbiased understanding of the job candidates”; see Section 6 in the Supplemental Material for the exact items; Cronbach’s α = .80). They also indicated to what extent they agreed or disagreed that their supervisor was highly principled, honest, and of high integrity (1 = strongly agree; 5 = strongly disagree). We averaged these items to create a measure of perceived moral character (Cronbach’s α = .80; see Goodwin, 2015) to capture the general positivity that agreement might create toward the listener.
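For reference, the reliability coefficient reported for both scales follows the standard formula for Cronbach’s α: α = k/(k − 1) × (1 − Σ item variances / variance of the summed score), where k is the number of items. The sketch below implements that formula in plain Python with sample variances. The function name and the demo ratings are hypothetical and are not data from the study.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a list of item columns (one list of scores per item).

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores),
    using sample variances throughout.
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    totals = [sum(scores) for scores in zip(*items)]  # each respondent's summed score
    item_var = sum(variance(col) for col in items)
    return k / (k - 1) * (1 - item_var / variance(totals))


# Hypothetical 5-point ratings from five respondents on three items
# (e.g., "highly principled", "honest", "of high integrity").
demo = [
    [4, 5, 3, 4, 2],
    [4, 4, 3, 5, 2],
    [5, 5, 2, 4, 1],
]
print(round(cronbach_alpha(demo), 2))
```

Averaging rather than summing the items, as the text describes for the composite measures, does not change α, because rescaling every score by 1/k rescales all variances by the same factor.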

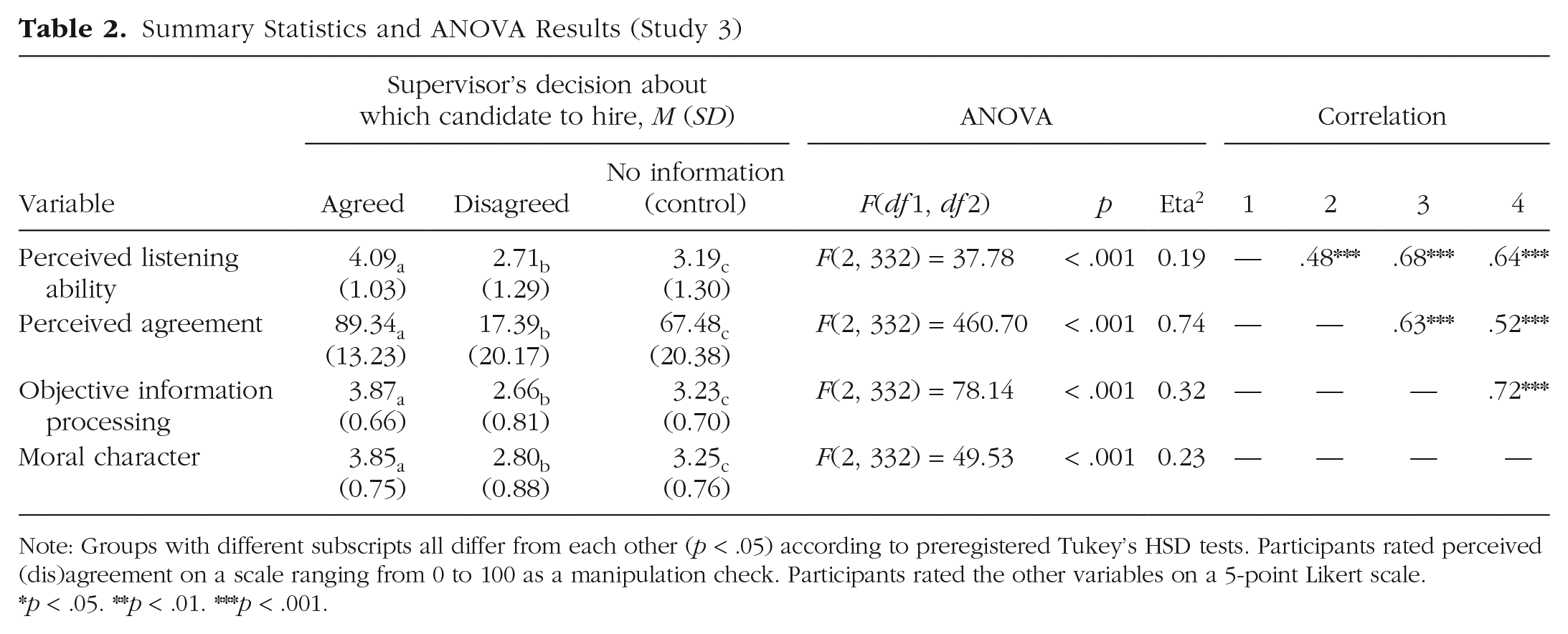

Results

Table 2 shows the results and descriptive statistics for each measure by condition. The HR managers (the speakers) thought their supervisor (the listener) listened best when the supervisor agreed with them and worst when the supervisor disagreed. They also rated the supervisor as best at processing information objectively when the supervisor agreed with them and worst when the supervisor disagreed. Finally, they rated the supervisor’s moral character highest when the supervisor agreed with them and lowest when the supervisor disagreed.

Table 2. Summary Statistics and ANOVA Results (Study 3)

Note: Groups with different subscripts all differ from each other (p < .05) according to preregistered Tukey’s HSD tests. Participants rated perceived (dis)agreement on a scale ranging from 0 to 100 as a manipulation check. Participants rated the other variables on a 5-point Likert scale.

*p < .05. **p < .01. ***p < .001.

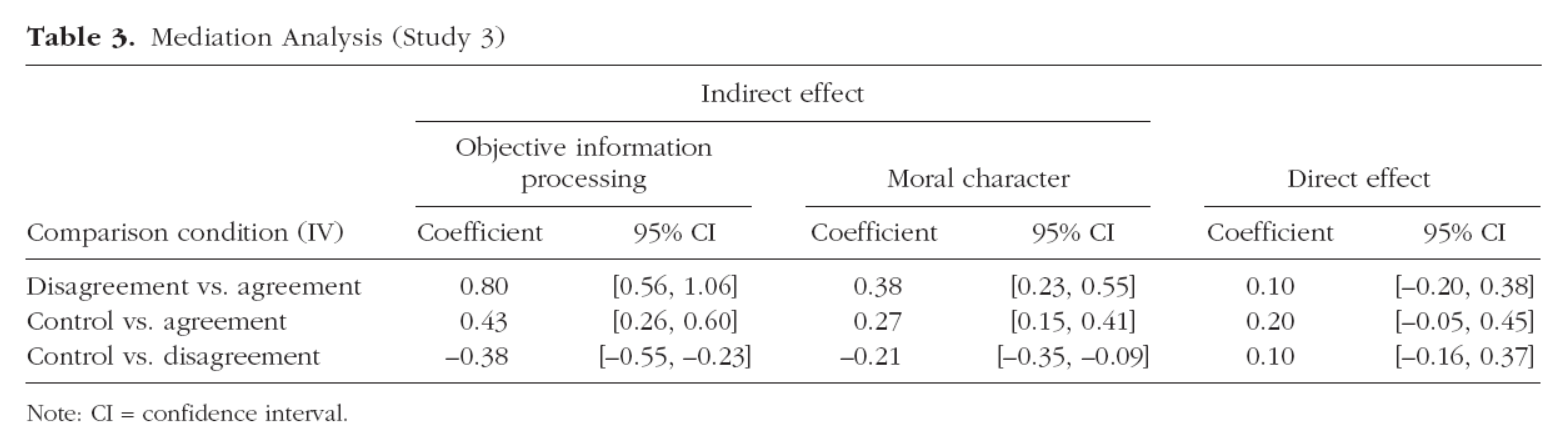

We ran a multiple-mediator model with 5,000 bootstrap samples to test the naive-realism pathway (i.e., an indirect effect through perceived objective information processing) and the halo-effect pathway (i.e., an indirect effect through perceived moral character) simultaneously (see Preacher & Hayes, 2004).
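A parallel multiple-mediator model of this kind estimates each indirect effect as the product a × b, where a is the effect of the manipulation on a mediator and b is that mediator’s effect on perceived listening while controlling for the manipulation and the other mediator; percentile bootstrap intervals are then formed from resampled estimates. The sketch below illustrates that logic under simplifying assumptions: it uses a single 0/1 agreement contrast (Study 3 had three conditions, and how the authors coded them is not stated here), simulated data rather than study data, and hypothetical function names. It is not the authors’ analysis code.

```python
import random
from statistics import quantiles


def ols(y, predictors):
    """OLS coefficients [intercept, b1, ..., bk] via the normal equations."""
    rows = [[1.0, *vals] for vals in zip(*predictors)]
    k = len(rows[0])
    # Augmented matrix [X'X | X'y]
    aug = [[sum(r[a] * r[b] for r in rows) for b in range(k)]
           + [sum(r[a] * yi for r, yi in zip(rows, y))] for a in range(k)]
    # Gauss-Jordan elimination with partial pivoting
    for col in range(k):
        piv = max(range(col, k), key=lambda r: abs(aug[r][col]))
        aug[col], aug[piv] = aug[piv], aug[col]
        pv = aug[col][col]
        aug[col] = [v / pv for v in aug[col]]
        for r in range(k):
            if r != col:
                f = aug[r][col]
                aug[r] = [v - f * p for v, p in zip(aug[r], aug[col])]
    return [row[k] for row in aug]


def indirect_effects(x, m1, m2, y):
    """a*b products for two parallel mediators."""
    a1 = ols(m1, [x])[1]                # manipulation -> mediator 1
    a2 = ols(m2, [x])[1]                # manipulation -> mediator 2
    _, _, b1, b2 = ols(y, [x, m1, m2])  # mediators -> outcome, controlling for x
    return a1 * b1, a2 * b2


def bootstrap_indirect(x, m1, m2, y, n_boot=5000, seed=0):
    """Percentile 95% bootstrap CIs for the two indirect effects."""
    rng = random.Random(seed)
    n = len(x)
    draws1, draws2 = [], []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample cases with replacement
        xs, m1s, m2s, ys = ([v[i] for i in idx] for v in (x, m1, m2, y))
        ie1, ie2 = indirect_effects(xs, m1s, m2s, ys)
        draws1.append(ie1)
        draws2.append(ie2)
    # quantiles(n=40) returns 39 cut points: the first is the 2.5th and the
    # last the 97.5th percentile of the bootstrap distribution.
    ci = []
    for draws in (draws1, draws2):
        q = quantiles(draws, n=40)
        ci.append((q[0], q[-1]))
    return ci


# Simulated data (NOT study data): 1 = listener agreed, 0 = disagreed.
rng = random.Random(42)
x = [i % 2 for i in range(200)]
m1 = [0.8 * xi + rng.gauss(0, 0.5) for xi in x]   # perceived objective processing
m2 = [0.3 * xi + rng.gauss(0, 0.5) for xi in x]   # perceived moral character
y = [0.2 * xi + 0.6 * p + 0.3 * c + rng.gauss(0, 0.5)
     for xi, p, c in zip(x, m1, m2)]              # perceived listening
print(indirect_effects(x, m1, m2, y))
print(bootstrap_indirect(x, m1, m2, y, n_boot=500))
```

An indirect effect is conventionally called significant when its bootstrap interval excludes zero; dedicated tools (e.g., the PROCESS macro) implement the same idea with more options.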

As shown in Table 3, both indirect effects were significant. However, the indirect effect through perceived objective information processing was larger than the indirect effect through perceived moral character. Also, the effect of the (dis)agreement manipulation on perceived listening remained after controlling for perceived moral character, F(2, 331) = 53.85, p < .001 (see also Study S2 in the Supplemental Material).

Table 3. Mediation Analysis (Study 3)

Note: CI = confidence interval.

Discussion

Study 3 showed that both agreeing and disagreeing with a speaker affected perceived listening relative to a no-information control. The (dis)agreement manipulation also affected speakers’ perceptions of the listener’s objective information processing and moral character. Although both perceptions helped explain the effect of (dis)agreement on perceived listening, the pathway through objective information processing (i.e., the naive-realism pathway) was stronger than the pathway through moral character (i.e., the halo-effect pathway).

These findings are consistent with the idea that naive realism may play a significant role in driving this effect, separate from a general shift in interpersonal impressions caused by agreement. Nevertheless, we conducted additional studies to further assess this process. A naive-realism perspective suggests that speakers would be more likely than observers to see their hiring choice as correct (e.g., Pronin et al., 2004). Thus, we tested in Study S3 whether a listener’s (dis)agreement with a speaker affected speakers more than it did third-party observers. It did (see Section 7 of the Supplemental Material for more details). We also conducted two additional studies (Studies 4 and S4) to further address the possibility of a halo effect explaining the findings.

Study 4

A halo-effect account would suggest that the effects are not about listening per se but about liking. People like others more when those others agree with them, and people tend to rate someone more positively on a range of dimensions when they like them (Cialdini & Goldstein, 2004). We designed Studies 4 and S4 to test this alternative account. We reasoned that if the effect of (dis)agreement on perceived listening operates only through liking, then making the listener highly likable or unlikable should constrain how much the (dis)agreement manipulation can affect liking, and therefore how much it can affect perceived listening. Thus, we manipulated and measured how much speakers liked the listener in these studies to test this halo-effect account.

Method

Participants and procedure

Participants were recruited to participate in a hiring simulation in the same way as described in Studies 2 and 3. Our analysis again focuses on the speakers (N = 539) because the listeners acted as confederates.

The study had a 2 (listener’s position: agreed with the speaker vs. disagreed with the speaker) × 2 (listener’s likability: likable vs. unlikable) factorial design. The procedure was the same as Studies 2 and 3, with the following exceptions.

Before chatting, the HR managers (the speakers) supposedly completed a resource allocation task with their supervisor (the listener). The resource allocation task was a dictator game in which the supervisor allocated a $0.30 bonus between themselves and the HR manager. In the likable condition, the supervisor supposedly behaved generously, giving the HR manager $0.25 of the $0.30 bonus. In the unlikable condition, the supervisor supposedly behaved somewhat selfishly, giving the HR manager only $0.05 of the $0.30 bonus. After learning about this allocation, the HR managers rated their supervisor’s likability on a 5-point Likert scale (1 = very unlikable; 5 = very likable).

The HR managers then entered the chat to explain their hiring recommendation to the supervisor. We manipulated (dis)agreement in the same way as we did in Studies 2 and 3. After this manipulation, the HR managers rated their supervisor’s listening ability. They also rated the supervisor’s likability a second time.

Results

Manipulation check

The likability manipulation was effective: After learning their supervisor’s allocation decision, HR managers (the speakers) reported liking the supervisor (the listener) much more when the supervisor was generous (M = 4.63, SD = 0.70) than when the supervisor was not (M = 2.15, SD = 1.03), t(477.49) = 32.46, p < .001, Cohen’s d = 2.80.

Main results

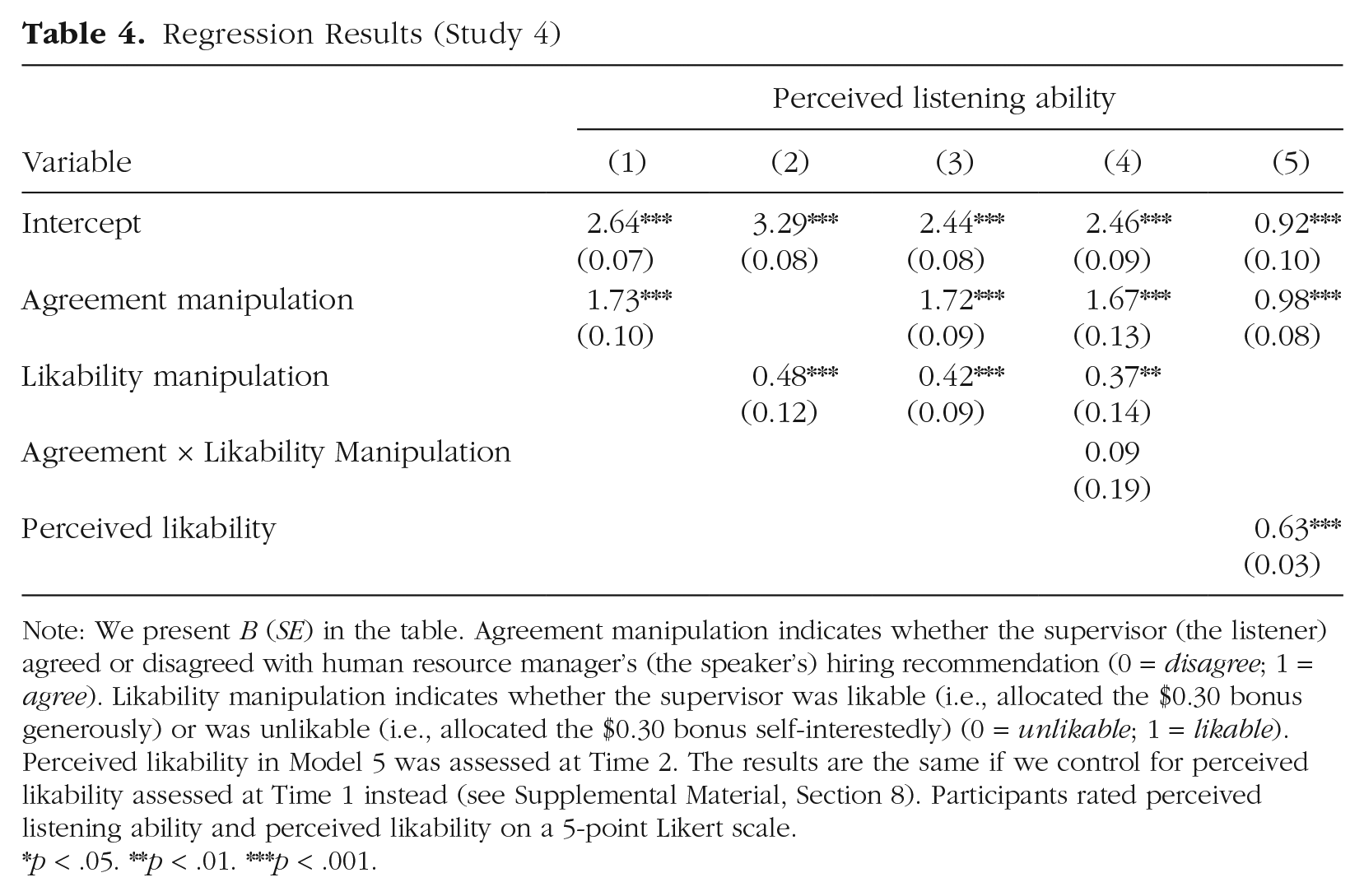

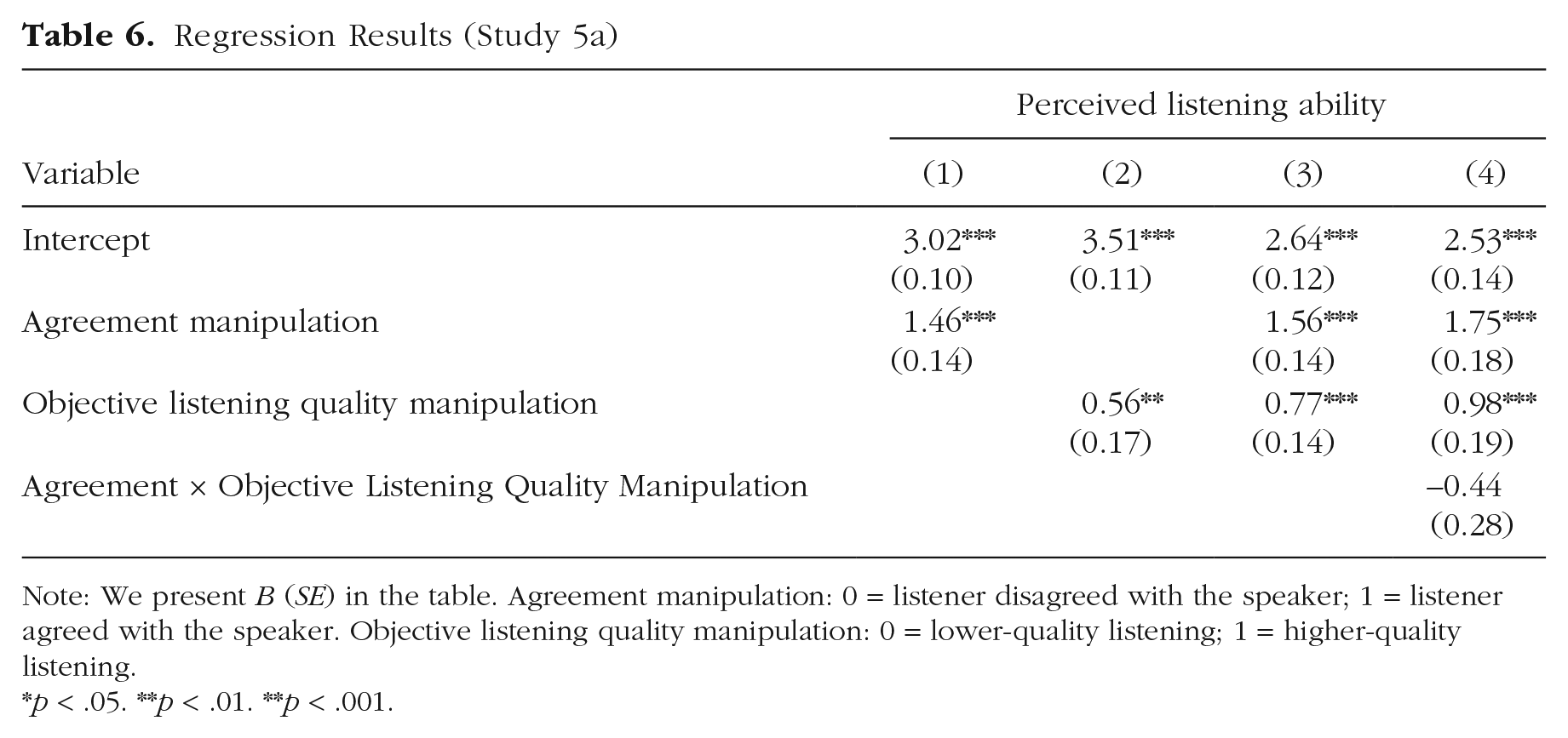

We conducted a series of regression analyses to test the effect of the listener’s position on perceived listening and likability. As shown in Table 4, perceived listening showed a positive main effect of the supervisor (the listener) agreeing with the speaker (Model 1), a positive main effect of the supervisor being likable (Model 2), and no interaction between the two manipulations (Model 4).

Table 4. Regression Results (Study 4)

Note: We present B (SE) in the table. Agreement manipulation indicates whether the supervisor (the listener) agreed or disagreed with the human resource manager’s (the speaker’s) hiring recommendation (0 = disagree; 1 = agree). Likability manipulation indicates whether the supervisor was likable (i.e., allocated the $0.30 bonus generously) or unlikable (i.e., allocated the $0.30 bonus self-interestedly) (0 = unlikable; 1 = likable). Perceived likability in Model 5 was assessed at Time 2. The results are the same if we control for perceived likability assessed at Time 1 instead (see Supplemental Material, Section 8). Participants rated perceived listening ability and perceived likability on a 5-point Likert scale.

*p < .05. **p < .01. ***p < .001.

The supervisor’s (dis)agreement affected perceived listening separate from liking (see Fig. 2). Participants thought their supervisor (the listener) listened better when they agreed with them than when they did not. This effect of (dis)agreement emerged for both the unlikable (B = 1.67, SE = 0.13, p < .001) and likable listeners (B = 1.76, SE = 0.14, p < .001). Critically, this effect remained highly significant when we controlled for participants’ ratings of the listener’s likability (see Model 5).
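To clarify how the simple effects relate to the interaction model, assume (as the Table 4 note suggests) that both manipulations are coded 0/1 and that the interaction model adds their product. In such a fully crossed (saturated) 2 × 2 model, the OLS coefficients equal contrasts of the four cell means: the agreement coefficient is the agreement effect for the unlikable listener, and the agreement coefficient plus the interaction coefficient is the agreement effect for the likable listener. The sketch below shows that correspondence with hypothetical ratings; the function name is illustrative. It does not cover Model 5, which adds continuous perceived likability as a covariate and therefore needs a general regression fit.

```python
from statistics import fmean


def saturated_2x2(cells):
    """Coefficients of y ~ agree + likable + agree*likable with 0/1 dummy codes.

    `cells` maps (agree, likable) to a list of perceived-listening ratings.
    In a fully crossed (saturated) model, the OLS coefficients equal these
    contrasts of the four cell means.
    """
    m = {key: fmean(vals) for key, vals in cells.items()}
    b_agree = m[(1, 0)] - m[(0, 0)]  # agreement effect when the listener is unlikable
    b_int = m[(1, 1)] - m[(1, 0)] - m[(0, 1)] + m[(0, 0)]
    return {
        "intercept": m[(0, 0)],
        "agree": b_agree,
        "likable": m[(0, 1)] - m[(0, 0)],  # likability effect when the listener disagrees
        "agree_x_likable": b_int,
        # Simple effect of agreement for the likable listener = b_agree + b_int
        "agree_if_likable": b_agree + b_int,
    }


# Hypothetical 5-point ratings, NOT study data.
demo = {
    (0, 0): [2, 3, 2, 3],  # disagreed, unlikable
    (1, 0): [4, 4, 5, 4],  # agreed, unlikable
    (0, 1): [3, 3, 3, 4],  # disagreed, likable
    (1, 1): [5, 4, 5, 5],  # agreed, likable
}
print(saturated_2x2(demo))
```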

The manipulation of (dis)agreement came after the manipulation of likability and was closer in time to the measure of perceived listening. Readers may wonder whether this ordering accounted for the results. To examine this, we conducted Study S4, which removed this timing confound by having the liking and (dis)agreement manipulations both occur after the conversation in a counterbalanced order. We found the same results (see Supplemental Material, Section 9).

Discussion

Study 4 provided evidence that the effect of (dis)agreement on perceived listening is not due solely to liking. The (dis)agreement manipulation influenced liking (Cohen’s d = 1.02), but it had a larger influence on perceived listening (Cohen’s d = 1.56). Critically, the effect of (dis)agreement on perceived listening persisted even when controlling for liking. Moreover, the effect of (dis)agreement on perceived listening emerged for both the likable and the unlikable listener. Thus, although expressing agreement or disagreement can shift people’s general interpersonal impressions of someone (as Studies 3, S3, and 4 show), the effect of (dis)agreement on perceived listening appears to emerge separately from this shift.

Studies 5a and 5b

The previous studies held objective listening quality constant. But what if people have information that the listener listened objectively well? Do the effects of (dis)agreement on perceived listening persist? We assessed this question in Studies 5a and 5b (see also Study S5) by manipulating objective listening quality in different ways.

Study 5a

Method

Participants and procedure

We recruited participants (N = 257) via the CloudResearch Platform for Mechanical Turk (Litman et al., 2017) for a two-part study that involved audio recording their views on a sociopolitical topic (Part 1) and then receiving feedback from someone who listened to the recording (Part 2).

Part 1: speaking about a sociopolitical topic

In Part 1, participants selected a personally relevant sociopolitical topic from a list of 13 topics (e.g., police reform, vaccine mandates) and recorded a 2-min audio clip about their views on this topic. We explained that we would send the recording to an affiliate of our university. This person would listen to the recording and provide a written response to it. We would then contact participants in 2 days with this person’s response and a few questions about it.

Manipulation of objective listening quality

We manipulated objective listening quality by varying how well or poorly the listener comprehended the speaker’s views (see Bruneau & Saxe, 2012; Flynn et al., 2023; Kluger & Itzchakov, 2022). We trained research assistants to write customized feedback to the speaker. The research assistants wrote a high-quality summary and a low-quality summary of each audio recording to signal different levels of comprehension. For the high-quality summary, the research assistants wrote a short paragraph summarizing the speaker’s key points and the underlying reason(s) for their position. For the low-quality summary, the research assistants wrote only one to two vague sentences.

We ensured the effectiveness of this manipulation by having a separate group of raters evaluate the quality of the summaries. They rated the high-quality summaries as higher quality than the lower-quality summaries (see Supplemental Material, Section 10).

Manipulation of the listener’s agreement or disagreement with the speaker

We manipulated whether the listener supposedly agreed or disagreed with the speaker by appending agreeing or disagreeing phrases to the beginning and end of the summaries. We sampled the phrases from a bank of phrases (e.g., “I see things pretty differently,” “This is just not how I see things,” “This is how I see things too!”) for stimulus sampling purposes.

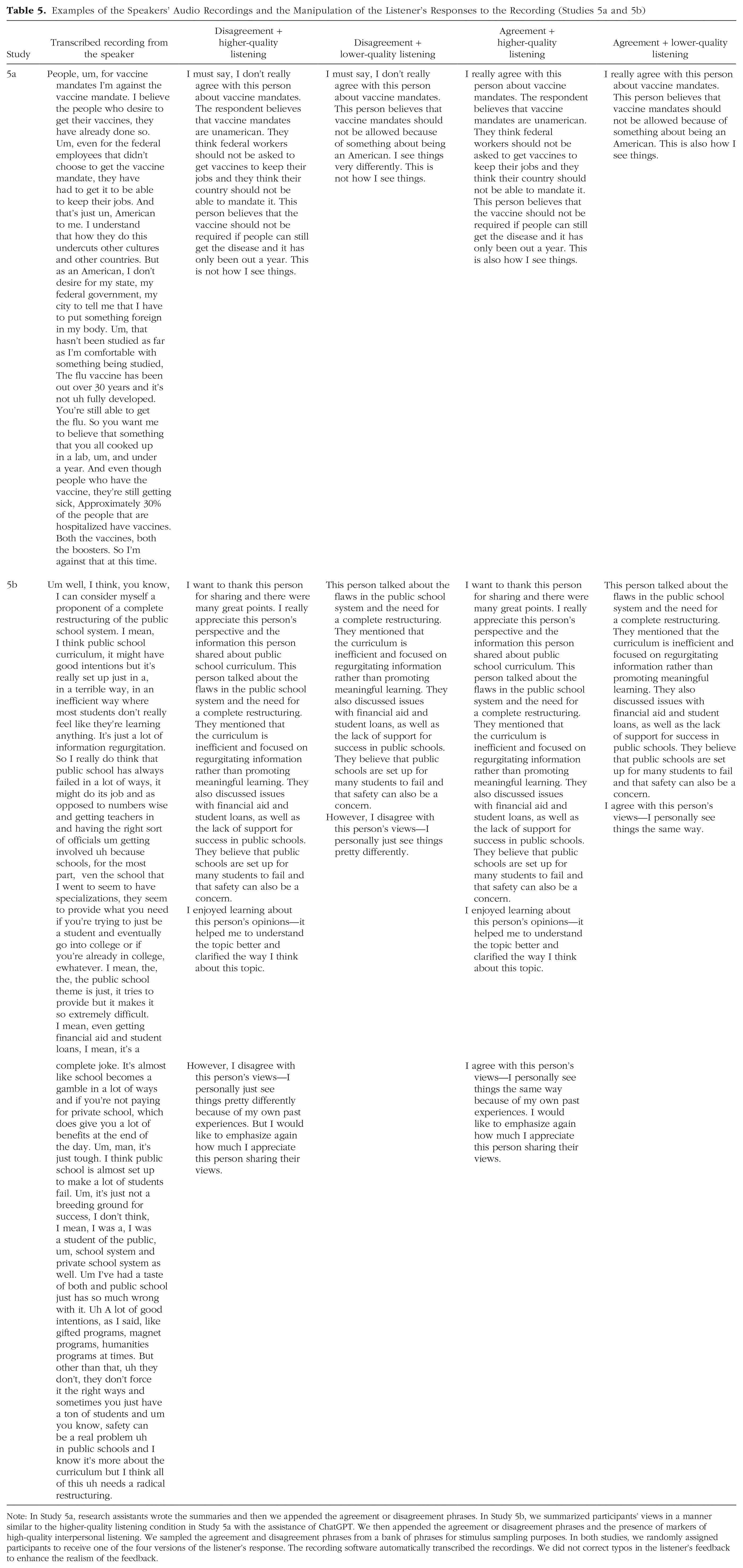

Overall, we created four responses for each participant and randomly assigned them to receive one of these responses, using a 2 (listener’s position: agreed with the speaker vs. disagreed with the speaker) × 2 (objective listening quality: higher quality vs. lower quality) factorial design. Table 5 shows an example of two participants’ transcribed recordings. It also shows the four possible responses to them: higher-quality disagreement, lower-quality disagreement, higher-quality agreement, and lower-quality agreement.

Table 5. Examples of the Speakers’ Audio Recordings and the Manipulation of the Listener’s Responses to the Recording (Studies 5a and 5b)

Note: In Study 5a, research assistants wrote the summaries, and we then appended the agreement or disagreement phrases. In Study 5b, we summarized participants’ views with the assistance of ChatGPT in a manner similar to the higher-quality listening condition in Study 5a. We then appended the agreement or disagreement phrases and varied the presence of markers of high-quality interpersonal listening. We sampled the agreement and disagreement phrases from a bank of phrases for stimulus sampling purposes. In both studies, we randomly assigned participants to receive one of the four versions of the listener’s response. The recording software automatically transcribed the recordings. We did not correct typos in the listener’s feedback to enhance the realism of the feedback.
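The Study 5a stimulus construction can be summarized as a small 2 × 2 build-then-assign step: for each speaker, a higher-quality and a lower-quality summary are each wrapped in agreeing or disagreeing phrases, and the speaker is randomly assigned to one of the four resulting versions. The sketch below illustrates that step under stated assumptions. Only the three phrases quoted in the text are included (the real bank was larger), drawing the opening and closing phrase independently is our assumption, and the function name and placeholder summaries are hypothetical. It does not model the Study 5b listening markers.

```python
import random

# Phrases quoted in the text; the actual phrase bank was larger.
AGREE_BANK = ["This is how I see things too!"]
DISAGREE_BANK = ["I see things pretty differently.", "This is just not how I see things."]


def build_responses(higher_summary, lower_summary, rng):
    """Return the four listener responses of the 2 x 2 design for one speaker.

    Opening and closing phrases are drawn independently from the bank for
    each response (an ASSUMPTION; the text says only that phrases were
    sampled from a bank).
    """
    responses = {}
    for position, bank in (("agree", AGREE_BANK), ("disagree", DISAGREE_BANK)):
        for quality, summary in (("higher", higher_summary), ("lower", lower_summary)):
            opener, closer = rng.choice(bank), rng.choice(bank)
            responses[(position, quality)] = f"{opener} {summary} {closer}"
    return responses


rng = random.Random(3)
versions = build_responses(
    "A paragraph summarizing the speaker's key points and reasons.",  # placeholder
    "A vague one-sentence summary.",                                  # placeholder
    rng,
)
condition = rng.choice(sorted(versions))  # random assignment to one of four cells
print(condition, versions[condition])
```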

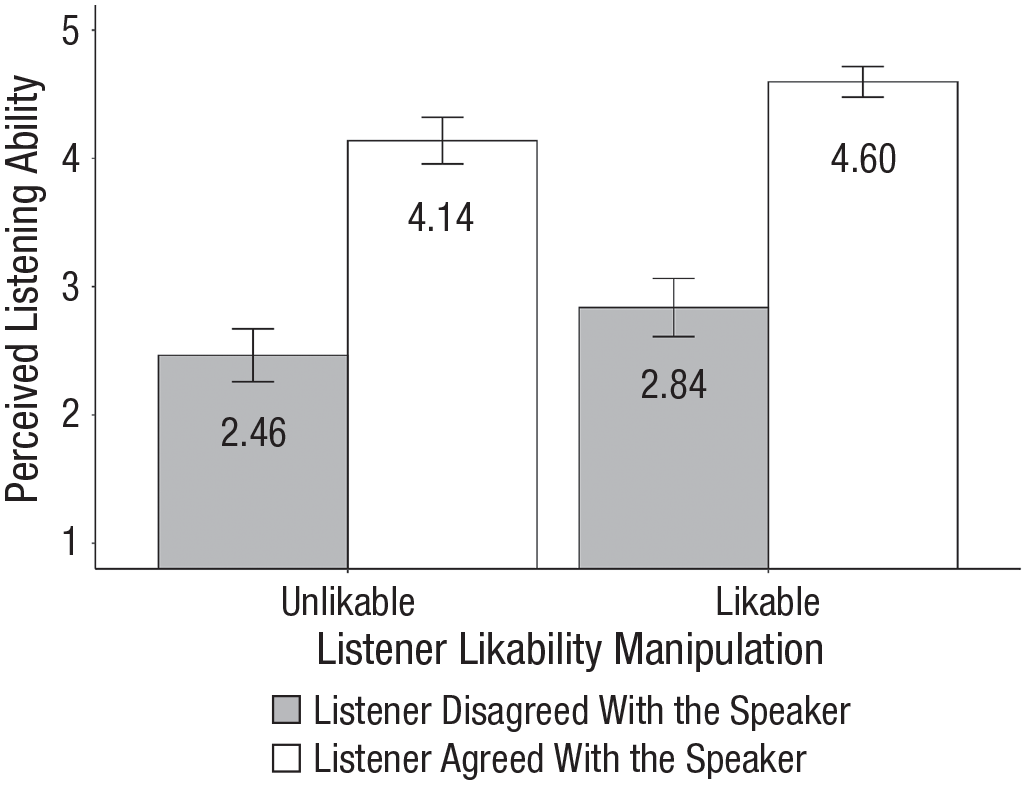

Table 6. Regression Results (Study 5a)

Note: We present B (SE) in the table. Agreement manipulation: 0 = listener disagreed with the speaker; 1 = listener agreed with the speaker. Objective listening quality manipulation: 0 = lower-quality listening; 1 = higher-quality listening.

*p < .05. **p < .01. ***p < .001.

Part 2: receiving the feedback from the listeners

We contacted participants approximately 2 days after they submitted their recordings. We explained that an affiliate of our university had listened to their recording and provided written feedback about it. Participants reviewed the feedback. They then answered the same question about the listener’s listening ability as they did in the previous studies.

Results

We conducted a series of regression analyses to assess the main and moderating effects of the listener’s position and objective listening quality on perceived listening. Participants perceived the listener to be a better listener when the listener agreed with them than when they disagreed with them, replicating the previous studies (see Models 1 and 3, Table 6). Participants also thought the listener was a better listener when they demonstrated higher-quality listening versus lower-quality listening (Model 2, Table 6). Objective listening quality did not moderate the effect of agreement on perceived listening (Model 4, Table 6).

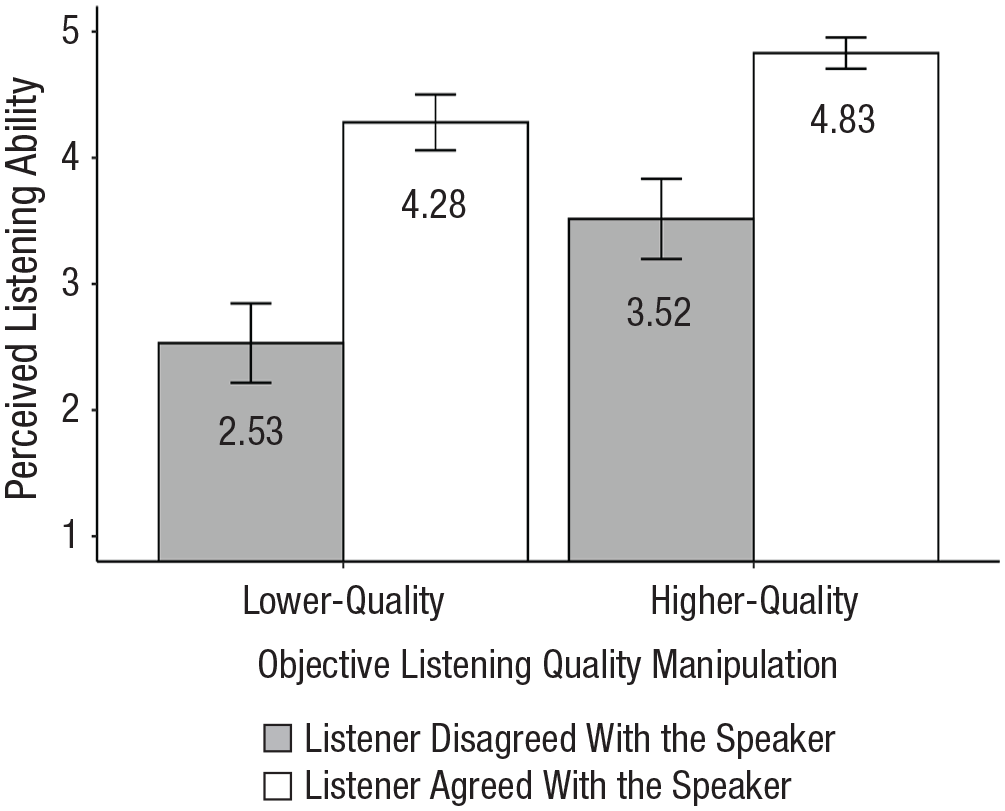

As shown in Figure 3, participants rated the listener who agreed with them as a better listener than the one who disagreed in both the lower-quality objective listening condition (B = 1.75, SE = 0.18, p < .001) and the higher-quality objective listening condition (B = 1.31, SE = 0.21, p < .001). We observed a similar pattern of results in Study S5, in which we manipulated listening quality as attentiveness versus distraction (see Supplemental Material, Section 11), and in a conceptual replication study (see Supplemental Material, Section 10).

The effect of a listener’s agreement or disagreement with the speaker and likability on perceived listening ability (Study 4). Error bars indicate 95% confidence intervals. We manipulated the listener’s likability by varying whether they allocated $0.25 or $0.05 of a $0.30 bonus to the speaker.

Figure 3. The effect of a listener’s agreement or disagreement with the speaker and the manipulation of objective listening quality on the speaker’s ratings of the listener’s listening ability (Study 5a). Error bars indicate 95% confidence intervals.

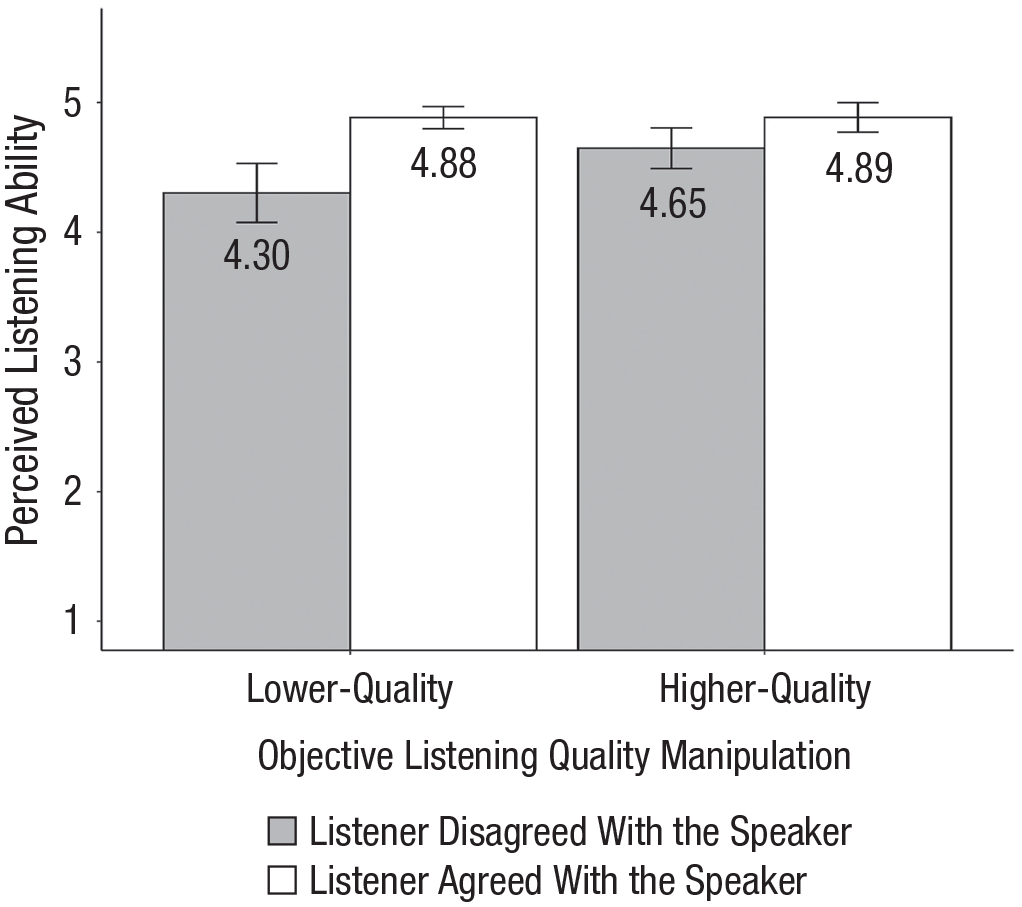

Figure 4. The effect of a listener’s agreement or disagreement with the speaker and the manipulation of objective listening quality on the speaker’s ratings of the listener’s listening ability (Study 5b). Error bars indicate 95% confidence intervals.

As also shown in Figure 3, speakers thought the listener who agreed with them but demonstrated lower-quality objective listening was a better listener than the listener who disagreed with them but demonstrated higher-quality objective listening, t(117.38) = 3.87, p < .001.
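The noninteger degrees of freedom in this comparison are characteristic of Welch’s t test, which does not assume equal variances in the two groups being compared. The text does not name the test, so the following is our reading rather than a description of the analysis as run. Under that assumption, with group 1 the agreeing listener who showed lower-quality listening and group 2 the disagreeing listener who showed higher-quality listening (means $\bar{x}_i$, variances $s_i^2$, sizes $n_i$), the statistic and its Welch–Satterthwaite degrees of freedom would be

$$
t = \frac{\bar{x}_1 - \bar{x}_2}{\sqrt{s_1^2/n_1 + s_2^2/n_2}}, \qquad
\nu \approx \frac{\left(s_1^2/n_1 + s_2^2/n_2\right)^2}{\dfrac{\left(s_1^2/n_1\right)^2}{n_1 - 1} + \dfrac{\left(s_2^2/n_2\right)^2}{n_2 - 1}}
$$

Because $\nu$ depends on the two cells’ variances and sizes, it is generally not an integer, which is how a value such as 117.38 arises.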

Study 5b

Prior literature on listening has identified markers of higher-quality listening beyond comprehension, such as expressing interest in the speaker’s views (Lipetz et al., 2020; Yip & Fisher, 2022). In Study 5b, we manipulated objective listening quality by having the higher-quality listener acknowledge and show respect for and interest in the speaker’s views. We reasoned that these listening behaviors might mitigate the negative effect of disagreement on perceived listening. We also tested the effect of these listening behaviors on speakers’ perceptions of how much the listener agreed with them.

Method

Participants and procedure

The recruitment and procedure were similar to those in Study 5a. Participants from Prolific Academic (N = 267) recorded their views on a sociopolitical topic and learned that an affiliate of our university would listen to their recording and provide feedback about it.

As in Study 5a, we generated four possible responses to the recording and assigned participants to receive one of them, using a 2 (listener’s position: agreed vs. disagreed with the speaker) × 2 (objective listening quality: higher vs. lower) factorial design. We manipulated the listener’s position in the same way as in Study 5a. We manipulated objective listening quality by varying the presence or absence of markers of high-quality listening. In the higher-quality objective listening condition, the listener acknowledged the speaker’s perspective, expressed respect for and interest in this perspective, and explained (in general terms) why they (dis)agreed. In the lower-quality objective listening condition, these markers of good listening were omitted (see Table 5 for examples).

We contacted participants approximately 1 day after they submitted their recordings. We explained that an affiliate of our university had listened to their recording and provided written feedback about it. Participants reviewed the feedback. They then evaluated the listener’s listening ability and rated how much they thought the listener agreed with them, using the same manipulation-check item as in the previous studies. They also completed additional items assessing perceived shared views, whether the listener was persuadable, and the perceived strength of the listener’s views (see Section 12 of the Supplemental Material).

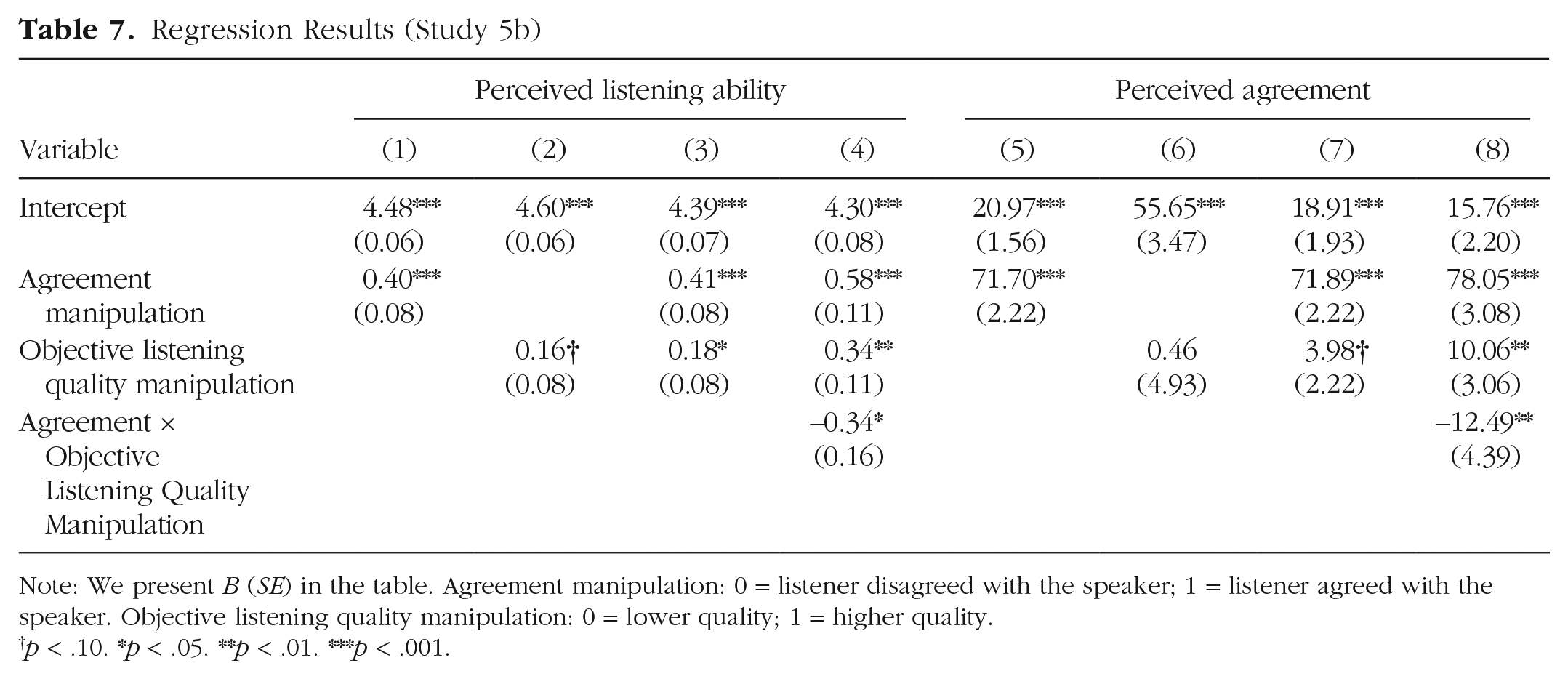

Results

We conducted a series of regression analyses to test the main and moderating effects of the listener’s position and objective listening quality on perceived listening ability. Participants thought the listener listened to them better when the listener agreed than when the listener disagreed (see Table 7, Model 1) and when the listener used markers of high-quality listening (see Table 7, Model 2). However, these two main effects were qualified by a significant interaction (see Table 7, Model 4): The effect of (dis)agreement on perceived listening was weaker when the listener used markers of higher-quality listening (B = 0.24, SE = 0.11, p = .038) than when they did not (B = 0.58, SE = 0.11, p < .001). Moreover, using markers of higher-quality listening had a significant positive effect on perceived listening when the listener disagreed with speakers (B = 0.34, SE = 0.11, p = .002) but no significant effect when the listener agreed with them (B = 0.00119, SE = 0.11, p = .992; see Fig. 4).
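Because both factors are coded 0/1 (see the note to Table 7), the four simple effects above should all be consistent with a single interaction coefficient. The worked check below assumes the simple effects were derived from a Model 4 of the form shown, in our own notation ($A$ = agreement, $Q$ = higher-quality listening); the implied interaction is computed from the rounded simple effects, not read from the table.

$$
\widehat{y} = b_0 + b_A A + b_Q Q + b_{AQ}\,AQ
$$

$$
\text{effect of } A \text{ at } Q = b_A + b_{AQ}\,Q, \qquad \text{effect of } Q \text{ at } A = b_Q + b_{AQ}\,A
$$

$$
b_{AQ} = 0.24 - 0.58 = -0.34, \qquad b_{AQ} = 0.00119 - 0.34 \approx -0.34
$$

Both routes imply the same negative interaction of about -0.34: disagreement costs the listener less in perceived listening when markers of higher-quality listening are present, and those markers help only the disagreeing listener.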

Regression Results (Study 5b)

Note: We present B (SE) in the table. Agreement manipulation: 0 = listener disagreed with the speaker; 1 = listener agreed with the speaker. Objective listening quality manipulation: 0 = lower quality; 1 = higher quality.

†p < .10. *p < .05. **p < .01. ***p < .001.

Interestingly, using markers of higher-quality listening also affected perceived agreement. Unsurprisingly, participants thought that the listener agreed with them more when the listener had expressed agreement than when the listener had expressed disagreement (Table 7, Model 5). But markers of higher-quality listening moderated the effect of agreement on perceived agreement (Model 8). Participants thought the disagreeing listener disagreed with them less when that listener used markers of higher-quality listening (M = 25.82, SD = 23.27) than when they did not (M = 15.76, SD = 20.55). This was not the case for the agreeing listener (with markers: M = 91.38, SD = 10.83; without markers: M = 93.81, SD = 13.13).
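In a 2 × 2 design with both factors coded 0/1, a regression with two main effects plus their product has one parameter per cell, so its least-squares coefficients are exact contrasts of the four cell means. The sketch below applies that identity to the perceived-agreement means reported above. The function and variable names are our own, and whether Model 8 reproduces these values depends on it being exactly this saturated model without covariates, which we assume rather than know.

```python
from typing import Dict, Tuple

# Cell means keyed by (A, Q): A = 1 if the listener agreed, 0 if they disagreed;
# Q = 1 for higher-quality listening, 0 for lower quality (coding as in Table 7).
CellMeans = Dict[Tuple[int, int], float]

# Study 5b perceived-agreement means as reported in the text.
STUDY_5B_PERCEIVED_AGREEMENT: CellMeans = {
    (0, 0): 15.76,  # disagreed, lower-quality listening
    (0, 1): 25.82,  # disagreed, higher-quality listening
    (1, 0): 93.81,  # agreed, lower-quality listening
    (1, 1): 91.38,  # agreed, higher-quality listening
}


def saturated_coefficients(m: CellMeans) -> Dict[str, float]:
    """Coefficients of y = b0 + bA*A + bQ*Q + bAQ*A*Q for a 2 x 2 design.

    With four parameters and four cells, least squares reproduces every
    cell mean exactly, so each coefficient is a contrast of cell means.
    """
    return {
        "b0": m[(0, 0)],                                       # reference cell mean
        "bA": m[(1, 0)] - m[(0, 0)],                           # agreement effect when Q = 0
        "bQ": m[(0, 1)] - m[(0, 0)],                           # quality effect when A = 0
        "bAQ": m[(1, 1)] - m[(1, 0)] - m[(0, 1)] + m[(0, 0)],  # difference of differences
    }


def predict(b: Dict[str, float], a: int, q: int) -> float:
    """Model prediction for one cell; equals that cell's mean in a saturated model."""
    return b["b0"] + b["bA"] * a + b["bQ"] * q + b["bAQ"] * a * q


def simple_effects(m: CellMeans) -> Dict[str, float]:
    """Effect of each factor at each level of the other factor."""
    return {
        "agreement_at_lower_quality": m[(1, 0)] - m[(0, 0)],
        "agreement_at_higher_quality": m[(1, 1)] - m[(0, 1)],
        "quality_when_disagreeing": m[(0, 1)] - m[(0, 0)],
        "quality_when_agreeing": m[(1, 1)] - m[(1, 0)],
    }


if __name__ == "__main__":
    coefs = saturated_coefficients(STUDY_5B_PERCEIVED_AGREEMENT)
    print({k: round(v, 2) for k, v in coefs.items()})
    effects = simple_effects(STUDY_5B_PERCEIVED_AGREEMENT)
    print({k: round(v, 2) for k, v in effects.items()})
```

With these means, the agreement effect on perceived agreement is about 78.05 points without markers of higher-quality listening and about 65.56 points with them, an implied interaction of about -12.49. The same value falls out as the difference between the quality effect for the agreeing listener (about -2.43) and for the disagreeing listener (about 10.06), which is the pattern described in the text.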

Discussion

Study 5b showed that displaying markers of higher-quality listening improved the impressions that speakers formed of how well a disagreeing listener listened. Interestingly, in this study, people also thought the disagreeing listener disagreed with them less when they used markers of higher-quality listening. This suggests an intriguing possibility: Perhaps the very markers people use to show good listening also convey that they agree more with the speaker.

General Discussion

Our studies consistently showed that speakers perceived better listening from a listener who agreed with them than from a listener who did not, even when we accounted for other positive impressions of the listener. Our results suggest that naive realism can explain this effect. Naive realism holds that people feel their views are unbiased and objective (Ross & Ward, 1996), so they assume others will agree with them once they have explained their views. Supporting this account, speakers believed that a listener who agreed with them processed information more objectively than a listener who did not (Study 3), and the positive effect of agreement on perceived listening was more pronounced for speakers than for observers (Study S3).

We manipulated objective listening quality to test whether the effect of (dis)agreement on perceived listening persisted when there were strong signals of good listening. These studies revealed novel insights about listening. First, engaging in higher-quality listening can improve how well speakers think a disagreeing listener listened to them, but it may still be less effective than merely agreeing with the speaker. Second, engaging in higher-quality listening can unintentionally convey stronger alignment with the speaker’s views. Future research would benefit from exploring this effect further because it could change our understanding of the mechanism underlying the benefits of listening: Perhaps those benefits stem not from listening per se but from the effect of listening on assumed shared views.

When a listener agrees with a speaker, it may not be a problem that feeling listened to depends heavily on the listener’s agreement. But it poses a significant challenge when the listener disagrees. A listener who disagrees with a speaker could withhold their views, but this might be infeasible or undesirable in decision-making contexts in which others will learn one’s views and/or in which sharing different opinions is vital (Heltzel & Laurin, 2020; Minson et al., 2011; Silver & Shaw, 2022). Furthermore, if people rely on whether a listener agrees with them when evaluating listening quality, they may misjudge how well the listener understood them. They may overestimate the understanding of an agreeing listener and underestimate the understanding of a disagreeing one, thus contributing to false polarization (Blatz & Mercier, 2018). However, this misjudgment may be less consequential when information exchange is not the main goal (e.g., when a friend is seeking emotional support after the loss of a loved one; Yeomans et al., 2022).

Our work shows that one way people make sense of why someone disagrees with them after having listened to them is to assume they did not listen well. But this may not be a universal assumption. For example, in some cases, people might attribute the disagreement to their own lack of clarity in explaining their views. This attribution might motivate the speaker to try again or reengage the listener. It would be interesting for future research to explore the circumstances under which people make these different attributions.

Overall, the findings shed light on common listening issues in conflict. A frequent exchange in conflict is one side accusing the other side of not listening (“You are not listening to me!”) while the other side remains resolute that they are listening (“I am listening to you!”). The present findings suggest that both sides might be right. The speaker does not feel listened to because the listener has not come around to the speaker’s position. The listener, meanwhile, has understood the speaker but holds different views. Identifying that the core issue is one of divergent views rather than poor listening may help to improve communication dynamics.

Supplemental Material

sj-docx-1-pss-10.1177_09567976241239935 – Supplemental material for Disagreement Gets Mistaken for Bad Listening

Supplemental material, sj-docx-1-pss-10.1177_09567976241239935 for Disagreement Gets Mistaken for Bad Listening by Zhiying (Bella) Ren and Rebecca Schaumberg in Psychological Science

Footnotes

Acknowledgements

We thank Sara Sermarini, Victoria Coons, Mary Spratt, Emily Rosa, and Owen Vadala for their help in running the experiments. We thank Maurice Schweitzer, Joe Simmons, Charlie Dorison, Julia Minson, and Drew Carton for their helpful comments and valuable insights.

Transparency

Action Editor: Yoel Inbar

Editor: Patricia J. Bauer

