Abstract

We used machine-learning techniques to assess interactions between language and cognitive systems related to inhibitory control and conflict adaptation in reactive control tasks. We built theoretically driven candidate models of Simon and Number Stroop task data (N = 777 adult bilinguals ages 18–43 years living in Montréal, Canada) that differed in whether bilingual experience interacted with inhibitory control, including two forms of conflict adaptation: shorter term sequential congruency effects and longer term trial order effects. Models with continuous aspects of bilingual experience provided signal in predicting new, unmodeled data. Specifically, mixed language usage predicted trial order adaptation to conflict. This effect was restricted to Number Stroop, which overtly involves linguistic or symbolic information and relatively higher language- and response-related uncertainty. These results suggest that bilingual experience adaptively tunes aspects of the control system, and they offer a novel integrative modeling approach that can be used to pursue other complex individual difference questions within the psychological sciences.

Regular use of multiple languages (bilingualism) leads to adaptations in language outcomes, language control, and executive control (Abutalebi & Green, 2016; Bialystok et al., 2012; Green & Abutalebi, 2013; Green & Wei, 2014; Gullifer & Titone, 2021). Bilingual experience exhibits hallmarks of ideal cognitive training that should generalize to nonlinguistic domains (Diamond & Ling, 2016): It is taxing, continual over the lifespan, and socioculturally relevant. However, empirical links between language experience and executive control are variable, leading to questions about scientific practices, transfer between cognitive systems, and recurring epistemological issues (Titone et al., 2017; Titone & Tiv, 2022).

One theoretical perspective asserts that linguistic and cognitive systems do not interact (Paap, Anders-Jefferson, et al., 2019) or that there is weak transfer between systems (Barnett & Ceci, 2002; Sala et al., 2019). Executive control differences for bilinguals versus monolinguals are attributed to a reliance on small sample sizes and p values, publication bias, researcher degrees of freedom, and questionable research practices (de Bruin et al., 2015; Donnelly et al., 2019; Lehtonen et al., 2018; Paap, 2019; Paap et al., 2015). These practices inflate effect sizes and cause false positives (Chambers, 2013; Cumming, 2014; Easterbrook et al., 1991; Francis, 2012; Gelman & Carlin, 2014). Accordingly, studies with larger samples may reveal null effects for bilingual versus monolingual experience on executive control (Dick et al., 2019; Nichols et al., 2020; Von Bastian et al., 2016).

Another perspective asserts that interactions between systems are conditioned by sociolinguistic contexts or usage practices. For example, dual language contexts engage control processes (e.g., active goal maintenance and interference control) to a greater extent than single language contexts (Beatty-Martínez et al., 2020; Green & Abutalebi, 2013). The continual engagement of such processes may transfer to broader aspects of executive control. Here, mixed evidence is ascribed to grouping individuals with diverse usage patterns, abilities, and cognitive states into monolithic categories (Beatty-Martínez & Titone, 2021; Grosjean, 1997; Gullifer et al., 2021; Luk & Bialystok, 2013; Navarro-Torres et al., 2021; Salig et al., 2021; Surrain & Luk, 2019) on the basis of socially invented language distinctions (Makoni & Pennycook, 2007; Ortega, 2018; Otheguy et al., 2019). Signal is detected by including diverse aspects of experience using multidimensional continua (Beatty-Martínez & Titone, 2021; Gullifer & Titone, 2020b).

For example, Gullifer and Titone (2020b) modeled a proactive control task (where control is applied ahead of conflict) using a large sample (N = 450+) and machine-learning techniques that compared out-of-sample prediction error across models (integrative modeling; Hofman et al., 2021). Large samples yield more accurate effect sizes, and cross-validation offers analytical flexibility while minimizing researcher biases and reliance on p values. Continuous features of bilingual experience (namely, language entropy, which measures joint language use) predicted performance. The effect cross-validated, minimizing prediction error relative to models with trial type alone. Thus, joint language engagement provides signal in predicting proactive control (i.e., active goal maintenance) in the Montréal, Canada, context.

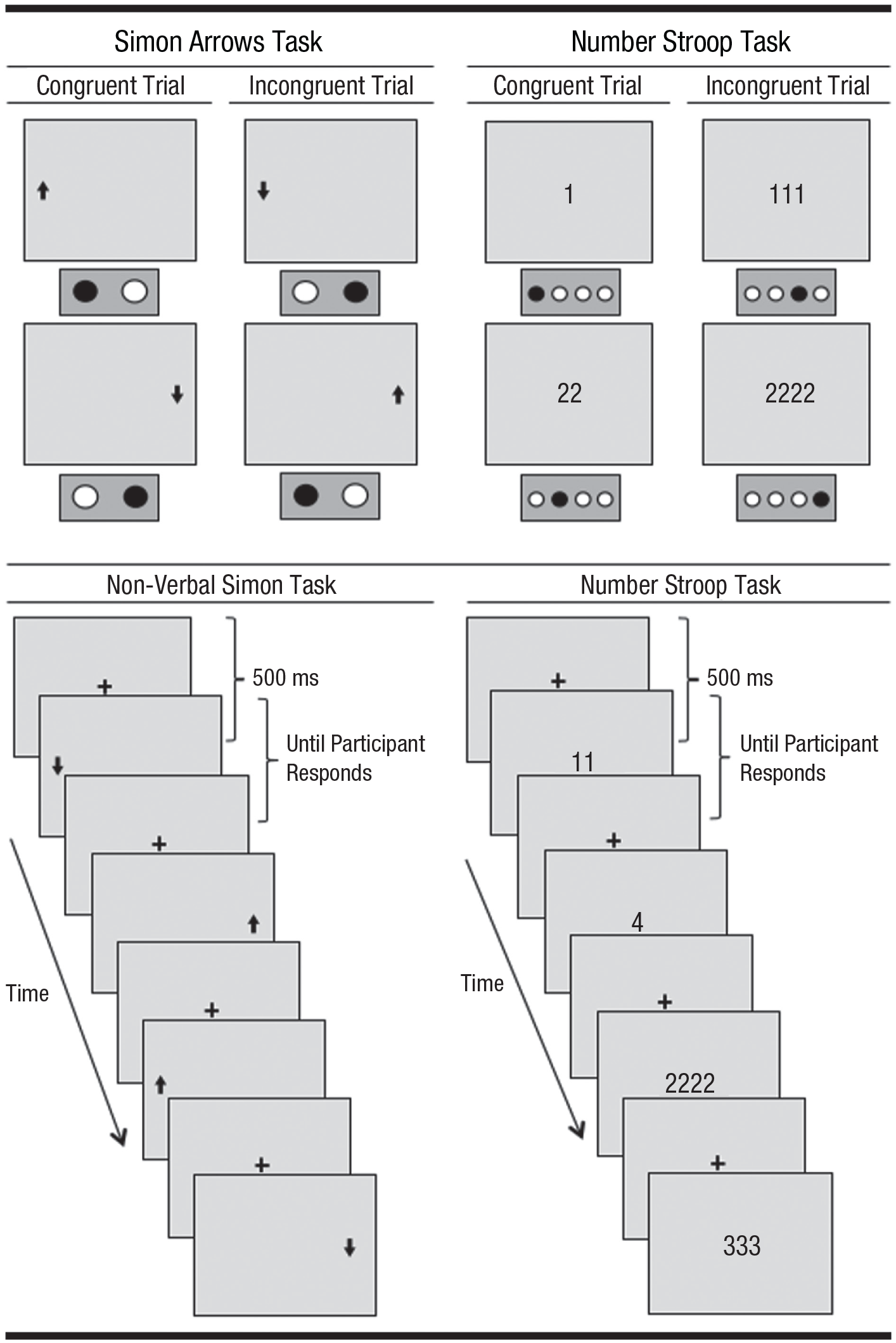

Conflict is also managed reactively through immediate interference suppression, a central construct to bilingual control. Thus, an open question is whether cognitive models of reactive inhibitory control are structured similarly. Reactive control is commonly measured through tasks such as Simon Arrows and Number Stroop (Fig. 1). These tasks assess conflict monitoring and adaptation but differ in two ways, resulting in higher language- and response-related uncertainty for Number Stroop. First, only Number Stroop involves competing language representations via numeric symbols. This uncertainty may be heightened for bilinguals who experience cross-language competition for routinized number labels (Meuter & Allport, 1999). Second, Number Stroop has more response options (four) than Simon Arrows (two), heightening response uncertainty. The higher language- and response-related uncertainty might better simulate control demands experienced by bilinguals (Gullifer & Titone, 2021).

Fig. 1. Illustration of the Simon Arrows (left panel) and Number Stroop (right panel) tasks. In the Simon Arrows task, conflict arises between the position of an arrow in a display (left side vs. right side) and the location of a desired response (left response vs. right response). In the Number Stroop task, conflict arises between the number of digits that appear on a display and the quantity specified by those digits. Trials with conflict (incongruent trials) result in costs in reaction times (RTs) and accuracies relative to those without conflict (congruent trials). In tasks in which trial types are equally likely, participants cannot reliably predict upcoming trials, and control is exercised reactively.

Importantly, people adapt performance in the presence of conflict. Often, conflict adaptation is assessed narrowly, in the short term, by measuring changes in conflict effects as a function of immediately preceding trial types, known as sequential congruency effects (Gratton et al., 1992). Typically, conflict effects are smaller after incongruent versus congruent trials. This could reflect immediate increases in control demands following conflict (Botvinick et al., 2001) or passive carryover from prior application of control (Hubbard et al., 2017; Mansouri et al., 2009; Paap, Myuz, et al., 2019). However, as people progress through tasks, they gain familiarity with task parameters and conflict, which could result in longer term adaptations: faster task performance (speedups on all trials) and smaller conflict effects as more trials are seen (trial order adaptation).

Statement of Relevance

How might language influence thought? Bilingualism, or the frequent use of more than one language, has been thought to confer advantages to broader aspects of cognition, like the ability to attend to pertinent information and ignore irrelevant information. Yet evidence for this possibility has been mixed, possibly because of failure to recognize that there are various types of bilingualism. That is, participants from diverse language backgrounds are commonly lumped together into groups of “bilinguals” and “monolinguals”, when, in reality, these categorical distinctions are not clear-cut. Here, we employ a highly rigorous statistical approach to data from a large sample of diverse bilinguals, and we measure their experiences using sensitive measures. We find that these measures relate to cognition in predictable ways: We observed cognitive impacts of bilingualism that were most prominent for tasks with high language-related uncertainties, similar to those that bilingual individuals experience in their day-to-day lives.

At the group level, some studies have found bilinguals to be less susceptible to short-term sequential congruency effects than monolinguals, suggesting that bilinguals effectively disengage from prior conflict (Grundy et al., 2017). However, other researchers have questioned the generality and interpretation of these effects (Goldsmith & Morton, 2018; Paap, Myuz, et al., 2019; but see Grundy & Bialystok, 2019). There is also a well-documented reaction time (RT) advantage for bilinguals versus monolinguals (Bialystok et al., 2004; Costa et al., 2009; Hilchey & Klein, 2011; Paap, Myuz, et al., 2019; but see Hilchey et al., 2015). RT advantages suggest enhanced conflict monitoring due to bilingualism. Meta-analytic effect sizes are small (g = 0.06) and do not survive publication bias corrections (Lehtonen et al., 2018), but meta-analytic approaches may obscure group differences (Grundy, 2020), particularly for samples with heterogeneous language practices. Within bilingual samples (our focus here), joint language engagement matters. Frequent code switchers (who interleave multiple languages) exhibit sequential congruency effects when they encounter code-switched sentences in a Flanker task (Adler et al., 2020), particularly in later task blocks. They also exhibit smaller conflict effects as they progress through a traditional Simon task (Kheder & Kaan, 2021). Thus, code switching may progressively increase control demands, but code switchers develop efficiency at resolving conflict. Altogether, joint language engagement is important, but it interacts in complex ways with the presence of conflict, prior trial conflict, and trial order.

Here, we assessed such interactions in Simon Arrows and Number Stroop tasks. We used a large sample (N = 700+) of adult, bilingual Montréalers and an integrative modeling approach (Gullifer & Titone, 2020b; Hofman et al., 2021) to compare models that differed in whether aspects of bilingualism predict conflict adaptation, broadly construed. We focused on joint language engagement (mixed use) but also tested other indicators such as age of acquisition (AoA; Kousaie et al., 2017; Tao et al., 2011) and second language (L2) exposure (Gullifer et al., 2021; Subramaniapillai et al., 2018). Given past work, we expected a modulation of sequential congruency effects or trial order adaptation effects as a function of mixed use as well as a relationship between aspects of bilingual experience and global RT.

The studies presented here were not preregistered. Readers may access the code for the analytical routines on OSF (https://osf.io/axrtv/). Data will be made available upon request by contacting the corresponding author.

Method

Participants

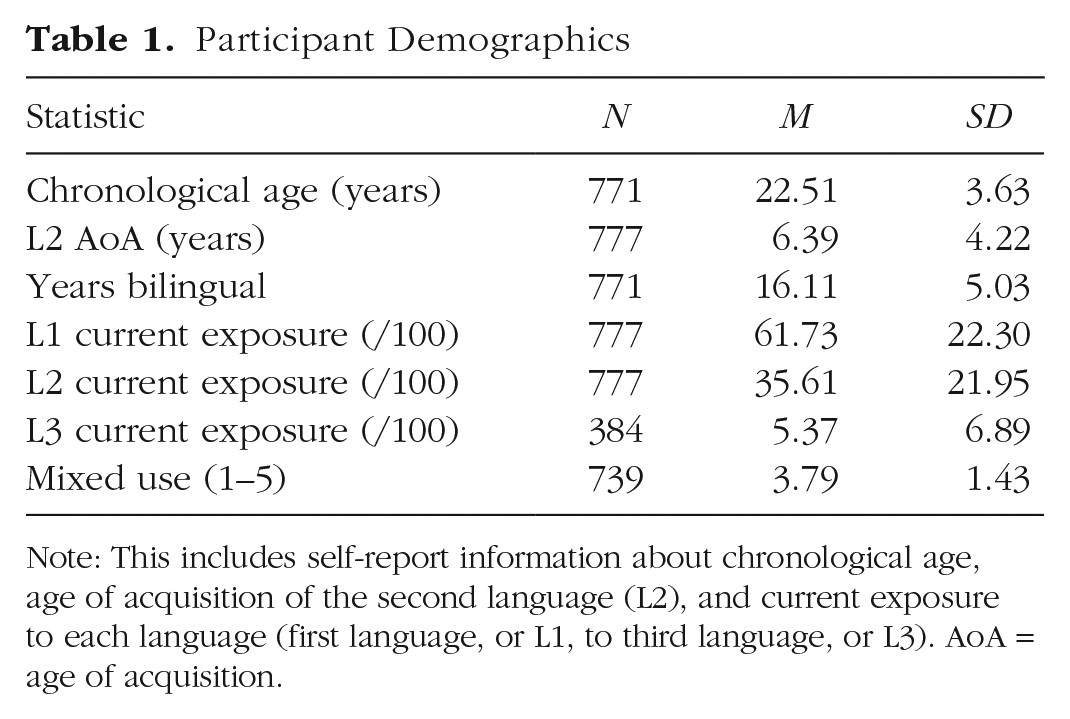

We analyzed data from 777 bilingual participants who reported language history information (including relative exposure to and use of two or more languages) and completed our target executive control tasks. This sample size is larger than typical for cognitive psychology. Participants’ language history was previously analyzed and reported by Gullifer and Titone (2020a), and a subset (n = 459) completed a proactive control task that was previously analyzed and reported by Gullifer and Titone (2020b). Approximately 40% of the sample reported acquiring English as their first language (L1; n = 320), and approximately 60% reported acquiring French as their L1 (n = 457). Approximately 75% of the sample identified as female (n = 580). Table 1 presents summary demographic and language history data. Participants were a mix of university students and members of the larger Montréal community, recruited through McGill’s human participant pool system, posted flyers (digital, physical), and word of mouth. All participants provided written informed consent to have their data from experiments and questionnaires collected, stored, and analyzed. The McGill Research Ethics Board approved this research.

Table 1. Participant Demographics

Note: This includes self-report information about chronological age, age of acquisition of the second language (L2), and current exposure to each language (first language, or L1, to third language, or L3). AoA = age of acquisition.

Materials

Assessing language experience

Approximately 60% of participants completed a questionnaire previously reported (Gullifer & Titone, 2020a, 2020b). The remaining 40% completed an earlier, shorter questionnaire version. The questionnaires allowed us to probe language experience within the Montréal context. For purposes of the analysis, we extracted or computed several background measures, outlined below.

We extracted two classic measures of L2 experience: L2 AoA (based on the onset of learning) and global exposure to the L2 (Gullifer & Titone, 2020a, 2020b). Participants also reported information about language use in different communicative contexts. Because of changes in the questionnaires over time, the response types of language usage differed. Approximately 40% reported usage in a coarse manner: whether a specific language is used (i.e., French, English, other, or combinations of two or more languages) at home, at work, and in social settings. Approximately 60% reported a more fine-grained Likert score according to the amount of usage of each language (Gullifer & Titone, 2020a, 2020b) on a scale ranging from 1 (no usage at all) to 7 (usage all the time or a significant amount).

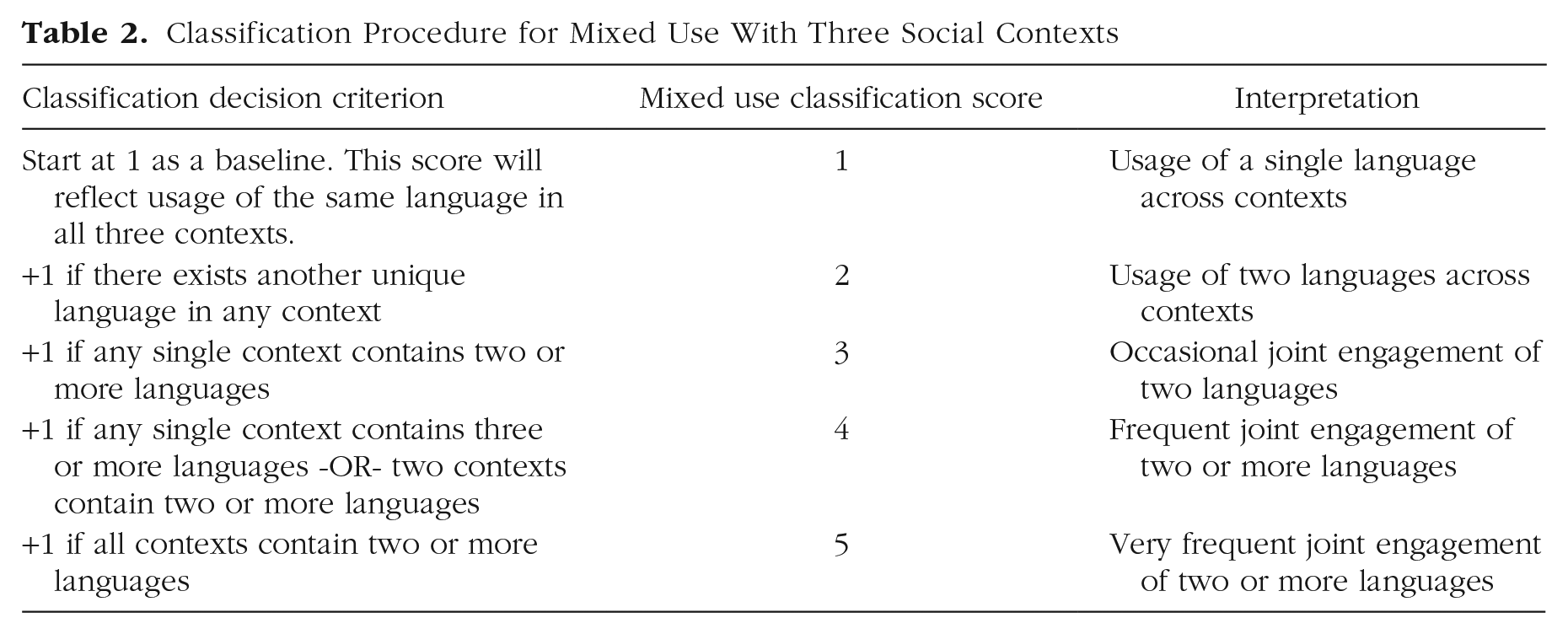

To assess joint language engagement, we computed a mixed-use language measure. First, we converted the Likert scores to coarse usage: We treated scores greater than 1 for any language as usage of that language in that context. We then classified participants into one of five ordered categories on the basis of their joint language engagement (for decision criteria, see Table 2). Overall, mixed-use scores of 1 reflect usage of a single language across contexts, and scores of 5 reflect the engagement of two or more languages in all contexts.
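The first step of this procedure (converting Likert scores to coarse usage) can be sketched as follows. This is a minimal illustration, not the authors' code: the function names, the dictionary layout, and the example participant are hypothetical, and the final mapping onto the five ordered mixed-use categories follows Table 2 and is deliberately not reproduced here.

```python
# Hypothetical sketch: names and data layout are assumptions, not the authors' code.
# Only the "score greater than 1 counts as usage" rule comes from the text.

def coarse_usage(likert_by_language):
    """Return the set of languages used in one context (Likert score > 1)."""
    return {lang for lang, score in likert_by_language.items() if score > 1}


def count_multilanguage_contexts(contexts):
    """Count contexts (e.g., home, work, social) in which two or more languages are used."""
    return sum(1 for scores in contexts.values() if len(coarse_usage(scores)) >= 2)


# Invented example participant on the 1-7 Likert scale.
participant = {
    "home": {"French": 7, "English": 1},    # English scored 1: not used at home
    "work": {"French": 4, "English": 5},
    "social": {"French": 6, "English": 3},
}

n_mixed = count_multilanguage_contexts(participant)  # two contexts involve both languages
```

A count like `n_mixed` is only an intermediate quantity; the ordered category assigned to a participant depends on the decision criteria in Table 2.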

Table 2. Classification Procedure for Mixed Use With Three Social Contexts

Assessing reactive control using Simon Arrows and Number Stroop tasks

In the Simon Arrows task, participants responded, as quickly and accurately as possible, to the direction (up or down) signified by an arrow on a display, which required a left or right response, respectively. Arrows were positioned on the left or right side of the screen, resulting in congruent trial types (response position and stimulus positions matched) and incongruent trial types (response position and stimulus positions mismatched). Conflict effects were assessed by contrasting incongruent and congruent trials and reflected participants’ abilities to inhibit positional information and attend to directional information. Conflict adaptation effects were assessed locally via interactions between trial type and prior trial type. Conflict adaptation effects were assessed globally via interactions between trial type and trial order (a continuous variable reflecting current trial number, scaled and centered). The task contained 80 trials, preceded by a practice block. Half of the trials (n = 40) required a congruent response, and half (n = 40) required an incongruent response. Figure 1 (left panel) illustrates the task.
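To make the conflict effect and its local (prior trial) modulation concrete, the sketch below computes both from a short, invented trial sequence by simple subtraction of mean RTs. This is an illustrative simplification: the analyses reported here estimate these effects as regression terms (trial type and its interaction with prior trial type), and all names and RT values below are hypothetical.

```python
from statistics import mean


def conflict_effect(trials):
    """Mean incongruent RT minus mean congruent RT (ms)."""
    incongruent = [rt for kind, rt in trials if kind == "incongruent"]
    congruent = [rt for kind, rt in trials if kind == "congruent"]
    return mean(incongruent) - mean(congruent)


def sequential_congruency(trials):
    """Conflict effect split by the type of the immediately preceding trial."""
    after = {"congruent": [], "incongruent": []}
    # Pair each trial with its predecessor; the first trial has no prior trial.
    for (prior_kind, _), current in zip(trials, trials[1:]):
        after[prior_kind].append(current)
    return {prior: conflict_effect(current) for prior, current in after.items()}


# Invented (trial type, RT in ms) sequence, in presentation order.
trials = [
    ("congruent", 400), ("incongruent", 480), ("incongruent", 450),
    ("congruent", 420), ("congruent", 410), ("incongruent", 490),
    ("congruent", 430), ("incongruent", 470), ("incongruent", 440),
    ("congruent", 425),
]

overall = conflict_effect(trials)      # 49 ms
by_prior = sequential_congruency(trials)
# 70 ms after congruent trials, 20 ms after incongruent trials
```

In this toy sequence the conflict effect shrinks after an incongruent trial, which is the pattern referred to as a sequential congruency effect.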

We chose the vertical version of the Simon Arrows task because pilot studies and work by Verbruggen and colleagues (2005) suggested that the vertical version results in larger conflict effects compared with the horizontal version. Paap and colleagues (2020) further suggested that the vertical Simon Arrows task evidences higher reliability compared with other tasks. Tasks with higher reliability (i.e., with high between-individuals variability) may be better individual difference markers (Hedge et al., 2018).

In the Number Stroop task, participants saw a display of digits in the center of the screen (e.g., 4444 or 222). The numbers that composed the digits were semantically congruent (e.g., 4444, 22) or incongruent (e.g., 2222, 44) with the response. Participants responded, as quickly and accurately as possible, to the number of digits that appeared in the display while ignoring the quantity specified by each digit’s value. Participants used one of four fingers to press a number key (on the number row of the keyboard; 1, 2, 3, or 4) to indicate how many digits appeared on the screen. Figure 1 (right panel) illustrates the task (button presses indicated as black circles). In the illustration, participants pressed the first button when they saw one digit (e.g., “1”), the second button when they saw two digits (e.g., “22”), the third button when they saw three digits (e.g., “111”), and the fourth button when they saw four digits (e.g., “2222”). The number strings “1” and “22” are examples of congruent trials because the quantity specified by the digits (distractor information: “one” and “two,” respectively) matches the number of digits on the screen (response criterion: press Buttons 1 and 2, respectively). In contrast, “111” and “2222” are examples of incongruent trials because the quantity specified by the digits (distractor information: “one” and “two,” respectively) conflicts with the number of digits (response criterion: press Buttons 3 and 4, respectively).
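The congruency rule described above can be expressed compactly. The following sketch, with a hypothetical function name, takes a display string and returns the correct response key (the number of digits shown) together with the trial type (whether the digit's value matches that count). It assumes, as in the examples, that each display repeats a single digit.

```python
def classify_number_stroop(display):
    """Return (correct_key, trial_type) for a display that repeats one digit."""
    if not display or not display.isdecimal() or len(set(display)) != 1:
        raise ValueError("display must repeat a single digit, e.g. '22' or '111'")
    count = len(display)      # response criterion: how many digits appear
    value = int(display[0])   # distractor: the quantity named by the digit
    trial_type = "congruent" if count == value else "incongruent"
    return count, trial_type


# The four examples from the task illustration.
examples = {s: classify_number_stroop(s) for s in ["1", "22", "111", "2222"]}
# "1" and "22" are congruent; "111" and "2222" are incongruent
```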

Conflict effects were assessed by contrasting incongruent and congruent trials, which provided an indication of participants’ abilities to inhibit semantic information and attend to quantity information. Conflict adaptation effects were assessed via conflict effects as a function of prior trial type and trial order. The task contained 60 trials preceded by a practice block. Half of the trials required a congruent response, and half required an incongruent response. Responses were evenly distributed across the four fingers (n = 15 trials each).

Results

Data were processed and analyzed in the R programming environment (Version 4; R Core Team, 2021). For trial-level Simon Arrows data, we removed RTs less than 150 ms and greater than 2,000 ms as outliers. For trial-level Number Stroop data, we removed RTs less than 250 ms and greater than 2,000 ms as outliers. Outlier detection was based on visual inspection of a density plot for each data set. Prior to analysis, trial type and prior trial type were deviation coded (congruent: −0.5, incongruent: 0.5), and L2 AoA and trial order were z scored.
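The preprocessing steps above are simple enough to sketch in a few lines. The analyses were run in R; the Python below is only an illustration of the same three operations (RT trimming, deviation coding, and z scoring), with hypothetical function names and invented data. The z score uses the sample standard deviation, which is an assumption about how the scaling was done.

```python
from statistics import mean, stdev


def trim_rts(trials, lower_ms, upper_ms=2000):
    """Drop trials with RTs below lower_ms or above upper_ms."""
    return [t for t in trials if lower_ms <= t["rt"] <= upper_ms]


def deviation_code(trial_type):
    """Code congruent as -0.5 and incongruent as 0.5."""
    return {"congruent": -0.5, "incongruent": 0.5}[trial_type]


def z_score(values):
    """Center on the mean and scale by the sample standard deviation (assumed)."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]


# Invented Simon Arrows trials; the lower cutoff is 150 ms for this task
# (250 ms would be used for Number Stroop).
raw = [
    {"rt": 120, "type": "congruent"},
    {"rt": 450, "type": "incongruent"},
    {"rt": 2100, "type": "congruent"},
    {"rt": 600, "type": "congruent"},
]
kept = trim_rts(raw, lower_ms=150)
coded = [deviation_code(t["type"]) for t in kept]   # [0.5, -0.5]
trial_order_z = z_score([1, 2, 3])                  # [-1.0, 0.0, 1.0]
```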

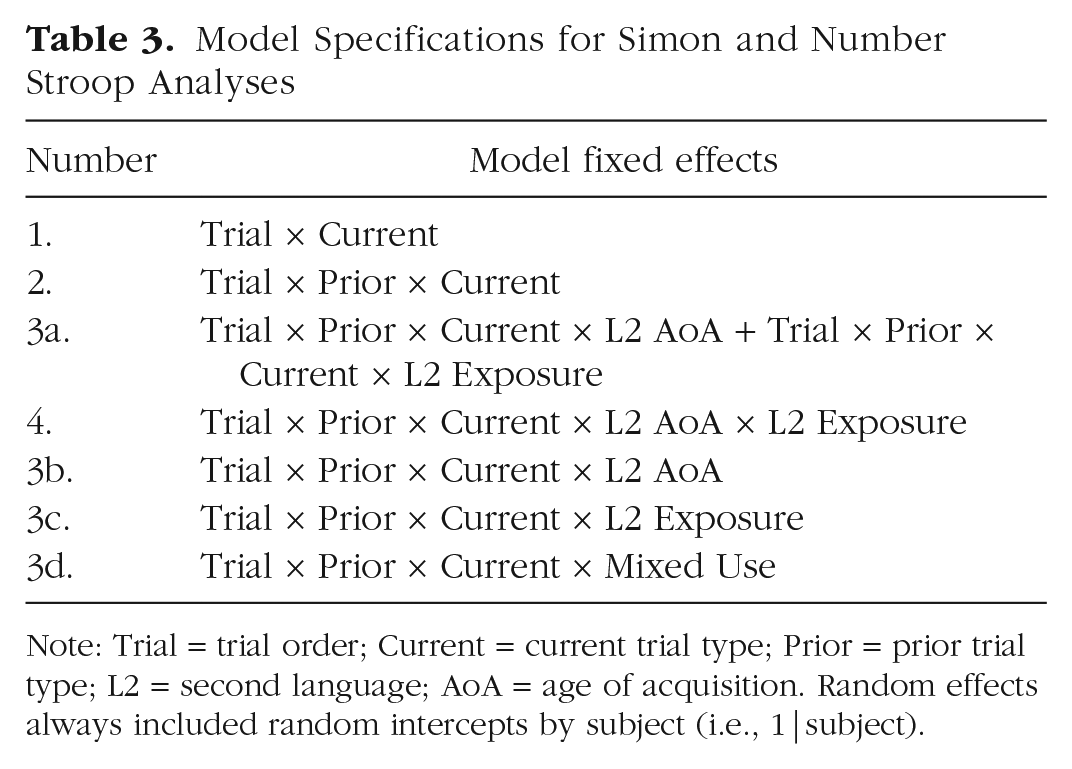

Using linear mixed-effects regression (lme4; Bates et al., 2015), we fitted several theoretically driven models to RTs and accuracy for each task data set to determine the best model specification. The models differed in whether they included interactions between aspects of bilingual experience and task effects. All linear mixed-effects regression models included a random intercept for subject. We did not add a random slope for trial type by subject because our purpose was to assess fixed effects related to individual differences, and these would have been completely captured by a random slope by subject. We computed effect sizes (d) for RTs by dividing the slope estimate by the square root of the sum of the random effect variances (following Brysbaert & Stevens, 2018; Gullifer & Titone, 2020b; Westfall et al., 2014), and we computed p values using the degrees of freedom approximated by the Satterthwaite method (Kuznetsova et al., 2017). Model specifications are listed in Table 3.
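Written out, the effect size computation described above takes the following form. The symbols are introduced here for illustration only, and the expression assumes that the residual variance is counted among the variance components, as in the approach of Westfall et al. (2014); with random intercepts by subject only, that leaves two terms in the denominator.

$$
d = \frac{b}{\sqrt{\sigma^2_{\text{subject}} + \sigma^2_{\text{residual}}}}
$$

Here $b$ is the fixed-effect slope estimate (in ms), $\sigma^2_{\text{subject}}$ is the variance of the by-subject random intercepts, and $\sigma^2_{\text{residual}}$ is the residual variance.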

Table 3. Model Specifications for Simon and Number Stroop Analyses

Note: Trial = trial order; Current = current trial type; Prior = prior trial type; L2 = second language; AoA = age of acquisition. Random effects always included random intercepts by subject (i.e., 1|subject).

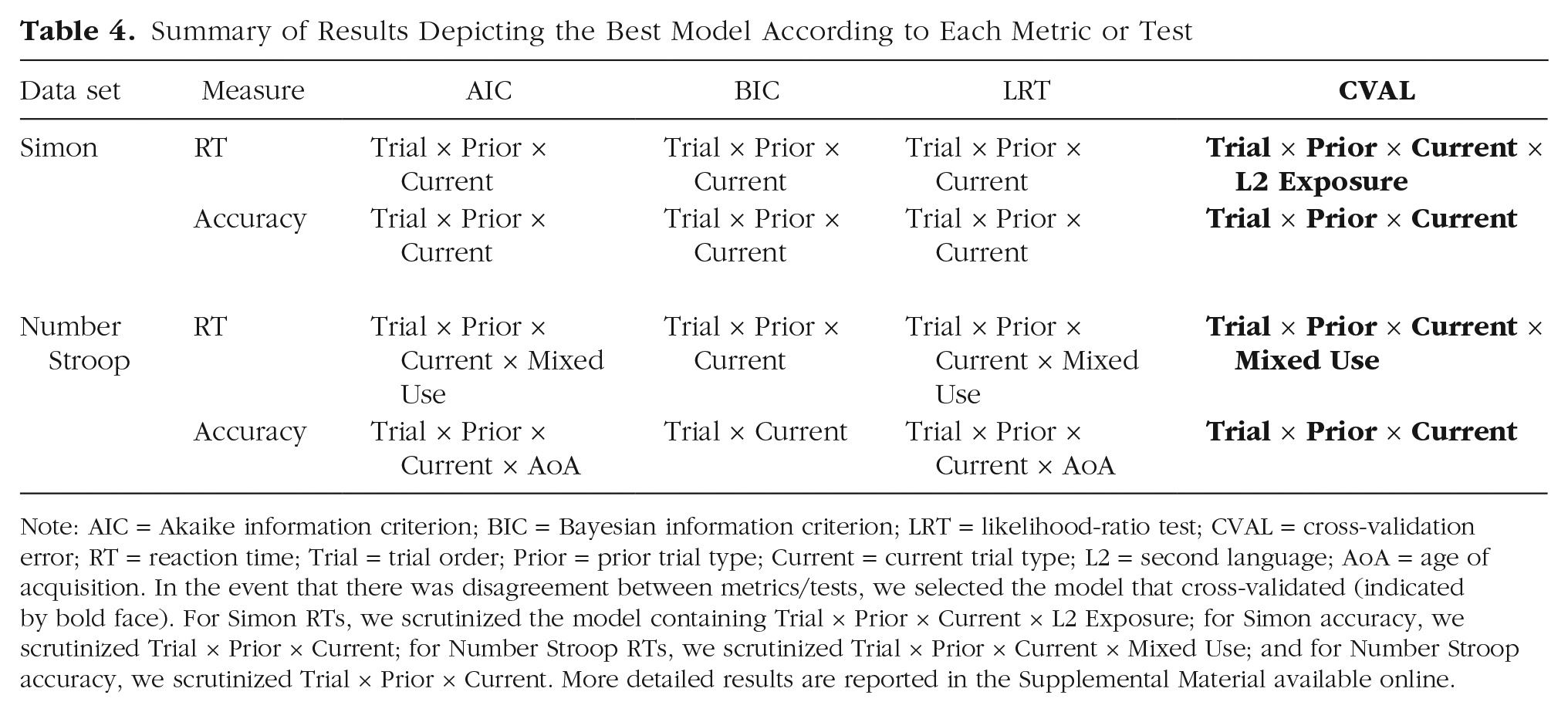

To select the best model specification, we compared four metrics: Akaike information criterion (AIC), Bayesian information criterion (BIC), likelihood-ratio tests (LRTs), and cross-validation error (described more fully by Gullifer & Titone, 2020b). Table 4 provides a summary of the best model specification selected by each metric for each task, and detailed modeling tables are provided in the Supplemental Material available online. We scrutinized the model that cross-validated.
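The logic of selecting a model by cross-validation error can be illustrated with a deliberately simplified sketch. It compares an intercept-only model with a one-predictor linear model on invented data, using k-fold cross-validation and mean squared prediction error on held-out observations. This is not the authors' procedure: the reported analyses compared mixed-effects models fitted in R, and the fold assignment, error metric, function names, and data here are all assumptions made for illustration.

```python
from statistics import mean


def fit_mean(xs, ys):
    """Intercept-only model: always predict the training mean."""
    m = mean(ys)
    return lambda x: m


def fit_line(xs, ys):
    """Ordinary least squares with a single predictor."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return lambda x: my + slope * (x - mx)


def cv_error(fit, xs, ys, k=5):
    """Mean squared prediction error over k held-out folds."""
    n = len(xs)
    squared_errors = []
    for fold in range(k):
        held_out = set(range(fold, n, k))   # interleaved folds (an assumption)
        train_x = [x for i, x in enumerate(xs) if i not in held_out]
        train_y = [y for i, y in enumerate(ys) if i not in held_out]
        predict = fit(train_x, train_y)
        squared_errors += [(ys[i] - predict(xs[i])) ** 2 for i in sorted(held_out)]
    return mean(squared_errors)


# Invented data: RT decreases with a predictor, plus alternating noise.
xs = [i / 10 for i in range(40)]
ys = [600 - 10 * x + (7 if i % 2 else -7) for i, x in enumerate(xs)]

mean_error = cv_error(fit_mean, xs, ys)
line_error = cv_error(fit_line, xs, ys)
best = "with predictor" if line_error < mean_error else "intercept only"
```

The model with the lower held-out error is preferred. A predictor that only fits noise in the training data would tend not to reduce this error, which is the sense in which an effect is said to cross-validate.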

Table 4. Summary of Results Depicting the Best Model According to Each Metric or Test

Note: AIC = Akaike information criterion; BIC = Bayesian information criterion; LRT = likelihood-ratio test; CVAL = cross-validation error; RT = reaction time; Trial = trial order; Prior = prior trial type; Current = current trial type; L2 = second language; AoA = age of acquisition. In the event that there was disagreement between metrics/tests, we selected the model that cross-validated (indicated by bold face). For Simon RTs, we scrutinized the model containing Trial × Prior × Current × L2 Exposure; for Simon accuracy, we scrutinized Trial × Prior × Current; for Number Stroop RTs, we scrutinized Trial × Prior × Current × Mixed Use; and for Number Stroop accuracy, we scrutinized Trial × Prior × Current. More detailed results are reported in the Supplemental Material available online.

Simon task

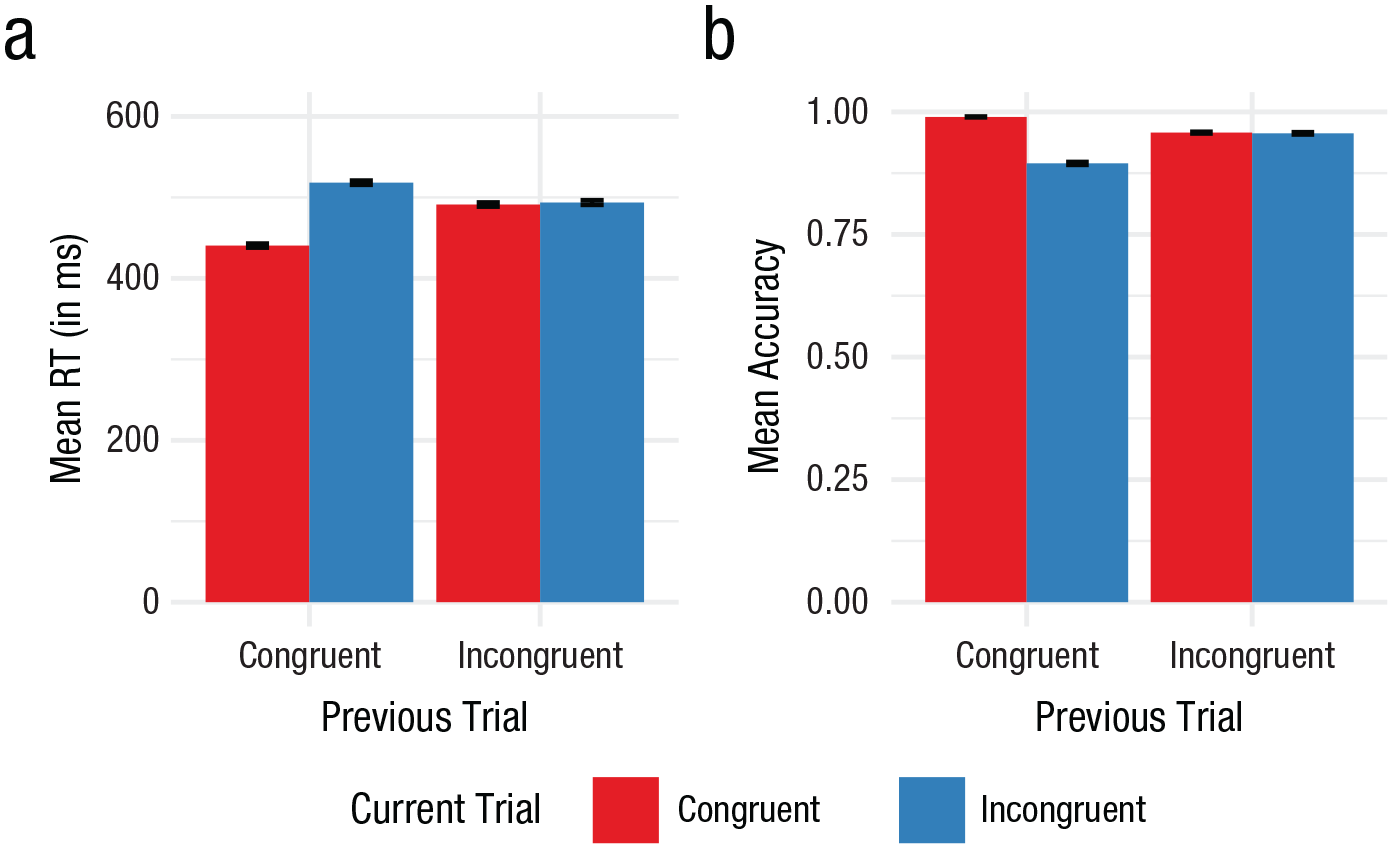

Aggregated data at the subject level are illustrated in Figure 2. Visual inspection of these figures indicates a sequential congruency effect (typical conflict effect after a prior congruent trial; smaller conflict effect after a prior incongruent trial) for both RTs (Fig. 2a) and accuracy (Fig. 2b).

Fig. 2. Subject aggregate (a) reaction times (RTs) and (b) accuracy for the Simon Arrows task. Error bars represent standard error of the mean.

RT analysis

For Simon Arrows RTs, most metrics (AIC, BIC, and LRT) agreed that the best model included interactions between trial order, prior trial type, and current trial type. However, cross-validation error indicated that the best model included an additional interaction with L2 exposure. Model fits are available in Tables S2 and S3 in the Supplemental Material. We scrutinized the model that cross-validated and observed the following pattern of results.

There was a significant effect of current trial type (b = 39.76, 95% confidence interval, or CI = [37.6, 41.92], d = 0.28, t = 36.05, p < .001), indicating that incongruent trials were slower than congruent trials. Current trial type interacted with prior trial type (b = −75.57, 95% CI = [−79.91, −71.22], d = 0.53, t = −34.07, p < .001) and with trial order (b = −4.2, 95% CI = [−6.36, −2.03], d = 0.03, t = −3.80, p < .001). This indicated that conflict effects were smaller after an incongruent trial (i.e., the presence of a sequential congruency effect) and toward the end of the task, suggesting that participants exhibited conflict adaptation. There were no significant interactions with L2 exposure, although there was a main effect of L2 exposure (b = −5.48, 95% CI = [−10.29, −0.67], d = 0.04, t = −2.23, p = .026). This indicated that participants with higher L2 exposure were faster overall. Note that the main effect of L2 exposure remained stable in an ad hoc model in which it was treated as a simple additive effect (b = −5.37, 95% CI = [−10.17, −0.57], t = −2.19, p = .029). Full regression results are available in Table S4 in the Supplemental Material.

Accuracy analysis

For Simon Arrows accuracy, all metrics agreed that the best model included interactions between trial order, prior trial type, and current trial type. Model fits are available in Tables S5 and S6 in the Supplemental Material. We scrutinized this model and observed the following pattern of results.

There was a significant effect of current trial type, indicating that incongruent trials were less accurate than congruent trials (b = −1.23, 95% CI = [−1.34, −1.12], z = −21.72, p < .001). Current trial type interacted with prior trial type (b = 2.44, 95% CI = [2.22, 2.66], z = 21.57, p < .001) but not trial order (b = 0.11, 95% CI = [0, 0.22], z = 1.90, p = .057), again indicative of a sequential congruency effect, suggesting that participants exhibited conflict adaptation. However, these accuracy effects did not build over the task. Full regression results are available in Table S7 in the Supplemental Material.

Summary

Overall, inspection of this model gave no indication that aspects of bilingual experience, as measured in this experiment, modulated conflict effects or sequential congruency effects in the Simon Arrows task. However, L2 exposure predicted overall RTs: Participants with higher L2 exposure responded faster across trial types. There were no such effects for accuracy.

Number Stroop task

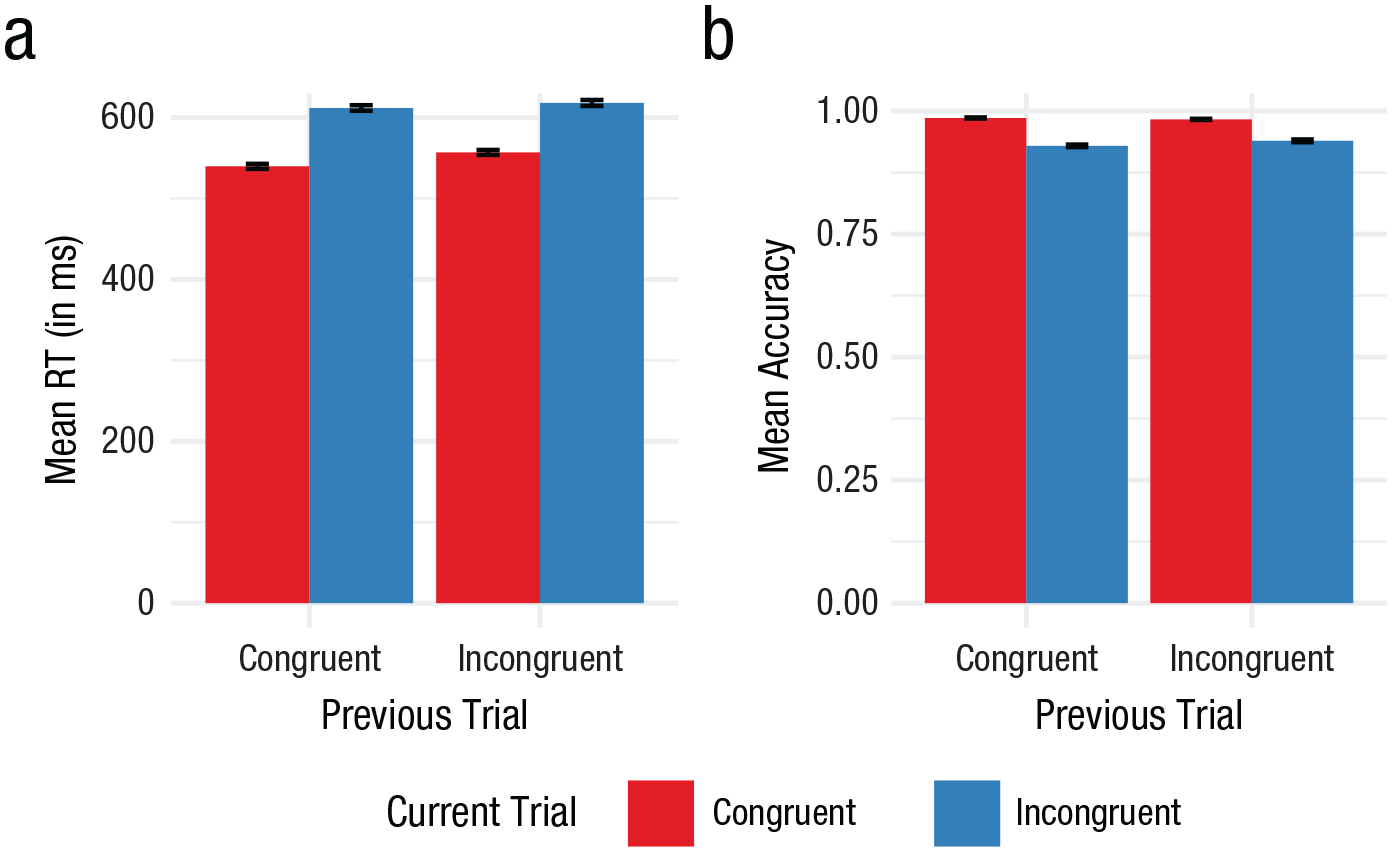

Aggregated data at the subject level are illustrated in Figure 3. Visual inspection of Figure 3 indicates typical conflict effects in RTs (Fig. 3a) and accuracy (Fig. 3b) and a possible, but small, sequential congruency effect.

Fig. 3. Subject aggregate (a) reaction times (RTs) and (b) accuracy for the Number Stroop task. Error bars represent standard error of the mean.

RT analysis

For Number Stroop RTs, most metrics (AIC, LRT, and cross-validation error) agreed that the best model included interactions between trial order, prior trial type, current trial type, and mixed use. Model fits are available in Tables S8 and S9 in the Supplemental Material. We scrutinized the model that cross-validated and observed the following pattern of results.

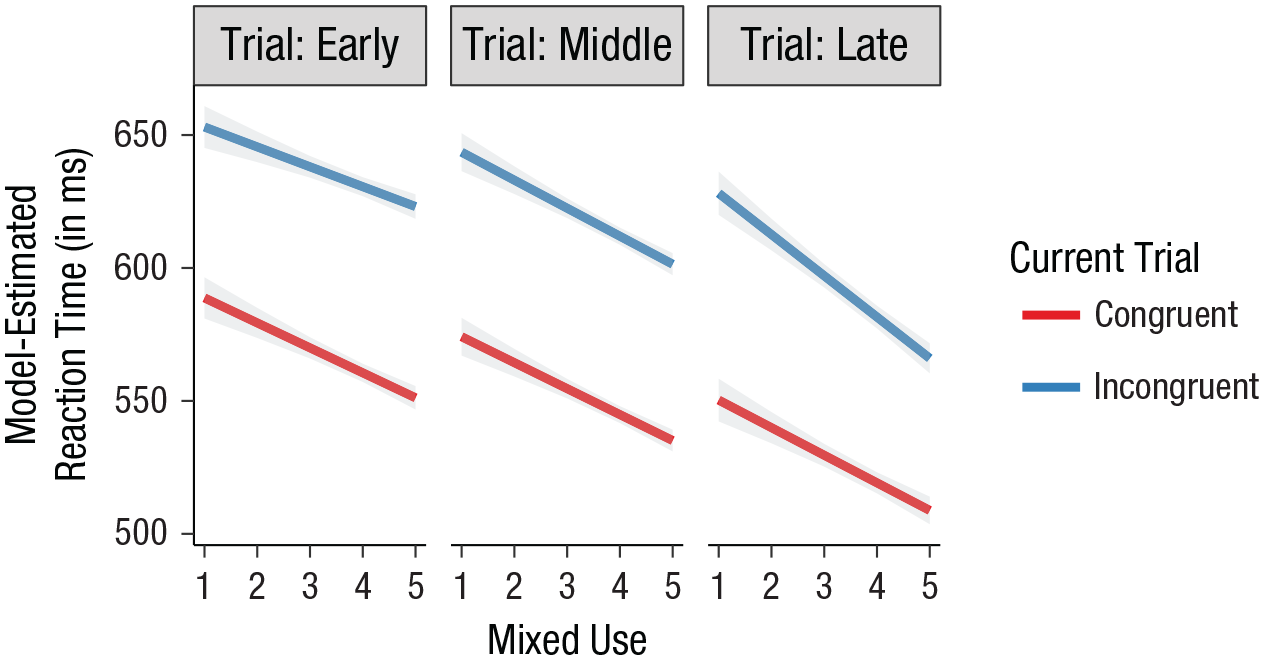

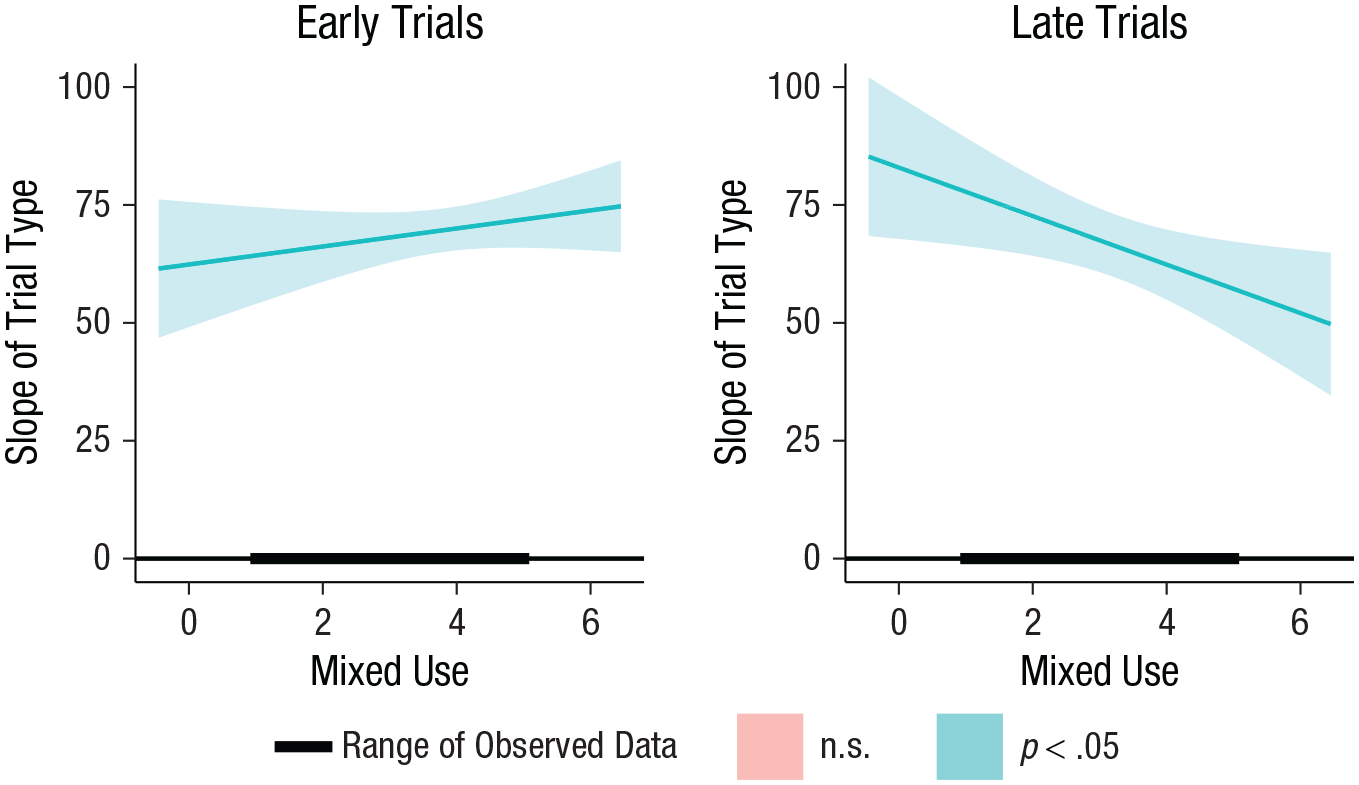

There was a significant effect of current trial type (b = 69.63, 95% CI = [62.45, 76.81], d = 0.41, t = 19.01, p < .001), indicating that incongruent trials were slower than congruent trials. Current trial type did not interact with prior trial type (b = −8.67, 95% CI = [−23.05, 5.71], d = 0.05, t = −1.18, p = .237) or with trial order (b = 5.55, 95% CI = [−0.71, 11.8], d = 0.03, t = 1.74, p = .082). Thus, there was no evidence of a sequential congruency effect in RTs to support conflict adaptation at the group level. Crucially, there was a main effect of mixed use (b = −10.16, 95% CI = [−14.45, −5.86], d = 0.06, t = −4.64, p < .001) and a three-way interaction between current trial type, trial order, and mixed use (b = −1.85, 95% CI = [−3.51, −0.18], d = 0.01, t = −2.17, p = .030). These effects indicated that participants with higher degrees of mixed use were faster on the task. Moreover, for trials that occurred early in the task, participants with high degrees of mixed use exhibited larger conflict effects relative to participants with low degrees of mixed use. However, toward the end of the task, the pattern of results flipped: Participants with high degrees of mixed use exhibited smaller conflict effects than participants with low degrees of mixed use (for an illustration of the interaction, see Figs. 4 and 5). Full regression results are available in Table S10 in the Supplemental Material.

Fig. 4. Illustration of the three-way interaction between current trial type, trial order, and mixed use in the Number Stroop task. This interaction indicates that people with high degrees of mixed use exhibit greater conflict during early trials and less conflict during late trials relative to people with low degrees of mixed use. This suggests that they exhibit a trial order adaptation effect. Error bands represent standard error of the mean.

Fig. 5. Johnson-Neyman interval plot illustrating the slope of the trial type effect as a function of trial order and mixed use. This plot illustrates the three-way interaction with respect to conflict.

Accuracy analysis

For Number Stroop accuracy, half of the metrics (AIC and LRT) agreed that the best model included interactions between trial order, prior trial type, current trial type, and L2 AoA, and half (BIC and cross-validation error) agreed on a simpler model without L2 AoA. Model fits are available in Tables S11 and S12 in the Supplemental Material. We scrutinized the simpler model that cross-validated and observed the following pattern of results.

There was a significant effect of current trial type, indicating that incongruent trials were less accurate than congruent trials (b = −1.52, 95% CI = [−1.62, −1.41], z = −29.16, p < .001). Current trial type interacted with prior trial type (b = 0.36, 95% CI = [0.16, 0.57], z = 3.53, p < .001) but not trial order (b = 0.03, 95% CI = [−0.08, 0.13], z = 0.52, p = .603). These effects indicated that conflict effects were smaller after an incongruent trial (i.e., sequential congruency effect), suggesting that participants exhibited conflict adaptation. However, conflict adaptation effects did not build over the task. Full regression results are available in Table S13 in the Supplemental Material. Because the reader may be interested in possible effects of L2 AoA (that did not cross-validate), we provide results in “Effects of L2 AoA on Number Stroop Accuracy” and Table S14 in the Supplemental Material.

Summary

Overall, there was evidence that bilingual experience, measured by mixed use in this experiment, modulated conflict over the task. Participants with high mixed use responded faster overall. They showed larger conflict effects than participants with low mixed use early in the task but smaller conflict effects as the task progressed. Bilingual experience, as measured in this experiment, did not modulate sequential congruency effects in Number Stroop RTs. Interactions with bilingual experience were specific to RTs; no such effects were observed in accuracy.

Discussion

We modeled reactive inhibitory control performance to test whether individual differences in bilingual experience provide signal in predicting out-of-sample data beyond task information. This allowed us to test two broad theoretical perspectives differing in whether linguistic and cognitive systems interact. We modeled Simon Arrows and Number Stroop data with a large sample of bilinguals. Both tasks measured reactive inhibitory control and conflict adaptation but differed in language- and response-related uncertainty. Uncertainty is higher in Number Stroop, which may more precisely simulate control demands experienced by bilinguals (Gullifer & Titone, 2021).

At the group level, we observed engagement of inhibitory control through traditional conflict effects in both tasks. Incongruent trials were slower and less accurate than congruent trials. We also observed conflict adaptation in both tasks. Conventional sequential congruency effects occurred in RTs and accuracy in Simon Arrows, and in accuracy in Number Stroop. Conflict effects were smaller after incongruent trials than congruent trials, reflecting an increase in control demands from prior conflict (Botvinick et al., 2001) or carryover from the prior application of control (Hubbard et al., 2017; Mansouri et al., 2009; Paap, Myuz, et al., 2019). Conflict effects were also smaller toward the end of the task (an effect restricted to Simon Arrows RTs), reflecting adaptations in conflict management.

Crucially, individual differences in bilingual experience modulated performance related to long-term adaptations. We observed robust global speed advantages associated with bilingual experience in Simon Arrows and Number Stroop. In Simon Arrows, participants with higher L2 exposure were faster on all trial types relative to participants with lower L2 exposure. A compatible effect surfaced in Number Stroop as a function of mixed use. Both effects cross-validated, suggesting that they are robust and predictive of new data and relevant to reactive control models. Although this experiment did not explicitly compare monolingual versus bilingual populations, these results are in line with previous literature on conflict monitoring in bilinguals and monolinguals (Bialystok et al., 2004; Costa et al., 2009; Hilchey & Klein, 2011; Paap, Myuz, et al., 2019). They suggest that bilingual language experiences impact the cognitive system, when experiences are measured continuously in accordance with language usage patterns.

In Number Stroop, we also observed a relationship between mixed use, conflict effects, and trial order. People with higher mixed use exhibited larger conflict effects early but smaller conflict effects later relative to people with lower mixed use. This effect was restricted to RTs; accuracy was near ceiling. This suggests that individual differences in bilingual experience relate to efficiency in adapting to conflict between semantic number information. One possibility is that bilinguals with higher mixed use experienced more cross-language competition from the number labels than bilinguals with lower mixed use. These interactions cross-validated, suggesting that they are robust. The task specificity of the interaction might relate to the idea that Number Stroop more readily simulates bilingual language control demands than Simon Arrows.

Overall, these results extend work on proactive control by Gullifer and Titone (2020b) to reactive inhibitory control. There, bilinguals with higher language entropy, reflective of high joint language engagement, exhibited greater engagement of proactive control and active goal maintenance in a nonlinguistic task in which prior context is crucial for performance. Here, bilinguals with higher mixed use, indexing a similar construct (see “Correlational Analysis” in the Supplemental Material), exhibited greater conflict adaptation in Number Stroop compared with bilinguals with lower mixed use. These differences may reflect greater demands placed on interference control (including conflict monitoring and interference suppression) in highly bilingual contexts in which two languages must be regularly controlled (dual language contexts; Green & Abutalebi, 2013; Gullifer & Titone, 2020b).

The results are compatible with work showing that aspects of bilingualism relate to conflict adaptation (Adler et al., 2020; Grundy et al., 2017; Kheder & Kaan, 2021), but with some differences. First, modulations here occurred for long-term adaptation effects but not short-term sequential congruency effects. Thus, sequential congruency effects may not necessarily reflect adaptive attentional processes but instead reflect carryover effects from prior trials, particularly for tasks with equal proportions of trial types (Hubbard et al., 2017; Mansouri et al., 2009; Paap, Myuz, et al., 2019). Alternatively, sequential congruency effects may reflect processes that are adapted by some sociolinguistic contexts (e.g., Toronto, where bilingualism often involves English and another language) but not others (e.g., Montréal, where multilingualism almost always includes French, English, and another language). Methodological differences (e.g., whether monolingual samples were included) may also have contributed to differences between studies. In other words, the results observed here do not invalidate work done in these other locations.

In contrast, long-term adaptation effects observed here for Number Stroop reflect improvements in performance. Taken together, long-term adaptation effects vary within populations and can be accounted for by aspects of social language experience that involve joint language engagement (Adler et al., 2020; Kheder & Kaan, 2021).

Another key difference between this and prior studies is that, besides RT advantages, modulations of conflict adaptation by bilingual experience were not observed in the Simon Arrows task. One possible reason is that these tasks are unreliable in measuring the targeted construct. Other researchers have argued that executive control tasks do not correlate with one another, indicating poor task validity (Paap et al., 2020). However, tasks recruit multiple control processes and may differ in their recruitment strength according to individual and task differences. Thus, it may be too simplistic to expect high between-tasks correlations (Salig et al., 2021). Another possibility is rooted in differences between linguistic and social contexts across studies, which may affect transfer between cognitive systems (Barnett & Ceci, 2002; Sala et al., 2019).

Montréal is a uniquely multilingual environment (e.g., Tiv et al., 2021, 2022) relative to other locations such as Florida (Adler et al., 2020; Kheder & Kaan, 2021) and Ontario (Goldsmith & Morton, 2018; Grundy et al., 2017), where English is predominant. Thus, Montréalers who jointly engage multiple languages must frequently manage competing language representations and response options. This coheres with the idea that far transfer occurs for socioculturally relevant cognitive training (Diamond & Ling, 2016). Although our cross-validation results provide evidence that the results will generalize within Montréal, an open question is whether they generalize to other comparable sociolinguistic contexts. Moreover, the generalizability of our results is likely affected by other factors, such as sampling bias. For example, the sample was overwhelmingly female, and we recruited individuals on the basis of their bilingualism (and willingness to participate in a language study) rather than through random sampling.

Importantly, the finding that aspects of language usage may affect executive control abilities calls into question the generalizability of a vast number of studies on various psychological constructs that were restricted to group-level analyses of single populations. A broad assumption in the psychological sciences is that group-level results should generalize to all populations, even though this assumption is not thoroughly tested. Going forward, researchers could be more rigorous, not only in terms of methodology but also in exploring the generalizability of our constructs to different populations. The field of bilingualism champions iterative scientific discovery, where theoretical perspectives are reformulated as new data are acquired (Navarro-Torres et al., 2021).

Our results bolster theoretical perspectives that treat language and executive control systems as interactive and adaptive. Although the findings are subtle in terms of effect size and cross-validation error reduction, they highlight the power of treating language experience as a multidetermined spectrum of continuous variables versus an arbitrary dichotomy (e.g., bilinguals vs. monolinguals). Crucially, past studies would have treated this sample as one homogeneous group, when in fact there is a diversity of experiences that systematically predict performance outcomes.

Supplemental Material

Supplemental material, sj-odt-1-pss-10.1177_09567976221113764 for Bilingual Language Experience and Its Effect on Conflict Adaptation in Reactive Inhibitory Control Tasks by Jason W. Gullifer, Irina Pivneva, Veronica Whitford, Naveed A. Sheikh and Debra Titone in Psychological Science

Footnotes

Transparency

Action Editor: Angela Lukowski

Editor: Patricia J. Bauer

Author Contributions

J. W. Gullifer, I. Pivneva, and D. Titone developed the study concept and contributed to the study design. I. Pivneva, V. Whitford, and N. A. Sheikh conducted testing, data collection, and aggregation. J. W. Gullifer and D. Titone analyzed and interpreted the data. J. W. Gullifer drafted the manuscript, with input from D. Titone. V. Whitford and D. Titone provided revisions. All the authors approved the final manuscript for submission.
