Abstract

Research shows that people prefer self-consistent over self-discrepant feedback—the self-verification effect. It is not clear, however, whether the effect stems from striving for self-verification or from the preference for subjectively accurate information. We argue that people prefer self-verifying feedback because they find it to be more accurate than self-discrepant feedback. We thus experimentally manipulated feedback credibility by providing information on its source: a student (control condition) or an experienced psychologist (experimental condition). In line with our expectations, the results of two preregistered studies with 342 adults showed that people preferred self-verifying feedback only in the control condition. In the experimental condition, the effect disappeared (or reversed, in Study 1). Study 2 showed that individual differences in credibility (epistemic authority) ascribed to the self and to psychologists matter as well. These findings suggest that feedback credibility, rather than the desire for self-verification, often drives the self-verification effect.

How people select and process self-relevant information plays an essential role in shaping their self-perceptions, affect, and behavior. For instance, depression, one of the most ubiquitous and debilitating psychological disorders, is rooted in negative self-referential schemas inferred from such information (e.g., Beck, 1976; Gotlib & Joormann, 2010). Research shows, however, that the processing of self-relevant information is biased by how people view themselves to begin with. The influential self-verification theory (Swann, 1983, 1987, 1990, 2012) proposes that people seek feedback consistent with their self-views (e.g., Swann, 1983, 2012). Indeed, multiple studies have shown that people with positive self-views solicit and prefer positive feedback from others, whereas people with negative self-views prefer less favorable feedback. This self-verification effect has been robustly found across samples, methods, and cultures (Seih et al., 2013; see also Kwang & Swann, 2010, for a meta-analysis).

However, the mechanism underlying this effect is less clear, and much of the evidence cited in the self-verification literature could be interpreted as supporting two alternative explanations. One, offered by Swann et al. (1989; see also Swann, 2012), is that people generally desire self-verifying feedback because it gives them a sense of coherence, predictability, and control. However, it has also been claimed that the self-verification effect stems from the perception that feedback consistent with one’s self-views is accurate (e.g., Kwang & Swann, 2010; Seih et al., 2013). Indeed, studies have shown that people find self-verifying (i.e., self-consistent) feedback more accurate, credible, and diagnostic than self-discrepant feedback (e.g., Giesler et al., 1996; Markus, 1977; Pelham & Swann, 1994; Shrauger & Lund, 1975; Swann et al., 1987; Swann & Pelham, 2002).

Determining whether a coherence-driven desire for self-verification or perceived (subjective) accuracy is the main driver of the self-verification effect has important theoretical and practical implications. If the desire for self-verification is the primary (or only) mechanism, any new information discrepant from what people think about themselves should be ignored, discounted, or rejected. However, if the preference for self-verifying feedback is mainly driven by subjective accuracy, self-discrepant information may be appropriately considered, given that it is perceived as accurate. This opens possibilities for presenting people with self-discrepant feedback they would seriously consider and, as a consequence, possibly changing their persistent self-views (e.g., in clinical work).

The conceptual challenge, however, is that self-consistency of feedback and its perceived accuracy are typically related—people see self-verifying (compared with self-discrepant) feedback as more accurate and its source as more competent (Swann et al., 1987, 1992). Indeed, these two variables have often been conflated in previous experiments. Therefore, in the present research, we aimed to disentangle them and test whether people might prefer self-verifying feedback simply because they see it as more accurate.

We assumed that people typically regard themselves as self-experts, or epistemic authorities (Kruglanski et al., 2005), in matters related to the self. After all, they have a lifelong experience of how they have performed various tasks and how others have treated them (e.g., Mead, 1934; Swann, 2012). With accumulating feedback, they thus acquire a growing “database” to support their self-views and become increasingly confident in them. They use these self-views as a reference point and view information consistent with them as accurate and self-discrepant information as likely inaccurate. Self-discrepant information is thus rejected even if it is desirable.

In light of this analysis, the self-verification effect is not surprising. People think they know better when it comes to judging the credibility of feedback about their own characteristics, and they trust themselves more than they do others, especially when the others offer views that are discrepant from people’s own (see Swann et al., 1987).

However, other people can be seen as credible, too, in some matters more credible than oneself; for example, we see a doctor when we have a health problem or a mechanic when we need to fix a car. If we are not ourselves experts in a given domain (or we do not think we are), we rely on others’ opinions rather than our own. Indeed, studies show that people give greater priority to information provided by sources ascribed with high (compared with low) epistemic authority, they process such information more extensively, and they derive greater confidence from it (for an overview, see Kruglanski et al., 2005). In other words, information from a source with high epistemic authority is seen as more accurate and valid than information from one with lower epistemic authority.

Statement of Relevance

People typically prefer feedback consistent with what they think of themselves and reject feedback inconsistent with their self-views (e.g., they disregard compliments they find “too good to be true”). This preference, called the self-verification effect, contributes to the persistence of overly negative, maladaptive, or inaccurate self-views. But why does it occur? Psychology has offered two explanations. First, people strive for coherence and seek to confirm their existing self-knowledge. Alternatively, they find self-consistent feedback more accurate. We empirically tested these explanations. Participants with positive and negative social self-esteem received positive and negative feedback regarding their social skills. Self-discrepant feedback (positive for participants with negative self-esteem and vice versa) came from either a student (a lower credibility source) or an experienced psychologist (a higher credibility source). The results showed that people no longer favored self-consistent feedback when self-inconsistent feedback came from a psychologist and was thus seen as credible. This highlights the possibility of providing people with corrective feedback they will not readily reject.

Admittedly, we may know more about our traits, talents, and abilities than about medicine or automotive mechanics, yet even in those domains, we may perceive others, such as psychologists or psychiatrists, as more knowledgeable than ourselves. So if subjective accuracy underlies the self-verification effect, the effect should disappear when the self-discrepant feedback about one’s traits comes from a source of high authority.

To test this prediction, we ran two studies in which we manipulated the credibility (epistemic authority) of the source of self-discrepant feedback. In Study 2, we additionally measured the epistemic authority one ascribes to the self and a source of self-discrepant information, and we tested whether individual differences in epistemic authority matter for feedback preference.

Study 1

In Study 1a (preregistered at https://aspredicted.org/my5jk.pdf), we aimed to test participants’ preference for self-verifying versus self-discrepant feedback, depending on its credibility. Preselected groups of participants performed a feedback-selection task (Giesler et al., 1996). Crucially, we manipulated the credibility of the feedback sources. We told participants that self-discrepant feedback came from either a student (control condition) or an experienced psychologist (experimental condition). We expected that in the control condition, participants with positive self-views would prefer positive feedback and participants with negative self-views would prefer negative feedback. In the experimental condition, however, when the source of the self-discrepant feedback was highly credible, we expected self-verification effects to disappear.

Method

Participants

The sample size for the study was determined on the basis of an a priori power analysis (G*Power, Version 3.1; Faul et al., 2009) run for a mixed analysis of variance (ANOVA) containing within-between interactions (two measurements and four groups, respectively). We assumed a small-to-medium effect size (f = 0.15) and power of .90. The analysis indicated that a sample of at least 164 participants would be necessary to obtain the assumed power. Anticipating that some data would be lost and that some participants would guess the purpose of the study, we sought to recruit 180 participants (90 with positive self-views and 90 with negative self-views).

To recruit participants for the study, we first administered a screening survey in which we asked participants to fill out the Texas Social Behavior Inventory (Helmreich & Stapp, 1974) measuring social self-esteem. Following previous experiments (e.g., Hixon & Swann, 1993; Seih et al., 2013; Swann et al., 1987, 1990, 1992), we focused on more specific instead of general self-views and measured social self-esteem. We recruited participants via advertisements in local portals. We invited adults between 18 and 40 years old who spoke fluent Polish (the language of the study).

In total, 614 participants (476 women, 136 men; two did not indicate their gender) between 18 and 40 years old (M = 23.45, SD = 4.26) volunteered to participate in the study. Of these participants, the 25% with the highest scores (positive self-view) and lowest scores (negative self-view) were invited to sign up for the actual study. Ultimately, 96 participants with high social self-esteem and 94 participants with low social self-esteem participated (see next section for details). There were 159 women and 30 men (one participant did not indicate gender) between 18 and 38 years old (M = 23.58, SD = 4.48). For final analyses, we excluded cases with incomplete data on crucial variables (n = 7) and participants who guessed the purpose of the study (n = 11; see the Materials section). Thus, the final sample comprised 172 participants (145 women) with a mean age of 23.60 years (SD = 4.40).

Participants were compensated 20 Polish zlotys (equivalent to $5 U.S.) for participation in the study. All provided written consent. The study was approved by the local research ethics committee.

Materials

Screening survey

To recruit participants with positive and negative self-views on their social abilities, we ran a short online survey in which we asked those who wanted to participate to answer a set of questions about themselves. The questions were taken from the short version of the Texas Social Behavior Inventory (Helmreich & Stapp, 1974; based on Helmreich et al., 1974), often used in other self-verification experiments (e.g., Hixon & Swann, 1993; Seih et al., 2013; Swann et al., 1987, 1990, 1992). The scale consists of 16 items, such as, “I have no doubts about my social competence” or “I am not likely to speak to people until they speak to me” (reverse scored). For each item, the response alternatives range from 0 (not at all characteristic of me) to 4 (very characteristic of me). Responses to all items are averaged, and the average is used as a general score of social self-esteem (the higher the score, the higher the social self-esteem). In our screening sample, the scores ranged from 0.06 to 4.00 (participants with high social self-esteem: M = 2.94, SD = 0.27; participants with low social self-esteem: M = 1.19, SD = 0.34; Cronbach’s α = .87). The two groups significantly differed in their levels of social self-esteem, t(179.07) = 39.13, p < .001.
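The scoring and preselection steps can be illustrated with a short Python sketch. It is only an illustration under stated assumptions, not the authors' scoring script: the function names, the positions of the reverse-scored items, and the handling of ties at the quartile boundaries are hypothetical. The source specifies only the 0 to 4 response range, the averaging across 16 items, and the 25% cutoffs.

```python
from statistics import mean

SCALE_MAX = 4  # responses range from 0 to 4 (from the text)


def score_tsbi(responses, reverse_items):
    """Average the item responses after reverse-scoring the flagged items.

    `reverse_items` holds 0-based item positions; which items are actually
    reverse scored is NOT given in the text, so this argument is an assumption.
    """
    scored = [
        SCALE_MAX - r if i in reverse_items else r
        for i, r in enumerate(responses)
    ]
    return mean(scored)


def select_extreme_quartiles(scores):
    """Return (low_ids, high_ids): the bottom and top 25% of respondents.

    `scores` maps a respondent id to a mean score. Ties at the cutoff are
    broken by sort order, which is an assumption of this sketch.
    """
    ranked = sorted(scores, key=scores.get)
    k = len(ranked) // 4
    return ranked[:k], ranked[len(ranked) - k:]
```

For example, a respondent who answers 4 to all 16 items, one of which is reverse scored, receives a mean of 3.75 rather than 4.00.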

Online questionnaires

Before coming to the laboratory, participants were asked to fill out a set of online questionnaires. These measures were used to make the cover story and the feedback in the lab more believable. Because we focused on social self-esteem, in the survey, we included items from questionnaires such as the Test of Emotional Intelligence (Śmieja et al., 2014), the Rosenberg Self-Esteem Scale (Rosenberg, 1965), and the Self-Attributes Questionnaire (Pelham & Swann, 1989) to create a set of items that would make a plausible base for creating participants’ social profiles.

Feedback

In the laboratory, participants performed a feedback-selection task (e.g., Giesler et al., 1996). Specifically, they were asked to read summaries of two profiles that ostensibly had been prepared by two independent evaluators on the basis of responses to the online questionnaires. In fact, the two profiles were fictitious, and all participants read the same descriptions regardless of their answers on the questionnaires. The profiles pertained to social skills and were said to summarize longer, in-depth descriptions. One was moderately positive and described a person who is socially skilled and well-adjusted. The other was moderately negative and described someone who is “sufficiently socially adjusted” and not very socially skilled (see Swann et al., 1987). Short descriptions were accompanied by numerical scores assigned to three socially relevant abilities: social competence, emotional intelligence, and social adjustment. On a scale ranging from 0 to 10, the scores ranged from 8 to 9 for the positive feedback and from 4 to 5 for the negative feedback. Participants were told that the profiles had been prepared according to a template, so that they would not doubt the veridicality of the opinions because of their similar structure (such similarity was essential for our research purposes, because we intended only the favorability of the opinions to differ). The summaries are presented in Supplementary Materials A (available at https://osf.io/xertg/).

After reading each summary, participants were asked to rate (a) its favorability, or positivity, on a scale from 1 (definitely negative) to 11 (definitely positive); (b) its accuracy, on a scale from 1 (definitely inaccurate) to 11 (definitely accurate); and (c) to what extent it was consistent with what they thought of themselves, on a scale from 1 (definitely inconsistent) to 11 (definitely consistent). They were also asked to rate the competence of the source of the feedback, from 1 (definitely incompetent) to 11 (definitely competent), and to what extent they wanted to read the full-length version of the given description, from 1 (definitely no) to 11 (definitely yes). Finally, participants indicated which of the two descriptions they wanted to read in full (they selected either positive or negative feedback). The last two variables, willingness to read the description in full length (continuous variable for both positive and negative feedback) and selection of feedback (categorical variable), were our dependent variables. The other four measures served as manipulation checks. For economy of presentation, the results for manipulation checks are presented in Supplementary Materials B (available at https://osf.io/4trmw/).

Source-credibility manipulation

Additionally, we manipulated the credibility of the feedback source. In the control condition, participants were led to believe that both types of feedback were prepared by psychology students (as in other similar experiments; see Giesler et al., 1996; Hixon & Swann, 1993). Specifically, they read,

“Both opinions were prepared by second-year psychology students, Tomasz Kaminski and Jacek Kaczmarek, who are learning how to interpret personality tests and draw conclusions from questionnaire data. They have not prepared profiles in previous studies, so we do not have information on their accuracy yet.”

In the experimental (high-credibility) condition, the self-verifying opinion (positive for participants with positive self-views and negative for participants with negative self-views) was also said to have been prepared by a psychology student (Tomasz Kaminski, described in the same manner as in the control condition). However, the self-discrepant opinion (negative for participants with positive self-views and positive for participants with negative self-views) was said to have been prepared by an experienced psychologist. Specifically, participants read that the self-discrepant opinion had been prepared by Dr. Jacek Kaczmarek, “an experienced clinical and personality psychologist who is an expert in interpreting personality tests and drawing conclusions from questionnaire data.” They were also told that his former opinions showed that, on the basis of personality tests, he could judge a person’s character very well and predict their behavior in a variety of social situations—even if those opinions seemed surprising at first glance. The psychologist was thus presented as higher in expertise and experience, and hence able to deliver more accurate feedback, than a student.

We assumed that manipulating the source of self-discrepant feedback (rather than self-verifying feedback, or both) would be crucial for testing our hypothesis. In the experimental condition, participants were forced to choose between self-verification and accuracy (or credibility): Self-verifying feedback is not credible, and credible feedback is nonverifying. Therefore, this condition allowed us to identify whether the desire for self-verification or accuracy primarily drives feedback choice. This would not be possible in a condition in which both opinions come from sources of the same (high or low) credibility or when self-verifying feedback is provided by a high-credibility source and self-discrepant feedback is provided by a low-credibility source.

Procedure

The study consisted of three steps. The first was the screening survey, which we used to recruit participants with positive and negative self-views. In the second step, preselected participants were asked to fill in a set of questionnaires in which they were asked questions about their self-views, social skills, and personality in general. This was done to make the feedback provided more believable. The questionnaires were administered online, and participants needed to complete them at least 1 day before coming to the laboratory.

On arrival, participants were given a cover story stating that they would be asked to read two profiles that had been prepared on the basis of their answers to the online questionnaires. They were told that this was being done to prepare materials for another study that would soon be run under the same project.

The study was run in sessions containing one to six participants. Each participant was in a separate cubicle and was unable to communicate with others. The experimenter, blind to participants’ self-concept and to the study hypotheses, handed the profiles to each participant individually in an envelope marked with the nickname the participant was using in the study (the same nickname as in the online part). This was done to make participants believe that the opinions were indeed personalized. Participants read two summaries: one positive, one negative. The order of the positive and negative summaries was counterbalanced. Half the participants were assigned to the control condition and half to the experimental condition. The assignment was random within positive- and negative-self-view groups (to make sure the groups were equally represented within conditions). The order of the feedback from the student and the psychologist (in the experimental condition) was also counterbalanced.
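Random assignment within self-view groups, as described above, can be sketched as follows. This is a hypothetical illustration rather than the procedure the authors actually used: the function name, the fixed seed, and the rule that an odd group sends its extra member to the experimental condition are all assumptions. Counterbalancing of presentation order is not modeled here.

```python
import random


def assign_conditions(participants_by_group, seed=0):
    """Randomly split each self-view group in half across the two conditions.

    `participants_by_group` maps a group label (e.g., "positive") to a list
    of participant ids. The seed is fixed only to make the sketch reproducible.
    """
    rng = random.Random(seed)
    assignment = {}
    for ids in participants_by_group.values():
        shuffled = list(ids)
        rng.shuffle(shuffled)
        half = len(shuffled) // 2
        for pid in shuffled[:half]:
            assignment[pid] = "control"
        # With an odd group size, the extra participant lands here (assumption).
        for pid in shuffled[half:]:
            assignment[pid] = "experimental"
    return assignment
```

Splitting within each group, rather than across the whole sample, is what guarantees that both self-view groups are equally represented in each condition.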

After reading each opinion, participants evaluated it by responding to a set of questions. Then they indicated which of the two profiles they would like to read in full after the actual study had ended. Participants marked their answers on the sheet provided and placed it back in the envelope. Then they performed a set of filler tasks (presented as the actual study). At the end, they read the debriefing script and answered questions about the purpose of the study. They were then thanked and compensated for participation.

After completing the filler tasks, participants answered an open-ended question asking what they thought the purpose of the study was. They typed their answer to this question and, having done that, read an extensive debriefing explaining the purpose of the study and the manipulations used. After familiarizing themselves with the explanations, participants were asked to confirm that they understood that the feedback was false. They were also asked whether they had guessed that the feedback was false. They responded by selecting one of the following options: (a) “Yes, I was sure of that [feedback was fabricated] when I was reading it,” (b) “Yes, I did not know that when reading the feedback but I guessed later,” (c) “No,” and (d) “I did not think about that during the study.” Those who selected response (a) and who provided a response to the open-ended question suggesting that they might have guessed the study’s purpose (or the crucial manipulations used) were excluded from further analyses.

Results

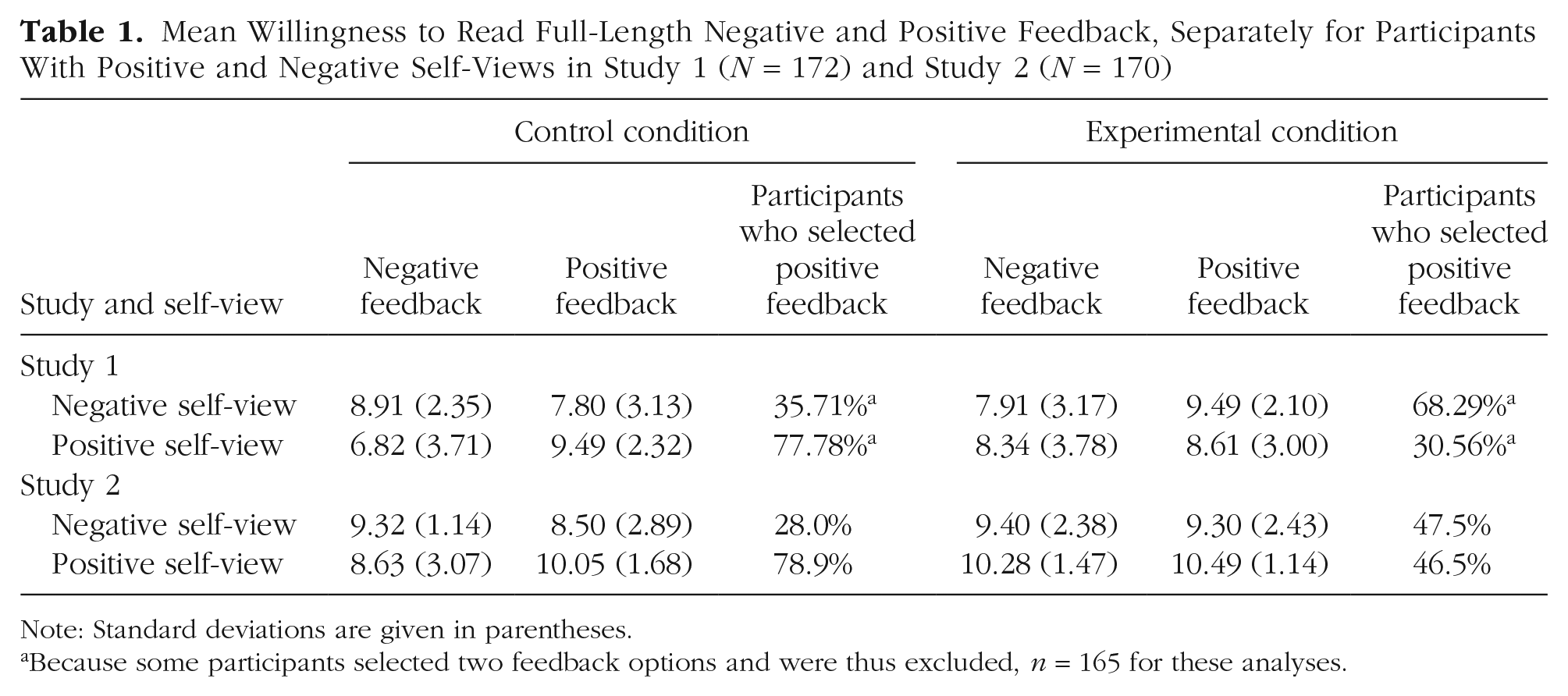

In line with the self-verification paradigm, our variables of interest were the ones indicating preference for self-verifying versus self-discrepant feedback. In our study, participants expressed this preference in two ways: by rating the extent to which they wanted to read the positive and negative feedback and by selecting one of the two types of feedback. Table 1 presents participants’ ratings of their willingness to read each type of feedback in full.

Table 1. Mean Willingness to Read Full-Length Negative and Positive Feedback, Separately for Participants With Positive and Negative Self-Views in Study 1 (N = 172) and Study 2 (N = 170)

Note: Standard deviations are given in parentheses.

Because some participants selected two feedback options and were thus excluded, n = 165 for these analyses.

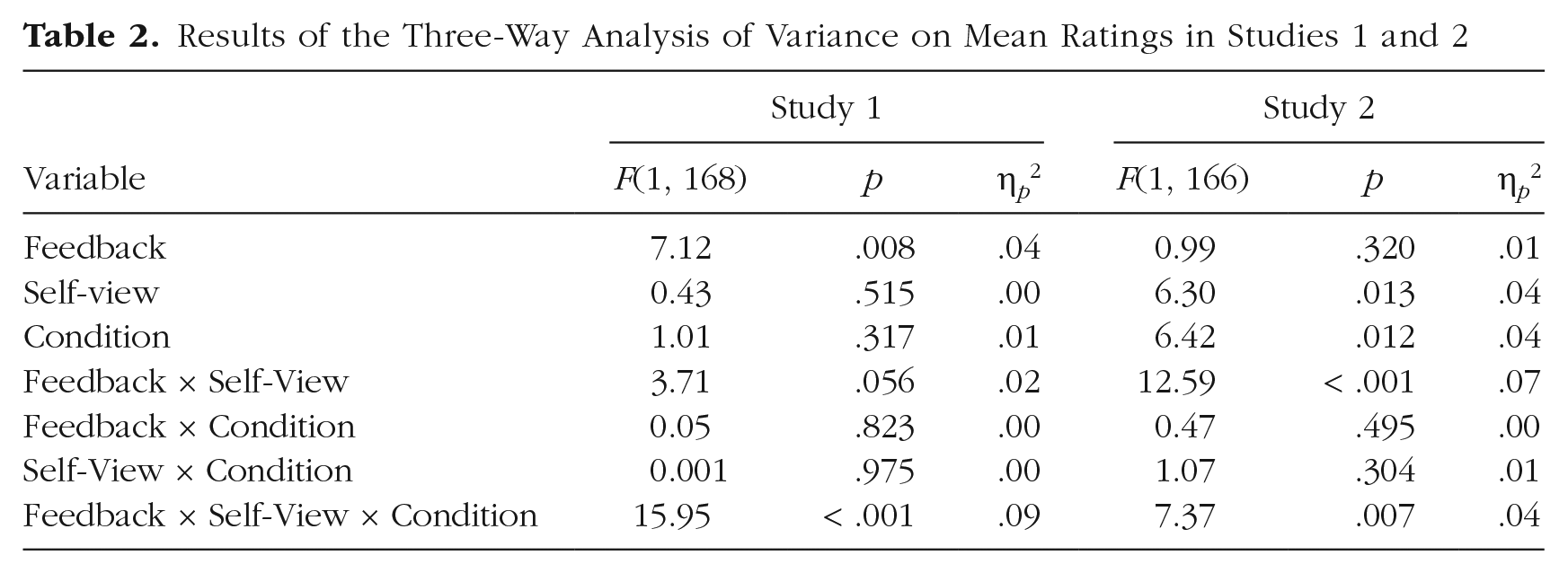

These ratings were entered in a 2 (feedback: negative vs. positive) × 2 (self-view: negative vs. positive) × 2 (condition: control vs. experimental) mixed ANOVA in which we expected a three-way interaction indicating a self-verification effect (i.e., participants with positive self-views would prefer positive feedback, and participants with negative self-views would prefer negative feedback), but only in the control condition. In the experimental, or high-credibility, condition, we expected no preference for self-verifying feedback.

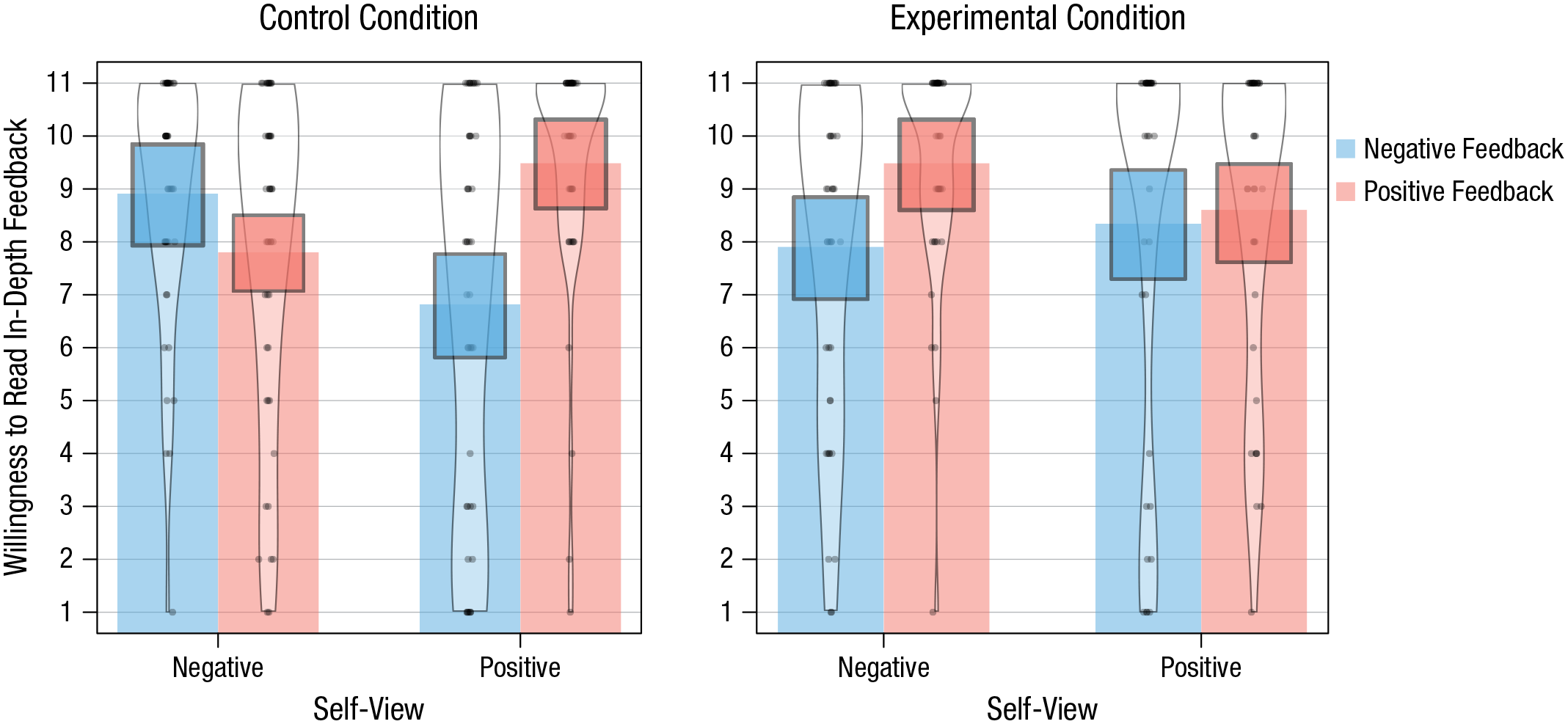

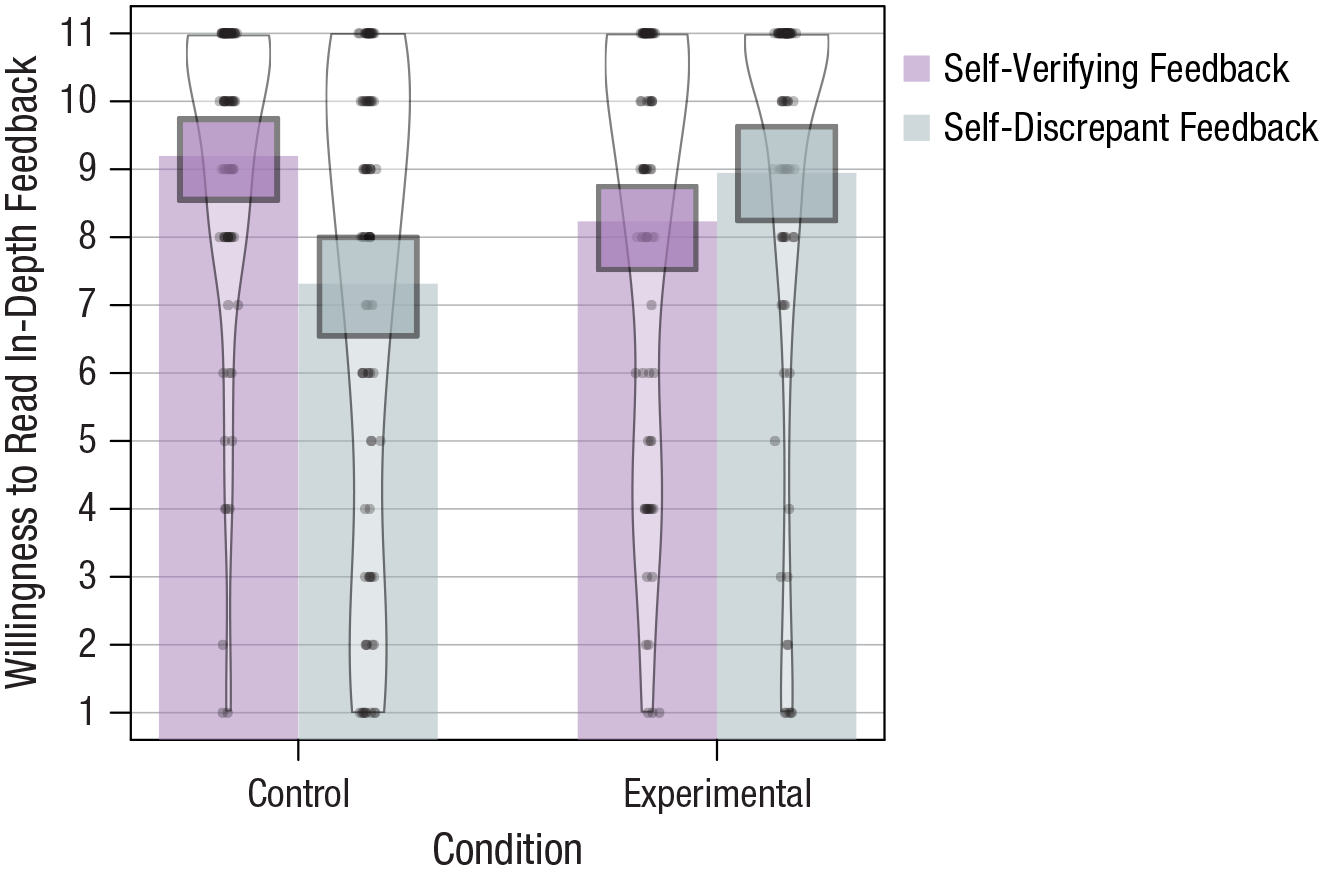

The results supported our predictions: There was a significant Feedback × Self-View × Condition interaction (see Table 2). In line with expectations, results showed the self-verification effect only in the control condition. Indeed, in the control condition, participants with positive self-views preferred positive over negative feedback, F(1, 168) = 18.40, p < .001, $\eta_p^2$ = .10, whereas participants with negative self-views tended to prefer negative over positive feedback, F(1, 168) = 3.25, p = .073, $\eta_p^2$ = .02 (all pairwise comparisons were Bonferroni corrected). This was not true in the experimental condition, in which participants with positive self-views were equally willing to read positive and negative feedback, F < 1. Interestingly, in this condition, participants with negative self-views were more willing to read positive (i.e., self-discrepant) than negative feedback, F(1, 168) = 6.18, p = .014, $\eta_p^2$ = .04. Thus, these participants preferred self-discrepant over self-verifying feedback when that feedback came from a highly credible source. Figure 1 presents these results graphically.

Table 2. Results of the Three-Way Analysis of Variance on Mean Ratings in Studies 1 and 2

Figure 1. Participants’ preference for positive and negative feedback depending on their self-views, separately for the control and experimental conditions in Study 1. Points represent raw data (jittered horizontally), bars represent means, beans represent smoothed densities, and rectangles represent 95% confidence intervals around the means (to properly adjust for within-subject comparisons, we estimated the confidence intervals in SPSS). This pirate plot was created using the yarrr package (Version 0.1.5; Phillips, 2017) for R (Version 4.0.3; R Core Team, 2020).

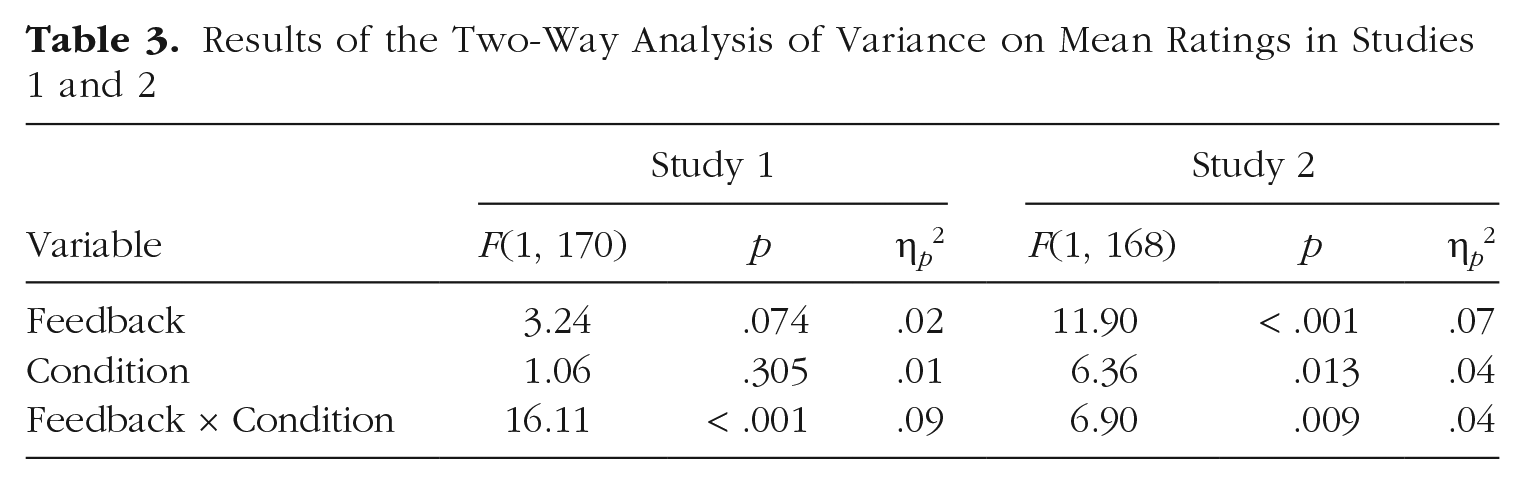

Our main finding—that the preference for self-verifying feedback was present in the control condition but disappeared in the experimental condition—emerged more clearly after we recoded the positive and negative feedback into self-verifying and self-discrepant feedback: Positive feedback was coded as self-verifying for participants with positive self-views and self-discrepant for participants with negative self-views, and negative feedback was coded as self-verifying for participants with negative self-views and self-discrepant for participants with positive self-views. By doing so, we omitted self-view in our analysis and ran a 2 (feedback: self-discrepant vs. self-verifying) × 2 (condition: control vs. experimental) mixed ANOVA. This analysis, although not preregistered, was equivalent to our above analysis and allows us to present the findings in a more straightforward manner.
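The recoding described above amounts to a simple relabeling of each participant's two ratings. The following Python sketch illustrates the mapping; the function name, argument names, and dictionary keys are hypothetical and are not taken from the authors' analysis scripts.

```python
def recode_ratings(self_view, positive_rating, negative_rating):
    """Relabel ratings of positive/negative feedback as verifying/discrepant.

    Positive feedback is self-verifying for participants with positive
    self-views; negative feedback is self-verifying for participants with
    negative self-views.
    """
    if self_view == "positive":
        return {
            "self_verifying": positive_rating,
            "self_discrepant": negative_rating,
        }
    if self_view == "negative":
        return {
            "self_verifying": negative_rating,
            "self_discrepant": positive_rating,
        }
    raise ValueError(f"unknown self-view: {self_view!r}")
```

Because the relabeling depends only on the participant's self-view, the self-view factor can be dropped from the design after recoding, which is why the three-way interaction reduces to a two-way Feedback × Condition interaction.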

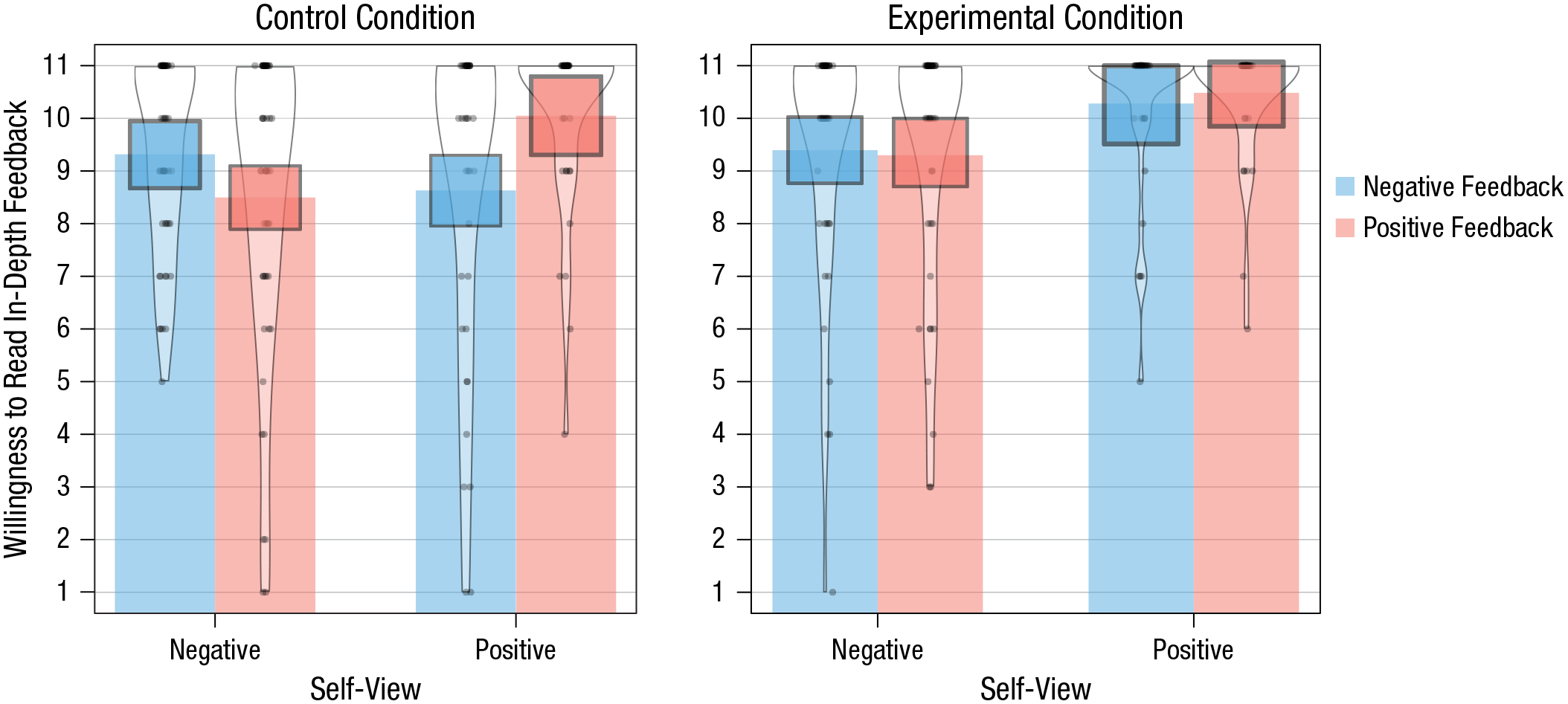

The results of this analysis yielded a significant two-way interaction (see Table 3). The interaction indicates that there was a significant difference between participants’ willingness to read both feedback types in the control condition, F(1, 170) = 17.93, p < .001, $\eta_p^2$ = .10; specifically, participants preferred self-verifying over self-discrepant feedback. This preference, however, disappeared in the experimental condition, F(1, 170) = 2.32, p = .130, $\eta_p^2$ = .01. The results are in line with our main prediction and are presented graphically in Figure 2.
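As a reading aid, the partial eta-squared values reported for these comparisons can be recovered from the F statistics and their degrees of freedom through the standard identity $\eta_p^2 = F \cdot df_{effect} / (F \cdot df_{effect} + df_{error})$. The Python sketch below applies that identity to the reported values. The function name is hypothetical, and the snippet only checks consistency of the reported numbers; it does not reproduce the authors' analysis.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared computed from an F statistic and its dfs."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# Reported simple effects from the two-way analysis, F(1, 170).
control = partial_eta_squared(17.93, 1, 170)
experimental = partial_eta_squared(2.32, 1, 170)

print(round(control, 2), round(experimental, 2))
```

The same function applied to the three-way simple effects, F(1, 168) = 18.40, 3.25, and 6.18, gives values that round to .10, .02, and .04, matching the text.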

Table 3. Results of the Two-Way Analysis of Variance on Mean Ratings in Studies 1 and 2

Figure 2. Participants’ preference for self-verifying and self-discrepant feedback, separately for the control and experimental conditions in Study 1. Points represent raw data (jittered horizontally), bars represent means, beans represent smoothed densities, and rectangles represent 95% confidence intervals around the means (to properly adjust for within-subject comparisons, we estimated the confidence intervals in SPSS). This pirate plot was created using the yarrr package (Version 0.1.5; Phillips, 2017) for R (Version 4.0.3; R Core Team, 2020).

Following our preregistration protocol, we also analyzed participants’ feedback choices. The results of the logistic regression analysis aligned with the results for the willingness variable (see Supplementary Materials B). Specifically, we found the self-verification effect in the control condition: Participants with positive self-views were significantly more likely to select positive feedback than participants with negative self-views were. However, this tendency was reversed in the experimental condition. This time, participants with negative self-views were significantly more likely to choose positive feedback than participants with positive self-views were.

We reasoned that if the self-verification effect occurs because people doubt the credibility of self-discrepant feedback, the effect should disappear when the credibility of that feedback is experimentally increased. The results showed that this was indeed the case. Specifically, participants in the control condition preferred self-verifying over self-discrepant feedback, which replicates the findings of many studies demonstrating the self-verification effect (see Kwang & Swann, 2010; Swann, 2012). The same, however, was not true in the experimental condition, in which the self-discrepant feedback came from a highly credible source. In this condition, participants with positive self-views were equally willing to read positive and negative information about themselves, whereas participants with negative self-views were more willing to read positive (i.e., self-discrepant) information.

We obtained similar results when we used a different manipulation of source credibility. In Study 1b (preregistered at https://aspredicted.org/5xe8i.pdf), we manipulated credibility by informing participants about the evaluator’s accuracy in previous tests: moderate (45–50%) in the control condition and high (85–90%) in the experimental condition. Similar to the findings of Study 1a, the results of Study 1b showed that there was a significant preference for self-verifying feedback in the control condition and no such preference in the experimental condition. Study 1b is presented in Supplementary Materials D (available at https://osf.io/f8qdg/).

The results were thus in line with our expectations and show that the tendency to seek self-verifying information may not be universal and may stem, at least in part, from self-discrepant information being seen as less accurate.

Study 2

The main aim of this preregistered study (https://aspredicted.org/ak97v.pdf) was to replicate the findings of Study 1a. Our secondary goal was to test whether the self-verification effect is conditional on epistemic authority ascribed to the self and the source of self-discrepant feedback (in our case, a psychologist). Therefore, we measured individual differences in epistemic authority ascribed to the two sources and expected the self-verification effect to be enhanced when people trusted themselves to be a good source of information about themselves (i.e., at high but not low levels of self-epistemic authority). On the other hand, we expected the self-verification effect to be weak or disappear at high (but not low) levels of epistemic authority ascribed to the source of the self-discrepant opinion.

Method

Participants

Participants were recruited through an independent research platform. Following previous experiments, we administered a screening survey with the Texas Social Behavior Inventory (Helmreich & Stapp, 1974), which was completed by 1,266 participants (627 women with a mean age of 35.45 years, SD = 12.13). Of these participants, the 25% with the highest and lowest scores were invited to participate in the actual study. As a result, 450 participants (248 women) between 14 and 40 years old (M = 30.22, SD = 6.42) responded to questionnaires from the first stage of the study. From this sample, 94 participants with high social self-esteem (positive-self-view group; M = 2.75, SD = 0.29) and 111 participants with low social self-esteem (negative-self-view group; M = 1.24, SD = 0.32) took part in the actual study—the feedback-selection task. The two groups significantly differed in their levels of social self-esteem, t(202.34) = 35.30, p < .001.

For final analyses, we excluded participants who had not indicated that they responded to the questionnaires seriously or who had guessed the purpose of the study (n = 6). We also introduced additional exclusion criteria that we had not foreseen in the initial preregistration form. It turned out that five participants under 18 took part in the study; therefore, we excluded them. Additionally, there were 24 participants with very unusual times for reading the feedback summaries. We decided to exclude data from everyone who spent less than 4 s or more than 15 min reading the feedback, assuming that this was an indicator that they did not focus on the content of the study or were distracted with other activities. The final sample comprised 170 participants (95 women) between 18 and 40 years old (M = 30.52, SD = 6.24).
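The exclusion rules listed above can be expressed as a single filter. The sketch below is a hypothetical illustration: the record fields (`age`, `serious`, `guessed_purpose`, `reading_times`) are invented for the example, and applying the time limits to each feedback summary separately, rather than to the total reading time, is an assumption that the text does not settle.

```python
MIN_SECONDS = 4        # faster than this: excluded (from the text)
MAX_SECONDS = 15 * 60  # slower than 15 min: excluded (from the text)


def has_usable_reading_time(seconds):
    """True if a reading time falls within the accepted window."""
    return MIN_SECONDS <= seconds <= MAX_SECONDS


def apply_exclusions(participants):
    """Keep only participants who pass every exclusion criterion.

    Each participant is a dict with hypothetical keys: 'age', 'serious',
    'guessed_purpose', and 'reading_times' (seconds per feedback summary).
    """
    return [
        p for p in participants
        if p["age"] >= 18
        and p["serious"]
        and not p["guessed_purpose"]
        and all(has_usable_reading_time(t) for t in p["reading_times"])
    ]
```

Note that a reading time of exactly 4 s or exactly 15 min is retained here, because the text excludes only times below or above those limits.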

Participants were compensated for their participation at the panel's rates. All provided informed consent. The study was approved by the local research ethics committee.

Materials

Feedback

Each participant read two types of feedback: positive and negative. Wording of the feedback was the same as in Study 1a; this time, however, the feedback was presented online.

Questionnaires

Before the main part of the study, which was the feedback-selection task, we asked participants to fill out a set of personality questionnaires similar to the ones used in Study 1. Additionally, there were three open-ended questions in which we presented scenarios describing challenging social situations (modified from the Test of Emotional Intelligence; Śmieja et al., 2014) and asked participants what the actors should do. The questionnaires were selected to make participants believe that their social abilities were measured. Only those who filled out the questionnaires were invited to participate in the next part of the study.

Epistemic-authority measures

We measured the epistemic authority one ascribed to the self and to others (i.e., psychologists). Because the feedback in our study pertained to social self-esteem, we measured epistemic authority in the social domain. To measure self-epistemic authority, we used eight items (e.g., “I have confidence in myself when it comes to assessing my ability to interact with others,” “I can accurately assess my social skills”; Cronbach’s α = .90), and to measure epistemic authority ascribed to psychologists, we used 11 items (e.g., “I would have confidence in a psychologist when it comes to assessing my skills in dealing with others,” “I usually see psychologists as experts on people’s social abilities and relationships”; Cronbach’s α = .94). The items were based on the Epistemic Authority Scale (Raviv et al., 1993) and were selected from a larger pool (see Supplementary Materials E, available at https://osf.io/d45pk/). Responses were given on a scale ranging from 1 (definitely disagree) to 6 (definitely agree). The scores for both scales were calculated by averaging responses to respective items. In both cases, the higher the score, the greater the epistemic authority.
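
The scoring described above (averaging item responses) and the reliability coefficient can be illustrated as follows. This is a minimal sketch using the standard formula for Cronbach's alpha; the response data are invented for illustration and are not the study's data, and the function names are hypothetical.

```python
from statistics import mean, variance


def scale_score(responses):
    """Scale score for one participant: the mean of their item responses (1-6)."""
    return mean(responses)


def cronbach_alpha(item_matrix):
    """Cronbach's alpha; rows are participants, columns are items."""
    k = len(item_matrix[0])
    item_variances = [variance(column) for column in zip(*item_matrix)]
    total_variance = variance([sum(row) for row in item_matrix])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Invented responses of five participants to a three-item scale.
toy_responses = [
    [5, 6, 5],
    [2, 3, 2],
    [4, 4, 5],
    [1, 2, 1],
    [6, 5, 6],
]
print([round(scale_score(row), 2) for row in toy_responses])
print(round(cronbach_alpha(toy_responses), 2))
```

Higher scale scores indicate greater epistemic authority, as in the text; alpha approaches 1 as the items covary more strongly.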

Procedure

The study was run online, in Polish, and consisted of three steps: screening, questionnaires for the cover story, and the feedback-selection task. Only preselected participants were invited to fill in a set of questionnaires, including the epistemic-authority measures. They were told that the study was investigating the ability to draw inferences from personality questionnaires; therefore, relying on their responses to the questionnaires, two independent evaluators would prepare their profiles. Participants were instructed that they would be asked to rate each of these profiles in the next survey. Indeed, a few days later, participants received a link to the final part of the study—the feedback-selection task.

The task was presented in such a way as to make participants believe that the opinions had been individualized (e.g., a person entered a unique participant code to display the opinions). We also explained to participants that the opinions had been created according to a template we had provided the evaluators. The template consisted of 1- to 10-point scales on three dimensions (social competence, emotional intelligence, and social adjustment), followed by a description of a person (similar to the procedure in Study 1a).

Each participant read two opinions: one positive, one negative. Additionally, source credibility was manipulated. Participants in the control condition read two opinions from a psychology student. Participants in the experimental condition read a self-verifying opinion from a student and a self-discrepant opinion from an experienced clinical and personality psychologist (descriptions were the same as in Study 1a). Within the positive-self-view and negative-self-view groups, each participant was randomly assigned to either the control or the experimental condition. The order of the positive and negative feedback, as well as the feedback coming from a student and a psychologist (in the experimental condition), was counterbalanced. Information about the evaluator was presented on one screen, followed by the feedback on another screen and questions about the feedback on yet another screen. This was done to ensure that we correctly measured the time taken to read the feedback.
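
The assignment logic described above can be sketched as follows. The text states only that assignment was random within self-view groups and that order was counterbalanced; cycling shuffled participants through the four condition-by-order cells is an assumption made here to keep the cells balanced, and all names are hypothetical.

```python
import itertools
import random

CONDITIONS = ("control", "experimental")
ORDERS = (("positive", "negative"), ("negative", "positive"))


def source_for(valence, self_view, condition):
    """A student writes every opinion except the self-discrepant one in the experimental condition."""
    self_verifying = valence == self_view
    if condition == "experimental" and not self_verifying:
        return "psychologist"
    return "student"


def assign(participants, seed=0):
    """participants maps an id to a self-view ('positive' or 'negative')."""
    rng = random.Random(seed)
    plan = {}
    for view in ("positive", "negative"):
        ids = [pid for pid, v in participants.items() if v == view]
        rng.shuffle(ids)  # random order within the self-view group
        cells = itertools.cycle(itertools.product(CONDITIONS, ORDERS))
        for pid, (condition, order) in zip(ids, cells):
            plan[pid] = {
                "self_view": view,
                "condition": condition,
                "opinions": [(val, source_for(val, view, condition)) for val in order],
            }
    return plan


demo = assign({"p1": "positive", "p2": "positive", "n1": "negative", "n2": "negative"})
for pid, info in sorted(demo.items()):
    print(pid, info["condition"], info["opinions"])
```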

Participants familiarized themselves with the two types of feedback, answered the same set of questions as in Study 1a, and selected which in-depth feedback they wanted to read. The time taken to read each instance of feedback was recorded (for more information, see Supplementary Materials B, available at https://osf.io/4trmw/). Finally, they read the debriefing and answered the questions about their seriousness in responding and about guessing the purpose of the study.

Results

To test our main hypotheses, we followed the same analysis plan as in the previous study. Therefore, we analyzed the willingness to read each type of in-depth feedback and feedback selection.

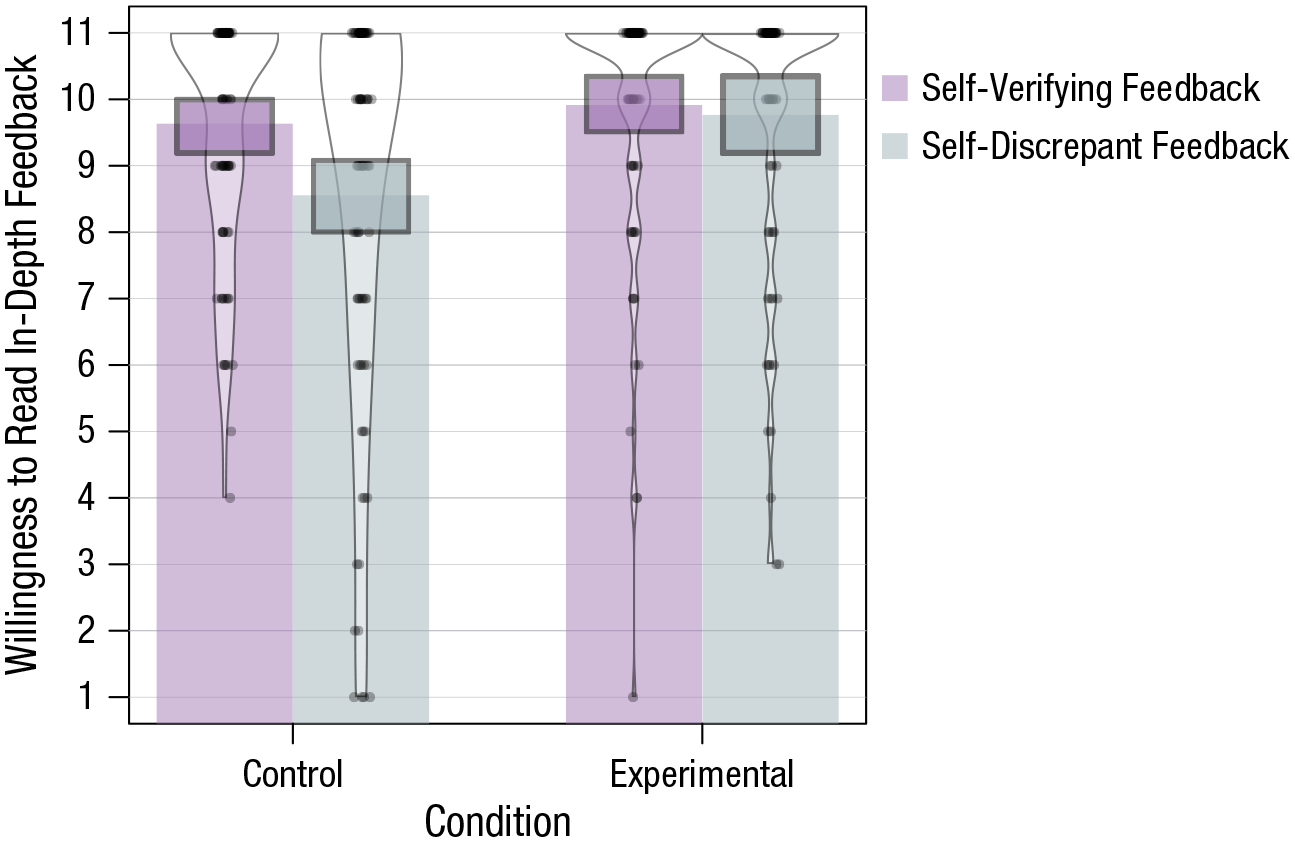

Willingness to read in-depth feedback

Table 1 presents participants’ ratings of their willingness to read each type of feedback in full. We entered these ratings in a 2 (feedback: negative vs. positive) × 2 (self-view: negative vs. positive) × 2 (condition: control vs. experimental) mixed ANOVA. This time, too, we expected a three-way interaction indicating a self-verification effect, but only in the control condition. Indeed, this is what we found, as in the previous study (see Table 2). Bonferroni-corrected pairwise comparisons revealed that in the control condition, participants with positive self-views preferred positive over negative feedback, F(1, 166) = 14.27, p < .001, ηp² = .08, whereas participants with negative self-views preferred negative over positive feedback, F(1, 166) = 6.25, p = .013, ηp² = .04. This effect did not emerge in the experimental condition, in which both participants with positive and negative self-views were willing to read positive feedback to the same extent as negative feedback, Fs < 1. Thus, there was no preference for self-consistent feedback in any of the groups. The results are presented graphically in Figure 3.
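
As a quick consistency check, the reported effect sizes can be recovered from the F statistics with the standard identity: partial eta squared = F × df_effect / (F × df_effect + df_error). The sketch below applies it to the two pairwise comparisons in the control condition; the function name is illustrative.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared recovered from an F statistic and its degrees of freedom."""
    return f_value * df_effect / (f_value * df_effect + df_error)


# Pairwise comparisons in the control condition, both with F(1, 166).
print(round(partial_eta_squared(14.27, 1, 166), 2))  # 0.08, positive self-views
print(round(partial_eta_squared(6.25, 1, 166), 2))   # 0.04, negative self-views
```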

Figure 3. Participants’ preference for positive and negative feedback depending on their self-views, separately for the control and experimental conditions in Study 2. Points represent raw data (jittered horizontally), bars represent means, beans represent smoothed densities, and rectangles represent 95% confidence intervals around the means (to properly adjust for within-subject comparisons, we estimated the confidence intervals in SPSS). This pirate plot was created using the yarrr package (Version 0.1.5; Phillips, 2017) for R (Version 4.0.3; R Core Team, 2020).

Following the same procedure as in Study 1, we also recoded positive and negative feedback into self-verifying and self-discrepant feedback and ran a 2 (feedback: self-discrepant vs. self-verifying) × 2 (condition: control vs. experimental) mixed ANOVA. The results showed a significant two-way interaction (see Table 3) indicating that participants in the control condition preferred self-verifying feedback over self-discrepant feedback, F(1, 168) = 19.13, p < .001, ηp² = .10, whereas participants in the experimental condition were equally willing to consider the two types of feedback, F(1, 168) = 0.33, p = .568, ηp² = .002. These results are in line with our main prediction and are presented graphically in Figure 4.

Figure 4. Participants’ preference for self-verifying and self-discrepant feedback, separately for the control and experimental conditions in Study 2. Points represent raw data (jittered horizontally), bars represent means, beans represent smoothed densities, and rectangles represent 95% confidence intervals around the means (to properly adjust for within-subject comparisons, we estimated the confidence intervals in SPSS). This pirate plot was created using the yarrr package (Version 0.1.5; Phillips, 2017) for R (Version 4.0.3; R Core Team, 2020).

We obtained similar results when we analyzed participants’ feedback choices. Specifically, we found the self-verification effect in the control condition: Participants with positive self-views were significantly more likely to select positive over negative feedback. In the experimental condition, however, participants were equally likely to choose both feedback types. We present the analyses and the results in Supplementary Materials B, available at https://osf.io/4trmw/.

Effects of epistemic authority

Next, we tested whether individual differences in self-epistemic authority and in the epistemic authority ascribed to a psychologist matter. We expected the self-verification effect to be the most pronounced when participants ascribed themselves high epistemic authority (i.e., when they trusted themselves), but not when they ascribed themselves low epistemic authority. By contrast, the self-verification effect should be weaker when participants ascribe high epistemic authority to the source of self-discrepant feedback (a psychologist) but stronger when they ascribe low epistemic authority to this source. We thus expected the self-verification effect to be conditional on epistemic authority. Because a psychologist was introduced only in the experimental condition, we expected epistemic authority ascribed to a psychologist to matter only in the experimental condition and self-epistemic authority to matter only in the control condition. Analyses with epistemic authority were our secondary preregistered analyses (our study design and power calculations were tailored to the primary analyses: Feedback × Self-View × Condition interaction). Therefore, the results we present in the following two sections are preliminary, and more studies designed specifically for testing these effects are needed.

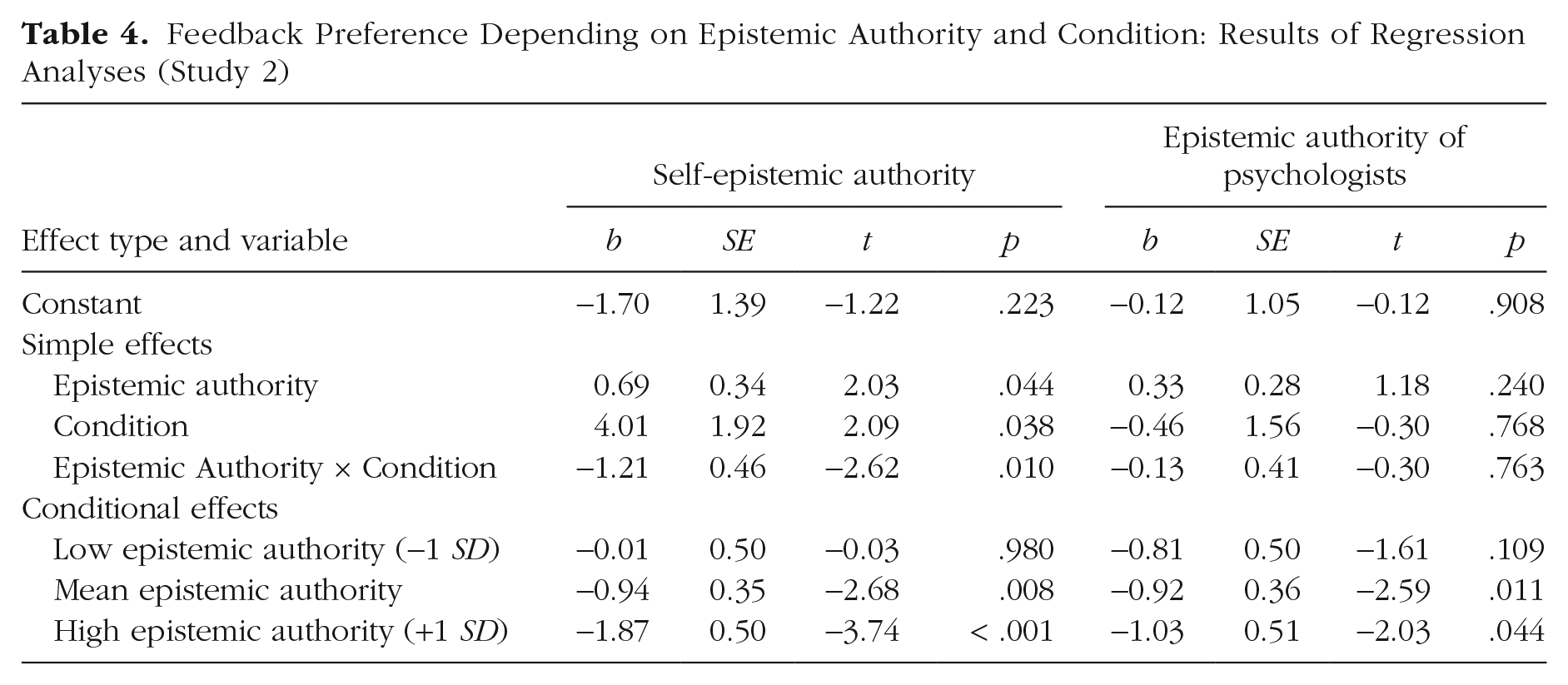

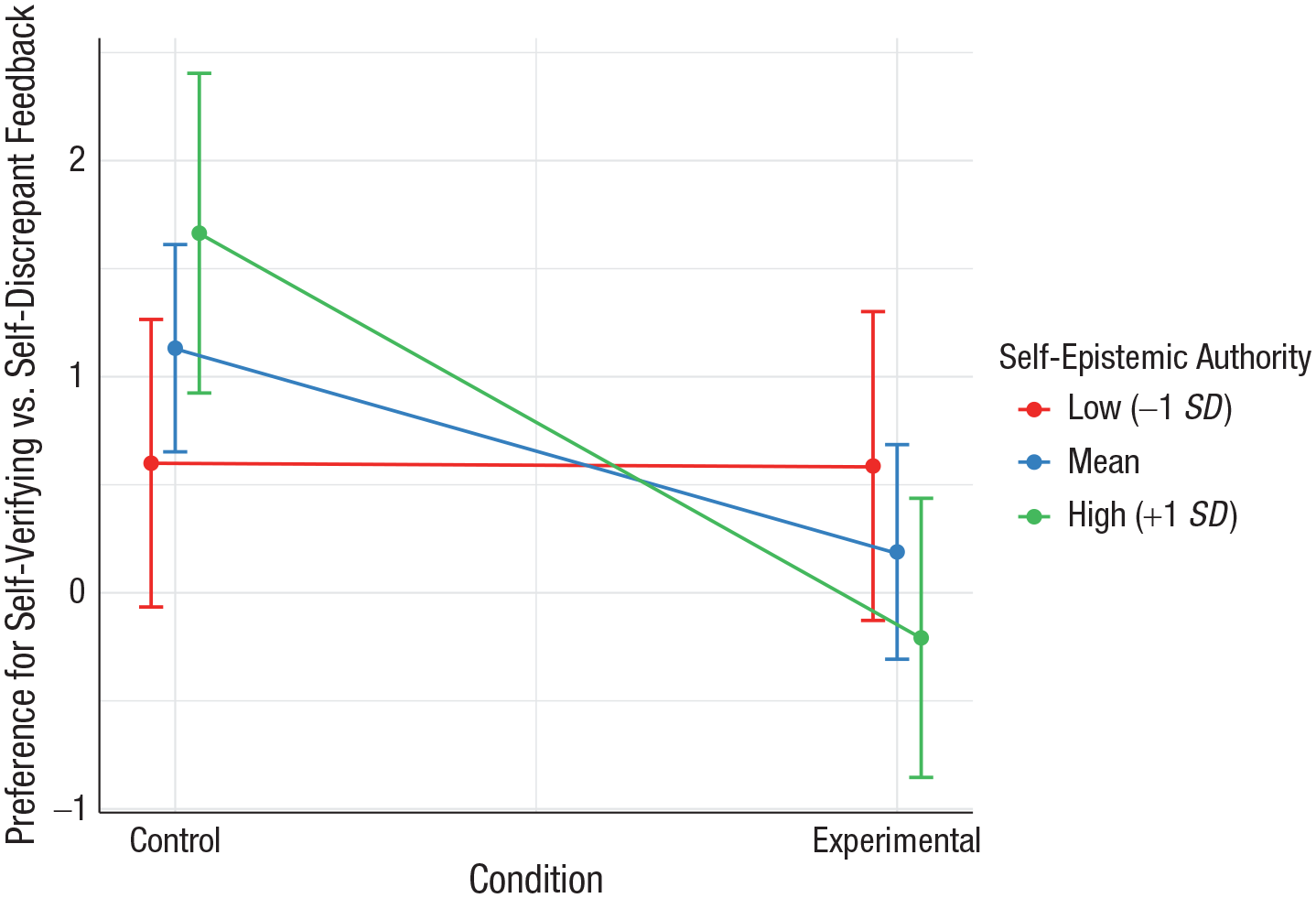

Epistemic authority of the self

To simplify our analyses, we calculated a difference score of feedback preference. To this end, we subtracted willingness to read self-discrepant feedback from willingness to read self-verifying feedback. Positive scores thus indicate preference for self-verifying feedback, negative scores indicate preference for self-discrepant feedback, and 0 indicates no preference. We regressed the difference score on condition (0 = control, 1 = experimental), self-epistemic authority (a continuous variable), and the interaction of the two. The results showed significant conditional effects of epistemic authority (see Table 4). Specifically, participants with low self-epistemic authority (−1 SD) showed a very weak preference for self-verifying information (scores close to 0), and this did not depend on condition. However, the difference between conditions was significant for those high in self-epistemic authority (+1 SD). Further comparisons showed a significant difference between participants with high and low epistemic authority in the control condition, b = 0.69, SE = 0.34, t = 2.03, p = .044; those with high self-epistemic authority preferred self-verifying feedback more than did those with low self-epistemic authority. The differences in the experimental condition were not significant, b = −0.52, SE = 0.31, t = −1.67, p = .100. The interaction term was significant (see regression coefficients in Table 4). The results are presented graphically in Figure 5.
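
The difference score and the moderated regression described above can be sketched as follows. This is an illustration under stated assumptions rather than the authors' analysis code: the data are invented, the predictor is left uncentered (the text does not say whether it was centered), ordinary least squares is solved through the normal equations, and no standard errors or significance tests are computed. All names are hypothetical.

```python
from statistics import mean, stdev


def ols(X, y):
    """Least-squares coefficients from the normal equations (X'X) b = X'y."""
    k = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [[sum(r[i] * r[j] for r in X) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(X, y))] for i in range(k)]
    for col in range(k):  # Gauss-Jordan elimination with partial pivoting
        pivot_row = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot_row] = A[pivot_row], A[col]
        pivot = A[col][col]
        A[col] = [v / pivot for v in A[col]]
        for r in range(k):
            if r != col:
                factor = A[r][col]
                A[r] = [vr - factor * vc for vr, vc in zip(A[r], A[col])]
    return [A[i][k] for i in range(k)]


def fit_moderation(rows):
    """rows: (willingness_verifying, willingness_discrepant, condition, self_ea)."""
    y = [wv - wd for wv, wd, _, _ in rows]  # difference score; > 0 favors self-verifying
    X = [[1.0, c, ea, c * ea] for _, _, c, ea in rows]
    return ols(X, y)  # intercept, condition, epistemic authority, interaction


def condition_effect_at(b_cond, b_int, ea_value):
    """Experimental-minus-control difference at a given epistemic-authority level."""
    return b_cond + b_int * ea_value


# Invented data: condition 0 = control, 1 = experimental.
toy = [
    (6, 3, 0, 5.5), (5, 4, 0, 4.0), (4, 4, 0, 2.5), (5, 3, 0, 5.0),
    (4, 5, 1, 5.5), (4, 4, 1, 4.0), (5, 4, 1, 2.5), (3, 5, 1, 5.0),
]
b0, b_cond, b_ea, b_int = fit_moderation(toy)
ea_values = [row[3] for row in toy]
low, high = mean(ea_values) - stdev(ea_values), mean(ea_values) + stdev(ea_values)
print("condition effect at -1 SD:", round(condition_effect_at(b_cond, b_int, low), 2))
print("condition effect at +1 SD:", round(condition_effect_at(b_cond, b_int, high), 2))
```

In this coding, the coefficient for epistemic authority is its slope in the control condition, and the slope in the experimental condition is that coefficient plus the interaction coefficient.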

Table 4. Feedback Preference Depending on Epistemic Authority and Condition: Results of Regression Analyses (Study 2)

Figure 5. Participants’ preference for self-verifying over self-discrepant feedback depending on condition and participants’ self-epistemic authority in Study 2. Error bars represent 95% confidence intervals. The results were plotted with the sjPlot package (Version 2.8.6; Ludecke, 2020) for R (Version 4.0.3; R Core Team, 2020).

These results are in line with what we expected in that high levels of self-epistemic authority were associated with greater preference for self-verifying over self-discrepant feedback (i.e., a stronger self-verification effect). This was true in the control condition in which both opinions came from sources of equal epistemic authority. There were no similar effects for the choice variable.

Epistemic authority of a psychologist

We further tested the effects of epistemic authority ascribed to a psychologist. We first ran analyses on the difference score (analogous to the ones described above), and although the interaction term was not significant, we found conditional effects in line with our expectations. Specifically, participants who ascribed high epistemic authority to psychologists preferred self-verifying over self-discrepant feedback significantly less in the experimental condition than in the control condition. The same difference was not significant for participants who ascribed low epistemic authority to a psychologist (see Table 4).

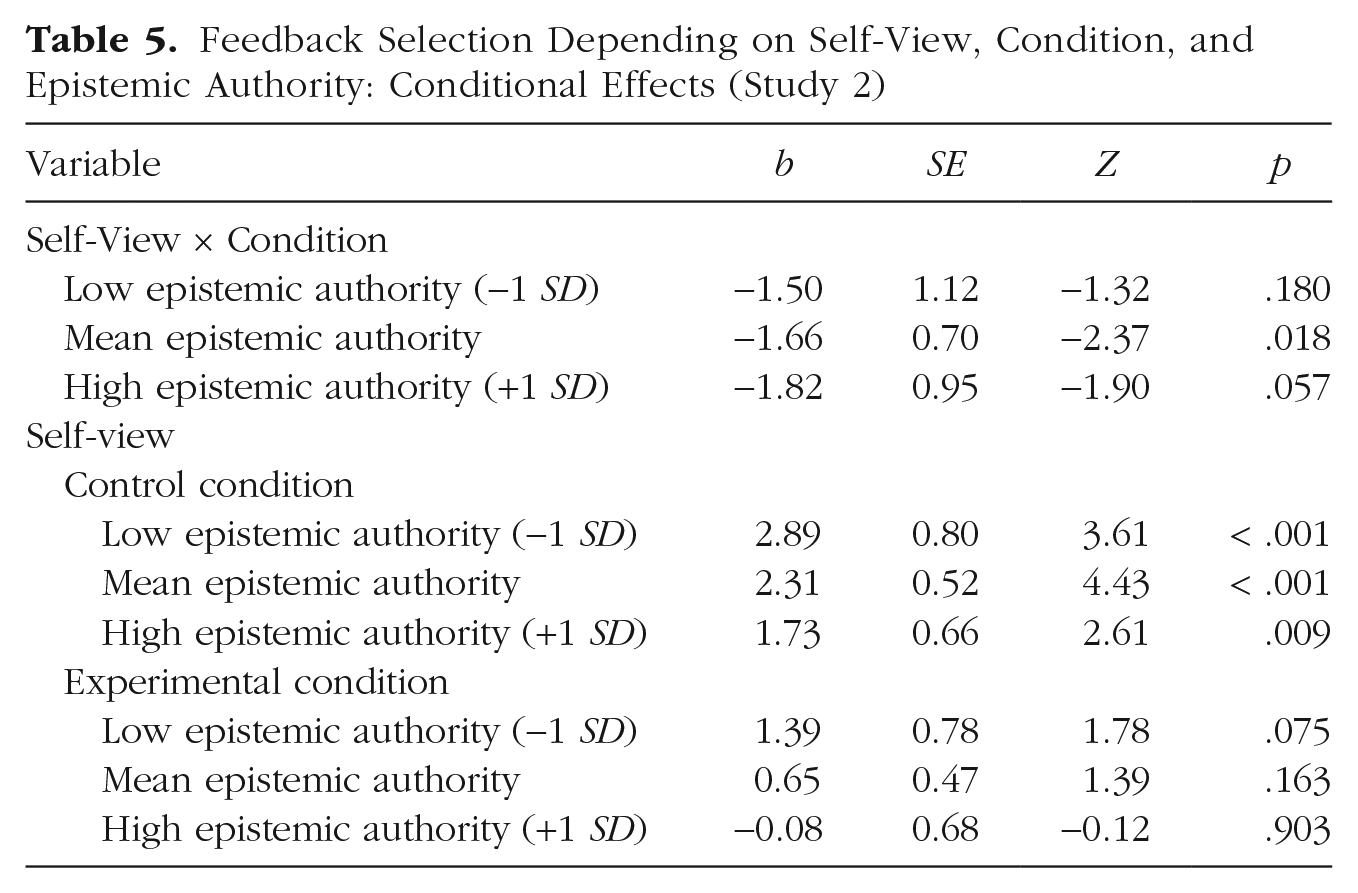

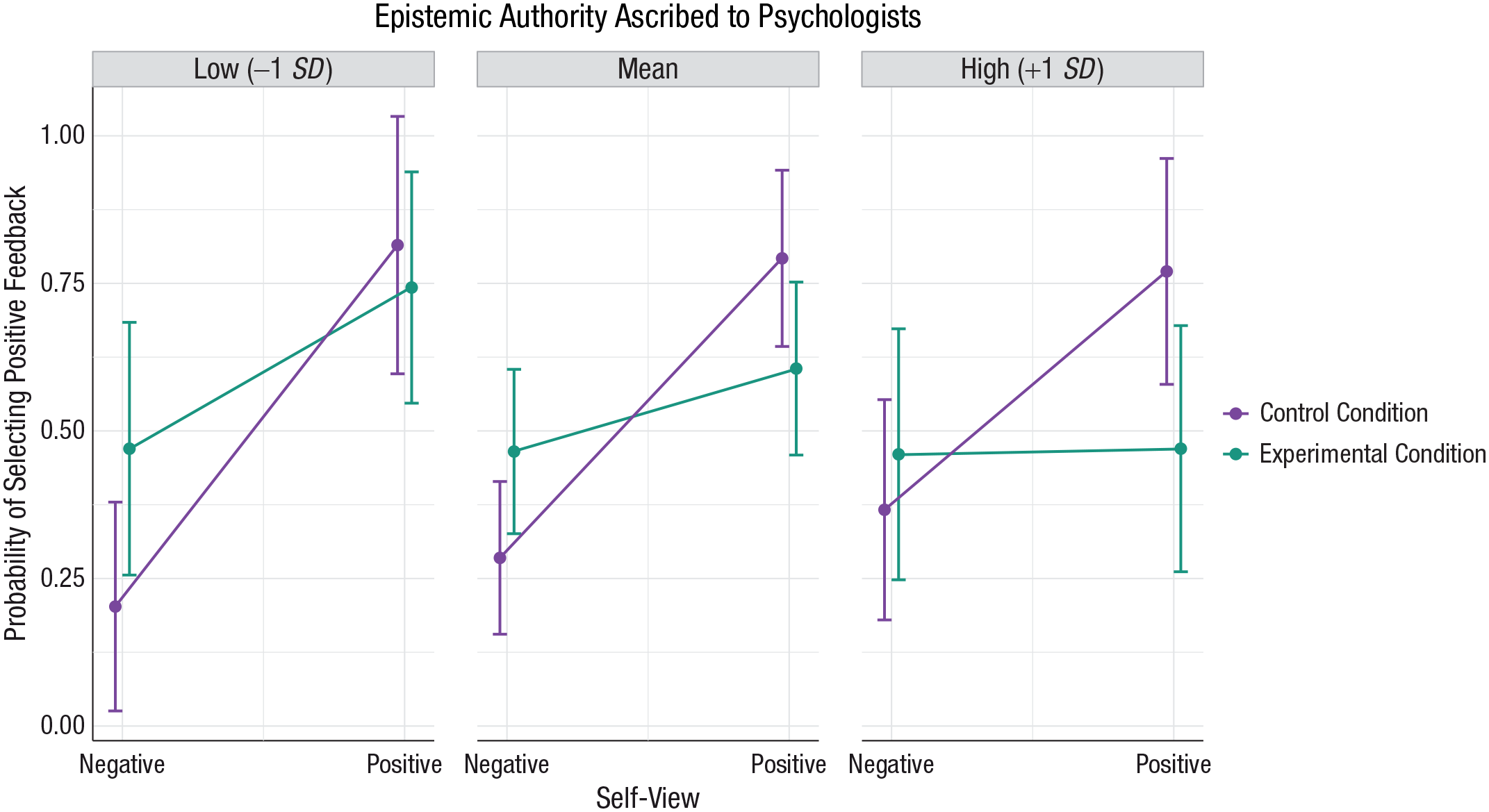

To gain further insight into the effects of epistemic authority on feedback selection, we also analyzed participants’ feedback choice (the categorical variable). We thus regressed the choice variable on self-view (0 = negative, 1 = positive), condition (0 = control, 1 = experimental), and epistemic authority, as well as two- and three-way interaction terms.

Although the interaction terms were nonsignificant (|Z| < 1), we obtained conditional effects in line with our expectations (see Table 5). Specifically, the Self-View × Condition interaction was more pronounced for participants with medium and high levels of epistemic authority but was weaker and nonsignificant for those with low epistemic authority. These effects are presented graphically in Figure 6 and indicate that participants in the control condition preferred self-verifying information at all levels of epistemic authority (i.e., participants with positive self-views were significantly more likely to select positive feedback than were participants with negative self-views; see Table 5). This is not surprising given that there was no feedback coming from a psychologist in the control condition.

Table 5. Feedback Selection Depending on Self-View, Condition, and Epistemic Authority: Conditional Effects (Study 2)

Figure 6. Predicted probability that participants would select positive (vs. negative) feedback depending on their self-view and condition, separately for each level of epistemic authority ascribed to psychologists. Error bars represent 95% confidence intervals. The results were plotted with the sjPlot package (Version 2.8.6; Ludecke, 2020) for R (Version 4.0.3; R Core Team, 2020).

In the experimental condition, however, the self-verification effect disappeared for participants who ascribed high or medium epistemic authority to a psychologist. By contrast, participants who ascribed low epistemic authority to a psychologist still tended to prefer self-verifying information (see Table 5). The results thus provide support for our predictions in that high epistemic authority ascribed to a psychologist was associated with a weaker tendency to prefer self-verifying information in the experimental condition.

We also checked the relationships between epistemic authority and our manipulation-check variables. In line with our theorizing, results showed that self-epistemic authority was positively associated with perceived accuracy of self-verifying feedback and its rated self-consistency. Additionally, self-epistemic authority was positively related to the perceived competence of the source of self-verifying feedback, whereas the epistemic authority of a psychologist was associated with greater perceived competence of self-discrepant feedback. Correlations are presented in Table 3E in Supplementary Materials E (available at https://osf.io/d45pk/).

Overall, the results of this study replicated the findings of Studies 1a and 1b and provide further support for our main hypothesis that preference for self-verifying feedback disappears when self-discrepant feedback comes from a highly credible source. We also found preliminary evidence that individual differences in epistemic authority may also play a role in feedback preference. In line with our theorizing, the greater the epistemic authority ascribed to the self, the greater the tendency to select self-verifying over self-discrepant feedback in the control condition. By contrast, the greater epistemic authority ascribed to the source of self-discrepant feedback (a psychologist), the weaker the self-verification effect and the greater the willingness to familiarize oneself with the self-discrepant opinion. In the latter case, we obtained significant conditional effects but no significant interaction. Therefore, more studies are needed to further test the role of the epistemic authority of psychologists, or more ideally, the relation between the two epistemic authorities—that is, of self versus other.

General Discussion

In two studies, we tested credibility effects in preference for self-verifying versus self-discrepant feedback. Specifically, we manipulated the credibility of the source of self-discrepant feedback and demonstrated that although people preferred self-verifying information in the control condition, in which the source of self-discrepant feedback was of lower credibility, they did not do so in the experimental condition, in which the source of self-discrepant feedback was of high credibility. Moreover, in Study 1, participants with negative self-views preferred positive (i.e., self-discrepant) over negative (i.e., self-verifying) feedback when it came from a highly credible source.

These results show that when self-discrepant feedback was seen as credible, participants showed no significant preference for self-verifying over self-discrepant information. They were thus equally (or more) likely to read self-discrepant and self-verifying feedback. This finding has important theoretical and practical implications. Particularly, it significantly supplements self-verification theory (Swann, 1983, 1990, 2012) by demonstrating that people’s preference for self-verifying feedback stems from their perceiving self-discrepant feedback as less accurate than self-verifying feedback; that is, the self-verification effect is mainly driven by subjective accuracy, or credibility, rather than the desire for self-verification (see also the mediation analyses in Supplementary Materials C, available at https://osf.io/f8329/). Study 2 additionally supported this notion by showing that individual differences in ascription of epistemic authority matter as well: Only participants who trusted themselves to be sources of valid and accurate information about themselves (those with high self-epistemic authority) exhibited self-verification bias when the external sources were of equal credibility. By contrast, those who ascribed high epistemic authority to a psychologist, who delivered self-discrepant feedback in our study, showed no preference for self-verifying feedback (i.e., did not exhibit the self-verification effect).

From a more practical point of view, the fact that people do not have an invariant bias toward self-verifying feedback means that they can devote the same attention and processing capacity to self-verifying and self-discrepant information. This, in turn, can affect the likelihood that this information will change their self-views. The problem with self-views, especially negative ones, is that they are persistent and resistant to change, mainly because self-discrepant information is ignored, discounted, or rejected (e.g., Swann et al., 1987). Here, we show that this may happen because such information is perceived as less valid. Thus, it is not necessarily the case that individuals with negative self-views do not want positive feedback; rather, they do not believe it or regard it with doubt. Therefore, when the positive information is seen as credible, they may be equally (or more) willing to receive it. This suggests that one possible intervention to improve a person’s negative self-view or correct otherwise unrealistic self-perception is to increase the subjective credibility of the source of inconsistent feedback (e.g., a close friend who sees a person more favorably than they see themselves). Indeed, studies show that appreciable mental health benefits accrue from close friendships (e.g., Narr et al., 2019) and psychotherapy (e.g., Boden et al., 2012), in which people with negative self-views are systematically provided with positive or more realistic feedback from a source they find credible. Alternatively, interventions might be designed to temporarily decrease the credibility of the self by highlighting biases of self-perception (as done in therapy as a part of cognitive restructuring; Leahy, 2017).

Our findings highlight the role accuracy plays in feedback selection. Crucially, however, it is the perception of accuracy (i.e., subjective accuracy), rather than objective accuracy, that matters. Although people’s self-views are grounded in reality (self-knowledge is acquired via interactions with other people and the world), these views may reflect the reality imperfectly, and people may hold self-beliefs that are biased or inaccurate. However, if a person thinks that these views are accurate, the views will drive the person’s choices regardless of their objective accuracy.

Moreover, people’s self-views can lead to further distortions of reality, as Hart et al. (2021) have shown. In two experiments, the researchers demonstrated that participants behaved in a manner that confirmed their self-beliefs about their own depression. Specifically, participants higher on self-rated depression perceived colors as turning less or more intense in a color-gazing task, depending on whether they had been told that seeing less or more intense colors is symptomatic of depression. Crucially, the colors did not change. Participants’ perceptions were thus inaccurate, leading the authors to conclude that self-verification behavior is not attributable to the accuracy motive. However, participants with higher depression levels believed that they truly saw the color change and reported that their behavior felt genuine and authentic; that is, it was subjectively accurate. This shows that in some cases, striving for accuracy might lead to choosing information that only seems true (in light of inadequate beliefs).

Finally, people’s behavior is multiply determined, and striving for accuracy is only one of the self-related motives. Self-verification theory emphasizes the role of coherence or certainty motives (e.g., Swann, 2012), and indeed there may be situations in which coherence considerations override the accuracy concerns (e.g., under a heightened need for cognitive closure). In such circumstances, consistent but less credible feedback might be preferred over credible but inconsistent feedback. Another major driver of feedback selection is the self-enhancement motive, or the need to view oneself positively (e.g., Allport, 1937). Again, when this need becomes dominant, people may convince themselves that positive feedback is accurate without paying too much attention to its quality. These various motives and the behaviors aimed at satisfying them may enter into more complex relationships. For instance, accurate information serves accuracy but also certainty motivation (only subjectively accurate knowledge provides one with a sense of certainty). Consistency, or being right, can also be esteem enhancing (the “I-told-you-so” effect). Also, feedback that satisfies several motives (e.g., is both accurate and enhancing) may be preferred over feedback that satisfies only one motive (is accurate but harmful). There are thus various motivations at play, and considering their relative magnitudes in a given situation is necessary to fully predict the resultant impact on feedback selection.

Limitations and future research directions

Our results point to the role epistemic authority plays in feedback selection. Importantly, we show that both self-epistemic authority (self-view certainty; see Swann & Ely, 1984; Swann et al., 1988) and one’s ascription of certainty to the opinion of an external source (i.e., that source’s perceived epistemic authority) matter. Only the relationship between the two (epistemic authority of the self vs. the other) helps us to fully understand the feedback-selection phenomenon. Therefore, future studies could include domains in which people ascribe different epistemic authority to the self than to others.

There are also alternative explanations for our findings: Demand and curiosity may have been higher in the experimental than in the control condition. Because an expert provided the self-discrepant information, participants might have felt that they ought to select this opinion. They also may have been more curious about an expert opinion than about a nonexpert opinion. However, participants in the experimental condition showed no preference for an expert opinion, and as Study 2 suggests, the obtained effects were conditional on the level of epistemic authority, which speaks against the demand effect. Though these alternative explanations are not particularly plausible in the present context, they could be profitably probed in further research.

Constraints on generality

We believe that the present studies tested general psychological mechanisms driving the selection of self-relevant feedback. However, we used one specific paradigm in which accuracy concerns may be paramount, whereas coherence motives may be more prominent in other contexts. Nevertheless, our findings should apply to the same broad class of situations as the self-verification effect (see Kwang & Swann, 2010; Seih et al., 2013).

Also, we measured one self-view (social self-esteem) and used two credibility manipulations. We believe, however, that the effects should generalize to other domains a person finds important (see Swann & Pelham, 2002) and to other credibility manipulations. Crucially, the source of self-discrepant feedback needs to be seen as more credible than the source of self-verifying feedback and more credible than the self. However, when devising credibility manipulations, experimenters should consider that the credibility of various sources, including the self, differs across individuals, groups, and cultures and is domain specific.

Feedback believability should also be assured. For instance, extreme or very self-discrepant opinions may raise suspicion and seem inaccurate. They will likely fall in most people’s latitude of rejection (Sherif, 1963), and hence might be rejected even if they come from a highly credible source (the “too-good-to-be-true” effect).

Finally, our samples were nonclinical. According to the continuum principle (e.g., Westbrook et al., 2007), mental disorders exaggerate normal processes. Therefore, the effects should not substantially differ in clinical samples except in their strength. Apart from that, we have no reason to believe that the results depend on other characteristics of the participants, materials, or context.

Supplemental Material

sj-docx-1-pss-10.1177_09567976211049439 – Supplemental material for Says Who? Credibility Effects in Self-Verification Strivings

Supplemental material, sj-docx-1-pss-10.1177_09567976211049439 for Says Who? Credibility Effects in Self-Verification Strivings by Ewa Szumowska, Natalia Wójcik, Paulina Szwed and Arie W. Kruglanski in Psychological Science

sj-docx-2-pss-10.1177_09567976211049439 – Supplemental material for Says Who? Credibility Effects in Self-Verification Strivings

sj-docx-3-pss-10.1177_09567976211049439 – Supplemental material for Says Who? Credibility Effects in Self-Verification Strivings

sj-docx-4-pss-10.1177_09567976211049439 – Supplemental material for Says Who? Credibility Effects in Self-Verification Strivings

sj-docx-5-pss-10.1177_09567976211049439 – Supplemental material for Says Who? Credibility Effects in Self-Verification Strivings

Footnotes

Transparency

Action Editor: Mark Brandt

Editor: Patricia J. Bauer

Author Contributions

E. Szumowska conceived the study. E. Szumowska, N. Wójcik, and P. Szwed designed the study. Data were collected by E. Szumowska, N. Wójcik, and P. Szwed and analyzed by N. Wójcik and E. Szumowska. E. Szumowska drafted the manuscript with input from N. Wójcik and P. Szwed. A. W. Kruglanski provided critical revisions. All the authors approved the final version of the manuscript for submission.

References
