Abstract

Background

The European Union’s Artificial Intelligence Act (AI Act) establishes a pioneering, sector-agnostic, risk-based taxonomy for artificial intelligence systems. However, its concrete operational implications for academic libraries, which increasingly rely on AI for discovery services, metadata generation, user support, and analytics, remain insufficiently explored.

Methods

This study conducts a systematic review of recent scientific and professional literature and maps common academic library AI use cases onto the proportional risk and compliance obligations defined by the AI Act. Based on this analysis, a sector-specific risk-classification matrix is developed to support regulatory interpretation in the library context.

Results

The findings indicate that ethical principles frequently prevail over enforceable compliance mechanisms, that library AI applications align conceptually with the AI Act’s taxonomy but lack practical operationalization, that generative AI and large language models intensify regulatory ambiguity, that AI procurement practices in libraries rarely incorporate AI Act safeguards, and that the protection of fundamental rights, including equity, privacy, and intellectual freedom, requires measurable controls beyond transparency notices.

Discussion

The results reveal a substantial gap between the regulatory framework and its practical implementation in academic libraries. Governance approaches remain largely normative, while auditable and operational compliance mechanisms are still underdeveloped. The rapid diffusion of generative AI further complicates accountability, risk classification, and institutional responsibility.

Conclusion

This study contributes a sector-specific AI risk-classification matrix, identifies policy needs for tailored audit and procurement models, and highlights key research gaps in empirical validation, bias detection, and trust frameworks. By bridging regulation and practice, it positions academic libraries as potential norm-setters within the European AI governance ecosystem, exemplifying rights-preserving and compliance-ready institutions where regulation acts both as a safeguard and a catalyst for responsible innovation.

Keywords

Introduction

The European Union Artificial Intelligence Act (AI Act) represents the first comprehensive attempt to regulate AI on a risk-based model. While its provisions are designed to be sector-agnostic, their implications for academic and research libraries remain largely unexamined. This absence is striking: libraries increasingly rely on AI systems, ranging from recommender algorithms and discovery platforms to generative AI tools and biometric-based learning analytics, yet few studies have systematically mapped these applications against the Act’s risk categories and proportional obligations.

This gap gives rise to a central research problem: How can the AI Act’s risk taxonomy be operationalized within academic library settings, and what benefits or limitations does such alignment create for responsible AI governance?

To address this question, the study pursues the following objectives:

- Map common AI use-cases in libraries against the AI Act’s risk categories;
- Assess the extent to which current literature addresses compliance mechanisms beyond ethical principles;
- Explore the governance and procurement implications of risk classification;
- Identify future research and practice directions for aligning library operations with regulatory requirements.

Based on the literature reviewed, two working hypotheses frame the inquiry: (1) Academic libraries tend to rely on principle-based ethical frameworks rather than enforceable compliance mechanisms when deploying AI systems; (2) The AI Act, if operationalized through sector-specific tools such as risk-classification matrices and contractual safeguards, can transform libraries into exemplars of rights-preserving AI governance.

The underlying problem, therefore, is not merely whether libraries use AI, but whether they govern it responsibly and in alignment with binding regulatory standards. The risk is that, without contextualized frameworks, libraries may either over-regulate benign tools or, conversely, underestimate the compliance burdens of high-risk systems, both scenarios undermining their mission as custodians of equitable access, academic freedom, and public trust.

This article positions itself within that tension. By bridging the regulatory innovations of the AI Act with the operational realities of academic libraries, it seeks to provoke a broader debate: should libraries remain passive adopters of AI shaped by external vendors, or can they become active norm-setters within Europe’s emerging AI governance ecosystem? The challenge is not only technical but institutional, demanding a shift from abstract ethics to enforceable procedures.

Methodology

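This study followed a qualitative approach supported by a systematic literature review. The methodological design was guided by the central research question stated in the Introduction: how can the AI Act’s risk taxonomy be operationalized within academic library settings, and what benefits or limitations does such alignment create for responsible AI governance?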

The following search string was applied: (“Artificial Intelligence Act” OR “EU AI Act” OR “European Union AI regulation”) AND (“Academic libraries” OR “University libraries”) AND (“Artificial Intelligence” OR “AI” OR “Generative AI”)
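The structure of the search string (three concept blocks, with OR inside each block and AND between blocks) can be illustrated with a minimal sketch. The helper name `build_query` and the variable `CONCEPT_BLOCKS` are hypothetical and only serve the illustration; the exact field tags and syntax each database requires are not reproduced here.

```python
# Hypothetical helper: rebuilds the Boolean search string from its concept blocks.
def build_query(blocks):
    """Join terms with OR inside each block and AND between blocks."""
    groups = []
    for terms in blocks:
        quoted = " OR ".join(f'"{term}"' for term in terms)
        groups.append(f"({quoted})")
    return " AND ".join(groups)


# The three concept blocks, taken from the search string reported above.
CONCEPT_BLOCKS = [
    ["Artificial Intelligence Act", "EU AI Act", "European Union AI regulation"],
    ["Academic libraries", "University libraries"],
    ["Artificial Intelligence", "AI", "Generative AI"],
]

query = build_query(CONCEPT_BLOCKS)
print(query)
```

Keeping the blocks as data makes it easier to document the same query consistently across Web of Science, Scopus, and Dimensions.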

Three databases were initially considered. In the Web of Science, the time span was restricted to 2021-2025. This decision was grounded in the fact that the political and scientific discussion on the AI Act started in 2021, and our intention was to capture literature produced during its negotiation and early implementation phases. Filters were applied to retrieve only review articles, and the disciplinary scope was refined to Library & Information Science Source. In Scopus, the search produced only 11 results when limited to articles. This relatively low yield reflects the emerging and still underexplored nature of the topic. Dimensions was also tested; however, the single item retrieved was situated in the field of journalism and therefore outside the scope of this study.

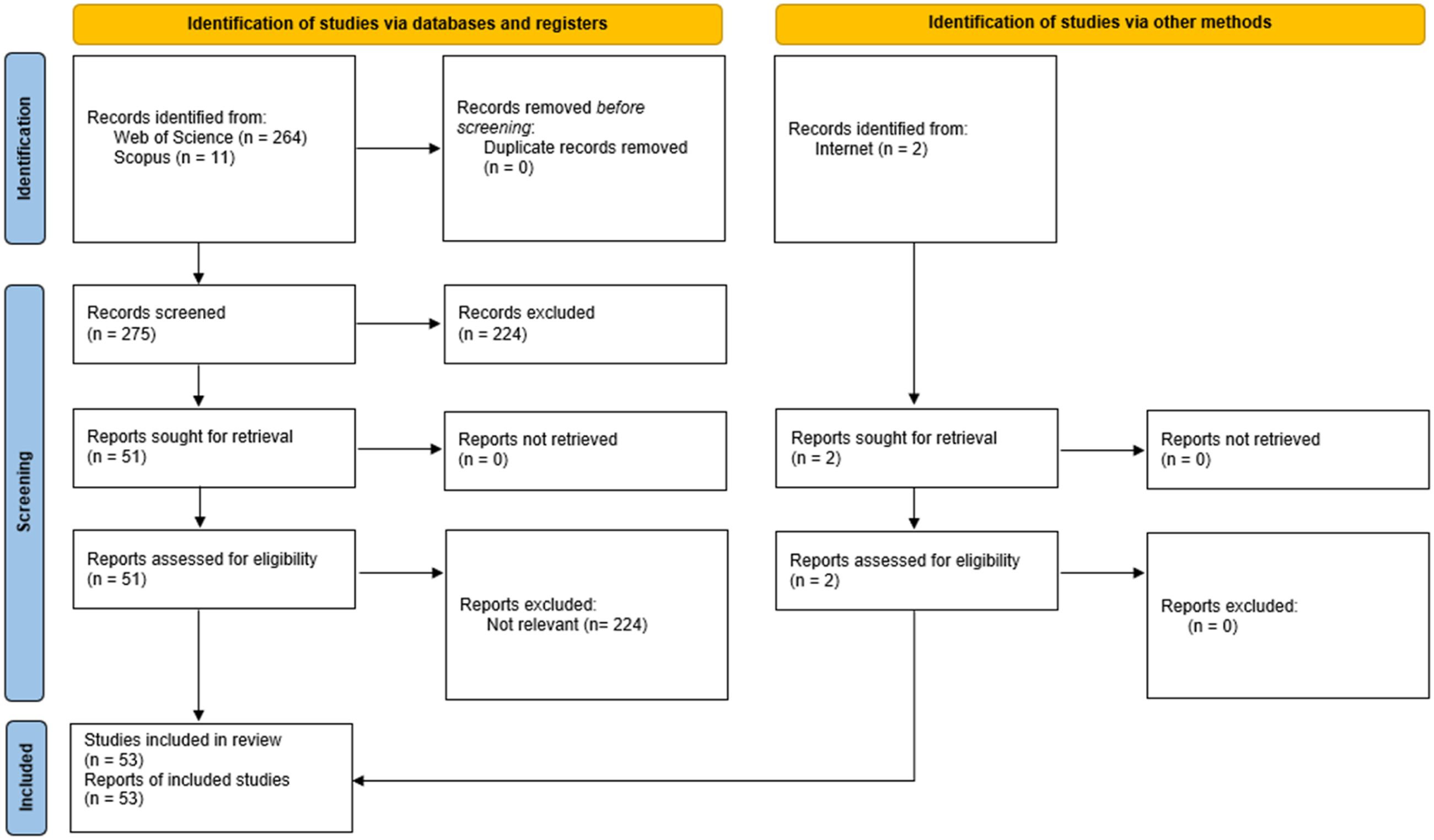

Altogether, the searches returned 275 results (Figure 1). To optimize the screening process, the Rayyan tool was used. The first step was the identification of duplicates, of which none were found. The second step involved systematic screening, applying inclusion criteria (articles explicitly addressing the AI Act, artificial intelligence, and their implications for libraries or higher education) and exclusion criteria (items unrelated to the research problem, such as the journalism paper retrieved from Dimensions).

Figure 1. PRISMA flow diagram. Source: Based on PRISMA 2020 Statement.

Through this process, 224 items were excluded, leaving a working corpus of 51 relevant results. To strengthen the review, one additional article identified through web-based search was included, along with the official Portuguese version of the AI Act, which provides essential legal and conceptual grounding for the analysis.
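The screening counts reported above can be checked with a short arithmetic sketch. The variable names are illustrative, and the final total of sources is derived here from the reported figures rather than stated by the authors.

```python
# Counts as reported in the text.
records_identified = 275   # results returned by all database searches
duplicates_removed = 0     # Rayyan found no duplicates
records_excluded = 224     # items removed during screening

# One web-sourced article plus the official Portuguese version of the AI Act.
added_from_other_sources = 2

records_screened = records_identified - duplicates_removed
working_corpus = records_screened - records_excluded

# Derived value (an assumption of this sketch, not a figure reported by the authors).
total_sources = working_corpus + added_from_other_sources

print(records_screened, working_corpus, total_sources)
```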

This methodological pathway ensures that the review is both comprehensive, covering the key international databases most likely to index relevant LIS scholarship, and selective, focusing strictly on material aligned with the research problem. The use of Rayyan enhanced transparency and reproducibility in the selection process, while the explicit reporting of inclusion and exclusion criteria increases methodological rigor.

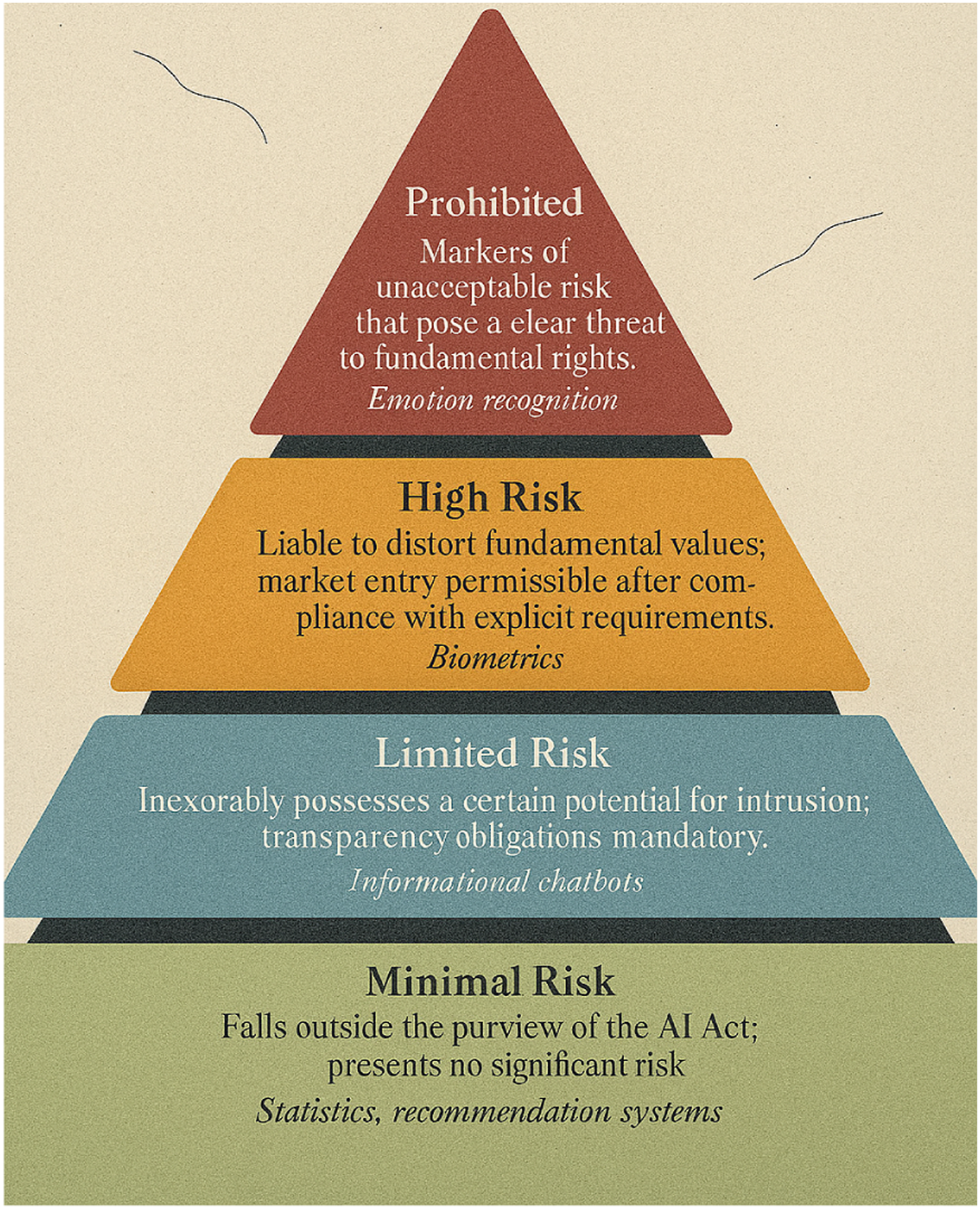

To enhance methodological transparency, it is important to note that Figures 2 through 6 were generated using artificial intelligence tools. These figures were designed to synthesize and visualize complex concepts emerging from the literature review, thereby supporting analytical clarity and facilitating knowledge translation. In addition, AI-assisted language refinement was employed to improve precision, coherence, and readability of the manuscript, while ensuring that all substantive arguments, interpretations, and critical perspectives remained the responsibility of the authors. This combined use of AI-generated visuals and text refinement aligns with recent scholarly practices that recognize the role of AI as an auxiliary instrument in academic writing, without displacing the centrality of human judgment and critical analysis.

Figure 2. AI Act risk levels and illustrative library applications. Source: Generated with AI.

Literature review

Operationalizing risk taxonomies: Applying the EU Artificial Intelligence Act to academic library practices

The European Union’s

Existing studies highlight efficiency gains and enhanced user services from AI adoption in libraries, while also warning about opacity, bias, and governance challenges (Borgohain et al., 2024; Collins et al., 2021). Yet, few explicitly connect these insights to the AI Act’s proportional obligations (Ashok et al., 2022; Laine et al., 2024).

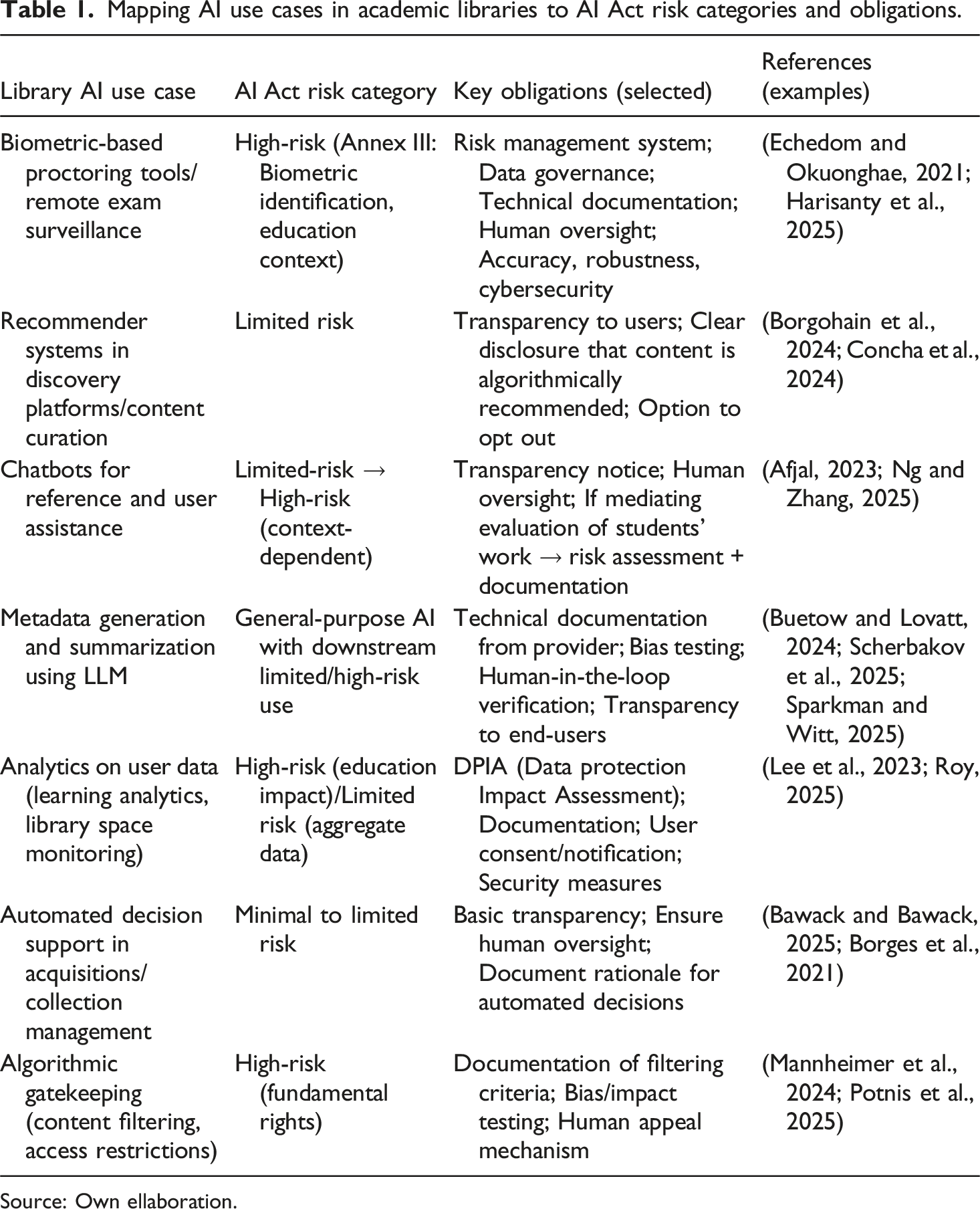

Several strands of the reviewed literature provide the necessary building blocks for such operationalization. Ethical frameworks and dialogical instruments, such as the Data Ethics Decision Aid (DEDA) (Franzke et al., 2021), demonstrate how abstract ethical principles can be embedded into organizational processes. Likewise, systematized reviews of AI in library contexts (Bawack and Bawack, 2025; Concha et al., 2024) identify concrete cases of use that can be reclassified under the AI Act’s risk taxonomy. For instance, AI-powered proctoring and biometric identification tools align with high-risk categories due to their potential to affect fundamental rights, while recommender systems for content discovery are more plausibly framed as limited-risk, requiring transparency and user notification (Chen et al., 2024).

Within this regulatory framework, it becomes essential to distinguish how different applications of AI in libraries may fall under the European Union’s four-tier taxonomy of risk. This categorization not only clarifies the level of regulatory scrutiny but also highlights the proportionality principle at the core of the AI Act. To illustrate this, Figure 2 presents the hierarchy of AI risk levels (prohibited, high-risk, limited-risk, and minimal-risk), together with indicative examples relevant to library contexts. This visual framing reinforces the idea that the Act does not impose uniform requirements; instead, it calibrates obligations according to the potential harm posed to fundamental rights. Such a framework provides the conceptual foundation for analyzing library-specific applications in the subsequent sections.
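To make the four-tier logic concrete, the sketch below encodes the tiers as a lookup table. It includes only the classifications named in the text (proctoring and biometric identification as high-risk, discovery recommenders as limited-risk) and obligations mentioned in this article. The identifiers `RiskTier`, `USE_CASE_TIERS`, `TIER_OBLIGATIONS`, and `classify` are hypothetical, and the mapping is an illustration, not a legal determination.

```python
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited-risk"
    MINIMAL = "minimal-risk"


# Illustrative mapping: only the use cases explicitly classified in the text.
USE_CASE_TIERS = {
    "ai_proctoring": RiskTier.HIGH,
    "biometric_identification": RiskTier.HIGH,
    "discovery_recommender": RiskTier.LIMITED,
}

# Obligations paraphrased from this article, not quoted from the AI Act.
TIER_OBLIGATIONS = {
    RiskTier.PROHIBITED: ["do not deploy"],
    RiskTier.HIGH: [
        "compliance checks",
        "technical documentation",
        "ongoing monitoring",
        "human oversight",
    ],
    RiskTier.LIMITED: ["transparency", "user notification"],
    RiskTier.MINIMAL: [],
}


def classify(use_case):
    """Return (tier, obligations); unknown use cases are flagged for manual review."""
    tier = USE_CASE_TIERS.get(use_case)
    if tier is None:
        return None, ["manual review required"]
    return tier, TIER_OBLIGATIONS[tier]


print(classify("discovery_recommender"))
```

Returning an explicit "manual review required" marker for unlisted tools reflects the point that classification depends on context and cannot be assumed by default.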

The literature on generative AI and large language models (Afjal, 2023; Buetow and Lovatt, 2024; Gupta et al., 2025) further complicates the taxonomy, as the AI Act introduces specific obligations for general-purpose AI systems. These obligations, ranging from technical documentation and testing to transparency towards downstream deployers, translate directly into library contexts where LLMs are increasingly deployed in search assistance, metadata generation, and summarization services. Without contextualized frameworks, libraries risk either over-regulating benign applications or, conversely, underestimating the compliance burden of high-risk systems.

What emerges from this body of research is both a clear opportunity and a pressing necessity: to design a sector-specific operational matrix that aligns common academic library applications with the AI Act’s risk categories and associated compliance obligations. Such a matrix would serve two purposes: first, as an internal governance instrument that guides procurement, deployment, and evaluation of AI systems in libraries (Lee et al., 2023; Roy, 2025); and second, as a contribution to the broader discourse on responsible AI in education and research sectors, positioning libraries not merely as passive adopters but as critical intermediaries in the European AI regulatory landscape.

Towards AI-Act-ready governance: Ethical auditing frameworks for library and information services

Across the literature, one theme stands out with urgency: the question of governance and ethical oversight. Libraries have long framed themselves as guardians of intellectual freedom, equity of access, and the responsible management of knowledge. As AI enters their workflows, whether in metadata creation, discovery, or user-facing services, the challenge is not merely what these systems can do, but how they are governed.

Several studies provide conceptual scaffolding for this debate. Ashok et al. (2022) and Laine et al. (2024) propose broad ethical frameworks for AI and digital technologies, highlighting principles of transparency, accountability, fairness, and explicability. Franzke et al. (2021), with the DEDA, demonstrate that ethical reflection can be embedded into day-to-day decision-making, turning values into operational processes. Heyder et al. (2023) and Trigo et al. (2024) add further nuance, showing how human-AI interaction and algorithmic fairness can be evaluated systematically.

What is striking, however, is the regulatory gap. These frameworks are not explicitly aligned with the risk-based obligations introduced by the AI Act. Ethical audits often remain voluntary, aspirational, or sector-agnostic, while the AI Act requires that for certain categories, especially high-risk systems, ethical considerations be transformed into legally binding compliance checks, technical documentation, and ongoing monitoring. For libraries, this means moving from principles to procedures: how to evidence that a chatbot is sufficiently transparent, or that a recommender system has been tested for bias, not just assumed to be fair.

The literature on libraries and archives begins to sketch this transition. Mannheimer et al. (2024) argue that responsible AI practice in cultural heritage institutions requires formal governance structures, not ad hoc adaptations. Borgohain et al. (2024) and Concha et al. (2024), in their reviews of AI applications in libraries, emphasize the diversity of use cases, yet they stop short of specifying governance protocols matched to risk levels. The implication is clear: while ethical guidance is abundant, libraries lack a sector-specific audit framework that translates these principles into compliance pathways under the AI Act.

This study positions itself within that gap. By synthesizing existing ethical frameworks with the regulatory obligations of the AI Act, it aims to outline what an AI-Act-ready audit process for libraries could look like. Such a process would not only strengthen compliance but also reaffirm libraries’ longstanding commitment to responsible stewardship of information technologies. In doing so, libraries could shift from being passive adopters of AI tools to active shapers of how AI is governed in academic knowledge environments.

General-purpose AI and large language models in academic libraries

If there is one technology that has accelerated debates around the AI Act, it is the rise of general-purpose AI and large language models (LLMs). Unlike domain-specific applications, these systems are adaptable, fast-evolving, and widely deployed across multiple contexts, including libraries. The AI Act treats general-purpose AI with particular attention, imposing obligations for technical documentation, transparency, and risk management precisely because of their broad applicability (European Union, 2024).

In the library field, the literature shows a surge of interest in how LLMs reshape core functions. Afjal (2023) highlights the transformative, yet disruptive, potential of ChatGPT in research and learning environments. Scherbakov et al. (2025) and Buetow and Lovatt (2024) demonstrate how LLMs are already being tested for dynamic literature reviews, while Sparkman and Witt (2025) critically assess their ethical boundaries. Hossain (2025) and Harper and Groth (2025) point to the need for AI literacy and new skillsets among librarians, reinforcing the idea that these tools are no longer peripheral; they are becoming embedded in the everyday practice of information work.

Yet the scholarship also voices caution. Gupta et al. (2025) map the emerging trends of generative AI and emphasize unresolved issues of bias, opacity, and misuse. Ridley (2025) underscores the importance of human-centered explainability, a principle echoed in Potnis et al. (2025) when discussing the risks of algorithmic gatekeeping. These concerns are amplified in academic libraries, where intellectual freedom, academic integrity, and equity of access are non-negotiable values. Without clear governance, LLMs risk undermining precisely the principles libraries are meant to protect.

What is missing across the literature is a regulatory lens: how to classify and manage LLM deployments in libraries within the AI Act’s risk taxonomy. Are LLM-based chatbots for reference services merely limited-risk tools requiring transparency notices, or do they edge into higher-risk categories when they mediate assessments of students’ academic work? What safeguards must be in place when metadata generation or recommender systems are driven by generative models? These are not theoretical questions; they are compliance issues with direct legal and ethical implications.

Mapping AI use cases in academic libraries to AI Act risk categories and obligations.

Source: Own elaboration.

Contractual safeguards and procurement policies: Embedding responsible AI clauses in library vendor agreements

If libraries are to operationalize the AI Act in practice, they cannot focus only on internal governance. Much of the AI they deploy is procured from third-party vendors, whether as discovery platforms, analytics dashboards, learning support tools, or LLMs. This procurement environment creates a structural dependency: libraries rarely design the AI themselves, yet they remain accountable for how these tools are implemented within academic contexts. The literature increasingly acknowledges this tension, but systematic approaches remain scarce.

Collins et al. (2021) and Borges et al. (2021) underline the strategic dimension of AI adoption in organizational settings, noting that procurement choices are often driven by functionality rather than compliance. Mannheimer et al. (2024) argue that responsible AI in libraries and archives requires embedding accountability mechanisms not only at the institutional level but also contractually with providers. This is where the AI Act provides a critical lever. By explicitly defining provider and deployer obligations, it empowers libraries to negotiate contracts that reflect risk-based compliance requirements.

The gap is evident in practice. Few library contracts today include clauses on algorithmic transparency, bias auditing, or human oversight mechanisms. Yet under the AI Act, deployers of high-risk systems are required to ensure these safeguards are in place. This means procurement contracts need to go beyond service-level agreements on uptime and data protection; they must include compliance clauses that specify, for example:

- Provider responsibility for maintaining technical documentation;
- Obligations to disclose training data limitations;
- Commitments to deliver bias testing results;
- Clear processes for libraries to exercise audit rights.
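The four example clauses above lend themselves to a simple procurement checklist. The sketch below shows one way a library might flag gaps in a draft vendor agreement. The clause identifiers and the function `missing_clauses` are hypothetical labels chosen for illustration; the Act does not prescribe any such naming.

```python
# Hypothetical clause identifiers corresponding to the four examples in the text.
REQUIRED_CLAUSES = {
    "technical_documentation",    # provider maintains technical documentation
    "training_data_limitations",  # provider discloses training data limitations
    "bias_testing_results",       # provider delivers bias testing results
    "audit_rights",               # library can exercise audit rights
}


def missing_clauses(contract_clauses):
    """Return the required clauses absent from a contract, in alphabetical order."""
    return sorted(REQUIRED_CLAUSES - set(contract_clauses))


# Example: a contract limited to conventional service-level terms plus documentation.
draft_contract = ["uptime_sla", "data_protection", "technical_documentation"]
print(missing_clauses(draft_contract))
```

A check of this kind only confirms that a clause is present; whether its wording is adequate still requires legal review.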

Literature outside the library domain reinforces this trajectory. Ashok et al. (2022) propose ethical frameworks that emphasize contractual embedding of values, while Lee et al. (2023) demonstrate how organizational AI adoption hinges on governance at the procurement stage. Saputra et al. (2024) and Netipatalachoochote and Pailler (2025), though examining national AI legislation in other jurisdictions, point to a similar insight: law without contractual integration risks remaining aspirational.

For libraries, the implication is twofold. First, procurement becomes a frontline site for ensuring AI Act compliance: contracts must explicitly reference risk classification and associated safeguards. Second, by adopting proactive procurement policies, libraries can influence vendors, many of whom serve multiple institutions and markets, to raise their standards more broadly. This places libraries not only as implementers but as norm-setters, aligning procurement power with their ethical mission.

Safeguarding fundamental rights and values in the age of AI libraries

At the heart of the AI Act lies a concern that extends beyond technical compliance: the protection of fundamental rights. This resonates deeply with libraries, which historically position themselves as institutions of intellectual freedom, privacy, equity, and public trust. If the Act classifies systems not only by technical function but by their potential impact on rights and freedoms, libraries provide a particularly rich terrain in which these principles are tested.

The literature acknowledges the stakes, though often indirectly. Mannheimer et al. (2024) emphasize that responsible AI in libraries and archives must be anchored in ethical commitments to openness and inclusivity. Potnis et al. (2025) warn of the dangers of algorithmic gatekeeping, where automated systems inadvertently restrict access to knowledge or reinforce structural inequities. Ridley (2025) argues for human-centered explainability, situating transparency not merely as a compliance obligation but as a right of the user to understand how decisions affecting their access to information are made.

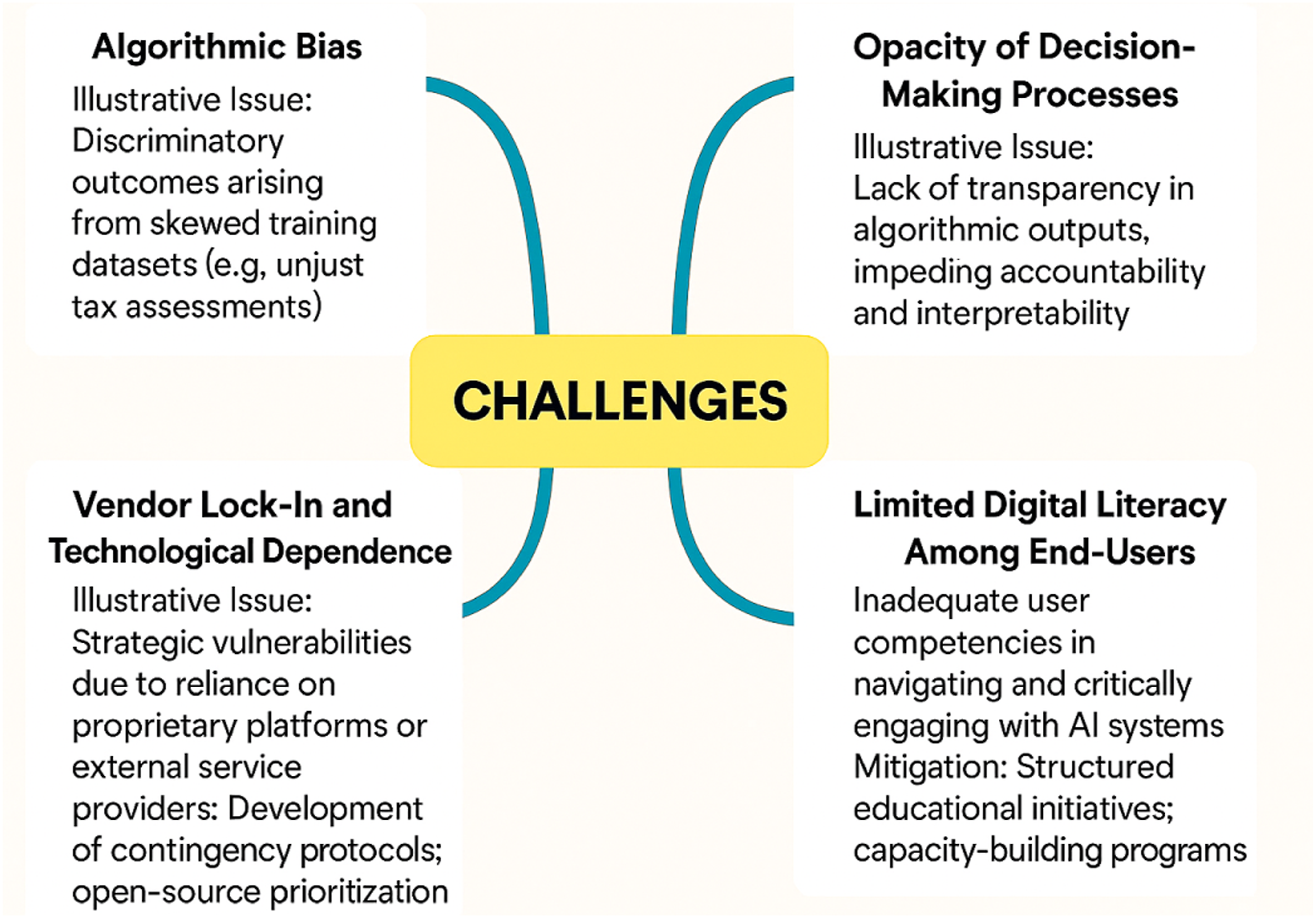

These challenges, while conceptually distinct, converge around a common concern: how to preserve user rights and institutional trust in the face of increasing AI integration. To synthesize these issues, Figure 3 maps four recurring areas of concern identified in the literature: algorithmic bias, opacity of decision-making, vendor lock-in, and limited digital literacy among end-users. By clustering these challenges, the figure highlights both the diversity and the interdependence of the obstacles libraries must confront.

Figure 3. Key challenges for AI adoption in academic libraries: bias, opacity, vendor lock-in, and digital literacy. Source: Generated with artificial intelligence.

Beyond the library field, broader studies underline the risks at play. Chen et al. (2024) document how bias in AI systems can disproportionately affect vulnerable groups. Trigo et al. (2024) highlight fairness as a principle too often abstracted rather than operationalized, while Kelly et al. (2023) examine acceptance of AI as contingent on trust, a fragile asset that libraries cannot afford to erode.

What emerges from these strands is a paradox. Libraries, often early adopters of digital innovation, risk becoming sites where rights are compromised unless safeguards are explicit. Transparency notices alone may not suffice; what is needed is a culture of rights-oriented governance, where bias testing, human appeal mechanisms, and rigorous oversight are not treated as optional enhancements but as core responsibilities.

The AI Act provides a framework for this shift. By codifying protections against discriminatory outcomes, mandating human oversight, and requiring proportionality in high-risk deployments, it aligns with the foundational mission of libraries. Yet the Act also challenges libraries to translate values into verifiable practices: to demonstrate, for example, how a recommender system protects against exclusionary bias, or how an LLM-based service avoids undermining academic integrity.

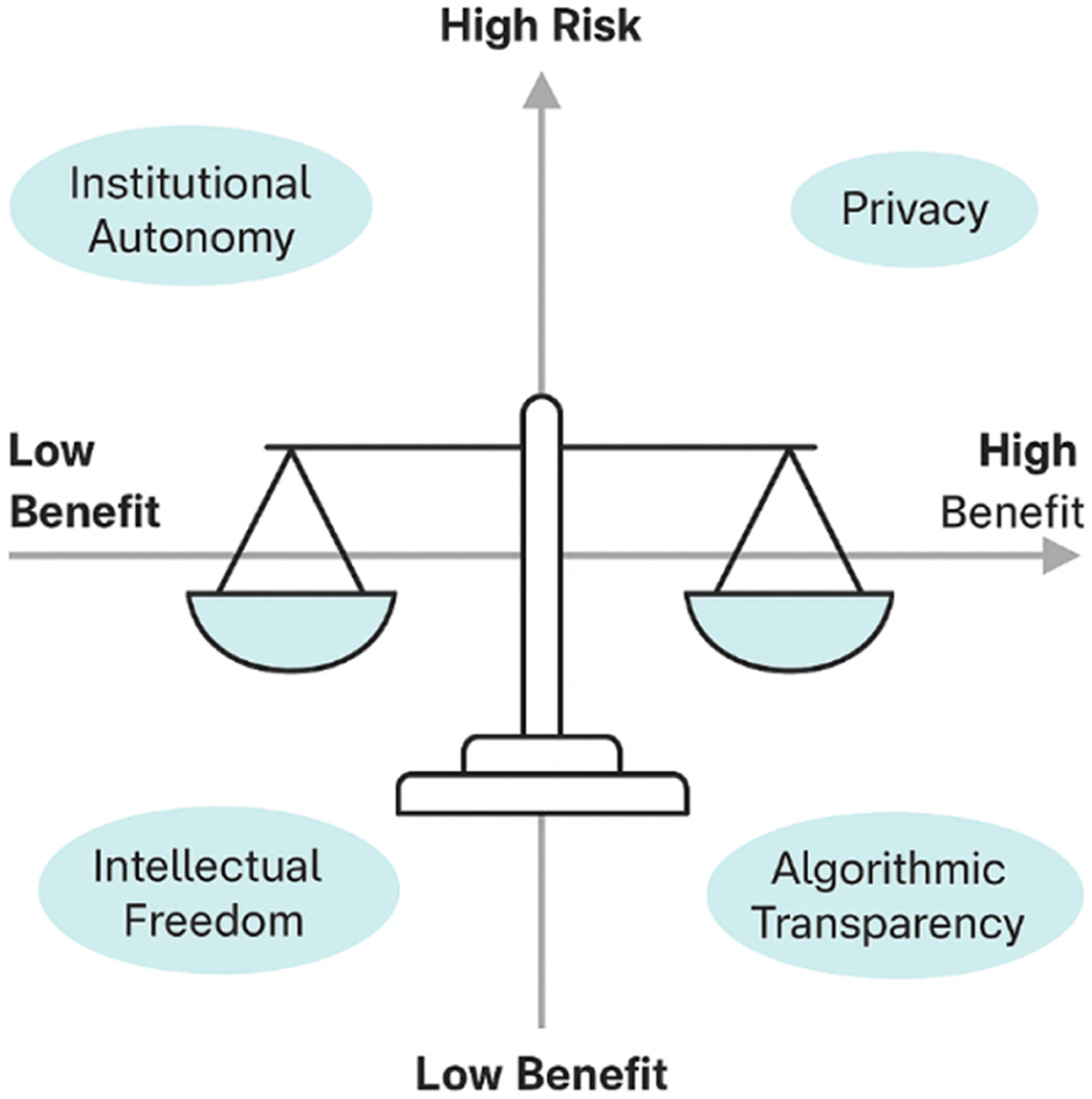

This paradox can be more clearly understood when visualized as a balance between rights-oriented safeguards and the functional benefits promised by AI systems. Figure 4 situates core library values (intellectual freedom, institutional autonomy, privacy, and algorithmic transparency) within this tension, illustrating how each can be simultaneously strengthened or undermined by AI adoption. By framing these values as points of equilibrium rather than fixed absolutes, the figure underscores the challenge libraries face: to adopt innovative technologies without compromising their foundational mission.

Figure 4. Balancing risks and benefits of AI adoption in academic libraries: mapping core values against regulatory implications. Source: Generated with artificial intelligence.

In this sense, libraries are not merely passive recipients of regulation but potential exemplars. By embedding fundamental rights into their AI governance models, they can model what responsible implementation looks like across the broader knowledge ecosystem. This positions the library not only as a site of compliance but as a custodian of rights in the algorithmic age.

Findings

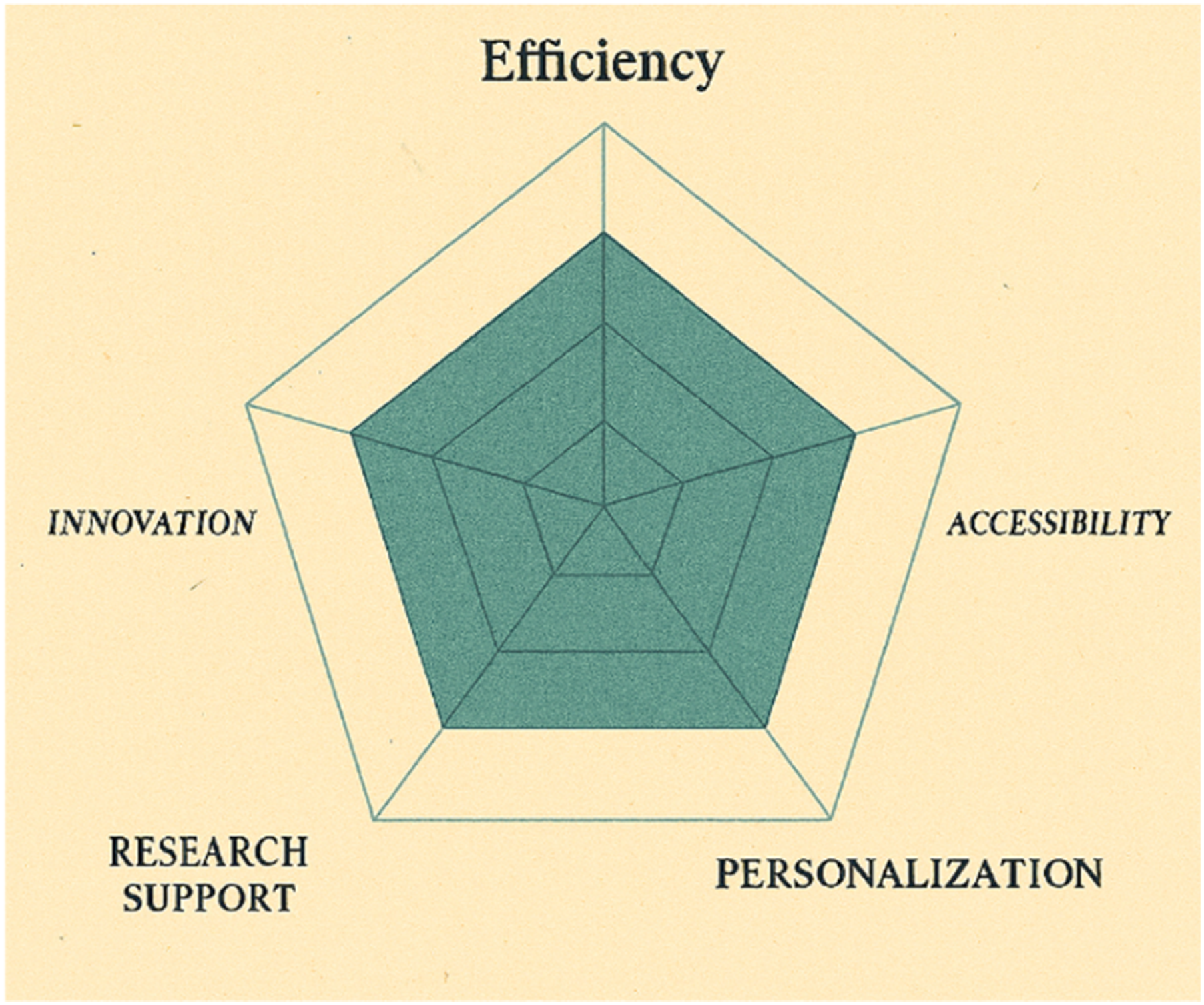

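This review yields five interlocking findings that clarify how the AI Act can be operationalized in academic library settings and where the current literature still falls short. As summarized in the abstract, these are:

I. Ethical principles frequently prevail over enforceable compliance mechanisms.
II. Library AI applications align conceptually with the AI Act’s taxonomy but lack practical operationalization.
III. Generative AI and large language models intensify regulatory ambiguity.
IV. AI procurement practices in libraries rarely incorporate AI Act safeguards.
V. The protection of fundamental rights, including equity, privacy, and intellectual freedom, requires measurable controls beyond transparency notices.

Figure 5. Benefit dimensions of AI applications in academic libraries. Source: Generated with artificial intelligence.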

Cross-cutting implications

Taken together, the findings position academic libraries not only as deployers of AI but as norm-setting institutions capable of demonstrating what AI-Act-aligned, rights-preserving implementation looks like in the European knowledge ecosystem.

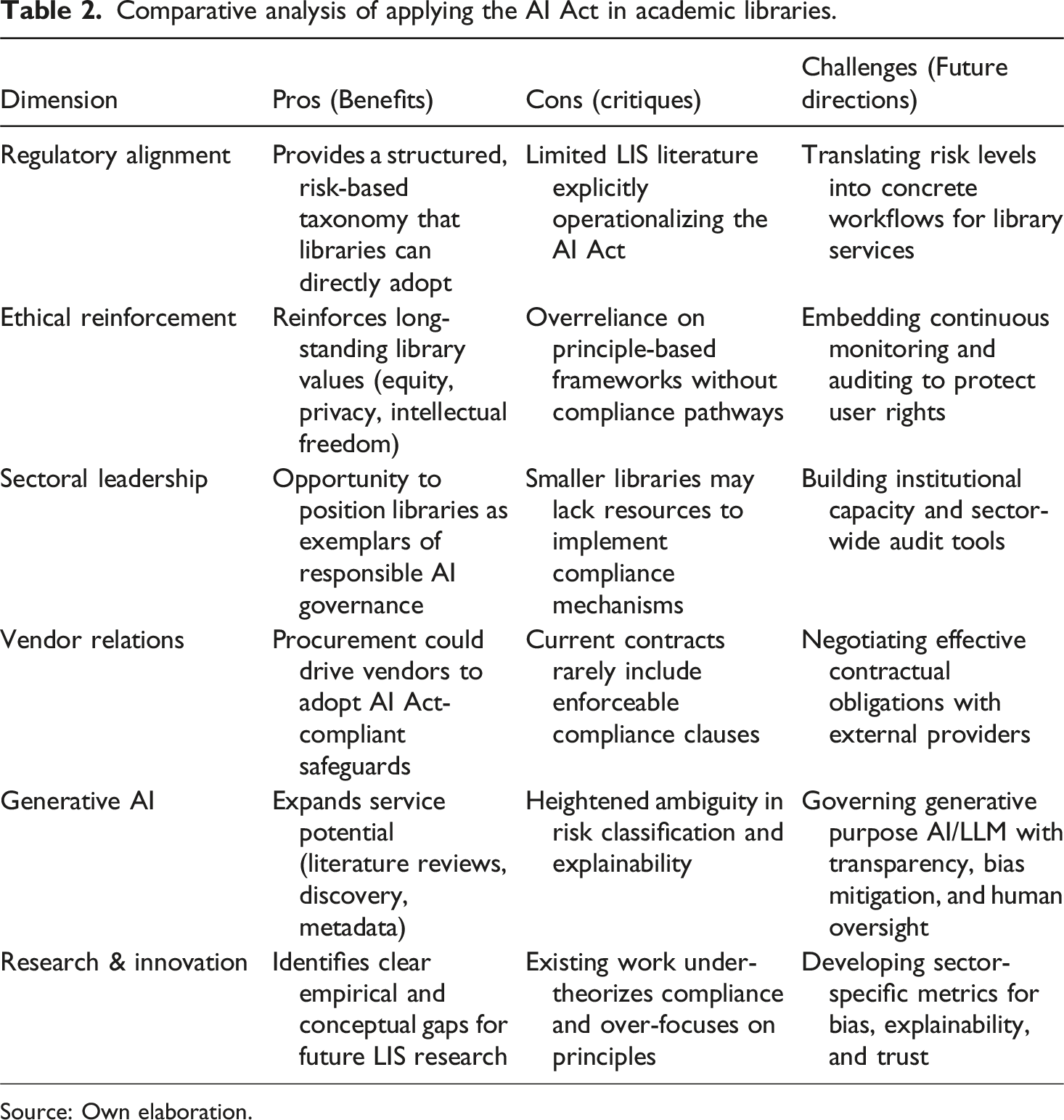

Discussion

The findings of this review highlight both the potential and the limitations of applying the EU AI Act to academic libraries. The Act offers a regulatory compass that can transform ethical aspirations into enforceable safeguards, yet the literature reveals significant gaps: compliance pathways remain underdeveloped, procurement contracts lack explicit safeguards, and libraries risk becoming passive adopters rather than active shapers of AI governance.

Comparative analysis of applying the AI Act in academic libraries.

Source: Own elaboration.

This comparative framing reinforces that libraries are at a crossroads: either they adopt AI uncritically, replicating patterns of bias and opacity, or they seize the AI Act as an opportunity to embed rights-preserving governance into their operations. The pros demonstrate that alignment is feasible and synergistic with core library values; the cons reveal a scholarly and operational lag in translating principles into practice; and the challenges highlight where sector-wide effort and empirical inquiry are most needed.

In short, academic libraries are not merely deployers of AI systems; they can become exemplars of responsible, AI-Act-aligned governance within the European knowledge ecosystem.

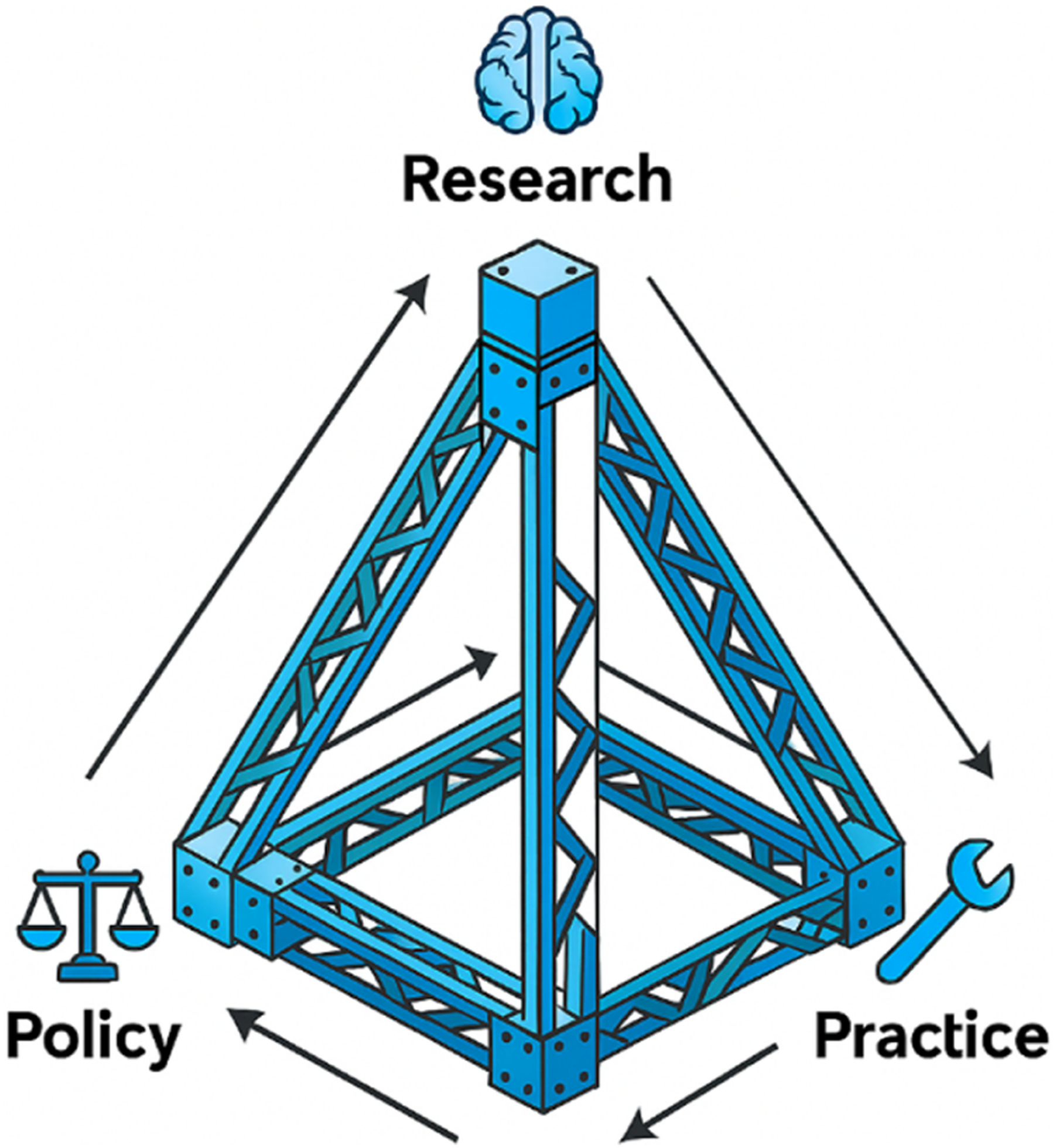

Building on these reflections, it becomes evident that aligning academic libraries with the EU AI Act requires a triangular interaction between research, policy, and practice. Research provides the conceptual and empirical evidence to ground risk-classification frameworks; policy translates these insights into enforceable governance structures; and practice ensures their day-to-day operationalization within library environments. To illustrate this interdependence, Figure 6 visualizes the tripartite scaffolding necessary for embedding responsible AI governance in libraries.

Figure 6. Triangular framework linking research, policy, and practice for AI-Act-aligned library governance. Source: Generated with artificial intelligence.

Conclusion

This study demonstrates that the EU AI Act offers academic libraries not just a legal framework but also a strategic opportunity to embed responsible AI practices aligned with their long-standing values of equity, intellectual freedom, and trust. By applying the Act’s risk taxonomy to concrete library use cases, ranging from chatbots and discovery systems to generative AI tools and biometric applications, it becomes evident that the regulation is both relevant and adaptable to the sector.

The analysis reveals a dual picture. On the one hand, the AI Act provides libraries with a clear pathway to move beyond principle-based ethics into enforceable, risk-proportionate governance. On the other hand, significant barriers remain: vendor dependency, limited compliance expertise, and the under-theorization of regulatory pathways within LIS scholarship. These tensions underscore the importance of libraries positioning themselves not as passive adopters of AI but as proactive institutions capable of shaping responsible deployment.

For practice, the findings suggest that libraries should develop sector-specific risk classification matrices, strengthen procurement processes with enforceable compliance clauses, and build internal capacity for auditing and monitoring AI systems. For policy, there is a need for tailored audit frameworks and contractual models that reflect both the AI Act’s requirements and the library sector’s mission. For research, three directions stand out: empirical validation of AI risk classification in library settings, metrics for explainability and bias mitigation in discovery systems, and studies on user trust, rights, and academic freedom under different governance models.

Ultimately, academic libraries occupy a unique position at the intersection of knowledge, rights, and technology. By operationalizing the AI Act in ways that safeguard fundamental rights while enabling innovation, libraries can become exemplars of responsible AI governance in the European knowledge ecosystem, demonstrating that regulation, far from being a constraint, can serve as an enabler of both trust and progress.

Footnotes

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.