Abstract

Artificial Intelligence (AI) is inseparable from human society, and the future of humankind is intertwined with the future of AI. This article is intended to explore the interconnection between the futures of humankind and ethical AI from the perspective of Jürgen Moltmann's theology of hope. It will focus on predictive AI, particularly predictive policing tools, which are designed to forecast potential crimes and harms through statistical analysis of personal data. It will be demonstrated that predictive policing presupposes that the future can be mathematically calculated out of the past and the present. This principle underlies various social and moral issues, such as racial discrimination and injustice, surrounding the deployment of predictive policing tools. Moltmann's theology of hope generates the concept of the hoped-for future and reconciliation ethics, which together advance the development of the ecosystem of AI-driven predictive policing and identify predictive policing tools as human planning participating in God's planning for the future of reconciled humanity. The theology of hope shows a path through which human planning leads to a future that is not absolutely determined by the past or the present but will be packed with the newness of human community.

Introduction

Artificial Intelligence (AI) has become inseparable from human society, and the future of humankind is intertwined with the future of AI. Multiple approaches have been proposed to delineate the futures of humans and AI. Some scholars suggest that AI can be incorporated into human communities as a companion, which means humans and AI will share the same global inhabitable environment. 2 Others, such as futurists, assert that AI will direct human evolution toward the Singularity, where humans will go beyond biological limitations under the auspices of intelligent machines. 3 Furthermore, many AI researchers and ethicists believe that humans and AI are and will remain interwoven and stress that the interweaving of humans and AI is bound up with the future of ethical AI. 4 The term ‘ethical AI’ does not imply that AI itself becomes a moral agent like human beings, nor does it suggest that AI can substitute for human moral agents in the future. Rather, it refers to AI technology that is morally deployed in human life and society.

Ethical AI is of particular importance for the future of humankind, for AI not only benefits human lives but also poses threats to the development of human society. In her most recent work, Shannon Vallor reminds us that AI ‘is the first technology that can place our future in jeopardy by preventing us from knowing how to make a future at all. AI is the first technology that can make us forget how to answer our own question.’ 5 This threat is entangled with the ethical and social issues surrounding AI applications. Hence, it is hardly an exaggeration to claim that the flourishing and future of humankind are inextricably linked to the development of ethical AI.

This article is intended to explore the interconnection between the futures of humankind and ethical AI from the theological perspective of hope. 6 Christian theology of hope is tied up with the future of humankind and attends to moral life, which orients humans towards the future that is hoped for. Michael Burdett argues that while technology brings forth various futuristic accounts of hope, Christian eschatology gives birth to a hope that is oriented towards the new and unforeseen future promised by God. 7 A few questions arise here: What is the future? What kind of future do humans expect? How do the futures of humankind and AI stimulate, enhance, or challenge each other?

To address these questions, I will focus on examining predictive AI, which refers to AI systems trained to predict certain outcomes (either benefits or harms) through statistical analysis of data. It has been extensively deployed to shape a better future for human life and society. For instance, it is used to forecast unemployment rates and to help policymakers plan future economic and financial growth. 8 In addition, Taiyu Zhu and his colleagues suggest that a dilated recurrent neural network can help to develop a glucose forecasting model, which can predict the levels of blood glucose and help patients to self-administer insulin. 9 Predictive AI exemplifies how AI is bound up with the future of humankind, showing the many benefits that AI technologies can bring to human lives. Given the limited space of this article, I will explore the deployment of predictive AI in police forces, that is, predictive policing tools, which are designed to forecast potential crime and harm through statistical analysis of personal data.

While engaging theologically with predictive AI, I will narrow the scope of this article to concentrate on Jürgen Moltmann's theology of hope, which yields a theological principle of future that helps us to reconfigure the role of predictive policing in human society. As Richard Bauckham notes, ‘most of all enabled theologians … think once more of eschatology as speaking of the real future of the world’ after the publication of Moltmann's

In this article, it will be demonstrated that AI-driven policing presupposes a future that can be mathematically

This article falls into three sections. First, I will delve into AI-driven predictive policing and relevant social and ethical issues, foregrounding the underlying principle of the predicted future. Second, I will spell out Moltmann's theology of the hoped-for future and his hope-oriented ethics. Finally, I will draw on Moltmann's theology of hope to develop a constructive framework for deploying predictive policing tools for a reconciled humanity and the future of human society.

A Future That Can Be Predicted

Prediction was central to policing long before the advent of AI. Forecasting crimes and harms is crucial for crime and justice research, as it can enhance decision-making in parole, policy applications, and the allocation of police forces. 11 Traditional policing tools used before the emergence of AI include, among others, crime mapping, descriptive statistics for illustrating crime trends and hotspots, and community policing that connects law enforcement with communities to reduce crime. Forecasting crimes and harms based on these traditional tools is retrospective, reactive, descriptive, and small-scale. AI revolutionises policing insofar as AI-driven predictive policing tools offer real-time and large-scale prediction of crime and harm based on autonomous decision-making. To borrow Sarah Brayne's words, AI brings forth the ‘shift from reaction to prediction’ in policing and law enforcement. 12 In this sense, it can be argued that predictive policing becomes possible in the age of AI.

Predictive AI has been warmly received by police departments worldwide. Alexander Babuta and Marion Oswald's research on predictive policing tools in England and Wales helpfully summarises three factors that catalyse the extensive deployment of predictive policing tools. 13 First, police forces have to deal with vast volumes of complex digital data, which are closely linked to human and societal safety. Second, growing demand on police forces, coupled with a shortage of officers, necessitates technology such as predictive policing tools to manage this demand efficiently and effectively. 14 Third, there is growing emphasis on the capacity of police forces to take pre-emptive measures to prevent potential harm and crime in real time. Furthermore, Alireza Daneshkhah and his colleagues suggest that preventative measures are of particular significance for police forces dealing with cybercrime, which is often very complex because it can be committed from anywhere in the world. 15 These three factors illustrate the benefits of predictive policing tools, illuminating an expectation for nurturing justice and human well-being on a large scale.

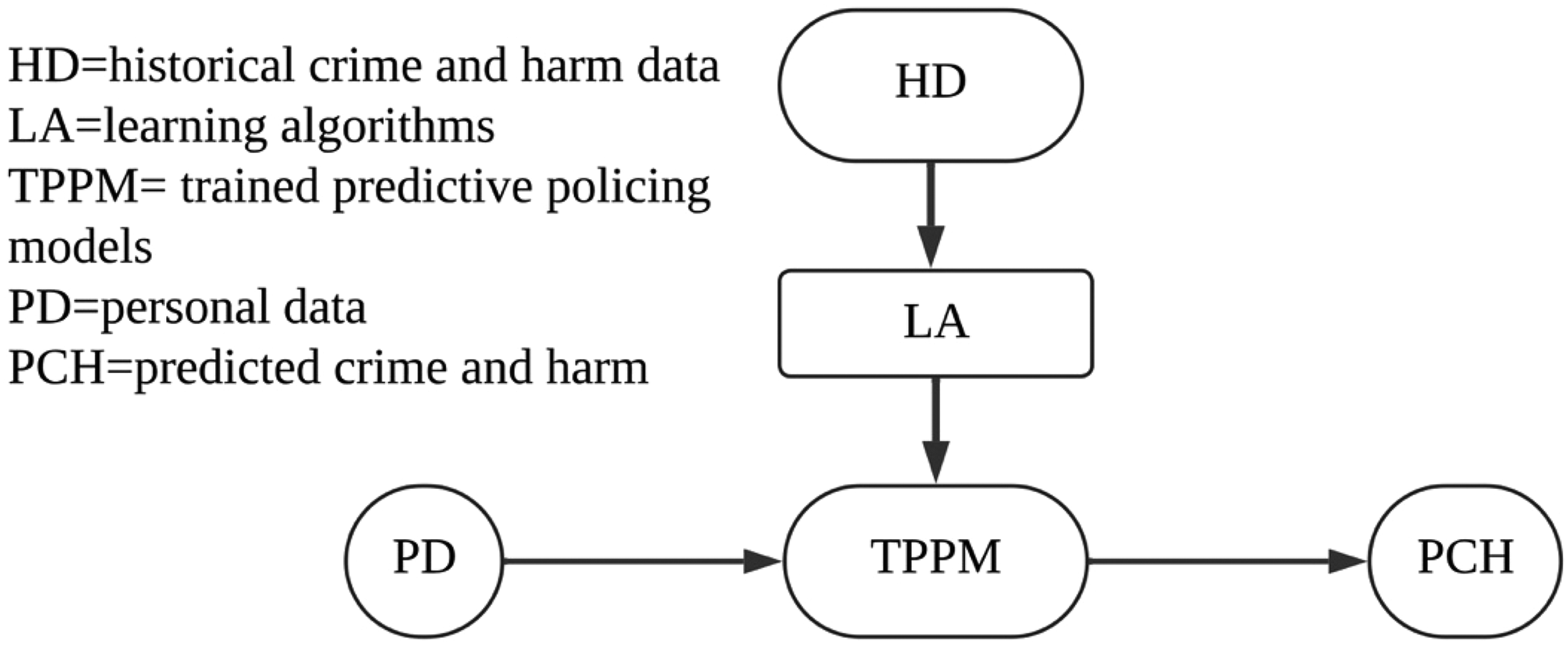

What kind of future do predictive policing tools bring forth? To address this question, we need to look at the

As Figure 1 shows, a predictive policing system is a model produced by learning algorithms that evaluate historical crime and harm data and adjust the parameters of a primordial model according to

Figure 1. Predictive policing systems.

That said, the benefits of predictive policing tools cannot offset or remedy associated social and ethical issues, which result from their algorithms and data. The trained model of predictive policing generated through algorithms is expected to predict crime and harm in reality. Yet, models are not identical to reality.

The model is not exactly isomorphic to the aspect of reality it represents. Rather it is made through a process of conscious selection from a slice of reality by using abstraction … The abstraction used in creating models means that they represent idealisations of reality. This does not, however, invalidate models. On the contrary, their validity, and thus their usefulness, rests precisely on their ability to abstract. 17

Accordingly, the trained models of predictive policing are justified in terms of their powerful abstraction rather than recapitulating reality. Algorithms cannot generate models that guarantee the accurate forecasting of crime and harm

The mispredictions of predictive policing tools give rise to social and ethical issues such as racial discrimination, unfairness, and privacy breaches. For example, on 5 February 2020, the District Court of The Hague banned the use of System Risk Indication in the Netherlands, the predictive policing tool the Dutch government had employed to identify individuals suspected of committing welfare fraud. The court ruled that this policing tool violated the right to privacy as protected under the

It is worth noting that behind ethical and social concerns about predictive policing tools lies a metaphysical principle. That is, the reality of the past, the present, and the future is, by its nature, mathematically connected, which means that the future can be

Any projection of historic crime patterns into the future assumes a degree of continuity. This is not necessarily unreasonable, but the appropriateness of this assumption depends on the context. It may be more appropriate for some types of crimes (e.g. burglary) than for others (e.g. kidnapping) and the degree of continuity can be affected by changes in policy as well as social and cultural changes in a particular community. 20

Granted that there is a degree of continuity between the past and the future, Moses and Chan point us to the role that contextual variables play in shaping the future. The continuity between the past and the future is dynamic and vibrant rather than mechanical or static. Indeed, predictive AI surpasses humans in detecting correlations between historical data and potential crimes and harms. Nevertheless, it is misleading to equate such correlations with causation or to argue that the reality of the past encapsulated in historical data is the very cause of the reality of the future. In this light, it is an overstatement to suggest that AI possesses a type of knowability not accessible to humans. 21 A permanent question about predictive policing tools is ‘how accurate those predictions are and how proportionate are the subsequent actions to the predicted offence’. 22 The future cannot be fully predicted, and the credibility and legitimacy of predictive policing tools rest partly, yet significantly, on how well contextual variables are incorporated to improve the accuracy of their predictions.
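The continuity assumption discussed above can be made concrete with a deliberately simple sketch. It is not the method of any actual policing tool: the monthly counts are invented for illustration, and the names `fit_line`, `extrapolate`, and `mean_abs_error` are hypothetical. The sketch fits a straight line to past counts and projects it forward, then compares the projection with two imagined futures, one in which the past trend continues and one in which a contextual change (say, a new policy or a shift in community life) breaks it.

```python
from statistics import mean


def fit_line(counts):
    """Least-squares line through the points (0, counts[0]), (1, counts[1]), ..."""
    xs = range(len(counts))
    x_bar, y_bar = mean(xs), mean(counts)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, counts)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    intercept = y_bar - slope * x_bar
    return slope, intercept


def extrapolate(counts, steps_ahead):
    """Project the fitted trend forward: the future as the 'extended present'."""
    slope, intercept = fit_line(counts)
    start = len(counts)
    return [intercept + slope * (start + k) for k in range(steps_ahead)]


def mean_abs_error(predicted, actual):
    """Average absolute gap between projected and observed counts."""
    return mean(abs(p - a) for p, a in zip(predicted, actual))


# Invented monthly incident counts, for illustration only.
history = [40, 42, 44, 46, 48, 50]
forecast = extrapolate(history, 3)

# Imagined future A: the past trend simply continues.
future_continuous = [52, 54, 56]
# Imagined future B: a contextual change breaks the trend.
future_shifted = [45, 38, 30]

error_continuous = mean_abs_error(forecast, future_continuous)
error_shifted = mean_abs_error(forecast, future_shifted)
print(forecast, error_continuous, error_shifted)
```

The projection is only as good as the assumption that the future continues the past. Once the context shifts, the same calculation that looked exact becomes a poor guide, which is the point Moses and Chan make about contextual variables.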

Upon closer examination of the presupposition of continuity between the past and the future, it comes to the fore that this metaphysical principle underlies the ethical and social issues surrounding predictive policing. This continuity is the mathematical operation of connecting present personal data to historical crime and harm data, largely leaving out the newness of human life derived from contextual variables. Yet, notwithstanding that predictive policing tools efficiently process data and, to a certain extent, forecast potential crimes and harms, it is an exaggeration to argue that predictive AI will necessarily serve the betterment of human life and society. The metaphysical principle of predictive policing should be critically examined so as to articulate a framework for addressing its ethical and social issues.

A Future That Is Hoped-For

Christian theology of hope presents an alternative account of the nature of the future, offering a critical response to the metaphysical principle of predictive policing. In what follows, I will flesh out how Jürgen Moltmann's theology of hope can be employed to reconstruct a metaphysical principle of the future for predictive policing tools. His theological articulation of the future and derived ethics of hope can furnish guiding principles for the ethical deployment of predictive policing tools.

Moltmann's theological account of the future is Christocentric: the nature of the future is theologically rooted in the event of Christ. He argues that the resurrection of the crucified Christ paves the way for the expectation of what will be given in ‘the risen and exalted Lord’. 23 This Christocentric account of the future is intertwined with God's promise.

The future of Christ which is to be expected can be stated only in promises which bring out and make clear in the form of foreshadowing and prefigurement what is hidden and prepared in him and his history … The knowledge of the future which is kindled by promise is therefore a knowledge in hope, is therefore prospective and anticipatory, but is therefore also provisional, fragmentary, open, straining beyond itself. 24

In Moltmann's position, hope is related to the meaning of the future, and our knowledge of the future is not fixed but open-ended precisely because it is Christ, rather than humans, who brings hope. Hence, Christocentric hope discloses the metaphysical deficiencies of predictive AI, providing a conceptual tool through which to address the social and ethical issues surrounding predictive policing tools. This point will be revisited in the next section.

Moltmann inquires into the meaning of hope in comparison with the concept of planning. He defines planning as ‘an anticipatory disposition for the future’, which can be manifested in different ways. 25

First, planning as an anticipatory disposition can bring the

Hope differs from planning, all the more so when considering the Christocentric nature of hope. Hope arises when anticipatory dispositions or planning becomes uncertain or when something

The divergence between hope and planning turns our attention to the difference Moltmann makes between two types of future: planned or calculable future and hoped-for future. We can talk about what is going to

Three points are of note in reference to this passage: (1) the radical distinction between calculable and hoped-for future, (2) shaping the future by developments, and (3) the link between planned and hoped-for future. As will be demonstrated, the three points can stimulate the critical assessment of predictive policing in the theological terms of the future.

First, the calculable future differs radically from the hoped-for future. Moltmann maintains that the calculable or planned future is The future in the sense of

The word ‘parousia’ points to the significant role that Moltmann's Christological understanding of the future has to play here. At the same time, the term ‘extrapolated’ features in Moltmann's concept of the future. Extrapolation means to ‘[give] a meaning to the descriptive function beyond the sphere of what is measurable and checkable’. 33 The extrapolated future is based on calculation, including ‘trend-analysis and computer-forecasting’. 34 It is calculated out of the ‘fixed and prescribed’ present, which means that the future is nothing other than the ‘extended present’ and is not truly the future. 35 By contrast, the hoped-for future is characterised by the otherness of the future, generating something new that does not arise out of the present but rather out of the impossible. 36

Theologically speaking, Moltmann designates this new thing as ‘

The second observation on Moltmann's distinction between the planned and the hoped-for future concerns the attention he pays to the connection between development and the future. He seems to correlate development with the planned or calculable future. However, Moltmann does not invalidate the development of human society and life. His purpose is to criticise development within the context of the modernity project and the optimism about the progress of technoscience. The modernity project drives humans to choose their own path toward the future and commit to the development of a better humanity. 38 Although many people have become disillusioned with the modernity project, technoscience continues to generate and sustain confidence in the development and progress of human society. 39 In this regard, Moltmann's remark on the development of human society and life coincides with the rationale behind predictive policing tools, that is, developing a better human society with predictive AI. That said, Moltmann makes it clear that Christian hope is never in conflict with the development of human life and society. Rather, Christian hope embraces the development of the world, ‘encouraging, rectifying and stimulating [development] by virtue of the infinite powers which reach beyond all finite purposes’. 40

In this light, hope does not turn down but orients development towards the hoped-for future. Given that development involves analysis, calculation, and planning, a question arises: How does planning contribute to the

The third observation on Moltmann's view of the calculated and the hoped-for future addresses this question. When opposing the attempt to identify the hoped-for future as something arising out of the past and present, Moltmann recognises the worth of planning, claiming that ‘[t]he human spirit of planning could then be understood as the image of the divine’. 41

Hence, planning

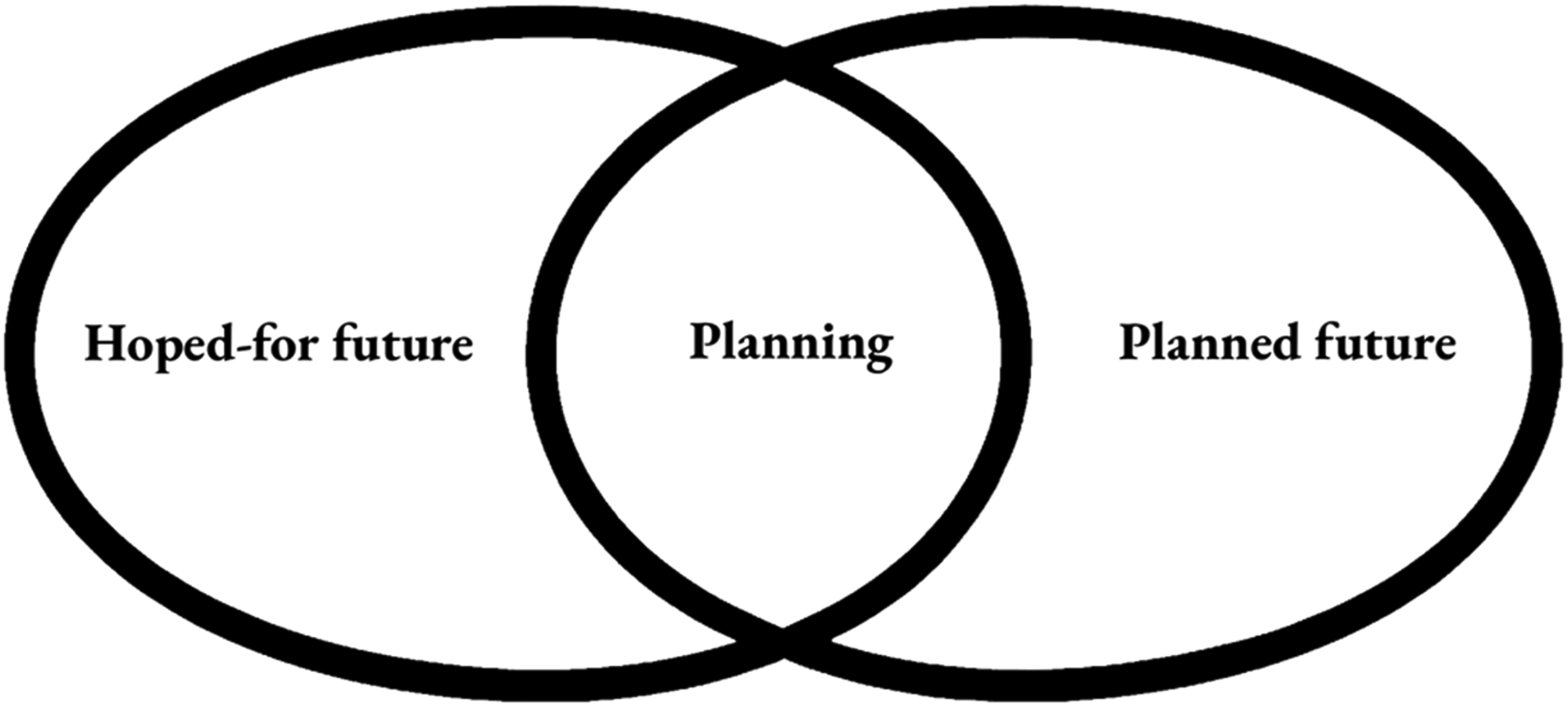

From the difference yet connection between planning and hope, it follows that planning can be oriented towards two distinct goals insofar as it is part of both the hoped-for and the planned future (see Figure 2).

Figure 2. Planning.

As noted earlier, planning can mean to anticipate the future that is expected to arise out of the past and present. Yet, planning operates differently for the hoped-for and the planned future. In the scenario of disconnecting planning from hope, planning serves the planned future that is purely calculated from the past and present. In the scenario of grounding planning in hope, planning does not simply bring historical possibilities into the real. More importantly still, hope ‘kindles itself on the Hope is that which gives us an attitude to the future, which

Moltmann emphasises the necessity of incorporating planning into the hoped-for future, for the hoped-for future ensures that human planning can allow for the promises of others and foster personal relationships, which together constitute the otherness of the future.

The three observations on the distinction between the planned and the hoped-for future are rooted in Moltmann's Christological account of hope. His explication of planning is also bound up with the theological account of God's promise in Christ. One may doubt whether Moltmann's view of the hoped-for future can present an effective approach to engaging with ordinary human planning, which often has nothing to do with the hoped-for future. In fact, Moltmann's theology of the hoped-for future lays a foundation for his ethics of hope, elucidating how the theology of hope offers an ethical tool to direct human planning towards the hoped-for future as God promises. In his position, the work of Christ reveals the interconnection between hope and reconciliation, which means that the hoped-for future must be characterised by reconciled humanity, that is, the fellowship between humans. 45 Moltmann adds further clarification on this reconciliation: ‘An ethics of hope sees the future in the light of Christ's resurrection … The liberation of the oppressed, the raising up of the humiliated, the healing of the sick and justice for the poor are familiar and practicable keywords.’ 46 Since the crucified Christ represents the poor, the humiliated, and the marginalised, the hoped-for future should bring forth both the reconciliation among humans and an ethics that embraces these neglected people. Moltmann deliberately links the ethics of hope with the progress of technoscience, highlighting the ethical issues related to technologically enhanced planning. In his position, technology enables those who have the power to carry out their plans more efficiently and impose their planning more comprehensively, especially on the poor and the marginalised. 47 Hence, the theology of the hoped-for future and the ethics of hope together disclose the necessity of embedding reconciliation into technoscience. In what follows, I will flesh out the importance of reconciliation for predictive policing tools as human planning for the hoped-for future, which is not simply determined by the past and the present but highlights the newness being brought forth in the future.

Predictive Policing as Planning for the Hoped-For Future

Having examined the notions of future shaped respectively by predictive policing and Moltmann's theology, it suffices to say that his theological account of the hoped-for future brings up critical challenges to the widespread deployment of predictive policing tools and their underlying metaphysical principle. Be that as it may, I will demonstrate that Moltmann's theology of hope provides an ethical framework for developing ethical AI for predictive policing.

The contrast between the hoped-for future and the predicted future is obvious. The future simply taking shape in predictive policing can be characterised as planned in the calculable sense. Relying upon historical crime and harm data, predictive AI extrapolates the future from the past through the present. Hence, predictive policing is considered the midwife who assists in delivering a just and better future. In this manner, AI is often over-trusted even when humans are unaware of the training data and algorithms, as occurred with the aforementioned predictive policing tool used by the Chicago Police Department. Moltmann's warning remains pertinent on this matter: ‘our society has lost sight of a future which is to be desired and hoped for lying beyond the future that is technically possible.’ 48 Over-trusting predictive policing as well as other AI systems will lead to an algorithm-calculated future and associated ethical and social issues. As such, the future of AI, including predictive AI, should incorporate the hoped-for future. But how?

Moltmann's theological justification of planning can serve as a conceptual framework for validating the deployment of predictive AI within police forces. Predictive policing can be viewed as a type of human planning, the purport of which is to explore, not finalise, the hoped-for future. 49 Theologically speaking, predictive policing as planning is a human effort to participate in God's planning for the flourishing of humankind, leading to the hoped-for future. It should be conceded that human planning often conflicts with, rather than aligns with, God's planning. Yet, Moltmann's correlation of reconciliation and hope gives birth to an ethical framework for deploying predictive policing tools to make and sustain a human community loved by God.

This ethical framework illustrates two aspects of the future of AI. First, it affirms the benefits of predictive policing tools as the consequence of human participation in God's planning for humans. The hype surrounding AI and AI's challenges to human society has brought about AI-phobia. However, it should be conceded that AI has been integrated into human communities and is playing a significant role in shaping the future of human society. 50

Furthermore, Eric Stoddart cogently demonstrates that Christian ethics of care and responsibility for others can help orient surveillance technology to be a tool

This mechanism should allow for the hope for the newness of human communities while developing predictive AI for policing. Predictive policing tools, like all AI-driven artefacts, are inclined to define human identity and what is meaningful to human persons, and thus have the potential to strip humans of the active and dynamic creation of their own identities. 53

By contrast, Moltmann's theological account of hope shows that the marginalised and the neglected can reshape their identities and are part of reconciled humanity. Such newness nurtures the growing diversity of communities. This theological insight implies that predictive policing should keep open to the contextual variables of the community, which sustain the persistent newness of the human community. For example, the shared values within a particular community underlie the communal well-being and orient it towards a future where

Many AI researchers submit that predictive AI systems ‘have very limited ability to predict how novel interventions might change the world they are interacting with, or how an environment might have evolved differently under different conditions’. 54 The mechanism of reconciliation ethics should confine each predictive AI system to a specific communal context and enable predictive policing to adapt itself to the newness of human communities. The hope for the newness of the community enriches the ‘Ethics Guidelines for Trustworthy AI’ published by the European Commission. According to the Guidelines, AI systems should ‘[rest] on a commitment to their use in the service of humanity and the common good, with the goal of improving human welfare and freedom’. 55

The concept of the hoped-for future adds ethical weight to AI's ‘use in the service of humanity’ from the theological vantage point. That is, the common good, human welfare and freedom are rooted in

To actualise this participation, predictive policing tools should operate as the planning for the hoped-for future of the moral community, where all individuals, including the marginalised and the neglected, participate

The knowledge, fundamental to Christianity, that the future of God has begun in the crucified Jesus, brings Christians into critical conflict with technocratic notions of development.

Accordingly, the hoped-for future does not conflict with the technologically driven development of human society. What is at stake during technological development is the place of the neglected, the oppressed, and the marginalised in technological society. This resonates with Shannon Vallor's warning about the future of AI: wealth and power will be substantially and unprecedentedly concentrated into ever smaller groups, especially those who develop and sell AI technologies. 57 In terms of predictive AI, predictive policing is likely to be abused to oppress certain groups of people, resulting in social discrimination and injustice. Building reconciliation into predictive AI as human planning should remedy the tendency to manipulate predictive policing for the ends of certain groups of people and mitigate the lack of awareness of immoral predicted outcomes or mispredictions.

How can reconciliation-based human planning be embedded within predictive policing systems? Laws and legal regulations are enacted to provide prescriptive rules for the operation of predictive policing, which can guide the design of predictive AI. It is beyond the scope of this article to go into comprehensive details on AI legislation. 58

Here I would like to explore how planning for the hoped-for future of the moral community can be

The term ‘explainable AI’ (XAI) was introduced into AI research in 2015 by the Defense Advanced Research Projects Agency of the US Department of Defense. XAI refers to an AI system that not only presents end users with decisions but also makes the rationale for its decisions and its decision-making process understandable. 59 XAI is designed to alert humans to the potential risks resulting from AI's mispredictions, which shows why the explainability of AI is necessary. The planning for the hoped-for future can be integrated into the explainability of AI so that predictive policing tools can ethically serve the future of humankind.

XAI methods vary, but they can be largely classified into two groups: intrinsic explainability and post hoc explainability. 60 Intrinsic explainability means that AI itself has the ability to explain its decisions and decision-making process to humans. In other words, AI is self-explainable. This type of XAI is often used at a less complex level, such as decision trees. Post hoc explainability refers to an AI system that explains the decisions and decision-making process of another AI system, which is typically less understandable and more complex. To this extent, post hoc XAI acts to open the black boxes of complex trained AI models. Given that predictive policing systems are not self-explainable and have the potential to produce mispredictions, post hoc XAI can be used to orient predictive policing toward the planning for the hoped-for future in two ways.

First, the post hoc XAI of counterfactual explanation can detect whether the deployment of predictive policing tools aligns with reconciliation ethics, operating as human planning that participates in God's planning for human flourishing. Counterfactual explanations are intended to answer what-if questions and produce the consequences of alternative scenarios. 61 After receiving the decision of an AI system, counterfactual explanation algorithms can arrive at a different decision by altering the input data. In terms of predictive policing, the post hoc XAI of counterfactual explanation can change the input data of targeted individuals to detect whether or not decisions are inherently biased against certain groups of people. By way of illustration, when a predictive policing tool judges that a Black man poses potential harm and should be arrested, police can use counterfactual explanation AI to check whether the decision would remain the same if the targeted individual were a white man. In like manner, post hoc XAI can explain the reason for making decisions and the process of decision-making. Following this, the counterfactual explanation algorithm can change the input data of targeted individuals based on real-time, spontaneous contextual variables, so that police can weigh up whether the decisions of predictive policing tools facilitate the actualisation of the hoped-for future of human society and pay due attention to the newness of human communities.
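The what-if check described in this paragraph can be sketched in a few lines. This is a toy illustration under stated assumptions, not a real predictive policing system or an existing XAI library: the `Record` fields, the two stand-in models, their weights and threshold, and the function `counterfactual_flags` are all hypothetical. The idea is simply to hold every input fixed except one, re-run the model, and report any alternative value that would have changed the decision.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Record:
    """Hypothetical input about one individual (illustrative fields only)."""
    prior_incidents: int
    neighbourhood_rate: float  # assumed local incident rate between 0 and 1
    group: str                 # protected attribute, e.g. "A" or "B"


def biased_model(r: Record) -> bool:
    """Stand-in black box that (wrongly) lets the protected attribute raise the score."""
    score = 0.2 * r.prior_incidents + r.neighbourhood_rate
    if r.group == "A":
        score += 0.3
    return score >= 1.0  # True means 'flag this person'


def neutral_model(r: Record) -> bool:
    """Stand-in black box that ignores the protected attribute."""
    return 0.2 * r.prior_incidents + r.neighbourhood_rate >= 1.0


def counterfactual_flags(model, record, attribute, alternatives):
    """Return the alternative values of `attribute` that would flip the decision."""
    original = model(record)
    return [
        alt
        for alt in alternatives
        if model(replace(record, **{attribute: alt})) != original
    ]


person = Record(prior_incidents=2, neighbourhood_rate=0.4, group="A")

# Would the decision differ if only the protected attribute were different?
bias_evidence = counterfactual_flags(biased_model, person, "group", ["B"])
no_bias_evidence = counterfactual_flags(neutral_model, person, "group", ["B"])

# The same probe can be run on a contextual variable rather than on identity.
context_effect = counterfactual_flags(biased_model, person, "neighbourhood_rate", [0.1])
print(bias_evidence, no_bias_evidence, context_effect)
```

A non-empty result for the protected attribute is a signal for human review rather than a verdict. The final decision, and the moral judgement about it, remains with the people who deploy the tool.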

Second, predictive policing tools can be embedded with reconciliation by combining the post hoc XAI with predictive AI models to watch over police and forecast their misconduct. It is taken for granted that predictive policing tools are extensively deployed to watch over the public. The public is always passively surveilled. The deployment of predictive policing tools reflects the asymmetry between police and the public. The ethical issues surrounding predictive policing tools largely stem from this asymmetric relationship. Predictive policing tools characterised by reconciliation ethics should, first of all, recast the relationship between police and the public and, thereby, create an environment for nurturing the newness of human communities. This reconciliation approach echoes and consolidates a recent approach that articulates AI ethics by building an AI ecosystem, which characterises AI as an integral whole composed of many elements such as engineers, users, data-providers, tech companies, policy, legal regulations, and the public. These elements are interlinked, and so the principles underlying AI ethics should permeate each element for human flourishing. 62

In terms of predictive policing tools, the ethical principle of reconciling the marginalised and the neglected into humanity should be part of each element of the ecosystem, rather than being confined to the relationship between police and the public. In other words, each element of this ecosystem should be involved in nurturing reconciliation among humans by developing the post hoc XAI and predictive models. For example, data-providers should gather raw data from both the public and police, ensuring that the data for training the predictive models of policing tools can be used to

Conclusion

AI has an impact on the formation of the future of humankind because the advice and decisions generated by AI systems such as predictive policing tools have already played a great part in the development of human society and, consequently, have raised associated ethical and social issues. The future of human life and society is closely tied to the ethical framework for the development and deployment of predictive policing tools as well as predictive AI at large.

I have demonstrated in this article that Moltmann's theology of hope is conducive to the construction of such an ethical framework for predictive policing tools. His theological notion of hope is embedded with a metaphysical principle of the future, which prevents us from over-trusting and enables us to reflect morally on the decisions generated by predictive policing tools in light of reconciliation ethics. The theology of hope can act as a conceptual tool through which to identify predictive policing as the human planning for the hoped-for future. Such planning

Hope-rooted ethics endows predictive policing with a moral principle for addressing associated social and ethical issues while recognising its benefits to human society. It is hope, rather than algorithms, that drives humans to desire an incalculable and uncontrollable future in which marginalised and neglected people can play their role in the flourishing of humankind. Although human planning does not always serve God's planning for humankind, hope-based reconciliation ethics can facilitate the growth of the ecosystem of AI-driven predictive policing. In this way, human agents remain decision-makers rather than handing over their autonomy and decision-making to AI. After all, it is human beings, rather than AI, who belong to the reconciled humanity and together hold the hope for the future.