Abstract

The purpose of this paper is to investigate data relating to the outcomes of enterprise and entrepreneurship education (EEE) activity in UK higher education institutions (HEIs). This is achieved via the use of data obtained from the Research Excellence Framework (REF), the Teaching Excellence and Student Outcomes Framework (TEF), the Knowledge Exchange Framework (KEF) and the Higher Education Business and Community Interaction (HE-BCI) survey. Overall, the analysis strongly suggests that EEE impacts research, teaching and knowledge exchange in a variety of ways. Firstly, it shows that EEE, in terms of the REF, may be up to 46 times more impactful than other management disciplines. Secondly, with regard to TEF submissions, it highlights a positive relationship between the use of the EEE terms and the award level achieved. Finally, the research also demonstrates a link between membership of certain HEI mission groups and improved KEF metrics when compared to the sector averages. There is a clear need to research how to develop successful EEE interventions and demonstrate their impact on the graduate, the university ecosystem and the wider economy. These data sources and methodology have not previously been used to develop a narrative for EEE across a university sector in the UK.

Introduction

Enterprise and Entrepreneurship Education (EEE) represents a range of intra- and extra-curricular activities which are focused on supporting the behaviours, attributes and competencies that are thought to have an impact on individuals’ future career prospects (Quality Assurance Agency (QAA), 2012; 2018). EEE often forms part of a broader employability approach to learning and development in an institution, albeit a distinct one, with a greater focus on deeper interventions to effect changes in the learner that will enable them to, for example, explore opportunities, investigate and address problems, provide value for others, lead, reflect and remain resilient. The aim is to produce graduates who are able to formulate ideas and act on them. Calls to develop EEE are well established (Lahikainen et al., 2018), and considerable work has already been done to integrate it into higher education institutions (HEIs) (Mulholland and Turner, 2019; Solomon, 2007). However, there is surprisingly little literature that explores the distinct impact of these activities (Nabi et al., 2017).

This is not a new problem: in 2007, Pittaway and Cope noted that evaluations of the outcomes of EEE were rare, and in the intervening period subsequent papers have echoed their observation (Bryne et al., 2014; Nabi et al., 2017; Rideout and Gray, 2013; Smith, 2015). A review of the literature by the authors, focusing on this work in the context of UK HEIs, concluded that these observations held, and that the limited research that did exist could be categorised into four broad groups. Interestingly, the authors noted that research in all these groups largely ignored the UK’s own Excellence Frameworks, which were created by the Government and HEI funding bodies to capture the status of research, teaching and knowledge exchange activities in UK HEIs (Johnson, 2022). Given that these Framework datasets explore a range of output metrics that might be relevant to an understanding of the outcomes of EEE, this appeared to be an important omission which could be addressed by further investigation.

This paper will, therefore, use the Excellence Framework data as lenses through which to explore the evidence presented in terms of the outcomes of EEE and its associated impacts.

EEE outcomes research

In preparing this paper the authors reviewed the academic (Kamovich and Foss, 2017; Nabi et al., 2017; Pittaway and Cope, 2007), stakeholder (Hannon, 2007; Rae et al., 2012) and practitioner and policy (APPG, 2018) literatures which explore the outcomes of EEE in UK HEIs. This exercise led to the identification of four broad groups of literature which presented perspectives on the outcomes (and the associated impacts) of EEE: cohort-based studies, cross-institutional studies, stakeholder reports and regional insight reports. In this section the authors will explore these works to clarify their positions, contributions and shortcomings.

The first group categorised by the authors included papers that explored enterprising and/or entrepreneurial outcomes through the application of single institution cohort studies. This research typically seeks to connect outcomes – such as business knowledge, attitudes required to run a business, entrepreneurial competencies and entrepreneurial intent – to specific activities that have been used in a programme or module cohort to measure their impact (Bozward and Rogers-Draycott, 2020; Curtis et al., 2020; Sánchez, 2013; Williams, 2011).

The second group included works that explored interventions across institutions. Generally, this was a more diverse literature whose authors sought to explore a range of EEE outcomes. Matlay and Carey (2007), for example, explored entrepreneurship education initiatives over a 10-year period in 40 UK HEIs; unusually, they focused on programme design, delivery and assessment and their impact on how the sector was understood externally. Kitagawa et al. (2015) investigated the differences in entrepreneurial intention for students from two UK HEIs in the same city in an attempt to connect EEE experience (in the institutions) to entrepreneurial economic activity and its impact on the wider city-region. Jones et al. (2017) compared the impact on careers for alumni of two UK universities and found that EEE had long-term positive value in terms of starting a business and career development. Topazly (2018) sought to understand whether EEE activity in three UK universities had a measurable impact on the post-study entrepreneurial activities of Russian graduates on their return to their home country, and what this might mean for the institution with regard to further interventions.

The third group consisted of research reports produced by stakeholder organisations that explored UK-wide approaches. These employed literature reviews, surveys and case study presentations to arrive at broader findings that reflected sectoral trends. The National Council for Graduate Entrepreneurship (NCGE) (Hannon, 2007; Rae et al., 2012) explored the development of the entrepreneurial university. Based on its survey of EEE and support activity in English institutions, this work reviewed a wide range of metrics including engagement, provision, support and funding, and the reports that followed used this to suggest institutional improvements and public policy reforms related to EEE. The National Association of University and College Entrepreneurs (NACUE) (NACUE, 2010; NACUE, 2014; NACUE, 2021) used surveys and case studies to investigate the role of student-led enterprise societies and their impact on students, institutions and the wider economy. Reports for the UK Government (Anderson et al., 2014; Williamson et al., 2013) have variously used case studies and literature reviews of the efficacy of EEE and its impacts on students, staff, institutions and the economy to argue for policy interventions.

The fourth group consisted of regional insight reports drawn from international surveys, such as the Global Entrepreneurship Monitor (GEM) (GEM, 2021) and the Global University Entrepreneurial Spirit Students’ Survey (GUESSS) (GUESSS, 2021; Saridakis et al., 2016). GEM uses surveys of entrepreneurs, non-entrepreneurs and expert panels combined with economic data to draw conclusions about the worldwide ecosystem for entrepreneurs. GEM’s national reports provide localised insights into the level of entrepreneurial activity in a country and they also compare national conditions to benchmark the country against others and explore its potential as an entrepreneurial economy. GEM has wide-ranging impact, central to which is its role in informing international policy, investment and collaboration. GUESSS confines itself to exploring each participating country’s university-level student population for their intentions and activities in an effort to better understand student entrepreneurship. GUESSS also provides national reports which allow for benchmarking. Its key impact is in helping institutions to better understand their students and the actions they might take to further improve intention and action.

Across the four groups of literature, we observe a diverse range of approaches and measures being applied. These range from a micro-level, exploring specific interventions and their impact on individuals, up to a macro perspective wherein publications have explored broader trends and their impact on economies. Herein, success, or failure, can be measured as anything from a change in mindset to a fluctuation in national GDP.

Furthermore, each group has its own unique challenges: cohort-based and cross-institutional studies tend to be focused on a particular intervention and/or short-term outcomes, which limits their value as general indicators of impact (Nabi et al., 2017); stakeholder reports can provide broader insights into the impact of EEE activities but they are limited by the campaigning positions that underpin their creation; and regional insight reports can provide useful perspectives as to the performance of an economy, or a group of individuals, but their ability to connect this to specific activities is limited, meaning that impacts are hard to identify.

Taken together, these critiques underscore the difficulty in exploring impact in an EEE context, which has previously been noted by Nabi et al. (2017). In short, there is a surfeit of impact measures using a range of different data to interpret varied outcomes, some of which may not even be suitable for the intended task. This makes comparison between approaches difficult and serves to obscure the true nature of impact in an EEE context.

Crucially, all four of these groups of research largely ignore the UK Excellence Framework data (Smith, 2015) and what it might add to the debate. When taken together, these critiques may explain the limited impact of this work on the development of EEE, and they present an opportunity to argue for novel approaches.

This paper will, therefore, seek to present an innovative approach by examining the under-utilised information captured by the UK’s Excellence Framework assessments of research, teaching and knowledge exchange in an effort to explore what it shows about EEE in UK HEIs and its impact on research, teaching and students’ entrepreneurial career choices.

UK excellence frameworks

In the UK, HEIs and their activities are governed in part by three Excellence Frameworks against which their research (REF), teaching (TEF) and knowledge exchange (KEF) contributions are judged. These cyclical reviews play a significant role in influencing how the institution is perceived, and how it is funded.

Research excellence framework

The REF is a periodic research impact evaluation for UK universities, which has been running in different formats since 1986 (Arnold, 2018; REF, 2021). The REF is conducted by a process of expert review which draws on subject-based panels of senior academics, international members and research users to assess submissions based on their quality, impact and the institutional environment they represent. The review itself is based on an institutional submission which includes selected research outputs, impact case studies and a narrative element that details the HEI and its research facilities, systems and processes.

The panel evaluates the institutional submission and grades it on a star basis (REF, 2021):

• Four-star: outstanding impacts in terms of their reach and significance.
• Three-star: very considerable impacts in terms of their reach and significance.
• Two-star: considerable impacts in terms of their reach and significance.
• One-star: recognised but modest impacts in terms of their reach and significance.

The star grading is used to help stakeholders understand how HEIs are meeting the UK funding councils’ policy aim to ‘secure the continuation of a world-class, dynamic, and responsive research base across the full academic spectrum within UK higher education’ (UKRI, 2020).

According to REF 2021 information (UKRI, 2021), the main use of the REF is to guide the allocation of about £2 billion per year of public funding for universities’ research. The data are also used by UK publishers as a metric in several league-table ranking guides, and will be a key performance indicator for any research-intensive institution.

Teaching excellence and student outcomes framework

The Teaching Excellence and Student Outcomes Framework, commonly known as the TEF, is a voluntary quality mark which measures teaching excellence in HEIs in an effort to help students select the best institution in which to undertake their studies. It was introduced in the Higher Education and Research Act in 2017 (DfE, 2017).

Institutions take part in the TEF to highlight the quality of their teaching as a point of differentiation. The TEF uses a Gold, Silver or Bronze rating to highlight the relative quality of provision in an HEI. It operates on a rolling 3-year submission cycle and, to date, 130 HEIs have undertaken the assessment with 26% achieving a Gold award, 50% Silver and 24% Bronze (Vivian et al., 2019). Like the REF, the TEF is assessed by an independent expert panel. In this instance, its membership includes students, academics and other teaching and learning experts. The panel assesses the institution by reviewing official data on a range of teaching-related metrics alongside a detailed written submission from the HEI (Office for Students (OfS), 2020).

As Gillard (2018) notes, much like the REF, the process of the TEF is not just data-driven and the narrative overview in the provider submission has a significant effect on the award bestowed. Research on the connection between EEE and the TEF is extremely limited; those papers that do exist have tended to focus on using TEF data as part of a broader conversation about the development of the entrepreneurial university, including the need to promote industry engagement in student programmes (Gray et al., 2020), an internal university culture that is embracing of change and flexibility (McKellar, 2020) and the development of lifelong learning both in and outside the curriculum (Morley and Jamil, 2021).

Knowledge exchange framework

The goal of the KEF is to establish the efficiency, effectiveness and benchmarking of knowledge exchange to businesses (Research England, 2021), with full participation in the KEF likely to be a condition of future Research England funding (Research England, 2020). However, unlike the REF and TEF, the operation of the KEF does not rely on an expert panel to reach conclusions. Instead, the KEF takes data from the annual Higher Education Business and Community Interaction (HE-BCI) survey, which is compiled by the Higher Education Statistics Agency (HESA), along with some additional data from Innovate UK and Elsevier, and case study narratives where appropriate. The data are put into a model, with the results presented in interactive dashboards alongside a narrative from the HEI, and these are made available on the KEF website (Research England, 2021) to be interpreted by HEIs and their stakeholders. The dashboard presents a rating against seven perspectives, with the perspective of Skills, Enterprise, and Entrepreneurship (SEE) being the most relevant for this paper (Research England, 2020). SEE is underpinned by three metrics: graduate start-up rate using student FTE, CPD-CE learner days delivered normalised by HEI income, and CPD-CE income normalised by HEI income. The majority of these ratings are based on data collected through the HE-BCI survey. There are challenges for institutions in collecting and reporting graduate start-up data, and data may well be incomplete (Smith, 2015); however, this remains the best proxy for evaluating, over an extended period of time, the entrepreneurship activity encouraged and supported by UK universities.

Research approach

The research draws on two approaches to interrogate the data: benchmarking methods and keyword analysis. Both of these have previously been used to research the impact of interventions in higher education (Epper, 1999; Jackson, 2001; Nazarko et al., 2009; Pojanapunya and Watson Todd, 2021). The paper applies these approaches across the three core data sets – the TEF 2017–2020, the REF from 2014 and the HE-BCI survey, which was used in conjunction with student population data to produce a proxy KEF metric for 2017/2018. These were the most recent accessible datasets for each Framework at the time the analysis was conducted.

In this paper benchmarking is used to compare quantitative data from each university for the HE-BCI submissions, while keyword analysis is used to analyse the research outputs and impact case studies from the REF and TEF submissions.

To arrive at our conclusions, each dataset had to be analysed independently using slightly different approaches to reflect the types of data. The following sections outline the specific approach in each instance and detail its application.

REF methodology

REF submissions are composed of both quantitative (such as number of full-time staff, income, etc.) and qualitative (research outputs and impact case studies) data. The analysis conducted in this paper focuses on the qualitative data: research outputs (identified in the REF 2014 database as REF2) and impact case studies (identified as REF3B), all of which can be found in the REF 2014 database (REF, 2014).

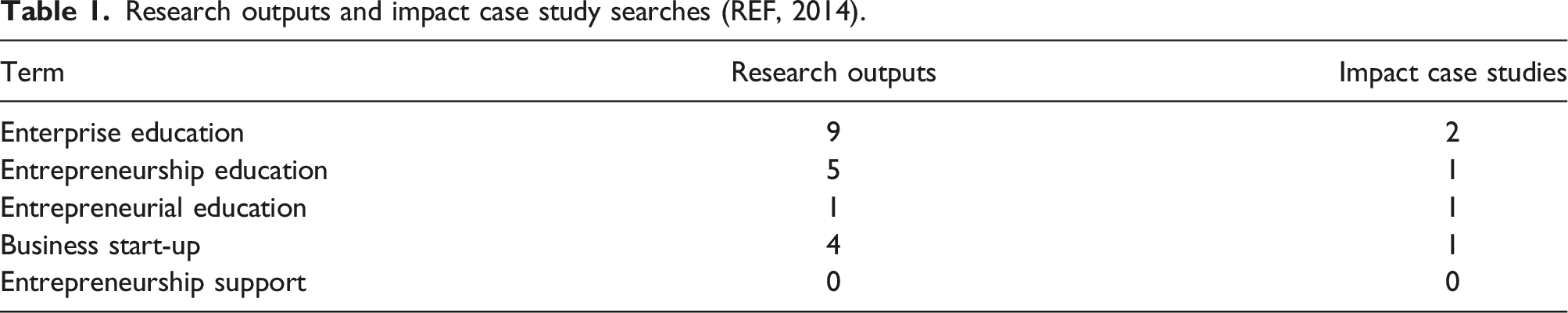

The first stage of this analysis involved interrogating data from across the REF 2014 portal (REF, 2014) for all units of assessment using the following five EEE-related terms:

1. enterprise education;
2. entrepreneurship education;
3. entrepreneurial education;
4. business start-up; and
5. entrepreneurship support.

The first three terms were taken from the Quality Assurance Agency (QAA, 2012 and 2018) guidance for enterprise and entrepreneurship education for UK HEIs. Items four and five were used to reflect entrepreneurial outcomes relevant to student and graduate start-up activity, with the search results reviewed to ensure that the outputs explicitly mentioned student and graduate businesses. The documents returned were then blind reviewed in depth by two of the co-authors to ensure that EEE was the focus of the document and not simply an unrelated statement. The search and review of the results produced 23 documents, four of which were impact case studies and 19 of which were research outputs. Finally, to understand the impact of each of the 23 documents, the REF 2014 quality profiles for each institution, showing how much of the submission had met a star rating within the unit of assessment, were linked to the documents to provide an indication of their quality.
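The first-pass term search described above can be illustrated with a minimal Python sketch. It is not the procedure used on the REF 2014 portal; it only shows how a case-insensitive search for the five terms would shortlist documents for the subsequent blind review. The function names and the sample records are hypothetical and were invented for illustration.

```python
import re

# The five EEE-related search terms listed in the text.
EEE_TERMS = [
    "enterprise education",
    "entrepreneurship education",
    "entrepreneurial education",
    "business start-up",
    "entrepreneurship support",
]


def matched_terms(text, terms=EEE_TERMS):
    """Return the search terms that occur in `text` (case-insensitive)."""
    # Collapse whitespace so a term split across a line break still matches.
    normalised = re.sub(r"\s+", " ", text.lower())
    return [term for term in terms if term in normalised]


def shortlist(documents, terms=EEE_TERMS):
    """Map document id -> matched terms, keeping only documents with a hit."""
    hits = {}
    for doc_id, text in documents.items():
        found = matched_terms(text, terms)
        if found:
            hits[doc_id] = found
    return hits


# Hypothetical records, invented purely for illustration.
sample = {
    "output-001": "A study of Entrepreneurship\nEducation in UK universities.",
    "output-002": "Supply chain resilience in manufacturing.",
    "case-003": "Graduate business start-up support and enterprise education.",
}
candidates = shortlist(sample)
```

A search of this kind only produces candidates: a document that mentions a term in passing would still be shortlisted, which is why the manual review step remains necessary.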

TEF methodology

As part of their TEF submission, HEIs again provide both quantitative and qualitative data. The quantitative data focus on a range of metrics which we could not relate to our EEE terms. This meant that we focused on the qualitative data and the accompanying results narratives (Office for Students (OfS), 2020).

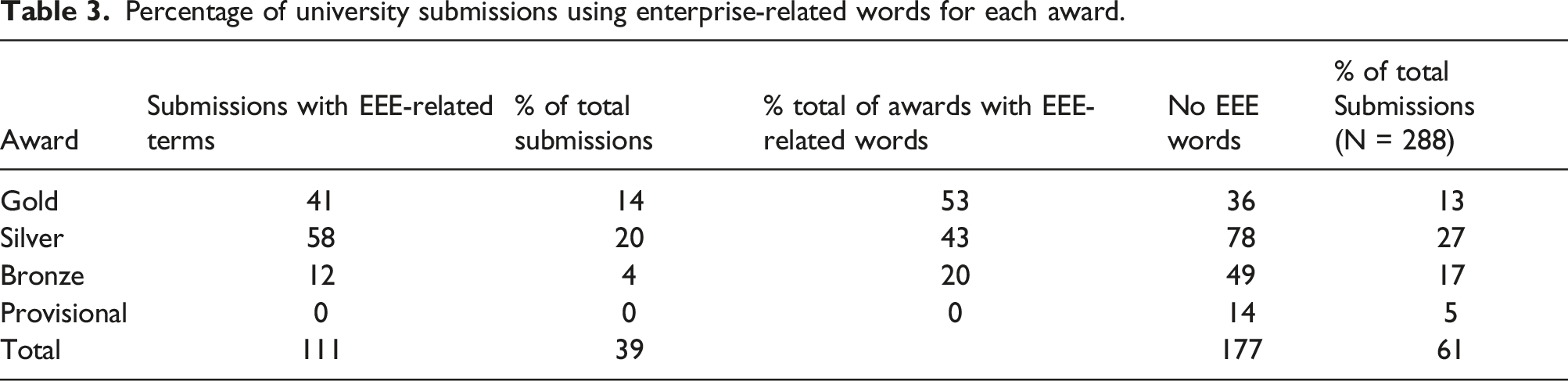

The 2017–20 data in this analysis consist of 525 TEF submissions from 399 providers of higher education, including further education colleges and other institutions. For this analysis we focused on the HEI-only TEF submission documents and results narratives, which numbered 111. These were selected to position these data on a similar footing to the REF and KEF analyses, which focused only on HEIs. The documents were downloaded and then imported to NVivo (Version 12) to establish a benchmark (Tasopoulou and Tsiotras, 2017). A text search query was conducted using the EEE search terms (1, 2, and 3 above), and this produced a list that identified the frequency of occurrence of the EEE terms in the submissions and/or results narratives. This gave an overview of the total number of documents that contained EEE-related words. The number of EEE words occurring in each award category was explored to understand the use of the terms and their relation to the award allocated. The authors recognise that this benchmarking approach lacks sophistication but, as the data will later show, it does indicate that there are clear relationships between the use of the EEE terms and the award made to the institution.
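The frequency count by award category can be sketched as follows. This is an illustrative stand-in written in Python, not the NVivo text search query itself; the function names, the output field names and the sample submissions are all assumptions made for the example.

```python
from collections import defaultdict

# Search terms 1 to 3 from the REF methodology section.
TEF_TERMS = [
    "enterprise education",
    "entrepreneurship education",
    "entrepreneurial education",
]


def count_mentions(text, terms=TEF_TERMS):
    """Count case-insensitive occurrences of the EEE terms in one document."""
    lowered = text.lower()
    return sum(lowered.count(term) for term in terms)


def summarise_by_award(submissions):
    """Aggregate documents, documents with a hit, and total mentions per award.

    `submissions` is an iterable of (award, text) pairs.
    """
    summary = defaultdict(lambda: {"documents": 0, "with_terms": 0, "mentions": 0})
    for award, text in submissions:
        mentions = count_mentions(text)
        row = summary[award]
        row["documents"] += 1
        row["with_terms"] += 1 if mentions > 0 else 0
        row["mentions"] += mentions
    return dict(summary)


# Hypothetical submissions, invented purely for illustration.
sample = [
    ("Gold", "Enterprise education is embedded; entrepreneurship education is open to all."),
    ("Gold", "Our teaching is research-led."),
    ("Silver", "We are piloting entrepreneurial education modules."),
    ("Bronze", "No relevant provision is described."),
]
summary = summarise_by_award(sample)
gold_share = 100.0 * summary["Gold"]["with_terms"] / summary["Gold"]["documents"]
```

Reporting both the share of documents with at least one mention and the total number of mentions per award keeps a single term-heavy submission from being mistaken for widespread use across an award category.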

KEF methodology

As noted previously, the KEF is based predominantly on the HE-BCI data which, along with its other component parts, are put into a model. The results of this process are then presented in interactive dashboards on the KEF website, alongside a narrative from the HEI. However, the dashboards themselves indicate only the relative strength of an institution against the cluster average for each element of analysis; they do not facilitate simple comparison or provide any deeper insights as to how a strength has been arrived at. This renders them highly problematic as a unit of analysis. Given that the central element of the KEF is the HE-BCI data, we knew from previous research that those data presented the best available proxy through which this activity could be understood (Smith, 2015). For this project we use the HE-BCI data for 2014–18 relating to university-supported student and graduate start-ups. The HE-BCI data for items 1-6 below were obtained from the Higher Education Statistics Agency (HESA, 2020a). In order to generate a proxy calculation for the KEF, student FTEs for 2018 were also obtained from HESA for item 7 (HESA, 2020b).

1. Number of graduate new start-ups created. These are defined as all new businesses started by recent graduates (within 2 years) regardless of where any intellectual property resides, but only where there has been formal business/enterprise support from the HE provider;
2. Number of graduate start-ups still active that have survived for at least 3 years;
3. Number of active firms: the number of new starts, plus the number of graduate start-ups that have been active for at least 3 years, plus those companies that have been active for between 1 and 3 years. This is a catch-all category that captures an overview of all registered graduate businesses from start-up to 3 years and beyond;
4. Estimated current employment (EFTE, full time employees);
5. Estimated current turnover (£000);
6. Estimated external investment (£000) from external partners but excluding investment from HEFCE (now the Office for Students)/the Department for Business, Innovation and Skills (now the Department for Business, Energy and Industrial Strategy) third stream funds;
7. Student FTE numbers.

It should be noted that HE-BCI statistics relate to both students and graduates up to 3 years from graduation (HESA, 2020c), and not just students, which might be inferred from the KEF’s use of student FTE. The total student FTE each year also includes non-EU international students who are not able to start up a business whilst a student due to visa restrictions, but may apply as graduates for a visa to start up a business. Further, the FTE year used by UKRI to calculate the KEF ratio relating to EEE is also not clear from the methodology information provided. The total student FTE (total studying at all levels and fee status) for the year of the HE-BCI return has therefore been used as a proxy here (i.e., the 2017/2018 HE-BCI return will be matched with the 2017/2018 total student FTE numbers).
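The proxy described above reduces to a simple ratio: the number of graduate new start-ups in an HE-BCI return divided by the total student FTE for the same year, expressed as a percentage. A minimal Python sketch follows. The function name and the institution figures are hypothetical, and the sketch does not reproduce the KEF model itself, whose FTE year is, as noted, not clear from the published methodology.

```python
def kef_proxy(new_startups, student_fte):
    """Graduate new start-ups as a percentage of total student FTE (same year)."""
    if student_fte <= 0:
        raise ValueError("student_fte must be positive")
    return 100.0 * new_startups / student_fte


# Hypothetical returns for one year, invented purely for illustration.
returns = {
    "Institution A": {"new_startups": 120, "student_fte": 24000},
    "Institution B": {"new_startups": 40, "student_fte": 800},
    "Institution C": {"new_startups": 15, "student_fte": 15000},
}

# Rank institutions from the highest proxy value to the lowest.
ranked = sorted(
    ((name, kef_proxy(**figures)) for name, figures in returns.items()),
    key=lambda pair: pair[1],
    reverse=True,
)
```

In this invented example the small Institution B ranks first on the proxy despite reporting a third of Institution A's raw start-up count, which is the kind of reordering that normalising by student FTE is intended to reveal.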

Findings

Research excellence framework review

Research outputs and impact case study searches (REF, 2014).

The development of a research impact case study starts with the collection of research outputs for the REF submission. From these the HEI selects a paper, or collection of papers, submitted to journals rated 2* or above, from which it will shape a case study that highlights the impact of its research. Impact in this context is defined as an effect on, or change or benefit to the economy, society, culture, public policy or services, health, the environment, or quality of life, beyond academia (UKRI Website).

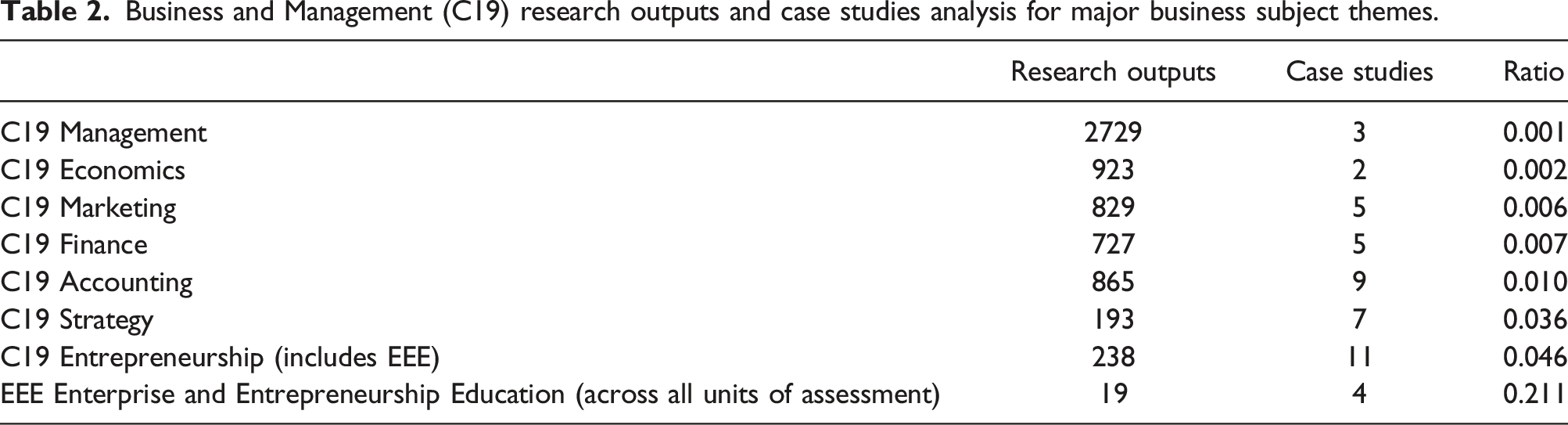

Business and Management (C19) research outputs and case studies analysis for major business subject themes.

Finally, although one cannot assess the quality of a solitary case study with the information publicly available, it is possible to infer quality and impact using the star rating awarded for the institution in this unit of assessment. To do this the rating for each of the four institutions that submitted EEE case studies was explored. The overall impact for two of them to the C19 unit of assessment was rated at least 3* (very considerable or outstanding), with a further institution’s impact rated at least 2* (considerable, very considerable, or outstanding). An overall impact rating is not provided for the fourth institution submitting an EEE-related case study as only one case study was submitted to the unit of assessment and REF 2014 does not provide a rating in this situation. Overall, the high level of impact ratings suggests that, although not commonly submitted to assessment, EEE research is impactful, relevant and can contribute well to the REF-related funding that institutions receive as a result of the exercise.

Teaching excellence framework review

Percentage of university submissions using enterprise-related words for each award.

A review of a sample of submissions suggested that the use of these words ranged from the extremely broad and general (e.g., a mention of being an entrepreneurial university) through to detailed descriptions of particular interventions or activities.

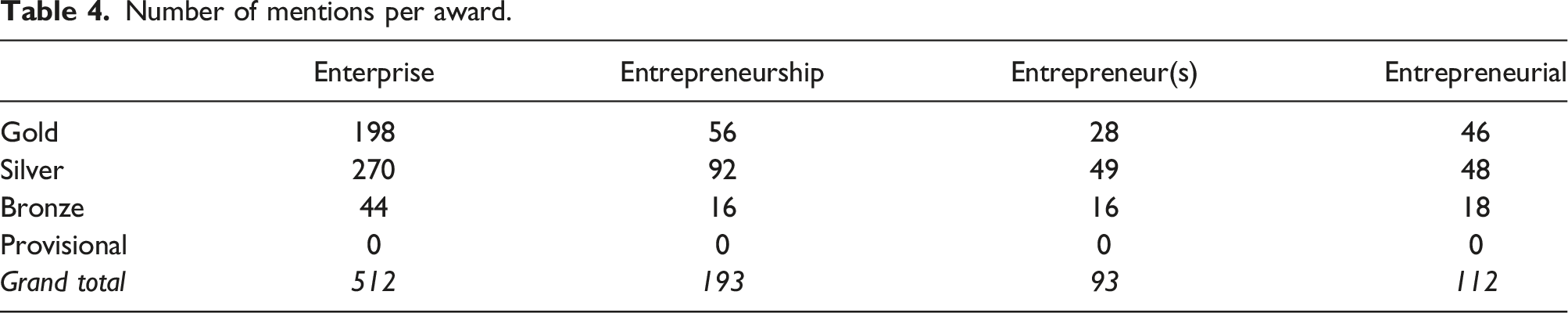

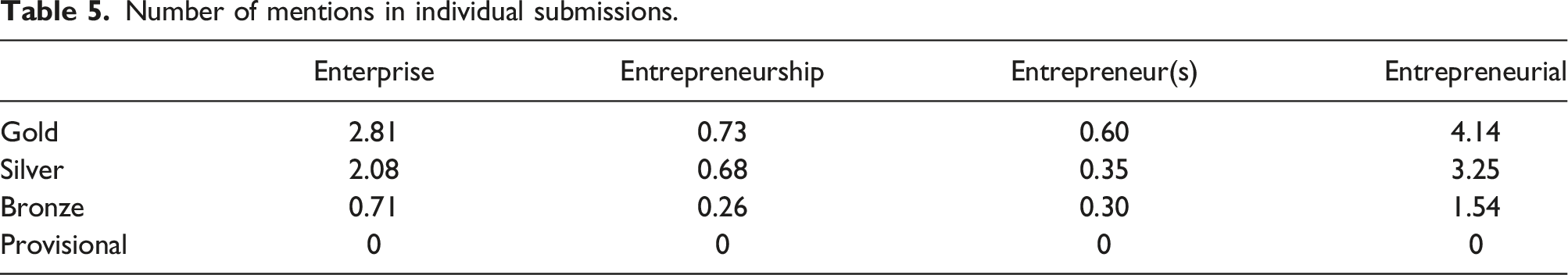

Number of mentions per award.

Number of mentions in individual submissions.

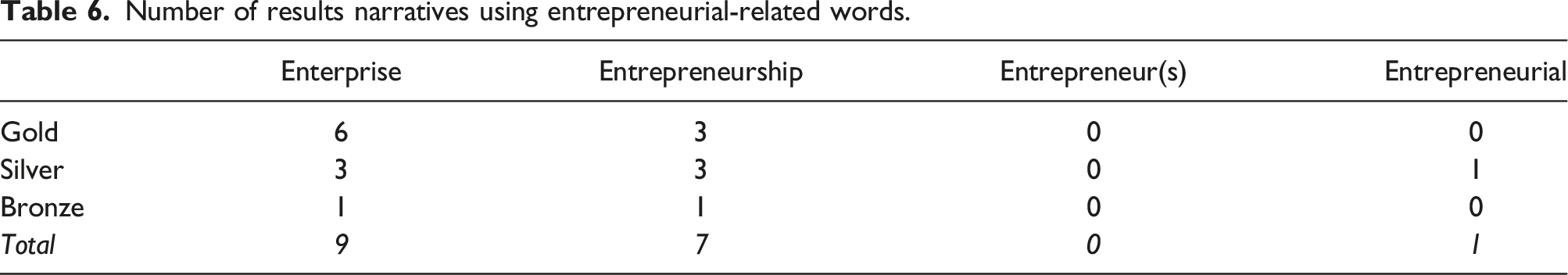

Number of results narratives using entrepreneurial-related words.

Initial analysis suggests that the use of the words in results narratives reflects the detail and ‘embeddedness’ (Beech, 2017) of the activity described in the university submissions. Mention of enterprise-related activity appears to be made by reviewers only where there is robust evidence that this is a sustained and strategic part of the university’s educational activity. This provides indicative evidence for the hypothesis that active engagement and explicit articulation of enterprise-related work have an impact in terms of the level of TEF award gained.

Knowledge exchange framework review

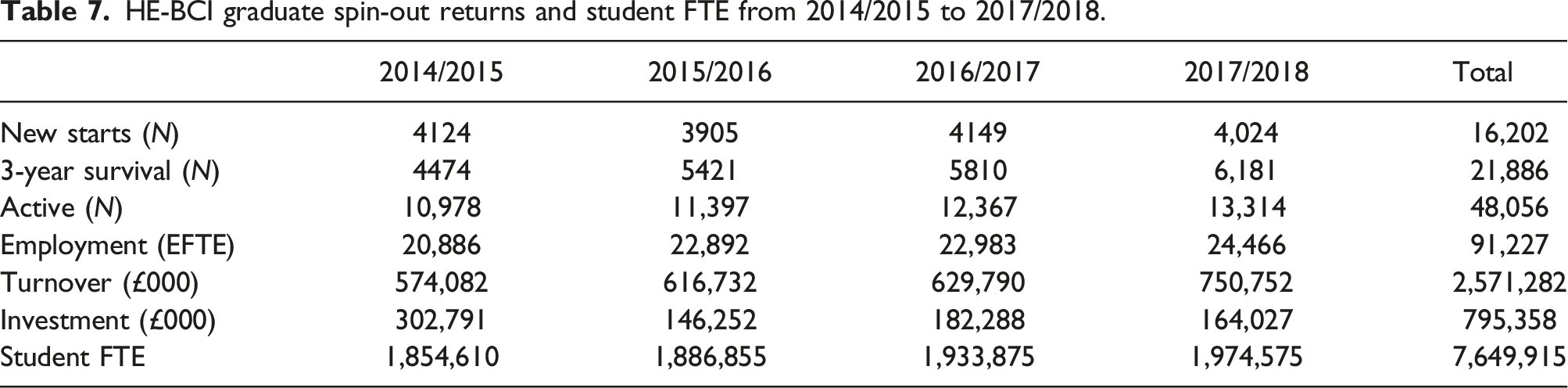

HE-BCI graduate spin-out returns and student FTE from 2014/2015 to 2017/2018.

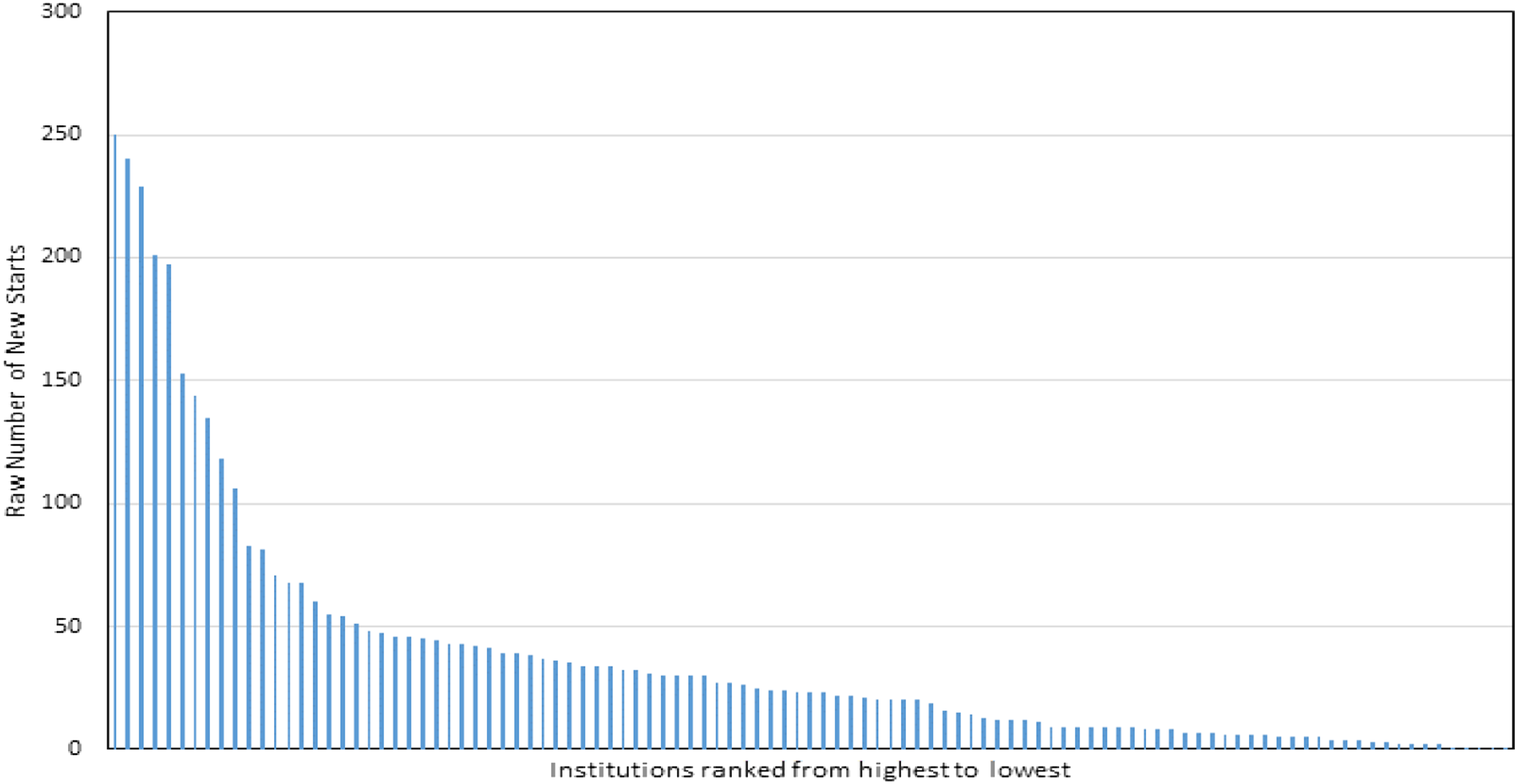

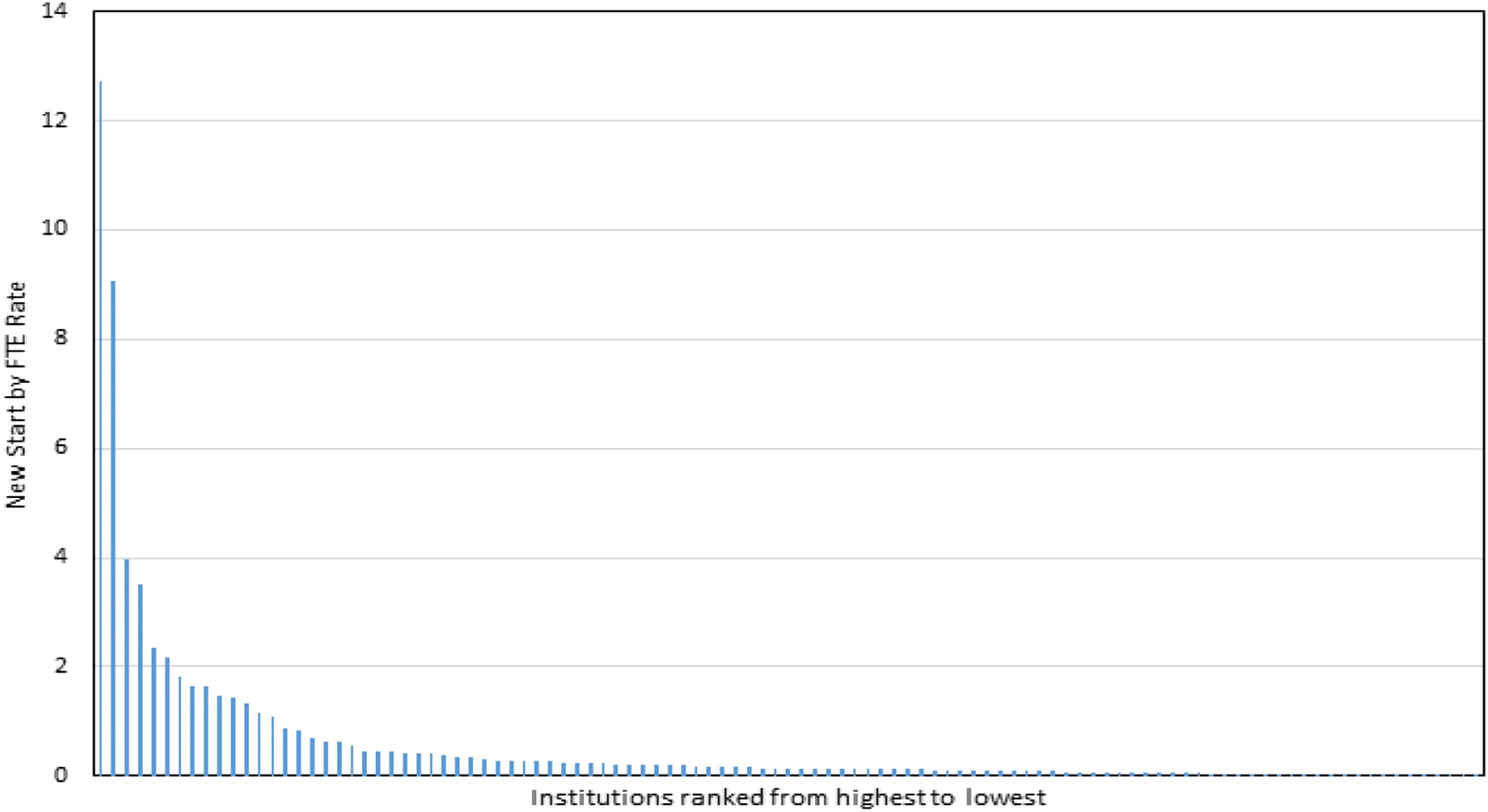

Figure 1 presents data relating to the numbers of new-start businesses reported by each of the 108 institutions in 2017–18, ranked from the highest number of returns to the lowest. This shows that a relatively small number of institutions were responsible for the majority of the returns, with 10 institutions reporting between 100 and 250 new-starts each, nine institutions returning between 50 and 100 new starts, and the remaining 86 institutions reporting fewer than 50 new starts each. Three of the nine highest ranking institutions were specialist art, music, dance or drama institutions.

Number of start-ups reported in 2017/2018 by 108 institutions, ranked from the highest number of returns to the lowest.

Specialist universities typically have smaller student numbers and it is therefore important to understand the new start-ups per number of students in each institution (Figure 2), as used by the KEF. Figure 2 shows that the highest-ranking institution returned a number of new starts to HE-BCI that equated to 12.7% of its total student FTE population in 2017/2018. Only six institutions (including the highest ranking) had a KEF proxy figure of over 2% of their total student FTE; 85 institutions reported less than 0.5%. Six of the 10 highest ranking institutions were specialist art, music, dance, or drama institutions when the KEF proxy is used, compared with three when the raw HE-BCI new start numbers are used.

Percentage number of new starts by total FTE reported in 2017/2018 by 108 institutions, ranked from the highest percentage of returns to the lowest.

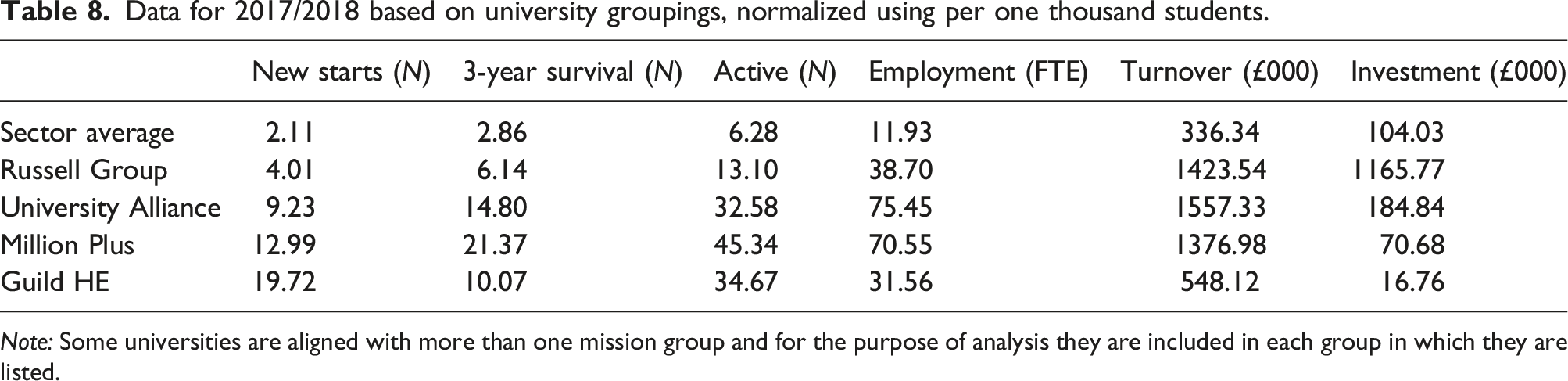

Data for 2017/2018 based on university groupings, normalised per one thousand students.

Note: Some universities are aligned with more than one mission group and for the purpose of analysis they are included in each group in which they are listed.
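The per-thousand-student normalisation, with institutions counted in every mission group to which they belong, can be sketched in Python as below. Two points are assumptions rather than details stated in the paper: the sketch pools totals within each group before dividing (an average of institution-level rates would give different figures), and the group names, field names and values are hypothetical.

```python
from collections import defaultdict


def per_thousand_by_group(institutions, metric):
    """Return `metric` per 1,000 student FTE for each mission group.

    An institution listed in several groups contributes to each of them.
    Assumption: group totals are pooled before dividing by pooled FTE.
    """
    totals = defaultdict(lambda: [0.0, 0.0])  # group -> [metric sum, FTE sum]
    for inst in institutions:
        for group in inst["groups"]:
            totals[group][0] += inst[metric]
            totals[group][1] += inst["student_fte"]
    return {
        group: 1000.0 * metric_sum / fte_sum
        for group, (metric_sum, fte_sum) in totals.items()
        if fte_sum > 0
    }


# Hypothetical institutions and groups, invented purely for illustration.
sample = [
    {"name": "X", "groups": ["Group 1"], "new_startups": 50, "student_fte": 20000},
    {"name": "Y", "groups": ["Group 1", "Group 2"], "new_startups": 30, "student_fte": 10000},
    {"name": "Z", "groups": ["Group 2"], "new_startups": 20, "student_fte": 2500},
]
rates = per_thousand_by_group(sample, "new_startups")
```

Because institution Y is counted in both groups, group figures computed this way cannot be summed to a sector total without double counting.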

The Russell Group (2021), consisting of 24 UK research-intensive universities, has the lowest figures for 3-year survival and active businesses. These universities are fundamental to the knowledge economy and therefore the business start-ups from these universities should be high-growth knowledge-based businesses.

The University Alliance (2021) consists of predominantly professional and technical universities. Its mission is to drive local growth through research, teaching and enterprising activity. This group of 12 institutions does well for employment and turnover, with around 15% less investment than that received by Russell Group students.

The Million Plus Group (2021) represents the 23 ‘new’ or ‘modern’ institutions across the UK established after 1992. Many of its members are former colleges or polytechnics. Members of this group achieve good results on 3-year survival and active businesses, with 6% less investment than that received by Russell Group students.

The Guild HE (2021) group consists of the smaller specialist institutions based on art, design, teacher training, agriculture, music and drama. This group has high start-up rates and fairly high numbers of active businesses. That said, it has the lowest figures for employment, turnover and investment, which may be reflective of the applied nature of the businesses that graduates create.

These university groupings based on interest groups allow us to explore the narrative within the UK HEI Excellence Framework data. It is also clear that these groups could be used to address policy issues, but a discussion of this potential is beyond the scope of this paper.

Discussion

Our analysis builds on over a decade of conversation which has explored the challenges of measuring impacts in an EEE context, and called for more research attention to be given to the topic to support its development. Having reviewed this work, we believe that the lack of progress in advancing the impact agenda is the result of three interlinked factors:

• the fluid perceptions of enterprise, entrepreneurship and employability as topics;
• the challenge of arriving at a consensus on which outcomes and impacts to measure; and
• the difficulty in identifying when to measure impacts.

Firstly, although there is broad agreement as to the nature of EEE within the domain community, beyond these boundaries the various edges of the disciplines can appear blurry. Furthermore, the range of practices applied in EEE and the various manners in which these are discussed can make EEE even less distinctive. We feel that the broad nature of EEE means that it can be conflated with other institutional agendas, such as employability, making its particular impact on learning and development harder to unpick, and therefore more difficult to measure.

Secondly, the intention of EEE practice is to effect change in the individual or group. However, EEE interventions can take many forms, with the goal of affecting a variety of competencies, attitudes and behaviours. This means that the potential impacts that could be measured are diverse in scope and scale. Educators and practitioners, often with broad EEE briefs, may lack the luxury of setting overly constrained boundaries in an effort to promote inclusivity, encourage engagement, achieve institutional targets and further their own activities. We believe that this situation leads to a scenario in which impact measures are highly dependent on cohort size and the scope of the intervention; smaller cohorts can be assessed in more depth, while larger groups tend to be explored using more binary metrics.

It is also worth considering whether this focus on EEE effecting change in people has created an unintended bias in the assessment of EEE impacts. Across all the reviews we explored, we found no evidence that EEE impacts on teaching, learning, assessment or research had been reviewed in any meaningful detail.

Finally, EEE might affect a student today, tomorrow or several years later, and might do so in a variety of ways. This means that deciding on a timescale over which to measure any impact is inherently difficult. Furthermore, linking any impact, especially over longer periods, to any particular intervention(s), while excluding other external stimuli, is challenging. We conclude that this is why the reviews contain few national longitudinal studies, a gap that is frequently noted as a weakness in the understanding of EEE impacts.

Our work takes a different approach to addressing the issue of EEE impact measures. We have chosen to focus on the three UK excellence frameworks (REF, KEF and TEF) to explore what these previously unreviewed datasets can tell us.

The UK has a unique higher education ecosystem insofar as education policy is, on the one hand, devolved to a national level in terms of structure, regulation and funding (e.g., the Office for Students in England), whilst on the other hand remaining centralised in the context of data collection and assessment (e.g., the Higher Education Statistics Agency). This has led to different approaches to the way data are collected for the REF, KEF and TEF, with some nations and their HEIs not participating fully, especially if the data are not tied directly to funding (e.g., the TEF and Scottish HEIs). However, these datasets still provide one of the most complete national pictures, allowing for a holistic view of higher education performance. It is therefore surprising that they have been largely ignored (with the exception of some critiques of their management and execution) as tools from which useful insights can be gained.

UK HEIs are also quite tribal: as we can see from the data, mission groups are important to understanding the UK higher education landscape, especially with regard to self-employment and venturing activity. The fact that a relatively small number of HEIs are responsible for the majority of graduate start-ups speaks to the features of the universities in these groups. Some with higher levels of activity may be more specialised, with programmes that are highly focused on self-employment or venture creation based on the careers they are preparing students to engage with. Others may be less research-focused, more vocational, or may attract students for whom self-employment is either their only option or part of a portfolio of post-education activity. It may also be that, for some, reporting on EEE activity is simply less important than other metrics. It is certainly a compelling area for further study.

This study is an exploration of the manner in which REF, TEF and KEF interact and what we, as researchers, can learn from this interaction. In an EEE context, our analysis suggests that EEE activity is positively associated with an institution’s TEF award, and that research work focusing on EEE is, arguably, more impactful than that of other management disciplines. EEE interventions also feed directly into KEF metrics through the creation of graduate enterprises.

Although there are no direct linkages between the datasets, there are several important inferences that we can draw. Firstly, the REF and TEF data suggest that impactful research activity likely informs impactful teaching, although they also indicate that the opposite may be true (i.e., quality teaching may inform quality research). There is certainly scope to research this in more depth. Secondly, it is clear from our review of the TEF narratives that EEE activity that supports the KEF likely also leads to improved TEF outcomes. It is impossible to say whether the reverse is true, although again there is scope here for further research to explore whether excellent teaching and learning impacts the KEF. Finally, we know that there is a relationship between the KEF and the REF insofar as KEF activity may lead to research that can form part of the REF submission. What is less apparent is the manner in which REF activity might affect KEF outcomes (research leading to spin-outs, for example), and this may be worth studying in more depth, especially as the KEF will likely be linked to institutional funding in the future.

The literature review conducted for this paper demonstrates that these publicly available data are under-utilised within the higher education research community, especially in relation to the development of education management and UK/national higher education policy. Also, as the REF, KEF and TEF cycles repeat, there will be a longitudinal dataset which can be used to understand the impact of university-level management on the local population, regional GDP and business community.

In the context of EEE, this work could be expanded to create a national benchmark to explore the development of the entrepreneurial university in practice. This would provide an open and transparent methodology for comparing universities in the UK in terms of their EEE activity and its impact. The Excellence Frameworks could also be used to investigate the clustering of universities with a view to creating improved strategic alliances in, or between, mission groups to support regional development.

Conclusions

This paper has reviewed the under-utilised data captured by the UK’s Excellence Framework assessments of research, teaching and knowledge exchange in an effort to ascertain what they might help us to understand about the impact of EEE in UK HEIs.

The REF 2014 results show that EEE may be up to 46 times more impactful than other management disciplines. This implies that, given the link between REF impact and institutional income, more HEIs should focus efforts on developing EEE research activities. An updated review of this conclusion using data drawn from REF 2021 would be an interesting area for research exploration.

The positive relationship between the use of the EEE terms in TEF submissions and the award level achieved by the HEI suggests that EEE activity may play a role in improving the TEF rating of the institution. This analysis should be conducted again, once HEIs have submitted their second application, to understand the strategic differences in these TEF submissions and whether the trends identified herein are repeated.

The interpretation of the HE-BCI data as a KEF proxy is more complex because of the range of outputs it addresses. Firstly, we observe that the rate of graduate start-ups has remained relatively flat over the 4-year academic period which was the focus of this analysis. This suggests that any positive or negative changes in EEE activity in HEIs (measurement of which was beyond the scope of this analysis) appear to have had a limited effect on start-ups. Secondly, the analysis shows that a relatively small number of HEIs are responsible for the majority of graduate start-ups. Identification of the reasons behind this requires more research focus to facilitate the sharing of best practice across the sector. Furthermore, the data imply that many of these institutions are small specialist universities, meaning that a review of these institutions is necessary to better understand their function and contribution to the UK economy. Finally, there appears to be a link between membership of an HEI mission group and improved KEF metrics when compared to the sector averages. Further work is also needed to better understand this relationship and the factors underpinning these data.

Taken together, this analysis suggests, powerfully, that EEE impacts research, teaching and knowledge exchange quality in a variety of ways. However, it also shows that the reasons for this are still poorly understood at a micro-level, and that further research activity is necessary to develop a more nuanced understanding of best practice which could lead to improvements across the sector in the UK and beyond.

The combination of these three datasets as presented in this paper offers a unique insight into EEE activity in the UK and its impacts. This has never before been explored in the literature. We offer it here in the hope that it prompts new discussion as to how impact measures can be conceptualised, especially with regard to these national framework datasets. This might help to address some of the critiques noted in the literature review.

In addition to the points above, the connections between REF, TEF and KEF submissions should be further analysed to evaluate the relationships for EEE between research, teaching and knowledge exchange, with a specific focus on how policies need to be shaped to support and develop EEE work.

Limitations

The authors recognise the limitations of this research. Firstly, the data are those that HEIs themselves have submitted, with no internal or external auditing. Secondly, proxies have been used for the KEF data, which means that a fuller picture may not have been realised. Finally, the datasets presented herein contain different numbers of HEIs, and in their current format normalisation is not possible, meaning that comparisons are not like for like.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the