Abstract

The UK government is concerned about job quality. However, the lack of scientific consensus about measuring job quality hampers policy efforts to improve the quality of jobs. To address this problem, a standard measure was developed and adopted by the UK’s Office for National Statistics to report job quality. This article outlines a replication study using a new dataset to assess the reliability and validity of this standard measure. The dataset comprises 75 empirical studies that examine job quality in the UK and elsewhere. Using this dataset, the standard measure is confirmed, encompassing six dimensions of job quality. Subsequently, this study establishes both the reproducibility of the measure and the replicability of the methods used to develop that measure. In doing so, the findings will facilitate improved research and policy development along with greater conceptual clarity regarding job quality, long called for by social scientists.

Introduction

Over the past decade, a range of governments, including the UK, and inter-governmental bodies have advocated improving jobs as a route out of economic crisis, spurring competitiveness and delivering a sustainable future (e.g. European Commission [EC], 2012; G20, 2015; Green Jobs Taskforce, 2021; International Labour Organisation [ILO], 2018; OECD, 2018). However, there is a lack of scientific consensus, even arbitrariness, with an ‘anything goes’ approach to the measurement of job quality (Eurofound, 2008; Findlay et al., 2013; Kalleberg, 2016; Muñoz de Bustillo et al., 2011b). In the absence of an agreed standard measure, comparisons between studies are hampered, making trends and developments difficult to discern, subsequently constraining policymaking to improve job quality.

To resolve this problem, the UK Government’s Taylor Review of Modern Working Practices (Taylor et al., 2017) recommended the development of a standard measure (or set of metrics) of job quality. These metrics were subsequently developed by the Measuring Job Quality Working Group (MJQWG) (Irvine et al., 2018), co-chaired by Taylor. That development drew on the dimensions of job quality that had recently been adopted by the UK’s Chartered Institute of Personnel & Development (CIPD) as the Good Work Index for its then new annual UK Working Lives survey (Gifford, 2018), and which emerged from a literature review conducted for the CIPD by Warhurst et al. (2017). These measures were then recommended by the MJQWG for use by the UK Government in pursuance of its Good Work Plan (HM Government, 2018a) and are now used by the UK’s Office for National Statistics (ONS) to periodically report job quality in the UK (e.g. ONS, 2018, 2022). Significantly, through the MJQWG, this standard measure has the support of key UK stakeholders outside academia: trade unions, business, government and the ONS (see Irvine et al., 2018). This support means that the measure has a dual legitimacy – being grounded in a scientific evidence base from the original study for the CIPD and having the support of senior UK policymakers and practitioners.

To increase consensus and advance both research and policy, this article outlines a replication study designed to assess the reliability of that standard measure of job quality. While there can be variations by discipline (Heers, 2021) and some conflation in the use of the terms ‘replicability’ and ‘reproducibility’ (e.g. Bryman, 2015), replication is central to assessing the reliability of a measure and it involves ensuring that the procedures that embody the method can be repeated with the same outcomes. To be credible, and in this case reliably contribute to future research and policy, prior studies’ results need to be confirmable and confirmed (Bryman, 2015; European Science Foundation [ESF], 2000). Ensuring that the method and outcome in the original study are valid and reliable is central to generating confidence in the standard measure.

Methodologically, qualitative and quantitative researchers share a concern with replication, even if their language differs; for example, ‘confirmability’, ‘reliability’ and ‘reproducibility’. Recently, a UK higher education sector initiative (the UK Reproducibility Network) 1 was established to promote research replicability (and reproducibility) across all disciplines from the arts and humanities through to the social and physical sciences. Some in the social sciences have long advocated the importance of replicability (e.g. Clairbom, 1969). Given that replication is central to the logic of science and the credibility of the social sciences is paramount, Heers (2021) has called on the ‘top scientific journals’ (p. 5) in the social sciences to encourage replication studies.

Being able to measure job quality in a consistent manner is crucial to monitoring and evaluating trends, developments and issues. To ensure that the standard measure is reliable, we conduct this replication study using new data drawn from existing empirical research – an approach advocated for other aspects of employment where common measures are also lacking; for example, precarious employment (Kreshpaj et al., 2020). Using this approach, we create a new dataset that encompasses 75 studies over a 20-year period between 2002 and 2022. Subsequently, our study makes two major contributions. First, it confirms the new standard measure of job quality involving six key dimensions. In doing so, it not only confirms the standard measure but also assures its validity and reliability. This outcome creates confidence in the standard measure, which will help the policy utility of future research in the field. Such research is critical to creating rigorous evidence-based policymaking in the UK – and beyond we suggest. Second, it establishes and codifies a method for future replication studies, which will ensure that the standard measure of job quality can be reassessed at future points in time and updated as required. As we argue, replication ought to be undertaken periodically to ensure the contemporary relevance of the measure over time. These outcomes will enable much-needed clarity regarding the conceptualisation of job quality now and in the future.

While the empirical research that we draw on to create our dataset is international, following the Taylor Review (Taylor et al., 2017), our starting aim is to support UK-level policy development in this field. The UK Government’s longstanding policy focus on job creation has evolved, encompassing a new concern for improving job quality (HM Government, 2018b). However, our study has utility for the devolved governments within the UK as well as international relevance as governments across the advanced economies seek to improve job quality.

The article is structured as follows. First, it outlines the policy terrain concerning job quality and the reasons underpinning the lack of consensus on measuring job quality. It then describes the different ways in which a standard measure can be generated, one of which we adopt for the replication study. We then detail our methods and findings before offering recommendations in the concluding discussion.

Job quality policy terrain

For many years skills were the policy response to a series of economic and social problems (Keep and Mayhew, 2010). In recent years, there has been a discernible shift in policy thinking towards job quality as a solution, sometimes augmenting the emphasis on skill (e.g. Weber, 2023). The evidence base supports this shift in policy thinking, indicating that higher quality jobs benefit individuals through enhanced wellbeing (Horowitz, 2016) and firms through enhanced productivity (Bosworth and Warhurst, 2020). Better jobs can also create more sustainable and competitive economies (Siebern-Thomas, 2005) and improve social mobility (Carré et al., 2012). Moreover, and importantly for governments, improving job quality does not compromise job creation (Osterman, 2012).

Over the past 20 years, the socio-economic importance of job quality has sparked demands among inter-governmental bodies for the creation of good jobs and improvement of bad jobs (e.g. EC, 2012; ILO, 2010; OECD, 2018). The European Employment Strategy was adapted to encourage not just more but also better jobs (Commission of the European Communities, 2002). The UN Sustainable Development Goals, which were introduced in 2015, include the dual promotion of decent work and full employment (SDG 8). 2 In the context of the environmental crisis, decent work has been posited as ‘indispensable’ to a just transition to sustainable growth (ILO, 2015: 4; see also EC, 2023) and, with COVID-19 and its aftermath, there are calls to protect and improve the quality of jobs to increase innovation and productivity, and thereby aid social and economic recovery (OECD, 2020). More recently the European Commission’s Eurofound agency has argued that current labour shortages might be addressed through improved job quality (Weber, 2023). These inter-governmental bodies have also encouraged particular member countries to introduce reforms to deliver better quality jobs to raise living standards and reduce poverty (e.g. Jin et al., 2017).

Individual countries too have pledged to improve job quality (e.g. G20, 2015). The UK Government was one of the signatories to the 2015 G20 Ankara Declaration, which committed member countries to creating better jobs. Since that time, five successive UK prime ministers have committed to creating good jobs: Theresa May in commissioning the Taylor Review and subsequently launching the Good Work Plan (HM Government, 2018b); Boris Johnson and Rishi Sunak (the latter then as chancellor) with their joint post-Covid Build Back Better: Our Plan for Growth (HM Treasury, 2021); Liz Truss with a vision of the UK as a place of ‘great jobs’; 3 and Keir Starmer including good jobs as part of his government’s growth strategy (Labour Party, 2024). The UK Government’s promotion of a net zero economy is also premised on the creation of good jobs (Green Jobs Taskforce, 2021). Within the UK, devolved governments, both national and regional, have become champions of improving job quality. Scotland and Wales have promoted their own versions of job quality under the label of ‘Fair Work’, each with its own national character (Fair Work Convention, 2016; Fair Work Commission, 2019). The driver in Scotland has been a desire to increase economic competitiveness and promote social inclusion (Findlay, 2020). In Wales, the driver of Fair Work has been the need to address inequality, reduce in-work poverty and boost productivity (Felstead, 2020). Similarly, one of the four pillars underpinning the Northern Ireland Executive’s economic vision is good jobs (Department for the Economy [DfE], 2024). Finally, the introduction of employment charters in the English regions has been intended to improve levels of job quality, driven by concerns with inclusive growth, health and wellbeing, and productivity (Dickinson, 2022).

Reflecting such policy developments, the ILO has called on countries across the world to develop working conditions surveys that include comparable data on job quality, stating that doing so is vital to identifying issues of concern and providing evidence for policy action (ILO, 2019). Researchers and policymakers across the advanced and other economies thus agree on the importance of job quality. At present, however, comparative research and policymaking remain hampered by the lack of consensus regarding a standard measure of job quality (Findlay et al., 2013; Kalleberg, 2011). Without an ‘established standard’, Muñoz de Bustillo et al. (2011b: 247) point out, current social science research findings can differ and even be contradictory. As a consequence, they state, it is difficult to both develop job quality policy per se and, moreover, embed job quality within employment policy more broadly.

Varieties of job quality

Job quality has long been salient in social science research (Adamson and Roper, 2019; Brown, 1987). A job is an occupation within an industry and comprises both work and employment practices. Work is defined as an activity performed by persons to produce goods and services for own or others’ use. This work can be unpaid or paid. If the latter, it tends to fall within an employment relationship, turning workers into employees. Their employment has other terms and conditions, such as contract type, but is defined by receiving payment (ILO, 2013). The quality of a job is assessed through these work and employment practices. However, measures of job quality vary across studies, with the variety of measures reflecting different conceptual, methodological and disciplinary approaches (e.g. Findlay et al., 2013; Kalleberg, 2011).

Conceptually, a key issue is whether job quality should be measured objectively or subjectively. Conceptualising job quality objectively focuses on ‘the essential characteristics of jobs that meet workers’ needs’ (Eurofound, 2012: 10). By comparison, conceptualising job quality subjectively hinges on the assumption that each worker has preferences over different aspects of jobs, and these preferences can vary by worker – even for the same job. 4 Some researchers rely solely on objective indicators as they are considered more robust and more easily producible (e.g. Osterman and Shulman, 2011); others insist on subjective measures, asserting that it is workers’ own assessments that matter (e.g. Stuart et al., 2016); still others adopt a ‘hybrid’ approach, including objective and subjective indicators to capture needs and preferences (e.g. Lené, 2019; Wright, 2015).

Methodologically, approaches to measuring job quality also vary. Some approaches rely on measuring job quality using a single indicator, such as pay (e.g. Osterman and Shulman, 2011); others use multiple indicators (e.g. Holman, 2013). The use of multiple indicators leads to further challenges regarding which indicators, how many indicators, the relative importance of indicators and their appropriate weightings (e.g. Muñoz de Bustillo et al., 2011a). Deciding the number of indicators is an outcome of balancing opposing demands. With fewer dimensions, the analysis becomes more tractable and easier to present. However, that analysis can lack detail and create difficulty in interpretation (Eurofound, 2012). In failing to address this problem, too often a ‘shopping list’ of ad hoc ‘interesting’ variables is adopted (Bonnet et al., 2003).

Measures also vary by discipline. Hurley et al. (2012) identified seven disciplinary traditions, each with different foci and different measures or indicators. For example, orthodox economists tend to focus on pay while sociologists typically examine autonomy. Researchers from the institutionalist tradition tend to focus on contract status and stability of employment. Researchers from occupational health and safety focus on the physical and psychosocial risks of work. While these disciplines have an established body of research on job quality, other disciplines, such as geography, are now beginning to develop that body of research (see Weller et al., 2022).

Attempts to develop an agreed measure of job quality have also been constrained by the availability of data (Green, 2006). Indeed, it might be said that the current use of particular indicators is simply the outcome of data availability – that is, the indicators adopted reflect items in existing statistical datasets. Sometimes this limitation is explicitly acknowledged, as when Felstead et al. (2019) offer a ‘short form’ of job quality or Grande et al. (2020) propose a ‘Summary Job Quality Index’. Despite its policy prominence, it is instructive that there is no UK (or international) dataset that is explicitly intended to measure job quality. Green argues that this gap exists because researchers have lacked the influence (and/or the will) to develop or advocate a job quality survey. Instead, a ‘make do’ approach exists among researchers, which compounds the variety of conceptual, methodological and disciplinary approaches (Eurofound, 2008; Findlay et al., 2013; Kalleberg, 2016; Muñoz de Bustillo et al., 2011b).

These challenges mean that existing measures of job quality remain piecemeal and lack consistency, constraining comparative research (Muñoz de Bustillo et al., 2011b). Moreover, policymakers lack a commonly accepted understanding of job quality from which to develop policy. Indeed, a number of inter-governmental bodies (e.g. Eurofound, 2008; ILO, 2012; OECD, 2016; UN, 2015) have developed measures that do not align, disabling cross-national understanding and creating confusion within countries that are members of some or all of these bodies. The outcome generally has ‘proven to be extremely detrimental to the relevance and usefulness’ of such measures according to Muñoz de Bustillo et al. (2011b: 2). What is needed is the development of a standard measure of job quality to enable comparative research that can identify issues and trends, and support policy development. It was in recognising and wanting to resolve this problem that the Taylor Review recommended that the UK government adopt a measure of job quality developed for the Chartered Institute of Personnel & Development (CIPD, 2018), a recommendation accepted by the UK Government (HM Government, 2018a).

A methodology for developing a standard measure of job quality

This section outlines the methodologies for developing a standard measure of job quality, drawing on the work of Muñoz de Bustillo et al. (2009, 2011a,b). One of these methodologies was used to develop the standard measure adopted by the CIPD (Gifford, 2018) and subsequently recommended for UK Government use by the MJQWG (Irvine et al., 2018); we also use it in the replication study.

A standard measure of job quality can be developed via three methods. The first approach, sometimes described as a ‘shortcut’ (Muñoz de Bustillo et al., 2009: 12), focuses on having an overall indicator of job quality. Rather than using job characteristics to assess job quality, the indicator measures the outcome – typically the wellbeing of the worker in the job. However, wellbeing is seldom defined and job satisfaction is often used as a proxy. Although it is a straightforward approach, job satisfaction is an unsatisfactory indicator of job quality (Kalleberg, 2011; Muñoz de Bustillo et al., 2011a). First, exogenous variables unrelated to job quality, such as life satisfaction, can affect job satisfaction. Second, there is an extremely small variation in the distribution of job satisfaction between countries despite large differences in conditions of work and employment, suggesting that job satisfaction and job quality are not synonymous.

The second approach involves asking workers what they consider to be important in terms of job quality. This approach gives workers a voice in determining what makes a job seem good or bad but, often for the sake of practicality, workers are presented with a predefined set of criteria to be ranked. A version of this approach is evident in Jencks et al.’s (1988) Index of Job Desirability based on ‘a job’s average desirability in the eyes of workers’ (p. 1330). Significantly, leaving out important criteria can have a ‘disastrous effect’ on understanding job quality as critical factors may be excluded (Muñoz de Bustillo et al., 2009: 13).

The third approach involves a literature review covering the different perspectives and approaches in research studies on how work and employment affect workers. Advocated by Muñoz de Bustillo et al. (2009), the strength of this approach is that it is based on existing literature and can be multidisciplinary. It also focuses only on aspects of the job, by which Muñoz de Bustillo et al. mean, broadly, the organisation and nature of work being undertaken, and the terms and conditions of employment under which this work is performed. Extraneous, wider socio-economic issues, such as the extent of child labour or gender equality within the labour market (e.g. ILO, 1999), while important in themselves, are excluded as not constituent of the job per se. 5

This last approach was adopted for the original study for the CIPD (Warhurst et al., 2017) and so is used again for our replication study. The type of literature review used is based on a ‘textual narrative synthesis’. This method involves extracting data from different studies in a standard way and then comparing similarities and differences across the studies (Xiao and Watson, 2019). Data collection is not intended to be comprehensive or exhaustive but indicative. Such reviews set boundaries for data search inclusion and exclusion, categorise subsequent data and, while rigorous in approach, recognise that there can be search constraints driven by time and other resources. For this reason, they need to be undertaken by researchers familiar with the subject. What is important is that the method is transparent and can be repeated (Khangura et al., 2012).

Replication studies can be conducted by different researchers to the original study (independent replication) or by the same researchers (dependent replication) (Heers, 2021). The one reported here is the latter, though with the original team augmented. Using textual narrative synthesis for this replication study, we draw on multidisciplinary, international scientific and grey literatures using quantitative and qualitative research methods. The objective of this replication study is to assess whether the same common dimensions and indicators emerge as they did in the original study. If the indicators of job quality in this replication study cluster into the same six dimensions as emerged from the first literature review in the original study, the findings can be regarded as valid and reliable, and the standard measure can be used with greater confidence.

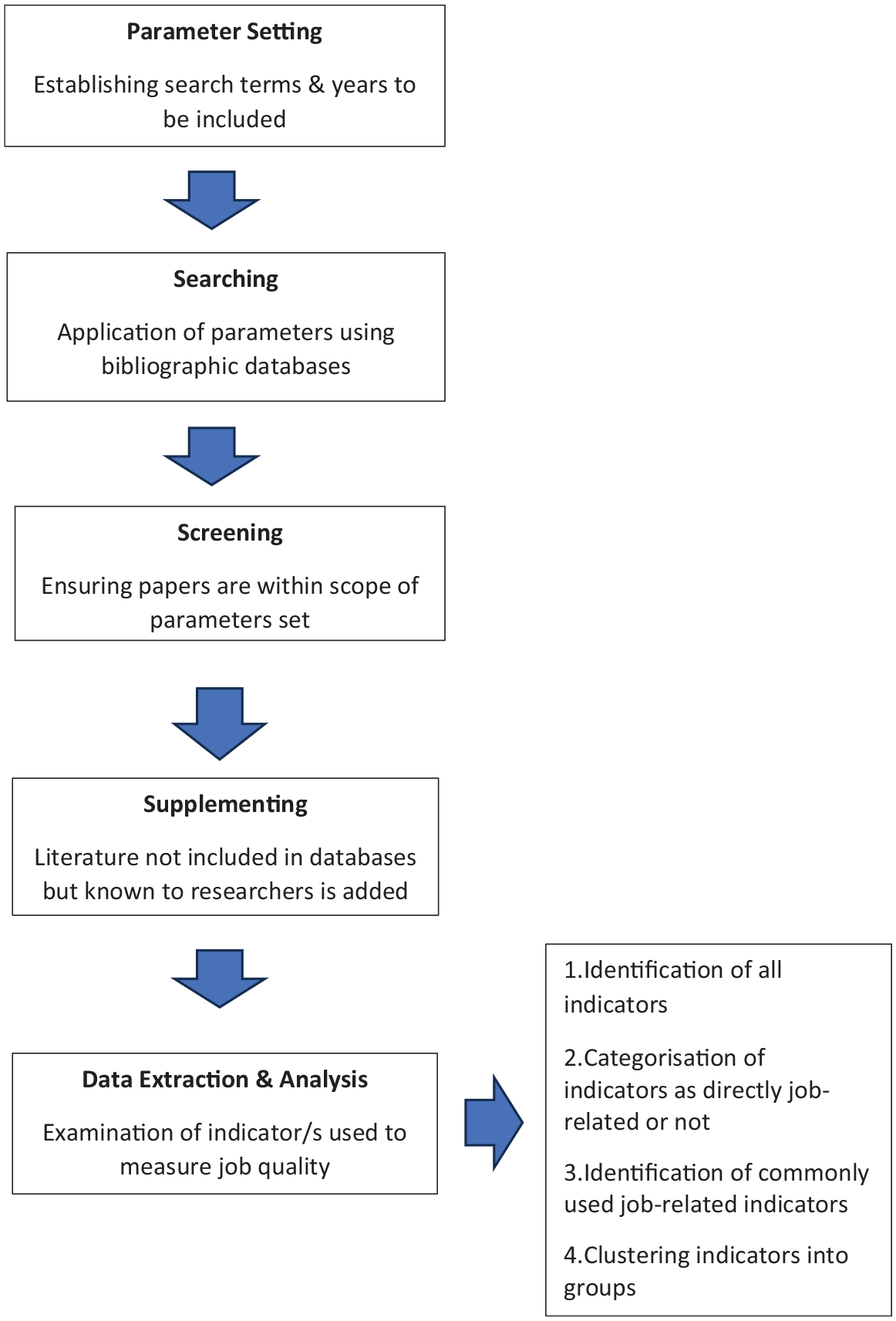

The replication study review comprised five phases: parameter setting, searching, screening, supplementing, and data extraction and analysis (see Figure 1). The first phase, parameter setting, drove the information-gathering process, including establishing the search terms and years to be included. Reflecting job quality as a family of often interchangeable concepts (Warhurst et al., 2022), a wide range of search terms was used, namely: ‘job quality’, ‘quality of work’, ‘quality of employment’, ‘good work’, ‘decent work’, ‘quality of working life’, ‘fair work’, ‘fulfilling work’ and ‘meaningful work’. In this review, we cover the period 2002–2022, thereby augmenting the publications in the first review, which covered 2002–2017, and ensuring more current coverage. The second phase involved data collection through the application of the parameters using relevant bibliographic databases. The databases searched were: ABI/INFORM Global; Applied Social Science Index and Abstracts; Business Source Complete; EBSCOhost; EconLit; Emerald; ERIC (via EBSCO); International Bibliography of the Social Sciences; JSTOR; Oxford Scholarship Online; ProQuest; SAGE Premier; Social Science Citation Index; Sociological Abstracts; Springer Link; Taylor & Francis Online; Wiley Online Library; and the OECD iLibrary. The third phase involved screening the data. As a feature of investigator triangulation (see Denzin, 1970), this screening provided assurance that all publications were within scope. Our scoping parameters required articles to be published between 2002 and 2022, be in English, include measures of job quality, and be based on original empirical research; thus excluding non-empirical studies such as those that are purely conceptual or policy elaborations. This focus generates a coherent dataset by type of publication and type of study. In the fourth phase, literature not included in the bibliographic databases but known to the researchers was considered. 
In addition to academic literature in the form of journal articles, books and book chapters, grey literature, such as reports, was included. The reference lists of publications were also scanned to identify additional relevant publications. When the same authors had published multiple articles using the same measure of job quality and the same data source, only the earliest publication was retained.

Figure 1. Stages of literature review.
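The screening rules described above can be sketched in code. The following is a minimal illustration only, not the procedure actually used in the review: the `Publication` record, its field names and the sample entries are all hypothetical, and the real screening relied on researcher judgement rather than automated filtering. It applies the four scoping parameters (published 2002–2022, in English, includes measures of job quality, original empirical research) and then retains the earliest publication where the same authors reuse the same measure and data source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Publication:
    # Hypothetical record structure, assumed for illustration only.
    authors: tuple
    year: int
    language: str
    measures_job_quality: bool
    is_empirical: bool
    measure: str
    data_source: str


def in_scope(pub):
    """Apply the four scoping parameters stated in the text."""
    return (
        2002 <= pub.year <= 2022
        and pub.language == "English"
        and pub.measures_job_quality
        and pub.is_empirical
    )


def keep_earliest(pubs):
    """Same authors, same measure, same data source: keep the earliest."""
    earliest = {}
    for pub in pubs:
        key = (pub.authors, pub.measure, pub.data_source)
        if key not in earliest or pub.year < earliest[key].year:
            earliest[key] = pub
    return sorted(earliest.values(), key=lambda p: p.year)


def screen(pubs):
    return keep_earliest([p for p in pubs if in_scope(p)])


# Invented sample records, not drawn from the actual dataset.
candidates = [
    Publication(("Author A",), 2010, "English", True, True, "index X", "survey S"),
    Publication(("Author A",), 2014, "English", True, True, "index X", "survey S"),
    Publication(("Author B",), 2019, "English", True, False, "index Y", "none"),
    Publication(("Author C",), 1999, "English", True, True, "index Z", "survey T"),
]
kept = screen(candidates)
```

In this toy example only the 2010 publication survives: the 2014 entry duplicates its authors, measure and data source, the 2019 entry is non-empirical, and the 1999 entry falls outside the review period.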

Thus, in the replication study, we created a new dataset that is both narrower and wider than that deployed in the first review. Narrower in that we only include publications reporting empirical research to ensure that the emergent measure has a clear theoretical grounding (cf. Muñoz de Bustillo et al., 2011b). Wider in that the review now covers 20 years, which allows us to not only update the dataset with new studies but also sense-check the original inclusions. The dataset for the first review involved 60 publications. Seventeen of these publications were omitted in the replication study for being either purely conceptual or policy elaborations. Thirty-two new publications were added, either to reflect new studies post-2017 (N = 13) or, in line with textual narrative syntheses, using the researchers’ knowledge of the field to include publications such as book chapters not identifiable through bibliographic databases (N = 19). A total of 75 studies were finally identified as in scope for the replication study after screening (these studies are listed in Appendix A in the supplementary material and not in the references section of this article). These studies included both qualitative and quantitative research. They were drawn from a range of disciplines: sociology, economics, politics and psychology for example, with concentrations by sub-discipline; for example, within politics, political economy and within economics, labour. Some studies were multidisciplinary and intentionally so, for others it is difficult to discern discipline with confidence. The creation of this new dataset is central to the replication study. Following Heers (2021), it enables assessment of both the reproducibility of the initial results and the replicability of the methods used to achieve those results.
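The composition of the new dataset can be checked with simple arithmetic. The sketch below uses only the counts reported in the paragraph above; the variable names are illustrative.

```python
# Counts reported in the text; variable names are illustrative.
original_review = 60              # publications in the first review
omitted_non_empirical = 17        # purely conceptual or policy elaborations
added_post_2017 = 13              # new studies published after 2017
added_researcher_knowledge = 19   # e.g. book chapters not in the databases

retained = original_review - omitted_non_empirical
added = added_post_2017 + added_researcher_knowledge
replication_total = retained + added

print(retained, added, replication_total)  # 43 32 75
```

Forty-three of the original publications are therefore carried forward, and the 32 additions bring the replication dataset to the 75 studies reported.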

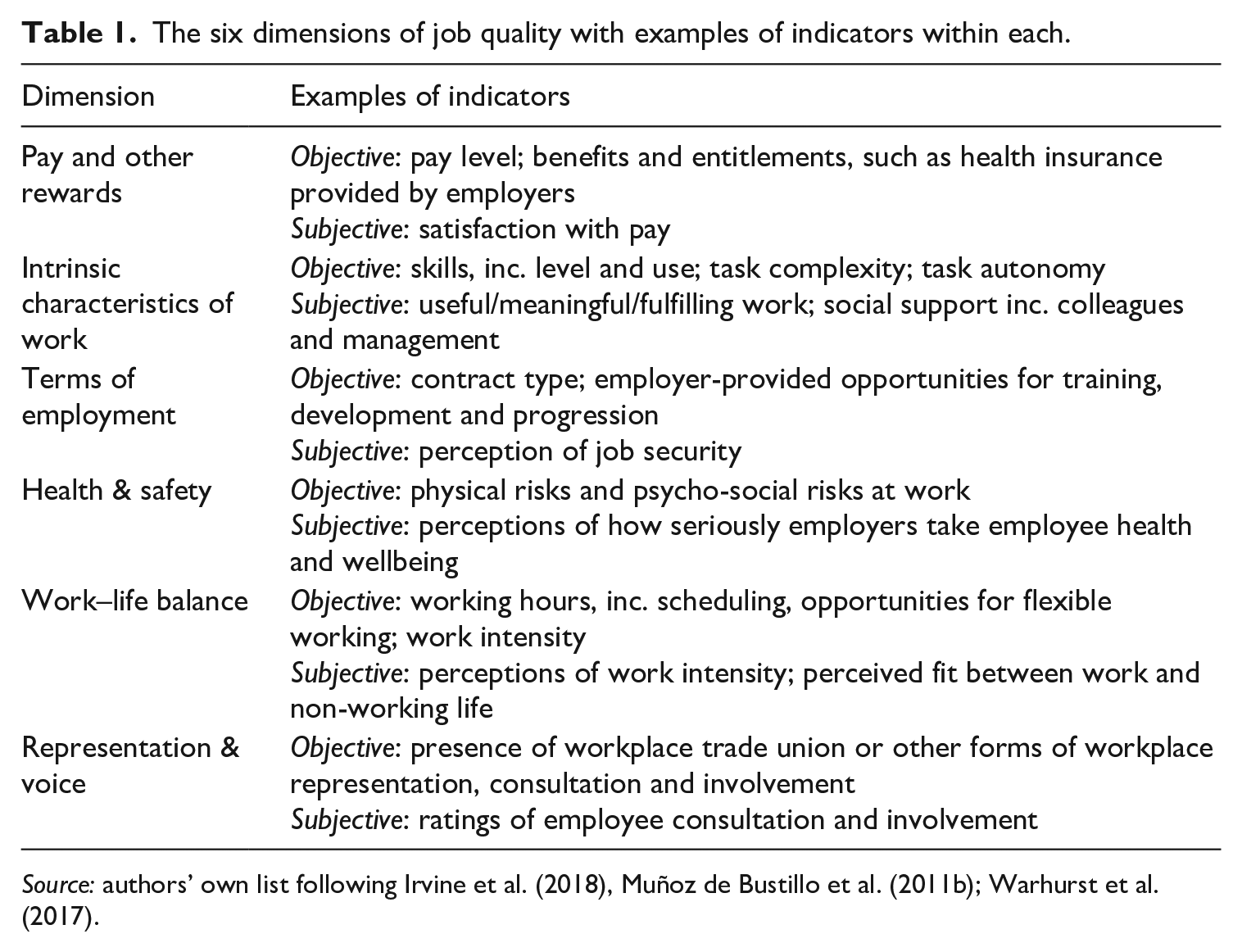

The fifth phase, data extraction and analysis, involved an in-depth examination of the indicator(s) that each study used to measure job quality. For this phase, a framework for analysing the data was developed based on content analysis of the studies, which involved four stages. First, all of the indicators used in each empirical study were identified and listed. Second, indicators were categorised based on whether they were directly job-related or not. We determined the latter as not relevant to job quality per se (e.g. adult literacy rates in Ghai, 2003). Third, among those indicators that were directly job-related, we distinguished between those that were commonly used across many studies and those that were relative outliers, occurring in very few studies (see Appendix B in the supplementary material). Fourth, we assessed whether any of these commonalities clustered into groups. In developing these clusters, we were influenced by previous work by leading researchers in this field (e.g. Erhel et al., 2012; Muñoz de Bustillo et al., 2011b). At the end of each stage, investigator triangulation was conducted to resolve any inconsistencies or ambiguities within the data. This practice has been used elsewhere in similar exercises, for example by Kreshpaj et al. (2020) in developing a common definition of precarious employment. This process resulted in the indicators for the six dimensions that make up our standard measure. Illustratively, the indicators for ‘Pay and other rewards’ encompass pay level, benefits and entitlements (such as health insurance provided by employers), and satisfaction with pay. The indicators for ‘Health & safety’ cover physical risks and psycho-social risks at work, and perceptions of how seriously employers take employee health and wellbeing (see Table 1 below).
Importantly, the six dimensions represented the most commonly used job quality indicators across the 75 studies examined, ranging in frequency from 24 (Representation & voice) to 61 (Terms of employment) (see Appendix B). By contrast, the less commonly used indicators ranged in frequency from one to five across the studies examined.

Table 1. The six dimensions of job quality with examples of indicators within each.

Source: authors’ own list following Irvine et al. (2018), Muñoz de Bustillo et al. (2011b); Warhurst et al. (2017).
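The four stages of the content analysis can be illustrated with a small frequency count. This is a hedged sketch under stated assumptions, not the analysis actually performed: the four studies, the outlier indicator ‘dress code’, the indicator-to-dimension mapping and the `MIN_STUDIES` threshold are all hypothetical, and in the review itself clustering was a matter of researcher judgement and investigator triangulation rather than a lookup table.

```python
from collections import Counter

# Stage 1: list the indicators used in each study (invented toy data).
studies = {
    "Study A": {"pay level", "task autonomy", "contract type", "adult literacy rate"},
    "Study B": {"pay level", "physical risks", "dress code"},
    "Study C": {"pay level", "task autonomy", "physical risks"},
    "Study D": {"contract type", "task autonomy"},
}

# Stage 2: set aside indicators that are not directly job-related.
NOT_JOB_RELATED = {"adult literacy rate"}


def job_related(indicators):
    return indicators - NOT_JOB_RELATED


# Stage 3: count how many studies use each job-related indicator and
# separate commonly used indicators from relative outliers.
MIN_STUDIES = 2  # illustrative threshold, assumed for this toy example
indicator_counts = Counter(
    ind for inds in studies.values() for ind in job_related(inds)
)
common = {ind for ind, n in indicator_counts.items() if n >= MIN_STUDIES}
outliers = set(indicator_counts) - common

# Stage 4: cluster common indicators into dimensions (hypothetical mapping)
# and count the number of studies touching each dimension at least once.
DIMENSION_OF = {
    "pay level": "Pay and other rewards",
    "task autonomy": "Intrinsic characteristics of work",
    "contract type": "Terms of employment",
    "physical risks": "Health & safety",
}
dimension_counts = Counter()
for inds in studies.values():
    dims = {DIMENSION_OF[ind] for ind in job_related(inds) if ind in common}
    dimension_counts.update(dims)
```

Counting each study once per dimension mirrors how the frequencies in Appendix B are described in the text: as the number of studies, out of 75, in which a dimension's indicators appear.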

This five-phase methodology provides a robust approach to determine a standard measure of job quality and assess its validity and reliability. As we discuss further in the conclusion, this methodology also offers a means for undertaking future updates or replication studies of the measure.

Confirming the standard measure of job quality

The replication study confirmed some of the measurement problems outlined earlier in this article, as well as the overall heterogeneity of, even messiness among, measures currently being used. It also presented the opportunity to provide more detail of that heterogeneity. First, the use of measures is still constrained by the contents of available datasets (cf. Green, 2006, 2021). Second, despite its limitations, job satisfaction is used in a number of studies (e.g. Wilson, 2016), while being explicitly rejected in other studies (e.g. Chen and Mehdi, 2019). Third, some studies use indicators related only to aspects of the job (e.g. Okay-Somerville and Scholarios, 2013), whereas other studies include wider indicators, either because of an interest in wider policy concerns such as child labour or youth unemployment (e.g. Bescond et al., 2003) or because it is posited that perceptions of job aspects are related to other factors such as family commitments and labour market opportunities (Cooke et al., 2013). In some cases, intra- and extra-organisation variations of the same indicator are included; for example in Howell and Kalleberg’s (2019) analysis of pay, pay rates and share of poverty wages, respectively. Fourth, and relatedly, some of these studies’ indicators focus on the national level rather than workplace or worker levels, as Ghai (2003) illustrates with his ‘basic rights indicators’ of national civil liberties and national trade union density. Fifth, some studies conflate work and employment practices, offering them together as ‘the quality of work’ (e.g. Brisbois, 2003), or use the terms ‘job’, ‘work’ and ‘employment’ interchangeably as if they are synonymous. By contrast, other studies disentangle work and employment as concepts and offer measures for one or the other (e.g. Amossé and Kalugina, 2012) or both (e.g. Holman and McClelland, 2011).
Sixth, the type of indicators used by researchers in different disciplinary traditions varies as expected; for example, pay by economists (e.g. Goos and Manning, 2007) and task discretion/autonomy by sociologists (e.g. Gallie, 2007). Seventh, there is considerable variation in the number of indicators used. There can be as few as one (e.g. Vieira et al., 2005) and as many as 26 (Stuart et al., 2016), though some of the latter’s list are variations, for example of pay – decent hourly rate and fair pay. Finally, some studies simply offer a list of indicators (e.g. Curtarelli et al., 2014), while others group similar indicators (e.g. Handel, 2005). All of this variety makes comparisons across occupations, industries, countries and time difficult, constraining the utility of the research base and undermining policy development.

Importantly, the replication study also identified several points of implicit consensus across the studies. First, employee reporting of job quality is regarded as essential, even if complementary data are gathered from employer respondents (e.g. Oxford Research, 2011). Second, most studies focus on aspects of the job rather than features of the labour market or wider public policy (e.g. Leschke et al., 2012). Third, there is common inclusion of both objective and subjective indicators (e.g. Crespo et al., 2017). Fourth, and significantly, a relatively high degree of overlap exists among the indicators used (see Appendix B). Fifth, these indicators are often clustered. For example, Findlay et al. (2017) cluster contract status, job security, pay and working hours into a ‘distinct area of study’ (p. 115). Sometimes these clusters are conceptualised as distinct, either broadly as ‘work quality’ or ‘employment quality’ (e.g. Holman and McClelland, 2011) or as bundles based on particular job aspects, as Bonnet et al.’s (2003) ‘Income security index’ and ‘Voice representation security index’ illustrate. Sixth, there is a high level of agreement among researchers that job quality is a multidimensional construct (e.g. Davoine et al., 2008). Finally, when indicators are clustered and explicitly articulated as ‘dimensions’ (such as ‘pay and other rewards’ or ‘work–life balance’), the number of such dimensions ranges from 3 to 11, most commonly 3–6 (e.g. Chen and Mehdi, 2019; Erhel et al., 2012; Kalleberg, 2011). This implicit consensus about the approach to measuring job quality and the commonalities in the use of particular indicators suggest the possibility of identifying a set of standard metrics for measuring job quality.

In the original study for the CIPD, six dimensions emerged. Two changes were made to those dimensions by the CIPD and MJQWG. First, one of the dimensions (Intrinsic characteristics of work) was split into two by the CIPD to create a separate, seventh dimension (Relationships at work) in its UK Working Lives survey (Gifford, 2018) because of the CIPD’s explicit remit regarding people management. Second, there was some relabelling by the MJQWG. ‘Pay & other rewards’ became ‘Pay & benefits’, ‘Intrinsic characteristics of work’ became ‘Job design and the nature of work’ and ‘Relationships at work’ became ‘Social support and cohesion’ (Irvine et al., 2018). For consistency, in the replication study we used the original dimensions’ labelling. The six dimensions are: Pay and other rewards, Intrinsic characteristics of work, Terms of employment, Health & safety, Work–life balance, and Representation & voice; with each dimension including a series of related indicators (see Table 1).

In framing these dimensions, we drew on but adapted and extended the dimensions suggested by Muñoz de Bustillo et al. (2011b). In their analysis, Muñoz de Bustillo et al. list five dimensions of job quality. These dimensions are pay, intrinsic job quality, employment quality, health and safety, and work–life balance. The label ‘intrinsic job quality’ can be tautological in a measure of job quality. To avoid confusion, we call this dimension ‘intrinsic characteristics of work’. In their analysis, an additional dimension emerged that Muñoz de Bustillo et al. call ‘participation and industrial democracy’. However, it was deliberately omitted from their subsequent statistical analysis because Muñoz de Bustillo et al. wished to apply the European Working Conditions Survey (EWCS) dataset to the dimensions and, at that point in time, there were no data in the EWCS that could be applied to support analysis of this dimension. As we are not constrained by dataset limitations but rather seek to identify what emerges as a standard set of metrics through analysis of empirical studies, we include a version of this sixth dimension as it also emerges from our review. We label it ‘Representation & voice’ in order to avoid past conceptual debate about whether such voice and representation constitute ‘industrial democracy’ (see Ramsay, 1980). Given the popularity of the EWCS among job quality researchers, the previous lack of data in the EWCS might be one explanation as to why this dimension has received relatively less research attention compared with the other five dimensions (see Appendix B). As noted above, we also relabel the ‘intrinsic job quality’ dimension as ‘Intrinsic characteristics of work’, as the indicators in this dimension reflect work practices (i.e. skills, task complexity, task autonomy, fulfilling work and social support). We also extend ‘Pay’ to become ‘Pay & other rewards’ as it better reflects the use of other non-wage rewards, such as employer-provided paid sick leave and pensions, as indicators within the empirical studies (e.g. Howell and Kalleberg, 2019).

Conceptually, the replication study confirms that the inclusion of both objective and subjective indicators of job quality is not universally accepted. Some studies only use objective measures – for example, median wages (Goos and Manning, 2007). However, the use of both types of measure is common and, as Muñoz de Bustillo et al. (2011b) point out, gaining acceptability, if only because of data availability. It should be noted, however, that some of the dimensions lend themselves to inclusion of particular objective and subjective indicators. ‘Pay and other rewards’ for example can include objective indicators such as level of wage and non-wage benefits such as paid sick leave, and subjective indicators such as satisfaction with pay (e.g. Knox et al., 2015). Likewise, ‘Terms of employment’ can include objective indicators such as type of contract and opportunities for training, development and progression, and subjective indicators such as perception of job security (e.g. Pyoria and Ojala, 2016). The inclusion of both types of indicators is necessary to reflect the important point that workers’ subjective perceptions of objective aspects of their jobs can vary depending upon their circumstances, expectations and needs. For example, job security is deemed to be more important to older than younger workers in the same occupation (Belardi et al., 2021). Examples of both potential objective and subjective indicators are therefore offered as appropriate for each dimension (see Table 1).

Beyond the objective–subjective issue, there is recognition that ‘Health & safety’ should cover both physical and psycho-social risks in jobs (e.g. Tangian, 2009), that ‘Work–life balance’ should include working time arrangements as well as work intensity (e.g. Pocock and Skinner, 2012) and that ‘Representation & voice’ should include workplace-based trade union and other forms of (collective) representation as well as employee consultation and involvement in decision-making (e.g. Hurley et al., 2012).

Overall, the replication study confirmed three types of indicators are used to measure job quality: first, indicators that are common in the study of job quality and relate only to aspects of the job; second, indicators that are occasionally used and also relate only to aspects of the job; and, third, indicators that are occasionally used but do not directly relate to aspects of the job. When extraneous non-job-related and occasional job-related indicators are removed, there are clear commonalities in the use of particular indicators, which, clustered, confirm the six dimensions of job quality emergent from the first literature review in the original study (see Appendix B).

Discussion and conclusions

This replication study re-affirmed the variety of job quality measures. As Muñoz de Bustillo et al. (2011b) argue, such variety creates contradictions and incomparability across research. The lack of scientific consensus about the measurement of job quality also hampers policy development (Taylor et al., 2017). This article promotes that consensus by conducting a dependent replication study (Heers, 2021) of the original study that led to the standard measure of job quality adopted to report job quality for the UK (e.g. ONS, 2018, 2022). It draws on a range of empirical research on job quality published between 2002 and 2022 and confirms the six dimensions of job quality in that original study (Warhurst et al., 2017), which should create confidence in the standard measure.

This measure establishes the parameters of job quality that should be used to inform research and policymaking. It is already gaining some research traction – as Jones et al. (2024) illustrate in their analysis of the relationship between welfare policy and job quality. It is important to emphasise that the six dimensions emerge not from one particular empirical study of job quality but from a range of empirical studies across a 20-year period within which we identified commonality in the indicators used to measure job quality. The measures therefore are not an attempt to add to the multiplicity of existing measures but rather to consolidate them. As such, they do not champion any one set of measures but offer best use of all measures. The resulting standardised measure provides an opportunity for multidisciplinary scientific consensus on what constitutes job quality, as well as what does not, and thereby offers much-needed measurement consistency (cf. Kalleberg, 2016).

Given its intention to provide consistency in analysing, monitoring and evaluating job quality and supporting UK policy development through research, it is important that the standard measure be subject to periodic assessment. The first exercise in that task is undertaken and reported in this article and confirms the six common dimensions of job quality as that standard measure. As Heers (2021: 4) notes, if the same results are achieved as with the original study but using different data, it ‘contributes to a strong evidence base’. The second purpose of the replication study was to enhance replicability; that is, to develop the method by which we can determine what comprises job quality. For social science’s continued credibility, it is important that such studies are pursued (Freese and Peterson, 2017) and having the means to do so is likewise important (Baker, 2016). This article transparently sets out that method, covering both approach and phases in a way that should be reproducible (see Khangura et al., 2012). Overall, as Baker (2016) frames it, the study affirms both the reproducibility of the measure and the replicability of the method that derives the measure. In pursuing and delivering on these two purposes, the replication study achieves its twin tasks. First, it increases confidence in this measure by assuring its validity and reliability. Second, it establishes and codifies a method for future replication studies. Subsequently, these findings provide greater understanding of job quality and improve conceptual clarity.

In terms of periodic assessment, the literature on replication studies seems to omit discussion of the desirable frequency of such studies. The implication is that they occur ad hoc or when funding is available. Given that there are continual developments to both work and employment, we, however, recommend that the six dimensions are reviewed periodically to assess if they hold constant or require revising in light of new empirical studies. In terms of frequency, we suggest that such reviews follow the example of the Standard Occupational Classification (SOC): every 10 years a working group undertakes a full review of new and disappearing occupations and then publishes the revised SOC. We also suggest that any future review of the job quality standard measure follow the methodology outlined for our replication study; that is, by reference to emergent empirical research in order to ensure that the job quality measure captures any developments.

It should be noted that while the Taylor Review intended the standard measure to be a UK-level measure, the MJQWG also intended the measure to have utility within the UK’s patchwork of political governance (Irvine et al., 2018). Contrary to the claim by Sisson (2019) that they are vastly different, there is, in fact, strong overlap between the contents of Good Work, as job quality was called by the Taylor Review and adopted by the UK’s ONS, and the versions of Fair Work within the UK; see, for example, Zemanik (2020). The measure has been adopted by the Northern Ireland Executive and incorporated into the requirements for businesses wanting to tender for government contracts. In addition, the measure also features in the employment charters of London and the English regional Metropolitan Combined Authorities such as Greater Manchester (Dickinson, 2022). Of course, there are additional items that feature in these charters across the devolved nations and English regions that reflect local policy priorities; for example, in Wales, encouraging Welsh language use at work (Fair Work Commission, 2019), but, given the strong content overlaps, core measurement needs exist across all.

As Taylor et al. (2017) pointed out, having a standard measure of job quality for the UK is an important step forward for research and policy but other tasks remain. The key challenge is that neither countries and regional authorities within the UK nor the UK itself has the necessary supportive dataset to analyse and monitor their expressions of job quality. Developing that dataset must be the next task (Elias, 2022). The development of the six dimensions was not confined by current data availability but the measure does need supportive data. At the moment, a popular dataset used by UK (and other) researchers is the European Commission’s EWCS (see, for example, Holman, 2013). However, its UK (and other countries’) sample size is too small to allow robust within-country disaggregated analysis. Moreover, with Brexit, the UK is not continuing participation in this survey. In time, the CIPD’s use of the measure in its new annual UK Working Lives survey will help, although its sample size, as with that of the Economic and Social Research Council-funded Skills and Employment Survey (SES), is also too small to allow the level of detailed analysis that is required (Elias, 2022). Moreover, neither the funding nor the periodicity of the SES is fixed, and its sampling is England-centric. As an initial response to this data deficit, the MJQWG suggested that the UK Labour Force Survey (LFS) might be used by the ONS to administer a job quality module based on the standard measure, a suggestion repeated by Elias in his feasibility study for improving data availability. Elias recommends that the module encompass 50,000–60,000 jobholders in order to allow good analytical disaggregation. The ONS has already piloted the standard measure and extended coverage of it (see ONS, 2018, 2022). The use of such periodic supplementary modules within established surveys is not unusual (see Wright et al., 2018). The data generated by the ONS using the UK LFS would be useful not only to the UK government but also to devolved governments within the UK.

It would also be useful if a standard cross-national measure could be developed (Osterman, 2010). Indeed, it is vital to policy development internationally (ILO, 2019). The measure affirmed in our replication study might meet that need. Dobbins (2022) highlights a number of countries – Spain, New Zealand and the Netherlands for example – where governments are actively developing job quality policies, though each using different measures. In 2015, when the G20 countries, including the UK and the other major advanced economies, signed the Ankara Declaration pledging to improve job quality, they did so without offering a measure. Similarly, the European Commission (2012) and OECD (2018) both encourage their member countries to create more good jobs but each suggests using different measures. The UN Expert Group on Measuring Quality of Employment (UN, 2015) has the involvement of both the EU and OECD but its measure includes some indicators that are extraneous to the job, and it admits that some indicators are ‘experimental’ (p. 6) and that further work is needed on its measure.

Our standard measure offers a way forward. It has been recommended as a standard measure of job quality for the EU by European trade unions (IndustriAll, 2024). In the case of the EU, five of the six original dimensions are now addressable through the latest EWCS as, from its 2015 sixth edition, this survey now includes items that would support the Representation & voice dimension. The other dimension not supported by the EWCS – Pay and benefits – can be addressed through use of data from the EU’s Statistics on Income and Living Conditions (EU-SILC). However, the EWCS is administered only every four years, which is probably too infrequent for policy cycle purposes. Changes to some aspects of job quality – for example, pay (Costa and Machin, 2017) and work intensification (Felstead et al., 2013) – can be more rapid, particularly following economic shocks. The European Commission could make the EWCS bi-annual, though of course it would still need to address the problem of small national sample sizes. The alternative would be for the Commission to also use the EU-LFS for data generation in the same way as the UK ONS; an option that could also be pursued by some OECD member countries. If policy is to be supported internationally, it is important that a standard measure is quickly adopted so that a start can be made to build up comparative data cross-nationally and longitudinally.

In terms of its cross-national and longitudinal use, we suggest that the methodology used to develop the standard measure – the clustering of indicators into six dimensions without being dogmatic about the contents of each dimension – offers some temporal and spatial flexibility to researchers and governments in terms of which indicators might be included in particular dimensions. With the ‘Terms of employment’ dimension, for example, currently having an indicator of zero-hours contracts in the UK makes sense (see ONS, 2021) but might be less relevant to other countries (Fillan, 2021). Similarly, some aspects of job quality that are important in the US, most obviously work scheduling (Carré and Tilly, 2017), tend to be absent in Europe (Fillan, 2021). However, there is a need to be sensitive to potential indicator clutter and the importance of being able to offer clear presentation of findings (Eurofound, 2012). Baseline understanding of the extent of, for example, permanent or temporary employment in each country and the extent of employee perceptions of job security can still be measured and would enable comparative analyses without insisting upon including all indicators for all countries. What is important is that the scope of the six dimensions is included in order to provide consistency in measurement and reporting. We accept that our method has a language bias in that only English language publications are considered. If the standard measure is adopted beyond the UK, then periodic review internationally might occur and rightly include empirical research published in other languages.

At this stage, we leave open how job quality is reported from these data (e.g. as a composite index or as a dashboard of the six dimensions), recognising that it is a matter of debate. Although information can be lost, a single composite index that generates an easy-to-grasp quantified level of job quality (e.g. six out of 10) might be championed because single numbers gain policymaker attention, as Elias (2022) argues and illustrates with his example of the Index of Multiple Deprivation. On the other hand, Eurofound (2012) point out that when [lay] people use the term ‘job quality’, they implicitly think of it as comprising several dimensions. It would make sense then to report it in a way that would seem understandable to people. What is important, we suggest, is that all dimensions of the standard measure are encompassed in any reporting.

Job quality measurement incomparability is not helpful to either research or policy. If social science is to seriously engage and enable UK and international policy to improve job quality, it needs to have a standard measure – in the same way as exists with other phenomena such as innovation (OECD, 2005). With the appropriate dataset, the six dimensions affirmed in our replication study provide that standard measure – even if adapted to become seven dimensions (Gifford, 2018; Irvine et al., 2018). Adoption will enable UK job quality to be better understood conceptually as well as to be monitored and evaluated more consistently and regularly. The outcome, hopefully, will be that job quality interventions can be designed and job quality improved. That the measure emerges from not just multidisciplinary but also international studies suggests that scaling up the UK measure to create an internationally accepted standard measure of job quality would be possible as well as useful for future cross-national comparisons, initially at least across the advanced economies.

Supplemental Material

sj-docx-1-wes-10.1177_09500170251325774 – Supplemental material for Developing a Standard Measure of Job Quality

Supplemental material, sj-docx-1-wes-10.1177_09500170251325774 for Developing a Standard Measure of Job Quality by Chris Warhurst, Angela Knox and Sally Wright in Work, Employment and Society

Supplemental Material

sj-xlsx-2-wes-10.1177_09500170251325774 – Supplemental material for Developing a Standard Measure of Job Quality

Supplemental material, sj-xlsx-2-wes-10.1177_09500170251325774 for Developing a Standard Measure of Job Quality by Chris Warhurst, Angela Knox and Sally Wright in Work, Employment and Society

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

