Abstract

Facility with learning from multiple text documents is critical for college and career readiness. However, children with reading difficulties and disabilities have been largely excluded from research on multiple document comprehension. Accordingly, little is known about how children with reading difficulties and disabilities perform on multiple-document tasks, the factors that predict their performance, and how best to foster their development of this skill. To begin to fill these gaps, we examined concurrent predictors of one dimension of multiple-document comprehension, intertextual integration (ie, the ability to integrate information presented across two or more text documents), among third-grade children with reading difficulties and disabilities (N = 70). Correlational analyses revealed that children's intertextual integration performance was significantly and positively associated with single-document comprehension (SDC) but not with word-level reading (WLR) or passage-reading fluency (PRF). Multiple-regression analyses indicated that SDC significantly predicted intertextual integration but that WLR and PRF did not.

Keywords

For many academic, occupational, and civic purposes, readers must search for, select, evaluate, and integrate information from multiple document sources (Bråten et al., 2020; Britt et al., 2018). Indeed, most current conceptions of functional literacy are defined in part by the ability to critically process and learn from multiple documents (Goldman et al., 2016; National Assessment Governing Board, 2021; Rouet et al., 2021). For instance, in the 2026 Framework for the National Assessment of Educational Progress (NAEP; National Assessment Governing Board, 2021), readers are expected to “work across multiple texts and perspectives while solving a problem” (p. 19). Similarly, in current college- and career-readiness standards, children are expected, beginning in kindergarten and continuing through 12th grade, to engage with, analyze, and learn from multiple document sources in language arts, science, and social studies (National Council for the Social Studies, 2013; National Governors Association & Council of Chief State School Officers, 2010; National Research Council, 2013).

Accordingly, tasks involving multiple documents are introduced early in children's formal schooling and quickly become a central feature of their language arts, social studies, and science education (Goldman et al., 2016; National Council for the Social Studies, 2013; National Governors Association & Council of Chief State School Officers, 2010; National Research Council, 2013). Nonetheless, studies consistently reveal that readers struggle even into adolescence and adulthood with the complex demands posed by multiple-document tasks (Segev-Miller, 2007; Wolfe & Goldman, 2005). Much has been hypothesized about how multiple-document comprehension unfolds (List & Alexander, 2019; Rouet et al., 2017), and the factors that give rise to performance differences (Anmarkrud et al., 2022; Barzilai & Strømsø, 2018).

Unfortunately, elementary-level children, and particularly those with learning difficulties (eg, dyslexia, attention-deficit/hyperactivity disorder), have been largely neglected in research on multiple-document comprehension. Consequently, little is known about how elementary-level children with and without learning difficulties process multiple documents and the factors that shape their performance. Moreover, it is unclear how multiple-document comprehension fits within current frameworks for identifying and supporting individuals with reading difficulties and disabilities (eg, Fletcher et al., 2018). This study was designed to take an initial step toward closing these gaps. Specifically, we examined how variation in other literacy skills (eg, word-level reading) is associated with one aspect of multiple document comprehension: intertextual integration.

Intertextual Integration

In psychology and education, intertextual integration has been conceptualized as the mental processes of corroborating, synthesizing, and organizing information from multiple document sources (Barzilai et al., 2018; Brand-Gruwel et al., 2009). Many frameworks, theories, and models have been proposed to account for intertextual integration and multiple-document comprehension more generally (Espinas & Chandler, 2024). The Documents Model Framework (DMF) was among the first and remains influential (Perfetti et al., 1999). The DMF builds upon memory-based models of discourse processing (eg, Construction-Integration Model; Kintsch, 1988) in proposing that to comprehend a situation presented across multiple documents, readers may need to construct at least two, interconnected mental representations: the Intertext Model and the Situations Model. The Intertext Model represents relations among the documents as well as between each document and the situation. The precise content of the intertext model varies depending on the reader's understanding of the task, their unique profile of cognitive resources, and their motivation to engage in the task. For instance, when reading to form an argument, a reader may construct a detailed intertext model containing information about each document's source (eg, author, setting, form), rhetorical goals (eg, intent, audience), and content. The Situations Model represents the situation presented among the documents. Under idealized circumstances, this would reflect a complete and accurate representation of the situation. However, the reader's actual representation may be distorted by their prior beliefs (Perfetti et al., 1999).

Over the past 30 years, intertextual integration, and multiple-source use more generally, has become a topic of growing interest (Braasch et al., 2018). The bulk of this work has focused on adolescents and adults. However, a small number of descriptive (eg, Many, 1996; Raphael & Boyd, 1991) and intervention (Guthrie et al., 1998; Wissinger et al., 2021) studies have involved elementary-level children. In the following, we will focus on findings from this nascent childhood literature.

In the context of social studies instruction, VanSledright (2002) observed few instances of fourth- and fifth-grade U.S. children corroborating textual information, either spontaneously or when directed. This suggests intertextual integration may prove difficult for children. An early study by Raphael and Boyd (1991) provides further evidence to this point. They had fourth- and fifth-grade U.S. children (N = 20) synthesize information from two parallel-structured text sets. For each set, children were directed to write a report about ideas presented in the texts. Though their responses varied, children generally struggled to produce balanced and coherent essays (Study 1). Interviews with 13 additional children (Study 2) revealed that difficulties arose for several reasons, including reliance on associative memory and recall, audience insensitivity, unnecessary elaborations, and copying from the source documents. The interviews also revealed large differences in children's understanding of the task, which appeared to shape the strategies they used to construct meaning from the documents. Later studies have provided additional evidence that tasks involving intertextual integration prove difficult for elementary-level children (Garcia-Rodicio et al., 2023; Kiili et al., 2020).

Factors that Influence Intertextual Integration

As with single-document comprehension (Snow, 2002), intertextual integration results from the interaction of reader, task, document, and contextual factors (List & Alexander, 2019). For instance, studies have shown that intertextual integration is affected by features of the task, including the context in which it is being performed (eg, school, home), its purpose (eg, argumentation vs summarization), and the reader's perception of it (McNamara et al., 2023; Wiley & Voss, 1999). Performance may also be affected by features of the documents, such as how related each source appears (Kim & Millis, 2006; Kurby et al., 2005), whether the sources present complementary or conflicting accounts (Saux et al., 2017), and whether the documents are printed or digital (Singer Trakhman et al., 2023). Finally, performance can also be affected by the reader's judgments about the relevance and trustworthiness of each document (Londra & Saux, 2023); their knowledge, beliefs, and interest in the situation and task (Demir et al., 2024; List & Alexander, 2019); and their unique profiles of cognitive and metacognitive skills (Espinas & Chandler, 2024; List & Alexander, 2019; Rouet et al., 2017).

The interaction of these contextual, task, document, and reader factors can result in different patterns of metacognitive and cognitive strategy use (Britt et al., 2018; List & Alexander, 2019), which may ultimately affect the structure and quality of the representations that the reader forms (Perfetti et al., 1999; Rouet et al., 2017). Much has been learned about each set of factors and how they relate for adolescents and adults (Braasch et al., 2018). However, little is known about intertextual integration for elementary-level children and the factors that affect their performance.

Evidence from a small number of studies indicates that single-document comprehension (SDC) and word-level reading (WLR) are positively and moderately associated with intertextual integration among children spanning Grades 3 through 5 in Italy (Florit, Cain et al., 2020; Florit, De Carli et al., 2020; Raccanello et al., 2022), the Netherlands (Beker et al., 2019), and the United States (Hattan et al., 2023). Notably, though, only one study has involved children below fourth grade (Hattan et al., 2023). Furthermore, although individuals with learning difficulties are starting to be included in research on multiple-document comprehension (Andresen, Anmarkrud & Bråten, 2019; Andresen, Anmarkrud, Salmerón et al., 2019; Englert et al., 1988, 1989; Kanniainen et al., 2022; Sabatini et al., 2014), we are aware of no studies that have examined predictors of intertextual integration among children with learning difficulties (see Espinas & Chandler, 2024). Additionally, in most of the existing studies, measures of intertextual integration were confounded with other multiple-document comprehension processes (eg, source selection and evaluation). There are valid reasons for this approach. Nonetheless, it limits what can be learned about associations with intertextual integration per se.

Purpose and Research Question

We examined associations among intertextual integration, SDC, WLR, and PRF (passage-reading fluency) among third-grade children with reading difficulties and disabilities. This work builds upon previous research that examined associations among WLR, PRF, and SDC (Peng et al., 2019), and associations among these skills and intertextual integration with older, mostly neurotypical adolescents and adults (Espinas & Chandler, 2024). Based on studies with slightly older, neurotypical children (Beker et al., 2019; Florit, Cain et al., 2020; Florit, De Carli et al., 2020), we hypothesized we would find small to moderate positive effects for WLR and PRF, and a small positive effect for SDC.

Method

Participants

The sample comprised third-grade children from the first cohort of a multi-year study aimed at testing the effects of a reading intervention. Participants were drawn from two public school districts located in metropolitan areas in Tennessee and Texas. Both districts have traditionally included large proportions of schools receiving Title I assistance.

We used a screening process to identify third-grade children with reading difficulties and disabilities. We screened all consenting third-grade children in the participating schools and classes with the reading comprehension subtest of the Gates-MacGinitie Reading Tests-Fourth Edition (GMRT-4; MacGinitie et al., 2010). All who scored at or below the 30th percentile were eligible to participate and were randomly assigned at both sites to an intervention or a comparison condition. The 30th percentile has been used as a cutoff in other studies involving individuals with reading difficulties (eg, Oslund et al., 2018). Students with an identified vision, hearing, or intellectual disability were excluded.

These standard selection criteria allowed for a clear definition of a sample of students with reading difficulties and students with identified disabilities who struggle with reading, based on their current significant difficulties in understanding text, thus providing a replicable sample. In the current study, we included only participants assigned to the intervention condition to avoid burdening teachers in the comparison condition with additional testing. Four participants did not complete the assessments and were removed from this study, leaving a total of 70 across both sites.

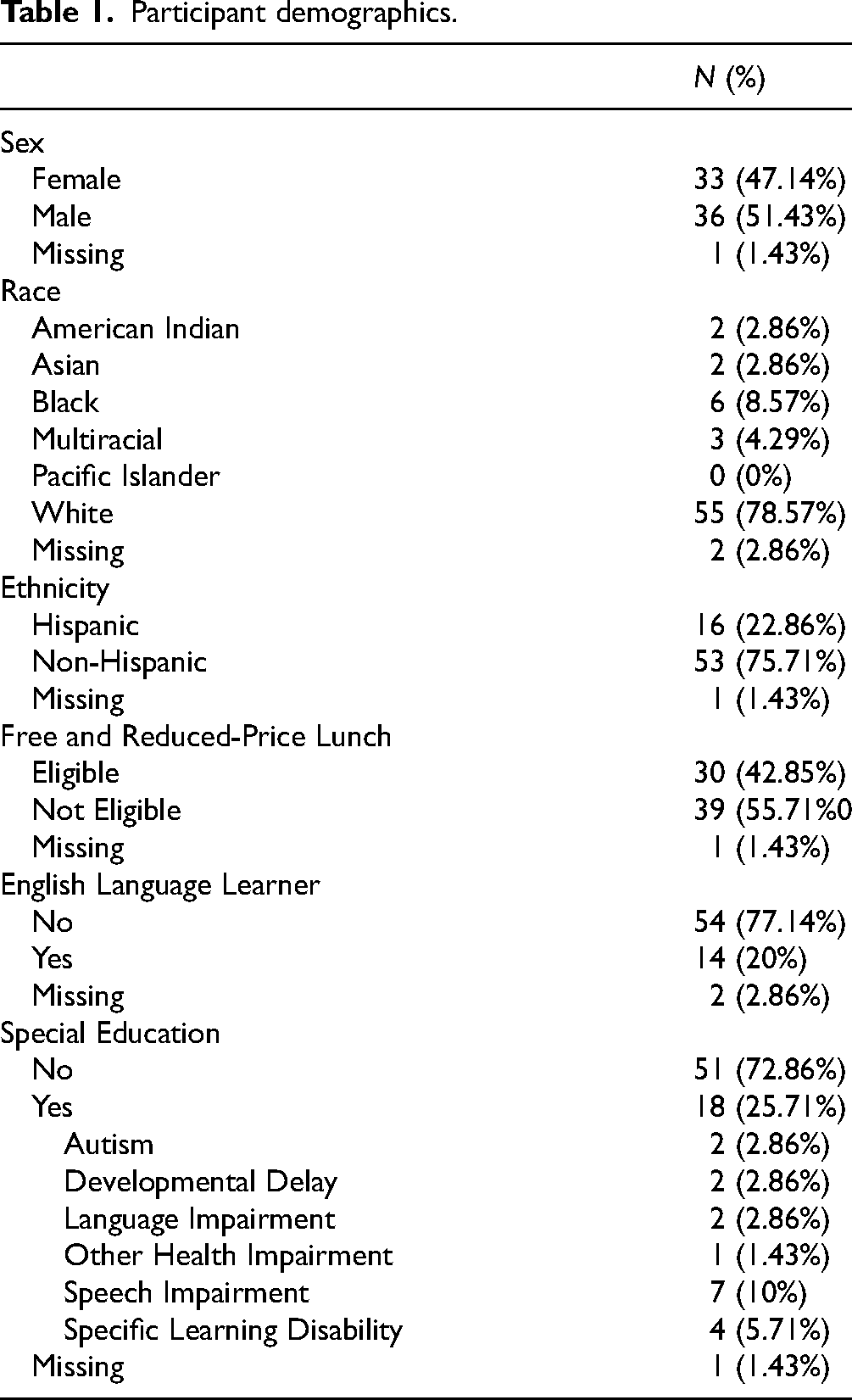

Participants’ demographics are presented in Table 1. Participants were hierarchically clustered within 32 teachers, 9 schools, and 2 states and were approximately evenly split between female (47.14%) and male. The sample was 2.86% American Indian, 2.85% Asian, 8.57% Black, 4.29% Multiracial, and 78.57% White. Sixteen participants (22.86%) were Hispanic, of whom one was American Indian and 15 were White. Twenty percent of participants were categorized as English Language Learners (ELs), and 42.85% were eligible for free and reduced-price lunch. About 26% received special education services. Of the students receiving special education services, 4 were Black, 13 were White, and 1 was Multiracial. Disability categories included autism (2.86%), developmental delay (2.86%), language impairments (2.86%), other health impairment (1.43%), speech impairments (10%), and specific learning disability (5.71%). The mean age was 8.59 years (SD = 0.31).

Table 1. Participant demographics.

Measures

Word-Level Reading

Woodcock-Johnson Test of Achievement-Fourth Edition (WJ-IV) Letter-Word Identification

The Letter-Word Identification test (Schrank et al., 2014) is an untimed measure of the ability to identify isolated English written letters and words. Items are presented from least to most difficult. The basal is the six lowest-numbered items correct, or Item 1. The ceiling is the six highest-numbered items incorrect, or the last test item. Items are scored dichotomously and summed for a total score. The measure has a median reliability of .90 for individuals 5 to 19 years of age (Schrank et al., 2014).

WJ-IV Word Attack

Word Attack (Schrank et al., 2014) is an independently administered, untimed measure of the ability to apply English phonics and structural analysis skills to pronounce unfamiliar pseudowords in printed format (eg, tiff). Items are presented from least to most difficult. The basal is the six lowest-numbered items correct, or Item 1. The ceiling is the six highest-numbered items incorrect, or the last test item. Items are scored dichotomously and summed for a total score. The measure has a median reliability of .87 for individuals 5 to 19 years of age (Schrank et al., 2014).

TOWRE-II Word Reading Efficiency

TOWRE-II Word Reading Efficiency (Torgesen et al., 2012) is an independently administered, timed measure of the ability to pronounce high-frequency English words. One hundred and four words are presented in list form from least to most difficult. Participants have 45 s to read as many words as possible. Items are scored dichotomously and summed for a total score. Test-retest reliability estimates with the same forms averaged .89 to .93 for children in Grades 1 to 12 (Torgesen et al., 2012).

TOWRE-II Phonemic Decoding

TOWRE-II Phonemic Decoding (Torgesen et al., 2012) is an independently administered, timed measure of the ability to read pseudowords (eg, pim) presented in list form from least to most difficult. Participants have 45 s to read as many words as possible. Items are scored dichotomously and summed for a total score. Test-retest reliability estimates with the same forms averaged .89 to .93 for children in Grades 1 to 12 (Torgesen et al., 2012).

Passage Reading Fluency

Passage-reading fluency (PRF) was measured with the Dynamic Indicators of Basic Early Literacy Skills (DIBELS) Oral Reading Fluency measure (see the note to Table 3).

Single-Document Comprehension

GMRT-4 Comprehension Subtest

The Comprehension subtest of the Gates-MacGinitie Reading Tests-Fourth Edition (GMRT-4; MacGinitie et al., 2010) is group-administered and contains narrative and expository structured passages, each followed by three to six multiple-choice questions. Participants have 35 min to read and answer questions. Items are scored dichotomously and summed for a total score. For Grade 3 children assessed in the fall, the measure has reliability (ie, KR20) of .93 (MacGinitie et al., 2002).

WJ-IV Passage Comprehension

Passage Comprehension (Schrank et al., 2014) is an independently administered, untimed measure of the ability to construct propositional representations, integrate syntactic and semantic properties of written words and sentences, and construct bridging inferences. Participants are directed to point to the picture corresponding to a rebus, or to a printed word, and then silently read passages to identify missing words. Items are scored dichotomously and summed for a total score. The measure has a median reliability of .83 for individuals 5 to 19 years of age (Schrank et al., 2014).

Intertextual Integration

To our knowledge, no test had been developed to measure intertextual integration with third-grade struggling readers. In other studies with children, writing tasks have generally been used. However, we believed this would prove too difficult for our targeted population. Therefore, we used the guidelines proposed by Royer (2001) to develop a sentence and inference verification task. Such tasks have been used in multiple-document comprehension studies with adolescents and adults.

In the test we developed, participants first independently read two brief, informational texts in a fixed order. To control for differences in background knowledge, the texts were written about a fictional animal (ie, wugs) that inhabits an alien world. Each text was 12 sentences long and evenly divided into three paragraphs. The texts were formatted as stand-alone, physical books. We used Coh-Metrix (McNamara et al., 2014) to ensure that the texts were written at a third-grade difficulty level. Participants were given a maximum of 12 min to read the two text documents. During this time, test administrators did not provide corrective feedback.

After reading, participants were administered two sets of questions about the texts. They first answered 12 sentence verification questions designed to assess literal recall of information presented within the texts, with questions evenly divided by text but randomly ordered within the test. Participants then answered five inference verification questions designed to assess the ability to connect and combine information from across the two text documents (ie, intertextual integration). Prior to answering the two sets of test questions, participants completed practice questions. The test was administered in small groups and designed to take 35 min. The test administrator read aloud all questions. Participants recorded their answers in individual response packets and were instructed not to work ahead. They were allowed to consult the texts as needed. Items were scored dichotomously and summed for a total score (maximum = 5). The sample-based internal consistency was α = .46.
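
As an illustration of how an internal consistency estimate such as the α above can be obtained from dichotomously scored items, the following minimal Python sketch computes Cronbach's alpha. The function name and the response matrix are hypothetical and are not the study's data or code.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """Cronbach's alpha for a participants-by-items score matrix.

    item_scores: list of rows, one per participant; each row holds that
    participant's item scores (here 0 = incorrect, 1 = correct).
    """
    n_items = len(item_scores[0])
    if n_items < 2:
        raise ValueError("alpha needs at least two items")
    # Sample variance of each item across participants.
    item_vars = [variance([row[j] for row in item_scores]) for j in range(n_items)]
    # Sample variance of the summed total score.
    total_var = variance([sum(row) for row in item_scores])
    return (n_items / (n_items - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical responses: 6 participants x 5 inference verification items.
responses = [
    [1, 1, 0, 1, 0],
    [0, 1, 0, 0, 0],
    [1, 1, 1, 1, 0],
    [0, 0, 0, 1, 0],
    [1, 0, 1, 1, 1],
    [0, 0, 0, 0, 1],
]
alpha = cronbach_alpha(responses)
```

With only five dichotomous items, alpha is sensitive to every item covariance, which is one reason short tests of this kind often yield modest estimates.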

Procedure

Prior to collecting data, the study was approved by the Vanderbilt University Institutional Review Board. Verbal consent was obtained from each student participant, and written consent from each student's parent or guardian. Participants were assessed in three sessions (screening; WLR, PRF, SDC; intertextual integration) in the fall of their third-grade year prior to intervention instruction. Data were collected by graduate-level students and full-time research assistants who were trained to administer and score each assessment with 100% reliability. Participants were tested in a quiet location in their schools during normal school hours. Research assistants independently double-scored and double-entered all test data. Scores were checked for discrepancies. There was 100% scoring agreement.

Analysis

We conducted correlational and multiple-regression analyses to examine concurrent associations among WLR, PRF, SDC, and intertextual integration. All analyses were conducted in RStudio (Version 2023.06.0+421) with specific packages noted throughout. Model assumptions were reasonably met, and results were generally robust to sensitivity analyses.

Data reduction

To reduce the number of variables in the model and increase power (Brown, 2015), we used a two-stage factor score regression method (McNeish & Wolf, 2020). In Stage 1, we fit a one-dimensional confirmatory factor analysis (CFA) model with total scores from the WJ-IV Word Attack, the WJ-IV Letter-Word Identification, the TOWRE-II Phonemic Decoding, and the TOWRE-II Word Reading Efficiency. We used the maximum a posteriori (MAP) method (Thomson, 1938) implemented in lavaan (Rosseel, 2012) to estimate and extract factor scores for each participant. With the MAP method, each participant's location on the factor is estimated (Thurstone, 1935). This is recommended when factor scores are intended to be used later as predictors (McNeish & Wolf, 2020). In Stage 2, these factor scores served as the WLR predictor in the regression models.
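
To make the factor-scoring step concrete, the sketch below computes regression-method factor scores for a one-factor model, which coincide with MAP scores when the factor and residuals are assumed normal. It is written in Python for illustration only; the analyses reported here were run in R with lavaan. The loadings, residual variances, and scores are hypothetical placeholders for values that would come from a fitted CFA, and the factor variance is assumed to be fixed at 1.

```python
def regression_factor_scores(data, loadings, uniquenesses):
    """Regression-method factor scores for a one-factor model.

    data: list of rows, one per participant, with one score per indicator.
    loadings: unstandardized loading of each indicator on the factor.
    uniquenesses: residual variance of each indicator.
    Assumes the factor variance is fixed to 1 and residuals are uncorrelated.
    """
    n_vars = len(loadings)
    means = [sum(row[j] for row in data) / len(data) for j in range(n_vars)]
    # With Sigma = lambda*lambda' + Theta (Theta diagonal), the score weights
    # Sigma^{-1} * lambda simplify to Theta^{-1} lambda / (1 + lambda' Theta^{-1} lambda).
    ratio = [loadings[j] / uniquenesses[j] for j in range(n_vars)]
    denom = 1.0 + sum(loadings[j] * ratio[j] for j in range(n_vars))
    weights = [r / denom for r in ratio]
    return [
        sum(weights[j] * (row[j] - means[j]) for j in range(n_vars))
        for row in data
    ]


# Hypothetical total scores on four word-level reading measures.
scores_matrix = [
    [40, 12, 45, 18],
    [52, 20, 60, 30],
    [35, 9, 38, 12],
    [47, 16, 52, 24],
]
hypothetical_loadings = [6.0, 4.0, 8.0, 6.5]
hypothetical_uniquenesses = [10.0, 6.0, 20.0, 12.0]
factor_scores = regression_factor_scores(
    scores_matrix, hypothetical_loadings, hypothetical_uniquenesses
)
```

Indicators with larger loadings relative to their residual variance receive more weight, so more reliable measures contribute more to each participant's estimated position on the factor.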

Clustering

Participants (Level 1) were hierarchically clustered within classes (Level 2), schools (Level 3), and states (Level 4). When clustering exists and is ignored, the estimated variances and standard errors are generally too small, which increases the likelihood of Type I error (McNeish, 2023). To examine what proportion of the total variance was explained by this clustered structure, we calculated intraclass correlation coefficients (ICCs) for each variable (Hox et al., 2018). The ICCs ranged from 0 to .17 at the teacher level (Level 2), 0 to .15 at the school level (Level 3), and 0 to .05 at the state level (Level 4). The ICCs do not indicate how much standard errors will be affected by clustering. However, they do indicate that scores on the dependent and predictor variables vary considerably across teachers and schools. Accordingly, we concluded that the clustered structure at these levels should not be ignored.
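
For readers unfamiliar with ICCs, the following Python sketch shows one simple way to estimate the proportion of variance attributable to a single level of clustering. It uses the one-way ANOVA estimator rather than the multilevel variance-component approach described by Hox et al. (2018), so it is a swapped-in simplification and may not match how the values above were obtained. The function name and scores are hypothetical.

```python
def icc_oneway(groups):
    """One-way ANOVA estimate of the intraclass correlation, ICC(1).

    groups: dict mapping a cluster label (eg, a teacher) to a list of scores.
    Handles unequal cluster sizes; negative estimates are truncated to 0.
    """
    k = len(groups)
    sizes = [len(values) for values in groups.values()]
    n_total = sum(sizes)
    grand_mean = sum(sum(values) for values in groups.values()) / n_total

    ss_between = 0.0
    ss_within = 0.0
    for values in groups.values():
        group_mean = sum(values) / len(values)
        ss_between += len(values) * (group_mean - grand_mean) ** 2
        ss_within += sum((x - group_mean) ** 2 for x in values)

    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (n_total - k)
    # Adjusted average cluster size for unbalanced designs.
    n0 = (n_total - sum(s * s for s in sizes) / n_total) / (k - 1)

    denominator = ms_between + (n0 - 1) * ms_within
    if denominator == 0:
        return 0.0  # no variance at all, so nothing to partition
    return max((ms_between - ms_within) / denominator, 0.0)


# Hypothetical intertextual integration scores grouped by teacher.
scores_by_teacher = {
    "teacher_a": [2, 3, 3],
    "teacher_b": [1, 2],
    "teacher_c": [4, 3, 5, 4],
}
icc_teacher = icc_oneway(scores_by_teacher)
```

Truncating negative estimates at zero is a common convention and is consistent with a reported lower bound of 0.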

We considered several approaches for handling the clustered data structure. Although mixed-effects models (MEMs) are the most common approach used in educational and psychological research (Dedrick et al., 2009), we opted against this approach for three reasons. First, we were underpowered at Levels 2 and higher to detect even large-magnitude effects (Spybrook, 2008). The small sample size also made it unlikely that the models would converge (McNeish & Stapleton, 2016). Second, it is unlikely that the data would have satisfied important MEM assumptions (eg, exogeneity; McNeish & Kelley, 2019). Third, our research question concerned only Level 1 variables.

We decided to use sandwich standard errors for several reasons. In this approach, a fixed-effects regression model is fit using least squares estimation. The coefficient standard errors are then corrected for dependencies induced by clustering among the model errors. This is intended to estimate and account for covariances between observations in the same cluster. Moreover, because the only aspect of the model that changes is the method for computing the standard errors, measures of model fit such as R² are not affected. For these reasons, we used sandwich standard errors.
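
The general idea of a sandwich standard error can be sketched for the simplest case of one predictor plus an intercept. The Python example below computes the HC3 variant (Long & Ervin, 2000), which is the correction named in the Results, alongside the classical standard error. It is an illustration under stated assumptions, not the study's code: it does not include any cluster adjustment, and the function name and data are hypothetical.

```python
import math


def ols_slope_with_hc3(x, y):
    """Fit y = b0 + b1*x by least squares; return (b1, classical SE, HC3 SE)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y)) / sxx
    intercept = mean_y - slope * mean_x
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]

    # Classical SE assumes independent errors with constant variance.
    sigma2 = sum(e * e for e in residuals) / (n - 2)
    se_classical = math.sqrt(sigma2 / sxx)

    # Leverage of each observation in simple regression.
    leverage = [1 / n + (xi - mean_x) ** 2 / sxx for xi in x]
    # HC3 inflates each squared residual by 1 / (1 - h_i)^2 before it enters
    # the "meat" of the sandwich; the slope element reduces to this sum.
    var_hc3 = sum(
        (xi - mean_x) ** 2 * (e / (1 - h)) ** 2
        for xi, e, h in zip(x, residuals, leverage)
    ) / sxx ** 2
    return slope, se_classical, math.sqrt(var_hc3)


# Hypothetical predictor and outcome values.
x_values = [1, 2, 3, 4, 5, 6]
y_values = [1.1, 1.9, 3.2, 3.8, 5.3, 5.9]
slope, se_classical, se_hc3 = ols_slope_with_hc3(x_values, y_values)
```

Note that the slope estimate is identical under both approaches; only the standard error, and therefore the test statistic and p value, changes.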

Results

Descriptive and Correlational Analyses

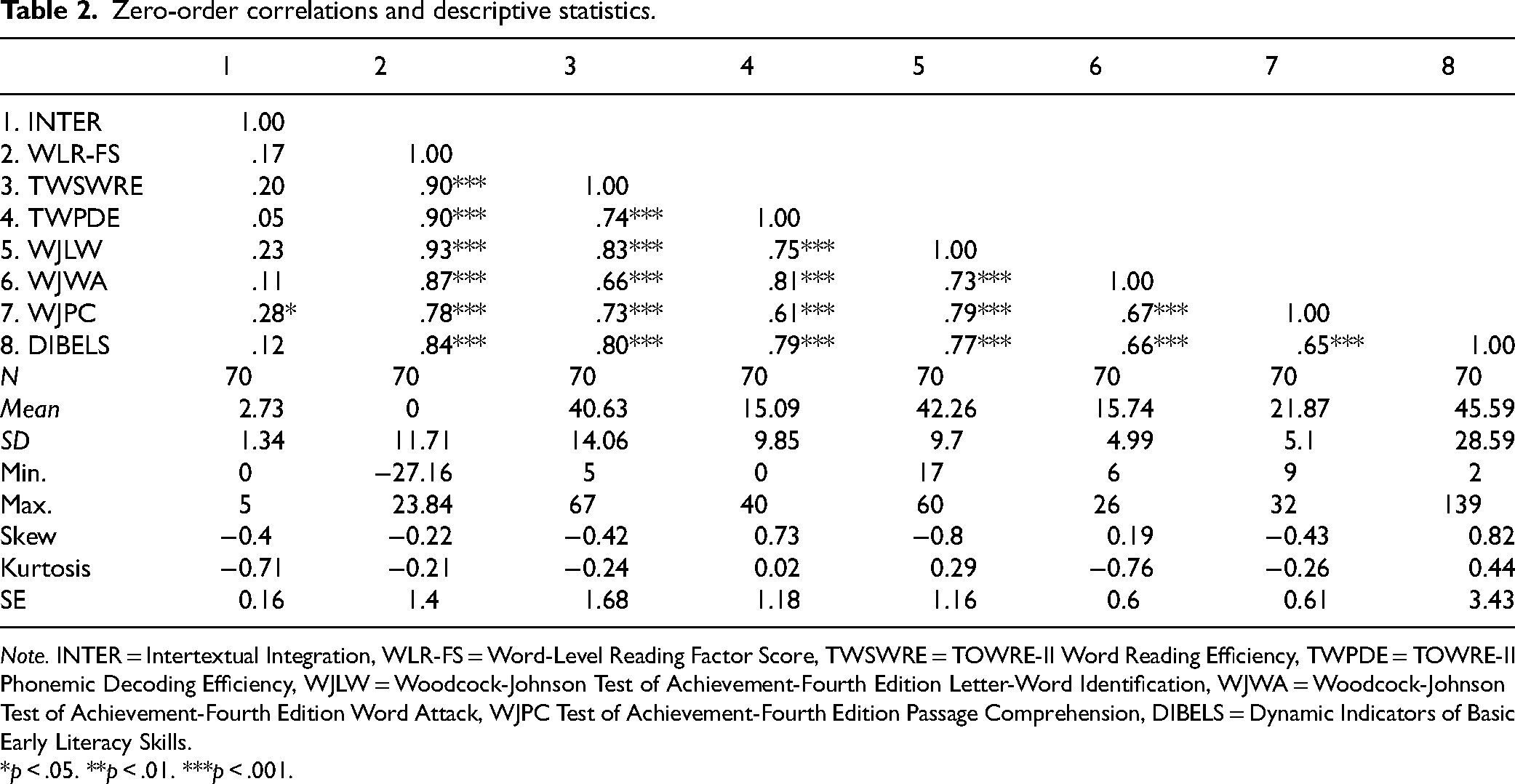

Descriptive statistics (eg, means, variances, skewness, kurtosis) are reported for each measure in Table 2. Internal consistency estimates (ie, alpha, omega) are also reported in Table 2 for the intertextual integration measure. Zero-order correlations among the test scores are presented in Table 2. Intertextual integration was positively and weakly correlated with scores from the other tests (rs = .05–.28). Among these, intertextual integration was significantly correlated only with SDC (r = .28, p = .01). Its association with the WLR factor score was small and nonsignificant (r = .17, p = .15), as were its correlations with the individual WLR measures (rs = .05–.23, ps > .05). Intertextual integration was also weakly and nonsignificantly associated with PRF (r = .12, p = .38). Associations among the three predictor variables were all larger and significant. Word-level reading had a correlation of r = .78 (p < .001) with SDC and r = .84 (p < .001) with PRF. The correlation between SDC and PRF was r = .65 (p < .001).

Table 2. Zero-order correlations and descriptive statistics.

Note. INTER = Intertextual Integration, WLR-FS = Word-Level Reading Factor Score, TWSWRE = TOWRE-II Word Reading Efficiency, TWPDE = TOWRE-II Phonemic Decoding Efficiency, WJLW = Woodcock-Johnson Test of Achievement-Fourth Edition Letter-Word Identification, WJWA = Woodcock-Johnson Test of Achievement-Fourth Edition Word Attack, WJPC = Woodcock-Johnson Test of Achievement-Fourth Edition Passage Comprehension, DIBELS = Dynamic Indicators of Basic Early Literacy Skills.

*p < .05. **p < .01. ***p < .001.

Multiple-Regression Analyses

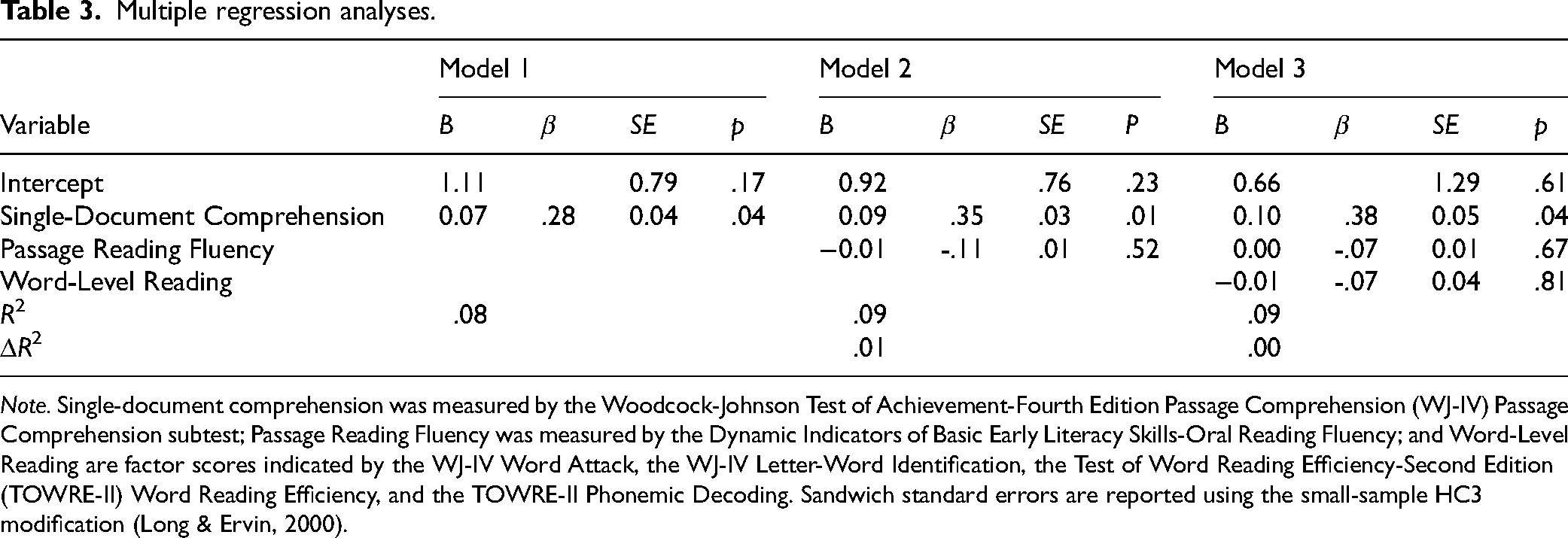

We began by examining potential control variables by simultaneously entering participant gender, free and reduced-price lunch status, EL status, and special education status into a multiple-regression analysis. None of these variables significantly predicted intertextual integration (p > .05), and they were, therefore, excluded from further analyses. We then fit three multiple-regression analyses using a fixed-order hierarchical procedure. We used the sandwich package (Zeileis, 2022) to calculate sandwich standard errors with the HC3 small-sample correction (Long & Ervin, 2000) and the QuantPsyc package (Fletcher, 2022) to calculate standardized beta coefficients. Results from the three models are presented in Table 3. Model 3 is expressed as

$$\text{INTER}_i = \beta_0 + \beta_1\,\text{SDC}_i + \beta_2\,\text{PRF}_i + \beta_3\,\text{WLR}_i + \varepsilon_i$$

where $i$ indexes participants and $\varepsilon_i$ is the residual.

Table 3. Multiple regression analyses.

Note. Single-document comprehension was measured by the Woodcock-Johnson Test of Achievement-Fourth Edition (WJ-IV) Passage Comprehension subtest; Passage Reading Fluency was measured by the Dynamic Indicators of Basic Early Literacy Skills-Oral Reading Fluency; and Word-Level Reading was represented by factor scores indicated by the WJ-IV Word Attack, the WJ-IV Letter-Word Identification, the Test of Word Reading Efficiency-Second Edition (TOWRE-II) Word Reading Efficiency, and the TOWRE-II Phonemic Decoding. Sandwich standard errors are reported using the small-sample HC3 modification (Long & Ervin, 2000).

In Model 1, SDC had a small, positive, and significant effect (β = .28, p = .04). The overall model accounted for about 8% (R² = .08) of the variance in intertextual integration, F(1, 68) = 4.32, p = .04. In Model 2, the effect of SDC remained small but significant (β = .35, p = .01). Passage-reading fluency had a small, nonsignificant effect (β = -.11, p = .52) and did not significantly improve upon Model 1 (R² = .09, F(1) = 0.51, p = .48). In Model 3, the effect of SDC remained small and significant (β = .05, p = .04). The effects of both PRF (β = -.07, p = .67) and WLR (β = -.07, p = .81) were small and nonsignificant. The addition of WLR did not significantly improve upon Model 2, F(1) = 0.077, p = .78.
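
The logic of testing whether an added predictor improves a hierarchical model can be illustrated with the classical F test for a change in R². The Python sketch below is a simplified, non-robust version with a hypothetical function name. Because the tests reported above rest on sandwich standard errors and the published R² values are rounded, this formula is not expected to reproduce the reported F statistics.

```python
def f_change(r2_reduced, r2_full, n, k_full, k_added=1):
    """Classical F test for the R-squared gain from adding predictors.

    r2_reduced: R-squared of the model before the predictors were added.
    r2_full: R-squared of the model after they were added.
    n: sample size.
    k_full: number of predictors in the fuller model (excluding the intercept).
    k_added: number of predictors added in this step.
    Returns (F, numerator df, denominator df).
    """
    df1 = k_added
    df2 = n - k_full - 1
    f_stat = ((r2_full - r2_reduced) / df1) / ((1 - r2_full) / df2)
    return f_stat, df1, df2


# Illustrative call using the rounded R-squared values for Models 1 and 2.
f_stat, df1, df2 = f_change(r2_reduced=0.08, r2_full=0.09, n=70, k_full=2)
```

A gain of one percentage point in explained variance with 70 participants yields a small F, which is consistent with the conclusion that PRF added little beyond SDC.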

Discussion

Results from this study reinforce and extend several previous findings. Consistent with other studies involving children in the upper-elementary grades, our results indicate that the intertextual integration of third-grade children with reading difficulties is only weakly predicted by other reading skills. Indeed, the model containing SDC, PRF, and WLR (Model 3) accounted for only about 9% of the variance in intertextual integration. Also, as has been found in other studies (Beker et al., 2019; Florit, Cain et al., 2020; Florit, De Carli et al., 2020; Hattan et al., 2023), and consistent with our hypothesis, SDC positively and significantly predicted intertextual integration, even after controlling for PRF and WLR.

Notably, we used a standardized measure of SDC with documents unrelated to those used to measure the children's intertextual integration. In the single other study with third-grade children, the same documents were used for both measures (Hattan et al., 2023), likely inflating relations between them. Furthermore, our results were robust to most approaches for handling the clustered data structure. In contrast to previous studies, and inconsistent with our prediction, PRF and WLR were nonsignificant and weak predictors of intertextual integration (rs = .05 to .23). Moreover, they did not significantly improve upon the predictive power of models that included SDC.

Our findings suggest that the ability to integrate information from two or more texts is only moderately related to the ability to form a coherent representation of a single text (ie, SDC) and weakly related to WLR and PRF skills for third-grade children with reading difficulties and disabilities. This is consistent with prior work with slightly older neurotypical children (Florit, Cain et al., 2020; Florit, De Carli et al., 2020; Raccanello et al., 2022). What remains unclear from these results is what does account for substantial variation in children's intertextual integration. We consider this matter further in the section below on future directions.

Although our focus in this study was on children with reading difficulties and disabilities, 20% of participants were learning English in addition to another language (see Table 1). Multilingual learners have often been included in studies of intertextual integration (Espinas & Chandler, 2024) but have rarely been a specific topic of interest. As a cause or consequence, frameworks, theories, and models of intertextual integration have little to say about how multilingual status may impact performance. One exception is a study by Davis et al. (2017), who examined intertextual integration with fifth- through seventh-grade children in the United States. Seventy-two percent (n = 60) of participants were multilingual and classified as ELs. They found that EL status did not significantly predict intertextual integration after accounting for prior content knowledge, strategy knowledge and use, and epistemic beliefs. Furthermore, think-aloud protocols with a subsample of seventh graders revealed that EL participants could effectively engage in intertextual integration. However, 90% of this subsample was proficient or advanced in English reading. It is unclear how these results might generalize to EL students with reading difficulties. Unfortunately, we were not powered in this study to explore this question with subgroup analyses.

On the matter of practical implications, consider a third-grade end-of-unit assessment from a language arts curriculum used in approximately 20% of U.S. elementary schools (Kaufman et al., 2017). The assessment involves reading and comparing main ideas and details presented in two texts about the water cycle (EngageNY: Common core curriculum; https://www.nysed.gov/curriculum-instruction/engageny). Much has been learned about how children may separately process each of these texts (Fox, 2009); the factors that will influence their SDC (Carlson et al., 2022); and, critically, how to effectively design instruction to support them in fluently recognizing the words in each text and understanding the situations presented in them (Castles et al., 2018).

However, our results suggest that the skills involved with reading single texts are only weakly related to the assessment's primary aim: intertextual integration. This is not to say that attention to WLR, PRF, and SDC should not hold a secure and prominent place in elementary education. What it does suggest, though, is that when developing intertextual integration skills is a goal, as reflected in this assessment and in current college- and career-readiness standards, the scope of current elementary reading assessment and instruction may need to be broadened.

One key difference between results from our study and others that have involved children should be noted. In previous studies, but not the present investigation, WLR was moderately and significantly associated with intertextual integration, and was significantly predictive of intertextual integration even after controlling for other reading, demographic, cognitive, and affective variables (Florit, Cain et al., 2020; Florit, De Carli et al., 2020; Raccanello et al., 2022). Two factors may account for these discrepant findings.

First, in the present study we focused on a different population. Indeed, this is the first intertextual integration study to our knowledge that has involved children with reading difficulties and disabilities. Our study is also only the second to have examined intertextual integration with third-grade children and with children learning to read in English in the United States. Previous studies have excluded children with reading difficulties and disabilities and sampled slightly older populations in primarily non-English-speaking European countries.

These differences likely influenced the results. For one, the potentially smaller variation in our sample's reading skills may have attenuated the associations among WLR, PRF, SDC, and intertextual integration. In addition, in research on WLR and SDC, differences in languages and writing systems have been found to affect the rate of literacy acquisition and associations among literacy variables (Perfetti & Harris, 2013). For example, although English and Italian are both written with the Latin alphabet, Italian has a more transparent orthographic system (Burani et al., 2017). Italian children benefit from this transparency and acquire fluent word reading earlier than children learning to read in English (Seymour et al., 2003). Children in the present study had less developed WLR skills than their older, neurotypical Italian counterparts (Florit, Cain et al., 2020; Florit, De Carli et al., 2020; Raccanello et al., 2022). Notably, though, on each WLR measure, the distribution was largely normal (see Table 2).
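To illustrate how restricted variation can shrink an observed correlation, the sketch below applies the standard correction formula for direct range restriction on one variable, run in the "forward" direction. It is a minimal illustration only: the unrestricted correlation of .40 and the standard deviation ratios are hypothetical values, not estimates from this study or from the studies cited above, and the formula assumes linear, homoscedastic relations and selection on a single variable.

```python
import math


def restricted_correlation(r_unrestricted: float, sd_ratio: float) -> float:
    """Expected correlation after direct range restriction on one variable.

    r_unrestricted: correlation in the full (unrestricted) population.
    sd_ratio: SD of the selected variable in the restricted sample divided
              by its SD in the unrestricted population (hypothetical here).
    """
    if not -1.0 <= r_unrestricted <= 1.0:
        raise ValueError("r_unrestricted must lie in [-1, 1]")
    if sd_ratio <= 0:
        raise ValueError("sd_ratio must be positive")
    numerator = r_unrestricted * sd_ratio
    denominator = math.sqrt(
        1.0 - r_unrestricted ** 2 + (r_unrestricted ** 2) * (sd_ratio ** 2)
    )
    return numerator / denominator


if __name__ == "__main__":
    # Hypothetical values chosen only to show the direction of the effect.
    for ratio in (1.0, 0.8, 0.6):
        r = restricted_correlation(0.40, ratio)
        print(f"SD ratio {ratio:.1f}: expected r = {r:.2f}")
```

With a ratio of 1.0 (no restriction) the correlation is unchanged, and it declines as the sample becomes more homogeneous. This is the pattern of attenuation that a sample selected for reading difficulties could plausibly produce.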

Second, the present study's intertextual integration task, measure, and documents differed from those in previous studies with children. In previous studies, longer texts and larger document sets have been used (Beker et al., 2019; Florit, Cain et al., 2020; Florit, De Carli et al., 2020; Hattan et al., 2023), and performance has most often been measured with essay tasks (Florit, Cain et al., 2020; Florit, De Carli et al., 2020). Our decision to use simpler and shorter texts, and to assess intertextual integration with verification tasks, was motivated by a concern about the accessibility of alternative approaches for young children with reading difficulties and disabilities. We believe nonetheless that the materials and measure that we used are externally valid for our targeted population. Indeed, the text documents were developed to be similar in difficulty and format to what children would encounter as part of the larger study's intervention program. Furthermore, we used a task that did not require writing because most children with reading difficulties and disabilities also experience writing difficulties (Peterson et al., 2021). We were, therefore, concerned that a writing task would produce floor effects. The inference verification task we used instead produced a normally distributed set of responses (see Table 2).

In most previous work with children, intertextual integration was instead measured with essays, multiple-choice questions, and open-ended/application questions. Notably, research with adolescents and adults has shown that certain tasks lead to more integration than others (eg, summarizing vs argumentation; McNamara et al., 2024; Wiley & Voss, 1999). In research involving children, argumentation tasks were also frequently used (Florit, Cain et al., 2020; Florit, De Carli et al., 2020; Raccanello et al., 2022; Raphael & Boyd, 1991). However, in only one study (Raphael & Boyd, 1991) were children explicitly directed to integrate information from across the provided documents.

In the present study, participants were also not explicitly directed to integrate information between the documents. Moreover, although the documents were presented as printed booklets, information was not provided about their authors, dates of publication, publishers, or purposes. Accordingly, participants may not have perceived the task as one requiring much integration. Therefore, as in most previous work, this study likely provides insights only into how children with reading difficulties and disabilities spontaneously integrate information from across documents rather than how they perform when explicitly directed to do so.

Ultimately, it is unclear how these factors affect intertextual integration and its relations with the other reading variables considered in this study. It may be that argumentation tasks used in several of the other studies elicited more integration than the task used in the present study. However, these differences could also be attributed to more developed writing and argumentation skills in older and higher-performing children. As with research on reading comprehension development (Cain & Barnes, 2017), little is known about how intertextual integration unfolds across the elementary grades, how its development is mutualistically influenced by other cognitive and affective processes, and whether difficulties with intertextual integration reflect a qualitative difference or a developmental lag. Additional research is needed to sort out these matters. To that end, this study contributes initial insights into potential sources of variation in intertextual integration among children at a key point in their early literacy development.

Limitations

Our study was limited by several factors. First, the study's cross-sectional design greatly limits any inferences that can be drawn about the causal direction of the reported associations. Second, we were unable to recruit the planned number of participants. Consequently, the final model (Model 3) was slightly underpowered. To preserve power, we used sandwich standard errors rather than fixed- or mixed-effects models. Results were nonetheless generally robust to the modeling strategy.

Third, although we used well-validated measures for each predictor, our intertextual integration task had not been previously validated. Unfortunately, its scores were weakly correlated with the other reading measures. It may simply be that they measured unrelated skills. Alternatively, the low internal consistency of the intertextual integration scores may have attenuated the relations (Bollen, 1989). In either case, refinements are needed to improve score reliability. Notably, previous efforts to measure children's intertextual integration have run into similar reliability issues. For instance, in Hattan and colleagues’ (2023) study, the reliability of their multiple-choice intertextual integration measure was only α = .47 for third-grade children (Grade 3-6 range = .47 to .73). They also found weak relations between one of their intertextual integration measures and measures of SDC (r = .12 to .28). Furthermore, in this study we had to administer the intertextual integration measure in a group format. Accordingly, we had participants silently read the documents. As a result, we were unable to verify that they read them in full. It is possible that some did not and that their performance was affected by this. Finally, time and resource limitations precluded the inclusion of additional measures that may be relevant for predicting intertextual integration.
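The attenuation argument above can be made concrete with the classical (Spearman) attenuation formula, under which the expected observed correlation equals the true-score correlation multiplied by the square root of the product of the two measures' reliabilities. The sketch below is illustrative only. The outcome reliability of .47 is the third-grade coefficient alpha reported by Hattan et al. (2023); the true-score correlation of .50 and the predictor reliability of .90 are hypothetical values, and treating alpha as the reliability is itself a simplifying assumption.

```python
import math


def attenuated_correlation(r_true: float, rel_x: float, rel_y: float) -> float:
    """Expected observed correlation given measurement error in both scores.

    Classical test theory: r_observed = r_true * sqrt(rel_x * rel_y),
    where rel_x and rel_y are score reliabilities in (0, 1].
    """
    for name, rel in (("rel_x", rel_x), ("rel_y", rel_y)):
        if not 0.0 < rel <= 1.0:
            raise ValueError(f"{name} must lie in (0, 1]")
    return r_true * math.sqrt(rel_x * rel_y)


if __name__ == "__main__":
    r_true = 0.50         # hypothetical true-score correlation
    rel_predictor = 0.90  # hypothetical reliability of a reading predictor
    # .47 is the Grade 3 alpha reported by Hattan et al. (2023).
    for rel_outcome in (0.47, 0.73, 0.90):
        r_obs = attenuated_correlation(r_true, rel_predictor, rel_outcome)
        print(f"outcome reliability {rel_outcome:.2f}: expected r = {r_obs:.2f}")
```

Under these assumptions, an outcome reliability near .47 would shrink a moderate true relation to a noticeably weaker observed one. This is consistent with the possibility that low internal consistency, rather than unrelated skills, accounts for some of the weak correlations.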

Future Directions

As with any new venture, there are many potential directions for future research. Here we offer three recommendations. First, we recommend a program of developmental research. As noted in the title of Karmiloff-Smith's (1998) classic article, “Development itself is the key to understanding developmental disorders,” our ability to explain why some children experience difficulties with intertextual integration will depend on an understanding of its precursors and how it unfolds across development. To these ends, improvements are needed in how intertextual integration is measured (Recommendation 2). Greater insight is also needed regarding the factors that predict its growth (Recommendation 3).

Our second recommendation is for improvements in how intertextual integration is measured. In this study, and in others with children (eg, Hattan et al., 2023), measures of intertextual integration have suffered from poor internal consistency. Moreover, attention has not been paid to the dimensionality of these measures, their invariance across participant groups (eg, gender, multilingual status) and time, and their validity for informing insights into development and instructional design. To this final point, Raphael and Boyd (1991) noted, “it may be naïve merely to ask, [c]an younger students synthesize discourse from multiple sources?” (p. 5). Indeed, it may be more apt to ask how intertextual integration develops alongside children's ability to interpret task directions, search for relevant sources, evaluate them, and selectively decide what information to privilege and what to disregard. In research with adolescents and adults, efforts have been made along these lines (Goldman et al., 2013). This type of work should be extended to studies with children. Moreover, for these efforts to be useful for both research and practice, greater consensus is needed concerning the types of tasks, document features, and response formats that are to be considered most valid for measuring intertextual integration and its related skills at particular points in development.

Third, results from our study and others with children indicate that WLR, PRF, and SDC are only weakly associated with intertextual integration. We may conclude that these literacy skills are necessary but insufficient for predicting intertextual integration performance. For insights into which factors may be more predictive, we recommend consulting the adolescent and adult literature, which has shown that a wide variety of metacognitive, cognitive, and affective factors contribute to variation in intertextual integration (Barzilai & Strømsø, 2018; Espinas & Chandler, 2024). Some of these factors are well studied in research on SDC and reading difficulties. For instance, prior knowledge has been reliably shown to predict both SDC (Hwang et al., 2023) and intertextual integration (Demir et al., 2024). Moreover, interactions have been found among prior knowledge, tasks, and document features (eg, cohesion; McNamara et al., 1996; Smith et al., 2021). Due to time limitations, we did not include a measure of prior knowledge in this study. However, this is likely an important factor to consider. We recommend research, then, aimed at understanding how prior knowledge (at the general, domain, subject, and topic levels) predicts intertextual integration under different task conditions (eg, argumentation, summarization) and when different types of documents are involved (eg, print vs digital, cohesive vs incohesive).

Executive functions may also be important to consider. As discussed in the introduction, intertextual integration involves the process of constructing multiple mental representations of documents and the situation presented among them. Given this complexity, it seems likely that selective attention, response inhibition, cognitive flexibility, and working memory may all play important roles. In contrast to the voluminous literature on executive functions in SDC (Butterfuss & Kendeou, 2018; Peng et al., 2018) and reading difficulties (Peng et al., 2022), relatively few studies have examined the role of executive functions in intertextual integration (Espinas & Chandler, 2024; Tarchi et al., 2021). Furthermore, only a few of these studies have involved children (Espinas & Chandler, 2024).

Attention is also needed to factors that have not traditionally been studied in research on SDC and reading difficulties. For example, an individual's beliefs about knowledge (its simplicity, certainty, sources, and justification) can play important roles in how they approach a task involving multiple documents and ultimately integrate information from them (Bråten et al., 2011, 2016). Epistemic beliefs appear particularly important when documents contain conflicting information (Braasch & Bråten, 2017) or present a view that does not accord with the reader's prior belief (Richter & Maier, 2017). Although much research has examined preschool-aged children's epistemic beliefs when learning from others (Harris et al., 2018), little work has examined the role that epistemic beliefs play as elementary-aged children begin to engage with and learn from multiple document sources.

Conclusion

Children are expected to begin learning from multiple documents in kindergarten, and by third grade, this competency represents a central and enduring feature of their language arts, science, and social studies education (National Governors Association & Council of Chief State School Officers, 2010). An understanding of how children in the elementary grades integrate information presented across multiple text documents is, therefore, of great theoretical and practical importance. Unfortunately, children, and particularly those with learning difficulties, have been largely neglected in research on intertextual integration and multiple-document use more generally.

To begin to close this gap, we examined in this study how WLR, PRF, and SDC predicted intertextual integration for third-grade children with reading difficulties and disabilities. Consistent with previous studies with children, reading skills were weak predictors of intertextual integration. Moreover, of these three factors, only SDC was a significant predictor. The cross-sectional design of this study precludes conclusions about the causal nature of these relations. However, these findings indicate that better reading comprehension skills are associated with better intertextual integration performance. Future studies using longitudinal and intervention designs should build upon these findings by examining the influence of these and additional predictors.

Supplemental Material

Supplemental material, sj-docx-1-ldr-10.1177_09388982241275650 for Predictors of multiple-document comprehension among third-grade children with reading difficulties and disabilities by Daniel R. Espinas, PhD and Jeanne Wanzek, PhD in Learning Disabilities Research & Practice

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Office of Special Education Programs, Office of Special Education and Rehabilitative Services, Institute of Education Sciences (grant numbers H325D180086 and R324A210021).

Supplemental Material

Supplemental material for this article is available online.
