Abstract

When using response to intervention (RTI) for special education eligibility decisions, multidisciplinary teams (MDTs) may use performance level and rate of improvement (ROI) as two metrics when evaluating student responsiveness. Although examining student responsiveness to instruction is a critical component of an evaluation using RTI, previous research suggests that final level of performance is the main factor in MDT decisions. ROI is influenced by pre-intervention performance and is also inherently captured in students’ postintervention level, potentially limiting ROI's utility as a distinct indicator of performance. To address these issues with ROI, this study examined whether initial performance level and ROI improve the prediction of actual MDT decisions about special education eligibility beyond the final level of performance. Results indicate that initial level of performance and ROI add no value to predicting MDTs' eligibility decisions when using RTI, despite identified students having significantly lower initial performance levels and lower ROIs than their nonidentified peers.

The use of response to intervention (RTI) for the identification of specific learning disabilities (SLDs) includes multidisciplinary team (MDT) evaluation of a student's “lack of response to scientifically based instruction” as part of their eligibility determinations in addition to inadequate achievement (United States Department of Education, 2006). Measuring inadequate achievement has long been a requirement for the identification of SLD. This concept of “unexpected” low achievement has been the defining characteristic of a learning disability for decades. Historically, low achievement has reliably predicted MDT decisions regarding eligibility for SLD (Kavale & Reese, 1992; Maki et al., 2020; Peterson & Shinn, 2002; Ysseldyke et al., 1982).

The addition of student responsiveness as an inclusionary criterion introduced new requirements for practitioners, who must be able to reliably measure student intervention response as part of high-stakes decisions. The literature offers practitioners multiple methods for evaluating student intervention response, including split median, final normalization, final benchmark, slope discrepancy, and the dual-discrepancy framework (Fuchs & Deshler, 2007). The split median approach, derived from the work of Vellutino et al. (1996), involves the repeated administration of a standardized measure of reading, such as the word reading and pseudoword reading subtests that are common to most academic achievement batteries. Slopes are derived from these scores, and students whose slope is below the median are considered nonresponsive to intervention. Final normalization is derived from the work of Torgesen et al. (2001). In this approach, students are administered a standardized measure of word reading following an intervention period. Students who do not grow to a standard score of 90 are deemed nonresponsive. The final benchmark is similar to the final normalization approach except that the postintervention goal is to achieve a criterion-referenced benchmark associated with curriculum-based measurement in reading (CBM-R), such as 40 words correct per minute by the spring (Good et al., 2001). Slope discrepancy uses the slopes derived from time-series graphing of CBM-R data and compares them to an established benchmark (e.g., 1.5 words correct per minute per week). Students whose performance does not meet this criterion are considered nonresponsive (Fuchs et al., 2004).
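The four single-criterion rules described above reduce to simple threshold checks. The following Python sketch is illustrative only: the function names are hypothetical, and the default cut points (a standard score of 90, 40 words correct per minute, and 1.5 words correct per minute per week) are the examples quoted in the text, not recommended criteria.

```python
from statistics import median

def split_median_nonresponders(slopes):
    """Split median: flag students whose slope falls below the group median.

    `slopes` maps a (hypothetical) student id to that student's slope.
    """
    cut = median(slopes.values())
    return {sid for sid, slope in slopes.items() if slope < cut}

def final_normalization_nonresponsive(post_standard_score, cut=90):
    """Final normalization: nonresponsive if the postintervention
    standard score has not reached the cut (90 in the example above)."""
    return post_standard_score < cut

def final_benchmark_nonresponsive(post_wcpm, benchmark=40):
    """Final benchmark: nonresponsive if postintervention CBM-R
    (words correct per minute) is below a criterion-referenced benchmark."""
    return post_wcpm < benchmark

def slope_discrepancy_nonresponsive(slope_wcpm_per_week, criterion=1.5):
    """Slope discrepancy: nonresponsive if weekly growth is below
    an established growth criterion."""
    return slope_wcpm_per_week < criterion
```

Each rule relies on a single cut score, which is why the choice of cut point matters so much for who is classified as nonresponsive.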

The dual-discrepancy framework, which requires a student to be discrepant from peers in both achievement level and growth following evidence-based intervention, has the most empirical support for identifying students with learning disabilities when using RTI. Research shows that a dual-discrepancy approach identifies a group of students who are more impaired in reading and related measures and whose size is more consistent with estimated prevalence rates of SLD (Speece et al., 2003). When compared to the approaches in the previous paragraph, the dual-discrepancy model also has the best sensitivity and specificity for learning disability identification (Fuchs & Fuchs, 1998) and continues to reliably identify distinct groups of students, with those discrepant in both performance level and growth likely requiring further consideration for special education services (Bose et al., 2019).
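A dual-discrepancy check can be sketched as requiring a student to fall below a peer-referenced cut on both level and growth. In the Python sketch below, the function name and the cutoff of one standard deviation below the peer mean are assumptions made for illustration; the text above does not specify a cutoff.

```python
from statistics import mean, stdev

def is_dual_discrepant(student_level, student_roi,
                       peer_levels, peer_rois, cutoff_sd=1.0):
    """Return True only if the student is below the peer cut on BOTH
    performance level and rate of improvement (ROI).

    cutoff_sd is a hypothetical parameter: how many peer standard
    deviations below the peer mean counts as discrepant.
    """
    level_cut = mean(peer_levels) - cutoff_sd * stdev(peer_levels)
    roi_cut = mean(peer_rois) - cutoff_sd * stdev(peer_rois)
    return student_level < level_cut and student_roi < roi_cut
```

A student who is low in level but growing at a typical rate, or growing slowly from a typical level, is not flagged under this rule.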

In the original dual-discrepancy studies, student growth was conceptualized as rate of improvement (ROI; Fuchs & Fuchs, 1998; Speece & Case, 2001). Student ROI, when using a dual-discrepancy framework, is most often obtained using an ordinary least squares (OLS) regression line derived from the time-series graphing of CBM-R data (Ardoin et al., 2013). To obtain ROI using OLS, a line of best fit is calculated for all progress monitoring data points in the series, and the resulting slope of that line is the student's ROI. When using OLS to obtain ROI, student growth is operationalized as words correct per minute per week. OLS is viewed as the most accurate way to summarize these data (Parker & Tindal, 1992; Shinn et al., 1989) and is the most accessible method available to practitioners. Using ROI to help MDTs evaluate the lack of student responsiveness to intervention in reading was recommended by Kovaleski et al. (2022).
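As a minimal sketch of the OLS calculation described above, the slope of the line of best fit through weekly CBM-R scores can be computed directly. The function name and the six data points are invented for illustration.

```python
def ols_slope(weeks, scores):
    """Slope of the ordinary least squares line through (week, score) pairs.

    With scores in words correct per minute and time in weeks, the
    result is the ROI in words correct per minute per week.
    """
    n = len(weeks)
    mean_x = sum(weeks) / n
    mean_y = sum(scores) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(weeks, scores))
    sxx = sum((x - mean_x) ** 2 for x in weeks)
    return sxy / sxx

# Hypothetical progress monitoring series: one probe per week.
weeks = [0, 1, 2, 3, 4, 5]
scores = [20, 22, 21, 25, 26, 28]
roi = ols_slope(weeks, scores)  # about 1.6 words correct per minute per week
```

This is the same quantity a spreadsheet slope function returns for the same two columns.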

Obtaining students’ ROI using OLS has empirical support and can be calculated using computer programs typically available to practitioners, such as Microsoft Excel or Google Sheets. Furthermore, a student's achieved ROI as well as normative ROI data are often provided by CBM software suites. Despite these benefits of using OLS, however, there are unanswered questions and problems with using ROI to measure student growth. Currently, there are no agreed-upon, empirically based guidelines for determining if ROI is inadequate, which can lead to different interpretations of intervention response and different eligibility decisions (Burns & Senesac, 2005). Additionally, OLS is vulnerable to extreme outliers (Haupt et al., 2014) and assumes linear growth despite oral reading fluency trajectories being curvilinear (Nese et al., 2013). Further impacting high-stakes decision making is the measurement error associated with ROI, as it can be high and interfere with reliable decision making (Christ et al., 2012). Given those limitations, it is not surprising that studies have found that ROI may not add any predictive value to student performance beyond performance level (Kim et al., 2010; Tolar et al., 2014). Despite this, the dual-discrepancy model still reliably identifies a subgroup of students who are both low in initial performance level and low in ROI (Bose et al., 2019).

Studies examining the utility of ROI and dual-discrepancy have primarily involved highly controlled conditions that mimic practice. Few studies have examined the role that ROI plays in actual MDT eligibility decisions. Hunter et al. (2023) examined this issue using data from two different sites with different RTI models to understand if, in actual practice, ROI predicts MDT eligibility decisions for SLD in reading. Low achievement, operationalized in this study as post-intervention CBM-R performance, strongly predicted MDT eligibility decisions. ROI did not predict eligibility decisions. In a study examining the impact of multiple variables on MDT eligibility decisions, Maki et al. (2020) found that achievement level and demographic variables explained eligibility decisions, but that slope data did not contribute to MDTs’ decisions even though RTI was to be used during the evaluation process. This research is consistent with previous research findings that practitioners largely ignore eligibility criteria and identify the lowest performing students (Algozzine et al., 1982; Maki et al., 2017).

These studies suggest that both criteria of the dual-discrepancy model, although supported by research, are not being applied to decisions made by practitioners as performance level, not growth, seems to drive practitioner decisions about special education eligibility. One possible explanation for this could be the lack of clear and consistent guidelines for using RTI for SLD eligibility available to practitioners. States have generally provided inadequate guidance (Maki et al., 2020; Maki et al., 2015, 2017), and research to date has not generated specific criteria for ROI in relation to disability eligibility. Additionally, eligibility decisions are categorical in nature, but reading performance is measured as a continuous variable. As a result, any criteria imposed as a cut score will likely increase error (Fletcher & Miciak, 2019).

Another explanation for why practitioners may not utilize ROI as part of special education eligibility decisions is the mediating factor that initial level of performance has on student growth rates. Students who start at a lower level of reading performance will have to demonstrate growth rates that exceed other at-risk students who have higher initial performance to reach a final level of performance that can be supported with general education intervention. Attaining the growth rates needed to reach this level of performance is further complicated by research that shows that students who start with lower performance levels evidence slower growth rates (Silberglitt & Hintze, 2007). Kim et al. (2010) also found that initial level of performance was a better predictor of reading comprehension skills than slopes derived from progress monitoring data. However, Bose et al. (2019) found that ROI alone could differentiate a low performing group of students from other students receiving intervention with a high degree of confidence.

Although there is a longstanding history of performance level at the time of an evaluation influencing MDT decisions regardless of identification method (Kavale & Reese, 1992; Maki et al., 2020; Peterson & Shinn, 2002; Ysseldyke et al., 1982), foundational research on RTI assessment practices suggested both final level and ROI should assist in classification of students as nonresponsive (Fuchs & Fuchs, 1998; Speece & Case, 2001). More recent research (Bose et al., 2019; Kim et al., 2010), however, suggests differing impacts of initial performance level and ROI when classifying students as responsive or nonresponsive. Therefore, the present study aims to explore whether the initial level of performance and ROI add predictive value beyond the final level of performance when using RTI to make eligibility determinations for SLD. It was hypothesized that both the initial level of performance and ROI would contribute to MDT decisions beyond final level of performance alone. The study aimed to answer the following research question: Do initial level of performance and ROI improve our ability to predict practitioners’ eligibility decisions beyond final level of performance?

Method

Prior to examining the predictive influence of the variables of interest, a quasi-experimental design was used to determine if group differences exist for the initial level of performance and ROI. Two analyses were conducted. In the first, the dependent variable was initial level of performance on CBM-R probes, and the independent variable was group membership. One group consisted of “eligible” students who were evaluated by MDTs and determined eligible for SLD in reading. The other group, referred to as “not eligible,” consisted of students receiving intensive intervention who were not referred for evaluation. Means were compared using a one-tailed, independent samples t test. This analysis was repeated with ROI as the dependent variable and group membership as the independent variable.

To explore the research question, a hierarchical, predictive design was used with three predictor variables, final level of performance, initial level of performance, and ROI. The outcome variable was special education eligibility determination; a categorical variable with two levels. Students who were evaluated by MDTs and found eligible for SLD in reading comprised the “eligible” group. Students who were not referred for evaluation comprised the “not eligible” group. A hierarchical, logistic regression was used with final level of performance as the variable in the first block. The initial level of performance was entered in the second block, and ROI was entered in the third block. This was done to see if initial level of performance and ROI increase the predictive ability of the model when controlling for final level of performance, a well-documented variable in explaining MDT eligibility decisions (Hunter et al., 2023; Maki et al., 2020; Peterson & Shinn, 2002).

Sample

Data were requested from 24 schools implementing RTI in a Midwestern state, and 19 sites returned consents. Schools were selected for inclusion if at least 75% of their students were proficient on a state-approved universal screener to ensure fidelity of universal supports (Kovaleski et al., 2013). All students received 90 minutes of core instruction and were expected to receive intensive general education reading intervention. Only schools using FastBridge CBM-R were included to ensure passage equivalency of the progress monitoring assessments (FastBridge, 2015).

Deidentified student data were provided from the participating school sites. The following data were compiled into a single spreadsheet by a third party: grade; sex; race; free and reduced lunch status; FastBridge CBM-R progress monitoring data points; referral date for a special education evaluation; and MDT eligibility decision. Each case was assigned a randomly generated number.

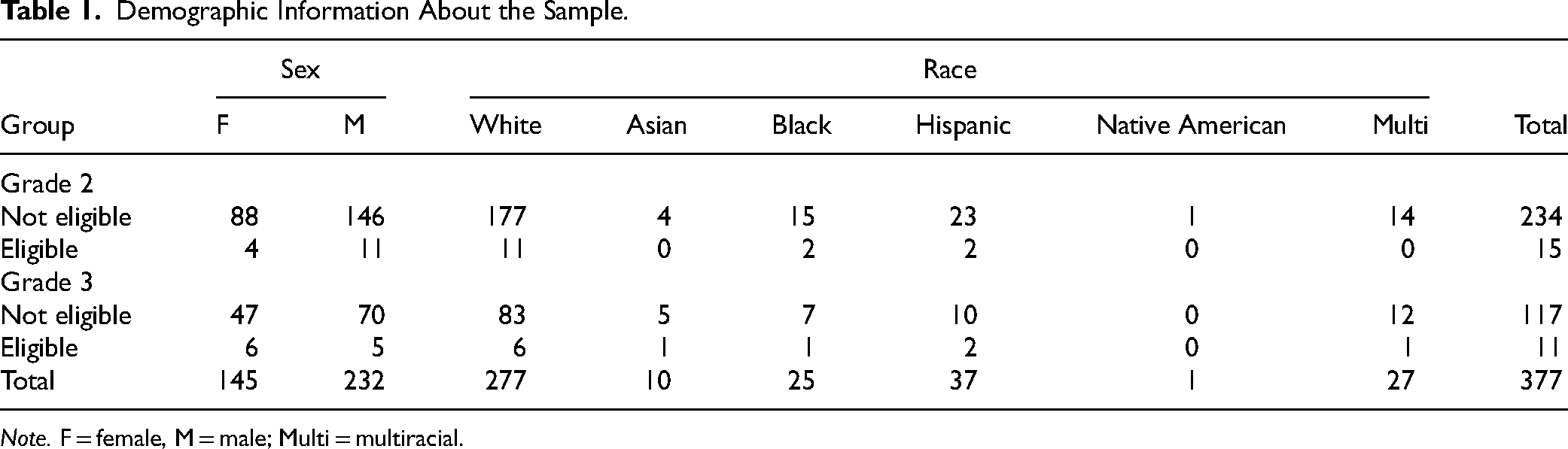

FastBridge CBM-R progress monitoring data were obtained for the 2015–2016 school year and the first semester of the 2016–2017 school year. Progress monitoring assessments were conducted by the school sites. Data from second- and third-grade students were included in this study. Students were assumed to have intervention needs in basic reading skills and fluency given the age of the students in the sample. To be included in the final sample, students were required to have at least seven progress monitoring data points. This requirement was consistent with the recommendations in state guidance documents. However, the final sample met more rigorous recommendations for the quality of progress monitoring data sets. Ninety-one percent of participants’ data included 14 or more data points, which is consistent with recommendations by Christ et al. (2012). Grade 2 not-eligible students had a mean of 22.8 (range = 7–36) progress monitoring data points. Grade 2 eligible students had a mean of 19.1 (range = 9–27) progress monitoring data points. Grade 3 not-eligible students had a mean of 22.4 (range = 9–35) progress monitoring data points. Grade 3 eligible students had a mean of 19.2 (range = 7–30) progress monitoring data points. Duplicate cases were excluded from the sample using a selection process that prioritized data from the year in which a student was found eligible for special education services or, if the student was not referred for evaluation, data from the year with more available progress monitoring data. See Table 1 for demographic information on the final sample, which included 377 students.

Table 1. Demographic Information About the Sample.

Note. F = female, M = male; Multi = multiracial.

Measurement

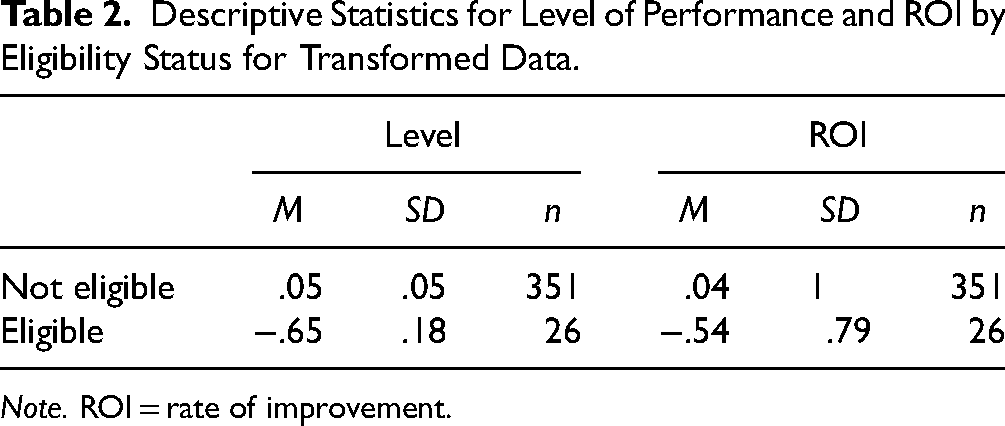

To ensure assessment consistency, only FastBridge CBM-R data were analyzed. FastBridge CBM-R data have sufficient reliability and validity for progress monitoring and educational decisions about general reading performance (National Center on Intensive Intervention, n.d.). The utility of CBM-R to inform instructional decisions has been established (Wayman et al., 2007), and it is a source of performance data available to MDTs (Kovaleski et al., 2022). CBM-R data were used to measure the initial level of performance, final level of performance, and ROI. The initial level of performance was obtained from the median of the first three progress monitoring data points. Final level of performance was obtained from the median of the final three progress monitoring data points (Burns et al., 2010). ROI was obtained from the OLS trendline of the progress monitoring data set using the actual date of each assessment to ensure a more valid measurement interval (Runge et al., 2017). For students in the eligible group, ROI was calculated from only those CBM-R data points obtained prior to the special education eligibility date. For students in the not eligible group, all data points were used to calculate ROI. Because oral reading fluency (ORF) and ROI can vary considerably by grade, the data were converted to z scores so they could be analyzed together to maximize the sample size and increase the number of positive cases in the sample. See Table 2 for the descriptive statistics of the sample.
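The three measures described above can be sketched in Python as follows. This is an illustration under stated assumptions, not the authors' code: the function names, the `cutoff` parameter (standing in for the date before which eligible students' data points were kept), and the use of the sample standard deviation for the z scores are all assumptions, and the data are invented.

```python
from datetime import date
from statistics import mean, median, stdev

def summarize_student(points, cutoff=None):
    """Return (initial level, final level, ROI) for one student.

    points: list of (assessment_date, words_correct_per_minute).
    cutoff: hypothetical parameter; if given, only points dated
            before it are used.
    """
    pts = sorted(p for p in points if cutoff is None or p[0] < cutoff)
    scores = [s for _, s in pts]
    initial = median(scores[:3])   # median of the first three points
    final = median(scores[-3:])    # median of the final three points
    # Elapsed time in weeks from the first probe, using actual dates.
    weeks = [(d - pts[0][0]).days / 7 for d, _ in pts]
    mx, my = mean(weeks), mean(scores)
    sxy = sum((x - mx) * (y - my) for x, y in zip(weeks, scores))
    sxx = sum((x - mx) ** 2 for x in weeks)
    return initial, final, sxy / sxx

def z_scores(values):
    """Standardize values within one grade (sample SD is an assumption)."""
    m, sd = mean(values), stdev(values)
    return [(v - m) / sd for v in values]

# Hypothetical student with six weekly probes.
probes = [(date(2016, 1, 4), 20), (date(2016, 1, 11), 22),
          (date(2016, 1, 18), 21), (date(2016, 1, 25), 25),
          (date(2016, 2, 1), 26), (date(2016, 2, 8), 28)]
initial, final, roi = summarize_student(probes)
```

Standardizing each measure within grade before pooling is what allows second- and third-grade students to be analyzed in a single model.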

Table 2. Descriptive Statistics for Level of Performance and ROI by Eligibility Status for Transformed Data.

Note. ROI = rate of improvement.

Results

The data for initial level of performance were tested for the assumptions of an independent samples t test. The data contained outliers and violated the assumption of normality, as assessed by the Shapiro-Wilk test (p < .001). The assumption of homogeneity of variance was met. Because the outliers were not the result of coding or data entry errors, there was no meaningful reason to exclude them, and they were retained for analysis. An independent samples t test was conducted. The results show that students in the eligible group (M = −0.65, SD = 0.91) had significantly lower initial starting points than their peers who were not eligible (M = 0.048, SD = 0.99), t(375) = 3.476, p < .001, d = 0.99. Because the groups were unequal in size and the eligible group was small with an extreme skew, the data were also analyzed using the Mann-Whitney U test (Sawilowsky & Blair, 1992). The distributions of CBM-R scores for students in the eligible and not eligible groups were similar. The results show significant differences in the initial level of performance between the eligible group (−0.75) and the not eligible group (−0.16), with the eligible group having lower initial performance levels, U = 2675.5, z = −3.52, p < .001.
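For readers less familiar with the two tests, the statistics involved can be sketched in a few lines of Python. The function names and the toy data are hypothetical, and the sketch computes only the test statistics (the pooled-variance t, its degrees of freedom, Cohen's d, and U), not p values.

```python
import math
from statistics import mean, variance

def pooled_t_and_d(a, b):
    """Independent samples t (equal variances assumed), its df,
    and Cohen's d based on the pooled standard deviation."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    diff = mean(a) - mean(b)
    t = diff / math.sqrt(pooled_var * (1 / na + 1 / nb))
    d = diff / math.sqrt(pooled_var)
    return t, na + nb - 2, d

def mann_whitney_u(a, b):
    """U for sample a: the number of (a, b) pairs in which the value
    from a is larger, counting ties as one half."""
    return sum(1.0 if x > y else 0.5 if x == y else 0.0
               for x in a for y in b)

# Toy z scores for illustration only, not the study data.
eligible = [-1.2, -0.9, -0.4]
not_eligible = [-0.3, 0.1, 0.4, 0.8]
t, df, d = pooled_t_and_d(eligible, not_eligible)
u = mann_whitney_u(eligible, not_eligible)
```

Because U depends only on the ordering of scores across groups, it is less sensitive than t to the outliers and skew noted above.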

The ROI data were tested for the assumptions of an independent samples t test. Outliers were present in the data set and were retained as there was no valid reason to exclude them. The assumption of normality was not met for the eligible group, p < .001. The assumption of equal variances was met, p = .3. The results of the independent samples t test revealed statistically significant differences between the ROI of eligible (M = −0.54, SD = 0.79) and not eligible students (M = 0.04, SD = 1), t(375) = 2.857, p = .002, d = 0.58. Given the violations of the assumptions of the independent samples t test, the data were also analyzed using the Mann–Whitney U test. The distributions of ROI for the eligible and not eligible students were not similar, as assessed by visual inspection. There was a statistically significant difference in ROI between the eligible and not eligible groups, U = 3054, z = −2.184, p = .005, using an exact sampling distribution for U.

The data were tested for the assumptions of a logistic regression. All assumptions were met except for the assumption of no outliers. Nine outliers, four in grade 2 and five in grade 3, were identified by studentized residuals of greater than 2.5. The data were examined and did not appear to be the result of coding or data entry errors and thus were included in the analyses since excluding genuine cases could impact the results.

The data were first analyzed with final level of performance as the control variable. For block 1, the model was significant with good fit (χ2 = 27.71, p < .001) and explained 18% of the variance (Nagelkerke R2) in students being correctly classified as eligible for special education. Lower final levels of performance on CBM-R predicted being eligible for special education, Wald = 22.94, p < .001. For block 2, the model was statistically significant (χ2 = 28.92, p < .001) and explained 19% of the variance in students being correctly classified as eligible for special education. The inclusion of the initial level of performance in the model did not improve the classification accuracy of the model, χ2 = 1.21, p = .271. The final level of performance predicted special education eligibility, Wald = 12.64, p < .001. Initial level of performance did not predict special education eligibility, Wald = 1.23, p = .267. For block 3, the model was statistically significant (χ2 = 31.74, p < .001) and explained 21% of the variance in students being correctly classified as eligible for special education. The addition of ROI as a variable did not improve the classification accuracy of the model, χ2 = 2.82, p = .093. Final level of performance continued to predict special education eligibility, Wald = 4.19, p = .041. Initial level of performance (Wald = .002, p = .964) and ROI (Wald = 2.54, p = .626) did not predict special education eligibility.
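The block-to-block comparisons can be checked from the model chi-square values reported above. The Python sketch below assumes that each block adds exactly one predictor, so that each step statistic is the difference between successive model chi-squares and is evaluated on 1 degree of freedom; the function name is hypothetical.

```python
import math

def chi2_p_df1(x):
    """Upper-tail p value for a chi-square statistic with 1 degree of
    freedom, using the identity P(X > x) = erfc(sqrt(x / 2))."""
    return math.erfc(math.sqrt(x / 2.0))

# Model chi-square values reported for blocks 1 to 3.
model_chi2 = {1: 27.71, 2: 28.92, 3: 31.74}

steps = {}
for block in (2, 3):
    step = model_chi2[block] - model_chi2[block - 1]
    steps[block] = (round(step, 2), round(chi2_p_df1(step), 3))
    print(f"block {block}: step chi-square = {steps[block][0]}, p = {steps[block][1]}")
```

Under these assumptions, the differences match the reported step statistics for adding initial level of performance (block 2) and ROI (block 3) to within rounding, and neither step reaches the .05 level.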

Discussion

Students found eligible for special education had significantly lower initial levels of achievement on CBM-R compared to students who received intensive intervention but were never referred. Eligible students also had significantly lower ROIs than not eligible students. The results of the t tests suggest that eligible students demonstrate discrepancies in both achievement level and growth when provided with intervention, consistent with both metrics of a dual discrepancy. However, consistent with previous research (Hunter et al., 2023; Peterson & Shinn, 2002), final level of performance significantly predicted special education eligibility. That is, students who performed lower on CBM-R were more likely to be determined eligible than their peers in reading intervention with higher reading levels. When the influence of the final level of performance was controlled for, the addition of the initial level of performance did not add any classification accuracy to predicting MDT eligibility decisions. Also consistent with previous research (Hunter et al., 2023; Maki et al., 2020), ROI did not add any explanatory value to MDT decisions about SLD eligibility despite significant differences in ROI between eligible and not referred students. Despite these group differences, ROI does not seem to influence MDT decisions even when controlling for other relevant variables. Our hypothesis that the initial level of performance and ROI would predict MDT decisions beyond the final level of performance was not supported by the results.

First and foremost, the authors acknowledge that one explanation for the results could be error due to violating the assumptions of the statistical procedures used. This error is mainly due to the small n in the eligible group. We used nonparametric procedures when possible to verify the results of parametric tests with violated assumptions, but we must still acknowledge that the results should be interpreted with caution. Given the small size of the eligible group, outliers in the dataset could have a greater influence on the mean ROI of this group and affect the results of the statistical analyses. However, the data used in the present study are actual data from schools implementing RTI, and we hope that the limitations in statistical validity are balanced by the external validity of a sample that represents what practicing school psychologists may encounter.

There are several other possible explanations for these results, including impacts of measurement error and adherence to eligibility criteria, which are indicated in previous research. The impact of measurement error may explain the results because OLS trendlines derived from CBM-R data have been found to contain a large amount of error (Christ, 2006; Christ et al., 2012). The standard error of the slope has been found to exceed the expected weekly growth rates of students. Although significant differences were found between the ROIs of eligible and not eligible students in the current study, standard deviations for ROI for both groups are large and the confidence intervals overlap substantially. Although ROI can reliably differentiate students with SLD from typically developing peers (Fuchs & Fuchs, 1998; Speece et al., 2003), it seems that the measure is not sensitive enough to differentiate students with SLD from other at-risk students.

Another possibility is that MDTs are not adhering to eligibility criteria or have insufficiently explicit criteria when making eligibility determinations. Historically, MDTs have not adhered to eligibility criteria and may rely on student characteristics and performance levels when making eligibility determinations (Algozzine et al., 1982; Maki et al., 2017; Peterson & Shinn, 2002). States have also provided inadequate guidance on using RTI for eligibility purposes, particularly on interpreting student responses to scientifically based instruction (Maki et al., 2015, 2017; Maki & Adams, 2020). Without clear criteria, practitioners may rely on a familiar metric (e.g., low academic performance at the time of referral) when making decisions.

A final possibility is that ROI offers limited incremental validity beyond the performance level alone and that growth is captured in the final performance level postintervention. Some evidence for this can be seen in the Kim et al. (2010) and Tolar et al. (2014) studies that found slope offered little predictive validity and that initial performance level and static performance level from progress monitoring data provide better estimates of reading comprehension abilities. However, these studies focused on predicting academic performance, particularly reading comprehension, and not the presence of a disability. When the progress monitoring method is better aligned with the outcome measure (e.g., reading fluency strongly aligns with a disability in basic reading skills and fluency), the predictive validity of slope increases (Tolar et al., 2014).

Limitations

Several limitations to the current study exist. First, the study made use of a quasi-experimental design, so no causal relationships can be inferred. The study included a small sample of eligible students, and the sample overall was not representative of the U.S. population. Therefore, the results may not generalize to all populations. Several statistical assumptions were violated as well. Additionally, no information was provided regarding fidelity of MTSS implementation, intervention match, and intervention fidelity. It is reasonable to assume that differences in levels of fidelity exist, which may explain differences in obtained growth rates and performance levels, and thus, the quality of data available to MDTs. The researchers also had no information regarding the eligibility criteria used by the schools. It is possible that ROI was not considered by MDTs when evaluating criterion 2 as there are multiple methods available to practitioners when measuring student RTI. Given the multiple factors that could impact MDT decisions across sites, examining the impact of school as an independent variable would be important follow-up research.

Implications for practice

Despite the difficulties with reliably measuring growth, examining student response to scientifically based instruction is an important component of identifying SLD when using RTI. Without consensus about how to best assess student responsiveness, RTI has been criticized as an arbitrary approach to learning disability identification (Hendricks & Fuchs, 2020). IDEA does not mandate a particular approach to evaluating criterion 2 but does provide some guidance on general evaluation procedures that are applicable when evaluating this criterion. Evaluation procedures must: use a variety of assessment tools, assist in determining the content of the child's IEP, not use a single measure or sole criterion to determine classification, use technically sound instruments, and be sufficiently comprehensive to assess all areas of suspected disability. When applying these to the evaluation of criterion 2, simply saying the student's ROI was or was not sufficient may not satisfy the requirements of IDEA.

Practitioners can more comprehensively evaluate student growth by considering both quantitative and qualitative aspects of ROI. Because ROIs obtained from CBM-R data may not be technically sound for high-stakes decisions about individual students, they can be combined with additional (and likely already existing) assessment information to increase the reliability and comprehensiveness of the decision being made. ROI can tell us more than whether a student has or has not responded sufficiently and can be used to inform the IEP team of instructional strategies that have and have not worked for the student. MDTs should be looking for a convergence of data that helps document the student's progress over time and identify which instructional strategies have meaningfully accelerated growth. The use of RIOT procedures (review, interview, observe, test) allows practitioners to consider growth from multiple assessment procedures. Considering multiple sources of data for eligibility decisions (historical test performance, duration and intensity of intervention support, teacher and family interviews) gathered through RIOT procedures is consistent with hybrid approaches to learning disability identification (Miciak & Fletcher, 2020).

When seeking to evaluate criterion 2, MDTs should first consider quantitative information about student growth. Quantitative information about student growth helps MDTs evaluate the effectiveness of their efforts in improving outcomes for the student. ROI, despite its limitations, still has the most empirical support for use in the identification of SLD. The ROI of the student being evaluated may be compared to the growth rates of similar students (i.e., student growth percentiles). Additionally, teams can consider a final benchmark or postintervention status compared to a meaningful benchmark to better understand whether the realized growth rate was accelerated enough to begin closing the gap. By considering growth rates in comparison to like peers as well as discrepancy from a meaningful outcome, MDTs can better evaluate intervention success as well as the need for further intensification, adaptation, or individualization to better meet the child's needs.

While quantitative information helps MDTs understand the success of instruction and intervention, MDTs should also evaluate qualitative information about growth. Qualitative information helps teams understand the context of student growth, and when considering the context of growth in light of a special education evaluation, it helps establish the basis for the need for specially designed instruction. If MDTs are engaging in evaluations to not just classify, but to provide useful information for determining the content of a child's IEP (or other intervention plan), then the team must consider whether the supports provided were matched to the student's needs, whether intervention was provided with sufficient intensity and for a sufficient duration, and whether the intervention was delivered with high levels of fidelity. As part of the prereferral intervention process, teams would be collecting information about the extent to which adaptations were made to instructional delivery and to curriculum. MDTs may then consider whether those adaptations to instruction and curriculum are specially designed, which informs decisions about whether a student “needs” special education.

Conclusion

While the final performance level appears to drive decisions about whether students have an SLD, MDTs must engage in comprehensive evaluations as outlined in the IDEA. Until consensus is reached about how to best evaluate growth, practitioners should consider both quantitative and qualitative information about student outcomes. A quantitative ROI helps MDTs understand to what extent intervention efforts were successful, and qualitative information helps inform ongoing plans for the student who was evaluated, including whether instruction needs to be specially designed. Interventions are not conducted in a vacuum, and without considering all aspects of growth, MDTs have not sufficiently evaluated student responsiveness to intervention and may come to erroneous decisions about students’ educational needs.

Footnotes

Author note

We have no known conflict of interest to disclose.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.