Abstract

This study examines the role of learning and evaluation data utilization in nonprofit accountability practices. Survey data from 243 nonprofit managers were used to assess the pathway from learning environments and practices to evaluation data utilization and the subsequent sharing of evaluation information. Results from partial least squares structural equation modeling (PLS-SEM) indicate that supportive learning environments, where nonprofit managers and staff engage in learning practices, can facilitate data utilization for internal decision-making, thereby resulting in stronger linkages to sharing evaluation information. Our research suggests the need for intentional strategies around learning and data cultures in nonprofits, as nonprofit managers serve as the linchpin to internal accountability through using evaluation results to inform decisions, assess progress, improve programs, and train staff. Our research contributes to the nonprofit literature by showing how the combination of learning environments and practices serves as a driver of data-driven decision-making, which in turn improves nonprofit accountability practices.

Introduction

Nonprofit managers engage in accountability practices for several reasons, but two narratives drive the majority of nonprofit accountability conversations. The first, and most common, explanation centers on how nonprofit accountability practices result from an external orientation that emphasizes resource-dependency relationships and the multiple-constituency demands inherent in the nonprofit sector (e.g., Christensen & Ebrahim, 2006; Herman & Renz, 1997; Hug & Jäger, 2014; LeRoux, 2009). One limitation of these frameworks, however, is that they are disempowering to nonprofit managers who have less control over their external environments (Mayer & Fischer, 2023). Therefore, it is unclear what steps should be taken to improve internal operations. The second narrative describes nonprofit accountability practices as an opportunity for nonprofits to engage in learning practices that prioritize inward accountability to goals and mission as equally crucial to success as reporting externally (e.g., Ebrahim, 2005, 2019; Gugerty & Karlan, 2018; Umar & Hassan, 2019). In this scenario, gathering data and learning equips managers with the information they need to make decisions about organizational operations that further the mission. We seek to add to the latter narrative by exploring how learning environments and practices relate to evaluation data utilization (EDU), which in turn is associated with sharing evaluation information with stakeholders.

An internal orientation to accountability practices around evaluation emphasizes data utilization for learning and improvement inside the organization that may extend outward. At its core, this viewpoint articulates an accountability framework in which learning processes facilitate data-driven decisions to improve programs and services. Internal accountability refers to a nonprofit’s efforts and responsibility to report to organizations themselves: staff, board, and volunteers (Ebrahim, 2005; van Zyl et al., 2019). This internal approach to accountability empowers nonprofit managers to exploit learning in service to mission outcomes, and at the same time, practice accountability to stakeholders by sharing information about performance of the organization. We define accountability practices as those actions of information sharing nonprofit managers take to demonstrate transparency, credibility, and professionalism to one another and to the broader public (Heimstädt & Dobusch, 2018). Accountability practices can include formal legal mandates required of most nonprofit organizations (NPOs hereafter; that is, filing the IRS 990 form) or consist of other voluntary practices that foster connection and trust among a nonprofit’s various stakeholders (Cordery et al., 2019; Hale, 2013). For example, accountability practices may include making available financial reports, audits, annual reports, program evaluations, performance information on client outcomes for public scrutiny, and online information sharing (Sanzo-Pérez et al., 2017; Striebing, 2017; Tremblay-Boire & Prakash, 2015). Exploring the role of accountability practices in nonprofits is critical to the sector’s perceived legitimacy in delivering social goods and services.

This study focuses on one accountability practice: sharing evaluation information. It is crucial to look at accountability practices around evaluation utilization and outcome disclosure because evaluation is a common tool nonprofits use to collect data and to assess the quality of their programs (Benjamin et al., 2023; Carman, 2010; Hoefer, 2000). In addition, evaluation data can address funder requirements, beneficiaries’ needs, and improve internal decision-making (Benjamin, 2012; Mayer & Fischer, 2023). Accomplishing these ends requires nonprofit managers and staff to commit to learning from evaluation and implementing that learning into practice. Consistent with recent literature, we focus on practices that prioritize the utilization of evaluation data for both learning and accountability purposes (Benjamin et al., 2023; Bryan et al., 2021). This article examines how NPOs’ internal learning environments and practices influence their use of evaluation outcomes in stakeholder communications. We explore learning practices around program evaluation and how these practices increase the likelihood that the NPOs will use and share evaluation data. We argue that an NPO with a supportive learning environment (SLE) is more likely to commit to accountability practices, as a form of sharing its evaluation data with stakeholders, when the organization engages in learning practices and evaluation data use.

Our study builds upon scholarship that suggests learning systems may positively influence how performance data are used purposely to improve programs and to inform stakeholders (Kroll, 2015; Moynihan, 2005). Research suggests that learning organizations excel at creating cultures around information acquisition, reflecting upon the implications of that information, and then modifying behaviors to reflect new learning (Garvin et al., 2008; Greiling & Halachmi, 2013; March, 1991). These adaptive learning practices extend beyond compliance-driven behaviors to encompass mission-focused, strategically driven actions (Ebrahim, 2016). Recent nonprofit literature connects learning with various organizational outcomes like performance (Ebrahim, 2019; Umar & Hassan, 2019), evaluation utilization (Bryan et al., 2021; Mayer & Fischer, 2023), and social capital and impact (Janus, 2017; Lim et al., 2023). We argue that nonprofit managers should engage in measurable learning practices to harness evaluation results in service of mission achievement from the outset. We offer guidance to professionals on how the use of evaluation data can bridge learning environments and learning processes to accountability practices such as the disclosure of evaluation data.

This article seeks to elucidate a pathway of the internal processes from learning to evaluation data use to accountability practices. Our study responds to calls from scholars to focus on the influence of internal organizational processes on evaluation data use to supplement the environmental variables that populate much of the nonprofit literature on evaluation use (Mayer & Fischer, 2023), and to model the direct and indirect effects of organizational variables on data use (Kroll, 2015). In addressing the role of an SLE and learning practices that promote information sharing through the utilization of evaluation data, we focus on two process questions: (a) “How does an SLE prompt staff learning practices and further EDU?” and (b) “How does EDU promote evaluation information sharing?” Exploring engagement in learning practices (ELP) provides an opportunity to apply a stream of literature seldom applied to nonprofit settings: learning organizations.

We employ three components of the learning organization in this article: SLEs, ELP, and EDU. We find support for a pathway from SLEs and learning practices to EDU, which in turn promotes sharing evaluation information with stakeholders. Our article contributes to the literature by establishing how utilization of evaluation data can connect a nonprofit’s efforts to cultivate SLEs and harness learning practices with its accountability practices, such as sharing evaluation information.

Strengthening Accountability Practices Through Learning and Evaluation Utilization

Linking Sharing Evaluation Information to Accountability Practices

Performance assessments and evaluations are useful analytical tools for understanding the outcomes of programs and services. In addition, organizations leverage evaluation for accountability purposes (Barman & MacIndoe, 2012; Benjamin, 2012; Carman, 2010; Hoefer, 2000). Accountability practices around evaluation can include sharing evaluation and performance information with stakeholders, including boards and the broader public. Sharing information with board members is a critical accountability practice not only because of their governance responsibilities but also because board members perform boundary-spanning activities and often facilitate access to key external stakeholders (Cody et al., 2022; LeRoux, 2009). Sharing evaluation information with the broader public is an important accountability practice because it exhibits transparency and openness (Saxton & Guo, 2011). In other words, disseminating evaluation information makes the organization’s inner workings visible and allows those outside the organization to assess performance (Curtin & Meijer, 2006; Gerring & Thacker, 2004; Grimmelikhuijsen & Welch, 2012). Given that different stakeholders may desire particular information, what makes a nonprofit more likely to share evaluation information as an accountability practice?

On one hand, greater rationality and professionalization of the sector has led to increasing norms around the need for evaluation and data collection that demonstrate efficient use of resources and program effectiveness (Suykens et al., 2022; Williams & Taylor, 2013). While evaluation data disclosure provides legitimacy of nonprofit performance in the eyes of funders, lesser attention is given to how, or whether, managers use evaluation data for internal purposes. In general, the nonprofit sector still struggles to affect behavioral norms around the “role and purpose of data and decision-making” (Winkler & Fyffe, 2016, p. 3). This may in part be attributed to some accountability systems impeding organizational learning (Ebrahim, 2005) and failing to create data cultures that clearly demonstrate social impact (Janus, 2017). On the other hand, some argue even when facing external pressures to show outcomes, nonprofits have limited internal capacities beyond compliance to invest in mechanisms that increase learning and improve decision-making. For example, studies repeatedly show that compliance and evaluation capacities alone do not sufficiently guarantee accountability or positive results for nonprofits and their clients (Carman, 2010; Despard, 2016; Winkler & Fyffe, 2016). Furthermore, even when NPOs have sufficient evaluation capacities, differences emerge in how managers utilize staff competencies and technical resource capacities to “do” evaluation versus learning climate and strategic planning capacities to “use” evaluation for learning and decision-making (Bryan et al., 2021). Outcome measurement may become counterproductive if it shifts a nonprofit’s focus and resources from program improvement to meeting funder expectations alone (Benjamin, 2008; Ebrahim, 2019). Therefore, exploring internal processes and practices around EDU offers a bridge between continuous learning and accountability practices around information sharing.

Improving nonprofit outcomes ultimately relies on managers who can see how data generated from evaluation facilitate organizational learning processes and then share evaluation information more broadly, recognizing accountability practices can take many forms. Nonprofit managers must maintain a delicate balance between the “internal push” to continually learn how to fulfill their mission and the “external pull” to provide evaluation results to external constituencies, primarily to meet the demands of funders (Alaimo, 2008). While agreement exists that learning should be a critical goal of accountability systems and the evaluation process itself, studies addressing the specifics of the evaluation learning-accountability link are sparse (Despard, 2016; Ebrahim, 2005, 2019; Williams & Taylor, 2013). With this in mind, we aim to unpack further how evaluation learning practices and data utilization shape the accountability practice of evaluation information sharing.

Learning Organizations and the Role of Evaluation

Organizational learning involves practices that facilitate individual and group learning, which fosters the development of new knowledge that organizations can use (Levitt & March, 1988; March, 1991). While the organizational learning literature prioritizes how individuals and groups process and interpret information, the learning organization is an organizational-level construct that characterizes the systems, mindsets, and structures that promote reflection and learning at all levels in service to improvement. Garvin (1993) defines a learning organization as “skilled at creating, acquiring, and transferring knowledge, and at modifying its behavior to reflect new knowledge and insights” (p. 78). Learning organizations cultivate SLEs and practices that advance a culture of learning (Garvin et al., 2008) and support overall performance (Jiménez-Jiménez & Sanz-Valle, 2011). For example, nonprofit research suggests board self-assessment can facilitate learning that informs decision-making at the board and organizational level (Carman & Millesen, 2023). We leverage the learning organization, organizational learning, and evaluation capacity-building literature to understand how nonprofits use evaluation data for learning and accountability practices for internal and external purposes.

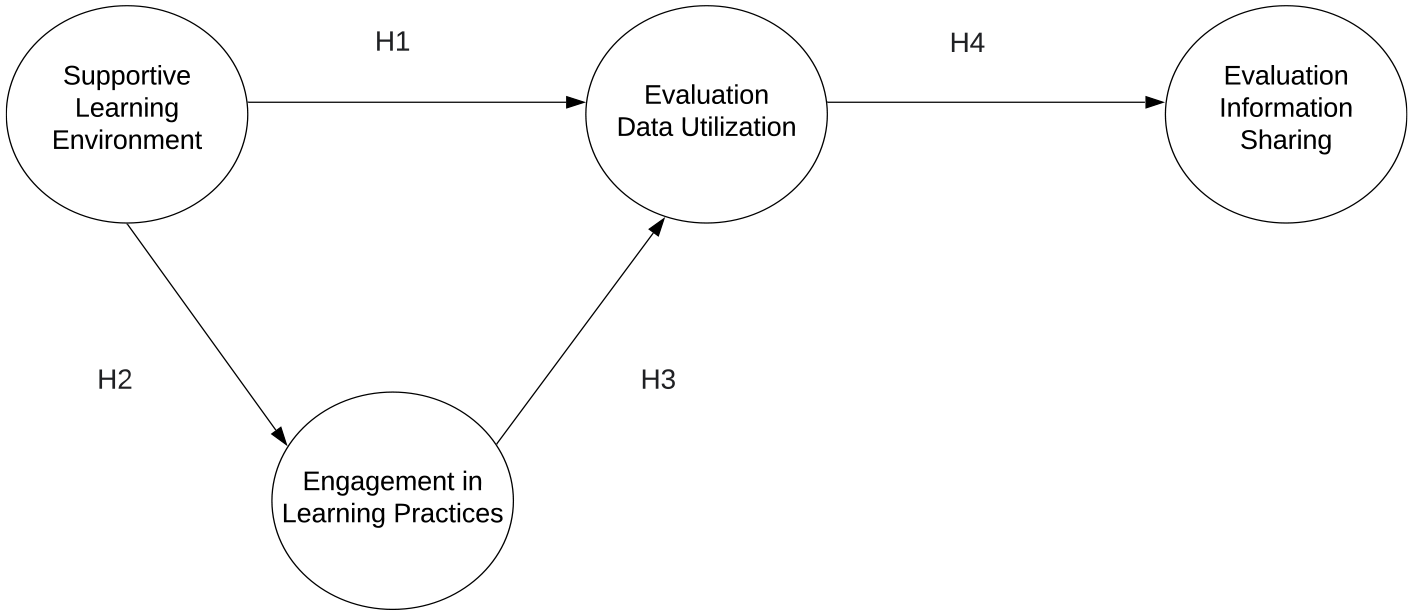

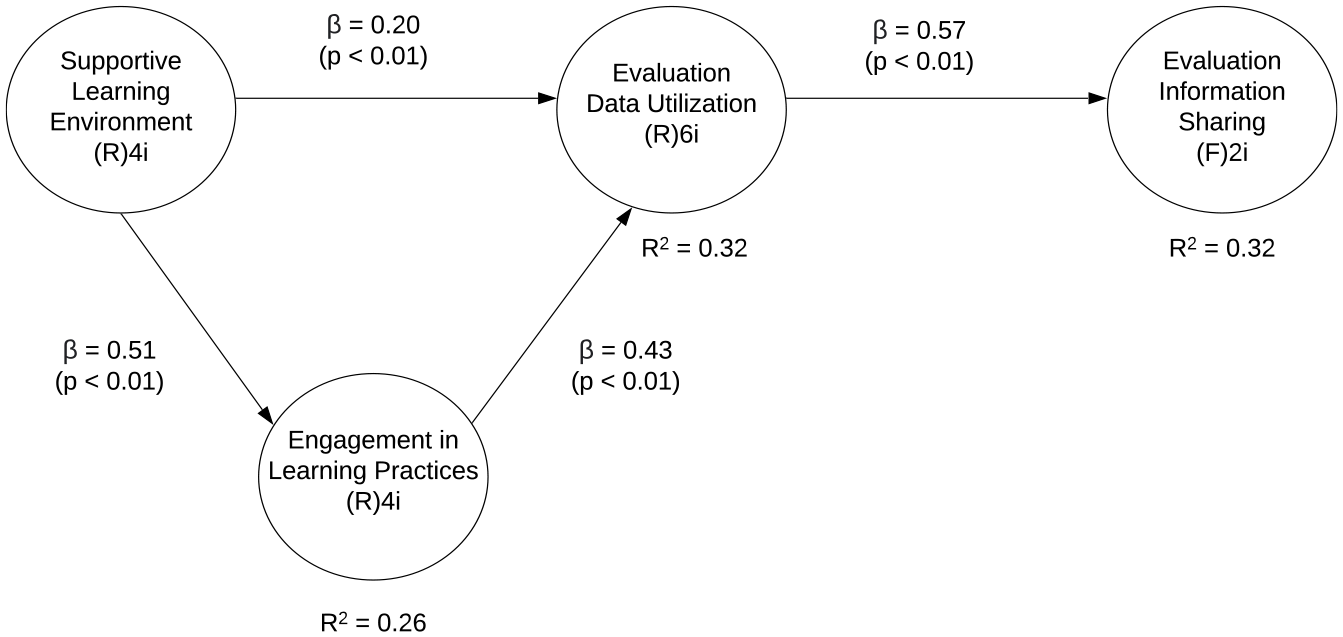

While the growing nonprofit literature on evaluation utilization consistently finds that learning climate plays a vital role in evaluation use and performance monitoring (Lee, 2021; Mitchell & Berlan, 2016, 2018), how nonprofits integrate and use evaluation data to practice transparency and accountability is not often considered. Indeed, evaluation for accountability can be a barrier to learning when it is solely focused on the external pull of meeting funder mandates (Alaimo, 2008; Torres & Preskill, 2001). We contend that when managers emphasize learning from evaluation, they can cultivate an organizational environment that values reflection, discussion, and use of data. This focus on fostering a learning environment, including promoting behaviors that engage with evaluation findings, improves nonprofits’ capacity to apply data in accountability practices like sharing insights with stakeholders. Three components of learning related to evaluation use are explored: SLEs (i.e., what mindsets facilitate learning), ELP (i.e., how learning occurs), and EDU (i.e., what results from the learning). The figure below illustrates how these constructs connect to accountability practices.

Research demonstrates the importance of SLEs in facilitating learning practices and encouraging EDU (Cousins et al., 2014). SLEs are fundamental for building organizational learning cultures that value evaluation practices (Preskill & Boyle, 2008; Taylor-Ritzler et al., 2013). Yet, we have a limited understanding of the internal processes and systems needed to connect SLEs to the accountability practice of information sharing. In this conceptual model (Figure 1), we propose EDU as a mediating construct that links SLEs and evaluation learning practices, on one side, to evaluation information sharing, on the other. This article addresses a gap in the literature by uncovering these mediating factors to help nonprofit staff and managers learn and then connect learning in a measurable and responsive way to sharing evaluation information.

Figure 1. Nonprofit Learning Pathway to Evaluation Information Sharing.

Supportive Learning Environment

Fostering an environment where learning is promoted and encouraged is foundational to embedding learning into organizational processes and structures (Garvin et al., 2008). There are multiple facets to creating an SLE, including cultivating psychological safety among staff (Edmondson, 1999; Garvin et al., 2008), valuing diverse perspectives (Garvin et al., 2008), and embracing openness to new ideas (Garvin et al., 2008; Jerez-Gomez et al., 2005). These facets highlight the importance of staff feeling safe to question assumptions and offer new ways of thinking. Edmondson (1999) describes it as “a sense that the team will not embarrass, reject, or punish someone for speaking up” (p. 354). In addition, Strichman et al. (2008) find that creating a collaborative environment that prioritizes open dialogue, inquisitiveness, and constructive feedback engenders a learning atmosphere. Taken together, the literature on SLEs suggests it is an essential ingredient in developing a learning organization.

Furthermore, empirical studies show that SLEs positively affect organizational learning behaviors and processes (Edmondson, 1999; Lim et al., 2023; Umar & Hassan, 2019). An environment that promotes openness encourages staff to take risks and experiment without worrying about any negative social repercussions. In the context of evaluation, nonprofits with SLEs may be more likely to see data as an opportunity to learn how to do their work better. Building on the work of program evaluation scholars, it can be argued that SLEs that promote questioning and experimentation are essential organizational factors that can indicate an organization is prepared to use evaluation results in its practices and decision-making (Bourgeois & Cousins, 2013; Cousins et al., 2014; Preskill & Boyle, 2008). Therefore, we expect that SLEs are positively associated with learning practices and data utilization in NPOs:

Engagement in Learning Practices

Process-oriented learning approaches involve the implementation of practices that enable the integration of information into knowledge that is utilized by organizational actors. As Lopez et al. (2005) state, learning organizations must “move from simply putting more knowledge into databases to leveraging the many ways knowledge can migrate into an organization” (p. 228). Two practices that facilitate organizational learning and often coincide are the distribution of information and knowledge interpretation by organizational members (Huber, 1991; Lopez et al., 2005; Rashman et al., 2009). The distribution of information is a necessary step in integrating new knowledge into the organization. An essential aspect of distribution is with whom evaluation data are shared. For such processes to directly influence learning inside the organization, information must be shared widely with those responsible for managing programs and delivering services (e.g., staff and volunteers). Once information is distributed, knowledge interpretation processes that prioritize learning for internal organizational members can be an important practice for fostering understanding, assessing what is and is not working, and identifying how improvements can be made.

The collective nature of knowledge interpretation processes requires organizational members to have a shared understanding of how evaluation data could inform the work of the organization (Jerez-Gomez et al., 2005). Cultivating systems thinking in organizational members is essential for allowing them to understand the interdependencies across the organization, thus discouraging a siloed approach to the organization’s work and promoting integration across teams (Strichman et al., 2008). A systems perspective entails an understanding of the interdependence between different parts (departments, teams, groups) within an organization, and how those parts interact to further the mission of the organization (Senge, 1997). Developing a systems perspective enables organizational members to effectively incorporate evaluation learning into their programs and services while simultaneously understanding how this evaluation will affect different aspects of their organization.

Tools and assessment for knowing when evaluation learning practices occur are of increasing interest among scholars who advocate for the mainstreaming of evaluation throughout organizations (Cousins et al., 2014; Preskill & Boyle, 2008; Suarez-Balcazar & Taylor-Ritzler, 2021; Taylor-Ritzler et al., 2013). One sign of the presence of evaluation capacity is when staff are learning, adopting new skills and practices, and applying their knowledge to program improvement—that is, the interaction of evaluation information to learning through evaluation (Preskill & Boyle, 2008). Some consider evaluation processes part of broader organizational learning systems but acknowledge there has not been much integration between the evaluation and the organizational learning literatures (Cousins et al., 2014). This study seeks to integrate these two streams of literature by leveraging the learning organization literature to examine ELP and the relationship to EDU inside NPOs. We expect that distributing knowledge and interpreting knowledge generated as a byproduct of learning from evaluation is critical to informing how managers mobilize that information for action:

Evaluation Data Utilization

Internalized learning that leads to embedding new knowledge into the organization’s mental schemas, structures, and processes is an important outcome of organizational learning systems (Lopez et al., 2005). Growing attention has been paid to data utilization in NPOs, especially in funding relationships that require “results-based accountability,” including performance measurement and outcome assessment from grantees (Lee, 2021; Pfiffner, 2020; Suykens et al., 2022). At the same time, studies have not shown a positive relationship between funder requirements for data and actual utilization for service improvement (Christensen & Ebrahim, 2006; Kim et al., 2019; Lee, 2021), prompting scholars to call for more inquiry into the factors that affect learning and data use in NPOs (Kroll, 2015; Mayer & Fischer, 2023). Research suggests that staff and managers can learn through evaluation, which can lead to the use of evaluation data to improve programs and services (Patton, 2008). Therefore, data utilization can be a learning practice. EDU also promotes responsiveness to mission, the goal of internal accountability, by using evaluation data to better achieve mission outcomes. This article explores how nonprofits can leverage evaluation in an integrated way that connects data-driven decision-making to sharing evaluation and outcome information as an accountability practice.

In the evaluation context, data utilization focuses on how managers apply the knowledge gained from evaluation data to adapt and change organizational practices in light of new learning (Patton, 2008). In this study, EDU is defined as the reported use of evaluation results to inform decisions, measure progress, improve programs, and train staff. For example, nonprofit managers may use evaluation information to improve client services or institute new practices to serve clients better. However, using evaluation information to make improvements can be challenging if nonprofits lack the capacity to create a systematic approach to evaluation data use (Benjamin et al., 2023; Bryan et al., 2021; Strichman et al., 2008). Studies on performance data use in NPOs find that leadership support for data use has a significant influence on the likelihood that data utilization will lead to program improvement (Kim et al., 2019).

Data utilization can have far-reaching benefits. In addition to using evaluation data for compliance purposes, providing access to evaluation data can be utilized as a management decision-making tool to build shared mental models and to improve programs and services for mission alignment (Bryan et al., 2021; Mayer & Fischer, 2023; Suarez-Balcazar & Taylor-Ritzler, 2021). When nonprofits design evaluation with both learning and utilization processes in mind, the evaluation results can be highly impactful. We seek to explore how accountability practices through information sharing to multiple stakeholders increase as learning and EDU are put into action by nonprofit managers. The following relationships are expected:

Research Methods

Sample and Data

The data used to test the study hypotheses were collected from NPOs in one mid-sized Midwestern city. Using a local community foundation’s listserv as a sampling frame, we sent an online survey link to 603 nonprofits in the three-county area that makes up the metropolitan statistical area. The survey was adapted from the Taylor-Ritzler et al. (2013) Evaluation Capacity Building Instrument and was available from October to December of 2016. The organizations surveyed were designated 501(c)(3) organizations, as verified by the foundation. Executive Directors (EDs) or the program managers/staff (non-EDs) most knowledgeable about evaluation were asked to complete the survey. Identifying information about survey respondents and their nonprofits was not collected due to the anonymity agreement with the foundation, limiting our ability to test for nonresponse biases in the sample. A total of 243 surveys were completed, a response rate of approximately 40% (243 of 603).

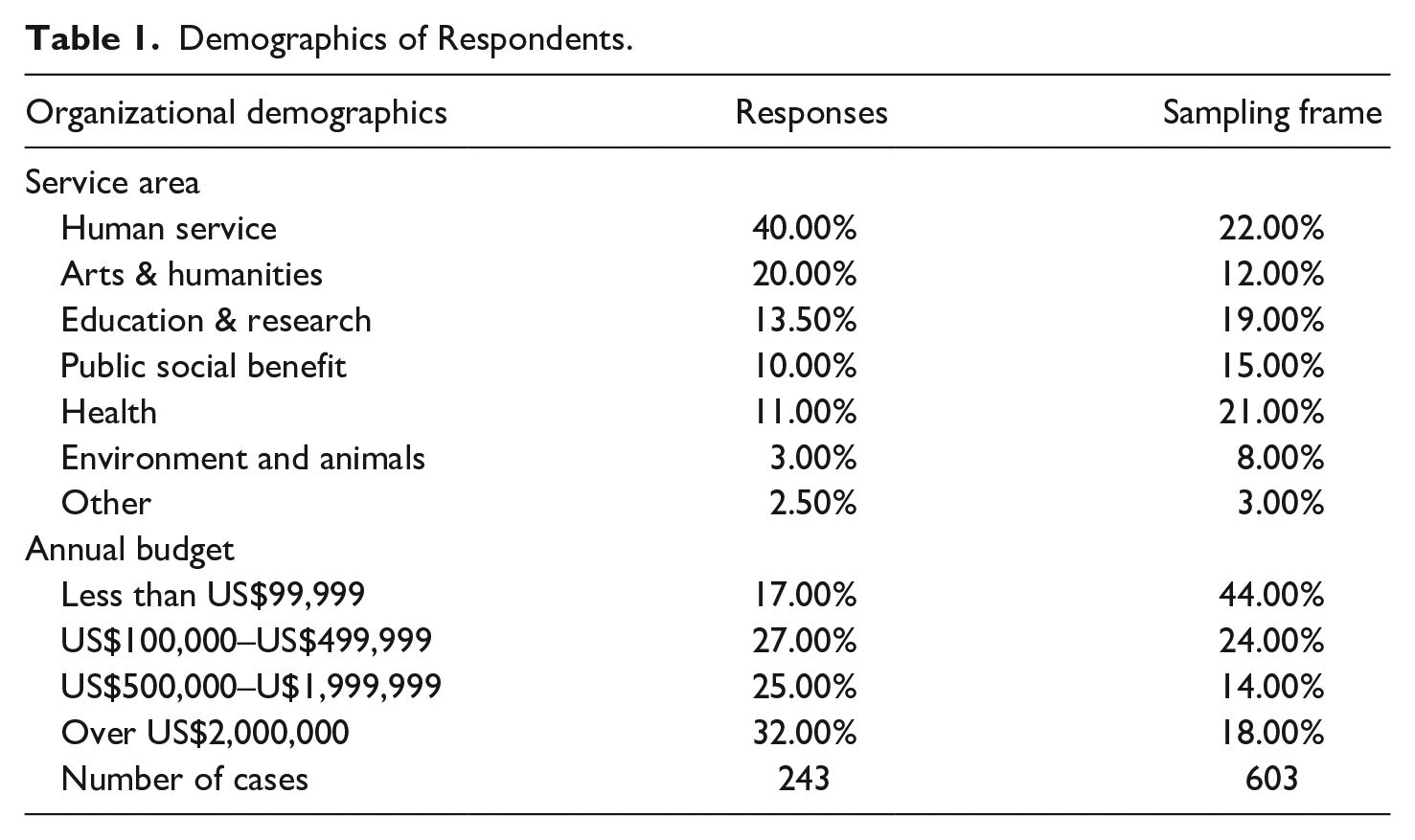

Table 1 describes the organizational demographics of our respondents and the sampling frame of the nonprofits connected with our foundation partner. Respondents reported their nonprofits offer a wide range of services. The organizations’ budget sizes were also diverse. Religious congregations were excluded from the sample.

Table 1. Demographics of Respondents.

The foundation provided the list of the sampling frame of organizations on their listserv based on their service area and annual budget. When compared with the foundation’s listserv demographics, NPOs with budgets above US$500,000 as well as human services and arts and humanities were overrepresented among survey respondents. This may be because human service nonprofits engage in program evaluation more often than other types of NPOs. The literature has documented the prevalence of evaluation activities in human service organizations over the past 30 years (for examples, see Carman & Fredericks, 2008; Hoefer, 2000). In addition, the time, staff, data, and financial resources it takes to implement and use evaluation data are barriers to program evaluation (Carman & Millesen, 2005), and thus NPOs that do not collect or use evaluation data may have chosen not to complete the survey.

Measurement

Dependent Variable

As a dependent variable, we used evaluation information sharing to capture one important aspect of accountability practices. Evaluation information sharing is operationalized as the extent to which an NPO shares its program evaluation and outcome information with stakeholders such as board members and the broader public. Sharing information with board members is a critical accountability practice not only because of their governance responsibilities but also because board members perform boundary-spanning activities and often facilitate access to key external stakeholders. Sharing evaluation information with the broader public is an important accountability practice because it reflects transparency and openness. Two survey items were used to measure this construct (see Table 2). These survey items were measured on a Likert-type scale from 1 (not at all) to 5 (to a great extent).

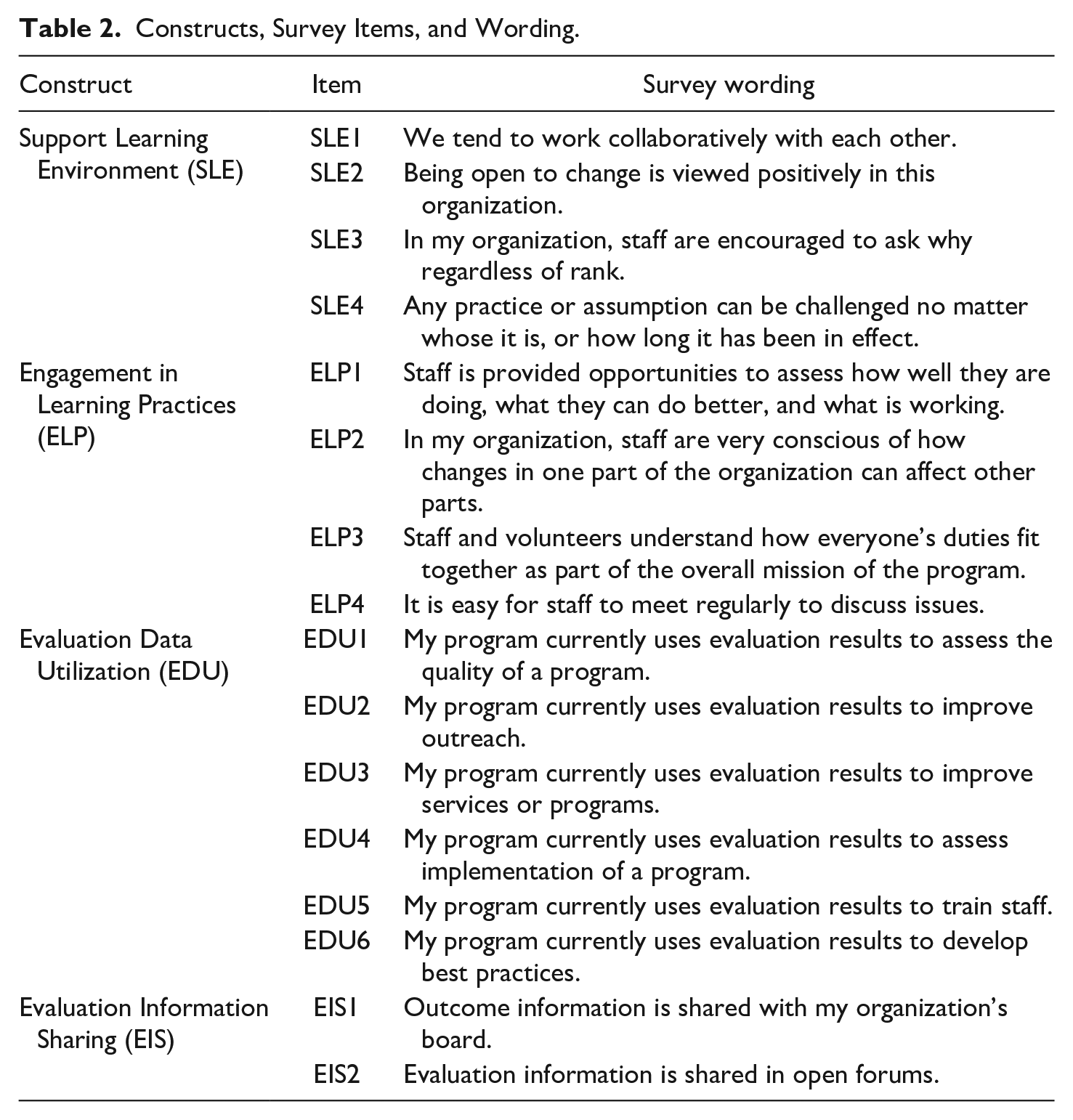

Table 2. Constructs, Survey Items, and Wording.

Independent Variable

SLE was used as an exogenous latent variable. Garvin and his colleagues (2008) suggest psychological safety, appreciation of difference, openness to new ideas, and time for reflection are key characteristics of an SLE. We used four survey items to measure these characteristics of an SLE.

Mediators

ELP and EDU were included as two mediators in the model. The ELP construct was used as the first mediator connecting SLEs and EDU. The specific measures in the ELP construct were derived from the literature specific to information distribution and knowledge integration (see Huber, 1991; Lopez et al., 2005; Preskill & Boyle, 2008). In this research context, ELP was operationalized as the extent to which organizational members engage in understanding, assessing, distributing, and sharing information about managing programs and delivering services. Four survey items were used as potential indicators of the ELP construct. The EDU construct was used as the second mediator, connecting SLE and ELP to evaluation information sharing. We operationalized EDU as the extent to which evaluation data can be accessed and used by key decision makers in NPOs to make informed decisions (see Patton, 2008; Taylor-Ritzler et al., 2013). We considered six survey items as potential measures of EDU. Table 2 shows all the constructs, survey items, and wording.

Analysis

The statistical analysis applied partial least squares (PLS)-structural equation modeling (SEM) using WarpPLS 8.0 to assess the measurement model and test the study hypotheses. SEM is preferred because all the constructs used in our model are latent variables with multi-item scales. SEM is also a more useful technique for handling measurement errors and multiple endogenous latent variables within a single model (Gefen et al., 2011). The PLS technique was chosen for three main reasons. First, in comparison with covariance-based SEM, PLS-SEM has been recommended as a more appropriate approach for exploratory research and for cases where the underlying model is fairly new, which is consistent with the purpose of this research. Second, it places fewer restrictions on normality assumptions. Third, it accommodates formative constructs (as discussed later; Chin, 1998; Gefen et al., 2011).

Results

The assessment of a model using PLS-SEM involves a two-step process: assessment of the measurement model followed by assessment of the structural model. Specifically, the measurement model tests the reliability and validity of the indicators for the corresponding construct, while the structural model validates the hypothesized paths among constructs. In assessing our model, an expectation-maximization technique was employed to impute missing values in the data. The SLE, ELP, and EDU constructs in our model are considered reflective in that each set of items reflects the content of its corresponding construct and the items are interchangeable (Garson, 2016). The construct of evaluation information sharing is modeled as formative in that its two measurement items are not necessarily correlated: one item measures information sharing with external stakeholders (i.e., the public) while the other measures information sharing with internal stakeholders (i.e., the board). The results indicate a low correlation coefficient (.3) and a low Cronbach’s alpha coefficient (.465) for these two items. This suggests that they are not interchangeable and, therefore, evaluation information sharing is considered a formative construct.
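To illustrate why a correlation of about .3 between two items implies a low alpha, the following minimal sketch computes the standardized form of Cronbach’s alpha from the mean inter-item correlation. It is an illustration only: the function name is hypothetical, and the standardized formula is an assumption on our part, since the reported value (.465) was presumably computed from the raw item variances and an unrounded correlation, so a small difference from the result here is expected.

```python
def standardized_alpha(k: int, mean_r: float) -> float:
    """Standardized Cronbach's alpha for k items.

    mean_r is the mean inter-item correlation. This is the standardized
    form of alpha; the raw form uses item variances and covariances.
    """
    return (k * mean_r) / (1 + (k - 1) * mean_r)


# Two items with the rounded correlation reported in the text (.3).
alpha_two_items = standardized_alpha(k=2, mean_r=0.30)
print(round(alpha_two_items, 3))
```

With only two weakly correlated items, alpha falls well below the conventional 0.7 level, which is consistent with treating the two items as distinct facets rather than interchangeable indicators.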

Measurement Model

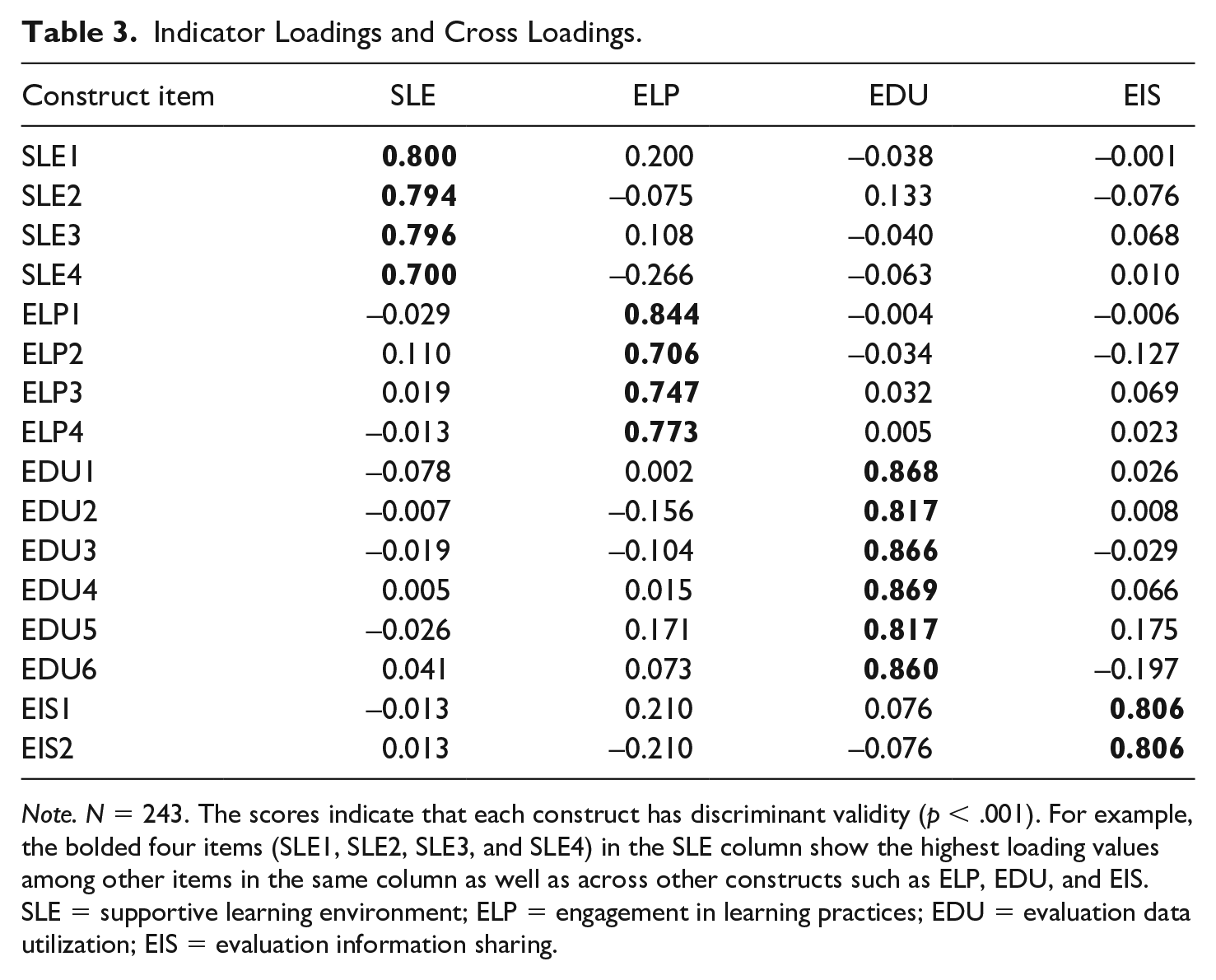

Reflective and formative constructs have distinct criteria that are relevant to their assessment (Hair et al., 2019). The assessment of reflective constructs involves examining indicator loadings, internal consistency reliability, convergent validity, and discriminant validity (Hair et al., 2019). To assess formative constructs, examining indicator loadings, indicator weights, and multicollinearity is recommended (Hair et al., 2011). For an item to be retained for structural analysis after the initial run, we employed 0.7 as the threshold for its indicator loading on its respective latent construct (Hair et al., 2019). Table 3 shows that all items of both the reflective and formative constructs have indicator (item) loadings greater than the threshold. It should be noted that six items with loadings below 0.7 in the initial run were dropped, leaving 16 items with loadings above 0.7.

Table 3. Indicator Loadings and Cross Loadings.

Note. N = 243. The scores indicate that each construct has discriminant validity (p < .001). For example, the bolded four items (SLE1, SLE2, SLE3, and SLE4) in the SLE column show the highest loading values among other items in the same column as well as across other constructs such as ELP, EDU, and EIS. SLE = supportive learning environment; ELP = engagement in learning practices; EDU = evaluation data utilization; EIS = evaluation information sharing.

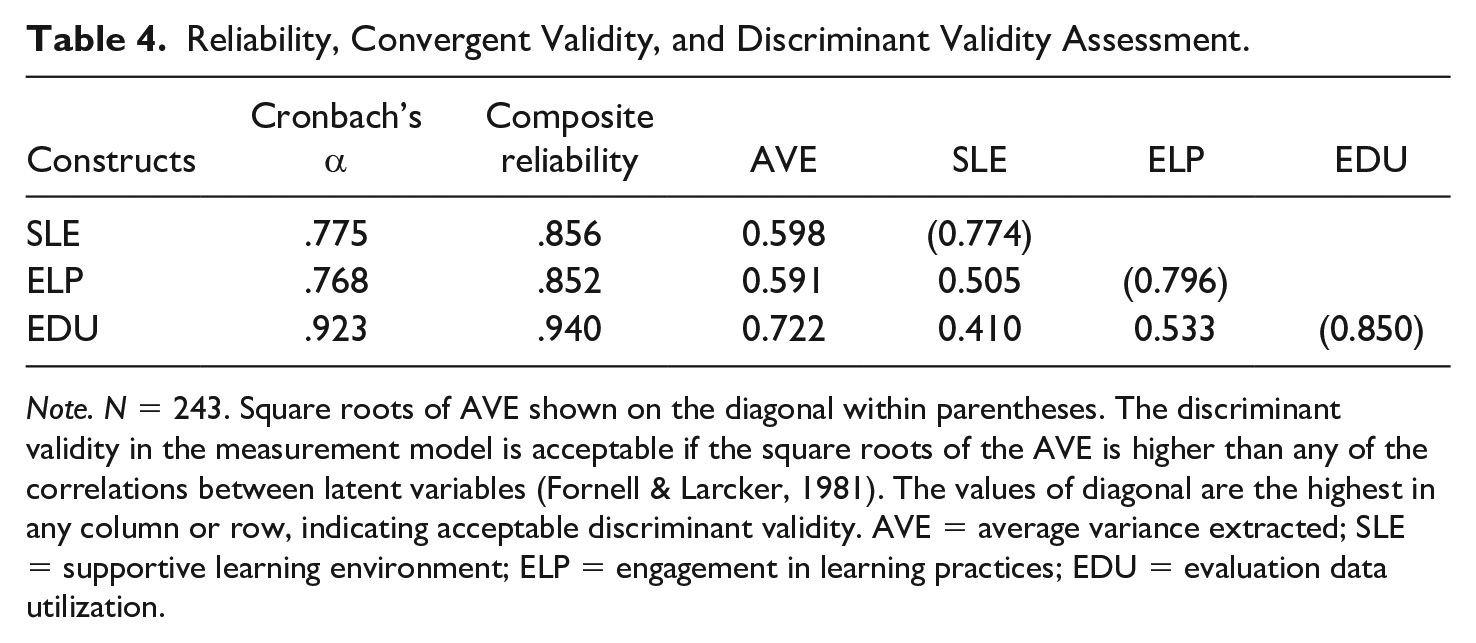

With those 16 items, we then tested internal consistency reliability to validate the reflective constructs, using Cronbach’s alpha and composite reliability. All three reflective constructs are acceptable (greater than 0.7; Hair et al., 2019). Convergent validity is the extent to which a construct converges to explain the variance of its items. It was assessed using average variance extracted (AVE), which is comparable to the proportion of variance explained in factor analysis (values between 0 and 1), where an AVE of 0.5 or above is desirable (Hair et al., 2019). Discriminant validity refers to the extent to which a construct is empirically distinct from other constructs in the model. Table 4 reports that the three reflective constructs display internal consistency reliability, convergent validity, and discriminant validity.
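To make the reliability and convergent validity criteria concrete, the sketch below computes AVE and composite reliability from a set of standardized loadings using the commonly cited formulas. The loadings are hypothetical placeholders, not the values in Table 3, and the function names are illustrative only.

```python
def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """Composite reliability: (sum of loadings)^2 over itself plus summed error variances."""
    squared_sum = sum(loadings) ** 2
    error_variance = sum(1 - l ** 2 for l in loadings)
    return squared_sum / (squared_sum + error_variance)


# Hypothetical loadings for a four-item reflective construct (all above 0.7).
example_loadings = [0.72, 0.80, 0.85, 0.78]
ave = average_variance_extracted(example_loadings)
cr = composite_reliability(example_loadings)
print(ave >= 0.5, cr > 0.7)  # both thresholds are met for these loadings
```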

Table 4. Reliability, Convergent Validity, and Discriminant Validity Assessment.

Note. N = 243. Square roots of AVE are shown on the diagonal within parentheses. Discriminant validity in the measurement model is acceptable if the square root of each construct’s AVE is higher than any of its correlations with other latent variables (Fornell & Larcker, 1981). The diagonal values are the highest in any column or row, indicating acceptable discriminant validity. AVE = average variance extracted; SLE = supportive learning environment; ELP = engagement in learning practices; EDU = evaluation data utilization.

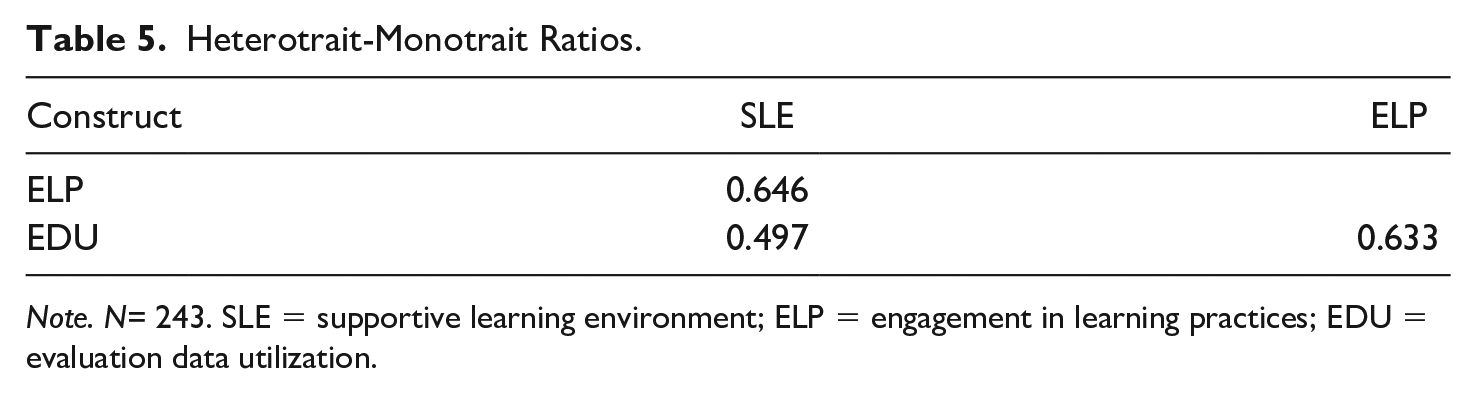
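The Fornell and Larcker (1981) criterion described in the table note can be expressed as a simple check: for every pair of constructs, the correlation must be smaller than the square root of each construct’s AVE. The sketch below uses hypothetical AVE and correlation values, not those reported in Table 4, and the function name is an assumption for illustration.

```python
import math


def fornell_larcker_ok(ave, correlations):
    """Return True if every inter-construct correlation is below sqrt(AVE) of both constructs."""
    for (a, b), r in correlations.items():
        if abs(r) >= min(math.sqrt(ave[a]), math.sqrt(ave[b])):
            return False
    return True


# Hypothetical values for three reflective constructs.
example_ave = {"SLE": 0.60, "ELP": 0.55, "EDU": 0.65}
example_corr = {("SLE", "ELP"): 0.50, ("SLE", "EDU"): 0.45, ("ELP", "EDU"): 0.40}
print(fornell_larcker_ok(example_ave, example_corr))  # True
```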

As a recommended alternative (Henseler et al., 2015), the heterotrait-monotrait (HTMT) ratio was used to assess discriminant validity further. An HTMT ratio below 0.9 indicates that discriminant validity has been established between two constructs. The results in Table 5 show that HTMT values range from 0.497 (lowest) to 0.646 (highest), which suggests that the reflective constructs meet the threshold for discriminant validity.
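For readers unfamiliar with the ratio, the sketch below shows one way the HTMT for a pair of constructs could be computed from an item correlation matrix: the mean correlation between items of different constructs divided by the geometric mean of the average within-construct item correlations. The correlation matrix is hypothetical, and the function is an illustrative reading of the Henseler et al. (2015) definition rather than the routine used in the analysis software.

```python
import math
from itertools import combinations, product


def htmt(corr, items_a, items_b):
    """HTMT ratio for two constructs given an item correlation matrix and item indices."""
    def mean(values):
        return sum(values) / len(values)

    hetero = [corr[i][j] for i, j in product(items_a, items_b)]  # between constructs
    mono_a = [corr[i][j] for i, j in combinations(items_a, 2)]   # within construct A
    mono_b = [corr[i][j] for i, j in combinations(items_b, 2)]   # within construct B
    return mean(hetero) / math.sqrt(mean(mono_a) * mean(mono_b))


# Hypothetical correlations for items A1, A2 (construct A) and B1, B2 (construct B).
example_matrix = [
    [1.0, 0.7, 0.4, 0.4],
    [0.7, 1.0, 0.4, 0.4],
    [0.4, 0.4, 1.0, 0.6],
    [0.4, 0.4, 0.6, 1.0],
]
ratio = htmt(example_matrix, items_a=[0, 1], items_b=[2, 3])
print(ratio < 0.9)  # True: the hypothetical constructs pass the 0.9 threshold
```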

Table 5. Heterotrait-Monotrait Ratios.

Note. N = 243. SLE = supportive learning environment; ELP = engagement in learning practices; EDU = evaluation data utilization.

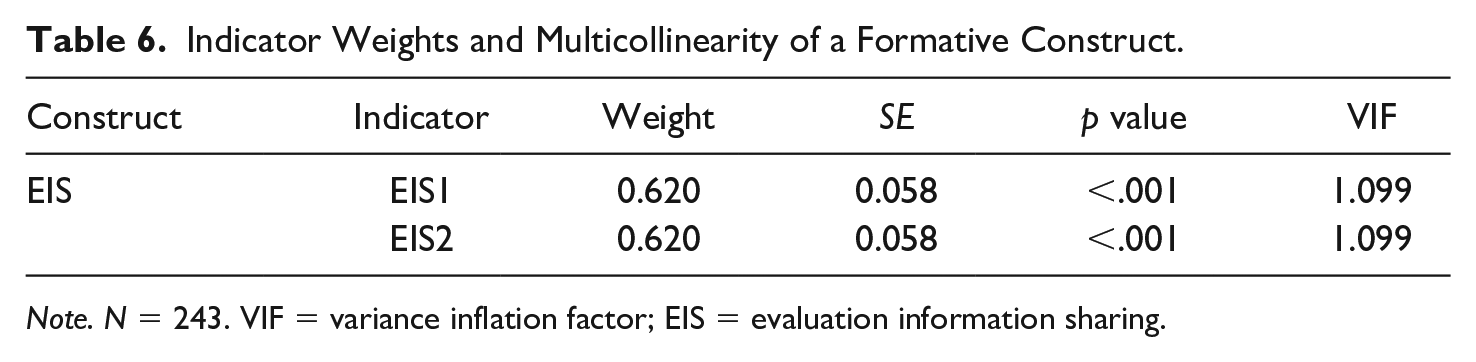

Hair et al. (2019) recommend reporting the weights, statistical significance, and multicollinearity of formative indicators when assessing formative constructs. Table 6 shows that the two formative indicator weights are statistically significant and have acceptable variance inflation factor (VIF) values (less than 3), indicating no multicollinearity issue (see Kock, 2015).
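With only two indicators, the VIF of each reduces to a function of their bivariate correlation, which gives a rough sense of why multicollinearity is unlikely here. The sketch below assumes the textbook definition VIF = 1 / (1 − r²) and the rounded correlation of .3 reported earlier; the values in Table 6 come from the analysis software and may differ.

```python
def vif_two_indicators(r: float) -> float:
    """VIF for either of two indicators with bivariate correlation r."""
    return 1.0 / (1.0 - r ** 2)


vif = vif_two_indicators(0.3)
print(round(vif, 3))  # 1.099
```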

Table 6. Indicator Weights and Multicollinearity of a Formative Construct.

Note. N = 243. VIF = variance inflation factor; EIS = evaluation information sharing.

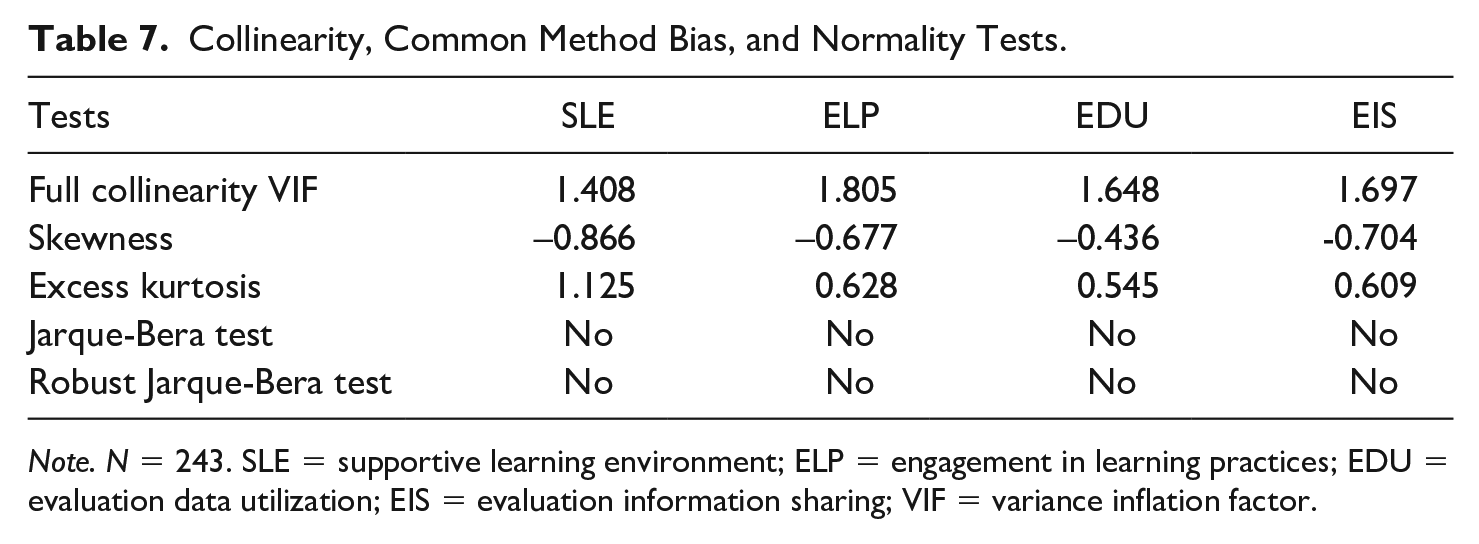

Finally, we assessed collinearity, common method bias, and normality with respect to the measurement model. We used full collinearity VIFs to test for collinearity and common method bias. Normality was assessed with the Jarque-Bera and robust Jarque-Bera tests, which build on measures of skewness and excess kurtosis. Table 7 shows that the VIF values are lower than 3, which is acceptable, indicating that the constructs in the model are unlikely to generate vertical and lateral collinearity issues or common method bias (Kock, 2015). The two normality tests showed that our data are not multivariate normal. The robust Jarque-Bera test revealed that the four latent variables (SLE, ELP, EDU, and evaluation information sharing) are not normally distributed, which justifies our choice of the PLS-SEM approach because it does not assume a normal distribution (Kock, 2015).
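The classic Jarque-Bera statistic combines sample skewness S and excess kurtosis K as JB = n/6 × (S² + K²/4) and is compared against a chi-square distribution with 2 degrees of freedom (critical value 5.991 at the .05 level). The sketch below implements only this classic form on hypothetical scores; the robust variant used alongside it in the analysis relies on a different dispersion estimate and is not shown.

```python
def jarque_bera(values):
    """Classic Jarque-Bera statistic from population-moment skewness and excess kurtosis."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((x - mean) ** 2 for x in values) / n
    m3 = sum((x - mean) ** 3 for x in values) / n
    m4 = sum((x - mean) ** 4 for x in values) / n
    skewness = m3 / m2 ** 1.5
    excess_kurtosis = m4 / m2 ** 2 - 3.0
    return n / 6.0 * (skewness ** 2 + excess_kurtosis ** 2 / 4.0)


CHI2_CRITICAL_DF2_05 = 5.991  # chi-square critical value, 2 df, alpha = .05

# Hypothetical right-skewed scores: 40 responses of 1 and 10 responses of 5.
skewed_scores = [1] * 40 + [5] * 10
jb = jarque_bera(skewed_scores)
print(jb > CHI2_CRITICAL_DF2_05)  # True: normality is rejected for these scores
```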

Table 7. Collinearity, Common Method Bias, and Normality Tests.

Note. N = 243. SLE = supportive learning environment; ELP = engagement in learning practices; EDU = evaluation data utilization; EIS = evaluation information sharing; VIF = variance inflation factor.

Structural Model

In PLS-SEM, a structural model can be assessed in terms of standardized path coefficients (β), the significance of the path coefficients, the effect size for path coefficients as indicated by f², and the variance explained (R²) by the independent variable(s). Following Cohen (1988), effect sizes (f²) of about 0.02, 0.15, and 0.35 are conventionally interpreted as small, medium, and large, respectively. The results in Figure 2 demonstrate that an SLE explained 26% of the variance of ELP, which explained 32% of the variance of EDU in our sample of NPOs. EDU explained 32% of the variance in evaluation information sharing.

Figure 2. Structural Model With Results.

Figure 2 also demonstrates that the relationship between an SLE and ELP is positive and significant (β = 0.51, p < .01, f² = 0.26) with a medium effect. With respect to the relationship between ELP and EDU, the results reveal that ELP is positively and significantly related to EDU (β = 0.43, p < .01, f² = 0.23) with a medium effect. EDU has a positive and significant association with evaluation information sharing (β = 0.32, p < .01, f² = 0.32) with a large effect. Thus, the study hypotheses are supported by the data. Of all the constructs, EDU shows the strongest association with evaluation information sharing.

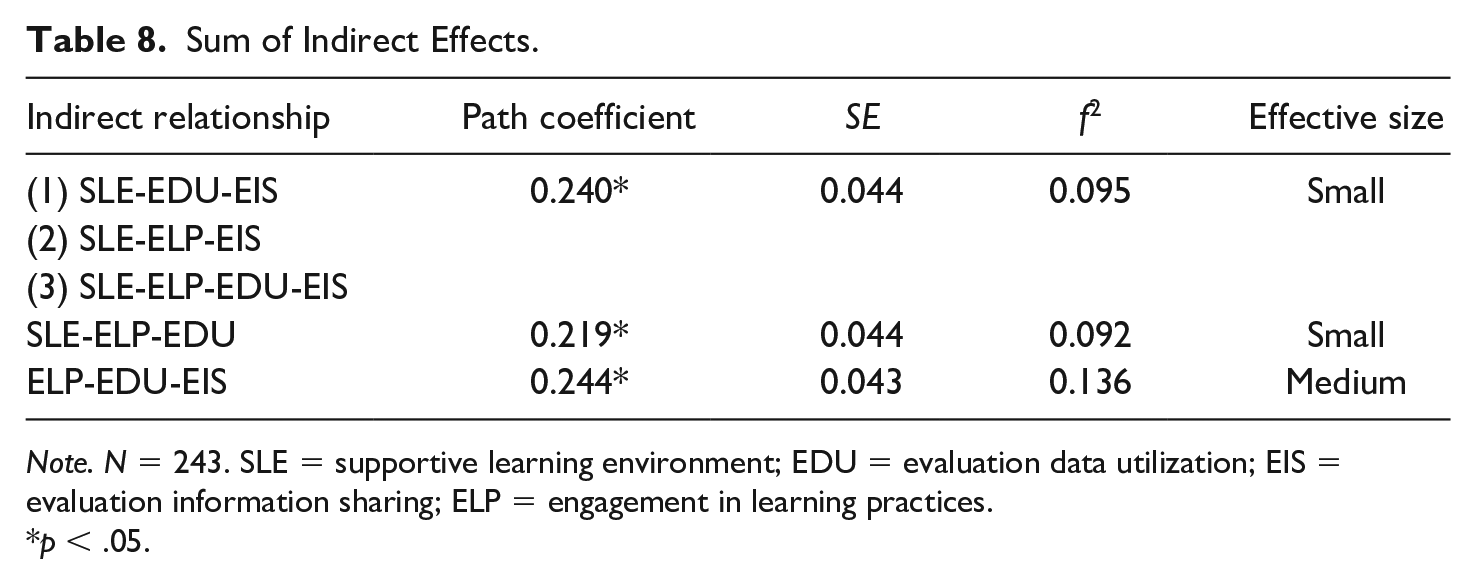

To explore mediation effects in the study model, we tested the indirect effects of an SLE on evaluation information sharing, which comprise three paths. The results in Table 8 show that the total sum of indirect effect path coefficients of SLE on evaluation information sharing is significant with a small effect size (β = 0.24, p < .05, f² = 0.095). In addition, Table 8 demonstrates that the indirect effect path coefficient between SLE and EDU through ELP is significant (β = 0.219, p < .01, f² = 0.092), indicating that ELP significantly mediated the relationship between SLE and EDU. EDU also significantly mediated the relationship between ELP and evaluation information sharing (β = 0.244, p < .01, f² = 0.136) with a medium effect size. In sum, the indirect effects in the model suggest that the relationship between SLE and evaluation information sharing is significantly mediated by ELP and EDU.
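Under the usual product-of-coefficients logic, an indirect effect is the product of the direct path coefficients along the route. As a minimal sketch for the SLE to ELP to EDU path only, using the rounded coefficients reported above (variable names are hypothetical, and the software may apply a different estimation procedure):

```python
# Direct path coefficients as reported for Figure 2 (rounded).
beta_sle_elp = 0.51
beta_elp_edu = 0.43

# Indirect effect of SLE on EDU through ELP as the product of the two paths.
indirect_sle_edu = beta_sle_elp * beta_elp_edu
print(round(indirect_sle_edu, 3))  # 0.219
```

The product matches the indirect coefficient of 0.219 reported for this path.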

Table 8. Sum of Indirect Effects.

Note. N = 243. SLE = supportive learning environment; EDU = evaluation data utilization; EIS = evaluation information sharing; ELP = engagement in learning practices.

p < .05.

Ad-Hoc Analysis of Group and Model Comparison

To explore the results in Figure 2 more deeply, we conducted a series of multigroup comparative analyses to examine the extent to which the results are similar or different depending on organizational size, service domain, and survey respondents’ roles in NPOs. Overall, the results show patterns like those in Figure 2 in terms of the significance of the relationships. For example, although the coefficient differences between large and medium-to-small NPOs are significant for the relationship between SLE and ELP and for the relationship between EDU and evaluation information sharing, other relationships are not significantly different between large and medium-to-small NPOs. Moreover, the results in Figure 2 do not differ statistically between human service and other service areas or between ED and non-ED respondents. These additional analyses speak to the robustness of our findings across organization service area type and the role of survey respondents.

We conducted a multigroup analysis by grouping the sample by organizational size. Using annual budget as a proxy measure of organizational size, we created an organization size variable coded as 1 (large organization) if the annual budget is over US$2,000,000 and as 0 (medium-to-small organization) otherwise. As a result, 127 medium-to-small nonprofits (68.28%) and 59 large organizations (31.72%) were used in the multigroup analysis. The results demonstrate that, overall, large NPOs show path coefficients of higher magnitude, which implies that respondents in large NPOs are more confident in the linkages between SLE and ELP, ELP and EDU, and EDU and evaluation information sharing. In particular, we found that an SLE plays a stronger role in facilitating ELP in large NPOs than in medium-to-small NPOs. Moreover, the relationship between EDU and evaluation information sharing is stronger in large NPOs than in medium-to-small NPOs.
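The recoding rule and the resulting group shares can be sketched as follows. The function and variable names are hypothetical; only the US$2,000,000 cutoff and the group counts come from the text.

```python
BUDGET_THRESHOLD_USD = 2_000_000  # cutoff stated in the text


def size_dummy(annual_budget_usd: float) -> int:
    """Code an organization as 1 (large) if its budget exceeds the cutoff, else 0."""
    return 1 if annual_budget_usd > BUDGET_THRESHOLD_USD else 0


def group_shares(counts):
    """Percentage share of each group, rounded to two decimals."""
    total = sum(counts.values())
    return {group: round(100 * n / total, 2) for group, n in counts.items()}


shares = group_shares({"medium_to_small": 127, "large": 59})
print(shares)  # {'medium_to_small': 68.28, 'large': 31.72}
```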

We also created a dummy variable for service domain: human services versus other areas. The multigroup analysis included 66 (40.49%) nonprofits focusing on human services and 97 (59.51%) focusing on other areas. The findings suggest that there is no significant difference between the path coefficients of human service and non-human service nonprofits. As described earlier, two types of survey respondents participated in the survey: EDs and non-EDs, the latter being executive leadership staff, directors/managers, or direct service staff. The number of valid responses was 82 (41.2%) for EDs and 117 (58.8%) for non-EDs; 44 respondents did not indicate their roles. Our results indicate that there were no statistically significant differences between the path coefficients of the ED and non-ED groups.

Furthermore, to assess the fit of the hypothesized model and to determine the best model to represent the data, we compared the hypothesized model with three alternative models 2 (Seibert et al., 2001). We examined the model fit and quality indices of the four models to comparatively assess their fit with the data. In particular, we used 10 model fit indices (Kock, 2022). The results show that the hypothesized model’s indices are greater than those of Alternative Models 1 and 2 and similar to those of Alternative Model 3 on most indices, except for a couple (e.g., Average Path Coefficient, Average R²). Given these model comparison findings, we are confident that the hypothesized model fits best with the data.

Discussion and Implications

This study explores how nonprofit learning environments and practices promote evaluation information sharing through the use of evaluation data. Our results suggest that SLEs in organizations encourage nonprofit managers and staff to engage in learning practices and to facilitate data utilization for internal decision-making, which strengthens evaluation information sharing. By emphasizing an internal orientation, we aim to add to the narrative where managers and staff realize the benefits of evaluation data to create organizational improvements through learning (Ebrahim, 2019; Gugerty & Karlan, 2018). Our results indicate that EDU is an integral management tool that enhances accountability practices associated with data disclosure. We extend the existing literature by proposing a new empirical model of the mediating factors that bridge nonprofit learning, data-driven decision-making, and evaluation information sharing.

Our results show an internal pathway from learning to EDU to sharing evaluation information. Our findings demonstrate the importance of fostering SLEs and practices that promote EDU. By creating an SLE—where staff work collaboratively, are open to change, and ask why—managers can facilitate opportunities for staff learning and growth (Cousins et al., 2014; Edmondson, 1999; Garvin et al., 2008). The data indicate that an SLE has a direct link to ELP. Learning practices capture the distribution and interpretation of knowledge by staff and volunteers that encourages them to assess their work and performance in relation to the larger organizational context. Through these practices, staff and volunteers are able to gain understanding of how their work contributes to the nonprofit’s mission and programs. The data also suggest that staff and volunteer ELP is more strongly associated with EDU than SLEs alone. It is in this pathway between SLEs and learning practices that we see a stronger connection to data utilization.

Overall, these findings indicate that EDU is essential to connecting learning with data sharing and reporting practices. EDU is critical in helping nonprofit managers identify successes and challenges that inform their decision-making. EDU is also essential for helping to demonstrate internal accountability to staff, mission, board, and other key stakeholders. The data show that managers who utilize evaluation data in their decision-making have a higher likelihood of sharing evaluation information with boards and in open forums. Ultimately, our results suggest that nonprofits can benefit from creating an environment that encourages learning and data utilization practices.

Our research contributes to nonprofit literature in a couple of ways. First, these results address a gap in learning research regarding where learning processes are centered within organizational settings (Watkins & Kim, 2018), which in our case can occur within the nonprofit evaluation setting. Consistent with the broader learning organization literature (e.g., Garvin et al., 2008; March, 1991; Strichman et al., 2008), this article offers an example of how the combination of learning environments and learning practices serve as drivers for data-driven decision-making. We offer evidence that supports nonprofit learning as an important component of evaluation settings. We argue nonprofit scholars should continue to explore where learning occurs and how it influences other organizational processes such as board self-assessments (e.g., Carman & Millesen, 2023). Second, by focusing on data use, our findings connect with the utilization-focused evaluation literature that emphasizes evaluation as a tool for managers and their organizations to learn from and to make more informed decisions (Patton, 2008). Moreover, EDU is consistent with the broader performance literature on developing and collecting metrics that achieve results (Pfiffner, 2020; Umar & Hassan, 2019), a burgeoning area of study in the nonprofit space. Our research elucidates the consequences of creating learning and data cultures where results are used to assess and improve programs, train staff, and develop best practices.

Finally, the result that evaluation data use mediates the relationship between organizational learning and information sharing addresses an identified weakness in the evaluation and performance literature related to the integration of organizational learning (Cousins et al., 2014) and nonprofit data use (Kroll, 2015; Mayer & Fischer, 2023). Given the prevalence of evaluation in the nonprofit sector as an external accountability requirement (Carman, 2010), our approach relates to the internal accountability literature where nonprofit managers must be responsive to themselves as well as to external stakeholders. Embedding data-informed decisions can increase an NPO’s capacity to create a thriving learning climate (Bryan et al., 2021; Gugerty & Karlan, 2018), which is a critical best practice. Nonprofits with strong internal accountability systems can reinforce multiple accountabilities in that such systems allow for the creation of trust among various stakeholders through data-informed decision-making and information sharing (Costa & Pesci, 2016; Williams & Taylor, 2013). An internal orientation focuses on the choices managers make to use evaluation data for inward accountability purposes around mission achievement, which facilitates an accountability practice of sharing evaluation results openly with others.

Our study offers several considerations for managers working in the nonprofit sector. An essential question facing nonprofit managers today is how to harness data that lead to more informed decision-making and to program improvements. One implication of this study is the need for intentional strategies around learning and data practices among NPOs. Creating learning environments and internalizing learning practices are crucial steps in developing learning climates that ultimately transform knowledge into action, which is the goal of learning organizations (Greiling & Halachmi, 2013). Over time, learning cultures exploit information as “embedded learning” opportunities where both staff and managers are encouraged to use data to inform their work and routines and to make meaningful change (Winkler & Fyffe, 2016). Nonprofit managers are the linchpin in fostering learning environments and practices among staff around why collecting and using evaluation data matter in a mission-focused way (Janus, 2017; Umar & Hassan, 2019). Moreover, managers are responsible for making data-informed decisions on behalf of the organization as well as signaling to others the importance of information sharing (see Milway & Saxton, 2011; Winkler & Fyffe, 2016).

Another practical implication of this study is that nonprofit learning environments and staff practices are significant precursors to enhancing EDU. EDU is a mission-critical best practice. Nonprofits need “right-fit data” systems that simultaneously encourage learning through data utilization while also ensuring organizations are accountable to all stakeholders, but these systems should not place undue burden on the organization (Gugerty & Karlan, 2018). A systematic approach to evaluation is needed where the pursuit of measurement and data does not stifle value creation and impact. Beyond this, research suggests successful nonprofit managers are able to use data to engage in storytelling that compels internal and external support of the work (Janus, 2017). One way to do this is to share evaluation information with boards and with the broader public in open forums. The level of information sharing and transparency an organization chooses reflects a strategic choice nonprofits make around their accountability practices (Tremblay-Boire & Prakash, 2015). In our examination of sharing evaluation outcomes as an accountability practice, we hope to renew efforts among nonprofit managers and funders to build learning-oriented climates that facilitate data-informed decision-making. Practical steps are needed to cultivate learning organizations that value learning as an end, and not just a means. Otherwise, nonprofits could face a scenario where evaluation information sharing occurs without learning or learning transpires without accountability.

While this study offers contributions to the understanding of the intersections of nonprofit learning, EDU, and accountability, several research limitations should be noted. First, the generalizability of these results is limited because the data come from a self-reported, cross-sectional survey of managers in one region of the United States. Due to the confidentiality agreement with the foundation, tracking nonprofits to their Form 990s was not possible, although the foundation did certify that only registered 501(c)(3)s were included in the list. 3 Executive directors and program managers were chosen due to their knowledge of the overall organizational context and their likely access to organizational data, its usefulness, and related decision-making. Second, the survey instrument was adapted from the Taylor-Ritzler et al. (2013) Evaluation Capacity Building instrument; therefore, only some aspects of that instrument were employed to address our research questions, which limits our ability to measure each construct systematically and comprehensively. For example, the concept of an SLE is similar to Garvin et al.’s (2008) operationalization, but the data do not capture staff’s view of leadership, which is important in establishing learning organizations. Another limitation is that the data collected do not measure the underlying motivations behind why nonprofits share evaluation information. Information sharing could be externally mandated by regulators or funders, for instance. Finally, we recommend further systematic and comprehensive development of reliable and valid survey items that gauge the constructs of EDU and information sharing with a connection to managerial motivations and learning in other research contexts.

Future research could examine how non-evaluation-driven data (e.g., client satisfaction surveys) inform management and decision-making around data utilization and accountability practices. Further work is still needed to understand how other stakeholders, such as beneficiaries, funders, or regulatory agencies, view nonprofits’ ability to make data-informed decisions in a way that is accountable in a myriad of ways. This will require more case study and qualitative approaches that examine how external actors view the work of these nonprofits. Our research also prompts the question of what types of information are most valuable to share with different stakeholders, indicating a need for more tailored communication approaches. Beyond accountability, inquiry into double-loop learning and the extent to which it can inform program design (or redesign) and organizational decision-making would allow the field to better understand how learning can influence organizational strategy. Nonetheless, our sample comprises a diverse group of organizational actors and budget sizes as well as a wide range of service areas. The findings from our model comparison discussed earlier strengthen our belief that we can learn something about the intersection of evaluation utilization, learning, and accountability.

Conclusion

This research focuses on the importance of the utilization of evaluation data in NPOs and on how an SLE and engagement in learning practices can bridge the gap between internal processes and accountability practices such as sharing evaluation information with stakeholders. It highlights the significance of an internal orientation to accountability practices, which centers on data utilization for learning and improvement. This study provides important directions for nonprofit managers who want to better utilize their data and foster a learning-oriented culture among their employees. We offer several considerations for those working in the nonprofit sector, such as the need for intentional strategies around learning and data practices and the need for successful nonprofit managers to use data to engage in storytelling. Further research should look at how these practices translate into actual data utilization and improved organizational outcomes.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.