Abstract

This article presents the results of a comprehensive study examining the influence of bots on the dissemination of COVID-19 misinformation and negative vaccine stance on Twitter over a period of three years. The research employed a tripartite methodology of text classification, topic modeling, and network analysis to explore this phenomenon. Text classification, leveraging the Turku University FinBERT pre-trained embeddings model, was used to detect misinformation and vaccine stance. Bot-like Twitter accounts were identified using the Botometer software, and further analysis was implemented to distinguish COVID-19 specific bot accounts from regular bots. Network analysis illuminated the communication patterns of COVID-19 bots within retweet and mention networks. The findings reveal that these bots exhibit distinct characteristics and tactics that enable them to influence public discourse, particularly showing increased activity in COVID-19-related conversations. Topic modeling analysis shows that COVID-19 bots predominantly focused on themes such as safety, political/conspiracy theories, and personal choice. The study highlights the critical need to develop effective strategies for detecting and countering bot influence. Essential actions include using clear and concise language in health communications, establishing strategic partnerships during crises, and ensuring the authenticity of user accounts on digital platforms. The findings underscore the pivotal role of bots in propagating misinformation related to COVID-19 and vaccines, and the necessity of identifying and mitigating bot activities for effective intervention.

Introduction

Social media bots have been examined in multiple domains, and their presence became particularly apparent during the COVID-19 pandemic. This crisis was marked by an “infodemic,” referring to the widespread dissemination of dubious content and sources of information (WHO, 2021). The pandemic intensified the spread of misinformation (Ferrara et al., 2020), leading platforms like Facebook, Instagram, and Twitter to enforce additional measures (Suarez-Lledo & Alvarez-Galvez, 2022). These platforms have pledged to remove misinformation content (Tan, 2022), although the effectiveness of these actions remains uncertain. As individuals become increasingly reliant on digital platforms for information and news updates, the role of bots in spreading misinformation has become increasingly relevant. Understanding the functioning of bots and their impact on public health is essential to developing effective strategies to counteract the spread of misinformation and to promote preventive measures in health emergencies.

Several studies illustrate how and to what extent bots were involved in COVID-19 online discussions, but most of them extracted data from a short period of time (Al-Rawi & Shukla, 2020; Carrasco-Polaino et al., 2021; Ferrara, 2020; Zhang et al., 2022), relied on a limited sample size (Hoffman et al., 2021), or used unsupervised text analysis methods, such as lexicon-based sentiment analysis (Carrasco-Polaino et al., 2021; Suarez-Lledo & Alvarez-Galvez, 2022; Wang et al., 2022) and topic modeling (Weng & Lin, 2022). Moreover, in the initial period of the crisis, more research was needed to establish what qualified as rumors, misinformation, or disinformation campaigns, because the information environment has since developed and more scientific insights into the clinical and medical implications of the disease have been revealed (Ferrara, 2020).

Furthermore, despite many uncertainties and measures, the volume of bot activity and misinformation content has grown. For instance, faced with the challenge of widespread misinformation, the Finnish Institute for Health and Welfare (THL), Finland’s primary public health authority, made the decision to discontinue using Twitter. Besides the significant presence of inappropriate content, the main catalyst for this decision was the widespread dissemination of misinformation. The proliferation of misinformation posed significant challenges to the institution, as its account became a target for bots and other entities seeking to enhance their visibility and infiltrate human accounts (Unlu et al., 2023; Yle News, 2023).

Public trust in health authorities can be eroded by various factors (Cummings, 2014), including the malicious activity of social media bots and anti-vaccine groups, which further amplify this erosion. These elements have the potential to significantly affect public opinion during the COVID-19 pandemic (Ojea Quintana et al., 2022; Unlu et al., 2023). Finland, known for its high levels of institutional trust, serves as an illustrative example. Research indicates that individuals in high-trust nations are more likely to adhere to health guidelines, which has been linked to reduced COVID-19 mortality rates (Oksanen et al., 2020). Conversely, Oksanen et al.’s (2020) study reveals that countries with lower trust levels, such as Italy, Spain, and France, not only delayed implementing restrictions but were also compelled to enforce more stringent measures later, such as curfews, due to non-compliance with social distancing recommendations. Despite these strict measures, these nations still faced challenges in managing non-compliant citizens. It is crucial to recognize that trust related to COVID-19 is multifaceted, influenced by uncertainties surrounding the pandemic, rapid vaccine development, skepticism towards the pharmaceutical industry, and varying approaches to pandemic measures, even within countries with high institutional trust (Nurmi & Harman, 2022; Rosenthal & Cummings, 2021). For instance, despite its high trust, Sweden adopted a markedly different strategy in its response to COVID-19 (Valentini & Badham, 2022; Yabunaga & Watanabe, 2022). Consequently, even in Finland, trust in institutions has fluctuated, influenced by misinformation, negative attitudes toward vaccines, and the involvement of various actors in online discussions (Kestilä-Kekkonen et al., 2022; Unlu et al., 2024a, 2024b).

This research makes several significant contributions to bot-related studies within public health communication. First, it spans three years and exclusively analyzes tweets in Finnish, a language primarily spoken in Finland. Given that most studies in this field are in English, our focused setting allows us to assess external factors such as geographical dispersion and a diverse study population. Long-term data analysis provides a robust examination of bots’ engagement in COVID-19-related discussions. Second, the majority of studies in this domain focus on bot identification methods or method comparisons (Antenore et al., 2023; Beatson et al., 2023; Wang et al., 2023). Our research utilizes the state-of-the-art Botometer 4.0 for bot account classification. Going beyond previous studies, our approach enhances automatic bot identification methods and includes additional analyses to distinguish COVID-19-specific bots from regular ones. Finally, instead of relying on traditional methods like unsupervised topic modeling or sentiment analysis of bot activity, we delved deeper by applying text classification specifically to analyze bot interactions focused on misinformation and vaccine hesitancy, marking a direct contribution to the field (Carrasco-Polaino et al., 2021; Suarez-Lledo & Alvarez-Galvez, 2022; Wang et al., 2022; Weng & Lin, 2022). Our findings illustrate the evolution and scaling of bot networks during the COVID-19 pandemic, highlighting the topics bots targeted and their impact on vaccination discussions on Twitter.

Health Communication and Social Media Bots

Health communication is an applied field of social science that investigates the influential roles of human and mediated communication in the delivery of healthcare and the promotion of wellness (Kreps, 2011). According to Kreps (2011), “Health communication research is typically problem-based, focused on explicating, examining, and addressing important and troubling health care and health promotion problems” (p. 595). The integration of social media into our daily lives alters healthcare information access and services, adding complexity to health interventions. This shift introduces a new health communication model where people are not reliant solely on traditional authorities, like health institutions or journalists, for health information; rather, it empowers anyone to create and share health content (Tian & Robinson, 2021).

Achieving widespread immunization necessitates not only promoting accessibility to effective vaccines through health authorities but also employing strategic communication to enhance confidence among vaccine-hesitant individuals (Hudson & Montelpare, 2021). Effective communication regarding COVID-19 is undermined by two predominant threats on social media: the dissemination of misinformation and the propagation of adverse attitudes towards vaccines. Misinformation is false or inaccurate information that is spread, either intentionally or unintentionally. A negative vaccine stance is when someone chooses not to get vaccinated, regardless of the reason. This can include refusal, delay, or discontinuation of vaccination (Bussink-Voorend et al., 2022; Hwang et al., 2022; Larson et al., 2022; Muric et al., 2021). This is facilitated not only by the platforms but also through the utilization of social media bots (Kreuter & Wray, 2003).

Social media bots are online identities managed by automated software programs to communicate, share information, and interact with others on social media platforms in order to affect the opinions of online audiences (Ferrara, 2020). Bots serve multiple purposes: they enhance the visibility of social influencers, disrupt democratic processes, manipulate discourse, and propagate information. Examples include the coordinated efforts originating from Russia during the 2016 U.S. elections, which also involved manipulating race-oriented discussions, and the use of bots and fake accounts in the UK’s EU referendum. Furthermore, bots have been used to manipulate discourse during the COVID-19 pandemic and to spread information amid the ongoing conflict between Russia and Ukraine (Beatson et al., 2023; Freelon et al., 2022; Hagen et al., 2022; Matamoros-Fernández et al., 2024).

The participation of bots in social media discussions varies by topic. In benchmark studies, the percentage of bot accounts ranges from 9% to 15% of total accounts on Twitter (Suarez-Lledo & Alvarez-Galvez, 2022; Varol et al., 2017). However, discussions with a political orientation tend to include greater bot participation and more bot-produced content. For example, Antenore et al. (2023) found that while the ratio of bot involvement in COVID-19-related discussions (such as lockdowns, Wuhan, and the outbreak) varies from 6% to 11%, it increases to 18% in discussions related to or initiated by Trump. Furthermore, in these politically oriented discussions, the proportion of content created by bots can reach up to 55%, while in other discussions, it remains below 12% (Antenore et al., 2023). Thus, previous studies indicate that bots’ engagements are highly context-dependent and are often utilized for advancing political agendas.

By engaging in interactions with human users, social media bots have the capacity to amplify human sentiments and trigger negative emotions, including anger and sadness, among the public by disseminating provocative information. Consequently, this behavior contributes to the distortion of online public opinion and the manipulation of public sentiment (Singh et al., 2020). It can also make users more likely to believe misinformation (Wang et al., 2022; Xu & Sasahara, 2022), decrease vaccination intention (Loomba et al., 2021; Puri et al., 2020), and neutralize and polarize vaccine-related content (Broniatowski et al., 2018).

However, the impact of bots on human behavior is complex and multifaceted. Bots are designed to mimic human actions, and their effectiveness hinges on the accuracy of this mimicry. As bots become more adept at simulating human behavior, the lines between humans and these socio-technical entities increasingly blur (Antenore et al., 2023). Put differently, the more human-like accounts appear, the greater the likelihood of user engagement or reaction (Wischnewski et al., 2024). However, accurately assessing an account’s authenticity (humanness) is challenging and influenced by various factors. These include the user’s prior knowledge of social media bots, age (with older users facing greater challenges in differentiation), time spent on social media platforms, and whether the political orientation of the account aligns with the user’s own views, influencing judgment through in-group favoritism and out-group hostility (Wischnewski et al., 2024; Yan et al., 2021).

Recent research shows that bots were used in a variety of ways during the COVID-19 pandemic. Some Twitter bots may be designed to gain followers and popularity to generate marketing revenue in various ways, such as by distributing factual news on COVID-19. By gaining trust, such bots, like other media outlets, have found objectivity to be financially lucrative because it allows them to attract broader, nonpartisan audiences (Al-Rawi & Shukla, 2020). Bots can also be used for health promotion campaigns, such as those for vaccination or COVID-19 measures (Ruiz-Núñez et al., 2022).

Bots, however, can be employed for malicious purposes. They have been shown to amplify false narratives, manipulate public opinion, and create confusion by blurring the line between credible and noncredible sources (Ruiz-Núñez et al., 2022; Xu & Sasahara, 2022). Additionally, through coordinated campaigns and algorithm-driven actions like rapid retweets, likes, and comments on specific posts, bots increase their visibility and reach. This can foster the illusion of substantial support for a message, even if the bulk of engagement comes from a limited number of bots (Broniatowski et al., 2018; Suarez-Lledo & Alvarez-Galvez, 2022; Weng & Lin, 2022).

Given the disruptive role of these types of bots in information dissemination, it is imperative to analyze their impact on health communication and its associated risks within its context (Basu & Dutta, 2008; Street & Haidet, 2011). Health communication is dynamic, changing over time and influenced by receiver feedback (Rimal & Lapinski, 2009). Recent research has shown the value of ongoing monitoring and analysis of public online conversations, as information gaps frequently reoccur and narratives shift over time (Purnat et al., 2021).

Moreover, the audience is diverse, so messages must be tailored to different audiences, taking into account their level of health literacy, cultural beliefs, political ideologies, and other individual factors, such as age, education level and ethnicity (Al-Rawi & Shukla, 2020; Andrulis & Brach, 2007; Chang & Ferrara, 2022; Jacobs et al., 2017). These factors influence how different groups perceive credibility. For instance, health literacy affects information processing, while cultural beliefs and political ideologies shape attitudes toward health messages. Additionally, age, education, and ethnicity impact the effectiveness of communication strategies. Understanding these variables is crucial for interpreting how diverse audiences receive health information and for crafting effective public health communication. Consequently, these vulnerabilities create an ideal environment for social media bots to operate. Being exposed to anti-vaccine conspiracy theories on social media, which promote skepticism regarding established scientific consensus, has been linked to a rise in vaccine hesitancy and delay (Bernard et al., 2021).

The multifaceted impact of bots on misinformation and negative vaccine stance dissemination on Twitter necessitates further investigation. Framing our research from a health communication perspective, we aim to address the following questions: RQ1) In what ways did bot accounts influence discussions about COVID-19 on Twitter? RQ2) What specific topics were predominantly discussed by bot accounts? RQ3) In what manner did bot accounts coordinate their activities, specifically in terms of retweeting and mentioning, during the COVID-19 discussions?

Methodology

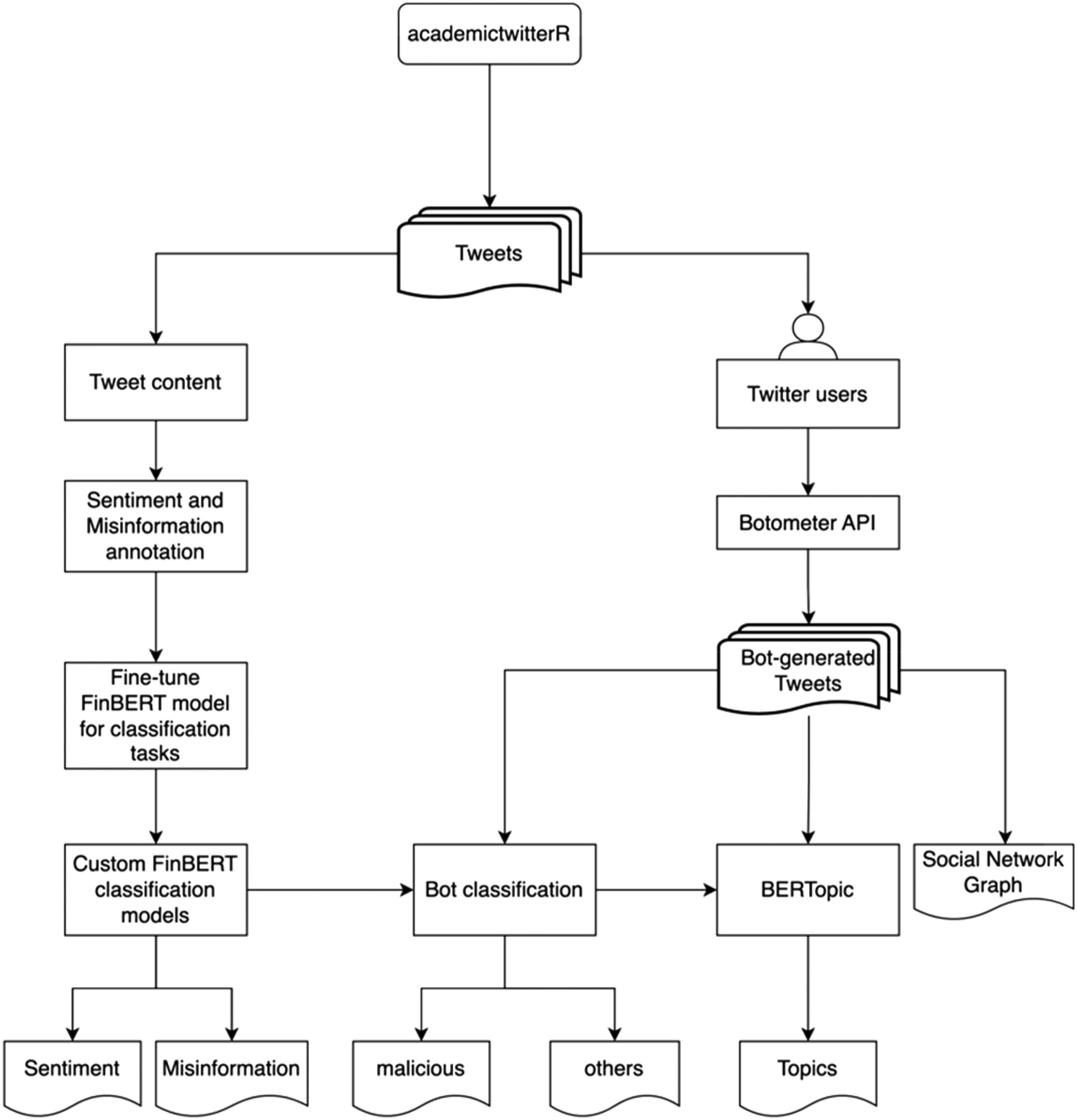

In this study, we utilized three main approaches that are commonly used in both computational analysis and health communication. These include the use of big data, examining public perceptions, and investigating network-related dimensions of the phenomenon (Rains, 2020). The whole process of the analysis procedures is shown in Figure 1.

Figure 1. Flow chart of the analysis processes.

Dataset

We collected data from Twitter (https://twitter.com) that included 14 Finnish terms 1 (with added street names and hashtags, a total of 147 search keywords), covering variations in inflection, conjugation, and phrasing. The data was collected between December 1st, 2019, and October 24th, 2022. The AcademictwitteR R programming package (Barrie & Ho, 2021) was utilized with an API provided to the researchers by Twitter to extract the data. After further filtering for Finnish tweets with the language feature from AcademictwitteR, the search terms yielded a total of n = 1,683,700 tweets from 60,560 unique user accounts. Among all tweets, 724,214 were retweets, 57,865 were quote tweets, and 901,621 were original tweets.

Text Classification

A total of 4150 randomly selected texts were manually annotated by four native Finnish-speaking research assistants across two categories: misinformation and vaccine stance. We trained annotators using the misinformation codebook developed by Memon and Carley (2020), which has been tested in other studies (Moffitt et al., 2021). To adapt to the evolving landscape of COVID-19 misinformation, we updated our misinformation database (i.e., new conspiracy theories, effects of measures, fake cures) to reflect the realities of January 2023. We used fact-checking websites, including THL pages, to assist our annotators, but we recognize the challenge of maintaining a consistent annotation codebook over time, as new information about COVID-19 is continuously being released (see the discussion about annotation training and Table 7–10 in the supplemental file for a list of web pages and sample tweets for codebook implementation).

Furthermore, to simplify the process, we trained annotators to detect misinformation in various dimensions using misinformation categories from their codebook to recognize the types of misinformation. However, we asked annotators to classify misinformation as binary. Thus, we refer to misinformation here as any false, inaccurate information, or conspiracy theories regarding COVID-19 and vaccination.

To address negative vaccine stance, we utilized a methodology previously established for stance detection (Du et al., 2017; Lindelöf et al., 2022). This codebook specifies that a tweet can express a positive, negative, or neutral/unclear stance regarding the COVID-19 vaccines. For our study, while we used these three categories in the annotation stage, we collapsed them into a binary classification in the analysis stage, with a score of 1 indicating a negative stance and a score of 0 indicating a positive or neutral/unclear stance.
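The collapse from three annotation categories to a binary label can be sketched as follows. This is a minimal illustration only: the label strings and the function name `to_binary_stance` are assumptions made for the example, not identifiers taken from the study's code.

```python
# Hypothetical label strings; the study's codebook may spell them differently.
VALID_LABELS = ("positive", "negative", "neutral/unclear")


def to_binary_stance(label: str) -> int:
    """Return 1 for a negative stance, 0 for positive or neutral/unclear."""
    if label not in VALID_LABELS:
        raise ValueError(f"unknown stance label: {label!r}")
    return 1 if label == "negative" else 0


# Example: four annotated tweets collapsed for the analysis stage.
annotations = ["negative", "positive", "neutral/unclear", "negative"]
binary = [to_binary_stance(a) for a in annotations]
print(binary)  # [1, 0, 0, 1]
```

Rejecting unknown labels, rather than silently mapping them to 0, keeps annotation typos from being counted as non-negative tweets.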

We conducted six iterative training sessions, in each of which annotators classified 60 posts. We then assessed inter-rater reliability (IRR) using Krippendorff’s alpha test. In each session, we discussed and trained until we reached full consensus. The sessions continued until we achieved a good IRR result for both classes. The average Krippendorff’s alpha for stance detection and misinformation was 0.693 and 0.668, respectively. While these scores might appear modest, they are considered satisfactory within the scope of Krippendorff’s metrics 2 for preliminary research of this nature.
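For readers unfamiliar with the statistic, the sketch below computes Krippendorff's alpha for nominal labels from a coincidence matrix. It is a generic textbook formulation, not the authors' script; the function name and the toy ratings (two coders, ten units) are illustrative assumptions.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal data.

    `units` is a list of rating lists, one per annotated item;
    use None where an annotator did not rate the item.
    """
    coincidences = Counter()
    for ratings in units:
        values = [r for r in ratings if r is not None]
        m = len(values)
        if m < 2:
            continue  # items with fewer than two ratings carry no information
        for a, b in permutations(values, 2):
            coincidences[(a, b)] += 1 / (m - 1)

    totals = Counter()
    for (a, _), weight in coincidences.items():
        totals[a] += weight
    n = sum(totals.values())

    observed = sum(w for (a, b), w in coincidences.items() if a != b)
    expected = sum(
        totals[a] * totals[b] for a in totals for b in totals if a != b
    ) / (n - 1)
    if expected == 0:
        return 1.0  # only one category was ever used
    return 1 - observed / expected


# Toy data: two coders rating ten items as 0 or 1.
coder_a = [0, 1, 0, 0, 0, 0, 0, 0, 1, 0]
coder_b = [1, 1, 1, 0, 0, 1, 0, 0, 0, 0]
alpha = krippendorff_alpha_nominal(list(zip(coder_a, coder_b)))
print(round(alpha, 3))  # 0.095
```

An alpha of 1 indicates perfect agreement and 0 indicates agreement no better than chance, which is the scale on which the reported averages of 0.693 and 0.668 should be read.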

We observed that annotators had more difficulty identifying misinformation than detecting stance. Achieving higher IRR was challenging due to our annotation team’s diverse composition of non-experts in public health misinformation, reflecting lay audiences’ social media interactions. This setup reduced IRR but provided insights into how the public perceives and evaluates misinformation. Additionally, the context-dependent nature of social media posts, often lacking full context due to our random sampling method, complicated accurate classification, especially for sarcastic or contextually ambiguous posts that contain memes and emojis. Moreover, the evolving COVID-19 situation rendered previous understandings obsolete over time, affecting annotation consistency (see Table 11 in the supplemental file for samples of complex contexts encountered by our annotators). Furthermore, similar studies using Twitter data also reported low Krippendorff’s alpha values: 0.428 (Kolluri et al., 2022), 0.54 (Mendes et al., 2023), 0.67 (Jiang et al., 2023), and 0.58–0.69 (Valeanu et al., 2024).

We used the Bidirectional Encoder Representations from Transformers (BERT) text classification model, which is a pre-trained transformer-based neural network model developed by Google. It has been shown to be highly effective for natural language processing tasks (Devlin et al., 2018). BERT models can be fine-tuned for various language recognition tasks, and in this study, the Turku University FinBERT pre-trained embeddings model was used (Virtanen et al., 2019). Table 1 in the supplemental file contains an overview of the performance metrics for the FinBERT models, along with two additional benchmark models trained on our dataset.

Bot Account Detection

The separation of bot accounts and human-operated accounts is an expanding area of research. Various methods have been employed in this field, ranging from unsupervised to supervised models. These approaches focus on different aspects, including the content of the text, the digital footprints reflecting account behaviors, or a combination of these elements (Antenore et al., 2023; Beatson et al., 2023; Wang et al., 2023).

This study used the Botometer software (version 4) to differentiate bot-like Twitter accounts from human-like ones. This version of Botometer employs a novel supervised machine learning method that trains classifiers for each type of bot and aggregates results from all classifiers to produce a final score (Gilani et al., 2019; Yang et al., 2019). The algorithm is trained with over 1200 features collected from each Twitter account, including metadata of the account, along with content information and sentiment from the 200 most recent tweets from the account.

Botometer assigns a bot score between 0 and 1 to a given account and provides a Complete Automation Probability (CAP) score for a probabilistic interpretation of the bot score. In this study, we used Botometer’s recommended threshold of 0.804 to identify automated accounts (a summary of CAP-score threshold validation is included in Table 2a of the supplemental file). If the user’s primary language was English, we relied on the default score; otherwise, we used the “universal score” (Sayyadiharikandeh et al., 2020).
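The language-dependent thresholding rule can be sketched as below. The dictionary layout (`cap.english`, `cap.universal`, `user.majority_lang`) is an assumption about the shape of a Botometer v4 result, and treating a score equal to the threshold as a bot is also an assumption; the function name `is_bot` is hypothetical.

```python
CAP_THRESHOLD = 0.804  # threshold reported in the text


def is_bot(result: dict, threshold: float = CAP_THRESHOLD) -> bool:
    """Classify one account from an (assumed) Botometer-style result dict.

    Uses the English CAP score when the account's majority language is
    English, and the language-independent "universal" score otherwise.
    """
    language = result["user"]["majority_lang"]
    key = "english" if language == "en" else "universal"
    return result["cap"][key] >= threshold


# Hand-made example results, not real Botometer output.
finnish_account = {
    "user": {"majority_lang": "fi"},
    "cap": {"english": 0.95, "universal": 0.60},
}
english_account = {
    "user": {"majority_lang": "en"},
    "cap": {"english": 0.91, "universal": 0.40},
}
print(is_bot(finnish_account), is_bot(english_account))  # False True
```

The Finnish example shows why the score choice matters: the same account would be flagged if the English score were applied regardless of language.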

Malicious Bots Classification

Our initial analysis indicates that Botometer categorizes a broad spectrum of bot account types. Our manual examination reveals that accounts belonging to newspapers, government institutions, and other public entities are often identified as bots, which are also known as self-declared bots (Suarez-Lledo & Alvarez-Galvez, 2022). This classification stems from Botometer’s algorithm, which assesses whether content is generated by humans or if the account exhibits human-like behavior. Key indicators for this classification include content nature, posting frequency, and active hours—mainly during typical work periods. For instance, bot accounts are likely to post three times more frequently than non-bot accounts, although the difference between self-declared bots and other bots is minimal (Suarez-Lledo & Alvarez-Galvez, 2022).

Most of these types of bot accounts tend to support governmental health promotion efforts, rather than just disseminating information. While such bots may occasionally critique certain COVID-19 measures, they generally do not undermine the overarching COVID-19 health communication strategy. Moreover, regular bots are also regarded as credible sources that can be 2.5 times more influential than humans (Rizoiu et al., 2018). The more significant concern arises from bots explicitly designed to propagate misinformation or negative stance towards vaccines.

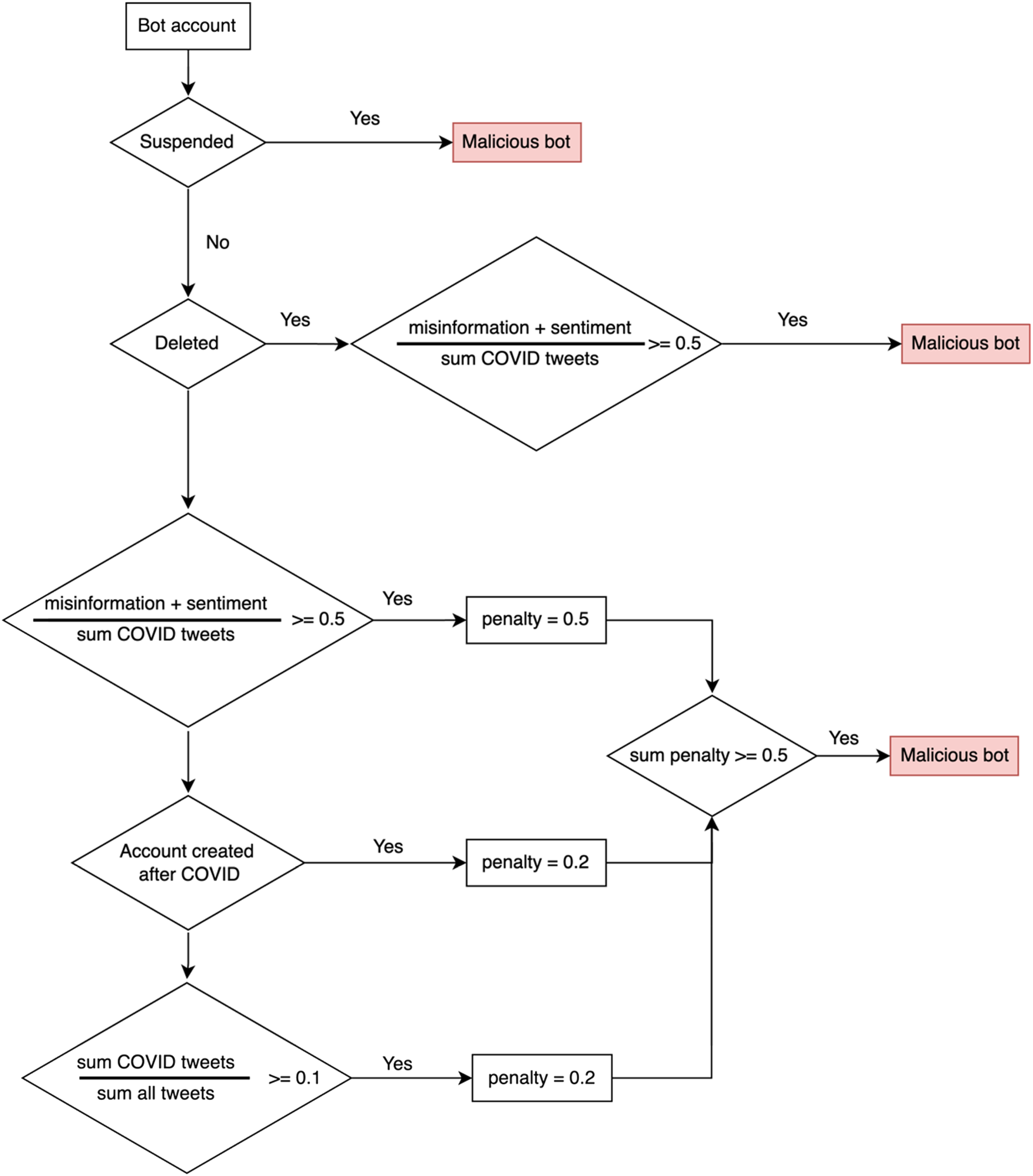

This is also a topic of discussion in the literature, where criticism arises due to the binary classification of bots, given that various types of bots exist (Wang et al., 2023). Therefore, our primary objective is to identify and differentiate these specific bot accounts. We label these as “malicious bots,” characterized by their predominant engagement in disseminating negative sentiments about vaccinations and propagating misinformation. These bots strategically influence public discourse and perception regarding COVID-19 related topics. To distinguish between malicious and non-malicious bot accounts, additional features were collected that are mentioned in recent literature (Ferrara, 2020; Moffitt et al., 2021; Shahid et al., 2022; Wang et al., 2022) and used alongside Botometer results.

These features include the account’s misinformation and sentiment ratio, account status, COVID-related tweet ratio over the total number of tweets, and the age of the account in relation to the first occurrence of COVID-19 in Finland. The status of a bot account was either active, suspended by Twitter, or deleted; accounts became inactive either because Twitter identified them or because they chose to deactivate themselves to avoid detection (Al-Rawi, 2019). Each feature was assigned a penalty score, and the penalty scores were summed. If the total score exceeded 0.5, the account was classified as malicious (Figure 2; more information about the malicious bot calculation and validation is available in the supplemental file).

Figure 2. The calculation formula for identifying malicious bots.
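The general shape of a summed penalty score can be illustrated as follows. The actual formula is the one given in Figure 2 and the supplemental file; every weight below, the field names, and the way each feature is scored are hypothetical placeholders chosen only to make the example run. Only the list of features and the 0.5 cutoff come from the text.

```python
from dataclasses import dataclass

# HYPOTHETICAL weights, not the values used in the study.
WEIGHTS = {
    "misinformation": 0.3,
    "negative_stance": 0.3,
    "covid_share": 0.2,
    "status": 0.1,
    "account_age": 0.1,
}
MALICIOUS_THRESHOLD = 0.5  # cutoff stated in the text


@dataclass
class BotAccount:
    misinformation_ratio: float    # share of tweets classified as misinformation
    negative_stance_ratio: float   # share of tweets with a negative vaccine stance
    covid_tweet_ratio: float       # COVID-related tweets / all tweets
    status: str                    # "active", "suspended", or "deleted"
    created_after_first_case: bool # created after COVID-19 first appeared in Finland


def penalty_score(account: BotAccount) -> float:
    """Sum the per-feature penalties for one bot account."""
    score = WEIGHTS["misinformation"] * account.misinformation_ratio
    score += WEIGHTS["negative_stance"] * account.negative_stance_ratio
    score += WEIGHTS["covid_share"] * account.covid_tweet_ratio
    if account.status in ("suspended", "deleted"):
        score += WEIGHTS["status"]
    if account.created_after_first_case:
        score += WEIGHTS["account_age"]
    return score


def is_malicious(account: BotAccount) -> bool:
    return penalty_score(account) > MALICIOUS_THRESHOLD


suspect = BotAccount(0.9, 0.8, 0.9, "suspended", True)
news_feed = BotAccount(0.0, 0.1, 0.2, "active", False)
print(is_malicious(suspect), is_malicious(news_feed))  # True False
```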

It is essential to recognize that our analysis focused exclusively on bot accounts identified by Botometer. Although this software is an established tool which has been widely adopted in several peer-reviewed publications for bot classification tasks, it is subject to well-known limitations, including the possibility of misclassifying some human-operated accounts (Rauchfleisch & Kaiser, 2020). Consequently, to enhance the accuracy of our analysis, we adopted an additional validation approach as proposed by Yang et al. (2022). Following the validation sampling process used by Gallwitz and Kreil (2022), we selected 100 bot accounts for manual validation (the detailed validation process and results are reported in supplemental document). At the CAP-score threshold of 0.804, we observe an accuracy of 0.87 and a recall of 0.78 from Botometer bot classification results (see details in supplemental file).

Continuing from the result of the Botometer validation, we manually validated the performance of the malicious bot calculation on 22 automated accounts that were correctly labeled by Botometer. We observe that the malicious bot calculation correctly identifies 6 out of 7 malicious accounts, which is equivalent to an accuracy of 0.95 and a recall of 1.
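The validation metrics follow from a confusion matrix over the 22 accounts. The text does not give the full matrix, so the counts below are an assumption: one reading that reproduces the reported accuracy and recall is that 7 accounts were flagged as malicious, 6 of them correctly, with no malicious account missed. The supplemental file remains the authoritative source.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Accuracy, precision, and recall from confusion-matrix counts."""
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


# ASSUMED counts (22 accounts in total), chosen to match the reported figures.
metrics = classification_metrics(tp=6, fp=1, fn=0, tn=15)
print({name: round(value, 2) for name, value in metrics.items()})
# {'accuracy': 0.95, 'precision': 0.86, 'recall': 1.0}
```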

Topic Modeling

Topic modeling allows us to identify themes in tweets that express a negative vaccine stance and contain misinformation. BERTopic (Grootendorst, 2022) clusters texts based on semantic similarity using the BERT language model (Devlin et al., 2018). The analysis was performed in Python, with the Turku FinBERT pre-trained model serving as the underlying language model. To detect the topics propagated by malicious bots, BERTopic models were trained exclusively on tweets originating from these bots, which were categorized into two groups: negative vaccine stance and misinformation.

BERTopic provides customizable parameters for dimension reduction, clustering methods, the number of words per topic, n-gram range, minimum topic size, and the number of resulting topics (Grootendorst, 2022). The misinformation BERTopic model was created using word vectorization and a feature selection process that identified the top 20,000 features within a range of 0.05 minimum and 0.98 upper limits. Additionally, the model utilized 20 neighbors and 10 components for UMAP dimension reduction. The final model was configured to identify topics with a minimum of 60 documents (tweets here) and 15 words per topic. The analysis of vaccine stance was conducted using similar settings, but with a UMAP parameter of 15 for the neighbors and a minimum topic size of 50 for the model.
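The reported settings could map onto the BERTopic API roughly as shown below. This is a sketch under assumptions: reading the 0.05 and 0.98 limits as the `min_df` and `max_df` arguments of scikit-learn's `CountVectorizer` is an interpretation, and the names `MISINFORMATION_PARAMS`, `STANCE_PARAMS`, and `build_topic_model` are hypothetical. The heavy libraries are imported inside the function so the parameter tables can be inspected without installing them.

```python
# Settings as reported in the text; the min_df/max_df reading is an assumption.
MISINFORMATION_PARAMS = {
    "max_features": 20_000,
    "min_df": 0.05,
    "max_df": 0.98,
    "n_neighbors": 20,
    "n_components": 10,
    "min_topic_size": 60,
    "top_n_words": 15,
}
# The vaccine stance model differs only in UMAP neighbors and minimum topic size.
STANCE_PARAMS = {**MISINFORMATION_PARAMS, "n_neighbors": 15, "min_topic_size": 50}


def build_topic_model(params: dict, embedding_model=None):
    """Build a BERTopic model from a parameter dict.

    `embedding_model` is where a Finnish sentence-embedding model would be
    passed; None falls back to BERTopic's default embedding backend.
    """
    from bertopic import BERTopic
    from sklearn.feature_extraction.text import CountVectorizer
    from umap import UMAP

    vectorizer = CountVectorizer(
        max_features=params["max_features"],
        min_df=params["min_df"],
        max_df=params["max_df"],
    )
    umap_model = UMAP(
        n_neighbors=params["n_neighbors"],
        n_components=params["n_components"],
    )
    return BERTopic(
        embedding_model=embedding_model,
        umap_model=umap_model,
        vectorizer_model=vectorizer,
        min_topic_size=params["min_topic_size"],
        top_n_words=params["top_n_words"],
    )
```

A model built this way would then be fitted with `fit_transform` on the list of malicious-bot tweets for the relevant group.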

Network Analysis

We utilized social network analysis to scrutinize the interactions among Twitter users, with a particular focus on identifying and understanding the dynamics within networks such as information and opinion diffusion. This analytical method not only helps in recognizing significant nodes (opinion leaders) and clusters (communities) within the network but also facilitates an in-depth understanding of the network’s structural characteristics by pinpointing influential users, discerning predominant topics of interest, and assessing the connectivity and opinion diversity within the network (Borgatti et al., 2009; Kolaczyk & Csárdi, 2020).

To specifically address the influence and spread of malicious bots on Twitter, we applied two distinct network analysis techniques: the analysis of retweet networks and mention networks. Retweet networks are constructed by tracking how tweets are shared across users; this helps in understanding the propagation of content and the viral potential of specific messages or themes. In this network, each node symbolizes a user, and a directed edge, colored to indicate misinformation, signifies that one user has retweeted another’s tweet. This allows us to trace the flow of information and to identify key disseminators within the network.

Conversely, interactions within the mention network occur either through references to previously posted content or direct engagements with other users, including explicit mentions or tags in new content. These mention activities underscore significant efforts in agenda-setting, especially in introducing and engaging with new topics and emphasizing their relevance. By analyzing these mentions, we can identify direct communication lines and attempts at influence, particularly those initiated by malicious bots. In this network, nodes symbolize users, and colored directed edges, where colors denote misinformation, indicate that one user has mentioned another. This arrangement highlights the focal points of communication efforts. Such mentions often signify engagement and can sway conversations toward specific topics or perspectives.

By extracting and analyzing the sources and targets of these interactions at the level of individual bots, we can derive a nuanced understanding of how malicious entities attempt to manipulate discussions and influence Twitter communities. This dual approach enables us to comprehensively examine the roles that these bots play in both spreading information and targeting specific user groups, thereby offering valuable insights into the strategies employed by malicious actors on social media platforms.
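The construction of a retweet or mention network from source and target pairs can be sketched in plain Python. The tuple layout, account names, and function names are illustrative assumptions; a graph library such as networkx or igraph would typically be used at scale.

```python
from collections import defaultdict


def build_network(interactions):
    """Aggregate (source, target, is_misinformation) records into directed edges.

    An edge source -> target means `source` retweeted (or mentioned) `target`.
    Each edge stores how often it occurred and how many of those interactions
    carried misinformation, which is what the edge coloring would encode.
    """
    edges = defaultdict(lambda: {"weight": 0, "misinformation": 0})
    for source, target, is_misinformation in interactions:
        edge = edges[(source, target)]
        edge["weight"] += 1
        edge["misinformation"] += int(is_misinformation)
    return dict(edges)


def weighted_in_degree(edges):
    """Number of times each account was retweeted or mentioned."""
    degree = defaultdict(int)
    for (_, target), attrs in edges.items():
        degree[target] += attrs["weight"]
    return dict(degree)


# Toy retweet records with made-up account names.
retweets = [
    ("bot_a", "user_x", True),
    ("bot_a", "user_x", True),
    ("bot_b", "user_x", False),
    ("user_y", "health_agency", False),
]
edges = build_network(retweets)
print(edges[("bot_a", "user_x")])   # {'weight': 2, 'misinformation': 2}
print(weighted_in_degree(edges))    # {'user_x': 3, 'health_agency': 1}
```

Accounts with a high weighted in-degree are the ones whose content is most often amplified, while grouping edges by source shows which accounts a given bot targets.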

Analysis and Results

Our text classification model identified a substantial presence of misinformation on Twitter, with 460,087 tweets (27%) categorized as misinformation. Furthermore, a significant percentage of tweets (26.5% or 446,400) expressed a negative stance towards vaccines.

Out of 60,560 accounts in our dataset, Botometer detected 13,522 (22%) as bots and 47,038 (78%) as non-bots. Our malicious bot calculation then classified the bot accounts into two categories: 8628 accounts were considered regular bots, representing 14% of all accounts, and 4894 accounts (36% of the detected bots) were categorized as malicious bots, accounting for 8% of all accounts. For comparison, a previous study reported that bots produced 11% of posts (Zhang et al., 2022).
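The account shares reported above can be reproduced from the three counts given in the text. The sketch below only restates that arithmetic; the variable names are ours.

```python
# Counts taken from the text above.
total_accounts = 60_560
bots = 13_522
malicious_bots = 4_894

# Derived counts.
regular_bots = bots - malicious_bots
non_bots = total_accounts - bots

def pct(part, whole):
    """Percentage of `whole` represented by `part`, rounded to one decimal."""
    return round(100 * part / whole, 1)

shares = {
    "bots / all accounts": pct(bots, total_accounts),
    "non-bots / all accounts": pct(non_bots, total_accounts),
    "regular bots / all accounts": pct(regular_bots, total_accounts),
    "malicious bots / all accounts": pct(malicious_bots, total_accounts),
    "malicious bots / bots": pct(malicious_bots, bots),
}
```

Note that the 36% figure is relative to detected bots, while the 14% and 8% figures are relative to all accounts.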

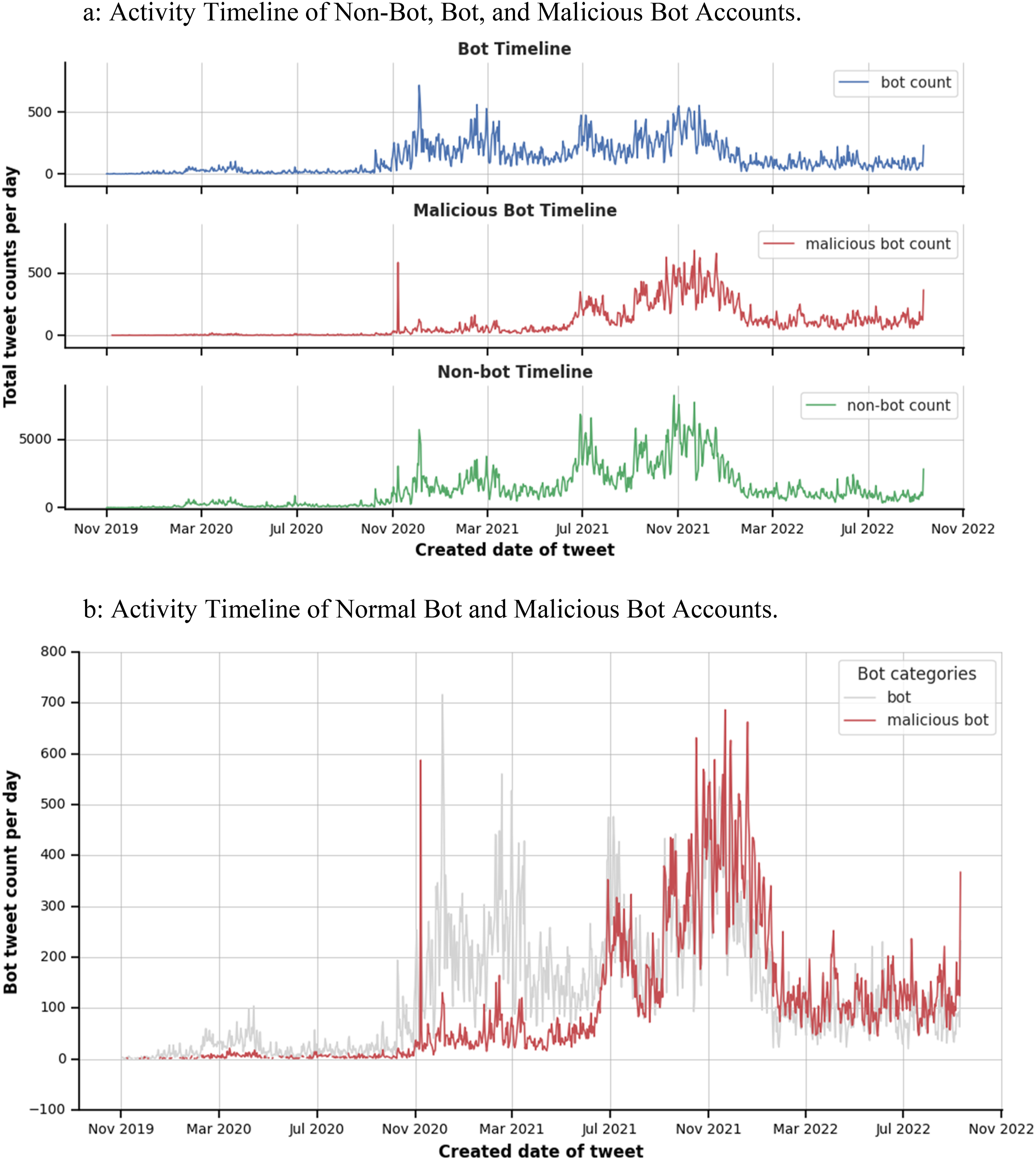

In addressing RQ1 (in what ways did bot accounts influence discussions), we analyzed the communication patterns of different account types, with a particular focus on malicious bots. The timeline analysis of social media discussions indicated a surge in activity after November 2020, with fluctuations that continued until February 2022, when activity stabilized at approximately 100 posts per day. Regular bots and non-bots showed similar fluctuation patterns, whereas malicious bots began to surface after November 2020, and their post count increased gradually over time. In July 2021, the number of posts originating from malicious bots exceeded the number generated by regular bots, even though malicious bots made up only about one-third of all bot accounts, as illustrated in Figure 3(b). Figure 3(a) and (b) illustrate distinct activity patterns among bots, malicious bots, and non-bots. These differences in activity levels and patterns indicate varying engagement strategies and behaviors among the different types of accounts.

(a) Activity timeline of non-bot, bot, and malicious bot accounts. (b) Activity timeline of normal bot and malicious bot accounts.

The top three topics during these periods are discussed in detail in the topic analysis results. These observations suggest that although regular bots tended to align their communication patterns with public health authorities (Figures 6 and 7), they lost interest in the discussion towards the end of the pandemic.

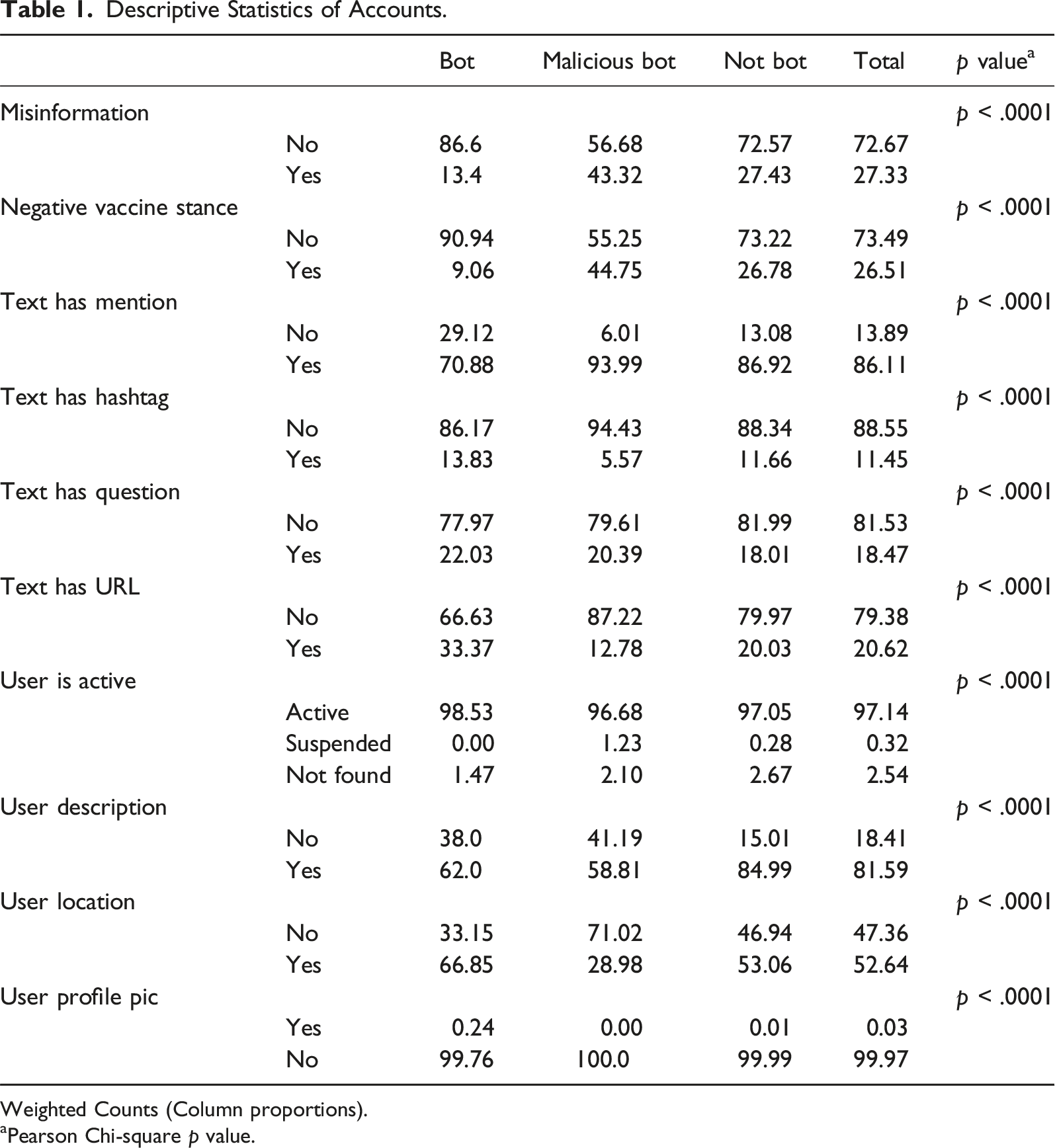

Features of Malicious Bots

Table: Descriptive Statistics of Accounts. Note: Weighted counts (column proportions). a = Pearson chi-square p value.

In the context of misinformation dissemination, tweets containing misinformation comprise 13.4% of the total tweets from bot accounts, with a far higher share of 43.3% observed among all tweets from malicious bots. The low share of misinformation content within tweets from regular bots is consistent with Al-Rawi and Shukla’s (2020) findings that conventional bots primarily engage in sharing news and information without resorting to sensationalism or expressing extreme negative sentiments. Conversely, the higher proportion of misinformation within the tweets from malicious bots aligns with previous findings (Broniatowski et al., 2018; Xu & Sasahara, 2022) regarding the active role of malicious bots in disseminating and amplifying misinformation.

Similarly, we noted comparable trends in the ratio of tweets expressing a negative stance on vaccines within the overall tweet count of both bots and malicious bots, examined independently. While tweets conveying a negative sentiment constitute a mere 9.1% of all tweets disseminated by bots, this percentage significantly increases, rising to 44.8%, in the context of tweets shared by malicious bots.

In our analysis, we found that 86% of all tweets included a mention of another user. Notably, malicious bots were more likely to mention other users, doing so in 94% of their tweets. This is considerably higher than the mention rates for regular bots (71%) and non-bot accounts (87%). Breaking down the tweet content further, we observed that 37% of tweets by malicious bots contained two user mentions. Additionally, 16.6% of their tweets included three mentions, 6.7% had four, and 2.9% featured five or more mentions.

When it comes to the use of hashtags, non-bot accounts used hashtags in 11.6% of their tweets. Regular bots had a slightly higher hashtag usage rate at 13.8%, while malicious bots used hashtags the least, only in 5.6% of their tweets. Across all tweets, the overall hashtag usage rate was 11.4%.

We also analyzed tweets for information-seeking behavior, as indicated by the presence of a question mark. Overall, 18.4% of tweets contained a question mark. Non-bot tweets had a slightly lower rate of question marks at 18%, compared to 20.3% for malicious bots and 22% for regular bots. This data suggests that bots, especially regular ones, are more likely to use question marks in their tweets, possibly to capture their followers’ attention and direct them to visit webpages, such as news sites.

Our study found that a notable portion of tweets from bot accounts included links to websites, serving as additional information sources. Specifically, 20.6% of all analyzed tweets contained a URL. Regular bots were most likely to include URLs, doing so in 33.3% of their tweets, which is consistent with their tendency to direct followers to webpages such as news sites. They were followed by non-bot accounts at 20% and malicious bots at 12.8%.
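The surface features discussed above (mentions, hashtags, question marks, and URLs) can be extracted with simple pattern matching. The following is a minimal sketch under our own assumptions: the regular expressions are approximations we chose for illustration, not the study's extraction rules, and the sample tweets are made up.

```python
import re

# Approximate patterns (assumptions, not the study's exact rules).
URL_RE = re.compile(r"https?://\S+")
MENTION_RE = re.compile(r"(?<!\w)@(\w{1,15})")
HASHTAG_RE = re.compile(r"(?<!\w)#\w+")

def tweet_features(text):
    """Return per-tweet surface features used in the descriptive comparison."""
    # Remove URLs first so that "?" or "#" inside a link is not counted.
    stripped = URL_RE.sub(" ", text)
    return {
        "n_mentions": len(MENTION_RE.findall(stripped)),
        "has_hashtag": bool(HASHTAG_RE.search(stripped)),
        "has_question": "?" in stripped,
        "has_url": bool(URL_RE.search(text)),
    }

def share(texts, key):
    """Fraction of tweets for which the given feature is present (truthy)."""
    return sum(bool(tweet_features(t)[key]) for t in texts) / len(texts)

# Made-up examples, for illustration only.
sample = [
    "@user1 @user2 why is nobody talking about this? https://example.org/a?id=1",
    "New bulletin out today #covid",
    "Plain text without any markers",
    "See https://example.org/page?x=2#top",
]

first = tweet_features(sample[0])
mention_share = share(sample, "n_mentions")
url_share = share(sample, "has_url")
```

Applying `share` separately to the tweets of each account type would yield rates comparable in form to those reported in this section.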

Regarding the use of profile pictures, all bot accounts commonly adopted the practice. However, there was some variation in adding descriptions to their profiles. While 85% of non-bot accounts had profile pictures, the percentage was lower for regular bots (62%) and malicious bots (58.8%).

The inclusion of location information also varied among these accounts. It was least common among malicious bots, with only 28% sharing their location. In contrast, 66.8% of regular bots and 53% of non-bots included location information in their profiles.

An examination of the status of these user accounts as of February 21, 2023 (less than 4 months after the data was collected), revealed that the majority of malicious bot accounts (97%) remained active. A small percentage (0.8%) had been suspended by Twitter, and another 2.2% had closed their accounts. This provides insight into the operational status and practices of these different types of bot accounts on Twitter.

Analysis of Topics in Malicious Bot Posts

Topic modeling enabled us to address RQ2, which investigates the specific topics predominantly discussed by bot accounts. Our analysis identified content related to 59 distinct topics posted by malicious bots for spreading misinformation. Owing to space constraints, this paper discusses the ten most salient topics (refer to Table 4 in the supplemental file for an overview of all topics and the supplemental file 2 for tweet samples of each topic).

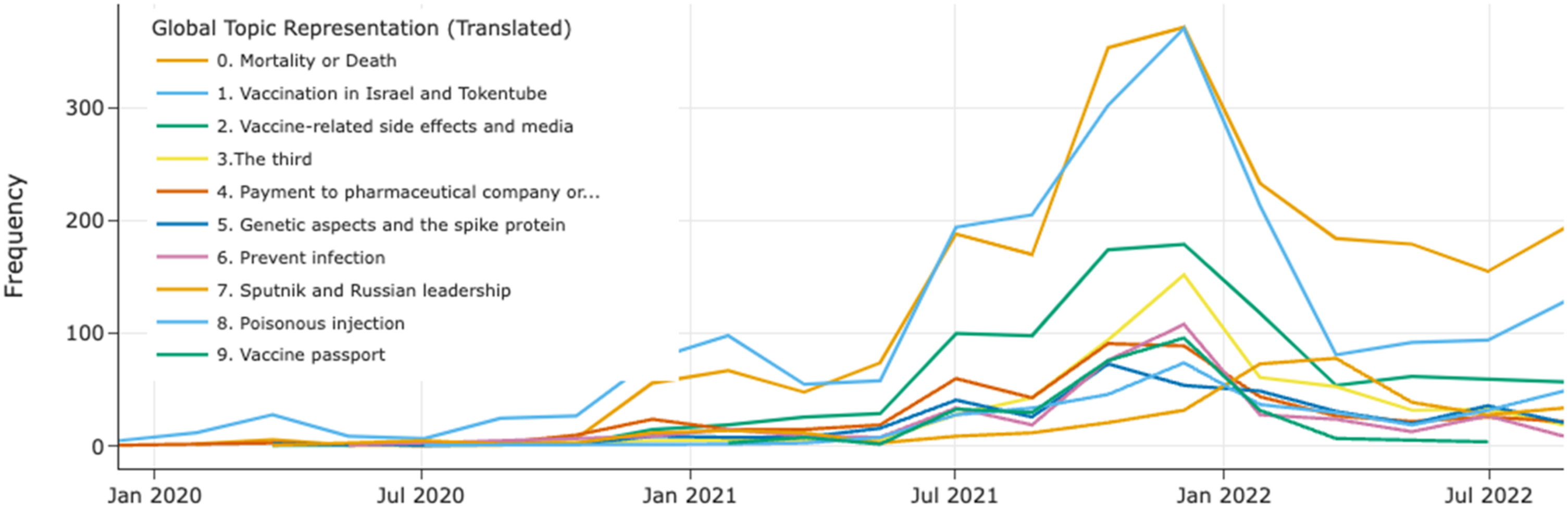

Figure 4 illustrates that the most significant and persistent topic discussed by malicious bots was related to COVID-19 deaths (kuolema). As previous research indicates, malicious bots use sensational language and false statistics to create fear and confusion among users, as well as emotionally charged language to increase their reach and engagement (Kušen & Strembeck, 2018). This topic is followed by vaccination-related discussions, covering vaccination implementation (injection, lääkettä = medicine) in Israel and video streams shared from TokenTube, a Finnish video platform that promotes freedom of speech and was used by anti-vaccination activists to promote anti-vaccination campaigns. The third major topic focused on the role of the media, with accusations that it fails to report the side effects of vaccinations (rokotehaitoista) and acts as a state- and elite-controlled entity (valtamedia). The fourth prominent topic centered on vaccination boosters (kolmas, neljäs), with content being produced for each booster period.

Topic timeline of negative vaccine stance. Note: The original topic names and timeline figure in Finnish can be found in the supplemental file.

The fifth topic pertains to the amount of money (billion - miljardi) paid (maksaa) to the pharmaceutical industry (lääketeollisuuden) and the business sector (bisnes). The narrative in these posts is that the vaccination program is being orchestrated by private businesses to generate substantial profits. Additionally, the sixth major topic revolves around a conspiracy theory that vaccinations alter human DNA.

The seventh topic addresses the efficacy of vaccines in preventing (estää) the spread (leviämistä) of COVID-19. Malicious bots seem to imply that vaccines are not effective against COVID-19 and that despite their use, the virus continues to spread. The eighth topic concerns the superiority/effectiveness of the Russian (venäjä) Sputnik vaccine over others. The ninth topic is related to street slang, namely, the use of the term “poisoning vaccine” (myrkkypiikki) as a derogatory reference to vaccination. The tenth topic pertains to the COVID-19 vaccination certificate (koronapassi) used for public restrictions. Other topics discussed by the malicious bots include adverse effects of vaccines (haittavaikutus) on the elderly and children, embolism (veritulppa), weakening (heikkenee) of immunity (immuniteetti), sale license (myyntilupa), tests of masks (maskitestit), the vaccine as a war crime (rokote sotarikos), and selective reporting of news from various countries such as Australia, Germany, Sweden, and Norway.

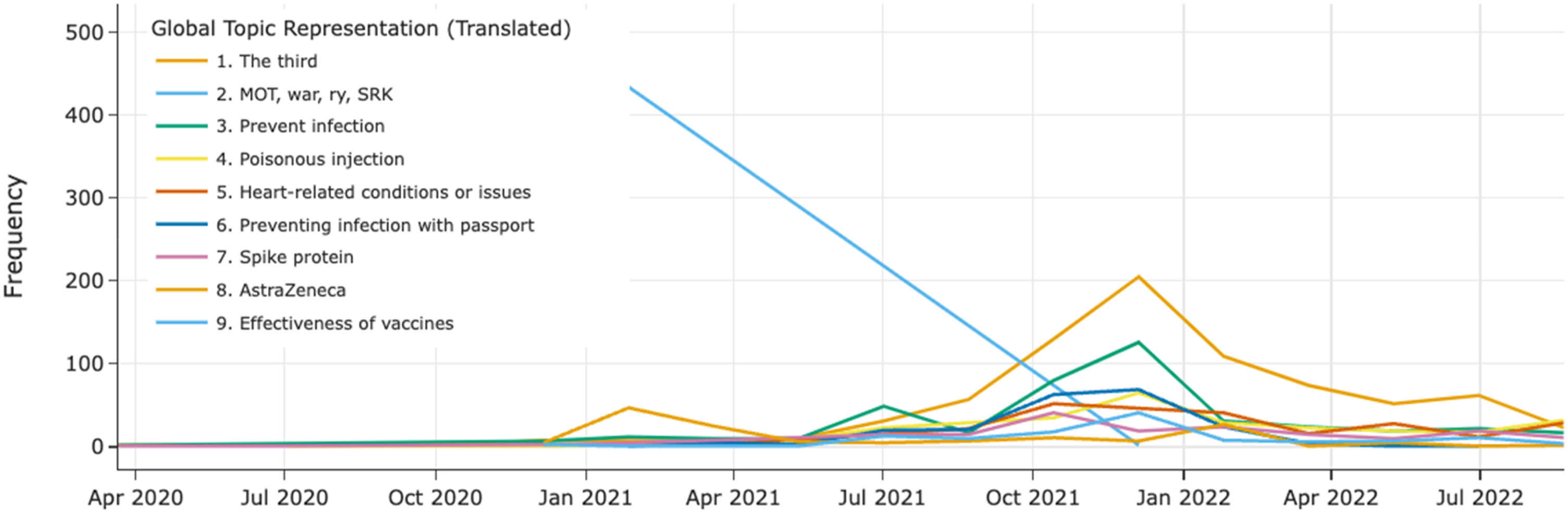

In our vaccination stance analysis, we identified fewer topics (35) compared to the misinformation analysis, even though we used smaller parameters to increase the topic coverage (refer to Table 3 in the supplemental file for the list of all topics and the supplemental file 2 for tweet samples of each topic). As depicted in Figure 5, we found similar topics to those identified in the misinformation topic analysis. However, the third topic is distinctive and related to war (sota), mot, and the Finnish Security Intelligence Service (suojelupoliisi); it emerged rapidly at the end of 2020 and took a year to lose its importance. Additionally, specific topics related to vaccination stance include side effects such as heart attack (sydänkohtaus) and narcolepsy (narkolepsia), the responsibility of political parties like the Finns Party (perussuomalaiset) and the National Coalition Party (kokoomus) in the administration of vaccination, as well as other terms, like lies (valheisiin) and fear porn (pelkopornoa). It is also important to note that miracle cures (e.g., inhaling bleach or eating garlic) found in other studies (Moffitt et al., 2021) are not common in the Finnish context.

Topic timeline of misinformation. Note: The original topic names and timeline figure in Finnish can be found in the supplemental file.

Examining tweet topics in the context of the malicious bot timeline, we observed that the top three misinformation topics were initially centered around vaccine development and vaccination implementation (planning, risk groups, etc.), followed by COVID-19-related deaths and the monetary profit earned by the pharmaceutical industry during November 2020, when malicious bot activities started gaining traction. As malicious bot activity overtook that of regular bots in July 2021, the main misinformation topics shifted towards vaccination implementation in Israel, COVID-19 death tolls, and criticism of the role of the media in communicating vaccine side effects. These three topics remained predominant during the most active period of malicious bot activities (from July 2021 to February 2022).

Concurrently, in the negative vaccine stance topic development, the initial top topics disseminated around November 2020 were preventing COVID-19 infection, third (death tolls, vaccine testing trials, etc.), and spike protein (in the context of human DNA). By July 2021, the top three topics had shifted to war, preventing COVID-19 infection, and the third vaccination booster shot. As the discussion of war died out, discussion of the COVID-19 passport, along with preventing COVID-19 infection and the number of booster shots, became predominant during the most active period of malicious bot activities (July 2021 to February 2022).

Results of Network Analysis

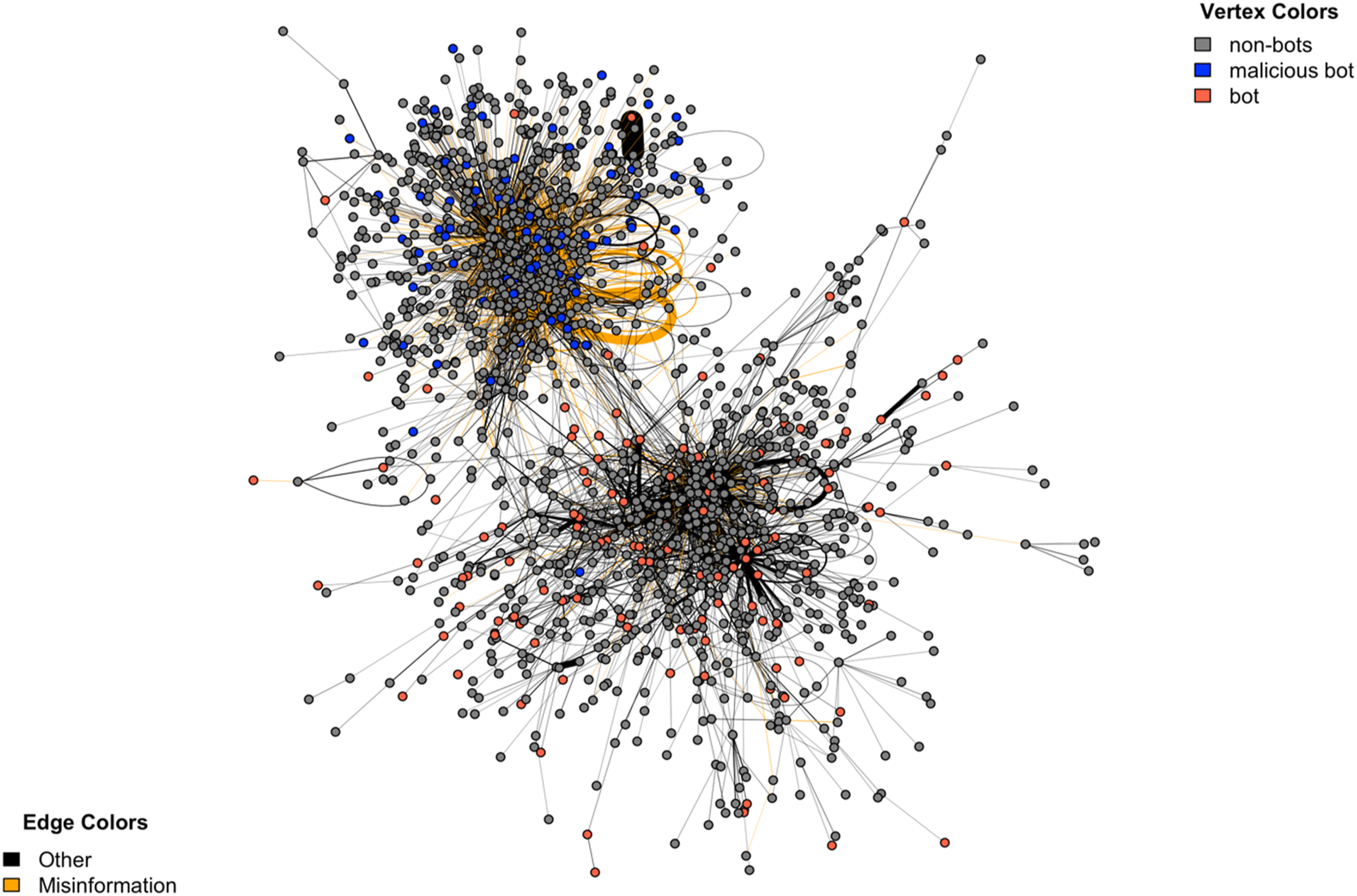

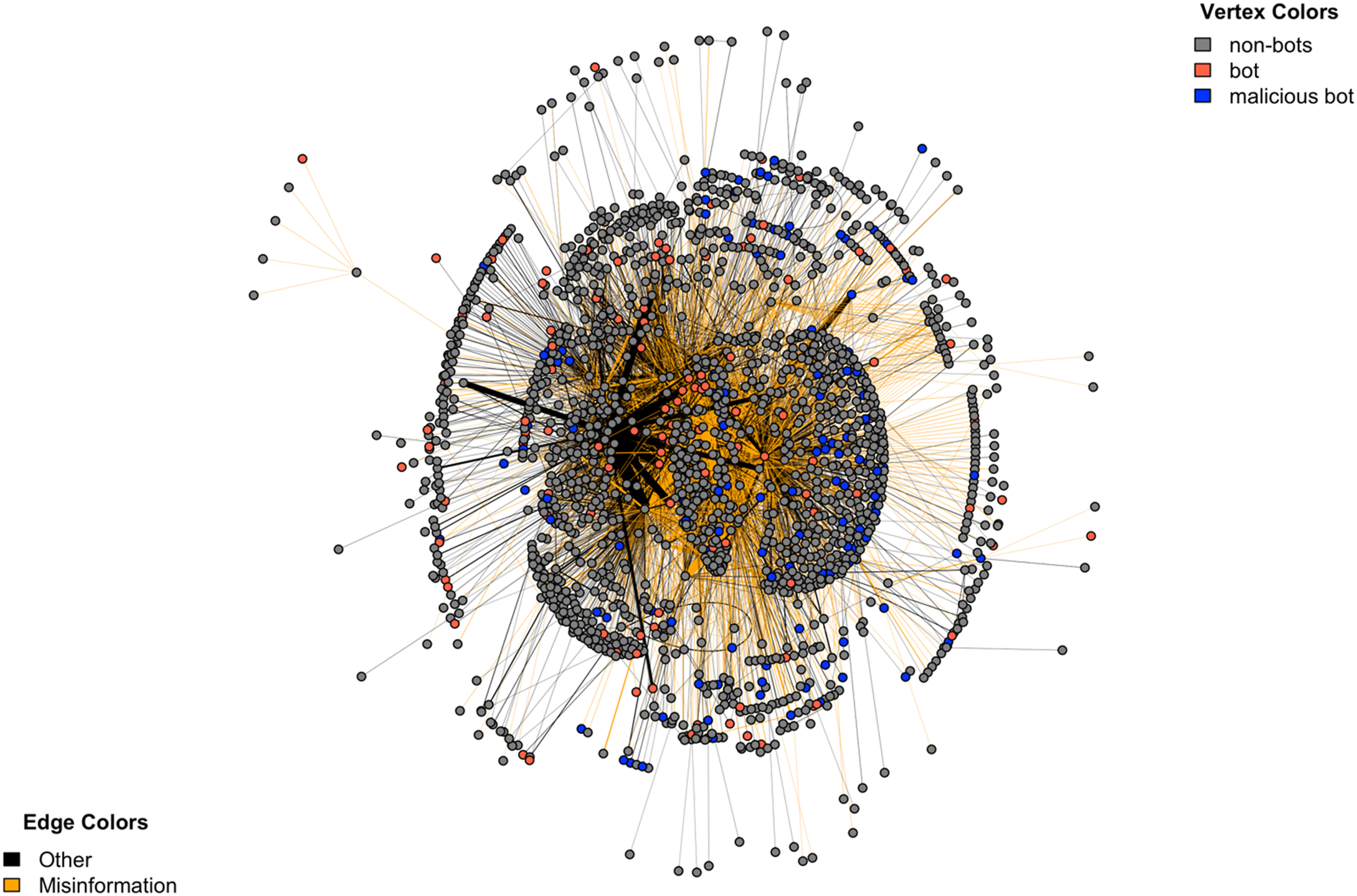

We utilized network analysis to address RQ3, which examines how bot accounts coordinated their activities. We visualized retweet and mention networks for misinformation (Figures 6 and 7) and negative sentiment tweets (Figures 4 and 5 in supplemental file), noting similar communication patterns. Negative sentiment tweets demonstrate significantly higher volume. In the retweet network for misinformation (Figure 6), two primary clusters emerge: one dominated by misinformation, the other with diverse content. The division between malicious bots and regular bots is evident: with few exceptions, they form their own clusters. More importantly, malicious and regular bots occupy opposing positions, with malicious bots concentrated primarily within the misinformation cluster.

Retweet network of all COVID-19 related online discussion. Note: The figure illustrates the network’s interconnections, focusing on vertices with more than 10 interactions for clarity. Interactions are quantified in retweets and edge width reflects interaction volumes (normalized). The visual layout employs the Fruchterman–Reingold algorithm for graph representation.

Mention network of all COVID-19 related online discussion. Note: The figure illustrates the network’s interconnections, focusing on vertices with more than 20 interactions for clarity. Interactions are quantified in mentions and edge width reflects interaction volumes (normalized). The visual layout employs the stress majorization layout algorithm for graph representation.

The analysis of mention networks, as illustrated in Figure 7, reveals three primary clusters at the center. Intriguingly, only a single cluster of malicious bots is situated within a misinformation-focused cluster. The rest of the malicious bots are distributed throughout the network, maintaining loose connections with other malicious bots. This pattern indicates a strategic dispersion rather than a concentrated effort in any specific area.

These findings suggest a broader operational strategy employed by malicious bots. They are not limited to a single cluster or topic but instead exhibit active engagement across diverse groups and networks. Their widespread presence and the nature of their connections imply a systematic approach to influencing various discussions, rather than a focus on a singular narrative or agenda.

Furthermore, the analysis of the social networks induced by malicious bots reveals significant differences between retweet and mention networks (Table 5 in supplemental file). Notably, the density scores indicate that retweet networks are considerably more densely interconnected than mention networks, with a density of 0.0088 compared to 0.0017 for misinformation and 0.0094 compared to 0.0018 for negative sentiment. This suggests that information in the retweet networks spreads more extensively, potentially reaching a larger audience.

Additionally, the diameter scores are substantially smaller in retweet networks, with a diameter of 27 compared to 52 for misinformation and 30 compared to 73 for negative sentiment. Specifically, diameter score results indicate that retweet networks form tighter, more closely knit communities where information can spread rapidly and extensively. The higher density and smaller diameter of these networks suggest a more efficient and swift dissemination of content, often within a more confined network space (Al-Taie & Kadry, 2017). In contrast, mention networks, with their lower density and larger diameter, imply a broader, less interconnected community where information dissemination is less concentrated and potentially slower.

In addition, the reciprocity scores reveal a stark contrast, with retweet networks exhibiting a minimal reciprocity of 0 for misinformation and 0.009 for negative sentiment, while mention networks display significantly higher reciprocity scores of 0.141 for misinformation and 0.091 for negative sentiment. Put simply, the low reciprocity scores in retweet networks point to a unidirectional flow of information, where users are more likely to share or endorse content without expecting a direct response or engagement. Conversely, the higher reciprocity in mention networks suggests a more conversational or interactive environment, where users engage in back-and-forth communication, reflecting a two-way exchange of information and opinions (Kolaczyk & Csárdi, 2020).

Last but not least, our analysis shows that both retweet and mention networks exhibit negative assortativity, meaning that nodes with different characteristics are more likely to connect (Kolaczyk & Csárdi, 2020). This trend is more pronounced in retweet networks, with scores of −0.286 for misinformation and −0.273 for negative sentiment, compared to −0.078 and −0.061 in mention networks. The higher negative assortativity in retweet networks suggests a diverse array of account types are actively involved in disseminating information, particularly regarding misinformation and negative sentiments. This implies a more varied and extensive communication pattern in retweet networks, characterized by a heterogeneous mix of accounts, in contrast to the more homogeneous interactions typically seen in mention networks.
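To make the first three network-level measures concrete, the sketch below computes density, reciprocity, and diameter for a small directed graph using only the Python standard library. It is an illustration under stated assumptions, not the study's implementation: density is taken as edges over ordered node pairs, reciprocity as the fraction of edges whose reverse edge also exists, and diameter as the longest finite directed shortest path. Graph libraries may use slightly different conventions. Assortativity is omitted because the attribute it was computed on is not specified in this section.

```python
from collections import deque

def density(nodes, edges):
    """Directed density: observed edges over all ordered node pairs."""
    n = len(nodes)
    return len(edges) / (n * (n - 1)) if n > 1 else 0.0

def reciprocity(edges):
    """Fraction of directed edges (u, v) for which (v, u) also exists."""
    if not edges:
        return 0.0
    return sum((v, u) in edges for u, v in edges) / len(edges)

def diameter(nodes, edges):
    """Longest finite shortest-path length, following edge direction."""
    adjacency = {u: [] for u in nodes}
    for u, v in edges:
        adjacency[u].append(v)
    longest = 0
    for source in nodes:
        # Breadth-first search gives shortest path lengths from `source`.
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adjacency[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        longest = max(longest, max(dist.values()))
    return longest

# Toy graph (made up): a <-> b -> c -> d
nodes = {"a", "b", "c", "d"}
edges = {("a", "b"), ("b", "a"), ("b", "c"), ("c", "d")}

toy_density = density(nodes, edges)
toy_reciprocity = reciprocity(edges)
toy_diameter = diameter(nodes, edges)
```

In the toy graph, one of the three connected pairs is mutual, so half of the four directed edges are reciprocated; a retweet network with reciprocity near 0, as reported above, would contain almost no such mutual pairs. The breadth-first diameter computation is quadratic in the worst case and is meant for illustration rather than for networks of the size analyzed here.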

Additional analysis of bot accounts in networks marked by misinformation and negative sentiment, as detailed in Table 5 of the supplemental file, reveals that regular bots are somewhat more common in mention networks than in retweet networks. This suggests that their shared content either received comments or they were the direct targets of interactions. We assessed their influence using various centrality measures, including in-degree, out-degree, closeness, betweenness, PageRank, and Eigenvector centrality. For example, among the top 100 accounts, there are 23 bot accounts in the misinformation mention networks, whereas the misinformation retweet network contains only one bot account. This pattern is consistent in networks related to negative vaccine sentiments, suggesting that while regular bots are less likely to retweet misinformation and negative vaccine stances (with only 1 among the top 100), they are often targeted in these posts by others.

However, this trend contrasts with the behavior of malicious bots. They are more inclined to retweet posts related to misinformation and negative vaccine stances but are less frequently mentioned. For instance, within the top 100 retweeters, there are 15 malicious bots in both misinformation and negative vaccine stance networks.
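The top-100 comparison above can be illustrated with a simplified ranking. The sketch below ranks accounts by weighted in-degree or out-degree only and counts account types among the top k; the study also used closeness, betweenness, PageRank, and eigenvector centrality, which are not reproduced here. The account labels and edge weights are made up.

```python
from collections import Counter

def top_k_type_counts(edges, account_type, k, direction="in"):
    """Count account types among the k accounts with the highest weighted degree.

    edges: iterable of (source, target, weight) tuples.
    account_type: dict mapping account -> label; unlisted accounts are
    treated as "non-bot" (an assumption made for this illustration).
    """
    degree = Counter()
    for source, target, weight in edges:
        node = target if direction == "in" else source
        degree[node] += weight
    top = [node for node, _ in degree.most_common(k)]
    return Counter(account_type.get(node, "non-bot") for node in top)

# Made-up weighted edges: (source, target, number of interactions).
edges = [
    ("m1", "h1", 5), ("m1", "h2", 3), ("m2", "h1", 4),
    ("h3", "r1", 6), ("h2", "r1", 2), ("h1", "m1", 1),
]
account_type = {"m1": "malicious bot", "m2": "malicious bot", "r1": "regular bot"}

most_targeted = top_k_type_counts(edges, account_type, k=2, direction="in")
most_active = top_k_type_counts(edges, account_type, k=2, direction="out")
```

In this toy example a regular bot appears among the most targeted accounts and a malicious bot among the most active sources, which mirrors the direction of the contrast described above.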

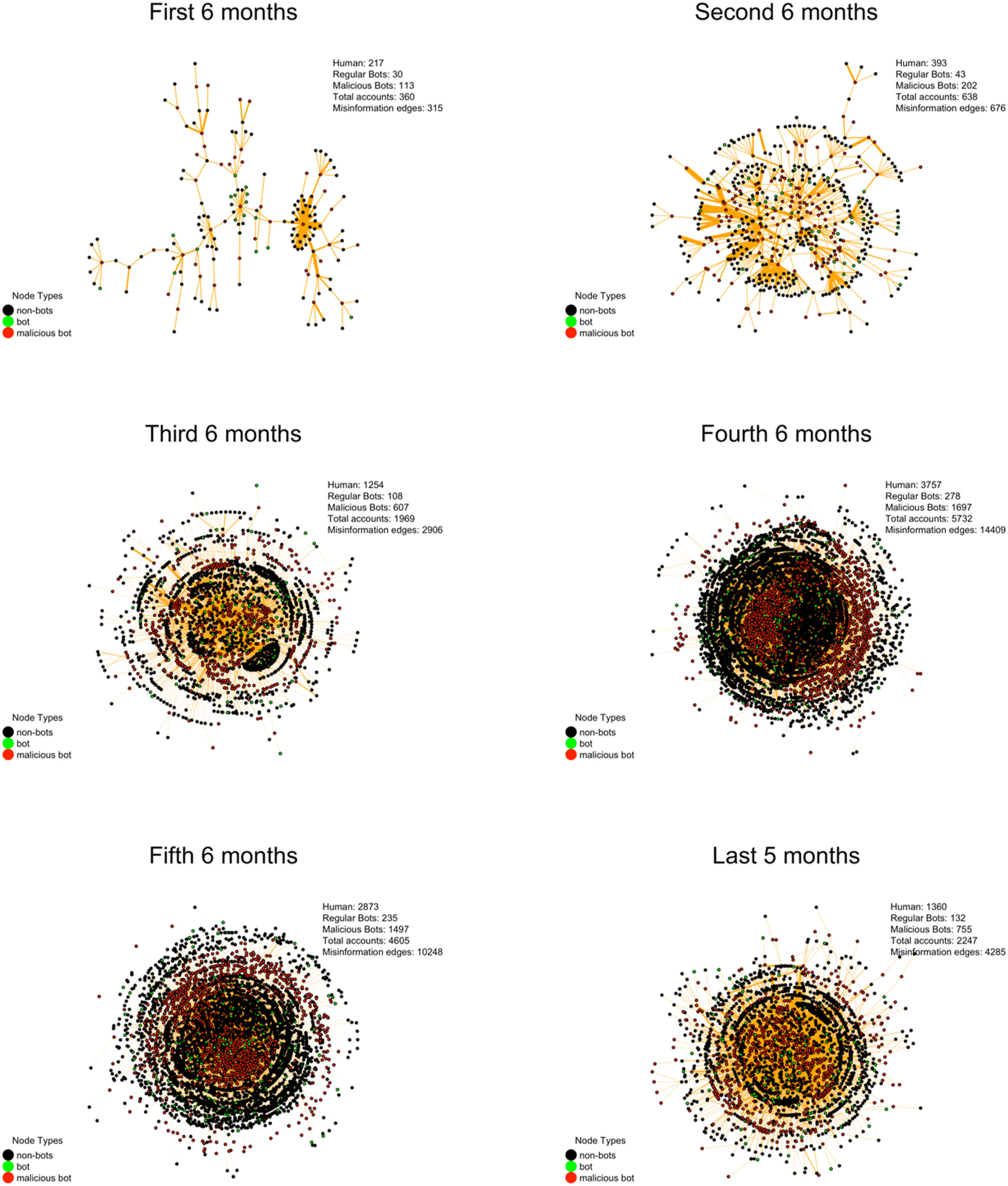

Finally, Figure 8 illustrates the evolution of malicious bot mention networks over three years, captured in six-month periods. Initially, the network displayed modest activity with around 113 malicious bot accounts and 315 misinformation posts. Activity peaked in the fourth six-month period with 1697 malicious accounts and 14409 misinformation posts, indicating a surge in coordinated malicious bot interactions. Subsequently, a network contraction is observed, with a reduction to 755 accounts and 4285 misinformation posts in the final period. Despite this decline, the persistent activity suggests a resilient, albeit reduced, network capacity for mentions. It is crucial to note that malicious bots often specifically target certain human accounts. For example, in the initial period, each malicious bot (represented by red dots) is surrounded by black dots, symbolizing human accounts. As the network expands, these human accounts begin to form distinct clusters. Towards the end of the period in particular, the human accounts were encircled both from within and outside the network.

Temporal evolution of malicious bot networks over three years. Figure Note: This figure delineates the network topology, exclusively portraying vertices that represent malicious bots that have engaged in misinformation activities and are connected to the main network component. The interactions are quantified by the volume of mentions, with the width of the edges (normalized) corresponding to the frequency of these interactions. For the visual representation of the network, the stress majorization layout algorithm has been utilized, optimizing the depiction of node interconnectivity and edge distribution.
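The six-month snapshots can be produced by bucketing posts into consecutive half-year periods and counting distinct accounts and posts per period. The sketch below assumes a window that starts on the first day of a month; the start date, field names, and records are hypothetical and do not reflect the study's actual collection window.

```python
from collections import Counter, defaultdict
from datetime import date

def half_year_index(day, start):
    """Zero-based index of the six-month period containing `day`.

    Assumes `start` is the first day of a month.
    """
    months = (day.year - start.year) * 12 + (day.month - start.month)
    return months // 6

def summarise_periods(posts, start):
    """Map period index -> (distinct accounts, number of posts)."""
    accounts = defaultdict(set)
    post_counts = Counter()
    for post in posts:
        idx = half_year_index(post["date"], start)
        accounts[idx].add(post["author"])
        post_counts[idx] += 1
    return {idx: (len(accounts[idx]), post_counts[idx]) for idx in sorted(post_counts)}

# Hypothetical start date and made-up posts, for illustration only.
start = date(2020, 1, 1)
posts = [
    {"author": "m1", "date": date(2020, 3, 5)},
    {"author": "m2", "date": date(2020, 6, 30)},
    {"author": "m1", "date": date(2020, 7, 1)},
    {"author": "m1", "date": date(2021, 12, 31)},
]

summary = summarise_periods(posts, start)
```

Each entry of `summary` has the same form as the account and post counts reported per period above.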

THL’s Role Within Networks and Interactions with Malicious Bots

As a key aim of this study is to assess THL’s role and the targeting patterns of malicious bots, we explore the entities with which both THL and the malicious bots interacted during the pandemic. Our analysis shows that THL was the most mentioned account across all mention networks, receiving approximately 11% of the mentions. However, when focusing specifically on the misinformation mention network, THL did not rank within the top 20, garnering only 2073 misinformation engagements. This accounts for about 1% of all misinformation engagements, which total 211,053. These findings suggest that THL’s interactions with its audience are primarily driven by standard health communications.

Conversely, our investigation into the activities of malicious bots shows that they mentioned THL 619 times across the entire mention network, accounting for merely 0.8% of all mentions of THL. Furthermore, upon narrowing our analysis to the misinformation segment of the network, THL received 338 mentions (16%) from malicious bots. These findings indicate that while malicious bots are indeed a source of misinformation targeting THL, they are not the predominant source.

Detailed analysis of the activity of malicious bots showed that THL was not their primary target; instead, they targeted a wide range of accounts. Interestingly, only about 11% of their mentions were directed at other malicious bots. This suggests that while THL was a significant point of interest, malicious bots predominantly engaged with a broad array of accounts, not just THL or other bots. Within the misinformation-specific network segment, malicious bots accounted for about 11.5% of all engagements, underscoring their widespread involvement across various network segments, without exclusive focus on any single entity or group.

Discussion

First, our approach makes a significant contribution to the existing literature by differentiating bot accounts into two distinct groups, confirming that a binary bot classification is insufficient (Rizoiu et al., 2018; Wang et al., 2023). However, we acknowledge that further classification is highly context-dependent, relying on the topic, social context, and country. This may require additional analysis, making automation through applications like Botometer more challenging. Although numerous studies have highlighted the influence of bots on health-related discussion, our findings demonstrate a significant discrepancy between regular bots and malicious bots. Regular bots often align with government and health authorities, bolstering their long-term reliability and broadening their follower base. This would position them to play a pivotal role in pandemic-related communication, particularly when public health authorities make strategic decisions and form partnerships (Hoffman et al., 2021), since regular bots exhibit an influential role (Rizoiu et al., 2018).

Our analysis addressing RQ1 shows that malicious bots exhibit distinct characteristics and objectives. This study highlights their key features and communication patterns, contributing to the ongoing development of robust tools for their identification in health communications. Notably, during the prolonged pandemic, malicious bot proliferation closely coincided with the emergence of the crisis. More than half (55%, 2715 out of 4894) of the malicious bots were created after the first cases of COVID-19 were detected in Finland. Shortly after their creation, these bots displayed a notable surge in activity within COVID-19-related discussions. Despite constituting only about one-third of the total bot population, malicious bots surpassed regular bots in posting activity by the end of the pandemic.

Contrary to our expectations that malicious bot activity would subside with the conclusion of the pandemic, our data reveal a concerning persistence. This continued presence underscores the critical need for further research into the motivations behind such activity. The observed tactics employed by these actors are suggestive of a dualistic intent. On one hand, they may be aiming to undermine public trust in governmental institutions (Kestilä-Kekkonen et al., 2022; Unlu et al., 2023). On the other hand, there is a potential alignment with the global anti-vaccination movement (Horawalavithana et al., 2023). For example, Yousefinaghani et al. (2023) found critical periods where high amounts of misinformation coincided with important vaccine announcements all over the world. Similarly, small-scale local demonstrations against THL took place in Finland in the last stage of the pandemic (MTV Uutiset, 2023). Thus, a more nuanced understanding is required to fully grasp this phenomenon. Although our research was not specifically designed to investigate these motivations, it highlights the potential for malicious bot activity to persist beyond crisis periods due to factors that are not yet fully understood. Therefore, further study is warranted to explore the broader societal implications and underlying drivers of this ongoing phenomenon.

Second, addressing RQ3, our study is one of the few that analyze social media bot activities across both retweeting and mentioning engagement types, whereas most literature primarily focuses on how bot accounts spread information through retweets (Cai et al., 2023; Xu & Sasahara, 2022; Yuan et al., 2019; Zhen et al., 2023). Our study shows that malicious bots employ specific communication tactics, particularly through mentioning. They aggressively mention at least one account in 94% of their tweets, with an average of 1.6 mentions per post. The observed high frequency of mentions by malicious bots signifies a strategic attempt to leverage social network mechanics for amplification (Javed et al., 2023; Unlu et al., 2024a). This tactic facilitates increased reach and influence by directly engaging with other users. Frequent mentions serve to draw attention to the bot’s content, stimulate interactions, and potentially manipulate public opinion more effectively (Gaisbauer et al., 2021).

Retweets tend to amplify content to larger audiences and are associated with spreading messages with positive feelings or popular characteristics, making them more visible to a wider group of users (Segev, 2023). Mentions, however, are more personalized and targeted, often leading to direct interactions between users (Li et al., 2016). This manipulation is particularly concerning in the context of misinformation and disinformation campaigns, where such engagement strategies can enhance the dissemination and perceived credibility of misinformation. Furthermore, the high frequency of mentions can also be interpreted as an attempt by malicious bots to tailor their messages to specific target profiles, potentially leading to a more impactful manipulation effort.

Moreover, our analysis of tweet content characteristics revealed that patterns of hashtag usage, question mark usage, link sharing, profile photo sharing, and location information sharing differ between malicious bots, regular bots, and human accounts, in line with previous studies (Suarez-Lledo & Alvarez-Galvez, 2022). These distinctions in communication strategies (RQ1) underscore the sophisticated methods employed by malicious bots to mimic human behavior and evade detection, further highlighting the need for advanced detection mechanisms.

The decentralized distribution of malicious bots in mention networks underscores their adaptability and potential impact across diverse discourse areas. Their engagement with various groups and topics reflects a multifaceted strategy, influencing a broad spectrum of discussions and opinion formations. This pattern is reinforced by our topic modeling results, which show a wide and fluctuating range of topics produced by these bots throughout the pandemic. This variability highlights their ability to shift focus across different subjects during various pandemic phases.

While the identified topics are not new, our analysis addressing RQ2 shows which topics malicious bots sought to make salient during the pandemic. Prior studies indicate that in the context of misinformation, the political ideology of the message source is the primary predictor of anxious and enthusiastic responses (Antenore et al., 2023). This is followed by characteristics of the message that emphasize individual concerns and the network positions of the messages (Yang et al., 2023). Our findings highlight similar elements in the content that malicious bots produced.

The malicious bots’ messages concentrated on safety, political/conspiracy theories, and choice categories. The malicious bots exhibited a tendency to criticize the pandemic containment measures, demonstrate disagreement with political figures, and cast doubt on the accuracy of information disseminated on social media, similar to other studies (Suarez-Lledo & Alvarez-Galvez, 2022). Additionally, fear appeal elements, such as perceived severity and perceived susceptibility, were predominantly utilized in these messages (Carrasco-Polaino et al., 2021; Scannell et al., 2021). Fearmongering about vaccines includes spreading false information, such as claims that the vaccine is ineffective against the spread of COVID-19 infection, alters DNA, causes heart attacks, weakens the immune system, and has potentially serious side effects on children and the elderly. These claims seek to foster distrust in governmental and pharmaceutical entities, highlighting the importance of public education to mitigate the use of misinformation in exploiting emotional responses and fears, making individuals less susceptible to such tactics (Scannell et al., 2021).

The use of particular health-related terminologies by social bots may be just one aspect of a broader strategy to manipulate public opinion or disseminate misinformation. Specifically, bots may deploy specific terms as a means of creating the impression of expertise and credibility, thereby engendering greater trust among their target audiences. For example, previous research shows that anti-vaccine tweets more frequently provided research or scientific evidence (even if it was incorrect) and used personal narratives (Hoffman et al., 2021). Likewise, bots may inflate the values of statistical parameters or employ sensational language, increasing the likelihood that such information will be shared or noticed (Stieglitz et al., 2017). This can have negative implications for public health, since the rapid spread of false or misleading claims through social media can result in misinformation being widely accepted as fact. In addition, a delayed response may cause a form of data deficiency that arises when “there are high levels of demand for information about a topic, but credible information is in low supply” (Purnat et al., 2021; Shane & Noel, 2020). The use of technical language can cause public confusion, potentially leading to adverse health outcomes. Public health authorities must intervene by promoting clear, tailored, concise language and active social media monitoring (Puri et al., 2020; Purnat et al., 2021; Weng & Lin, 2022).

Current practices on social media platforms do not generally include informing users about the authenticity of accounts. Although Twitter has implemented additional labels for “government and state-affiliated media accounts” and suspends some accounts based on its opaque policies (X, 2024), research indicates that users benefit from increased awareness of account authenticity. As previously discussed, human assessment of bot accounts is inherently challenging, and individuals often struggle with this task (Beatson et al., 2023).

However, if platforms were to provide background information on accounts, it could significantly influence user judgment (Wischnewski et al., 2024; Yan et al., 2021). For instance, Twitter, like other social media platforms, has enabled APIs for automating accounts (bots, also called agents), allowing companies to use these capabilities to expand their audience reach and enhance advertising campaigns. Despite this, cross-platform automation remains a contentious issue (Makhortykh et al., 2022). Companies often use authenticity arguments to block competitors’ automation efforts (e.g., preventing YouTube influencers from sharing their content on Twitter). Differentiating bot accounts from human ones is complex. Companies often act as black boxes, reluctant to disclose information to their users, especially when deleting accounts for bot-like activities (Beatson et al., 2023). However, such deletions also indicate that these companies do possess the technical capacity to assess the authenticity of accounts and behavior. For example, since 2021, Facebook has been notifying users when they encounter content that fact-checkers have determined to be false (Tan, 2022). With increased public pressure or governmental regulation, companies could be compelled to offer greater transparency about account origins, likelihood of authentic behavior, or fact-checker results. Such transparency could be highly effective in curbing political polarization, misinformation, and the spread of propaganda.

Finally, our comprehensive analysis shows that goal-oriented bots emerge during prolonged pandemics. Public health agencies must recognize that during public health crises like COVID-19, individuals or other actors may create automated accounts, or bots, to manipulate public opinion in pursuit of particular agendas (Cai et al., 2023; Ferrara, 2020). To counter this, agencies should enhance their identification and monitoring of such bots, employing advanced analytics and machine learning tools to detect and mitigate their influence early. Strengthening collaboration with social media platforms to swiftly remove malicious content can also bolster health promotion efforts on these platforms.

While malicious bots employ sophisticated communication tactics and intensify their activity, their overall influence remains limited. Network centrality measures indicate they do not hold a prominent position in spreading misinformation or promoting a negative stance on vaccines. Public health agencies should focus on both preemptive and corrective strategies, such as pre-bunking and debunking misinformation, educating the public on recognizing bot activity, and promoting accurate information to mitigate the potential impact of these bots (Unlu et al., 2024a).
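To illustrate how a centrality measure can separate activity from influence, the following minimal sketch computes normalized in-degree centrality on a toy mention network. It is an illustration only: this section does not state which centrality measures the study used, in-degree is chosen here as one simple option, and the function name and all account names are hypothetical.

```python
from collections import defaultdict


def in_degree_centrality(edges):
    """Return normalized in-degree centrality for a directed edge list.

    Each edge is (source, target), meaning `source` mentions `target`.
    A node's score is the number of distinct accounts that mention it,
    divided by (number of nodes - 1).
    """
    nodes = set()
    incoming = defaultdict(set)
    for source, target in edges:
        nodes.update((source, target))
        if source != target:  # ignore self-mentions
            incoming[target].add(source)
    denom = max(len(nodes) - 1, 1)
    return {node: len(incoming[node]) / denom for node in nodes}


# Hypothetical toy mention network (all account names are made up).
edges = [
    ("user_a", "health_agency"),
    ("user_b", "health_agency"),
    ("user_c", "health_agency"),
    ("bot_1", "health_agency"),
    ("bot_1", "user_a"),
    ("bot_1", "user_b"),
    ("user_a", "user_b"),
]

centrality = in_degree_centrality(edges)
# Print accounts from most to least mentioned.
for account, score in sorted(centrality.items(), key=lambda kv: (-kv[1], kv[0])):
    print(f"{account}: {score:.2f}")
```

In this toy network the hypothetical account `bot_1` sends the most mentions but receives none, so its in-degree centrality is zero. This is the kind of pattern in which intensified activity coexists with a non-prominent network position.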

Additionally, while THL is not the primary target of malicious bots within the mention network, this pattern points to a potential risk of malicious bots targeting government agencies and health authorities. Such activities could lead to significant consequences, aligning with trends identified in previous research (Al-Rawi & Shukla, 2020). These coordinated efforts could create the perception among authorities that these criticisms originate from ordinary citizens, suggesting widespread societal resistance or unrest against current practices. Without discerning the true source of these engagements, public authorities could inadvertently amplify the malicious intentions behind them. Nonetheless, surveillance of such accounts and big data analysis remain essential for public health agencies in shaping more effective communication strategies (Park, 2022).

Limitations

The research has limitations that may affect its generalizability and validity. First, it focuses solely on the impact of social media bots on COVID-19, vaccine stance, and misinformation, neglecting other factors like government policies, traditional media, and personal beliefs. Second, the sample of social media users engaging with COVID-19 content may not represent the wider population’s views on these topics.

Third, the study was conducted in Finland, so its findings may not apply to other contexts. Fourth, it relied on publicly available social media data, which may be incomplete or inaccurate, potentially underestimating or overestimating the impact of social media bots.

Finally, our malicious bot calculation relies on the accuracy of both the text classification models and the Botometer software, and it is based on the authors’ assumptions about the key features of malicious bots (e.g., misinformation, negative vaccine stance, and Twitter account statuses). Although the findings indicate a distinct divergence in communication patterns through network analysis, generalizing these results requires additional statistical analyses of tweet and account features, as well as an examination of the distribution of text classification results. Furthermore, employing more robust validation methods with larger sample sizes for Botometer and the malicious bot calculation is essential to enhance the generalizability of the conclusions.

Conclusions

The study contributes to the existing literature by distinguishing regular bots from malicious bots while analyzing their distinct features, tactics, and motivations. The research shows that the majority of malicious bots created during the COVID-19 pandemic were used to manipulate public discussions and disseminate misinformation on social media. Malicious bots aggressively used mentioning tactics, invoked fear-appeal elements such as perceived severity and susceptibility, and concentrated on the themes of safety, political/conspiracy theories, and personal choice. To counteract the influence of these accounts, it is essential to educate the public about misinformation and fear appeal tactics, develop effective strategies for detecting and countering bots, and adopt clear and concise language in public health communication. The results of this study have important implications for health communication research and practice, indicating the importance of close monitoring and intervention efforts on social media to promote accurate health information and prevent the spread of misinformation.

Supplemental Material

Supplemental Material - Unveiling the Veiled Threat: The Impact of Bots on COVID-19 Health Communication

Supplemental Material for Unveiling the Veiled Threat: The Impact of Bots on COVID-19 Health Communication by Ali Unlu, Sophie Truong, Nitin Sawhney, and Tuukka Tammi in Social Science Computer Review

Data Availability Statement

The data that support the findings of this study are available from Aalto University, but restrictions apply to the availability of these data, which were used under license for the current study and so are not publicly available.

Footnotes

Acknowledgments

The authors wish to acknowledge the scientific computing support provided by the Aalto University Research Software Engineer team.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Academy of Finland (grant 339931).

Supplemental Material

Supplemental material for this article is available online.
