Abstract

Conversational AI (e.g., Google Assistant or Amazon Alexa) is present in many people’s everyday life and, at the same time, becomes more and more capable of solving more complex tasks. However, it is unclear how the growing capabilities of conversational AI affect people’s disclosure towards the system as previous research has revealed mixed effects of technology competence. To address this research question, we propose a framework systematically disentangling conversational AI competencies along the lines of the dimensions of human competencies suggested by the action regulation theory. Across two correlational studies and three experiments (Ntotal = 1453), we investigated how these competencies differentially affect users’ and non-users’ disclosure towards conversational AI. Results indicate that intellectual competencies (e.g., planning actions and anticipating problems) in a conversational AI heighten users’ willingness to disclose and reduce their privacy concerns. In contrast, meta-cognitive heuristics (e.g., deriving universal strategies based on previous interactions) raise privacy concerns for users and, even more so, for non-users but reduce willingness to disclose only for non-users. Thus, the present research suggests that not all competencies of a conversational AI are seen as merely positive, and the proposed differentiation of competencies is informative to explain effects on disclosure.

Introduction

In recent years, artificial intelligence (AI), and especially conversational AI systems such as Amazon Alexa or Google Assistant, have become ubiquitous. Worldwide, 3.25 billion conversational AI systems (also called voice assistants, personal intelligent agents, etc.) were in use in 2019 (Statista, 2022). Conversational AI offers several benefits to its users, such as convenience, utility, enjoyment, or personalization, and can assist its users in a wide range of tasks (e.g., access to information, entertainment, online shopping, control of smart home devices).

To offer these functionalities and particularly to adapt the interaction experience to the user and their individual needs, conversational AI needs to gather personal information. For instance, location-based requests can only be answered adequately if the system has access to the user’s current location, a system can only provide its user with news fitting their interests if it has information about the individual’s interests, daily routines can only be created if the system has the necessary information about the user’s habits, and the most important information from mails and appointments can only be extracted if the system has access to the mailing and calendar applications of the user. This implies that conversational AI collects a significant amount of the user’s personal data which is often considered problematic: users as well as non-users of conversational AI report concerns about privacy and the amount of data collected by conversational AI (e.g., Dubiel et al., 2018; Liao et al., 2019).

In this context, it is important to understand which factors guide people’s disclosure towards technologies. Up to now, research has investigated associations between disclosure and trust in the technology provider (e.g., Joinson et al., 2010; Pal et al., 2020), individual user characteristics (e.g., Bansal et al., 2010; Mohamed & Ahmad, 2012), as well as objective system characteristics (e.g., Easwara Moorthy & Vu, 2015) such as anthropomorphic design features (e.g., Ha et al., 2021; Lucas et al., 2014) or functionality.

Research on system functionality has often focused on objective system capabilities (e.g., Bandara et al., 2020; Ha et al., 2021). Yet, an approach that has to the best of our knowledge not been taken to understand disclosure is to consider perceptions of conversational AI’s functionality or competence from a more human-oriented perspective. This is surprising because this approach is particularly promising, given that people tend to treat technical systems as social actors (Nass & Moon, 2000; Reeves & Nass, 1996) and anthropomorphize them (i.e., ascribe them human-like characteristics; Epley et al., 2007). Plus, conversational AI is far more capable of communicating in a human-like manner than earlier technologies and it has been observed that some users even engage in small talk with it (Purington et al., 2017). Overall, this suggests that the human-oriented perspective is most likely in line with how people approach conversational AI.

Thus, the present research investigates the associations between perceptions of human-like competence in conversational AI and disclosure towards this technology. In doing so, we aim to contribute to the understanding of people’s disclosure towards technologies and shed light on the impact of perceived system competencies.

Technology Competence Can Heighten Acceptance and Disclosure

At first sight, more functionality of technical systems seems very positive and should, accordingly, increase the perceived usefulness as well as the adoption and acceptance of the respective technologies. Several theoretical models include this aspect as a core driver of technology adoption (e.g., perceived usefulness in the Technology Acceptance Model, TAM, Davis et al., 1989; performance expectancy in the Unified Theory of Acceptance and Use of Technology, UTAUT, Venkatesh et al., 2003). Furthermore, empirical research has repeatedly shown that functionality of technical systems is highly relevant for the acceptance and adoption of technologies in general and of conversational AI in particular (e.g., McLean & Osei-Frimpong, 2019; Moriuchi, 2019; Moussawi et al., 2020; Pitardi & Marriott, 2021; Shao & Kwon, 2021). Besides, for people who already use a (smart) technology, competence is an important driver of usage continuance intentions (e.g., Dehghani, 2018; Moussawi et al., 2022).

More relevant to the current research question, functionality can also have a positive impact on users’ disclosure towards a technology. Several studies have shown that the willingness to disclose increases when people perceive that they receive benefits in exchange: perceived benefits (e.g., increased personalization or usefulness) can outweigh privacy concerns and lead to heightened information disclosure (Chellappa & Sin, 2005; Sharma & Crossler, 2014; Xu et al., 2013) and, in some cases, users accept privacy intrusions for convenience in smart home technologies (Townsend et al., 2011).

Beyond the context of conversational AI, there is research on the relation between a human-like competence measure of technologies (i.e., assessing perceived competence based on the Stereotype Content Model by Fiske et al., 2002) and acceptance. In several studies, higher competence perceptions were associated with a higher acceptance of technologies: for instance, a higher believability of these agents (Demeure et al., 2011) and a higher willingness to use (Liu et al., 2022). There is also very first evidence regarding conversational AI: Pitardi and Marriott (2021) report that, besides perceived usefulness, also higher subjective perceptions of competence were related to a more positive attitude towards and higher trust in conversational AI.

In summary, the research described so far seems to suggest that functionality and competence of technical systems are key to adoption and acceptance. This research has either focused on the technical functionality of systems or, when taking a human-like view of competence, used a very simplified approach with a single dimension.

But: Technology Competence Can Also Lessen Acceptance and Disclosure

However, in contrast to the previously described findings, there are also indications that increased functionalities might not always be desirable. Technological progress and increased system capabilities are often assumed to be associated with a general fear of privacy invasion and exploitation of personal data, especially in the field of AI (e.g., Bandara et al., 2020; Ha et al., 2021). Further, referring to an “uncanny valley of mind,” it was observed that AI described as having a human-like mind in comparison to basic algorithms as a system basis led to participants feeling more eeriness (Stein et al., 2020).

Empirical studies focusing on human-like competencies did not only find positive effects as the ones described above but also detrimental effects of technology competence. Złotowski et al. (2017) reported that autonomous robots were perceived as more threatening and evoked stronger negative attitudes than non-autonomous ones—thereby suggesting that competencies are not always seen as merely positive. Similarly, McKee et al. (2022) found that perceptions of higher competence predicted lower preferences for cooperative agents—with the effect being stronger than and opposed to the effect of objective performance measures, thus, highlighting the important role of perceived competencies.

The previously described findings are focused on detrimental effects regarding perceptions of eeriness and general usage decisions. Few studies considered the (detrimental) effects of competence on disclosure. As an exception, Peng et al. (2019) conducted a study in which participants interacted with a robot recommending them items. They report that participants shared more feelings and thoughts about the recommended items with a robot that had a medium proactivity compared to a robot with either low or high proactivity. Here, obviously one specific aspect of competence resulted in detrimental effects. In another study, Liu et al. (2021) observed that higher perceptions of competence in a social robot led to more privacy concerns.

In sum, the findings presented in this and the preceding section are inconsistent. Competence can be positive or detrimental to technology acceptance. We sought to integrate these findings by taking a more differentiated approach to competence. We argue that it will, for example, potentially make a large difference whether users perceive a technical system as capable of completing tasks by following given rules or as being able to flexibly pursue their own independent goals.

Differentiation of Competence

Four levels of competencies of conversational AI can be distinguished from a human-oriented perspective by applying the theory of human action regulation (Frese & Zapf, 1994; Hacker & Sachse, 2014; Semmer & Frese, 1985). The action regulation theory—originally introduced by Hacker (1971, 1998) to describe different levels of action regulation in human work actions—initially included three levels and was extended to four levels by Semmer and Frese (1985). These levels differ in the amount of required action regulation.

On the lowest sensorimotor level, highly automatized movement patterns and cognitive routines are regulated (Zacher, 2017). It is assumed that actions on this level are not associated with independent and conscious goals but are usually triggered by regulation processes at higher levels (Zacher, 2017). Applied to the perception of a technology’s competence, this level includes acting according to existing (or predefined) rules to solve clearly defined problems and executing specific commands.

The second level of flexible action patterns includes action regulation based on (semi-)conscious automatized schemata or scripts: humans process information from the environment according to well-established rules. Signals from the environment can then activate action patterns (Zacher, 2017). For technologies, flexible action patterns can be characterized as an adaptability to different factors (e.g., situation, environment, or task). We expected that these lower two levels are commonly perceived in technologies. Thus, we were unsure whether their perception would have a meaningful impact on people’s disclosure towards conversational AI.

Regulating actions on the intellectual level implies the regulation of new or complex actions, entailing the development and selection of goals and detailed action plans (Zacher, 2017). Action regulation on this level requires attention and cognitive effort as it includes the analysis and evaluation of novel and complex information (Zacher, 2017). For technologies, intellectual competencies include anticipating and planning, finding innovative solutions as well as dealing with complex or incomplete information. We expected that people would perceive these intellectual competencies as clearly beneficial and useful. As the system uses the submitted data directly and it seems to be clear what the data is used for, we expected that higher intellectual competencies would heighten disclosure towards conversational AI.

Finally, the level of meta-cognitive heuristics involves the use of more abstract, less task-oriented templates, strategies, and heuristics to guide action regulation, making it possible to solve similar problems in an efficient and effective way (Frese & Zapf, 1994; Semmer & Frese, 1985). For technologies, meta-cognitive heuristics include learning as well as the development and adaptation of universal strategies based on previous events and interactions. We expected that people would perceive meta-cognitive heuristics as autonomous and strategic attributes that might be perceived as too competent and, thus, eerie (Stein et al., 2020; Złotowski et al., 2017). Accordingly, we expected that meta-cognitive heuristics would lessen disclosure towards conversational AI.

Differentiation of Disclosure

Regarding the assessment of disclosure, we distinguished between (a) willingness to disclose as an attitude regarding behavioral intention and (b) privacy concerns as an indicator for more abstract attitudes towards privacy. We chose this distinction because previous research has indicated that high privacy concerns do not necessarily stop people from sharing their data (“privacy paradox,” for a review see Kokolakis, 2017). To investigate potential different effects of competencies on these two aspects of disclosure, we differentiated them in our research question and hypotheses accordingly:

RQ1: Do lower order competencies (sensorimotor competencies, flexible action patterns) of a conversational AI affect (a) willingness to disclose and (b) privacy concerns?

H1: Higher intellectual competencies of a conversational AI predict (a) a higher willingness to disclose and (b) lower privacy concerns.

H2: Higher meta-cognitive heuristics of a conversational AI predict (a) a lower willingness to disclose and (b) higher privacy concerns.

Current Research

We tested these ideas in five online studies. In the first study, we aimed to develop a scale for the assessment of competencies according to the action regulation theory across different technologies. Further, we exploratorily investigated associations between disclosure and the four levels of competence in conversational AI. As the action regulation theory for human working actions had, up to now, not been used to assess the perception of competencies in technological systems, we did not initially formulate the described hypotheses but developed them based on the described theoretical considerations in combination with the results of this first study. Starting with Study 2, the hypotheses were pre-registered. All deviations from the pre-registrations are reported in detail in the Supplemental Material.

In the second study, we aimed to validate and improve the scale as well as to replicate the findings regarding intellectual competencies and meta-cognitive heuristics in conversational AI. In Studies 3–5, we experimentally manipulated intellectual competencies and meta-cognitive heuristics to check for the causality of the observed effects.

In the research process, it became obvious that effects might differ based on usage experience, which is in retrospect highly plausible. As users have, in contrast to non-users, actual interaction experience with conversational AI, its competencies could have a different meaning for them. Accordingly, users might focus more on the positive aspects rather than the negative ones. Thus, we clearly indicate the usage experience of our samples in all studies and discuss its potential effects in the discussions of the respective studies and the overall research.

Study 1

Method

Design and Participants

We aimed to sample 300 valid cases for this study (https://aspredicted.org/tq3m3.pdf) in order to reach stable correlations (Schönbrodt & Perugini, 2013). We recruited participants via Prolific for a 10-min online survey remunerated with £1.25. Participants were randomly assigned to one of five conditions. Depending on the condition, participants were surveyed about their perception of one specific technology (i.e., either conversational AI, streaming service, search engine, autonomous vehicle, or washing machine). After exclusion as pre-registered (for details see Supplemental Material), the eligible sample consisted of N = 358 participants (59.8% male, 38.5% female, 1.4% non-binary; aged: M = 25.5, 18–62 years). In the conversational AI condition, the final sample consisted of 72 participants (54.2% male, 44.4% female, 1.4% non-binary; aged: M = 27.0, 18–56 years; 27 using conversational AI at most once a month, 21 at least several times a week, and 24 with usage frequencies in between).

Procedure

Participants were randomly assigned to one of the five conditions. In each condition, participants first read a short description of the respective technology: either a conversational AI or another technology (i.e., washing machine, search engine, streaming service, autonomous vehicle). This was done to increase the variance of the responses to the competence scales. Therefore, we used all conditions for the scale construction, but only the conversational AI condition to test the relation between the competencies and disclosure. Then, participants were surveyed about their perceptions of the respective technology’s competencies, their privacy concerns, and willingness to disclose. A complete list of all measures taken, instructions, and items is provided in the supplement; this applies to all studies reported in this paper. In addition, data (https://doi.org/10.23668/psycharchives.12175) and code (https://doi.org/10.23668/psycharchives.12176) for all studies are available for scientific use on PsychArchives.

Measures

Action Regulation Competencies

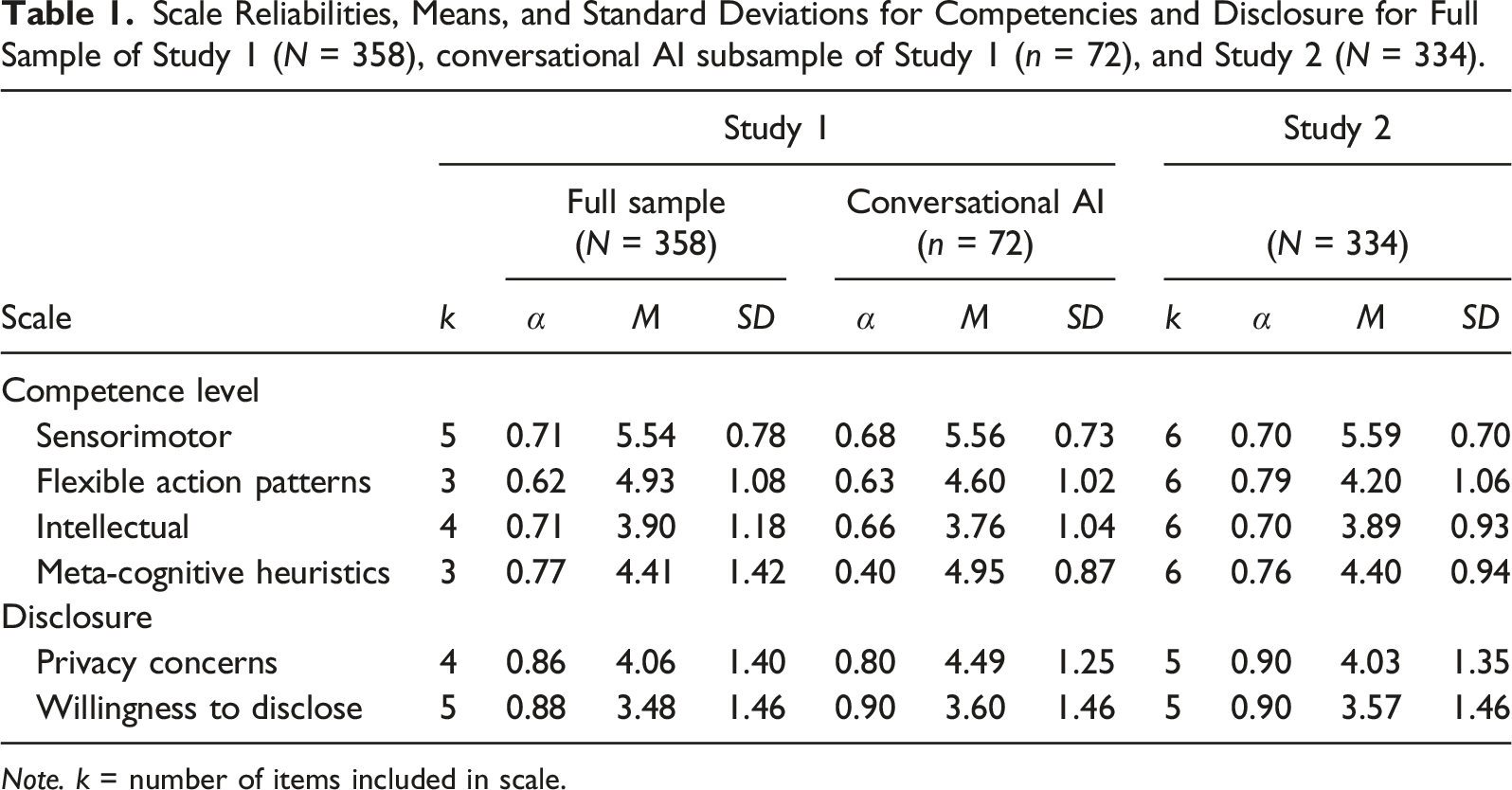

Scale Reliabilities, Means, and Standard Deviations for Competencies and Disclosure for Full Sample of Study 1 (N = 358), conversational AI subsample of Study 1 (n = 72), and Study 2 (N = 334).

Note. k = number of items included in scale.

Disclosure

Privacy concerns were assessed with four items (adapted from a scale called perceived legitimacy by Alge et al., 2006, for example, “I feel that ...’s practices are an invasion of privacy.”). Willingness to disclose was measured with three items (adapted from Gerlach et al., 2015, for example, “I would provide a lot of information to ... about things that represent me personally.”) and two additional, self-developed items.

Results

To explore the relation between perceived competencies of conversational AI and disclosure, we separately regressed (1) willingness to disclose and (2) privacy concerns towards conversational AI on the four levels of competence. Willingness to disclose towards conversational AI was neither predicted by the sensorimotor level (β = 0.13, p = .222, 95% CI [−0.08, 0.34]) nor by flexible action patterns (β = 0.12, p = .315, 95% CI [−0.11, 0.35]). However, higher intellectual competencies predicted a higher willingness to disclose (β = 0.45, p < .001, 95% CI [0.23, 0.66]), whereas higher meta-cognitive heuristics predicted a lower willingness to disclose (β = −0.24, p = .036, 95% CI [−0.46, −0.02]).

Privacy concerns regarding conversational AI were neither predicted by the sensorimotor level (β = −0.16, p = .148, 95% CI [−0.39, 0.06]) nor by flexible action patterns (β = 0.04, p = .733, 95% CI [−0.20, 0.29]). However, higher intellectual competencies predicted fewer privacy concerns (β = −0.36, p = .003, 95% CI [−0.59, −0.13]), whereas higher meta-cognitive heuristics predicted more privacy concerns (β = 0.25, p = .039, 95% CI [0.01, 0.48]).
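To make the reported standardized coefficients (β) more concrete, the following is a minimal sketch of how standardized regression weights can be obtained from several predictors. It is not the authors' analysis code (which is available on PsychArchives); the function names and the toy data are hypothetical and chosen only for illustration, and the sketch omits p values and confidence intervals.

```python
from math import sqrt


def standardize(values):
    """Return z-scores (mean 0, sample SD 1) for a list of numbers."""
    n = len(values)
    mean = sum(values) / n
    sd = sqrt(sum((v - mean) ** 2 for v in values) / (n - 1))
    return [(v - mean) / sd for v in values]


def solve(a, b):
    """Solve the linear system a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def standardized_betas(predictors, outcome):
    """Standardized regression weights.

    predictors: dict mapping a (hypothetical) predictor name to a list of scores.
    outcome: list of outcome scores of the same length.
    Solves R_xx * beta = r_xy, where R_xx holds the predictor intercorrelations
    and r_xy the predictor-outcome correlations.
    """
    names = list(predictors)
    z = [standardize(predictors[name]) for name in names]
    zy = standardize(outcome)
    n = len(outcome)

    def corr(u, v):
        return sum(p * q for p, q in zip(u, v)) / (n - 1)

    r_xx = [[corr(zi, zj) for zj in z] for zi in z]
    r_xy = [corr(zi, zy) for zi in z]
    return dict(zip(names, solve(r_xx, r_xy)))


# Made-up toy data: two uncorrelated predictors and an outcome built from them.
toy_predictors = {
    "intellectual": [1, -1, 1, -1, 1, -1, 1, -1],
    "meta_cognitive": [1, 1, -1, -1, 1, 1, -1, -1],
}
toy_outcome = [2 * a + b for a, b in zip(*toy_predictors.values())]
toy_betas = standardized_betas(toy_predictors, toy_outcome)
```

Because every variable is z-standardized first, each β expresses the expected change in the outcome, in standard deviations, per standard deviation of the predictor while holding the other predictors constant.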

Discussion

In Study 1, neither willingness to disclose nor privacy concerns towards conversational AI were associated with perceived competencies on the two lower levels (i.e., sensorimotor competencies and flexible action patterns). Higher intellectual competencies were associated with more willingness to disclose and fewer privacy concerns, whereas higher meta-cognitive heuristics were associated with less willingness to disclose and more privacy concerns. These results are in line with Hypotheses 1 and 2, which we pre-registered for Studies 2–5.

The exclusion of several items of the action regulation scales resulted in a low number of items for some scales. Besides, the internal consistency of some of the scales was unsatisfactory. Moreover, the sample focusing on perceptions of conversational AI was rather small and consisted largely of conversational AI non-users. Therefore, the results of Study 1 should be interpreted with caution. To overcome these limitations, we developed new items to assess competencies on the four levels of action regulation, aiming to improve the reliability of the scales, and recruited a larger sample of actual conversational AI users to assess their perception of this technology in Study 2.

Study 2

Method

Design, Participants, and Procedure

We conducted a correlational study (https://aspredicted.org/v87ub.pdf). We aimed to collect 340 valid cases to reach a power of 95% (α = .05) for observing effects with a minimum effect size of f² = 0.03 in a multiple regression analysis with four predictors. For this purpose, we recruited participants owning a home assistant or smart hub via Prolific for a 12-min online study remunerated with £1.50. A total of 425 participants who matched the pre-screening (i.e., owning home assistant/smart hub and having used a conversational AI before) completed the survey. After exclusion as pre-registered, the eligible sample consisted of N = 334 participants (54.2% male, 44.0% female, 1.8% non-binary; age: M = 30.3, 18–73 years).
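For readers less familiar with the effect size used in this power analysis, Cohen's f² for a regression model can be written as follows. These are the standard textbook definitions, not formulas taken from the pre-registration; whether the power analysis targeted the full model or a single predictor is not stated here, so both variants are shown.

```latex
% Cohen's f^2 for the full model (all predictors jointly)
f^2 = \frac{R^2}{1 - R^2}

% Cohen's f^2 for a set of predictors B over and above a set A
f^2 = \frac{R^2_{AB} - R^2_{A}}{1 - R^2_{AB}}
```

Under the first definition, f² = 0.03 corresponds to R² = 0.03 / 1.03 ≈ .029, that is, roughly 3% explained variance.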

Study 2 was a conceptual replication of Study 1 with the following changes: (1) we focused exclusively on the perception of conversational AI and (2) included only conversational AI users as participants.

Measures

Action regulation competencies

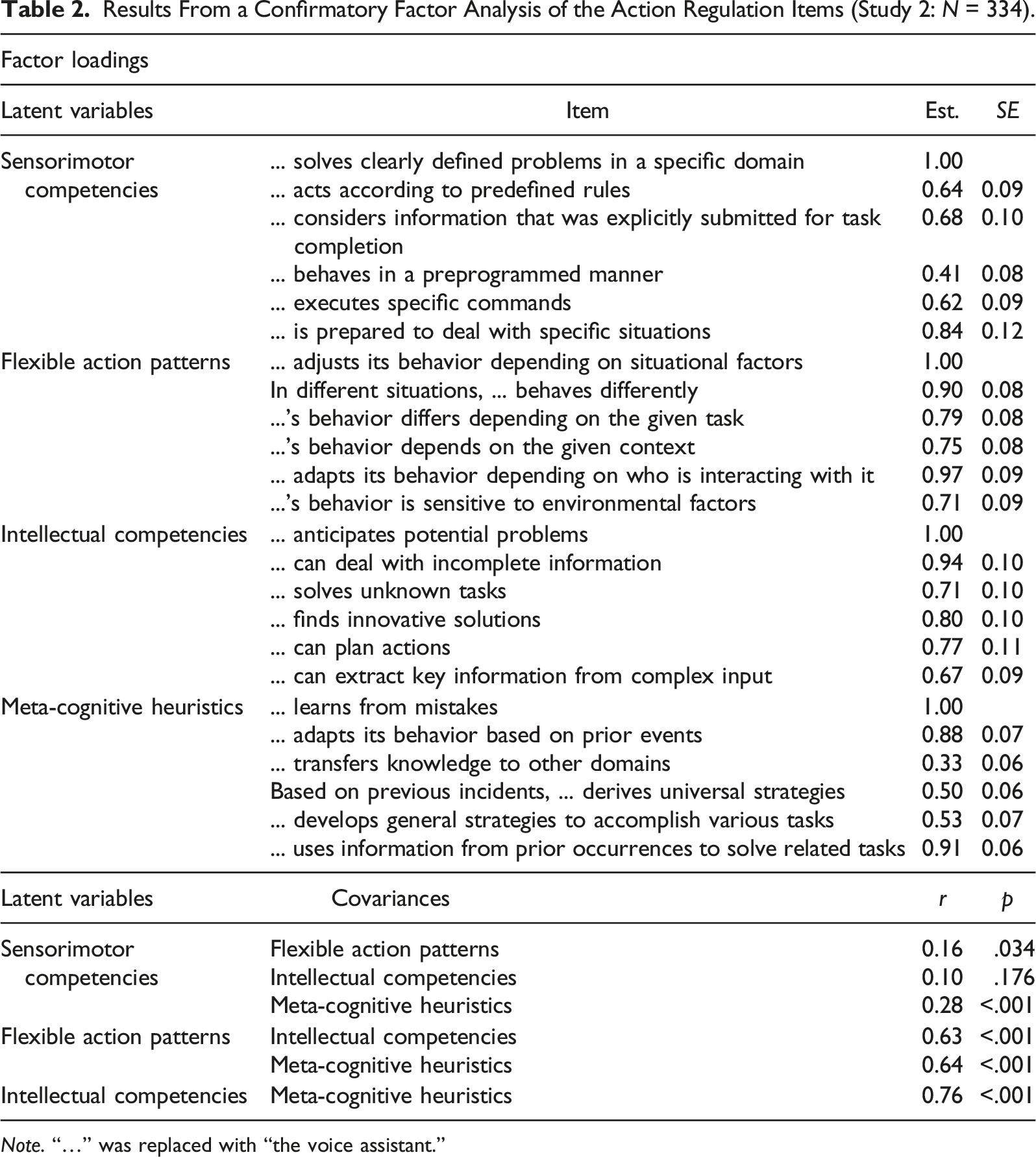

Results From a Confirmatory Factor Analysis of the Action Regulation Items (Study 2: N = 334).

Note. “…” was replaced with “the voice assistant.”

Disclosure

Except for the change of one item in the privacy concerns scale (“I am concerned about my privacy when using the voice assistant.”), scales were the same as in Study 1.

Results

We tested the predictions that higher intellectual competencies would predict a higher willingness to disclose (H1a), whereas higher meta-cognitive heuristics would predict a lower willingness to disclose (H2a). Supporting H1a, a multiple regression analysis (pre-registered) showed that higher intellectual competencies were associated with more willingness to disclose (β = 0.22, p = .001, 95% CI [0.09, 0.35]). H2a was not supported, as meta-cognitive heuristics did not predict willingness to disclose (β = −0.05, p = .430, 95% CI [−0.19, 0.08]). Further, higher willingness to disclose was predicted by higher sensorimotor competencies (β = 0.22, p < .001, 95% CI [0.11, 0.32]) but not by flexible action patterns (β = 0.09, p = .155, 95% CI [−0.03, 0.21]).

Regarding privacy concerns, we predicted that higher intellectual competencies would predict lower privacy concerns (H1b), whereas higher meta-cognitive heuristics would predict higher privacy concerns (H2b). A multiple regression analysis (pre-registered) supported H1b as higher intellectual competencies (β = −0.14, p = .040, 95% CI [−0.28, −0.01]) predicted lower privacy concerns and also H2b as higher meta-cognitive heuristics (β = 0.15, p = .034, 95% CI [0.01, 0.29]) predicted higher privacy concerns. Furthermore, the analysis showed that lower privacy concerns were predicted by higher sensorimotor competencies (β = −0.19, p = .001, 95% CI [−0.30, −0.08]) but not by flexible action patterns (β = 0.05, p = .400, 95% CI [−0.07, 0.18]).

Discussion

In both studies, higher perceived intellectual competencies were associated with higher willingness to disclose and fewer privacy concerns, supporting H1a and H1b. Regarding the detrimental relation between meta-cognitive heuristics and disclosure, the results were somewhat inconsistent. Support for its negative relation with willingness to disclose (H2a) was only found in Study 1, but not in Study 2. However, higher perceived meta-cognitive heuristics were in both studies associated with more privacy concerns as suggested in H2b.

In a next step, we aimed to test whether the differences between the results of both studies result from the fact that Study 2 relied on users, whereas participants of Study 1 had mixed usage experience. Thus, we additionally included usage experience as a quasi-experimental factor in Study 3. Moreover, both studies offer only correlational support for the hypotheses and thus do not allow conclusions about causality. Studies 3–5, therefore, experimentally manipulated the conversational AI’s intellectual competencies and meta-cognitive heuristics to check for causality of the observed relations and to make sure that the effects are due to one or the other concept (i.e., both concepts are independent). Furthermore, Studies 1 and 2 did not show a clear pattern of associations between sensorimotor competencies and flexible action patterns and disclosure. Therefore, we did not further focus on these two competence levels (and, accordingly, RQ1) in the following studies.

Study 3

Method

Design and Participants

Study 3 was implemented as a 2 (intellectual competencies: low vs. high) × 2 (meta-cognitive heuristics: low vs. high) × 2 (usage experience: users vs. non-users) between-subjects design. Usage experience was a quasi-experimental factor: participants were regarded as users if they indicated that they used a voice assistant at least several times a week and as non-users if they indicated that they used it at most once a month. Participants were randomly assigned to one of the four conditions made up by the other two factors.
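The grouping and assignment logic of this design can be sketched as follows. Only the two cut-offs (at most once a month, at least several times a week) and the four experimental cells come from the description above; the response options in `FREQUENCY_LEVELS`, the function names, and the handling of in-between frequencies are hypothetical and serve only as an illustration.

```python
import random

# Hypothetical response options, ordered from least to most frequent use.
FREQUENCY_LEVELS = [
    "never",
    "less than once a month",
    "once a month",
    "several times a month",
    "once a week",
    "several times a week",
    "daily",
]

# The four experimental cells formed by the two manipulated factors.
CONDITIONS = [
    (intellect, meta)
    for intellect in ("low intellect", "high intellect")
    for meta in ("low meta", "high meta")
]


def usage_group(frequency):
    """Map a reported usage frequency to the quasi-experimental factor."""
    rank = FREQUENCY_LEVELS.index(frequency)
    if rank <= FREQUENCY_LEVELS.index("once a month"):
        return "non-user"
    if rank >= FREQUENCY_LEVELS.index("several times a week"):
        return "user"
    # Assumption: in-between frequencies belong to neither group.
    return None


def assign_condition(rng=random):
    """Randomly pick one of the four experimental cells."""
    return rng.choice(CONDITIONS)
```

Usage experience is measured rather than assigned, which is why it is treated as a quasi-experimental factor, whereas the two competence manipulations are randomized.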

We aimed to collect 344 valid cases in order to reach a power of 80% (α = 0.05) for observing a minimum effect of f² = 0.02 in a MANOVA with eight groups and two dependent variables. We recruited participants via Prolific for an 11-min online study remunerated with £1.40. After exclusion as pre-registered (https://aspredicted.org/qg5m4.pdf), the eligible sample consisted of N = 323 participants (35.3% male, 63.8% female, 0.9% non-binary; aged: M = 39.3, 18–81 years), with n = 167 non-users and n = 156 users.

Procedure

Participants read a short description of a fictional planned update for an existing conversational AI. Within this description, sensorimotor competencies and flexible action patterns were consistently described as improved compared to before the update as follows: “After the update, the voice assistant will have a substantially improved capability to react to more different user requests by executing specific commands. Another improvement is that it will consider characteristics of specific situations more than before (e.g., behave differently depending on where and when the interaction takes place, or who is interacting with it).”

The description of intellectual competencies and meta-cognitive heuristics differed depending on the experimental condition. In the high intellect conditions, intellectual competencies were described as improved after the update by the following description and an additional example (see Supplemental Material) to illustrate the respective competencies: “Besides, after installing the update, the voice assistant will have improved its capabilities to gather key information out of complex input, and to plan actions in advance, and to find innovative solutions.” In contrast, in the low intellect conditions, intellectual competencies were described as not improved by the update using the respective description and the same example to illustrate the lacking competencies: “Yet, after installing the update, the voice assistant will NOT have improved its capabilities to gather key information out of complex input, nor to plan actions in advance, nor to find innovative solutions”.

Similarly, meta-cognitive heuristics were described as improved by the update in the high meta conditions with the following description and an illustrating example: “However, the voice assistant will have improved its capabilities to use information from prior events to solve related tasks, and to develop general strategies for task completion, and to learn from its previous mistakes”. In the low meta conditions, a similar text was used to indicate that the respective competencies were not improved by the update.

After reading the update description, participants were asked about their disclosure to the conversational AI after the described update followed by a manipulation check, and a measure of conversational AI usage.

Measures

Privacy concerns (α = 0.94) and willingness to disclose (α = 0.91) were assessed with the same scales as in Study 2. Manipulation checks consisted of three items each for intellectual competencies (α = 0.78) and meta-cognitive heuristics (α = 0.70). Close care was taken that the wording of the manipulation and the manipulation checks did not overlap.

Results

Manipulation Check

As intended, participants in the high intellect conditions (M = 4.23, SD = 1.19) perceived significantly higher intellectual competencies compared to participants in the low intellect conditions (M = 2.72, SD = 1.07), F(1, 315) = 181.47, p < .001, ηp² = 0.37. Further, participants in the high intellect conditions (M = 4.21, SD = 1.48) also perceived significantly higher meta-cognitive heuristics compared to participants in the low intellect conditions (M = 3.79, SD = 1.42), F(1, 315) = 13.26, p < .001, ηp² = 0.04.

Participants in the high meta conditions perceived intellectual competencies (M = 3.98, SD = 1.34) as higher than participants in the low meta conditions (M = 3.01, SD = 1.22), F(1, 315) = 74.68, p < .001, ηp² = 0.19, and also, as intended, meta-cognitive heuristics (M = 5.05, SD = 0.74) as higher compared to those in the low meta conditions (M = 2.93, SD = 1.23), F(1, 315) = 371.48, p < .001, ηp² = 0.54.

There was no main effect of usage, p = .398. However, a significant meta by usage interaction indicates that the spillover effect of the meta manipulation on perceived intellectual competencies was higher for users compared to non-users, F(1, 315) = 6.73, p = .010, ηp² = 0.02, while no interaction occurred for perceived meta-cognitive heuristics, p = .624. Further, the interaction between the two experimental factors indicates that this spillover effect on intellectual competencies was stronger in the high intellect compared to the low intellect conditions, F(1, 315) = 4.71, p = .031, ηp² = 0.01, but absent for perceived meta-cognitive heuristics, p = .611. Accordingly, both manipulations had the intended effects, but they also had considerably smaller effects on the respective other competence level. Given that effects were substantially larger on the intended level of competence and also the observed interactions were small in effect size, we considered the manipulation successful.
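The partial eta squared values reported with these F tests can be recovered from the F statistic and its degrees of freedom. The following minimal sketch uses a hypothetical helper name, `partial_eta_squared`, and the standard identity ηp² = (F · df_effect) / (F · df_effect + df_error); it is an illustration rather than the authors' analysis code.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# Intellect manipulation on perceived intellectual competencies: F(1, 315) = 181.47
eta_intellect = round(partial_eta_squared(181.47, 1, 315), 2)  # 0.37

# Meta manipulation on perceived meta-cognitive heuristics: F(1, 315) = 371.48
eta_meta = round(partial_eta_squared(371.48, 1, 315), 2)  # 0.54

# Spillover of the intellect manipulation on meta-cognitive heuristics: F(1, 315) = 13.26
eta_spillover = round(partial_eta_squared(13.26, 1, 315), 2)  # 0.04
```

Comparing the intended effects (0.37 and 0.54) with the spillover effects (0.04 and 0.19) on the same scale makes the argument for a successful manipulation easier to follow.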

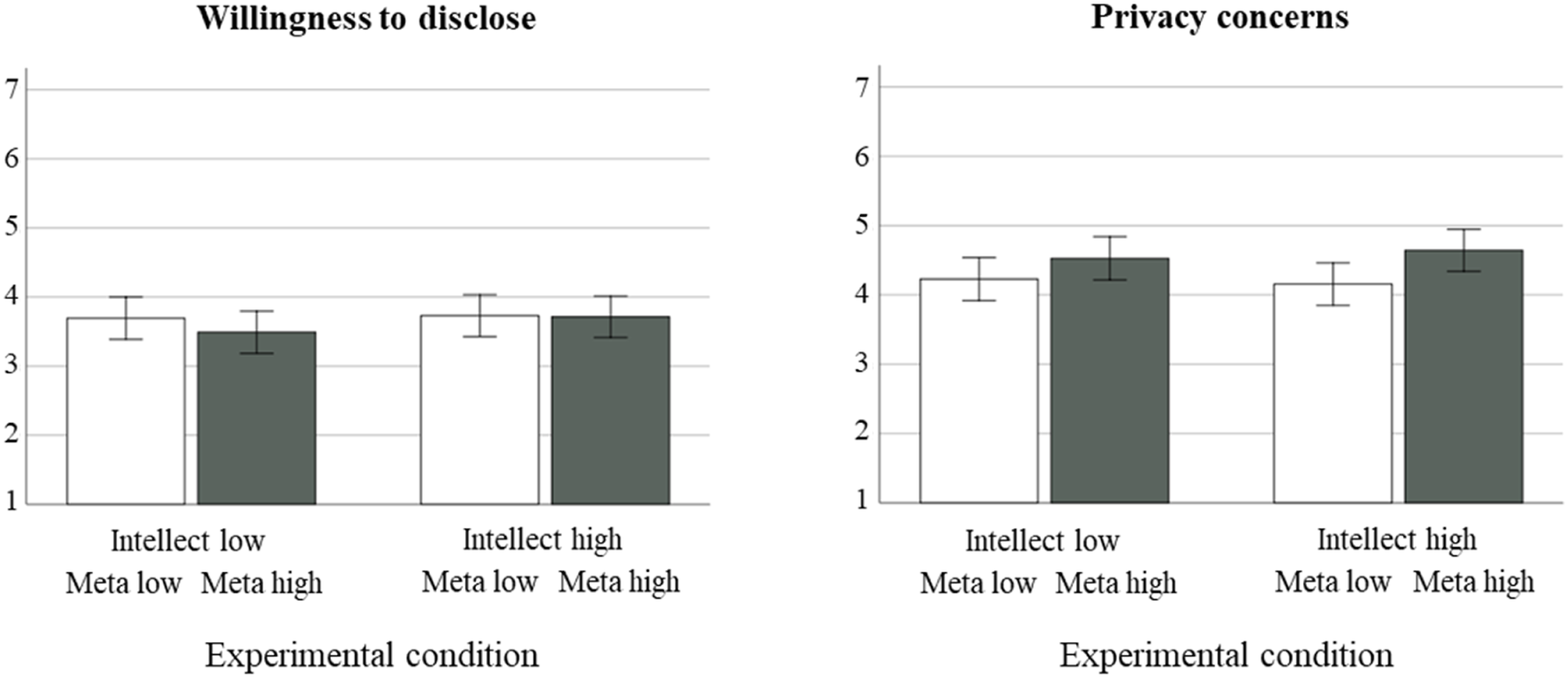

Hypotheses Testing

A MANOVA (pre-registered) with privacy concerns and willingness to disclose as dependent variables revealed significant effects of usage, F(2, 314) = 12.12, p < .001, ηp2 = 0.07, Wilk’s λ = 0.93, and the meta manipulation, F(2, 314) = 4.34, p = .014, ηp2 = 0.03, Wilk’s λ = 0.97, but not of the intellect manipulation nor any of the interactions between these three factors, all ps >.100, see Figure 1. Non-users (M = 4.77, SD = 1.45) reported higher privacy concerns than users (M = 4.01, SD = 1.39), F(1, 315) = 22.68, p < .001, ηp2 = 0.07. Besides, non-users (M = 3.32, SD = 1.49) reported lower willingness to disclose than users (M = 3.99, SD = 1.27), F(1, 315) = 18.39, p < .001, ηp2 = 0.06. Further, participants in the high meta conditions (M = 4.62, SD = 1.49) had higher privacy concerns compared to those in the low meta conditions (M = 4.19, SD = 1.42), F(1, 315) = 6.33, p = .012, ηp2 = 0.02, whereas the meta manipulation did not have an impact on willingness to disclose, F(1, 315) = 0.50, p = .479, ηp2 = 0.00. Privacy concerns and willingness to disclose by experimental condition (Study 3, N = 323). Note. 95% error bars.
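The multivariate statistics reported for the MANOVA are linked by a simple identity when the effect has a single hypothesis degree of freedom, as is the case for each two-level factor here. The following is a standard result stated as a reading aid, not a formula given by the authors; Λ denotes Wilks' lambda (written λ in the text), and df₁ and df₂ are the degrees of freedom of the reported F test.

```latex
% Exact F transformation of Wilks' lambda for an effect with one hypothesis df
F = \frac{1 - \Lambda}{\Lambda} \cdot \frac{df_2}{df_1}
\quad\Longleftrightarrow\quad
\Lambda = \frac{1}{1 + F \, df_1 / df_2}

% Multivariate partial eta squared in the same case
\eta_p^2 = 1 - \Lambda

% Worked check for the usage effect, F(2, 314) = 12.12
\Lambda = \frac{1}{1 + 12.12 \cdot 2 / 314} \approx 0.93,
\qquad \eta_p^2 \approx 0.07
```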

Although there was no significant interaction of any experimental factor with usage, we exploratorily analyzed the subsamples of non-users and users separately because of the differences between the results of Studies 1 and 2. To this end, we computed MANOVAs with privacy concerns and willingness to disclose as dependent variables.

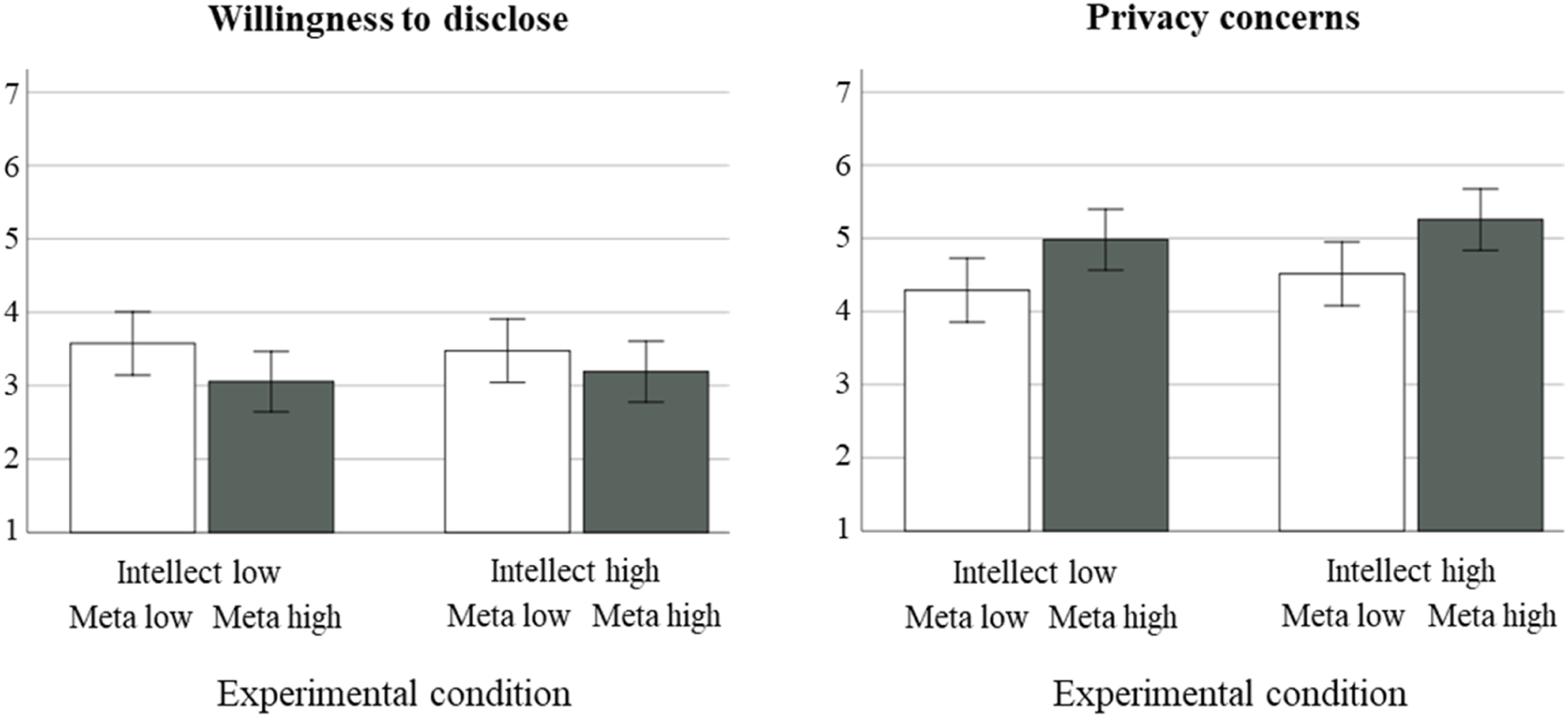

For non-users, the meta manipulation had a multivariate main effect, F(2, 162) = 5.80, p = .004, ηp2 = 0.07, Wilk’s λ = 0.93, but we found neither an intellect main effect, p = .218, nor an interaction, p = .683, see Figure 2. Non-users in the high meta condition reported higher privacy concerns (M = 5.12, SD = 1.36) compared to those in the low meta conditions (M = 4.40, SD = 1.45), F(1, 163) = 10.76, p = .001, ηp2 = 0.06. The willingness to disclose was not affected, p = .083. For users, none of the effects turned out significant, all ps > .290.

Figure 2. Privacy concerns and willingness to disclose by experimental condition in the non-user subsample (Study 3, n = 167). Note. 95% error bars.

Discussion

In this experiment, we observed no effect of the intellect manipulation on either willingness to disclose or privacy concerns, thus not supporting H1a and H1b. The manipulation of meta-cognitive heuristics had no significant effect on willingness to disclose; in the non-user subsample, however, there was a marginal trend (p = .083) that participants in the high meta condition were less willing to disclose. In contrast, the manipulation of meta-cognitive heuristics significantly affected participants’ privacy concerns. The effect observed in the overall sample was driven by the non-users, whereas the effect was non-significant in the user subsample. Accordingly, the results support H2b for non-users but not for users. In order to (1) validate the effect of meta-cognitive heuristics on privacy concerns for non-users and (2) test again for a potential effect of intellectual competencies, we conducted Study 4.

Study 4

Method

Design and Participants

The study was implemented as a 2 (intellectual competencies: low vs. high) × 2 (meta-cognitive heuristics: low vs. high) between-subjects design. We aimed to collect 351 valid cases in order to reach a power of 80% (α = 0.05) for observing a minimum effect of f = 0.15 in an ANOVA with four groups. We recruited non-users of conversational AI via the Clickworker platform for an 11-min online study remunerated with 1.60€. After the pre-registered exclusion (https://aspredicted.org/39en3.pdf), the eligible sample consisted of N = 253 participants (45.1% male, 53.4% female, 1.2% non-binary, one participant preferring not to indicate their gender; aged: M = 39.8, 18–72 years).
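The target sample size can be sanity-checked with a back-of-the-envelope calculation. The sketch below is an assumption-laden illustration, not the authors' power analysis: it uses a normal approximation for a single-degree-of-freedom effect instead of the exact noncentral F distribution, and the function name `approx_total_n` is hypothetical. Because of the approximation, the result can be expected to fall slightly below an exact computation.

```python
import math
from statistics import NormalDist


def approx_total_n(f: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate total N for detecting a 1-df effect of size Cohen's f.

    Normal approximation: the noncentrality parameter is lambda = f**2 * N,
    and power is roughly Phi(sqrt(lambda) - z_{1 - alpha/2}).
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_power = NormalDist().inv_cdf(power)          # quantile for the target power
    return math.ceil(((z_alpha + z_power) / f) ** 2)


# Parameters stated in the text: f = 0.15, alpha = .05, power = 80%.
n_approx = approx_total_n(0.15)
print(f"Approximate total sample size: {n_approx}")
```

An exact calculation based on the noncentral F distribution, as implemented in dedicated power analysis software, additionally accounts for the error degrees of freedom of the four-group design.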

Procedure

The procedure was similar to Study 3 with the following change: the experimental manipulation was implemented via a description of a newly developed conversational AI (vs. an update for an existing one in Study 3) as described in the following.

Participants read a short scenario in which a friend of theirs had recently bought a new conversational AI and would now tell them about their experience using it. Similar to Study 3, competencies on the two lower levels (i.e., sensorimotor level and flexible action patterns) were always described as high. Depending on the experimental condition, intellectual competencies were described as either low or high (e.g., in the low intellect condition by the text “However, the voice assistant is incapable of dealing with incomplete information and it cannot anticipate potential problems or find innovative solutions.” and an illustrating example) and similarly meta-cognitive heuristics were described as either high or low (e.g., in the low meta condition by the text “In addition, I realized that the voice assistant does not learn from mistakes and cannot use information from prior events to solve related tasks. The voice assistant is, thus, incapable to develop abstract strategies based on previous incidents.” and an illustrating example).

Measures

Privacy concerns (α = 0.92) and willingness to disclose (α = 0.92) were assessed with the same scales as in the previous studies. Manipulation checks for competencies on the intellectual level (α = 0.70) and competencies on the level of meta-cognitive heuristics (α = 0.74) again consisted of three items each, which were not part of the description of the conversational AI used for the experimental manipulation in this study.

Results

Manipulation Check

As intended, participants in the high intellect conditions (M = 4.52, SD = 1.11) perceived significantly higher intellectual competencies compared to the low intellect condition (M = 3.73, SD = 1.22), F(1, 249) = 39.45, p < .001, ηp2 = 0.14, but did not differ in their perception of meta-cognitive heuristics, p = .133. Participants in the high meta conditions perceived intellectual competencies (M = 4.72, SD = 1.03) as higher than participants in the low meta conditions (M = 3.56, SD = 1.14), F(1, 249) = 82.56, p < .001, ηp2 = 0.25, and also meta-cognitive heuristics (M = 5.55, SD = 0.72) as higher compared to the low meta conditions (M = 3.59, SD = 1.25), F(1, 249) = 234.20, p < .001, ηp2 = 0.49. Accordingly, the manipulation of meta-cognitive heuristics spilled over to the perception of intellectual competencies. Nonetheless, effect sizes show that its effect on the perception of meta-cognitive heuristics was substantially larger.

Hypotheses Testing

A MANOVA with privacy concerns and willingness to disclose as dependent variables indicated no significant multivariate effect of the intellect manipulation, p = .681, but of the meta manipulation, F(2, 248) = 3.54, p = .030, ηp2 = 0.03, Wilk’s λ = 0.97. The interaction of the two experimental factors was non-significant, p = .256. Participants in the high meta conditions were less willing to disclose information to the conversational AI (M = 3.39, SD = 1.49) than participants in the low meta conditions (M = 3.86, SD = 1.45), F(1, 249) = 6.14, p = .014, ηp2 = 0.02. Further, participants in the high meta conditions (M = 4.89, SD = 1.52) had more privacy concerns than participants in the low meta conditions (M = 4.43, SD = 1.30), F(1, 249) = 6.29, p = .013, ηp2 = 0.03.

Discussion

The manipulation inducing higher meta-cognitive heuristics led to less willingness to disclose (different from Study 3, where we observed no significant effect in the overall sample but a marginal effect in the non-user sample) and more privacy concerns (as in Study 3), supporting H2a and H2b.

As in Study 3, Study 4 again showed no support for an effect of intellectual competencies on willingness to disclose (H1a) or privacy concerns (H1b). To ensure that this lack of effect is not due to the lower salience of the intellect manipulation compared to the meta-cognitive heuristics (due to the earlier manipulation in the study process), we conducted Study 5 in which we excluded the meta manipulation and, thus, made the intellect manipulation more salient.

Study 5

Method

Design and Participants

In large part, Study 5 was a conceptual replication of Study 4. However, it was implemented as a one-factorial (intellectual competencies: low vs. high) between-subjects design, and meta-cognitive heuristics were not manipulated.

We aimed to collect 486 valid cases in order to reach a power of 80% (α = 0.05) in a MANOVA with two groups, one independent and two dependent variables. Participants were recruited via Prolific for an 8-min online study remunerated with £1.00 following the same pre-screening procedure as in Study 3. After exclusion as pre-registered (https://aspredicted.org/947b9.pdf), the eligible sample consisted of N = 471 participants (31.4% male, 68.2% female, 0.4% non-binary; aged: M = 38.7, 18–79 years).

Procedure and Measures

The procedure was identical to Study 4 with the following changes: (1) for the experimental manipulation, we kept the description of a newly developed conversational AI but used an illustrating example for the described intellectual competencies similar to the one used in Study 3 and (2) we did not manipulate meta-cognitive heuristics.

Measures were identical to Study 3: privacy concerns (α = 0.93), willingness to disclose (α = 0.90) and the manipulation check for intellectual competencies (α = 0.84) had good internal consistency.

Results

As intended, perceived intellectual competencies were lower in the low intellect condition (M = 2.82, SD = 1.10) compared to the high intellect condition (M = 4.82, SD = 0.91), t(446.08) = −21.37, p < .001.

A MANOVA with privacy concerns and willingness to disclose as dependent variables indicated no multivariate effect for the intellect manipulation, F(2, 468) = 1.58, p = .207, ηp2 = 0.01, Wilk’s λ = 0.99. Accordingly, there were no differences regarding willingness to disclose information between the high intellect (M = 3.32, SD = 1.55) and the low intellect condition (M = 3.20, SD = 1.49), F(1, 469) = 0.79, p = .374, ηp2 = 0.00. Privacy concerns also did not differ between the high intellect (M = 5.04, SD = 1.46) and the low intellect condition (M = 4.98, SD = 1.42), F(1, 469) = 0.22, p = .643, ηp2 = 0.00.

Discussion

Similar to Studies 3 and 4, Study 5 again showed no effect of the intellect manipulation on either willingness to disclose information or privacy concerns, thus not supporting H1a and H1b.

Results Across Studies

We conducted meta-analyses across all data to test the robustness of the effects and check for potential differences between non-users and users across studies. For this purpose, we transformed t-values of β-regression coefficients in Studies 1 and 2 as well as F-values from simple comparisons in Studies 3–5 into r separately for non-users and users for all studies—including Study 1 in which users and non-users were not reported separately above. Due to the small sample size in this study, the respective confidence intervals are rather wide.
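The conversion of test statistics into r and their aggregation can be illustrated with a short sketch. It is not the authors' procedure: the text does not state which meta-analytic model was used, so the fixed-effect pooling of Fisher-z-transformed correlations below is an assumption, as are the function names. The inputs are the two univariate F tests of the meta manipulation on non-users' privacy concerns reported for Studies 3 and 4, so the pooled value covers only these two experiments and is not meant to reproduce the estimates reported in this section.

```python
import math


def r_from_t(t_value: float, df: float) -> float:
    """Convert a t statistic into r; the sign of t is preserved."""
    return t_value / math.sqrt(t_value ** 2 + df)


def r_from_f(f_value: float, df_error: float, sign: int = 1) -> float:
    """Convert an F statistic with one numerator df into r.

    F carries no direction, so the sign must be supplied from the group means.
    """
    return sign * math.sqrt(f_value / (f_value + df_error))


def pool_fisher_z(effects: list[tuple[float, int]]) -> tuple[float, float]:
    """Fixed-effect pooling of (r, n) pairs via Fisher's z (assumed model).

    Returns the pooled correlation and the z statistic of the pooled effect.
    """
    weights = [n - 3 for _, n in effects]  # inverse variance of Fisher's z
    z_bar = sum(w * math.atanh(r) for (r, _), w in zip(effects, weights)) / sum(weights)
    z_stat = z_bar * math.sqrt(sum(weights))
    return math.tanh(z_bar), z_stat


# Meta manipulation -> privacy concerns, non-users only (values from the text).
r_study3 = r_from_f(10.76, 163)  # Study 3 non-user subsample, n = 167
r_study4 = r_from_f(6.29, 249)   # Study 4, N = 253
pooled_r, pooled_z = pool_fisher_z([(r_study3, 167), (r_study4, 253)])
print(f"r (Study 3) = {r_study3:.2f}, r (Study 4) = {r_study4:.2f}")
print(f"pooled r = {pooled_r:.2f}, z = {pooled_z:.2f}")
```

A random-effects model would additionally estimate between-study heterogeneity and typically yields wider confidence intervals when the studies disagree.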

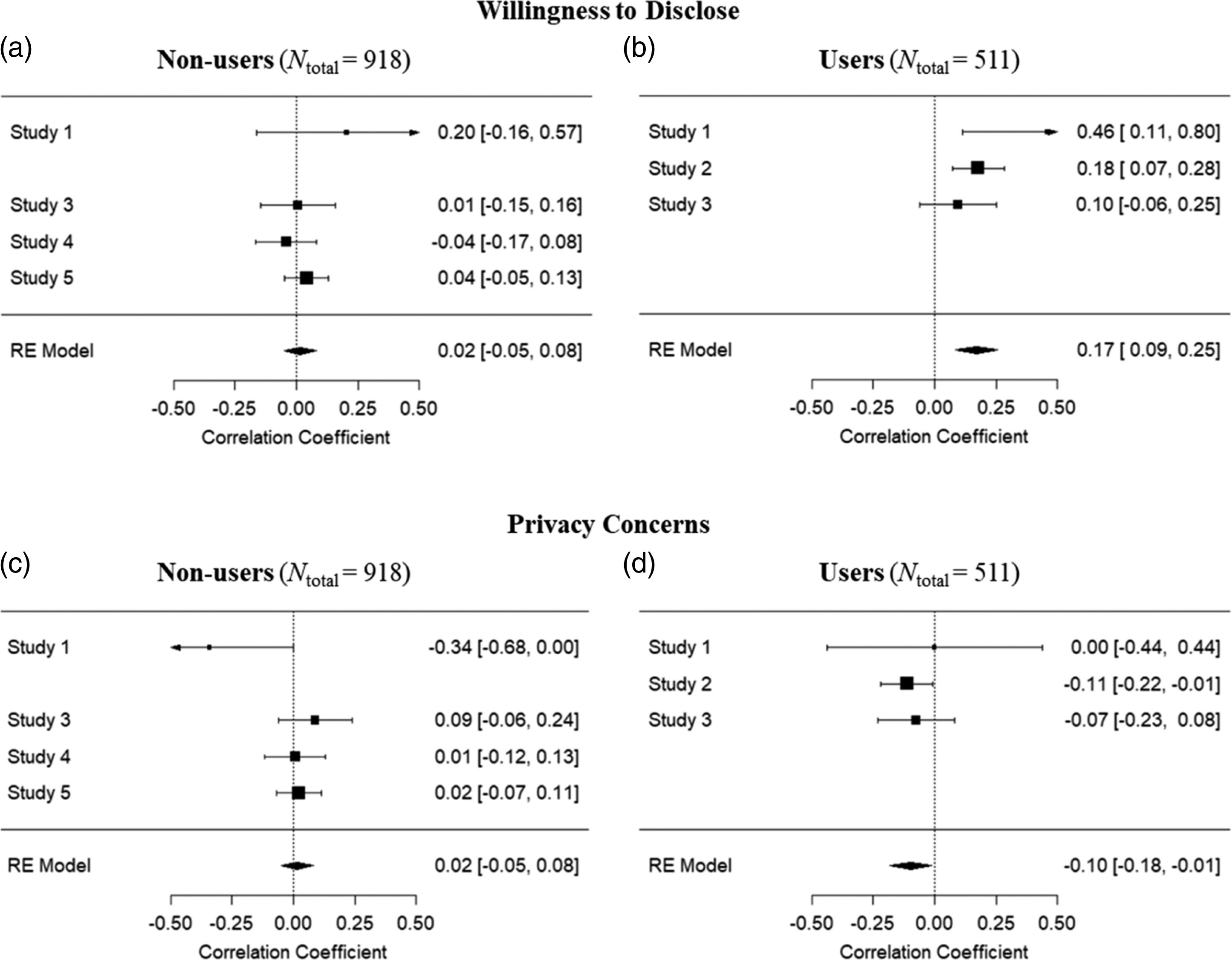

We expected that higher intellectual competencies would predict more willingness to disclose information (H1a). For non-users, this prediction was not supported, r = .02, z = 0.51, p = .609 (Figure 3(a)). In contrast, users were more willing to disclose information if perceiving higher intellectual competencies, r = .17, z = 3.98, p < .001 (Figure 3(b)). Of note, as this effect was only significant in Studies 1 and 2 but not in Study 3, experimental support for H1a is also lacking for the user subsample.

Figure 3. Results of meta-analyses across studies for the effect of intellectual competencies on willingness to disclose (H1a) and privacy concerns (H1b) in the non-user (N = 918) and user (N = 511) samples. The figure shows results for (a) willingness to disclose of non-users, (b) willingness to disclose of users, (c) privacy concerns of non-users, and (d) privacy concerns of users.

We further expected that higher intellectual competencies would predict fewer privacy concerns (H1b). This hypothesis was likewise not supported for non-users, r = .02, z = 0.52, p = .605 (Figure 3(c)), but it was supported for users, r = −.10, z = −2.21, p = .027 (Figure 3(d)).

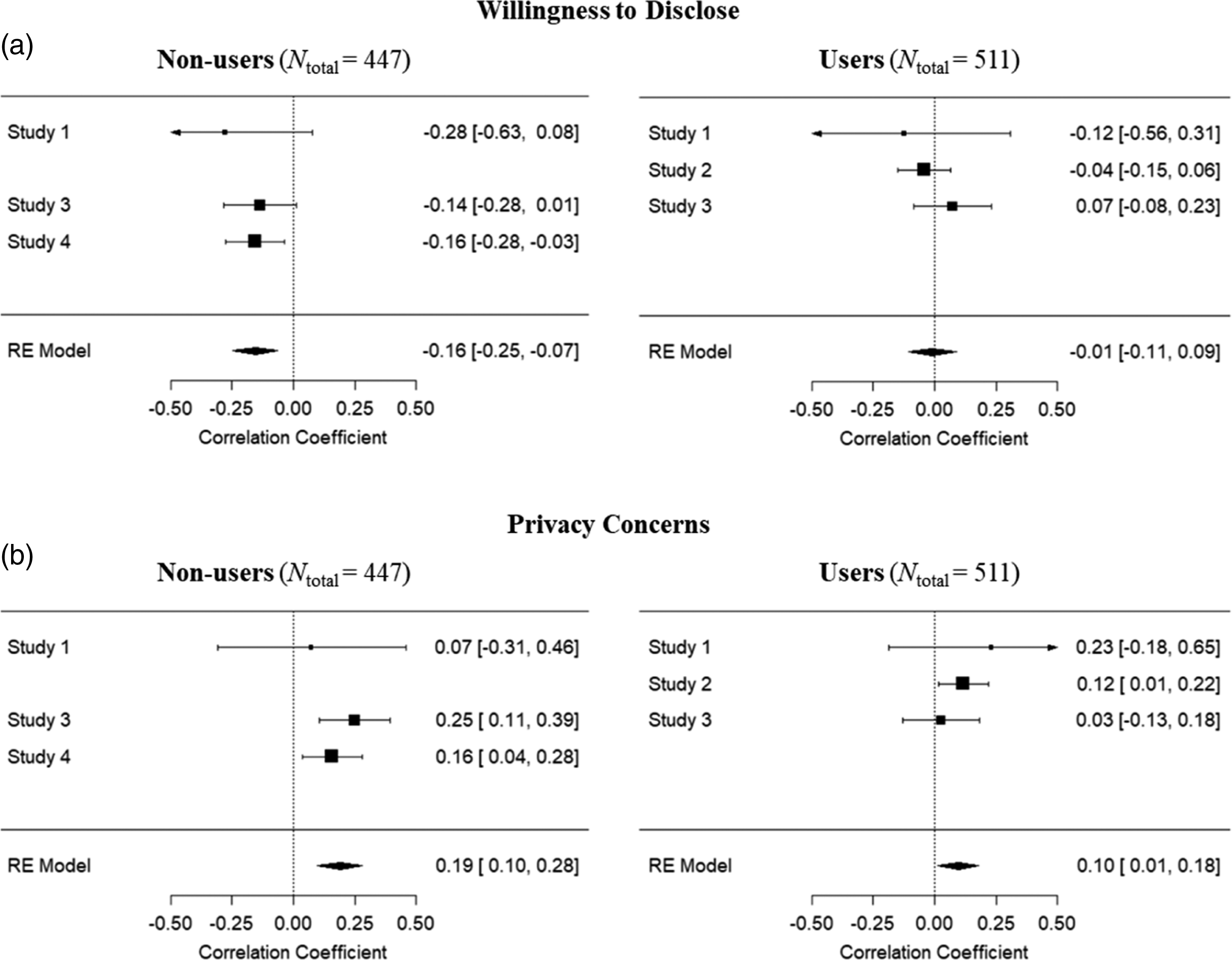

Moreover, we assumed that higher meta-cognitive heuristics would predict less willingness to disclose (H2a). This hypothesis was supported across studies for non-users, r = −.16, z = −3.37, p = .001, but not for users, r = −.01, z = −0.18, p = .858 (Figure 4(a)).

Figure 4. Results of meta-analyses across studies for the effect of meta-cognitive heuristics on (a) willingness to disclose (H2a) and (b) privacy concerns (H2b) in the non-user (N = 447) and user (N = 511) samples.

Lastly, we assumed that higher meta-cognitive heuristics would predict higher privacy concerns (H2b). As shown in Figure 4(b), this effect was found for both non-users and users. Notably, the estimated overall effect size was larger for non-users, r = .19, z = 4.13, p < .001, than for users, r = .10, z = 2.23, p = .026.

To sum up, the meta-analytical results suggest that intellectual competencies of a conversational AI led to a higher willingness to disclose and lower privacy concerns for users, whereas no effect of these competencies was observable for non-users. Meta-cognitive heuristics in a conversational AI led to more privacy concerns for both non-users and users. However, they led to a lower willingness to disclose only for non-users; for users, this willingness was not affected by meta-cognitive heuristics.

General Discussion

The present research tested whether perceived competencies of conversational AI affect people’s disclosure in terms of privacy concerns and willingness to disclose. Focusing on participants’ subjective perception of human-like competencies by applying the action regulation theory (Hacker & Sachse, 2014; Semmer & Frese, 1985), we followed the idea that technologies are perceived and treated as social actors (Reeves & Nass, 1996) and are ascribed human-like characteristics (Epley et al., 2007).

We predicted that different competencies of conversational AI would have different effects on disclosure. In detail, higher intellectual competencies in a conversational AI should heighten the willingness to disclose (H1a) and lessen privacy concerns (H1b), whereas meta-cognitive heuristics should lessen willingness to disclose (H2a) and heighten privacy concerns (H2b). As differences between users and non-users became evident within the research process and are highly plausible (as discussed below), we differentiated between those two groups.

Intellectual Competencies

For non-users, intellectual competencies of conversational AI affected neither their willingness to disclose nor their privacy concerns. For users, the results suggest that intellectual competencies heighten their willingness to disclose and lessen their privacy concerns, although we could not validate these effects in an experimental setting (Study 3) and, thus, the causality of the effects is not ensured by our data.

The finding that intellectual competencies in a conversational AI are positively related to users’ disclosure is in line with prior research showing that people are willing to share their personal data in exchange for benefits. Further, the finding adds to the literature of positive effects of higher (perceived) competencies on technology acceptance (e.g., Dehghani, 2018; McLean & Osei-Frimpong, 2019; Pitardi & Marriott, 2021) by demonstrating a similar effect on users’ disclosure. It is, nonetheless, noteworthy that we did not observe the expected effect for non-users of conversational AI. It might be the case that the benefits of intellectual competencies were less clear and salient to non-users and did, thus, not have the same effect as for users. At this point, this is however speculative and should be tested in further research.

Meta-Cognitive Heuristics

As expected, meta-cognitive heuristics reduced non-users’ willingness to disclose (although the effect was significant only in Study 4, the overall pattern of observed effects clearly supports this notion) and heightened their privacy concerns. These findings support previous research suggesting that technical systems can be perceived as too competent—especially when it comes to human-like competencies. Adding to previous literature, we observed that too high competencies do not only lead to feelings of eeriness and perceptions of threat (Stein et al., 2019, 2020; Złotowski et al., 2017) but also to concrete concerns about privacy and a reduced willingness to disclose in the specific case of conversational AI.

However, meta-cognitive heuristics did not affect users’ willingness to disclose. Across studies, meta-cognitive heuristics were associated with heightened privacy concerns for users. Given that this relation was significant only in the correlational Study 2, it remains unclear whether there is a causal effect. In sum, meta-cognitive heuristics have, if at all, a small effect on users’ privacy concerns. It is plausible that for users (in comparison to non-users), benefits due to a conversational AI’s heightened competencies on the level of meta-cognitive heuristics are again more obvious and salient and, thus, also more likely to outweigh the perceived negative aspects of information sharing (e.g., higher privacy concerns) in a privacy trade-off. As previous research has further shown a gap between disclosure intentions and actual disclosing behavior (e.g., Norberg et al., 2007), the differences between privacy concerns and actual disclosure might be even larger than the ones observed in our studies focusing on willingness to disclose.

Besides, the findings regarding meta-cognitive heuristics are consistent with another mechanism: high human-like competencies in a technical system might be concerning in the first place (as demonstrated for the non-users) but with ongoing usage, people might become accustomed to these high capabilities and, thus, be less concerned about them. With the ongoing technological progress, especially in the field of AI, this mechanism suggests that particularly users should be sensitized to potential privacy risks associated with the usage of technical systems, as they might lose caution over time.

Strengths and Limitations

Our findings are based on large samples from several studies (including both survey and experimental data) and allow insight into both users’ and non-users’ disclosure towards conversational AI. The results indicate that the proposed systematic differentiation of competencies was informative to disentangle the consequences of perceived human-like competencies on disclosure towards conversational AI. Thus, the proposed systematic differentiation of perceived competencies can be a starting point for future research focusing, for instance, on the effects of these competence levels on other aspects of acceptance (e.g., trust, believability) as well as usage (e.g., adoption, usage frequency, usage purposes) for conversational AI or other highly competent (AI) technologies.

Despite the aforementioned strengths of our studies, some limitations should be considered when interpreting the results. Our experimental studies were based on vignette descriptions rather than real interaction with the conversational AI. This approach comes close to capturing responses to information about technology updates. However, as users already have the regular experience of interacting with conversational AI, it was considerably harder to mislead them about the system’s competencies by using vignette descriptions. Here, the survey data gave valuable insights especially for the users: while their interaction experience with conversational AI potentially reduced the effectiveness of experimental manipulations, it also enabled them to answer survey questions more validly (e.g., they probably have a more realistic estimate of their information sharing). Besides, the experimental manipulation of conversational AI competencies in a real interaction is challenging: to enable a system to make use of meta-cognitive heuristics (e.g., developing general strategies based on previous interactions), regular interaction over a longer period of time would be necessary. We thus encourage longitudinal research in order to investigate how the effects of perceived competencies develop and change within a longer usage period.

Further, we relied on self-reported disclosure intentions but did not assess actual disclosing behavior. Although intentions are generally good predictors of behavior, future research should complement our findings by applying the proposed framework to investigate actual disclosing behavior.

Conclusion

Building on the idea that technologies are anthropomorphized, perceived, and treated as social actors (Epley et al., 2007; Reeves & Nass, 1996), the present research investigated the effects of perceived human-like competencies in a conversational AI on users’ and non-users’ disclosure. In a nutshell, the results indicate that non-users are concerned about their privacy and do not want to share personal information with highly competent conversational AI (i.e., using meta-cognitive heuristics). In contrast, users are willing to share personal information in exchange for intellectual competencies of the technology. Even though meta-cognitive heuristics in a conversational AI slightly heightened privacy concerns also for users, these competencies did not reduce their willingness to share information with the system. Thus, the presented findings highlight a tension: on the one hand, users can benefit from higher competencies of a conversational AI and are willing to share personal data in exchange. On the other hand, high competencies are perceived as concerning—especially for people with little interaction experience with the system.

Supplemental Material

Supplemental Material for The More Competent, the Better? The Effects of Perceived Competencies on Disclosure Towards Conversational Artificial Intelligence by Miriam Gieselmann and Kai Sassenberg in Social Science Computer Review

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
