Abstract

The grid question refers to a table layout for a series of survey question items (i.e., sub-questions) with the same introduction and identical response categories. Because of their complexity, concerns have already been raised about grids in web surveys on PCs, and these concerns have heightened regarding mobile devices. Some studies suggest decomposing grids into item-by-item layouts, while others argue that this is unnecessary. To address this challenge, this paper provides a comprehensive evaluation of the grid layout and four item-by-item alternatives, using 10 response quality indicators and 20 survey estimates. Results from the experimental web survey (n = 4644) suggest that item-by-item layouts (unfolding or scrolling) should be used instead of grids, not only on mobile devices but also on PCs. While the former justifies the already increasing use of item-by-item layouts on mobile devices in survey practice, the latter implies that the prevailing routine of using grids on PCs should be reconsidered.

Introduction

In the context of survey questions, the term grid refers to a specific layout used for a series of items (i.e., sub-questions) sharing the same introduction and identical response categories (Callegaro et al., 2015). Grid questions are also referred to as matrix questions (Grady et al., 2018) or a battery of items (Saris & Gallhofer, 2014). Compared with the sequence of stand-alone questions (i.e., an item-by-item layout), the grid is arranged in a tabular format, usually with items in rows and response categories in columns. This eliminates the need to repeat the introduction and response categories for each item, reducing the overall word count and layout space.

For PC web surveys, the literature (e.g., Callegaro et al., 2017; Couper et al., 2013; de Leeuw et al., 2012; Vehovar & Batagelj, 1996) has already warned that the corresponding PC grid may increase item nonresponse, breakoffs, and satisficing behavior (for a discussion of satisficing, see Roberts et al., 2019). Accordingly, some researchers have suggested avoiding grids (Callegaro et al., 2015; Dillman et al., 2014; Gräf, 2002; Poynter, 2001; Wojtowicz, 2001). However, some studies have found no negative effects of using grids (e.g., Callegaro et al., 2009; Couper et al., 2001). In addition, two potential benefits of grids have been identified: shorter completion times (de Leeuw et al., 2012; Toepoel et al., 2009) and higher interitem consistency (Mavletova et al., 2018; Tourangeau et al., 2004; Yan, 2005). Given the mixed research findings, grids remain popular in PC web surveys, and optimizing the grid layout has become a research challenge (e.g., Couper, 2008, pp. 190–209; Couper et al., 2013).

When web surveys are answered from mobile devices, we talk about mobile web surveys. Here, the small screens raise additional questions about usability and methodology. To some extent, this is addressed with web survey software that automatically adjusts font size, graphical elements, and spacing between elements (Antoun, Katz, et al., 2018; Macer, 2011). This is referred to in the literature as mobile optimization, mobile-friendly design, or adaptive or responsive design (see Mavletova et al., 2018). When the corresponding optimization is applied to grids, we denote them as mobile grids. This optimization is not uniform but varies across web survey software. However, it contrasts with the nonoptimized mobile grids, where the PC grid layout is displayed on a mobile device without any adjustments.

Similar to PC web surveys, research on grids in mobile web surveys is inconclusive. Some studies claim that grids amplify the problems of PC web surveys (e.g., Antoun, Katz, et al., 2018; Čehovin & Berzelak, 2020), while others find almost no impact (e.g., Revilla et al., 2017; Tourangeau et al., 2017). The discrepancies may be due in part to differences in aspects and settings of the studies. Nonetheless, this points to a research gap that is also of great importance for survey practice; this paper aims to fill this gap. Thus, the added value of this study is to examine the effects of grids in a comprehensive research setting. Using 10 response quality indicators (RQIs) and 20 survey estimates, a grid layout and four main item-by-item alternatives are systematically evaluated on PCs and mobile devices. The intent of this study is also to provide practical guidance on when and how grids should be used.

We note that we assume well-designed grids that follow the recommendations of relevant textbooks (e.g., Callegaro et al., 2015; Couper, 2008; Dillman et al., 2014). Among other things, this means that the number of rows (items) and columns (response categories) is limited. A small or large number of rows or columns or various other specifics certainly has additional effects, but these are beyond the scope of this study, which focuses on typical grids that prevail in social science research practice (i.e., grids with 5-point scales, with attitudinal items, and in the context of longer surveys [e.g., 20 minutes]).

Literature Review

The advocacy of grids arose in the context of paper-based questionnaires because of the obvious space savings. However, there is little research evidence that this would improve response quality. On the contrary, some studies even point to disadvantages for response quality (e.g., Chesnut, 2008). Moreover, the Internet has radically changed the way respondents interact with questions, so the advantages of grids in paper-based questionnaires (if they exist) may disappear in web surveys.

Grids in PC Web Surveys

Since the inception of (PC) web surveys, concerns have been raised about grids, which have been associated with higher rates of breakoffs and item nonresponse (Vehovar & Batagelj, 1996) and increases in nonsubstantive responses, extreme responses, and straightlining (e.g., Callegaro et al., 2015; Dillman et al., 2014). Grids also made it less likely for respondents to notice reverse-worded items (Tourangeau et al., 2004). However, many studies found these effects statistically nonsignificant (e.g., Couper et al., 2013; Revilla et al., 2017). Still, there is almost no research that has demonstrated the benefits of grids, with the exception of some very specific studies (e.g., Couper et al., 2001).

Sometimes, however, the research has revealed a specific advantage of grids in terms of response speed (e.g., Couper & Peterson, 2017; de Bruijne & Wijnant, 2013) although this has not been confirmed in some other studies (e.g., Bell et al., 2001; Ha & Zhang, 2018; Revilla & Couper, 2018). Nevertheless, it is also unclear whether reduced response times actually improve response quality, as speeding can lower cognitive effort and response quality (e.g., more missing data).

A similar controversy exists for interitem consistency in the case of items measuring the same underlying dimension. Namely, interitem consistency is reportedly higher in grids (e.g., Couper et al., 2001; Silber et al., 2018; Tourangeau et al., 2004), although some studies have found little or no difference (e.g., Bell et al., 2001; Toepoel et al., 2009; Yan, 2005). Again, the question is whether this increase truly improves response quality. Peytchev and Tourangeau (2005) found that construct validity was higher in item-by-item layouts than in grids, despite higher interitem consistency in grids, which could be increased artificially due to nondifferentiation in grids (e.g., Callegaro et al., 2017; de Leeuw et al., 2012; Silber et al., 2018). Some research suggests that only the same-direction item scales increase interitem consistency in grids (e.g., Tourangeau et al., 2004; Weijters et al., 2013), while with reverse wording, these effects disappear (e.g., Bell et al., 2001; Callegaro et al., 2009). We lack studies showing that interitem consistency in grids is also higher when the scale direction of the items is varied.

Regarding subjective evaluation, respondent satisfaction decreased when the number of items (4, 10, and 40 items) per grid was increased (Toepoel et al., 2009). Similarly, de Leeuw et al. (2012) and Mavletova et al. (2018) found that grids had negative effects on subjective evaluations. However, some studies (e.g., Callegaro et al., 2009) found no difference. Nevertheless, there is no evidence that grids increase respondent satisfaction.

Grids in Mobile Web Surveys

Small screen sizes in mobile web surveys present further challenges for grids (e.g., Antoun, Conrad, et al., 2018; Peterson et al., 2017; Struminskaya et al., 2015). Early studies have documented the negative effects of nonoptimized mobile grids (see Arthur et al., 2014; Lambert & Miller, 2015; Richards et al., 2016; Tourangeau et al., 2017), leading to the clear avoidance of such grids (e.g., Antoun, Katz, et al., 2018; Čehovin & Berzelak, 2020). Therefore, in the following sections, we only discuss mobile grids, which are optimized, as defined in the introduction.

Antoun, Katz, et al. (2018) have elaborated general principles for mobile web survey design, which require that the questionnaire layouts on mobile devices differ from that on PCs. This is largely true for grids as well. However, despite the adaptations on mobile devices, studies often report (see Antoun, Katz, et al., 2018; Čehovin & Berzelak, 2020) that grids increase item nonresponse, breakoffs, and satisficing behavior (e.g., McGeeney, 2015; Stern et al., 2016). Nevertheless, similar to the PC setting, some studies found no compelling evidence that item-by-item alternatives are superior (e.g., Ha & Zhang, 2018; Revilla & Couper, 2018), reviving the impression of inconclusive results. Conversely, the apparent advantages of grids in speed and interitem consistency from PCs also reappear on mobile devices (e.g., Liu & Cernat, 2018; Mavletova et al., 2018; Richards et al., 2016).

Regarding survey estimates, studies generally found rare, negligible, or nonsignificant differences when grids were compared to item-by-item layouts on PCs (e.g., Couper et al., 2013; Thorndike et al., 2009) or mobile devices (e.g., Liu & Cernat, 2018; Mockovak, 2018).

Grid and Item-by-Item Layout Decompositions

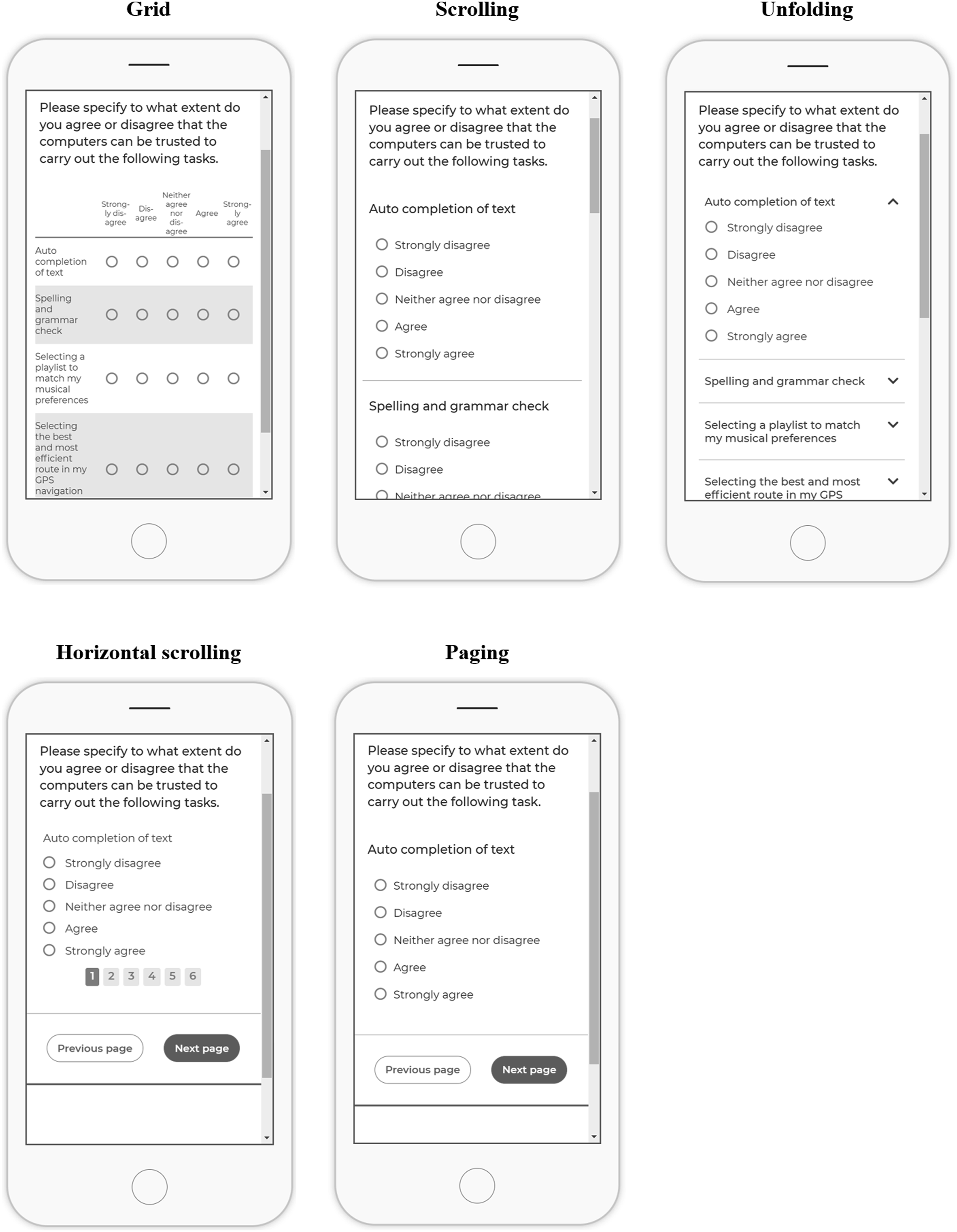

Figure 1. Screenshot examples of mobile grids and four item-by-item layout alternatives on mobile devices.

In item-by-item decompositions, the response categories are repeated for each item, so the main grid simplification is lost. The grid can be decomposed in several ways; the four main alternatives described below have been identified according to their occurrence in the literature (see Čehovin & Berzelak, 2020). Figure 1 illustrates the corresponding layouts for mobile devices (for PC screenshots, see Figures A.1–A.5 in Online Supplement):

The mobile grid squeezes the PC grid layout into the smaller screen of the mobile device and optimizes the appearance. In some situations, as in Figure 1, this also eliminates the need for horizontal scrolling or zooming.

Vertical scrolling, or simply scrolling, displays items sequentially on the same page. Respondents scroll vertically to navigate through the items, requiring more scrolling compared to a grid. This layout is dominant in the literature among item-by-item alternatives (e.g., Couper & Peterson, 2017; Grady et al., 2018; Mavletova et al., 2018; Revilla et al., 2017; Revilla & Couper, 2018).

Unfolding displays items vertically on the same page. However, only the current item is fully displayed with response options (i.e., unfolded), while for others, only the item statement is displayed. After an item is answered, an automatic forwarding unfolds the next item, while the answered item collapses. Respondents can navigate without scrolling or clicking, so the number of interactions is the same as with the grid. This layout is sometimes called a “concertina” or “accordion” design (e.g., Knibbs & Stobart, 2018; Mavletova et al., 2018).

Horizontal scrolling displays items on separate subpages and uses automatic forwarding, allowing respondents to move through items without scrolling or clicking. Additional navigation is provided by a horizontal list of item numbers at the top or bottom of the screen. This layout is also referred to as a “horizontal scrolling matrix” (e.g., Callegaro et al., 2015; de Leeuw et al., 2012; Klausch et al., 2012) or a “dynamic grid” (e.g., Hanson, 2017).

Paging resembles scrolling, but it places items on separate pages, requiring additional clicks after each item. Furthermore, the introduction is often repeated for each item. This layout is expected to increase harmonization across survey modes and devices (Villar et al., 2018) and is frequently used in research on grids (e.g., Couper & Peterson, 2017; Peytchev & Hill, 2010; Stern et al., 2016; Tourangeau et al., 2004).

Methods

In the context discussed above, the empirical study evaluates the grid and four item-by-item decompositions from the perspectives of response quality and survey estimates, both for PCs and mobile devices. The research questions are as follows:

RQ1: What is the effect of the layouts on response quality?

RQ2: What is the effect of the layouts on survey estimates?

RQ3: Should grids (always) be decomposed, and if yes, how?

Regarding mobile devices, the empirical study focuses only on smartphones, hereafter referred to as SP, as other mobile devices have a negligible share (e.g., gaming consoles) or behave like an inconsistent mix of PCs and smartphones (e.g., tablets; see Peterson et al., 2017). As mentioned, we only consider well-designed grids and 5-point ordinal scales measuring attitudes (e.g., rating scales), although some grids addressing behaviors (e.g., frequency of online activities) are also included.

Experimental Design and Data Collection

Respondents were randomly assigned to five experimental cells (grids and four item-by-item layouts), where the effects of the layouts on response quality and estimates were evaluated. The study assessed the cumulative effects of the grids on the entire questionnaire rather than the effects of the individual grid.

Respondents were recruited from the largest Slovenian access panel in January–February 2020, while the data collection itself ran at the University of Ljubljana using 1KA software, which was also adapted for paradata collection. A total of 11,169 panelists were invited (initial e-mail and follow-up; see Section 4 of Online Supplement for details), and 4771 (43%) clicked on the web questionnaire. Tablet users (n = 127) were excluded. Of the remaining 4644 units, 4058 completed the questionnaire, and 713 were breakoffs, of which 197 provided no responses. Respondents self-selected the device, with 2516 (54%) responding on PCs and 2128 (46%) on smartphones. Once the respondent started the survey, the device could not be changed, and the survey had to be completed in a single session. Soft reminders were used when respondents attempted to skip items; thus, they were allowed to proceed without answering. The median duration was 20.6 minutes.

Questionnaire

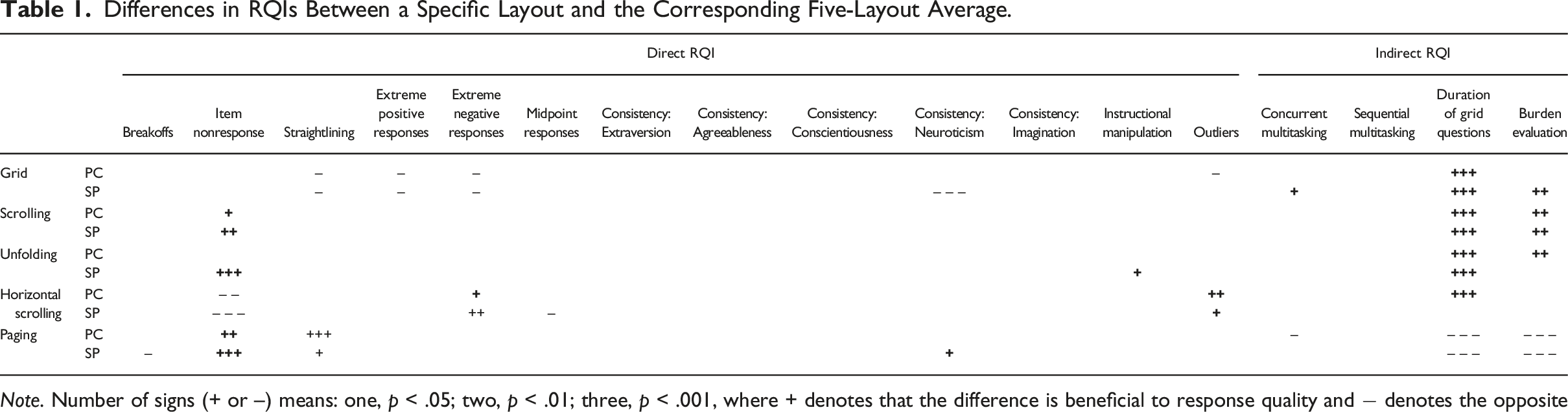

Table 1. Differences in RQIs Between a Specific Layout and the Corresponding Five-Layout Average.

Note. Number of signs (+ or –) means: one, p < .05; two, p < .01; three, p < .001, where + denotes that the difference is beneficial to response quality and – denotes the opposite.

Response Quality Indicators

The RQIs used are based on the understanding of response quality in Alwin (2007), Ganassali (2008), and Callegaro et al. (2015). It encompasses measurement errors related to cognitive problems in the response process (Tourangeau et al., 2000) comprised of four components (i.e., comprehension of the question, retrieval of information, judgment, and response). In addition, selected nonresponse errors are also considered. Based on this approach, 10 typical RQIs (see Roberts et al., 2019) were selected from the literature. No further conceptual elaboration on response quality is provided here. A broader context of response quality can be found under the umbrella of survey quality (e.g., Biemer & Lyberg, 2003; Blasius & Thiessen, 2012) and survey errors (e.g., Groves, 2005).

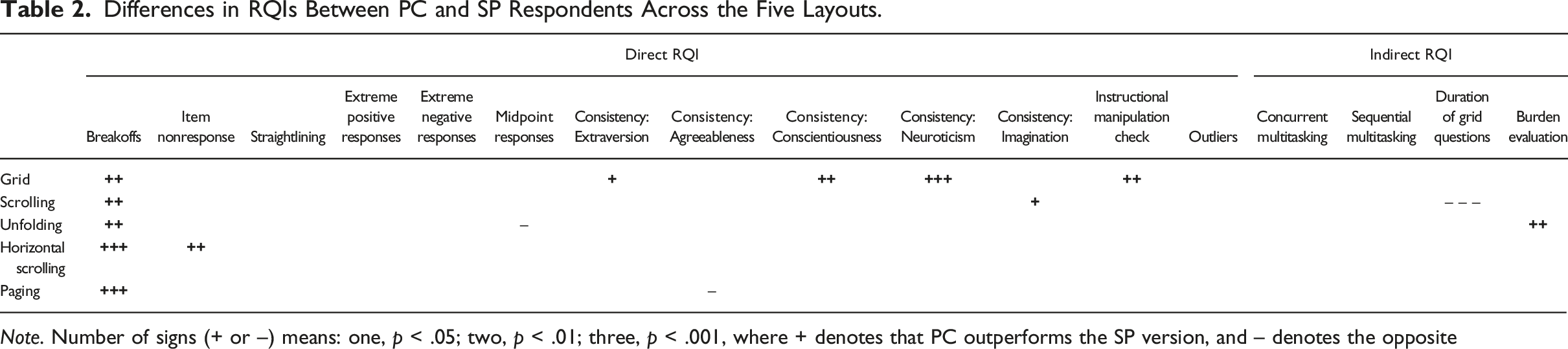

Table 2. Differences in RQIs Between PC and SP Respondents Across the Five Layouts.

Note. Number of signs (+ or –) means: one, p < .05; two, p < .01; three, p < .001, where + denotes that PC outperforms the SP version, and – denotes the opposite.

The selected RQIs can be grouped into two sets. The first set is the direct RQIs, which reflect actual response quality problems and are thus of greater importance. The RQIs were calculated for each of the five experimental cells and separately for the PC and SP (i.e., smartphone) respondents. For a summary table of the questions included in the RQIs, see Table A.1.

(1) Breakoffs. The breakoff rate was calculated as the share of respondents who quit before the questionnaire was concluded among all respondents that started the questionnaire (Callegaro et al., 2015).

(2) Item nonresponse. The number of unanswered items to which the respondent was exposed was divided by the number of all items that were shown to the respondent. The mean across all respondents was then calculated.

(3) Straightlining. This form of nondifferentiation (Kim et al., 2019) is a typical manifestation of satisficing (Roberts et al., 2019). It was calculated for each respondent as the number of grids (out of four attitudinal grids) where the responses had a standard deviation of zero (i.e., the respondent selected the same answer for all items). The mean for all respondents was then calculated.

(4) Extreme and midpoint responses. These are also forms of satisficing and can indicate lower response quality (Roberts et al., 2019). The percentages of left-most and right-most response options and the percentages of midpoint responses were calculated for each item in the four attitudinal grids. The corresponding means were then calculated across the items and respondents.

(5) Interitem consistency. Cronbach’s alpha measures interitem correlations in a group of items with a single underlying measurement dimension (Cronbach, 1951); high values indicate greater consistency. This measure was applied only to the Big Five personality dimensions, where alternating scale directions were used, resulting in five RQIs.

(6) Instructional manipulation check (IMC). This is an indicator of the level of attentiveness (e.g., Morren & Paas, 2020; Revilla & Ochoa, 2015). Two fictitious online stores were included in a grid for online shopping. Inattentive respondents failed the IMC if they indicated that they had visited a nonexistent online store. Each respondent could fail the IMC zero times (91% of respondents), once (5%), or twice (4%). The mean across all respondents was then calculated. Item nonresponse was not counted as failing the IMC.

(7) Outliers. The Mahalanobis distance (De Maesschalck et al., 2000; Peck & Devore, 2012) has been used to detect respondents with unusual response patterns (Curran, 2016; Hong et al., 2020), as they likely reflect inconsistent (e.g., random or blind) responding. The distance was based on variables from the four attitudinal grids. Outliers were units with a statistically significant distance from the corresponding centroid in multivariate space (p < .01). The overall number of outliers was then calculated.
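As a minimal sketch of how three of the direct RQIs described above could be computed, the following Python code implements the item nonresponse share, the straightlining count, and Cronbach's alpha. The function names, the use of `None` to mark an unanswered item, and the toy data are assumptions made for illustration only; they do not reproduce the study's actual processing. Reverse-worded items are assumed to have been recoded before alpha is computed.

```python
from statistics import variance


def item_nonresponse_share(shown_items):
    """Share of unanswered items among all items shown to one respondent.

    `shown_items` holds one entry per item the respondent saw;
    None marks an unanswered item (an assumed coding).
    """
    unanswered = sum(1 for answer in shown_items if answer is None)
    return unanswered / len(shown_items)


def straightlining_count(grids):
    """Number of grids in which one respondent gave identical answers.

    Identical answers across all items of a grid are equivalent to a
    standard deviation of zero within that grid.
    """
    return sum(1 for answers in grids if len(set(answers)) == 1)


def cronbach_alpha(rows):
    """Cronbach's alpha for rows of item scores (one row per respondent)."""
    k = len(rows[0])                     # number of items in the scale
    item_columns = list(zip(*rows))      # transpose: one column per item
    sum_item_variances = sum(variance(col) for col in item_columns)
    total_variance = variance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - sum_item_variances / total_variance)


# Hypothetical respondent: two of four shown items left unanswered.
print(item_nonresponse_share([4, None, 2, None]))

# Hypothetical respondent: answers to four attitudinal grids.
print(straightlining_count([[3, 3, 3, 3], [1, 2, 3, 4],
                            [5, 5, 5, 5], [2, 2, 3, 2]]))

# Hypothetical two-item scale answered by three respondents.
print(cronbach_alpha([[1.0, 1.0], [2.0, 3.0], [3.0, 2.0]]))
```

The first two functions return per-respondent values; in line with the description above, these would then be averaged across all respondents within each experimental cell and device.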

The second set of indirect RQIs is associated with undesirable response behaviors that have only potentially negative effects on response quality.

(8) Self-reported concurrent and sequential multitasking. Respondents self-reported multitasking at the end of the questionnaire, which might have negatively affected response quality (Sendelbah et al., 2016). Concurrent multitasking refers to activities that could be done in parallel, such as listening to music and watching TV, while sequential multitasking means pausing the response process due to alternative activities, such as visiting other websites and doing household chores (see the note of Table A.1 for a complete list of activities). The average number of multitasking activities was calculated for all respondents.

(9) Duration of grid questions. This indicator comprised the time spent on 15 grid questions, which were nested into separate pages (i.e., sections), for which the page time was measured. Focus-out time (i.e., time spent in other windows on the same device) was excluded from the page duration (see Sendelbah et al., 2016). For each page, the times of the slowest 1% of respondents were replaced by the 99th percentile (see Matjašič et al., 2018; Yan & Tourangeau, 2008). The corresponding page times were then summed up to determine the total grid duration for each respondent. The average across all respondents in each experimental cell was then calculated.

(10) Burden evaluation. The indicator was based on responses to the question “How burdensome was it to complete this survey?” on a 5-point scale. The mean across all respondents was calculated.
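The capping of slow page times described for the duration indicator can be sketched as follows. This is an illustrative sketch, not the study's code: the function name and the toy page times are hypothetical, and the percentile definition (the "inclusive" method of Python's `statistics.quantiles`) is an assumption, since the exact percentile rule is not stated here.

```python
from statistics import quantiles


def cap_at_99th_percentile(page_times):
    """Replace page times above the 99th percentile with that percentile."""
    # quantiles(..., n=100) returns 99 cut points; the last one (index 98)
    # is the 99th percentile under the assumed "inclusive" definition.
    p99 = quantiles(page_times, n=100, method="inclusive")[98]
    return [min(t, p99) for t in page_times]


# Hypothetical times (in seconds) of 101 respondents on one grid page:
# 100 ordinary values and one extremely slow respondent.
raw_times = [float(t) for t in range(1, 101)] + [1000.0]
capped_times = cap_at_99th_percentile(raw_times)

print(max(raw_times), max(capped_times))
```

After capping each page separately, a respondent's total grid duration would be the sum of that respondent's capped times over all grid pages.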

Statistical Analysis

Data from the 4644 respondents were weighted by gender, age, education, and region separately for PC and SP. The weighting had a minimal effect, both because the panel provider anticipated the nonresponse segments and oversampled appropriately and because the control variables had relatively little effect on the results examined. Further details and data are provided in Online Supplement. Unless otherwise stated, only statistically significant (two-tailed) effects were interpreted, using a standard threshold of p < .05.

Results

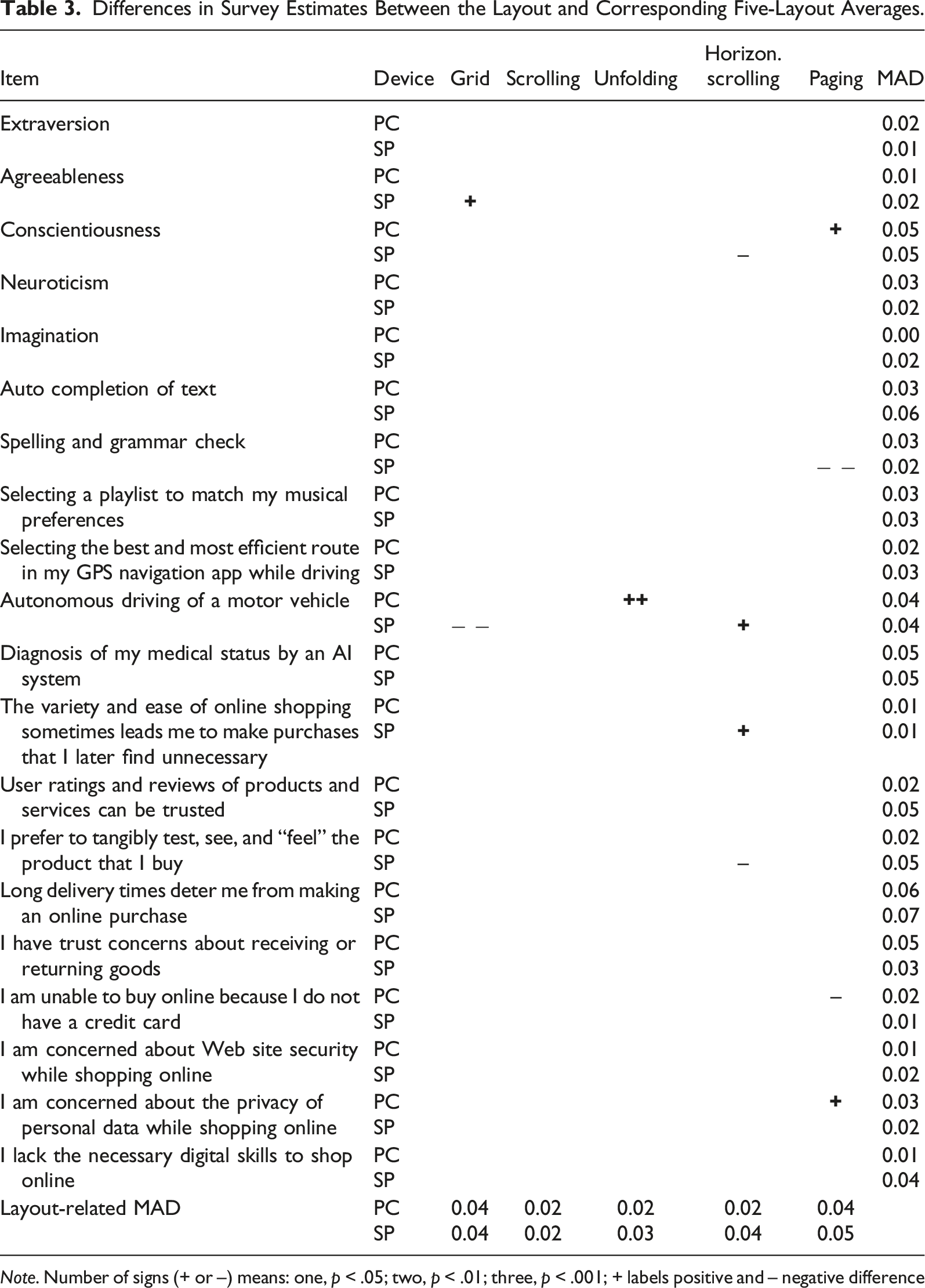

Table 3. Differences in Survey Estimates Between the Layout and Corresponding Five-Layout Averages.

Note. Number of signs (+ or –) means: one, p < .05; two, p < .01; three, p < .001, where + denotes a positive difference and – denotes a negative difference.

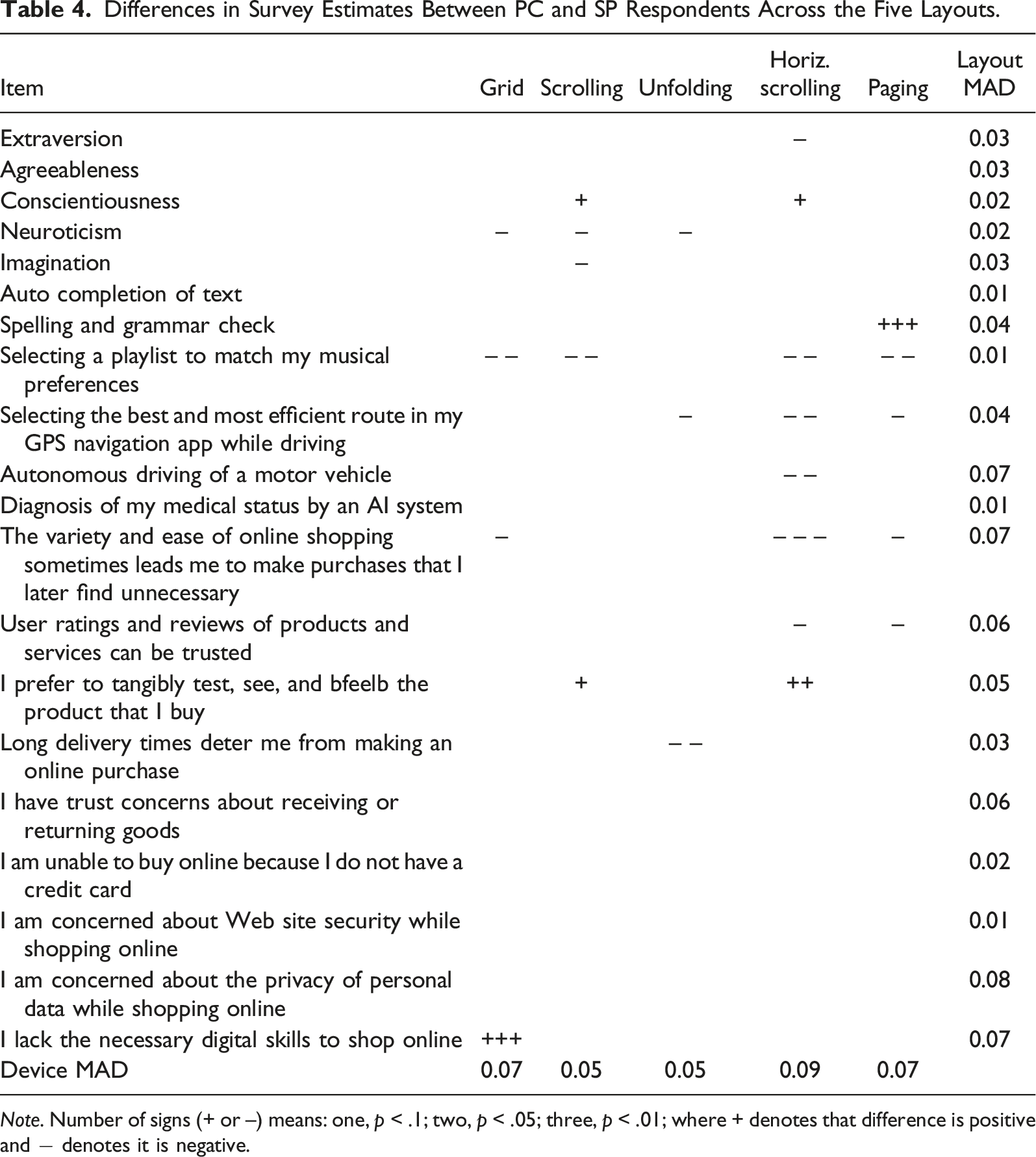

Table 4. Differences in Survey Estimates Between PC and SP Respondents Across the Five Layouts.

Note. Number of signs (+ or –) means: one, p < .1; two, p < .05; three, p < .01, where + denotes that the difference is positive and – denotes that it is negative.

Layouts and Response Quality

Table 1 compares the RQIs for each layout with the corresponding mean across all five layouts. A positive sign indicates deviation toward positive response quality and vice versa. For example, two minus signs (– –) for breakoffs on SP for the paging layout mean that the percentage of breakoffs (24.2%) was statistically significantly (p < .01) higher than the corresponding five-layout average (19.4%). In this case, a higher-than-average value of RQI indicated a below-average response quality reflected in minus signs.

The grid layout performed poorly on PCs and SPs (while the differences between the two were surprisingly small), particularly when compared to unfolding and scrolling. For example, on PCs, the grids underperformed the five-layout average for straightlining because the values 0.460 (grid) and 0.388 (five-layout average) on PCs mean a relative difference of 19%, that is, (0.460–0.388) / 0.388. The grid layout also underperformed for straightlining on SPs (relative difference of 25%). A similar situation was observed for extreme responses, while on PCs, the grids also underperformed for outliers (60%). In terms of duration, grids statistically significantly outperformed paging but not scrolling and unfolding (see Section 7 of Online Supplement). The situation was similar for perceived burden. For interitem consistency, grids had no advantage on PCs, while they actually underperformed on SPs for neuroticism (22%).
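The relative differences reported in this section follow from a simple ratio, which the sketch below reproduces for two values quoted in the text. The function name is hypothetical; the input values are those reported above for straightlining on PCs and for breakoffs on SPs with the paging layout.

```python
def relative_difference(value, reference):
    """Relative difference of `value` from `reference`, as a fraction."""
    return (value - reference) / reference


# Straightlining on PCs: grid (0.460) vs. five-layout average (0.388).
straightlining_pc = relative_difference(0.460, 0.388)

# Breakoffs on SPs: paging (24.2%) vs. five-layout average (19.4%).
breakoffs_sp = relative_difference(24.2, 19.4)

print(f"{straightlining_pc:.1%}  {breakoffs_sp:.1%}")
```

Rounded to whole percentages, these give the 19% reported for straightlining on PCs and the 25% reported for paging breakoffs on SPs.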

Unfolding did not underperform in any RQI. On SPs, it overperformed on item nonresponse (53% lower than five-layout average), duration (17%), and IMC (35%), while on PCs, it overperformed on survey duration (18%) and burden (7%). Scrolling behaved very similarly, with slight (but not statistically significant) lags in item nonresponse and IMC.

Horizontal scrolling severely underperformed on item nonresponse, which was 90% (PC) and 197% (SP) higher than the five-layout average. It also suffered from a higher share of midpoint responses on SPs (6%). Compared to unfolding and scrolling, it had fewer advantages in duration and burden. Conversely, it overperformed on extreme negative responses (PC 8%, SP 13%) and outliers (PC 47%, SP 36%).

Paging had a major disadvantage on both devices in duration (about 60% above the five-layout average) and burden (higher by about 15%). It also underperformed for breakoffs (9% PC, 25% SP). On PCs, paging underperformed for concurrent (29%) and sequential multitasking (26%). Conversely, paging showed an advantage in straightlining (30% PC, 18% SP) and in interitem consistency for neuroticism (13% SP). Paging also overperformed on item nonresponse (48% PC, 69% SP), but this was similar to scrolling and unfolding.

Device Effect and Response Quality

Table 2 summarizes the differences in the corresponding RQIs between PCs and SPs within each layout. Positive signs mean that the PC layout performed better than the corresponding SP layout, and vice versa. The “no difference” status is preferred, where the device has no effects within a given layout.

The grid layout had the largest device effects. In addition to breakoffs, the grid underperformed on three out of four consistency components. For example, the Cronbach’s alpha values 0.722 (PC) and 0.588 (SP) for conscientiousness yielded a relative difference of 23%—that is, (0.722–0.588)/0.588. Similarly, the relative difference for the IMC was 53%. Conversely, the differences disappeared for scrolling and unfolding for almost all direct RQIs, while for indirect RQIs, they persisted for scrolling for duration (SP respondents were 11% faster) and for unfolding for burden (10%). Specific effects were found for horizontal scrolling, where PCs performed much better for item nonresponse (42%) and particularly for breakoffs (52%). The latter is also true for paging, which seems to have the lowest overall device effects.

Layouts and Survey Estimates

Layout effects were calculated for each estimate as the difference between the estimate in a given layout and the corresponding five-layout average. The Median Absolute Deviation (MAD) was calculated for each estimate (as the median of absolute differences from the corresponding five-layout median) as well as for each layout (as the median of absolute differences among the 20 estimates within a given layout). We assume that better layouts produce statistically significant deviations in fewer estimates; this rests on the further assumption that the overall median is closer to the true value than an estimate based on only one specific layout. Table 3 presents deviations for 20 estimates related to attitudinal grids, where the Big Five personality dimensions (i.e., extraversion, agreeableness, conscientiousness, neuroticism, and imagination) were each computed from the corresponding four survey items. The results show that layouts had an effect (p < .05) on estimates in 11 of 200 cells (20 estimates by 5 layouts by 2 devices). Relative differences were equal to or greater than 5% (denoted hereafter as a notable difference) in 6 cells. Multivariate analysis of variance showed statistically significant effects of layouts (p = .001), devices (p < .001), and their interaction (p = .040).
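The two MAD summaries described above can be illustrated with a short sketch. The data structure, the function names, the estimate labels, and all numeric values below are hypothetical, and the use of the five-layout median as the reference point for the per-layout MAD is an assumption; the study's exact computation may differ.

```python
from statistics import median


def mad_per_estimate(values_by_layout):
    """Median absolute difference of the layout values from their median."""
    center = median(values_by_layout.values())
    return median(abs(v - center) for v in values_by_layout.values())


def mad_per_layout(estimates, layout):
    """Median, over all estimates, of one layout's absolute difference
    from the corresponding five-layout median (assumed reference point)."""
    differences = []
    for values_by_layout in estimates.values():
        center = median(values_by_layout.values())
        differences.append(abs(values_by_layout[layout] - center))
    return median(differences)


# Hypothetical means of three estimates under the five layouts.
estimates = {
    "estimate_a": {"grid": 3.5, "scrolling": 3.0, "unfolding": 3.0,
                   "horizontal": 2.75, "paging": 3.25},
    "estimate_b": {"grid": 4.0, "scrolling": 4.0, "unfolding": 4.25,
                   "horizontal": 4.5, "paging": 3.5},
    "estimate_c": {"grid": 2.5, "scrolling": 2.0, "unfolding": 2.0,
                   "horizontal": 2.0, "paging": 1.5},
}

for name, values in estimates.items():
    print(name, mad_per_estimate(values))

for layout in ["grid", "scrolling", "unfolding", "horizontal", "paging"]:
    print(layout, mad_per_layout(estimates, layout))
```

Under this reading, a layout whose estimates stay close to the cross-layout reference yields a low per-layout MAD.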

Considerable differences exist among layouts. Specifically, the grid layout had a significantly higher score than the five-layout average for agreeableness (PC) and trust in autonomous driving (SP); the latter was also a notable difference. Scrolling produced no statistically significant differences for any estimate. Unfolding generated one significant (and notable) deviation related to trust in autonomous driving (PC). Horizontal scrolling produced two significant and notable deviations on autonomous driving (SP) and unnecessary online purchases (SP). Paging generated four statistically significant deviations, of which two were notable. This is all consistent with layout-related MAD, which was the lowest for scrolling (0.02), while the others were up to 0.05. An alternative reading is that the high deviations for paging arise because its prolonged cognitive time yielded responses closer to the true values than the averages across the five layouts.

The sign of the difference had no methodological interpretation, while from a substantive perspective, as expected, the items measuring digital competencies scored higher among SP respondents; however, this was not associated with layout.

Device Effects and Survey Estimates

Compared to the limited effects that layouts had on estimates within each device, the device effects on differences in estimates for specific layouts were much stronger. Here, a positive difference meant a higher score for PCs than for SPs and vice versa, and “no difference” is again the preferred layout characteristic. Of the 100 estimates (20 estimates by 5 layouts), 24 showed statistically significant differences between PCs and SPs (Table 4), with 15 of these differences being notable (relative difference equal to or greater than 5%).

Unfolding had the fewest differences between PCs and SPs (three significant differences out of 20 estimates and one notable difference), followed by scrolling (five/one), grid (four/three), paging (five/three), and horizontal scrolling (eight/seven). Similar effects were reflected in MAD, where scrolling and unfolding had a relatively low value (0.05), while other layouts were in the range of 0.07–0.09.

Discussion

Research Questions

Impact of Layouts on Response Quality (RQ1)

The results support previous research that grids underperform on indicators of item nonresponse and satisficing compared to scrolling and unfolding (e.g., Liu & Cernat, 2018; Mavletova et al., 2018; Revilla & Couper, 2018; Richards et al., 2016; Stern et al., 2016). A specific result of this study was that grids also underperformed on outliers on PCs and on consistency (i.e., neuroticism) on SPs. It was somewhat surprising, however, that grids showed no disadvantages for breakoffs (e.g., Peytchev, 2006; Vehovar & Batagelj, 1996). The results also clarified the supposed advantage of survey duration. Indeed, the grids were much faster when compared to paging (see Antoun, Katz, et al., 2018; Peytchev & Hill, 2010; Stern et al., 2016), but not when contrasted to scrolling and unfolding, as previously noted in some studies (e.g., Bell et al., 2001; Revilla & Couper, 2018). We should not forget, however, that a shorter survey duration is not per se an advantage unless it improves direct RQIs (see discussion on paging below). Similarly, in this study, the supposed advantage of higher consistency disappeared with alternating item scale directions. This confirms warnings about artificially high item correlations in grids (e.g., Revilla et al., 2017; Silber et al., 2018; Tourangeau et al., 2004) when uniform item scale directions spuriously inflate consistency measures through straightlining (e.g., Couper et al., 2001; Mavletova et al., 2018).

From the perspective of the RQIs, unfolding performed best on both devices, followed closely by scrolling. Paging was more problematic because a longer overall duration (i.e., 29.1 minutes for paging vs. 19.6 minutes for non-paging layouts) manifested in higher breakoffs for SPs and in a very high burden on both devices, as already observed by Mavletova and Couper (2014) and Peytchev et al. (2006). Thus, this is an excellent example of the disadvantages arising from a prolonged survey duration. Interestingly, this very argument has been used implicitly—though never empirically confirmed—to defend grids in the sense that their speed advantage (which nevertheless exists only in comparison to paging) prevents the degradation of RQIs (e.g., breakoff) arising from a lengthy duration. Conversely, with respect to straightlining, paging was superior to grids (e.g., Antoun, Katz, et al., 2018; Čehovin & Berzelak, 2020) and, to a lesser extent, scrolling and unfolding. Thus, given sufficient motivation—although difficult to achieve—paging may ensure that the (surviving) respondents exert high cognitive effort to provide quality responses, so paging might be considered for some important or short surveys where cooperation is assured due to salience or incentives. There were even more challenges with horizontal scrolling, particularly with item nonresponse. One reason for this could be the underdeveloped visual design in terms of item numbering and the positioning of the “forward” button in relation to automatic forwarding (Revilla & Couper, 2018).

Regarding device effects, response quality was generally lower on SPs than on PCs, as often noted in the literature (see Keusch & Yan, 2017; Lambert & Miller, 2015; Wells et al., 2014), which is particularly true for breakoffs (see Mavletova & Couper, 2015; Mittereder, 2019). Grids performed the worst; besides breakoffs, they also exhibited high device effects in interitem consistency and IMC. Except for breakoffs, where all studies confirm the disadvantage of grids, these results contradict some findings of negligible differences in response quality when grids are compared on PCs and SPs (e.g., Couper & Peterson, 2017; Mavletova et al., 2018; Revilla & Couper, 2018). This discrepancy with other studies may be due to their design specifics. Conversely, paging elicited the smallest device effects (apart from breakoffs), which was somewhat expected given the harmonized visual layout on both devices (Villar et al., 2018). Scrolling and unfolding followed paging very closely, lagging behind only in indirect RQIs (burden and duration). The highest device effects appeared with horizontal scrolling, particularly for item nonresponse.

Impact of Layouts on Survey Estimates (RQ2)

Similarly to previous research (e.g., Couper et al., 2013; Liu & Cernat, 2018; Mockovak, 2018; Thorndike et al., 2009), results show little effect of layouts on survey estimates. Nevertheless, there were specific differences between the layouts. Scrolling was superior and produced no significant differences in 40 cells, while unfolding produced one significant difference, grid produced two, horizontal scrolling four, and paging five.

Regarding effects on survey estimates within certain layouts, statistically significant differences were found between PC and SP estimates in 24 of the 100 cells, with 15 cells also showing notable relative differences (5%). This is slightly higher compared with some previous research (e.g., Arthur et al., 2014), particularly Antoun, Conrad, et al. (2018), where the notable differences were observed only in 2 of the 19 survey estimates. Unfolding and scrolling performed best (each with only one significant and notable difference among 20 estimates), followed by paging and grids (three) and horizontal scrolling (seven).
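The distinction between a statistically significant and a notable difference in a cell (layout by survey estimate) can be sketched as follows. This is only an illustration under stated assumptions: it uses a two-sided z-test on the difference between two means and treats a relative difference of at least 5% of the PC estimate as notable. The function name `flag_cell`, its parameters, and the input values are hypothetical, and the tests actually used in this study may differ.

```python
import math


def flag_cell(mean_pc, se_pc, mean_sp, se_sp, z_crit=1.96, rel_threshold=0.05):
    """Return (significant, significant_and_notable) for one cell.

    Assumptions: independent PC and SP samples, a normal approximation for
    the difference of means, and the PC estimate as the reference value for
    the relative difference.
    """
    diff = mean_sp - mean_pc
    z = diff / math.sqrt(se_pc ** 2 + se_sp ** 2)
    rel_diff = abs(diff) / abs(mean_pc)
    significant = abs(z) > z_crit
    return significant, significant and rel_diff >= rel_threshold


# Made-up estimates on a 5-point scale, for illustration only
print(flag_cell(3.00, 0.03, 3.20, 0.03))  # large and precisely estimated difference
print(flag_cell(3.00, 0.03, 3.10, 0.03))  # significant, but below the 5% threshold
print(flag_cell(3.00, 0.10, 3.10, 0.10))  # too imprecise to be significant
```

Separating the two flags reflects the reasoning in the paragraph above: with large samples, even small device differences can reach statistical significance, so a relative-size criterion helps to single out the differences that matter substantively.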

Whether (and how) Grids Should be Decomposed (RQ3)

The justification for using grids is based on claims that their negative effects are small, inconsistent, or nonexistent, while there may exist some benefits in terms of speed and consistency. However, this study has shown that grids have significant negative effects without any benefits, at least when compared with scrolling and unfolding. Therefore, the results support suggestions (e.g., Callegaro et al., 2009, 2015; Debell et al., 2019; Dillman et al., 2014; Peytchev & Tourangeau, 2005; Revilla et al., 2017) to avoid grids on SPs and PCs. Of course, this applies to situations with similarly designed (attitudinal) grids with 5-point scales (columns) in 20-minute questionnaires, with about half of the items in grid format, which is, however, a frequent situation in survey practice.

Decomposing the grids into item-by-item alternatives also mitigates the risk of poor grid design, especially on smartphones, while the same decomposition on both devices can help avoid additional (device-related) measurement errors (Peterson et al., 2017). Nevertheless, the pairwise comparisons (see Section 7 of the Online Supplement) suggested that even if the grid layout is retained on PCs, it is still better to decompose it on SPs than to use a mobile grid.

In terms of the best item-by-item alternative, the unfolding and scrolling layouts are very close. Unfolding has a slight advantage from the RQI perspective, while scrolling has an edge from the survey estimate perspective. Unfolding also evoked slightly fewer device effects. Other layouts suffer from various specific problems. Nevertheless, paging can be advantageous in certain circumstances (when the high motivation of respondents is assured and device effects are controlled), while horizontal scrolling requires further improvement to avoid disadvantages in item nonresponse and device harmonization.

The Role of Web Survey Software

So far, only the scientific literature has been considered. To gain insight into survey practice, we refer to an illustrative review of web survey software with a trial version available (Vehovar et al., 2021), where 14 grid layout alternatives were identified. The four item-by-item options discussed in this paper were also the layouts most frequently offered by web survey software. The review found that on mobile devices, most software packages automatically (i.e., by default) decompose grids, making this the de facto industry standard. Scrolling dominates as a default option, while unfolding is surprisingly uncommon. Nevertheless, only rarely was an additional layout option offered alongside the default one. On PCs, grids dominate almost exclusively, while item-by-item alternatives are offered only in a few cases.

Given the results of our study, the recommendation would be to encourage web survey software—and researchers—to extend an item-by-item layout as a default option on SPs and to routinely introduce it also on PCs. Another recommendation is to offer more alternatives so that users can choose the layout that best suits their purpose. This is especially true for unfolding, which is somewhat neglected given its performance.

Limitations and Future Research

One obvious limitation relates to recruitment, which was based on a (typical) access panel in which respondents were already familiar with a certain layout (scrolling in this case). Similarly, respondents received incentives, which could lead to higher survey participation (e.g., Bosnjak et al., 2005; Keusch et al., 2014) and fewer breakoffs. Respondents were also accustomed to hard reminders (not allowing them to proceed without answering); thus, soft reminders were an exception in this study. Therefore, changing the reminders and incentives could have revealed additional issues across the layouts. Moreover, applying standard statistical inference to a nonprobability sample of an access panel is also problematic, requiring the usual caveat that unpredictable errors may exist. Nonetheless, nonprobability samples are proving to be very robust for this type of randomized experimental study (Vehovar et al., 2016). In any case, these issues do not compromise the internal validity of the findings, while in terms of external validity, it should be noted that nonprobability and probability-based panels produce similar effects in terms of response quality (Cornesse & Blom, 2020). Since surveys of the general population today are increasingly conducted via access panels, such studies are nevertheless relevant.

Second, the respondents were allowed to self-select the device, so uncontrolled factors might appear, such as the higher technical skills of the mobile device respondents (e.g., Conrad et al., 2017). However, it should be repeated that oversampling and weighting considerably compensated for these effects. We are also not aware of any study to date showing that the effects on response quality found in quasi-experimental designs disappear in a fully randomized experimental study. It is important to mention that in survey practice, respondents use their preferred devices anyway, which the researcher cannot control, so minimizing these effects is desirable. The disadvantages of pre-selecting devices (which is required in full randomization) should also be considered, as this practice forces respondents to use a device they may not prefer, leading to additional nonresponse as well as response quality effects (e.g., Peterson et al., 2017).

Third, this study focused predominantly on ordinal scales. Still, the results can be generalized, at least in part, to nominal, interval, and ratio scales because the corresponding layouts share common format principles. We should also note that, similarly to other studies, we predominantly discussed grids with attitudinal items. Regarding the number of categories, as mentioned, this study addressed only 5-point scales because they prevail in survey practice, so we cannot discuss the effect of a lower or higher number of categories. Nevertheless, Grady et al. (2018) claimed that on mobile devices, grids with 5-point scales are best for measuring attitudes, and according to Liu and Cernat (2018), 7- or 11-point scales are likely to increase the negative effects of grids.

Fourth, it should be reiterated that this study did not examine the effects of a single grid but rather the effects of grids aggregated across the questionnaire. Furthermore, the study used a 20-minute web survey. While this is a relatively frequent research choice (Callegaro et al., 2015), we expect shorter surveys (e.g., 5–10 minutes) or surveys with fewer grid questions to have smaller effects.

All the above limitations can be a starting point for future research. This study can therefore be replicated in different survey settings (e.g., probability sampling, different proportions of items in the grid format, and changes in duration) using rigorous randomization and testing of other scales and dimensions, particularly with variations in the number of scale points. Further elaboration of item-by-item decompositions would also be an important extension. One of the major challenges is whether paging leads to better substantive responses in situations in which motivation and participation are warranted. A vital research topic is also the evaluation of other item-by-item alternatives, especially the scrolling layout with automatic forwarding, which reduces scrolling actions. The use of automatic forwarding should be further investigated because it may increase missing data (e.g., de Bruijne, 2015). Similar issues apply to horizontal item scales (e.g., Revilla & Couper, 2018) and variations in the repetition of question introductions. Moreover, improving the design of horizontal scrolling is still an unsolved problem. Finally, careful design of the grid (general or for specific situations), where the grid would outperform item-by-item alternatives, remains an ongoing challenge.

Conclusion

The literature review revealed that research on the use of grids in web surveys is still inconclusive. Therefore, the grid survey question layout and four main item-by-item alternatives (i.e., scrolling, unfolding, horizontal scrolling, and paging) were evaluated for PC and mobile web surveys in a comprehensive study using 20 survey estimates and 10 response quality indicators. The results show that grids have several disadvantages and no advantage compared with scrolling and unfolding. It was also demonstrated that the supposed advantages of grids (i.e., shorter survey duration and higher interitem consistency) are only spurious effects. It seems that the preference for grids stems from the perspective of paper-and-pencil questionnaires, overlooking the fact that modern web interfaces offer better ways to interact with survey questionnaires.

Thus, the results suggest decomposing grids on mobile devices and PCs. Accordingly, in survey practice, most web survey software packages already automatically decompose grids on mobile devices, although they offer too few item-by-item alternatives (neglecting the unfolding layout is particularly critical). Conversely, software rarely automatically decomposes grids on PCs, which seems to be a future task for web survey software developers and researchers. More specifically, on PCs, scrolling and unfolding grid layouts need to become a should-have option, while offering more item-by-item alternatives on PCs and mobile devices is a nice-to-have feature of high-quality software (Callegaro et al., 2015).

The exact choice of item-by-item alternatives should depend on the circumstances and preferences of the researcher. Nevertheless, a carefully designed grid layout may still be appropriate for certain situations (e.g., short item statements, few response options, and short surveys), but so far, there is no evidence against the general recommendation that grids can be safely replaced by unfolding (or scrolling) in all situations. However, the choice between paging and unfolding (or scrolling) is more complicated since paging involves a complex tradeoff between the advantage of response quality and disadvantages such as breakoffs and device effects.

Supplemental Material


Supplemental Material for Alternative Layouts for Grid Questions in PC and Mobile Web Surveys: An Experimental Evaluation Using Response Quality Indicators and Survey Estimates by Vasja Vehovar, PhD, Mick P. Couper, PhD, and Gregor Čehovin, PhD in Social Science Computer Review.

Footnotes

Acknowledgments

The authors acknowledge the contribution and feedback of Nejc Berzelak, PhD (National Institute of Public Health, Ljubljana, Slovenia).

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Slovenian Research Agency (grant numbers P5-0399, J5-9334, J5-8233, and NI-0004).

Data Availability

The data are available in the Online Supplement.

Software Information

Statistical analyses were performed using SPSS version 26 and R (R Core Team, 2018) version 3.6.2.

Supplemental Material

Supplementary material for this article is available online.

