Abstract

Communication privacy research has employed a plethora of theoretical approaches to explain users’ information disclosing behavior. To explain information disclosure intentions in mHealth apps, this article integrates the attitude-behavior model of privacy decisions with approaches to the role of heuristics and the impact of habitual app use. Specifically, we examine the relationship of privacy attitudes, privacy concerns, app habits, and social norm cues with the intention to disclose three types of information (personal, budget, health) in two types of mHealth apps. We tested our model in an online survey among N = 475 smartphone users that included an experimental manipulation of social norm cue strength (high/medium/low). Our findings underline the importance of privacy attitudes for the intention to disclose information but also point to the influence of app habits and the role of subjective evaluations of social norm cues.

mHealth apps aim at supporting users’ quest for healthier living by offering a tailored user experience through personalized health information and recommendations for future behaviors (Bol et al., 2018). In return, such apps require users to provide a broad range of personal information, ranging from age, gender, or location to particularly sensitive information such as medical conditions (Torre et al., 2018). Privacy research has focused on explaining users’ disclosure of such information (Acquisti et al., 2015; Tsay-Vogel et al., 2018). Empirical findings in this domain point to a discrepancy between users’ privacy attitudes and their observed disclosing behavior, often described as the “privacy paradox” (Barth & de Jong, 2017; Zhu et al., 2021). Consequently, information disclosing behavior cannot be fully explained by attitude-behavior models that treat disclosure as a rational decision-making process in which users make (more or less) deliberate cost-benefit analyses of what to disclose, as outlined, for instance, in the (Extended) Privacy-Calculus Model (Dinev & Hart, 2006; Kim et al., 2019; Trepte et al., 2020). At the same time, privacy research that advocates cognitive heuristics and biases as an alternative explanation for disclosing behavior (Acquisti et al., 2020; Sundar, 2008) neglects the stable findings on the role of privacy attitudes in explaining disclosing behavior (Kokolakis, 2017). Moreover, (mobile) privacy researchers have paid little attention to users’ app use habits (Wagner et al., 2020), even though past behavior has been shown to be a strong predictor of media use (LaRose, 2010; Quinn, 2016).

To explain disclosing intentions in mHealth apps, we set out to combine the attitude-behavior model, the heuristic-and-bias approach, and findings on habitual app use. Specifically, following Dienlin and Trepte (2015), we focus on the interplay between privacy concerns and privacy attitudes to overcome the privacy paradox. We integrate heuristic cues into our model, namely social norm cues, through which the behavior of other users can affect one’s own disclosing behavior (Acquisti et al., 2012; Spottswood & Hancock, 2017), and we investigate the role of habitual app use as a failure of self-regulation (LaRose, 2010). We test the interplay of these factors on the intention to disclose three types of information (personal, health-related, and budget data) in two mHealth apps. In doing so, we address the question of how far a combination of existing approaches in privacy research can help us better understand information disclosing behavior in the specific context of mHealth app use.

Theoretical Background

Privacy Concerns and Information Disclosure in Smartphone Apps

When using smartphone apps, users disclose information in one of two ways: either their data is stored and processed without their knowledge (Brandtzaeg et al., 2019; Henke et al., 2018), or they deliberately decide whether to give away a certain type of information. We focus on the latter, which we describe as self-disclosure of private information in mobile apps. Self-disclosure can be understood as a process of social interaction through which individuals communicate personal information (Cozby, 1973). Following an understanding of privacy as individuals’ control over what others know (or do not know) about them (Westin, 1967), privacy researchers have investigated the conditions under which users are willing to self-disclose in various online contexts and applications, such as social networking sites (SNS) (Trepte et al., 2020) or mobile apps (Jozani et al., 2020).

One argument for why users are willing to share their data is that privacy decisions are subject to cost-benefit analyses (Laufer & Wolfe, 1977). In the case of mHealth apps, this means that the benefits of a (tailored) service (e.g., personalized recommendations or information) outweigh the risks involved in using it (Bol et al., 2018; Kim et al., 2019). Taking a privacy-calculus perspective, Zhu et al. (2021), for instance, found that such benefits in health apps predict information disclosure more strongly (positively) than privacy concerns do (negatively). However, research has pointed out that such rational cost-benefit calculations are often biased (Barth & de Jong, 2017).

Systematic reviews (Barth & de Jong, 2017; Baruh et al., 2017; Kokolakis, 2017) have provided a general understanding of how privacy attitudes and concerns relate to information disclosure. While these reviews report evidence both for and against effects of privacy concerns on privacy behavior, Baruh et al.’s (2017) meta-analysis revealed a weak (r = -.22, 95% CI [-.28; -.015]) yet significant negative effect of privacy concerns on personal information disclosure: the more people are concerned about their privacy, the less information they (intend to) disclose online. The small effect size is likely due to three limitations in previous research that we address in the present study: (A) the relationship between privacy concerns and behavior tends to become spurious when variables are measured with different degrees of specificity, (B) studies employ a broad range of theoretical models, and (C) studies vary in their measures of information disclosure.

Developing a conceptual research model for overcoming existing limitations in privacy research

To overcome these limitations, we differentiate between privacy concerns and privacy attitudes as distinct constructs, as suggested by Dienlin and Trepte (2015). We focus on two main mechanisms that privacy research has put forward to explain information disclosure, namely the attitude-behavior model of privacy management and the heuristic-based approach that accounts for social cues as (relevant) contextual factors. We complement these two research strands by integrating the impact of app use habits (Wagner et al., 2020) as a further crucial predictor of app usage intention and hence of privacy decision-making. Finally, rather than considering only one type of information, we test the stability of our findings across further contexts, namely different types of information and apps. As a result, we develop a research model explaining information disclosure in mHealth apps. Even if such a model is eclectic in nature, it is based on hypotheses derived from existing and well-studied approaches. Combining these approaches into a coherent model raises further research questions that have not yet been answered.

Ambiguity in the concepts: Privacy Attitudes vs. Privacy Concerns

Privacy attitudes and concerns are often used interchangeably, with the latter serving as a “measurable proxy” (Masur, 2019, p. 106) for the former. While Baruh et al. (2017) and Barth and de Jong (2017) focus on privacy concerns, Kokolakis (2017) employs the term privacy attitudes, referring to (largely) the same studies. Based on the Theory of Planned Behavior (Ajzen, 2005), Dienlin and Trepte (2015) differentiate between privacy attitudes and privacy concerns, both of which capture people’s opinions about actions in online (and/or mobile) environments. While privacy concerns are more abstract and less specific, privacy attitudes relate to specific online activities. Moreover, privacy concerns are unipolar, as they only relate to negative aspects such as fears of data theft. Privacy attitudes, on the other hand, are bipolar: attitudes towards a specific online service can be positive or negative.

Dienlin and Trepte (2015) further underline that broadly defined concerns about privacy are not appropriate for predicting very specific behaviors, as both should be measured with the same degree of specificity. Therefore, they operationalized privacy attitudes as positive or negative evaluations of privacy behaviors and empirically demonstrated that these evaluations were predicted by privacy concerns and, in turn, predicted behavioral intentions better than concerns alone (see also Dienlin et al., 2021). We follow their approach by regarding privacy concerns and attitudes as distinct constructs that can both potentially contribute to explaining information disclosure behavior, but we argue that the effect of (more general) privacy concerns on information disclosure is mediated through (more specific) privacy attitudes. This leads to our first hypothesis, which expands the dominant attitude-behavior model: H1: The effect of privacy concerns on intention to disclose information is mediated through privacy attitudes.
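In conventional mediation notation, H1 can be written as follows. The labels a, b, and c' are standard notation for a simple mediation model with privacy concerns as the predictor and privacy attitudes as the mediator. They are not the path numbers used in this article. The decomposition of the total effect holds for linear path models.

```latex
\begin{aligned}
\text{Attitudes} &= a \cdot \text{Concerns} + e_1 \\
\text{Intention} &= c' \cdot \text{Concerns} + b \cdot \text{Attitudes} + e_2 \\
\text{indirect effect} &= a\,b, \qquad \text{total effect } c = c' + a\,b
\end{aligned}
```

H1 predicts a non-zero indirect effect ab. Full mediation would additionally imply that the direct effect c' is close to zero.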

Combining the attitude-behavior model with heuristic-based approaches to information disclosure and habitual app use

Barth and de Jong’s (2017) literature review found 35 different theoretical approaches across 32 studies. Although the Theory of Planned Behavior (Ajzen, 2005) and related viewpoints are among the most prominent approaches to studying information disclosure, another domain of research has moved beyond the rationality assumption of such approaches by focusing on heuristics and biases that affect privacy decision-making and hence privacy management (Acquisti et al., 2020).

Previous studies have pointed out that app use is often subject to such heuristic decision-making (Acquisti et al., 2020), which means that individuals are likely to rely on a limited amount of information to reach their decisions. Regarding information disclosure, cues about disclosing norms—for example, what is perceived as appropriate information-sharing behavior—are particularly important (Spottswood & Hancock, 2017).

As Acquisti et al. (2012) argue, human judgment and decision-making are comparative in nature, and privacy behavior is affected by this as well. The underlying mechanism has been widely acknowledged and described in a variety of terms. In the broadest sense, users follow a bandwagon (Kim & Sundar, 2014) or majority vote heuristic (Joeckel et al., 2019), according to which people base their decision on the simple maxim that if others have made the same choice, this choice must be good (Kim & Gambino, 2016). Similarly, Waldman (2020) argues that SNS users rely on others’ behavior as an anchor for their own decisions about how much information to divulge.

The underlying mechanism of these heuristics can best be described by social norm theory (Rimal & Lapinski, 2015). People’s behaviors are not only based on norms about what should be done (injunctive norms) but also based on social norms about what others are doing, so-called descriptive norms (Cialdini et al., 1991). Relying on such descriptive norms provides an efficient and often well-suited decisional shortcut (Cialdini et al., 1991). In application to privacy, Wirth and colleagues (2019) reported that such perceived norms predicted the disclosing behavior of SNS users. Regarding mHealth apps, Beldad and Hegner (2017) found that injunctive norms predicted the continued use of fitness apps, while descriptive norms predicted trust in the app developer and the perceived usefulness of an app.

In the following, we label cues that indicate norms about other users’ information disclosing behaviors “social norm cues” (SNcues). These are comparable to what Spottswood and Hancock (2017) describe as explicit visual cues displaying the aggregate behavior of other users. Empirically, Acquisti et al. (2012) showed that participants were more likely to confess to certain behaviors if SNcues indicated that a high number of others had also performed the behavior. Spottswood and Hancock (2017) replicated Acquisti et al.’s (2012) findings for personal information disclosure on an SNS. Similarly, Trepte et al. (2020) found that new users of a novel SNS disclosed more information during registration if they were told how many other new users had provided the same piece of information.

Based on these findings, we deduce our second hypothesis, which integrates the heuristic-and-bias perspective, specifically the impact of SNcues, into our model: H2: A stronger SNcue will lead to a higher intention to disclose information.

While existing studies in support of H2 indicate a positive effect of SNcues on disclosing behavior, they are limited. The researchers deliberately differentiated between high vs. low cue conditions or the presence vs. absence of cues (e.g., Trepte et al., 2020) without asking what users perceive as high or low levels of self-disclosure. For example, some users may interpret 60% of other users disclosing their location in an app as high, while others may interpret it as medium or low. Recent approaches to privacy decision-making underline the importance of accounting for a subjective, situational component of privacy (Masur, 2019). Thus, we assume that the effect of SNcues depends on users’ personal evaluations of what constitutes a high or a low cue value.

That is, users are likely to make subjective judgments about the strength of an SNcue, which then may or may not trigger a majority vote heuristic. Nonetheless, we can assume that the higher the proportion of other people disclosing a certain type of information, the stronger the cue. What we do not yet know is how far the subjective evaluation of SNcue strength affects the decision to disclose information. Hence, we propose a research question: RQ1: How does the perceived SNcue strength affect the intention to disclose information?

Combining findings from the attitude-behavior model with our investigation of perceived SNcue strength raises some untested assumptions. Privacy attitudes and perceived SNcue strength are likely to be related. If we accept that attitudes affect behavior, we can reason that users who are sensitive about their privacy (i.e., have higher privacy attitudes) will evaluate other people’s disclosing decisions more critically. This will then lead to lower perceived SNcue strength compared to users with less pronounced privacy attitudes. As this claim has, to our knowledge, not been tested empirically, we pose a research question: RQ2: Does sensitivity about privacy (i.e., higher privacy attitudes) lead to more critical perceptions of SNcues (i.e., lower perceived SNcue strength)?

The attitude-behavior model and the heuristic-and-biases perspective both contribute to an understanding of when users disclose personal information in an mHealth app. Still, usage decisions often occur automatically and are shaped neither by cognitive elaboration nor by SNcues. The role of habitual app use has started to receive more attention in this respect (Quinn, 2016; Wagner et al., 2020). Following Social Cognitive Theory (Bandura, 1991), habits are a case of failed self-regulation: users simply decide to use an app or disclose a type of information because they are used to this behavior, not because of cognitive elaboration. Thus, habits, defined as automatic behavioral responses that develop through repeatedly performing behaviors (LaRose, 2010), are strong predictors of behavior that undermine self-regulation. Moreover, strong usage habits are associated with considering less information during decision-making, thus challenging rational-choice perspectives (Aarts et al., 1998).

Regarding smartphone use, habits were found to be a relevant factor in explaining the formation of certain app repertoires (Jung et al., 2014). Habitual use is also a significant predictor of continued use of social apps (Hsiao et al., 2016; Wagner et al., 2020) as well as mHealth apps (Yuan et al., 2015). Focusing on privacy, Barth and de Jong (2017) argue, with reference to Quinn (2016), that habitual media use inhibits users’ engagement with privacy management strategies. It can thus be seen as a potential bias affecting the relationship of privacy attitudes and concerns with information disclosure. Based on these findings, we derive our third hypothesis: H3: App habits have a positive effect on intentions to disclose information.

Adding the concept of habitual app use to our model, we can speculate that privacy attitudes and app habits are not unrelated. That is, users who hold stronger privacy attitudes are potentially less likely to have strong app habits, as such users exercise stronger self-regulation. Even if this assumption has some face validity, it has not been tested empirically. Wagner et al. (2020) tested the relationship between app habits and perceived privacy risks, a concept related to privacy attitudes and concerns, but could not empirically confirm such a relationship. Thus, we pose a research question:

RQ3: Do higher privacy attitudes lead to lower habitual app use?

Disclosing Different Types of Information in Different Contexts

Kokolakis’s (2017) review concludes that different types of personal information need to be considered in privacy research, as individuals differ in how they value different types of information. Some types of information are deemed more private than others, and health information is considered highly sensitive (Petronio & Venetis, 2017), especially compared to other types of information such as information on hobbies or leisure activities (Bansal et al., 2010). However, it is currently unknown whether the same predictors play the same, or at least a similar, role in explaining disclosing intentions across different types of information. For example, will app habits have the same impact on disclosing budget information as on disclosing health information? We therefore focus on three distinct types of information. We are interested in app users’ disclosure of health data (e.g., their blood pressure levels), personal data (e.g., their location), and budget data (e.g., the money available for food or the time available to exercise). Because privacy decision-making is situational in nature (Masur, 2019), the impact of individual predictors may further vary depending on the specific app. Thus, we test this within the context of two related yet distinct mHealth apps. By doing so, we partially address the problem of single-stimulus studies, which suffer from limited generalizability and a lack of replicability (Reeves et al., 2016). Focusing on three types of information in two apps, we test the stability of our findings and highlight where caution is warranted in drawing conclusions from our single study.

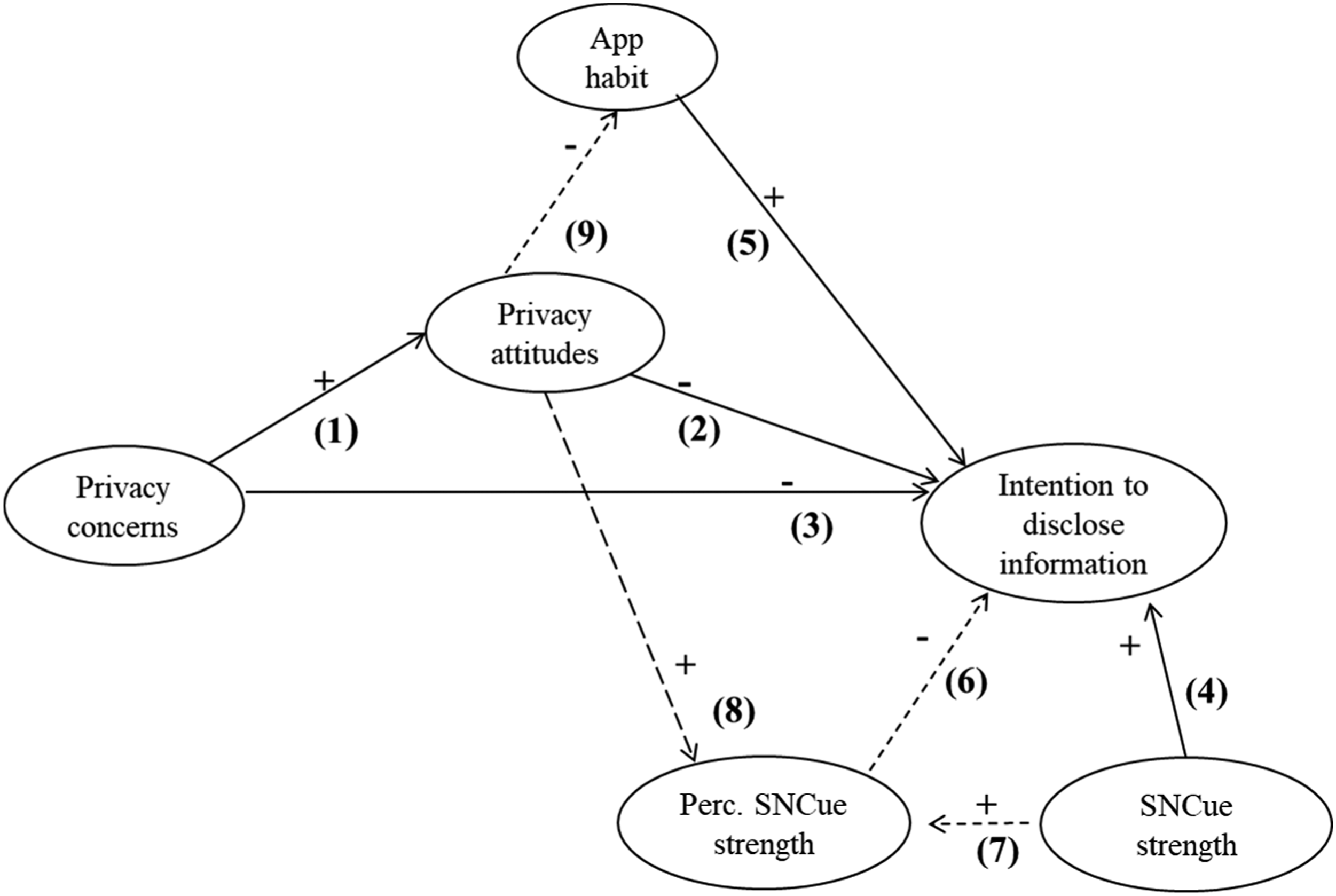

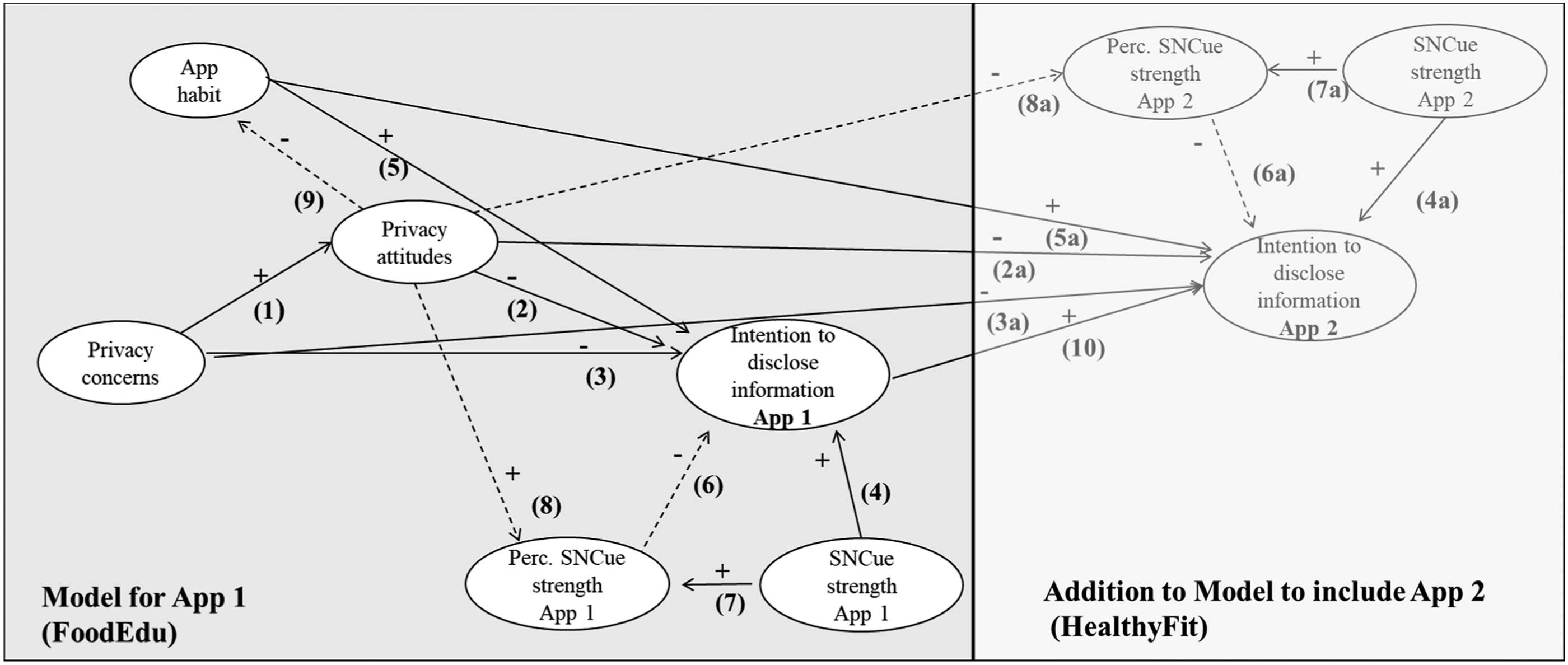

Figure 1 depicts our research model. The central dependent variable is the intention to self-disclose information, the proxy often used when privacy behavior cannot be observed directly. Paths 1–3 investigate the mediation proposed in H1. Paths 4 and 5 test H2 and H3, respectively. Path 6 investigates RQ1, while path 7 supports this analysis. Path 8 investigates RQ2, and path 9 addresses RQ3.

Figure 1. Research Model.

Materials and Method

Participants

Participants were recruited in late 2018 through a commercial online access panel in Germany. The study followed the ethical guidelines for research at the hosting institution. To qualify for the study, participants had to indicate that they used a smartphone. We required statistical power above .8 to detect even weak effects (f = .15). After incomplete data were eliminated, 475 participants remained (48.2% female, Mage = 46.3, SD = 13.3). This yielded sufficient statistical power for regression-based analyses (Faul et al., 2007) such as the PLS-based model we employed (Kock & Hadaya, 2018).

Study Design and Procedure

Participants took part in an online survey that included a stimulus presentation. After answering questions about their general and health-related app use as well as their privacy attitudes and concerns, participants were told that they would be presented with a description of a food-related app (FoodieEdu, FE). The first screen summarized the app’s features, including its personalization options, for example, that the app gives users tailored recommendations based on their age, fitness level, or location. On a second screen, we presented a list of eleven different types of information (for the stimulus presentation, see Appendix A). Alongside this list, we presented the SNcues (one for each item of information), which indicated how many other users had provided the information in question. These cues were percentages between 1% and 100%, randomly generated for each item and administered in three randomized conditions. In the low SNcue strength condition (=1), the range was limited to 1% to 33%. In the medium condition (=2), it was limited to 34% to 66%, and in the high condition (=3), to 67% to 100%. We repeated this procedure for the fictitious fitness-related app HealthyFit (HF). Food- and fitness-related apps represent the two most popular health app categories (Ernsting et al., 2017). After each app, we asked participants about their perceptions of the provided SNcues. The survey ended with questions about socio-demographics and a debriefing.
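The cue manipulation described above can be sketched in Python as follows. This is a minimal reconstruction under stated assumptions, not the survey platform’s actual code. It assumes integer percentages drawn uniformly within each condition’s range and simple random assignment of participants. The function names are hypothetical.

```python
import random

# Hypothetical reconstruction of the SNcue manipulation: each condition
# restricts the displayed percentage to its own range (bounds inclusive).
CONDITION_RANGES = {
    1: (1, 33),    # low SNcue strength
    2: (34, 66),   # medium SNcue strength
    3: (67, 100),  # high SNcue strength
}


def assign_condition(rng):
    """Randomly assign a participant to one of the three SNcue conditions."""
    return rng.choice(sorted(CONDITION_RANGES))


def generate_sncues(condition, rng, n_items=11):
    """Draw one percentage per information item, independently per item.

    Uniform integer draws are an assumption; the study only reports the ranges.
    """
    low, high = CONDITION_RANGES[condition]
    return [rng.randint(low, high) for _ in range(n_items)]


rng = random.Random(2018)  # fixed seed so the example is reproducible
condition = assign_condition(rng)
cues = generate_sncues(condition, rng)
print(f"condition {condition}: {cues}")
```

Keeping the three ranges adjacent and non-overlapping ensures that every displayed value falls into exactly one strength level. This lets the condition code (1, 2, 3) serve directly as the absolute SNcue strength indicator.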

Measures

Information disclosing intention

For each app, we asked participants whether they were willing to disclose each of eleven different types of information. The items were based on information often required by mobile health apps to provide maximum functionality and a personalized user experience. We deliberately grouped these items into three broader categories: personal, health-related, and budget data. For each category, the number of types of information participants intended to disclose was summed into an index.
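The sum index can be illustrated with a short Python sketch. The study’s actual eleven items are listed in its Appendix A and are not reproduced here. The item names and their category assignments below are therefore hypothetical, loosely based on the examples given in the text (location, blood pressure, money for food, time to exercise).

```python
# Hypothetical item-to-category mapping; the study's eleven items are in its
# Appendix A and are not reproduced here, so these names are illustrative only.
ITEM_CATEGORY = {
    "age": "personal",
    "gender": "personal",
    "location": "personal",
    "blood_pressure": "health",
    "weight": "health",
    "medical_conditions": "health",
    "food_budget": "budget",
    "exercise_time": "budget",
}


def disclosure_indices(answers):
    """Count, per category, the items a participant is willing to disclose.

    `answers` maps item name -> True (willing to disclose) or False.
    """
    indices = {category: 0 for category in ITEM_CATEGORY.values()}
    for item, willing in answers.items():
        if willing:
            indices[ITEM_CATEGORY[item]] += 1
    return indices


example_answers = {
    "age": True, "gender": True, "location": False,
    "blood_pressure": False, "weight": True, "medical_conditions": False,
    "food_budget": True, "exercise_time": True,
}
print(disclosure_indices(example_answers))
# {'personal': 2, 'health': 1, 'budget': 2}
```

Each category index is a simple count, so its maximum equals the number of items assigned to that category.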

(Absolute) Strength of SNcues

Our experimental groups (1 = low, 2 = medium, 3 = high) acted as an indicator for the administered SNcues’ strength.

Perceived strength of SNcues

To account for participants’ subjective evaluations of the proportion of other users disclosing a certain piece of information, we asked participants how they would rate the number of other people disclosing each type of information that they had just seen as part of the SNcue strength manipulation. Ratings were made on a scale ranging from 1 (“very few”) to 10 (“many”). These perceived SNcue strength ratings were averaged and modeled as a formative factor.

Privacy concerns

Privacy concerns were measured using the Mobile Users’ Concerns for Information Privacy (MUIPC) scale (Xu et al., 2012).

Privacy attitudes

We assessed participants’ attitudes in three dimensions: using apps that rely on user data to function, disclosing such information, and personalized app experiences. We presented participants with three incomplete statements related to privacy and app usage. Participants were then asked to complete each statement on a 5-point semantic differential with six adjective pairs, adapted from Dienlin and Trepte (2015).

App habits

To measure app habits, we employed an adapted version of the Self-Report Habit Index (SRHI; Verplanken & Orbell, 2003). Participants were asked about their agreement with eight statements corresponding to four elements of the habit construct: repeated behavior, unconscious execution of behavior, ability to control the behavior, and cognitive efficiency of starting the behavior.

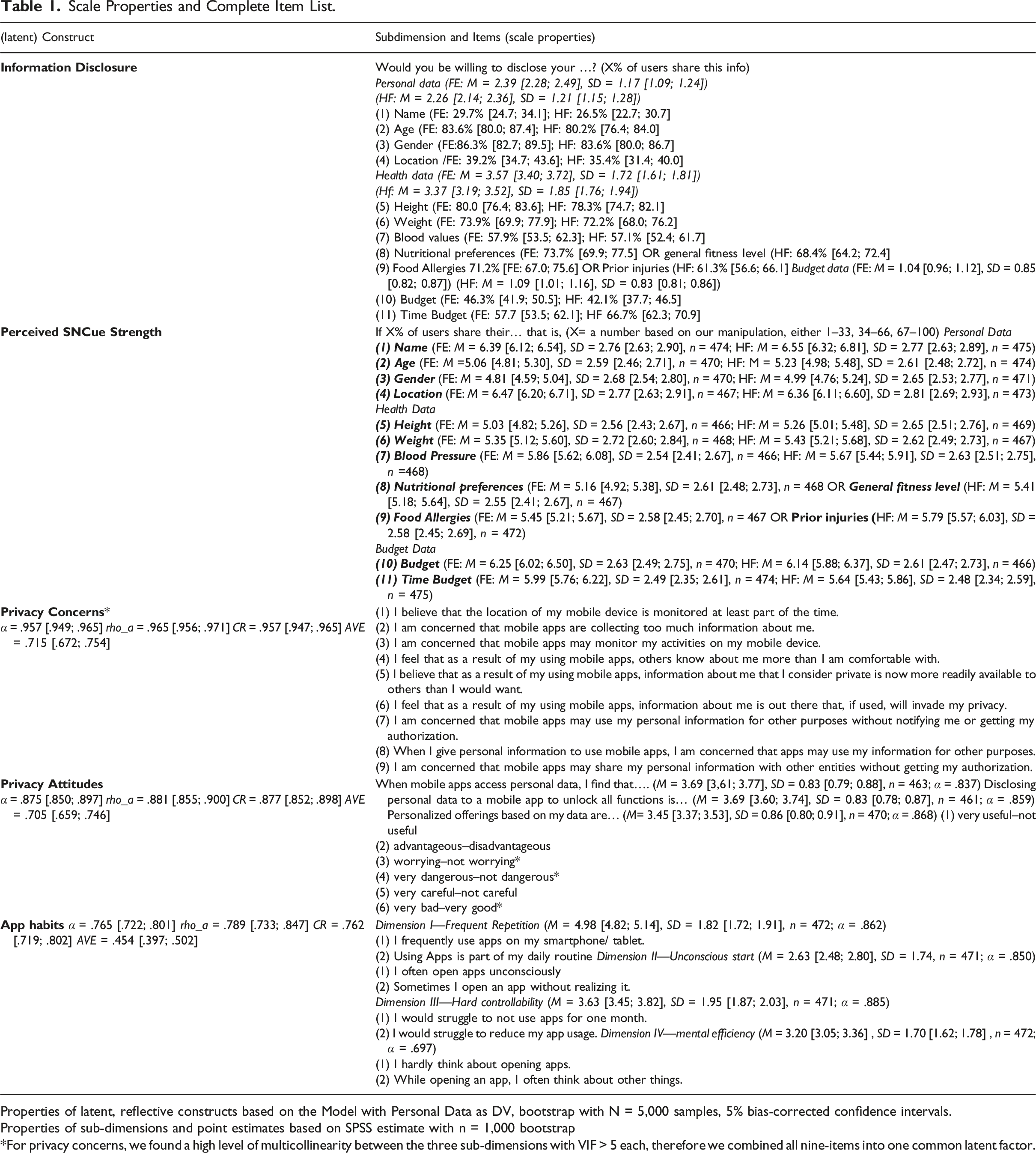

Scale Properties and Complete Item List.

Properties of latent, reflective constructs based on the Model with Personal Data as DV, bootstrap with N = 5,000 samples, 5% bias-corrected confidence intervals.

Properties of sub-dimensions and point estimates based on SPSS estimates with n = 1,000 bootstrap samples.

*For privacy concerns, we found a high level of multicollinearity between the three sub-dimensions (VIF > 5 each); therefore, we combined all nine items into one common latent factor.
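For reference, the variance inflation factor mentioned in the note is conventionally defined as follows. Here R_j² is the proportion of variance in sub-dimension j explained by the other sub-dimensions. The threshold VIF > 5 thus corresponds to more than 80% shared variance.

```latex
\mathrm{VIF}_j = \frac{1}{1 - R_j^2}, \qquad
\mathrm{VIF}_j > 5 \;\Longleftrightarrow\; R_j^2 > 1 - \tfrac{1}{5} = .80
```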

Data Analysis Strategy

Our data analysis was based on the research model specified in Figure 1. We further controlled for the potential impact of socio-demographic variables by adding direct paths from age and gender to our dependent variable, the intention to disclose information. We tested our research model in three separate structural equation models using the PLS procedure in SmartPLS 3.0 (with consistent bootstrapping, N = 5,000 samples). In contrast to covariance-based SEM, the PLS procedure allows reflective and formative latent factors to be used at the same time and is more liberal regarding the types of data employed (Hair et al., 2014). Established measures were modeled as reflective factors and perceived SNcue strength as a formative factor. PLS-based SEM does not treat global fit indicators in the same way as covariance-based SEM, particularly when models include formative factors. These indicators should therefore be interpreted cautiously (Hair et al., 2014). HTMT ratios for discriminant validity were, however, acceptable for all measures. To provide a parsimonious model, and as we did not intend to further test the latent factor structure of the employed scales, we tested a first-order model for each of the measures employed.

We tested each model for our three information categories separately.
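The logic of a bootstrap confidence interval for an indirect effect can be illustrated with a short Python sketch, using concerns, attitudes, and intention as in H1. This is a simplified stand-in, not the study’s procedure. It uses ordinary least squares on observed variables and a plain percentile interval, whereas the study used PLS-SEM in SmartPLS with bias-corrected intervals. All data and coefficients below are simulated assumptions.

```python
import random
import statistics


def slope(y, x):
    """OLS slope of y on a single predictor x (intercept included)."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    return sxy / sxx


def slopes_two(y, x1, x2):
    """OLS slopes of y on x1 and x2 (intercept included), via centered normal equations."""
    m1, m2, my = statistics.fmean(x1), statistics.fmean(x2), statistics.fmean(y)
    s11 = sum((a - m1) ** 2 for a in x1)
    s22 = sum((b - m2) ** 2 for b in x2)
    s12 = sum((a - m1) * (b - m2) for a, b in zip(x1, x2))
    s1y = sum((a - m1) * (c - my) for a, c in zip(x1, y))
    s2y = sum((b - m2) * (c - my) for b, c in zip(x2, y))
    det = s11 * s22 - s12 ** 2
    return (s22 * s1y - s12 * s2y) / det, (s11 * s2y - s12 * s1y) / det


def indirect_effect(x, m, y):
    """a*b: effect of x on m, times effect of m on y controlling for x."""
    a = slope(m, x)
    _, b = slopes_two(y, x, m)
    return a * b


def bootstrap_indirect(x, m, y, n_boot=1000, alpha=0.05, seed=0):
    """Point estimate and percentile bootstrap CI for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    draws = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample cases with replacement
        draws.append(indirect_effect([x[i] for i in idx],
                                     [m[i] for i in idx],
                                     [y[i] for i in idx]))
    draws.sort()
    lower = draws[int(alpha / 2 * n_boot)]
    upper = draws[int((1 - alpha / 2) * n_boot) - 1]
    return indirect_effect(x, m, y), (lower, upper)


# Simulated data (NOT the study's data): concerns -> attitudes -> intention,
# with a true indirect effect of 0.5 * (-0.4) = -0.2.
sim = random.Random(42)
concerns = [sim.gauss(0, 1) for _ in range(475)]
attitudes = [0.5 * c + sim.gauss(0, 1) for c in concerns]
intention = [-0.4 * a + 0.1 * c + sim.gauss(0, 1) for a, c in zip(attitudes, concerns)]
estimate, (ci_low, ci_high) = bootstrap_indirect(concerns, attitudes, intention, n_boot=500)
print(f"indirect = {estimate:.3f}, 95% CI [{ci_low:.3f}; {ci_high:.3f}]")
```

Resampling whole cases keeps each participant’s predictor, mediator, and outcome together. An interval that excludes zero is the usual criterion for a significant indirect effect.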

Results

Model Properties

Including our controls for age and gender, the overall models for app 1 (FE) explained 13.4% [.057; .192] of the variance (adjusted R²) in the intention to disclose health information, 16.2% [.083; .220] for personal information, and 20.8% [.128; .271] for budget information.
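For reference, the adjusted R² reported above is conventionally computed as follows. Here n is the sample size (N = 475) and k is the number of predictors of the dependent variable, including the age and gender controls. We assume SmartPLS applies this standard correction.

```latex
R^2_{\text{adj}} = 1 - \left(1 - R^2\right)\frac{n - 1}{n - k - 1}
```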

Attitude-Behavior Model (H1)

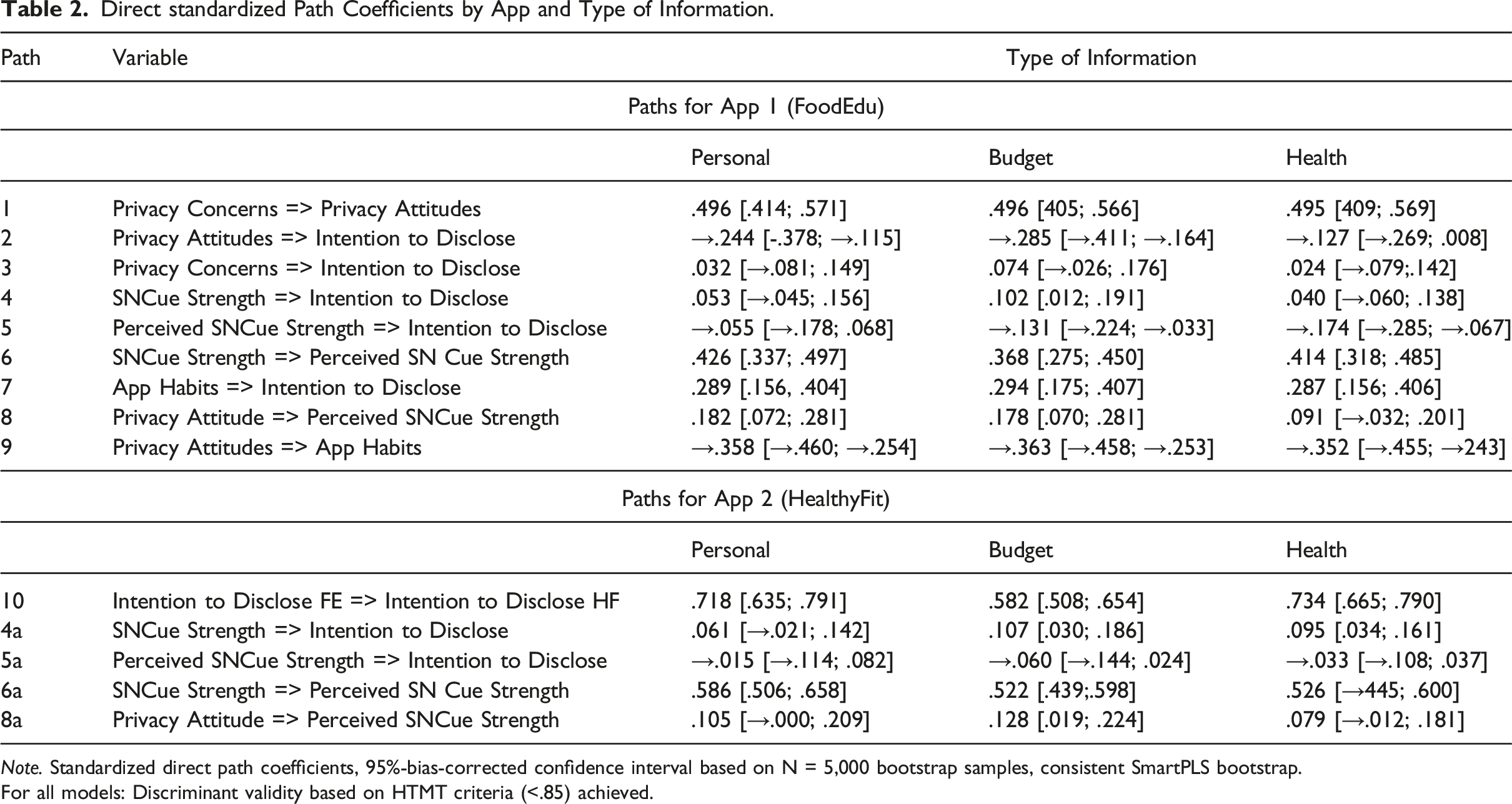

Direct Standardized Path Coefficients by App and Type of Information.

Note. Standardized direct path coefficients, 95% bias-corrected confidence intervals based on N = 5,000 bootstrap samples, consistent SmartPLS bootstrap.

For all models: Discriminant validity based on HTMT criteria (<.85) achieved.

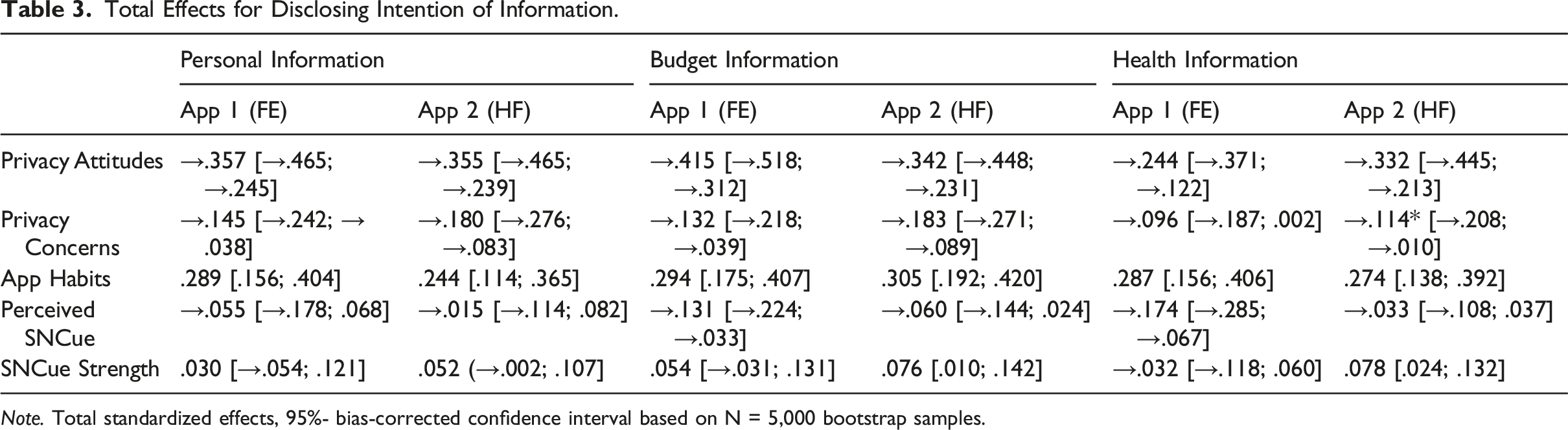

Total Effects for Disclosing Intention of Information.

Note. Total standardized effects, 95% bias-corrected confidence intervals based on N = 5,000 bootstrap samples.

Heuristics Model: The Impact of SNcues (H2, RQ1)

As expected in H2, SNcue strength had a positive influence on the intention to disclose information (path 4). The higher the share of other people who had disclosed a certain type of information (as manipulated through our three experimental conditions), the more types of information participants intended to disclose. However, this effect was significant only for budget information (p = .030) and was rather weak. Thus, we can confirm H2 only for budget information.

This overall rather weak and partly non-significant effect may have been due to a suppression effect of perceived SNcue strength. For all information types, we found a significant (p < .001), moderate, positive effect of SNcue strength on perceived SNcue strength (path 7). However, the effect of perceived SNcue strength on the intention to disclose information (path 6) was negative. This effect was significant for health (p = .003) and budget information (p = .008) but not for personal information (p = .391). For total effects, the effects of perceived SNcue strength remained the same, whereas absolute SNcue strength was not a significant predictor (p > .1) for any of the three types of information (Tables 2 and 3). In answer to RQ1, perceived SNcue strength suppresses the effects of absolute SNcue strength on information disclosure.
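The suppression argument can be made explicit by decomposing the total effect of absolute SNcue strength. In the research model, SNcue strength reaches the intention to disclose directly (path 4) and indirectly via perceived SNcue strength (paths 7 and 6). The numbers in the second line are illustrative only and are not estimates from this study.

```latex
\begin{aligned}
\text{total effect}_{\text{SNcue}} &= p_4 + p_7\,p_6, \qquad p_4 > 0,\; p_7 > 0,\; p_6 < 0 \;\Rightarrow\; p_7\,p_6 < 0 \\
\text{e.g., } p_4 = .10,\; p_7 = .40,\; p_6 = -.20 &\;\Rightarrow\; .10 + (.40)(-.20) = .10 - .08 = .02
\end{aligned}
```

Because the indirect term carries the opposite sign of the direct effect, the total effect can shrink toward zero and lose significance even when path 4 is positive.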

Effects of App Use Habits (H3)

Across all models, app use habits (H3; path 5) were a significant (p<.001) medium-sized positive direct predictor of information disclosure (Table 2): The more people were used to downloading and using apps, the more they were willing to disclose information about themselves. We can confirm H3 for all three types of information.

Relationships between attitudes, SNcue strength, and app use habits (RQ2, 3)

With RQ2 and RQ3, we further investigated the influence of privacy attitudes on perceived SNcue strength (RQ2) and app habits (RQ3). The findings underline the crucial role of privacy attitudes. Privacy attitudes had a significant positive effect (p < .001) on perceived SNcue strength for budget and personal information but not for health information (p = .128) (path 8). In answer to RQ2, this means that some users have high, that is, critical, privacy attitudes and are confronted with a high number of other users disclosing a certain type of information. For these users, this may activate higher levels of perceived SNcue strength, which will then reduce the likelihood of disclosing personal and budget information. In answer to RQ3, privacy attitudes were also a significant (p < .001) negative predictor of app habits (path 9, Table 2). This leads to a suppression effect of privacy attitudes on the effect of app habits on the intention to disclose information, as the total effect size for app habits is lower than the observed direct effects (Table 3).
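The direct, indirect, and total effects discussed in this section follow the path-tracing rule for recursive path models. A predictor’s total effect equals the sum, over every directed path to the outcome, of the product of the coefficients along that path. The Python sketch below applies this rule to the structure of the research model. All coefficient values are invented for illustration. Mapping the edges among concerns, attitudes, and intention onto paths 1–3 is our assumption. SmartPLS computes total effects from its own estimates; the sketch only shows the arithmetic behind them.

```python
# Hypothetical standardized coefficients (NOT the study's estimates). Edges follow
# the research model; numbers 4-9 are the article's path labels, while the edges
# among concerns, attitudes, and intention stand in for paths 1-3 (mapping assumed).
PATHS = {
    ("concerns", "attitudes"): 0.50,
    ("concerns", "intention"): -0.05,
    ("attitudes", "intention"): -0.30,
    ("sncue", "intention"): 0.10,             # path 4
    ("habits", "intention"): 0.35,            # path 5
    ("perceived_sncue", "intention"): -0.20,  # path 6
    ("sncue", "perceived_sncue"): 0.40,       # path 7
    ("attitudes", "perceived_sncue"): 0.15,   # path 8
    ("attitudes", "habits"): -0.25,           # path 9
}


def total_effect(source, target, paths=PATHS):
    """Sum, over every directed path from source to target, of the product
    of its coefficients. Assumes a recursive model (no feedback loops)."""
    if source == target:
        return 1.0
    return sum(
        coef * total_effect(child, target, paths)
        for (parent, child), coef in paths.items()
        if parent == source
    )


for predictor in ("concerns", "attitudes", "habits", "sncue", "perceived_sncue"):
    print(predictor, total_effect(predictor, "intention"))
```

With these made-up values, the total effect of privacy attitudes is -0.30 + 0.15 × (-0.20) + (-0.25) × 0.35 = -0.4175. The indirect paths via perceived SNcue strength and app habits therefore add to the direct path rather than being captured by it.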

Testing the stability of the identified effects

Comparing findings for different types of information, we see a stable pattern, with privacy attitudes and app habits as the strongest predictors. Absolute SNcues played a significant role only for budget information, and even this effect disappeared when total effects were considered. For total effects, perceived SNcue strength remained a significant predictor for health and budget information. Overall, the intention to disclose personal information is best explained by privacy attitudes and app habits and is less likely to be influenced by SNcues than health or budget information. The intention to disclose health information is not only the least explained by our model but also the type of information for which perceived SNcue strength plays a more substantial role (Tables 2 and 3).

To further test the stability of our analysis and to overcome the problem of a single-stimulus study, we integrated data from the second test, that of the HF app, into the model. To do so, we added a direct path from information disclosure in app 1 (FE) to app 2 (HF) (Figure 2, path 10). We added paths to re-analyze H1 (paths 2a, 3a), H2 (path 4a), and H3 (path 5a) as well as RQ1 (paths 6a, 7a) and RQ2 (path 8a). These were based on the manipulation of SNcue strength and the respective perceived SNcue strength for the second app (HealthyFit). The dependent variable was now information disclosure (personal, budget, health) in app 2 (HF) (see Figure 2).

Figure 2. Schematic Research Model for Data Analysis: App 1 and App 2. Note. Schematic of the SEM with predicted paths for two apps; effects of age and gender on the intention to disclose information in App 1 and App 2 are controlled for.

Our findings suggest that disclosing behavior in one app affects disclosing behavior in another app. The direct path from information disclosure in app 1 (FE) to app 2 (HF) (path 10) shows a strong direct effect of previous information disclosure (app 1) on subsequent information disclosure (app 2) (Table 2). On a statistical level, this implies that path 10 mediated potential effects of the other predictors on the intention to disclose information in app 2. However, for investigating the stability of our findings, we were not interested in this mediation through prior information disclosure. Instead, we compared the total effects of the predictors in our model, with the exception of the paths relating to the influence of the SNcues (paths 4a, 6a, 7a) on information disclosure. Adding the direct effect of prior information disclosure allowed us to control for potential sequence and learning effects of our two stimulus presentations. Tables 2 and 3 also include the relevant paths and the total effects for our analysis of app 2 (HF).

For privacy attitudes, privacy concerns, and app habits, we almost completely replicated the findings from our original model, thus confirming H1 and H3 again: privacy attitudes are stable, medium-sized, negative predictors of information disclosure; the effects of privacy concerns are weaker and almost fully mediated through privacy attitudes; and app habits are stable, medium-sized, positive predictors for all types of information disclosure.

Regarding H2, absolute SNcue strength again had a significant (p = .004), weak, positive effect on the intention to disclose budget information, but this time also on the intention to disclose health information. As in app 1, the effect on personal information was non-significant. Again, we can only partially confirm H2. Regarding RQ1, we observed a significant, positive, medium-sized effect of SNcue strength on perceived SNcue strength. Yet, in contrast to app 1, we found no direct effect of perceived SNcue strength on the intention to disclose information, and the total effects of SNcues on the intention to disclose budget and health information remained significant. We see this as indicative of a complex relationship between perceived and absolute SNcue strength. Even though perceived cue strength is likely to suppress effects of absolute SNcue strength, in the case of our second app the positive effects of SNcue strength on the intention to disclose remained significant, at least for health and budget information, even if the effect sizes were rather small. This means that findings related to H2, that is, the effect of SNcue strength, do depend on circumstances such as the app involved or the type of information being disclosed.
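The suppression pattern described above (a positive direct effect of the manipulated cue that is offset once a mediator with an opposite-signed effect is modeled) can be illustrated with a minimal Python sketch. It is not the SEM estimated in this study: it simulates hypothetical data and recovers the paths by ordinary least squares. The three-level coding of cue strength (0/1/2 for low/medium/high), the path values `a`, `b`, `c`, and the helper names `simulate` and `path_estimates` are assumptions chosen for illustration only, and none of the numbers stem from our data.

```python
import random
from statistics import fmean


def cov(u, v):
    """Sample covariance of two equal-length sequences."""
    mu, mv = fmean(u), fmean(v)
    return sum((ui - mu) * (vi - mv) for ui, vi in zip(u, v)) / (len(u) - 1)


def simulate(n, a, b, c, seed=1):
    """Hypothetical data: cue x -> perceived strength m -> disclosure y, plus direct x -> y."""
    rng = random.Random(seed)
    # Assumed coding of the manipulated cue: 0 = low, 1 = medium, 2 = high.
    x = [rng.choice([0, 1, 2]) for _ in range(n)]
    m = [a * xi + rng.gauss(0, 1) for xi in x]  # path a: cue -> perceived strength
    # path c: direct cue effect; path b: perceived strength -> disclosure
    y = [c * xi + b * mi + rng.gauss(0, 1) for xi, mi in zip(x, m)]
    return x, m, y


def path_estimates(x, m, y):
    """OLS estimates of a (m ~ x), b and c' (y ~ x + m), and the total effect (y ~ x)."""
    sxx, smm, sxm = cov(x, x), cov(m, m), cov(x, m)
    sxy, smy = cov(x, y), cov(m, y)
    a_hat = sxm / sxx
    det = sxx * smm - sxm ** 2
    direct = (sxy * smm - smy * sxm) / det  # coefficient of x controlling for m
    b_hat = (smy * sxx - sxy * sxm) / det   # coefficient of m controlling for x
    total = sxy / sxx                       # simple slope of y on x
    return {"a": a_hat, "b": b_hat, "direct": direct,
            "indirect": a_hat * b_hat, "total": total}


# Hypothetical paths: the cue raises perceived strength (a > 0), perceived strength
# lowers disclosure (b < 0), and the direct cue effect is positive (c > 0).
x, m, y = simulate(n=20_000, a=0.5, b=-0.4, c=0.2)
est = path_estimates(x, m, y)
print({k: round(v, 3) for k, v in est.items()})
```

With these hypothetical values, the estimated direct path stays close to 0.2, whereas the total effect (c + a·b = 0.2 + 0.5 × (−0.4)) lies close to zero, mirroring how an effect of absolute cue strength can vanish once perceived cue strength is modeled. For OLS with a single mediator, the identity total = direct + a·b holds exactly, which makes it a convenient consistency check.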

Discussion

Disclosing personal information is an integral part of mHealth app use, and giving away such data can be seen as a way of “paying” for the use of such apps. Yet privacy research has struggled to explain this trade-off, asking whether the process of disclosing personal information is a conscious one, guided by one’s privacy attitudes (Dienlin & Trepte, 2015; Dinev & Hart, 2006), or a subconscious one, guided by heuristic-driven or automated decision-making (Acquisti et al., 2020; Kim & Sundar, 2014). Our answer to this question is that combining approaches from both domains helps to better explain users’ disclosing intentions in mHealth apps. We therefore modeled the relationship of privacy attitudes and concerns with the intention to disclose information and complemented this framework with (a) potential effects of social norms as heuristic cues (Spottswood & Hancock, 2017) and (b) habitual app use (Quinn, 2016). Our results show that each of these approaches adds value to our understanding of privacy decision-making in mHealth apps and that they should be viewed as complementary.

Consequently, we conclude the following. First, the differentiation between general privacy concerns and specific privacy attitudes suggested by Dienlin and Trepte (2015) increases the explanatory power of privacy research models. Privacy attitudes, if measured to reflect attitudes towards specific behaviors, account for a large proportion of the overall explained variance, regardless of the type of information considered. Our findings highlight the crucial role privacy attitudes play for information disclosing intentions, as they almost fully mediate the effects of privacy concerns on information disclosure. Regarding effect sizes, the direct path coefficients of privacy attitudes on information disclosure in our models are at the upper bound of what Baruh et al. (2017) found in their meta-analysis. When we consider not only the direct paths but also the indirect paths from privacy attitudes to information disclosing intention via app habits and via perceived cue strength, the total effects are substantially stronger. Research is therefore well advised to investigate not only the direct effects of privacy attitudes on the intention to disclose information but also their indirect effects. Complementing recent attempts to test the relationship between attitudes and concerns from a longitudinal perspective that accounts for within-person effects (Dienlin et al., 2021), our study provides further evidence that the privacy paradox, understood as an attitude-behavior gap, is not as paradoxical as it appears but rather a matter of how the influence of privacy attitudes on (intentions of) information disclosure is modeled and measured.
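The decomposition into direct and indirect paths can be written as a standard path-tracing identity. The symbols below are illustrative labels, not the path numbering used in Figure 2: PA denotes privacy attitudes, H app habits, P perceived SNcue strength, and ID the intention to disclose; c′ is the direct path from PA to ID, a_H and a_P are the paths from PA to the two mediators, and b_H and b_P are the paths from the mediators to ID.

```latex
\mathrm{TE}_{PA \to ID}
  = \underbrace{c'}_{\text{direct}}
  + \underbrace{a_H \, b_H}_{\text{via app habits}}
  + \underbrace{a_P \, b_P}_{\text{via perceived SNcue strength}}
```

Assuming the signs reported in this article, that is, c′ < 0 (privacy attitudes negatively predict disclosure), a_H < 0 (privacy attitudes relate to lower habitual use), and b_H > 0 (habits positively predict disclosure), the habit term a_H b_H is negative and adds to the direct effect. The total effect is therefore larger in magnitude than c′ unless the cue term a_P b_P offsets it.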

A second takeaway from our study relates to integrating social norms into the framework not by measuring them through self-report but by manipulating social norm cue strength. Here, our research extends existing approaches on social norm cues for privacy management (Acquisti et al., 2012; Kim & Sundar, 2014; Spottswood & Hancock, 2017). To our knowledge, however, our research is the first to consider the subjective evaluation of cue strength in addition to its objective value. Again, our findings are rather stable and highlight the importance of this step: potential effects of social norm cues can disappear once subjective cue strength is considered. In particular, for health information in our food app, perceived cue strength becomes a significant, negative predictor of intentions to disclose information. More research is needed to understand under which circumstances social norm cues play a more or less important role. Numerical indications of other users disclosing a certain type of information do not seem to trigger social norm cues on their own; they need to be viewed in relation to how strong such a number is perceived to be. Users will be more likely to follow such a cue if it is in line with their expectations. As a methodological consequence, manipulations of social norm cue strength should take this subjective notion into account, for instance by setting absolute cue strength based on a prior inquiry into how likely users are to disclose certain types of information.

A third conclusion from our research is the crucial, and as yet often neglected, role of app use habits in privacy decision-making. App habits explained a substantial amount of variance for all types of information disclosure. On a more exploratory note, we also found that app habits and privacy attitudes are related. We proposed that privacy attitudes lead to lower habitual app use. The argument behind this assumption is that awareness of one’s mobile privacy might be expressed through an overall reduced habitual use of apps. Importantly, the argument could be reversed: privacy attitudes may not be a predictor but a function of lower habitual use. That is, users whose behavior is less guided by automatic components of decision-making could be more conscious of other factors, such as their attitudes. Because our design was cross-sectional, we were not able to test these competing views on the role of habits. This would require longitudinal data and appropriate models, such as the random intercept cross-lagged panel model (Hamaker et al., 2015), which we see as a promising avenue for further research.
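As a sketch of what such a test could look like, the random intercept cross-lagged panel model of Hamaker et al. (2015) separates stable between-person differences (random intercepts) from within-person fluctuations. Applied hypothetically to app habits H and privacy attitudes A for person i at wave t, with wave-specific grand means μ_t and π_t and random intercepts κ_i and ω_i, the model can be written as:

```latex
\begin{aligned}
H_{it} &= \mu_t + \kappa_i + p_{it}, \qquad A_{it} = \pi_t + \omega_i + q_{it},\\
p_{it} &= \alpha_t \, p_{i,t-1} + \beta_t \, q_{i,t-1} + u_{it},\\
q_{it} &= \delta_t \, q_{i,t-1} + \gamma_t \, p_{i,t-1} + v_{it}.
\end{aligned}
```

Here β_t captures whether a person’s above-average privacy attitudes at wave t−1 precede changes in that person’s habitual use at wave t, and γ_t captures the reverse direction. Under this sketch, the view that privacy attitudes lower habitual use predicts β_t < 0, whereas the reversed view predicts γ_t < 0; because both cross-lagged paths are estimated simultaneously, the two views need not exclude each other.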

Lastly, while our results are stable overall, we observe subtle differences depending on which app is used and which type of information is (intended) to be disclosed. We regard this as a finding in itself and as a challenge for future research at both the theoretical and the methodological level. Our findings are limited to the specific case of mHealth apps and the information this type of app requires. A broader, more generalizable model may have greater difficulty accounting for the effects and effect sizes we found. Kokolakis (2017) already concluded that studies on the privacy paradox are well advised to be specific with respect to the types of information to be disclosed. However, this implies that studies on privacy decisions in digital environments are likely to suffer from a lack of generalizability, and that a more generalized model of information disclosure is very unlikely to be helpful in explaining specific cases of information disclosure. In this regard, the type of disclosed information may be viewed as a moderator variable. Although our study design required us to analyze the three types of information disclosure separately rather than including information type as a moderator, we observe a few still unexplained differences between the information types.

On a theoretical level, we presented and tested a research model that integrates several streams of privacy research and is therefore eclectic in nature. We deliberately focused on specific approaches to explaining individual privacy behaviors and information disclosure intentions while leaving other approaches out. Other recent approaches to the seemingly paradoxical behavior related to privacy decision-making have identified privacy fatigue and privacy cynicism as additional explanatory factors that capture users’ perceptions of diminished agency and feelings of powerlessness regarding how platform or service providers handle their data (Lutz et al., 2020; Zhu et al., 2021). Given that previous research found privacy fatigue to be a stronger explanatory factor for privacy behaviors than privacy concerns (Cho et al., 2018), we wonder how this concept relates to privacy attitudes and app habits, as both were also found to be stronger predictors of information disclosure intention than privacy concerns. We could expect that part of the variance explained by privacy fatigue is already accounted for by more habitual app use, as a consequence of investing less cognitive effort in protecting one’s privacy.

Regarding the design of our study, and in line with a substantial amount of privacy research, we were not able to observe actual privacy behavior but only behavioral intention, which is a strong but far from perfect predictor of actual behavior. Therefore, our model needs to be tested in a setting with actual observational data, which would be a considerable challenge for researchers to gather.

Furthermore, because our study was cross-sectional, we have to be careful with respect to causal claims: although the direction of effects in our model was theoretically grounded, reciprocal effects may still play a role.

Finally, our study provides further evidence that the so-called privacy paradox can be resolved by investigating the complex relationships between both attitudinal and circumstantial factors of online and mobile privacy. On a theoretical level, it makes sense to differentiate between privacy concerns and attitudes and to integrate effects of habitual app use. On a methodological level, we encourage researchers to build on the experimental approaches put forward in the tradition of heuristic-driven decision-making research. For integrating social norm cues as predictors, we specifically recommend accounting for the subjective component of perceived cue strength.

Supplemental Material

Supplemental Material - Disclosing Personal Information in mHealth Apps. Testing the Role of Privacy Attitudes, App Habits and Social Norm Cues

Supplemental Material for Disclosing Personal Information in mHealth Apps. Testing the Role of Privacy Attitudes, App Habits and Social Norm Cues by Leyla Dogruel, Sven Joeckel, and Jakob Henke in Social Science Computer Review.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.
