Abstract

Online discussions about politics and current events play a growing role in public life, and they can foster both positive outcomes (e.g., civic engagement and political participation) and negative outcomes (e.g., hostility and polarization). The present research examines how the use of doubtful (vs. confident) language influences behavior in online discussions of current events. Using data from 1.9 million user comments on the New York Times website, we examine the effects of doubtful language on comment popularity (i.e., recommendations from other users) and on the use of emotional language in subsequent replies. Comments containing doubtful language were less popular, receiving fewer user recommendations. Additionally, replies to doubtful comments were less emotional, containing fewer positive emotions and fewer negative emotions. These results suggest that although doubtful comments are less likely to be recommended by other users, their authors may play an important role in helping to foster civility in online discussions.

People use online news sources to learn about current events and politics (Pew Research Center, 2020), and these platforms enable users to actively engage with the news by sharing content and participating in online discussions (Kalogeropoulos et al., 2017). While some research has argued that online discussions stimulate civic engagement and political participation (Boulianne, 2016), other work has found that online discussions can have negative social consequences, such as ideological polarization (Yarchi et al., 2020) as well as harassment and flaming behaviors (Su et al., 2018). As online discussions about politics and current events play a growing role in public life, it is important to understand how social psychological processes shape user behavior on these platforms. In particular, both users (who hope to influence others) and publishers (who want to promote user engagement and discourage toxic behavior) need to know which online comments are most likely to become popular and spread to wide audiences, and which comments are most likely to evoke strong emotional reactions from other users.

We examine how the use of doubtful (vs. confident) language influences behavior in online discussions of current events. People use the presence (or absence) of doubt to make inferences about the intelligence and moral character of others (Critcher et al., 2013; Pulford et al., 2018). Doubt may be particularly relevant in online discussions of current events, as expressions of doubt influence how people perceive politicians (Dumitrescu et al., 2015) and scientific experts (Hollin & Pearce, 2015). We use data from New York Times user comments (Keserwani, 2018) to investigate two research questions: whether doubtful comments are more (or less) likely to be recommended by other users, and whether replies to doubtful comments are less emotional.

Social Processes in Online News Discussions

People use the Internet to learn about and discuss current events. For example, one in five U.S. Americans use the Internet as a primary source for political news (Pew Research Center, 2020). Of those users, many (30%–40%) engage in online discussions of news stories (Pew Research Center, 2016). Participating in online news discussions provides users with an opportunity to have social contact with other users and journalists (Springer et al., 2015). Previous research suggests that commenters are influenced by a range of psychosocial motives, such as emotion regulation (Harber & Cohen, 2005), impression management (Lo & McKercher, 2015), and the desire to influence others (Hong & Cameron, 2018). There is also substantial heterogeneity in how commenters interact, with online discussions ranging from respectful debate (Han & Brazeal, 2015; Papacharissi, 2004) to sadistic provocation (e.g., online trolling and flaming; Buckels et al., 2019; Buckels et al., 2014).

We examine how the use of doubtful language influences two outcomes in news discussions: comment popularity (i.e., the extent to which comments are recommended by other users) and emotionality (i.e., the presence of emotional language in discussions). In online discussions, the majority of user-generated content receives very little attention, and it is difficult to predict, a priori, which content will become popular (Salganik et al., 2006; Salganik & Watts, 2009). Online systems tend to produce extremely unequal outcomes (i.e., a very small percentage of content receives a very large amount of attention), as both social influence processes and underlying content curation algorithms draw user attention toward popular content (Bond et al., 2012; Sridhar & Srinivasan, 2012). Understanding which comments become popular is important for users (who hope to make popular contributions) and for online platforms (which want to present users with content that they will find interesting or appealing).

In addition to understanding which comments are more likely to become popular, it is important to understand which comments provoke emotional discussions. Previous research has found that emotions, once activated, spread through social networks, a phenomenon known as emotion contagion (Goldenberg & Gross, 2020; Rosenbusch et al., 2018). In particular, researchers have noted that negative emotions, such as anger, spread quickly in online networks (Crockett, 2017). Negative emotions, in turn, are associated with ideological extremity (Frimer et al., 2019) and are a precursor of antisocial online behaviors, such as public shaming (Basak et al., 2019), flaming (i.e., posting offensive or insulting remarks on social media; Kayany, 1998; Moor et al., 2010), and other forms of online harassment (Lewis et al., 2020). Given these consequences, it is important to understand the factors that can inflame (or dampen) emotions in online discussions. We focus on doubt as one potential factor.

The Interpersonal Consequences of Expressing Doubt

Doubt is “the subjective uncertainty that people experience when assessing the correctness of their decisions, beliefs, or opinions” (van de Calseyde et al., 2018, p. 98). Doubt refers to the absence of confidence or certainty in decision making. Many studies have investigated doubt at an individual level, examining when people feel doubtful about their choices and the downstream consequences of feeling doubtful (Krosnick & Petty, 1995; Moore & Healy, 2008; van de Ven et al., 2010). For example, when people feel doubt they are less likely to choose normative alternatives (van de Ven et al., 2010), and feelings of postdecision doubt predict stronger feelings of regret (van de Calseyde et al., 2018). We are interested in the interpersonal effects of doubt, that is, how people react to others who express their opinions with doubt (or confidence). Prior research suggests that expressions of doubt influence the perceptions of politicians (Dumitrescu et al., 2015) and scientists (Hollin & Pearce, 2015), suggesting that doubt is an important metacognitive cue that people use to judge the intentions and competencies of others.

Research on the confidence heuristic suggests that people often perceive confidence as an indicator of speaker expertise and doubt as an indicator of a lack of expertise (Price & Stone, 2004; Pulford et al., 2018; Thomas & McFadyen, 1995). Given two comparable advisors, people are initially more willing to trust the advisor who expresses a greater degree of confidence, as confidence is often correlated with knowledge and expertise (Pulford et al., 2018). Note, however, that calibrated beliefs are preferred over unconditionally confident beliefs in the long run (Tenney et al., 2007; Tenney et al., 2018; Tenney et al., 2011). In the presence of feedback and evidence of past performance, people prefer advisors who properly calibrate their levels of uncertainty (Tenney et al., 2018). Confidence is beneficial in first impressions but leads to negative evaluations if it is not backed up with results (Heck & Krueger, 2016; Tenney et al., 2018).

At the same time, there are some contexts in which doubtful advisors are preferred over confident advisors (Evans et al., 2021; Karmarkar & Tormala, 2010; van de Calseyde et al., 2014). Specifically, doubt is perceived as a sign of authenticity or trustworthiness when people interact with advisors in low-trust environments (Evans et al., 2021). For example, in the domain of online consumer reviews, many readers are concerned about the possibility of fake positive reviews provided by bots or restaurant owners (Luca & Zervas, 2016). Given these concerns, readers are more likely to see doubtful (vs. confident) positive reviews as authentic, as fake reviewers are unlikely to express any uncertainty or reservations in their advice (Evans et al., 2021). Doubtful advice is also associated with increased trust and persuasion when it is unexpected; doubt is beneficial when it is expressed by a trusted expert but not by a novice (Karmarkar & Tormala, 2010). Whereas confidence is sometimes seen as a sign of competence, doubt is sometimes taken as a sign of authenticity or trustworthiness.

In addition to the above findings, expressions of doubt influence whether people have extreme (or moderate) reactions to others’ decisions. For example, someone who engages in an immoral behavior (keeping a lost wallet) is judged less negatively if they express some doubt about the decision (Critcher et al., 2013). Similarly, someone who acts morally (e.g., engaging in prosocial behavior) is judged less positively if they are perceived to be doubtful about the choice (Jordan et al., 2016; van de Calseyde et al., 2020). In other words, conveying doubt reduces blame for negative actions and praise for positive actions. Consequently, expressions of doubt in online discussions might reduce the emotional intensity of replies.

The Present Research

We report a pre-registered study to examine the effects of doubtful user language on comment popularity (recommendations from other users) and subsequent online discussion (the emotionality of user replies).

Question 1: Doubt and Comment Popularity

Our first research question is whether people are more likely to recommend doubtful (vs. confident) comments. Previous research on expressions of doubt gives rise to two competing hypotheses. Research on the confidence heuristic suggests that doubtful advisors are seen as less credible in one-shot interactions, where there is no opportunity for confident advisors to be proven wrong (Tenney et al., 2007; Tenney et al., 2018; Tenney et al., 2011). This view suggests that doubtful authors should be seen as less credible (and less likely to be recommended) than confident authors. On the other hand, there is also evidence for the competing hypothesis that doubtful comments should be more likely to be recommended: Doubt can serve as a signal of authenticity or trustworthiness in low-trust environments (Evans et al., 2021; van de Calseyde et al., 2014). If readers are concerned about the authenticity and potential biases of online commenters (Luca & Zervas, 2016; Zimmer et al., 2019), then they may respond positively to authors who openly express uncertainty about their opinions. To test these competing hypotheses, we examine the relationship between doubt and the number of subsequent “user recommendations” of each comment.

Question 2: Doubt and Reply Emotionality

Our second research question is whether responses to comments containing doubtful language are less emotional than responses to comments containing confident language. Our hypothesis is that comments containing doubtful language will evoke weaker emotional responses (in terms of both positive emotions and negative emotions) than comments containing confident language. This hypothesis is based on previous research showing that reactions to doubtful decisions were less extreme than reactions to confident decisions (Critcher et al., 2013; Jordan et al., 2016). In the context of online discussions, we expect that doubtful comments will evoke weaker positive reactions and weaker negative reactions. In turn, these mitigated reactions should make downstream replies to doubtful comments less emotional than replies to confident comments. To test this hypothesis, we examine the relationship between the doubtful language of initial user comments and the use of emotional language in subsequent replies.

Exploratory Analyses

We also conducted two sets of exploratory analyses: First, we compared the effect sizes of doubt (on recommendations and reply emotionality) with the effect sizes for other psychological variables measured using language. This helps put the effect sizes we observe in context with the effect sizes of other plausible predictor variables. Second, we examined how article content influenced the prevalence of doubt in user comments, and whether article content moderated the effects of doubtful comment language on subsequent user behavior. Specifically, we tested whether the effects of doubt differed for political and nonpolitical discussions. Arguably, due to the high level of polarization in political discussions (Gruzd & Roy, 2014), expressions of doubt might be relatively uncommon and less influential in the domain of politics.

Method

The preregistration for our study is available at https://osf.io/qjxfz/?view_only=38855efb6bd34b64bdc134c0c91d3824

Data Set

We used the “New York Times Comments” data set (Keserwani, 2018). The data set consists of the comments made on the articles published in the New York Times in January–May 2017 and January–April 2018. The data set contains over 2 million user comments (and replies to comments) related to over 9,000 articles collected over a period of 9 months. These data were originally collected using the New York Times Developer API (https://developer.nytimes.com/apis).

Prior to preregistering our study, we used 1 month’s worth of data (April 2017; 243,831 user comments from 886 articles, or 11.2% of the total data set) for exploratory purposes. More specifically, we downloaded the “ArticlesApril2017.csv” and “CommentsApril2017.csv” files from the online repository and did not access any of the remaining data files. We examined the distributions of our primary dependent and independent variables, and we identified which analyses would be possible with the available data. Importantly, we did not test our models of interest, and these data were not included in our final analyses.

The remaining data set consisted of 1,932,532 comments (1,422,478 initial comments on articles and 510,043 replies to other user comments). The comments were written by a total of 223,289 unique users and they were associated with a total of 8,449 articles from 44 different sections of the paper (e.g., Editorial, Arts & Leisure, Business).

Data Processing

The original data set consisted of a set of CSV files containing the comments and articles published during each month. We downloaded and merged these files across months and then removed all variables that could potentially be used to identify the authors of comments (e.g., data related to the usernames and locations of authors). Then, comment texts were processed using the Linguistic Inquiry and Word Count (LIWC) software (Pennebaker et al., 2015). LIWC scores texts based on how often they contain terms from predefined dictionaries. Dictionary scores range from 0 to 100 and indicate the percentage of words in a text that belong to a given dictionary. The software also calculates several summary scores and variables related to length and linguistic complexity; descriptions of these variables are reported below.
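As a rough illustration of how dictionary-based scores of this kind work, the following Python sketch computes the percentage of words in a text that belong to a word list. The two mini-dictionaries are hypothetical stand-ins (the actual LIWC dictionaries are proprietary, much larger, and also match word stems), and the regular-expression tokenizer is a simplifying assumption rather than LIWC's own procedure.

```python
import re

# Hypothetical mini-dictionaries for illustration only. The real LIWC
# dictionaries are proprietary, much larger, and also match word stems.
TENTATIVE = {"maybe", "perhaps", "might", "possibly"}
CERTAIN = {"always", "never", "definitely", "certainly"}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens (a simplified tokenizer)."""
    return re.findall(r"[a-z']+", text.lower())


def dictionary_score(text: str, dictionary: set[str]) -> float:
    """Percentage (0-100) of words in `text` that appear in `dictionary`."""
    words = tokenize(text)
    if not words:
        return 0.0
    hits = sum(1 for word in words if word in dictionary)
    return 100.0 * hits / len(words)


comment = "Maybe the plan works, but it might never pass."
print(dictionary_score(comment, TENTATIVE))  # 2 of 9 words are tentative
print(dictionary_score(comment, CERTAIN))    # 1 of 9 words is certain
```

Because scores are percentages of the total word count, the same number of dictionary words yields a higher score in a short comment than in a long one.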

Expressions of Doubt

Our primary independent variable of interest was the extent to which comments contained doubtful versus confident language. Following the approach in Evans et al. (2021), we measured doubt by combining scores from two LIWC dictionaries: tentativeness (example words: “maybe” and “perhaps”; M = 2.70; SD = 3.23) and certainty (example words: “always” and “never”; M = 1.81; SD = 3.15). These dictionaries measured the percentages of tentative and certain words in a document; for example, a tentative score of 5 indicates that 5% of the words in a text were related to the concept of tentativeness.

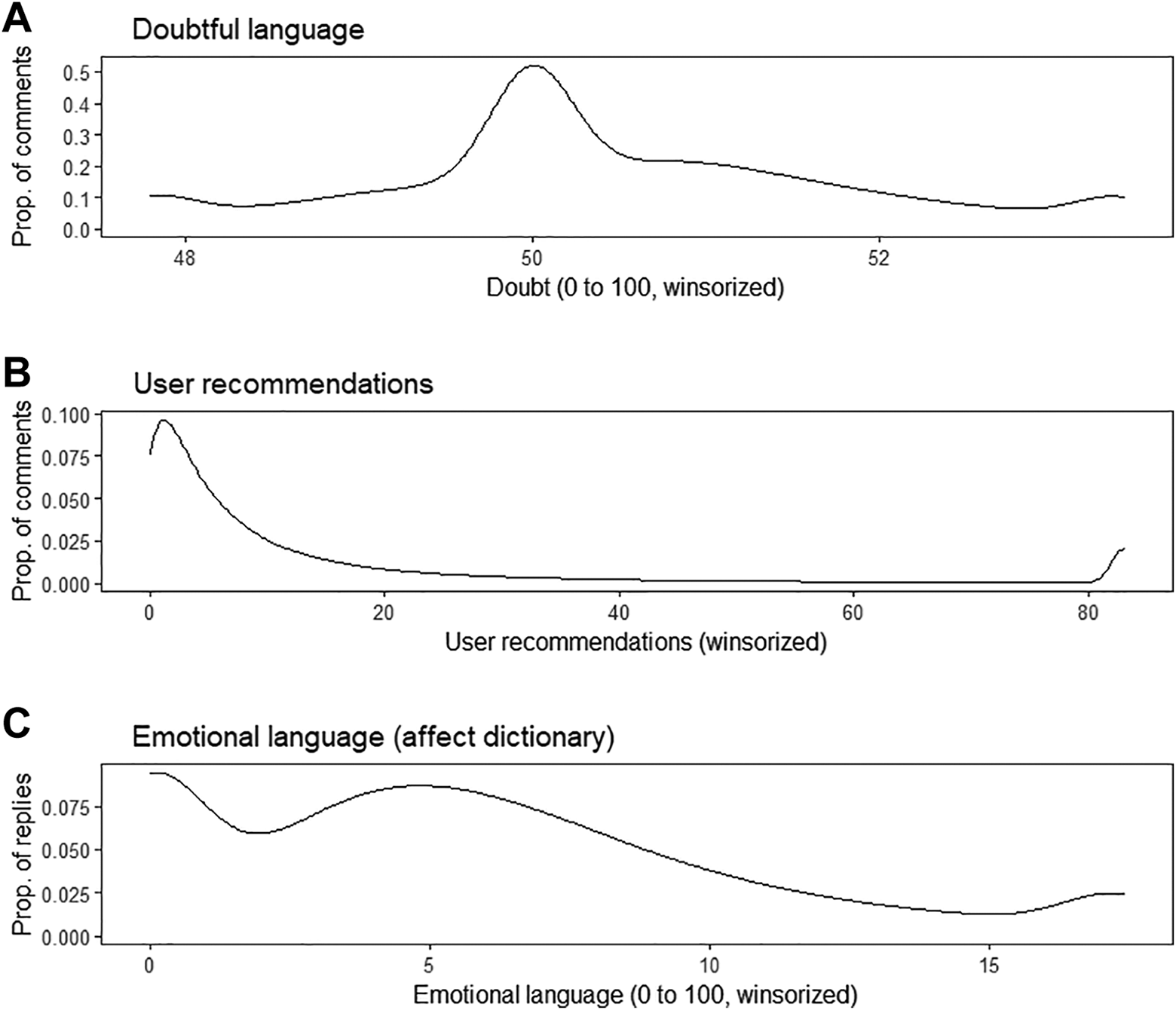

Doubt scores were calculated by averaging scores for tentativeness and reverse-scored certainty. Doubt scores ranged from 0 to 100, with an average of 50.44 (SD = 2.29). A doubt score of 50 is neutral (i.e., the comment contained equal proportions of tentative and certain words). The majority of comments (80.0%) contained at least one word from one of the two dictionaries used to create the measure of doubt. The distribution of doubt scores is reported in Figure 1A, and representative examples of high- and low-doubt comments are provided in Table 1.

Figure 1. The distributions of our primary independent variable ([A] doubt scores) and dependent variables ([B] user recommendations of initial comments and [C] emotional language in replies to comments). Note. These variables were winsorized for visualization (for doubt, the top 2.5% and bottom 2.5%; for recommendations and emotionality, the top 5%) but were not transformed in analyses.

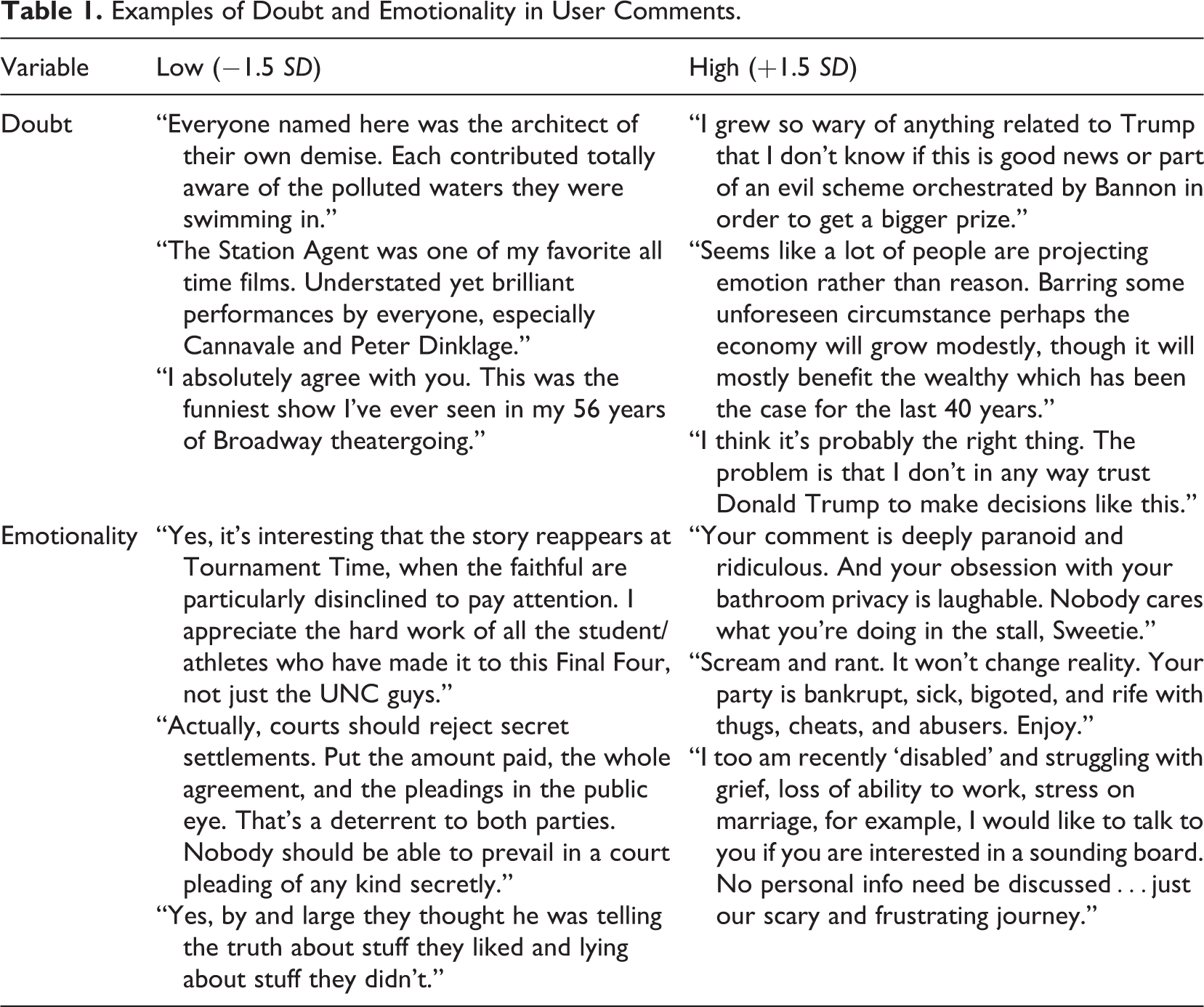

Table 1. Examples of Doubt and Emotionality in User Comments.
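The doubt score described above can be written as doubt = (tentative + (100 − certain)) / 2 = 50 + (tentative − certain) / 2, assuming that reverse scoring means subtracting the certainty score from 100. This assumption is consistent with the reported statistics: the average of the mean tentativeness score (2.70) and 100 minus the mean certainty score (1.81) is 50.445, which matches the reported mean of 50.44 up to rounding. A minimal Python sketch:

```python
def doubt_score(tentative: float, certain: float) -> float:
    """Average of tentativeness and reverse-scored certainty (0-100 scale).

    Assumes reverse scoring means 100 - certain, so the score equals
    50 + (tentative - certain) / 2 and 50 is the neutral point.
    """
    return (tentative + (100.0 - certain)) / 2.0


print(doubt_score(3.0, 3.0))    # equal shares of tentative and certain words: 50.0
print(doubt_score(10.0, 0.0))   # only tentative words: above 50
print(doubt_score(2.70, 1.81))  # the two reported dictionary means
```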

Content validation study

We conducted a study to test whether our measure of doubt correctly differentiated between high-doubt and low-doubt opinions. We recruited 400 U.S. American participants from Prolific and asked them to write about issues they felt either doubtful or confident about (in a between-subjects design). Participants were told to write about issues related to “current events, politics, or other social issues.” Texts about doubtful opinions received higher doubt scores (M = 51.7, SD = 1.66) than texts about confident opinions (M = 50.2, SD = 1.45), b = 1.49, β = 0.43, p < .001. Note that we use b to refer to unstandardized regression coefficients and β to refer to standardized coefficients. Moreover, there were significant differences in both of the subdictionaries used to create the measure of doubt: doubtful opinions (vs. confident opinions) contained more tentative language (b = 2.35, β = 0.42, p < .001) and less certain language (b = −0.62, β = −0.18, p < .001).
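For readers less familiar with the distinction between b and β, the sketch below uses hypothetical toy data (not the study's data) with a binary condition predictor (0 = confident, 1 = doubtful). With a 0/1 predictor, the ordinary least squares slope b equals the difference between the two group means, and β rescales b by SD(x)/SD(y). The exact procedure used to compute β in the study is not described here, so this is only one standard way to obtain it.

```python
from statistics import mean, pstdev


def ols_slope(x: list[float], y: list[float]) -> float:
    """Unstandardized slope b from a simple OLS regression of y on x."""
    mx, my = mean(x), mean(y)
    covariance = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    variance = sum((xi - mx) ** 2 for xi in x)
    return covariance / variance


def standardized_slope(x: list[float], y: list[float]) -> float:
    """Standardized slope beta = b * SD(x) / SD(y)."""
    return ols_slope(x, y) * pstdev(x) / pstdev(y)


# Hypothetical toy data: 0 = wrote about a confident opinion, 1 = doubtful opinion.
condition = [0, 0, 0, 1, 1, 1]
doubt = [50.0, 50.5, 49.5, 51.5, 52.0, 51.0]

b = ols_slope(condition, doubt)
beta = standardized_slope(condition, doubt)
print(b)     # equals mean(doubtful) - mean(confident) = 1.5
print(beta)
```

Using the population SD for both x and y keeps the ratio SD(x)/SD(y) identical to what sample SDs would give, so the choice does not affect β.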

Dependent Variables

We focused on two primary dependent variables: user recommendations of initial comments and the use of emotional language in replies to comments.

User recommendations

Our first dependent variable was the total number of times users voted to recommend initial comments (M = 26.07, SD = 123.57, skewness = 18.25, kurtosis = 594.22). We focused on recommendations of initial comments, N = 1,422,478, excluding recommendations of downstream replies to user comments. This variable was positively skewed, with the majority of comments (50.9%) receiving between zero and five recommendations. The distribution is illustrated in Figure 1B.

Emotionality in replies

Our second dependent variable was the use of emotional language (either positively or negatively valenced) in replies to comments. To measure the use of emotional language, we used the LIWC affect dictionary, which captures the total use of positive emotional language (posemo dictionary; example words: “love” and “nice”) and negative emotional language (negemo dictionary; example words: “hurt” and “nasty”), M = 6.39, SD = 7.77, skewness = 4.81, kurtosis = 44.99, N = 510,043. The use of emotional language was also positively skewed. The distribution of scores is shown in Figure 1C, and representative examples of high- and low-emotionality replies are reported in Table 1. We also report additional analyses that consider the two dictionaries separately.

Additional Measures

Psychological measures

We tested whether the effects of doubt were robust while controlling for other psychological aspects of language, focusing on four previously validated summary scores from LIWC. Previous research found that the analytical thinking dictionary (analytic) was correlated with academic success (Pennebaker et al., 2014), clout was correlated with social status (Kacewicz et al., 2014), authenticity was correlated with honesty (Newman et al., 2003), and tone was correlated with the expression of positive (or negative) emotions (Cohn et al., 2004). Note that the tone dictionary measures whether an opinion is positive or negative (i.e., its valence), whereas the affect dictionary measures whether a text contains any words related to emotions (i.e., the strength of the opinion).

Indicators of length and complexity

We also conducted analyses to control for differences in word count and linguistic complexity, the latter measured using the average number of words per sentence and the relative frequency of words with more than six letters (Hartley et al., 2003; Tausczik & Pennebaker, 2010).
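The sketch below approximates these three measures for a single text. The regular-expression tokenizer and the punctuation-based sentence splitter are assumptions for illustration and will not reproduce LIWC's internal rules exactly.

```python
import re


def length_and_complexity(text: str) -> dict[str, float]:
    """Word count, words per sentence, and % of words with more than six letters.

    The tokenizer and sentence splitter are simplifying assumptions and will
    not match LIWC's internal rules exactly.
    """
    words = re.findall(r"[A-Za-z']+", text)
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    word_count = len(words)
    long_words = sum(1 for w in words if len(w.replace("'", "")) > 6)
    return {
        "word_count": float(word_count),
        "words_per_sentence": word_count / len(sentences) if sentences else 0.0,
        "pct_long_words": 100.0 * long_words / word_count if word_count else 0.0,
    }


print(length_and_complexity("The committee postponed the vote. Nobody knows why."))
# {'word_count': 8.0, 'words_per_sentence': 4.0, 'pct_long_words': 25.0}
```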

Newspaper section

Initial articles came from 44 different sections of the paper (e.g., Editorial, Arts & Leisure, Business).

Analysis Strategy

We conducted our analyses using functions from R and Stata. Given the large sample sizes, confidence intervals and measures of significance were uninformative; all statistical tests of interest were significant at p < .001. Therefore, we omitted this information and focused on effect sizes.

Our two primary outcomes of interest (user recommendations and emotionality of replies) were both over-dispersed count variables with high levels of skewness and kurtosis (Figure 1B and C); therefore, in our primary analyses, we estimated a series of negative binomial regressions (Gardner et al., 1995). Descriptive statistics and zero-order correlations among our variables of interest are reported in the Supplemental Materials.
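A common parameterization of the negative binomial model (often called NB2) sets the variance to μ + αμ², where μ is the mean and α ≥ 0 is a dispersion parameter; α = 0 recovers the Poisson model, whose variance equals its mean. The section does not state which parameterization was used, so NB2 is an assumption here. The sketch computes an unconditional method-of-moments estimate of α from a mean and standard deviation (a fitted model with covariates would estimate a different α) and the NB2 log-probability of a count. Applied to the reported recommendation statistics (M = 26.07, SD = 123.57), the variance (about 15,270) is hundreds of times larger than the mean, far more than a Poisson model can accommodate.

```python
import math


def nb2_alpha_moments(mean: float, sd: float) -> float:
    """Method-of-moments NB2 dispersion: Var = mu + alpha * mu**2, so alpha = (Var - mu) / mu**2."""
    return (sd ** 2 - mean) / mean ** 2


def nb2_logpmf(y: int, mu: float, alpha: float) -> float:
    """Log-probability of count y under NB2 with mean mu > 0 and dispersion alpha > 0."""
    r = 1.0 / alpha  # "size" parameter; r -> infinity approaches the Poisson limit
    return (
        math.lgamma(y + r) - math.lgamma(r) - math.lgamma(y + 1)
        + r * math.log(r / (r + mu))
        + y * math.log(mu / (r + mu))
    )


# Reported recommendation statistics: M = 26.07, SD = 123.57.
mean_recs, sd_recs = 26.07, 123.57
print(sd_recs ** 2 / mean_recs)                         # variance-to-mean ratio (1 under Poisson)
print(round(nb2_alpha_moments(mean_recs, sd_recs), 2))  # unconditional alpha estimate
```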

Our preregistered approach was to estimate multilevel models with crossed random intercepts for initial articles, N = 8,449, and comment authors, N = 223,289. Due to the size of our data set and the complexity of our analyses, our planned models did not converge. As an alternative approach, we estimated negative binomial regressions with robust standard errors clustered at the level of comment author. We computed intraclass correlations (ICCs) to estimate the variance in user recommendations explained by differences in original articles and comment authors. Both ICCs were relatively small (original articles = .008; comment authors = .038). We clustered standard errors by comment author because this variable had the comparatively larger ICC. As a robustness check, we also estimated multilevel models using smaller (10%) subsets of our data. All of these analyses were consistent with our main results and are reported in our Supplemental Materials.
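The section does not specify which ICC estimator was used. As one common option, the sketch below computes the one-way random-effects ICC(1) from ANOVA mean squares, using the adjusted average group size n0 for unbalanced groups. The example data and author labels are hypothetical, and model-based ICCs from multilevel count models can differ from this linear estimate.

```python
def icc1(groups: dict[str, list[float]]) -> float:
    """One-way random-effects ICC(1) from ANOVA mean squares (unequal group sizes allowed).

    Requires at least two groups and more observations than groups.
    """
    values = [v for vs in groups.values() for v in vs]
    n_total, k = len(values), len(groups)
    grand_mean = sum(values) / n_total
    group_means = {g: sum(vs) / len(vs) for g, vs in groups.items()}
    ss_between = sum(len(vs) * (group_means[g] - grand_mean) ** 2 for g, vs in groups.items())
    ss_within = sum((v - group_means[g]) ** 2 for g, vs in groups.items() for v in vs)
    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (n_total - k)
    # n0 is the adjusted average group size used for unbalanced designs.
    n0 = (n_total - sum(len(vs) ** 2 for vs in groups.values()) / n_total) / (k - 1)
    return (ms_between - ms_within) / (ms_between + (n0 - 1) * ms_within)


# Hypothetical recommendation counts grouped by (hypothetical) comment author.
by_author = {
    "author_a": [2, 4, 3],
    "author_b": [10, 12],
    "author_c": [0, 1, 1, 2],
}
print(icc1(by_author))
```

A value near 0 means that knowing the author says little about a comment's recommendations; a value near 1 means that most of the variance lies between authors.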

We also conducted several additional checks to examine the consistency of our results. Specifically, we winsorized our dependent variables and estimated ordinary least squares regressions. The results from this approach were consistent with our negative binomial regressions (see Supplemental Materials).
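Winsorizing replaces extreme values with a cutoff value instead of dropping them. The cutoff used for these robustness checks is not stated in this section; the sketch below assumes an upper-tail cap at the 95th percentile (mirroring the top-5% winsorizing described for the Figure 1 visualizations) and uses the nearest-rank percentile, which is only one of several percentile definitions.

```python
import math


def winsorize_upper(values: list[float], upper_pct: float = 5.0) -> list[float]:
    """Cap values above the (100 - upper_pct)th percentile at that percentile.

    Uses the nearest-rank percentile definition; other definitions
    (e.g., linear interpolation) give slightly different cutoffs.
    """
    if not values:
        return []
    ordered = sorted(values)
    rank = max(1, math.ceil((100.0 - upper_pct) * len(ordered) / 100.0))  # 1-based rank
    cap = ordered[rank - 1]
    return [min(v, cap) for v in values]


# Hypothetical recommendation counts with one extreme value.
counts = [0, 1, 1, 2, 3, 3, 4, 5, 6, 8, 9, 10, 12, 15, 18, 20, 25, 30, 40, 2500]
print(winsorize_upper(counts))  # the extreme value 2500 is capped at 40
```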

Results

Confirmatory Analyses

Doubt and user recommendations

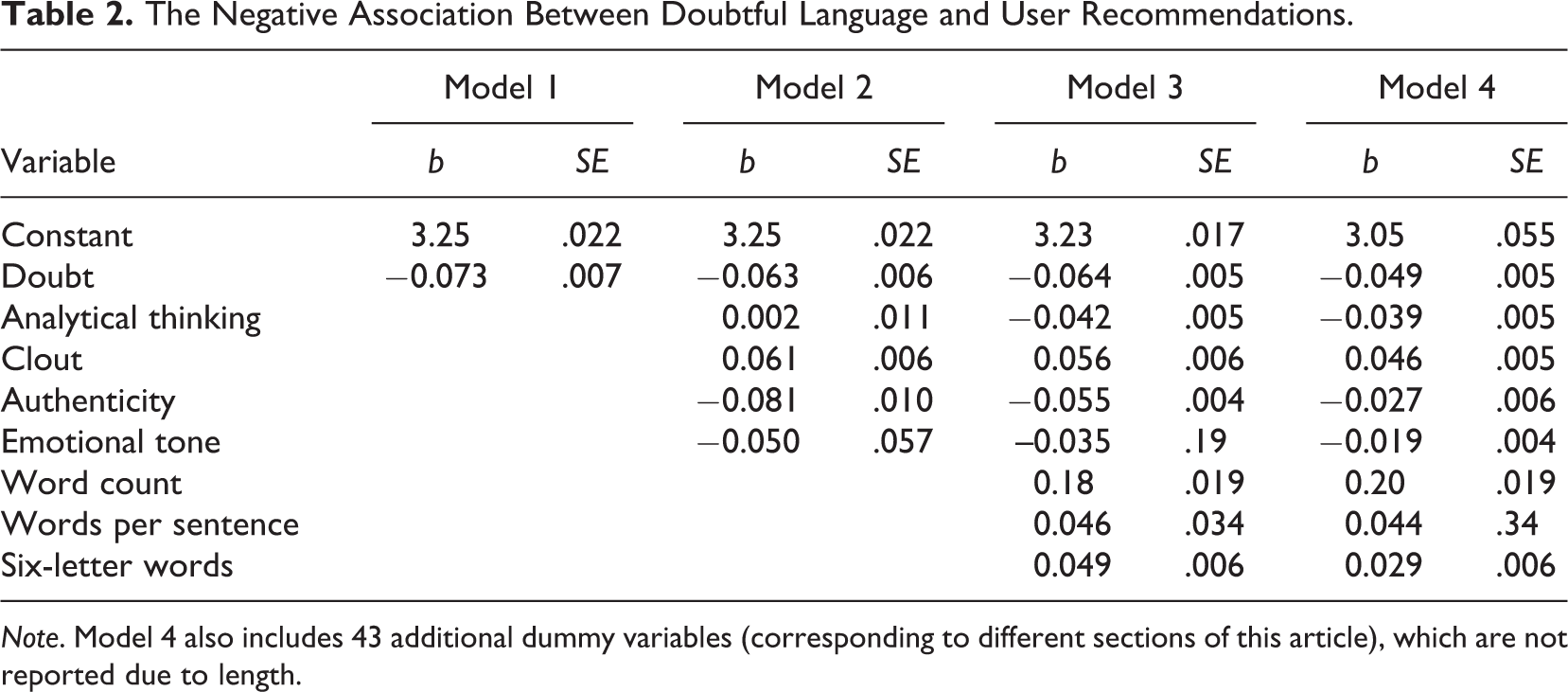

Our first set of analyses focused on the association between doubtful language in initial comments and user recommendations. We estimated a series of four models: Model 1 included only the effect of doubt; Model 2 also included the four LIWC psychological summary scores (analytical thinking, clout, authenticity, and emotional tone); Model 3 included these summary scores and measures of length and linguistic complexity (word count, words per sentence, and the frequency of words with more than six letters); and Model 4 included all of the preceding terms as well as dummy variables to control for differences across the 44 different sections of the paper. To improve the interpretability of our results, we standardized the predictors by their standard deviations but did not standardize the dependent variable. The results are reported in Table 2.

Table 2. The Negative Association Between Doubtful Language and User Recommendations.

Note. Model 4 also includes 43 additional dummy variables (corresponding to different sections of the newspaper), which are not reported due to length.

The association between doubtful language and user recommendations ranged from b = −0.049 (Model 4) to b = −0.073 (Model 1). In other words, a one standard deviation increase in the use of doubtful language was associated with a decrease in the expected number of user recommendations of 4.78% (Model 4) to 7.03% (Model 1).
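Because negative binomial regression uses a log link, a coefficient b on a standardized predictor corresponds to a 100 × (exp(b) − 1) percent change in the expected count per one standard deviation increase. The sketch below applies this conversion to the reported coefficients. With the rounded values shown in the text, Model 1 gives about −7.04% rather than −7.03%; the small gap presumably reflects rounding of b.

```python
import math


def pct_change_per_sd(b: float) -> float:
    """Percent change in the expected count per 1-SD increase in a standardized predictor (log link)."""
    return 100.0 * (math.exp(b) - 1.0)


for model, b in [("Model 4", -0.049), ("Model 1", -0.073)]:
    print(f"{model}: b = {b}, change = {pct_change_per_sd(b):.2f}%")
```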

Differentiating the effects of certainty and tentativeness

Next, we conducted analyses testing the relationship between user recommendations and the two subdictionaries used to create our doubt score: certainty and tentativeness. These subdictionary analyses allow us to judge whether the above effects were driven by a penalty for tentative language, a reward for certain language, or both.

We estimated a negative binomial regression with both dictionaries simultaneously included as standardized predictors. Comments containing tentative language were less likely to receive user recommendations, b = −0.075, SE = 0.009, and comments containing certain language received more recommendations, b = 0.018, SE = 0.006. The effect of tentativeness was more than four times larger than the effect of certainty, suggesting that people were more likely to penalize the presence of doubt than to reward the presence of confidence.

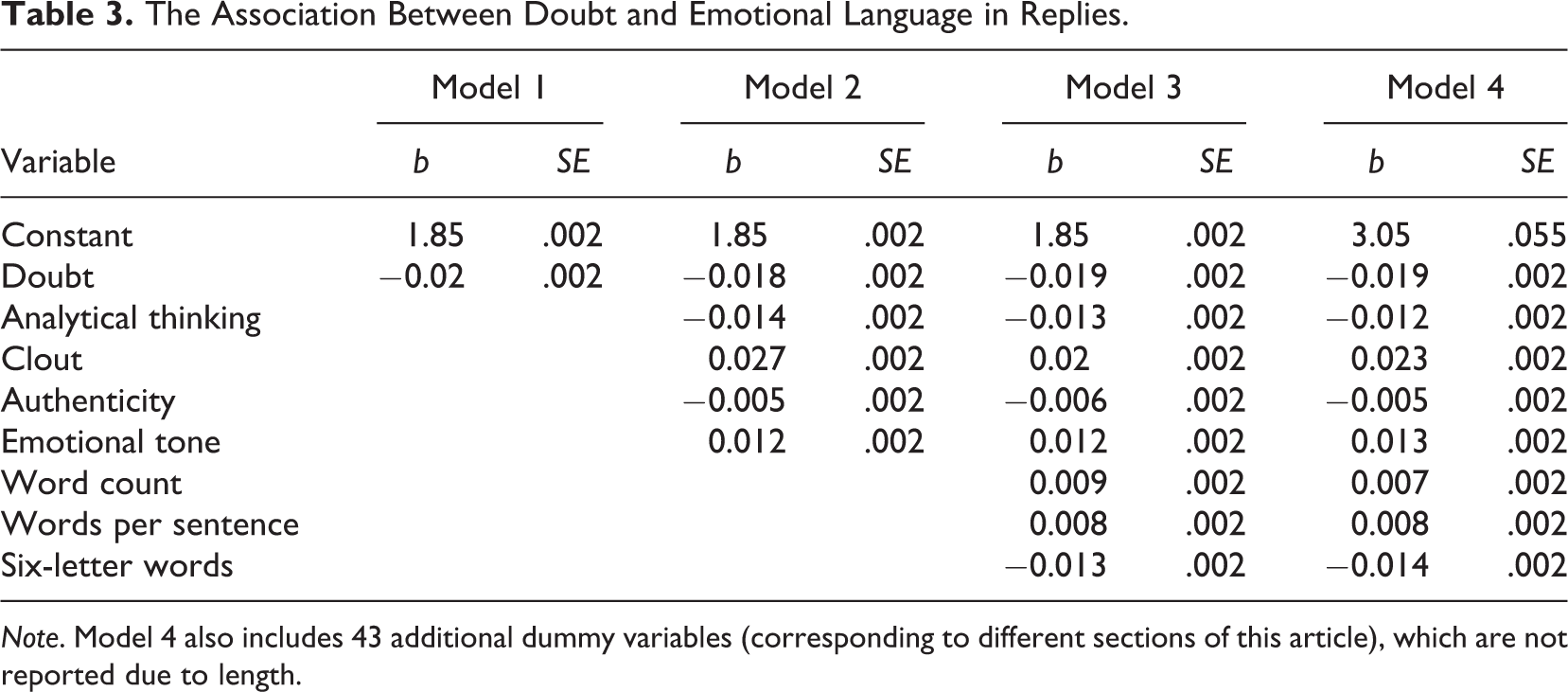

Doubt and reply emotionality

Our next set of analyses examined the association between doubtful language (expressed in initial comments) and the use of emotional language (expressed in subsequent replies). As in the previous section, we estimated a series of four models. Note that all of the predictors were measured at the level of the original comments. For example, we tested the effect of doubt (in initial comments) while also controlling for the use of analytical language (in initial comments). In contrast, our dependent variable (the use of emotional language) was measured at the level of subsequent replies to initial comments, total N = 510,043.

Our preregistered analytic strategy was to include random intercepts for initial articles, initial comments, and authors of replies. The planned models did not converge; therefore, we estimated negative binomial regressions with robust standard errors clustered at the level of the initial comment, which had the largest intraclass correlation (initial comments = .152; authors of replies = .149; initial articles = .015). The results are reported in Table 3. As in our above analyses, we standardized our predictors by their standard deviations but did not standardize the dependent variable.

Table 3. The Association Between Doubt and Emotional Language in Replies.

Note. Model 4 also includes 43 additional dummy variables (corresponding to different sections of the newspaper), which are not reported due to length.

There was a consistent negative association between the use of doubtful language in initial comments and the use of emotional language in replies, b ≈ −0.02. In other words, a one standard deviation increase in the use of doubtful language was associated with a 1.9% decrease in the expected frequency of emotional words used in replies.

Differentiating the effects of certainty and tentativeness

Next, we tested the relationship between emotional language and the two subdictionaries used to create our doubt score (certainty and tentativeness), estimating a negative binomial regression with both dictionaries simultaneously included as standardized predictors. There was a negative relationship between tentative language and emotionality in replies, b = −0.019, SE = 0.002, and a positive relationship between certain language and emotionality in replies, b = 0.009, SE = 0.002. As in our analyses of recommendations, the association between doubt and emotionality was driven primarily by the use of tentative (rather than certain) language.

Specific emotional reactions to doubt

The preceding analyses found that replies to doubtful comments contained less emotional language. To better understand these results, we examined the relationship between doubtful language and five specific measures of emotionality from LIWC: positive emotions, negative emotions, anger, sadness, and anxiety. When an initial comment contained doubtful language, subsequent replies contained fewer positive emotions, b = −0.048, SE = 0.003, as well as fewer negative emotions, b = −0.038, SE = 0.003. Analyses of the three negative emotion measures (anger, sadness, and anxiety) revealed that expressions of doubt were associated with fewer expressions of anger (b = −0.065, SE = 0.006) and sadness (b = −0.053, SE = 0.007) but with more expressions of anxiety (b = 0.051, SE = 0.008).

Exploratory Analyses

Assessing the effect size of doubt

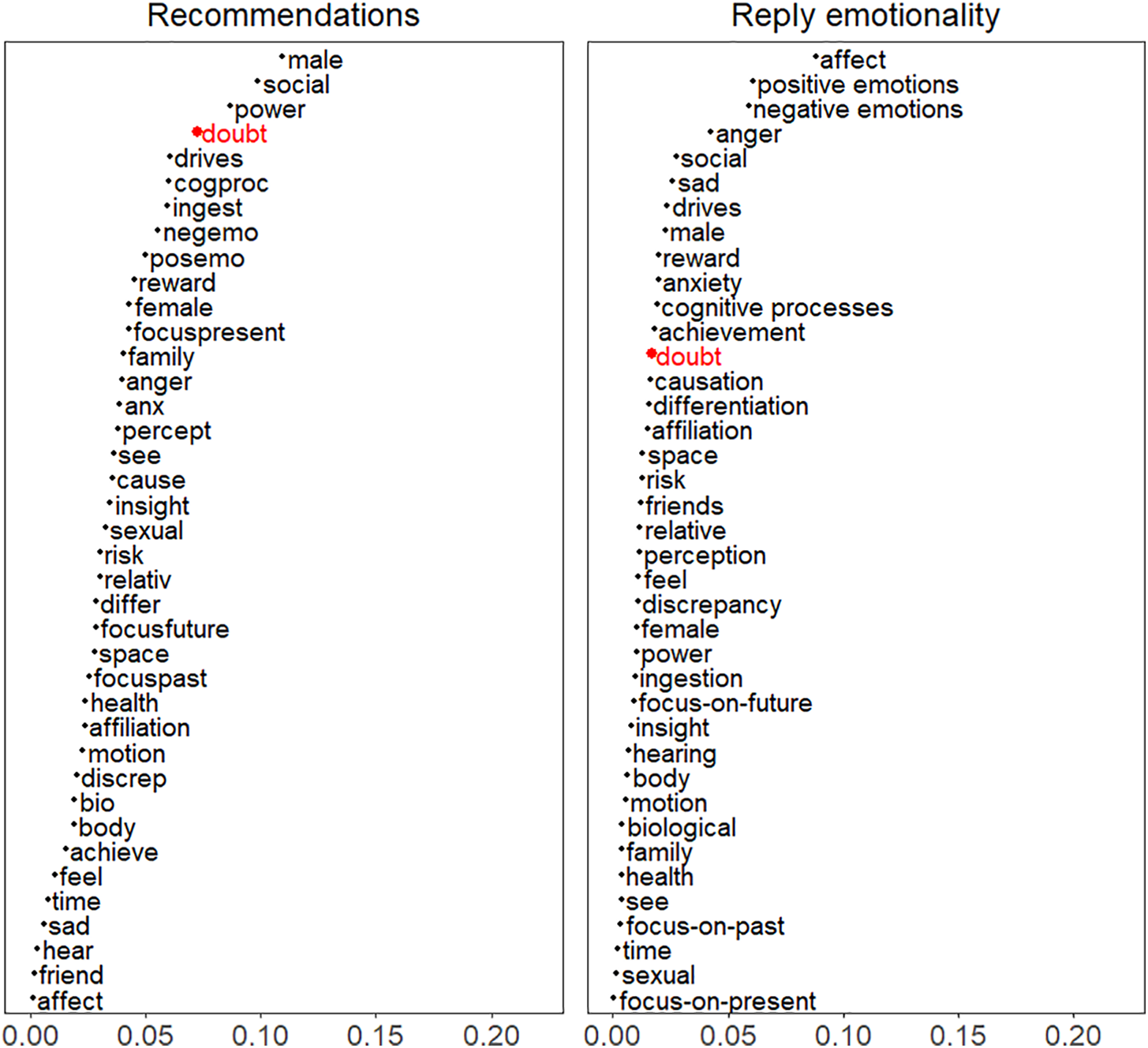

We compared the effects of doubt with the effects of 38 additional psychological variables measured using LIWC. These variables included references to emotions (e.g., anxiety, anger, and sadness), psychological drives (e.g., affiliation, power, and reward), and social concerns (e.g., friends and family). Specifically, we compared the Model 1 β coefficient of doubt (i.e., the effect of doubt on user recommendations or emotional language without controlling for covariates) with the absolute effect sizes (β coefficients) of these 38 other psychological variables. In other words, for each dependent variable we estimated a series of 38 negative binomial regressions, each with one of the other psychological variables as its predictor, and compared the resulting effect sizes with the Model 1 effect size of doubt. As in our main analyses, all predictors were standardized, whereas the dependent variables were not. The distribution of effect sizes is illustrated in Figure 2. For user recommendations, the effect size of doubt was larger than 35 of the 38 effects (92.1%). For reply emotionality, the effect size of doubt was larger than 26 of the 38 effects (68.4%).

Figure 2. The effect of doubt (bold) compared with 38 other psychological dictionaries (gray) measured using Linguistic Inquiry and Word Count (LIWC).
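The percentages reported for this comparison are simple proportions of the 38 comparison effects (35/38 and 26/38). The sketch below shows that comparison; the coefficient values in the final usage line are hypothetical and serve only to illustrate the counting rule.

```python
def share_smaller(doubt_beta: float, other_betas: list[float]) -> float:
    """Percentage of comparison effects whose absolute size is smaller than |doubt_beta|."""
    smaller = sum(1 for beta in other_betas if abs(beta) < abs(doubt_beta))
    return 100.0 * smaller / len(other_betas)


# The reported counts correspond to these proportions:
print(round(100 * 35 / 38, 1))  # user recommendations
print(round(100 * 26 / 38, 1))  # reply emotionality

# Hypothetical coefficients, for illustration only:
print(share_smaller(-0.07, [0.01, -0.03, 0.09, -0.05]))  # 3 of 4 are smaller
```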

Article content and the effects of doubt

Finally, we conducted exploratory analyses to examine how article content influenced the prevalence of doubt in user comments, and whether article content moderated the effects of doubtful comment language on subsequent user behavior. Specifically, we compared comments on political articles (from the “politics,” “editorial,” and “OpEd” sections; n = 649,854) and nonpolitical articles (from the remaining 41 sections; n = 772,922).

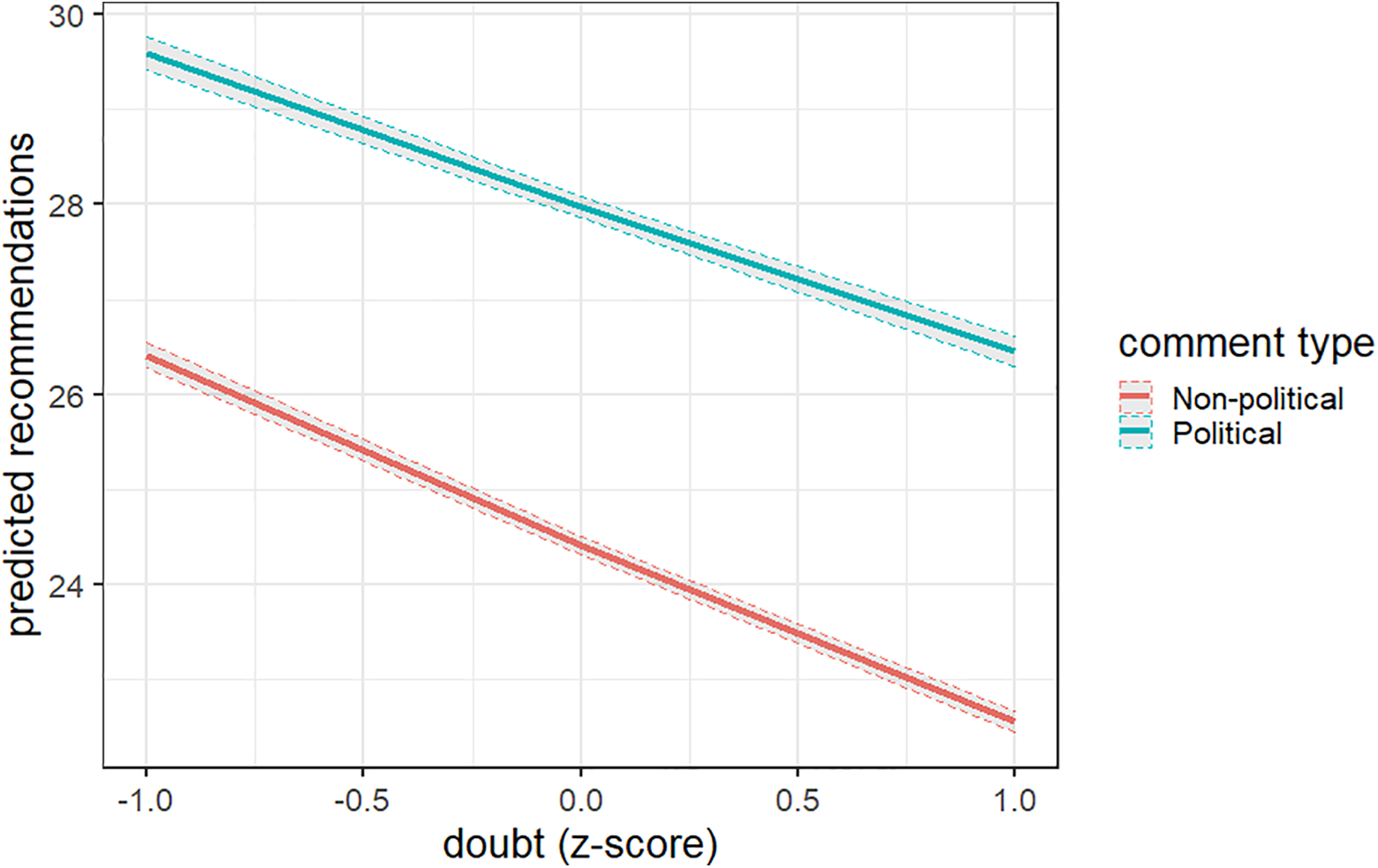

Comments about political articles were less doubtful (M = 50.39) than comments about articles on other topics (M = 50.50), b = −0.10, SE = 0.004. We tested whether the effects of doubt on user recommendations were different for political comments, estimating a negative binomial regression with user recommendations entered as the dependent variable and the following variables entered as predictors: doubt, article type (+.5 = political, −.5 = nonpolitical), and a doubt-by-article type interaction term. Doubtful comments received fewer recommendations (b = −0.067, SE = 0.007) and comments to political articles received more recommendations (b = 0.135, SE = 0.027). There was also a significant doubt-by-article type interaction (b = 0.022, SE = 0.011). The interaction between doubt and political content is reported in Figure 3. For nonpolitical comments, a one standard deviation increase in doubt was associated with a 7.5% decrease in user recommendations; for political comments, a one SD increase in doubt was associated with a 5.4% decrease in recommendations.
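Because article type was effect coded (+.5 = political, −.5 = nonpolitical), the simple slope of doubt within each article type is the main effect of doubt plus the interaction coefficient multiplied by the code, and 100 × (exp(slope) − 1) converts that slope into a percent change. The sketch below reproduces the two reported percentages (about 7.5% and 5.4%) from the reported coefficients.

```python
import math

B_DOUBT = -0.067       # reported main effect of doubt (standardized predictor)
B_INTERACTION = 0.022  # reported doubt-by-article-type interaction
CODES = {"nonpolitical": -0.5, "political": 0.5}  # effect coding described in the text


def simple_slope(code: float) -> float:
    """Slope of doubt within one article type under effect coding."""
    return B_DOUBT + B_INTERACTION * code


def pct_change(slope: float) -> float:
    """Percent change in expected recommendations per 1-SD increase in doubt."""
    return 100.0 * (math.exp(slope) - 1.0)


for article_type, code in CODES.items():
    slope = simple_slope(code)
    print(f"{article_type}: slope = {slope:.3f}, change per SD = {pct_change(slope):.1f}%")
```

Effect coding makes the doubt coefficient the average of the two simple slopes, which is why the main effect (−0.067) lies halfway between them.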

We also tested whether article type moderated the effect of doubt on reply emotionality: Emotionality was higher in replies to comments about political articles (b = 0.033, SE = 0.005), but there was no significant doubt-by-article type interaction (b = 0.001, SE = 0.004, p = .73).

The estimated effects of doubt and comment type (political vs. nonpolitical) on user recommendations.

Discussion

How do expressions of doubt influence user behavior in online news discussions? We analyzed the effects of doubtful language on user recommendations and emotionality in user replies. First, we found that comments containing doubtful language received fewer user recommendations. These findings are in line with prior research showing that doubt often functions as a signal of (lack of) expertise (Price & Stone, 2004). Second, we found that replies to initial comments containing doubtful language were less emotional, containing fewer positive emotions and fewer negative emotions. However, analyses of specific emotions revealed that replies to doubtful comments were less positive, less angry, and less sad but more anxious. Taken together, these findings suggest that expressions of doubt have mixed effects on online discussions: At the individual level, authors who express doubt receive fewer recommendations, which determine the visibility of comments in online discussions. However, doubtful language may also help to dampen emotional reactions that lead to toxic or uncivil online behavior.

The Social Consequences of Expressing Doubt

Doubt plays an important role in individual decision making (Moore & Healy, 2008) and interpersonal communication (Tenney et al., 2018). Previous research is divided on whether expressing doubt has positive or negative consequences for social perception, with some studies showing that confident advisors are preferred to doubtful advisors (confidence heuristic; Price & Stone, 2004), and other studies showing the opposite (e.g., Evans et al., 2021). Our results suggest that when it comes to online discussions, comments containing confident language enjoy more popularity, providing support for the confidence heuristic. Importantly, the negative effects of doubt on user recommendations were robust when controlling for other relevant psychological variables (such as the use of emotional and analytical language), newspaper section (e.g., politics, culture), and linguistic variables related to actual message quality (such as word count and linguistic complexity).

Our findings provide general support for the confidence heuristic, which suggests that expressions of confidence increase perceived competence and, in turn, persuasiveness. Additionally, the negative effects of expressing doubt were substantially larger than the positive effects of expressing confidence. This difference is consistent with prior work suggesting that people are generally more sensitive to negative, rather than positive, information (Baumeister et al., 2001). We also conducted exploratory analyses to examine domain differences in the consequences of expressing doubt and found that the negative effects of doubt were weaker for political (vs. nonpolitical) comments. Future research should consider how contextual factors, such as issue complexity and audience polarization, influence the extent to which people react negatively (or in some cases, positively) to expressions of doubt.

Our findings are also consistent with prior work showing that reactions to confident actors tend to be more extreme than reactions to doubtful actors (Critcher et al., 2013; Evans & van de Calseyde, 2017a). Replies to confident comments were more emotional than replies to doubtful comments, containing more positive emotions and more negative emotions (particularly, more anger and more sadness). These findings are noteworthy, given the important role that emotions play in online outrage and other forms of toxic user behavior (Crockett, 2017). An initial author’s willingness to express doubt may influence the course of conversation in online forums.

We also examined the correlations between doubtful language and the expression of specific emotions in replies. While replies to doubtful comments contained fewer emotions overall, they contained more expressions of anxiety. Previous research found that experiencing emotions associated with uncertainty (such as anxiety) can lead to feelings of doubt in subsequent situations (Tiedens & Linton, 2001). Our findings suggest that the process may also work in the opposite direction: Being exposed to doubtful language may evoke emotions associated with uncertainty appraisals.

We also conducted exploratory analyses comparing political and nonpolitical articles: Comments on political articles were less likely to contain doubtful language than comments on nonpolitical articles. At the same time, the negative relationship between doubtful language and user recommendations was weaker for political comments. Possibly, readers were more willing to accept doubt in the context of politics. However, further work is needed to disentangle audience effects (i.e., the types of readers commenting on political vs. nonpolitical articles) and specific content factors that influence reactions to doubt.

The Importance of Being Doubtful

To gauge the relative importance of doubtful language, we compared the effects of doubt to the effects of 38 other psychological language variables measured using LIWC. Compared to other language variables, doubt had a relatively strong effect on user recommendations: The absolute effect size of doubt was larger than the effect sizes of 35 (of 38) additional variables. The effect of doubt on emotionality in replies was comparatively smaller, but still noteworthy: Here, the effect size of doubt was larger than the effect sizes of 26 (of 38) variables. Not surprisingly, measures of emotional language in initial comments were the strongest predictors of emotional language in subsequent replies: Initial comments containing strong positive emotions or strong negative emotions elicited stronger emotions in subsequent replies. These findings are in line with theories of digital emotion contagion (Goldenberg & Gross, 2020; Rosenbusch et al., 2018). At the same time, they are consistent with prior work suggesting that doubt is a robust and reliable predictor of user behavior across different social media platforms (Evans et al., 2021).

In our study, a one standard deviation increase in the use of doubtful language was associated with a 7% decrease in user recommendations and a 2% decrease in reply emotionality. These effects are relatively large when compared to other language variables, but it is also important to consider whether they have practical significance. Arguably, small effect sizes should be expected in studies of actual online behavior, as it is extremely difficult to predict which content will become successful in online markets (Salganik et al., 2006). Moreover, even if the effects of doubt are small, they may result in large cumulative effects on behavior. In online markets, small initial advantages (e.g., a single positive recommendation) can lead to large-scale herding effects (Muchnik et al., 2013). Ideally, future studies should test whether experimentally increasing the visibility of high-doubt content (e.g., by manipulating the probability that high-doubt comments are selected as “featured comments”) has practical consequences for user engagement and online civility.

Limitations

One of the limitations of our approach is that we were not able to distinguish between self-directed expressions of doubt (e.g., readers who are unsure of their opinions) and other-directed expressions of doubt (e.g., readers who have doubts about the motives of others). This limitation applies to most of the previous research on the interpersonal consequences of doubt (Evans & van de Calseyde, 2017a; Stavrova & Evans, 2019; van de Calseyde et al., 2014). When considering how people perceive other-directed expressions of doubt, it is reasonable to assume that the effects of expressing doubt depend on the target and the observer’s relationship to the target. For example, people dislike those who generally distrust others but form positive evaluations of actors who distrust appropriate targets (Evans & van de Calseyde, 2017b).

It is also important to consider how features of our data source (New York Times online comments) may have affected our results. Online comments on the site are actively moderated for hate speech, bots, and inappropriate behavior, which may have limited the range of uncivil behavior observed in our data. A lack of heterogeneity in the sample could represent another potential limitation: Users on the site are required to have paid subscriptions to the paper, and this may mean that they are more homogeneous than users on other online platforms. For example, New York Times commenters may be more likely to be liberal, educated, and relatively higher in income (George & Waldfogel, 2006). Arguably, these characteristics (as well as audience heterogeneity) might influence the consequences of doubtful comments.

Conclusion

People use online social media platforms to learn about and discuss current events. We used data from the New York Times to examine how the use of doubtful language shapes user behavior in online news discussions. Comments containing doubtful language received fewer user recommendations. At the same time, replies to doubtful comments were less emotional: Reply authors expressed fewer positive emotions and fewer negative emotions. Moreover, the negative effects of doubt on subsequent emotionality were particularly strong for anger. These results suggest that although doubtful authors receive less attention from other users, they may play an important role in helping to foster civility in online discussions.

Supplemental Material

Supplemental Material, sj-pdf-1-ssc-10.1177_08944393211034163 for Expressions of Doubt in Online News Discussions by Anthony M. Evans, Olga Stavrova, Hannes Rosenbusch and Mark J. Brandt in Social Science Computer Review

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

The supplemental material is available in the online version of the article.

References
