Abstract

Introduction

Social Cognition (SC) refers to a set of processes involved in interpreting socially relevant stimuli and understanding another individual's feelings, intentions, behaviors, attitudes, and emotions. 1 SC is critical for successful social interaction, communication, mental health, and well-being. 2 According to Arioli et al., 3 appropriate social interactions are based on 3 core dimensions of SC: the ability to recognize and respond to emotional cues such as interpretation of facial expressions, body language, and prosody (social perception); the ability to decode others’ mental and, particularly, intentional states (social understanding); and the ability to make appropriate decisions in a variety of social contexts (social decision-making).3,4

The social perception domain is related to distinguishing people and objects, including facial expression recognition. During interpersonal communication, the recognition and production of facial emotional expressions are essential means of communicating emotional experiences. 5 One of the functions of this system is to convey additional information to help interpret other people’s words and actions. 6 Facial expression recognition is part of a larger process called social cognition and depends on contextual details and previous knowledge about the person, involving multiple features.7,8 Indeed, the human face’s unique salience reflects its predictive power concerning others’ intentions and thus their potential consequences in social terms. 8 Therefore, facial processing is a key aspect of interpersonal communication and a modulator of social behavior. 3

Facial expressions are a combination of action units characterizing different emotions. Physiologically, an emotion corresponds to a pattern of neural activity in the orbitofrontal cortex and linked limbic structures that represent the subjective value that defines that emotion. 5 A set of 6 basic emotions (happiness, anger, sadness, fear, disgust, and surprise) are considered universal, as all humans can express and recognize them regardless of socio-cultural effects. 3 The experience of emotions through emotional communication depends on the integrity of the association cortex (predominantly in the right hemispheric convexity) and the integrity of the orbitofrontal limbic system, the substrate for emotional experience. Emotional experience can be affected by endogenous dysfunction of orbitofrontal limbic systems associated with agitation/aggression, depression, anxiety, and psychosis. 5

Several studies have shown that familiar and meaningful material can enhance the recognition performance of older adults.8-10 Tinard et al. 8 explored the impact of familiar material on age-related decline in recognition memory. Older adults in their study showed lower recognition accuracy and false alarm rate only in the unfamiliar face recognition task. The authors observed that prior knowledge in the famous face recognition task improved recollection for older adults, and no age-related deficit was found.

In dementia research, it is well known that frontotemporal dementia significantly impairs social and emotional functioning. However, in Alzheimer’s disease (AD) these deficits may be subtler. In a recent systematic review, Torres et al. 11 suggested that the degree of impairment in facial expression recognition in AD is associated with the intensity of the emotions. Additionally, facial expression recognition is related to deficits in visuoperceptual ability and executive function,4,12-14 and the deficits follow the level of cognitive damage and disease severity.13,15,16

Two studies showed that negative emotions can be more difficult for persons with AD to identify when compared to positive ones.15,17 Kumfor et al. 18 suggested that participants with AD showed significantly worse facial expression recognition than a control group regarding negative emotions, although they performed within normal limits for recognition of surprise and happiness. 18 Nevertheless, a note on the emotion of surprise is necessary. While surprise is a common emotion in everyday life, some of its fundamental characteristics are still unclear. For example, does surprise have positive or negative valence? The literature has been unclear on the experiential valence of surprise. 19 An emotion’s valence is understood according to the emotional context in which it is involved. Surprise has been depicted as a pre-affective state or as an emotion that can be both positive and negative, depending on the goal conduciveness of the surprising event. 19 A study showed that surprise may initially be a (mildly) negative emotion, as it represents the interruption of ongoing thoughts and activities, an experience that can be unpleasant. 19 However, it is worth noting that in facial expression recognition, perceptual and affective information is interpreted using semantic knowledge and contextual information, which would contribute to categorizing specific expressions such as surprise.20,21 Therefore, some studies on the surprise emotion have suggested the role of individual differences, since some situations and contexts may be positive for one person but negative for another in the same situation.

In previous studies by our group with small sample sizes,4,13 we adapted an experimental task developed by Shimokawa et al., 15 called FACES. Although the original FACES task also included object recognition tasks, a longitudinal study by Torres et al. 13 only included facial emotional recognition and the recognition of emotional situation tasks. The findings suggested that people with mild AD performed significantly worse in recognizing emotional situation tasks but were able to recognize and discriminate simple facial emotions. Moreover, the ability to perceive more complex emotional situations was the first impairment to appear in AD. 13

We also observed difficulties with emotional processing across stages of AD. Using the full protocol of the adapted FACES task, Dourado et al. 4 compared facial expression recognition in mild and moderate AD and identified the factors associated with impairment of this ability according to disease severity. There were significant differences between groups in FACES global scores, the ability to comprehend facial emotions (task 2), the ability to comprehend the nature of a particular situation, and the expected associated emotional state (task 4). In addition, there was no influence from cognitive impairment when participants were asked to recognize emotion in a situation with evident emotional content. 4 Considering the findings related to the recognition of facial expressions in emotional situations, some key questions remain: (1) Do people with mild and moderate AD understand the context of the emotional situation? (2) Are mild and moderate AD ratings of facial expression recognition congruent with the context of the emotional situation? (3) Are there differences in understanding the emotional situation’s context and the ratings of facial expression recognition in people with AD according to dementia severity?

Emotion labeling tasks include relatively rapid detection of perceptual cues to emotional status and higher-level decision-making about which verbal label best describes an emotional face. 15 Nevertheless, recognizing a facial expression is part of an emotional context in daily life. Therefore, assessing facial expression recognition detached from an emotional context raises questions about the validity of facial expression recognition reports by persons with AD. Given the findings of our previous studies,4,13 we decided to decompose the process of recognition of facial expression in emotional situations in 3 contexts among people with mild and moderate AD: understanding the situation, the ability to name the congruent emotion, and choosing the correct face. Thus, this study examines group differences in the comprehension of emotional situations and ratings of facial expression recognition by persons with AD. We hypothesized the presence of more preserved coherence between comprehension of an emotional situation and ratings of facial expression in mild compared to moderate AD.

Methods

Design

Cross-sectional study

Participants

We recruited a convenience sample of 115 people, comprising a healthy older control group (HOC, n = 40) and 2 AD groups (mild, n = 39; moderate, n = 36), in the outpatient department of the Center for Alzheimer’s Disease and Related Disorders, Institute of Psychiatry, Universidade Federal do Rio de Janeiro, Brazil. HOC participants consisted of older adults recruited from the community without reported history of neurological or psychiatric illness or signs of cognitive decline. Only HOC individuals with Mini-Mental State Examination (MMSE) scores equal to or above 28 were included in the study.22,23

Data on the FACES task from 52 of the 115 participants in this sample have been published previously; 4 however, that study did not focus on the emotional situation subtask examined here. Participants were diagnosed with possible or probable AD according to the Diagnostic and Statistical Manual of Mental Disorders, Fifth Edition (DSM-5) criteria (APA, 2013). 24 Diagnosis of AD was established through a clinical interview with individuals and their caregivers, cognitive screening tests (MMSE,22,23 Verbal Fluency Test,25,26 and Clock Drawing Test 26), laboratory tests, and imaging methods (CT or MRI). People with mild and moderate AD were included according to the Clinical Dementia Rating (CDR 1 and CDR 2)27,28 and scores ranging from 12 to 26 on the MMSE.22,23

Exclusion criteria included any history of psychiatric or other neurological disorders such as aphasia, head trauma, alcohol abuse, drug abuse, and epilepsy, as defined by the DSM-5 criteria. 24

Procedures

The HOC group and AD participants underwent a facial recognition task and cognitive assessment. The caregivers of AD participants provided information on demographics, functionality, neuropsychiatric symptoms, and dementia severity. The assessments were performed in people with AD by trained neuropsychologists, who were blinded to the disease severity of all participants.

The Ethics Committee of the Institute of Psychiatry (IPUB) at the Universidade Federal do Rio de Janeiro (UFRJ) approved the study. All AD participants and their caregivers signed the informed consent form as provided by the Declaration of Helsinki.

Measures

Facial Expression Recognition Ability

The study employed the FACES protocol, an adaptation of an experimental task developed by Shimokawa et al.,14,29 to assess participants' ability to recognize facial expressions. The original FACES tool comprises 4 increasingly difficult tasks, all of which also assess object recognition. Because the current study includes other visuospatial instruments, only the tasks related to facial emotion recognition and recognition of emotional situations were employed. Facial expression drawings used as visual stimuli in FACES are considered universal and less likely to be culturally biased.4,13

The FACES protocol evaluates sadness, surprise, anger, and happiness and consists of 4 tasks with a total score of 16 points, divided according to each specific task:

Task 1 explores the visuoperceptual ability to identify the 4 specific emotions represented in 4 drawings. Task 2 examines the ability to comprehend facial emotions. Task 3 examines the ability to recognize an emotional expression on a conceptual basis, for instance, to comprehend a verbal label of emotion (see Dourado et al. 4 for more details).

In this study, we only analyzed task 4. FACES task 4 explores the ability to comprehend the nature of a particular situation and its association with the inferred emotional state. First, we presented sketches of everyday scenes with apparent emotional content. Participants were asked to describe the situation presented in the sketches to evaluate their comprehension. Next, we asked participants to indicate the face that best described the emotion they had inferred from the stimulus. FACES task 4 consists of 4 different emotional contents:

• a boy crying because of an injury (subtask 1 - sadness)
• a boy being bitten by a crab (subtask 2 - surprise)
• 2 boys fighting (subtask 3 - anger)
• a father receiving a gift from his children (subtask 4 - happiness)

In each situation, there are 4 drawings of faces expressing different emotional contents (sadness, surprise, anger, happiness). For each emotional content presented, the participant is asked to respond: “What is happening in this situation?” “What is the emotion in this situation?” A response sheet with 4 faces for each situation is used. Then, the participant is asked to choose the one of the 4 faces that best describes the character's emotion in the specific situation. The score is based on the number of correct answers, with one point for each correct response. The maximum score for this task is 4 points.

Our study used a qualitative analysis of task 4, which did not generate a score for the FACES test. The researcher explored whether the participants correctly understood the situation, named the congruent emotion, and chose the correct face. For each evaluated item, there are 2 possible answers, YES or NO.
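As an illustration of the coding scheme described above, the following minimal Python sketch records the 3 YES/NO items for each subtask of task 4 and derives both the conventional face-choice score (one point per correct face, maximum 4) and the subtasks in which the situation was understood but the wrong face was chosen. The class name, field names, and example responses are hypothetical and are not part of the FACES protocol; the example responses are made up and are not study data.

```python
from dataclasses import dataclass


@dataclass
class SubtaskRecord:
    """One FACES task 4 subtask, coded YES (True) or NO (False) on three items."""
    emotion: str                   # target emotion of the sketched situation
    understood_situation: bool     # item 1: situation correctly described
    named_congruent_emotion: bool  # item 2: congruent emotion named
    chose_correct_face: bool       # item 3: correct face chosen


def face_score(records):
    """One point per correctly chosen face (maximum 4 for the 4 subtasks)."""
    return sum(r.chose_correct_face for r in records)


def understood_but_wrong_face(records):
    """Emotions for which the situation was understood but the wrong face was chosen."""
    return [r.emotion for r in records
            if r.understood_situation and not r.chose_correct_face]


# Made-up illustrative responses for one hypothetical participant.
example = [
    SubtaskRecord("sadness", True, True, False),
    SubtaskRecord("surprise", False, False, False),
    SubtaskRecord("anger", True, True, True),
    SubtaskRecord("happiness", True, True, True),
]

print(face_score(example))                 # 2
print(understood_but_wrong_face(example))  # ['sadness']
```

Keeping the 3 items as separate fields, rather than collapsing them into a single score, mirrors the qualitative analysis used here, in which each item is examined on its own.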

Cognitive Assessment

The MMSE is a general cognitive screening task incorporating language, attention, memory, motor, and executive function skills. The total score ranges from 0 to 30. Lower scores indicate impaired cognition.22,23

For the Trail Making Test, we used both forms. Form A (TMT-A) assesses visuoperceptual abilities and speed; participants are required to connect numbers on a paper in the correct order as quickly as possible. Form B (TMT-B) reflects inhibition, set-shifting ability, working memory, and flexibility; participants are required to sequentially connect 25 encircled numbers and letters in alternating order (1, A, 2, B, 3, C, and so on). Each score (TMT-A and TMT-B) consists of the time taken to complete the task. Longer times indicate an impaired skill. 30

The Verbal Fluency Test assesses executive function, cognitive flexibility, perseveration, working memory, inhibition, language functions, and phonemic and semantic memory. In the phonemic category (VF-P), participants must say as many words as possible that begin with a specific letter. Three letters are asked (F, A, S), and participants have 60 seconds for each letter. The total score refers to the sum of correct words listed for each letter. In the semantic category (VF-S), participants are asked to produce the names of as many animals as possible in 60 seconds. The final score refers to the number of animals listed correctly. Lower scores indicate impaired skill.25,26

Functional Ability

The Pfeffer Functional Activities Questionnaire (PFAQ) evaluates activities of daily living (ADL). Ratings for each item range from normal (0) to dependent (3), for a maximum total score of 30 points. Higher scores indicate worse functional status.31,32

Neuropsychiatric symptoms

The Neuropsychiatric Inventory (NPI) evaluates the presence of delusions, hallucinations, dysphoria, anxiety, agitation/aggression, euphoria, disinhibition, irritability/lability, apathy, aberrant motor activity, nighttime behavior disturbances, and appetite and eating abnormalities. Each item is rated according to its frequency (1 = absent to 4 = frequently) and intensity (1 = mild to 3 = severe). The total score ranges from 0 to 144 points. A higher score indicates a greater presence of neuropsychiatric symptoms.33,34
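The 0 to 144 range can be made concrete with a short sketch. It assumes the conventional NPI scoring rule, in which each domain score is the product of frequency and intensity and a domain with no symptom contributes 0; that rule is not stated explicitly in the text above, although it is consistent with the stated maximum (12 domains × 4 × 3 = 144). The function name and the input format are hypothetical.

```python
NPI_DOMAINS = (
    "delusions", "hallucinations", "dysphoria", "anxiety",
    "agitation/aggression", "euphoria", "disinhibition",
    "irritability/lability", "apathy", "aberrant motor activity",
    "nighttime behavior disturbances", "appetite and eating abnormalities",
)


def npi_total(ratings):
    """Sum frequency x intensity over the domains in which a symptom is present.

    `ratings` maps a domain name to a (frequency, intensity) pair, with
    frequency in 1..4 and intensity in 1..3. Domains that are omitted are
    treated as symptom-free and contribute 0 (an assumption of this sketch).
    """
    total = 0
    for domain, (frequency, intensity) in ratings.items():
        if domain not in NPI_DOMAINS:
            raise ValueError(f"unknown NPI domain: {domain}")
        if not (1 <= frequency <= 4 and 1 <= intensity <= 3):
            raise ValueError(f"rating out of range for {domain}")
        total += frequency * intensity
    return total


# Made-up illustrative ratings, not study data.
print(npi_total({"apathy": (4, 3), "anxiety": (2, 1)}))  # 14
print(npi_total({d: (4, 3) for d in NPI_DOMAINS}))       # 144
```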

Severity of Dementia

The Clinical Dementia Rating scale assessed the severity of dementia (CDR, with scores ranging from normality (0) to questionable, mild, moderate, and severe dementia, 0.5, 1, 2, and 3, respectively), according to the degree of cognitive, behavioral, and activities of daily living (ADL) impairment. The full CDR protocol was used.27,28

Statistical Analysis

Kolmogorov–Smirnov and Levene tests were used to verify normality of distribution and homoscedasticity, respectively. Non-normally distributed continuous variables were described by their median, minimum, and maximum, and categorical variables by frequencies and percentages. Chi-square and Mann-Whitney U tests were used for comparisons between the CDR 1 and CDR 2 groups. Chi-square and Kruskal-Wallis tests, with pairwise comparisons, were used for comparisons among the CDR 0, 1, and 2 groups.
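For readers unfamiliar with the three-group comparison, the sketch below computes a tie-corrected Kruskal-Wallis H statistic in plain Python. It is an illustration only: the analyses in this study were run in SPSS, the function names are hypothetical, the pairwise comparisons are not implemented, and the input values are made up. The p-value helper relies on the fact that, with 3 groups, H is referred to a chi-square distribution with 2 degrees of freedom, whose upper-tail probability is exp(-H/2); this is a large-sample approximation.

```python
import math
from collections import Counter


def average_ranks(values):
    """Return 1-based ranks in input order; tied values share their mean rank."""
    positions = {}
    for position, value in enumerate(sorted(values), start=1):
        positions.setdefault(value, []).append(position)
    return [sum(positions[v]) / len(positions[v]) for v in values]


def kruskal_wallis_h(*groups):
    """Tie-corrected Kruskal-Wallis H statistic for two or more groups."""
    pooled = [x for group in groups for x in group]
    n = len(pooled)
    ranks = average_ranks(pooled)
    weighted = 0.0
    start = 0
    for group in groups:
        # Squared rank sum of each group, divided by the group size.
        rank_sum = sum(ranks[start:start + len(group)])
        weighted += rank_sum ** 2 / len(group)
        start += len(group)
    h = 12.0 / (n * (n + 1)) * weighted - 3 * (n + 1)
    tie_term = sum(t ** 3 - t for t in Counter(pooled).values())
    correction = 1 - tie_term / (n ** 3 - n)
    if correction == 0:
        raise ValueError("all observations are identical")
    return h / correction


def p_value_three_groups(h):
    """Upper tail of the chi-square distribution with 2 degrees of freedom."""
    return math.exp(-h / 2)


# Made-up illustrative scores, not study data.
hoc, cdr1, cdr2 = [7, 8, 9], [4, 5, 6], [1, 2, 3]
h = kruskal_wallis_h(hoc, cdr1, cdr2)
p = p_value_three_groups(h)
print(round(h, 2), round(p, 4))  # 7.2 0.0273
```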

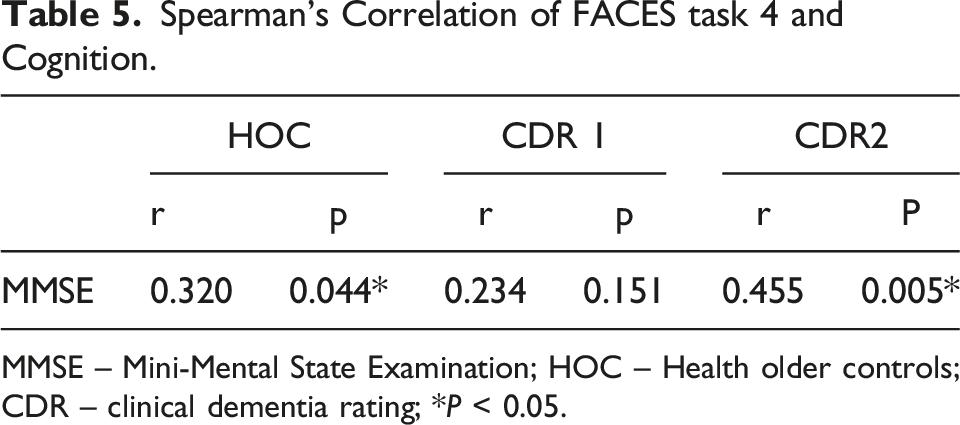

Spearman correlation for non-parametric data was performed between the MMSE and FACES task 4 for each CDR group. To interpret the magnitude of the correlation coefficient, we used the Cohen classification (1988) (<0.30 weak, 0.30 to 0.49 moderate, ≥0.50 strong).
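The correlation step can be sketched in a few lines of plain Python: Spearman's coefficient is the Pearson correlation of the rank-transformed data, and the resulting magnitude is then labeled with the Cohen cut-offs given above. The function names are hypothetical, the input values are made up, and the sketch assumes that a coefficient of exactly 0.50 is treated as strong.

```python
import math


def average_ranks(values):
    """Return 1-based ranks in input order; tied values share their mean rank."""
    positions = {}
    for position, value in enumerate(sorted(values), start=1):
        positions.setdefault(value, []).append(position)
    return [sum(positions[v]) / len(positions[v]) for v in values]


def spearman_rho(x, y):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    mean_x, mean_y = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in rx))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in ry))
    if sd_x == 0 or sd_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (sd_x * sd_y)


def cohen_label(rho):
    """Label the magnitude of a correlation using the Cohen (1988) cut-offs."""
    size = abs(rho)
    if size < 0.30:
        return "weak"
    if size < 0.50:
        return "moderate"
    return "strong"  # assumption: exactly 0.50 counts as strong


# Made-up illustrative values, not study data.
mmse_like = [1, 2, 3, 4, 5]
task4_like = [2, 1, 4, 3, 5]
rho = spearman_rho(mmse_like, task4_like)
print(round(rho, 2), cohen_label(rho))  # 0.8 strong
```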

Statistical analyses were performed using SPSS® software, version 26.0 (IBM Corporation, NY, USA), and level of significance was set at p ≤ 0.05.

Results

Sociodemographic and Clinical Profile

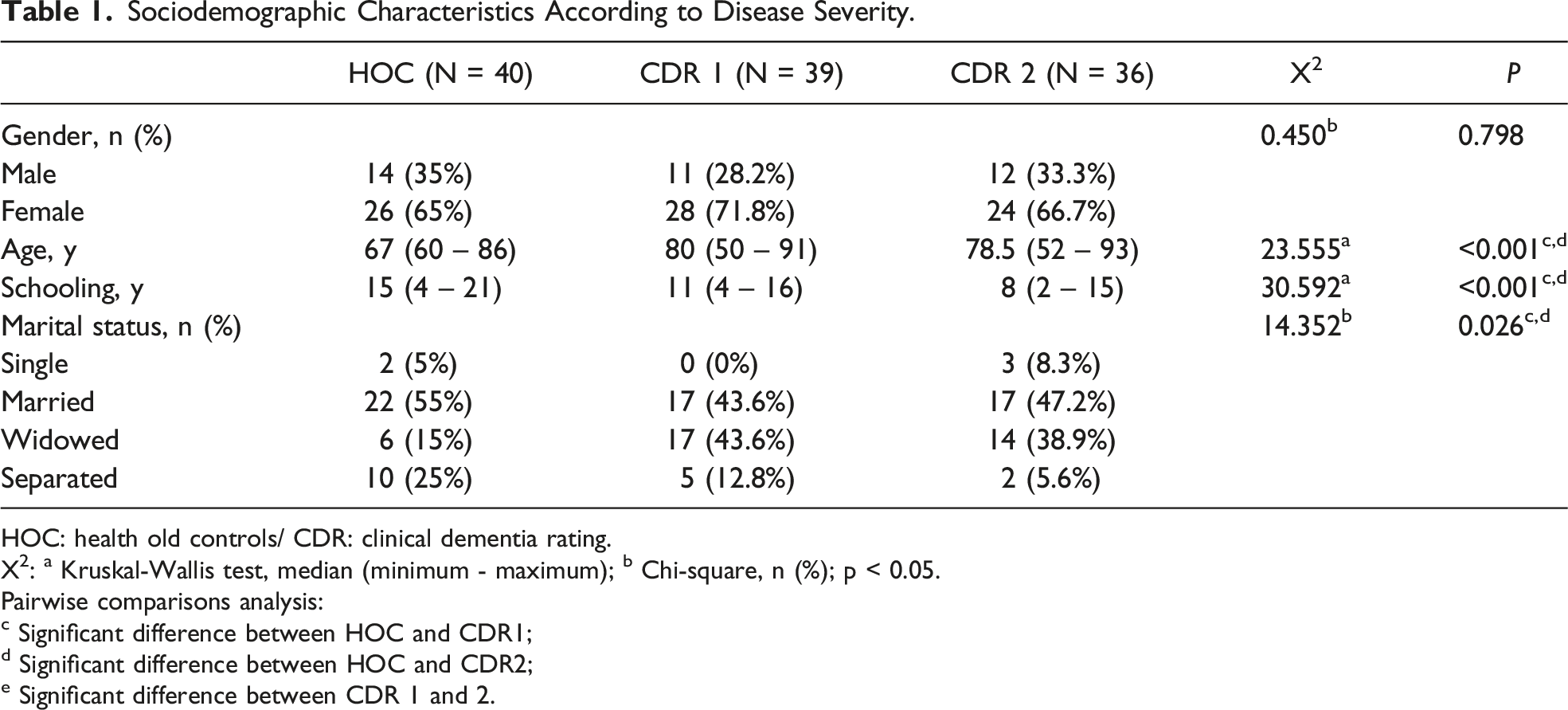

A significant difference was found between groups according to age (P < 0.001) and schooling (P < 0.001).

Sociodemographic Characteristics According to Disease Severity.

HOC: healthy older controls; CDR: Clinical Dementia Rating.

X2: a Kruskal-Wallis test, median (minimum - maximum); b Chi-square, n (%); p < 0.05.

Pairwise comparisons analysis:

c Significant difference between HOC and CDR1;

d Significant difference between HOC and CDR2;

e Significant difference between CDR 1 and 2.

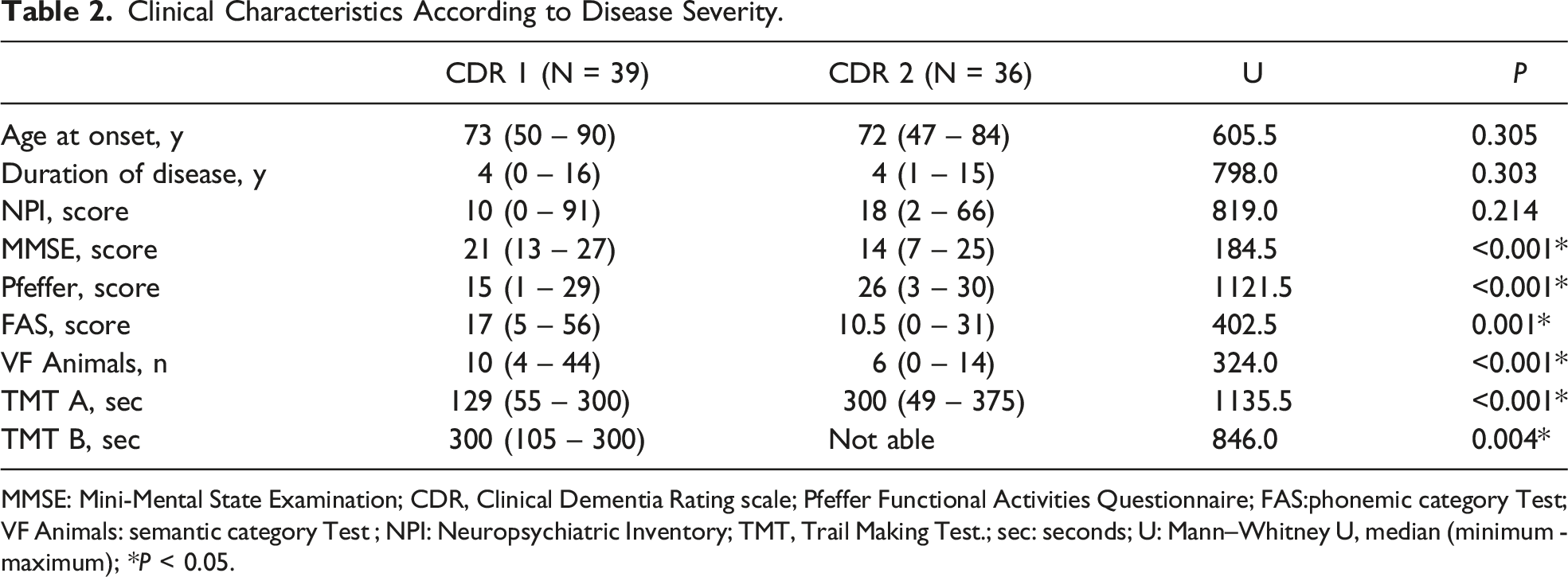

Clinical Characteristics According to Disease Severity.

MMSE: Mini-Mental State Examination; CDR: Clinical Dementia Rating scale; PFAQ: Pfeffer Functional Activities Questionnaire; FAS: phonemic category test; VF Animals: semantic category test; NPI: Neuropsychiatric Inventory; TMT: Trail Making Test; sec: seconds; U: Mann–Whitney U, median (minimum - maximum); *P < 0.05.

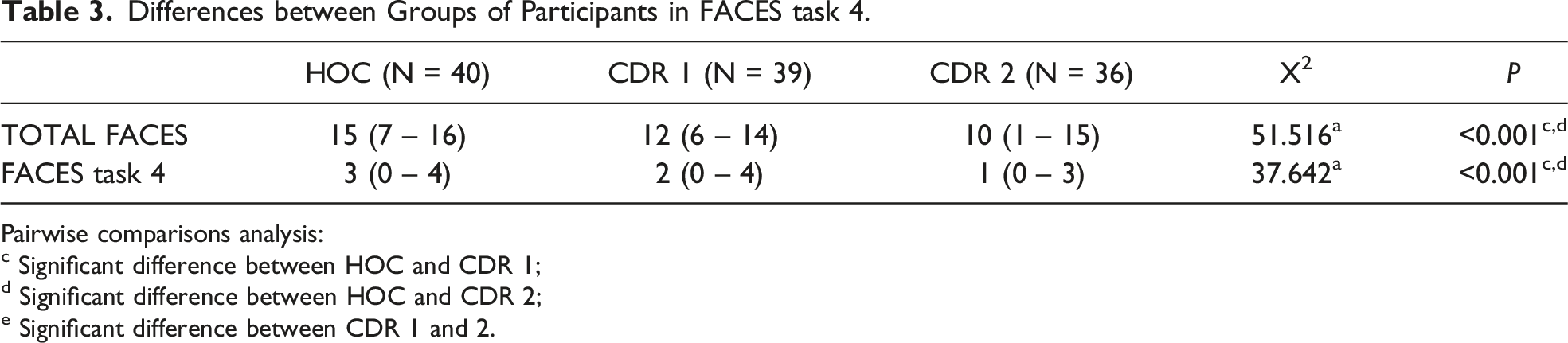

FACES Assessment

Differences between Groups of Participants in FACES task 4.

Pairwise comparisons analysis:

c Significant difference between HOC and CDR 1;

d Significant difference between HOC and CDR 2;

e Significant difference between CDR 1 and 2.

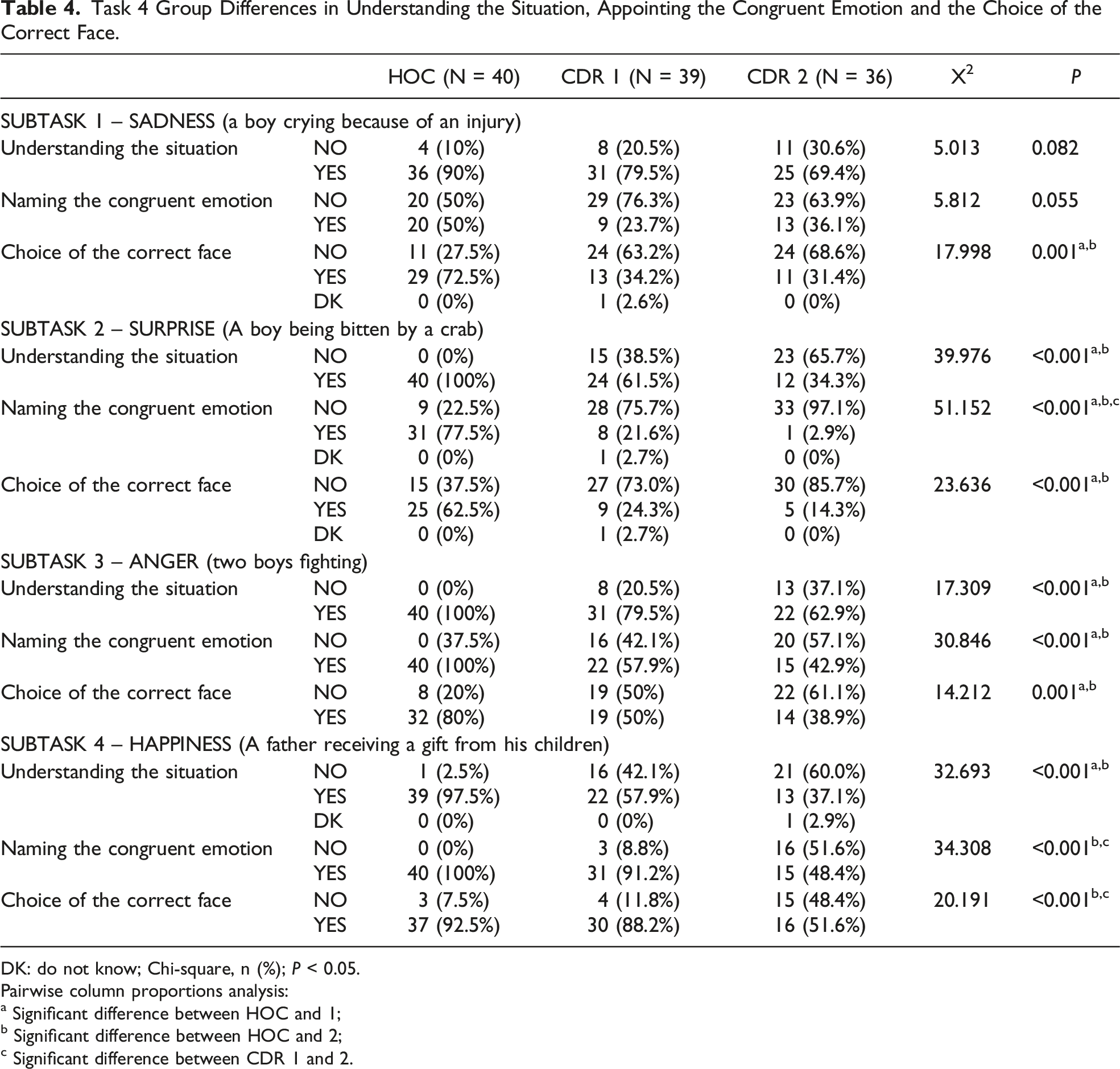

We investigated the differences between the understanding of an emotional context and the choice of the congruent facial expression recognition in each subtask of task 4 of FACES. In each subtask, the evaluator checked the ability of the HOC group and people with AD to understand 3 aspects of facial expression recognition: understanding the situation, naming the congruent emotion, and choosing the correct face.

Most people in all 3 groups correctly understood the situation of sadness, with no significant difference between the groups (P = 0.082). However, concerning the ability to name the congruent emotion, although the results did not show a significant difference between the 3 groups (P = 0.055), we observed greater difficulty in the AD groups. This difficulty was more evident in choosing the correct face, with a significant difference between the control group and people with AD (P = 0.001).

There was a significant difference between the control group and AD groups in understanding the situation (P = 0.001) and choosing the correct face (P = 0.001) for the facial expression of surprise. The differences between the groups were more evident in the ability to name the congruent emotion (P = 0.001).

Regarding the facial expression of anger, a significant difference was found between the control group and the AD groups in all assessed aspects (P = 0.001). However, there was no significant difference between AD groups in this subtask. The findings showed that healthy older people understood, named, and chose anger more correctly than the AD groups.

There was a significant difference between controls and the AD groups in all happiness assessments (P = 0.001). The AD group displayed more difficulty, showing more significant decline with the disease progression.

Task 4 Group Differences in Understanding the Situation, Naming the Congruent Emotion, and Choosing the Correct Face.

DK: do not know; Chi-square, n (%); P < 0.05.

Pairwise column proportions analysis:

a Significant difference between HOC and CDR 1;

b Significant difference between HOC and CDR 2;

c Significant difference between CDR 1 and 2.

Spearman’s Correlation of FACES task 4 and Cognition.

MMSE – Mini-Mental State Examination; HOC – Healthy older controls; CDR – Clinical Dementia Rating; *P < 0.05.

Discussion

Our study examined differences in the comprehension of an emotional situation and ratings of facial expression recognition in healthy older adults and people with mild and moderate AD. The novelty of this study is the decomposition of the recognition of facial expression in emotional situations in 3 contexts among older adults without dementia and mild and moderate AD participants: understanding the situation, the ability to name the congruent emotion, and choosing the correct face. Overall, participants varied consistently in FACES tasks, suggesting distinct patterns of recognition of emotional expressions at different stages of AD. An interesting finding was the positive correlation between cognition (MMSE) and FACES task 4 performance in the HOC and moderate AD groups. On the other hand, there was no such correlation in the mild AD group, suggesting that for this group the cognitive reserve may be sufficient for performance of the task. Torres et al., 13 in a longitudinal study, showed that people with mild AD maintain the ability to recognize and discriminate simple facial emotions. Shimokawa et al., 29 in a cross-sectional study, found a significant correlation between MMSE score and total emotional recognition task score in people with vascular dementia but not in people with AD, suggesting that overall cognitive performance measured by MMSE is independent of performance on emotion recognition. Due to the correlational nature of our findings, future studies should further investigate the relationship between cognition and facial expression recognition among different stages of AD and types of dementia using more powerful cognitive tests.

Our sample showed an increase in cognitive and functional deficits following the pattern of neurodegeneration caused by AD. In line with this pattern, our first finding was a significant difference between HOC and AD groups in almost all emotional situations assessed by task 4 of FACES. This finding corroborates the results of a meta-analysis 35 which showed the impact of overall cognitive function in tasks such as emotional selection and matching or emotional naming.

We also found that people with mild and moderate AD displayed more difficulties in recognizing sadness, surprise, and anger than the HOC group. However, when happiness was assessed, people with mild AD recognized the emotion similarly to the HOC group. This finding highlights that cognitive decline alone could not explain the deficits presented by people with AD in FACES task 4. Torres et al. 11 suggested that the degree of impairment in facial expression recognition in AD is associated with the type and intensity of the emotions. Other studies showed that negative emotions may be more difficult for persons with AD to identify than positive ones.15,17 Gendron et al. 20 showed that in facial expression recognition, perceptual and affective information is interpreted using semantic knowledge and contextual information, which would contribute to the categorization of specific expressions.

We also found that participants with AD could name the congruent emotion or understand the situation but could not choose the correct face. Nardeau 36 offers a plausible explanation for this pattern of deterioration. The network connecting processing and knowledge may facilitate stimulus recognition and reactive attention triggered by stimulus salience, familiarity, or context. 36 Moreover, simultaneous viewer-centered and object-centered processing of visual stimuli is made possible by concurrent knowledge encoding and processing support. 36 The degradation of this shared network that supports knowledge of an emotional condition and the connection to a specific facial choice, therefore, most likely involves 2 deteriorated areas of knowledge, resulting in higher odds of inaccuracy. Future longitudinal studies should further investigate the relationship between knowledge and processing in facial expression recognition in AD.

Sadness

We assessed the ability to identify sadness in subtask 1 of FACES task 4. The first finding was the lack of difference between the HOC and AD groups in understanding the emotional situation. All of the groups understood the situation, suggesting a similar pattern of functioning in understanding an emotional situation of sadness. Concerning choice of the correct facial emotional expression of sadness, the AD groups, although understanding the situation, could not name the correct label. Both AD groups could not match the label with the congruent emotion, showing a tendency towards significant difference compared to HOC. Visual processing of emotional facial expressions and age-related impairments do not occur automatically, because they depend partially on the type of task executed (identification, categorization, or matching). 36 García-Rodriguez et al. 37 observed that identification of emotional stimuli is not independent of cognitive processing, but Bucks and Radford 38 suggested that people with AD may correctly recognize emotional facial expressions, although their performance on cognitive tasks was impaired compared to the control group. Therefore, cognitive deterioration may explain why participants understood the situation but could not choose the correct face. In addition, the degraded relationship between knowledge and visuospatial dysfunction may be more likely to cause the discrepancy between understanding the situation and choosing the correct face than impaired emotional processing. Further studies should investigate the link between understanding a situation, choosing the correct face, and the cognitive domains.

Surprise

Surprise was the emotion assessed in the second subtask of FACES task 4. We found that the mild and moderate AD groups showed more difficulty than HOC in understanding the emotional situation. As for naming the congruent emotion, there was a significant difference between groups, showing that recognition of surprise was impaired according to severity of the disease. There was no significant difference between AD groups in choosing the correct face. This point highlights again that the choice of an emotion may be reduced or inadequately specific and not correspond to the situation presented. Emotional tasks involve underlying cognitive processes that may vary across the different tasks. Therefore, choosing a face may involve executive functioning, whereas recognizing an emotion may require more visuospatial abilities. 35 Torres et al. 13 suggested that although emotional processing is a noncognitive ability per se, the cognitive impairment that emerges even in the early stages of dementia can hamper this ability, specifically for tasks that become more cognitively demanding, such as identifying surprise.

Anger

Anger was the emotion assessed in the third subtask of FACES task 4. There was a significant difference between the HOC and AD groups, but not between the mild and moderate AD groups, in understanding the situation. As for naming the congruent emotion, HOC performed better than the AD groups. This outcome was also found in participants’ ability to choose the correct face: HOC were more able to choose an angry face correctly than the mild and moderate AD groups. Studies indicate that the effects of AD on emotional perception are not uniform but differ depending on which emotion is portrayed.15,18 The intensity with which emotions are presented also appears essential. The model proposed by Bruce and Young 39 postulated that facial recognition decisions are based on whether the face is known, as well as its expression. Werheid et al. 40 showed that facial expression is more observable in a strong expression such as anger, when compared to happiness. A recent study 41 demonstrated the similarity of the recognition of anger and sadness in people with dementia and people with normal cognition. However, we found significant differences in facial expression recognition in situations of anger, sadness, and surprise when comparing the AD groups and HOC. These findings are in line with Torres et al., 11 Phillips et al., 15 and Rosen et al., 17 who highlighted that expressions of positive emotions are identified more accurately than negative ones.

Happiness

In the context of happiness assessed in the fourth subtask, we found that most healthy older controls understood the situation, with significant differences between the HOC and AD groups but not between the mild and moderate AD groups. When we examined the ability to name the congruent emotion, the results for the mild AD and HOC groups were similar, as was the ability to choose the correct face, in which there was no significant difference between mild AD and HOC. These results demonstrated that people with AD may have difficulty recognizing some emotional situations at the beginning of the disease (but not positive emotions). These findings are in line with studies that point to the difficulties of people with AD in identifying negative emotions compared to positive ones.15,17,37 Goodkind et al. 42 showed that identifying positive emotions is more accessible in mild AD, because such emotions share the smile as a standard signal, allowing the participant to detect only a single expressive feature of a facial expression. Unlike other studies, our sample consisted of participants with mild and moderate Alzheimer's dementia, showing that the severity of the disease could impact even the recognition of positive emotions. Thus, our findings show that recognition of positive facial emotion expression in AD may also be affected during disease progression. Studies 42 have found that the relationship between the level of emotional decoding abilities and dementia severity in AD remains unclear, since progression of the disease is often measured with the MMSE. Therefore, further longitudinal studies should evaluate the relationship between facial emotion recognition patterns and changing patterns of disease progression.

Some potential limitations of the current study should be considered. First, the HOC and AD groups were not matched for age and schooling. The HOC group consisted of older adults aged 67 years (median), while the AD groups had median ages of 80 (mild AD) and 78.5 years (moderate AD). Second, the healthy older controls had more schooling than the AD groups. These issues may have biased the task's impact towards better facial expression recognition by the HOC group. Third, our sample was relatively small, and the analyses may thus have been underpowered.

Conclusion

The current study provides a broader understanding of cognitive emotional processing. We found differences in understanding an emotional situation, naming the congruent emotion, and choosing the correct face between the HOC, mild AD, and moderate AD groups in almost all subtasks of FACES task 4. These findings corroborate previous studies that suggested facial expression recognition impairment according to AD severity. Furthermore, the differences between understanding the situation, the ability to name the congruent emotion, and the choice of the correct face suggest an interaction between cognitive emotional processing and cognitive functioning. Thus, cognitive deficits may explain why the participants understood the emotional situation but could not choose the correct face on the label. Clinically, our results may help caregivers, family members, and professionals choose the most appropriate communication styles when treating people with AD, since the illness is associated with increased caregiver burden and the potential for decreased quality of life.

Impairments in facial processing may lead to poor judgment in social interactions and behavioral disturbances, since emotional perception is crucial for social functioning, intervention, and treatment in AD (e.g., music and occupational therapy, problem-solving training). Therefore, clinicians should consider measuring emotional perception as an outcome measure along with the battery of cognitive tests.

Footnotes

Acknowledgements

Marcia Cristina Nascimento Dourado is a level 2 researcher funded by CNPq and FAPERJ.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.