Abstract

Perhaps surprisingly, personality science has not yet adequately addressed a very basic aspect of individuality: The extent to which an individual differs from others, a phenomenon we refer to as unusualness. In this work, we provide a framework for defining, measuring, and uncovering the elements of unusualness. First, we define unusualness as the extent to which an individual’s personality deviates from that of others in the population, either through rare expression of individual variables (unusual values) or through atypical co-occurrence of multiple variables (unusual combinations), and distinguish it from related concepts in personality research. Second, we introduce and compare various methods to measure unusualness in a simulation study and demonstrate how reliability and validity of unusualness measures can be established. Third, we propose methods to uncover the elements of unusualness (i.e., unusual values, unusual combinations) and validate these methods through simulation. Fourth, we illustrate our framework using three empirical datasets (combined N = 1,834) and multiple assessment methods, showing that unusualness can be reliably measured and offering first insights into its generalizability, nomological network, incremental validity, and possible explanatory factors. Finally, we outline conceptual, methodological, and empirical directions for future research on unusualness.

Plain language summary

Who Stands Out? A New Framework to Define, Measure, and Understand Why Some People Deviate Significantly From Others in Their Personality, a Phenomenon We Refer to as Unusualness

Why was this study done? We all know people who stand out because of some unique characteristic of their personality or an unexpected combination of characteristics, but personality science hasn’t clearly defined or measured what makes someone “unusual.” This study introduces a new approach to understanding how and why people differ from others. We call this “unusualness” and explore both the individual variables that are rare and the unusual ways in which variables can appear together. This gives researchers a more complete picture of individuality.

What did we do? We first defined unusualness and explained how it differs from other concepts in personality psychology. Then, we used simulations to test various ways to measure unusualness and evaluated how reliable and valid those measures are. We also developed tools to identify what exactly makes a person unusual, whether it’s rare scores on certain personality traits or unusual combinations of traits. Finally, we applied these methods to real-world data from over 1,500 people to demonstrate how they work in practice.

What did we find? We identified a set of methods that are well-suited for measuring the overall degree of unusualness, and a different set of methods designed to uncover what specifically makes a person unusual. Our analysis of real data showed that unusualness can be measured reliably and with low uncertainty. We also found that unusualness shows some generalizability across assessment methods and may offer incremental value beyond existing personality measures.

What does this mean for psychological research? This work opens up new ways to study individuality in psychology. By providing clear definitions and tools to measure unusualness, we give researchers a new way to explore how people differ, not just how much, but also in what ways. This could lead to new insights into personality, mental health, and social behavior.

Why do some people stand out as distinctly unusual, while others blend seamlessly into the crowd? From eccentric geniuses to people on the psychological margins, the concept of unusualness is undoubtedly of psychological relevance. Also, implicit notions of unusualness are prevalent across various psychological domains, including individual differences in striving for uniqueness and grandiosity (i.e., the motivation to be and the perception of being unusual, and unusually “good,” respectively; Chopik et al., 2024; Miller et al., 2021), perceptions of (non)normality (i.e., perceiving others to be more or less (un)usual; Kim et al., 2023; Wood et al., 2007), clinical psychology’s classification of psychopathology (indicating unusually low adjustment; Krueger et al., 2014), research on divergent thinking (e.g., referring to unusual ways of combining information; An et al., 2016; Runco et al., 2019), and person-centered personality science that categorizes individuals into types and profiles (of different prevalence and, with some individuals not fitting into any type at all, indicating different degrees of unusualness; York & John, 1992). Despite the ubiquitous reference to and significance of the idea of unusualness, the question of what unusualness is, how it can be assessed, and where it comes from has received surprisingly little attention in personality research in recent decades. Specifically, personality research is currently lacking an overarching conceptual framework for defining and measuring unusualness, as well as for uncovering its elements. In this work, we aim to provide such a framework and target three research questions.

RQ1 (How to define unusualness): First, we derive a definition of unusualness that captures two crucial elements: rare expression of individual variables (unusual values) and atypical co-occurrence patterns across multiple variables (unusual combinations). We also examine how, and to what extent, the two key elements of unusualness are reflected in various personality research approaches, including normative assessments, normativeness, and typeness.

RQ2 (How to measure unusualness): Second, we introduce and compare various methods for measuring unusualness and examine ways to assess the reliability and validity of unusualness. Specifically, we evaluate the ability of parametric distance-based methods and non-parametric unsupervised learning methods to detect the two key elements of unusualness (unusual values and unusual combinations) in a simulation study. We outline three complementary approaches to assess the reliability and robustness of unusualness: split-half reliability, test-retest reliability, and uncertainty estimation. To establish validity, we propose analyzing nomological correlates, predictive and incremental validity, and generalizability of unusualness.

RQ3 (How to uncover the elements of unusualness): Third, we introduce data-driven, bottom-up approaches to uncover the elements of unusualness. We propose three approaches to clarify which personality variables¹ contribute most to an individual’s unusualness, and how they contribute (via unusual values or unusual combinations): SHapley Additive exPlanations (SHAP; Lundberg & Lee, 2017) values, SHAP interaction values, and regression tree path analysis (RTPA, building on surrogate decision-tree explanations, e.g., Aslam et al., 2022). We evaluate their effectiveness in a simulation study and compare their strengths and limitations.

In addition, to provide an initial proof of concept with empirical data, we apply this approach to three datasets (combined N = 1,834) including self- and informant-rated survey data as well as experience sampling (ESM) data. This allows us to demonstrate how unusualness can be measured and interpreted in practice and to perform a first exploration of its nomological correlates, incremental validity, and generalizability.

How to define unusualness?

The conceptual ambiguity of unusualness concepts

To date, there is neither a consistent label nor a clear definition for the notion that “an individual’s personality deviates from that of others.” Terms such as (ab- or non-)normal, special, and (non-)normative are frequently used to describe this phenomenon, yet often inconsistently, resulting in conceptual ambiguity and impeding theoretical development (Banissy et al., 2013; Furr, 2008; Gutiérrez et al., 2023; Wood et al., 2007). There are three major problems with existing concepts and their associated terms. First, some of the applied terms overlap with more generic statistical terms (e.g., normality, average), while at the same time aiming at a more specific conceptual meaning. Second, a number of alternative concepts carry strong positive or negative evaluative meaning (e.g., exceptional and unique versus strange and abnormal; see Wood et al., 2007), and/or are defined as outcomes (e.g., social functioning, mental health, maladjustment; Favini et al., 2018; Wood et al., 2007), which entangles the definition of the construct with its (potential) evaluation and outcomes. Third, other concepts are already strongly associated with a certain research stream and its specific conceptualization, which may lead to conceptual ambiguity (e.g., normativeness; Furr, 2008). Therefore, we adopt the term unusualness, a non-statistical term, less evaluatively laden (Wood et al., 2007), not inherently linked to any specific outcome, and not associated with one specific research stream and its conceptual assumptions. But what is unusualness?

Unusualness refers to unusual values and unusual combinations

How individuals differ from others can be described in terms of a vast array of individual characteristics including cognitive, affective, motivational, and behavioral tendencies, many of which are typically summarized as personality variables at different levels of aggregation (from personality nuances to personality meta-traits; DeYoung, 2010a, 2010b; Mõttus et al., 2019; Mõttus, Bates, et al., 2017; Mõttus, Kandler, et al., 2017). Historically, personality research has primarily approached these aspects in two ways: Either by considering individual values on selected individual personality variables (a variable-centered approach assessing personality variables; e.g., how sociable an individual is in comparison to others; Anglim et al., 2020; Barrick & Mount, 1991; Howard & Hoffman, 2018; Soto & John, 2017a, 2017b) or configurations of values across different individual personality variables (a person-centered approach assessing personality types; e.g., whether and how strongly an individual’s profile of personality variables resembles an overcontrolled personality; Asendorpf, 2006, 2015; Ferguson & Hull, 2018; Furr, 2008, 2010; Howard & Hoffman, 2018).

Here, we aim at an overarching concept of unusualness defined as the extent to which an individual’s personality, as defined by a set of personality variables, deviates from that of others in the population, both regarding individual variable values and their combinations. This definition has two important implications. First, unusualness is not a binary classification but a matter of extent, determined by how an individual compares to other individuals from the population. Second, unusualness needs to incorporate both described aspects: Individuals can be unusual because they differ unusually strongly in values on some individual variables (unusual values) and/or because they differ unusually strongly from others in how their personality variables co-occur (unusual combinations).

Previous research related to unusualness

Most previous research in personality science that deals with unusualness and related concepts captures perceived unusualness (i.e., asking people themselves or others how (un-)usual an individual is; Arumäe et al., 2024; Blankenship et al., 2021; Kim et al., 2023; Liang et al., 2019, 2024; van Doeselaar et al., 2019; Wood et al., 2007). This research is characterized by measures that explicitly assess the graded notion of estimated unusualness (e.g., scales for the assessment of grandiosity, need for uniqueness, normality, eccentricity, oddity, or weirdness; Ashton & Lee, 2012; Carvalho et al., 2021; Chopik et al., 2024; Kim et al., 2023; Miller et al., 2021; Wood et al., 2007) and includes both self-perceived unusualness and other-perceived unusualness (e.g., informant, peer, or partner assessments). Prominent examples of this are the constructs of eccentricity and oddity (Ashton & Lee, 2012; Carvalho et al., 2021; Verbeke & De Clercq, 2014; Watson et al., 2008; Wright et al., 2012). Though originating primarily from clinical contexts, they share a high conceptual overlap with unusualness, as they similarly capture deviations from social or statistical norms in thoughts and behavior.

By contrast, research examining observed unusualness, that is, unusualness as it manifests through unusual values and unusual combinations of personality variables, is surprisingly limited. Unusual values of specific variables are typically examined in isolation within normative assessments (i.e., labeling individuals as considerably above- or below-average on individual personality variables; Gunthert et al., 1999; Silverman, 2018). In contrast, unusual combinations are addressed to some degree by two established approaches from personality science that focus on the within-person patterns of personality variables: normativeness (e.g., the extent to which an individual has an average Big Five trait profile; Furr, 2008) and typeness (e.g., the extent to which an individual has an overcontrolled personality type; Asendorpf, 2006).

Unusualness as unusual values—normative assessments

The variable-centered approach assessing unusual values through normative assessments is commonly used across domains such as personality science or clinical assessments (Archer & Smith, 2008; Hopwood & Bornstein, 2014; Meyer et al., 2001; Silverman, 2018). Within this paradigm, individuals are labeled as above- or below-average based on their percentile rank or their distance from the mean in standard deviation units on a single personality variable relative to a reference population. Those falling beyond ±2 standard deviations from the mean (representing approximately 5% of the population) are often considered “special” (e.g., being intellectually gifted), while those beyond ±3 standard deviations (less than 0.3%) are viewed as “profoundly special” (Carman, 2013; Gignac, 2025; Gottfried, 1994; Silverman, 2018). However, while useful in practice, such cut-offs are somewhat arbitrary and reflect applied needs more than the dimensional nature of individual personality variables in variable-centered research (Hopwood et al., 2023; Krueger et al., 2014).
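The population shares attached to these cut-offs can be checked with a short calculation. The following is a minimal sketch that assumes the personality variable is exactly normally distributed; the function name `two_sided_tail` is introduced here for illustration only.

```python
import math

def two_sided_tail(k: float) -> float:
    """P(|Z| > k) for a standard normal variable Z."""
    # erfc(k / sqrt(2)) equals 2 * (1 - Phi(k)), the two-sided tail area.
    return math.erfc(k / math.sqrt(2.0))

for k in (2, 3):
    print(f"beyond ±{k} SD: {two_sided_tail(k):.2%} of the population")
```

Under this assumption the shares are roughly 4.6% and 0.27%, in line with the "approximately 5%" and "less than 0.3%" quoted above. For skewed or bimodal variables, the same cut-offs would correspond to different shares.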

Normative assessments only capture one component of unusualness at a time: Individuals may be considered equally unusual due to having rare values on a single personality variable. The frequency with which specific values on multiple personality variables co-occur has received relatively little attention. Nonetheless, some recent studies have suggested expanding this perspective to a multivariate approach, so that individuals are evaluated based on their scores across multiple personality variables (Cheek et al., 2023; Gignac, 2025; Van Tilburg, 2019). An individual might be labeled as “special” if they exceed a given threshold (e.g., +2 standard deviations) on two or more variables simultaneously (Cheek et al., 2023; Gignac, 2025). However, as the number of variables increases, this method becomes less practical: it becomes exceedingly rare to find individuals who are consistently “special” across many personality variables (Gignac, 2025), just as it becomes unlikely to find individuals who are entirely average on all variables (Van Tilburg, 2019).
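How quickly the multi-threshold rule runs out of qualifying individuals can be illustrated with a back-of-the-envelope calculation. This sketch assumes standard normal variables that are mutually independent, which is a simplification: positively correlated personality variables would yield somewhat higher joint probabilities. The function names are hypothetical.

```python
import math

def upper_tail(k: float) -> float:
    """P(Z > k) for a standard normal variable Z."""
    return 0.5 * math.erfc(k / math.sqrt(2.0))

def joint_exceedance(k: float, n_vars: int) -> float:
    """P(all n_vars variables exceed k), assuming independence (illustrative)."""
    return upper_tail(k) ** n_vars

for n_vars in (1, 2, 3, 5):
    p = joint_exceedance(2.0, n_vars)
    print(f"{n_vars} variable(s) above +2 SD: about 1 in {1 / p:,.0f} people")
```

Because the probability shrinks geometrically with the number of variables, a fixed-threshold rule quickly becomes uninformative for multivariate profiles, which motivates a continuous, profile-level score instead.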

Unusualness as unusual combinations—normativeness and typeness

Furr (2008) introduced a framework for evaluating the normativeness of individual personality profiles, defined as the degree of similarity (i.e., correlation) between an individual’s personality variable configuration and the average profile.² Thus, a low correlation indicates a substantial deviation of an individual’s profile shape (i.e., relative elevations and depressions of personality variables) from the average, signaling non-normativeness. This concept has been primarily applied in research on social judgments (Allik et al., 2015; Biesanz, 2021; Borkenau & Leising, 2016; Rogers & Biesanz, 2015; Rogers et al., 2021). Normativeness has been found to be stable over time, influenced by both genetic and environmental factors, and associated with various positive outcomes, including well-being, life satisfaction, and health (Bleidorn et al., 2012, 2020; Damian et al., 2019; Klimstra et al., 2010).
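A profile correlation of this kind might be computed as follows. This is a minimal sketch, not Furr's (2008) full procedure: it assumes raw (unstandardized) scores, because after z-standardization the average profile is flat and the correlation is undefined. The data and the names `normativeness`, `item_means`, and `scores` are hypothetical.

```python
import numpy as np

def normativeness(profiles: np.ndarray, mean_profile: np.ndarray) -> np.ndarray:
    """Pearson correlation of each row (person) with a reference profile."""
    # Center within person across variables, then take the normalized dot product.
    p = profiles - profiles.mean(axis=1, keepdims=True)
    m = mean_profile - mean_profile.mean()
    return (p @ m) / (np.linalg.norm(p, axis=1) * np.linalg.norm(m))

rng = np.random.default_rng(0)
# Hypothetical raw scores: 200 people on 5 variables with differing means.
item_means = np.array([3.8, 3.5, 3.9, 2.8, 3.6])
scores = item_means + rng.normal(scale=0.7, size=(200, 5))

mean_profile = scores.mean(axis=0)
r = normativeness(scores, mean_profile)

# Stretch person 0's profile (more scatter) and shift it (more elevation).
stretched = 3.0 * scores[:1] + 1.0
r_stretched = normativeness(stretched, mean_profile)
print(r[0], r_stretched[0])  # identical up to floating-point error
```

The last two lines show a property that matters for the present framework: a correlation depends only on profile shape, so making a profile more extreme (more scatter) or shifting it as a whole (more elevation) leaves normativeness unchanged.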

Asendorpf (2006) introduced typeness as a continuous measure of how closely an individual’s personality profile aligns with a specific personality type (e.g., undercontrolled, overcontrolled, or resilient type). Typeness is estimated by regressing an individual’s personality profile onto the prototypical profiles, yielding person-specific coefficients that reflect the extent to which the individual’s profile mirrors the prototypical type’s shape. Individuals with atypical personality patterns may therefore receive low typeness coefficients, reflecting their lack of resemblance to any established personality type. Initial findings by Asendorpf (2006) support the longitudinal stability and predictive validity of typeness, and a growing body of research on personality types (e.g., Isler et al., 2017; Meeus et al., 2011; Specht et al., 2014) highlights the greater utility of continuous measures of type affiliation compared to rigid categorical classifications. However, empirical research on typeness remains limited, and no study has yet combined individual typeness coefficients into a broader measure of overall unusualness. Moreover, the conceptual uniqueness of the purportedly person-centered approach may be questioned because typeness is just another weighted aggregate of personality variables or their indicators, and thus essentially a factor score akin to those commonly used in variable-centered approaches.
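The regression step can be sketched as an ordinary least-squares fit of one person's profile on the prototype profiles. This is an illustration under stated assumptions rather than Asendorpf's (2006) exact procedure: the prototype values below are invented, and including an intercept is a choice made here for the example.

```python
import numpy as np

# Hypothetical prototype profiles over five z-scored traits (rows = types).
prototypes = np.array([
    [ 0.5,  0.5,  0.5, -0.7,  0.3],   # "resilient" (illustrative values)
    [-0.6, -0.1,  0.3,  0.6, -0.2],   # "overcontrolled" (illustrative values)
    [ 0.3, -0.5, -0.7,  0.2,  0.1],   # "undercontrolled" (illustrative values)
])

def typeness(profile: np.ndarray, prototypes: np.ndarray) -> np.ndarray:
    """Least-squares coefficients from regressing one profile on the prototypes."""
    # Design matrix: one row per trait, an intercept column plus one column per type.
    design = np.column_stack([np.ones(prototypes.shape[1]), prototypes.T])
    coefs, *_ = np.linalg.lstsq(design, profile, rcond=None)
    return coefs[1:]  # drop the intercept, keep one coefficient per type

# A person whose profile is an exaggerated, shifted copy of the first prototype.
person = 2.0 * prototypes[0] + 0.3
print(typeness(person, prototypes))
```

In this toy case the first coefficient is 2 and the others are 0 (up to floating-point error). A more extreme but prototype-conforming profile raises the coefficient, whereas an extreme value that departs from the prototype shape lowers the fit, which is why typeness does not map cleanly onto unusual values.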

In summary, both approaches assess the extent to which an individual’s pattern of personality variables reflects the average (normativeness) or a prototypical (typeness) profile shape, thereby reflecting the second key element of unusualness: unusual combinations. Furthermore, both approaches have mainly been applied to very limited aspects of personality (i.e., Big Five traits). A key difference between normativeness and typeness lies in how they address the typical co-occurrence of personality variables. Normativeness treats all deviations from the average profile equally, regardless of whether they align with or contradict the population-level correlational structure. In contrast, typeness incorporates this structure, but only insofar as it matches the predefined personality types it is based on, thereby effectively repackaging types (and typeness) as aggregate variable scores. Notably, neither approach accounts for unusual values in a way consistent with the present framework. Unusual values have limited influence on normativeness when assessed via correlation coefficients, which neglect profile elevation and scatter (Furr, 2008, 2010). In typeness, unusual values may paradoxically reduce typeness if they deviate from the prototypical pattern, contrary to the present framework’s premise that unusual values should reflect greater unusualness.

An integrative approach to unusualness

We have shown that existing frameworks in personality science only partially capture unusualness as we define it. Therefore, a comprehensive framework is needed that can adequately capture both key aspects of unusualness simultaneously: unusual values and unusual combinations. We aim to provide such a framework, defining unusualness as the extent to which an individual’s personality, as defined by a set of personality variables, deviates from that of others in the population. Thus, we define unusualness as a dimensional construct that captures the degree of deviation between an individual’s personality profile and the distribution of profiles observed in a reference population within a given personality variable space. This deviation can manifest in two ways: (1) through values on personality variables that substantially differ from those of others in the population (unusual values), and/or (2) through co-occurrence of personality variable values that substantially deviate from typical population patterns (unusual combinations). For such combinations to influence unusualness, a discernible population-level structure (i.e., correlation) must thus exist. In principle, any personality variable can contribute to unusualness (e.g., dispositional traits, characteristic adaptations, or narrative identities; McAdams, 2011; McAdams & Pals, 2006). These contributions can occur at any level of aggregation (e.g., dimensions, facets, or nuances), although more fine-grained levels may offer richer insights that could be overlooked by aggregating data into broader dimensions (Denissen et al., 2020; Mõttus, Bates, et al., 2017; Mõttus et al., 2019; Mõttus, Kandler, et al., 2017; Paunonen & Ashton, 2001; Stewart et al., 2022). 
Importantly, unusualness, like any other construct, must always be interpreted relative to the specific set of variables used to assess it, as different variables capture different aspects of personality deviation—ranging from narrow, domain-specific expressions to broader, holistic characteristics. Here, “others” refers to the comparison group used to quantify unusualness, which can range from close peers to the broader population, depending on the chosen reference sample. Hence, unusualness also depends on the composition of the population it pertains to.

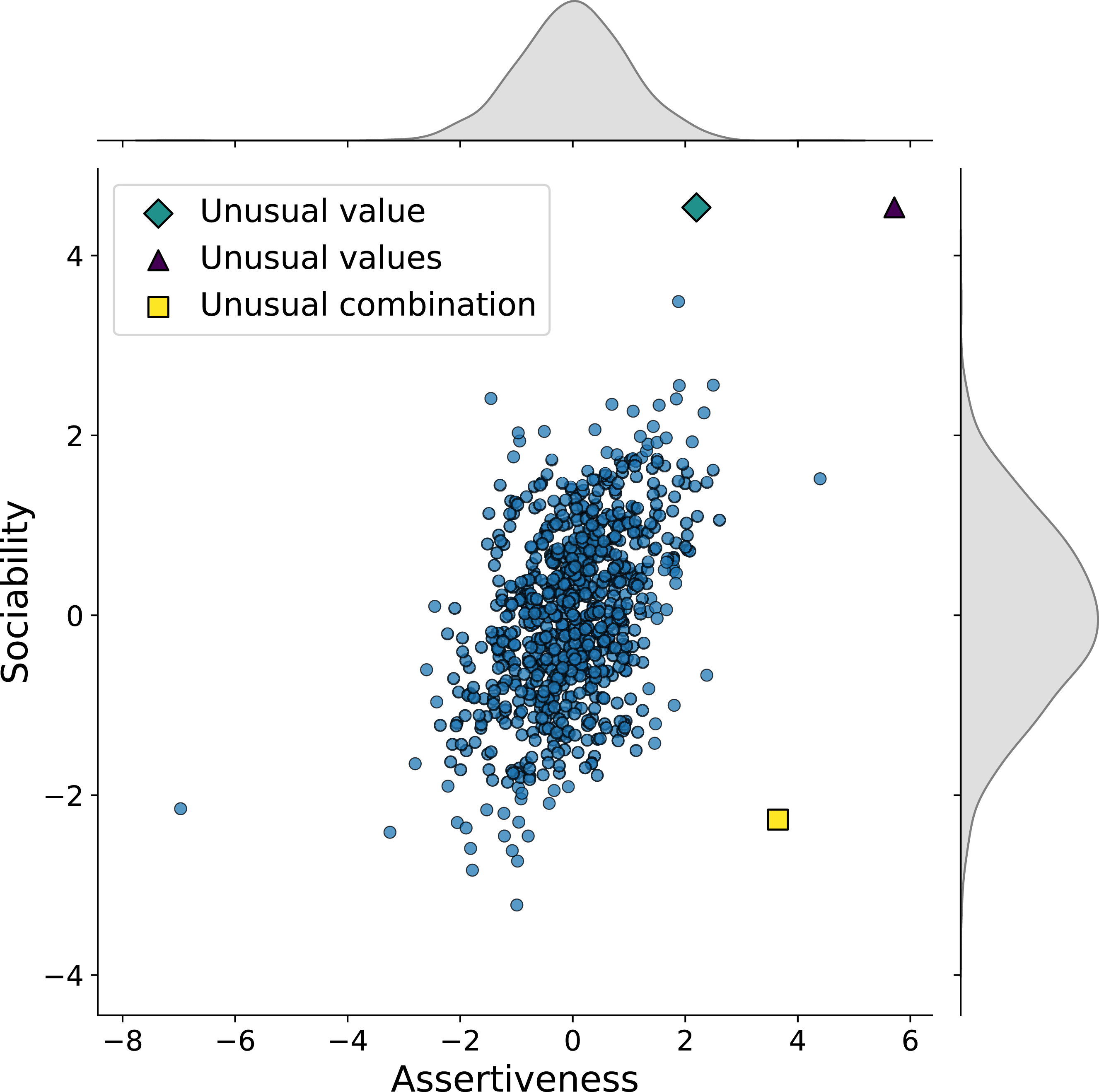

Figure 1 illustrates the two elements of unusualness using simulated data on two correlated facets of extraversion: sociability and assertiveness. The first individual (green diamond) shows an extremely high value on sociability. The second individual (purple triangle) shows extremely high values on both assertiveness and sociability. Both individuals exemplify unusual values while still conforming to the population’s positive correlation pattern (no unusual combination). The third individual (yellow square) demonstrates an unusual combination of variables (i.e., low sociability and high assertiveness) that deviates from the population-level correlational pattern and displays moderately unusual values to ensure detectability of this unusual pattern. Such unusual cases are common. For example, assuming the empirically derived correlation of sociability and assertiveness (r = .57; Soto & John, 2017b), only 52% of those scoring in the low, medium, or high population third in one facet also score in the same third in the other, leaving 48% to be unusual according to this metric (Mõttus, 2022).

Figure 1. Illustration of unusual values and unusual combinations. Note. This figure visualizes simulated data representing individuals’ z-standardized variable values for sociability and assertiveness. The highlighted dots present two elements of unusualness: unusual values as rare expressions of individual personality variables (green diamond, purple triangle) and unusual combinations as atypical patterns of personality variables (yellow square).
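The share of people falling into the same population third on both facets can be approximated by simulation. The sketch below assumes bivariate normality with r = .57 and defines thirds by sample tertiles; whether Mõttus (2022) used exactly this procedure is not stated here, and the function name `same_third_rate` is hypothetical.

```python
import numpy as np

def same_third_rate(r: float, n: int = 200_000, seed: int = 0) -> float:
    """Share of simulated people in the same tertile on two correlated variables."""
    rng = np.random.default_rng(seed)
    cov = np.array([[1.0, r], [r, 1.0]])
    x, y = rng.multivariate_normal([0.0, 0.0], cov, size=n).T
    # Assign each person to the low (0), medium (1), or high (2) third per facet.
    x_third = np.searchsorted(np.quantile(x, [1 / 3, 2 / 3]), x)
    y_third = np.searchsorted(np.quantile(y, [1 / 3, 2 / 3]), y)
    return float(np.mean(x_third == y_third))

print(f"same third on both facets: {same_third_rate(0.57):.1%}")
```

For comparison, two uncorrelated variables would agree in about one third of cases by chance, so even a correlation of .57 leaves roughly half of the population with a tertile mismatch.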

How to measure unusualness?

So far, we have introduced the concept of unusualness and defined it as the extent to which an individual’s personality, as defined by a set of personality variables, deviates from that of others in the population, either through unusual values or unusual combinations. In the following, we describe the characteristics that an adequate measure of unusualness must have and introduce and evaluate different methods for computing unusualness.

First, an adequate measure of unusualness must provide a continuous unusualness score (USC) that reflects how unusual an individual’s personality profile is compared to the reference population. Second, it must use a set of at least two personality variables as an input for computing a USC. Third, it must be able to account for both elements of unusualness by assigning high unusualness to (a) individuals with unusual values in at least one personality variable, or (b) individuals with one or more unusual combinations, where each combination can involve any number of variables from two up to the full set of variables included.

We intentionally keep this definition at a conceptual level without providing a strict formalization of the USC because the most informative operationalization of unusualness may depend on the context, research paradigm, and the substantive question at hand. Accordingly, various methods fulfil these requirements and compute USCs in different ways (e.g., pronounced separation from the data centroid, low density, and ease of partitioning). In the following, we provide a brief overview of the methods used in this study (see Supplement 1.1 for a detailed description of the methods).

Parametric methods

Parametric methods (such as distance metrics) have been previously used in personality research for empirical work (e.g., on profile similarity; Sels et al., 2020), or for conceptual work about aspects of unusualness and its implications (Del Giudice, 2023; Van Tilburg, 2019). Minkowski distance (MID) quantifies deviation from the centroid by summing absolute or squared deviations across variables, but it ignores correlations among variables and thus only captures unusual values. Shape distance (SHD), in contrast, measures the correlation between an individual’s variable profile and the population mean profile, capturing unusual combinations while being insensitive to unusual values. Mahalanobis distance (MAD) addresses both limitations by accounting for variable variances and covariances, making it sensitive to both unusual values and unusual combinations. The primary strength of parametric methods lies in their interpretability and their connections to multivariate statistics. However, interpreting high values in the discussed distance-based metrics as high values of unusualness (as understood by our conceptual framework) is only appropriate if the personality variables have an approximate multivariate normal distribution, a condition that may hold for some, but not all, personality variables (e.g., dishonesty, Jiang et al., 2023; dark-triad traits, Webster & Jonason, 2013; trait evaluations of political candidates, Hetherington et al., 2016). When variables exhibit bimodal, heavily skewed, or otherwise non-normal distributions, these methods become less effective and may fail to identify unusualness (Figure 4). To address this, non-parametric methods from the field of anomaly detection offer a flexible and robust alternative for measuring unusualness.
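The contrast between MID and MAD can be made concrete with two hypothetical individuals who are equally far from the centroid but differ in whether they follow the population correlation. This is a minimal sketch using the textbook definitions of both distances; it is not the implementation from Supplement 1.1, SHD is omitted, and all names and data are illustrative.

```python
import numpy as np

def minkowski_distance(points, reference, p=2):
    """Minkowski distance of each point from the reference centroid (p=2: Euclidean)."""
    dev = np.abs(points - reference.mean(axis=0))
    return (dev ** p).sum(axis=1) ** (1.0 / p)

def mahalanobis_distance(points, reference):
    """Distance from the centroid, scaled by the reference covariance matrix."""
    dev = points - reference.mean(axis=0)
    inv_cov = np.linalg.inv(np.cov(reference, rowvar=False))
    # Quadratic form dev_i^T * inv_cov * dev_i for every row i.
    return np.sqrt(np.einsum("ij,jk,ik->i", dev, inv_cov, dev))

rng = np.random.default_rng(1)
cov = np.array([[1.0, 0.57], [0.57, 1.0]])
population = rng.multivariate_normal([0.0, 0.0], cov, size=5000)

# Columns: sociability, assertiveness (z-scores, hypothetical individuals).
people = np.array([[ 1.5, 1.5],   # conforms to the positive correlation
                   [-1.5, 1.5]])  # contradicts it (unusual combination)
mid = minkowski_distance(people, population)
mad = mahalanobis_distance(people, population)
print("MID:", mid.round(2), "MAD:", mad.round(2))
```

MID treats both individuals as nearly equally unusual, whereas MAD assigns a clearly larger distance to the person with the atypical combination, because the covariance matrix encodes which directions of deviation are rare in the population.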

Non-parametric methods

Anomaly detection methods refer to a broad class of statistical and machine learning techniques aimed at identifying observations that deviate substantially from the typical structure of a dataset (Aggarwal, 2017; Chandola et al., 2009). Originally developed in disciplines such as data mining, industrial engineering, and cybersecurity, anomaly detection is now widely applied across domains including medical diagnostics, fraud detection, finance, and systems monitoring (Abuzaid, 2020; de Santis & Costa, 2020; Hilal et al., 2022; John & Naaz, 2019). Extended isolation forest (EIF; Hariri et al., 2021) isolates individual observations through recursive random partitioning, with fewer partitions required to isolate an observation indicating greater unusualness. One-class support vector machine (OC-SVM; Schölkopf et al., 2000) learns a decision boundary that encompasses the majority of observations in a high-dimensional space, with observations lying outside this boundary classified as unusual. Local outlier factor (LOF; Breunig et al., 2000) estimates how unusual an observation is by comparing its local density to that of its nearest neighbors, assigning higher scores to individuals located in sparser regions of the variable space. Non-parametric models are less reliant on distributional assumptions than parametric approaches and inherently capable of detecting both unusual values and unusual combinations of variables, making them flexible and potentially more powerful alternatives to simple distance-based measures. That said, each method implicitly encodes specific expectations about the data structure—for instance, smoothness, locality, or boundary behavior—which influence their performance. As such, their suitability depends on the characteristics of the data at hand. They also typically involve multiple hyperparameters that must be tuned to the data.
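To make the density-comparison idea behind LOF more tangible, the sketch below implements a simplified version of the local outlier factor from its published definition (k-distance, reachability distance, local reachability density). It is written for illustration only: it ignores ties and uses a dense distance matrix, so it is not a substitute for a library implementation. The function name `lof_scores` and the toy data are assumptions.

```python
import numpy as np

def lof_scores(X: np.ndarray, k: int) -> np.ndarray:
    """Simplified local outlier factor; values well above 1 indicate sparse regions."""
    diff = X[:, None, :] - X[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    np.fill_diagonal(dist, np.inf)               # a point is not its own neighbor
    nn = np.argsort(dist, axis=1)[:, :k]         # indices of the k nearest neighbors
    d_nn = np.take_along_axis(dist, nn, axis=1)  # distances to those neighbors
    k_dist = d_nn[:, -1]                         # distance to the k-th neighbor
    # Reachability distance from p to neighbor o: max(k_dist(o), d(p, o)).
    reach = np.maximum(d_nn, k_dist[nn])
    lrd = 1.0 / reach.mean(axis=1)               # local reachability density
    # LOF: average neighbor density relative to the point's own density.
    return lrd[nn].mean(axis=1) / lrd

rng = np.random.default_rng(0)
cluster = rng.normal(size=(200, 2))
X = np.vstack([cluster, [[8.0, 8.0]]])           # one isolated individual
scores = lof_scores(X, k=20)
print("most unusual index:", int(np.argmax(scores)))
```

Individuals inside the dense cluster receive scores near 1, while the isolated point receives a much larger score because its neighbors live in a far denser region than it does. The choice of `k` sets how local the comparison is, which is one example of the hyperparameter dependence noted above.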

Illustrative simulation examples

In the following section, we used simulated data to illustrate how different elements of unusualness affect the resulting USCs. Consistent with Figure 1, we used assertiveness and sociability as an example of two personality variables that are typically highly correlated (r = .57; Soto & John, 2017b).

Data simulations

Four distinct unusualness conditions were simulated (n = 750): (A) No injected unusualness, (B) unusual values, (C) unusual combinations, and (D) unusual values and unusual combinations. All data were generated from the same bivariate normal distribution with a correlation of .57. In Panels (B)–(D), unusualness was artificially introduced by perturbing 10% of the sample. In (B), this involved creating extreme values on assertiveness by multiplying the assertiveness scores of 10% of randomly selected individuals by a factor of three. In (C), we introduced atypical co-occurrences of assertiveness and sociability—such as low assertiveness paired with high sociability—by randomly inverting the assertiveness scores of 10% of individuals. In (D), both manipulations were applied: 5% of individuals were modified as in (B), and another 5% as in (C).
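The four conditions can be generated along the following lines. This is a sketch under explicit assumptions rather than the authors' simulation code: "inverting" is read as flipping the sign of the z-scored assertiveness value, the seed is arbitrary, and the function name `simulate_condition` is hypothetical.

```python
import numpy as np

def simulate_condition(condition, n=750, r=0.57, share=0.10, seed=0):
    """Return (data, perturbed_mask); columns are sociability and assertiveness."""
    rng = np.random.default_rng(seed)
    cov = np.array([[1.0, r], [r, 1.0]])
    data = rng.multivariate_normal([0.0, 0.0], cov, size=n)
    perturbed = np.zeros(n, dtype=bool)
    if condition == "A":                      # no injected unusualness
        return data, perturbed
    chosen = rng.choice(n, size=int(round(share * n)), replace=False)
    if condition == "B":                      # unusual values only
        scale_idx, flip_idx = chosen, chosen[:0]
    elif condition == "C":                    # unusual combinations only
        scale_idx, flip_idx = chosen[:0], chosen
    elif condition == "D":                    # half of the perturbed cases each
        half = len(chosen) // 2
        scale_idx, flip_idx = chosen[:half], chosen[half:]
    else:
        raise ValueError("condition must be one of 'A', 'B', 'C', 'D'")
    perturbed[chosen] = True
    data[scale_idx, 1] *= 3.0                 # extreme assertiveness values
    data[flip_idx, 1] *= -1.0                 # assumed reading of "inverting"
    return data, perturbed

data_c, mask_c = simulate_condition("C")
print(data_c.shape, int(mask_c.sum()))
```

Note that a sign flip leaves each person's distance from the centroid unchanged, so condition (C) alters only how the two variables co-occur. Perturbed individuals whose assertiveness score is close to zero remain nearly indistinguishable from the rest, which is one reason unusual combinations are harder to detect than unusual values.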

In most applications of unusualness, more than two variables will be used to estimate the USC. Therefore, we also ran our simulations for a greater number of personality variables. Specifically, all data were generated from a 15-dimensional (15D) multivariate normal distribution based on the empirical correlation structure of the Big-Five facets (Soto & John, 2017b; for other dimensions, see Supplement 2.1; for the correlation matrix, see Supplement 2.6). The unusualness mechanisms in Panels (A)–(D) are the same as in the 2D examples (i.e., unusual values and unusual combinations were generated by perturbing a single variable for 10% of the sample). Additional conditions exploring alternative data distributions (i.e., normal, bimodal, exponential), number of variables (i.e., 2, 3, 6, 15), sample sizes (i.e., 500, 2000), correlation strengths in the 2D case (i.e., .30, .57, .70), and proportions of unusual individuals (i.e., .05, .15, .25) are provided in Supplement 2.1. We evaluated five methods (MID, MAD, EIF, LOF, and OC-SVM)³ in terms of their ability to detect unusualness.

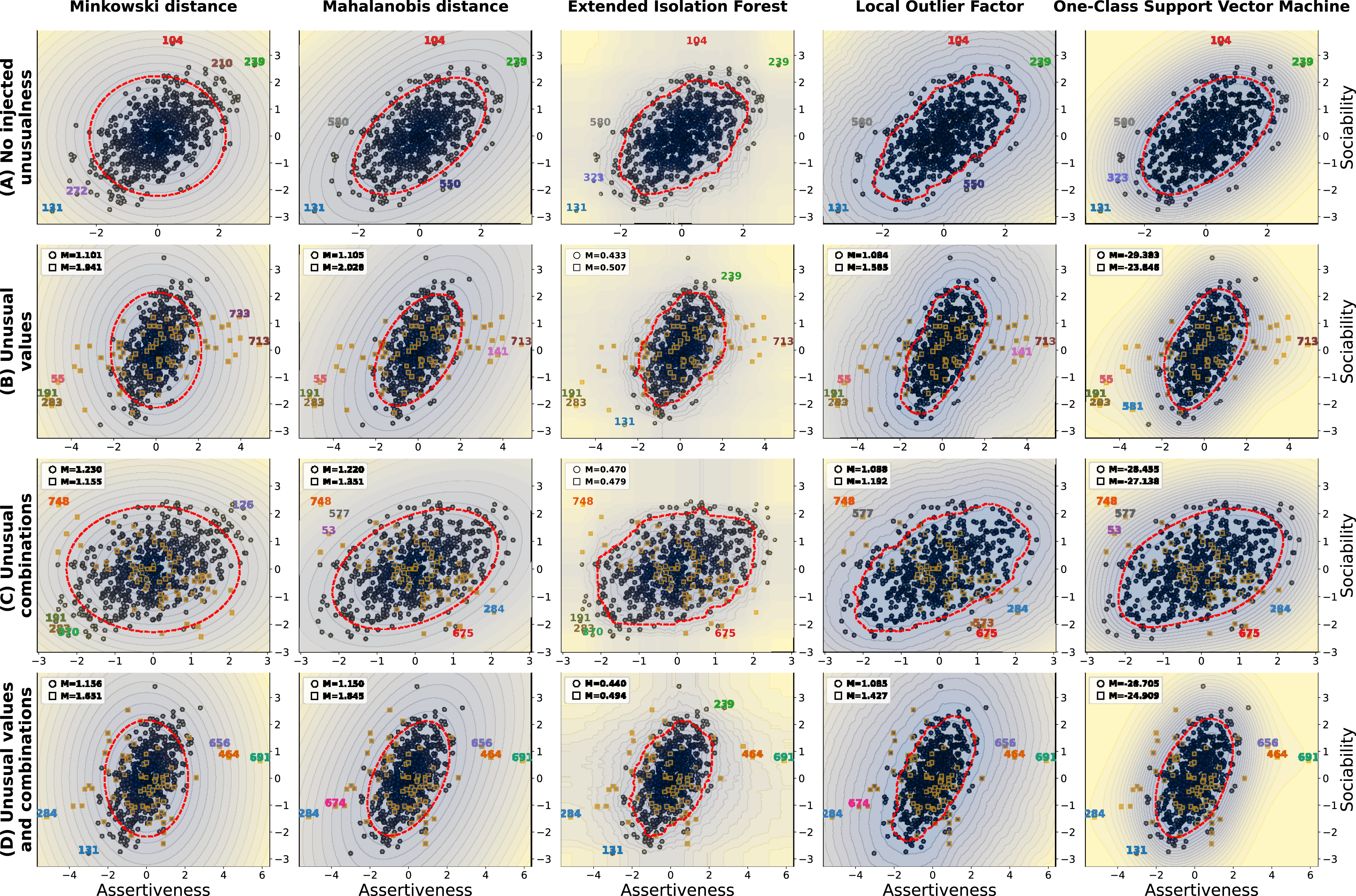

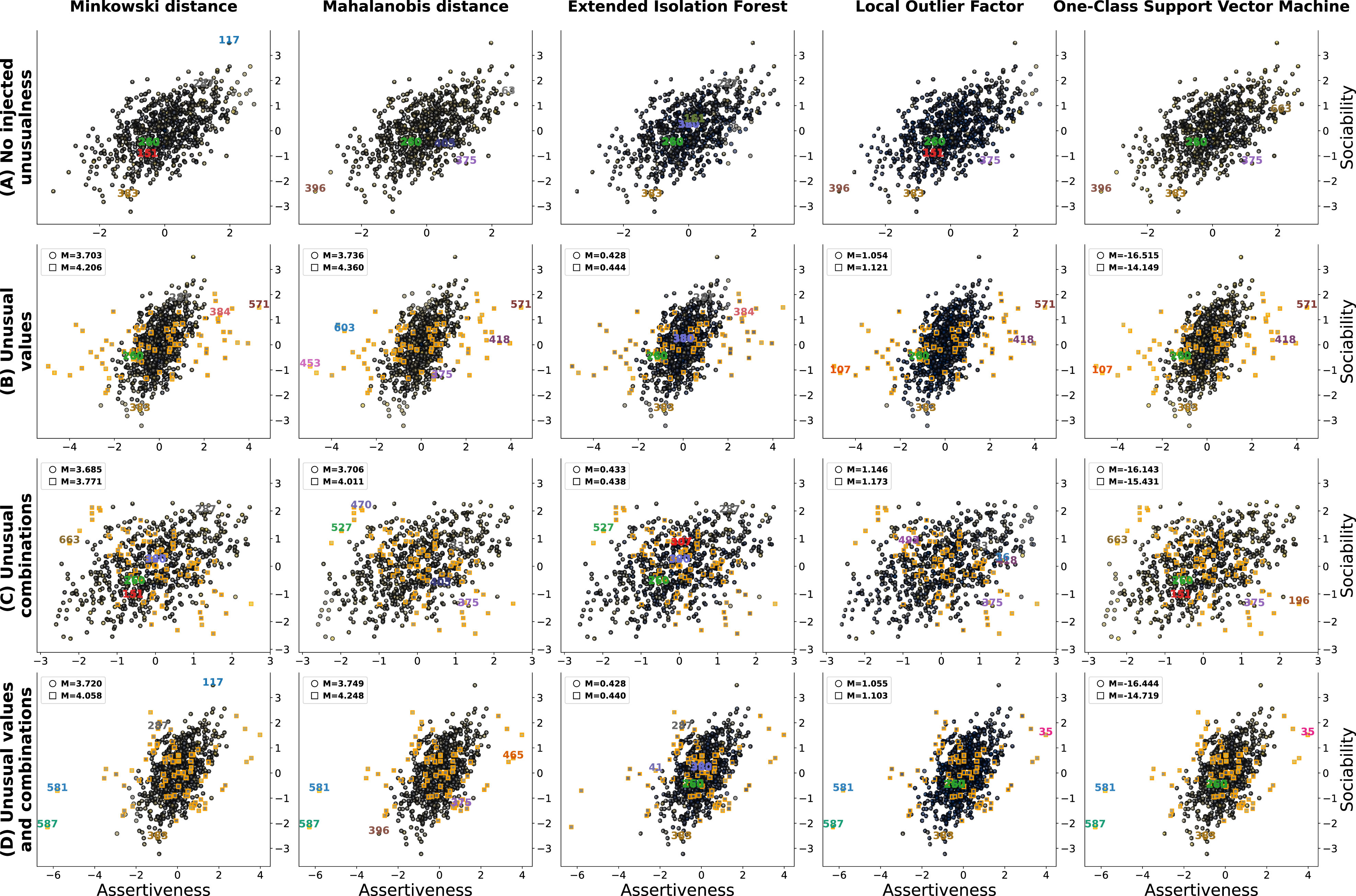

Methods

Models were implemented in Python: scikit-learn for LOF and OC-SVM (Pedregosa et al., 2011), treeple for EIF (Li et al., 2024), and our own implementations for MID and MAD. For LOF, OC-SVM, and EIF, we used the default parameters provided by their respective libraries, except for the following key hyperparameters: the number of neighbors for LOF (n_neighbors = 60), which sets the neighborhood size for local density estimation; the nu parameter for OC-SVM (nu = 0.2), an upper bound on the fraction of training errors and a lower bound on the fraction of support vectors, which controls boundary tightness; and the subsample size for EIF (max_samples = 0.5), which sets how many data points are used per tree and influences inter-tree correlation. The computation of MID and MAD follows the formulas presented in Supplement 1.1. The results are shown in Figures 2 and 3. For each subplot, we visualized USCs as a color gradient (unusualness increases from darker to lighter values within the dots) across the 2D (Figure 2) or 15D (Figure 3) personality space, and highlighted the five individuals receiving the highest USCs. In Panels (B)–(D), we further compared the mean USC of the perturbed individuals (highlighted squares) with that of the remaining individuals. In Figure 2, we included a decision boundary (red dashed line) that excludes the top 10% of the most unusual individuals; however, this is of limited utility in the 15D case and was therefore left out of Figure 3.

Visualization of different methods to measure unusualness across unusualness conditions for two variables. Note. This figure shows how five methods (Minkowski distance, Mahalanobis distance, extended isolation forest, local outlier factor, and one-class support vector machine) assign unusualness scores (USCs) across four two-variable simulation conditions: (A) no injected unusualness, (B) unusual values, (C) unusual combinations, and (D) unusual values plus combinations. Unusualness is displayed as a color gradient, with darker colors indicating lower scores and lighter colors indicating higher scores. In each subplot, the five highest-scoring individuals are labeled. In Panels (B)–(D), unusualness was introduced by perturbing 10% of the sample (highlighted squares). The red dashed line marks a decision boundary calibrated to exclude the 10% most unusual individuals. For Panels (B)–(D), we also compare the mean USC of the perturbed individuals with that of the remaining individuals.

Visualization of different methods to measure unusualness across unusualness conditions for 15 variables. Note. This figure shows how five methods (Minkowski distance, Mahalanobis distance, extended isolation forest, local outlier factor, and one-class support vector machine) assign unusualness scores (USCs) across four 15-variable simulation conditions: (A) no injected unusualness, (B) unusual values, (C) unusual combinations, and (D) unusual values plus combinations. Unusualness is displayed as a color gradient, with darker colors indicating lower scores and lighter colors indicating higher scores. In each subplot, the five highest-scoring individuals are labeled. In Panels (B)–(D), unusualness was introduced by perturbing 10% of the sample (highlighted squares). For Panels (B)–(D), we also compare the mean USC of the perturbed individuals with that of the remaining individuals.
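To make the estimation step concrete, the following is a minimal sketch of how USCs could be obtained for three of the methods with the hyperparameters reported above. It is an illustration, not the original analysis code: the simulated two-variable data, the assumed correlation of .5, and the helper names (`mahalanobis_usc`, `lof_usc`, `ocsvm_usc`) are hypothetical, and the Mahalanobis distance is computed from its textbook definition rather than from Supplement 1.1.

```python
import numpy as np
from sklearn.neighbors import LocalOutlierFactor
from sklearn.svm import OneClassSVM

rng = np.random.default_rng(0)

# Hypothetical 2D personality space: two z-scored variables with r = .5 (assumed).
cov = np.array([[1.0, 0.5], [0.5, 1.0]])
X = rng.multivariate_normal(mean=[0.0, 0.0], cov=cov, size=500)


def mahalanobis_usc(X):
    """Mahalanobis distance of each row from the sample centroid."""
    centered = X - X.mean(axis=0)
    precision = np.linalg.inv(np.cov(X, rowvar=False))
    return np.sqrt(np.einsum("ij,jk,ik->i", centered, precision, centered))


def lof_usc(X, n_neighbors=60):
    """Local outlier factor; sign flipped so that higher means more unusual."""
    lof = LocalOutlierFactor(n_neighbors=n_neighbors).fit(X)
    return -lof.negative_outlier_factor_


def ocsvm_usc(X, nu=0.2):
    """One-class SVM; score_samples is higher for inliers, so it is negated."""
    model = OneClassSVM(nu=nu).fit(X)
    return -model.score_samples(X)


uscs = {"MAD": mahalanobis_usc(X), "LOF": lof_usc(X), "OC-SVM": ocsvm_usc(X)}

# Indices of the five individuals with the highest USC per method.
top5 = {name: np.argsort(score)[-5:][::-1] for name, score in uscs.items()}
```

All three scores are oriented so that larger values indicate greater unusualness, which keeps the top-five selection identical across methods.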

Results

Figure 2 highlights key similarities and differences in how the methods assign USCs across different unusualness conditions. In (A), where no unusualness was injected, all models assigned higher USCs to more isolated individuals that occur at the edges of the distribution. In (B), all models assigned the highest USCs to individuals with extreme values of assertiveness, correctly identifying unusual values as the source of unusualness. In (C), both the MID and EIF struggled to detect unusual combinations. They assigned high scores to individuals that still follow the original correlational structure, rather than to those that deviate from it. In contrast, the MAD, LOF, and OC-SVM correctly identified individuals with atypical co-occurrence of sociability and assertiveness as most unusual. (D) shows that the models differed in their sensitivity to the elements of unusualness, as unusual values tended to exert a stronger influence on the USC than unusual combinations. Overall, Figure 2 shows that all models assigned higher average unusualness to the perturbed individuals, with unusual values being more consistently detected than unusual combinations.

Figure 3 illustrates how the patterns observed in Figure 2 extend to higher-dimensional settings. In (B), all models again assigned the highest USCs to individuals with injected unusual values. In (C) and (D), MAD, LOF, and OC-SVM showed a larger relative increase in mean USC scores from non-perturbed to perturbed individuals than EIF and MID, indicating more effective identification of unusual combinations. However, as expected, the identification of unusualness (particularly unusual combinations) was more challenging in the 15D case compared to the 2D case, likely due to increased random variation across the additional dimensions. Across all conditions, MAD showed the strongest performance, yielding the largest relative increases and capturing the highest proportion of perturbed individuals among the top 5 USCs. Overall, Figure 3 demonstrates that even when only a single variable among many is perturbed—resulting in either an unusual value on that variable or in unusual combinations involving it—methods such as MAD, LOF, and OC-SVM reliably detected unusualness, with MAD performing particularly well under multivariate normality.

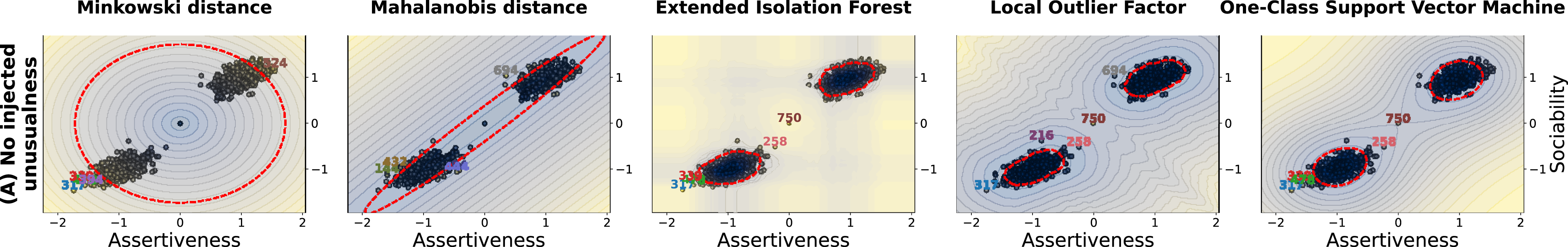

Figure 4, however, highlights a limitation of distance-based approaches in the presence of multimodal data. We simulated a two-dimensional bimodal distribution, placing a highly isolated individual at the midpoint between the two clusters. The distance-based models (MAD and MID) assign the highest density and thus the lowest USC to this central region, even though it lies far from any true data density peaks. In contrast, the non-parametric LOF and OC-SVM correctly identify the cluster centers as high-density areas and assign the highest unusualness to the isolated individual, effectively capturing the structure of the bimodal distribution. These models also handle other non-normal distributions adequately (e.g., heavily skewed distributions, see Supplement 2.1). EIF correctly identifies the centers but also shows some spurious artifacts already observed in the original Isolation Forest (Liu et al., 2008), indicating that it does not fully overcome the limitations of its predecessor (Figure 4).

Visualization of different methods to measure unusualness under a bimodal distribution. Note. This figure shows how five methods (Minkowski distance, Mahalanobis distance, extended isolation forest, local outlier factor, and one-class support vector machine) assign unusualness scores in a bimodal two-variable setting. Unusualness is displayed as a color gradient, with darker colors indicating lower scores and lighter colors indicating higher scores. In each subplot, the five highest-scoring individuals are labeled. The red dashed line marks a decision boundary calibrated to exclude the 10% most unusual individuals.
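The bimodal limitation can be reproduced in a few lines. The sketch below is a simplified illustration under assumed settings (cluster centers at ±3, cluster standard deviation 0.5, 300 individuals per cluster); these values and the helper `percentile_rank` are hypothetical and do not come from the original simulation.

```python
import numpy as np
from sklearn.neighbors import LocalOutlierFactor

rng = np.random.default_rng(1)

# Two well-separated clusters plus one isolated individual at their midpoint.
cluster_a = rng.normal(loc=-3.0, scale=0.5, size=(300, 2))
cluster_b = rng.normal(loc=3.0, scale=0.5, size=(300, 2))
midpoint = np.array([[0.0, 0.0]])
X = np.vstack([cluster_a, cluster_b, midpoint])  # the midpoint is the last row

# Mahalanobis distance from the overall centroid (textbook definition).
centered = X - X.mean(axis=0)
precision = np.linalg.inv(np.cov(X, rowvar=False))
mad = np.sqrt(np.einsum("ij,jk,ik->i", centered, precision, centered))

# Local outlier factor, oriented so that higher means more unusual.
lof = -LocalOutlierFactor(n_neighbors=60).fit(X).negative_outlier_factor_


def percentile_rank(scores, index):
    """Percentage of individuals whose score is at most that of `index`."""
    return 100.0 * np.mean(scores <= scores[index])


mad_rank = percentile_rank(mad, -1)  # close to 0: midpoint looks most typical
lof_rank = percentile_rank(lof, -1)  # close to 100: midpoint looks most unusual
```

Because the overall centroid coincides with the empty region between the clusters, the Mahalanobis distance treats the isolated individual as the most typical person in the sample, whereas the local density estimate of LOF flags it.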

How to assess basic properties of unusualness

Important challenges when defining and measuring a new construct are to meet requirements of basic psychometric properties, that is, the reliability (i.e., the extent to which the score is repeatable) and validity (i.e., the extent to which the measure accurately captures the intended construct). We will elaborate on approaches and challenges for measuring these properties in the following.

Reliability

To assess the reliability of USCs, two commonly applied psychometric techniques are suitable: split-half reliability and test-retest reliability. When only a single measurement occasion is available, split-half reliability can be used to estimate internal consistency, provided that each profile-constituting variable is measured with multiple indicators. Specifically, we split the indicators within each variable into two halves (e.g., odd vs. even items), recompute scale scores for each half, and thereby obtain two personality profiles per person, each containing all variables. We then compute USCs separately for the two profiles and correlate the resulting USC estimates. By contrast, splitting the set of personality variables themselves is difficult to interpret in our framework, because unusualness may be driven by a small subset of variables. Consequently, the resulting split-half reliability can depend strongly on how variables are assigned to the two halves. When repeated measurements over time are available, test-retest reliability can be computed by estimating USCs at different time points and correlating these scores to quantify consistency over measurement occasions. This approach does not rely on splitting variables or indicators and is therefore not affected by the above limitation.
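The split-half procedure can be sketched as follows. This is a minimal illustration on simulated item responses: the design (400 persons, 5 variables, 8 items each), the noise level, and the use of the Mahalanobis distance as the USC are assumptions made for the example, and the Spearman-Brown step is the standard correction for halved test length.

```python
import numpy as np

rng = np.random.default_rng(2)
n_persons, n_traits, n_items = 400, 5, 8  # hypothetical design

# Simulated item responses: each item = latent variable + independent noise.
traits = rng.normal(size=(n_persons, n_traits))
noise = rng.normal(scale=1.0, size=(n_persons, n_traits, n_items))
items = traits[:, :, None] + noise


def mahalanobis_usc(profiles):
    """Mahalanobis distance of each profile from the sample centroid."""
    centered = profiles - profiles.mean(axis=0)
    precision = np.linalg.inv(np.cov(profiles, rowvar=False))
    return np.sqrt(np.einsum("ij,jk,ik->i", centered, precision, centered))


# Split the indicators WITHIN each variable (odd vs. even items), so that
# both halves still yield a full profile containing every variable.
odd_profiles = items[:, :, 0::2].mean(axis=2)
even_profiles = items[:, :, 1::2].mean(axis=2)

usc_odd = mahalanobis_usc(odd_profiles)
usc_even = mahalanobis_usc(even_profiles)

# Correlate the two USC estimates, then step up to full test length.
r_half = np.corrcoef(usc_odd, usc_even)[0, 1]
r_spearman_brown = 2 * r_half / (1 + r_half)
```

Splitting along the item axis rather than the variable axis is the design choice that matters here: every half-profile still contains all variables, so a person whose unusualness rests on a single variable remains detectable in both halves.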

Uncertainty estimation

An important property of unusualness is the degree of uncertainty associated with the scores and rank order predicted by different models. This uncertainty can be quantified through several complementary approaches. Bayesian methods provide posterior distributions over scores, enabling probabilistic interpretations of unusualness (e.g., Hayat et al., 2020; Zhang et al., 2018). Resampling-based techniques such as bootstrapping estimate the sampling distribution of scores or ranks by repeatedly drawing resamples, yielding confidence intervals and rank stability metrics (e.g., Hall & Miller, 2009). Additionally, uncertainty can be assessed by examining the consistency of results across different models and hyperparameter settings, which reflects the extent to which scores are influenced by modeling choices (e.g., Zimek et al., 2014).

Validity

Excluding bias

One key challenge in establishing unusualness as a valid construct is ruling out the possibility that it is primarily driven by measurement error or response biases. Such biases may arise from careless responding, idiosyncratic item interpretations, self-perceptions or response styles, or social desirability effects. Thus, we need to separate “true” unusualness emerging from an individual’s atypical thoughts, feelings, and behaviors from these error sources. Methods to mitigate the influence of these biases include data quality checks (e.g., metadata analysis such as investigating response time distributions, self-reports of careless responding, and response-pattern metrics like longstrings, response inconsistency, or aberrant response trajectories) and using multimethodal approaches such as combining self- and informant reports (see Generalizability).

Nomological correlates

To establish construct validity, the position of unusualness within a broader nomological network must be evaluated. This involves examining both convergent and discriminant validity in relation to prior conceptualizations of observed and perceived unusualness (normativeness, Furr, 2008; typeness, Asendorpf, 2006; need for uniqueness, Chopik et al., 2024; perceived uniqueness; perceived weirdness, Wood et al., 2007; Kim et al., 2023; eccentricity, Carvalho et al., 2021; oddity, Ashton & Lee, 2012), expert-, peer-, or informant ratings of unusualness, other theoretically related personality constructs (e.g., maladjustment, Gross, 2007; creativity, Eysenck, 1995; humor, Greengross et al., 2012), and sociodemographic variables (e.g., age, gender, education). Importantly, to avoid circular reasoning, variables used to compute unusualness must be excluded from correlational analyses to prevent inflated associations due to overlapping content.

(Incremental) predictive validity

Establishing (incremental) predictive validity is essential for demonstrating the utility of unusualness as a psychological construct. It enables an assessment of whether unusualness offers predictive value on its own and above the explanatory power of the personality variables it is based on. Assessing predictive validity may involve incremental validity (i.e., does unusualness add predictive value beyond established measures like the Big Five) or comparative analyses (does unusualness predict certain outcomes more effectively than established measures, see also comparisons of personality types and the Big Five; Asendorpf, 2003; Asendorpf & Denissen, 2006; Costa et al., 2002). Outcomes may include relevant within-person dynamics (e.g., pronounced short-term personality state fluctuations or long-term personality change; Jackson & Wright, 2024; Wilson et al., 2017), and social interaction patterns (unique relationship qualities, irregularities in interactions; Dunlop et al., 2017; Hopwood, 2018). Additionally, unusualness may be linked to objectively measurable broad life outcomes (e.g., physical and physiological health, educational or financial achievements, fame and popularity) as well as to domain-specific outcomes conceptually tied to unusualness (e.g., engaging in unconventional jobs, pursuing niche hobbies).
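The incremental question can be framed as a comparison of two nested regression models. The sketch below is a hypothetical illustration on simulated data: the outcome is constructed so that it depends on unusualness, and the helper `r_squared` as well as all effect sizes are assumptions for the example.

```python
import numpy as np

rng = np.random.default_rng(3)
n = 500
X = rng.normal(size=(n, 5))  # hypothetical standardized personality variables

# USC as the Mahalanobis distance from the sample centroid (textbook definition).
centered = X - X.mean(axis=0)
precision = np.linalg.inv(np.cov(X, rowvar=False))
usc = np.sqrt(np.einsum("ij,jk,ik->i", centered, precision, centered))

# Simulated outcome that depends on one variable AND on unusualness (assumed).
y = 0.3 * X[:, 0] + 0.4 * usc + rng.normal(size=n)


def r_squared(predictors, outcome):
    """R^2 of an ordinary least squares fit with an intercept."""
    design = np.column_stack([np.ones(len(outcome)), predictors])
    coef, *_ = np.linalg.lstsq(design, outcome, rcond=None)
    residuals = outcome - design @ coef
    return 1.0 - residuals.var() / outcome.var()


r2_traits = r_squared(X, y)                        # constituent variables only
r2_full = r_squared(np.column_stack([X, usc]), y)  # variables plus USC
delta_r2 = r2_full - r2_traits                     # incremental variance explained
```

Because the USC is a nonlinear function of the variables it is based on, it is not perfectly collinear with them, so both sets of predictors can enter the same linear model.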

Generalizability

The generalizability of unusualness may pertain both to consistency across different assessment methods or aggregation levels and to its applicability across various contexts, including personality domains, populations, and over time. Researchers may assess the cross-method consistency of both the input data (i.e., how personality is assessed and aggregated) and the analytical configurations used to estimate unusualness (i.e., the choice of methods and parameters). For instance, USCs could be derived from personality variables obtained with diverse assessment methods, such as ecological momentary assessments (e.g., daily diaries, ambulatory tracking), informant reports (e.g., from peers or colleagues), and behavioral data (e.g., digital traces, sensor-based metrics), that are aggregated at varying levels of granularity (e.g., dimensions, facets, or nuances), and estimated with different statistical approaches (e.g., parametric versus non-parametric approaches, density-based versus tree-based approaches).

Furthermore, generalizability refers to different personality domains (i.e., whether USCs are consistent when estimated from different personality variables), populations (e.g., variations due to cultural context, socioeconomic status, or age), and time (i.e., whether unusualness is stable or contextually sensitive across developmental stages or life events). Evaluating the convergence of scores across these modalities and methods will offer insight into the robustness and generalizability of unusualness.

Summary

In this section, we introduced several methods for estimating unusualness and compared their performance in a simulation study. Three methods (MAD, LOF, OC-SVM) emerged as effective, as they were able to detect both key components of unusualness, unusual values and unusual combinations, across a variety of data conditions. The performance of MAD, however, was contingent on normality assumptions, showing strong results when these held but proving ineffective otherwise. We proposed assessing reliability via established psychometric techniques, such as split-half or test-retest reliability, and robustness via uncertainty analyses. We discussed several forms of validity (i.e., nomological correlates, (incremental) predictive validity, generalizability) and outlined key challenges (e.g., excluding biases) and methodological approaches to overcome them.

How to uncover the elements of unusualness?

In the following, we will elaborate on a key question in the analysis of unusualness: which personality variables and variable combinations contribute most to an individual’s USC—and in what ways. While the USC captures the degree to which someone stands out, it does not indicate in what way they are unusual. However, this is an important question, as unusualness can arise from a wide range of configurations—such as unusual values or unusual combinations on just one, several, or even all of the assessed variables. Naturally, it is of interest to understand which of these elements contribute to an individual’s unusualness and how, as the underlying mechanisms, antecedents, and potential consequences may differ depending on the specific configuration. Therefore, we view methods for uncovering the elements of unusualness as a valuable complement to the single-valued USC that stimulate future research.

As a first step toward analyzing the variables and variable combinations that constitute unusualness, we suggest examining individual personality profiles (for example, using profile plots) to gain an initial, interpretable view of how specific configurations may contribute to a person’s unusualness. To quantitatively examine the contribution of individual variables, we propose using SHAP values (Lundberg & Lee, 2017), as they have already been successfully applied to derive variable importances for various black-box anomaly detection models (e.g., Antwarg et al., 2021; Liu & Aldrich, 2023). However, determining how variable combinations contributed is not always straightforward. At its core, this involves identifying interactions between personality variables in models that cannot be easily decomposed into independent effects, echoing ongoing research in explainable artificial intelligence (e.g., Bordt & von Luxburg, 2023; Fumagalli et al., 2023; Köhler et al., 2024). To address this, we propose two distinct data-driven approaches: SHAP interaction values (Fumagalli et al., 2023; Lundberg et al., 2019) and regression tree path analysis (RTPA).

SHapley additive exPlanations values and interaction values

SHAP values provide a method for interpreting machine learning models (or any other model) by quantifying the contribution of each variable to the model’s prediction (Lundberg & Lee, 2017). Drawing from cooperative game theory, they fairly distribute the difference between the actual prediction and an average prediction across all variables. SHAP interaction values extend this approach by capturing not only the individual contribution of each variable but also its interactions (up to any order specified), revealing how variable combinations influence the model’s prediction (Fumagalli et al., 2023; Lundberg et al., 2019).

In this work, we use SHAP values and SHAP interaction values to identify variables that contribute most to the USC and help disentangle the two underlying elements of unusualness (i.e., unusual values and unusual combinations). For the first task, we rely on SHAP values, as they reflect the overall importance of each variable, regardless of how it influences the USC. For the second task, we use SHAP interaction values, which can, in principle, separate the two elements of unusualness by attributing importance to individual variables (capturing unusual values) and to interactions between variables (capturing unusual combinations). Another beneficial property of SHAP (interaction) values is that they are fundamentally local explanation mechanisms (Lundberg & Lee, 2017). Thus, nuanced insights can be generated at the level of individual instances and, by aggregating these local insights into global measures, at the level of subgroups or the entire population.
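For two variables and a single baseline profile, the decomposition into individual contributions and one interaction term can be written out exactly, which makes the logic easy to follow. The sketch below is a worked toy example, not the estimation procedure used in this work: it assumes the squared Mahalanobis distance as the unusualness score, a correlation of .5, and a baseline at the origin, and the function names `usc` and `decompose` are hypothetical.

```python
import numpy as np

# Assumed correlation of .5 between assertiveness and sociability (z-scored).
corr = np.array([[1.0, 0.5], [0.5, 1.0]])
precision = np.linalg.inv(corr)


def usc(profile):
    """Toy unusualness score: squared Mahalanobis distance from the origin."""
    profile = np.asarray(profile, dtype=float)
    return float(profile @ precision @ profile)


def decompose(x, baseline=(0.0, 0.0)):
    """Exact two-variable decomposition of usc(x) - usc(baseline)."""
    x1, x2 = x
    b1, b2 = baseline
    f_none = usc((b1, b2))  # both variables at baseline
    f_1 = usc((x1, b2))     # only assertiveness takes the person's value
    f_2 = usc((b1, x2))     # only sociability takes the person's value
    f_both = usc((x1, x2))  # the person's actual profile
    main_1 = f_1 - f_none
    main_2 = f_2 - f_none
    interaction = f_both - f_1 - f_2 + f_none
    return {
        "assertiveness": main_1,
        "sociability": main_2,
        "assertiveness x sociability": interaction,
        # Classical Shapley values split the interaction equally.
        "shapley_assertiveness": main_1 + interaction / 2,
        "shapley_sociability": main_2 + interaction / 2,
        "total": f_both - f_none,
    }


unusual_value = decompose((3.0, 1.5))         # extreme but concordant profile
unusual_combination = decompose((1.5, -1.5))  # moderate but discordant profile
```

In this toy setting, the discordant profile receives a positive interaction term (the combination adds unusualness), whereas the concordant profile receives a negative one (the combination is expected given the correlation and reduces unusualness). With more than two variables, such terms must be averaged over many coalitions, which is why sampling-based estimators are needed.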

Regression tree path analysis

Regression trees are interpretable machine learning models for continuous outcomes, capable of capturing complex interaction patterns between variables (Hastie et al., 2009). They recursively split the data by variable values, forming leaves that group individuals with similar target values. Beyond serving as stand-alone prediction algorithms, regression trees can also increase the interpretability of other machine learning methods when used as surrogate models (i.e., by predicting another model’s outputs), because their structure yields explicit decision rules. As an example, Aslam et al. (2022) used machine learning models to detect unusual events and subsequently fit a decision-tree surrogate to better understand the resulting predictions. Building on this logic, we train regression trees as an interpretable surrogate model to predict USCs derived from the methods described in the Measurement section. Once trained, trees may be analyzed to identify the paths leading to leaves with the highest USCs. If these paths involve only a few unique variables that occur repeatedly and include split points at particularly extreme values, this may suggest that unusualness is primarily driven by unusual values. Conversely, if the paths include a large number of different variables, use moderate split points, and reveal recurring sequential patterns of different variables across trees, this points to unusual combinations playing a dominant role in the prediction of unusualness.

RTPA is well-suited for detecting and visualizing specific interactions of any order (up to the maximum tree depth). Furthermore, the split logic enables isolated investigations of specifically unusual individuals which may be overshadowed by a global metric. However, as regression trees are sensitive to small data variations and prone to overfitting (Amro et al., 2021; Li & Belford, 2002), we recommend repeating the estimation procedure using different hyperparameter settings, subsamples of the data, or cross-validation, and evaluating consistency of the results. Still, synthesizing results either within or across trees to draw global conclusions about the relative importance of main effects versus interactions in the full sample remains a major challenge.
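A surrogate-tree analysis of this kind could look as follows. The sketch is a simplified illustration under assumptions: the data mimic an unusual values condition with an assumed perturbation size, the Mahalanobis distance stands in for the USC method, and only the path of the single most unusual individual is extracted.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

rng = np.random.default_rng(4)
names = ["assertiveness", "sociability"]
X = rng.multivariate_normal([0.0, 0.0], [[1.0, 0.5], [0.5, 1.0]], size=500)

# Unusual values condition: give 10% of the sample extreme assertiveness
# (the perturbation size of 3.5 to 4.5 SD is an assumption for this sketch).
perturbed = rng.choice(500, size=50, replace=False)
signs = rng.choice([-1.0, 1.0], size=50)
X[perturbed, 0] = signs * rng.uniform(3.5, 4.5, size=50)

# USC to be explained: Mahalanobis distance from the sample centroid.
centered = X - X.mean(axis=0)
precision = np.linalg.inv(np.cov(X, rowvar=False))
usc = np.sqrt(np.einsum("ij,jk,ik->i", centered, precision, centered))

# Surrogate regression tree that predicts the USC from the variables.
tree = DecisionTreeRegressor(max_depth=3, random_state=0).fit(X, usc)

# Trace the decision path of the most unusual individual.
most_unusual = int(np.argmax(usc))
node_ids = tree.decision_path(X[most_unusual:most_unusual + 1]).indices
path = []
for node in node_ids:
    feature = tree.tree_.feature[node]
    if feature < 0:  # negative feature index marks a leaf node
        continue
    threshold = tree.tree_.threshold[node]
    direction = "<=" if X[most_unusual, feature] <= threshold else ">"
    path.append((names[feature], direction, round(float(threshold), 2)))

for step in path:
    print(step)
```

Reading the printed path from root to leaf shows which variables were split on and how extreme the thresholds are, which is the information used to distinguish unusual values from unusual combinations. Refitting with other seeds, subsamples, or depths is a simple way to check how stable that reading is.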

Illustrative simulation examples

To evaluate the capability of the proposed methods to uncover the elements of unusualness, we computed SHAP values and SHAP interaction values for the unusualness conditions (A: no unusualness, B: unusual values, C: unusual combinations, D: unusual values and combinations) and methods from the illustrative simulation examples used before (Figures 2 and 3) and applied RTPA. Specifically, we explored if the proposed methods are able to identify the unusual values of the variable assertiveness as the source of unusualness in (B), the unusual combinations of assertiveness and sociability as the source of unusualness in (C), and both elements of unusualness in (D) and how this is affected by the number of variables.

Methods

SHAP values were computed using the shap package (Lundberg & Lee, 2017), employing the KernelExplainer with a single background sample defined as the feature-wise median. Pairwise SHAP interaction values were estimated using the shapiq library (Fumagalli et al., 2023), specifically with the KernelSHAP-IQ method and the k-Shapley Interaction Index (k-SII; Bordt & von Luxburg, 2023), using a sampling budget of 1,000 coalition/model evaluations. RTPA was conducted using scikit-learn’s DecisionTreeRegressor (Pedregosa et al., 2011), with the maximum tree depth set to 3 and all other parameters left at their default values.

Results

SHapley additive exPlanations values and interaction values

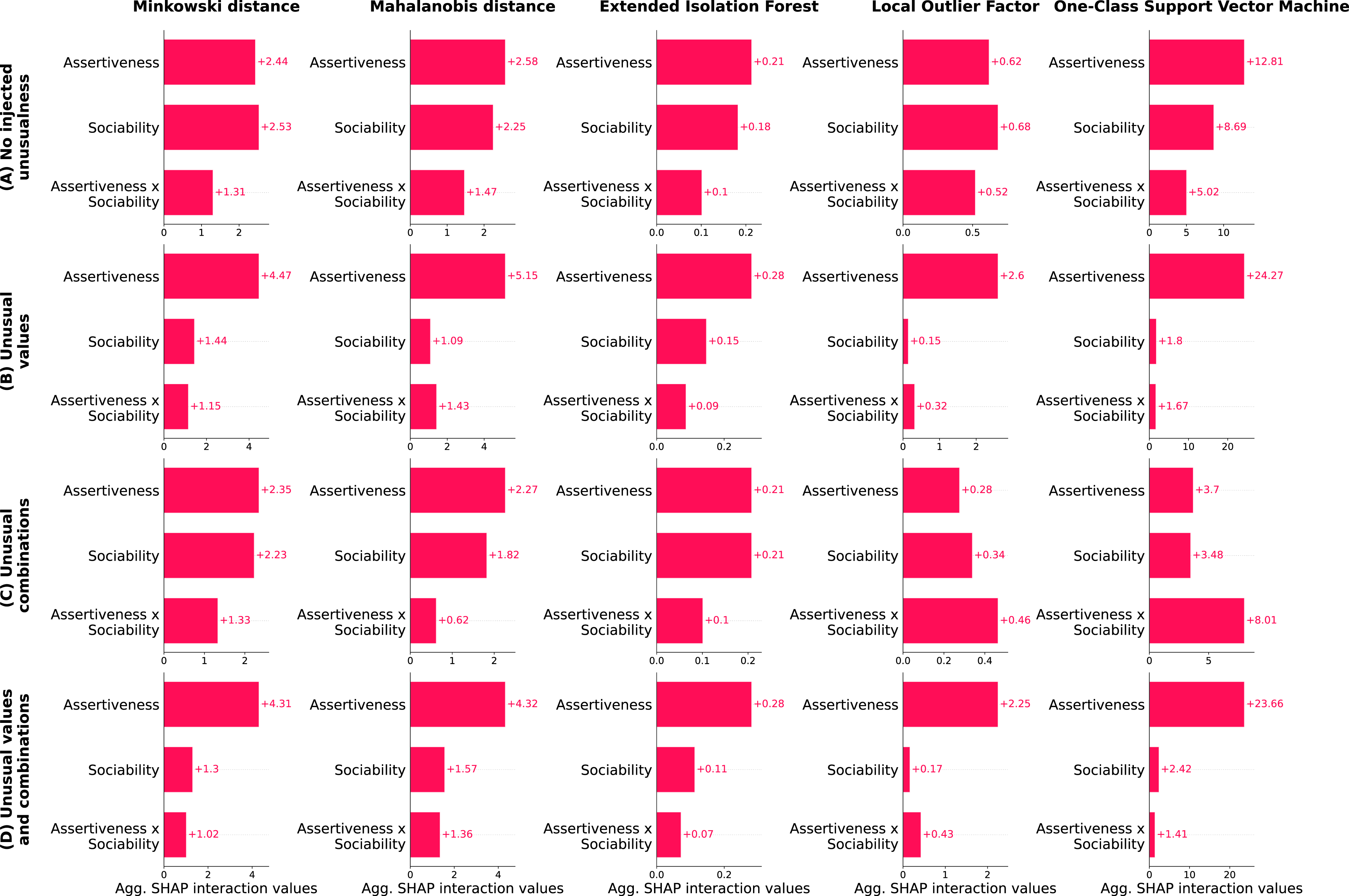

Because majority patterns can overshadow those of unusual individuals, we repeated the SHAP analyses using different proportions of individuals in the aggregation. Figure 5 displays the aggregated SHAP interaction values for the 2D case, where the number of individuals included equals the number of highlighted dots (i.e., five). For additional results (e.g., with varying proportions of individuals, different numbers of variables, or altered correlations), see Supplement 2.3.

Variable and variable interaction attributions across different methods and simulated unusualness conditions. Note. This figure shows SHapley Additive exPlanations (SHAP) interaction values for five methods (Minkowski distance, Mahalanobis distance, extended isolation forest, local outlier factor, and one-class support vector machine) across four simulation conditions: (A) no unusualness, (B) unusual values, (C) unusual combinations, and (D) unusual values plus combinations. In each subplot, bars display the mean SHAP interaction values that quantify contributions to the method’s unusualness score for assertiveness, sociability, and their interaction (assertiveness × sociability).

First, it is noteworthy that all methods can reliably attribute the USC in (B) to assertiveness, confirming that SHAP values and SHAP interaction values can detect unusual values as a source of unusualness. Second, methods struggle to reliably detect unusual combinations (C). This may be because unusual combinations and unusual values are inherently intertwined, and the correct attributions can only be derived for certain near-optimal individuals representing the pattern of “not too extreme individual values but an unusual combination” (see also Supplement 2.4 for individual-specific decompositions of individual USCs, shown as shapiq force plots for the most unusual individuals to exemplify this). Nonetheless, SHAP interaction values computed for LOF and OC-SVM assign high importance to the unusual combination across most settings, whereas EIF, MID, and MAD fail to capture these interactions effectively. Thus, LOF and OC-SVM appear to be the most suitable methods to reliably detect unusual combinations as a source of unusualness. Third, in (D) where both elements of unusualness are present, unusual values outweigh unusual combinations in all models.

When extending the SHAP analysis to higher-dimensional settings (i.e., unusualness estimated using 3, 6, and 15 variables), the overall results remain largely consistent with those from the 2D case (see Supplement 2.3). SHAP interaction values reliably identify unusual values of assertiveness as a key contributor in (B) and (D). However, detecting unusual combinations becomes harder as dimensionality rises: in high-dimensional, correlated feature spaces, unusual combinations can no longer be unambiguously attributed to specific pairs of variables, because an individual who deviates from the global covariance structure can exhibit unusual combinations with many variables at once. For example, if in the 3D case high assertiveness co-occurs with unusually low sociability, then not only the sociability-assertiveness pair but also the sociability-energy level pair forms an unusual combination.

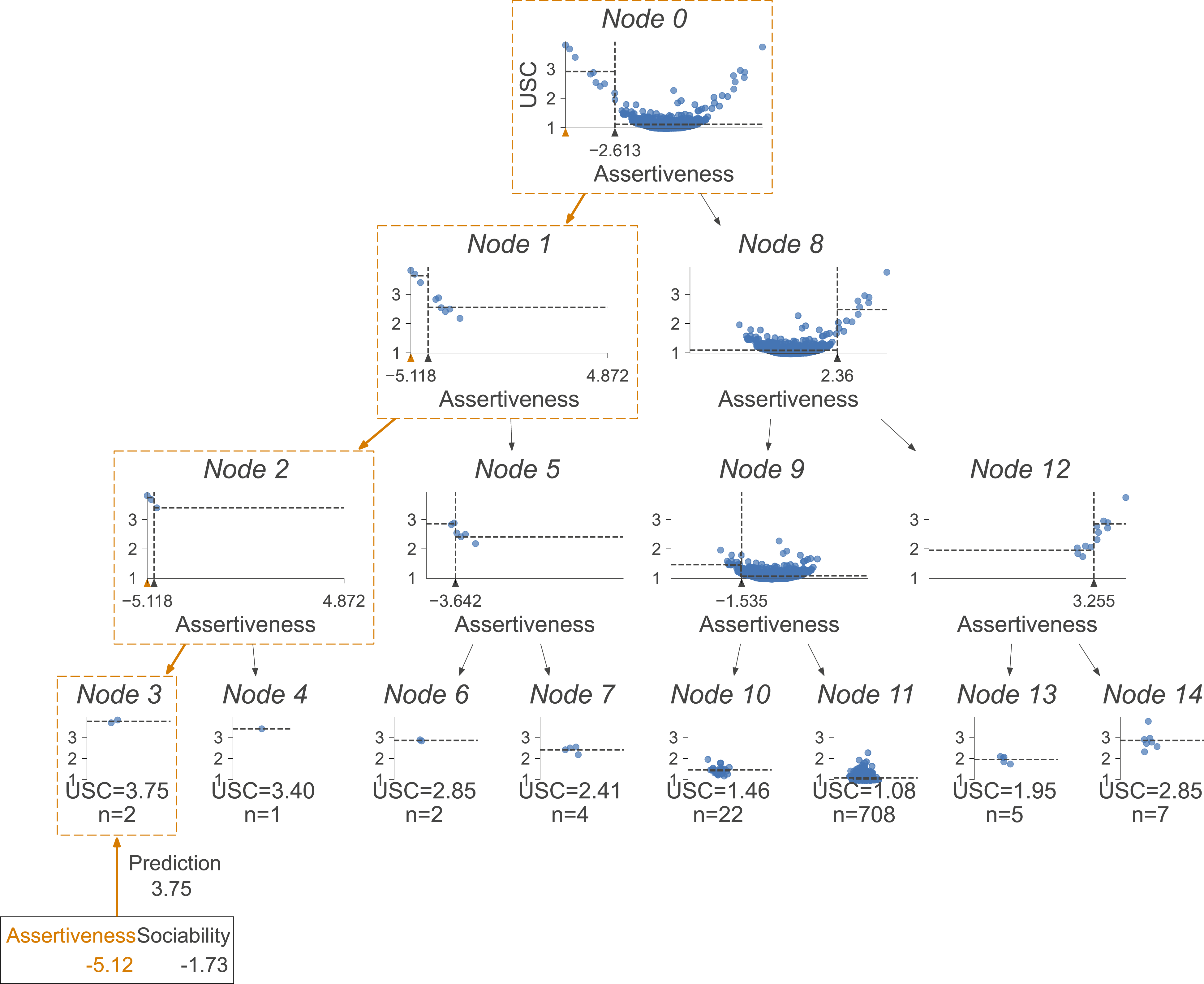

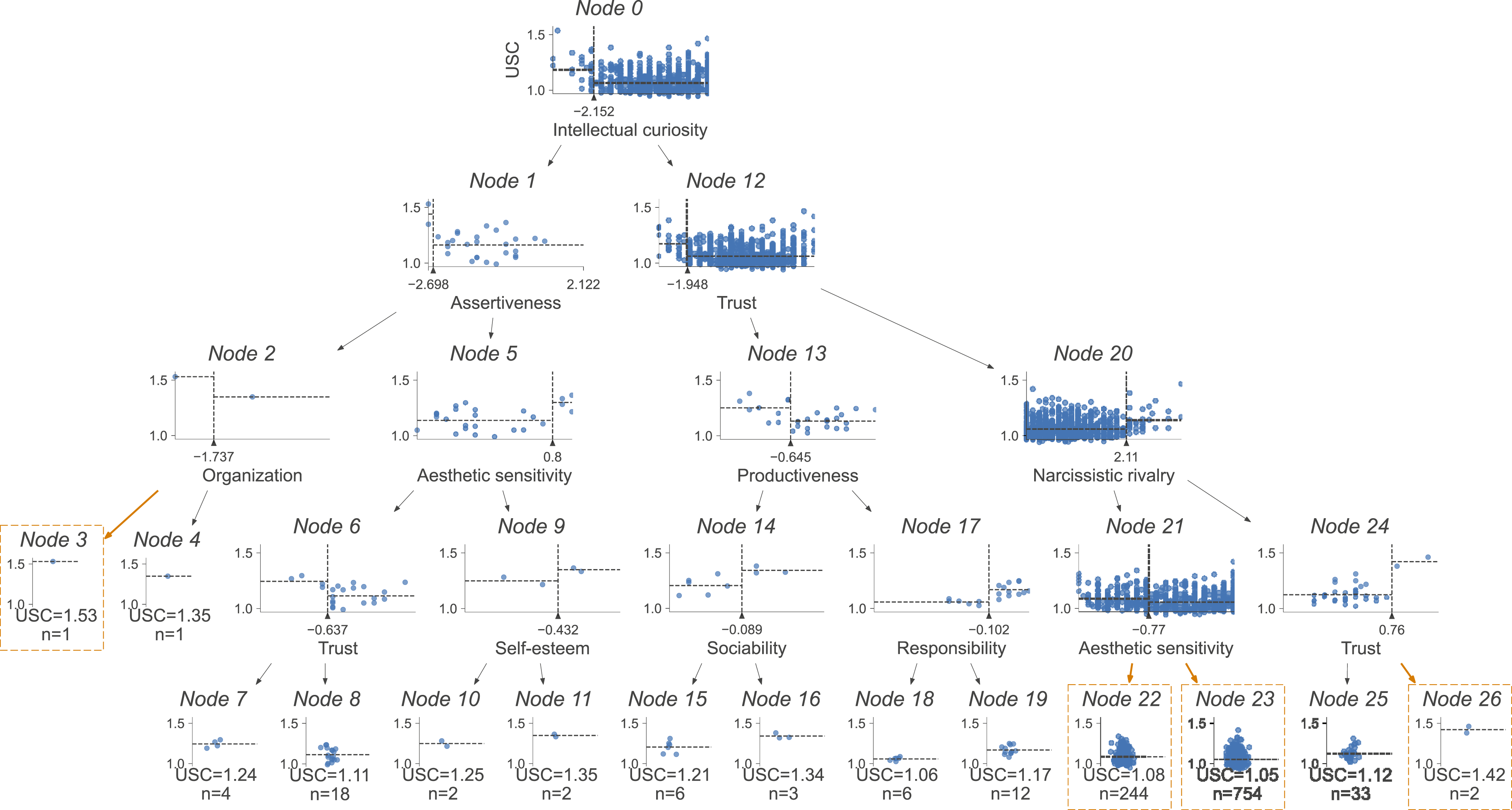

Regression tree path analysis

A representative RTPA for (B) is shown in Figure 6, based on the LOF (for other methods, unusualness conditions, and number of variables, see Supplement 2.5). For visualization purposes, tree depth was limited to 3. Across all unusualness conditions, most individuals are grouped into a single leaf node that contains typical instances (i.e., those without unusual values or combinations). In contrast, the most unusual individuals tend to appear in leaf nodes with smaller sample sizes. The RTPA in (B; Figure 6) reflects that unusualness is driven by unusual values in assertiveness: assertiveness is always selected as the splitting variable, the split thresholds are consistently extreme, and the most unusual individual follows a path characterized by the highest absolute value of assertiveness, clearly identifying the source of its unusualness (Node 3). In (C), where unusualness stems from unusual combinations, both assertiveness and sociability appear as split variables in roughly equal proportions, split thresholds are less extreme than in condition B, and the paths leading to the most unusual individuals include splits on both variables with moderately extreme values. RTPAs in (D) represent a mixture of the previous two scenarios.

Illustrative regression tree path analysis for the unusual values condition. Note. This figure presents a regression tree path analysis of unusualness scores (USCs) with a tree of depth 3. Scores are derived from the local outlier factor (LOF; n_neighbors = 60) under condition B (unusual values). Each panel either shows (a) an internal node with its splitting rule or (b) a leaf node with the individuals assigned to it and their mean USC. Within each panel, the scatterplot displays the splitting variable on the x-axis and the LOF’s USC on the y-axis. The split point is marked by a vertical dashed line with a triangular marker on the x-axis. The path taken by the most unusual individual is highlighted, and that individual’s z-standardized variable values are reported.

Summary

We proposed three data-driven approaches to identify key variables contributing to unusualness and to distinguish between the effects of unusual values and unusual combinations: SHAP values, SHAP interaction values, and RTPA. In simulation studies, SHAP methods reliably identified unusual values, while SHAP interaction values effectively detected unusual combinations only for certain models (e.g., LOF, OC-SVM) and under certain data conditions (e.g., low dimensionality). RTPA further allowed for intuitive differentiation between unusual values and combinations.

Illustrative empirical examples

In the following, we use three datasets—a large-scale, multimethodological dataset and two smaller complementary datasets—to explore our novel approach to unusualness with actual personality data. Specifically, we apply one parametric method (MAD) and two non-parametric methods (LOF, OC-SVM) to measure unusualness based on individuals’ dispositional traits on different aggregation levels (i.e., facets/domains, nuances) from different assessment methods (i.e., survey, ESM; self-, informant ratings), and assess its reliability (i.e., split-half reliability, test-retest reliability) and validity (i.e., nomological correlates, generalizability, incremental validity). In the main dataset, we conduct further analyses on the facet level to assess the uncertainty of USCs (i.e., m out of n bootstrapping) and to uncover the elements of unusualness (i.e., profile analyses, SHAP values, SHAP interaction values, RTPA).

Methods

Sample information

The main dataset (D1) used in these examples stems from the Emotions project (Ryvkina et al., 2023; codebooks, data, and additional information are available under https://osf.io/6kzx3/). All procedures from the original study received ethical approval. Data were collected from a diverse convenience sample of the German population across two waves. Each wave included an initial survey assessment, an ESM sampling period, and a final survey assessment. Test users, duplicates, minors, participants without valid data, and those who declined participation were excluded (see Ryvkina et al., 2023). Additional exclusion criteria for this work were based on suspicious data (intra-individual response variability, longstring detection, completion time), insufficient ESM responses (fewer than 10 valid measurements), and missing values on any variable included. The final sample consisted of 1,088 participants, with a total of 52,141 measurements. The second dataset (D2) comes from a study in German sociotherapeutic prison departments (for details, codebooks, data, and additional information, see https://osf.io/eefvq/ and Niemeyer et al., 2022). Male prisoners completed self-report surveys and were rated by two informants (usually a psychologist/social worker and a prison officer), providing both self- and informant-reports of personality. After exclusions, the final sample comprised 243 participants. The third dataset (D3) is based on a previous investigation of the Personality Inventory for DSM-5 (PID-5) and includes self-reported survey data from 503 university students in Norway (for details, see Thimm et al., 2016; for data and codebook, see https://osf.io/gvj42/).

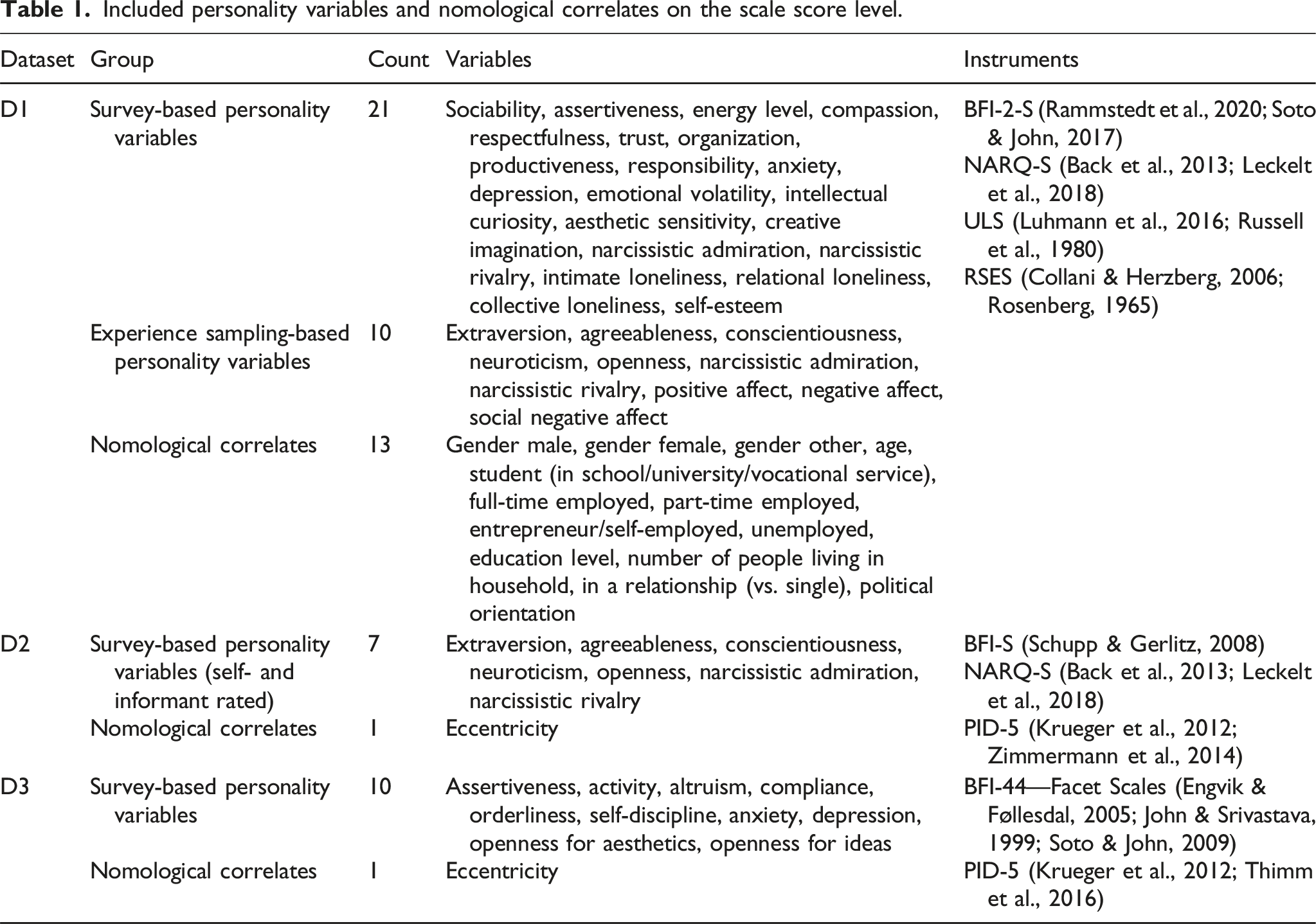

Measures

Included personality variables and nomological correlates on the scale score level.

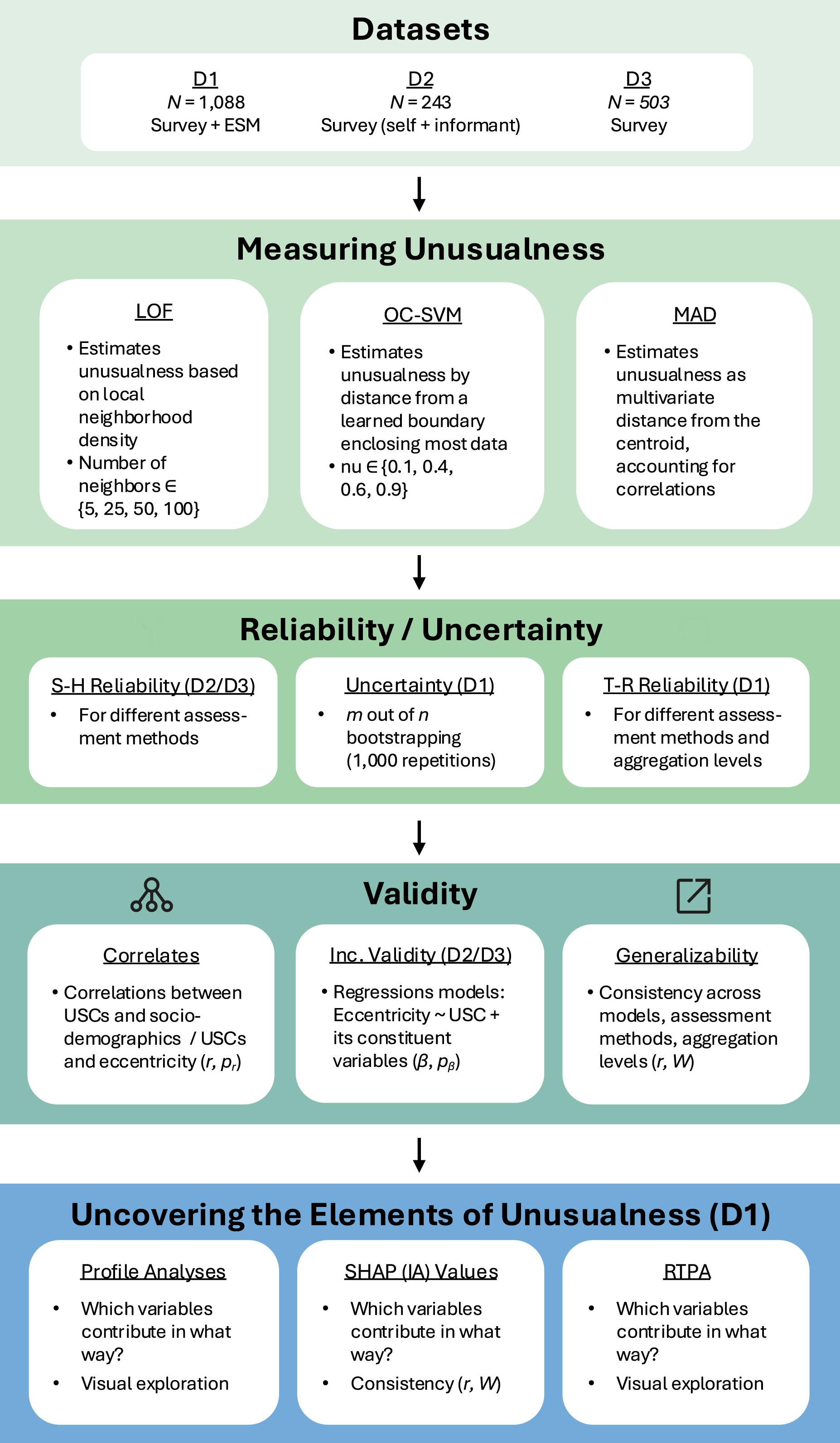

Statistical analysis

The analysis procedure is displayed in Figure 7. All analyses were conducted separately for all datasets, different aggregation levels (i.e., scale scores, nuances), and measurement methods (i.e., survey, ESM; self-, informant ratings), unless explicitly stated otherwise. Survey-based personality variables were averaged, categorical variables were one-hot encoded, person-means were formed for ESM-based personality variables (D1) and the two informant ratings were aggregated (D2). Scale scores were formed and descriptive statistics (mean, standard deviation, intercorrelations) were calculated. Continuous variables were standardized to zero mean and unit variance.

Summary of the analyses in the empirical illustrative examples. Note. LOF: local outlier factor; OC-SVM: one-class support vector machine; MAD: Mahalanobis distance; r: Pearson correlation; $p_r$: p-value of Pearson correlation; β: standardized regression coefficients; $p_\beta$: p-value of standardized regression coefficient; W: Kendall’s W; SHAP: SHapley Additive exPlanations; IA: interaction; RTPA: regression tree path analysis; USC: unusualness score.

Unusualness was repeatedly estimated based on either scale scores or nuances (i.e., individual items) using three methods (MAD, LOF, OC-SVM) across a range of hyperparameter variations for the more complex models (LOF: number of neighbors:

Reliability

Split-half reliability was estimated using an odd–even item split and Spearman–Brown correction, computed at the level of scale scores for the datasets in which each scale score was assessed with at least two indicators (D2 and D3). Test-retest reliability was evaluated in D1 for individuals that participated in both collection waves ($n_{\text{Survey}} = 256$, $n_{\text{ESM}} = 217$; test-retest interval in days: $M_{\text{Survey}} = 52.73$, $SD_{\text{Survey}} = 4.96$, $M_{\text{ESM}} = 53.08$, $SD_{\text{ESM}} = 4.70$). Reliability was computed separately for each analytical configuration (i.e., model and hyperparameters), and the resulting estimates were then averaged to yield an overall reliability score.

Uncertainty estimation

We quantified uncertainty of empirical unusualness rankings using a non-parametric m-out-of-n bootstrap (Bickel & Sakov, 2008; Hall & Miller, 2009) in D1 using scale scores for both ESM and survey data. In each of 1,000 iterations, we drew a bootstrap subsample of size m with replacement from the n observations, fit the estimation method on that subsample, and computed USCs for the full dataset. Scores were converted to ranks, and the resulting rank distribution across iterations summarized individual-level uncertainty. We chose m in an inner loop (20 iterations per candidate m) using the adaptive stability rule of Bickel & Sakov (2008): over a grid of candidates m ∈ {n^0.6, n^0.7, n^0.8, n^0.9}, we computed bootstrap distributions of the rank statistic at adjacent grid points and selected the m that minimized a distance between distributions. For each analytical configuration, we summarized the mean rank and the rank standard deviation across individuals, and examined how uncertainty varied with mean rank.
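The outer bootstrap loop described above can be sketched as follows. This is a simplified illustration rather than the procedure as implemented: m is fixed at n^0.8 instead of being selected by the adaptive stability rule, only 200 iterations are run, the Mahalanobis distance stands in for the estimation method, and all function names and the simulated data are assumptions.

```python
import numpy as np


def fit_mahalanobis(X_fit):
    """Estimate centroid and covariance on X_fit; return a scoring function."""
    mean = X_fit.mean(axis=0)
    inv_cov = np.linalg.pinv(np.cov(X_fit, rowvar=False))

    def score(X):
        centered = X - mean
        return np.sqrt(np.einsum("ij,jk,ik->i", centered, inv_cov, centered))

    return score


def bootstrap_rank_uncertainty(X, exponent=0.8, n_iter=200, seed=0):
    """m-out-of-n bootstrap of unusualness ranks with fixed m = n**exponent."""
    rng = np.random.default_rng(seed)
    n = X.shape[0]
    m = int(round(n ** exponent))
    ranks = np.empty((n_iter, n))
    for b in range(n_iter):
        idx = rng.integers(0, n, size=m)     # subsample with replacement
        scores = fit_mahalanobis(X[idx])(X)  # fit on subsample, score everyone
        ranks[b] = scores.argsort().argsort() + 1  # rank 1 = least unusual
    return ranks.mean(axis=0), ranks.std(axis=0, ddof=1)


# Hypothetical data with one planted unusual profile
rng = np.random.default_rng(2)
X = rng.normal(size=(200, 4))
X[0] = 6.0
mean_rank, sd_rank = bootstrap_rank_uncertainty(X)
```

Plotting `sd_rank` against `mean_rank` gives the kind of view needed to inspect how uncertainty varies along the unusualness distribution.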

Validity

The distribution of USCs was analyzed using histogram plots. Nomological associations were assessed by examining correlations between the USCs and sociodemographic variables (D1) and eccentricity (D2, D3). Cross-method consistency was evaluated across models, assessment methods, and aggregation levels. Consistency across models was evaluated across various analytical configurations (i.e., unusualness estimation methods and their respective hyperparameters) using Kendall’s W (W) and pairwise Pearson correlations (r). Consistency across assessment methods was evaluated by comparing correlations (r) of USCs across different assessment methods (D1: survey, ESM; D2: self-, informant ratings) within analytical configurations. Consistency across aggregation levels was assessed by correlating USCs derived from scale scores with those based on nuances. Incremental validity was assessed by testing whether unusualness accounted for additional variance in eccentricity beyond the personality variables from which it was derived. To this end, we estimated multiple regression models that included both the USC and its constituting variables (e.g., Big Five items) as predictors (D2, D3). In the incremental analyses, all variables were matched in terms of their level of aggregation and method of assessment. For example, informant-rated eccentricity was predicted using informant-rated scale scores and the corresponding USC derived from these scale scores.
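For reference, Kendall’s W for k analytical configurations that each rank the same n individuals is W = 12S / (k²(n³ − n)), where S is the sum of squared deviations of the individuals’ rank sums from their mean. The sketch below implements this textbook form without tie correction, which is an assumption that is reasonable for continuous scores; the function name and the toy input are illustrative only.

```python
import numpy as np


def kendalls_w(scores):
    """Kendall's W for an array of shape (n_configurations, n_persons).

    Assumes no tied scores within a configuration (no tie correction).
    """
    k, n = scores.shape
    ranks = scores.argsort(axis=1).argsort(axis=1) + 1  # ranks within each row
    rank_sums = ranks.sum(axis=0)
    s = ((rank_sums - rank_sums.mean()) ** 2).sum()
    return 12.0 * s / (k ** 2 * (n ** 3 - n))


# Three configurations that order four persons identically
agree = np.array([[1.0, 2.0, 3.0, 4.0],
                  [10.0, 20.0, 30.0, 40.0],
                  [0.1, 0.2, 0.3, 0.4]])
# Two configurations with exactly opposite orderings
oppose = np.array([[1.0, 2.0, 3.0, 4.0],
                   [4.0, 3.0, 2.0, 1.0]])
w_agree = kendalls_w(agree)
w_oppose = kendalls_w(oppose)
```

W is 1 when all configurations produce the same ordering and 0 when the rank sums are identical across individuals, as in the two toy inputs.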

Uncovering the elements of unusualness

We examined the internal structure by visually analyzing the personality profiles of the most unusual individuals. We conducted exploratory clustering analyses using K-Means (Ikotun et al., 2023) on the top 10%, 20%, and 30% most unusual individuals to generate hypotheses about potential systematic patterns in unusual psychological functioning. SHAP values and SHAP interaction values (up to two-way interactions) were computed to identify key contributors to unusualness. Variable attributions were examined across the full dataset, subsets of the most unusual individuals, clustering-derived subgroups, and single individuals. The consistency of these attributions (i.e., the rank-order consistency and score-level agreement of personality variables) across different analytical configurations (i.e., varying models and hyperparameter settings) was assessed using W and r.

K-Means clustering was implemented using the scikit-learn implementation (Pedregosa et al., 2011), with the number of clusters set to two and all other parameters kept at their default settings. SHAP values were computed using the shap package (Lundberg & Lee, 2017), employing the KernelExplainer with a single background sample defined as the feature-wise median. Pairwise SHAP interaction values were estimated using the shapiq library (Fumagalli et al., 2023), specifically with the KernelSHAP-IQ method and the k-Shapley Interaction Index (k-SII; Bordt & von Luxburg, 2023), using a sampling budget of 500 coalition/model evaluations and the median as the single background sample. RTPA was conducted using scikit-learn’s DecisionTreeRegressor (Pedregosa et al., 2011), with the maximum tree depth set to 4 and all other parameters left at their default values.
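The clustering and regression tree steps can be sketched with scikit-learn as follows. This is a minimal sketch under assumptions: the data and the stand-in unusualness score are simulated, and `n_init=10` and `random_state=0` are set here only to make the example reproducible, whereas the text above reports default settings apart from the number of clusters and the tree depth.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.tree import DecisionTreeRegressor, export_text

# Hypothetical standardized personality variables and a stand-in USC
rng = np.random.default_rng(0)
Z = rng.normal(size=(500, 4))
usc = np.sqrt((Z ** 2).sum(axis=1))  # Euclidean norm as a placeholder score

# Exploratory two-cluster solution among the 10% most unusual individuals
top = usc >= np.quantile(usc, 0.90)
clusters = KMeans(n_clusters=2, n_init=10, random_state=0).fit_predict(Z[top])

# Regression tree (maximum depth 4) predicting the USC from the variables;
# each root-to-leaf path is a readable rule for how a score arises
tree = DecisionTreeRegressor(max_depth=4, random_state=0).fit(Z, usc)
rules = export_text(tree, feature_names=[f"v{i}" for i in range(4)])
```

Printing `rules` lists the split thresholds along each path, and `tree.decision_path(Z[[i]])` returns the nodes traversed by person `i`.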

Results

Reliability

Unusualness showed strong test-retest reliability in the survey data (scale scores: r = .81, SD r = .07; nuances: r = .80, SD r = .09), but lower reliability in the ESM data (scale scores: r = .49, SD r = .07; nuances: r = .53, SD r = .07). In D2, split-half reliability was moderate for both self- and informant-rated survey data (self-rated: r_SB = .64, SD r = .04; informant-rated: r_SB = .72, SD r = .09). In D3, split-half reliability was moderate to high for survey data (scale scores: r_SB = .59, SD r = .10; nuances: r_SB = .78, SD r = .08).

Uncertainty estimation

Uncertainty analyses revealed three key findings. First, bootstrap rank uncertainty was modest, indicating that the models captured unusualness with reasonable consistency. Second, uncertainty varied across analytical configurations. Across models, OC-SVM yielded the lowest average rank uncertainty (survey: SD Rank = 4.25, ESM: SD Rank = 6.45), while LOF and MAD showed similar and moderately higher average variability (MAD: survey: SD Rank = 30.68, ESM: SD Rank = 21.83; LOF: survey: SD Rank = 26.02, ESM: SD Rank = 38.85). For LOF, uncertainty was strongly influenced by the hyperparameter k, with smaller neighborhoods producing more variability and larger k values reducing it (see Supplement 3.10). Third, uncertainty varied systematically with the USC itself: it was lowest at the extremes (that is, for the most and least unusual individuals) and highest in the middle of the distribution, where near-ties are more common.

Validity

Distribution of unusualness scores

Histogram plots revealed that across datasets, models, and aggregation levels, USCs were unimodally distributed, consistent with a dimensional conceptualization of unusualness (see Supplement 3.11).

Nomological correlates

In D1, sociodemographic correlates of USCs derived from ESM and survey data based on scale scores and personality nuances are presented in Supplement 3.5. While associations within assessment methods and aggregation levels were relatively stable, cross-assessment correlations exhibited greater variability. Nevertheless, several variables consistently showed strong associations with USCs across both methods: being unemployed, right-leaning political orientation, and lower educational status were all linked to higher levels of unusualness. In D2, unusualness estimated from informant ratings showed small correlations with both self- and informant-rated eccentricity at the level of scale scores (eccentricity self-rated: r = .11, SD r = .02; eccentricity informant-rated: r = .12, SD r = .02) and at the nuance level (eccentricity self-rated: r = .12, SD r = .03; eccentricity informant-rated: r = .11, SD r = .02). When unusualness was estimated from self-ratings, associations with self-rated eccentricity were small to moderate, while no meaningful associations were found with informant-rated eccentricity, at either the scale score (eccentricity self-rated: r = .13, SD r = .02; eccentricity informant-rated: r = .02, SD r = .02) or nuance level (eccentricity self-rated: r = .20, SD r = .03; eccentricity informant-rated: r = .06, SD r = .01). In D3, unusualness and eccentricity were moderately correlated on average (scale scores: r = .23, SD r = .06; nuances: r = .22, SD r = .05).

Generalizability

Consistency of USCs across models was high in all analyses. For D1, consistency was high for scale scores (W = .88 survey; W = .86 ESM) and slightly lower for nuances (W = .87 survey; W = .82 ESM). In D2, both scale scores (survey self-rated: W = .89; survey informant-rated: W = .90) and nuances (survey self-rated: W = .90; survey informant-rated: W = .88) showed similarly high consistency. Finally, in D3, consistency remained high for scale scores (W = .91) and nuances (W = .93). Pairwise correlations for each analytical configuration are displayed in Supplement 3.6.

Consistency across USCs estimated via survey and ESM-based assessments was small to moderate and significant (scale scores: r = .13, SD r = .04, t = 10.65, p < .001; nuances: r = .17, SD r = .03, t = 14.03, p < .001). Consistency across USCs estimated via self- and informant-rated survey assessments was moderate and significant (scale scores: r = .19, SD r = .03, t = 17.05, p < .001; nuances: r = .16, SD r = .03, t = 14.98, p < .001). Consistency across USCs estimated via scale scores and nuances was high in D1 (survey: r = .90, SD r = .06; ESM: r = .89, SD r = .07) and somewhat lower in D2 (survey self-rated: r = .75, SD r = .10; survey informant-rated: r = .83, SD r = .11), and D3 (survey: r = .78, SD r = .08).

Incremental validity

Incremental analyses indicated that unusualness accounts for variance in eccentricity beyond its constituting variables (see Supplement 3.14 for detailed results). In D3, 13 out of 18 joint model tests were significant, with average standardized coefficients of .09 for scale scores (8 significant) and .07 for nuances (5 significant). In D2, fewer tests reached significance (3 out of 36), yet standardized coefficients were consistently positive and similar in magnitude for unusualness derived from scale scores (self-rated: β = .07, informant-rated: β = .05) and nuances (self-rated: β = .09, 1 significant; informant-rated: β = .08, 2 significant).

Uncovering the elements of unusualness

Profile analysis

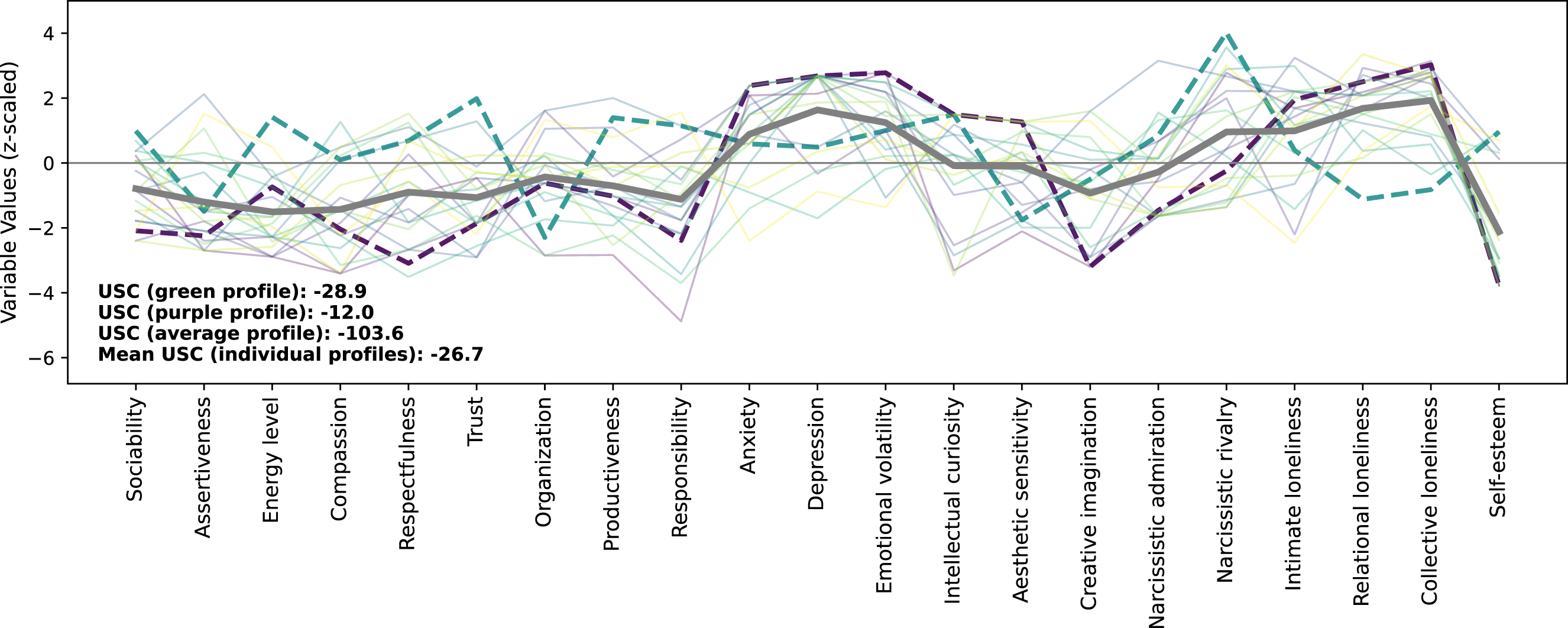

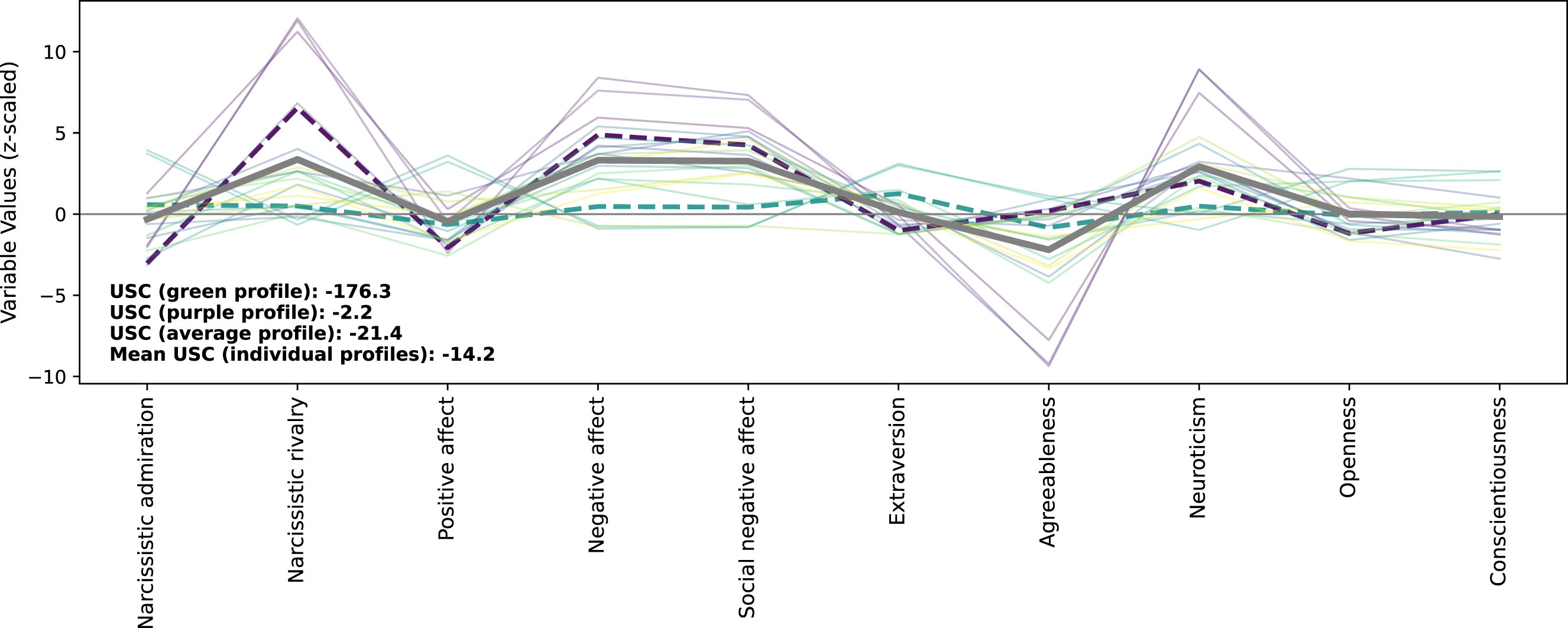

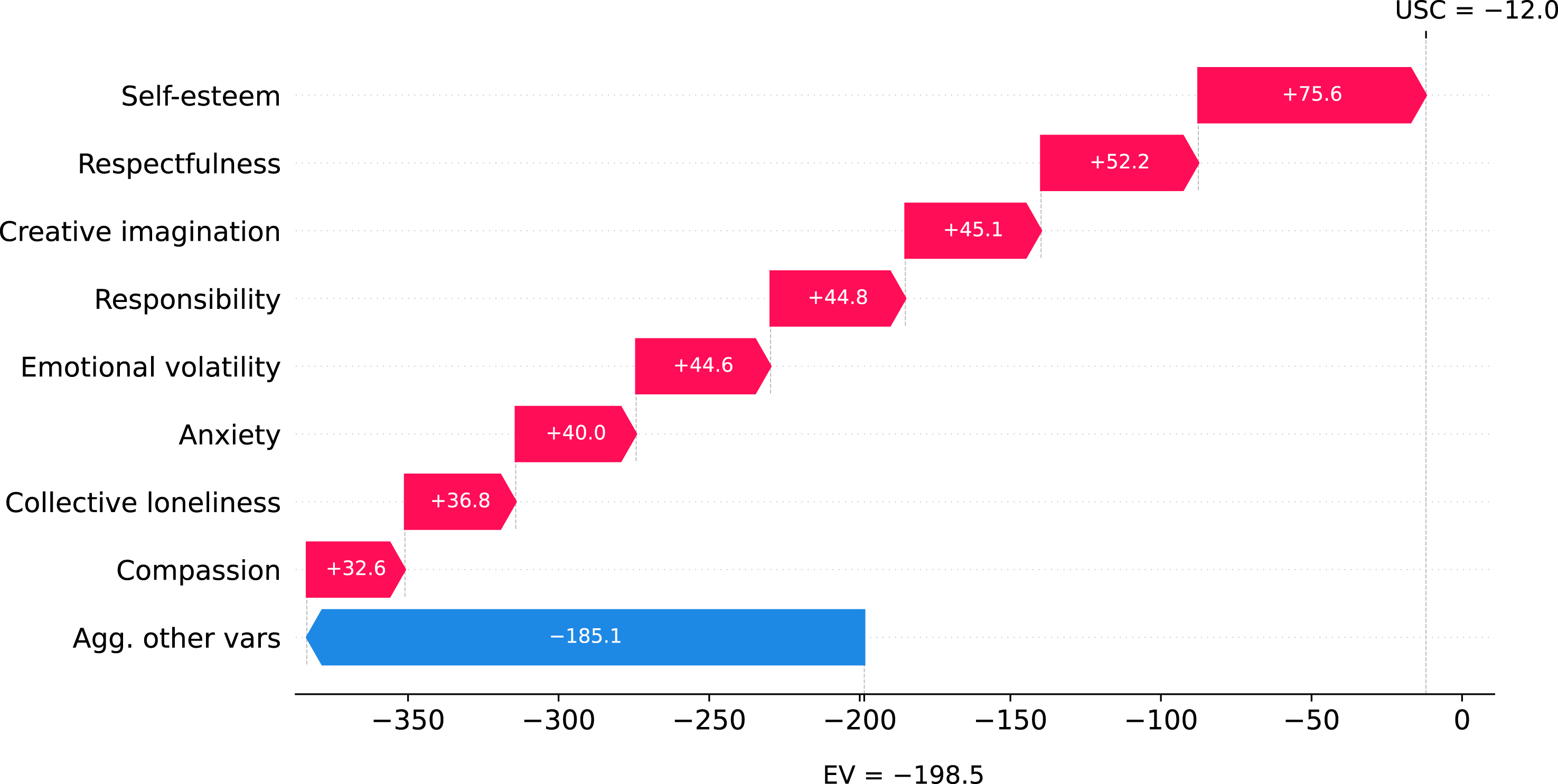

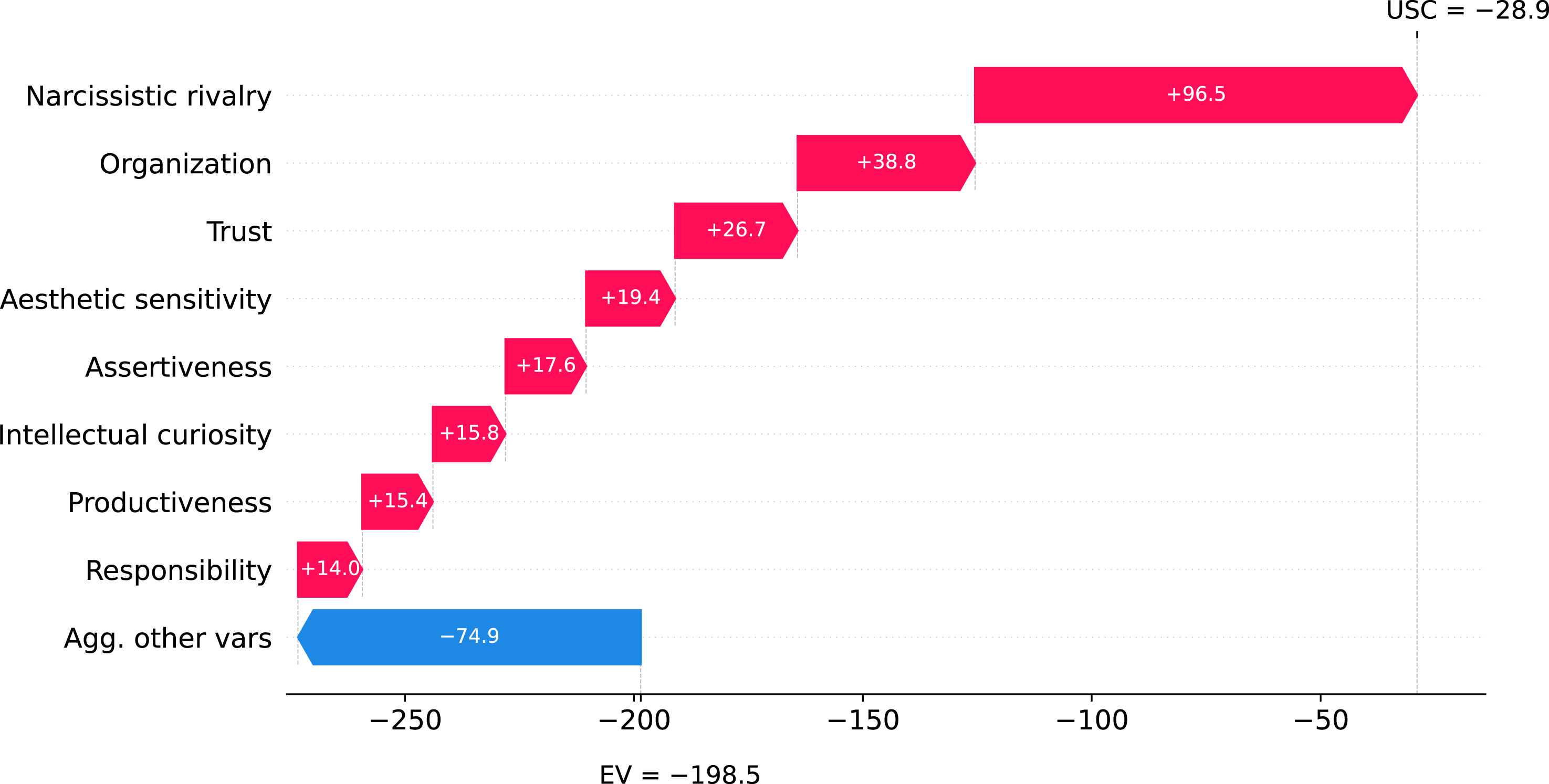

We visualized the personality profiles of the 2% most unusual individuals, identified using the OC-SVM (nu = 0.6) applied to both survey (Figure 8) and ESM (Figure 9) data (for other analytical configurations and proportions of individuals, see Supplement 3.3). In both figures, we highlight two individual profiles for illustration purposes: an individual whose unusualness is primarily characterized by unusual values and is elevated across both assessment methods (purple dashed line, Example A), and one who exhibits both unusual values and unusual combinations in the survey-based assessment, but whose unusualness is not elevated in the ESM-based assessment (Example B, green dashed line; not among the 2% most unusual individuals in the ESM data). Across both assessment methods, the average profile shape reflects a generally undesirable configuration of personality variables. On average, individual profiles are more unusual than the aggregated profile. Figures 8 and 9 indicate the presence of both unusual values (extreme scores on the y-axis) and unusual combinations (atypical profile shapes that deviate from common co-occurrence patterns among personality variables, such as the high within-domain variability in some Big Five traits). This supports the notion that aggregating personality variables into broader domains may obscure meaningful variation in these atypical profiles.

The clustering analyses revealed a hypothesis-stimulating two-group solution across various analytical configurations with distinct personality profiles: one cluster characterized by more socially desirable profiles and unusual combinations, the other by more maladaptive profiles and unusual values. Full results are provided in Supplement 3.4.

Personality profiles for the most unusual individuals based on survey data. Note. This figure shows the personality profiles of the top 2% of individuals with the highest unusualness scores, identified by applying the one-class support vector machine (nu = 0.6) to the survey data. The y-axis shows z-standardized variable values, where zero represents the sample mean. Each thin line represents an individual profile, the thick gray line represents the average profile of these unusual individuals, and the thick dashed lines represent the profiles of two illustrative individuals (Example A: purple dashed line; Example B: green dashed line).

Personality profiles for the most unusual individuals based on experience sampling data. Note. This figure shows the personality profiles of the top 2% of individuals with the highest unusualness scores, identified by applying the one-class support vector machine (nu = 0.6) to the experience sampling data. The y-axis shows z-standardized variable values, where zero represents the sample mean. Each thin line represents an individual profile, the thick gray line represents the average profile of these unusual individuals, and the thick dashed lines represent the profiles of two illustrative individuals (Example A: purple dashed line; Example B: green dashed line, not among the 2% most unusual individuals in the ESM data).

SHapley Additive exPlanations values and interaction values

SHAP values showed a high consistency in the order of variable importances across analytical configurations for survey data (W = .80, SD W = .07) and ESM data (W = .75, SD W = .08). SHAP interaction values showed a lower degree of consistency for survey data (W = .27, SD W = .02) and a medium degree for ESM data (W = .56, SD W = .09). This discrepancy is likely due to the larger number of variables in the survey data condition (231, representing all main effects and two-way interactions), with many of these variables contributing minimally or not at all to unusualness.

Across analytical configurations, SHAP value analyses revealed systematic patterns in how specific personality variables contributed to unusualness. As expected, extreme values on single variables consistently emerged as strong contributors, supporting the view that unusual values contribute substantially to unusualness. Moreover, the pattern of contributing variables varied across assessment methods and clusters, implying that the underlying structure of unusualness may reflect different psychological mechanisms among the most unusual individuals. Full results, including beeswarm plots for all configurations, are provided in Supplement 3.7. Analysis of SHAP interaction values revealed that unusual values had a stronger influence on USCs than unusual combinations, based on the current analysis restricted to two-way interactions (Supplement 3.8). This pattern may partly reflect the conceptual overlap between unusual values and unusual combinations and the difficulty SHAP methods have in adequately attributing unusual combinations in high-dimensional settings.
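To make the attribution logic concrete, the sketch below computes exact Shapley values for a single person by enumerating all variable coalitions against a single background profile. It is an illustration under stated assumptions and not the KernelExplainer or shapiq estimators used in the analyses, which approximate these quantities by sampling: the scoring function (a squared Mahalanobis distance with a hypothetical covariance matrix), the example profile, and the all-zero background are invented for the example, and exact enumeration is only feasible for a handful of variables.

```python
import itertools
import math

import numpy as np


def exact_shapley(score_fn, x, background):
    """Exact Shapley values of score_fn at x relative to one background profile."""
    p = len(x)
    phi = np.zeros(p)
    for j in range(p):
        others = [i for i in range(p) if i != j]
        for size in range(p):
            weight = (math.factorial(size) * math.factorial(p - size - 1)
                      / math.factorial(p))
            for subset in itertools.combinations(others, size):
                idx = np.array(subset, dtype=int)
                z = background.copy()
                z[idx] = x[idx]          # coalition takes the person's values
                without_j = score_fn(z)
                z[j] = x[j]              # add variable j to the coalition
                phi[j] += weight * (score_fn(z) - without_j)
    return phi


# Hypothetical covariance: variables 0 and 1 correlate .8, variable 2 is independent
cov = np.array([[1.0, 0.8, 0.0],
                [0.8, 1.0, 0.0],
                [0.0, 0.0, 1.0]])
inv_cov = np.linalg.inv(cov)


def sq_mahalanobis(z):
    return float(z @ inv_cov @ z)


x = np.array([2.0, -2.0, 0.0])  # high on one, low on the other correlated variable
background = np.zeros(3)        # stand-in for the feature-wise median
phi = exact_shapley(sq_mahalanobis, x, background)
```

In this toy case the unusual combination of variables 0 and 1 is split evenly between their two main-effect attributions, which illustrates why separating unusual values from unusual combinations requires interaction values rather than SHAP values alone.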