Abstract

Inferring causation from correlation can lead to erroneous explanations of violent behavior and the development and implementation of ineffective or even harmful interventions and policies. This article explores the inferences that violence researchers draw from evidence related to violent offending. We invited authors of articles published in violence journals to complete an online survey in which they were asked to identify a factor that may be a cause of violence, cite a study that demonstrates the factor is associated with violence, and provide their inferences from that study. We read each study and coded its research design (description of a sample [n = 9], cross-sectional/retrospective non-experiment [n = 18], single-wave longitudinal non-experiment [n = 10], multi-wave longitudinal non-experiment [n = 0], or randomized experiment [n = 5]) and the appropriate inferences (inter-rater reliability was adequate; κ = 0.73–1.00). Reassuringly, participants (N = 42; 57.1% in the United States; 59.5% women) rarely indicated that their identified study demonstrated that their factor was a cause of violence (0.0%–16.7%) when the study was not a randomized experiment. However, many participants failed to acknowledge any plausible alternate interpretations (e.g., reverse causality, third variable) of the results from non-experimental studies (50.0%–88.9%). Moreover, most participants incorrectly selected a causal implication as following from the results of non-experimental studies (77.8%–100%). Our results suggest that even among authors of articles published in peer-reviewed scientific journals on violence, many appear to infer causation from correlation.

Inferring causation from correlation can lead to erroneous explanations of behavior and the development and implementation of ineffective or even harmful interventions and policies (e.g., Harris & Rice, 2015; McCord, 2003; Petrosino et al., 2003; Seto, 2018). Correlation usually does not demonstrate causation because the research design leaves the results open to alternate interpretations (e.g., Shadish et al., 2002). Internal validity refers to the confidence with which causal relations between variables can be inferred from the observed associations found between the variables in a particular study (Shadish et al., 2002). Research design is key for internal validity. Randomized experiments involve manipulating an independent variable (e.g., attitudes toward violence) by randomly assigning participants to its different levels to test the extent to which it affects a dependent variable (e.g., violent behavior). If a difference is found on the dependent variable between the levels of the independent variable, then this permits a high degree of confidence that the observed difference on the dependent variable was caused by the independent variable. This is because randomly assigning participants to different levels of the independent variable reduces the number and plausibility of alternate interpretations of the observed results. For example, it is unlikely that pre-existing individual differences would be confounded with the group to which participants were randomly assigned. Thus, it is more likely that the manipulation of the independent variable caused the observed difference on the dependent variable than it is that the dependent variable caused participants’ assignment to a level of the independent variable (reverse causality) or that the association between the variables is accounted for by some third variable that causally affected both the independent and dependent variables.
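The role of random assignment can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not part of the study: the hypothetical `trait` stands in for any pre-existing individual difference that raises violent behavior, and by default the independent variable has no true effect at all (`true_effect=0.0`). When participants self-select into a level of the independent variable on the basis of the trait, a sizeable group difference appears anyway; when the level is assigned by a coin flip, the difference shrinks toward zero.

```python
import random
from statistics import mean


def group_difference(n=20000, true_effect=0.0, randomize=True, seed=1):
    """Mean outcome difference between the two levels of an independent variable.

    Hypothetical data-generating model (an assumption for illustration):
    outcome = true_effect * level + trait + noise, where `trait` is a
    pre-existing individual difference that also raises the outcome.
    """
    rng = random.Random(seed)
    level_1, level_0 = [], []
    for _ in range(n):
        trait = rng.gauss(0, 1)
        if randomize:
            exposed = rng.random() < 0.5  # coin flip: independent of the trait
        else:
            exposed = trait > 0  # self-selection: level is confounded with the trait
        outcome = true_effect * exposed + trait + rng.gauss(0, 1)
        (level_1 if exposed else level_0).append(outcome)
    return mean(level_1) - mean(level_0)


# With no true effect, self-selection still yields a difference of about 1.6
# in expectation, whereas random assignment yields a difference near zero.
print(round(group_difference(randomize=False), 2))
print(round(group_difference(randomize=True), 2))
```

Under random assignment, adding a real effect (e.g., `true_effect=0.5`) recovers a difference close to 0.5; under self-selection the same effect would be inflated by the trait, which is exactly the confounding that random assignment is meant to remove.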

In contrast, non-experimental designs permit less confidence about the extent to which the results reflect the causal effect of one variable on another because the results may also reflect reverse causality or third variables. For example, cross-sectional/retrospective non-experimental designs involve examining the association between an independent variable and a dependent variable, with the dependent variable measured at the same time as or before the independent variable. This research design provides ambiguous evidence because an observed association could be found even if the independent variable had no causal effect on the dependent variable. That is, an association could occur because the independent variable caused the dependent variable, but it could instead occur because the dependent variable caused the independent variable or because some third variable caused both the independent and dependent variables. Longitudinal non-experimental designs, in which the independent variable is measured before the dependent variable, allow one to rule out the reverse causality alternate interpretation but still leave open the third variable alternate interpretation. Thus, randomized experiments can demonstrate a causal effect of one variable on another, longitudinal non-experiments can demonstrate that one variable predicts another, and cross-sectional/retrospective non-experiments can demonstrate only that one variable is associated with another.
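The ambiguity of a cross-sectional association can be made concrete with a sketch that generates data under three hypothetical causal structures: the factor causes violence, violence causes the factor (reverse causality), or a third variable causes both while the factor has no effect on violence. The model names and coefficients are assumptions chosen for illustration. All three structures produce a clearly positive correlation (about .45, .45, and .20 in expectation), so the correlation alone cannot tell them apart. Measuring the factor before violence, as in a longitudinal design, would rule out the reverse-causality structure but not the third-variable one.

```python
import random
from statistics import mean, pstdev


def pearson_r(xs, ys):
    """Pearson correlation computed from population moments."""
    mx, my = mean(xs), mean(ys)
    cov = mean((x - mx) * (y - my) for x, y in zip(xs, ys))
    return cov / (pstdev(xs) * pstdev(ys))


def simulate(model, n=5000, seed=7):
    """Draw hypothetical (factor, violence) scores under one causal structure."""
    rng = random.Random(seed)
    xs, ys = [], []
    for _ in range(n):
        if model == "factor_causes_violence":
            x = rng.gauss(0, 1)
            y = 0.5 * x + rng.gauss(0, 1)
        elif model == "violence_causes_factor":  # reverse causality
            y = rng.gauss(0, 1)
            x = 0.5 * y + rng.gauss(0, 1)
        elif model == "third_variable":  # z causes both; x has no effect on y
            z = rng.gauss(0, 1)
            x = 0.5 * z + rng.gauss(0, 1)
            y = 0.5 * z + rng.gauss(0, 1)
        else:
            raise ValueError(f"unknown model: {model}")
        xs.append(x)
        ys.append(y)
    return xs, ys


for model in ("factor_causes_violence", "violence_causes_factor", "third_variable"):
    r = pearson_r(*simulate(model))
    print(f"{model}: r = {r:.2f}")
```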

Unfortunately, drawing causal conclusions from correlation is a very common critical thinking error among the general public, university students, and practitioners (e.g., Bleske-Rechek et al., 2015; Harris & Rice, 2015; Motz et al., 2023; Mueller & Coon, 2013; Nunes & Hatton, 2024; Seifert et al., 2022; Sibulkin & Butler, 2019). For example, Bleske-Rechek et al. (2015) found that participants from the general public were equally likely to endorse a causal inference from a correlational design as from a randomized experiment. Sibulkin and Butler (2019) found that psychology undergraduate students had difficulty identifying plausible “reverse causality” interpretations of correlational evidence (e.g., a correlation between exercise and happiness could reflect a causal effect of happiness on exercise instead of the more intuitive causal effect of exercise on happiness). Students correctly identified the reverse causality interpretation for only 45% of the various examples of correlations.

Researchers also often make unfounded causal interpretations and recommendations based on ambiguous correlational evidence (e.g., Harris & Rice, 2015; Nunes et al., 2019). For example, Nunes et al. (2019) surveyed 28 authors of articles in journals in the area of sexual offending and asked them to identify a factor that may be a cause of sexual offending, cite a study that demonstrates the factor is associated with sexual offending, and report their inferences from that study. Nunes et al. read each study and coded its research design and the appropriate inferences. Though participants rarely indicated incorrectly that the study demonstrated their factor was a cause of sexual offending when the study they identified was not a randomized experiment, they frequently failed to acknowledge alternate interpretations of the evidence and frequently overstepped the evidence with their selection of implications. More specifically, for non-experimental studies, the majority selected the interpretation that their factor influenced sexual offending, but very few selected the correct alternate interpretations (sexual offending influenced this factor; something else influenced both this factor and sexual offending; or this factor had no influence on sexual offending). Participants were also very likely to incorrectly endorse causal implications as following from non-experimental studies. For example, the majority selected “This is an important factor to target in treatment programs aimed at reducing the likelihood of sexual reoffending.” Participants who had identified a cross-sectional non-experimental study also often incorrectly selected predictive implications. For example, 50% incorrectly selected “This is an important factor to consider when estimating risk for sexual reoffending.”

In this article, we followed the approach of Nunes et al. (2019) to explore inferences made by researchers, but we focused on the broader area of violence, which includes more researchers, more studies, and more randomized experiments. We examined the extent to which authors of research articles published in scientific journals on violence draw conclusions about prediction or causation from research designs that do not support such inferences.

Methods

Participants

We sent invitations to complete our online survey by email to the corresponding authors of articles published in the following aggression and violence journals: Aggression and Violent Behavior; Aggressive Behavior; Journal of Aggression, Maltreatment, and Trauma; Journal of Interpersonal Violence; Psychology of Violence; Trauma, Violence, and Abuse; Violence and Victims; and Journal of Aggression, Conflict and Peace Research. Forty-two participants met our inclusion criteria (described below in the “Materials and Procedure” section). Most participants were women (59.5%; 40.5% men), median age was 36 to 40 years, and most lived in the United States (57.1%). Most participants (92.8%) reported that they conducted research on violent offenders (n = 33) or similar topics, such as bullying and aggression (n = 4) or victims of violence (n = 1). The median amount of time typically spent on research was 11 to 20 days per month. The median amount of time they had been conducting research was 6 to 10 years. The majority reported being employed as researchers (59.5%, n = 25) and as professors (52.4%, n = 22); these categories were not mutually exclusive. Most reported working at a university/college (78.6%, n = 33). Most (85.7%, n = 36) reported that they were currently conducting or had conducted a research study involving statistical analyses for a graduate degree. The median number of quantitative research studies published in peer-reviewed journals was 8 as first author and 6 as second or later author. More detailed demographic information is provided in Supplemental Table S1.

Materials and Procedure

People interested in participating clicked on a link in our invitation email, which directed them to the online survey, where they were presented with a consent form. Those who consented were then presented questions about their demographic characteristics, education, employment, and research activity. Participants were then asked to identify a factor that is a cause of violent offending, provide a reference to a study that shows an association between that factor and violent offending, and select interpretations and implications of the results of that study (see the Supplemental Material for the full set of instructions and questions presented to participants).

We read each of the studies identified by participants and coded the research design for the results relevant to the association between the factor the participant identified and violence. The research designs and their definitions from our coding form are presented below (the full coding form is provided in the Supplemental Material):

• Description of a violent case or sample (e.g., 75% of violent offenders abused alcohol), but no relevant comparison group and no association examined between relevant variables

• Cross-sectional/retrospective non-experimental; that is, the study examined the association with the factor measured at the same time as or after violent behavior

• Single-wave longitudinal non-experimental; that is, the study examined the association with the factor (at only one time point) measured before violent behavior

• Multi-wave longitudinal non-experimental; that is, the study examined the association between

• Randomized experiment; that is, the study examined the association with the factor manipulated through random assignment of participants to its levels

We excluded participants from the analyses if they formally withdrew from the survey at any point, did not correctly answer all three attention check questions, did not answer any of the inference questions, or did not provide a reference for an identifiable and recoverable empirical study or meta-analysis published in a journal that provided original empirical results regarding the identified factor and the perpetration of violent behavior using one of the five research designs specified above. We limited our focus to published journal articles because the relevant methodology and results were often less clear in other sources (e.g., books and reports). We also excluded participants who cited more than one study because it was not clear which study informed their inferences.

Some participants referenced meta-analyses, which we dealt with differently depending on the specificity of the results presented in the article (Item 5 in the coding form in the Supplemental Material). For meta-analyses that reported average effect sizes by research design, we classified the research design based on the strongest evidence that could be extracted. For example, if the average effect size was reported separately for randomized experimental studies and non-experimental studies, we would classify it as a randomized experiment. However, if average effect sizes were not reported separately by research design, then we classified the research design based on the weakest research design included in the average effect size. For example, if only one average effect size was reported from a mix of cross-sectional non-experiments, longitudinal non-experiments, and randomized experiments, then we classified it as a cross-sectional non-experimental design. Finally, if there was not enough information in the meta-analysis article to determine the research design(s) included in the average effect size, then we excluded that participant from the analyses.
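The meta-analysis rule above amounts to a small decision procedure. A minimal Python sketch follows, assuming (as our simplification) that each meta-analysis can be summarized by the list of designs contributing to its average effect size(s) and a flag for whether averages were reported separately by design. The function and label names are hypothetical, and the relative ranking of the two longitudinal designs is our assumption.

```python
# Hypothetical ordering from weakest to strongest internal validity. The study
# names these five designs; ranking multi-wave above single-wave longitudinal
# is our assumption and does not affect the examples below.
DESIGNS_WEAK_TO_STRONG = [
    "case/sample description",
    "cross-sectional/retrospective",
    "single-wave longitudinal",
    "multi-wave longitudinal",
    "randomized experiment",
]


def classify_meta_analysis(designs_included, effect_sizes_by_design):
    """Code a meta-analysis's research design.

    designs_included: designs contributing to the reported average effect size(s).
    effect_sizes_by_design: True if average effect sizes are reported
        separately by design, False if only a pooled average is reported.
    Returns the coded design, or None (participant excluded) when the designs
    cannot be determined from the article.
    """
    if not designs_included:
        return None
    strength = DESIGNS_WEAK_TO_STRONG.index
    if effect_sizes_by_design:
        return max(designs_included, key=strength)  # strongest extractable evidence
    return min(designs_included, key=strength)  # pooled: weakest design included


print(classify_meta_analysis(
    ["randomized experiment", "cross-sectional/retrospective"],
    effect_sizes_by_design=True))
# -> randomized experiment
print(classify_meta_analysis(
    ["cross-sectional/retrospective", "single-wave longitudinal", "randomized experiment"],
    effect_sizes_by_design=False))
# -> cross-sectional/retrospective
```

The two calls mirror the two examples in the text: separately reported averages let the randomized experiments speak for themselves, whereas a single pooled average can be no stronger than its weakest contributing design.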

Forty-three participants were not included in the analyses because they referenced studies that did not have original or relevant empirical results (n = 17, 39.5%) or that were not published journal articles (e.g., a book, book chapter, or government document; n = 17, 39.5%), or because they provided multiple (n = 3, 7.0%) or unidentifiable references (n = 5, 11.6%). Of the 42 participants included in the main analyses, 9 participants (21.4%) referenced studies that were descriptions of a case or sample, but with no relevant comparison group and no association examined between relevant variables. Eighteen participants (42.9%) referenced studies that were cross-sectional/retrospective non-experimental designs. Ten participants (23.8%) referenced studies that were single-wave longitudinal non-experimental designs. No participants referenced studies that were multi-wave longitudinal non-experimental designs. Finally, five participants (11.9%) referenced studies that were randomized experiments. Inter-rater reliability for the research design of the studies was excellent (κ = 1.00, 100% agreement) for 30 randomly selected studies that were coded by both the first and second authors.

Given the possibility that some of the studies and participants’ interpretations of those studies may not fit neatly within our coding, questions, or presumed correct responses, we also coded the referenced studies on whether there was some aspect (e.g., nature of the factor listed, inclusion of control variables) that would make it seem reasonable, or at least understandable, if a participant drew stronger inferences than the research design would typically allow (Item 12 in the coding form in the Supplemental Material). Inter-rater reliability was good (κ = .73, 86.6% agreement) for this item. Relatedly, we also coded whether there were ambiguities (e.g., unclear factor or reference listed) that made us uncertain if this was a fair test of each participant’s inferences (Item 13 in the coding form in the Supplemental Material); inter-rater reliability was excellent (κ = 1.00, 100% agreement). The factors and studies identified by participants and our coding of the studies’ research designs are shown in Supplemental Table S2.
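Cohen’s κ corrects raw percent agreement for the agreement expected by chance, κ = (p_o - p_e) / (1 - p_e), where p_o is observed agreement and p_e is the agreement expected from each rater’s marginal category frequencies. This is why the κ of .73 for Item 12 is lower than its 86.6% raw agreement. The sketch below computes κ for two raters; the ratings in the example are made up for illustration and are not the study’s data.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters' categorical codes of the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equal-length, non-empty lists of codes")
    n = len(rater_a)
    # Observed agreement: proportion of items coded identically.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal proportions, summed over categories.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / n**2
    if p_expected == 1.0:
        # Both raters used one identical category throughout; kappa is
        # undefined, so report perfect agreement by convention.
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)


a = ["yes", "yes", "no", "no"]
b = ["yes", "no", "no", "no"]
print(cohens_kappa(a, b))  # p_o = 0.75, p_e = 0.5, so kappa = 0.5
```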

Results

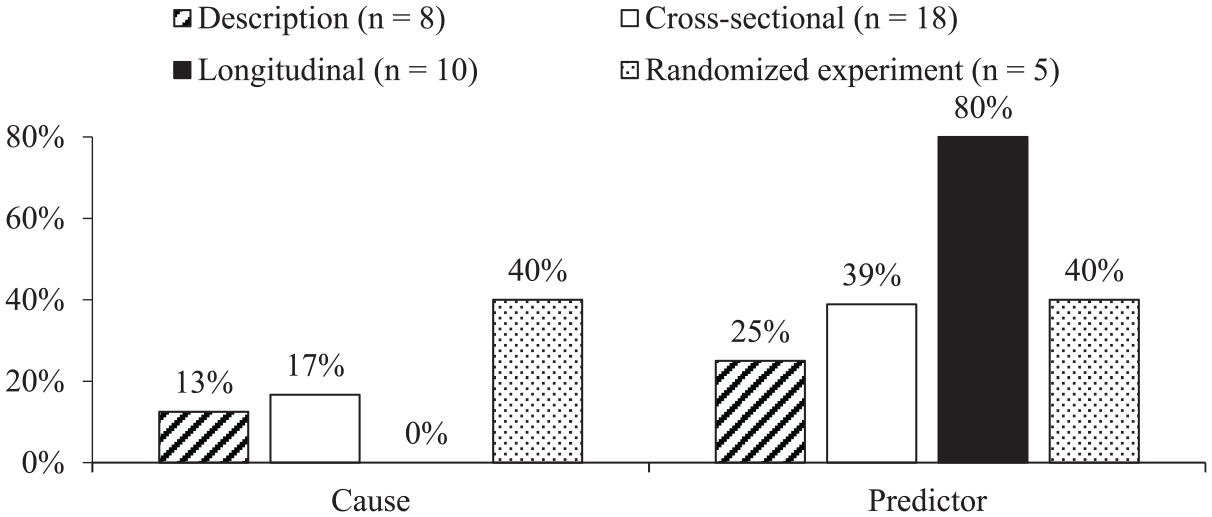

As shown in Figure 1, very few participants (0.0%–16.7%) said that the study demonstrated a causal effect for research designs that do not support such causal inferences. In contrast, 40.0% of the participants who referenced a randomized experiment correctly said that it demonstrated a causal effect. For inferences about prediction, more participants overstepped their study’s research design (25.0%–38.9%), but the pattern was generally consistent with causal inferences, such that more participants correctly said that the study demonstrated prediction for longitudinal designs (80.0%).

What does the study demonstrate?

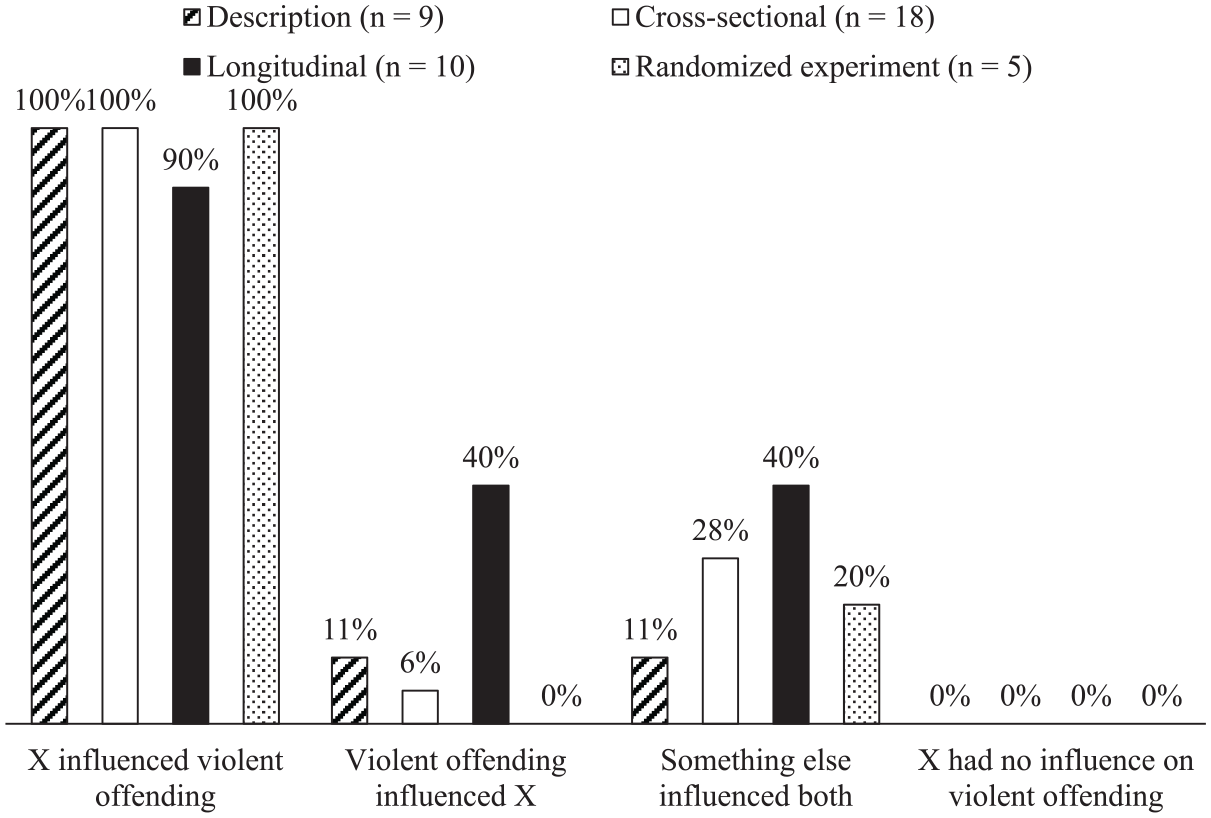

In contrast, when asked to identify plausible interpretations of the results, most participants selected only the intuitive causal interpretation regardless of whether their study’s research design ruled out or cast doubt on alternate interpretations. As shown in Figure 2, almost all participants selected the causal interpretation (90.0%–100%), but far fewer selected the reverse-causality interpretation when it was applicable (5.6% for the cross-sectional/retrospective designs), the third-variable interpretation when it was applicable (27.8% for the cross-sectional/retrospective designs and 40.0% for the single-wave longitudinal designs), or that there was no causal influence of their factor on violent offending when it was applicable (0.0% for both the cross-sectional/retrospective and single-wave longitudinal designs). We next distilled these responses into a dichotomous classification of whether participants who selected the causal interpretation also selected any of the alternate interpretations that were plausible for their particular study’s research design. For participants who referenced a case/sample description design, 88.9% selected only the causal interpretation and none of the alternate interpretations. For participants who referenced a cross-sectional/retrospective design, 66.7% selected only the causal interpretation and none of the plausible alternate interpretations. For participants who referenced a single-wave longitudinal design, 50.0% selected only the causal interpretation and none of the plausible alternate interpretations.

What are the plausible interpretations of the study?
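The dichotomous classification of interpretations can be sketched as a scoring rule. This is a minimal illustration, assuming hypothetical labels for the response options and treating as plausible the alternates that the introduction’s logic leaves open for each non-experimental design (longitudinal designs rule out reverse causality but not third variables). Randomized experiments are omitted because the design rules these alternates out. The responses in the example are made up.

```python
from collections import Counter

# Alternate interpretations treated as plausible for each non-experimental
# design (hypothetical labels).
PLAUSIBLE_ALTERNATES = {
    "case/sample description": {"reverse_causality", "third_variable", "no_effect"},
    "cross-sectional/retrospective": {"reverse_causality", "third_variable", "no_effect"},
    "single-wave longitudinal": {"third_variable", "no_effect"},
}


def causal_only(design, selected):
    """True if the causal interpretation was selected but no plausible alternate was."""
    return "causal" in selected and not (PLAUSIBLE_ALTERNATES[design] & selected)


def percent_causal_only(responses):
    """Percentage of participants, per design, whose selections are causal-only."""
    totals, flagged = Counter(), Counter()
    for design, selected in responses:
        totals[design] += 1
        flagged[design] += causal_only(design, selected)
    return {design: 100 * flagged[design] / totals[design] for design in totals}


# Made-up responses for illustration only.
example = [
    ("cross-sectional/retrospective", {"causal"}),
    ("cross-sectional/retrospective", {"causal", "third_variable"}),
    # Reverse causality is not a plausible alternate for a longitudinal design,
    # so selecting it does not count as acknowledging an alternate.
    ("single-wave longitudinal", {"causal", "reverse_causality"}),
]
print(percent_causal_only(example))
# -> {'cross-sectional/retrospective': 50.0, 'single-wave longitudinal': 100.0}
```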

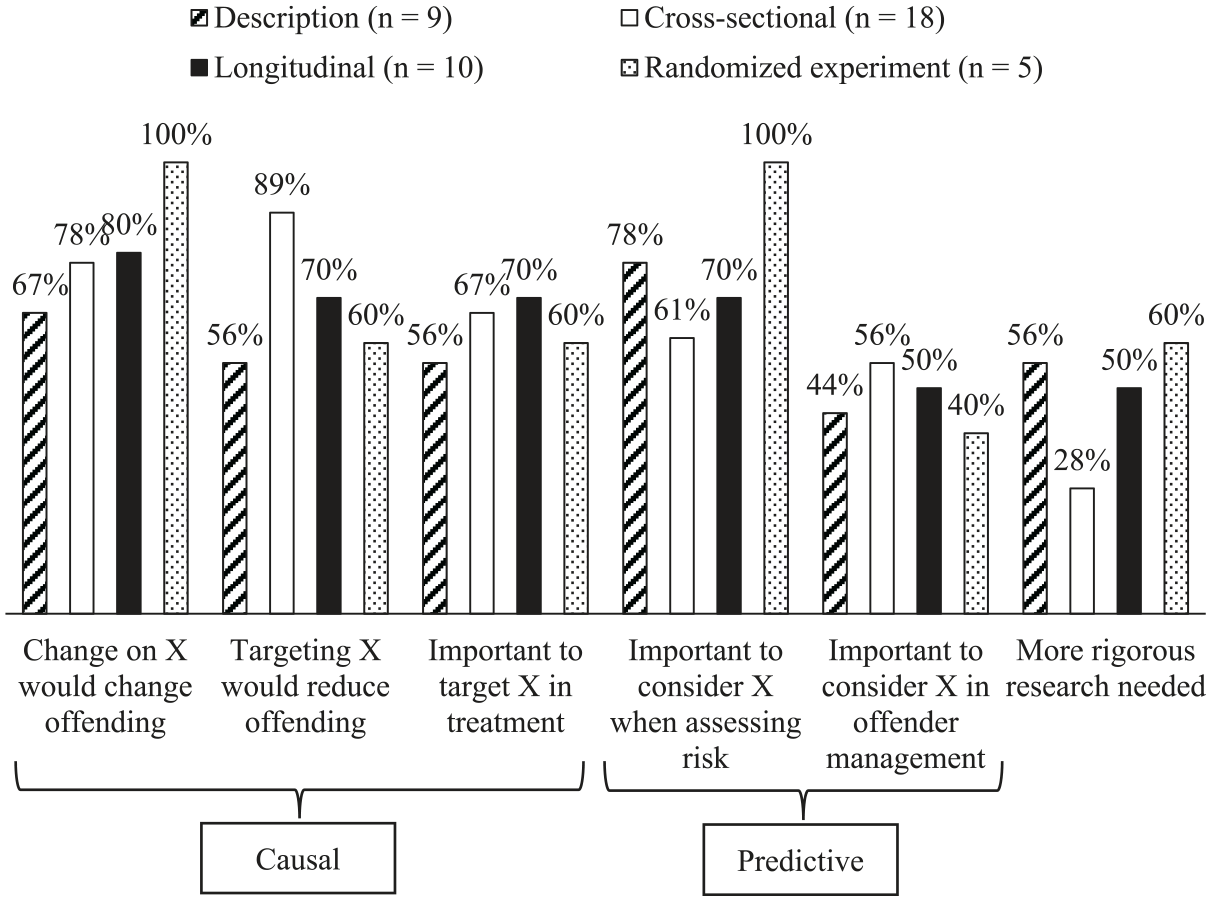

Similarly, most participants selected implications that would only follow from causal evidence or predictive evidence, regardless of whether their study’s research design demonstrated causality or prediction. As shown in Figure 3, the majority of participants (55.6%–88.9%) selected causal implications (e.g., “targeting this factor in treatment can be expected to reduce the likelihood of violent offending”) for research designs that do not demonstrate a causal effect (i.e., non-experimental designs). Similarly, 44.4% to 77.8% selected predictive implications (e.g., “this is an important factor to consider when estimating risk for violent re-offending”) for research designs that do not demonstrate prediction (i.e., case/sample descriptions and cross-sectional/retrospective designs). We next distilled these responses into a dichotomous classification of whether participants incorrectly selected any causal implications. For participants who referenced a case/sample description design, 77.8% incorrectly selected at least one of the causal implications. For participants who referenced a cross-sectional/retrospective design, 100% incorrectly selected at least one of the causal implications. For participants who referenced a single-wave longitudinal design, 90.0% incorrectly selected at least one of the causal implications.

Which implications follow from the study?
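Which implications count as overstepping can likewise be expressed as a lookup from design to the kinds of implication it supports. In this minimal sketch, the mapping follows the text: only randomized experiments demonstrate a causal effect, and case/sample descriptions and cross-sectional/retrospective designs do not demonstrate prediction. The labels and function name are hypothetical.

```python
# Kinds of implication each design can support (hypothetical labels).
SUPPORTED = {
    "case/sample description": set(),
    "cross-sectional/retrospective": set(),
    "single-wave longitudinal": {"predictive"},
    "multi-wave longitudinal": {"predictive"},
    "randomized experiment": {"predictive", "causal"},
}


def overstepped(design, selected_kinds):
    """Return the selected implication kinds that the design does not support."""
    return set(selected_kinds) - SUPPORTED[design]


print(sorted(overstepped("single-wave longitudinal", ["predictive", "causal"])))
# -> ['causal']
print(sorted(overstepped("cross-sectional/retrospective", ["predictive"])))
# -> ['predictive']
```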

Ten studies (23.8%) were identified as potentially ambiguous or having some features that may allow for stronger inferences than would usually be permitted by the research design. Of these, five were cross-sectional/retrospective designs, and the remaining five were single-wave longitudinal designs. Excluding these participants did not change the pattern of results reported above.

Discussion

In this article, we asked a group consisting mostly of researchers to identify a study and select interpretations and implications of the evidence from that study. When asked directly about whether a study demonstrated causality or prediction, participants’ inferences were generally sensitive to the strength of the research design used in the study they referenced. Very few participants said that non-experimental studies demonstrated a causal effect. Though more participants incorrectly indicated that cross-sectional/retrospective non-experimental studies demonstrated prediction, the proportion was still lower than the proportion of participants who correctly indicated that single-wave longitudinal studies demonstrated prediction. In contrast, participants showed little sensitivity to research design when asked to select from the various interpretations that logically underlie what inferences can be drawn about causality and prediction and the implications that follow from those inferences. Our findings are consistent with Nunes et al.’s (2019) similar survey of researchers in the area of sexual offending.

The limitations of Nunes et al.’s (2019) study also apply to the current study. Specifically, our results may not generalize to the population of violence researchers because of the small convenience sample and low response rate. In addition, at least some participants may have interpreted our survey questions differently than we had intended due to ambiguities in the wording. Accordingly, some of the responses we considered incorrect may instead reflect a legitimate alternate reading of the items. For example, as with Nunes et al. (2019), our wording did not specify whether we were asking about constructs or the variables used to represent those constructs. If participants read the questions to be about constructs, then it would be reasonable for them to assume temporal precedence in some cases. For instance, it would be reasonable for participants to select the interpretation that childhood experiences predicted violent offending for a study that found an association between childhood experiences and adult violent offending, even if the study was cross-sectional/retrospective, because the childhood experiences would necessarily precede later violent offending even if they were measured retrospectively after the violent offending. However, as with Nunes et al. (2019), our findings remained consistent even when we excluded such potentially ambiguous cases and even when we scored the responses generously to focus on only the most extreme errors; that is, a large number of participants selected only the causal interpretation when an alternate interpretation was also plausible, and a large number selected causal implications that do not follow from the evidence they referenced.

Relatedly, it is possible that at least some participants’ apparent overstepping of the evidence reflected different readings of the wording of our instructions, misremembering of the original study’s methodology, or a focus on the original study’s conclusions. In terms of wording, participants were instructed to select “all that apply,” and this was especially important for the interpretations question, in which the key issue is acknowledging applicable alternate interpretations. In hindsight, however, we may have unintentionally “nudged” participants toward selecting only one interpretation by asking them to identify the “most plausible interpretation” (the full survey is shown in the Supplemental Material). Perhaps if we had instead asked participants to select “all plausible interpretations that apply,” more participants would have acknowledged plausible alternate interpretations. More generally, some participants may have interpreted our wording differently than we had intended. This may have been exacerbated by cultural or language differences; however, such differences may not have had a large impact given that over 80% of the participants were in English-speaking countries.

In terms of memory and focus, though we asked participants to answer based on “the results of the study,” it is possible that at least some participants did not answer our questions based on the actual methodology of the study they identified. For example, they may not have correctly remembered the relevant methodological details or they may have focused on the original authors’ conclusions reported in their abstract or “Discussion” section. Authors often draw stronger conclusions than warranted by their study’s methodology (e.g., Brown et al., 2013; Cofield et al., 2010; Han et al., 2022; Lazarus et al., 2015). Though this may be an additional reason for our participants’ apparent overstepping of the evidence, it still suggests that some researchers draw conclusions without sufficient attention to methodology.

Also, as with Nunes et al.’s (2019) study, our focus on internal validity in the current study is fairly simple and narrow. There are other types of validity (e.g., Shadish et al., 2002), non-experimental designs can sometimes permit causal inferences, and there are important trade-offs between the different approaches (e.g., internal validity vs. construct validity; Rohrer, 2018; Shadish et al., 2002). However, based on our reading, none of the non-experimental studies cited by participants met the demanding conditions for permitting causal inferences (e.g., Murray et al., 2009; Rohrer, 2018). Ultimately, different approaches can provide important complementary evidence, with the strengths of each shoring up the weaknesses of the others to permit a clearer understanding of the causal relations between the constructs of interest (e.g., Lösel et al., 2020; Rohrer, 2018; Sampson, 2010; Shadish et al., 2002). As Rohrer (2018) nicely puts it: “Different research designs are neither mutually interchangeable nor rivals, but can contribute unique information to help answer common research questions. The most convincing causal conclusions will always be supported by multiple designs” (p. 40).

Notwithstanding the limitations of our study, our findings are consistent with more general suggestions and evidence that people—whether the general public, practitioners, or researchers—often draw conclusions that overstep the available evidence (e.g., Bleske-Rechek et al., 2015; Harris & Rice, 2015; Motz et al., 2023; Mueller & Coon, 2013; Nunes & Hatton, 2024; Nunes et al., 2019; Seifert et al., 2022; Sibulkin & Butler, 2019). Though an understandable human tendency, such critical thinking errors can result in wasting effort and resources on interventions and policies that are ineffective at reducing violence and may even increase it (e.g., Harris & Rice, 2015; McCord, 2003; Petrosino et al., 2003). The implications of our findings are that greater acknowledgment of the weaknesses of different designs and the alternate interpretations of their findings is warranted and would facilitate better research and understanding of the causes of violence.

What can be done to reduce overstepping of evidence? One simple technique that may be helpful for both producers and readers of research is to consider the inferences one would draw had the results been in the opposite direction (Lord et al., 1984). For example, if a non-experimental study counterintuitively found that childhood physical abuse was associated with less violent offending—rather than the intuitive positive association—one would likely be motivated to more critically evaluate the evidence and, thereby, recognize that, though the evidence is indeed consistent with the possibility that physically abusing children causes them to be less violent adolescents and adults, the methodology may leave open alternate interpretations of the evidence (e.g., perhaps instead of childhood abuse reducing violence, a third variable or other methodological issues may account for the observed association). And because of the recognition that the evidence is open to such alternate interpretations, one may be more cautious about the implications and recommendations they see as following from that evidence (e.g., despite the observed association, few people would conclude that the study suggests that physically abusing children is an effective way to reduce violence). More generally, the use of appropriately tentative wording and acknowledgment of limitations and caveats can reduce the extent to which readers jump to causal conclusions from correlational evidence without compromising clarity or interest (Adams et al., 2017; Bott et al., 2019). 
Thus, raising awareness of the problem through studies like this one; training for researchers, knowledge translators, and those who use research evidence to inform practice and policy (e.g., Motz et al., 2023; Mueller & Coon, 2013; Seifert et al., 2022; Sibulkin & Butler, 2019; VanderStoep & Shaughnessy, 1997); and greater vigilance by journal editors and reviewers may facilitate more appropriate interpretations of evidence, encourage more rigorous research, and result in more effective and efficient efforts to reduce violence (e.g., Harris & Rice, 2015).

Supplemental Material

sj-pdf-1-jiv-10.1177_08862605241285996 – Supplemental material for Causal Interpretations of Correlational Evidence Regarding Violence

Supplemental material, sj-pdf-1-jiv-10.1177_08862605241285996 for Causal Interpretations of Correlational Evidence Regarding Violence by Kevin L. Nunes, Cassidy E. Hatton, Anna T. Pham, Carolyn Blank and Sacha Maimone in Journal of Interpersonal Violence

Footnotes

Acknowledgements

We are grateful to the participants for giving their time and effort to answer our survey questions. We are also grateful to students in the Aggressive Cognitions and Behaviour Research Laboratory for assistance with organizing the references for coding (Maya Atlas, Eric Filleter, and Chloe Pedneault), coding studies (Eric Filleter), preliminary data analysis (Eric Filleter), and updating and formatting the final reference lists (Benjamin Presta).

Author Note

Anna Pham is now at the Department of National Defence Canada. Carolyn Blank is now at BC Wildfires. Sacha Maimone is now at the University of Ottawa’s Institute of Mental Health Research at The Royal. Results from this study were presented in a paper at the 2023 Annual Meeting of the American Society of Criminology.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research and/or authorship of this article.

Supplemental Material

Supplemental material for this article is available online.

Author Biographies

References
