Abstract

The negative impact of misinformation on public discourse and public safety is increasingly a focus of attention. From the COVID-19 pandemic to national elections, exposure to misinformation has been linked to conflicting perceptions of social, economic, and political issues, which has been found to lead to polarization, radicalization, and acts of violence at the individual and group levels. While a large body of research has examined the development and spread of misinformation, little has been done to examine the human processes of being exposed to, and influenced by, misinformation online. This article uses reinforcement sensitivity theory to examine the effect of individual differences in the Behavioral Inhibition System (BIS) on behavioral and cognitive responses, including intentions to engage in violence, after exposure to misinformation online. Using an online panel sample (Mechanical Turk) and a behavioral paradigm that involved exposure to, and interaction with, misinformation, this study found that trait BIS score was related to how much individuals engaged with misinformation, as well as to their subsequent activism and radicalism intentions toward the narratives that were depicted. Both engagement with misinformation and trait BIS were associated with intentions for activism and radicalism. However, these effects were present in both the misinformation and correct information conditions. These findings highlight the importance of BIS-related processes and raise important questions about the degree to which we need to think about online influence as a general process versus specific processes that directly relate to the effect of misinformation.

Introduction

Those who can make people believe absurdities, can make people commit atrocities.

Misinformation is defined as the inadvertent sharing of false information (Ireton & Posetti, 2018), and it is generally accepted that consuming misinformation can influence individuals’ likelihood of being radicalized (Davies, 2021). Significant strides have been made in defining what constitutes misinformation (vs. “disinformation”; Baines & Elliott, 2020), the types of material that are often used in misinformation campaigns, and the form and functions of online groups who spread misinformation (Cook et al., 2015). While there are complexities to defining and utilizing the term “misinformation,” with some scholars disavowing the overarching use of the term (Baines & Elliott, 2020), we are increasingly aware of the many and varied forms of harmful online media. While misinformation is defined as false or misleading information that is not necessarily intended to deceive, disinformation is defined as false and misleading information provided with the intention to deceive. Malinformation is authentic information provided with the intent to cause harm (Omoregie, 2021). However, an inherent problem with these definitions is that they define the content by the motivation of the sender rather than by the perception of the viewer. In this article, misinformation is defined as false information that is presented as true (see Cook et al., 2015). This is distinct from other types of information such as disinformation, which is deliberately created to deceive (Baines & Elliott, 2020).

Exposure to misinformation has been linked to conflicting perceptions of social, economic, and political issues, which has been found to lead to polarization, radicalization, and even acts of violence (Barberá, 2021; Colleoni et al., 2014; Nguyen et al., 2014). Misinformation in online environments creates echo chambers that reinforce biases and diminish trust among consumers (Benkler et al., 2018). Misinformation and conspiracies “thrive in these online spaces, where they can remain unchallenged, and even morph into extremism” (Van Raemdonck, 2019, p. 153). We have also seen misinformation facilitate interpersonal violence. Recent misinformation campaigns have included spreading falsehoods about the COVID-19 pandemic and the 2020 presidential election, both of which have had negative consequences for individual consumers and wider society. As a result of the spread of false narratives regarding COVID-19, the United States has seen an increase in hate attacks against Asian Americans and an escalation of hostilities in response to mask mandates (see Caruthers, 2021). Misinformation spread online about a human trafficking ring supposedly run by the U.S. Democratic Party led to a shooting in Washington, D.C., in 2016 (see Marantz, 2020). Additionally, the spread of conspiracies regarding the 2020 election culminated in the January 2021 storming of the Capitol Building in Washington, D.C., in an attempt to halt the certification of the next President of the United States (Barrett & Raju, 2021). The January 6th storming of the Capitol Building, fueled by these misinformation narratives, was large and violent enough to be described as an “insurrection” (Bond & Neville-Shepard, 2021) and an “act of terrorism” (Horgan, 2021). Since then, misinformation concerning the 2020 election has continued to be shared widely online for over a year (Alba & Frenkel, 2019).
These events show the clear harm that can be caused at the individual, group, and societal level from misinformation online. Yet, despite the significant spread of misinformation, and the known harm caused by exposure to it, we have few empirically validated psychological theories to support efforts to understand the process through which individuals are influenced by misinformation, and critically, what processes play a principal role in this pathway.

Misinformation

To date, most research on misinformation has focused on how it develops and spreads and whether it is believed by consumers. Misinformation is created and disseminated, usually online, and can then go viral within minutes with the help of social media platforms, some of which have been found to be especially prone to false content (Fernandez & Alani, 2018). Research on the spread of misinformation via the internet has found that echo chambers lead individuals to select content on the basis of whether it reinforces their narrative rather than on its veracity (Del Vicario et al., 2016). Other research indicates that individuals who consume misinformation that confirms their existing biases also come to believe it (Roozenbeek et al., 2020). Regarding misinformation about the COVID-19 pandemic, substantial proportions of individuals across several countries believed the misinformation, with some even rejecting the veracity of the most commonly known information about the virus (Roozenbeek et al., 2020). Most importantly, this study linked misinformation to subsequent behavior, finding that susceptibility to COVID-19 misinformation was associated with reduced likelihood of following public health guidelines and reduced willingness to get vaccinated (Roozenbeek et al., 2020).

Elsewhere, research has shown that exposure to certain forms of media is associated with later violent behavior in some individuals, and that this relationship is moderated by pre-existing personality traits of the viewer (e.g., Anderson & Dill, 2000). Prior research has shown that quantifiable differences in personality traits, such as aggression and anxiety, significantly affect the impact of exposure to a range of materials, from violent video games to violent extremist propaganda (Anderson, 1997; Shortland et al., 2017; Shortland & McGarry, 2021). These studies suggest that individual-level factors likely contribute to how individuals interact with, and are affected by, misinformation (Newhoff, 2018).

When it comes to misinformation and the cognitive shift toward violent behavior, a suitable starting point may be the role of aggression, but an equally important factor may be anxiety. First, misinformation does not often emphasize violence (as a video game or movie might) but instead seeks to create anxiety and uncertainty in the viewer (Lu et al., 2020). Second, anxiety is often highlighted as a driver of movement toward ideological violence, a process that some have compared to the way misinformation works (Kruglanski et al., 2014). Accordingly, in this study we examine the role of individual differences in the behavioral inhibition system (BIS). The BIS is conceptualized as the avoidance-prone conflict resolution center of Gray’s (1991) reinforcement sensitivity theory (RST) and thus may play a key role in understanding the impact of misinformation on intentions to engage in activism and radicalism.

Reinforcement Sensitivity Theory

RST is a motivational theory of personality based on the biological underpinnings of Gray’s (1987) theories of anxiety. Gray (1991) proposes a reward system (behavioral approach system, BAS), a punishment system (BIS), and a threat-response system (Fight/Flight/Freeze system). The BIS is associated with anxiety and uncertainty, which makes people more susceptible to external influences that make them feel secure. BIS functions are closely associated with feelings of meaninglessness, threats to one’s identity, and sensitivity to social and societal rejection. Within RST, the BIS is conceptualized as a conflict detection system that is responsible for inhibiting ongoing behavior when both approach and avoidance tendencies are activated (Berkman et al., 2009). Individuals with heightened BIS sensitivity also report feeling less meaning and purpose in their lives (McGregor et al., 2013), are more attentive to the negative aspects of a situation, and have more pessimistic outlooks (Hirsh & Kang, 2015).

As it relates to movement toward violence driven by extreme narratives and ideologies, anxiety is thought to play a key role in that it motivates behaviors to restore feelings of significance (Kruglanski et al., 2014). Such motivations have been linked with behaviors that provide a sense of belonging, status, and purpose, such as seeking identity and purpose from external sources (e.g., groups that offer a strong sense of identity; McGregor et al., 2013). As such, a high BIS is associated with increased feelings of uncertainty, and a hyperactive BIS could motivate individuals to identify strongly with a certain group or ideology, or to be drawn toward misinformation narratives that claim certainty over deeply uncertain events (such as decisions to get, or not get, vaccines). Elsewhere, research has found that individuals with high trait BIS are more likely to engage with extreme narratives and to be influenced by them (McGarry & Shortland, 2023). Thus, a heightened BIS and the resulting anxiety and other negative emotions may influence the fear of missing out, which has been found to be motivated by a need for personal significance, relevance, and belonging (Beyens et al., 2016). Research has begun to explore the relationship between anxiety and misinformation. Talwar et al. (2019) recently found that the “fear of missing out” is positively associated with sharing misinformation online. Anxiety has also been linked to less accurate beliefs about an out-group (in this case, an opposing political party) after exposure to uncorrected misinformation (Weeks, 2015).

The Current Study

Taken together, previous research on the interaction of personality and harmful forms of media supports two key assertions. First, individual differences in traits may affect the cognitive (and behavioral) consequences of what people are exposed to. Second, trait anxiety may play a key role in the effect of misinformation because of its identified role in moving people toward extreme action driven by a need for certainty and belonging in an uncertain world (Kruglanski et al., 2014; McGarry & Shortland, 2023). As such, here we empirically investigate the effect of individual differences in BIS on behavioral and cognitive responses to exposure to misinformation online. Previous research has emphasized the utility of RST as a motivational framework for conceptualizing the process through which harmful extremist content online leads to harmful cognitions and potential behavior (Shortland & McGarry, 2021). As such, there is both experimental and empirical warrant for this investigation. Therefore, in the following studies, we seek to expand on the use of RST by examining the impact of the BIS and the degree to which exposure to misinformation online increases activism and radicalism intentions.

In line with general theories of activism that posit that overall uncertainty is a risk factor for engaging with extreme groups and ideologies (Kruglanski et al., 2014), we hypothesize that:

H1: Pre-test BIS score will be associated with an increase in activism and radicalism intentions scores both pre- and post-exposure to misinformation.

In addition to this, we propose the following hypotheses pertaining to how an individual will interact with misinformation, and the cognitive consequences of the interaction:

H2: Positive engagement with misinformation will be associated with an increase in activism and radicalism intentions scores.

H3: Viewing misinformation will be associated with an increase in activism and radicalism intention scores, when compared to viewing correct information.

H4: High BIS score will be associated with increased positive engagement with misinformation.

H5: High BIS score will interact with misinformation exposure, meaning that increases in activism and radicalism intentions after viewing misinformation will be higher in those with high trait BIS.

Finally, by incorporating both misinformation and correct information, this study also seeks to identify the degree to which any effect of the BIS is unique (or not) when individuals are exposed to misinformation. Here, we propose a final hypothesis related to the unique effects of misinformation and the BIS. Specifically:

H6: There will be an interaction between BIS and information type in that misinformation will cause higher levels of activism and radicalism, and that this will be moderated by individual differences in trait BIS.

We test these six hypotheses across two studies. Study 1 uses misinformation about the 2020 presidential election, and Study 2 uses misinformation about the COVID-19 pandemic.

Method

Participants

Participants over the age of 18 and located in the United States were recruited using Amazon’s Mechanical Turk (MTurk). The survey was presented as an anonymous research survey examining the effects of personality and motivation on online behavior. While MTurk samples can have limitations, the literature suggests that adhering to best practices for MTurk pools (as done for the current study) can mitigate many of these concerns, such as participant attention. For example, Hauser and Schwarz (2015) demonstrated across three separate samples that MTurkers are more attentive than their counterparts in more traditional sample pools. In this study, participation was limited to individuals registered on MTurk with an acceptance rate of .95. Furthermore, to screen for attention, individuals whose completion time was more than 1 standard deviation (SD) below the mean were removed from the sample, leaving final samples of N = 449 and N = 440 for Studies 1 and 2, respectively. For Study 1, the average age of participants was 38.67 (SD = 12.08), 53.4% male, 46.6% female, and 82% White. For Study 2, the average age of participants was 38.75 (SD = 11.97), 48.7% male, 50.8% female, and 82.3% White. Despite data reduction procedures, it is still important to note potential limitations to the generalizability of the current study given the MTurk sample as well as the high percentage of White participants. The current study was approved by the Institutional Review Board at a prominent northeastern university in the United States, and informed consent was obtained from all participants.
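The completion-time screen described above can be sketched as follows. This is a minimal illustration, not the authors' procedure: it assumes the rule is "drop anyone more than one sample SD below the mean completion time" with no upper cutoff, and the helper name and example times are hypothetical.

```python
from statistics import mean, stdev


def screen_fast_completers(times_sec):
    """Return indices of participants kept after a speed screen.

    Hypothetical helper. Assumes the rule is: drop anyone whose completion
    time is more than one sample standard deviation below the mean.
    No upper cutoff is applied because none is described.
    """
    mu = mean(times_sec)
    sd = stdev(times_sec)  # sample SD (n - 1 denominator)
    cutoff = mu - sd
    return [i for i, t in enumerate(times_sec) if t >= cutoff]


# Illustrative completion times in seconds (made up, not study data).
times = [600, 620, 580, 610, 100]
print(screen_fast_completers(times))  # [0, 1, 2, 3]
```

Whether the mean and SD were computed per study or over the pooled sample is not reported; the sketch simply applies the rule to whatever list it is given.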

Measures

RST Measures

The Corr-Cooper RST Personality Questionnaire (RST-PQ) was used to measure BIS sensitivity (Corr & Cooper, 2016). The BIS subscale of the RST-PQ consists of 23 items rated on a 5-point Likert-type scale from 0 (not at all) to 4 (highly). Scale reliability analyses were performed for the RST-PQ and indicated good reliability.

Activism and Radicalism Intentions Scales

The Activism and Radicalism Intentions Scales (ARIS; Moskalenko & McCauley, 2009) were used to measure two forms of political behavioral intention, activism and radicalism, both before and after engagement. The activism scale (AIS) measures individual intentions to participate in non-violent acts on behalf of a political group, while the radicalism scale (RIS) measures intentions to participate in violent acts on behalf of a political group. Participants responded to 10 items on a 7-point Likert-type scale ranging from 1 (disagree completely) to 7 (agree completely). After exposure, participants responded according to the following instructions: “Think of the group that was connected to the material you were just exposed to.” This was done to examine specifically whether the study materials would influence participants’ willingness to engage in actions endorsing the specific views presented in the materials, rather than just general tendencies toward radicalism and activism. To date, the ARIS has been used extensively to measure activism and radicalism intentions, and this specific manipulation (asking individuals to think of a specific group) has been shown to be effective in previous research (McGarry & Shortland, 2023; Shortland & McGarry, 2021). Scale reliability analyses were performed for the AIS and RIS and indicated good reliability.
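The article reports "good reliability" for the RST-PQ, AIS, and RIS without naming the coefficient. Assuming it was Cronbach's alpha (the most common choice for Likert-type scales), the statistic can be sketched as below; the function name and toy data are hypothetical and are not the study's values.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a participants-by-items score matrix.

    responses: list of rows, one per participant, each holding k item scores.
    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    """
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    items = list(zip(*responses))                          # one tuple per item
    item_var_sum = sum(variance(item) for item in items)
    total_var = variance([sum(row) for row in responses])  # variance of scale totals
    return (k / (k - 1)) * (1 - item_var_sum / total_var)


# Toy data (not study data): three participants, two items.
print(round(cronbach_alpha([[1, 2], [2, 1], [3, 3]]), 3))  # 0.667
```

Using sample variances throughout keeps the ratio identical to the population-variance form, so either convention gives the same alpha.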

Demographic Measures

Participant sex (Male = 0, Female = 1), race/ethnicity, age, and internet use were collected. Race/ethnicity was recoded after data collection as White = 0, and Non-White = 1. Due to the highly politicized nature of beliefs about COVID-19 and the 2020 election (Halpern, 2020), and the fact that partisanship can influence the effects of misinformation (Weeks, 2015), political ideology was also measured using a 5-point scale (Very Conservative, Conservative, Moderate, Liberal, and Very Liberal).

Measure of Online Engagement

For each block of text, responses of “Like,” “Share,” or “Follow” were considered positive engagement and coded as “1.” Responses of “Report” or “None of the Above” were considered non-positive engagement and coded as “0.” The number of blocks with a positive engagement response was divided by the number of blocks each participant chose to view, providing a measure of positive response rate.
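The positive response rate defined above can be sketched as follows. This assumes one response label per viewed block; the label strings, the function name, and returning `None` for a participant who viewed nothing are illustrative assumptions rather than details from the article.

```python
# Hypothetical labels matching the response options described in the text.
POSITIVE = {"Like", "Share", "Follow"}           # coded 1
NON_POSITIVE = {"Report", "None of the above"}   # coded 0


def positive_response_rate(responses):
    """Share of viewed blocks that received a positive response.

    responses: one response label per block the participant chose to view.
    Returns None when nothing was viewed (an assumed convention).
    """
    if not responses:
        return None
    codes = []
    for label in responses:
        if label in POSITIVE:
            codes.append(1)
        elif label in NON_POSITIVE:
            codes.append(0)
        else:
            raise ValueError(f"unexpected response label: {label!r}")
    return sum(codes) / len(codes)


# A participant who viewed four blocks and liked or shared two of them.
print(positive_response_rate(["Like", "Report", "Share", "None of the above"]))  # 0.5
```

Dividing by blocks viewed rather than by the maximum of eight keeps the measure comparable between participants who stopped early and those who viewed everything.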

Study 1 Procedure

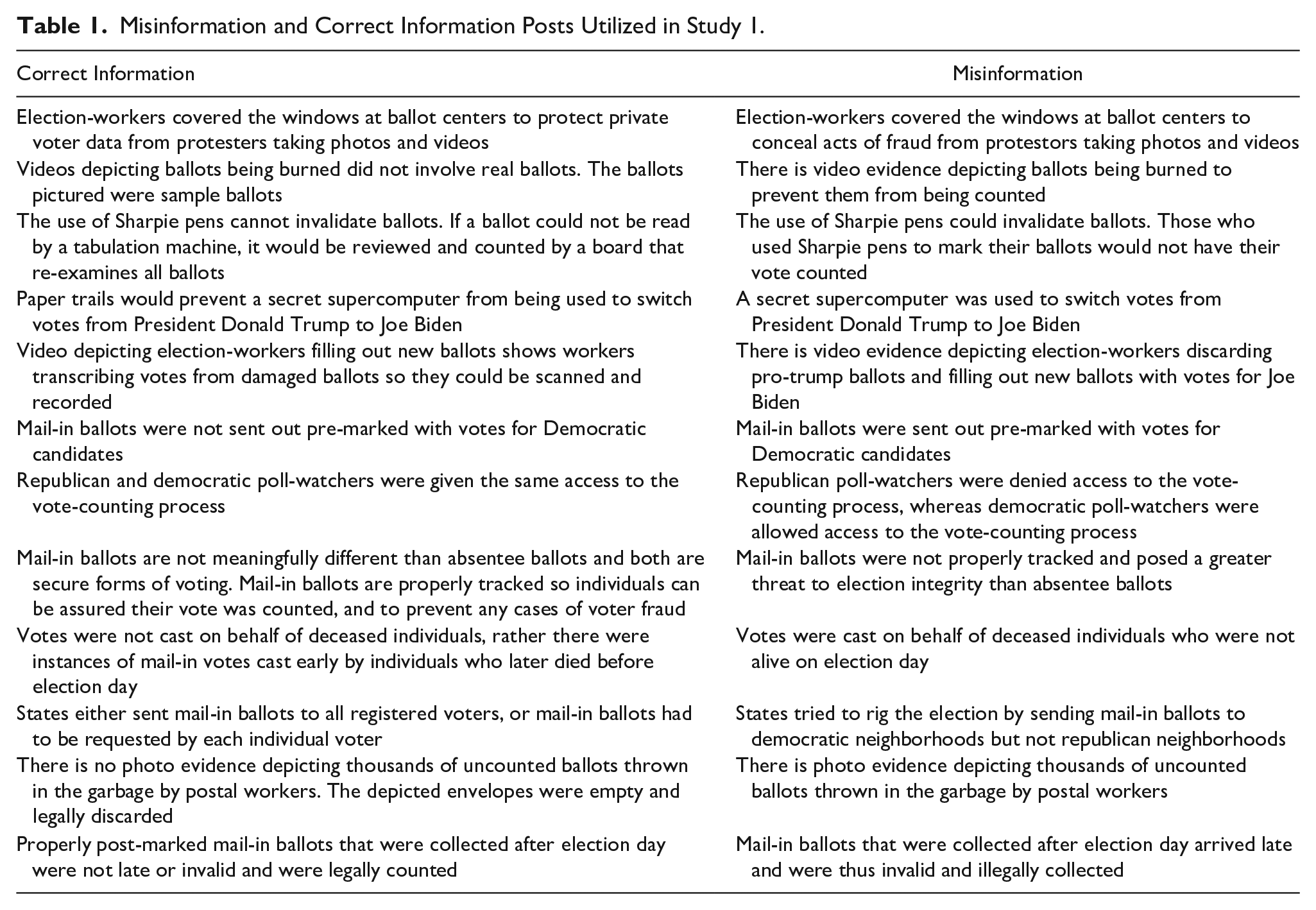

The first study utilized a version of the EXTREME (EXperimental Test of Radical EMotional Engagement)-inventory (Shortland & McGarry, 2021). The EXTREME-inventory is an experimental paradigm designed to measure the relationship between individual differences in personality and both willingness to engage with, and susceptibility to, extreme content online (Shortland & McGarry, 2021). It is specifically designed to quantify the degree to which individuals engage with extreme content online, the degree to which they are cognitively affected by this material, and the degree to which this is influenced by differences in personality traits and/or content type (Shortland & McGarry, 2021). In this study, participants were randomly assigned to view either (a) blocks of text detailing commonly propagated misinformation related to election fraud in the 2020 presidential election or (b) correct information relating to claims of election fraud. Examples of these claims are provided in Table 1. Participants were shown one block of text at a time, and after each block were asked how they would respond to the information if they saw it on social media; they could choose “Like,” “Share,” “Report,” “Follow,” or “None of the above.” Overall, participants were shown up to eight blocks of text containing misinformation or correct information. After each block, participants could withdraw from seeing further material or continue to view another block. After viewing the blocks of text, participants completed a modified version of the ARIS. After the study, participants were thoroughly debriefed, and any misinformation shown was debunked and correct information provided.

Table 1. Misinformation and Correct Information Posts Utilized in Study 1.

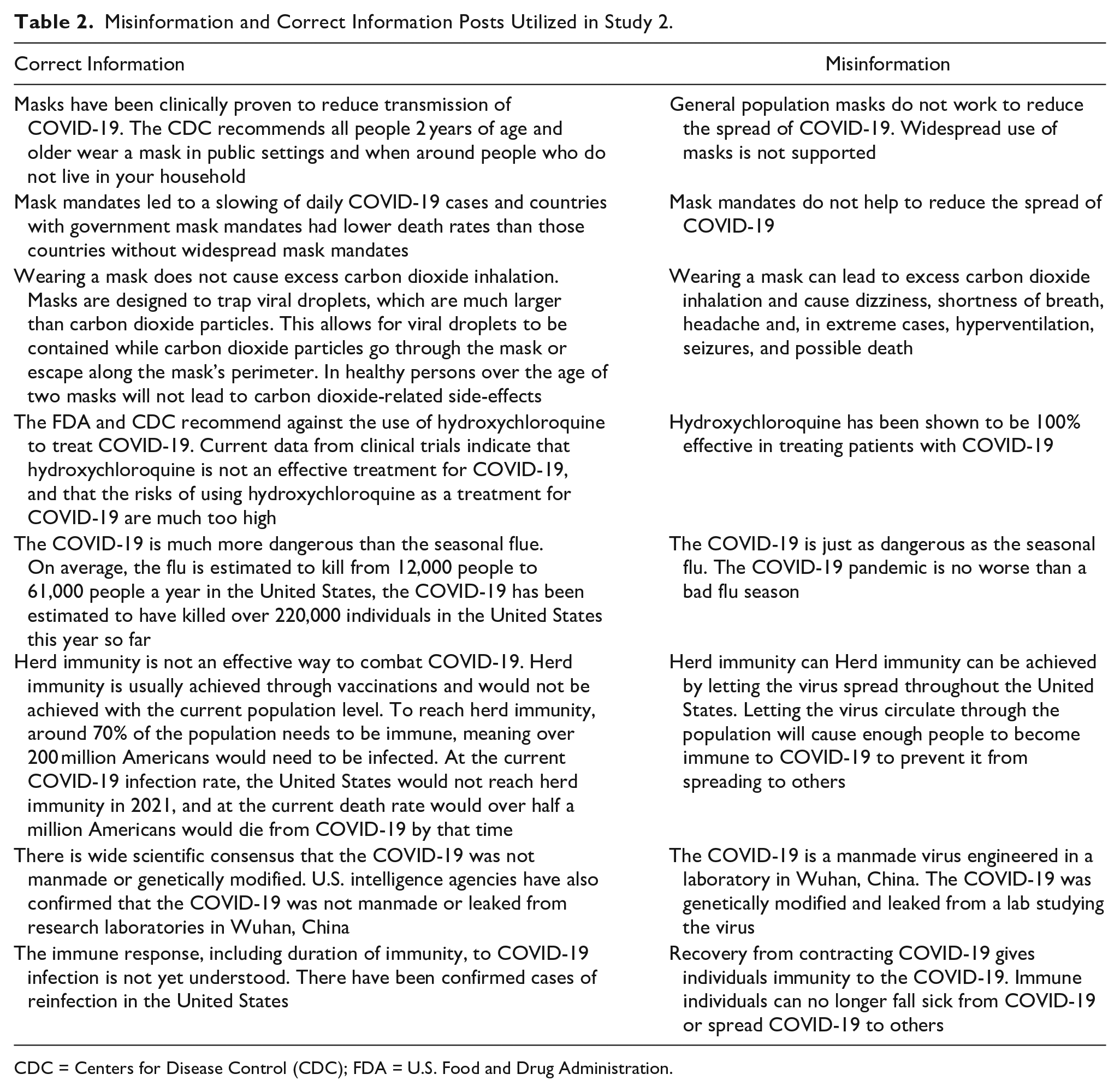

Study 2 Procedure

Study 2 utilized the same design as Study 1. However, in this study, participants were randomly assigned to view either (a) blocks of text detailing commonly propagated misinformation related to the COVID-19 pandemic or (b) correct information relating to the COVID-19 pandemic (see Table 2). The blocks of text were shown individually and in the same sequence for all participants within each group. Participants were asked how they would respond to the information if they saw it on social media and could choose to “Like,” “Share,” or “Report” the post, “Follow,” or “None of the above.” In addition to these blocks of text, participants were also shown a concurrent vignette describing a group who were either pro-mask or anti-mask. Participants then completed a modified version of the ARIS, in which they responded with respect to the specific groups described in the vignettes they had been given prior to viewing the misinformation or correct information materials. After the study, participants were thoroughly debriefed, and any misinformation shown was debunked with the correct information provided.

Table 2. Misinformation and Correct Information Posts Utilized in Study 2.

Note. CDC = Centers for Disease Control and Prevention; FDA = U.S. Food and Drug Administration.

Results

Study 1 Results

RST-PQ and ARIS

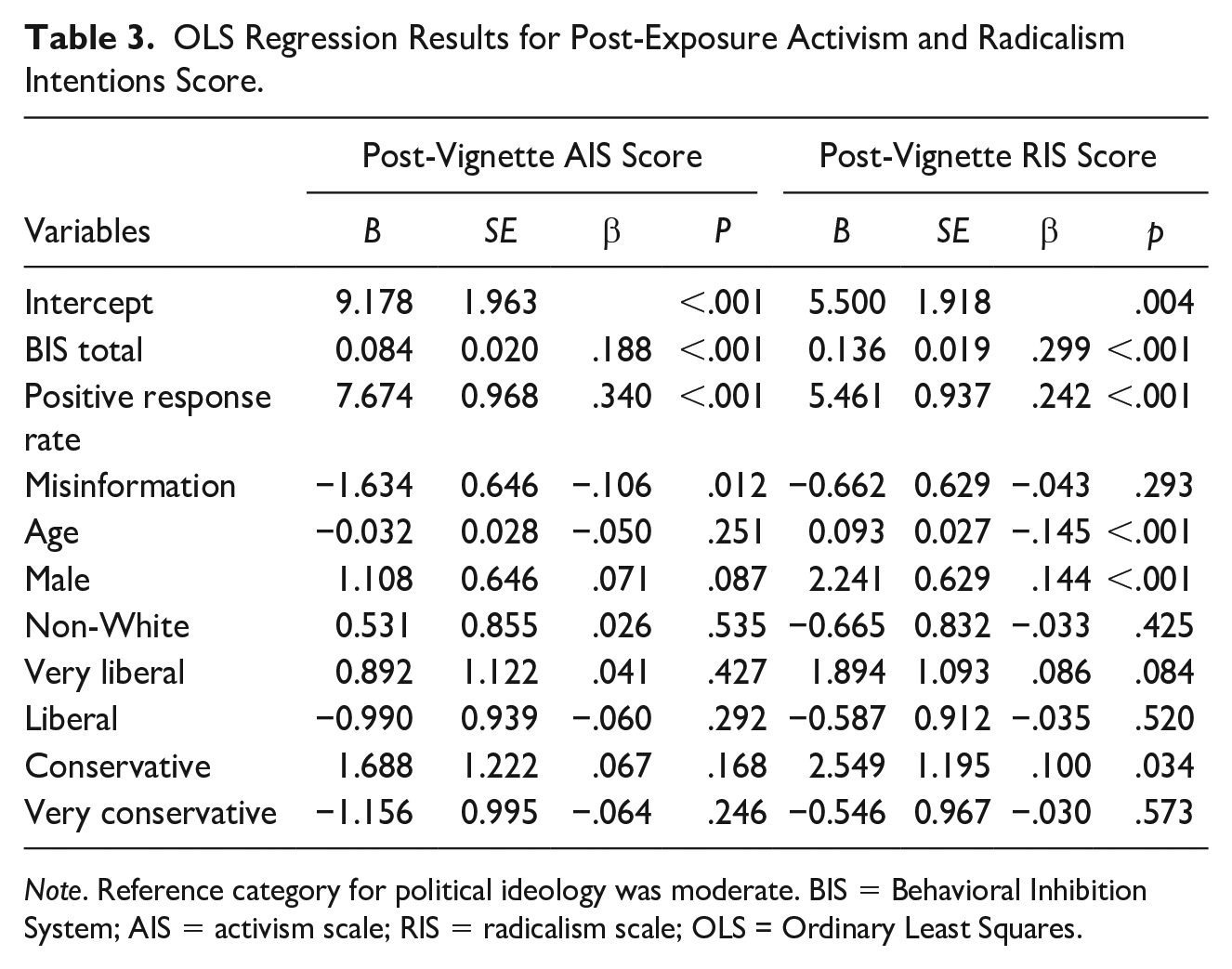

OLS regression results show that total BIS score significantly predicted post-AIS and post-RIS scores, supporting hypothesis 1 (see Table 3). BIS was positively associated with intentions to engage in both activism- (B = 0.036, p < .05) and radicalism- (B = 0.149, p < .001) related behaviors before exposure to the election-related information (misinformation or correct information). BIS was also related to willingness to engage in both activism- (B = 0.084, p < .001) and radicalism- (B = 0.136, p < .001) related behaviors in support of a specific group after exposure. The rate at which participants responded positively (“Like,” “Share,” “Follow”) to the information blocks also significantly and positively predicted AIS and RIS scores, supporting hypothesis 2. A one-unit increase in positive response rate (i.e., from responding positively to none of the viewed blocks to responding positively to all of them) was associated with predicted increases of about 7.7 points in post-exposure AIS (B = 7.674, p < .001) and about 5.5 points in post-exposure RIS (B = 5.461, p < .001). These findings indicate that the more participants endorsed the views presented in the information they were exposed to, the more willing they were to engage in activism- and radicalism-related behaviors in support of those views. Information type (misinformation vs. correct information) was also included in the analyses of post-AIS and post-RIS scores. Contrary to hypothesis 3, viewing the misinformation decreased willingness to engage in activism-related behaviors (B = −1.634, p < .05); however, the information type viewed had no significant effect on willingness to engage in radicalism-related behaviors.

Table 3. OLS Regression Results for Post-Exposure Activism and Radicalism Intention Scores (Study 1).

Note. Reference category for political ideology was moderate. BIS = Behavioral Inhibition System; AIS = activism scale; RIS = radicalism scale; OLS = Ordinary Least Squares.

Positive Response to Experimental Content

A Poisson regression model was fitted to examine the factors that influenced the number of positive responses participants gave to the election content they viewed. In support of hypothesis 4, individuals with higher total BIS scores gave more positive responses to the experimental content: the number of positive responses to the election-related content was predicted to be 1.010 times (1.0%) greater for every one-unit increase in BIS score. The information type viewed also affected the number of positive responses, which was predicted to be 1.324 times (32.4%) greater for those viewing misinformation than for those viewing correct information.
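The "times greater" figures above are incidence rate ratios (IRRs). Assuming the standard log-link Poisson model, an IRR is the exponentiated coefficient, the percent change is (IRR − 1) × 100, and effects for multi-unit changes compound multiplicatively. The helpers below are a hypothetical sketch of that arithmetic applied to the reported Study 1 values.

```python
import math


def irr_from_coefficient(b):
    """Poisson (log-link) coefficient -> incidence rate ratio."""
    return math.exp(b)


def irr_to_percent_change(irr):
    """Percent change in the expected count implied by an IRR."""
    return (irr - 1.0) * 100.0


def irr_for_units(irr, units):
    """IRR for a change of `units` in the predictor; effects compound multiplicatively."""
    return irr ** units


# Reported Study 1 values.
print(round(irr_to_percent_change(1.010), 1))                     # 1.0
print(round(irr_to_percent_change(1.324), 1))                     # 32.4
print(round(irr_to_percent_change(irr_for_units(1.010, 10)), 1))  # 10.5
```

The last line illustrates that, holding other covariates constant, a 10-point difference in BIS implies roughly a 10.5% difference in expected positive responses rather than exactly 10%.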

Study 2 Results

RST-PQ and ARIS

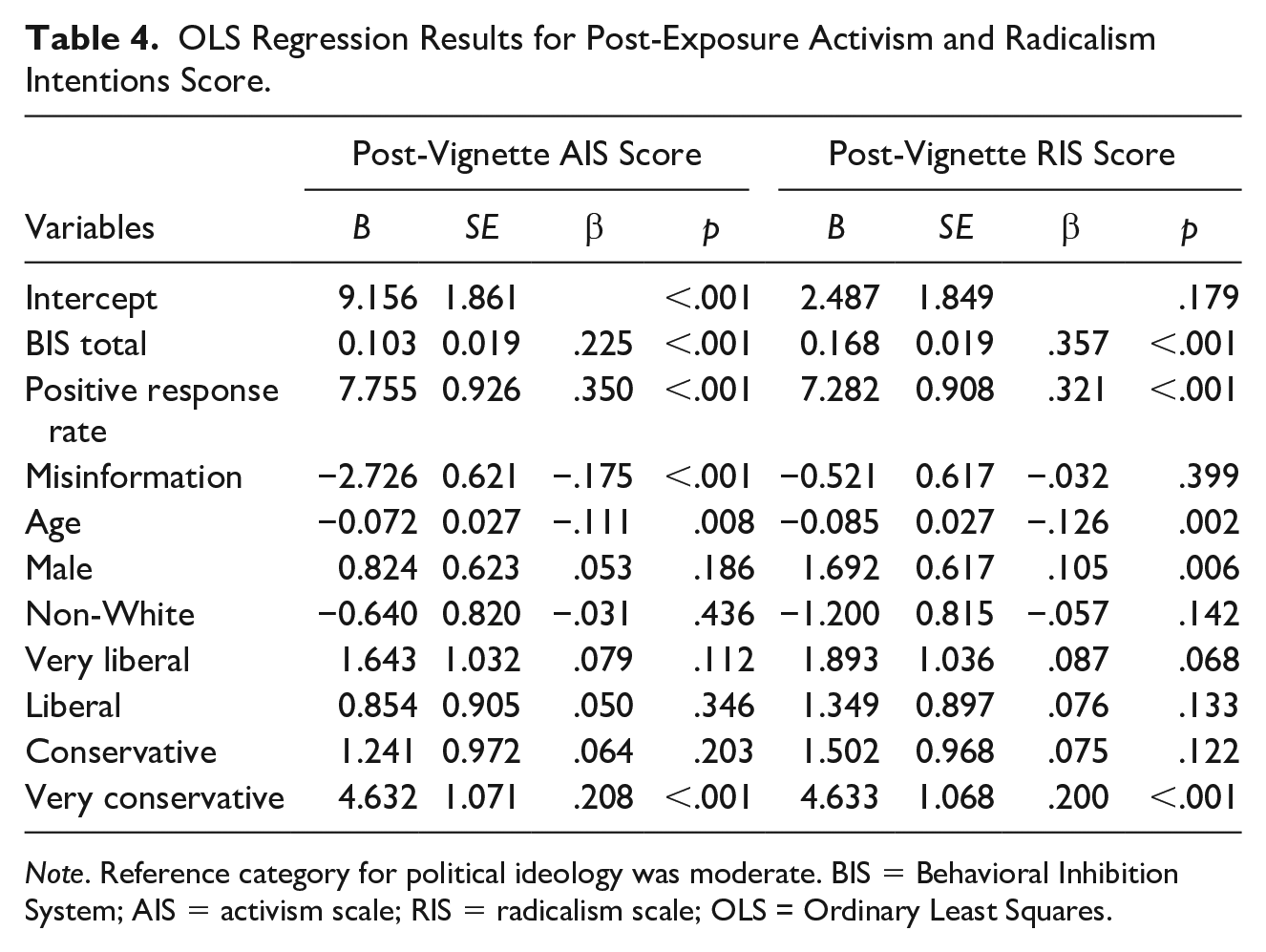

OLS regression results for ARIS scores from Study 2 show that total BIS score significantly predicted post-AIS and post-RIS scores, again supporting hypothesis 1 (see Table 4). BIS was positively associated with intentions to engage in both activism- (B = 0.058, p < .001) and radicalism- (B = 0.166, p < .001) related behaviors before exposure to the COVID-19-related information. BIS was also related to willingness to engage in both activism- (B = 0.103, p < .001) and radicalism- (B = 0.168, p < .001) related behaviors in support of a specific group after exposure. Further supporting hypothesis 2, positive response rate was significantly and positively associated with both AIS and RIS scores after viewing the COVID-19-related information: a one-unit increase in positive response rate (from 0 to 1) was associated with a 7.775-point (p < .001) increase in AIS and a 7.282-point (p < .001) increase in RIS scores. As in Study 1, hypothesis 3 was not supported; participants viewing the misinformation, as opposed to correct information, were predicted to report lower AIS scores (B = −2.726, p < .05). Information type viewed had no significant effect on RIS scores.

Table 4. OLS Regression Results for Post-Exposure Activism and Radicalism Intention Scores (Study 2).

Note. Reference category for political ideology was moderate. BIS = Behavioral Inhibition System; AIS = activism scale; RIS = radicalism scale; OLS = Ordinary Least Squares.
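Because positive response rate is defined as a proportion (positive responses divided by blocks viewed), an unstandardized OLS coefficient for it describes the predicted change across the whole range, from 0 to 1, and smaller differences scale linearly. The sketch below assumes the rate entered the Study 2 models on that 0 to 1 scale with no interactions; if it had been rescaled (e.g., to percentages), the coefficients would instead be read per percentage point. The helper name is hypothetical.

```python
def predicted_change(b, delta_rate):
    """Predicted change in an OLS outcome for a change of delta_rate in a
    predictor measured as a proportion (0 to 1), other covariates held fixed.
    Assumes a single linear term with no interactions or transformations."""
    return b * delta_rate


# Study 2 post-exposure coefficients for positive response rate.
coefs = {"AIS": 7.775, "RIS": 7.282}
for scale, b in coefs.items():
    print(scale,
          round(predicted_change(b, 1.0), 3),    # none -> all viewed blocks positive
          round(predicted_change(b, 0.25), 3),   # e.g., 2 more positives out of 8 viewed
          round(predicted_change(b, 0.01), 3))   # one percentage point
```

Under this assumption, a single percentage point of positive response rate corresponds to less than a tenth of a scale point, which is why the coefficient is best read as a full-range contrast.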

Positive Response to Experimental Content

A Poisson regression model was fitted to examine the factors that influenced the number of positive responses participants gave to the COVID-19 content they viewed. In support of hypothesis 4, the number of positive responses to the COVID-19-related content was predicted to be 1.016 times (1.6%) greater for every one-unit increase in BIS score. Additionally, the number of positive responses was predicted to be 1.224 times (22.4%) greater for those viewing misinformation than for those viewing correct information.

Discussion

Despite the importance of misinformation, psychological research has rarely explored individual differences in susceptibility to misinformation and, critically, the motivational processes through which exposure to misinformation can result in interpersonal violence. The poor conceptual understanding of the interaction between harmful misinformation online and violence within the population is problematic because it hinders efforts to prevent (or reverse) these effects. Not all who view misinformation will engage in violence, but for some, engaging with misinformation is a key part of the pathway toward violence, and the information plays an important role in their willingness to support or engage in violent behavior. Just as it is important to understand the relationship between violent media and eventual violence, it is critical to explore the relationship between misinformation and acts of violence that are directly aligned with the narratives of that misinformation (e.g., interfering in an ongoing election or transition of government power).

This research sought to further our understanding of this process by exploring the role of individual differences in trait BIS on the behavioral (positive response rate) and cognitive (AIS and RIS) impact of exposure to misinformation about COVID-19 and the 2020 presidential election. In line with our hypotheses, trait BIS was related both to how much individuals positively engaged with the materials they were exposed to and to their intentions for activism and radicalism. Trait BIS was associated with activism and radicalism intentions before exposure as well as with intentions to act on behalf of the specific group connected to the material after exposure, and these associations were present in both the misinformation and correct information conditions. This suggests that the role of trait BIS is not specific to misinformation. Higher trait BIS scores were also associated with increased positive engagement with the content, indicating that trait BIS relates both to willingness to engage with the materials and to how this engagement influences intentions for political activism and radicalism. Finally, information type significantly affected only activism scores, which may indicate that distinct motivational and cognitive pathways influence activism and radicalism intentions. This proposition should be addressed in future research. Below, we outline the theoretical and practical implications of this study.

Overall, this study supports the assertion that uncertainty and anxiety may lead people to engage with, and be influenced by, misinformation (and indeed correct information). Our findings provide evidence that cognitions regarding political activism and radicalism can be influenced by the processes governed by an individual’s anxiety system (BIS), and that trait BIS may also influence initial willingness to engage with misinformation materials online. Engaging with and sharing controversial, attention-grabbing misinformation may be one avenue through which individuals mitigate biological anxiety responses as the body seeks to return to a state of homeostasis. With current events creating an uncertain political, social, and economic environment, the proliferation of misinformation, and a younger generation with chronically high anxiety (Glowacz & Schmits, 2020), we should be cognizant of the increased risk these factors pose, as BIS-related vulnerabilities may be increasing.

One possible explanation for these findings is quest for significance theory, which has been used elsewhere to explain how anxiety can push people toward extreme behaviors and groups (namely, violent extremist groups). BIS functions are closely associated with feelings of meaninglessness, threats to one’s identity, and sensitivity to social and societal rejection, all of which have been advocated as potential motivators for engaging in violent extremist activity. Quest for significance (Kruglanski & Orehek, 2011) presents a model of radicalization centered on the need to achieve personal significance. In this model, involvement with extremist groups is driven by three general factors: a need for significance, a narrative that provides a means to achieve significance, and a network of like-minded individuals who make violence-justifying cognitions seem morally acceptable. The theory holds that central to all action is the desire to “be someone” and to have meaning in one’s life (Kruglanski et al., 2014). These findings are consistent with the relevance and breadth of Kruglanski’s theory of the need for significance and the harm caused by a lack of it. They also reinforce the utility of RST. In this study, we provided preliminary evidence that (a) the processes that underpin radicalization in other domains (e.g., violent extremism) may be a useful lens for understanding the process through which exposure to misinformation turns into action and (b) quest for significance (and affiliated theories such as reactive approach motivation; McGregor et al., 2013) may support our understanding of how exposure to misinformation compels later behavior.

What is especially interesting is that, in both Study 1 and Study 2, the misinformation led to more positive engagement but lower post-exposure activism intentions than the correct information, and information type had no significant effect on radicalism intentions. This finding is critical to our understanding of the impact of misinformation because, in many cases, the information alone is not causing a shift toward extremism. Instead, what is critical, and reinforced here, is that discrete personality factors of the viewer play a key role in the power, if any, of misinformation. This finding is in line with previous research on the impact of exposure to harmful video games (Anderson, 2004) or harmful extremist content (Shortland et al., 2017, 2019), in which the reaction to such material is not homogeneous but shaped by the personality of the viewer. This finding has three important potential implications. First, research on misinformation online can no longer treat viewers as passive and homogeneous but must actively conceptualize and quantify the unique variance associated with viewers. Rather than focusing solely on the existence of misinformation, the online networks that spread it, and its content, there is a pressing need to identify psychological factors, at the state and trait level, that increase an individual’s susceptibility to misinformation. Second, in the realm of prevention, in addition to discussing how to identify and remove misinformation, it is important to think about how we can help individuals avoid being in a state that is permissive to misinformation (i.e., high anxiety). Finally, the fact that the role of BIS was similar in the misinformation and correct information conditions implies that the processes underpinning engagement with misinformation may be similar to those underpinning engagement with correct content. By extension, the pathways identified elsewhere that govern the relationship between exposure to content and violence (e.g., the general aggression model [GAM]; Anderson & Dill, 2000) may also be relevant and applicable to misinformation.

Although these findings are preliminary and, to the authors’ knowledge, the first to explore personality and influence as they relate to misinformation, they have implications for the conversation on technology and harm. Technology is becoming increasingly important in the lives of people across much of the Western world, particularly in the context of the ongoing COVID-19 pandemic. Such technology has been a societal boon, supporting the sharing of knowledge about the COVID-19 outbreak and enabling online connectivity during isolation. Furthermore, younger demographics have increasingly moved their activities online, thereby increasing the rate at which they are exposed to misinformation and/or harmful content (Frissen, 2021; Lee & Leets, 2002). In this context, the solution to misinformation is often viewed through the lens of technological innovation, which frequently manifests as censorship and removal of such material. However, this study reinforces the need to consider the user and the personal and situational factors from the offline world that they bring to the Internet every time they log on. Here, we showed the potential importance of anxiety as a risk factor. As such, one critical pathway to prevention may be to minimize the effect of misinformation by reducing levels of anxiety at the societal level. Yet symptoms of anxiety have doubled during the global pandemic (Racine et al., 2021). In an era of rapid proliferation of both online activity and anxiety, focusing solely on the “technology” side of prevention risks neglecting a critical part of the prevention process: the person.

Limitations

While the results of this study further our understanding of the link between misinformation and violence, several limitations should be highlighted. First and foremost, while the use of MTurk may mimic the viewing experience of those exposed to misinformation (i.e., alone and online), the validity of such sampling tools is still debated within the field. This is especially important given the politically charged and technologically driven nature of misinformation, which means that a range of other factors could be influencing how participants responded. Here, we controlled for age, sex, and political affiliation, but future research also needs to consider factors associated with digital nativism, time spent online, and a range of other variables that may affect how people perceive and engage with misinformation (Bapaye & Bapaye, 2021). Second, although the findings here provide insight into the role of trait BIS, this should not be conflated with insight into the activation states that occurred during exposure. This study focused on viewers’ trait BIS tendencies, not on state BIS (or BAS) activation during direct exposure to the materials. Thus, although these findings are useful in reinforcing the role of BIS traits, the discussion of these findings should not be unintentionally conflated with confirmation that a BIS state was activated at various stages of the process. Accordingly, we strongly support the need for future research to measure state activation of RST processes. Finally, when studying the psychology of radical action in general, especially when considering the role of ideologies and third-party ideological material, it is important to highlight long-standing gaps in our understanding of this process. The Activism Intention Scale (AIS) and Radicalism Intention Scale (RIS) assess readiness to support or participate in legal, nonviolent political action and in illegal or violent political action, respectively (Moskalenko & McCauley, 2009).
However, there is not yet evidence that individual differences in ARIS scores are associated with an increased proclivity to engage in extreme behavior. In fact, many argue that we do not yet truly understand the cognitive process(es) that lead to engagement in violent behavior or the role of extreme cognitions (see Sageman, 2014; Shortland, 2020). This debate limits the breadth of the findings presented here. Although we found that BIS traits affect the degree to which individuals are likely to engage with extreme content online, and the degree to which exposure to this content increases their radicalism and activism, future research is required to establish the role of BIS, and of RST more generally, in the behavioral pathway to violence driven by misinformation. It is also important to note that, methodologically, several of the items used as “misinformation” would now, with hindsight and further analysis, be far harder to classify as such (e.g., the role of the Wuhan laboratory in the origins of the COVID-19 pandemic). While this is a methodological issue, it also reflects a broader challenge with externally defining information as “misinformation”: viewers believe the information to be correct. Hence, from their subjective perspective, they are viewing information, not misinformation. This highlights the importance of acknowledging that, when studying the human process of exposure to misinformation, there is a disconnect between how information is classified by an external audience and how it is perceived at a subjective level by the viewing audience.

Conclusion

Although the “radicalization” of thought and action was traditionally studied within the psychology of terrorism, the term (and therefore the theory) is increasingly being used to discuss radicalized action in a range of domains, including general domestic politics (Benkler et al., 2018) and conspiratorial thinking surrounding current events (e.g., the COVID-19 pandemic and/or the 2020 presidential election; DeCook, 2020). The past few years have shown that individuals are developing increasingly harmful radicalized opinions across a range of ideological niches, with outcomes that pose serious national and international security concerns. Countering the pervasive threat of online misinformation is a multifaceted problem that involves a range of approaches: from advanced technologies that identify and remove such material to general online education. Although much more research is needed in this domain, this article suggests that, in addition to studying the material itself, we must also focus on the person and the unique motivational processes that govern their intention to engage with such material and to be influenced by it. This will not only help us understand why some individuals are so influenced by misinformation that they are willing to engage in extreme behaviors, but also support the development of ethical, humanistic prevention efforts aimed at addressing central psychological processes such as anxiety. This study reinforces the need to think about the human in the loop when it comes to misinformation.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the authorship and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the Department of Defense Minerva Initiative grant “N000141812367” (PI: Shortland).