Abstract

Accurate measurement of sexual violence (SV) victimization is important for informing research, policy, and service provision. Measures such as the Sexual Experiences Survey (SES) that use behaviorally specific language and a specified reference period (e.g., since age 14, over the past 12 months) are considered best practice and have substantially improved SV estimates given that so few incidents are reported to police. However, to date, we know little about whether estimates are affected by respondents’ reporting of incidents that occurred outside of the specified reference period (i.e., reference period errors). The current study explored the extent, nature, and impact on incidence estimates of reference period errors in two large, diverse samples of post-secondary students. Secondary analysis was conducted of data gathered using a follow-up date question after the Sexual Experiences Survey–Short Form Victimization. Between 8% and 68% of rape and attempted rape victims made reference period errors, with the highest proportion of errors occurring in the survey with the shortest reference period (1 month). These errors caused minor to moderate changes in time period-specific incidence estimates (i.e., excluding respondents with errors reduced estimates by up to 7%). Although including a date question does not guarantee that all time period-related errors will be identified, it can improve the accuracy of SV estimates, which is crucial for informing policy and prevention. Researchers measuring SV within specific reference periods should consider collecting dates of reported incidents as best practice.

Keywords

Accurate measurement of sexual violence (SV) victimization is important for informing research, policy, and service provision. The development of self-report measures such as the Sexual Experiences Survey (SES; Koss & Gidycz, 1985; Koss et al., 2007) has substantially improved our ability to measure SV rates given that so few incidents are reported to police and other authorities (Cotter & Savage, 2019). However, measurement characteristics (such as item language and format) and other factors may result in over- or underestimating the prevalence and incidence of SV victimization if a survey does not capture certain types of experiences or captures experiences that occurred outside of the reference period being measured.

A substantial body of literature has been dedicated to assessing and improving existing self-report measures based on evidence about the impact of measurement characteristics on respondent interpretations and resulting SV estimates (see Koss, 1993 for an early review and more recent studies by Abbey et al., 2005; Krebs et al., 2021; Schuster et al., 2020; Tomaszewska et al., 2021). This literature has informed current best practices, including the use of behaviorally specific measures like the SES that ask about detailed scenarios/behaviors rather than using broad and potentially ambiguous labels such as “rape,” “sexual assault,” and “sexual intercourse” that respondents may not identify with (Cook et al., 2011; Koss, 1993; Koss et al., 2007). While this body of work has improved and continues to improve researchers’ ability to determine through self-report measures that an incident occurred (thereby reducing errors related to measure content and construct validity; see Krebs et al., 2021), little research has been conducted to assess and improve our ability to determine whether reported incidents occurred within the time or reference period asked about.

Most self-report SV victimization measures ask respondents to report on incidents that occurred within specific periods of time (e.g., since age 14, in the past 6 or 12 months, or, in the case of longitudinal research, since a previous survey). Although important for accurate prevalence and incidence rates, asking about specific reference periods can introduce additional measurement error as respondents may intentionally or unintentionally report incidents that occurred outside of the reference period asked about (a phenomenon referred to in the current paper as reference period errors). For example, when asked about experiences within the past 12 months, a respondent may report victimization experiences from years prior to that period. Understanding the extent, nature, and impact of these potential errors is important for several reasons. First, given that reference periods are a defining feature of most tools in SV measurement, it is useful to know how common these errors are (extent) across different reference periods to help researchers assess the utility and limitations of those reference periods. Second, understanding what these errors look like (nature) can provide clues as to their cause, which is important information for addressing or preventing them in future research. Finally, reference period errors represent a possible threat to accurate SV measurement. Assessing their impact on SV estimates can aid in interpreting past and future research.

Literature Review: The Extent, Nature, and Impact of Reference Period Errors

Errors related to the misplacing of events in time within retrospective (survey) research have been variously termed and framed in the literature (e.g., Farrell et al., 2002; Gaskell et al., 2000; Krebs et al., 2021; Perrault, 2015) and are sometimes referred to as telescoping, temporal displacement, or time window problems. Telescoping is commonly defined as a form of memory bias wherein a respondent will misremember the timing of events and therefore incorrectly date those events on surveys (Schneider & Sumi, 1981). Forward telescoping is commonly used to describe when survey respondents report events as having happened more recently than was the case, whereas backward telescoping is often used to describe when respondents report events as having happened earlier in time than was the case (Gaskell et al., 2000; Prohaska et al., 1998; Schneider & Sumi, 1981). In the current paper, we use the term reference period errors to refer to the various types of identifiable time-period errors (regardless of cause) made in respondents’ responses.

Little research has examined reference period errors in SV victimization surveys. Most knowledge about reference period errors comes from studies on reports of highly salient (“landmark”) events, including crime (e.g., Gaskell et al., 2000; Schneider & Sumi, 1981). For instance, evidence indicates that some crime victims will inflate or over-estimate the number of crimes that occurred, and disproportionately place crime events in summer months (Schneider & Sumi, 1981). Similarly, Farrell et al. (2002) note that prevalence estimates for repeat victimization are likely underestimations, given that multiple crimes that happened within the same time window are often misremembered as single events in retrospective surveys and interviews. Thus, the misremembering of events can and does impact researchers’ ability to accurately measure victimization, although the prevalence of these types of errors is largely unknown.

In addition to misremembering the timing of past events, explanations for reference period errors in survey research include respondents misunderstanding, misreading, or misremembering survey reference period instructions; choosing to report an experience that occurred outside the reference period in order for that experience to be heard in research; or, in the case of internet and computerized surveys, accidentally clicking a response (Evans et al., 2016; Gaskell et al., 2000; Prohaska et al., 1998). Respondents misreading (or failing to read altogether) survey instructions is a well-established issue that is typically identified by way of attention or manipulation checks (Ward & Pond, 2015). Some victimization research has found that when instructions about the reference period were provided only at the beginning of the survey, respondents often forgot about those instructions and reported on events that occurred outside of the reference period (Evans et al., 2016). Finally, it is conceivable that some respondents might intentionally choose to report on experiences that occurred outside of a survey reference period out of a desire to have an earlier (or later) experience heard and recognized. Indeed, respondents are in control of what they disclose on any self-report survey (Cook et al., 2011), and there is evidence that SV victims often want to share their experiences with others, including media, courts, and researchers (Campbell & Adams, 2009).

To date, we know little about the impact of reference period errors on SV victimization rates (or other types of victimization more generally). While there are some exceptions—such as Cook et al. (2011) who noted that “. . .6% [of responses] were deemed invalid either because the experience was out of the reference period or the respondent did not provide enough details” (p. 209)—existing literature has seldom touched on these issues in discussions of data quality. Krebs et al. (2021) provide the only explicit analysis, to our knowledge, of the impact of reference period errors on SV rates. They found that excluding reported incidents that occurred outside of the 1-year reference period (i.e., reference period errors) reduced the SV incidence rate by 0.3%. Excluding incidents that could not be identified as having occurred within the reference period because no date was provided—though not a reference period error per se—further reduced the rate by another 1.1% (for a total reduction of 1.4%).

Preventing Reference Period Errors

Although reference period errors are a potential source of inflation for estimates of SV victimization, few suggestions for locating and reducing these errors are available. Some research has suggested that bounded interviews—whereby an interviewer confirms the accuracy of survey responses with the respondent and asks about events that have happened since—can reduce the effects of telescoping (Rennison & Schwartz, 2018; Schneider & Sumi, 1981). However, bounded interviews pose an expensive, time-consuming, and often logistically complex demand on researchers (Owens, 2017) and are only possible in very specific survey conditions (Gaskell et al., 2000).

Other methods for reducing reference period errors and their impact on SV estimates require what has been referred to as the two-stage approach to self-report measurement (Cook et al., 2011; Krebs et al., 2021). A one-stage approach assesses victimization in a single stage through specific questions meant to measure certain types of victimization (Cook et al., 2011; Krebs et al., 2021). The two-stage approach, in contrast, uses screening items to determine whether sexual victimization may have occurred (not necessarily used for classification), followed by detailed probing questions to gather further information about what happened from those who reported victimization on the screening items (Krebs et al., 2021). The two-stage approach risks losing respondents in the second stage who “falsely screen out” (thereby increasing the potential for Type II error); however, the second stage can include questions about the nature and timing of reported victimization to ensure that reports fit within the scope and reference period of the survey (thereby reducing the potential for Type I error if researchers exclude reports that occurred outside the scope or reference period; Krebs et al., 2021, p. 1957). The two-stage approach may, therefore, be preferable for researchers who are actively interested in minimizing inflation.

Within the two-stage approach, there are a number of strategies available for probing about the timing of an incident, including open-ended items about the date, calendar instruments, and timeline techniques (e.g., Abbey et al., 2005; Belli, 1998; Krebs et al., 2021). In addition to helping researchers validate that reported incidents occurred within the reference period being asked about (Krebs et al., 2021), these strategies prompt deeper thought about reference periods on the part of the respondent and can, therefore, improve data quality prior to analysis or researcher intervention. For example, these and other aided recall strategies used in retrospective surveys can improve completeness of responses, increase attention to inconsistencies in accounts, improve recall for multiple events as part of a sequence, and increase the ability to differentiate between distinct events (Glasner & Van Der Vaart, 2009).

Indeed, for years researchers have noted that calendar instruments can and do increase the completeness and accuracy of retrospective reports (Glasner & Van Der Vaart, 2009), and the use of calendar or other aided recall procedures (e.g., the timeline follow-back method; see Belli, 1998) has been hypothesized to be helpful for prompting recall of SV victimization. Yet, to date, there remains a shortage of published research that explores the use of self-report tools such as the SES in conjunction with calendar functions or other timeline follow-back procedures (Belli, 1998). Despite their recommended use in SV research (e.g., Abbey et al., 2005; Krebs et al., 2021), follow-up date/calendar items and other aided recall procedures are rarely used in SV studies or assessed for their utility in enhancing recall and reducing reference period errors (Glasner & Van Der Vaart, 2009; Krebs et al., 2021).

However, there are some exceptions, and although not the explicit focus of their studies, some authors have been using calendar tools in conjunction with self-report SV victimization surveys for years. In a recent random sample study conducted by the second author and colleagues (Jeffrey et al., 2023), participants reported on events that happened in a specified reference period and were asked to provide the day, month, and year of attempted and completed rapes. In another example, the second author and colleagues (Senn et al., 2022) conducted a longitudinal program implementation trial evaluating the Enhanced Assess, Acknowledge, Act (EAAA) Sexual Assault Resistance Program (also known as Flip the Script with EAAA™; Senn, 2015). Responses to follow-up calendar/date items in the survey were used to measure sexual assaults that occurred pre- and post-intervention, and assaults that occurred outside of the specified reference periods were excluded. These experiences would have had direct implications for the program evaluation if not excluded since the researchers needed to know how many assaults took place after the intervention in the prevention program group compared to the control group in the same time period.

Although the scope and goals of these two studies were entirely different (a random sample survey of university students versus a longitudinal evaluation of a sexual assault resistance program), both studies explored attempted and completed rape experiences using the Sexual Experiences Survey–Short Form Victimization (SES-SFV; Koss et al., 2007). The authors of both studies were able to use the date information to manually examine and re-code responses that happened outside of the survey reference period, reducing error in victimization estimates. Access to multiple data sources (with different samples and reference periods) using follow-up date/calendar items with the same SV measure provided us with both the impetus and unique opportunity to examine the extent, nature, and impact of reference period errors, and to assess the utility of follow-up date/calendar items for reducing these errors post data collection.

The Current Study

No previous studies, to our knowledge, have qualitatively examined the nature of reference period errors in the SV literature and very few (Cook et al., 2011; Krebs et al., 2021) have provided insight into how common these errors are and what their impact is on SV estimates. The current study fills these gaps using a secondary analysis of data from the two studies described above (both with large and diverse samples of university students): a Single-Timepoint Random Sample Study (Jeffrey et al., 2023) and a Longitudinal Implementation Evaluation of the EAAA sexual assault resistance program (Senn et al., 2022). We examined the following exploratory research questions: (a) What is the extent of reference period errors (i.e., how common are reports of rape and attempted rape victimization outside of different reference periods) in single timepoint and longitudinal self-report surveys using the SES-SFV? (b) What is the nature of these reference period errors (i.e., what do they look like)? and (c) What impact do these reference period errors have on rape and attempted rape victimization incidence estimates?

Method

Samples and Data

The first data source (Single Timepoint Sample) included 80 participants who reported one or more rapes or attempted rapes in the past 12 months from a larger sample of 977 university students of all genders from Jeffrey et al. (2023). The original 977 were those who volunteered to participate from a randomly selected, gender-stratified sample of 2,000 invited via personalized email at one university in southwestern Ontario, Canada. The sample of 977 was diverse in terms of gender (64% women, 35% men, 1% nonbinary), age (17–72; M = 21), ethnicity (59% White/European), and sexual identity (88% heterosexual). The larger sample of 977 was used in the current study only in the analysis of the impact of reference period errors on rape and attempted rape incidence estimates (Research Question 3). The primary sample of 80 rape and attempted rape victims was aged 18–33 (M = 21) and comprised 85% women (14% men, 1% nonbinary), 58% who identified as White/European, and 84% who identified as heterosexual. Participants responded to an online survey that included, among other measures, the SES-SFV measuring SV victimization over the last 12 months. The SES-SFV measures how many times someone committed seven sexual acts (nonpenetrative sexual contact and attempted and completed oral, vaginal, and anal penetration) against the respondent without consent through five possible tactics (two types of verbal coercion, intoxication, threats, and physical force). To help reduce the response burden on those with multiple experiences, those who reported any rapes or attempted rapes (i.e., oral, vaginal, or anal penetration through intoxication, threats, or force) were asked about the date and other information for the experience from the past 12 months that they think about most (for rape and attempted rape separately).

The second data source (Longitudinal Sample) included 108 women who reported one or more rapes or attempted rapes on at least one of the two follow-up surveys in the implementation study. The sample of 108 participants was drawn from a larger sample of 640 university students who were registered to participate in the EAAA program at one of five universities in Canada and had been recruited into the longitudinal evaluation study (Senn et al., 2022).¹ The sample of 640 comprised mostly (96%) self-identified women (as expected given that the program targets women), with 4% identifying as nonbinary or gender fluid, and was diverse in terms of age (17–62; M = 22), race/ethnicity (42% White/European descent), and sexual identity (69% heterosexual). After the intervention, a total of 577 participants remained in the study and completed the one-week follow-up survey and 519 participants completed the 6-month follow-up. The larger samples of 577 and 519 were used only in the analysis of the impact of reference period errors on rape and attempted rape incidence estimates (Research Question 3). The primary sample of 108 rape and attempted rape victims was aged 17–34 (M = 22) and comprised 98% women (2% nonbinary or gender fluid), 51% who identified as White/European descent, and 67% who identified as heterosexual.

Participants in the Longitudinal Sample² were sent a link to an online survey that included the SES-SFV measuring SV victimization (among other measures) at three timepoints: (a) one week before the program was scheduled (baseline); (b) one week after the program finished (first follow-up); and (c) 6 months after the program (second follow-up). Only the two follow-up surveys, which asked about victimization since the previous survey, were used in the current analysis because the baseline survey did not include a follow-up date question. The reference periods asked about in each follow-up survey were “since the last survey” (i.e., since the baseline survey for the first follow-up and since the first follow-up survey for the second follow-up). Because participants did not always complete the surveys promptly, the exact reference period varied for each participant depending on the date they completed each survey. Accordingly, the reference period ranged from 1 to 36 weeks (Mdn = 2.71, M = 3.77, SD = 3.85) for the first follow-up and from 11 to 47 weeks (Mdn = 25.86, M = 26.15, SD = 6.42) for the second follow-up. Given the means, we refer to these as 1-month and 6-month reference periods. Those who reported any rapes or attempted rapes were asked about the date and other information for the first and last experience (for each oral, vaginal, or anal rape and attempted rape through intoxication, threats, or force separately).

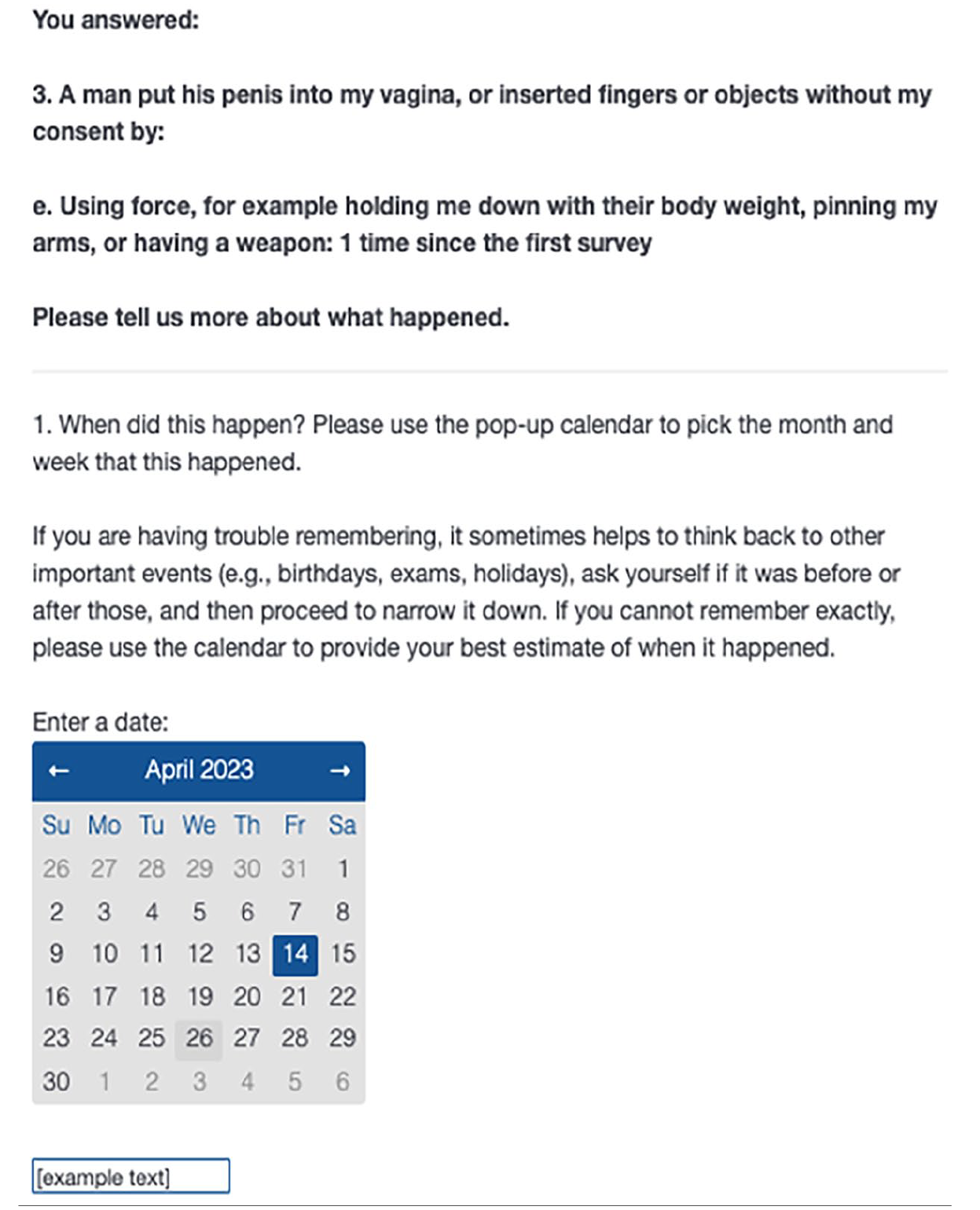

The follow-up date questions in both studies (adapted from Belli’s [1998] timeline follow-back method) repeated the reference period and allowed respondents to either type the date (or any other text) in a textbox or find and select the exact date (day, month, year) in a visual, monthly calendar provided in the Qualtrics survey platform (see Figure 1). In the Longitudinal Study, the date question(s) appeared after every rape or attempted rape report (before the respondent saw the next SES item). In the Single Timepoint Study, the date question(s) appeared at the end of the SES. The Longitudinal Sample study allowed for the dates of several experiences (depending on how many rape or attempted rape experiences they had had), whereas the Single Timepoint Sample study allowed for the date of up to one rape and one attempted rape per participant. Participants in both studies received gift cards ($25 or $30) for survey completion.

Figure 1. Example follow-up date question from the longitudinal study.

Secondary Data Analysis

All secondary analyses were conducted by the first author, who began by compiling the responses of participants from both studies who reported one or more experiences of rape or attempted rape on the SES-SFV (80 and 108 victims, respectively). She then manually cross-checked the dates reported in the follow-up date questions with the reference period asked about on each survey, taking into account the date that each respondent responded to the survey. For the Single Timepoint Sample, she identified the date exactly 12 months prior to each participant’s survey completion date to identify and code those who had reported at least one rape or attempted rape date outside of the 12-month reference period asked about. For the two Longitudinal Sample follow-ups, she identified the date of each participant’s previous survey to identify and code those who had reported at least one rape or attempted rape date outside of the reference period asked about.
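The cross-check described above was done manually, but its logic can be sketched in a few lines of Python. This is an illustrative sketch only, not the authors' procedure: the function names, the category labels, the inclusive treatment of boundary dates, and the example dates are all assumptions.

```python
from datetime import date
from typing import Optional


def twelve_months_before(survey_date: date) -> date:
    """Same calendar day one year earlier (Feb 29 maps to Feb 28)."""
    try:
        return survey_date.replace(year=survey_date.year - 1)
    except ValueError:  # survey completed on Feb 29 of a leap year
        return survey_date.replace(year=survey_date.year - 1, day=28)


def classify_report(incident: Optional[date], period_start: date,
                    survey_date: date) -> str:
    """Compare one reported incident date with a respondent's own reference period.

    Assumption: a date equal to either boundary counts as within the period.
    """
    if incident is None:
        return "non_dated"        # no usable date was provided
    if incident < period_start:
        return "before_period"    # reference period error
    if incident > survey_date:
        return "future_date"      # date had not yet passed at the survey
    return "within_period"


# Hypothetical respondent who completed the 12-month survey on 15 March 2020.
completed = date(2020, 3, 15)
start = twelve_months_before(completed)
labels = [
    classify_report(date(2019, 11, 2), start, completed),
    classify_report(date(2018, 12, 1), start, completed),
    classify_report(None, start, completed),
]
print(labels)
```

For the longitudinal follow-ups, `period_start` would instead be the date on which the respondent completed the previous survey.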

To assess the extent of reference period errors (Research Question 1), the first author counted the number of victims who reported at least one rape or attempted rape that was outside of each reference period (12-, 6-, and 1-month). This included those who provided exact dates that were outside the reference period and those who provided open-ended imprecise dates (e.g., “in September,” “Valentine’s Day,” “December 2018”) only if those dates clearly fell outside the reference period. Dates without a year (e.g., “Valentine’s Day”) were assumed to pertain to the current calendar year and were assessed against the relevant reference period accordingly (e.g., someone reporting a rape “in September” was only counted as having made a reference period error if the reference period did not include September). She also counted the number of victims with at least one non-dated rape or attempted rape; that is, those who reported experiences that could not be identified as having occurred within or outside the reference period because no date at all was provided (i.e., no response to the date question or an open-ended indication of not knowing the date). Chi-square tests of independence, conducted in IBM SPSS Statistics (Version 28), were used to compare the proportion of victims with reference period errors and non-dated experiences between the 12-month and each of the other two reference periods.³
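For readers who want to see what such a comparison involves, the following Python sketch computes a Pearson chi-square test of independence (no continuity correction) and Cramér's V for a 2 × 2 table. The analyses themselves were run in SPSS; this function is only an illustration. The cell counts are hypothetical: they were chosen to be consistent with the percentages reported in the Results (7.5% of 80 victims for the 12-month period and 27.9% for the 6-month period) and are not taken from the authors' data. The 6-month group size of 61 in particular is an assumption.

```python
import math
from typing import Tuple


def chi_square_2x2(a: int, b: int, c: int, d: int) -> Tuple[float, float, float]:
    """Pearson chi-square (df = 1), p value, and Cramér's V for the table

                 error   no error
        group 1    a        b
        group 2    c        d
    """
    n = a + b + c + d
    chi2 = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    # Upper-tail probability of a chi-square variable with 1 degree of freedom.
    p = math.erfc(math.sqrt(chi2 / 2))
    # For a 2 x 2 table, Cramér's V reduces to sqrt(chi2 / n).
    v = math.sqrt(chi2 / n)
    return chi2, p, v


# Hypothetical counts: 6 of 80 victims with errors (12-month)
# versus 17 of 61 victims with errors (6-month).
chi2, p, v = chi_square_2x2(6, 74, 17, 44)
print(f"chi2 = {chi2:.2f}, p = {p:.4f}, V = {v:.2f}")
```

With these illustrative counts, the statistic and effect size come out close to the values reported for the 12-month versus 6-month comparison, which suggests the reported tests were uncorrected Pearson chi-squares.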

To assess the nature of reference period errors (Research Question 2), the first author qualitatively and inductively examined the context surrounding each report of a rape or attempted rape outside the reference period. She examined open-ended responses about these incidents (including additional detail provided by some participants within the date question textbox) and other incidents reported by the same participant within the same survey or another survey (in the case of the Longitudinal Sample). After reading the responses, she developed an initial set of codes pertaining to the types and nature of reference period errors (e.g., evidence of accidental clicking and double reporting of the same incident, evidence that an incident should have been reported on a previous survey, a future date reported). She coded each respondent for the presence of each theme or characteristic, refining the codes as she went, and finally reviewed the complete dataset and coding structure and examined the codes across the three reference periods. She also manually calculated how far outside of the reference period each error was.

Finally, to assess the impact of reference period errors on rape and attempted rape incidence estimates (Research Question 3), she analyzed the change (in percentage points) in the rape/attempted rape incidence rate for each of the three reference periods after removing (a) respondents who made at least one reference period error, and (b) remaining respondents who reported at least one rape/attempted rape without a date.
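The arithmetic behind this step can be illustrated with a short Python sketch. It assumes that the change is expressed as a difference between two rates in percentage points and that removed respondents stay in the denominator as non-victims; both are interpretive assumptions. The sample sizes (80 victims among 977 respondents) come from the Method, whereas the number of respondents removed (6) is a hypothetical value consistent with 7.5% of 80.

```python
def incidence_rate(n_victims: int, n_sample: int) -> float:
    """Incidence as a percentage of the full sample."""
    return 100 * n_victims / n_sample


def rate_reduction(n_victims: int, n_removed: int, n_sample: int) -> float:
    """Drop in the incidence rate, in percentage points, after reclassifying
    n_removed respondents (e.g., those with reference period errors)."""
    before = incidence_rate(n_victims, n_sample)
    after = incidence_rate(n_victims - n_removed, n_sample)
    return before - after


# Single Timepoint Sample: 80 victims among 977 respondents (from the Method).
# The 6 removed respondents are an assumption (7.5% of 80).
original = incidence_rate(80, 977)
drop = rate_reduction(80, 6, 977)
print(round(original, 1), round(drop, 1))
```

Dropping the removed respondents from the denominator as well would give a slightly different figure, so the denominator choice should be stated when reporting corrected rates.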

Results

Research Question 1: What Is the Extent of Reference Period Errors (i.e., How Common are Reports of Rape and Attempted Rape Victimization Outside of Different Reference Periods)?

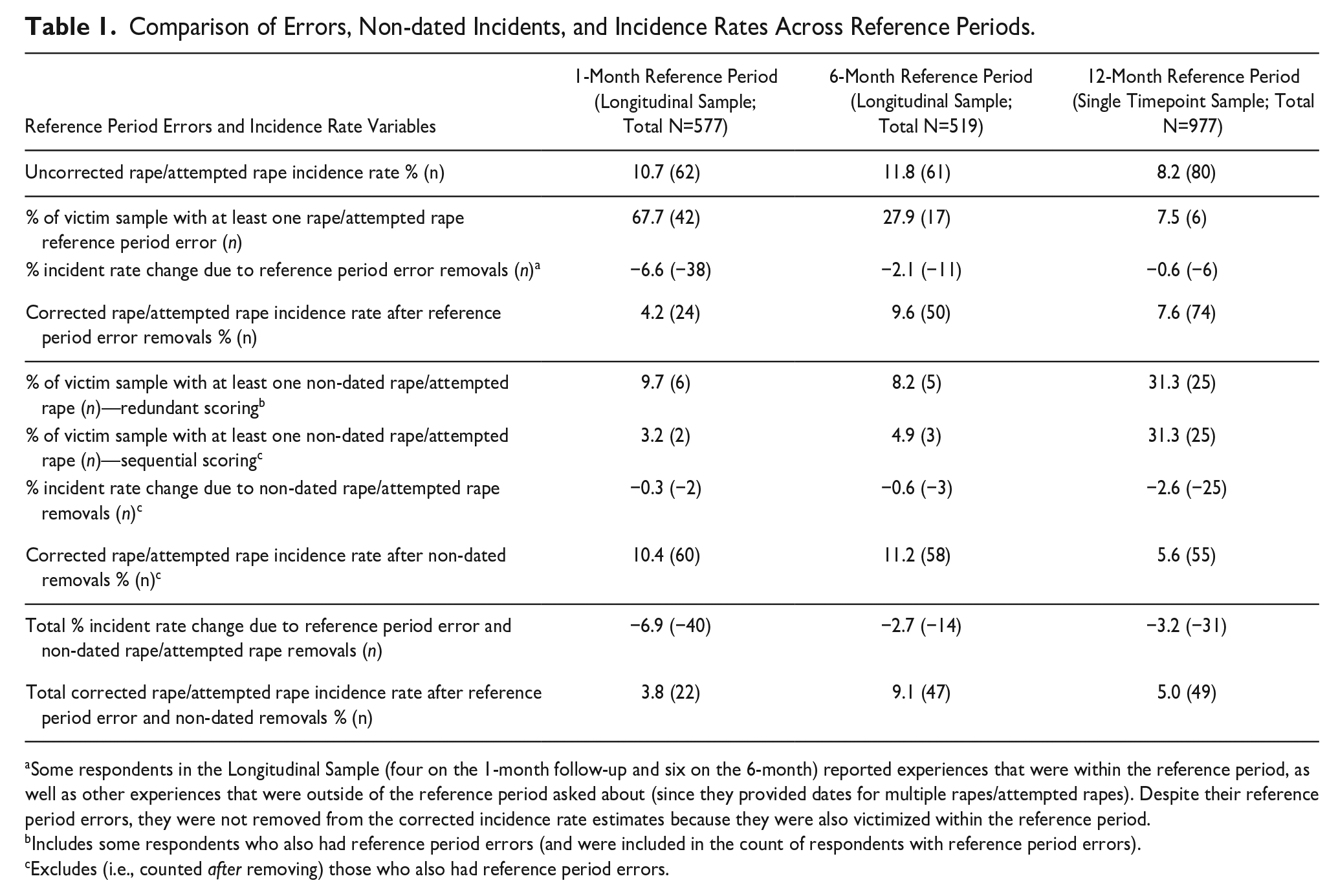

Across the three reference periods, 7.5%–67.7% of victims reported experiences with dates that occurred outside of the reference period asked about (i.e., reference period errors; see Table 1). Significantly fewer respondents made reference period errors in the 12-month reference period (Single Timepoint Sample; 7.5%) than in the 6-month reference period (27.9%; χ2 = 10.52, p < .01, Cramer’s V = .27) and the 1-month reference period (67.7%; χ2 = 56.65, p < .01, Cramer’s V = .63). Put simply, the longer the reference period, the fewer reference period errors were present in that survey, and the highest proportion of errors was found in the survey with the shortest reference period.

Table 1. Comparison of Errors, Non-dated Incidents, and Incidence Rates Across Reference Periods.

Some respondents in the Longitudinal Sample (four on the 1-month follow-up and six on the 6-month) reported experiences that were within the reference period, as well as other experiences that were outside of the reference period asked about (since they provided dates for multiple rapes/attempted rapes). Despite their reference period errors, they were not removed from the corrected incidence rate estimates because they were also victimized within the reference period.

Includes some respondents who also had reference period errors (and were included in the count of respondents with reference period errors).

Excludes (i.e., counted after removing) those who also had reference period errors.

Moreover, 8.2%–31.3% of participants reported at least one rape or attempted rape without providing any date at all (3.2%–31.3% if counted after removing those who also had reference period errors; see Table 1). Non-dated experiences were distinct from reference period errors in that we could not confirm whether or not they occurred within the reference period. Some of these respondents provided no response at all to the follow-up date question and others explained in the open-ended textbox that they were unsure of the date (e.g., “I don’t remember the date”). In the latter case, they confirmed that an assault happened but could not provide a date. The chi-square comparison results were reversed for non-dated experiences: significantly more respondents reported experiences without dates in the 12-month reference period (31.3%) than in the 6-month reference period (8.2%; χ2 = 10.98, p < .01, Cramer’s V = .28), and in the 1-month reference period (9.7%; χ2 = 9.53, p = .002, Cramer’s V = .26). Thus, the longer the reference period, the greater the number of respondents who did not provide a date for their experiences.

Research Question 2: What Is the Nature of Reference Period Errors (i.e., What Do They Look Like)?

We identified four types of reference period errors (excluding non-dated experiences): double reports, reports made on the incorrect survey, reports with future dates, and accidental clicking.⁴ Double reports and reports made on the incorrect survey were errors that could only be explored and identified in the Longitudinal Sample because it was possible to cross-reference a respondent’s reports across multiple surveys. Double reports occurred when a participant reported the same rape/attempted rape incident (with the same date) on both the 1- and 6-month follow-ups, even though the reference periods were mutually exclusive. More than half (58.9%, n = 33) of respondents with errors in the Longitudinal Sample made double reports. We also found reports made on the incorrect survey among the Longitudinal Sample; that is, reports that had dates outside of the reference period being asked about on that survey but not outside the time frame of all study surveys. In other words, the incidents should have instead been reported on a previous survey as they occurred within that time frame but were not. For example, a respondent may have reported a rape on the 6-month follow-up that should have instead been reported on the 1-month follow-up but was not. Roughly 20% (n = 11) of respondents with errors in the Longitudinal Sample made reports on the incorrect survey.
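The distinction between these two longitudinal error types can be expressed as a simple decision rule. The Python sketch below is a hypothetical illustration, not the coding scheme actually applied (which was qualitative and manual). It assumes that a double report is recognized by an identical date on an earlier survey and that the "time frame of all study surveys" begins at the baseline survey date. All names and example dates are invented.

```python
from datetime import date
from typing import Set


def classify_followup_error(incident: date, period_start: date,
                            study_start: date, earlier_reports: Set[date]) -> str:
    """Label a date reported on a later follow-up survey.

    period_start    -- date the respondent completed the previous survey
    study_start     -- date of the baseline survey (assumed start of the study window)
    earlier_reports -- incident dates the same respondent gave on earlier surveys
    """
    if incident >= period_start:
        return "not_before_period"       # not an early-date error
    if incident in earlier_reports:
        return "double_report"           # same incident reported twice
    if incident >= study_start:
        return "incorrect_survey"        # belonged on an earlier follow-up
    return "before_study_surveys"        # predates every follow-up window


# Hypothetical respondent: baseline on 1 Sep 2019, first follow-up completed
# on 25 Sep 2019, so the second follow-up asks about 25 Sep 2019 onward.
baseline = date(2019, 9, 1)
first_followup = date(2019, 9, 25)
reported_earlier = {date(2019, 9, 10)}

examples = [
    classify_followup_error(date(2019, 9, 10), first_followup, baseline, reported_earlier),
    classify_followup_error(date(2019, 9, 12), first_followup, baseline, reported_earlier),
    classify_followup_error(date(2015, 6, 1), first_followup, baseline, reported_earlier),
]
print(examples)
```

Matching on exact dates is a deliberately strict criterion; approximate or text-only dates would still need the kind of manual review described in the Method.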

Reports with future dates and accidental clicking were much less common but could be (and were) identified in both the Longitudinal and Single Timepoint Samples. Five respondents (8.1% of the 62 with reference period errors across the Longitudinal and Single Timepoint Samples) reported one or more rapes/attempted rapes with dates in the future (i.e., dates that had not yet passed at the time of the survey). Finally, six respondents (9.7% of those with reference period errors) indicated in the open-ended feature in the follow-up date question that they must have accidentally clicked something on a previous survey page because they had not experienced a rape/attempted rape (e.g., “That did not happen. I hit the button by mistake”), or not within the timeframe asked about (e.g., “This happened a long time ago, not within the last 6 months”). Although not a reference period error per se (given that these respondents generally indicated not having had an experience at all), we have included accidental clicking errors in our analysis because they were only identifiable by way of the open-ended date (and other) follow-up items.

In addition to helping us identify some of the reference period errors above (e.g., accidental clicking), the open-ended responses to the follow-up date question provided additional insights into the nature or reason for reference period challenges and errors. Some respondents provided text responses with approximate dates (e.g., “Valentine’s Day” or “Labor Day weekend” with no year, or a date with an indication of uncertainty or approximation such as “I think” or “ish”). Others appeared to respond about multiple experiences, which they were unable to capture in a single calendar day (e.g., “This all happened from October to February”). As noted, we counted imprecise dates as errors only if they clearly fell outside the reference period asked about. Regardless, these types of responses suggest that victims sometimes report multiple experiences at once, are sometimes uncertain about the exact date of an event, and sometimes use holidays and other notable calendar events as memory markers (at least when prompted).

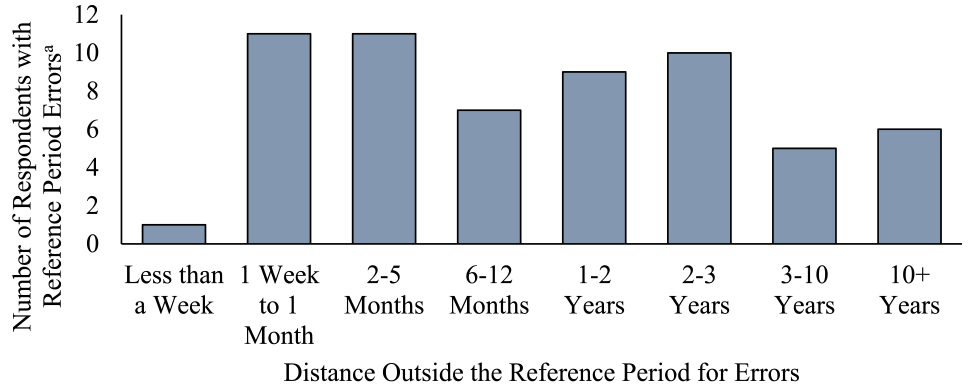

Lastly, we explored how far outside of the reference period each error was. Of respondents with errors for which a specific date was provided (i.e., excluding those with accidental clicking and unknown date errors), 20% reported incidents with dates within one month of the start of the reference period asked about. Half (50%) reported incidents with dates more than a year outside of the reference period, and 10% provided dates more than a decade outside of the reference period. See Figure 2.

Figure 2. Distance outside of the reference period for errors in both samples (N = 60).

Research Question 3: What Impact Do Reference Period Errors Have on Rape and Attempted Rape Victimization Incidence Estimates?

Table 1 presents the changes to rape/attempted rape incidence rates after sequentially removing respondents with any reference period errors and then those with non-dated incidents. Removing participants with any reference period errors reduced (a) the 12-month rape/attempted rape incidence rate by 0.6%, (b) the 6-month rate by 2.1%, and (c) the 1-month rate by 6.6%. Removing those with non-dated incidents reduced the incidence rates by an additional 2.6%, 0.6%, and 0.3% for the 12-, 6-, and 1-month reference periods, respectively. The largest total reduction (6.9%) was in the survey with the shortest (1-month) reference period, followed by the 12-month (3.2%) and 6-month (2.7%) reference periods. Thus, not only was the 1-month reference period subject to the highest proportion of reference period errors compared to other reference periods, but those errors also affected the SV victimization incidence estimates to the greatest extent. Importantly, in the Longitudinal Sample we were able to identify 10 respondents (four on the 1-month follow-up and six on the 6-month follow-up) who provided dates for multiple rapes/attempted rapes, some of which were within the reference period and some of which were outside of it. Because these respondents had also been victimized within the reference period in question, their reference period errors did not affect the incidence estimates.
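The totals reported above follow from adding the two sequential reductions for each reference period. The short Python sketch below reproduces that arithmetic using only the percentages stated in the text; the variable names are illustrative, and the underlying counts from Table 1 are not used.

```python
# Reductions in incidence estimates as stated in the text (in percent),
# keyed by reference period length in months.
error_reduction = {12: 0.6, 6: 2.1, 1: 6.6}     # removing reference period errors
nondated_reduction = {12: 2.6, 6: 0.6, 1: 0.3}  # then removing non-dated incidents

# Total reduction per reference period, rounded to one decimal place.
total_reduction = {
    months: round(error_reduction[months] + nondated_reduction[months], 1)
    for months in error_reduction
}

# List reference periods from largest to smallest total reduction.
for months in sorted(total_reduction, key=total_reduction.get, reverse=True):
    print(f"{months}-month reference period: {total_reduction[months]}%")
```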

Discussion

Surveys that ask respondents to report on incidents that happened within specific periods of time (e.g., since age 14, in the last 6 or 12 months) are common in SV victimization research. These reference periods help researchers estimate the prevalence and incidence of victimization. Yet the extent to which participant responses are prone to reference period errors (i.e., reports of incidents from outside of the survey reference period) is typically unknown. In this study, we investigated the extent and nature of these errors, and their impact on incidence rates, using secondary data from two separate data sources that used the SES.

The two studies from which the data were drawn for our secondary analysis first asked participants about victimization within a specified time period using the SES and then asked about the date of rapes and attempted rapes using a calendar and supplementary open-ended response option (i.e., two-stage approach). Cross-referencing the dates reported with the specific reference period asked about allowed us to examine the extent (or prevalence) of reference period errors in SV victimization surveys with different reference periods. We found that 7.5%–67.7% of rape and attempted rape victims made reference period errors, with an additional 3.2%–31.2% reporting at least one non-dated experience. Contrary to past research that has found that longer reference periods are associated with a higher degree of error in estimating the incidence of intimate partner violence victimization and health care use (Kjellsson et al., 2014; Yoshihama et al., 2005), we found that the survey with the shortest reference period (~1 month) had the highest proportion of reference period errors, while the survey with the longest reference period (12 months) had the lowest proportion of reference period errors. In other words, the longer the reference period, the fewer errors there were. In contrast, more participants did not provide dates at all in the longest (12-month) reference period. This pattern might indicate that errors on longer reference period surveys are more commonly due to not remembering a precise date (explaining the non-dated experiences) and errors on shorter reference period surveys are more commonly due to other issues such as wanting to report events that happened longer ago (explaining the prevalent double reports and reports on the incorrect survey).
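The cross-referencing step described above can be illustrated with a minimal Python sketch. It is not the procedure used in the original studies: the function name `classify_report`, its category labels, and the example dates are hypothetical, and the sketch assumes the reference period runs from a known start date through the survey completion date.

```python
from datetime import date
from typing import Optional


def classify_report(incident: Optional[date], period_start: date, survey_date: date) -> str:
    """Compare one reported incident date with the reference period.

    Assumes the reference period runs from period_start through
    survey_date (inclusive). The labels are illustrative only.
    """
    if incident is None:
        return "non_dated"      # respondent gave no usable date
    if incident > survey_date:
        return "future_date"    # date had not yet passed at survey time
    if incident < period_start:
        return "before_period"  # incident predates the reference period
    return "within_period"


# Hypothetical 6-month reference period ending on the survey date.
start, surveyed = date(2020, 1, 15), date(2020, 7, 15)
labels = [
    classify_report(date(2020, 3, 2), start, surveyed),
    classify_report(date(2018, 11, 20), start, surveyed),
    classify_report(date(2020, 9, 1), start, surveyed),
    classify_report(None, start, surveyed),
]
print(labels)
```

Keeping non-dated reports as their own category, rather than treating them as errors, mirrors the distinction the analysis draws between reference period errors and non-dated experiences.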

Next, we provided insight into the nature of reference period errors. We identified four types of reference period errors (excluding non-dated experiences). First, double reports (made by more than half of respondents with errors in the Longitudinal Sample) occurred when a participant reported the same incident (with the same date) on two follow-ups with mutually exclusive reference periods. Second, reports made on the incorrect survey (made by about 20% of respondents with errors in the Longitudinal Sample) occurred when participants erroneously made reports on one follow-up survey that should have instead been made on another. This finding aligns with past research which has found that some reports of SV victimization are inconsistent over time, as respondents’ recall, understanding, and labelling of what happened changes (Rowlands et al., 2021; Shachar & Eckstein, 2007). Third, reports with future dates (i.e., when participants reported experiences with dates that had not yet passed at the time of the survey) were made by 8% of respondents with errors across both samples. Finally, when asked follow-up questions about their experiences, 10% of respondents with errors used an open-ended response option to indicate that they accidentally clicked something and had not experienced a rape/attempted rape within the specified reference period. In the case of future dates, it is possible that respondents chose dates at random or without paying close attention to the month, or meant to indicate a date from the previous year. Similar inattention could explain rapes with unknown dates and accidental clicking. We also explored how far outside of the reference period each error was and found that, while some respondents reported on experiences that happened days or weeks outside of the time period asked about, many respondents provided survey responses about events that took place years or decades outside of the survey time period.
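As one illustration of how double reports might be flagged in longitudinal data, the sketch below groups reported incident dates by respondent and looks for the same date appearing on more than one follow-up. The record layout (`respondent_id`, `wave`, `incident_date`) and the function name are assumptions made for illustration, not the coding scheme of the original studies.

```python
from collections import defaultdict
from datetime import date


def find_double_reports(reports):
    """Return {respondent_id: [dates reported on more than one wave]}.

    `reports` is an iterable of (respondent_id, wave, incident_date)
    tuples; this layout is hypothetical.
    """
    waves_by_incident = defaultdict(set)
    for respondent_id, wave, incident_date in reports:
        waves_by_incident[(respondent_id, incident_date)].add(wave)

    doubles = defaultdict(list)
    for (respondent_id, incident_date), waves in waves_by_incident.items():
        if len(waves) > 1:  # same date reported on two or more follow-ups
            doubles[respondent_id].append(incident_date)
    return {rid: sorted(dates) for rid, dates in doubles.items()}


example = [
    ("A", "1-month", date(2019, 10, 5)),
    ("A", "6-month", date(2019, 10, 5)),  # same incident reported again
    ("B", "6-month", date(2020, 2, 14)),
]
flagged = find_double_reports(example)
print(flagged)
```

Matching on identical dates is a simplification; approximate or open-ended dates would still need manual review of the kind described in the text.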

In addition to helping us identify some of the reference period errors above, the open-ended responses to the follow-up date question provided additional insights into the nature or reason for reference period challenges and errors. Indeed, this feature (in addition to the calendar feature) appears to have helped many respondents convey additional information and nuance about the timeline of their experiences, particularly when they had multiple experiences or were unable to capture experiences in a single calendar day. Approximate dates manually generated from the open-ended responses (e.g., “Labor Day weekend”) helped the researchers in the original studies reduce error and avoid excluding participant reports due to missing specific date information. Based on this insight, we recommend that researchers include an open-ended textbox to complement closed-ended date or calendar items.

Finally, we explored the impact of reference period errors and non-dated experiences on incidence estimates. Excluding participants with reference period errors reduced SV estimates by 0.6%–6.6%, with the greatest impact on the 1-month reference period survey. These findings broadly indicate that future researchers using surveys with short reference periods should be particularly attentive to the potential inflation of SV estimates caused by time period-related errors. Excluding participants with non-dated experiences reduced SV estimates by an additional 0.3%–2.6%, with the greatest impact on the 12-month reference period survey.

Each specific type of reference period error can also have individual impacts on SV estimates. Double reports by participants in longitudinal designs, for example, might inflate the estimate of how many SV experiences those participants have had. Double reports might also inflate the overall incidence estimate in the subsequent time period if the rates are not adjusted accordingly. Reports made on the incorrect survey could inflate the SV estimate on one survey but deflate it on the other survey where it was missed. Reports of future dates and accidental clicking could leave the SV estimate unchanged or inflate it for the specified period if the true date was outside of the reference period. Using survey technology that can limit the calendar function to the specified time period (i.e., beginning the day after the last survey was completed and not allowing future dates) may address some, but not all, of these issues. Unless longitudinal studies include additional questions to allow reporting of incidents that were “missed” in earlier surveys, technological solutions that prevent reference period errors may create other issues for victims.
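The calendar restriction described above could be expressed as a simple bounds check. This is a sketch under stated assumptions: the helper names are hypothetical, no particular survey platform's API is implied, and the earliest selectable day is taken to be the day after the previous survey was completed.

```python
from datetime import date, timedelta


def selectable_range(last_survey_completed: date, today: date):
    """Earliest and latest dates a restricted calendar would allow."""
    earliest = last_survey_completed + timedelta(days=1)
    return earliest, today  # latest allowed day is today, so no future dates


def is_selectable(candidate: date, last_survey_completed: date, today: date) -> bool:
    """True if the candidate date falls inside the restricted calendar."""
    earliest, latest = selectable_range(last_survey_completed, today)
    return earliest <= candidate <= latest


previous, now = date(2020, 1, 10), date(2020, 7, 12)
print(is_selectable(date(2020, 1, 10), previous, now))  # day of the last survey
print(is_selectable(date(2020, 4, 1), previous, now))   # inside the period
print(is_selectable(date(2020, 8, 1), previous, now))   # future date
```

A restriction of this kind would also block legitimate reports of incidents missed on earlier surveys, which is why a separate item for such reports may be needed.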

Study Strengths and Limitations

The samples used in the current secondary analysis were large and diverse in terms of gender, race, age, sexuality, and other demographics. Most previous research on SV victimization, including one of the only previous studies to look at SV reference period errors (Krebs et al., 2021), includes only women. The Single Timepoint Sample used in the current project (n = 977) included participants of all genders and roughly represented the undergraduate population from which it was drawn (Jeffrey et al., 2023). The Longitudinal Sample (n = 577) included a diverse sample of women students from multiple Canadian universities.

The use of two sources of data with different sampling designs and reference periods, but consistent (and best practice) data collection methods for accurately identifying SV victimization, also allowed for greater triangulation and generalization. Both studies used the SES-SFV (validated for diverse respondents; Canan et al., 2020; Cecil & Matson, 2006), the modified timeline follow-back procedures in the date questions (see Belli, 1998), consistent instructions and calendars, and an open-ended feature to maximize the chances of catching errors and to improve overall reporting accuracy. In addition, the 12-month reference period from the Single Timepoint Sample is particularly important because it is one of the most common reference periods used in SV research (e.g., Koss et al., 2007), and findings from our secondary analysis of the Longitudinal Sample data also provide important insight into participant responses over time. The findings from this study complement previous studies which have examined how SV reports change over time (e.g., Rowlands et al., 2021; Shachar & Eckstein, 2007). Inconsistencies in reports over time are often linked to a concept called temporal discretion (Cook et al., 2011), which refers to respondents’ ability to discriminate between two mutually exclusive time frames when answering (e.g., to discriminate between events that occurred in the summer versus the winter, or 2 weeks ago versus 2 months ago; Koss et al., 2007). However, there has yet to be any published research using the SES to explore how errors related to temporal discretion affect SV incidence estimates (Anderson et al., 2017).

Despite these advantages, comparing differing study designs (i.e., a random sample survey and a longitudinal evaluation of a sexual assault prevention program) comes with inherent discrepancies. First, there may have been different levels of participant investment, and therefore time and effort, in the research. Had this been a major influence, however, we would have expected fewer (not more) reference period errors in the Longitudinal Sample. Second, the Longitudinal Sample had more opportunity to make reference period errors than the Single Timepoint Sample because they were allowed to report on multiple rape and attempted rape experiences on each of the follow-ups, while respondents in the Single Timepoint Sample were only asked to provide a single date for the rape and a single date for the attempted rape they think about most. We opted to include all experiences reported by the Longitudinal Sample (rather than selecting only one rape and one attempted rape, rendering the number of reports more equivalent) because we had no way of knowing which experiences were most salient, and this could have introduced an additional confound. In acknowledgement of this, we counted the number of victims with errors rather than the total number of errors. Nevertheless, it is possible that our finding that more errors were made in the 1- and 6-month reference periods (by the Longitudinal Sample) was impacted by this methodological difference. Replication is therefore needed with more parallel study designs. There was also some variation in the number of weeks included in each participant’s reference period in the Longitudinal Sample given that not all completed their surveys promptly when sent. Thus, our labeling and comparison of the 1- and 6-month reference periods may reflect reference periods that fall outside of those windows. Accordingly, these labels are subject to a degree of measurement error. Nevertheless, individual reference periods were non-overlapping and most participants’ reference periods clustered around 1 and 6 months, as indicated by the means and medians.

Our findings also may not apply outside of university populations or may differ across different groups (e.g., gender, race, sexuality) who might interpret SV survey items differently. While there are concerns about the under-reporting of violence against men (Bullock & Beckson, 2011) and racialized groups (Tajima, 2021), there is no theoretical or empirical evidence to suggest that there might be differences in reference period errors based on gender, racial, or ethnic group membership. It is possible, however, that for certain groups the timing of victimization events may be more or less salient. These questions might be of interest to future researchers who are exploring group differences in relation to memory of traumatic incidents, for example.

Additionally, the follow-up date/calendar question used in our samples (and that we recommend for future use) cannot catch all reference period errors and does not guarantee that all respondents will respond or that responses will be accurate. Participants might mistakenly provide a date that is outside of the survey reference period even if the assault truly happened within the right period. Reducing error from this type of mistake would be possible with survey calendars that allow restriction to specific time periods, or through triangulation of additional sources of data, such as follow-up interviews with participants (Gaskell et al., 2000). Finally, although the SES measures a range of experiences including coercive acts, we only analyzed calendar data from rapes and attempted rapes.

Recommendation to Include a Follow-up Date Question: Implications and Considerations

Accurate and precise measurement of SV victimization is important for informing policy and prevention initiatives. Some research designs use mutually exclusive reference periods to measure SV victimization incidence. In other designs (e.g., program evaluations), it is critical to understand whether assaults occurred pre- or post-intervention or during participants’ time in a particular context (e.g., university). In each of these cases, collecting data on the dates of reported incidents is recommended. A calendar or other follow-up date question allows researchers to establish whether reference period and related errors occurred and adjust SV estimates accordingly. Researchers should make decisions on a case-by-case basis about which types of date-related errors to exclude. For instance, participants should normally be permitted to skip survey questions including follow-up date questions and, therefore, non-dated victimization reports should not necessarily be excluded from victimization rates (and were not in any of the original studies published by the research teams; e.g., Jeffrey et al., 2023; Senn et al., 2015).

Inclusion of follow-up date questions fits within existing best practices in SV measurement, such as the two-stage approach to self-report surveys (Krebs et al., 2021). Asking about the date of victimization events can prompt deeper thinking about the survey questions and the reference period being asked about (Glasner & Van Der Vaart, 2009; Krebs et al., 2021) and provides participants with an opportunity to notice and indicate if they had accidentally clicked something earlier in the survey. The calendar feature might also help participants who have been repeatedly victimized organize multiple experiences on a single timeline (Abbey et al., 2005). Lastly, while the visual element of the calendar has been found to improve overall data completeness in other general surveys (Glasner & Van Der Vaart, 2009), evidence from this analysis indicates that the open-ended feature complements a closed-ended calendar.

Overall, this secondary analysis demonstrates that follow-up date questions can improve data accuracy, although their inclusion does increase demand on researchers somewhat, as they must then manually cross-reference each datapoint to locate any reference period errors. It also increases demand on participants, who must respond to additional questions about their traumatic experiences. Increased burden can impact the likelihood of dropout or item nonresponse (Krebs et al., 2021). However, overburdening participants is an issue with respect to all types of follow-up questions (see Cook et al., 2011) and is not limited to date questions specifically. Researchers can also limit the burden of follow-up questions by only asking about a subset of experiences (e.g., rape) rather than all types of SV victimization. For survey designs that do not require a specific date, researchers might consider simply asking participants to confirm that an event occurred between two specific dates. This approach would reduce the complexity of the reflection task for participants and the data coding for researchers.

Future Research

Future researchers should explore through experimental or quasi-experimental designs whether follow-up date question format impacts data quality. For example, while calendars may provide participants a visual memory prompt, open-ended questions may provide clarification to researchers in instances where participants can only remember an approximate date (as they did in this study). Qualitative follow-up interviews or think-aloud studies (Evans et al., 2016; Jeffrey & Senn, 2023) would provide additional context regarding where and when these errors are made, allowing us to learn more about participant motivation and other ways of improving accuracy in time reports. Other types of calendar instruments, such as Life History Calendars, a tool that combines a visual calendar with a structured interview, also represent an important area of future study (Yoshihama et al., 2005).

Conclusions

Reports from outside of survey reference periods represent a possible threat to accurate SV victimization measurement. The impact of these errors is particularly troubling in pre/post designs where researchers need to know how many assaults happened before or after a targeted intervention (and thus, the reference period is critical). If either pre- or post-intervention estimates are inflated or deflated, this could result in falsely inferring a program is or is not effective. Accurate measurement of SV prevalence is critical when assessing the success of violence reduction programs. While including follow-up date questions and identifying reference period errors slightly increases the demand on researchers as well as respondents, it improves data accuracy, sometimes substantially. Our recommendation, therefore, is that it be considered best practice to include follow-up questions about the date of SV experiences in surveys that use the SES or other similar SV measures that rely heavily on temporal reference periods.

Footnotes

Acknowledgements

We would like to thank Dr. Karen Hobden for her invaluable help on this project.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the authorship and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research and/or authorship of this article: Gena K. Dufour is supported in part by funding from the Social Sciences and Humanities Research Council of Canada (File number 767-2021-1459). One of the data sets analyzed was gathered in a study funded by a Canadian Institutes of Health Research Project Grant (FRN: 148843), the other with funding from the Canada Research Chairs Program to Charlene Y. Senn.