Abstract

This study explores how students’ use of AI to generate information about social issues affects the results and dynamics of classroom discussions. The idea is to examine AI’s contribution when it is used by students the way they typically would, employing conceptual tools of Basil Bernstein and Legitimation Code Theory. The data comes from two discussion-based lessons taught in three upper-secondary social studies classes in Sweden. Results show that students’ ideas may be influenced by biased AI-generated information foregrounding a more essentialist view of gender while marginalizing non-binary perspectives. This indicates that generative AI can be a powerful agent in the classroom, and we identify AI optimism and vigilance in students’ views and relations to AI as an agent. We further suggest that vigilance requires (a) AI literacy to understand its limitations and uses and (b) clarity about what the core requirements of the task are to avoid imitation and bullshit.

Introduction

Students’ access to generative AI is a new reality in formal education. How does students’ existing AI use integrate into classroom practices? So far, generative AI may be described as a double-edged sword in education (Crompton & Burke, 2024; Giannakos et al., 2024; Lo, 2023). Emerging studies show that generative AI can support students’ writing (Levine et al., 2024; H. Nguyen, Nguyen, et al., 2024; Wu & Xu, 2025) and creative problem-solving (Urban et al., 2024), while some link ChatGPT to improved critical thinking (Suriano et al., 2025) and others to its decline (Gerlich, 2025). The use of generative AI in education also raises concerns about a potential surge in cheating and “metacognitive laziness” (Bastani et al., 2024; Cotton et al., 2024; Fan et al., 2024).

Following the call for more context-specific investigations of technologies in education (Williamson et al., 2024), this article explores students’ use of generative AI in classroom discussions of social issues. Such discussions, which aim to develop the abilities and virtues students need for democratic life, are one of the key practices in social studies and civic education (Hess & McAvoy, 2014). In this study, we focus on civic reasoning as one of those abilities. To engage in civic reasoning means “to think through a public issue using rigorous inquiry skills and methods to weigh different points of view and examine available evidence” (Lee et al., 2021, p. 1). It can be seen as a domain-specific form of critical thinking that benefits from the intellectual tools of the social sciences (Tväråna & Jägerskog, 2023).

It is common to give students different materials to support their learning and discussions about social issues in the Swedish social studies classroom (Bernmark-Ottoson, 2009). However, students can now opt to use AI for this purpose. What happens when students use generative AI to explore different perspectives and arguments on a social issue? On the one hand, tools like ChatGPT are designed to summarize public discourse as it appears online and present it accessibly. This capability can help both students and teachers by making preparation for classroom discussions easier, which is a key factor in fostering high-quality discourse (Hess & McAvoy, 2014). On the other hand, using AI for this purpose comes with the risk of spreading misinformation (Kidd & Birhane, 2023) and biased representation (A. Nguyen, Hong, et al., 2024). Alongside content, generative AI can impact the processes of civic reasoning, although discussion as a task seems relatively GPT-proof in the sense that it requires student activity and does not allow direct “copy and paste.” Building on these considerations, we present a classroom study exploring the following question: how does students’ use of generative AI to access public discourse affect the results and dynamics of classroom discussions of social issues? Our interest is not to suggest good practices of AI-supported teaching, but to investigate how generative AI may influence teaching and learning when students in ordinary social studies classrooms use it.

Theoretical Framework

This study employs analytical tools from Basil Bernstein’s sociology and its development within Legitimation Code Theory (LCT). Our use and interpretation of Bernstein’s concepts align with social realism (Barrett & McPhail, 2023; Moore, 2013) and social studies didactics (Aashamar & Klette, 2023). We approach classroom discussions of social issues as recontextualization of public discourse. In Bernstein’s framework, recontextualization refers to the constituting principle of pedagogic discourse that “selectively appropriates, relocates, refocuses, and relates other discourses to constitute its own order and orderings” (Bernstein, 2003, p. 184). Similarly, in discourse studies, it has come to mean “what is included and what is excluded from the events and texts represented” when elements of one discourse are appropriated by another and moved to a new context (Fairclough, 2003, p. 55). In classroom discussions of social issues, discourse is moved from its original field (media, politics, etc.) to the classroom. This movement implies pedagogical transformation—both of what is discussed and how.

In Bernstein’s terms, what and how are shaped by classification and framing principles. Classification refers to the “degree of boundary maintenance” between different categories and contexts; it defines what counts as legitimate knowledge or practice within a given context. Classification is strong when boundaries between categories are explicit (e.g., traditional academic subjects) and weak when they blur (e.g., an interdisciplinary course). Framing describes control over knowledge transmission—who determines selection, sequencing, and evaluation. Strong framing means these elements are explicitly defined (e.g., teacher-centered instruction), while weak framing gives students more control (e.g., unguided inquiry). From the students’ side, recognition rules determine how they perceive and acknowledge these boundaries, distinguishing between contexts. Realization rules reflect whether they can produce contextually specific texts or practices.

In classroom discussions of social issues, classification defines what such discussions should look like—which perspectives are included, what counts as good civic reasoning, and which types of contributions (e.g., rational argument, personal testimony) are considered legitimate. Framing describes teacher control over the content and structure of discussion—how boundaries are enforced, whether structured formats (e.g., fishbowl, graphic organizers) are used, the teacher’s role as a facilitator, etc. Recognition rules influence how students interpret the expectations of discussion (defined by classification), while realization rules reflect their ability to contribute in line with these expectations.

In the classroom, discourse is transformed through interaction between teachers and students. Any movement of discourse allows for ideological transformation, which is particularly evident in discussions of social issues. Since “the outcome of framing in interaction has the potential for changing classification” (Bernstein, 2000, p. 125), each meeting between the teacher and the students can result in drastically different discussions in terms of what perspectives and contributions are seen as legitimate.

What does the introduction of generative AI add to this picture? Technological mediation theory describes technologies as not “merely functional and instrumental objects but as mediators of human experiences and practices” (Verbeek, 2015, p. 190). When used as a knowledge source on social issues, AI does not simply provide neutral information; it structures and filters discourse before students engage with it. AI can thus be seen as another agent of recontextualization, with the potential to shape both what is discussed and how discussions unfold.

To understand these transformations in greater detail, we use the notions of semantic density (SD) and semantic gravity (SG) from Legitimation Code Theory (LCT; Maton, 2013). Semantic density refers to how much specialized meaning is condensed within a concept. For example, “heteronormativity” has a high semantic density (SD+), while “Society assumes everyone is straight” has a lower semantic density (SD−). Semantic gravity reflects the degree of context-boundedness: high semantic gravity describes meaning that is tightly linked to a specific context (e.g., Swedish “Green Party”; SG+), while low semantic gravity corresponds to more general concepts (e.g., “political party”; SG−). These two dimensions create four theoretically possible codes, but in reality, low density usually comes with high gravity and vice versa, making a one-dimensional space suitable for empirical analysis. Movement between these two poles (SD+/SG− and SD−/SG+) is described as a “semantic wave” (Maton, 2013). In a classroom discussion, students are expected to move between general discourse around the issue and its contextualizations. However, the other two codes, SD−/SG− and SD+/SG+, remain possible. Overall, the concepts of SD and SG help examine how generative AI adds to this process, aiding our understanding of its role as an agent of recontextualization.
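The four codes and the two wave poles described above can be sketched in a few lines of Python. This is only an illustrative sketch, not part of the study's analysis: the function names, the ASCII `+`/`-` labels, and the idea of counting pole-to-pole moves in a coded sequence are assumptions introduced here for illustration.

```python
from itertools import product

# Assumed notation: "+" means stronger, "-" means weaker (ASCII minus).
STRENGTHS = ("+", "-")


def semantic_code(sd: str, sg: str) -> str:
    """Return a label such as 'SD+/SG-' for the given strengths."""
    if sd not in STRENGTHS or sg not in STRENGTHS:
        raise ValueError("strengths must be '+' or '-'")
    return f"SD{sd}/SG{sg}"


def all_codes() -> list[str]:
    """Enumerate the four theoretically possible codes."""
    return [semantic_code(sd, sg) for sd, sg in product(STRENGTHS, STRENGTHS)]


# The two poles between which a semantic wave moves.
WAVE_POLES = {semantic_code("+", "-"), semantic_code("-", "+")}


def count_pole_shifts(sequence: list[str]) -> int:
    """Count moves from one wave pole to the other.

    Codes that are not wave poles are ignored; this is a simplifying
    assumption of the sketch, not a rule taken from LCT.
    """
    poles_only = [code for code in sequence if code in WAVE_POLES]
    return sum(1 for a, b in zip(poles_only, poles_only[1:]) if a != b)


# Hypothetical coded sequence: concept, then example, then concept again.
example = ["SD+/SG-", "SD-/SG+", "SD+/SG-"]
print(all_codes())
print(count_pole_shifts(example))
```

A sequence that never leaves one pole yields zero shifts, which corresponds to the "flat" case in which content is neither unpacked nor repacked.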

Methods

Anchored in pragmatism to address the problems of practice (Creswell, 2021), this research explores the organic integration of generative AI in ordinary classrooms. Using a case study as an “in-depth analysis of a bounded system” (Merriam & Tisdell, 2015, p. 37), we focus on a two-lesson module developed and taught by three experienced social studies teachers in one Swedish upper secondary school.

Study Context and Data Collection

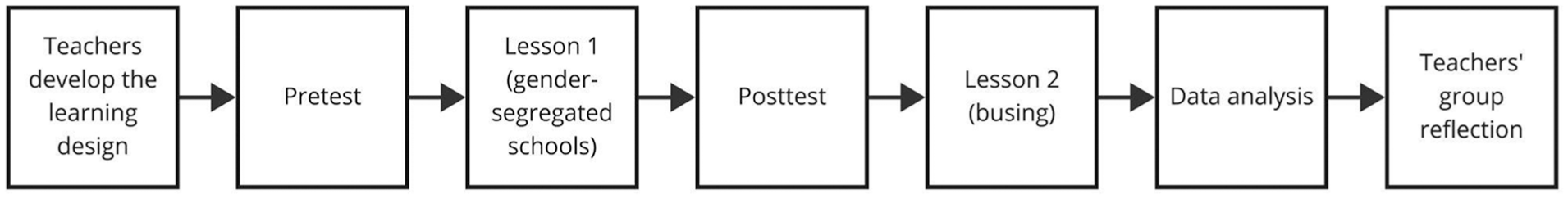

Figure 1 illustrates the process of data collection that took place in early 2024. Teachers interested in using generative AI in social studies contacted the second author and independently designed two lessons on social issues within a module on gender, ethnicity, and social class, following the national syllabus (Swedish National Agency for Education, 2011). Each of the three teachers conducted both lessons in one class (students aged 17–18), for a total of six lessons.

Figure 1. Research process.

It was not the teachers’ intention to instruct students in using AI but to use it as a resource in lessons aiming to highlight social issues. The first lesson used Structured Academic Controversy (Johnson & Johnson, 1993) to discuss gender-segregated schools, that is, the pros and cons of girls-only and boys-only schools. The teacher introduced the topic with examples (e.g., the UK), and students, in pairs, prepared arguments for or against it with the help of a generative AI of their choice. They then formed groups, presented their arguments, switched roles to summarize the opposing side, and finally discussed freely to reach a common standpoint. The second lesson focused on busing as a tool for school desegregation and emphasized source use. Students again prepared arguments for an assigned position but were also required to provide references. First, they were instructed to ask AI for sources in support of the generated arguments, check them, and understand that AI does not work this way and thus cannot give sources. Next, they had to support their position using sources they found themselves. Finally, pairs presented their positions in groups (except in Class A, which skipped this step).

Gender-segregated schools are not controversial in Sweden, where they are banned, but the topic connects to broader debates on gender. Meanwhile, school segregation is a social problem in Sweden (SOU, 2020:28) and a subject of political debate. Busing as a policy is implemented in some municipalities and is present in political discussions (e.g., DN, 2024; Lernfelt, 2022) but could hardly be called a “hot topic” at the time. While neither issue is emotionally charged, both allow for ideological diversity.

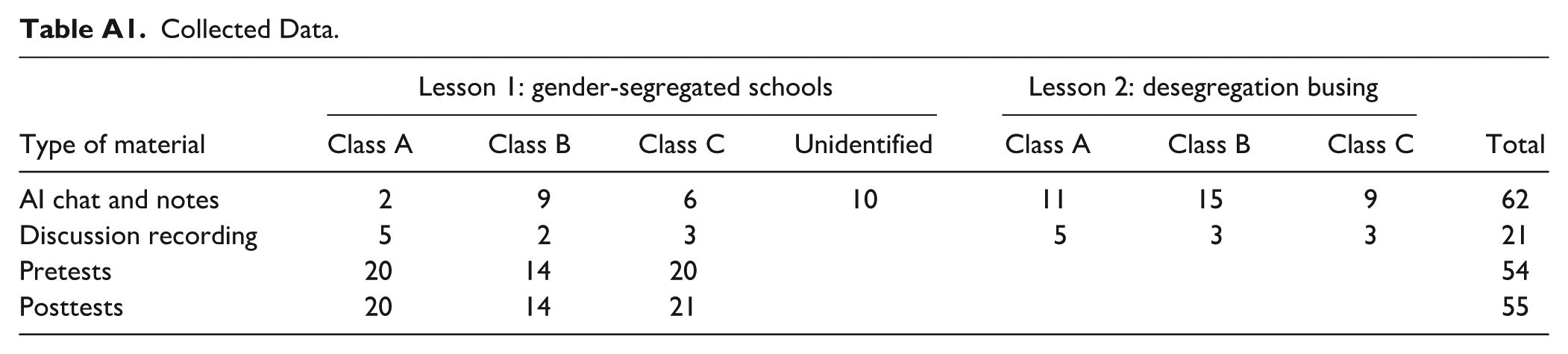

Before and after the first lesson, students completed a questionnaire. Their teacher informed them about the study, and they provided informed consent before answering the questionnaire. One student declined participation, and four opted out of recording. The first author conducted observations, made recordings, and collected student notes and AI chat threads. Table A1 in Appendix A provides an overview of the data. This research was approved by the Swedish Ethical Review Authority, dnr 2023-04510-01.

Data Analysis

In line with pragmatism, we combined quantitative and qualitative content analysis to process the diverse data collected in the classroom (Creswell, 2021; Krippendorff, 2018). The analysis comprised three stages: (a) arguments in student tests and AI chat threads, (b) students’ views about AI as a source from posttest responses, and (c) students’ reasoning during the lessons.

In the pretest and posttest, three open-ended questions captured student argumentation on gender-segregated schools (see Appendix A). Responses were analyzed as cohesive texts. The first author inductively categorized arguments in a codebook (Table A2 in Appendix A) and later applied the categories to posttest responses and AI chat threads. The second author independently coded half of the pretest responses (ICC from 0.5 to 0.87) to validate the codebook. Discrepancies were discussed and led to refinements in category definitions and some recoding. Frequencies of argument categories were then quantitatively analyzed across pretest, AI chat threads, and posttest. Two concerns are worth mentioning. First, only 17 AI chats were collected after the first lesson; the chats were not evenly distributed across the three classes and could not be traced to individual students. However, this may not be a serious limitation because all the AI-generated content submitted by the students was relatively similar. Second, students worked with AI from assigned positions, and more “for” chats than “against” chats were submitted. To address this, arguments “for” and “against” are presented separately, with percentages calculated within each stance.
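Calculating percentages within each stance can be illustrated with a minimal Python sketch. It is not the authors' analysis script: the function name, the placeholder category labels, and the counts are invented for illustration only and do not reproduce any figures from the study.

```python
from collections import Counter


def within_stance_percentages(coded_arguments):
    """Compute each category's share of the arguments within its stance.

    coded_arguments: iterable of (stance, category) pairs.
    Returns {stance: {category: percentage of that stance's arguments}}.
    """
    pairs = list(coded_arguments)
    stance_totals = Counter(stance for stance, _ in pairs)
    pair_counts = Counter(pairs)
    result = {}
    for (stance, category), n in pair_counts.items():
        result.setdefault(stance, {})[category] = 100 * n / stance_totals[stance]
    return result


# Invented placeholder data: four "for" arguments and one "against".
example = [
    ("for", "category_a"),
    ("for", "category_a"),
    ("for", "category_b"),
    ("for", "category_b"),
    ("against", "category_c"),
]
print(within_stance_percentages(example))
```

Because each percentage is taken over the arguments of one stance only, a larger number of submitted "for" chats does not inflate or deflate the shares reported for "against" arguments.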

Students’ views on generative AI were recorded through an open-ended posttest question: “Do you think generative AI is useful in highlighting different perspectives on social issues? Explain.” Responses, submitted by 44 of 55 students, were analyzed inductively, which identified two distinct groups. The second author independently coded all responses using the descriptions of these groups (ICC = 0.69). Discrepancies were resolved by refining group definitions and recoding a few responses.

The analysis of student reasoning in class relied on the analytical strategies of abduction and constant comparison (Merriam & Tisdell, 2015). Primary descriptive codes for students’ actions were developed based on the lesson plan and classroom observations (e.g., “phrase the question,” “follow-up for sources”). This codebook was applied to student submissions (AI chats and notes) and audio recordings. A second coding round verified alignment with the revised codebook and introduced new inductive codes capturing nuances of actions (e.g., “AI use strategy,” “end of task”). The third analysis phase identified key categories by examining code co-occurrences and conceptual similarities, resulting in three categories: (a) interpretation and use of AI-generated arguments, (b) issue context and AI prompt, and (c) role of sources. Finally, identified patterns were interpreted using the conceptual tools of Bernstein and LCT.

Limitations

First, although we are interested in students’ organic use of AI, which is not scaffolded by carefully designed educational technologies or AI-focused instructions, the lesson pushed students to use AI more than they would do on their own. It would, however, be extremely difficult to capture and analyze students’ use of AI in this context in entirely natural conditions. Second, the theoretical framework was developed in parallel with the empirical investigation and applied to interpret the results only in the final stage of the analysis. Using analytical tools of this framework from the very beginning to code the data could have given a slightly different picture.

Results

This section presents findings from the analysis of student argumentation before and after the first lesson and their views on generative AI after this lesson. Next, it describes students’ reasoning in both lessons, focusing on their approaches to discussion and sources as well as transformations of the discussion content. Finally, we share teacher reflections on student challenges and learning design.

Changes in Argumentation

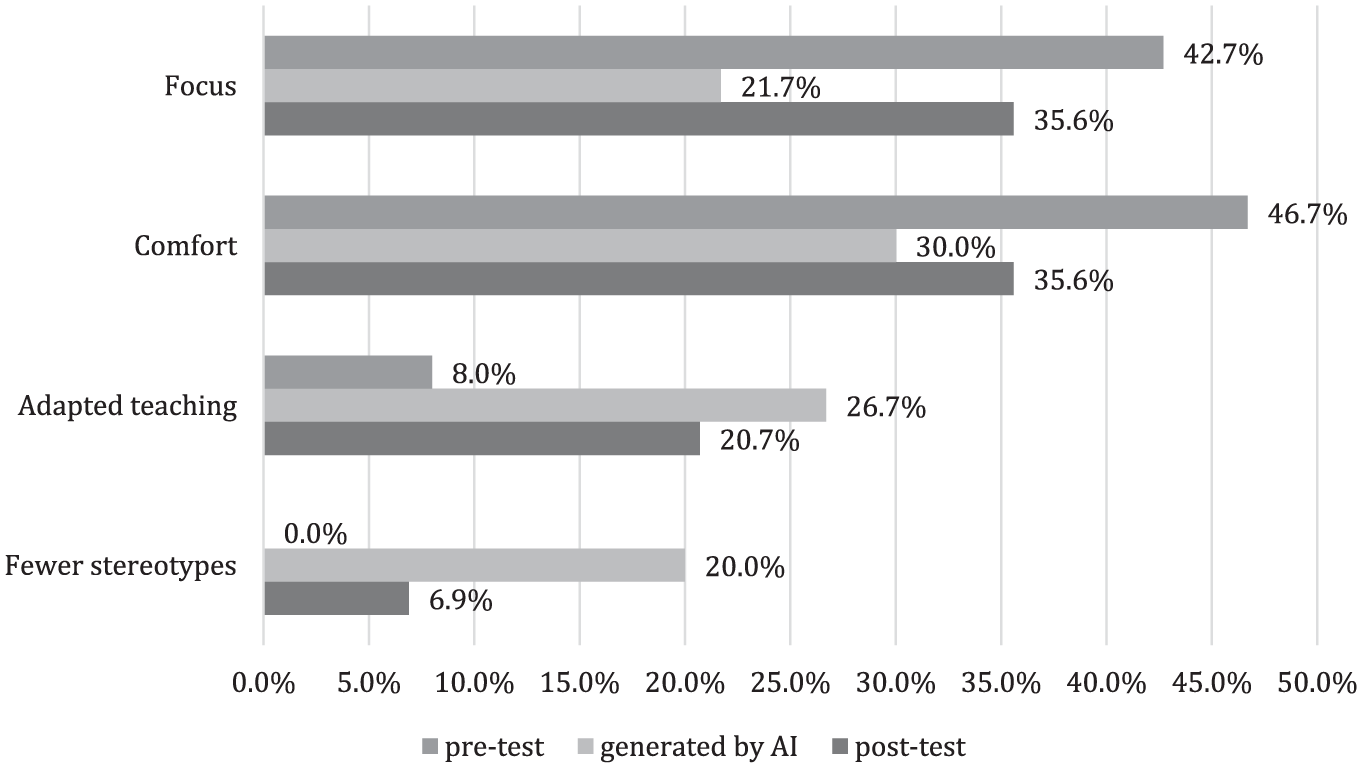

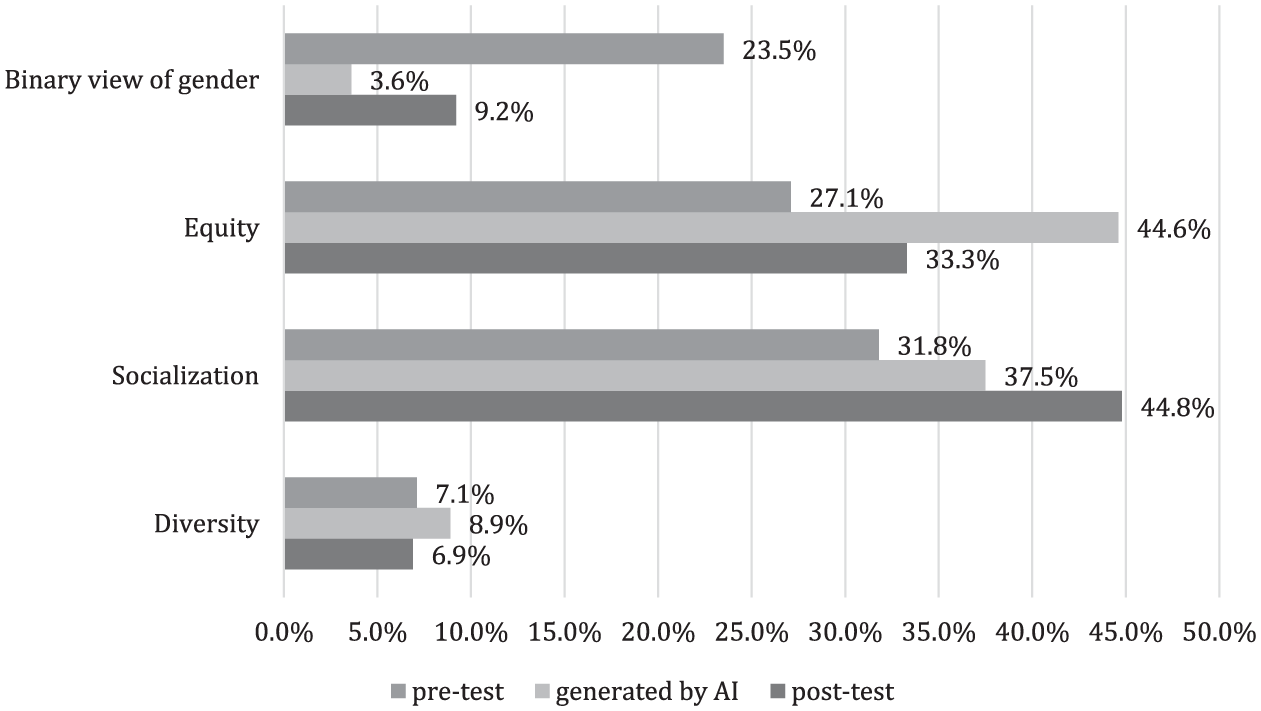

Figure 2 shows the four most common arguments in favor of gender-segregated schools in students’ tests and their chats with AI (see categories in Appendix A). Frequencies of categories are presented as percentages of the total number of arguments in favor; absolute frequencies are given in Appendix A (Table A3). Figure 3 presents arguments against.

Figure 2. Most common arguments in favor of gender-segregated schools, % of all arguments in favor.

Figure 3. Most common arguments against gender-segregated schools, % of all arguments against.

Some arguments are similarly popular across all sets. For instance, equity and socialization are key considerations against gender-segregated schools in both tests and AI-generated arguments. Still, there are some noteworthy disparities. A common argument in the pretest problematized the essentialist view of gender that the issue itself implies (“Binary view of gender”). This argument was almost absent from AI-generated content and was then halved in the posttest. Arguments about different learning needs and styles based on gender (“Adapted teaching”) were virtually absent in the pretest but appeared in every chat with AI and became very frequent in the posttest. Thus, students’ perspectives came closer to AI-generated content: by adding new arguments, as with gender-based learning differences, but also by omitting their own pre-existing ideas that were not represented in AI-generated content, as with transgender and non-binary people. This shows that generative AI works as a powerful recontextualization agent when summarizing public discourse in an ideologically specific way.

Views on Generative AI as a Source

Students’ open-ended responses reflected two distinct views on AI. The first group included 29 of 44 answers, reflecting optimism in relation to AI. Students in this group emphasized the efficiency and objectivity of generative AI as a source of perspectives. Most described AI as a quick way to get the right (or passable) answer: “You get the answers as quickly as possible,” “You get answers immediately and are usually correct,” and “It provides quick answers that you wouldn’t have been able to google yourself.” Alongside focusing on “the answer,” such responses often mention using AI to quickly get “the facts.”

“Optimistic” students may also see AI as unbiased and a good source of knowledge about society, claiming, for instance, that “AI gives several perspectives and does not take a position on the issue.” Some students in this group made comparisons between AI and humans, not in favor of the latter, for example, “AI can gather an incredible amount of facts that, for example, a teacher cannot gather, and it is not based on any personal opinion.” In these responses, AI stands out as more knowledgeable and objective than humans.

The second group takes a more critically aware approach to AI, reflecting activation of epistemic vigilance—“mechanisms targeted at the risk of being misinformed” (Sperber et al., 2010, p. 359). Only 15 of 44 answers belong to this group. These students highlighted that ChatGPT, or its alternatives, did not provide any sources and noted how AI systems could make mistakes, making it important to check output against other sources. Some “vigilant” responses also mention and problematize the selection of perspectives that ChatGPT provided, for instance:

I don’t really trust AI; the source is unclear, and I don’t think you get different perspectives. I particularly reacted to the lack of information and arguments about people who do not identify as girls or boys or as their biological sex.

Students in this group, however, are not entirely opposed to generative AI. Instead, they focus on its effective uses and strengths while also considering its limitations. For instance, most of them mention that tools like ChatGPT can be helpful in the initial stages of a search, stating that “AI can give you a good start in the search for arguments or new perspectives” and “AI creates a foundation and a beginning to continue further.” Notably, students from this “vigilant” group are mostly from one class. It is also the only class where the teacher explicitly addressed AI’s limitations at the end of the lesson and gave an example of ChatGPT being factually incorrect. However, the lack of a valid comparison makes it impossible to say whether the teacher’s actions had an effect.

This difference in students’ views on generative AI—between optimism and vigilance regarding its role in accessing discourse on social issues—can be understood on two levels. First, much optimism stems from the lack of AI literacy, leading students to believe it is correct and unbiased. However, repeated references to getting “the answer” also reveal potential problems with recognition rules when civic reasoning in a classroom discussion is substituted by “doing the task.” For “vigilant” students, AI-generated content alone is not enough in relation to civic reasoning, revealing that civic reasoning for them is supposed to include evidence and consider different perspectives. For most “optimists,” AI-generated content has its value as “the answer,” which implies a very different picture of civic reasoning.

Reasoning With AI in Class

Civic Reasoning versus “Doing the Task.”

Teachers planned for a relatively strong framing of the lesson. Students were expected to prepare and discuss using a highly structured format with explicit steps and roles. In the second lesson, they were also given a structured task to guide their work with sources. However, students showed important differences in civic reasoning, both in their approaches to discussion and their use of sources.

Three distinct approaches to the discussion were identified. In the first case, students used AI to get several arguments and later just read them from the page in the group discussion. Doubts are dismissed or ignored:

(presents an argument)

I do not get this one.

I do not even care.

Ok then (presents the next argument) (class A, lesson 1)

No selection or exclusion of ideas happens at any stage during these “copy and paste” discussions. Preparation in pairs is finished the moment AI has generated the answer, and the group discussion is finished when both sides have read these answers in line with the prescribed discussion structure. Students followed the instructions and completed the task without engaging in any civic reasoning. This could imply that recognition rules for civic reasoning were, in this case, reduced to doing all the steps of the task.

In the second case, students endorse some AI-generated arguments based on the presence or absence of immediate agreement. If doubts arise, they are often settled by dropping the argument:

This one is weird; fewer gender differences. Is it not the opposite? That’s not it.

No. (reads again the argument from AI)

Hmm, I do not know what we should do with it.

We take it away.

Yes. (class A, lesson 1)

This quick filtering of ideas makes sense, given the abundance of ideas that generative AI creates. Analysis of generated prompts shows that students mostly go for quantity, asking AI to give “all the arguments in the world” or follow-up prompts asking for more ideas. Meanwhile, only two of the submitted AI chats had follow-ups aimed at elaboration or explanation. Quantity was also the main topic in intergroup conversations, as students kept asking classmates from other groups how many arguments (or sources) they had. This information seems to have been helpful in reconstructing task criteria to decide the “good enough” quantity. Having a “reasonable” number of arguments was used as the base to consider the task done. It was enough to use AI to generate many ideas and choose those that seemed intelligible.

This approach reappears in group discussions when students rush to consensus through a quick exchange of opinions. The only time someone voiced a dissenting opinion, it was immediately dismissed, and the group proclaimed the task finished. Finding a technical compromise was another quick solution that prevented students from deeper engagement with ideas:

You can separate girls and boys for some tasks, not the whole school.

Yes, you can compromise.

Like with sport.

Yes. (silence) What do we do next? (class A, lesson 1)

Although this group did spend some time reiterating the most convincing arguments from both sides and weighing them against each other, they did not discuss contradictions (will stereotypes decrease or increase), dubious claims (gender-based learning styles), or missing perspectives (transgender or non-binary). Reaching a compromise worked as a legitimate way to end the discussion and move on to social chitchat for the rest of the lesson.

Compared to “copy and paste” discussions, this Tinder-like, consensus-based approach has more student engagement with the task but allows for only very limited civic reasoning. Again, students closely followed the instructions but seemed to lack meaningful criteria for good reasoning, linking recognition rules to superficial attributes, such as the number of arguments and the absence of disagreement.

These two approaches can be contrasted with the third one, when students stayed with AI-generated ideas a little longer to question or develop them by connecting to other ideas, background knowledge, or experience. Here, doubts worked as a means of collective inquiry even in the face of general agreement in the group:

Are there really differences between girls and boys in how they learn? Boys and girls learn the same way.

Yes.

It is probably more of an individual question than a gender question.

But the argument was probably that guys are less mature and that is why it is difficult for them to study. But it probably levels out when you grow up. (class A, lesson 1)

The focus of such discussions lies in the process itself, and students actively look for contradictions or questions to explore and consider the task done when they have nothing else to discuss. In other words, they seem to follow recognition rules close to good civic reasoning, as described in the literature.

During the first lesson (gender-segregated schools), sources and evidence did not appear to be something students actively considered. Repeating the output from AI, they often made a general reference to “some studies” or “research” without naming it. Only twice did students engage in lateral reading to verify claims: once for a claim made by the AI and once for a claim made by a classmate. The second lesson (busing) was designed by the teachers to specifically draw students’ attention to sources. The fact that popular AI tools did not construct arguments in an evidence-based manner surprised many students. They also did not notice it right away and instead just copied and pasted nonsensical links and titles into their worksheets, which required the teacher to nudge the students to click on the links and google the titles.

The problem students experienced when evaluating AI-generated sources, and later when searching for their own, was a lack of understanding of what they were looking for in the first place, which again can be linked to recognition rules. About half of the student submissions included sources for the topic instead of sources of evidence for specific claims. The number of sources was the primary criterion for considering the task done: “We have found three sources. It is enough” (class A, lesson 2). In this case, it was enough to google the topic and select some relevant publications, and no corroboration of AI-generated content was done. Those who used sources to support specific claims had to engage in a more nuanced use of AI. For instance, one group immediately recognized that Bing is better suited for the task, as it connects ideas to sources. They used it in combination with ChatGPT to utilize the comparative strengths of both tools. However, while this group was genuinely engaged when solving this task technically, they did not really read the sources they got, which became evident in the discussion later.

To summarize, despite receiving the same explicit instructions (realization rules) on how to discuss and how to support arguments with sources of evidence and despite closely following those instructions, some students engaged in very limited or no civic reasoning. Their discussions contained neither developed rational argumentation nor genuine personal engagement and focused instead on “doing the task.” Students considered the task complete after finishing steps, resolving disagreement, and listing enough arguments and sources. It was especially difficult for students to see the connection between sources and discussion, which resulted in sources being treated as a formal requirement. This contrasts with students who, given the same instructions, actively explored ideas in discussion and used sources and evidence to support and question claims. Both types of students interpreted and developed the instruction they received, but in different ways, revealing a lack of clarity about the recognition rules when it comes to civic reasoning. The instructions could have aided in explicating realization rules, but they could not be fully effective since recognition rules were not established first. What AI adds to this picture is the opportunity to imitate the discussion and to successfully do the task without using civic reasoning.

AI-generated Content and Semantic Waves

Both gender-segregated schools and busing were introduced first as concepts and then “unpacked” by giving specific examples, which is a typical semantic wave from specialized general terms (SD+/SG−) to explanations using everyday language and concrete applications (SD−/SG+). As discussion issues, they were introduced as general policies without connection to any specific context (SG−). Most students used this formulation directly to prompt AI for arguments (“give me arguments for gender-segregated schools”). In response to this contextless prompt, AI generated content that had little connection to context and used some specialized terms, such as “gender-specific harassment,” “stereotypes,” “learning styles,” or “educational choice” (SD±/SG−). As described in the previous section, many students did not transform AI-generated content at all and repeated arguments verbatim, which implies a “flattening” of the discourse. Arguments were neither “unpacked” and specified with evidence and examples nor “repacked” to generalize their meaning on a conceptual level, which provided limited opportunities for learning.

In contrast, those who engaged in critical inquiry using AI-generated arguments did so by performing semantic waves. “Unpacking” the general policy by linking it to experience was the key step that distinguished these discussions:

(reads) Less distracting behaviours.

This is damn true!

What does it mean?

(laughs) Guys can go crazy . . . (class A, lesson 1)

Apart from personal experiences, students contextualized the issue by relating it to discussions on social media, such as incels, gender-neutral bathrooms, and even falling birth rates. These groups also could move back from an example to a more abstract level, clarifying their understanding of concepts and connecting a specific policy issue to the notion of identity in general:

What I am thinking about is what if someone does not identify with a gender? Where do they go? Not to school.

Then you go to . . .

To daycare for dogs!

To a non-binary school, I think.

No, I do not want to be mean, but for real, if a person identifies this way, I have seen it on TikTok. Furries. (students laugh)

It is weird to fall in love with things.

(changes tone to serious) It has nothing to do with it, but to identify yourself, identity.

Aha, these are two different things. (class B, lesson 1)

The crucial role of semantic waves becomes even more evident in the case of busing. Here, a direct prompt in Swedish (“give me arguments for busing”) resulted in very different interpretations of busing. Instead of connecting it to school segregation, AI could understand it as the development of buses as a means of public transport, as the introduction of dedicated buses for schoolchildren, or as bushing (technical equipment which is also called “bussning” in Swedish). This ambiguity highlights the importance of anchoring concepts within a specific intellectual framework or contextual domain. Without such connections, “busing” remained in a conceptual “dead zone,” characterized by both low semantic density (SD−) and low semantic gravity (SG−).

This problem (when recognized by students or highlighted by the teacher) required a reformulation of the prompt. Successful prompts either introduced busing as a specialized term by adding “for desegregation,” making its meaning more condensed (SD+), or contextually specified it by adding “of students” (SG+). Less successful prompts represent failed attempts to give meaning to busing by moving it within the semantic space. Some students just switched to English, which resulted in a context-bound interpretation (SG+) within the US discourse with its specific institutional history of racism and exclusion. Others tried to “unpack” busing but ended up replacing it with a more general issue of diversity (“why it is good to mix students at school”).

The different results of directly prompting AI for arguments, as well as differences in reformulation strategies, led to students preparing for very different kinds of discussion. For instance, while one pair could have gathered arguments for busing against racial segregation in the US in the era following Brown v. Board of Education, their opponents could have prepared to argue against investment in public transport. If the misunderstanding was obvious, this mismatch was somewhat productive, as it helped students clarify the concept. Sometimes, the misunderstanding was less obvious, which fueled confusion:

I have found research that shows that diversity in the class leads to better academic results for all.

But then there is nothing that shows that busing necessarily improves the quality of education.

But I have found this one study.

What I mean is that the focus should be on improving existing schools instead of bringing students from other schools, hoping that will change something. (class B, lesson 2)

Even if students managed to get the correct interpretation in preparation with AI, they could switch to the wrong one when trying to connect it to their experience in the discussion, failing to do a semantic wave. For instance, this group spent most of the discussion focusing on desegregation busing but “unpacked” it as public school transportation when they started discussing freely at the end:

I think busing is bad. If we had had it in Sweden, this would have been unnecessary.

But what about our buses?

Busing is one bus that goes around, like in the US. School bus. They do not have regular public transport buses. As I understand it, distances are also longer in the US, and towns are bigger. (class C, lesson 2)

To summarize, successful semantic waves were a crucial element of civic reasoning, allowing students to contextualize the broad issues they were given to discuss. To make meaningful discussion possible, AI-generated content, which is contextless and lacks systematic conceptual organization, had to be contextualized and/or conceptually developed by students, mostly on their own. When this did not happen, students recirculated AI content without understanding the issue being discussed and talked past each other, filling the classroom with meaningless discourse.

Discussion

This study explored students’ use of generative AI in the context of classroom discussions of social issues. Building upon Bernstein’s theoretical framework, we introduced AI as one of the agents of recontextualization, meaning that it has its own role in the transformation of public discourse that takes place when social issues are discussed in class. In this section, we address what this role can be and how to support students’ civic reasoning in the age of generative AI.

Generative AI as Recontextualization Agent: Bias and Bullshit

First, our analysis shows that students’ ideas became closer to AI-generated content. This movement is not ideologically neutral but a shift to a more traditional frame of discussion that centers around essentialized differences between the two genders. Although listing arguments and endorsing them are two different things, and we do not claim that students’ beliefs about gender have changed, we can still say that their representation of public discourse as an overview of possible perspectives did change. On the one hand, a study of political bias in GPT-4 shows that it leans more to the left than the average American (Motoki et al., 2025). On the other hand, its bias can differ across topics, and the average American probably stands far from the average Swede in terms of gender issues and potential bias. In our case, bias lies on the level of assumptions, not explicit positions. It is, therefore, reasonable to suggest that AI’s recontextualization of public discourse about gender was more US-centered and traditionalist, which can be traced back to how GPT is trained and the explicit and implicit bias of LLMs (Bai et al., 2024; Schaul et al., 2023).

Second, following Frankfurt’s (2005) definition of bullshit as the lack of concern for truth, we see another contribution of generative AI in filling classroom discourse with bullshit. Defying the often proposed opposition between rational argumentation and lived experience (e.g., Gibson, 2020; Knowles & Clark, 2018), it supports classroom discussions that have neither. Although discussion seems to be a GPT-proof task that cannot simply be “copied and pasted,” and despite the teachers’ structured approach, students could still use AI-generated content to successfully do the task without using civic reasoning. Showing little concern for the truth, some just wanted to finish all the steps and be done, while others relied heavily on social approaches to evaluate claims (Metzger et al., 2010). Furthermore, generative AI, on its own, has a tendency toward bullshit (Hicks et al., 2024). In our study, without careful prompting and further development, AI-generated content consisted mostly of general claims, with no connection to students’ context (low or moderate semantic gravity) but also little systematic conceptual organization (low or moderate semantic density). Without transformation, it did not allow closer examination of social issues on either a practical or a theoretical level, giving students few tools to discuss what is true and good.

How is generative AI, in that sense, different from any other material that represents public discourse in a classroom setting? After all, a textbook or a newspaper article can also be biased material that students simply reproduce, the same way they used AI-generated content here. Still, AI has the potential to be more powerful and unpredictable. Arguments produced by generative AI have been described as very persuasive, as they have a highly confident “voice” (Mollick, 2024). In addition, the illusion of objectivity leads people to believe that AI has no standpoint or equally reflects all standpoints (Messeri & Crockett, 2024). When asking ChatGPT to “give all the arguments in the world,” students might be tricked into thinking that they actually get the full picture. It is reasonable to expect people to be more critical toward traditional sources that have an author than toward AI as an all-knowing “black box.” Finally, it is easier for teachers to control the quality of traditional materials, and we usually expect textbooks to be conceptually structured and media materials to relate to the context, both potentially better than broad claims generated by AI.

Supporting Epistemic Vigilance in the Age of Generative AI

Turning now to implications, this study suggests that from a student’s perspective, successful integration of AI into the task requires the development and activation of epistemic vigilance. This, in turn, relies on knowledge of both AI and the core requirements and meaning of the task. Regarding knowledge of AI, low AI literacy has been shown to predict higher AI receptivity (Tully et al., 2025). Our findings align with AI literacy frameworks that highlight the role of humans in creating and using AI (Long & Magerko, 2020). From the developers’ side, this role is reflected in the choice and biases of the training data, model tuning and specification, and the political and ethical guidelines established to limit the output. From the users’ side, students can be informed about the ways the prompt impacts the output and about their own responsibility for its interpretation and constructive use.

However, AI literacy might not be enough, as knowledge of AI capabilities should be put in relation to the task of civic reasoning and its core requirements as recognition rules, highlighting the need and the space for epistemic vigilance. Our observations align with previous research indicating that classroom discussions of social issues lack integrative argumentation; instead, students often prefer to go for quick consensus or limit themselves to short opinion exchanges (Crocco et al., 2018; Jerome et al., 2021). Students struggled to corroborate AI-generated claims using external sources, showing again that adolescents frequently fail to evaluate information online (Breakstone et al., 2022; Nygren & Guath, 2019, 2022). In other words, access to generative AI can exacerbate existing challenges in students’ civic reasoning.

Explicating recognition and realization rules is typically discussed in Bernstein-inspired literature as a way to diminish disparities in educational achievement (Barrett & McPhail, 2023; Morais, 2002). Our results suggest that the explicit instructions for learning activities used by the teachers did not make visible the core requirements of good civic reasoning, which probably has to do with its weak classification as a practice and, therefore, unclear recognition rules. Lack of clarity about what civic reasoning should be leads to an imitation of practice that looks good on the surface but contributes little to learning. Future research can examine this idea by comparing successful and unsuccessful integration of generative AI in learning tasks across different domains, with a focus on clarity of the task requirements. On a very practical level, our study also indicates that if generative AI is used to represent public discourse for learning purposes, its use should be scaffolded with a focus on missing perspectives, corroboration, and the contextualization and conceptualization of AI-generated content.

Appendix A

Collected Data.

Lesson 1: gender-segregated schools. Lesson 2: desegregation busing.

| Type of material | Lesson 1: Class A | Lesson 1: Class B | Lesson 1: Class C | Lesson 1: Unidentified | Lesson 2: Class A | Lesson 2: Class B | Lesson 2: Class C | Total |
|---|---|---|---|---|---|---|---|---|
| AI chat and notes | 2 | 9 | 6 | 10 | 11 | 15 | 9 | 62 |
| Discussion recording | 5 | 2 | 3 | | 5 | 3 | 3 | 21 |

| Type of material | Class A | Class B | Class C | Total |
|---|---|---|---|---|
| Pretests | 20 | 14 | 20 | 54 |
| Posttests | 20 | 14 | 21 | 55 |

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was partly funded by the Swedish Institute for Educational Research (Grant Number: 2020-00009).