Abstract

Introduction

Radiology requisitions represent the most important and sometimes only instance of communication between referring physicians and radiologists prior to imaging and interpretation. Anecdotally and in the literature, the benefit of clinical information provided on requisitions has long been recognized by radiologists.1,2 Multiple systematic reviews have attempted to summarize and quantify the impact of clinical history on radiologists’ reports.3,4 Intuitively, a more thorough clinical history increases the sensitivity of the radiologist for the related finding (e.g. stating a history of a known lung nodule in the right upper lung would increase sensitivity for detecting nodules in that location).3,4 Understandably, there is a concurrent increase in the number of false positives (e.g. overlapping shadows misinterpreted as a lung nodule).3,4 Comparing results across studies is difficult, as they often use divergent methods for evaluating the quality of the final radiology report. Overall, recent systematic reviews have concluded that clinical histories have a positive effect on the radiology report.3,5

Despite knowledge of the relevant benefits, the clinical history and indications provided on imaging requisitions are often inadequate.6-12 Various authors have deemed requisitions inadequate if they lack relevant medical or surgical history (e.g. history of prior appendectomy on a requisition for a CT of the abdomen and pelvis),7,8,12 lack appropriate localization/lateralization (e.g. abdominal pain in the left lower quadrant), 12 lack a clear clinical question,7,8,12 lack association to an appropriate billing code 11 or are cloned from previous requisitions (e.g. in ICU patients receiving daily follow-up radiographs). 11 Though individual definitions of appropriateness or adequacy of requisitions are subjective, there is a clear pattern in the literature that the information provided to reading radiologists is often lacking in some form.

Radiologists have attempted to improve the quality of requisitions, with varying success.8,13-15 Recently, there has been an attempt to generate a more standardized set of appropriateness criteria for requisitions (‘RI-RADS’), which grades requisitions from ‘adequate’ to ‘deficient’ based on their inclusion of an impression, clinical findings and a diagnostic question.13,16 However, such efforts can potentially lead to friction with referring physicians.

Though machine learning is currently a hot topic for its potential to augment imaging interpretation, relatively little work has focused on machine learning to analyse imaging requisitions. Assad et al (2017) published a study focussing on using machine learning to categorize CT chest requisitions as appropriate or inappropriate. 17 They found success in doing so, with a Naïve Bayes classifier approach yielding an accuracy of 90% compared to manually assessed requisitions. 17

The purpose of this study was to determine if poor quality musculoskeletal radiograph requisitions could be identified automatically using machine learning and natural language processing techniques. Accurate and timely identification of such requisitions can allow for improvements in the workflow in the radiology department.

Methods

This retrospective study was conducted at a single tertiary care hospital following research ethics board approval at our institution.

Musculoskeletal (MSK) radiograph requisitions for studies performed at our institution between January 2018 and April 2021 were reviewed through the electronic medical record (EMR). Each requisition contains multiple digital fields. Only anonymized text from the ‘indication’ field of each requisition was retrieved. The ‘indication’ field allows the clinicians ordering the examination to input free text to provide the clinical history and specify the indication for the study. No patient specific data was included or collected.

Two board certified staff radiologists (with 5 years of experience) manually classified and analysed the requisition indications text and deemed each entry ‘appropriate’ or ‘inappropriate’ based on the ACR guidelines for radiograph indications. 18 If at least one appropriate indication for radiography was included, the entry was determined to be appropriate. To access the relevant criteria, the authors queried the cited ACR database by procedure (e.g. searching ‘elbow’ and then selecting ‘Radiography elbow’ for elbow radiographs). As an example, the ACR appropriateness criteria lists 10 distinct indications appropriate for elbow radiography, any of which was accepted if present in the requisition text. Some indications were also accepted in simplified form (e.g. ‘tumour’ or ‘mass’ accepted in place of ‘primary bone tumour’ or ‘soft tissue mass’). Ratings were decided by consensus, and the two radiologists conferred until a final decision was reached for each entry.

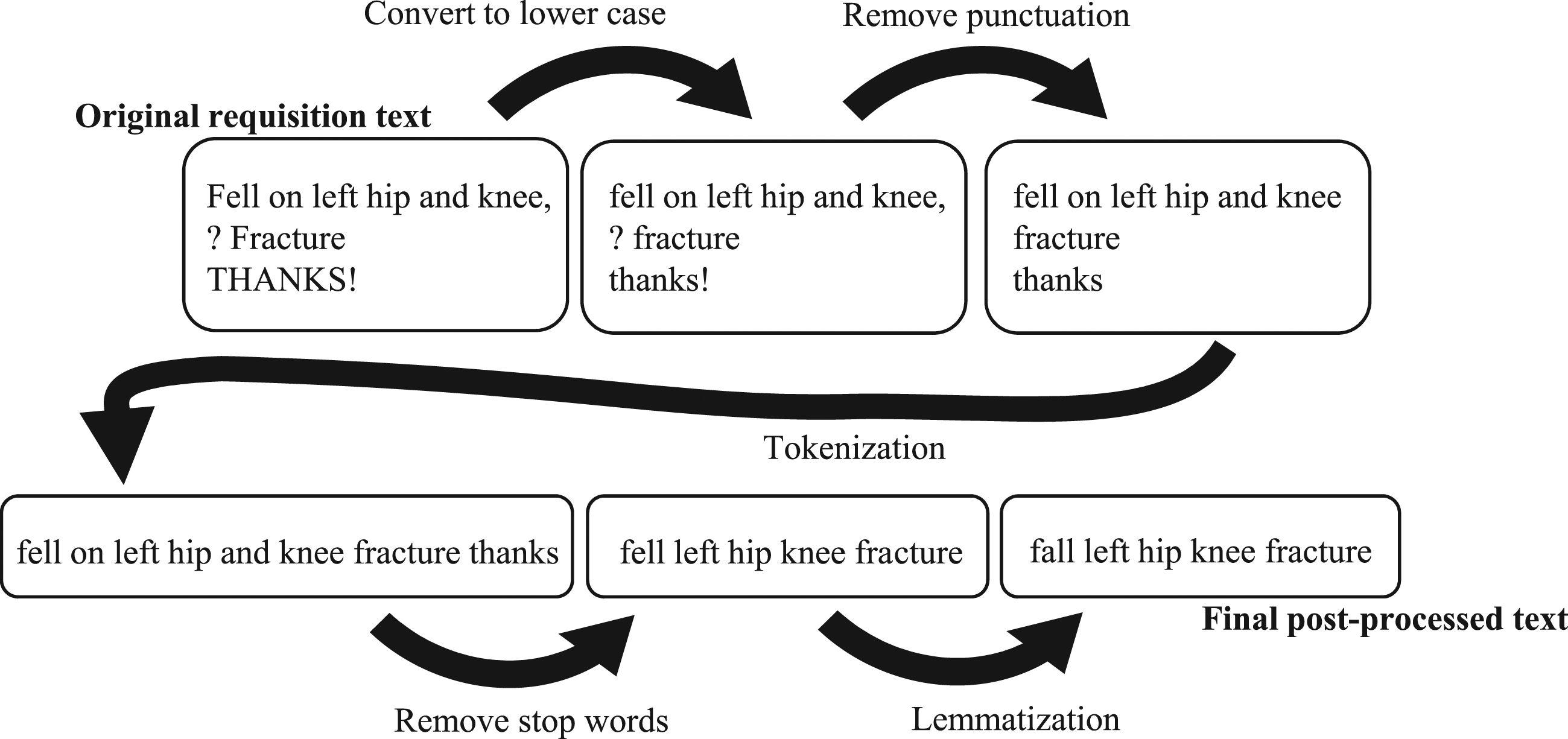

Next, entries were sorted randomly into training and testing sets according to an 80/20 ratio. Requisition text was then pre-processed as follows (see Figure 1): (1) Text was converted to lower case. (2) Punctuation was removed. (3) Tokenization (breaking sentences down to individual words) was performed. (4) Stop words were removed (words which can be removed without a significant impact on the meaning of the sentence e.g. ‘a’, ‘the’ and ‘please’). Any words contained in the name of the procedure were also removed from the requisition at this point. (5) Lemmatization (converting words to a single base form i.e. ‘go’ instead of ‘went’, ‘going’ and ‘goes’) was performed. (6) The residual word sets were transformed into a bag-of-words set of vectors Example of text pre-processing before application of the machine learning algorithm.
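The pre-processing steps can be illustrated with a minimal sketch. This is not the code used in the study: the study used NLTK, whereas the stop-word set and lemma table below (`STOP_WORDS`, `LEMMAS`) are tiny hypothetical stand-ins so that the example runs with the Python standard library alone. The function names are also assumptions made for illustration.

```python
import string
from collections import Counter
from typing import List

# Hypothetical, deliberately tiny stand-ins for a full stop-word list and
# lemmatizer (the study used NLTK; these tables are illustrative only).
STOP_WORDS = {"a", "an", "the", "of", "to", "for", "and", "in", "on", "please"}
LEMMAS = {"went": "go", "going": "go", "goes": "go", "fell": "fall"}

# Map every punctuation character to a space so that words joined by
# punctuation (e.g. "fall/fracture") are not fused together.
_PUNCT_TO_SPACE = str.maketrans(string.punctuation, " " * len(string.punctuation))


def preprocess(indication: str, procedure_name: str) -> List[str]:
    """Apply steps (1) to (5) to the free text of one requisition."""
    text = indication.lower()                      # (1) lower case
    text = text.translate(_PUNCT_TO_SPACE)         # (2) remove punctuation
    tokens = text.split()                          # (3) tokenize on whitespace
    procedure_words = set(procedure_name.lower().split())
    tokens = [t for t in tokens                    # (4) drop stop words and
              if t not in STOP_WORDS               #     procedure-name words
              and t not in procedure_words]
    return [LEMMAS.get(t, t) for t in tokens]      # (5) lemmatize


def bag_of_words(tokens: List[str]) -> Counter:
    """Step (6): count how often each remaining word occurs."""
    return Counter(tokens)


tokens = preprocess(
    "Please rule out fracture of the left elbow, fell yesterday.",
    "Radiography elbow",
)
print(tokens)  # ['rule', 'out', 'fracture', 'left', 'fall', 'yesterday']
```

A requisition whose text consists only of stop words or the procedure name (for example, ‘Elbow.’ on an elbow radiograph) yields an empty token list in this sketch.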

A Multinomial Naïve Bayes algorithm was chosen based on its demonstrated previous success with imaging requisitions. 17 Default parameters were used: the alpha (Laplace smoothing) parameter was left at 1, and the option to learn class prior probabilities from the training data was left as True. The model was fitted on the training set and assessed on the test set. Results were described in terms of accuracy, false positive and false negative rates.
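To make the classifier settings concrete, the following is a minimal standard-library sketch of a multinomial Naïve Bayes classifier with Laplace smoothing (alpha of 1) and class priors learned from the training data. It is an illustration of the technique, not the scikit-learn implementation used in the study; the class name, the `split_80_20` helper and the toy requisitions are all hypothetical.

```python
import math
import random
from collections import Counter


def split_80_20(rows, test_fraction=0.2, seed=0):
    """Randomly split rows into (train, test); the seed is an assumption."""
    rows = list(rows)
    random.Random(seed).shuffle(rows)
    n_test = int(round(len(rows) * test_fraction))
    return rows[n_test:], rows[:n_test]


class MultinomialNaiveBayes:
    """Illustrative multinomial Naive Bayes over lists of tokens."""

    def __init__(self, alpha=1.0):
        self.alpha = alpha  # Laplace smoothing parameter

    def fit(self, token_lists, labels):
        self.classes = sorted(set(labels))
        self.vocab = {t for tokens in token_lists for t in tokens}
        # Class priors are estimated from the training labels.
        label_counts = Counter(labels)
        self.log_prior = {
            c: math.log(label_counts[c] / len(labels)) for c in self.classes
        }
        # Per-class word counts across all training requisitions.
        self.word_counts = {c: Counter() for c in self.classes}
        for tokens, label in zip(token_lists, labels):
            self.word_counts[label].update(tokens)
        self.totals = {c: sum(self.word_counts[c].values()) for c in self.classes}
        return self

    def _log_likelihood(self, token, c):
        # Smoothed estimate of P(token | class).
        numerator = self.word_counts[c][token] + self.alpha
        denominator = self.totals[c] + self.alpha * len(self.vocab)
        return math.log(numerator / denominator)

    def predict(self, tokens):
        # Words never seen in training are skipped, as with a fixed vocabulary.
        known = [t for t in tokens if t in self.vocab]
        scores = {
            c: self.log_prior[c] + sum(self._log_likelihood(t, c) for t in known)
            for c in self.classes
        }
        return max(scores, key=scores.get)


# Toy, made-up training data (already pre-processed into tokens).
X = [["fall", "fracture"], ["pain", "trauma"], ["fracture", "trauma"],
     [], ["xray"], ["asap", "xray"]]
y = ["appropriate"] * 3 + ["inappropriate"] * 3

model = MultinomialNaiveBayes(alpha=1.0).fit(X, y)
print(model.predict(["fracture"]))
print(model.predict(["xray"]))
```

In this sketch, a requisition made up entirely of unseen words is scored on the class priors alone, which is one way to picture why rarely seen wording is harder to classify.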

The following software and packages were used in the design and testing: Python 3.8.5 (www.python.org), scikit-learn version 1.0.1 (scikit-learn.org), pandas version 1.32 (pandas.pydata.org) and NLTK (Natural Language Toolkit) version 3.6.5 (www.nltk.org).

Results

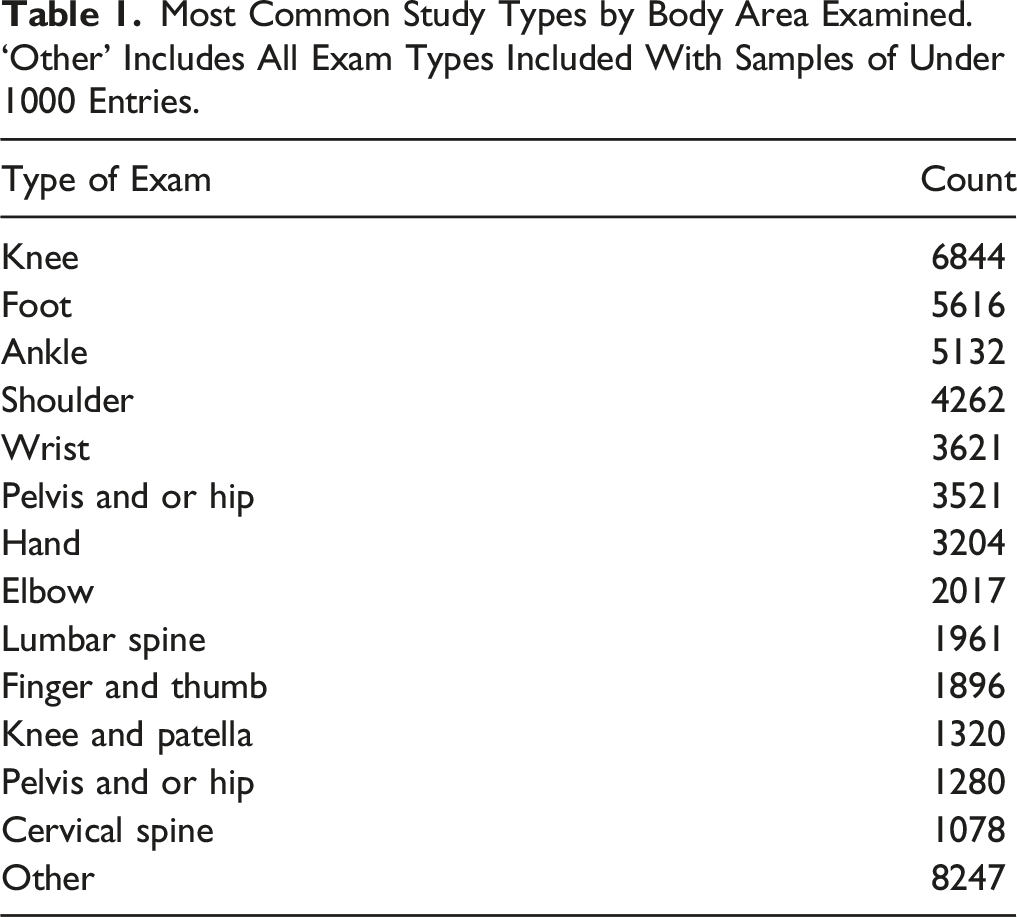

Most Common Study Types by Body Area Examined. ‘Other’ Includes All Exam Types Included With Samples of Under 1000 Entries.

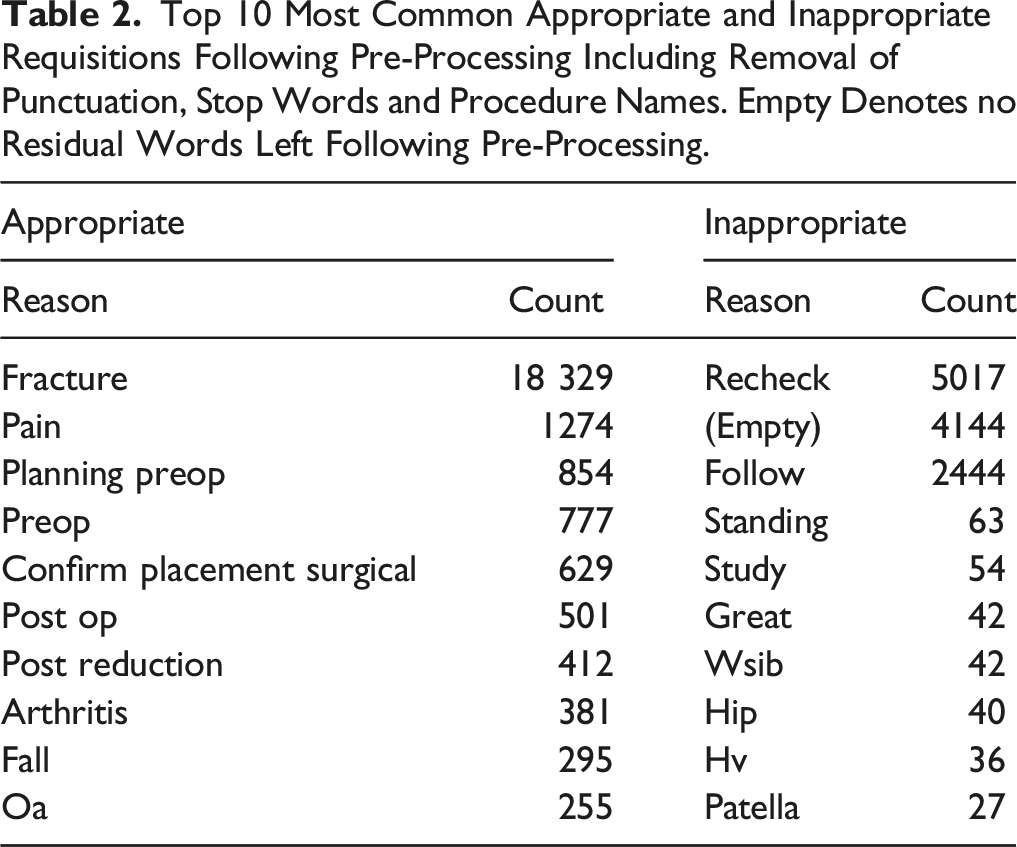

Top 10 Most Common Appropriate and Inappropriate Requisitions Following Pre-Processing Including Removal of Punctuation, Stop Words and Procedure Names. Empty Denotes no Residual Words Left Following Pre-Processing.

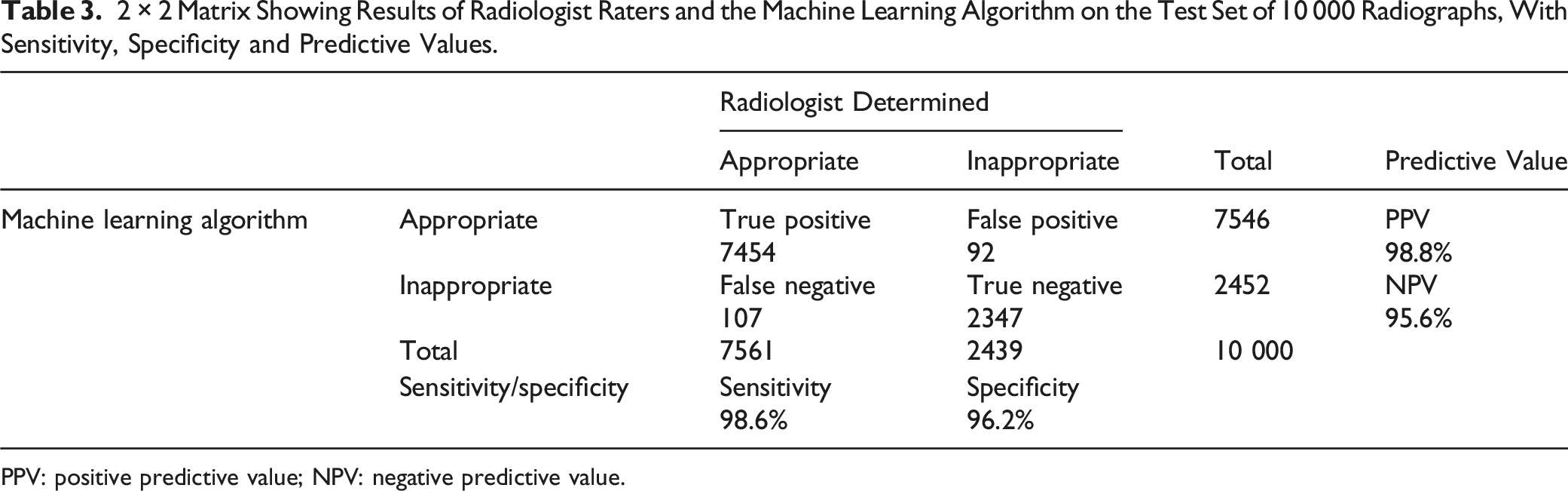

2 × 2 Matrix Showing Results of Radiologist Raters and the Machine Learning Algorithm on the Test Set of 10 000 Radiographs, With Sensitivity, Specificity and Predictive Values.

PPV: positive predictive value; NPV: negative predictive value.

The Multinomial Naïve Bayes model classified requisitions with an accuracy of 98%. In the test set, 107 of 7561 (1.4%) appropriate requisitions were incorrectly flagged as inappropriate, and 92 of 2439 (3.8%) inappropriate requisitions were not flagged.
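The reported rates can be reproduced from the four counts given above. The short sketch below does this arithmetic; it assumes that a flagged (inappropriate) requisition is treated as the positive class, which is an assumption of this example rather than something stated in the text, and the function name is hypothetical.

```python
def summarize(n_appropriate, appropriate_flagged, n_inappropriate, inappropriate_missed):
    """Derive summary rates from test-set counts.

    Assumption: 'inappropriate' (flagged) is the positive class.
    """
    tp = n_inappropriate - inappropriate_missed   # inappropriate, flagged
    fn = inappropriate_missed                     # inappropriate, not flagged
    fp = appropriate_flagged                      # appropriate, wrongly flagged
    tn = n_appropriate - appropriate_flagged      # appropriate, not flagged
    total = tp + fn + fp + tn
    return {
        "accuracy": (tp + tn) / total,
        "false_positive_rate": fp / (fp + tn),
        "false_negative_rate": fn / (fn + tp),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
    }


# Counts taken from the test-set results reported in the text.
stats = summarize(
    n_appropriate=7561,
    appropriate_flagged=107,
    n_inappropriate=2439,
    inappropriate_missed=92,
)
for name, value in stats.items():
    print(f"{name}: {value:.3f}")
```

With these counts, the accuracy, false positive rate and false negative rate round to the 98%, 1.4% and 3.8% reported above.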

Discussion

Across a large data set of 50 000 MSK radiograph requisitions, we found that only 75.5% were appropriate in terms of clinical indication by ACR criteria. Using a Naïve Bayes algorithm, we achieved an accuracy of 98% in identifying inappropriate requisitions.

Among the requisitions that were classified inaccurately, we found that the model tended to struggle with nonsensical requisitions, such as random letters, and with requisitions whose wording occurred very rarely in the data set. In other words, it needed to have seen some examples of the words used in a given requisition to be able to classify it well. Practically applied, we might expect even better results as the model is trained on a greater number of requisitions.

Notably, we chose to assign appropriateness based on ACR criteria, rather than the recently published RI-RADS criteria, because our study focused on requisitions for radiographs. At many institutions (including our own), radiograph requisitions virtually never include an impression, clinical findings and a diagnostic question, all of which are necessary to earn an ‘adequate’ score according to RI-RADS. 16 In the future, RI-RADS may prove a more useful set of criteria for assessing requisitions for CT or MRI studies.

In addition to properly defining adequacy, the utility of flagging requisitions as appropriate or inappropriate hinges on whether there is a benefit to patient care. In the literature, this is often measured by comparing the quality of a radiology report with and without provided clinical history. For radiographic interpretation, the existing literature is largely 25–35 years old,19-24 with multiple more recent systematic reviews.3,5 In a recent publication, Yapp et al recommended that future studies use a ‘free response’ method of reporting, where radiologists are asked to identify all suspicious areas and assign each one a confidence rating. Correct answers are rewarded, and incorrect answers are penalized. This method minimizes the tendency to ‘game the system’ when readers are asked only to identify a single area of concern, and reduces confusion when a study is flagged as abnormal for the wrong reasons (e.g. the reader identified four lung nodules, but only one is a true finding).3,25 Older studies tended not to use this method, which is a notable limitation of their analyses.19-24

A single more recent study utilized the free response method for data collection and showed that a clinical history that increases the perceived prevalence of the abnormality in the imaged population increases overcall in radiologists. 26 In their example, radiologists were asked to screen for and identify lung nodules in patients with either no clinical history, a history of ‘visa application’ or a history of known cancer. The only statistically significant result was a decrease in specificity for nodules found in the known cancer group (.54) when compared to the visa application group (.7) (W = −41,

It should be noted that the impact of clinical history across all studies is rather difficult to quantify in a controlled environment. When we imagine a useful clinical history, what comes to mind is not ‘how likely is this person to have a lung nodule?’ in a study where we already know lung nodules are the only possible finding. Instead, history of trauma, location of pain, symptoms, time course, known pre-existing diagnoses or suspected clinical diagnoses are details that are important to frame findings appropriately, rather than simply to help identify them in the first place. This is admittedly difficult to capture effectively in the necessary strictly controlled environment of multi-observer studies.

In their recent and comprehensive systematic review, Castillo et al 5 found that, generally, clinical history has been used to increase interpretation accuracy, reporting confidence and clinical relevance of reports. 5 The authors were similarly critical of Littlefair et al (2016) for inadequately simulating a realistic clinical scenario.5,26

Our study has a few limitations. Requisitions were collected from a single institution, and regional imaging ordering tendencies could therefore influence our analysis. Only MSK radiograph requisitions were used, and a separate analysis would be necessary to apply the algorithm to other modalities. Testing the algorithm with external data would also help prove generalizability of our approach. Finally, time will tell whether the machine learning approach is vulnerable to gaming, 25 which in this context is a phenomenon where users tend to change their input minimally or non-meaningfully in order to circumvent a pop-up or warning.

We speculate that this machine learning approach to requisition analysis could prove useful in the clinical setting. For instance, such an algorithm could be integrated into the EMR to analyse and flag inappropriate requisitions as they are entered and submitted electronically. EMR-integrated automated reminders have been used to varying success in the literature. Initial assessments of the effectiveness of clinical reminder systems date back over 25 years, including a meta-analysis of randomized control trials that looked favourably upon electronic reminders with an odds ratio of improving preventive healthcare practice of 1.77. 27 Reminders are commonly employed to prevent errors in prescription of medication and have been used to avoid adverse drug interactions,28,29 avoid inappropriate dosage, 29 and to ensure orders are not likely to be inputted for the incorrect patient. 30 Although surveys indicate that physicians sometimes ignore alerts in patient charts, 31 notable successes have been seen in reminder systems that focus on improving documentation, rather than those that aim to trigger an intervention. For example, an automated reminder system for documenting the placement of arterial lines by anaesthesiologists in the operating room improved the rate of documentation – with 88% of procedures documented in the reminder group and only 75% in the control group (

In our use case, ordering physicians would be given an opportunity to modify their requisitions if they are flagged as inappropriate at the point of entry, encouraging clinicians to provide adequate clinical indications. In one example from the literature, Gupta et al. (2016) adopted an EMR-based decision support system based on ACR appropriateness criteria and assessed its performance over a five-year period. 25 At the end of the assessment period, the proportion of ACR-inappropriate orders had dropped to less than 3%, with approximately 63% of these exams actually completed (the remainder were cancelled). 25

Would an EMR alert that ‘rejects’ inappropriate requisitions inadvertently discourage clinicians from ordering necessary imaging or contribute to interpersonal conflict? Little data is available on the proportion of study requests that are declined by the radiologist or triage nurse due to inadequate clinical information. A handful of studies in the United States examined the utility of ‘Radiology Benefit Managers’, who gatekeep imaging study requests and have the ability to accept, deny or direct requests to less-expensive or more appropriate modalities, and largely concluded that they are costly to radiology departments without significantly changing the studies performed.36,37 These studies are limited by their intimate links to the U.S. medical system, however, as issues related to insurance coverage and payment are relatively unique to the American setting. In any case, implementation of an electronic reminder system would aim to encourage clinicians to provide more appropriate requests, and any reduction in frivolous requests would be an added benefit.

In a Canadian study of complaints submitted to a radiology department, a minority of complaints involved scheduling or inappropriate delays for imaging. 38 Among these, Robins (2014) actually attributed miscommunications to the lack of clinical history – without knowledge of the clinical scenario, studies were sometimes inappropriately triaged, resulting in longer waiting times or changes to the exam modality. 37

In summary, our machine learning algorithm is able to accurately determine whether MSK radiograph requisitions are appropriate or inappropriate based on the provided clinical indication. There is an opportunity to implement such an algorithm to create EMR-based reminders to prompt ordering physicians to improve requisitions. We hope that such a system will benefit patient care by improving the clinical history available to radiologists prior to imaging interpretation.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.