Abstract

Purpose:

Postgraduate residency programs in Canada are transitioning to a competency-based medical education (CBME) system. Within this system, resident performance is documented through frequent assessments that provide continual feedback and guidance for resident progression. An area of concern is the perception by faculty of added administrative burden imposed by the frequent evaluations. This study investigated the time spent in the documentation and submission of required assessment forms through analysis of quantitative data from the Queen’s University Diagnostic Radiology program.

Methods and Materials:

Data regarding the time taken to complete Entrustable Professional Activity (EPA) assessments were collected from 24 full-time and part-time radiologists over a period of 18 months. The data were analyzed using SPSS to determine mean completion time by individual, by department, and by experience with the assessment process.

Results:

The average time taken to complete an EPA assessment form was 3 minutes and 6 seconds. Assuming 3 completed EPA assessment forms per week for each resident (n = 12) and equal distribution among all staff, this averaged out to an additional 18 minutes of administrative burden per staff member over a 4-week block.

Conclusions:

This study investigated the perception by faculty of additional administrative burden for assessment in the CBME framework. The data provided quantitative evidence of the administrative burden for the documentation and submission of assessments. The data indicated that the added administrative burden may be reasonable given the mandate for CBME implementation and the advantages of adoption for postgraduate medical education.

Introduction

The Royal College is leading a national multi-year change initiative in specialty medical education. Competency-based medical education (CBME) is now being adopted into all medical postgraduate programs across Canada.1,2 The Queen’s University Diagnostic Radiology Residency Program was an early adopter of this model, with implementation of CBME in July 2017.

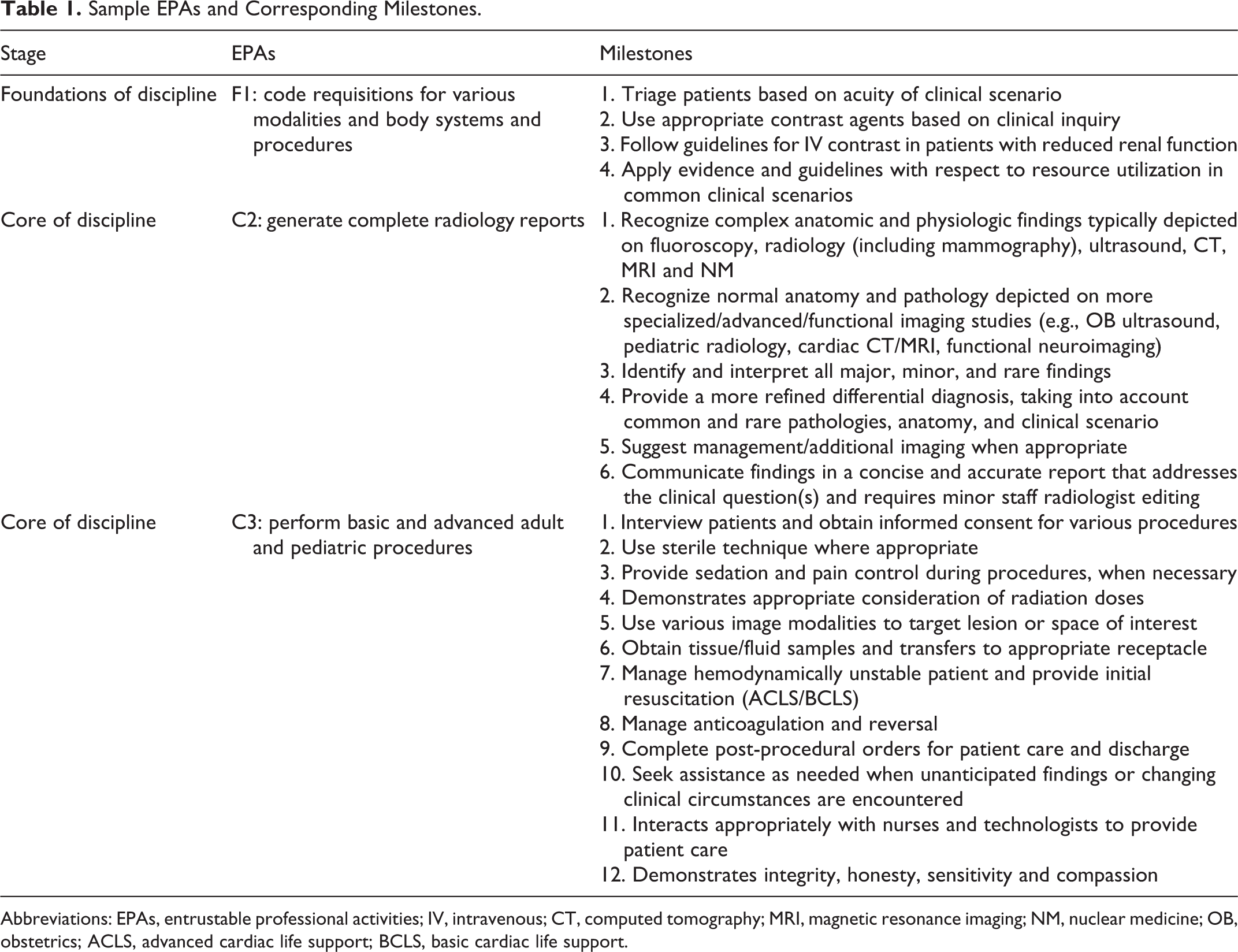

CBME is defined by the Royal College of Physicians and Surgeons of Canada (RCPSC) as “an outcomes-based approach to the design, implementation, assessment and evaluation of a medical education program using competencies as the organizing framework.” 3 A curricular structure based on CanMEDS roles is articulated into a framework of Entrustable Professional Activities (EPAs) and developmental milestones. Formative assessments that reflect professional activities and document performance on explicit competencies are integrated into clinical work. Queen’s Department of Radiology developed its own EPA curricular structure (Table 1).

Table 1. Sample EPAs and Corresponding Milestones.

Abbreviations: EPAs, entrustable professional activities; IV, intravenous; CT, computed tomography; MRI, magnetic resonance imaging; NM, nuclear medicine; OB, obstetrics; ACLS, advanced cardiac life support; BCLS, basic cardiac life support.

The adoption of CBME has afforded many advantages to residents. 4 The competency-based model defines clear sequential stages for specialty training as well as stage-specific objectives for learning progression. 4 The assessment framework integrates multiple assessments of resident performance for specific competencies with both direct and indirect observation in clinical settings. 5 A variety of faculty participate in the assessment process, allowing for diverse perspectives on performance. 6 The model has a mechanism for feedback that includes mandatory comments on assessments for areas of concern. Additionally, the frequency of assessment provides continuous feedback for residents, allowing them to adjust their learning trajectory to address areas needing improvement. 7 All faculty received standardized training, which included a comprehensive review of Competence by Design (CBD) and the shift to a new framework for assessment.

The implementation of CBME is facilitated through the Queen’s electronic portfolio system, Elentra. This is an Integrated Teaching and Learning Platform™ created by an international consortium of medical schools. The platform allows access, interaction, and management of information within a unified online environment. The system allows evaluators to easily access the assessment platform through their computer, tablet, or mobile device.

Several areas of concern are commonly cited against the implementation of CBME.8-11 One such concern relates to the added administrative requirements with respect to assessment 10: the additional faculty training that the adoption of CBME requires 8; the feasibility of evaluating every resident on every proposed milestone 11; and the burden of documenting more frequent assessment through detailed online scoring systems. 9 Overall, there is apprehension that faculty may spend more time on the administration of CBME than on ensuring high-quality learning encounters for the residents. 8

This study investigates the administrative burden for documenting resident performance on frequent assessments. It specifically analyzes data regarding the time taken to complete the online assessment forms. It should be noted that this study focuses on the documentation time only, and not the time allotted to perform case reviews or procedures that precede the completion of the online assessment.

Methods

We investigated the time to complete EPA assessments within the Department of Diagnostic Radiology at Queen’s University in Kingston, Ontario, Canada, between July 1, 2019 and December 31, 2020. There are currently 12 CBME residents within the Radiology Program at Queen’s. Data points were collected from 24 radiologists and included radiology subspecialty (abdominal, cardiothoracic, interventional, musculoskeletal, neuroradiology, nuclear medicine, pediatric radiology, or women’s imaging), employment status (full time or part time), total assigned assessments, completed assessments, pending assessments, assessments in progress, deleted assessments, and average completion time per faculty member. A total of 755 completed assessments were included in the analysis. New hires (defined as new faculty in their first year) were also noted. Assessment scores and comments were not included in our data analysis.

Data were entered into SPSS Statistics (Statistical Package for the Social Sciences) for analysis. Descriptive statistics and comparisons of means were used in the analysis of our data.

Results

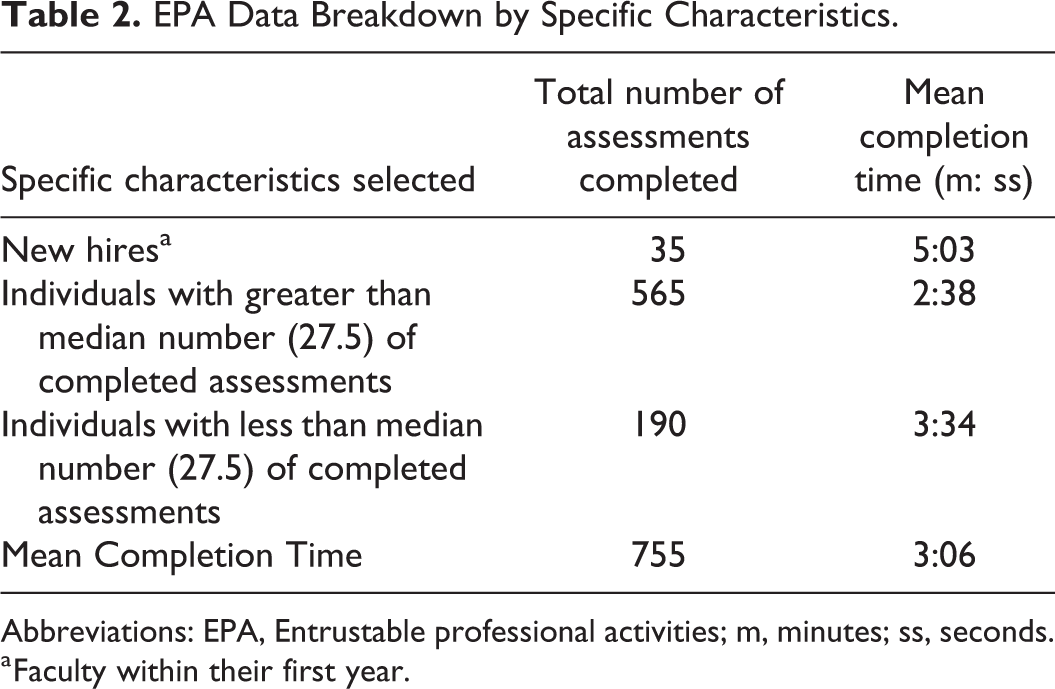

The overall average time taken to complete EPA assessments by all radiology faculty (N = 24) was 3 minutes and 6 seconds (95% CI: 2:29-3:43), and the median number of assessments completed per faculty member was 27.5. The average time for new hires (N = 3) was 5 minutes and 3 seconds (95% CI: 1:13-6:32) (Table 2).

Table 2. EPA Data Breakdown by Specific Characteristics.

Abbreviations: EPA, Entrustable professional activities; m, minutes; ss, seconds.

a Faculty within their first year.

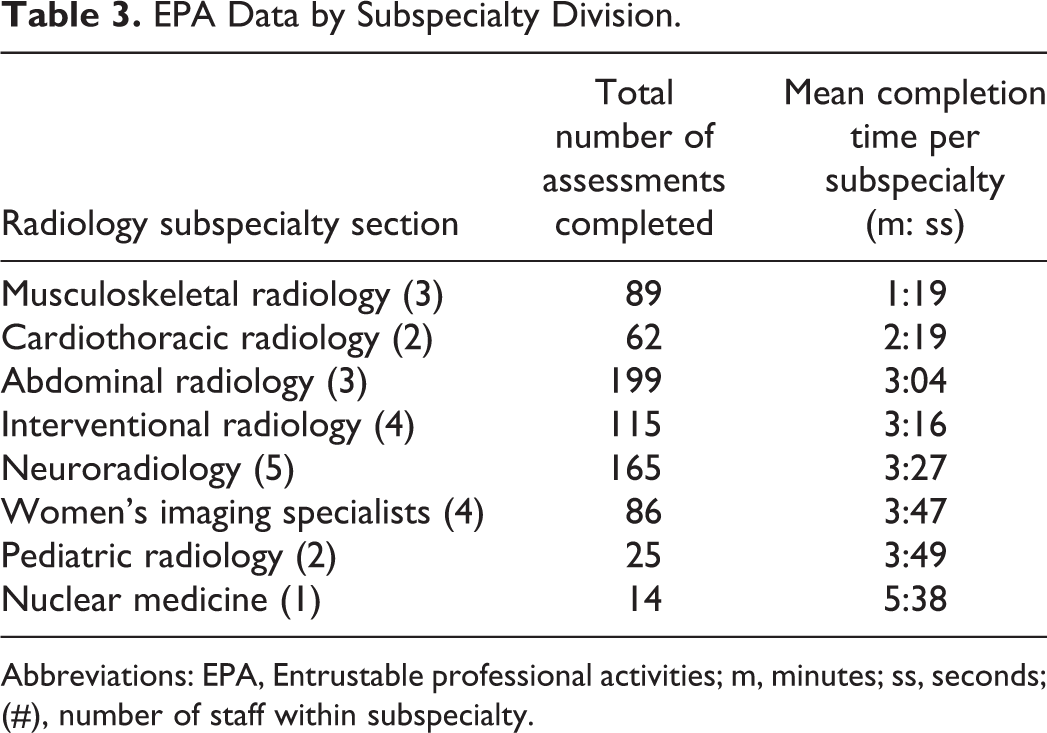

Broken down by subspecialty section, musculoskeletal (MSK) radiologists required the least time (1:19); followed by cardiothoracic (2:19), abdominal (3:04), and interventional (3:16) radiologists; neuroradiologists (3:27); women’s imaging specialists (3:47); pediatric radiologists (3:49); and finally, nuclear medicine specialists (5:38) (Table 3).

Table 3. EPA Data by Subspecialty Division.

Abbreviations: EPA, Entrustable professional activities; m, minutes; ss, seconds; (#), number of staff within subspecialty.

When times were analyzed around the median number of completed assessments, those who completed fewer than 27.5 assessments averaged 3 minutes and 34 seconds per assessment (95% CI: 2:30-4:37), and those who completed more than 27.5 assessments averaged 2 minutes and 38 seconds (95% CI: 1:55-3:21). An independent-samples t test found no significant difference between these 2 means (p = 0.125).

Discussion

A major concern with the implementation of CBME is the added administrative burden for faculty in utilizing the CBME assessment framework. This study investigated the completion times for assessments from 24 radiology faculty at Queen’s University.

Despite our small sample size, the data show a general trend toward faster completion times with increasing familiarity and repetition, although the difference between the groups above and below the median did not reach statistical significance. Those with the most experience (more assessments completed) generally had faster completion times than 1) new hires (whose grouped average was about 2 minutes higher than the overall average) and 2) those who completed fewer assessments than the median (whose average was 28 seconds above the overall average). Shorter times might be explained by familiarity with the assessment process.

There was variability in assessment time between subspecialty sections, with MSK having the fastest average time (1:19) and nuclear medicine the longest (5:38). It should be noted that the nuclear medicine average was based on only one nuclear medicine specialist and was therefore omitted from the grouped departmental statistics.

Our study demonstrates that, on average, it took approximately an additional 3 minutes to complete an assessment. However, this does not include the actual time taken to perform/observe the procedure or case review, only the administrative time of completing the actual EPA assessment form. It should also be noted that these assessment forms are being completed 1-3 times per week with each resident.

At the maximum of 3 assessments per week over a 4-week rotation block, and at approximately 3 minutes per assessment, each resident generates about 36 minutes of additional administrative time, shared among department faculty. This load of 36 minutes per resident (N = 12), distributed among 24 faculty members, equates to an additional 18 minutes of administrative time per faculty member per block. There is no standardized expected amount of time to perform assessment duties per resident, and as a result it is difficult to draw conclusions on the significance of this finding. It has also been noted that some staff complete significantly more EPA assessments than others, which would lead to a proportional increase in their administrative burden. This imbalance could potentially be due to 1) availability of the case scenario and 2) staff interest in the assessment process and/or the provision of more useful feedback. However, an average of 18 additional minutes per staff member over a 4-week period seems quite reasonable.
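The estimate above can be reproduced with a short calculation. The following is an illustrative sketch only: the variable and function names are ours, and it relies on the same assumption stated in the text, namely that assessments are distributed equally among all faculty. It also shows the effect of using the measured mean of 3 minutes and 6 seconds instead of the rounded 3 minutes.

```python
# Worked version of the workload estimate, using figures reported in this study.
# Assumption (as in the text): assessments are spread evenly across all faculty.

MEAN_SECONDS_PER_FORM = 3 * 60 + 6   # measured mean completion time, 3 min 6 s
ROUNDED_MINUTES_PER_FORM = 3         # rounded value used in the Discussion
FORMS_PER_WEEK = 3                   # upper end of the 1-3 per week range
WEEKS_PER_BLOCK = 4
RESIDENTS = 12
FACULTY = 24


def minutes_per_faculty(minutes_per_form: float) -> float:
    """Documentation minutes per faculty member over one rotation block."""
    per_resident = minutes_per_form * FORMS_PER_WEEK * WEEKS_PER_BLOCK
    return per_resident * RESIDENTS / FACULTY


rounded = minutes_per_faculty(ROUNDED_MINUTES_PER_FORM)
unrounded = minutes_per_faculty(MEAN_SECONDS_PER_FORM / 60)
print(f"rounded: {rounded:.1f} min, unrounded: {unrounded:.1f} min")
```

With the rounded 3 minutes per form, the result is the 18 minutes per block quoted above; with the unrounded mean, it is about 18.6 minutes, which does not change the interpretation.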

Prior to the implementation of CBME, In-Training Evaluation Reports (ITERs) were used for evaluation. These were summative assessments that occurred at the end of a block rotation. ITERs were designed as a holistic evaluation of resident performance over a period of time. In contrast, the CBME assessment framework is more granular, based on multiple assessments of resident performance for specific competencies at a specific point in time. The frequency of assessment is greater within the CBME model, and therefore would require more overall faculty time than the 1-time completion of an ITER.

There are several limitations to this study. It covered only one department within the entire medical school and hence has a small sample size. It would be interesting to evaluate whether the findings from this study hold true across departments at Queen’s University, or across radiology departments at other schools, once CBME is fully implemented into radiology programs nationwide. Years of practical experience in radiology is an omitted factor that may warrant further study, looking beyond form completion to overall assessment time. More years of practice experience typically mean more experience teaching and working with residents, potentially leading to faster overall assessment times. Finally, it should also be noted that the specific EPAs used for this analysis were developed by Queen’s University and may not be identical to those ultimately approved by the RCPSC for rollout in 2022. However, the overall CBME framework, target milestones, and model of assessment should generally remain the same.

The focus of this study was on actual documentation time. However, it is not known if the new assessment model requires more time from faculty to integrate the milestone framework into their own mental model for assessment. Are the assessors utilizing the milestone framework as a guideline during their case reviews or observation of procedures? For example, C2 EPA (Generate Complete Radiology Reports) lists 6 milestones that require assessment on a developmental scale (see Table 1). These milestones may already be tacit in the assessor’s evaluation framework and are easily transferred into documentation. For new faculty, it may take more time to integrate the milestones as assessment criteria.

Additional time that may not be captured in these data includes time taken to consider resident performance before opening the assessment form, as well as time spent discussing performance with the resident in person and/or coaching the trainee through weaknesses identified through the assessment process. Conversely, assessment times captured electronically may be inflated by “idle time,” whereby the evaluator opens the assessment and leaves it open while their attention is elsewhere. Additionally, the extent and depth of assessor comments could potentially affect completion time; however, comment-specific data were not analyzed as part of the present study.

Additional sections on the assessment forms that require faculty feedback include Entrustment, Professionalism, Next Steps, and Global Feedback, all of which will entail further reflection by the assessor prior to actual completion of the form (Figure 1).

Figure 1. Example of some sections in a supervisor form for EPA C2: Generate Complete Radiology Reports. Flags in the “needs attention” milestone section indicate that a text box will pop up if this scale is checked for any milestone. Flags in the “yes” concerns section indicate that a text box will pop up if this scale is checked for any item.

Conclusion

One of the major concerns from faculty with the adoption of a CBME model is the perceived additional administrative burden specifically related to assessment. In this study, we show that the actual additional burden for completion of an assessment averages out to approximately 3 minutes per assessment. This completion time will vary based on familiarity with the process and comfort using the electronic input system.

However, there remain questions as to the potential increase in actual faculty teaching and assessment time that results from the assessments being more granular. These new assessments provide detailed milestones that guide the assessment process. The overall interaction time is a consideration but is not within the scope of this paper. In terms of actual administrative burden for documentation of resident performance, this study found that, within the Queen’s Radiology program, there is an addition of approximately 3 minutes per assessment, which averages to an additional 18 minutes per staff member over a 4-week block.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.