Abstract

Social media platforms such as Pinterest are a popular medium for locating and consuming health and mental health information, as well as educational resources to assist struggling learners. Despite parents and educators being frequent consumers of education-related information on Pinterest, no studies to date have explored the accuracy of intervention information for dyslexia on Pinterest-linked web pages, meaning that the extent to which it aligns with evidence-based practice and the science of reading is unclear. This study reviewed online information about interventions for dyslexia from 41 Pinterest-linked web pages to evaluate accountability, presentation, alignment with evidence-based practice, and readability using a set of standardized criteria. The quality of intervention information was generally poor, with websites meeting, on average, less than 10% of the criteria for alignment with evidence-based practice. Further, most information was published by unspecified authors or authors without formal experience providing evidence-based interventions for dyslexia. Most sites also neglected to reference their sources or recommend follow-up with a professional. These findings suggest that psychologists should be steering educators away from Pinterest as a resource and towards more reliable websites. Possibilities for future research and practical implications for school psychologists are discussed.

Introduction

Although search engines such as Google have traditionally been the primary means of accessing mental health information online, there has been a shift toward social media platforms for this purpose (Zhao & Zhang, 2017). Visual-based platforms, such as Pinterest, offer accessible, appealing, and efficient ways to engage with information, particularly benefiting users who prefer visual content or find traditional text-heavy resources less approachable (Mackert et al., 2009). Notably, major categories of Pinterest users include mothers and teachers (Cleaver & Wood, 2018; Hall et al., 2018), with recent statistics suggesting that 80% of all mothers who use the internet also use Pinterest (Omnicore, 2021). Moreover, an estimated 87% of elementary teachers and 62% of secondary teachers have been reported to use Pinterest for professional purposes such as enhancing their professional activities and supplementing the needs of their students (Opfer et al., 2016).

There is a wealth of content aimed at educators regarding dyslexia on Pinterest; however, it is unclear whether this content reflects current approaches to reading instruction and intervention based on the science of reading. Although research supports direct systematic instruction in phonics (e.g., phonemic awareness, letter knowledge, speeded practice, structured reading practice; see Table 1 for a list of essential components) as effective for improving literacy in both typical and struggling readers (McArthur et al., 2018; Walkey, 2024), there has been minimal research examining the alignment between evidence-based interventions for dyslexia and the information found online, particularly on platforms like Pinterest. However, research examining online information for other disorders (e.g., ADHD) has found that this information is frequently inconsistent with evidence-based recommendations and clinical guidelines for intervention (see King et al., 2021).

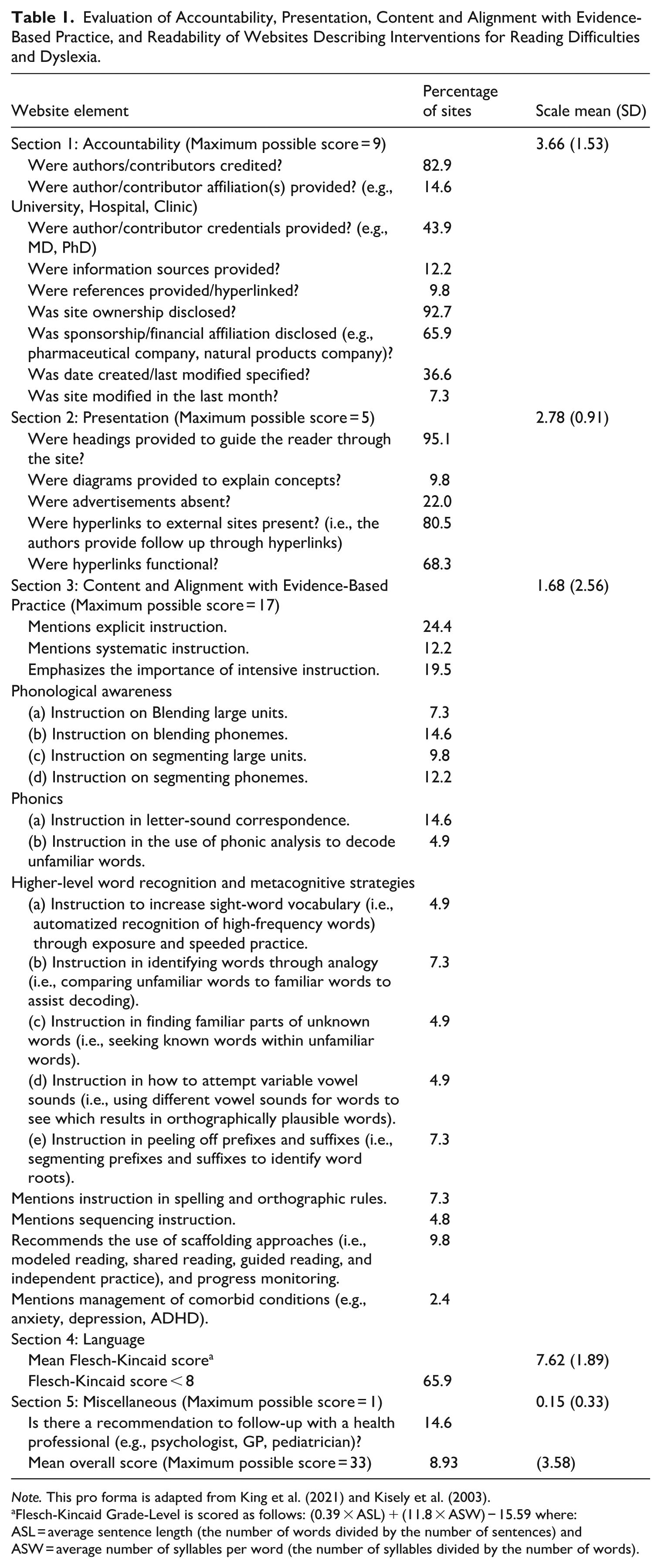

Evaluation of Accountability, Presentation, Content and Alignment with Evidence-Based Practice, and Readability of Websites Describing Interventions for Reading Difficulties and Dyslexia.

Note. This pro forma is adapted from King et al. (2021) and Kisely et al. (2003).

Flesch-Kincaid Grade-Level is scored as follows: (0.39 × ASL) + (11.8 × ASW) − 15.59 where: ASL = average sentence length (the number of words divided by the number of sentences) and ASW = average number of syllables per word (the number of syllables divided by the number of words).
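The formula in the note above can be illustrated with a minimal Python sketch. The function name and the example counts are hypothetical, and the word, sentence, and syllable counts are assumed to be supplied by the caller; the study does not describe the tool used to obtain them.

```python
def flesch_kincaid_grade(words: int, sentences: int, syllables: int) -> float:
    """Return the Flesch-Kincaid Grade Level from raw text counts."""
    if words <= 0 or sentences <= 0:
        raise ValueError("words and sentences must be positive")
    asl = words / sentences   # ASL: average sentence length (words per sentence)
    asw = syllables / words   # ASW: average number of syllables per word
    return (0.39 * asl) + (11.8 * asw) - 15.59


# Hypothetical passage (not from the study): 100 words, 5 sentences, 150 syllables.
grade = flesch_kincaid_grade(words=100, sentences=5, syllables=150)
print(round(grade, 2))  # 9.91
```

In this illustrative case, ASL = 20 and ASW = 1.5, which gives a score just under grade 10.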

With this in mind, the current study conducted a content analysis of dyslexia-related content on Pinterest to evaluate the quality of intervention information available to parents and teachers. Assessing the availability and accuracy of this information will better position clinicians to provide guidance to parents and educators about evidence-based interventions. The study focused on: (1) categories of websites linked to dyslexia-related pins, (2) alignment of information with evidence-based practices, (3) presentational and accountability features of linked sites, (4) readability of linked sites, and (5) recommendations for consulting certified health professionals. Based on prior research examining online information related to mental health disorders (e.g., King et al., 2021; Kisely et al., 2003), it was predicted that alignment between Pinterest-linked intervention recommendations and evidence-based practices for dyslexia would be limited.

Method

Design

This study used a descriptive approach to exploring intervention information for dyslexia available on Pinterest-linked websites. The methodology was informed by previous research (e.g., Heymann, 2020; King et al., 2021; Kisely et al., 2003; Paige et al., 2015), particularly in pin selection and inclusion criteria.

Measures

Pro Forma

The pro forma, initially created by Kisely et al. (2003) to assess online health-related information, was modified for dyslexia by adding key elements of evidence-based intervention for reading difficulties following consultation with an expert in the field. The pro forma was systematically applied to evaluate four key elements of information: (1) Accountability, (2) Presentation, (3) Content Alignment with Evidence-Based Practice, and (4) Readability. Table 1 lists the items included in each section of the pro forma. Each website element was scored quantitatively (i.e., 1 or 0) by the first author using the criteria listed in the pro forma. The maximum possible pro forma score was 33, with higher scores indicating higher quality information and alignment with evidence-based practices.

Procedure

Pin and Site Selection

Data collection was conducted on a reformatted laptop using a newly created Pinterest profile to minimize confounding variables. The selection process involved two steps: (1) pin assessment and (2) evaluation of linked websites. To mimic typical user behavior, the analysis was limited to the first 20 pins from initial searches or until 100 pins were sampled. Search terms were selected based upon combinations of terms often used interchangeably to refer to an identical pattern of reading difficulties (i.e., dyslexia, reading disability, and learning disability in reading) and phrases related to addressing challenges (i.e., intervention, treatment, and management). The goal when developing search terms was for them to remain uncomplicated, thereby reflecting the most likely search strategy of a parent or teacher (see Kisely et al., 2003). Thus, no refinement was performed following the initial searches.
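As an illustration of how such combinations might be generated, the sketch below pairs each reading-difficulty term with each intervention phrase. The exact query strings, the word order, and the names used here are assumptions; the text lists only the component terms. Nine queries capped at 100 pins each would be consistent with the 900 pins reported in the Results.

```python
from itertools import product

# Component terms as listed in the text.
CONDITION_TERMS = ["dyslexia", "reading disability", "learning disability in reading"]
ACTION_TERMS = ["intervention", "treatment", "management"]

# Assumed cap per search term (taken from the sampling description).
PINS_PER_TERM = 100


def build_search_terms(conditions, actions):
    """Pair every condition term with every action phrase (assumed format)."""
    return [f"{condition} {action}" for condition, action in product(conditions, actions)]


search_terms = build_search_terms(CONDITION_TERMS, ACTION_TERMS)
max_pins = len(search_terms) * PINS_PER_TERM

for term in search_terms:
    print(term)
print(len(search_terms), max_pins)
```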

All linked web pages were analyzed unless they were duplicates, were paywalled, were not written in English, or lacked the specified keywords or terms related to interventions (e.g., treatment, management). For each identified pin, the first 20 suggested pins from Pinterest’s “More Like This” feature were sampled, adhering to the same inclusion criteria. Websites linked from the selected pins were then included in the analysis.

Inclusion/Exclusion Criteria

To be included, pins were required to meet four criteria: (1) have an active web link; (2) address interventions for dyslexia; (3) contain at least one of the following keywords or phrases: “learning disability,” “learning disability in reading,” “reading disability,” “specific disability in reading,” “dyslexia,” “developmental dyslexia,” “specific reading disorder,” “developmental learning disorder with impairment in reading,” “specific learning disorder affecting reading,” “struggling reader(s),” or “reading difficulties”; and (4) be written in English. A screenshot was taken of each included pin, and each pin was assigned a unique identifier.
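The four criteria can be expressed as a simple screening check, sketched below. The `Pin` record and its field names are hypothetical, and case-insensitive substring matching is an assumption; the study does not state how keyword matching was performed. Criteria 1, 2, and 4 are represented as reviewer judgments recorded as booleans.

```python
from dataclasses import dataclass
from typing import List

# Keywords as listed in criterion 3; "struggling reader" also matches the plural.
KEYWORDS = (
    "learning disability",
    "learning disability in reading",
    "reading disability",
    "specific disability in reading",
    "dyslexia",
    "developmental dyslexia",
    "specific reading disorder",
    "developmental learning disorder with impairment in reading",
    "specific learning disorder affecting reading",
    "struggling reader",
    "reading difficulties",
)


@dataclass
class Pin:
    """Hypothetical record of one sampled pin."""
    has_active_link: bool          # criterion 1 (reviewer judgment)
    addresses_interventions: bool  # criterion 2 (reviewer judgment)
    text: str                      # pin title/description used for criterion 3
    is_english: bool               # criterion 4 (reviewer judgment)


def matched_keywords(text: str) -> List[str]:
    """Return every listed keyword found in the text, ignoring case."""
    lowered = text.lower()
    return [keyword for keyword in KEYWORDS if keyword in lowered]


def meets_inclusion_criteria(pin: Pin) -> bool:
    """A pin is included only if all four criteria hold."""
    return (
        pin.has_active_link
        and pin.addresses_interventions
        and bool(matched_keywords(pin.text))
        and pin.is_english
    )


example = Pin(True, True, "Tips for Struggling Readers at home", True)
print(meets_inclusion_criteria(example), matched_keywords(example.text))
```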

Site Evaluation

Included sites were quantitatively coded using the pro forma (see Table 1). To assess the reliability of the coding of extracted data, a second coder evaluated 20 randomly selected websites from the sample using the pro forma (see Jeyaraman et al., 2020).

Results

Descriptive Statistics

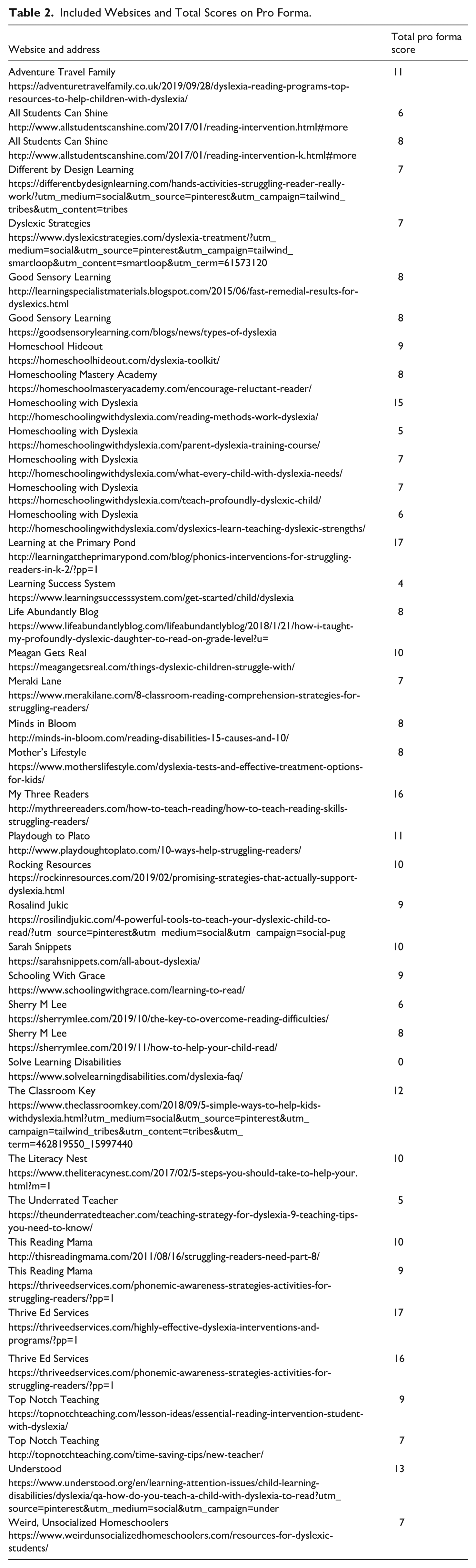

A total of 900 pins were sampled using non-probability quota sampling from the first 100 pins for each search term related to dyslexia. Of these, 489 lacked keywords, 226 did not address interventions, 62 were unrelated to dyslexia, and 94 were duplicates or had non-functional links. Review of the 29 remaining pins and their linked sites yielded 45 websites, four of which were excluded for containing minimal relevant information. Ultimately, 41 websites were coded (see Table 2).
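The screening counts reported above can be checked with a short tally. This is only an arithmetic sketch; the dictionary labels are shorthand introduced here, not categories named by the study.

```python
PINS_SAMPLED = 900

# Exclusion counts as reported in the text (labels are shorthand).
exclusions = {
    "lacked keywords": 489,
    "did not address interventions": 226,
    "unrelated to dyslexia": 62,
    "duplicate or non-functional link": 94,
}

pins_remaining = PINS_SAMPLED - sum(exclusions.values())

WEBSITES_IDENTIFIED = 45
MINIMAL_INFORMATION = 4
websites_coded = WEBSITES_IDENTIFIED - MINIMAL_INFORMATION

print(pins_remaining)   # 29
print(websites_coded)   # 41
```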

Included Websites and Total Scores on Pro Forma.

Pro Forma Scores

Scores for the 41 sites ranged from 0 to 17, with a mean of 8.92 (SD = 3.58). The lowest scores were observed in the Content and Alignment with Evidence-Based Practice subscale. Interrater reliability was substantial (Kappa = 0.612, p < .001), with 84.5% agreement across 20 websites (Gisev et al., 2013; Jeyaraman et al., 2020; Landis & Koch, 1977). This level of agreement is consistent with previous studies (e.g., King et al., 2021) and was deemed acceptable for this study. The data presented below represent the coding of the first author.
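For readers unfamiliar with the statistic, the sketch below shows how Cohen's kappa corrects raw percent agreement for chance agreement between two coders, together with the Landis and Koch (1977) descriptive bands. The function names and the example ratings are hypothetical; the study's item-level ratings are not reproduced here, and it is an assumption that agreement was computed over individual 1/0 item scores.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters' categorical codes of the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    # Observed proportion of agreement.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's marginal proportions.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    categories = counts_a.keys() | counts_b.keys()
    expected = sum(counts_a[c] * counts_b[c] for c in categories) / n ** 2
    if expected == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (observed - expected) / (1 - expected)


def landis_koch_label(kappa):
    """Descriptive band for kappa following Landis and Koch (1977)."""
    if kappa < 0:
        return "poor"
    if kappa <= 0.20:
        return "slight"
    if kappa <= 0.40:
        return "fair"
    if kappa <= 0.60:
        return "moderate"
    if kappa <= 0.80:
        return "substantial"
    return "almost perfect"


# Hypothetical 1/0 item codes from two coders (not the study's data).
coder_1 = [1, 1, 1, 0, 0, 0, 1, 0]
coder_2 = [1, 1, 0, 0, 0, 0, 1, 1]
kappa = cohen_kappa(coder_1, coder_2)
print(kappa, landis_koch_label(kappa))
print(landis_koch_label(0.612))  # the value reported above
```

In the hypothetical example, the coders agree on 6 of 8 items (75%), but half of that agreement is expected by chance, so kappa is 0.5. This illustrates why the reported 84.5% agreement corresponds to a lower kappa of 0.612.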

Accountability

The mean score on the accountability subscale was 3.66 (SD = 1.53) out of nine points. Most sites (93.5%) disclosed ownership and credited authors (82.9%). Few sites cited their sources (12.2%) or provided references (9.8%), though 65.9% disclosed sponsorship or financial affiliation with a commercial brand or company.

Presentation

The mean score on the presentation subscale was 2.78 (SD = 0.91) out of five points. Most sites were well laid out and included major elements such as headings (95.1%). Although 80.5% of sites contained hyperlinks, not all were functional, and 78% of pages featured advertisements.

Content and Alignment with Evidence-Based Practice

The mean score for alignment with evidence-based practices was 1.68 (SD = 2.56) out of a possible 17 points. Explicit instruction was the most frequently mentioned evidence-based practice but appeared on only 24.4% of sites. Systematic and intensive instructional approaches were mentioned even less frequently, with only 19.5% and 12.2% of sites, respectively, addressing these strategies. Scaffolding techniques to support learning were recommended on just 9.8% of sites.

Few sites discussed teaching letter-sound correspondence or phoneme blending (14.6%) and even fewer addressed word segmentation (12.2%). There was minimal mention of phonic analysis for decoding unfamiliar words (4.9%), and only a small fraction (4.9%) suggested teaching students to identify familiar parts in unknown words.

Readability

Flesch-Kincaid scores ranged from 5.0 to 14.1, with a mean of 7.62 (SD = 1.89). Most sites were linguistically accessible, with 66% written at a grade 8 level or below.

Recommendation for Follow-Up

Only 14.6% of sites recommended follow-up with a professional for further information on interventions for dyslexia, and recommendations varied widely, often addressing auditory or visual issues rather than interventions for dyslexia specifically.

Discussion

The sampled websites were generally well-organized, visually appealing, and easy to navigate, with most attributing authorship, disclosing site ownership, and including functioning hyperlinks. Unfortunately, most hyperlinks led to e-commerce pages rather than educational resources. Consistent with previous studies (see Diniz-Freitas et al., 2017; King et al., 2021; Kisely et al., 2003), few sites presented evidence-based intervention strategies for dyslexia. On average, the sampled sites met less than 10% of the evidence-based criteria, suggesting significant shortcomings in the quality of intervention information available to users. These findings highlight a critical lack of comprehensive, evidence-based information about dyslexia on Pinterest-linked websites, underscoring the need for better alignment between online intervention content and established educational practices.

Although multiple sites emphasized the importance of phonics instruction for struggling readers, few provided actionable guidance. Rather, they focused more on promoting multisensory instruction, reading games, or assistive technologies as methods of supporting struggling readers. Additionally, even when sites made valid recommendations for intervention (e.g., phonics instruction), they were often presented without clear guidance regarding implementation. This lack of guidance is significant because the passive dissemination of information is generally ineffective at altering behavior or translating into effective intervention (Ross et al., 2018).

Several sites endorsed unsubstantiated approaches, including dyslexic fonts, colored overlays, and dietary interventions, or approaches that do not yet have sufficient empirical support to be considered evidence-based, such as localized brain stimulation and brain integration therapy (see Turker & Hartwigsen, 2022). Neuromyths, such as teaching students based on their learning style or misconceptions attributing dyslexia to attention issues, auditory/visual impairments, and/or reversible conditions, further exacerbated the misinformation on display, despite significant evidence to the contrary (see Westby, 2019).

Overall, the misalignment of information with empirically validated interventions, specifically explicit and systematic phonics instruction and phonemic awareness training, highlights the need for more easily accessible evidence-based online resources from reliable sources.

Implications for School Psychology Practice

Given that the Internet is a key source of mental health information for parents (Kubb & Foran, 2020), and some studies suggest teachers frequently use platforms like Pinterest for professional development (Schroeder et al., 2019), the poor alignment of much of this information with evidence-based practices is disconcerting. As more people turn to social media as sources for health and mental health information, clinicians must be mindful of the low quality of intervention information for dyslexia found through Pinterest-linked sites and other social media sources. Awareness of the quality of information available to parents and educators is important to help school psychologists work collaboratively with these stakeholders for treatment planning and when recommending resources. Clinicians must be knowledgeable about their clients’ experiences and pre-existing beliefs about effective interventions in order to provide accurate information (King et al., 2021). Parents and educators are likely to do their own information-searching; therefore, being knowledgeable about the quality of online information would place psychologists in a better position to minimize the potential harm related to clients accessing misinformation online and to direct them away from social media sites such as Pinterest and toward higher-quality and more reliable sources (e.g., professional organization websites).

Results of this study emphasize the importance of recognizing and evaluating the source of and motivations behind information posted online. Many of the sites featured authors lacking formal qualifications or information with no listed sources, highlighting the importance of school psychologists ensuring that their clients possess the skills to critically evaluate online information. Additionally, many sites featured sponsored content or direct financial incentives to endorse certain products or programs. Since sponsorships typically present a financial motive for authors to present products or information in a positive light, school psychologists must be mindful of how such considerations may influence the recommendations sites provide and the information they endorse.

Limitations

Pinterest’s search results are influenced by one’s search history, the pin’s poster, and the relevancy of the pin to the search criteria. Thus, other users’ search results will likely be affected by factors that are difficult to account for in a research setting. Additionally, this study and its findings represent a snapshot of the current information landscape regarding intervention information for dyslexia within Pinterest-linked sites and cannot account for changes and updates that may influence how Pinterest returns search results or updates to information curation online as a whole. Relatedly, sites that lacked keywords were not included in the analysis, meaning that it is possible that sites providing reliable evidence-based information about dyslexia are available to Pinterest users but not included in the current review. Finally, despite use of a standardized pro forma, there was a degree of subjectivity to how the data were scored, as evidenced by the inter-rater reliability value; however, inter-rater reliability and percent agreement between raters were consistent with other findings in similar work (see King et al., 2021; Kisely et al., 2003).

Conclusion

This study found that the quality of information about intervention for reading disorders and difficulties on Pinterest-linked sites is low and poorly aligned with current evidence-based practices. Despite the potential for Pinterest as an educational resource for dyslexia intervention, there remains considerable room for improvements that will ultimately benefit users. The insights gained here can enhance psychologists’ and educators’ understanding of the quality of information available through Pinterest so they can be better prepared to ensure optimal outcomes for children affected by dyslexia.

Footnotes

Acknowledgements

The authors gratefully acknowledge Dr. Stephen Kisely for allowing the use and modification of the pro forma used in this study.

Correction (December 2025):

Article type updated to read as Special Issue: Graduate Student Research Article, instead of Brief Article

Ethical Considerations

The current work was not required to undergo UREB review, as no human participants were involved. No informed consent was required.

Author Contributions

Green: 70% (Conceptualization, data extraction/coding/analysis/interpretation, manuscript preparation); Ritchie: 10% (Support with data analysis, committee membership); Heymann: 5% (Support with conceptualization, committee membership); McCay: 5% (Inter-rater reliability coding); King: 10% (Supervision, manuscript preparation).

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by a Canada Graduate Scholarship – Masters (CGS – M) from the Social Sciences and Humanities Research Council of Canada (SSHRC) awarded to the first author.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Data set available upon request.