Abstract

This article explores the limitations of traditional maximum performance tests in psychological assessments within educational settings, highlighting the need for efficiency assessments to better understand students’ real-world capabilities. While maximum performance tests like IQ and achievement tests reveal peak abilities under optimal conditions, they often fail to reflect students’ proficiency and speed, crucial for classroom success. Efficiency assessments, such as Curriculum-Based Measurements (CBMs), provide valuable insights by evaluating both accuracy and speed, offering a more practical measure of skill mastery. By integrating these assessments, psychologists can more accurately identify areas of need, inform targeted interventions, and monitor progress, ensuring tailored support for each student’s unique learning profile. This comprehensive approach enhances academic development, ensuring that students receive the necessary support to thrive in educational settings.

One of the primary purposes of a psychological assessment in an educational setting is to understand a student’s learning profile, which in turn enables the recommendation of appropriate interventions. Psychologists evaluate students’ cognitive abilities and their impact on academic skills, as well as assess social-emotional functioning, self-perception, and mental health status. The findings of the assessment then inform the recommendations for educators, families, and the client on how to best support the student. Traditionally, psychologists working in educational settings have employed maximum performance tests as the primary means of assessment. Maximum performance tests can be helpful tools as part of the assessment process. However, they neglect to consider the efficiency and real-world applicability of students’ capabilities, particularly speed and proficiency, which are crucial skills for classroom success.

Maximum performance tests evaluate an individual’s highest level of ability or performance under optimal conditions (Sattler, 2014). This type of testing aims to determine the peak capabilities of a person in specific areas such as cognitive functioning, academic skills, or physical abilities. Maximum performance testing is characterized by its standardized nature and reliance on normative comparisons to evaluate a student’s abilities (Kaufman & Kaufman, 2004). Such tests are administered under strict, uniform procedures to ensure consistency and fairness, which is essential for maintaining the validity and reliability of the test results (Wechsler, 2009). These tests are often used to identify strengths and potential areas for development, and can inform educational planning and interventions. For example, maximum performance tests such as IQ tests aim to provide insight into a student’s highest level of cognitive ability.

Achievement tests are common forms of maximum performance testing in educational settings (Sattler, 2014). In a maximum performance spelling test, for example, a student might be asked to spell a list of words that become progressively more difficult. When the student has misspelled a set number of words in a row, the test is terminated. The number of correctly spelled words is then scored, indicating the student’s current maximum spelling ability, which is then compared to the normative sample. This allows testers to determine if the student is in the average, below average, or above average range for someone their age, which in turn is used to inform further educational planning and interventions (Bloom, 1984; Fuchs & Fuchs, 1999).

While such testing has benefits in identifying a student’s peak capabilities and potential, maximum performance testing does not always accurately reflect real-life performance. Consider, for instance, the following scores of a student with reading difficulties: on the Wechsler Individual Achievement Test (WIAT) subtests, the student has a standard score of 78 on pseudoword reading and 75 on word reading, but achieves a standard score of 92 on reading comprehension. This discrepancy can create a misunderstanding of the student’s actual reading abilities, as the test results may suggest that their comprehension skills are age-appropriate and sufficient for understanding reading material within a typical classroom setting, when in reality, they are not. This could lead to providing insufficient recommendations around reading or simply providing the student with extra time to read. What this manner of testing misses is that a student with this skill profile, when engaged in a reading comprehension activity, will often trade time for accuracy. They may, for example, extend administration time by repeatedly rereading passages to locate the answers to each question. Consequently, the reading comprehension score may not accurately represent real-world classroom performance, where students do not have the luxury of spending hours rereading the same passages to answer questions. Alternatively, because reading is so cognitively taxing, they may choose to simply opt out of reading in the classroom. A student needs an efficient reading system that facilitates mastery of foundational reading skills. Mastery of foundational skills leads to the ability to quickly and accurately read words so students can acquire and apply knowledge from the page. This efficient reading and comprehension process is crucial for learning and allows students to demonstrate understanding through timely responses to questions.

To adequately assess mastery of skills, assessment tools must take into consideration both accuracy and completion time. It is, of course, crucial to ensure the student is accurate in their responses, but it can be equally important to assess whether the student has the ability to perform the task quickly. Taken together, accuracy and speed demonstrate that the skill is well-consolidated or mastered.

For a student who decodes words accurately but lacks sight recognition, the cognitive load is higher due to the decoding process (Cunningham et al., 2011). This increased cognitive load reduces the resources available for comprehension, resulting in a need to reread passages multiple times to fully understand the text. Each rereading incrementally adds to the student’s overall understanding. In contrast, a student with an efficient reading system recognizes most words on sight, resulting in a lower cognitive load for word recognition and allowing for more cognitive resources to be allocated toward comprehension.

The measurement of a student’s skill development is captured in the scientific framework of the instructional hierarchy (Deno & Mirkin, 1977), which includes several stages through which learners progress. Initially, learners experience frustration, characterized by struggle, errors, and increased comprehension time when first encountering a new skill. During the instruction phase, learners receive guided practice and teaching to help overcome these challenges, which leads to high accuracy and increasing speed. Finally, in the mastery stage, learners achieve a high level of proficiency and automaticity, demonstrating their ability to perform the skill quickly and accurately. Ideally, this progression ensures that learners not only acquire new skills but also become fluent and adaptable in their application.
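The three stages described above can be sketched as a simple decision rule that looks at accuracy first and speed second. The sketch below is illustrative only: the function name `classify_stage` and both criteria are hypothetical placeholders, not published norms, and a practitioner would substitute the cut points of the specific measure being used.

```python
def classify_stage(accuracy, rate, accuracy_criterion, rate_criterion):
    """Return 'frustration', 'instruction', or 'mastery'.

    accuracy: proportion of responses that were correct (0.0 to 1.0)
    rate: correct responses per minute
    accuracy_criterion, rate_criterion: hypothetical thresholds supplied
        by the caller; they are assumptions, not values from the article.
    """
    # Frequent errors indicate the learner is still struggling with the skill.
    if accuracy < accuracy_criterion:
        return "frustration"
    # Accurate but slow: the skill is being acquired and speed is still building.
    if rate < rate_criterion:
        return "instruction"
    # Accurate and fast: the skill is performed with automaticity.
    return "mastery"


# Hypothetical criteria: 95% accuracy and 60 correct responses per minute.
print(classify_stage(0.70, 20, 0.95, 60))  # frustration
print(classify_stage(0.97, 35, 0.95, 60))  # instruction
print(classify_stage(0.98, 72, 0.95, 60))  # mastery
```

Checking accuracy before speed reflects the idea that a fast but inaccurate performance does not show that a skill has been consolidated.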

Evaluating accuracy under timed conditions provides valuable information to students, parents, teachers, and psychologists about whether a specific academic skill has reached a level of mastery that can support higher-level learning. Maximum performance testing does not necessarily provide this information. A student could score high on a maximum performance test yet still be in the frustration stage of the instructional hierarchy. In the reading comprehension example above, the student with weak reading skills scores in the average range on a maximum performance reading comprehension test. While the student may achieve a “typical” reading comprehension score, they may still struggle to acquire necessary knowledge within classroom time constraints. Therefore, integrating efficiency or proficiency tests into psychological assessments is essential to obtain a more accurate understanding of a student’s functional capabilities in real-world settings (Hosp et al., 2016).

Efficiency testing is not new to those working in the field; school psychologists have several efficiency assessments as part of their repertoire. Tests such as the Test of Word Reading Efficiency (TOWRE; Torgesen et al., 2012) provide information on a student’s age-appropriate oral decoding and word reading skills. For reading comprehension, assessments such as the Gray Oral Reading Test (GORT; Wiederholt & Bryant, 2012) offer insights into a student’s reading efficiency, fluency, and comprehension. Unlike reading comprehension measures where the passages remain in front of the student while answering questions, the GORT requires the passage to be removed as the student answers factual and inferential questions. This method assesses how efficiently the reading system has developed by evaluating the retention of the information read. By not having the passage in front of the student, the GORT evaluation focuses on how well the student comprehends and retains the material.

Students with underdeveloped foundational reading skills (in the frustration stage) will score lower on the GORT comprehension scale because they concentrate more on word-level components rather than overall comprehension (Denton et al., 2022). Additionally, students with ADHD might also score lower, as they may read proficiently but fail to attend to or retain the information (Ghelani et al., 2004). These assessments are crucial in identifying specific areas where students may need additional support, enabling targeted interventions to improve reading skills.

Curriculum-Based Measurement (CBM) is another example of an efficiency test. It is the most commonly used form of academic screening because it ties directly to academic skill development, uses standardized assessment tools for easy and reliable comparison, and is widely available free of charge (Stecker et al., 2005). CBM tools are designed to be easy and quick to administer, typically taking only 1 to 3 min. As they are norm-referenced, they immediately indicate whether a specific academic skill is developing typically. This immediate feedback is valuable for determining if the targeted academic skill needs further development in order for the student to fully engage in regular classroom activities.

An example of how CBMs are used effectively in psycho-educational assessments is the Oral Reading Fluency (ORF) test. This test is easy and quick to administer. During the assessment, a student is asked to read a grade-level passage aloud for 1 min, and the number of words read correctly is recorded. The correct words read are then compared to a norms chart that indicates cut points for typically developing and atypically developing reading skills, providing information on whether the student’s reading skills are developing as expected. This type of assessment is particularly useful because it focuses on practical, real-time performance rather than maximum potential under optimal conditions, providing a more accurate picture of a student’s everyday reading abilities than maximum performance testing alone (Hosp et al., 2016).

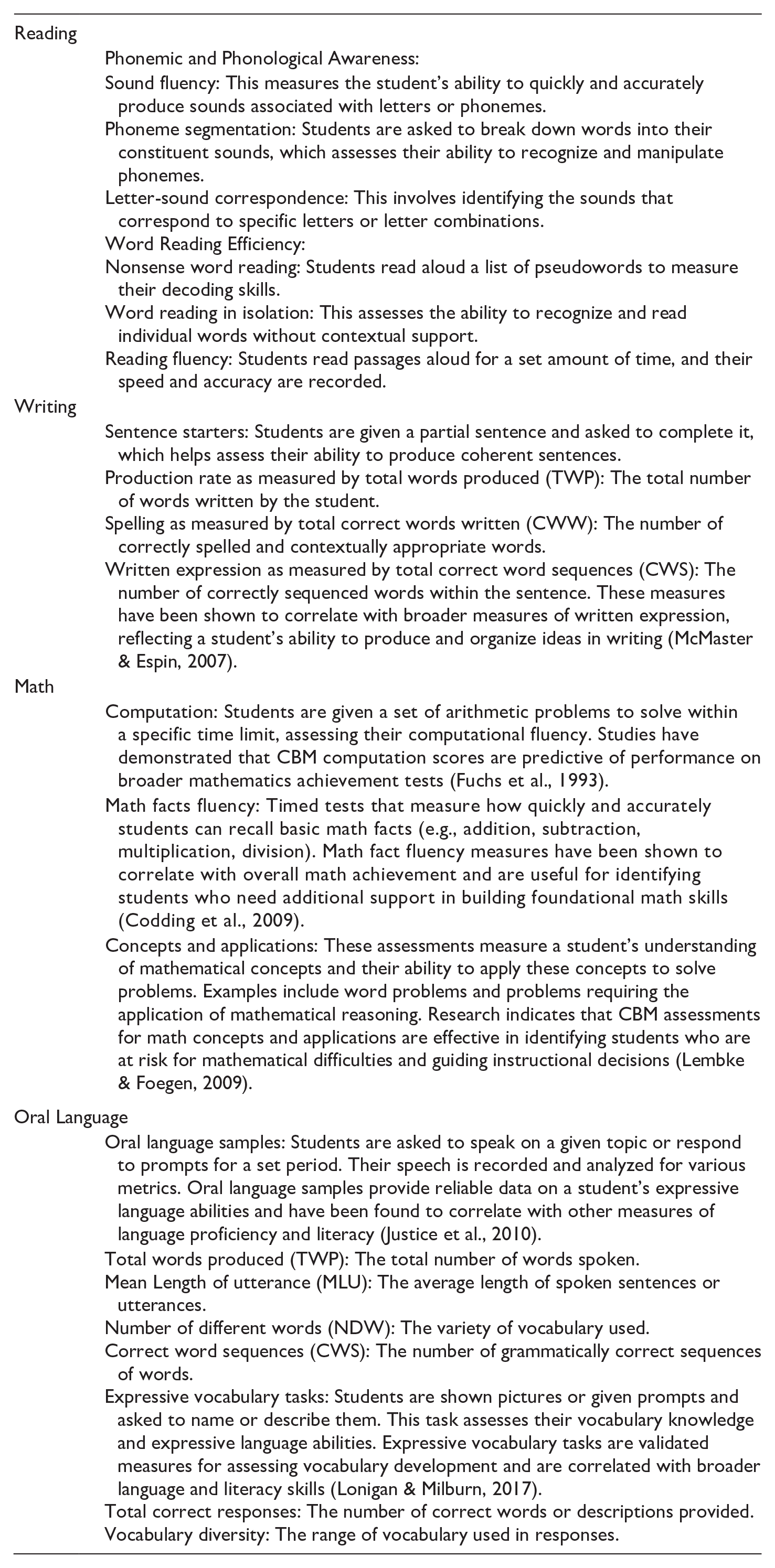
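The scoring of the Oral Reading Fluency test described above reduces to a small calculation: count the words read correctly, express them per minute, and compare the result to a cut point. The following is a minimal sketch under stated assumptions. The function names are hypothetical, and the cut point of 100 used in the example is a made-up placeholder rather than a value from any published norms chart.

```python
def words_correct_per_minute(words_attempted, errors, seconds=60):
    """Convert a timed reading sample into words read correctly per minute."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    words_correct = words_attempted - errors
    return words_correct * 60 / seconds


def below_cut_point(wcpm, cut_point):
    """True when the score falls under the cut point for the student's grade.

    cut_point must come from the norms chart of the measure being used;
    no real norms are encoded here.
    """
    return wcpm < cut_point


# Hypothetical example: 87 words attempted with 6 errors in a 1-min reading.
score = words_correct_per_minute(words_attempted=87, errors=6)
print(score)                        # 81.0
print(below_cut_point(score, 100))  # True (100 is a placeholder cut point)
```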

Reading, writing, and math are not singular skills; each comprises multiple interconnected skills. Therefore, there are specific CBMs designed to assess each of these individual abilities. It is not necessary to test each skill during every reassessment; instead, testing should be targeted to the developmental needs of the individual. As discussed below, specific CBMs can be used to determine where a particular skill deficit lies, allowing for more focused and effective interventions.

CBMs can be used as quick screening tools within a psychological assessment to help practitioners identify which of a student’s academic skills are in the frustration, instruction, or mastery stage. For example, when a grade 4 student comes in for testing, the clinician can administer an Oral Reading Fluency test (2 min), a Sentence Starter task (5 min), a Math Computation test (3 min), and an Oral Language Sample (5 min). Within 15 min of testing time, the clinician will have a clear understanding of which skills are developing typically and which skills need further investigation.

For example, if a grade 5 student scores below the developmental cut-off point on an Oral Reading Fluency (ORF) test, it indicates that the student has not mastered reading fluency for their age. The next step is to determine the underlying cause. By using maximum performance tests such as the WIAT (Wechsler Individual Achievement Test) or KTEA (Kaufman Test of Educational Achievement), the clinician can administer subtests such as pseudoword reading or word reading to isolate which specific reading skills are underdeveloped. Follow-up tests measuring phonological and/or orthographic processing can further identify any underlying issues.

Alternatively, rather than using maximum performance testing, the psychologist could assess downward through the academic skills to determine which skills have been mastered and which are in the frustration stage. For example, if a grade 4 student scores below the cut-off point on a grade 4 ORF test, a grade 2 ORF test could then be administered. If they score below the grade 2 ORF end-of-year cut-off point, a grade 1 Nonsense Word Fluency test could be administered, and so on. The psychologist would continue administering tests downward until identifying the skill level that has been developed. From here, they could select the skill just above this level for targeted remedial intervention.
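The downward assessment procedure above can be sketched as a loop over an ordered list of probes. This is an illustration under stated assumptions, not a prescribed protocol: the function `assess_downward`, the `administer` callback, and every cut point and score in the example are hypothetical.

```python
def assess_downward(probes, administer):
    """Step down through probes until one is passed.

    probes: list of (name, cut_point) pairs ordered from the student's
        current grade level downward. Cut points are supplied by the caller.
    administer: callable that takes a probe name and returns the score.

    Returns (developed_skill, intervention_target):
        developed_skill is the first probe at or above its cut point;
        intervention_target is the probe just above it, which is the last
        one the student scored below.
    If no probe is passed, developed_skill is None and a still lower
    probe would be needed.
    """
    intervention_target = None
    for name, cut_point in probes:
        if administer(name) >= cut_point:
            return name, intervention_target
        intervention_target = name
    return None, intervention_target


# Hypothetical cut points and scores, for illustration only.
probes = [
    ("Grade 4 ORF", 100),
    ("Grade 2 ORF", 80),
    ("Grade 1 Nonsense Word Fluency", 50),
]
scores = {
    "Grade 4 ORF": 62,
    "Grade 2 ORF": 71,
    "Grade 1 Nonsense Word Fluency": 58,
}
developed, target = assess_downward(probes, scores.get)
print(developed)  # Grade 1 Nonsense Word Fluency
print(target)     # Grade 2 ORF
```

In this made-up case the student meets the grade 1 cut point, so the grade 2 ORF level is the skill just above the developed level and becomes the intervention target.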

To assist in using CBMs (Curriculum-Based Measurements) for intervention decision-making, resources such as the Road to Reading Infographic can be utilized. This resource provides a structured approach to identifying and addressing reading deficits (https://www.ldatschool.ca/road-to-reading-infographic).

Similar techniques can be used for writing assessments. Instead of waiting 10 to 15 min for a writing sample, the clinician can administer a Sentence Starter task to quickly gather a meaningful sample of what the student can produce in 3 min. By analyzing the three measures used in the Sentence Starter task—Total Words Produced, Correct Words Written, and Correct Word Sequences—the clinician can determine whether the student’s ability to express ideas on paper quickly and accurately is developmentally appropriate. If the Correct Words Written measure is below the developmental cut point, further exploration of the student’s spelling skills is required. If the Total Words Produced measure is below the cut point, the clinician should then investigate the student’s graphomotor abilities, idea generation, or overall oral language skills.
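The two follow-up rules stated above for the Sentence Starter task can be written as a short lookup. This is a sketch only: the function name, dictionary keys, and all numbers in the example are hypothetical. Correct Word Sequences is left out of the rules because the text does not specify a follow-up for it.

```python
def sentence_starter_follow_up(scores, cut_points):
    """List the areas to explore further, based on the two rules in the text.

    scores and cut_points are dictionaries keyed by measure name.
    Cut points must come from the norms of the measure being used.
    """
    follow_up = []
    if scores["correct_words_written"] < cut_points["correct_words_written"]:
        follow_up.append("spelling skills")
    if scores["total_words_produced"] < cut_points["total_words_produced"]:
        follow_up.append(
            "graphomotor abilities, idea generation, or oral language skills"
        )
    return follow_up


# Hypothetical 3-min sample and placeholder cut points.
scores = {"total_words_produced": 31, "correct_words_written": 22}
cut_points = {"total_words_produced": 30, "correct_words_written": 27}
print(sentence_starter_follow_up(scores, cut_points))  # ['spelling skills']
```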

In addition to identifying a student’s instructional stage, CBMs can also be used to monitor progress. These measures are designed with multiple equivalent forms of the same assessment, allowing for frequent and consistent administration to accurately track student development over time. Psychologists and teachers can administer these tests weekly or biweekly to collect data on student progress. This frequent data collection enables educators to monitor the effectiveness of interventions and make timely adjustments if students are not making sufficient gains. This dynamic approach ensures that interventions are responsive and tailored to meet the evolving needs of each student.
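One way to judge whether a student is making sufficient gains is to estimate the average weekly change across repeated probes and compare it to an expected rate of growth. The sketch below assumes an ordinary least-squares trend line over equally spaced weekly scores; that choice, the function names, and the expected gain in the example are assumptions made for illustration, not a method specified in the text.

```python
def weekly_gain(scores):
    """Least-squares slope of scores against week index (0, 1, 2, ...)."""
    n = len(scores)
    if n < 2:
        raise ValueError("at least two data points are needed")
    mean_week = (n - 1) / 2
    mean_score = sum(scores) / n
    numerator = sum(
        (week - mean_week) * (score - mean_score)
        for week, score in enumerate(scores)
    )
    denominator = sum((week - mean_week) ** 2 for week in range(n))
    return numerator / denominator


def making_sufficient_gains(scores, expected_weekly_gain):
    """True when the observed trend meets or exceeds the expected gain."""
    return weekly_gain(scores) >= expected_weekly_gain


# Hypothetical weekly ORF scores (words read correctly per minute).
scores = [40, 42, 44, 46]
print(weekly_gain(scores))                   # 2.0
print(making_sufficient_gains(scores, 1.5))  # True (1.5 is a placeholder goal)
```

A trend line is used here rather than a comparison of the first and last scores because a single unusually high or low probe would otherwise dominate the decision.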

In conclusion, integrating efficiency and proficiency assessments alongside traditional maximum performance tests provides a more comprehensive understanding of a student’s learning capabilities. While maximum performance tests effectively identify peak abilities under optimal conditions, they often fail to capture the practical, real-world performance required for classroom success. Efficiency assessments such as Curriculum-Based Measurements (CBMs) offer valuable insights into a student’s functional abilities by evaluating both accuracy and speed, which are crucial for mastering foundational skills. By utilizing a combination of these assessments, psychologists can better identify specific areas of need, inform targeted interventions, and monitor progress over time. This holistic approach ensures that educational support is tailored to each student’s unique learning profile, ultimately enhancing their academic development and success.

Footnotes

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.