Abstract

Many countries rely on statements issued by professional associations to delimit the scope of school psychological practice. It is, however, not always clear to what extent these statements match current practices and school psychologists’ self-perception of their professional role, as empirical data is often unavailable or limited. This study aims to address this gap by collecting empirical data on the scope of school psychological practice in Germany. In a mixed-methods study, we first applied the Delphi technique to develop a questionnaire in collaboration with school psychological experts from different federal states of Germany. Second, we collected information on federal policies through semi-structured interviews with regional experts. Third, we invited a representative sample of school psychologists to complete the questionnaire developed through the Delphi technique. In this first report, we focus on the Delphi procedure and the overall results of the survey, which describe the scope of school psychological practice in Germany at a country-wide level. These findings provide a detailed characterization of this broad and heterogeneous scope and offer an evidence base for future research and political decision-making.

The broad range of services provided by school psychologists in many countries often makes it difficult to delimit the scope of activities for which school psychologists claim to be responsible. From the perspective of social identity theory (Hogg & Terry, 2000; Tajfel & Turner, 1986), delimitations of boundaries “give stability to the social identity of members and legitimacy to the entity as a ‘social unit with a life of its own’” (Aldrich, 1999, p. 112) (Montgomery & Oliver, 2007, p. 678). Defining the scope of a profession, therefore, has the potential to strengthen the perception school psychologists have of their professional role, a concept often referred to by the term professional identity (Brott & Myers, 1999). The way practitioners perceive their own professional role results from a “process of continual interplay between structural and attitudinal changes” (Brott & Myers, 1999, p. 339). Ultimately, professional identity “serves as a frame of reference from which one carries out a professional role, makes significant professional decisions, and develops as a professional” (Brott & Myers, 1999, p. 339). On top of the impact clear boundaries may have on professional identity, delimiting the scope of school psychological practice also makes it possible for service users, policymakers, and other professions to recognize unique knowledge domains and adjust their expectations accordingly (Montgomery & Oliver, 2007).

With the aim of strengthening professional identity and defining standards of practice, professional associations in many countries have issued statements or guidelines delimiting the scope of school psychological practice (e.g., Canada: Professional Practice Guidelines for School Psychologists in Canada—Canadian Psychological Association, 2007; Germany: School Psychology in Germany—Professional Profile, Professional Association of German Psychologists, BDP Sektion Schulpsychologie, 2015; USA: The Professional Standards of the National Association of School Psychologists—NASP, 2020). Based on extensive collaborative discussions among leading members of the professional community, these documents reflect shared representations of the responsibilities practitioners are expected to fulfill.

There have, however, also been several attempts to delimit the scope of school psychological practice by collecting empirical data on the current practices school psychologists engage in and on how they perceive their professional role (e.g., Coelho et al., 2016; Corkum et al., 2007; Jimerson et al., 2004, 2006, 2008, 2009, 2010; Jordan et al., 2009; Trombetta et al., 2008). These contributions complement professional statements and guidelines by providing an evidence base of what “real life” school psychological practice looks like, in contrast to agreements on the responsibilities that “should” be fulfilled by school psychologists. A combined analysis of both sources of information opens new opportunities to target efforts at strengthening underdeveloped areas and in this way move the field forward.

In this study, we aimed to contribute to the delimitation of the scope of school psychological practice by collecting empirical data on the professional practice of school psychologists working in Germany. We acknowledge that our study might be especially interesting to readers attached to the school psychology community in Germany. However, we believe that the questions we aimed to investigate, as well as the methods we applied, may be informative to a broader international audience and may be replicated in other countries to further define the scope of school psychological practice. Therefore, we conceptualize this report as an opportunity to communicate the rationale, methodology and findings of a collaborative research project that aims to bring researchers and practitioners together with the purpose of delimiting the scope of school psychological practice.

To the best of our knowledge, so far there has been only one attempt, by Jimerson et al. (2006), to collect empirical data on the scope of school psychological services in Germany. In the context of the International School Psychology Association, in 2003 the International School Psychology Survey was administered to a sample of 45 school psychologists in Germany, as well as in other countries. While this study represented a first step to document what “real life” school psychological practice in Germany looked like, there are several limitations that make it difficult to estimate the validity of the evidence that was obtained. For instance, only 45 of the approximately 1,050 (4%) school psychologists who were working in Germany at the time of the study completed the survey (Jimerson et al., 2006). Furthermore, there is no information on the federal states of Germany in which participants were working as school psychologists. Each of the 16 federal states has its own autonomous educational system and school regulations, which are likely to play an important role in defining school psychological practice.

A final limitation of the available empirical data on the scope of school psychological practice in Germany refers to the validity of the questionnaire items used to collect information from participants. As the International School Psychology Survey was originally developed for the U.S. context and then translated and adapted to be used in other countries, it is difficult to estimate to what extent participants understood the terms used to refer to different types of services in the intended way. This limitation was also pointed out by Jimerson et al. (2009) when using the International School Psychology Survey with practitioners in New Zealand: “it is likely that educational psychologists’ efforts to fit reports of their practice into the categories supplied would mean that the reports were not entirely valid” (Jimerson et al., 2009, p. 450).

To address this limitation, our study aimed to engage in collaborative efforts with an expert panel of experienced school psychologists from the 16 federal states of Germany to reach consensus on a set of valid questionnaire items to explore different aspects of school psychological practice in Germany. More specifically, we aimed to collect empirical data on five domains of professional practice addressed by the national statement issued by the Department of School Psychology of the Professional Association of German Psychologists (BDP Sektion Schulpsychologie, 2015): (a) demographics, (b) provided services, (c) ethical principles, (d) initial training and professional development and (e) quality assurance. For further information, we provide a translated version of the national statement on the Open Science Framework (OSF) project page for this study.

The Present Study

In sum, this study aimed to explore the scope of school psychological practice in Germany by addressing the following research question: What is the range of responses provided by school psychologists in Germany on the following five domains of their professional practice: (a) their own demographic information, (b) services they provide, (c) ethical principles, (d) initial training and professional development and (e) quality assurance of services?

Methods

This study was conceived as a registered report following guidelines by the Canadian Journal of School Psychology (see Shaw et al., 2019 for a detailed explanation). The introduction, methods and data analysis plan underwent peer review and received an in-principle acceptance letter before data collection began. This so-called “Stage I” manuscript was made public on OSF on August 18th, 2020. Once we completed data collection and analysis, we submitted the final version of this manuscript (i.e., “Stage II”) for peer review. The introduction, methods, and data analysis sections of the Stage I and II manuscripts are almost identical, with minor adjustments concerning verb tense (i.e., future tense converted to past tense, as plans have now been implemented) and formatting mistakes missed at Stage I (e.g., APA references). In the methods and data analysis plan sections, we have added a subsection called “deviations from pre-registration” to transparently communicate changes introduced after Stage I. Furthermore, in agreement with the editor of the Canadian Journal of School Psychology, the final Stage II report is split into two manuscripts to make the information more digestible for readers. In the present manuscript (i.e., Part 1), we address our first pre-registered research question, while a second manuscript (i.e., Part 2) covers information on two additional pre-registered research questions.

To address the above-mentioned research question, we combined two methodological approaches: (1) an online Delphi investigation with school psychology representatives from different German federal states to design a questionnaire on the scope of school psychological practice in Germany and (2) the administration of this online questionnaire targeted at all school psychologists in Germany. In the following sections we provide details on each of the above-mentioned methodological steps.

Delphi Technique

We followed and slightly adapted the procedure described by Bishop et al. (2016). The Delphi technique is a consensus building method to reach agreement between a group of experts on a specific topic (Hasson et al., 2000). It consists of a series of consecutive cycles in which a panel of experts provides ratings and feedback on a set of statements. This information is then fed back to the entire panel to influence each member’s judgment and increase the chances of reaching a higher consensus in the next cycle of ratings and feedback. Results of each cycle are used to modify statements and move the group toward increasing consensus. The anonymity of the process ensures that “everyone gets a chance to have their views taken into account, without senior individuals or forceful personalities dominating” (Bishop et al., 2016, p. 3).

Participants

To ensure a diverse panel of experts in school psychological practice in Germany, we sent e-mail invitations to the 23 representatives of the Department of School Psychology of the National Association of Psychology of Germany listed on the institution’s website. Further experts recommended by these representatives or personally identified as experts by the authors of this report were also invited. Following Okoli and Pawlowski (2004), we aimed to limit the number of panel members to a maximum of 40 participants. In addition, as a minimum criterion to proceed with this study, we intended to make sure that representatives from at least 10 of the 16 federal states in Germany were involved. In order to provide background information on the expert panel in the final report of this study, we collected the following information on participants’ professional background in the first round of the Delphi technique:

• Years of experience in providing school psychological services in Germany

• Federal state of Germany they were currently working in

• Years of experience in providing school psychological services in the specific federal state of Germany they were currently working in.

Materials and Procedure

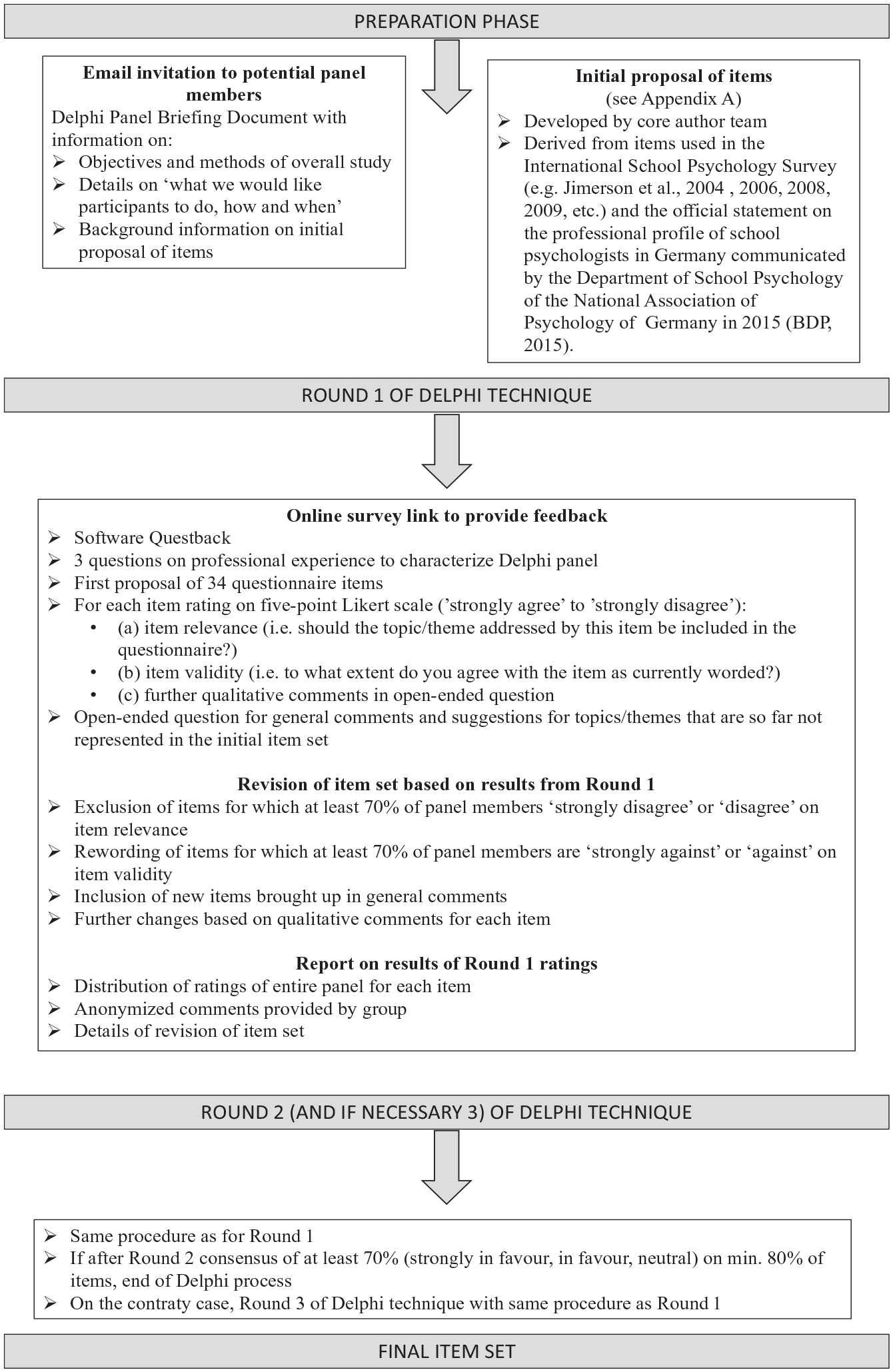

As a first step, we obtained approval from the Ethics Committee of the Department of Psychology of the Goethe University Frankfurt am Main in Germany for panel members to give written consent for their ratings to be used to derive a consensus questionnaire item set. Furthermore, in line with the procedure used by Bishop et al. (2016), we followed the methodological steps and used the materials detailed in Figure 1.

Procedure of Delphi technique.

The materials mentioned in Figure 1 are available on the OSF project page for this study.

Deviations From Pre-Registration

At Stage I, we explicitly stated in Figure 1 that “if after Round 2 consensus of at least 70% (strongly in favor, in favor, neutral) on min. 80% of items” (see Figure 1) was reached, this would lead to the end of the Delphi process. However, we omitted explicit information on the criteria to decide if sufficient consensus was reached after Round 1 or if Round 2 was necessary. We noticed this after data for Round 1 had been collected and decided to apply the same criteria after Round 1, as prespecified for the time-point after Round 2.
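The stopping rule described above can be written down as a short check. The following is only a minimal sketch, not the authors' R code: the function names, the data layout (a dictionary mapping each item to its list of panel ratings) and the rating labels as strings are assumptions made for illustration.

```python
from typing import Dict, List

# Ratings counted as agreement under the stated criterion.
# The exact string labels are an assumed encoding of the Likert options.
AGREEMENT = {"strongly in favor", "in favor", "neutral"}


def item_consensus(ratings: List[str]) -> float:
    """Share of panel ratings for one item that fall into the agreement categories."""
    if not ratings:
        raise ValueError("item has no ratings")
    return sum(r in AGREEMENT for r in ratings) / len(ratings)


def consensus_reached(
    panel: Dict[str, List[str]],
    item_threshold: float = 0.70,   # at least 70% agreement per item
    share_of_items: float = 0.80,   # on at least 80% of items
) -> bool:
    """Return True if enough items meet the per-item agreement threshold."""
    passing = sum(item_consensus(r) >= item_threshold for r in panel.values())
    return passing / len(panel) >= share_of_items
```

Applying the same function after Round 1 and after Round 2 mirrors the decision to use identical criteria at both time points.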

Survey

Participants

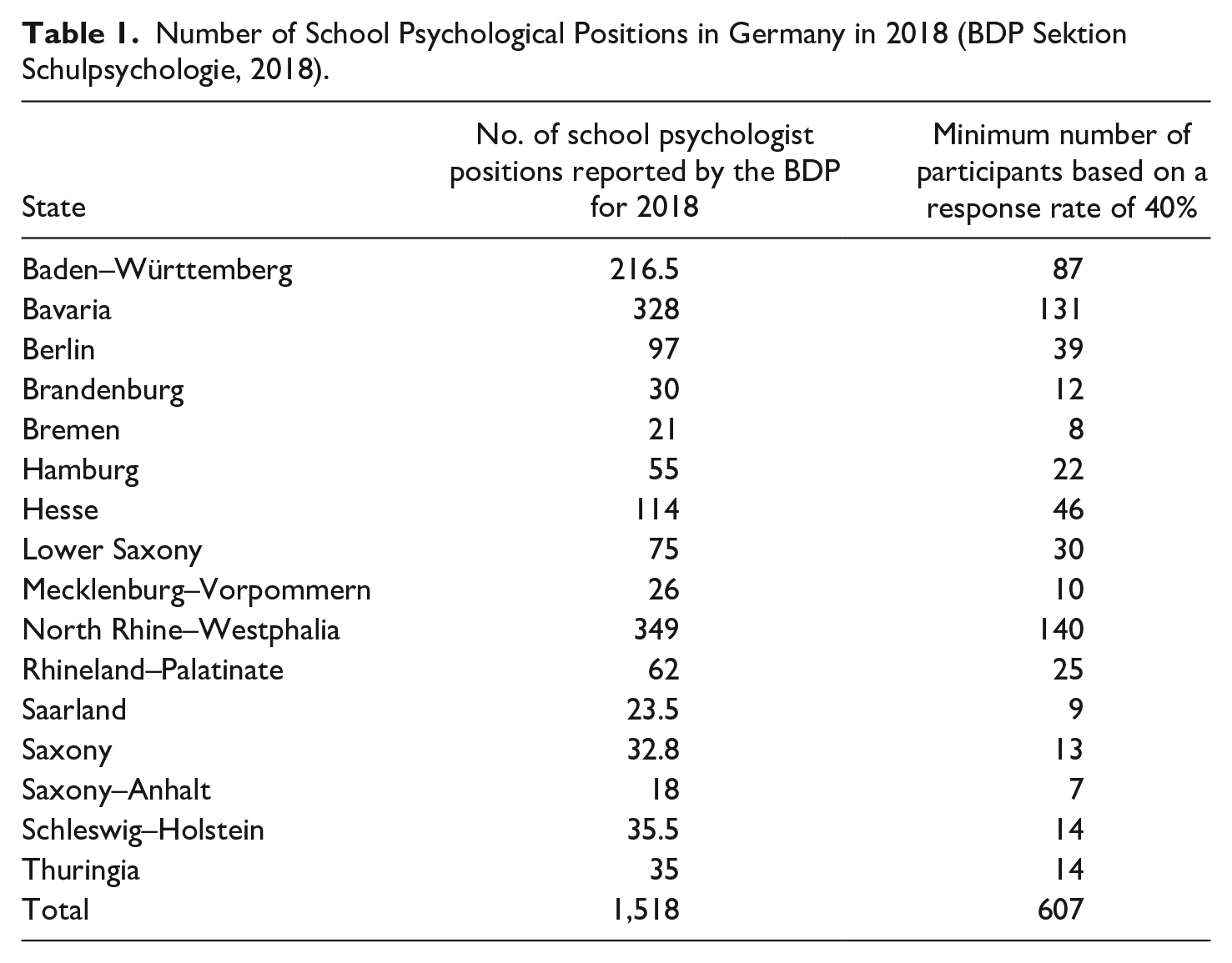

We invited all school psychologists working in Germany to complete an online anonymized survey presented through the online software Questback. The Department of School Psychology of the National Association of Psychology of Germany reports the information presented in Table 1 on the number of school psychological positions per federal state for the year 2018:

Number of School Psychological Positions in Germany in 2018 (BDP Sektion Schulpsychologie, 2018).

Based on these numbers and estimating a response rate of 40%, we aimed to recruit at least 607 participants with the minimum sample sizes across federal states as shown above in Table 1.
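The recruitment target follows from simple arithmetic on the number of positions. As a hedged illustration, the sketch below computes the smallest whole number of respondents that reaches a given response rate. The function name is hypothetical and rounding up is an assumption, since the manuscript does not state how fractional targets were rounded.

```python
def target_sample(positions: int, response_rate_pct: int = 40) -> int:
    """Smallest whole number of respondents covering response_rate_pct % of positions.

    Integer arithmetic (ceiling division) avoids floating-point rounding surprises.
    Rounding up is an assumption, not a rule taken from the manuscript.
    """
    if positions < 0:
        raise ValueError("positions must be non-negative")
    return -(-positions * response_rate_pct // 100)


# Example: 40% of the 1,190 positions outside Bavaria reported for 2018.
print(target_sample(1190))
```

The same function can be applied to each federal state's number of positions to obtain per-state minimum sample sizes.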

According to the guidelines provided by the Ethics Committee of the Department of Psychology of the Goethe University Frankfurt am Main in Germany, we did not need to seek ethical approval for this part of the study for the following reasons: (1) “It can be firmly assumed that participation in the study will not cause any conceivable physical or mental harm or discomfort for the participants that exceed their every-day experiences” AND (2) “The study is based on data anonymized at the source (e.g., return of an outpatient anonymized questionnaire or heart rate data which were collected in the course of a day within a department, anonymous online survey, etc.).”

Materials and Procedure

At Stage I, we were unable to provide the specific items that we planned to use in the survey, as these were intended to be a product of the above-mentioned Delphi technique process. However, we attached an English translation of the initial set of 34 items that we intended to present to the Delphi panel in the first round in Appendix A. They were organized into the following five domains: (a) demographic information on school psychologists (n = 7), (b) services provided by school psychologists (n = 14), (c) ethical principles of school psychological practice (n = 2), (d) initial training and professional development of school psychologists (n = 5), and (e) quality assurance of school psychological services (n = 5). Although the final set of questions included in the survey was dependent on the results of the Delphi technique process, we planned to limit the number of total items included in the survey to a maximum of 50 questions.

Deviations From Pre-Registration

After Stage I, we learned that we needed to apply for permission from the Federal Ministry for Instruction and Culture of Bavaria (in German “Bayerisches Staatsministerium für Unterricht und Kultus”) to share the survey with school psychologists in Bavaria, one of the 16 federal states of Germany. In response to our application, we were informed that to proceed with data collection in Bavaria, we needed to modify and omit several of the survey items that, from the perspective of the ministry, did not apply to school psychologists in Bavaria. In contrast to the remaining 15 federal states of Germany, which require a Master’s degree in Psychology, the EuroPsy certificate or an equivalent international degree, school psychologists in Bavaria need to hold a teaching degree and to have additionally passed a state exam in psychology (in German “Staatsexamen in Psychologie”; BDP Sektion Schulpsychologie, 2015, p. 5). This leads to a unique professional profile of school psychologists in Bavaria, which differs to a great extent from the profile in the rest of Germany. Considering this unique profile, we agreed with the ministry’s feedback that the survey that resulted from the Delphi process would have needed major adjustments to be administered in Bavaria. As this would have limited the comparability of the results with data obtained from the remaining 15 federal states of Germany, we decided to stop recruitment of survey participants from Bavaria (target sample specified at Stage I was n = 131) and limit survey data collection to 15 federal states.

Data Analysis

Data collected through the Delphi technique and the survey are available on OSF. Moreover, we relied on the software R (R Core Team, 2013) to perform data analyses.

Preliminary Analyses

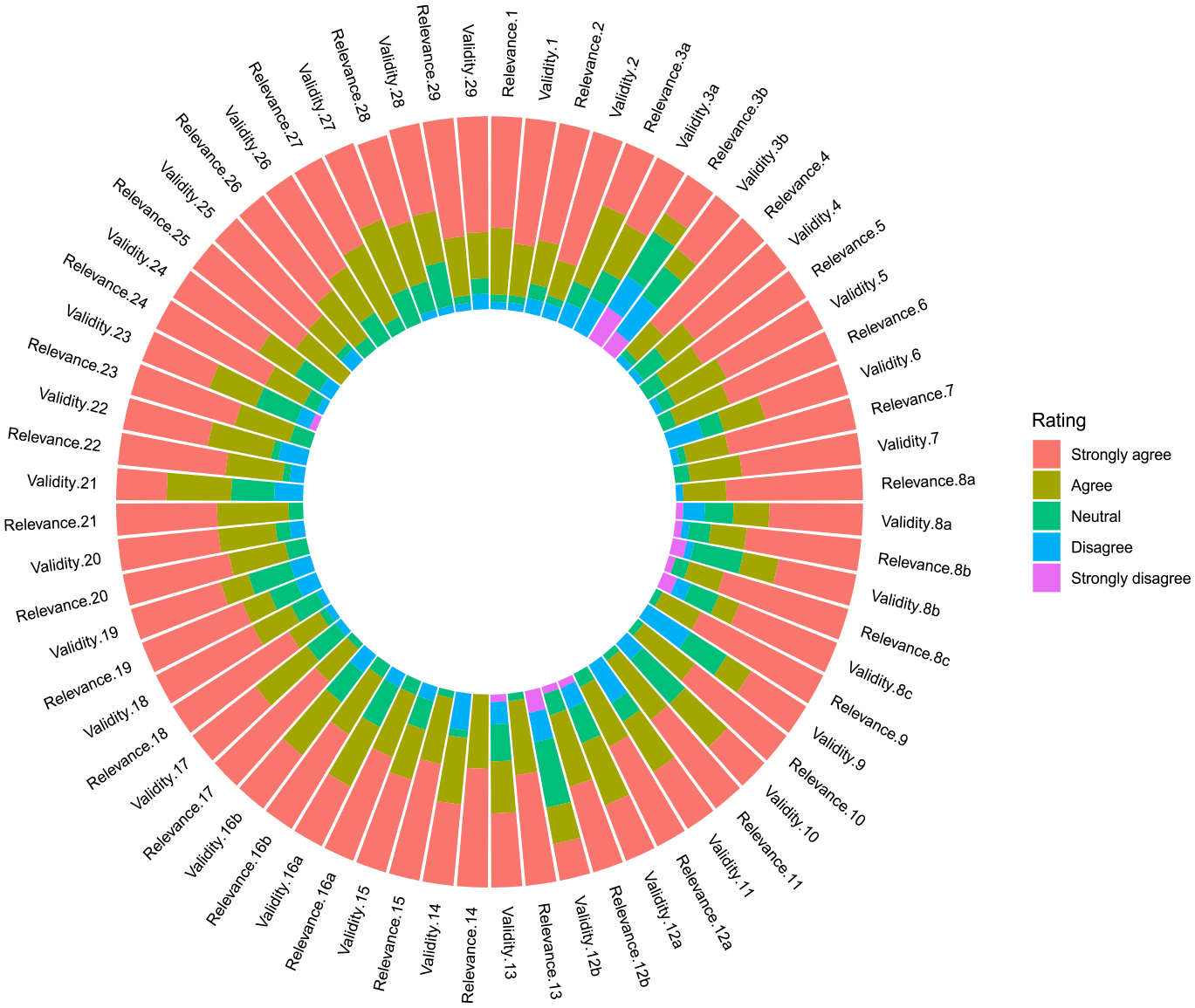

For each round of the Delphi technique and for each item, we computed the percentage of ratings falling into each of the five-point Likert scale categories and represented these results on stacked bar graphs. We also collated anonymous qualitative comments for each item and listed them in a table. This information was presented in a report for panel members and is available on OSF. To pilot this step of the process at Stage I, we conducted two test Delphi cycles among our research team and uploaded the pilot data, R code and report to OSF.
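To make the per-item summary concrete, the sketch below computes the percentage of ratings in each Likert category for a single item. It is an illustration in Python rather than the R code uploaded to OSF. The two disagreement labels ("against", "strongly against") and the function name are assumptions, as the text only names the three agreement categories.

```python
from collections import Counter
from typing import Dict, List

# Five-point scale; the last two labels are assumed for illustration.
LIKERT = ["strongly in favor", "in favor", "neutral", "against", "strongly against"]


def likert_percentages(ratings: List[str]) -> Dict[str, float]:
    """Percentage of ratings per Likert category for one item, rounded to one decimal."""
    unknown = set(ratings) - set(LIKERT)
    if unknown:
        raise ValueError(f"unexpected rating labels: {sorted(unknown)}")
    if not ratings:
        raise ValueError("item has no ratings")
    counts = Counter(ratings)
    return {cat: round(100 * counts[cat] / len(ratings), 1) for cat in LIKERT}
```

Each resulting dictionary corresponds to one stacked bar: the category percentages are the bar segments, in scale order.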

Scope of School Psychological Practice in Germany

Our first research question aimed to explore the range of responses provided by school psychologists in Germany on the following five domains of their professional practice: (a) their own demographic information, (b) services they provide, (c) ethical principles, (d) initial training and professional development and (e) quality assurance of services. For this purpose, we planned to compute descriptive statistics and frequencies for every item of the survey and report this information in separate tables and/or figures for each of the above-mentioned domains of enquiry.

Deviations From Pre-Registration

As explained above, after Stage I we decided not to proceed with the recruitment of school psychologists from Bavaria, one of the federal states of Germany. Despite this decision, our final dataset included responses from five participants from Bavaria. We suspect that they received the survey link through fellow school psychologists from other federal states. In line with our decision to focus on 15 of the 16 federal states of Germany due to the unique profile of school psychologists in Bavaria, we excluded data from these five participants.

Furthermore, while inspecting the final dataset, we noticed that in section B of the survey (i.e., scope of school psychological services) some participants indicated that they had no direct contact with schools, students and teachers (items 9 to 11 – “With how many schools/teachers/students approximately did you get in touch as a school psychologist in 2021?” – response option “No answer possible, as my work does not include direct contact with schools/teachers/students – e.g., responsible for leadership, coordination, etc.”). However, at a later stage of the survey they completed item 12 (“To what educational levels do the schools belong with which you were in contact as a school psychologist in 2021?”) and item 13 (“What percentage of time did you dedicate on average to counseling/consultation with the following user groups in 2021?”), both of which referred to direct contact with schools/teachers/students. Because we considered these responses inconsistent, we excluded responses from these participants (n = 24) when computing descriptive statistics for items 12 and 13.
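The exclusion rule for items 12 and 13 can be expressed as a simple filter. The sketch below is only an illustration under stated assumptions: the record layout, the field names (`no_direct_contact`, `item_12`, `item_13`) and the use of a single flag summarizing items 9 to 11 are hypothetical and do not reflect the structure of the dataset on OSF.

```python
from typing import Any, Dict, List

Response = Dict[str, Any]


def is_inconsistent(response: Response) -> bool:
    """True if a participant reported no direct contact but still answered item 12 or 13."""
    answered_contact_items = (
        response.get("item_12") is not None or response.get("item_13") is not None
    )
    return bool(response["no_direct_contact"]) and answered_contact_items


def responses_for_items_12_13(responses: List[Response]) -> List[Response]:
    """Keep only the responses used for descriptive statistics on items 12 and 13."""
    return [r for r in responses if not is_inconsistent(r)]
```

Note that the filter only affects the analysis of items 12 and 13; the same participants remain in the dataset for all other items.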

Results

Preliminary Analyses

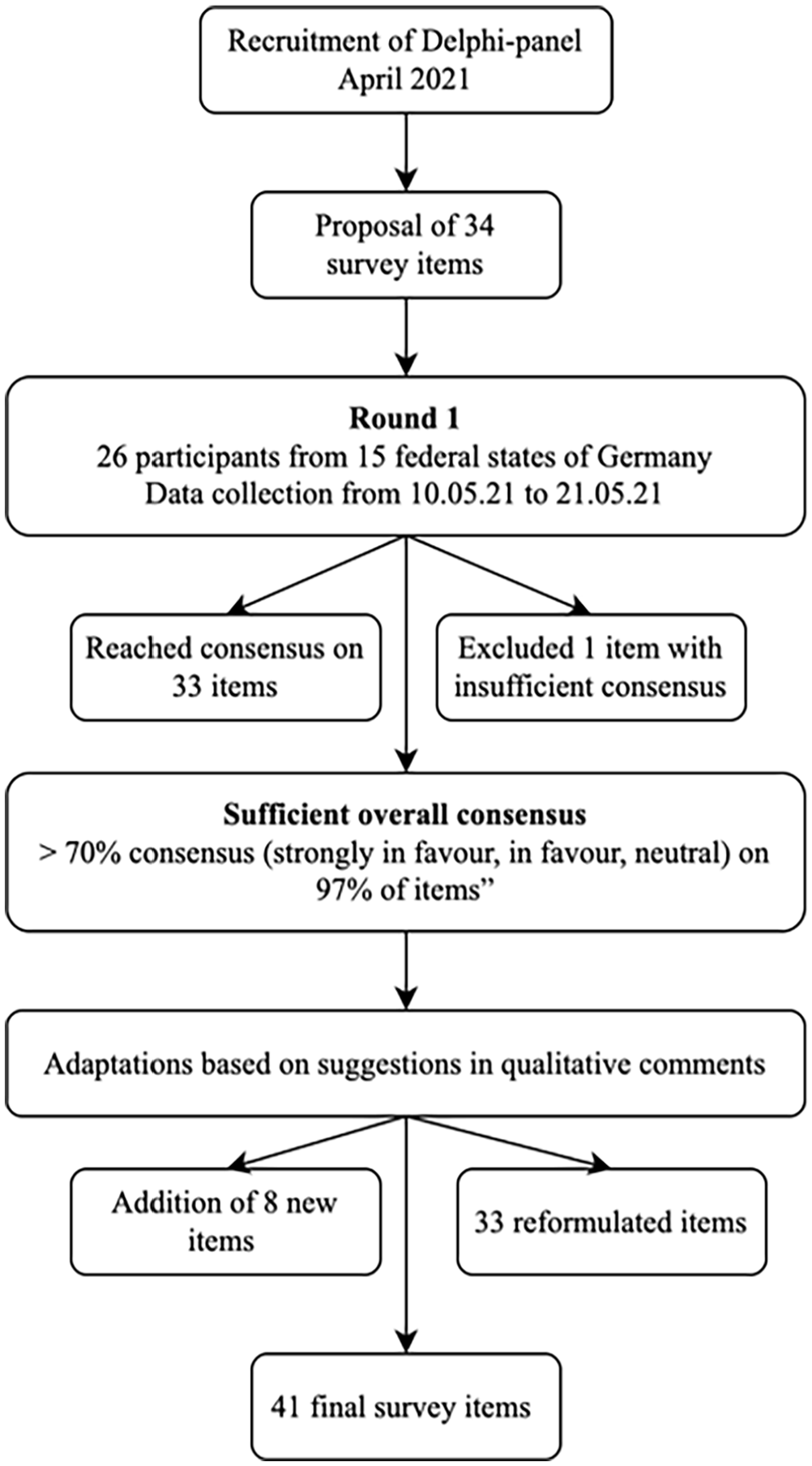

In total, 26 participants from 15 of the 16 federal states of Germany agreed to be part of the Delphi expert panel. On average, participants reported 15.88 years of professional experience in school psychological practice (SD = 8.23 years; Range = 5–35 years). Figure 2 provides further details on the implementation of the Delphi process.

Delphi process.

After Round 1, results revealed sufficient overall consensus with ratings over 70% (Likert scale options: “strongly in favor,” “in favor” and “neutral”) for 97% (33 of 34) of the items we initially presented. Figure 3 shows an overview of relevance and validity ratings for each item.

Overview of consensus by item.

Following suggestions provided in the qualitative comments for each item, we reworded items and added, changed, and removed response options. We also added five follow-up items to be shown only if certain responses were selected, as well as eight new items. Therefore, the final survey ended up with 41 items (see OSF for an English translation of the final items). The expert panel received a report with the percentage of ratings, the anonymous qualitative comments and adaptations for each item (available on OSF).

Scope of School Psychological Practice in Germany

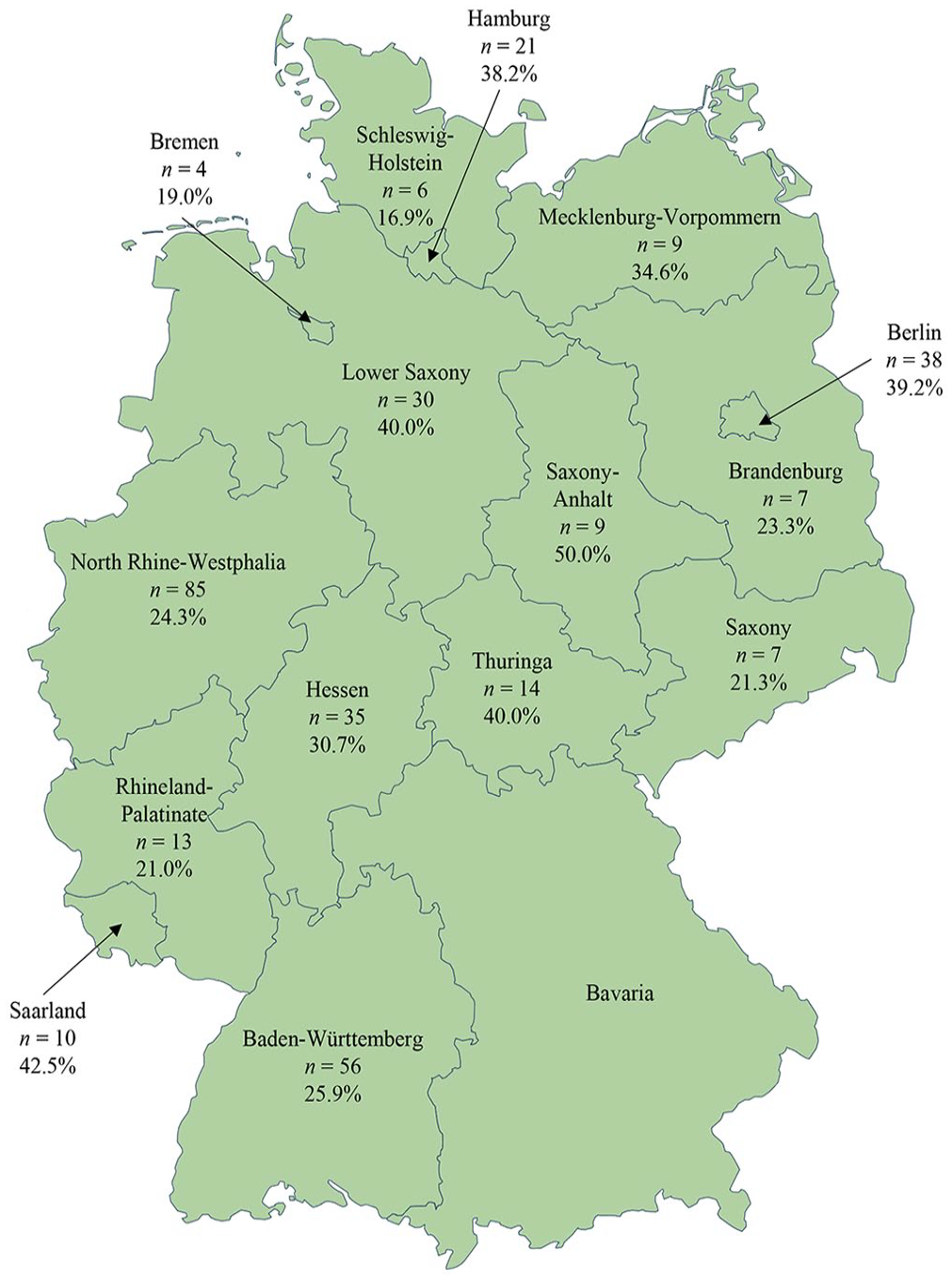

In total, 356 school psychologists completed the above-mentioned survey between September 20, 2021 and February 15, 2022. As mentioned in the pre-registered methods section, we aimed to reach 40% of the overall population of 1,190 school psychological positions (excluding Bavaria) as reported by the Department of School Psychology of the National Association of Psychology of Germany for the year 2018 (BDP Sektion Schulpsychologie, 2018). However, we were only able to reach 29.83% despite several efforts and repeated invitations to potential participants. Nevertheless, we succeeded in recruiting school psychologists from 15 of the 16 federal states of Germany with participation rates ranging between 16.9% and 50.0% of the overall population per state, as detailed in Figure 4.

Composition of sample per federal state.

Through this survey, we aimed to address our first research question and explore the range of responses provided by school psychologists in Germany on the following five domains of their professional practice: (a) their own demographic information, (b) services they provide, (c) ethical principles, (d) initial training and professional development and (e) quality assurance of services. In the following sections, we report descriptive statistics and frequencies for each of the above-mentioned domains of enquiry and also provide an Excel file with complete information for all survey items and response options on OSF.

Demographic Information

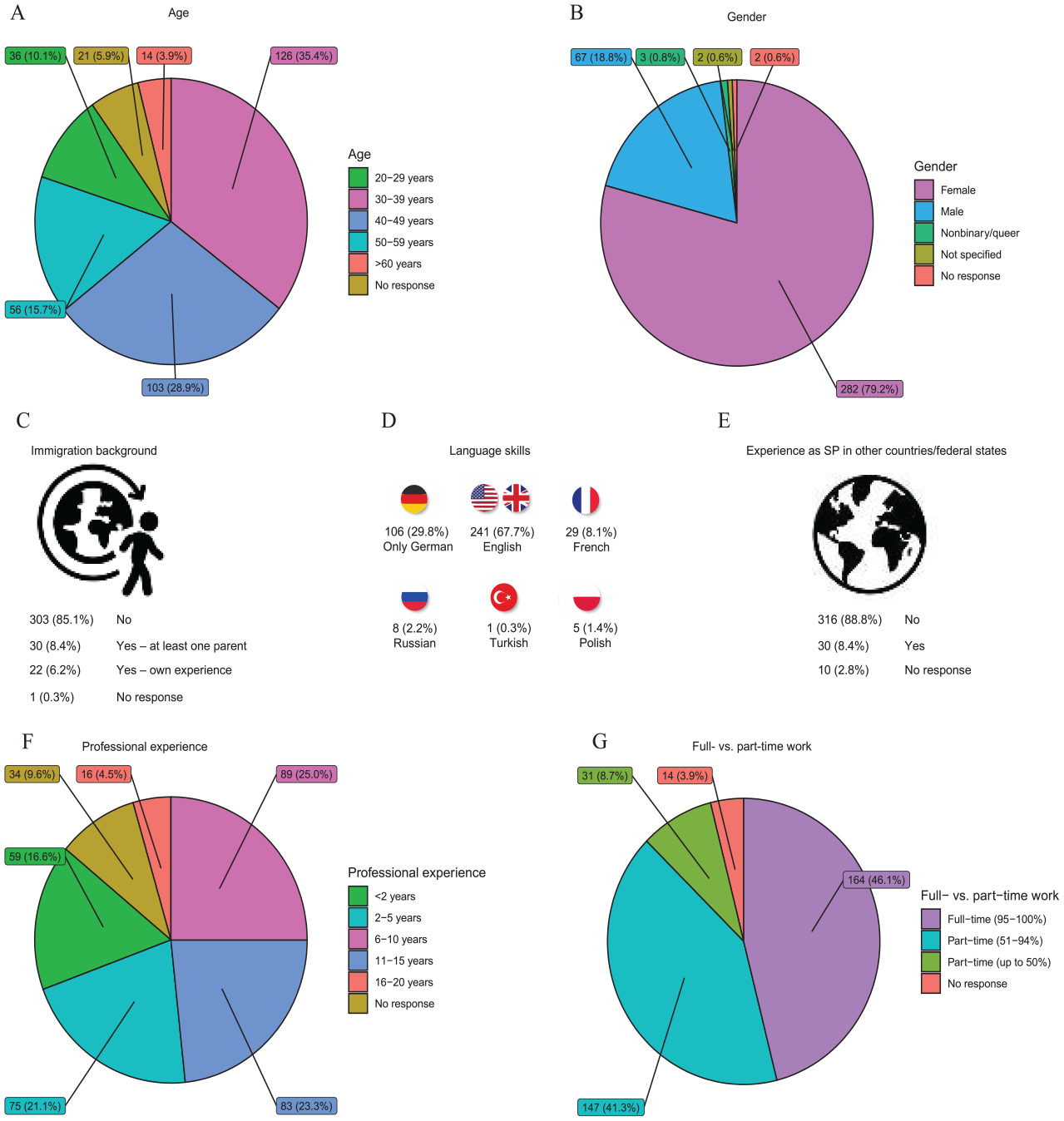

Figure 5 provides an overview of the demographic information reported by school psychologists completing the survey.

Overview of demographics. Panel A: Age. Panel B: Gender. Panel C: Immigration background. Panel D: Language skills. Panel E: Experience as school psychologist in other countries/federal states. Panel F: Professional experience. Panel G: Full- vs. part-time work.

Most of the participants were aged between 30 and 39 (n = 126 – 35.4%) or between 40 and 49 years (n = 103 – 28.9%), and most were female (n = 282 – 79.2%). Only a small proportion of school psychologists reported an immigration background, with themselves (n = 22 – 6.2%) or at least one parent (n = 30 – 8.4%) having moved to Germany from another country. The majority reported that they were able to rely on English language skills during work (n = 241 – 67.7%), while just under a third indicated that they used only German (n = 106 – 29.8%). In addition, up to 10% of the sample also used other languages frequently spoken in Germany (i.e., French, Russian, Turkish, Polish) to provide school psychological services. Furthermore, only 8.4% of school psychologists reported experience as a school psychologist in a different federal state or country. Approximately three quarters of the sample had been working as school psychologists for 2 to 15 years. Half of them worked part time (51%–94% of regular working hours: n = 147 – 41.3%; up to 50% of regular working hours: n = 31 – 8.7%), while a similarly large proportion worked full time (n = 164 – 46.1%).

Scope of Services

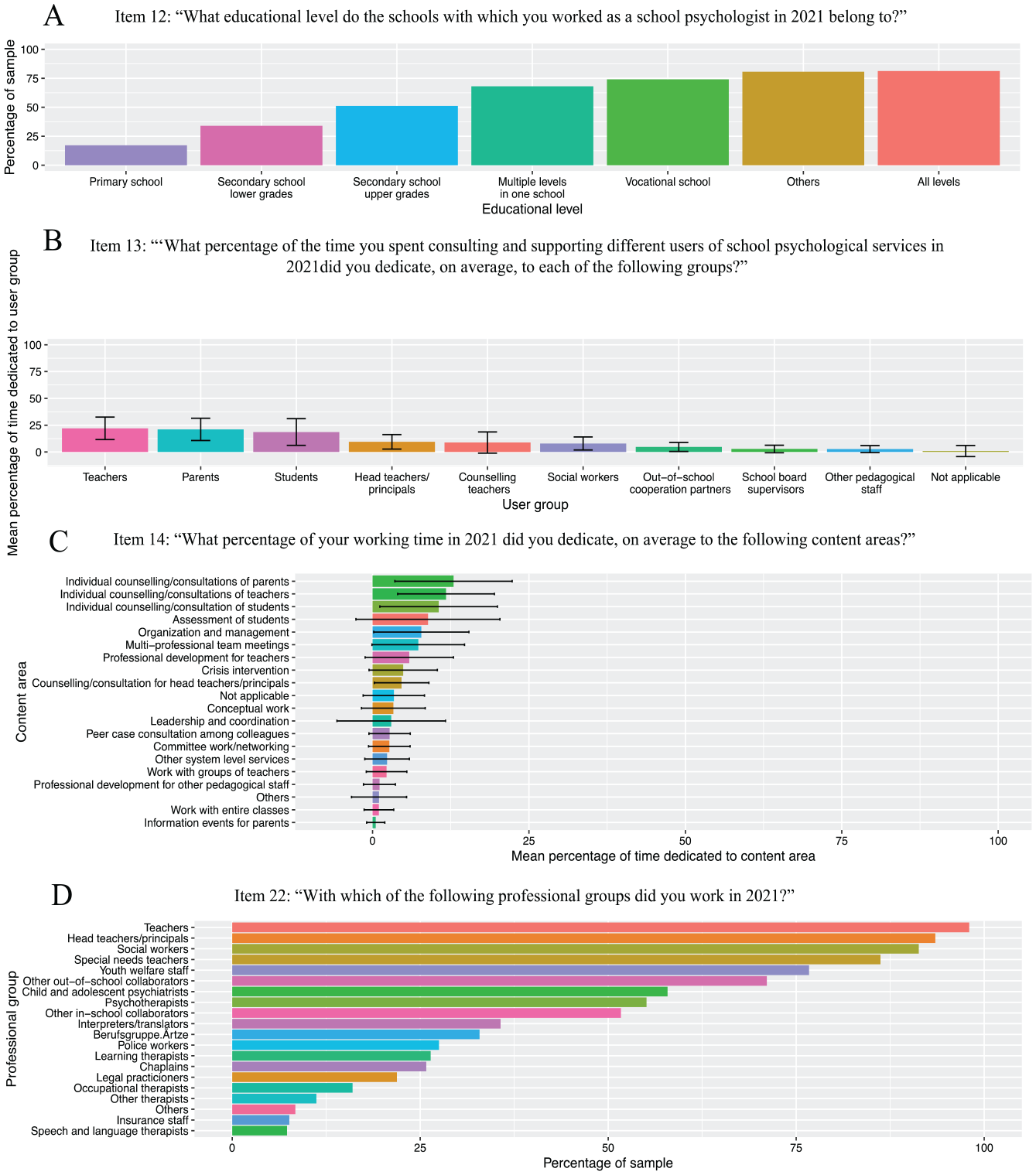

In Figure 6, we present an overview of the findings related to the scope of services provided by school psychologists in Germany.

Scope of services. Panel A: Item 12: “What educational level do the schools with which you worked as a school psychologist in 2021 belong to?” Panel B: Item 13: “What percentage of the time you spent consulting and supporting different users of school psychological services in 2021 did you dedicate, on average, to each of the following groups?” Panel C: Item 14: “What percentage of your working time in 2021 did you dedicate, on average, to the following content areas?” Panel D: Item 22: “With which of the following professional groups did you work in 2021?”.

In terms of the amount of contact with service users in 2021, the most frequently selected categories were 11 to 20 schools (n = 111 – 31.2%), fewer than 100 teachers (n = 162 – 45.5%) and fewer than 500 students (n = 303 – 85.1%). Moreover, school psychologists reported predominantly serving primary (n = 270 – 81.3%) and secondary schools (lower grades: n = 268 – 80.7%; upper grades: n = 246 – 74.1%), as well as schools with multiple educational levels (n = 226 – 68.1%), while approximately half of the sample also worked with vocational schools (n = 170 – 51.2%), and smaller proportions selected all of the above-mentioned options (n = 113 – 34.0%) or other educational levels (n = 57 – 17.2%).
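The percentages in this paragraph appear to rest on two different denominators: the full sample of 356 for the contact items, and 356 − 24 = 332 for item 12 after the exclusion of inconsistent responses. This is an inference from the reported figures rather than something the text states explicitly. The minimal sketch below, with hypothetical names, reproduces a few of the reported values under that assumption.

```python
FULL_SAMPLE = 356                    # all completed surveys
ITEM_12_SAMPLE = FULL_SAMPLE - 24    # assumed denominator after excluding inconsistent responses


def pct(n: int, total: int) -> float:
    """Percentage of n out of total, rounded to one decimal place."""
    return round(100 * n / total, 1)


# Contact items (full sample)
print(pct(111, FULL_SAMPLE))     # 11 to 20 schools
print(pct(303, FULL_SAMPLE))     # fewer than 500 students

# Item 12 (reduced sample)
print(pct(270, ITEM_12_SAMPLE))  # primary schools
print(pct(170, ITEM_12_SAMPLE))  # vocational schools
```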

On average, school psychologists dedicated the largest shares of their time to working with teachers (M = 22.0; SD = 10.5), parents (M = 21.1; SD = 10.4) and students (M = 18.6; SD = 12.4), and less than 10% of their time to each of the other user groups (e.g., head teachers/principals, social workers, etc. – see Figure 6). Consistent with this, the content areas that they spent most time on were individual counseling/consultation of parents (M = 12.9; SD = 9.4), teachers (M = 11.7; SD = 7.7) and students (M = 10.6; SD = 9.4), with less than 10% of their time dedicated to other content areas such as assessment of students (M = 8.8; SD = 11.5) or professional development instances for teachers (M = 5.9; SD = 7.0 – more details in Figure 6). The top three professional groups school psychologists collaborated with were teachers (n = 349 – 98.0%), head teachers/principals (n = 333 – 93.5%) and social workers (n = 325 – 91.3% – more details in Figure 6).
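The M and SD values reported here summarize, for each user group or content area, the percentage of time indicated by each respondent. As a brief illustration, the sketch below computes both statistics for one category. The input values are invented, the function name is hypothetical, and the sample standard deviation (n − 1 denominator, which is what R's `sd()` uses) is assumed.

```python
from statistics import mean, stdev
from typing import List, Tuple


def describe(shares: List[float]) -> Tuple[float, float]:
    """Mean and sample standard deviation (n - 1) of reported time shares in percent."""
    return round(mean(shares), 1), round(stdev(shares), 1)


# Hypothetical time shares (in %) that five respondents assigned to one user group.
time_with_teachers = [10.0, 20.0, 30.0, 20.0, 30.0]
m, sd = describe(time_with_teachers)
print(f"M = {m}; SD = {sd}")
```

A standard deviation that is large relative to the mean, as for several categories above, indicates that time allocation varied considerably between respondents.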

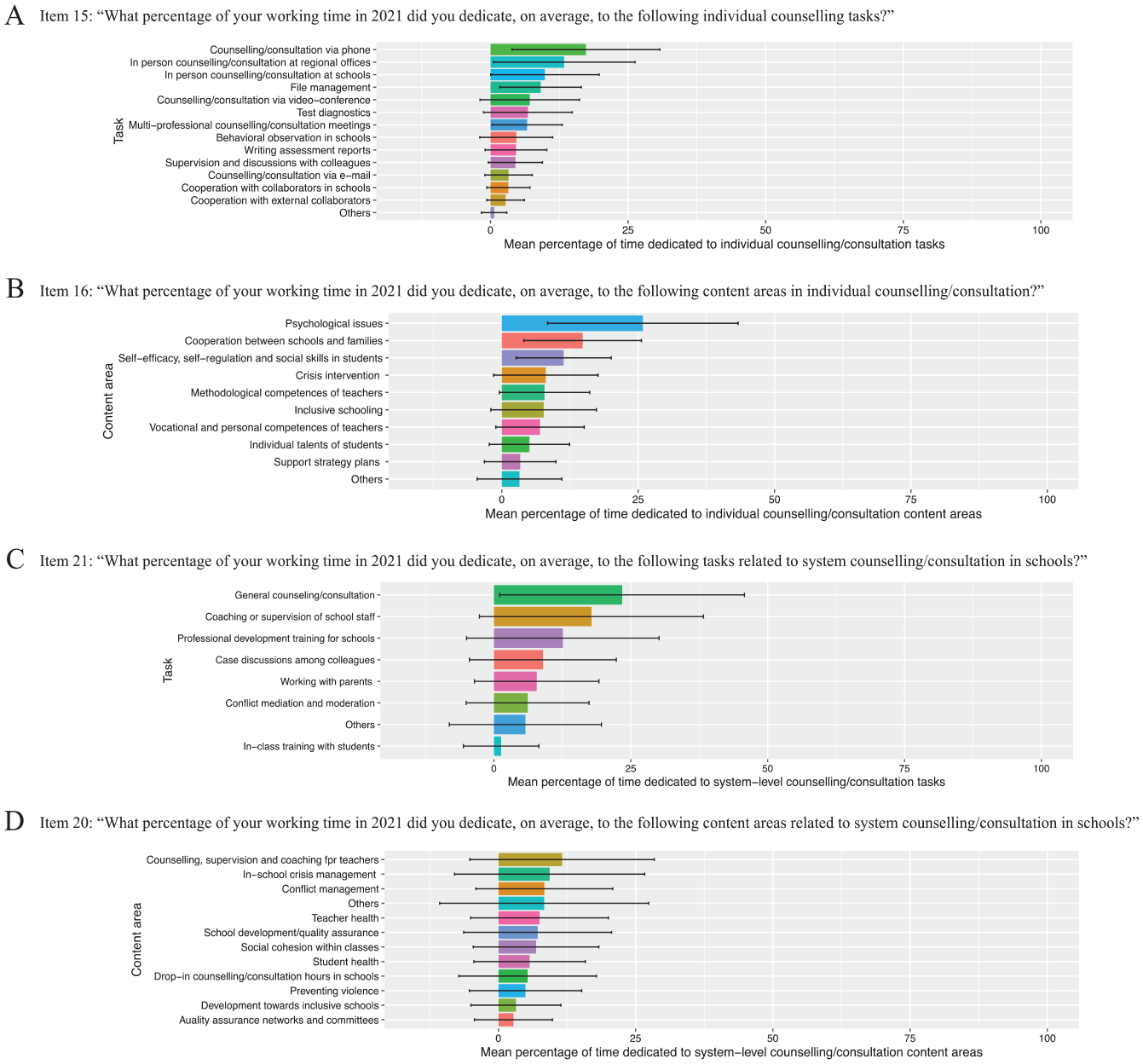

In Figure 7 we provide further details on the scope of individual and system-level counseling/consultation tasks and content areas.

Scope of individual and system-level counseling/consultation tasks and content areas. Panel B: Item 16: “What percentage of your working time in 2021 did you dedicate, on average, to the following content areas in individual counseling/consultation?” Panel C: Item 21: “What percentage of your working time in 2021 did you dedicate, on average, to the following tasks related to system counseling/consultation in schools?” Panel D: Item 20: “What percentage of your working time in 2021 did you dedicate, on average, to the following content areas related to system counseling/consultation in schools?”.

In relation to individual counseling/consultation, school psychologists reported that they spent the largest percentage of their time providing services by phone (M = 17.3, SD = 13.4), in person at regional offices or consultation/counseling centers (M = 13.4, SD = 12.8) and at schools (M = 9.8, SD = 9.8). The content areas most in demand focused on psychological issues (M = 25.8, SD = 17.5), cooperation between schools and families (M = 14.8, SD = 10.8) and fostering self-efficacy, self-regulation and social skills in students (M = 11.3, SD = 8.7).

To describe the most frequent reasons for individual counseling/consultation with students, we asked participants to select five from 48 possible topics. Our findings show that school psychologists mostly focused on noticeable social behavior (n = 175 – 49.1%), absenteeism (n = 169 – 47.5%) and disruptive behavior in class (n = 126 – 35.4%). Similarly, teachers contacted school psychologists most frequently when they needed support to handle difficult classes (n = 263 – 73.9%), students with psychological issues (n = 230 – 64.6%) and in relation to their own psychological wellbeing (n = 168 – 47.2%). The most frequently mentioned reasons to engage in crisis intervention concentrated on suicidality (n = 214 – 60.1%), self-harming behavior (n = 126 – 35.4%) and threats of violence (e.g., violence toward school staff – n = 119 – 33.4%).
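Because each participant could select several topics, the reported frequencies count respondents per topic, so the percentages across topics do not sum to 100. A minimal sketch of such a tally follows. The function name and the example selections are hypothetical, and it is assumed that percentages are taken over all respondents.

```python
from collections import Counter
from typing import List, Tuple


def top_topics(selections: List[List[str]], k: int = 3) -> List[Tuple[str, int, float]]:
    """Return the k most frequently selected topics as (topic, n, percent of respondents)."""
    # set() guards against a topic being counted twice for the same respondent.
    counts = Counter(topic for chosen in selections for topic in set(chosen))
    total = len(selections)
    return [(topic, n, round(100 * n / total, 1)) for topic, n in counts.most_common(k)]


# Invented multi-select answers from four respondents.
answers = [
    ["absenteeism", "social behavior"],
    ["absenteeism"],
    ["social behavior", "disruptive behavior"],
    ["absenteeism"],
]
print(top_topics(answers, k=2))
```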

Regarding system-level counseling/consultation, school psychologists mostly dedicated their time to general services (M = 23.4, SD = 22.3), coaching or supervision of school staff (M = 17.8, SD = 20.5) and professional development training for schools (M = 12.6, SD = 17.6). Content-wise, they dedicated most of their time to creating opportunities for individual and group counseling, supervision and coaching for teachers (M = 11.6, SD = 16.8), providing training for in-school crisis management (M = 9.3, SD = 17.3) and conflict management (M = 8.3, SD = 12.5).

Ethical Principles

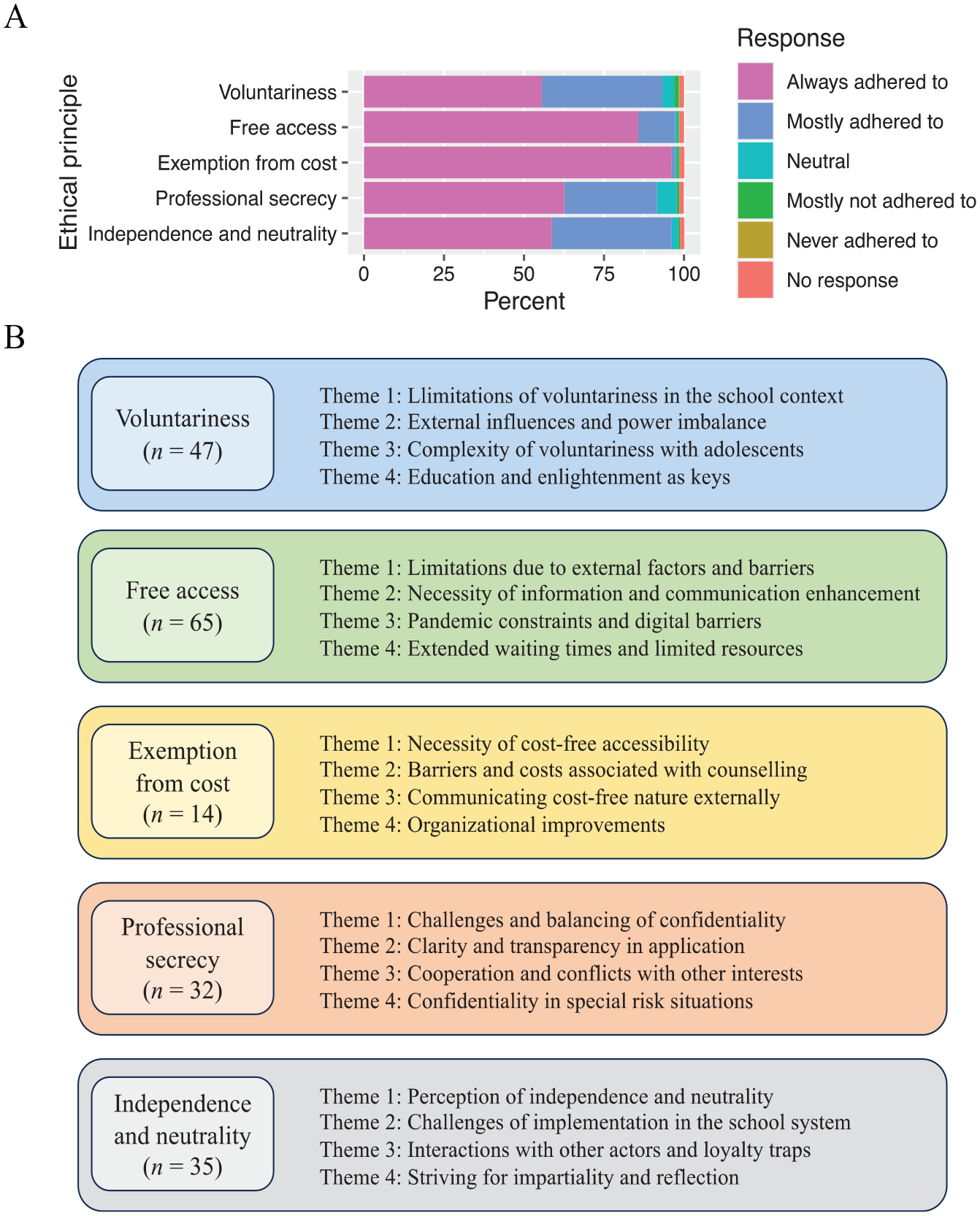

Figure 8 provides information on school psychologists’ responses regarding the ethical principles issued by the Department of School Psychology of the Professional Association of German Psychologists (BDP Sektion Schulpsychologie, 2015).

Ethical principles (BDP Sektion Schulpsychologie, 2015). Panel A: Item 23: “In 2021, to what extent were you able to adhere to the following working principles taken from the professional profile defined by the Professional Association of German Psychologists (BDP)?” Panel B: Themes on suggestions for changes in ethical principles.

Overall, the majority of school psychologists reported that they always or mostly adhered to these ethical principles. However, between 5.0% and 18.5% of the sample highlighted critical issues and suggestions for changes in an open-ended question for each principle. We summarized these open-ended responses in themes shown in Figure 8 and provide further detail on individual responses and an elaboration of each theme in a separate document on OSF (original German and translated English version).

Initial Training and Professional Development

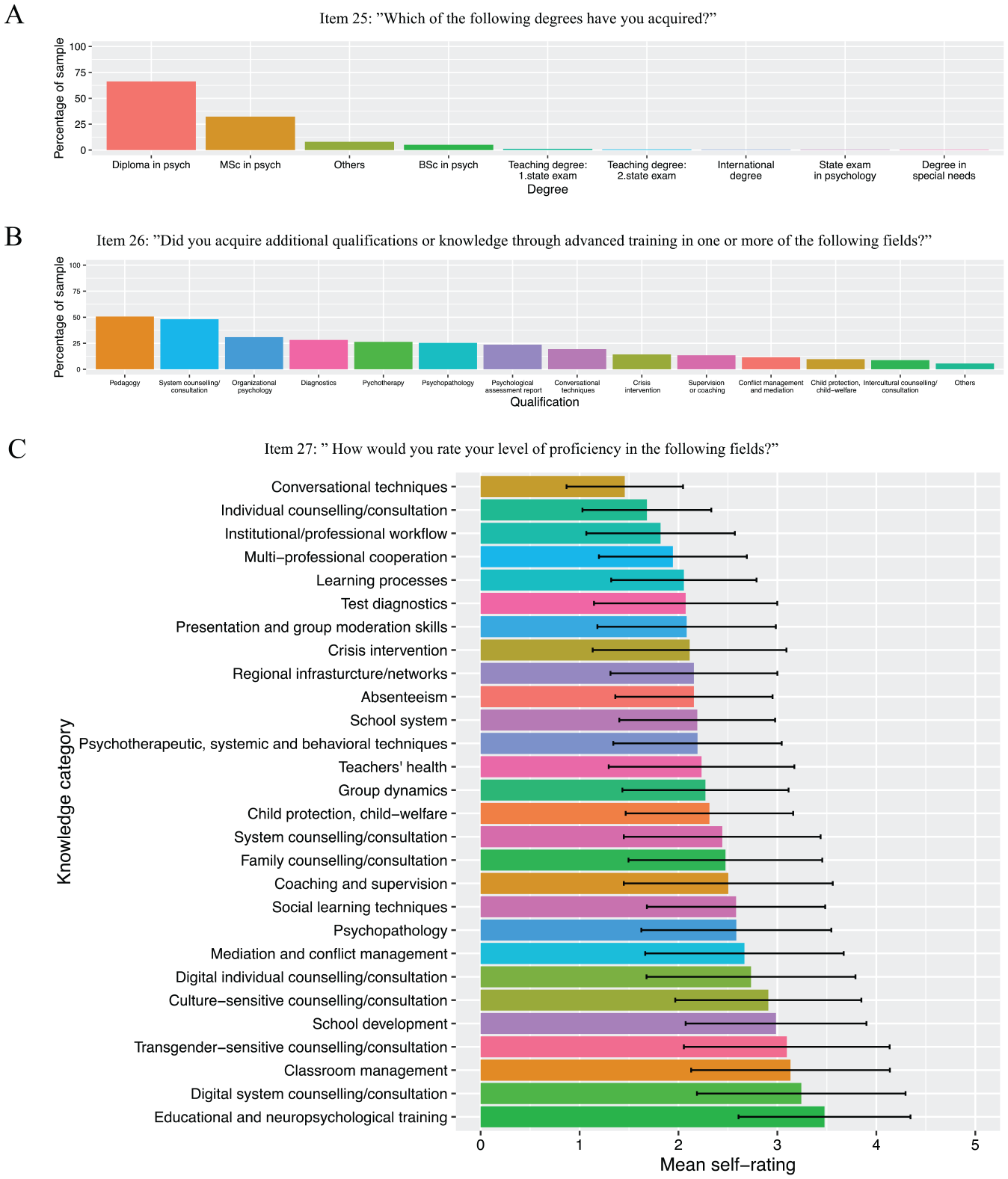

In Figure 9, we summarize results concerning school psychologists’ initial training and professional development.

Initial training and professional development. Panel A: Item 25: “Which of the following degrees have you acquired?” Panel B: Item 26: “Did you acquire additional qualifications or knowledge through advanced training in one or more of the following fields?” Panel C: Item 27: “How would you rate your level of proficiency in the following fields?”.

Most of the school psychologists in the sample acquired a diploma (n = 236 – 66.3%) or MSc in psychology (n = 115 – 32.3%) with less than 10% of the sample reporting other acquired degrees (e.g., international degree, PhD in psychology, etc. – see Figure 9). Moreover, school psychologists indicated additional qualifications or knowledge achieved through advanced training: knowledge on system counseling or consultation (n = 180 – 50.6%), crisis intervention (n = 171 – 48.0%) and supervision and coaching (n = 110 – 30.9%) emerged as the three most frequently reported additional qualifications.

In relation to school psychologists’ self-rated level of proficiency in professional fields (see Figure 9), on average participants rated “conversational and relationship-shaping techniques” (M = 1.4; SD = 0.6), “structuring individual counseling and consultation processes in general” (M = 1.7; SD = 0.6) and “workflow and processes within the institution they work in, within the school psychological field and in collaboration with other professional groups” (M = 1.8; SD = 0.7) as the three top strengths in their profile. In contrast, school psychologists indicated that their knowledge is satisfactory tending to sufficient (response options 3 and 4) concerning “educational and neuropsychological training techniques for children and adolescents” (M = 3.5; SD = 0.9), “structuring system counseling and consultation processes through digital formats” (M = 3.2; SD = 1.0) and “classroom management” (M = 3.1; SD = 1.0).

When asked about three topics for which they desired more possibilities of advanced training, school psychologists most frequently mentioned “educational and neuropsychological training techniques for children and adolescents” (n = 37 – 10.4%), followed by “learning processes, forms of behavior and child and adolescent development” (n = 35 – 9.8%) and finally “presentation techniques and moderation of groups” (n = 34 – 9.5%). A similar question on which topics new school psychologists entering the profession should focus on revealed the following ranking: (a) learning processes, forms of behavior and child and adolescent development (n = 111 – 31.2%), (b) structuring individual counseling and consultation processes in general (n = 68 – 19.1%), and (c) test diagnostics (n = 55 – 15.4%).

Quality Assurance of Services

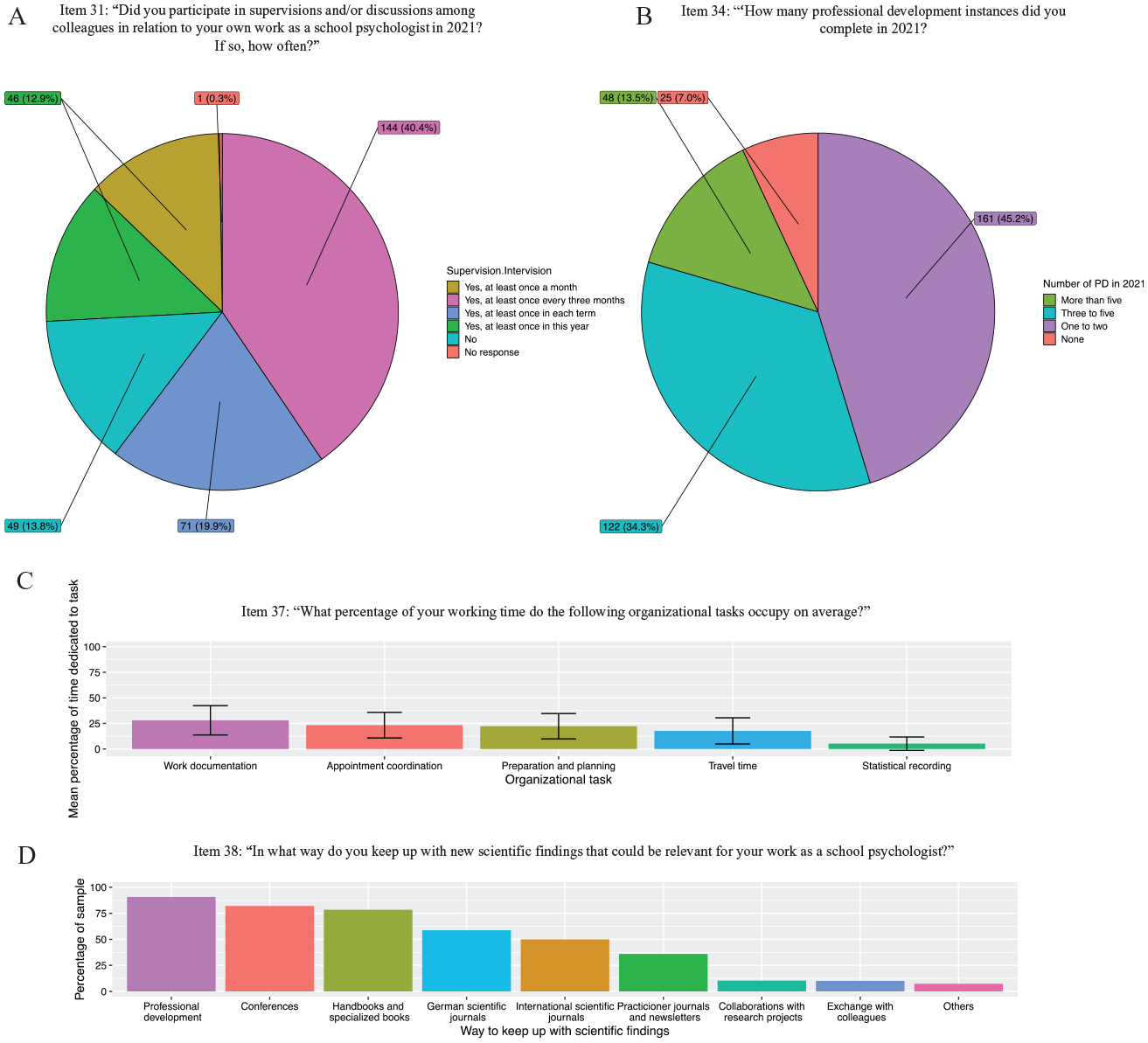

Figure 10 shows an overview of school psychologists’ responses regarding quality assurance of services they provide.

Quality assurance of services. Panel A: Item 31: “Did you participate in supervisions and/or discussions among colleagues in relation to your own work as a school psychologist in 2021? If so, how often?” Panel B: Item 34: “How many professional development instances did you complete in 2021?” Panel C: Item 37: “What percentage of your working time do the following organizational tasks occupy on average?” Panel D: Item 38: “In what way do you keep up with new scientific findings that could be relevant for your work as a school psychologist?”.

Most school psychologists reported that they were able to attend supervision or intervision meetings in 2021, whether at least once in the year (n = 46 – 12.9%), once in each term (n = 71 – 19.9%), every 3 months (n = 144 – 40.4%) or once a month (n = 45 – 12.6%). A small group of participants (n = 49 – 13.8%) indicated that they had no supervision or intervision opportunities during 2021 (no response: n = 1 – 0.3%). Moreover, 48.9% (n = 174) of the sample indicated that they were satisfied with their possibilities to participate in supervision or intervision meetings, while 46.9% (n = 167) expressed a need for more opportunities of this type and 4.2% (n = 15) provided no response.
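As a reading aid, the percentages reported for supervision and intervision frequency can be recomputed from the counts. The following is a minimal sketch, assuming that all percentages refer to the full sample of 356 respondents and that the response categories are mutually exclusive. The variable and function names are hypothetical and are not taken from the study's analysis code.

```python
# Counts as reported in the text; N = 356 is the reported sample size.
SAMPLE_SIZE = 356

supervision_counts = {
    "at least once in 2021": 46,
    "once in each term": 71,
    "every 3 months": 144,
    "once a month": 45,
    "no opportunities": 49,
    "no response": 1,
}


def to_percentages(counts, total):
    """Return each count as a percentage of total, rounded to one decimal."""
    return {label: round(100 * n / total, 1) for label, n in counts.items()}


supervision_pct = to_percentages(supervision_counts, SAMPLE_SIZE)

# If the categories are mutually exclusive, they should cover the whole sample.
categories_cover_sample = sum(supervision_counts.values()) == SAMPLE_SIZE

for label, pct in supervision_pct.items():
    print(f"{label}: n = {supervision_counts[label]} ({pct}%)")
```

Under these assumptions, the six categories add up to the full sample, and the same check can be applied to any other single-choice item reported with counts.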

In relation to professional development, almost half of the sample attended between one and two instances (n = 161 – 45.2%), while approx. a third completed three to five (n = 122 – 34.3%) and a small group even more than five (n = 48 – 13.5%). Only 7% (n = 25) of the sample indicated that they were unable to attend professional development instances in 2021. Furthermore, school psychologists reported that the time invested in professional development in 2021 ranged from zero to 300 hr (often also outside of their official working hours), with 40 hr being the most frequently reported estimate (n = 36 – 10.1%).

Turning to the modality of record keeping, results showed that most school psychologists still rely on handwritten/paper files (n = 215 – 60.4%). Approx. a third of the sample also uses electronic files in addition to paper files (n = 126 – 35.4%), while only 3.1% (n = 11) work exclusively with electronic files (no response: n = 4 – 1.1%).

Of all the organizational tasks that are part of school psychologists’ workload, on average documenting their work seems to be the most time-demanding task (M = 28.0; SD = 14.3), followed by coordinating appointments (M = 23.3; SD = 12.5), preparing and planning (M = 22.2; SD = 12.4), traveling (M = 17.6; SD = 12.8) and finally statistical recording (M = 5.1; SD = 6.5).

When asked about ways to keep up with scientific findings relevant to their work, exchanging information with colleagues (e.g., in working groups – n = 323 – 90.7%) was mentioned most frequently, followed by professional development (n = 292 – 82.0%), handbooks and specialized books (n = 279 – 78.4%), practitioner journals and newsletters (n = 209 – 58.7%) and conferences (n = 178 – 50.0%). Fewer than half of the sample mentioned relying on German (n = 128 – 36.0%) or international scientific journals (n = 37 – 10.4%) or collaborating with research projects (n = 36 – 10.1%) to stay up to date. Most participants reported that they sometimes (n = 150 – 42.1%) or frequently (n = 120 – 33.7%) adapted their work based on scientific findings, while n = 28 (7.9%) indicated that this was always the case and n = 48 (13.5%) that this was rather seldom the case (no response: n = 10 – 2.8%).

Finally, most participants expressed that they were “satisfied” (n = 176 – 49.4%) or “very satisfied” (n = 108 – 30.3%) with their work as school psychologists, while some selected the “neutral” (n = 49 – 13.8%), “unsatisfied” (n = 16 – 4.5%) or “very unsatisfied” (n = 4 – 1.1%) response options (no response: n = 3 – 0.8%).

Discussion

In this registered report study, we aimed to explore the scope of school psychological practice in Germany by applying a mixed methods approach. This first report presents the results of a Delphi procedure to develop a questionnaire in collaboration with school psychological experts from different federal states of Germany, as well as country-wide survey results on the scope of school psychological practice in Germany. These findings address our first pre-registered research question: What is the range of responses provided by school psychologists in Germany on the following five domains of their professional practice: (a) their own demographic information, (b) services they provide, (c) ethical principles, (d) initial training and professional development and (e) quality assurance of services? Considering the breadth of data collected in this study, we report findings concerning two additional pre-registered research questions in a second article to address (a) similarities and differences in the above-mentioned domains of school psychological practice across federal states in Germany and (b) the impact of federal policies, professional experience and self-rated knowledge on the tasks school psychologists engage in.

As a first result of the Delphi procedure, we obtained a survey on the scope of school psychological practice in Germany developed in collaboration with an expert panel composed of 26 experienced school psychology representatives from 15 of the 16 federal states of Germany. The expert panel provided numerous qualitative comments and suggestions on the proposed items, underlining the variability across federal states concerning terminology, tasks, services, and school psychological practice in general. Nevertheless, the panel reached consensus on the relevance and validity of 97% of the items (33 of 34) already in the first Delphi round. To the best of our knowledge, this is the first instrument of this type developed in Germany and in collaboration with representatives from different federal states. This is a step forward compared to other tools used in previous attempts to collect empirical data on the scope of school psychological practice in Germany, such as the International School Psychology Survey used in Jimerson et al. (2006), which was originally developed for the U.S. context and then translated and adapted for other countries. The results of the Delphi procedure, therefore, increase the overall validity of the survey and of the evidence collected through this tool. Taking a wider viewpoint, the Delphi procedure also allowed us to introduce the overall project to stakeholders, gain support for recruiting survey participants and provide a new opportunity to build research-practice partnerships in the school psychological field in Germany.

The second set of results we obtained in this study refers to a country-wide characterization of the scope of school psychological practice in Germany based on survey responses from 356 school psychologists from 15 of the 16 federal states of Germany. The sample corresponds to 29.83% of the overall population of 1,190 school psychological positions (excluding Bavaria) as reported by the Department of School Psychology of the Professional Association of German Psychologists for the year 2018 (BDP Sektion Schulpsychologie, 2018). To the best of our knowledge, the only comparable scientific evidence that has been reported so far was brought forward by Jimerson et al. (2006) and comprised a sample of 45 German school psychologists. Although it is difficult to compare our results to this evidence, as the time points of data collection are almost 20 years apart and different survey instruments were used, findings are consistent to a certain extent.

For instance, Jimerson et al. (2006) reported that Germany had the smallest gender ratio compared to Australia, China, Italy, and Russia, with 50% female school psychologists. In line with this observation, we also found that most school psychologists in Germany are female, but with a much larger gender ratio of 79.2%, which is closer to data reported for other countries (e.g., 80% in Australia, 78% in China, 64% in Italy and 100% in Russia – Jimerson et al., 2006). Furthermore, Jimerson et al. (2006) highlighted that most school psychologists in Germany were fluent in two or more languages in contrast to participants in Australia and Russia, who were predominantly monolingual. Our data confirm this observation, as 67.7% of participants expressed that they were able to rely on English language skills during work. However, 29.8% of our sample indicated that they were only able to provide services in German, a result that could be seen as problematic for effective service provision considering that approx. 37% of the student population in Germany has an immigration or refugee status with limited German skills (Statistisches Bundesamt [Destatis], 2020).

Another contrast concerns the ratio of school psychologists to school-aged children. While Jimerson et al. (2006) reported a relatively high ratio of 1:16,549 with a range from 1,000 to 100,000 for Germany, our data showed that, on average, school psychologists were in contact with fewer than 500 students during 2021. Additional data from 2020 published by the Department of School Psychology of the Professional Association of German Psychologists (BDP Sektion Schulpsychologie, 2020) mention a ratio of approx. 1:6,000 with a very wide range from 1:3,730 to 1:13,018 across federal states. This shows how difficult and misleading it can be to make statements about school psychological practice in Germany at a country-wide level without considering variability across federal states. For this reason, in this study we aimed to explore similarities and differences in school psychological practice across federal states and report our findings on this matter in a second article.

Looking at the roles and responsibilities of school psychologists, Jimerson et al. (2006) stated that “either psycho-educational evaluations or counseling students were reported as comprising the greatest percentage of practitioners’ time” (p. 17). More specifically, German participants in Jimerson et al. (2006), on average, dedicated 28% of their time to psycho-educational evaluations, 14% to counseling students, 13% and 15% to consultation with teachers and parents, respectively and 15% to training staff. Our findings reveal a similar pattern as participants indicated that, on average, they spent 10.6% of their time counseling students and 11.7% and 12.9% in consultation with teachers and parents, respectively. However, in our sample, school psychologists, on average, only dedicated 8.8% to student assessment and 5.9% to teacher trainings.

One reason for these contrasting findings could be a shift in the scope of school psychological practice in Germany over the last 20 years from a predominant focus on direct services, such as psycho-educational assessments, to indirect and system-level services, such as the delivery of school-wide prevention programs, as has been observed in other countries (Jimerson et al., 2007). Unfortunately, it is impossible to further investigate this idea empirically due to the limited available data on the scope of school psychological practice in Germany. Another reason could, once again, be the composition of the sample concerning the federal states school psychologists worked in and the extent to which psycho-educational evaluations and teacher training fell within the scope of school psychological practice in the respective federal state. Unfortunately, we do not have any information on this aspect concerning the sample in Jimerson et al. (2006), but in the second report of this study, we take a closer look at this aspect by exploring similarities and differences across federal states.

Overall, our results provide a detailed characterization of the scope of school psychological practice in Germany at a country-wide level. Given the amount of information collected in this study, we report a complementary exploration of similarities and differences across federal states in a second article. An important limitation of our study concerns the size and representativeness of our sample. Although we aimed to reach 40% of the overall population (BDP Sektion Schulpsychologie, 2018), unfortunately, only 356 school psychologists (29.83% of the overall population) participated. Compared to previous efforts by Jimerson et al. (2006), we were able to reach a larger and more representative sample with school psychologists from 15 of the 16 federal states of Germany. Readers should nevertheless keep in mind that our results might not be representative of the entire population of school psychologists in Germany.

Another limitation concerns the fact that participants were asked to complete the survey from September 20, 2021 to February 15, 2022, a time during which the Covid-19 pandemic was still ongoing. Furthermore, many of the items asked participants to refer to the period of 2021 to report, for example, on the number of schools, students, and teachers they had contact with or the time they dedicated to different tasks and content areas. As there have been many descriptions of the impact of the Covid-19 pandemic on school psychological practice worldwide (e.g., May et al., 2022; Reupert et al., 2022), it is important to note that our results could be reflecting the scope of school psychological practice under these specific circumstances, rather than providing a general picture.

Next steps for future research in Germany could, therefore, consist of conducting a new data collection wave with the same collaboratively designed survey, hopefully reaching a larger and more representative proportion of the overall population. Collecting data periodically (e.g., every 5 years) would also allow for longitudinal investigations that could address questions related to historical changes (e.g., shift from direct to indirect services), as well as the impact of specific historical events (e.g., Covid-19 pandemic, Ukraine war) on the scope of school psychological practice in Germany. From an international perspective, the mixed methods approach used in this study could serve as an orientation for other researchers to conduct similar studies exploring the scope of school psychological practice in other countries. In the past, many studies have relied on the International School Psychology Survey for this purpose (e.g., Coelho et al., 2016; Jimerson et al., 2004, 2006, 2008, 2009, 2010). This tool was, however, originally developed for the U.S. context and may lack validity when applied to other countries. The combination of a Delphi procedure, a quantitative survey and semi-structured interviews with regional experts, as implemented in this study, might, therefore, also be a useful way for international researchers to strengthen research-practice partnerships in the field of school psychology.

On a practical level, our findings have important implications for members of professional associations, policymakers, school psychologists and service users, as they provide an empirical description of the scope of school psychological practice in Germany, an important contribution to make evidence-based school psychological practice possible. For instance, the Department of School Psychology of the Professional Association of German Psychologists has expressed their interest in obtaining data on survey participants’ suggestions for improvement concerning ethical principles mentioned in the national statement. They plan to use this evidence as a basis to review the formulation of the ethical principles in an upcoming update of the national statement. In a similar way, policymakers could rely on data on survey participants’ self-rated knowledge to plan upcoming professional development opportunities. Moreover, by delimiting the scope of school psychological practice in Germany, the results of this study have the potential to strengthen German school psychologists’ professional identity (Brott & Myers, 1999), as well as to make it easier for service users, policymakers, and other professions to recognize the unique profile and knowledge domains of school psychologists in Germany (Montgomery & Oliver, 2007).

To conclude, this study contributes a detailed characterization of the broad and heterogeneous scope of school psychological practice in Germany, providing an evidence base for future research, political decision-making and the development of school psychologists’ professional identity. In addition, the mixed methods approach used in this study represents an innovative example of a collaborative research project that aims to bring researchers and practitioners together with the purpose of delimiting the scope of school psychological practice.

Supplemental Material

sj-pdf-1-cjs-10.1177_08295735231226195 – Supplemental material for Scope of School Psychological Practice in Germany: Part 1

Supplemental material, sj-pdf-1-cjs-10.1177_08295735231226195 for Scope of School Psychological Practice in Germany: Part 1 by Alexa von Hagen, Bettina Müller, Natalie Vannini, Nils Rublevskis, Mirijam Schaaf, Stephan Jeck, Marion Müller-Staske, Gerhard Bachmann, Anna Sedlak, Joanna Wegerer and Gerhard Büttner in Canadian Journal of School Psychology

Footnotes

Author Contributions

AVH: Conceptualization; Data curation; Formal analysis; Investigation; Methodology; Project administration; Resources; Visualization; Writing – original draft; Writing – review & editing.

BM: Conceptualization; Data curation; Formal analysis; Methodology; Writing – review & editing.

NV: Conceptualization; Formal analysis; Methodology; Writing – review & editing.

NR: Data curation; Formal analysis.

MS: Data curation; Formal analysis; Investigation.

SJ: Conceptualization; Funding acquisition; Resources.

MM: Conceptualization; Resources.

GBA: Conceptualization.

AS: Conceptualization.

JW: Conceptualization.

GBÜ: Conceptualization; Funding acquisition; Methodology; Resources; Writing – review & editing.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online on the Open Science Framework
