Abstract

Learning to read is an important milestone in children’s development. Extensive research with monolingual and bilingual children has demonstrated that language comprehension (LC) is a fundamental building block of reading comprehension (RC). However, studies comparing reading skills in children with and without diverse language experiences have reported mixed findings. Because researchers operationalize LC and RC differently, studies use different standardized tests and assessments, which may contribute to divergent findings across studies. The current review systematically examined the LC and RC tests that empirical studies have used with bilingual children who speak English as their second language. Out of an initial sample of 374 studies, 25 were eligible for inclusion. We extracted the LC and RC assessments from these studies and documented task- and administration-related factors. We also examined, for each study, participant characteristics, the definition of LC described by the authors, and findings on the relationship between LC and RC. Our results demonstrated variability in the measures and definitions used to assess and describe LC and RC, which may explain the mixed findings in the literature. We underscore the importance of considering the multidimensional nature of LC and the need to further explore how the administrative and task characteristics of LC tests relate to RC. This review also provides researchers and practitioners with an original and extensive survey of how LC and RC have been assessed among bilingual children. Lastly, we highlight limitations in the current literature and discuss practical implications for school psychology in supporting children with diverse language experiences.

Learning to read is a developmental process that, for children with both homogeneous and diverse language experiences, requires coordinating and integrating multiple component skills efficiently. Components identified as important for reading comprehension (RC) include vocabulary, listening comprehension, phonological awareness, working memory, background knowledge, inferential processing, and comprehension monitoring (Cain & Oakhill, 2006; Stanovich, 2000), and these components have been documented in theoretical models of reading. One such model is the Simple View of Reading (SVR; Gough & Tunmer, 1986; Hoover & Gough, 1990). The SVR posits that decoding (i.e., the ability to read pseudo-words and real words) and language comprehension (LC; i.e., the ability to associate meaning with speech sounds) are the fundamental building blocks of RC, and that success in RC depends on the interaction of LC and decoding. The SVR is a parsimonious framework that has received robust support from studies with both monolingual (e.g., Catts et al., 2015) and bilingual children (e.g., Bonifacci & Tobia, 2017; Cho et al., 2019).
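The interaction posited by the SVR is often written as a product, RC = D × LC, with each component expressed as a proportion between 0 (no skill) and 1 (full skill). The toy Python function below is a hypothetical helper, not a scoring procedure from any reviewed study; it only shows why a product rather than a sum matters: neither component can compensate for the complete absence of the other.

```python
def svr_reading_comprehension(decoding: float, language_comprehension: float) -> float:
    """Toy Simple View of Reading: RC = D x LC, both on an assumed 0-1 scale."""
    for label, value in (("decoding", decoding), ("language_comprehension", language_comprehension)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{label} must lie between 0 and 1, got {value}")
    # The product (not the sum) encodes the interaction: if either skill is 0, RC is 0.
    return decoding * language_comprehension


print(svr_reading_comprehension(0.9, 0.0))  # 0.0: strong decoding, no LC
print(svr_reading_comprehension(0.0, 0.9))  # 0.0: strong LC, no decoding
print(svr_reading_comprehension(0.8, 0.5))  # 0.4: similar decoding, weaker LC
```

Empirical studies estimate these relations with regression models on test scores rather than by computing the product directly, so the sketch illustrates only the logic of the model.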

For monolingual children, longitudinal studies have shown that lexical and grapheme-phoneme correspondence skills (i.e., decoding and phonological awareness) in early elementary school predicted a substantial amount of variance in RC (Storch & Whitehurst, 2002). In later grades, however, as lexical skills become established, the oral language components of LC, including vocabulary and listening comprehension, become stronger and more reliable predictors of RC (Cutting & Scarborough, 2009; Storch & Whitehurst, 2002). This pattern has also been shown in studies with bilingual learners (Geva & Farnia, 2012; Verhoeven & van Leeuwe, 2012). Although research suggests that the reading trajectory of bilingual children is similar to that of monolingual children, Melby-Lervåg and Lervåg (2014) found in their meta-analysis that, compared with age-matched monolingual peers, bilingual children show weaker RC, an even greater weakness in LC, and similar decoding skills. Moreover, language-related abilities rather than decoding-related abilities have been shown to be stronger predictors of second language (L2) RC in bilingual learners than in monolingual learners (Burgoyne et al., 2009; Lervåg & Aukrust, 2010; Lesaux et al., 2010). In line with this finding, Jeon and Yamashita (2014) reported a strong correlation (r = .77) between passage-level L2 RC and L2 LC across 14 studies with bilingual children.

Some studies have measured LC and RC with more than one test of the same construct. For example, RC as measured by the Woodcock Language Proficiency Battery-Revised (WLPB-R, which uses a cloze format) and by the Gray Oral Reading Test (GORT, which uses a multiple-choice question-and-answer format) was differentially related to decoding and vocabulary in bilingual children (Grant et al., 2012). Similarly, in a study with monolingual children, decoding accounted for more variance in RC when RC was measured with cloze tests than with question-and-answer tests (Cutting & Scarborough, 2006). Accordingly, researchers have raised the issue of measurement-construct discrepancy, because most studies use a single measure of RC. Furthermore, the relationship between the characteristics of LC assessments (i.e., which assessment is used and the types of questions it contains) and reading outcomes is less well understood in the literature. Importantly, although Hoover and Gough (1990) specify within the SVR framework that LC should be measured by the ability to answer questions about the content of a spoken narrative, previous research has examined different components of LC using one or several measures of listening comprehension, vocabulary, syntax, morphology, storytelling, inferencing, or grammar (Cho et al., 2019; Kim, 2016). It is not clear, however, whether these components should be treated as interchangeable proxies for a general LC construct, or whether they relate differently to reading outcomes.

Given the dynamic characteristics of bilingualism, it is often challenging to evaluate special education needs on the basis of bilingual children’s language and reading abilities. In the U.S., bilingual students and L2 learners are reported to be disproportionately represented (i.e., both under- and overidentified) in special education (Mancilla-Martinez et al., 2022; Yamasaki & Luk, 2018). Children receiving special education services have been clinically identified with, or are at risk for, a learning difficulty, and standardized assessments are typically used to determine such needs. Standardized assessments are designed for clinical use, with defined procedures for administration and scoring, and allow clinicians to interpret performance against norms. A child’s performance on each assessment is first recorded as a raw score (i.e., a score that has not been transformed in any way), which can then be converted into a standard score. Standard scores are interpretable because they express the child’s performance relative to norms derived from a representative sample of children of the same age or grade. For clinicians and educators, standardized assessments are useful instruments that help inform clinical judgment and practice. However, the same implications may not apply to children with diverse language experiences. Because the norms used to standardize these measures are based on monolingual samples or do not take language experience into account, the appropriateness of using standard scores with bilingual children has been contentious in both clinical and research work (Boerma & Blom, 2017; O’Connor et al., 2019).
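The raw-to-standard-score conversion described above can be sketched as follows. The sketch assumes a deviation-score metric with a mean of 100 and an SD of 15 (a common convention) and a hypothetical norm table, `NORMS`, giving the raw-score mean and SD for each age; published tests supply their own norm tables, often with non-linear conversions, so the numbers here are illustrative only.

```python
from statistics import NormalDist

# Hypothetical norms: raw-score (mean, SD) for each age in years.
# Real tests publish their own tables; these values are invented for illustration.
NORMS = {
    8: (22.0, 5.0),
    9: (26.0, 5.0),
}


def standard_score(raw: float, age: int, mean: float = 100.0, sd: float = 15.0) -> float:
    """Convert a raw score to a deviation standard score relative to the age norm."""
    norm_mean, norm_sd = NORMS[age]
    z = (raw - norm_mean) / norm_sd  # distance from the norm group in SD units
    return mean + sd * z


def percentile(score: float, mean: float = 100.0, sd: float = 15.0) -> float:
    """Percentile rank of a standard score, assuming normally distributed norms."""
    return 100 * NormalDist(mean, sd).cdf(score)


# The same raw score of 22 is average at age 8 but below average at age 9.
print(standard_score(22, age=8))  # 100.0
print(standard_score(22, age=9))  # 88.0
```

A standard score is only as meaningful as the norm group behind `NORMS`: if that group is monolingual, a bilingual child’s standard score expresses distance from monolingual peers, which is the source of the concern raised above.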

The objective of the current review was to examine the variability in how LC is defined and measured in research involving bilingual children, and whether the choice of LC and RC tests plays a role in findings on the components of the SVR. Given the varied definitions and measures of LC, we operationally defined and examined LC as the ability to associate meaning with speech sounds at the word, sentence, or passage level. We asked the following research questions: (1) How is LC operationalized in research involving bilingual children with English as their L2? (2) What measures were used to assess RC and LC? (3) What scores have been used in statistical analyses to interpret RC and LC? (4) What role does LC play in RC among bilingual readers who speak English as an L2? Finally, we aimed to clarify the construct-measurement relationship to help connect research to real-life educational challenges, such as clinical decisions regarding language- or reading-related disabilities in bilingual children.

Method

The current review systematically gathered and synthesized peer-reviewed articles on L2 RC and LC in school-aged children, targeting the assessments used to measure L2 LC and RC. Although we acknowledge the literature comparing monolingual and bilingual children (e.g., Babayigit, 2014; Taboada Barber et al., 2020), this review does not aim to document performance differences. Instead, we focus on how LC and RC were conceptualized and measured. Our search was conducted in two electronic databases in education and psychology: PsycINFO and the Education Resources Information Center (ERIC). The review followed the PRISMA guidelines (Moher et al., 2015).

Eligibility Criteria

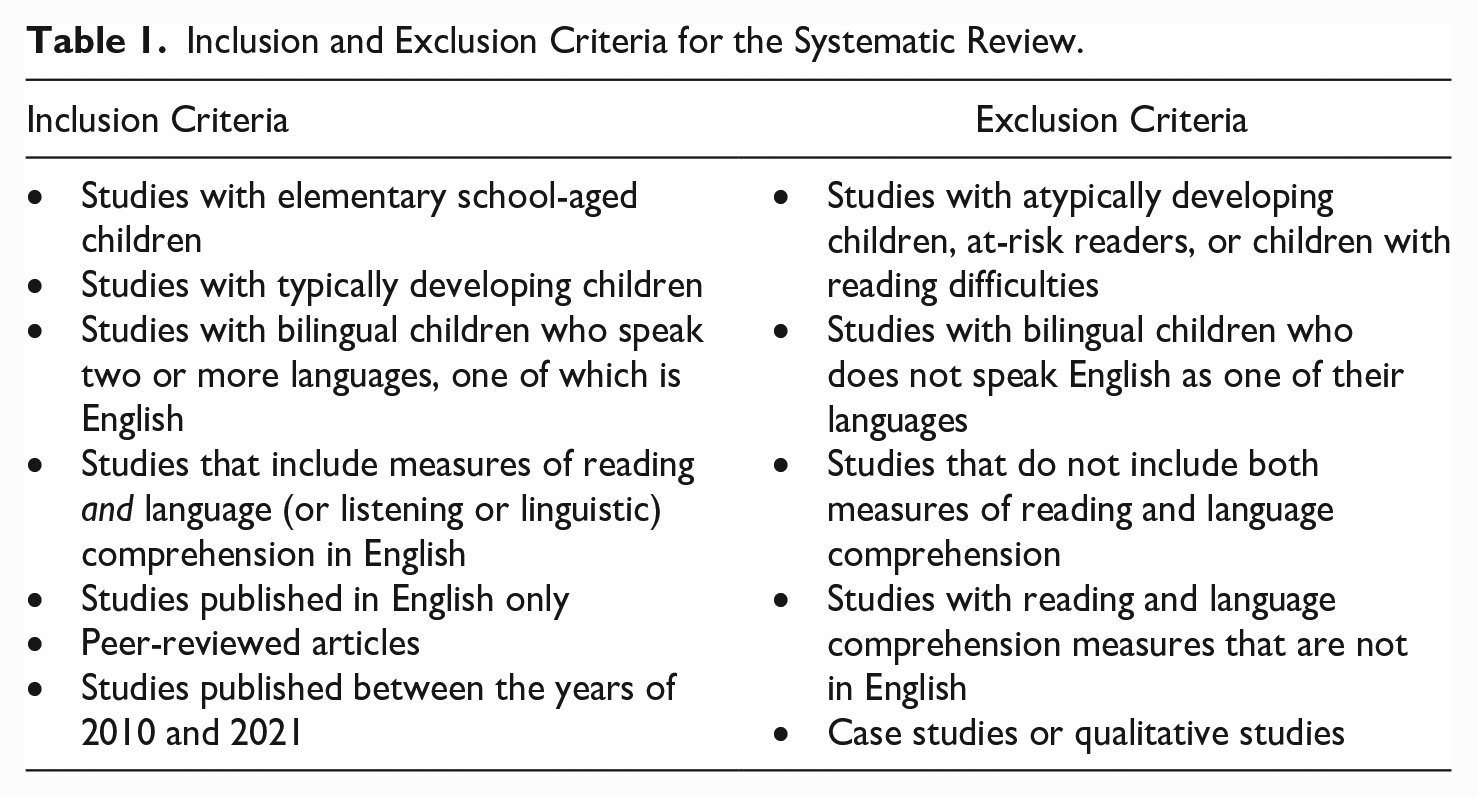

Peer-reviewed articles published in English were considered for inclusion if they included measures of L2 “reading” comprehension and of L2 “language,” “listening,” or “linguistic” comprehension, to ensure that each study covered the components of the SVR. In addition, participants had to be elementary school-aged, typically developing, and speak two or more languages, one of which was English. The focus on English-speaking bilinguals was necessary to provide a review of English assessments; we discuss the exclusion of studies on languages other than English as a limitation. We limited the review to studies published from 2010 to 2021 to ensure that the tasks reviewed are recent enough to inform future research. See Table 1 for detailed inclusion and exclusion criteria.

Table 1. Inclusion and Exclusion Criteria for the Systematic Review.

Search Strategy

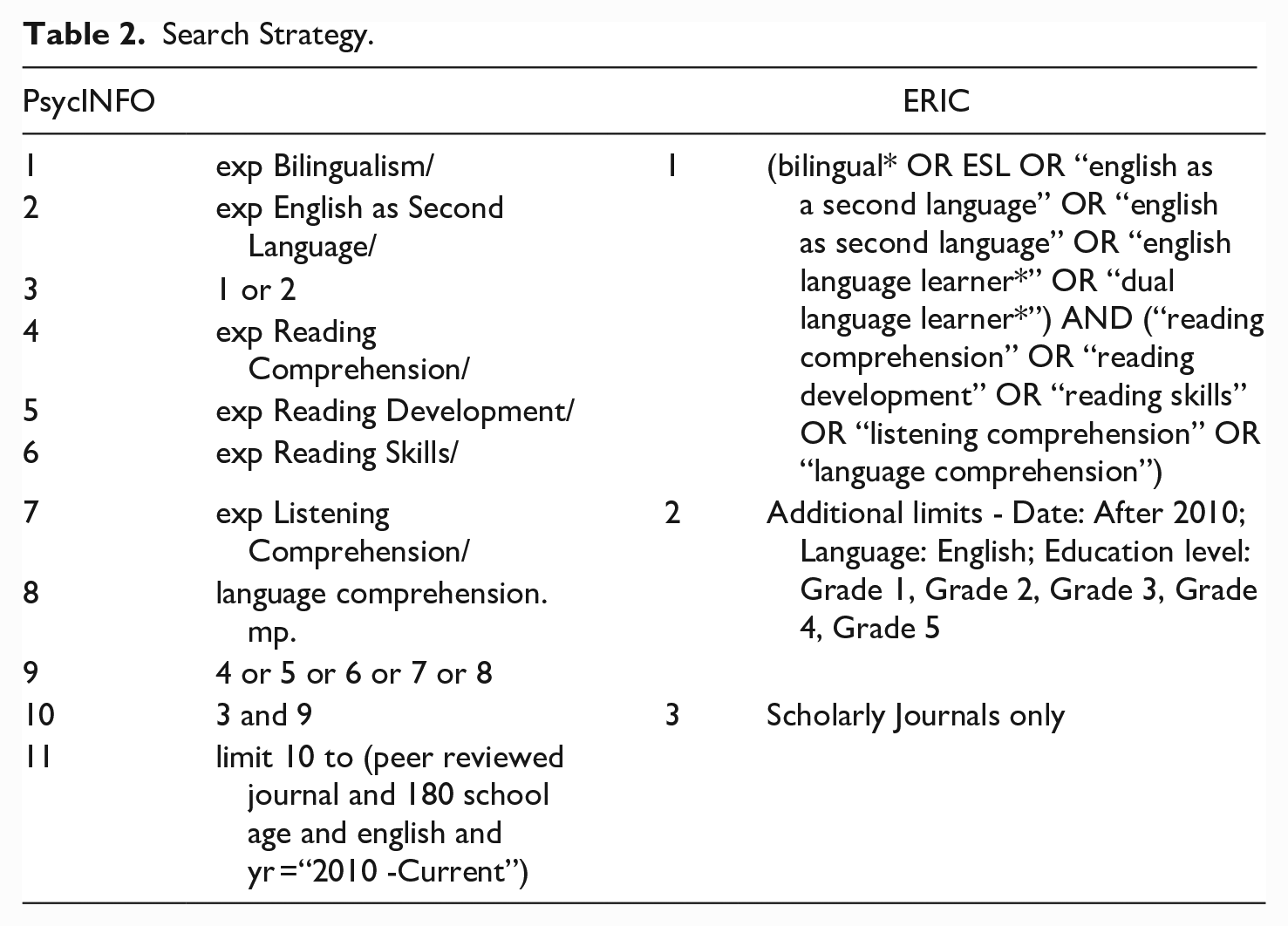

A comprehensive search strategy and set of keywords were developed by the first author in collaboration with the librarian at the Humanities and Social Sciences Library at McGill University, based on previous research on bilingualism and the SVR (see Table 2). The search was limited to English-language, peer-reviewed empirical studies of elementary school-aged children published from 2010 to 2021. The final search was conducted on June 22, 2021.

Table 2. Search Strategy.

Study Selection

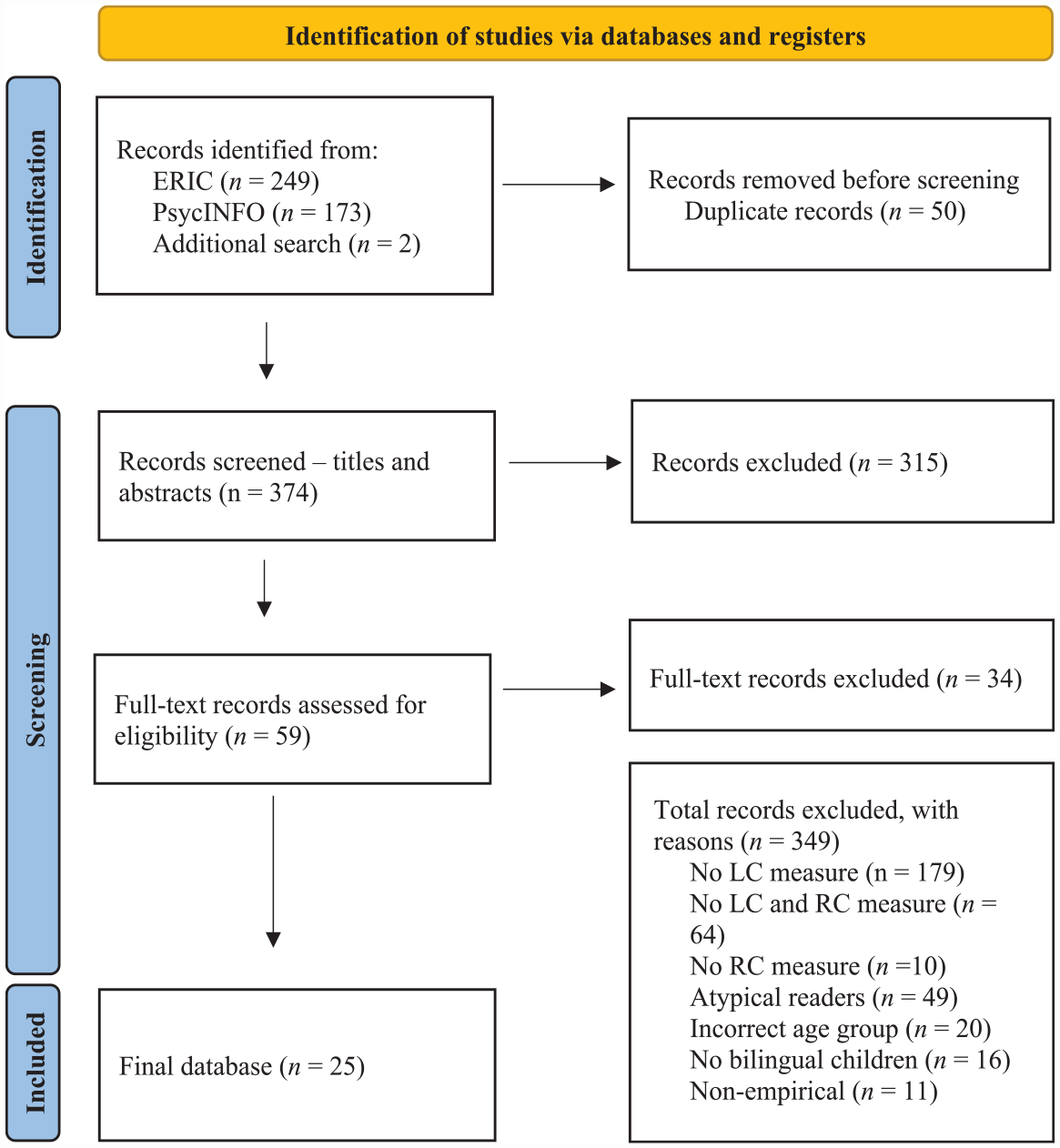

The electronic search of the two databases yielded 422 articles, and two additional studies were found through a manual search by cross-checking references (see Figure 1 for the PRISMA flow diagram). Fifty duplicates were removed. The remaining 374 records were imported into Rayyan, a web application for systematic reviews, to screen for eligible studies and establish the reliability of inclusion and exclusion decisions. The first and second authors coded the articles independently and blindly, with an inter-rater reliability of κ = .86. Disagreements were resolved through discussion with reference to the articles and the inclusion and exclusion criteria. A total of 25 articles were included in the review.
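For readers less familiar with the reliability statistic, Cohen’s kappa corrects the raw percentage of agreement between two screeners for the agreement expected by chance. The sketch below assumes two raters making one categorical decision (e.g., include or exclude) per record; the decisions are invented and do not reproduce the review’s screening data.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Cohen's kappa for two raters who assign one categorical label to each item."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must code the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items that received the same label from both raters.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: for each label, product of the two raters' marginal proportions.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = set(counts_a) | set(counts_b)
    p_chance = sum((counts_a[label] / n) * (counts_b[label] / n) for label in labels)
    if p_chance == 1.0:
        raise ValueError("Kappa is undefined when both raters use a single identical label")
    return (p_observed - p_chance) / (1 - p_chance)


# Hypothetical screening decisions for 10 records (not the review's data).
a = ["include"] * 3 + ["exclude"] * 7
b = ["include"] * 2 + ["exclude"] * 8
print(round(cohens_kappa(a, b), 2))  # 0.74, although raw agreement is 90%
```

Because most screened records are usually excluded by both raters, chance agreement is high, which is why kappa is reported instead of raw percentage agreement.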

Figure 1. PRISMA flow diagram describing the procedure for searching and filtering articles.

Data Collection

All measures of LC and RC were recorded, and information about each measure was collected both directly from the articles and from resources made available by test publishers. For each LC measure, the first author extracted the following information: whether the passage was read by the examiner or presented as a recording, the types of questions (i.e., multiple choice, cloze, open-ended), the subtypes of questions (i.e., factual, inferential), other relevant information, and the number of studies that used the assessment. Similarly, for each RC assessment, we recorded whether the child read the passage aloud or silently, whether the child could refer to the passage when answering questions, whether there was a time limit, the types of questions, other relevant information, and the number of studies that used the assessment.
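The coding scheme for RC measures can be represented as one record per measure. The Python sketch below is a hypothetical layout of such a coding sheet, not the authors’ actual extraction form, and its field names are illustrative; using `None` for unavailable information makes it straightforward to report counts such as “this information was not available for two measures” alongside the coded categories.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class RCMeasureRecord:
    """One row of a hypothetical coding sheet for an RC measure.

    None means the information was not available from the article or the publisher.
    """
    name: str
    passage_reading: Optional[str]        # "aloud", "silent", or "either"
    can_refer_to_passage: Optional[bool]  # may the child look back at the passage?
    timed: Optional[bool]                 # does the task have a time limit?
    question_type: Optional[str]          # e.g., "multiple choice", "open-ended", "cloze"
    n_studies: int = 1                    # number of reviewed studies using the measure


def tally(records: list[RCMeasureRecord], field: str) -> Counter:
    """Count the coded values of one field, keeping missing information visible."""
    counts: Counter = Counter()
    for record in records:
        value = getattr(record, field)
        counts["not available" if value is None else value] += 1
    return counts


# Placeholder measures, not the instruments identified in the review.
records = [
    RCMeasureRecord("Measure A", "silent", True, True, "multiple choice", n_studies=3),
    RCMeasureRecord("Measure B", "aloud", True, False, "open-ended"),
    RCMeasureRecord("Measure C", None, None, None, None),
]
print(tally(records, "question_type"))
print(tally(records, "timed"))
```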

Additionally, the following information was extracted from the articles: (a) author and publication year, (b) study site country, (c) participant characteristics, (d) the definition of LC used by the authors and the measures assessing LC, (e) measures assessing RC, (f) scores used for analysis, and (g) relevant findings, including group differences, correlations, and results related to the relationship between LC and RC. For participant characteristics, participants’ first language (L1) and L2, grade and age, and language experiences at home and at school were documented to better understand the degree of bilingualism in each sample. Finally, given the significant role that socioeconomic status (SES) plays in childhood bilingualism (Calvo & Bialystok, 2014) and in the development of L2 RC (Kieffer, 2008; Kieffer & Vukovic, 2012), information about SES was recorded for all studies where available.

Results

Study and Sample Characteristics

A summary of the main characteristics and relevant findings of the included studies is provided in Table S1 of the Supplemental Materials (https://osf.io/x82r3/). More than half of the studies (56%, k = 14) were conducted in the U.S., all with participants whose L1 was Spanish. Four studies from Canada included participants with various L1s, including Punjabi, Portuguese, Tamil, Urdu, Hindi, Gujarati, Mandarin, and Cantonese. Two studies from the U.K. included participants with various L1s (e.g., Somali, Urdu, Punjabi, Gujarati). In addition, there was one study each from Australia (L1 = Persian), India (L1 = Malayalam, Kannada, or Telugu), and South Korea (L1 = Korean). Two studies had participants with English as either the L1 or the L2: one from Singapore (L1 = English, Chinese, or Malay; L2 = English or Chinese) and the other from Canada (L1 = English/French; L2 = English/French).

There were 12 longitudinal studies (48%), with participants ranging from kindergarten to Grade 8. Other study designs included a cross-sectional study with Grades 2, 3, and 4 (k = 1 for each grade) and a correlational study with participants in Grades 1, 2, and 3 (k = 1 for each grade). Further, three studies had participants in Grade 4, three additional studies had participants in Grade 5, one study had participants in Grades 4 and 5, and one study did not specify the grade (mean age was reported as 12;7, i.e., 12 years 7 months). Sample sizes ranged from 75 to 1,376 participants (total N = 7,671); two studies had fewer than 100 participants, eight studies had 100 to 200 participants, and 15 studies had more than 200 participants.

Most studies (64%, k = 16) examined bilingual participants only, while nine studies adopted a group comparison approach and treated bilingualism as a categorical variable, for example, English as a first language speakers (EL1) versus English language learners (ELL). Eight of the group-comparison studies showed that the EL1 group had higher RC scores than the ELL group; only one study (Burgoyne et al., 2011) found equivalent RC scores between groups. The EL1 group had higher LC scores than the ELL group in six studies, and one study found comparable LC scores between groups. Twenty-two studies (88%) and 21 studies (84%) reported information about home and school language experiences, respectively; all studies except one identified English as the main language of school instruction. Most studies included participants from low (k = 16) or low-mid (k = 4) SES backgrounds, as indexed by free or reduced-price lunch status in the U.S. or by parent education level. Furthermore, only four studies considered or controlled for variables important in characterizing bilingualism, such as the age of L2 acquisition, home language, SES, and initial L2 decoding skills.

Operational Definitions of Language Comprehension

Language comprehension has been defined and described in various ways. The most frequently used descriptor of LC was oral language proficiency/composite/skills (k = 9), comprising two or more components of LC, including listening comprehension, vocabulary, story retell, inferencing, grammar, syntax, phonological awareness, or memory for sentences. Other descriptors included listening comprehension (k = 8), linguistic comprehension (a combination of listening comprehension and vocabulary; k = 2), vocabulary, syntax, and listening comprehension as three aspects of LC (k = 2), semantic knowledge and listening comprehension (k = 1), receptive vocabulary (k = 1), and vocabulary and verbal working memory (k = 1). Beyond these descriptors, several studies offered notable explanations of LC. For instance, O’Connor et al. (2019) indicated that their study assessed listening comprehension as an additional measure of LC to provide a more “nuanced assessment of the language skills” (p. 235) involved in RC. Ji and Baek (2019) described decoding, phonological awareness, and orthographic processing skills as a “micro-level component,” and listening comprehension and vocabulary knowledge as “macro-level components” (p. 392). Similarly, Relyea and Amendum (2020) described LC at two levels: lower-level (e.g., vocabulary, syntax) and higher-level (e.g., inferencing, comprehension monitoring) language skills. Finally, Taboada Barber et al. (2021) explained that listening comprehension is typically operationalized as linguistic comprehension, which refers to the ability to take lexical information and derive sentence- and discourse-level meaning.

With regard to the measures chosen to assess LC, 23 of the 25 studies included a measure of listening comprehension; the two that did not assessed vocabulary and verbal working memory (Mancilla-Martinez & Lesaux, 2017) or vocabulary only (Zhang & Ke, 2020). Furthermore, 14 studies examined LC using a composite score (e.g., combining vocabulary and listening comprehension scores) and did not examine the unique contribution of each LC component to reading. Six of these 14 studies combined listening comprehension with a measure of vocabulary, and the others combined it with story retell (k = 1), memory for sentences (k = 1), vocabulary and syntax (k = 2), vocabulary and story retell (k = 2), vocabulary and grammar (k = 1), or vocabulary, inferencing skills, grammar, and phonological awareness (k = 1). Seven studies used a single listening comprehension measure to assess LC, and two of these were intervention studies that examined LC as the outcome variable. Lastly, two studies examined listening comprehension both within a composite score and as a separate variable. More specifically, Kieffer (2012) combined listening comprehension, vocabulary, and story retell into a composite score but also examined how each variable contributed to RC. Davison et al. (2011) made clear that they sought to examine vocabulary and listening comprehension individually rather than grouping them into one variable.
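The reviewed articles do not always report how their LC composites were built. One common approach, assumed here, is to standardize each component within the sample and average the z-scores, so that components measured on different scales contribute equally. The component names and scores below are hypothetical.

```python
from statistics import mean, pstdev


def zscores(values: list[float]) -> list[float]:
    """Standardize scores within the sample (population SD)."""
    m, s = mean(values), pstdev(values)
    if s == 0:
        raise ValueError("Cannot standardize a component with no variance")
    return [(v - m) / s for v in values]


def lc_composite(components: dict[str, list[float]]) -> list[float]:
    """Unit-weighted LC composite: each child's mean z-score across components."""
    standardized = [zscores(scores) for scores in components.values()]
    return [mean(child_scores) for child_scores in zip(*standardized)]


# Hypothetical raw scores for four children on two LC components measured on different scales.
components = {
    "listening_comprehension": [10, 12, 14, 16],
    "vocabulary": [40, 60, 50, 70],
}
composite = lc_composite(components)
print([round(score, 2) for score in composite])
```

The second and third children have opposite profiles (one relatively stronger in vocabulary, the other in listening comprehension) yet both receive a composite of about zero, which is why a composite alone cannot show which component relates to RC.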

Measures of Listening Comprehension

There were 18 unique measures of listening comprehension across 23 studies. An overview of these measures can be found in Table S2 of the Supplemental Materials (https://osf.io/x82r3/). Fifteen measures were standardized assessments, and two were developed by the researchers themselves. By question type, seven measures had open-ended questions, five had multiple-choice questions, three had cloze questions, and one had both open-ended and multiple-choice questions; this information was not available for two measures. In terms of question subtypes, slightly more than half of the assessments (n = 10) included both factual and inferential questions, one had factual questions only, and three had inferential questions only; this information was not available for four measures. Factual questions can be answered from facts stated explicitly in the text, whereas inferential questions require analyzing and interpreting specific parts of the text. Regarding administration, four studies played audio recordings, whereas 19 studies had the examiner read the story aloud to participants.

Twenty studies (87%) included one measure of listening comprehension, two studies included two measures, and one study (Mesa & Yeomans-Maldonado, 2021) included three: the Clinical Evaluation of Language Fundamentals (CELF), Fourth Edition; the Qualitative Reading Inventory, Fifth Edition; and a measure of inferencing skills. Six studies used different editions of the CELF: the third U.S. edition (k = 2), the fourth U.K. edition (k = 2), and the fourth U.S. edition (k = 2). Moreover, three studies from Canada and one study from India used the Durrell Analysis of Reading Difficulty (DARD). The Woodcock–Johnson Tests of Achievement, Third Edition (WJ III) was used in three studies, and the Woodcock–Johnson IV Oral Language (WJ IV OL) and the WLPB-R were each used in two studies.

Measures of Reading Comprehension

All included studies had a measure of RC. An overview of these measures can be found in Table S3 of the Supplemental Materials. Most studies (72%, k = 18) had one RC measure, and 28% (k = 7) had two or three measures. There was a total of 23 unique RC assessments; 19 were standardized assessments, two were developed by researchers, and one was an amalgamation of different standardized assessments. Administrative information about passage reading was available for 17 measures; the most common method was having the child read the passage aloud (n = 7), followed by reading silently (n = 4) and allowing either reading aloud or silently (n = 4). Furthermore, 61% of the measures (n = 14) allowed the child to refer to the passage when answering questions, while one measure removed the passage once the child finished reading. The majority of the measures (n = 14) did not have a time limit, and this information was not available for seven measures. Two measures had a time limit of 35 min: the second and fourth editions of the Gates-MacGinitie Reading Tests (GMRT).

By question type, 12 of the 23 measures had multiple-choice questions, four had open-ended questions, three had cloze questions, and two had both multiple-choice and open-ended questions; this information was not available for two measures. Furthermore, eight studies administered the RC task in a group setting, while 17 studies administered it individually. Of the 25 included studies, eight used different editions of the GMRT (second edition, k = 3; fourth edition, k = 5), four used the WLPB-R, and two studies each used the following three measures: the Woodcock–Johnson IV Tests of Achievement (WJ-IV ACH), the DARD, and the Neale Analysis of Reading Ability, Second Revised British Edition (NARA-II).

Use of Standard Scores

When reporting RC and LC scores, seven studies used both raw and standard scores, another seven used raw scores only, and four used standard scores only; seven studies did not specify which scores they used in their analyses. Notably, three studies provided a rationale for using a specific type of score. For example, Babayigit (2014) conducted analyses with both raw and standard scores because of the concern that standard scores might be inappropriate for children from diverse language and cultural backgrounds. Farnia and Geva (2013) used raw scores “to eliminate the possible effect of task standardization that did not include ELL learners” (p. 415), and O’Connor et al. (2019) used raw scores because “the norms used to standardize the measures in this study are based on EL1 samples” (p. 237). Beyond stating why raw or standard scores were used, justifying the choice of assessment is important for understanding the skills and components needed to complete each task. Although some studies described their measures in more detail, most did not justify the appropriateness of the chosen measure.

Relationship Between RC and LC in the SVR Framework

Fifteen studies (60%) reported the correlation between measures of RC and LC. Correlation coefficients ranged from r = .19 to .69, with an average of r = .49. Moreover, 15 studies reported LC to be a significant predictor of RC, while five studies did not find this relationship, and four studies did not explore the association between LC and RC. In addition to LC, studies identified other variables that play a key role in RC, including decoding (k = 12), reading fluency (k = 4), verbal working memory (k = 3), and executive functions (k = 1). Lastly, most studies (k = 19) supported the SVR framework, demonstrating significant relationships of RC with LC (assessed as a single component or several components) and with decoding. Conversely, three studies did not support the SVR, and two did not examine it.
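The review does not state how the average correlation was computed. A common alternative to a simple mean in research synthesis is to average correlations on Fisher’s z scale, weighting each study by n − 3, and then back-transform. The sketch below uses hypothetical correlations and sample sizes (only the .19 and .69 endpoints come from the review) and also converts r to shared variance (r²).

```python
import math


def fisher_z_mean(correlations: list[float], sample_sizes: list[int]) -> float:
    """Average correlations on Fisher's z scale, weighting each study by n - 3."""
    if len(correlations) != len(sample_sizes) or not correlations:
        raise ValueError("Need one sample size per correlation")
    weights = [n - 3 for n in sample_sizes]           # inverse of the sampling variance of z
    z_values = [math.atanh(r) for r in correlations]  # Fisher's r-to-z transformation
    z_bar = sum(w * z for w, z in zip(weights, z_values)) / sum(weights)
    return math.tanh(z_bar)                           # back-transform to the r metric


# Hypothetical study-level results; only the .19 and .69 endpoints come from the review.
rs = [0.19, 0.45, 0.55, 0.69]
ns = [120, 300, 200, 150]

print(f"simple mean r       = {sum(rs) / len(rs):.2f}")
print(f"Fisher z weighted r = {fisher_z_mean(rs, ns):.2f}")
for r in (min(rs), max(rs)):
    # r squared: proportion of RC variance shared with LC in a single study
    print(f"r = {r:.2f} -> shared variance r^2 = {r * r:.3f}")
```

In shared-variance terms, the reported range spans from under 4% (r = .19) to about 48% (r = .69) of RC variance, and the r = .77 reported by Jeon and Yamashita (2014) corresponds to roughly 59%.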

Discussion

The current systematic review examined the variety of assessments used to capture LC and RC in studies with elementary school-aged children speaking English as their L2. After a comprehensive search of the literature published between 2010 and 2021, 25 articles were included. The goal was to investigate how researchers have operationally defined LC and to thoroughly document and compare measures of L2 language and reading comprehension. Our results demonstrated that the definition of LC was inconsistent across studies and that a wide range of assessments, with different question types and administration rules, have been used to measure LC and RC. Lastly, we found a moderate correlation between LC and RC, and most studies supported the SVR. We consider these findings in turn.

Language Comprehension as a Construct

The wide variability in the definition and measurement of LC across studies reveals the complex and multidimensional nature of LC. Importantly, it raises concerns about measurement-construct alignment for a core component of a prevalent model in reading research (i.e., the SVR). Indeed, while 92% of the included studies had a measure of listening comprehension, there was less consistency in the other components that studies included in their LC composite scores. Specifically, 10 studies examined vocabulary in addition to listening comprehension, but other studies also examined story retell, memory for sentences, syntax, grammar, inferencing skills, and phonological awareness as components of LC.

In addition to measure-construct misalignment, another issue is that different combinations of LC components have been examined as a single composite score. More than half of the included studies examined LC using a composite score, and 13 of these studies demonstrated a significant relationship between LC and RC. In contrast, two studies examined LC as a composite score but also examined the independent contribution of each LC component to RC. For instance, Kieffer and Vukovic (2012) used a composite score from the English Language Proficiency Assessment for Early Learners (preLAS), which includes measures of listening comprehension, vocabulary, and story retell. Early LC significantly predicted later RC in Grades 3, 5, and 8; however, when the components of the composite were examined individually, only vocabulary, not listening comprehension or story retell, predicted RC. This “lump-sum” method of examining LC as a single construct therefore poses a methodological challenge, because it is not clear whether the components of LC predict RC uniquely or only as a composite. This may be more problematic in longitudinal studies, given the developmental shift in the contribution of LC to RC outcomes between the early and late school-age years (Farnia & Geva, 2013; Mancilla-Martinez & Lesaux, 2017).

Assessments of Language and Reading Comprehension

Our review demonstrated that a variety of tasks have been used to assess LC and RC. Across the 25 included articles, there were more unique RC assessments (n = 23) than LC assessments (n = 18), and 34 of the 41 measures were standardized assessments. Past research has demonstrated that different question types in RC measures may require different skills. For example, RC measures using cloze procedures, such as the Woodcock–Johnson passage comprehension measure, rely more heavily on word recognition processes, including decoding, than multiple-choice and open-ended measures do (Keenan et al., 2008). Among the RC measures in our review, 12 of 23 had multiple-choice questions, four had open-ended questions, three had cloze questions, and two had both multiple-choice and open-ended questions. Across the 18 LC assessments, open-ended questions (n = 7) were the most frequent, followed by multiple choice (n = 5), cloze (n = 3), and both open-ended and multiple-choice questions (n = 1). Given the multidimensional nature of LC, it would be prudent to investigate how different question types tap into the different skills required for successful LC.

It is important to note that several studies identified variables that contributed significantly to RC beyond the components of the SVR (i.e., decoding and LC), such as reading fluency, inferencing skills, and cognitive skills (i.e., working memory and executive functions). This is consistent with previous findings demonstrating the critical role of cognitive skills in RC. For example, a longitudinal study with Spanish-English bilingual children in Grades 1–3 showed that growth in working memory uniquely predicted growth in L2 literacy and RC (Swanson et al., 2015). Research has also demonstrated associations of L2 literacy acquisition with working memory (Bialystok et al., 2005a) and attention (Luk & Bialystok, 2008) among bilingual children. Overall, these studies and the results of our review highlight the critical role of cognitive component skills in bilingual children’s RC and suggest that assessing these skills within the SVR framework may provide a better understanding of the developmental trajectory of L2 RC.

Consideration of Heterogeneity of Bilingualism

Recent bilingual research has underscored the shift from viewing children as English L2 learners to viewing them as bilinguals (Relyea & Amendum, 2020), as well as the need for a multifactorial approach that examines individual differences in bilingual experiences (e.g., Takahesu Tabori et al., 2018). In our review, although the information reported on participants’ language experiences varied across studies, most researchers evidently considered it important to report domains of bilingualism that are crucial in child development (Byers-Heinlein et al., 2019), namely home language and language of schooling: 88% of studies reported home language experiences and 84% reported school language backgrounds. However, few studies included other important bilingualism variables in their analyses, such as the age of L2 acquisition, home language, SES, or initial L2 decoding skills. Similarly, although most studies did not include SES in their statistical analyses, 20 of the 25 studies reported participants’ SES. Very few studies, however, reported the sociolinguistic context from which the sample was drawn. Such information would have provided a more comprehensive picture of children’s bilingualism in each sample and allowed better comparison of results. Indeed, previous research has emphasized the need to consider sociolinguistic factors relevant to bilingual experience (Surrain & Luk, 2019; Whitford & Luk, 2019).

The Simple View of Reading in Bilingual Children

Based on our examination of recent L2 reading research with bilingual children, our findings support the SVR framework. The included studies consistently demonstrated the crucial role of LC in RC. Indeed, 60% of studies reported significant correlations between LC and RC, and 15 out of 25 studies confirmed that LC explained a substantial amount of variance in RC. Of note are findings from longitudinal studies showing that decoding and word-based LC skills are more likely to contribute to RC in the early school years. In contrast, language-based LC skills contribute to RC in the later elementary grades, once word-level reading skills become more established (Geva & Farnia, 2012; Mancilla-Martinez & Lesaux, 2017; Mesa & Yeomans-Maldonado, 2021). Given these developmental differences, it would be important for future research to use LC measures that tap into both word-based and language-based LC skills, so that their unique and combined influences on RC can be examined.

Limitations and Directions for Future Research

This review adds to the current literature on how variations in measures of LC relate to RC in children with diverse language experiences. However, it has several limitations. First, we did not fully evaluate the characteristics of the assessments because of limited resources and the limited information available from the studies. Evaluating and comparing assessment quality would have been informative, as studies used many different assessments, some of which are more established and have better psychometric properties than others. However, the purpose of this review was not to evaluate or critique the quality of the assessments. Rather, it was to document the variety of assessments used in studies involving bilingual children. Second, this review did not systematically assess the methodological quality of the included studies. We examined quality indicators such as sample size, study design, and attrition descriptively. Systematically coding study quality with a tool such as the Strengthening the Reporting of Observational Studies in Epidemiology statement (STROBE; Vandenbroucke et al., 2014) could have offered further explanations for the heterogeneity in findings across studies. Finally, our review only included bilingual children who speak English and another language. This limits the generalizability of the findings to studies using non-English assessments with bilingual children who do not speak English.

To better understand the processes needed to support RC in bilingual children, future research could explore how task-related factors and administration-related factors bear on bilingual children’s LC and RC. Task-related factors include the type of questions. Administration-related factors include whether questions and/or passages are presented orally and whether children can refer back to the passage. The impact of question type could be examined, for example, by comparing performance on questions that demand literal, semantic, or inferential understanding. Inferencing questions could be further broken down into those requiring background knowledge, context, or prediction. Lastly, performance could be compared across passage types (e.g., narrative, informational, expository). Indeed, the original paper outlining the SVR asserts that parallel materials must be used when assessing LC and RC in order to adequately test the SVR (Hoover & Gough, 1990). That is, if narrative material is used to assess LC, then narrative rather than expository material must also be used to assess RC.

Conclusion

The present review documented the measures that empirical studies have used to assess L2 LC and RC in school-aged bilingual children, as well as the relationships examined between LC and RC in this population. A comprehensive examination of 25 studies revealed that three methodological challenges limit our understanding of the role of LC in RC among bilingual children. First, definitions of LC vary across studies, making cross-study comparisons difficult. Second, studies measuring LC and RC use a wide range of measures with diverse characteristics (e.g., type of questions, number of passages and questions, administration rules). Third, the components of LC have been operationalized inconsistently and are often combined into a single composite score. This makes it difficult to examine the unique contribution of each LC component to RC. To address these limitations, future research should investigate which constructs best predict RC in bilingual learners. It should also refine the operational approaches and methods used to assess the LC components with the most predictive power.

Relevance to the Practice of School Psychology

The current review sought a better understanding of how existing measures capture reading development in bilingual children, in order to shed light on the sources of success or difficulty in reading. In line with previous research (Melby-Lervåg & Lervåg, 2014), our results showed strong relationships between LC and RC. They also revealed nuances in how LC is defined and measured, and in how different components of LC relate differentially to RC. These findings have several implications for clinicians and educators in school psychology who assess bilingual children with reading difficulties. First, beyond acknowledging the important role of LC in RC, clinicians and educators should consider and assess the multiple dimensions of LC when trying to understand bilingual children’s reading skills. Second, the chosen assessment varies along several dimensions: administration (e.g., read aloud vs. silently, timed vs. untimed), task characteristics (e.g., content of reading, narrative vs. expository passages), and question types (e.g., cloze, multiple choice). Examining and comparing the child’s performance along these dimensions may provide a clearer picture of the child’s strengths and weaknesses in reading. Lastly, because bilingualism is dynamic, assessors should consider important bilingualism variables. These include language history and background, home and community language experience, and L1 proficiency. They also include sociolinguistic factors and other cultural and social factors that may influence the child’s development, such as level of acculturation. In sum, when assessing a bilingual child, assessors should:
- understand the characteristics and normative samples of their assessments;
- make informed choices about which assessments to use and which areas to assess;
- consider the child’s language background and experience;
- use clinical judgment when interpreting scores.
Having a fuller picture of each child’s reading profile will help inform reading supports and strategies that are more individualized and appropriate for children with diverse language backgrounds.

Supplemental Material

sj-docx-1-cjs-10.1177_08295735231183608 – Supplemental material for Assessments of English Reading and Language Comprehension in Bilingual Children: A Systematic Review 2010 to 2021

Supplemental material, sj-docx-1-cjs-10.1177_08295735231183608 for Assessments of English Reading and Language Comprehension in Bilingual Children: A Systematic Review 2010 to 2021 by Julie H. J. Oh, Badriah Basma, Armando Bertone and Gigi Luk in Canadian Journal of School Psychology

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by a Doctoral Research Scholarship from the Fonds de Recherche du Québec – Société et Culture (FRQSC) to the first author and a Discovery Grant from the Natural Sciences and Engineering Research Council of Canada (NSERC; RGPIN-2020-05052) to the last author.

References
