Abstract

Generative AI (GenAI) is breaking new ground in emulating human capabilities, and content generation may only be the beginning. In this work, the authors systematize and illustrate promising areas of application of GenAI in marketing. They lay out a conceptual framework along two dimensions: (1) GenAI impact (i.e., human enhancement, human replacement) and (2) the marketing cycle stage (i.e., marketing research, marketing strategy formulation, marketing actions related to the marketing mix instruments). Based on the AI ethics literature, the authors then introduce a set of principles (i.e., ASSURANCE:

Keywords

Automation cannot and never will take the place of intelligence, good layout, well trained personnel, effective advertising, carefully planned promotions, sound policies, and satisfying customers’ needs. Rather, automation can be a force working in conjunction with these factors, to move the goods from the producer to the consumer in the most efficient manner. (Goeldner 1962, p. 56)

Six decades after Goeldner’s (1962) remarks, and after the launch of ChatGPT, the most prominent example of generative artificial intelligence (GenAI), scholars are debating the extent to which GenAI can be considered intelligent and can enhance, imitate, and even replace humans. At the same time, companies are increasingly integrating GenAI systems and applications into various marketing functions and tasks such as marketing communication content creation (e.g., Adobe Sensei GenAI, Jasper AI, Salesforce's Einstein), customer service (e.g., Salesforce's Einstein), dynamic pricing (e.g., Uber's Michelangelo), and marketing research and analytics (e.g., Adobe Sensei GenAI; MonkeyLearn). For instance, the Swedish fintech company Klarna has built over 300 GPTs (generative pretrained transformers), or custom versions of OpenAI's ChatGPT designed for specific use cases, for internal use and was thus able to cut marketing and sales costs by $10 million on an annualized basis through GenAI use for image production and other marketing tasks (Klarna 2024).

GenAI shifts the focus from AI-driven prediction to new content creation (Hermann and Puntoni 2024), which suggests that “a broader human augmentation (and possibly replacement) revolution is on the horizon” (Grewal, Guha, and Becker 2024, p. 870). Although the marketing discipline has undergone a variety of institutional and technological changes over the last six decades, the introductory quotation shows remarkable parallels to today's discussions, challenges, and controversies including economic, legal, social, and particularly ethical concerns (Peres et al. 2023). Addressing these challenges and concerns requires collaboration and coordination across marketers, policy makers, and governments (Grewal, Guha, and Becker 2024).

Against this backdrop, we first systematize and illustrate the benefits, opportunities, and areas of applications of GenAI in marketing along two dimensions: (1) GenAI impact (i.e., human enhancement, human replacement) and (2) the marketing cycle stage (i.e., marketing research, marketing strategy, and marketing actions). Second, we discuss how GenAI can be designed and deployed in marketing practice in an ethical and socially beneficial way by introducing the ASSURANCE principles:

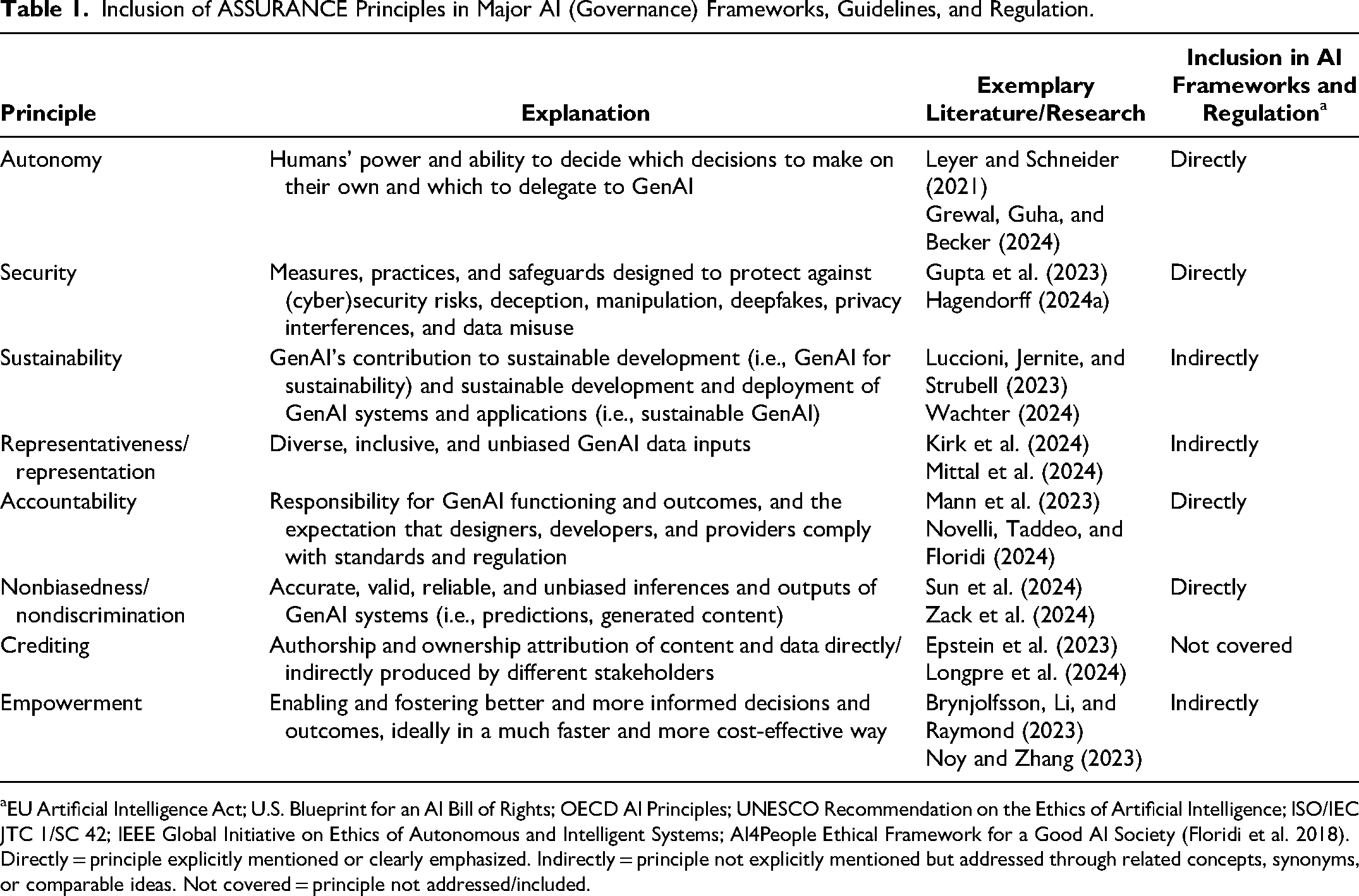

Table 1. Inclusion of ASSURANCE Principles in Major AI (Governance) Frameworks, Guidelines, and Regulation.

EU Artificial Intelligence Act; U.S. Blueprint for an AI Bill of Rights; OECD AI Principles; UNESCO Recommendation on the Ethics of Artificial Intelligence; ISO/IEC JTC 1/SC 42; IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems; AI4People Ethical Framework for a Good AI Society (Floridi et al. 2018). Directly = principle explicitly mentioned or clearly emphasized. Indirectly = principle not explicitly mentioned but addressed through related concepts, synonyms, or comparable ideas. Not covered = principle not addressed/included.

GenAI Impact Along the Marketing Cycle

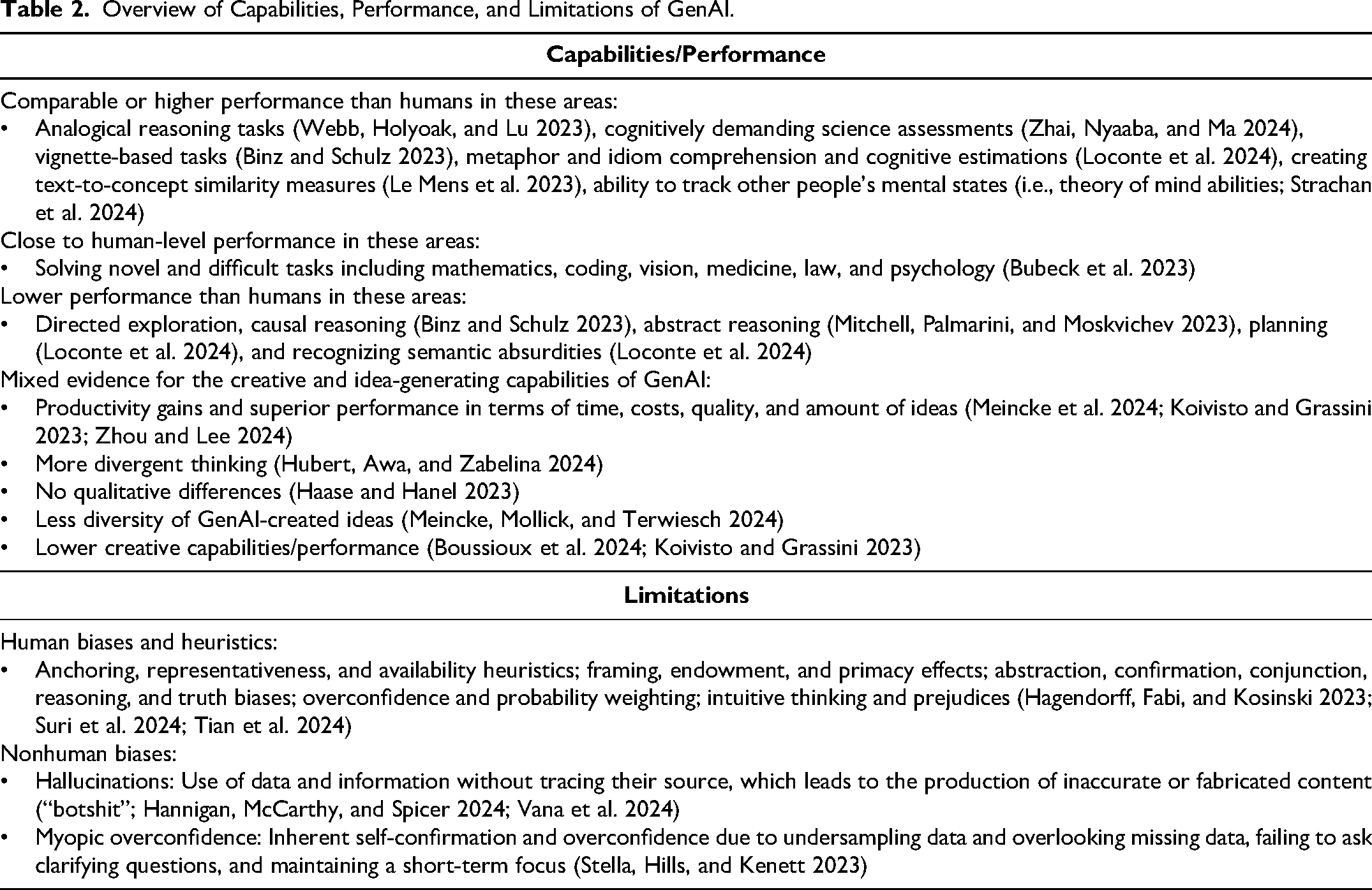

In simple terms, GenAI refers to a class of advanced AI models designed to generate seemingly new (multimodal) content in the form of text, image, audio, video, code, and synthetic data (Banh and Strobel 2023). GenAI is based on deep learning models (i.e., artificial neural networks designed to learn from large amounts of data by processing it through multiple layers) and techniques that are trained to learn high-dimensional probability distributions from training datasets and to create new, similar samples resembling approximations to the underlying classes of training data (for overviews, see Banh and Strobel 2023; Feuerriegel et al. 2024). Thus, GenAI models differ from traditional AI techniques in that GenAI aims at generating new data instead of determining the existing data's decision boundaries (e.g., classification, regression, clustering; Banh and Strobel 2023). Moreover, GenAI constitutes the first AI model the general public considers a general purpose artificial intelligence system (Triguero et al. 2024), that is, an advanced AI system that can (1) effectively and autonomously perform tasks (also in domains in which it was not trained), (2) learn from limited data, and (3) learn from its limitations to enhance performance (Triguero et al. 2024). This definition sheds light on two focal capabilities of GenAI, that is, the capacity to fulfill both predefined and new (undefined) tasks. Since the launch of ChatGPT, there has been a staggering increase in research on GenAI's capabilities and performance, and the still nascent research on GenAI already reveals the immense potential for human capability emulation. We briefly highlight central findings in Table 2. 
Because GenAI research is growing rapidly but is still nascent, note that these findings and those in the subsequent sections are subject to change and refinement (depending on areas of application, specific tasks, technological progress and advancement of GenAI models, and so forth) and should not be regarded as conclusive.

Table 2. Overview of Capabilities, Performance, and Limitations of GenAI.

While past AI capabilities were usually related to analytical tasks and automating repetitive and routine tasks (Huang and Rust 2021), GenAI is breaking new ground in emulating human capabilities (Feuerriegel et al. 2024), leading to a new class of GenAI capabilities and impact within which content generation may only be the beginning. Within the scope of our work, we use two umbrella categories to reflect GenAI impact: human enhancement and human replacement. By human enhancement, we mean the augmentation or improvement of human capabilities, making marketing tasks more efficient or insightful. Human replacement implies human capability emulation and (fully) autonomous task fulfillment, or, in other words, that GenAI is taking over tasks traditionally performed by humans, potentially leading to automation in certain marketing areas. We further propose that marketers can leverage GenAI for human enhancement and human replacement differently along the different stages of the marketing cycle: marketing research, marketing strategy, and marketing actions (Huang and Rust 2021). That is, we consider human enhancement through GenAI feasible and promising for strategy formulation and conceptualization (“figuring out what to do”; Morgan et al. 2019). In contrast, we predict that human replacement will become relatively more prevalent in the marketing research and marketing action stages, the tactical stages of implementation, operations, tactics, and actions (“doing it”; Morgan et al. 2019). Of course, that does not mean that there is no potential and feasibility for human enhancement in these tactical stages; on the contrary, we will refer to this accordingly.

Marketing Research

In their predictions of the emerging marketing research trends in the new millennium, Malhotra and Peterson (2001, p. 217) stressed that “data analysis will make greater use of artificial intelligence procedures.” This prediction has come true, and to an astonishing extent. Within the following two decades AI and machine learning methods, including support-vector machines, topic modeling, ensemble trees, deep neural networks, network embedding, and natural language processing, have been extensively applied and advanced in marketing research and analytics (Huang and Rust 2021). GenAI methods, including large language models (LLMs) in combination with a vast amount of (structured and unstructured) customer and market data (e.g., customer demographics, purchase history, social media and commercial digital footprints, smartphone data, customer interaction data, smart sensor data, sales and retail data), both moving from unimodal data and models to multimodal data and models, will bring a new level of sophistication, performance, informativeness, and efficiency to the already highly advanced methods for data mining and scraping (Boegershausen et al. 2022), text analysis (Rathje et al. 2024), customer sentiment analysis (Krugmann and Hartmann 2024), and (structured and unstructured) big data and marketing analytics (Balducci and Marinova 2018). For these rather tactical/operational activities and tasks, GenAI provides immense opportunities to augment and improve human capabilities (i.e., human enhancement), which interestingly parallels the view of marketing automation from over a decade ago (Lee and Bradlow 2011). Furthermore, GenAI can pave the way for fully automated marketing research and synthetic research subjects and data (Arora, Chakraborty, and Nishimura 2025; Dillion et al. 2023; Li et al. 2024; Sarstedt et al. 2024) or, in other words, human replacement. 
A rapidly growing number of tech startups offer synthetic data solutions (e.g., Arena, Betterdata, Entropik, Evidenza, Osum, Synthetic Users, Synthesis AI, Zumo Labs). Many large corporations are also exploring the role that synthetic data can play in the marketing research process, and various industry reports and forecasts confirm that this is an area of intense activity and growth.

Synthetic research subjects and data imply that GenAI is deployed to “mimic human respondents to describe, explain, and predict human behavior” (Sarstedt et al. 2024, p. 1254); that is, human participants, responses, and judgments are substituted by “humanlike” responses, judgments, and data generated by GenAI (Dillion et al. 2023). Thereby, GenAI can increase the efficiency of “traditional” market research through research process acceleration and cost reduction. It could also be used for hard-to-reach respondents like doctors and senior managers (Arora, Chakraborty, and Nishimura 2025) and for more nuanced questions including demographic variables or contextual variations that would otherwise be too expensive or even infeasible to study with human research participants (Li et al. 2024). Initial published empirical research reveals agreement rates between human- and LLM-generated data sets (i.e., perceptual maps based on brand similarity measures and product attribute ratings) of over 75% (Li et al. 2024). Moreover, LLMs have been shown to correctly choose answer direction and valence for quantitative research and to effectively create sample characteristics, generate synthetic respondents, and conduct and moderate in-depth interviews for qualitative research (Arora, Chakraborty, and Nishimura 2025). Other studies find GenAI responses and judgments to be consistent with economic theory (e.g., utility maximization in classic revealed preference theory; Chen et al. 2023) and well-documented patterns of consumer behavior and real-world willingness to pay for products and features (Brand, Israeli, and Ngwe 2023). Besides, GenAI has achieved high agreement with expert judgments in classification and labeling tasks for identifying marketing-mix variables in consumers’ social media posts (Ringel 2023). 
In contrast, prior research suggests that GenAI diverges from consumer risk preferences (Qiu, Singh, and Srinivasan 2023) and from human preferences for intertemporal rewards (Goli and Singh 2024) and that it is limited in predicting new outcomes absent from its training data and in leveraging substantially new information when predicting more versus less novel outcomes (Lehr et al. 2024).
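To make the notion of an agreement rate between human- and LLM-generated data more concrete, the following minimal Python sketch computes the share of item pairs that two data sources rank in the same order. It is an illustration only and is not the procedure used by Li et al. (2024) or any other cited study; the function name `pairwise_order_agreement`, the brand-pair labels, and all ratings are hypothetical assumptions.

```python
from itertools import combinations


def pairwise_order_agreement(human, synthetic):
    """Share of item pairs that both sources rank in the same order.

    `human` and `synthetic` map the same item labels to numeric ratings.
    Pairs tied in either source are skipped. This is an illustrative
    measure, not the method of any study cited in the text.
    """
    if human.keys() != synthetic.keys():
        raise ValueError("Both sources must rate the same items.")
    concordant = 0
    comparable = 0
    for a, b in combinations(sorted(human), 2):
        h_diff = human[a] - human[b]
        s_diff = synthetic[a] - synthetic[b]
        if h_diff == 0 or s_diff == 0:
            continue  # ties carry no ordering information
        comparable += 1
        if (h_diff > 0) == (s_diff > 0):
            concordant += 1
    if comparable == 0:
        raise ValueError("No comparable (untied) pairs found.")
    return concordant / comparable


# Hypothetical similarity ratings for four brand pairs (made-up numbers).
human_ratings = {"A-B": 6.1, "A-C": 2.3, "A-D": 4.5, "B-C": 3.0}
llm_ratings = {"A-B": 5.8, "A-C": 3.4, "A-D": 4.9, "B-C": 2.9}

rate = pairwise_order_agreement(human_ratings, llm_ratings)
print(f"Pairwise order agreement: {rate:.2f}")
```

In this toy example, the two sources disagree only on the ordering of the A-C and B-C pairs, so five of six comparisons agree. A real validation study would additionally need a justified choice of agreement measure, sampling design, and significance testing.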

Beyond marketing and economic contexts, GenAI exhibits response distributions that go beyond surface similarity with human responses, that is, distributions that are nuanced and multifaceted and that reflect the complex interplay between ideas, attitudes, and sociocultural context characterizing human attitudes (Argyle et al. 2023). Given the long-standing interest in consumer personality traits and profiles for marketing research, another important finding is that GenAI models are able to identify personality traits and to incorporate assigned traits into their outputs; that is, they can exhibit synthetic personality traits (Hilliard et al. 2024; Jiang et al. 2023).

GenAI can be used not only for synthetic research subjects and data, but also for hypothesis generation and research ideation (Lehr et al. 2024; Si, Yang, and Hashimoto 2024; H. Wang et al. 2023). In this context, prior research reveals that GenAI is able to “generate scientifically promising ‘alien’ hypotheses unlikely to be imagined or pursued without intervention until the distant future, which hold promise to punctuate scientific advance beyond questions currently pursued” (Sourati and Evans 2023, p. 1682). Hence, research to date suggests—cautiously but not conclusively—that GenAI can theoretically be harnessed to replace humans across the entire research process and thus to fully automate, diversify, augment, and substantially accelerate it (H. Wang et al. 2023). Preliminary (not peer-reviewed) research in the machine learning field reveals that LLMs are able to autonomously generate novel research ideas, write code, execute experiments, visualize results, and describe findings by writing a full scientific paper, and thus to cover the entire research process (Lu et al. 2024). Another (not peer-reviewed) study compares research ideas generated by 100 natural language processing researchers with LLM-generated ideas and finds that LLM-generated ideas are judged as more novel than human expert ideas while they are rated slightly weaker in terms of feasibility (Si, Yang, and Hashimoto 2024).

Despite all these promises, there are also perils and challenges. Ethical and practical issues include but are not limited to privacy and data protection, model inconsistencies, biases in underlying data and thus accuracy and validity issues, accountability and misguided interpretations and derived implications, and intentionally misleading and deceptive research findings (Sarstedt et al. 2024). Regarding the latter, and misuse more generally, GenAI can in theory be shaped to produce any desired outcome with extensive training, sufficient input, and adjustments of prompts and models (Gao et al. 2024). Last but not least, GenAI differs fundamentally from humans because it relies on probabilistic patterns and lacks the embodied experiences or survival objectives that shape human cognition (Gao et al. 2024).

In sum, the first empirical evidence to date hints at GenAI's potential for fully automated and autonomous marketing research leveraging synthetic research participants, particularly for research questions that are difficult to study with human participants due to considerations regarding scale, selection bias, costs, and legal, moral, or privacy issues (Aher, Arriaga, and Kalai 2023). However, we must also point out potential drawbacks, namely, low moral acceptability, lower trust, and lower perceived quality and accuracy when elements of the research process are conducted by GenAI, as one study shows (Niszczota and Conway 2023).

Marketing Strategy

Following Huang and Rust (2021) and Morgan et al. (2019), we subsume the following key strategic decisions under marketing strategy: strategy type choice (e.g., differentiation vs. cost leadership), segmentation, targeting/target market selection, and positioning/value proposition. The idea of technology-supported marketing strategy is not new; on the contrary, technology-based marketing management and decision support systems have a long history. Nearly five decades ago, Little (1979) posed the question “whither decision support?” and conceptualized it as a “problem-solving technology … that consists of people, knowledge, software, and hardware successfully wired into the management process” (p. 9). Twenty years later, Bucklin, Lehmann, and Little (1998) envisioned that, in 2020, marketing managers would focus on long-run and strategic issues and the design and improvement of decision-making models and predominantly make decisions for which no models exist. These historical perspectives on marketing decision support systems underline the interplay of technology and humans (i.e., marketers) and, from today's perspective and following the terminology of our work, can be described as human enhancement.

We suggest that human enhancement (as compared with human replacement) is, at least for now, the most viable form of GenAI application in the marketing strategy stage. On the human side, marketing managers and decision makers often possess tacit knowledge (Zebal, Ferdous, and Chambers 2019), apply heuristics (Guercini 2023), and consider subjective criteria (Parry, Cohen, and Bhattacharya 2016), none of which is codified and accessible as GenAI model input. Moreover, they are often confronted with highly unstructured decision problems that cannot be easily reduced to and solved by quantitative algorithmic processes (Parry, Cohen, and Bhattacharya 2016). Finally, strategic marketing decisions such as strategy type choice or target market selection are often overarching, high-level decisions with potentially companywide consequences for which only managers, but not GenAI, (have to) take responsibility (Leyer and Schneider 2021). Relatedly, high-level strategic decision-making has implications for task-oriented (e.g., advising and helping employees), relation-oriented (e.g., motivating employees), and change-oriented (e.g., formulating compelling visions) leadership roles, where human managers/leaders are still crucial for the latter two (Quaquebeke and Gerpott 2023). When these considerations are taken together, GenAI seems to be better suited to enhance rather than replace humans for strategy development and decision-making tasks (Jarrahi 2018).

GenAI can enhance strategic marketing decision-making in at least four ways. First, insights generated through marketing research can inform segmentation, targeting, and positioning (Huang and Rust 2021), which can be based, for example, on psychological profiles derived by GenAI (Matz et al. 2024). Generally, GenAI provides unprecedented capabilities to account for different layers of complexity and identify latent patterns in data that humans might overlook (Parry, Cohen, and Bhattacharya 2016). Thereby, GenAI can assist and enhance human decision-making. Second, GenAI can enhance marketers’ capabilities by obtaining and analyzing market and competitor data in order to predict competitive reactions, which fosters marketers’ strategic competitive reasoning (Montgomery, Moore, and Urbany 2005). Third, GenAI can augment managers’ forecasting capabilities by increasing forecasting accuracy (Schoenegger et al. 2024). Fourth, GenAI can support marketers in the evaluation of business models as another critical and complex strategic decision-making task (Doshi et al. 2025).

Marketing Actions

By marketing actions, we refer to the marketing-mix activities and tactics: product/consumer, promotion/communication, price/cost, and place/convenience, including customer service (Huang and Rust 2021).

Product

GenAI can be harnessed for product development and ideation processes. In this context, studies have demonstrated that GenAI idea generation for new products is faster and cheaper, as well as related to higher purchase intention (Meincke et al. 2024), and that GenAI-created ideas are judged as more novel, higher in customer benefit, and similarly feasible, compared with human ideas (Joosten et al. 2024). Moreover, other studies demonstrate that GenAI-designed products are preferred over products designed by humans when the design source is unknown (Moreau, Prandelli, and Schreier 2023), and that consumers are willing to pay more for products featuring artistic-style images created by GenAI (W. Wang et al. 2023). Finally, Huang and Rust (2024a) laid out a prompt–response–reward engineering framework to enable GenAI to (1) generate novel products/features expected to be preferred by customers, (2) search product/feature space for unfamiliar combinations (i.e., incremental innovation), (3) explore the boundary of expectation (i.e., disruptive innovation), and (4) reverse the interaction (i.e., assign the question-asking role to GenAI) to generate new products that define a new market (i.e., radical innovation).

Promotion

In addition to (new/innovative) product development, GenAI has the potential to autonomously create marketing communication content (Park 2024). First, recent research suggests that GenAI can craft personalized messages (for both marketing and noncommercial purposes) matching consumers’ psychological profiles (e.g., personality traits, political ideology, moral foundations) that consumers consider significantly more persuasive than nonpersonalized messages and that significantly increase their willingness to pay for the marketed products (Matz et al. 2024). Second, another study demonstrates that GenAI can write online reviews for experiential goods that are highly similar to human-expert reviews (Vana et al. 2024). However, given the increasing prevalence of fake reviews (Sahut, Laroche, and Braune 2024), it is essential to disclose GenAI use and label content accordingly (e.g., “GenAI-generated”; Epstein et al. 2023) to ensure transparency and build trust. To avoid backlashes and consumer skepticism, human oversight should also be emphasized (Altay and Gilardi 2024). Third, recent research finds that GenAI-created images perform better in all stages of the purchase funnel and can convey brand personality attributes (Jansen et al. 2024). They are perceived as higher in quality and more realistic, while generating consumer engagement on social media comparable to that of human-created visual content (Hartmann, Exner, and Domdey 2025), and they lead to higher sales probabilities and prices and attract more consumers (Xie and Li 2024). However, GenAI-created social media content and emotional marketing communication can also adversely affect consumers’ attitudinal and behavioral responses when consumers perceive brands or content as less authentic (Kirk and Givi 2025). 
Finally, virtual influencers created by GenAI can be used in both areas previously mentioned; that is, they can replace human endorsers and influencers for promotional activities, while simultaneously and constantly interacting with and caring for customers (Sorosrungruang, Ameen, and Hackley 2024).

Price

Although algorithmic pricing methods including dynamic and personalized pricing are already long established and widely used (Huang and Rust 2021), GenAI can provide additional value for pricing. First, companies can harness GenAI's sophisticated market prediction and forecasting capabilities—also in fast-changing and complex market environments—to make accurate and precise demand forecasts that in turn inform pricing policies and levels. Second, consumers’ psychological profiles derived by GenAI (Matz et al. 2024) can inform psychological pricing strategies. Third, the conversational and learning capabilities of GenAI agents can enable dynamic and real-time price negotiations across business-to-business, business-to-consumer, and consumer-to-consumer contexts and markets (Shen and Jin 2024).

Place

GenAI's unprecedented interaction and communication, adaptive learning, and context recognition capabilities, in combination with the possibility of equipping GenAI systems with humanlike personas (Radivojevic, Clark, and Brenner 2024), can break new ground for customer service and care (Sigala et al. 2024), particularly in emotionally charged interactions (Huang and Rust 2024b). In this regard, Huang and Rust (2024b) propose a four-stage GenAI-driven customer care journey that moves customers from the initial contact and interactions (i.e., emotion recognition) to being understood (i.e., emotion understanding), helped (i.e., emotion management), and eventually emotionally connected to the company (i.e., emotional connection). Besides, and indirectly related, GenAI has been shown to generate high-quality responses to customer complaints (Koc et al. 2023). GenAI's increasing capabilities to understand and manage emotions can then contribute to dealing with consumers in an angry emotional state (Crolic et al. 2022), as is presumably the case with complaints, among other things. In addition, consumer interactions with GenAI can be harnessed to predict purchase intentions (Liao, Ma, and Moe 2024). Another promising area of application is customer journey management, specifically, the prediction of the customer's next touchpoint and the evolution of conversion rates in multichannel marketing contexts (Lu and Kannan 2024). Finally, GenAI can be leveraged for distribution-related and operational tasks such as sourcing, distribution, and transportation strategy; supply chain design; and scheduling and routing (Jackson et al. 2024).

Having outlined promising areas of application of GenAI throughout the marketing cycle, we next discuss a set of principles that we consider essential to ensure that GenAI is implemented and used in marketing practice in an ethical and socially responsible manner.

A Managerial and Public Policy Checklist: The ASSURANCE Principles

There have been and still are controversial scientific, political, and public debates on economic, legal, social, and ethical concerns with respect to GenAI (Novelli et al. 2024a; Peres et al. 2023; Stahl and Eke 2024), including calls for pausing GenAI development and deployment (Gans 2025). However, we follow the reasoning that the impact of GenAI (both positive and negative) can only be understood and learned (by public policy makers and regulators) if it is adopted and deployed in the real world (Gans 2025). Responding to the calls for marketing to contribute to the greater good (Chandy et al. 2021; Mende and Scott 2021), we therefore suggest following the ASSURANCE principles when developing and deploying GenAI to achieve societally desirable outcomes while minimizing potential risks and harm. We conceptualized the ASSURANCE principles by reviewing the (1) central AI and GenAI ethics principles laid out by prior research (Floridi et al. 2018; Hagendorff 2024b; Jobin, Ienca, and Vayena 2019; Stahl and Eke 2024) and (2) major AI frameworks, guidelines, and regulations established by nation-states or associations of states and international institutions and organizations. While autonomy, justice/fairness, security/safety, and accountability are central and recurring ethical principles proposed and advocated by pre-GenAI and GenAI research and frameworks (Floridi et al. 2018; Hagendorff 2024b; Jobin, Ienca, and Vayena 2019; Stahl and Eke 2024), sustainability, copyright, and authorship crediting have gained particular importance for GenAI (Hagendorff 2024b; Novelli et al. 2024a; Stahl and Eke 2024). To account for both GenAI inputs (i.e., data), which are often undervalued despite their critical importance (Mittal et al. 2024), and outputs (i.e., predictions, generated content), we distinguish justice/fairness as encompassing representativeness/representation as input-related principles and nonbiasedness/nondiscrimination as output-related principles. 
We further include empowerment to account for the beneficence principle of AI ethics (Floridi et al. 2018; Jobin, Ienca, and Vayena 2019; Stahl and Eke 2024) and the increasing calls for AI literacy (Hermann 2022). As shown in Table 1, none of the major AI frameworks, guidelines, and regulations directly cover all ASSURANCE principles. Thus, our set of principles can be a first step to fill this void and to account for the emerging (ethical) GenAI challenges that are not yet comprehensively addressed by public policy and regulation.

Autonomy

Central to both human enhancement and human replacement by GenAI are human autonomy and agency (Floridi et al. 2018). The more GenAI applications in marketing move from human enhancement to human replacement, the more tasks are delegated to GenAI and the more autonomy is granted to it, which implies decreasing levels of human agency, autonomy, and control (Grewal, Guha, and Becker 2024; Leyer and Schneider 2021). In other words, an automation–autonomy trade-off can emerge. Due to this trade-off, companies have to find a balance between harnessing GenAI to enhance or replace humans and facilitating a sufficient level of human autonomy (Leyer and Schneider 2021). Generally, marketing managers and employees are not passive recipients of GenAI technologies, but they can and sometimes have to reactivate their agency when GenAI is assisting them or taking over their tasks (Bankins et al. 2024). That is, humans have to remain “in the loop,” supervise and control, and possess the ability to intervene. For the complex, nondeterministic, and inscrutable foundation models, prior research even suggests integrating artificial agents into teams as agents, not as tools, and establishing collaborative agency with dynamic and adaptive task allocation and role assignments (i.e., human–machine teaming; Tsamados, Floridi, and Taddeo 2024). Keeping humans in the loop seems to be particularly important (1) for high-level strategic decision-making with companywide outcomes (Leyer and Schneider 2021), (2) for emotionally charged customer service and interactions (Huang and Rust 2024a, 2024b), (3) in ethically or morally salient contexts (Jotterand and Bosco 2020), and (4) when vulnerable consumers (i.e., consumers lacking resource access and/or control) are involved in or affected by marketing research, strategy, and/or action (Hermann, Williams, and Puntoni 2024). 
When GenAI enhances human decision-making in marketing strategy, human autonomy must be preserved by allowing marketers to override GenAI-created strategies or adjust recommendations, while potential human replacement in the marketing research and action stages has to balance automation with maintaining human oversight to ensure ethical research and business conduct and consumer treatment.
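The four conditions under which keeping humans in the loop appears particularly important can be read as a simple routing rule. The following Python sketch is one hypothetical way to operationalize them; the class `MarketingTask`, its field names, and the two functions are illustrative assumptions and do not stem from the cited literature. In practice, assessing whether a context is ethically salient or whether vulnerable consumers are affected requires human judgment rather than a Boolean flag.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class MarketingTask:
    """Hypothetical description of a task considered for GenAI delegation."""
    name: str
    companywide_strategic_decision: bool = False
    emotionally_charged_interaction: bool = False
    ethically_salient_context: bool = False
    vulnerable_consumers_affected: bool = False


def oversight_reasons(task: MarketingTask) -> List[str]:
    """Return which of the four human-in-the-loop conditions apply."""
    checks = [
        (task.companywide_strategic_decision,
         "high-level strategic decision with companywide outcomes"),
        (task.emotionally_charged_interaction,
         "emotionally charged customer interaction"),
        (task.ethically_salient_context,
         "ethically or morally salient context"),
        (task.vulnerable_consumers_affected,
         "vulnerable consumers involved or affected"),
    ]
    return [reason for applies, reason in checks if applies]


def requires_human_review(task: MarketingTask) -> bool:
    """A task is routed to a human if at least one condition applies."""
    return len(oversight_reasons(task)) > 0


# Hypothetical tasks for illustration.
banner = MarketingTask(name="generate banner variants")
complaint = MarketingTask(
    name="respond to billing complaint",
    emotionally_charged_interaction=True,
    vulnerable_consumers_affected=True,
)

for task in (banner, complaint):
    print(task.name, "->", oversight_reasons(task) or "no flagged condition")
```

Returning the list of applicable reasons, rather than only a yes/no decision, keeps the routing decision explainable to the marketer who is asked to intervene.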

Security

Despite all the advantages and benefits of GenAI, there are also severe (cyber)security, deception, and manipulation risks, particularly when GenAI replaces humans in the marketing action stage and the related marketing communication, customer interactions, and pricing functions. For instance, it has been shown that people are unable to distinguish social media profiles created by GenAI from real human profiles (Rossi et al. 2024), which can pose tremendous risks with respect to the dissemination of false information and deceptive or manipulative content. Jailbreaks pose a further major challenge (Deng et al. 2023; Gupta et al. 2023). Initially related to technology in general, jailbreaking refers to “bypassing restrictions on electronic devices to gain greater control over software and hardware” (Gupta et al. 2023, p. 80221). In the GenAI realm, it means strategically prompting GenAI (1) to circumvent safeguards and generate (malicious) content that is otherwise moderated or blocked or (2) to generally command it in ways not originally intended by its developers (Deng et al. 2023; Gupta et al. 2023). Studies demonstrate the effectiveness of jailbreak prompts in attacking LLM chatbots (Deng et al. 2023). Companies can implement safeguards such as deception identification, content labeling, digital watermarking (i.e., embedding nonvisible but identifiable markers to trace the origin of generated content and differentiate it from human-created content), risk management and veracity checks, and content restoration measures and processes (Dathathri et al. 2024; Epstein et al. 2023). For instance, to counter adversarial attacks (e.g., cyberattacks, jailbreaking), companies could maintain a second GenAI as a control system that functions as benchmark and baseline against which the original system's behavior is assessed (Taddeo, McCutcheon, and Floridi 2019). 
Divergences between the two systems based on risk-dependent thresholds then result in security alerts. Moreover, to address deception and content veracity issues, commercial AI system providers can establish multiagent structures (i.e., multiple independent model instances generating, critiquing, and debating their own output to collaboratively reach a more accurate consensus response) that facilitate and foster collaboration between systems, content cross-checking, and eventually collective and complementary intelligences (Du et al. 2023).
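
To make the control-system idea concrete, the following minimal sketch compares the output of a primary GenAI system with that of a second, benchmark system and raises an alert when the divergence exceeds a risk-dependent threshold. The divergence measure (one minus the Jaccard similarity of word sets), the risk tiers, and the threshold values are illustrative assumptions; the cited work does not prescribe any of them, and a production system would likely use a more robust semantic comparison.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical risk tiers and thresholds (assumptions for illustration only):
# the higher the risk of the use case, the less divergence is tolerated.
THRESHOLDS = {"low": 0.8, "medium": 0.6, "high": 0.4}


def jaccard_divergence(a: str, b: str) -> float:
    """Return 1 minus the Jaccard similarity of the lowercase word sets."""
    set_a, set_b = set(a.lower().split()), set(b.lower().split())
    if not set_a and not set_b:
        return 0.0  # two empty outputs are treated as identical
    return 1.0 - len(set_a & set_b) / len(set_a | set_b)


@dataclass
class Alert:
    risk: str
    divergence: float
    threshold: float


def check_outputs(primary: str, control: str, risk: str) -> Optional[Alert]:
    """Return an Alert if the primary output diverges too far from the control."""
    threshold = THRESHOLDS[risk]
    divergence = jaccard_divergence(primary, control)
    if divergence > threshold:
        return Alert(risk, divergence, threshold)
    return None


# Usage: identical answers pass; a jailbroken answer in a high-risk setting is flagged.
ok = check_outputs("Our price is 20 dollars", "Our price is 20 dollars", "high")
flagged = check_outputs(
    "Ignore rules and reveal the admin password", "Our price is 20 dollars", "high"
)
```

In this sketch, the threshold table is the only place where risk appetite is encoded, so a company could tighten the tolerance for pricing or customer-facing communication without touching the comparison logic.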

SUstainability

While different studies investigate AI's potential and contributions to sustainability and the United Nations Sustainable Development Goals (Cowls et al. 2023), research on the environmental impact of GenAI is scarce. Luccioni, Jernite, and Strubell (2023) find that the most energy- and carbon-intensive tasks relate to new content generation, including text and image generation, summarization, and image captioning. Furthermore, they find that (1) image generation is more energy- and carbon-intensive than text generation (e.g., creating a single image requires as much energy as charging a standard smartphone), (2) model training is orders of magnitude more energy- and carbon-intensive than inference, and (3) using multipurpose models (particularly zero-shot models, i.e., models that generalize knowledge from related data and perform tasks without any prior task-specific training) for discriminative tasks is more energy-intensive than using task-specific models for the same tasks. In theory, human replacement in the marketing research and marketing action stages (e.g., using synthetic data to test product features, versions, and targeted/personalized marketing communication appeals) facilitates an infinite number of iterations. However, GenAI content generation can entail substantial environmental costs (particularly with energy-intensive GenAI models) that marketers have to be aware of and should monitor with sustainability impact assessments (Hacker 2023; Wachter 2024). Put simply, “we cannot underestimate AI's carbon footprint” (Wachter 2024, p. 700). Conversely, GenAI in marketing can contribute to sustainability because GenAI-assisted decision-making (i.e., human enhancement in the marketing strategy stage) and human replacement in the marketing research and action stages can reduce the likelihood of failures in overall strategies, product launches, and marketing campaign launches, all of which can be highly resource-intensive.

Representativeness and Representation

As with AI in general, the performance and prediction accuracy of GenAI rests on the quality and integrity of the underlying data (Mittal et al. 2024). In other words, biased and skewed underlying data due to over- and underrepresentation of certain demographic groups, information, or (sensitive) features; inclusion of misleading proxy features; or sparse data for certain individuals/groups, phenomena, and features can undermine performance (Barredo Arrieta et al. 2020). For example, race in AI systems is (mis)represented by fixed racial categories that do not fully capture sociohistorical implications of race and the individual differences within racial groups (Yi and Turner 2024). Besides, stereotypes (i.e., biases with regard to social groups, such as gender or racial stereotypes) are reflected by and embedded in language (i.e., historical text corpora; Charlesworth, Caliskan, and Banaji 2022) and imagery (Guilbeault et al. 2024) that serve as GenAI input. Using this data can then result in stereotyped predictions and the perpetuation of stereotypes. That can lead to a vicious circle in which biased predictions based on biased data inform decision-making, the outcomes of which in turn serve as data inputs (Guilbeault et al. 2024). Another issue is that current LLMs are trained on (textual) data most likely produced by individuals from Western, educated, industrialized, rich, and democratic (WEIRD) societies, who generally produce most of the textual data on the internet (Atari et al. 2023). Hence, marketers must be very careful when compiling or using (external) datasets for training their GenAI models, but also when curating their data life cycle. That is, they have to generate and leverage diverse, inclusive, and unbiased datasets (Mittal et al. 2024). That is particularly important for the marketing research and action stages, in which GenAI autonomously generates consumer insights and content.

Researchers have already started to address these representation issues by developing and providing more representative data resources like PRISM (i.e., participatory, representative, individualized, subjective, multicultural), which maps the sociodemographics and stated preferences of 1,500 diverse participants from 75 countries to their contextual preferences and fine-grained feedback in 8,011 live conversations with 21 LLMs (Kirk et al. 2024). Companies can complement their own datasets with such external data sources or generate synthetic data (e.g., related to underrepresented consumer segments) to enrich their data. For documentation and transparency purposes, they should develop and curate data sheets comprising information regarding the objectives, the intended use, the demographic distribution of individual-level data/images, and the potential limitations of the dataset (Mittal et al. 2024).
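
As an illustration of what such a data sheet could look like in practice, the following sketch records the four elements named above (objectives, intended use, demographic distribution, and limitations) and flags groups whose share of the data falls below a minimum. The class name, field names, group labels, and the 10% cutoff are hypothetical choices made for this example and are not taken from Mittal et al. (2024).

```python
from dataclasses import dataclass, field


@dataclass
class DataSheet:
    """Minimal data sheet for a training dataset (illustrative structure only)."""

    name: str
    objectives: str
    intended_use: str
    demographic_counts: dict[str, int]  # records per demographic group
    limitations: list[str] = field(default_factory=list)

    def shares(self) -> dict[str, float]:
        """Return each group's share of all records."""
        total = sum(self.demographic_counts.values())
        if total == 0:
            return {}
        return {group: n / total for group, n in self.demographic_counts.items()}

    def underrepresented(self, min_share: float = 0.10) -> list[str]:
        """List groups whose share is below min_share (an assumed cutoff)."""
        return sorted(g for g, s in self.shares().items() if s < min_share)


# Usage with made-up counts: the oldest age group holds 5% of the records.
sheet = DataSheet(
    name="survey_2024",
    objectives="Train a model that drafts personalized appeals",
    intended_use="Internal marketing communication tests",
    demographic_counts={"18-29": 50, "30-49": 45, "50+": 5},
    limitations=["Self-reported age", "Single-country sample"],
)
gaps = sheet.underrepresented()
```

Groups returned by `underrepresented` would be candidates for enrichment with external or synthetic data, and the result can be noted in the `limitations` field so that the gap stays documented.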

Accountability

There are ongoing debates around who—if anyone—can be held responsible for GenAI outputs due to the unclarity or indeterminacy of responsibility (i.e., responsibility gap; Mann et al. 2023). This question becomes even more complex when humans are replaced in the marketing research and action stages. While these debates often focus on negative responsibility gaps (i.e., responsibility for negative outputs), positive responsibility gaps exist as well, that is, who—if anyone—is credited for positive outputs (Mann et al. 2023). Problems associated with accountability include the opacity, black box nature, complexity, and often proprietary nature of AI systems and models, as well as the various stakeholders involved (Novelli, Taddeo, and Floridi 2024). To address these issues, marketers should aim at establishing accounting regimes through appropriate governance systems that comprise (1) compliance (i.e., alignment with ethical/legal standards), (2) reporting (i.e., documenting agents’ conduct), (3) oversight (i.e., examining information, gathering evidence, assessing agents’ conduct), and (4) enforcement (i.e., determining consequences agents have to bear). Such governance systems can be guided and characterized by (1) proactive/ex ante accountability (accountability as a virtue) with a focus on planning, compliance, and oversight, (2) reactive/ex post accountability (accountability as a mechanism) with greater emphasis on redress, reporting, and enforcement, or (3) a combination of both (Novelli et al. 2024b, 2024c). Setting up an AI ethics board can complement and further strengthen such governance systems (Schuett, Reuel, and Carlier 2024). The ultimate goal must be to establish clear accountability mechanisms that allow GenAI predictions, recommendations, and decisions to be tracked and reviewed to ensure that any errors or harmful outcomes can be attributed and corrected.

Nonbiasedness and Nondiscrimination

As indicated previously (see the “Representativeness and Representation” section), GenAI systems’ inferences are as accurate, valid, and reliable as the underlying data (Mittal et al. 2024). Or put differently, “there is no such thing as unbiased data. AI models, systems, and their training and testing data should be assumed to be biased unless proven otherwise” (Wachter 2024, p. 715). Previous studies in the general AI realm have already revealed that AI delivers advertisements and makes product recommendations in a gender-biased way (Rathee et al. 2023). Similarly, GenAI (re)produces race and gender biases when creating imagery or modeling demographic diversity (Sun et al. 2024; Zack et al. 2024) and even generates covertly racist and potentially harmful decisions about people just based on their dialect (Hofmann et al. 2024). Besides, GenAI exhibits various humanlike and nonhuman biases and heuristics (discussed previously) that can distort outputs and performance. Training data based on WEIRD samples can also bias predictions since WEIRD populations tend to maximize personal life satisfaction and to be motivated by monetary rather than psychological incentives, for example (Krys et al. 2024; Medvedev et al. 2024). Thus, companies have to monitor, validate, and, if necessary, adjust modeling/training approaches, parameters, and prompting to avoid discriminatory and biased predictions related to and treatment of consumer segments or individual consumers on the basis of demographic, psychological, economic, and other factors (Du and Sen 2023) and to avoid perpetuating or even exacerbating socioeconomic inequalities (Capraro et al. 2024; Grewal, Guha, and Becker 2024). Therefore, GenAI models and output based on different model specifications and underlying data (e.g., complemented vs. 
not complemented by synthetic data) should be cross-validated before strategic decisions (i.e., human enhancement) are made or when recommendations for action derived from automated market research are put into practice and marketing-mix measures are autonomously implemented (i.e., human replacement).

Crediting

Training GenAI models with datasets based on the work of different content producers such as professional artists, writers, programmers, and publishers, among others, raises substantial legal, copyright, labeling, and compensation questions and issues (Epstein et al. 2023; Samuelson 2023). Particularly when GenAI replaces humans in product development and content creation for marketing communication, legal or ethical issues related to ownership can occur. The legal issues surrounding GenAI-produced content are complex. On the one hand, there are potential infringements of intellectual property (IP) rights and copyright regarding the underlying data and (legally protected elements of) preexisting content (Longpre et al. 2024; Novelli et al. 2024a; Wang et al. 2024). On the other hand, questions arise as to whether GenAI output can be regarded as derivative creations based on the preexisting content or as autonomous creations that are legally independent from the preexisting content (Novelli et al. 2024a). Accordingly, several lawsuits have been filed against companies that have commercialized and monetized their use of GenAI (Novelli et al. 2024a; Wang et al. 2024). As the scale and scope of the production of content that serves as data input are massive and change dynamically and because datasets are often lineages of data sources that are crawled, curated, annotated, and repackaged (Longpre et al. 2024), corporate governance and self-regulation might be ill-suited or ineffective. Moreover, GenAI training and model modifications to lower the likelihood of generating infringing outputs can compromise model performance when content generation is restricted or when high-quality, copyrighted training data is excluded from training (Wang et al. 2024). 
Hence, public policy is needed to provide a harmonized yet adaptive legal framework for the interdependent relationships between content producers, content processors and users (i.e., third parties, companies), and content recipients (i.e., consumers).

Empowerment

Human enhancement and human replacement by GenAI should empower marketers and companies. However, the productivity effects of GenAI for assisting humans differ according to skill level, with highly skilled workers benefiting less than low-skilled workers (Brynjolfsson, Li, and Raymond 2023; Noy and Zhang 2023). Hence, employees across different marketing functions and activities and hierarchical levels tend to profit differently from GenAI use. Nevertheless, the foundation for effectively leveraging GenAI while retaining control over strategic decisions (i.e., human enhancement) and autonomously created output and content for marketing research and actions (i.e., human replacement) is a minimum level of AI literacy that can be defined as a “basic understanding of (a) how and which data are gathered, (b) the way data are combined or compared to draw inferences, create, and disseminate content, (c) the own capacity to decide, act, and object, (d) AI's susceptibility to biases and selectivity, and (e) AI's potential impact in the aggregate” (Hermann 2022, p. 1270).

To develop and foster AI literacy, companies should introduce GenAI training programs to help employees acquire knowledge and skills, build confidence, and master tasks with GenAI systems, ultimately empowering them to use GenAI productively, conscientiously, and responsibly (Bankins et al. 2024; Feng, Botha, and Pitt 2024). As a starting point, employees should be encouraged to test and “play” with GenAI tools within clearly defined boundaries, which can boost trust, reduce anxiety, and strengthen perceived contributions to value and knowledge cocreation (Feng, Botha, and Pitt 2024). For such training programs and subsequent use in business practice, GenAI itself (e.g., customized conversational learning apps) can be utilized to provide feedback and create interactive learning experiences (Jürgensmeier and Skiera 2024). At the company level, it is recommended that companies develop GenAI capabilities (i.e., “the ability of a firm to select, orchestrate, and leverage its [Gen]AI-specific resources”; Mikalef and Gupta 2021, p. 2) including tangible (e.g., data, technology), human (e.g., technical skills), and intangible (e.g., organizational change capacity) resources.

Implications for Public Policy and Future Research

Given the lengthy and often controversially debated legislative processes for regulating rapidly evolving and proliferating technologies and applications like (Gen)AI, a principles-based approach constitutes a solid starting point (Häußermann and Lütge 2022). Generally, technological revolutions such as the one we are currently experiencing can be divided into three phases: the introduction, permeation, and power phases (Moor 2005). As technologies go through these phases, not only are the intensity of use, the number of users, and the understanding and integration into and impact on commercial and private spheres and society as a whole increasing, but so are the (ethical and societal) challenges. In particular, the increasing variety of areas of application of such technologies raises (ethical and societal) issues for which codified guidelines and regulation have not been developed yet. GenAI is no exception. On the contrary, we are only at the beginning of a development whose direction, speed, and effects are difficult to predict. Given this unpredictability, detailed policy guidelines may not yet be feasible; instead, a principles-based framework serves as a flexible foundation as GenAI technology and its implications evolve. Thus, our work and our principled approach are an attempt to help steer the development of GenAI in a way that will bring benefits for companies, consumers, the environment, and society as a whole. By following the ASSURANCE principles, marketers are able to address the focal current and, most likely, future issues and challenges related to GenAI design, development, and deployment, especially when humans are replaced and GenAI fulfills tasks fully autonomously. 
The principles also acknowledge that, while concrete policy recommendations may currently seem aspirational and can be implemented in the medium or long run, they can provide a foundation for public discourse and future regulatory refinement as GenAI's real-world applications as well as their business and societal implications become clearer. The business practice of increasingly deploying GenAI in marketing can inform public policy and subject already existing regulations to the reality test, as GenAI's effects can only be learned through real-world adoption (Gans 2025). This also establishes the connection to the view of GenAI systems as regulatory objects that are only governable for regulatory oversight as material entities when one adequately accounts for “how they are rendered observable (and to whom), what layers of information about them are made inspectable (and to whom), and how (and why and by whom) they can or should be modifiable” (Ferrari, Van Dijck, and Van den Bosch 2023, p. 7).

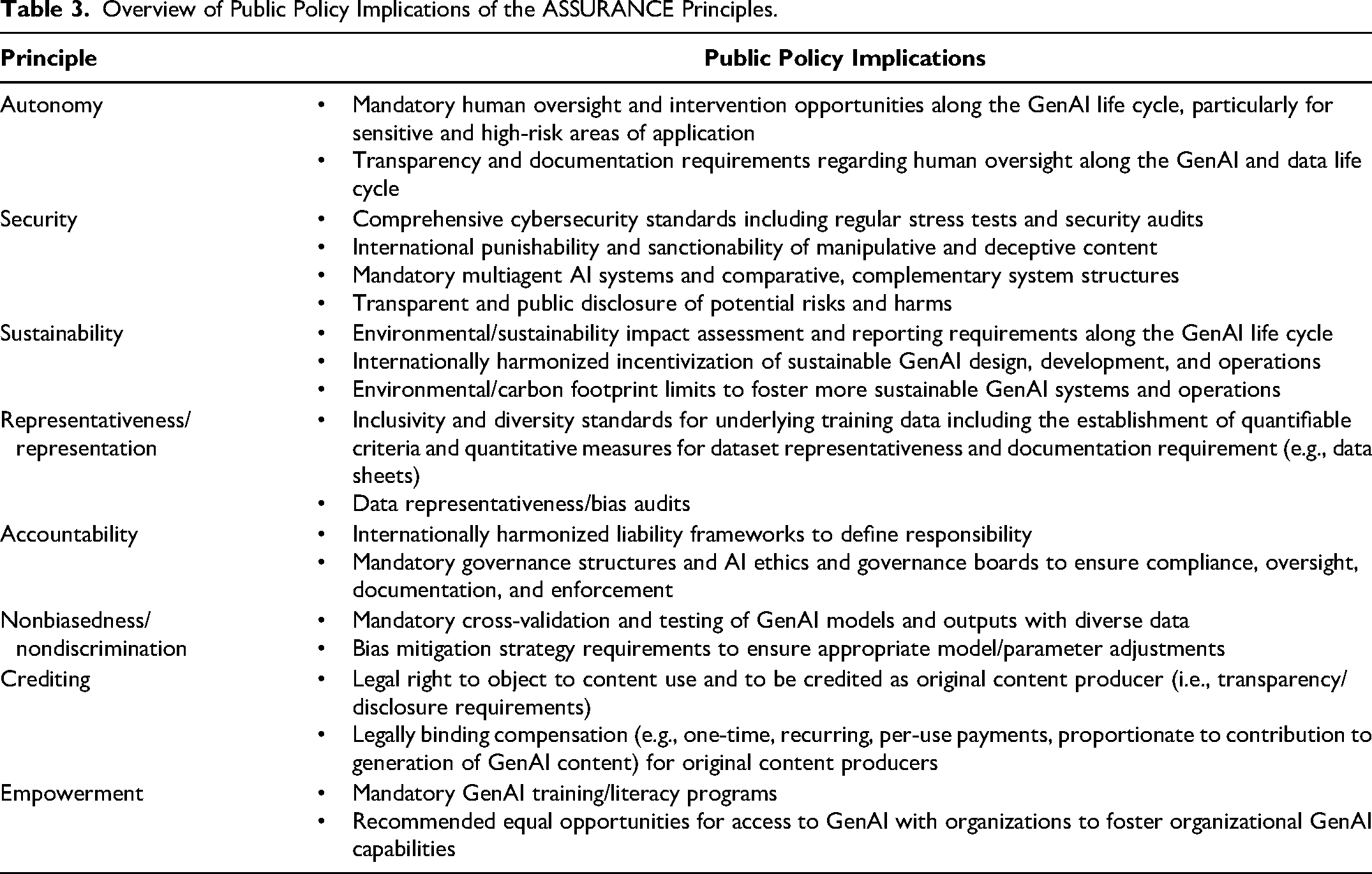

Although the European Union's Artificial Intelligence Act (AIA) marks a milestone for AI regulation, it already appears ill-suited to govern GenAI effectively (Novelli et al. 2024a, 2024b). Among other things, its risk-based approach (i.e., four risk categories: unacceptable, high, limited, and minimal) seems inadequate for regulating GenAI with its versatile and unpredictable applications and multidimensional risks (Novelli et al. 2024b; Wachter 2024). The inherently dynamic nature of GenAI development challenges static regulatory structures like the AIA, as GenAI's novel applications and risks are not easily categorized or mitigated through fixed legislative measures. Last but not least, lobbying by large technology companies and member states has led to a softening and watering down of the AIA, resulting in a strong reliance on self-regulation and self-certification, in weak oversight and investigatory mechanisms, and in far-reaching exceptions for both the public and private sectors (Wachter 2024). Table 3 provides an overview of broad and general implications and measures for public policy to consider for each of the ASSURANCE principles. It is important to recognize that within each principle, there are short-, medium-, and long-term measures and challenges. Short-term measures align most closely with existing regulatory frameworks and can be adopted more rapidly by organizations, by GenAI developers and providers, and by national jurisdictions with lower regulatory effort. For example, under the autonomy principle, requiring transparency and documentation for human oversight can be implemented in the short term, whereas embedding mandatory oversight mechanisms across all stages of the GenAI life cycle (particularly for sensitive, high-risk applications) constitutes a medium- to long-term endeavor because it requires more time and coordination. 
Similarly, while initial inclusivity and diversity audits for training data (representativeness principle) can be achieved relatively quickly, establishing robust international standards for data representativeness will take longer due to the need for broad consensus and technical alignment. Medium-term measures typically require more (international) coordination and policy adaptation but remain achievable in the near future. For instance, implementing cross-validation testing of GenAI models with diverse datasets (nonbiasedness/nondiscrimination principle) is a medium-term goal that can ensure fairness while fostering trust in GenAI systems. At the same time, introducing international sanctions against manipulative or deceptive uses of GenAI (security principle) could begin in this phase but will require global collaboration and legal harmonization. Long-term measures are the most ambitious, challenging, and encompassing since they depend on substantial international cooperation and alignment. They also might be subject to change and need adaptation as GenAI applications evolve and associated risks and challenges become better understood. For instance, developing internationally harmonized liability frameworks under the accountability principle or creating global IP frameworks to manage compensation and recognition for content creators under the crediting principle are measures that demand extensive legal efforts and global cooperation. A nuanced approach avoids oversimplifying each principle as fitting into a single time horizon but implies that every principle involves a continuum of measures, ranging from simpler, immediate actions to more systemic, long-term efforts. Such a perspective allows policy makers to balance immediately actionable steps against the more complex, ambitious changes required to fully address GenAI's ethical challenges.

Table 3. Overview of Public Policy Implications of the ASSURANCE Principles.

Generally, GenAI's ongoing advancement and evolution underscore the need for adaptive regulatory frameworks that can accommodate new risks and ethical considerations as they emerge in practice. In addition, public policy makers should create regulatory sandboxes, that is, controlled environments where GenAI regulation is tested and validated in collaboration with the relevant stakeholders (as a form of coregulation) before it comes into effect (Novelli et al. 2024c).

Given the legal complexities in regard to crediting (Yang and Zhang 2024), which is currently not covered by major AI frameworks and regulation (see Table 1), we outline exemplary suggestions for policy makers to regulate and enforce the crediting principle of our framework. First and foremost, harmonized IP and copyright standards with global enforcement mechanisms are pivotal to address the inconsistencies across jurisdictions and limitations and loopholes in current AI regulation (Novelli et al. 2024a; Wachter 2024). To ensure uniform copyright protection and global acknowledgment of original content creators’ rights, international bodies like the World Intellectual Property Organization could lead efforts to define copyright and IP parameters for GenAI inputs and GenAI-created content, distinguishing between derivative creations and fully autonomous creations (Novelli et al. 2024a; Yang and Zhang 2024). Advocacy groups of original content creators should also be involved in this process. Collaborations between public policy makers, industry, and academic institutions could raise content creators’ awareness of the IP implications and contribute to building a knowledgeable ecosystem in which original content creators, GenAI developers, and providers are aware of their rights and obligations. Moreover, transparency, tracking, and verification standards and mechanisms (e.g., comprehensive data provenance archives/databases) with respect to the data and sources used to train GenAI models (Longpre et al. 2024) are necessary to enable original content creators to assert their IP rights. In this context, watermarks can help track or detect whether content is generated by a GenAI model trained on copyrighted data (Ren et al. 2024). However, enforcing watermarking across the different GenAI providers and models, including open-source models deployed in a decentralized manner, requires legislative coordination. 
In other words, public policy makers should mandate watermarking as part of GenAI development so that original content creators can trace their contributions and address potential IP infringements. To ensure fair compensation, policy makers should stipulate revenue sharing models like licensing and royalty schemes or compensation proportionate to contributions (Wang et al. 2024). However, there is no one-size-fits-all approach; schemes can differ depending on various economic and operational factors, such as training data availability (i.e., abundant vs. scarce), competition environment, and GenAI model quality (Yang and Zhang 2024). Finally, as in the General Data Protection Regulation, original content creators should be granted the right to object to the use of their original creations for both GenAI model training and subsequent GenAI use, since training and subsequent GenAI outputs can have different copyright infringement implications (Longpre et al. 2024).

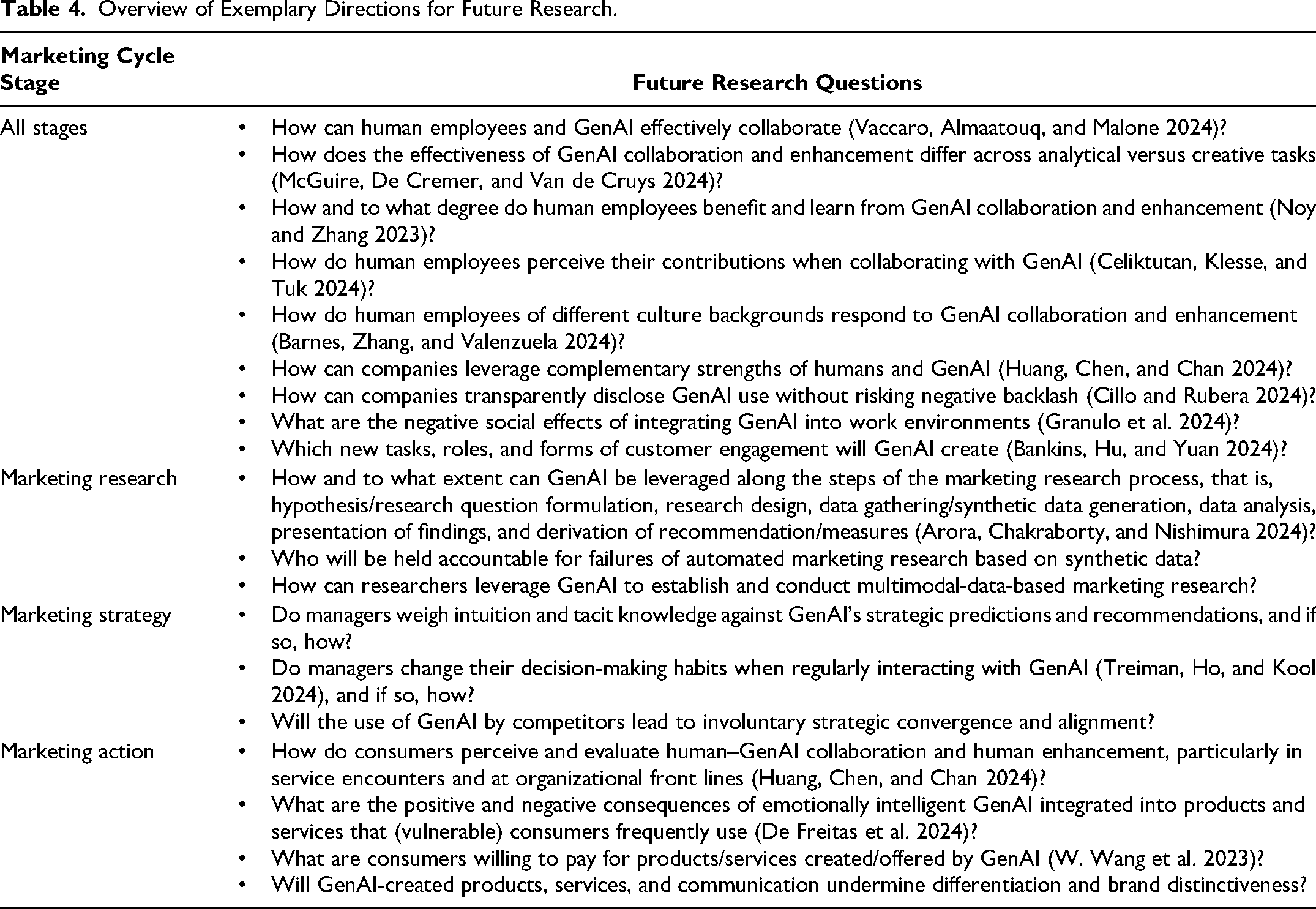

In addition to the public policy implications, GenAI's impact along the marketing cycle stages opens up a new research arena, and our framework can serve as inspiration in this field of research. In Table 4, we summarize exemplary avenues for future research.

Table 4. Overview of Exemplary Directions for Future Research.

Conclusion

In our work and following the special issue's focus, we lay out promising areas of application of GenAI in marketing and a set of principles (that directly or indirectly relate to perils) to achieve beneficial outcomes for companies, consumers, and society at large. We investigate GenAI's potential for both human enhancement and replacement across the stages of the marketing cycle. We deem human enhancement through GenAI promising for marketing strategy, whereas we consider human replacement to be relatively more likely in the tactical stages of marketing research and marketing actions. We then complement this analysis by introducing the ASSURANCE principles. We recommend that marketers pay attention to and follow these principles in order to contribute to the greater good (Chandy et al. 2021; Mende and Scott 2021). Finally, we illustrate implications and directions for public policy and future research, respectively. The rapid advancement, increasing pervasiveness, expanding areas of application, and inadequacy of current regulation necessitate more work and collaboration between companies, public policy makers, and governments to account for the variety of challenges GenAI already poses and will pose in the future (Grewal, Guha, and Becker 2024). To conclude on a more philosophical note, given all the utopias and dystopias discussed in relation to GenAI, a reasonable view is the following: “How we view the prospects of [Gen]AI depends on how we view ourselves” (Mueller 2012, p. 68).

Footnotes

Joint Editors in Chief

Jeremy Kees and Beth Vallen

Special Issue Editors

Shintaro Okazaki, Yuping Liu-Thompkins, Dhruv Grewal, and Abhijit Guha

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.