Abstract

Developing students’ source-based argument writing skills is a vital educational goal for the 21st-century information society. Consequently, researchers and educators continually seek ways to understand and improve students’ capacities for advancing arguments and synthesizing multiple documents, texts, or sources in a range of subject areas in secondary schools. This study examined differences between middle and high school students’ argument essays (N = 207) in multiple dimensions of source-based argument writing in history, the dimensions of writing in history, and the relations of identified dimensions to overall writing quality. Multivariate analysis of covariance showed that middle and high school students’ writing differed significantly in areas related to language use, the presentation of ideas, and evidence use. Their writing differed less in skills related to historical thinking, indicating a lack of development in these skills across secondary school. Findings from confirmatory factor analysis and structural equation modeling showed that a bifactor model with a general factor and four specific factors (Presentation of Ideas, Evidence Use, Language Use, and Historical Thinking) best represented writing in this genre, with the general factor strongly predicting holistic writing scores. Implications for both research and educational practice are discussed, including the importance of attending to developmental variation in discrete writing skills.

U.S. National Assessment of Educational Progress (NAEP) results indicate secondary students in the United States encounter challenges while responding to tasks involving source-based argument writing (Goldman & Scardamalia, 2013; National Center for Education Statistics [NCES], 2015), including interpreting what the task is requesting, presenting both sides of an argument, and supporting arguments with evidence and reasoning (Anmarkrud et al., 2014; Du & List, 2020; Goldman & Scardamalia, 2013; List et al., 2019; National Council for Social Studies [NCSS], 2013). Given these challenges, U.S. policymakers have increasingly emphasized the development of students’ argument writing skills across the content areas (e.g., history and science) to prepare students for the demands of a 21st-century information society (Hillocks, 2011). The Common Core State Standards (CCSS), for example, emphasize that learning to argue with evidence and reasoning is “essential to both private deliberation and responsible citizenship in a democratic republic” (National Governors Association Center for Best Practices & Council of Chief State School Officers, 2010, p. 3).

Because policymakers and researchers in the United States emphasize that effective literacy instruction is predicated on knowledge of students’ strengths and challenges (Graham et al., 2016), the emphasis on increased argument literacy across content areas must be accompanied by nuanced assessment of how well students can communicate arguments within these disciplines (Goldman et al., 2016). Such assessment must attend to variation in how disciplines conceptualize effective communication, which stems from their distinct purposes, norms, standards for evidence and reasoning, and practices for making knowledge claims (Langer, 2011; Moje, 2008; Shanahan & Shanahan, 2008, 2012).

For example, in history, students analyze evidence from multiple sources to make “historically or empirically situated interpretation[s]” (CCSS, Supplemental Appendix A, p. 23); key disciplinary practices include generating interpretive claims about the past using documentary evidence, reasoning, and conceptual lenses like continuity and change or cause and effect (Goldman et al., 2016; Langer, 2011; Moje, 2008; Monte-Sano, 2010; NCSS, 2013; Nokes & De La Paz, 2023). While students coordinate disciplinary skills and knowledge to write effective academic arguments tailored to the norms for historical inquiry, students also use general writing skills and knowledge to meet the rhetorical and disciplinary demands of a task (De La Paz et al., 2017; Hayes, 2012; Hillocks, 2011; MacArthur et al., 2019; McCutchen, 2006; Monte-Sano & De La Paz, 2012; NCES, 2015).

Because of its complexity and importance for secondary students, a better understanding of source-based argument writing in history—its dimensions, how dimensions relate, and which aspects students find most challenging—is of interest to researchers, educators, and policymakers in the United States so that they can design instruction and learning contexts to develop disciplinary literacy and prepare students for college and career readiness (Applebee & Langer, 2011; NCSS, 2013).

Recent studies that have assessed how students write arguments in history have independently measured general aspects of argument writing, like cohesion, alongside disciplinary literacy skills like sourcing documents (De La Paz et al., 2017; Monte-Sano, 2010; Steiss et al., 2024). In addition to attending to the general and disciplinary dimensions of source-based writing in history, researchers would benefit from a nuanced understanding of how these features relate to each other so that they can better measure, understand, and improve student writing (NCES, 2015). In addition, examining differences between middle and high school students can present a developmental portrait of writing that can be used to design precise and tailored writing instruction in history. While numerous studies have examined the reading and thinking of students in history, few studies have examined student writing, its discrete parts, and how they relate (Monte-Sano, 2012; Pessoa et al., 2019). To this end, the present study examined U.S. secondary students’ source-based argument writing (SBAW) in history, using 207 writing samples of secondary students from the Southwest United States. We examined (1) key differences in writing performance across middle (ages 10-14) and high school students (ages 14-18) in history, (2) the dimensions of secondary students’ SBAW in history and their relations, and (3) the relations of the identified dimensions of SBAW in history to holistic writing scores.

Literature Review

Source-based argument writing in history

In the United States, assignments to write arguments in history class typically require students to respond to a historical inquiry question using multiple sources to construct a plausible and evidence-based interpretation of past events (Goldman et al., 2016; Monte-Sano, 2010, 2011; Nokes & De La Paz, 2023). Such writing requires higher-order reasoning aligned with norms for substantiating knowledge claims in a discipline, such as sourcing, contextualization, and corroboration (Kim, 2020; Kim & Graham, 2022; Moje, 2008; Wineburg, 1991). Sourcing means attending to information about where, who, or when a document/text/media was created to assess its relevance and reliability. Corroboration refers to the intertextual process of comparing information across sources (e.g., whether two sources [dis]agree on some key point). Contextualization involves situating sources, actors, and events within their temporal and spatial contexts to understand their perspectives and relevance to inquiry. These skills allow writers to make accurate and persuasive arguments in response to historical questions (Britt & Aglinskas, 2002).

The challenges of integrating evidence that is relevant and reliable and analyzing evidence are well documented in academic writing research (Breakstone et al., 2013; De La Paz et al., 2012; Goldman & Scardamalia, 2013; Monte-Sano, 2010; Wiley & Voss, 1996). The use of sources can be difficult even for students who are proficient in other types of writing, like narrative, because it requires reading and synthesizing source information, integrating information from sources with prior knowledge, and distinguishing one’s own ideas from source information while writing (Cho et al., 2023; MacArthur et al., 2023; Monte-Sano, 2010; Traga Philippakos, 2022).

Because historical writing constructs meaning from facts with no clear answers, disciplinary norms of U.S. academic history writing require writers to position their interpretations of events as tentative, unconfirmed, and liable to be disproved with countervailing evidence (Bain, 2006; Breakstone et al., 2013; Monte-Sano & De La Paz, 2012; Wineburg, 1991). Therefore, acknowledging and determining the validity of counterclaims is a crucial part of source-based arguments in history that must be addressed in writing evaluation and instruction (Bain, 2006; Goldman et al., 2016; Monte-Sano, 2010, 2012; Monte-Sano & De La Paz, 2012; Nokes & De La Paz, 2023; Wineburg, 1991).

Researchers have observed variations in how students use evidence across developmental levels in writing in multiple subjects, noting younger students in Grades 6-8 are especially challenged by using and interpreting evidence (Correnti et al., 2020; Wang et al., 2018). As students get older, they are somewhat more able to cite textual evidence from primary and secondary documents and reason with evidence in history and other disciplines (CCSSI, 2012; Goldman et al., 2016; NCSS, 2013). Still, researchers note that middle and high school students alike struggle to make sophisticated interpretations of the documentary evidence during inquiry tasks and argumentation (Wineburg, 1991, 2001). While sometimes referred to as digital natives, young people who have grown up in a digital information society saturated with sources may not necessarily possess the skills to evaluate the sources, synthesize ideas across sources, use sources in arguments, and interpret sources to make claims (MacArthur et al., 2023; Rouet et al., 2017; Steiss et al., 2024). A further challenge for many writers is balancing purposeful summary, evidence, and commentary (Olson et al., 2023).

Though research has found that older students can learn disciplinary strategies for meaning-making in history (Jay, 2021; Reisman, 2012), several studies note that middle and high school students typically read and respond to historical texts without using disciplinary skills like corroboration, sourcing, and contextualization (Goldman et al., 2016; van Boxtel & van Drie, 2012; Wineburg, 1991). Studies have typically examined the writing of middle or high school students separately (Monte-Sano, 2008; Pessoa et al., 2019). Directly examining differences between middle and high school students’ writing in a single study could have important curricular and instructional implications. Additionally, some research frames historical thinking as a separate, dissociable dimension of writing quality in history (De La Paz et al., 2017; Du & List, 2020; Monte-Sano, 2012; Pessoa et al., 2019); the present study tests whether the data support this view.

Assessing source-based argument writing in history

When measuring writing quality, one must consider, among other concerns, (1) how the multidimensionality of the writing construct is represented and (2) the context in which writing occurs. The complex and multidimensional nature of the writing construct has been explicitly recognized in the direct and indirect effects model of writing (DIEW), a theoretical model that highlights the importance of measurement of constructs and their implications (Kim & Graham, 2022).

Writing quality in large-scale assessment is often evaluated via holistic scoring (assigning a single score) or analytic scoring (evaluating discrete skills) (MacArthur et al., 2019; Olinghouse et al., 2015). Holistic evaluation assumes that writing is a unidimensional construct, whereas the analytic evaluation approach presumes that writing comprises multiple dimensions (e.g., structural organization, language use, and conventions). Whether a holistic or analytic view of writing quality is adopted, the aspects of writing subject to evaluation vary based on the developmental stage of the writer (e.g., secondary students), the register of the writing (e.g., narrative vs. argument writing), and the writing task (e.g., causal analysis of a historical event), because different tasks require different cognitive, social, and rhetorical solutions (Graham, 2018; Kim & Graham, 2022; Wagner et al., 2011).

Analytic scoring rubrics often include a subset of traits such as argument, evidence, and style. On scoring rubrics, presenting ideas is often described as distinct from language-based features of writing—syntactic complexity, lexicon, the use of appropriate tone and register, and the use of conventions—in multiple disciplines and genres (Kim et al., 2015; Northwest Regional Educational Laboratory [NREL], 2011; National Writing Project, 2005, 2010; Steiss, 2022; Troia et al., 2019; Wilson et al., 2017).

Presenting ideas is also viewed as separate from evidence use in source- or text-based genres (Correnti et al., 2020; Wang et al., 2018; Steiss et al., 2022). In history, the presentation of ideas may be referred to as substantiation—how well the writing offers explanations in support of a claim (De La Paz et al., 2017; Monte-Sano, 2010).

While writing rubrics sometimes distinguish Organization or Structure from Ideas, a view of structure and ideas as separate writing factors has yet to be validated to our knowledge (Steiss et al., 2022). The presentation of ideas may include what is said and how it is communicated. Organizational elements like introductory and concluding paragraphs state and restate main ideas. Distinct body paragraphs substantiate claims with supporting claims, evidence, and reasoning. In this way, structure allows ideas to be presented and developed clearly for readers. Therefore, ideas and structure could be a single construct in writing (Kim et al., 2015; Steiss et al., 2022; Wagner et al., 2011). Whether these writing dimensions are separate or singular in historical argumentation has implications for assessment and instruction.

Methods

Study Context

This study took place at the beginning of a writing intervention to improve secondary students’ SBAW through improved teacher knowledge and instruction. Participants came from two urban school districts in the southwest United States that partnered with a university to improve student writing. Before the study, history teachers in both districts (N = 78) were surveyed about their beliefs, experiences, preparation, and practices around teaching writing. There were no significant differences between teachers across districts. In both districts, history teachers had little preparation and experience teaching writing and more than half of the teachers reported no preparation (Tate & Collins, 2022).

Though state standards emphasized developing writing skills in history and both districts used curricular materials featuring document-based questions, more than half of teachers reported teaching writing less than 30 minutes weekly. Additionally, most writing assignments were brief summaries or note-taking—extended writing assignments were uncommon. The teachers’ preparation and experiences in writing instruction are similar to others in the United States (Applebee & Langer, 2011; Kiuhara et al., 2009).

Across both districts, we used district liaisons to recruit 24 teachers to voluntarily participate in the intervention. Liaisons recruited teachers from the general track of classes (i.e., non-Advanced Placement) and from each grade level. Teachers were compensated for their involvement. They selected one class to participate, choosing the class that was (1) most like other grade-level classes in the district and (2) featured students with different language proficiencies (as defined by school district English language status guidelines). We did not survey individual teachers about their specific writing practices because writing data come from the beginning of the school year before any substantive writing instruction took place.

Participants

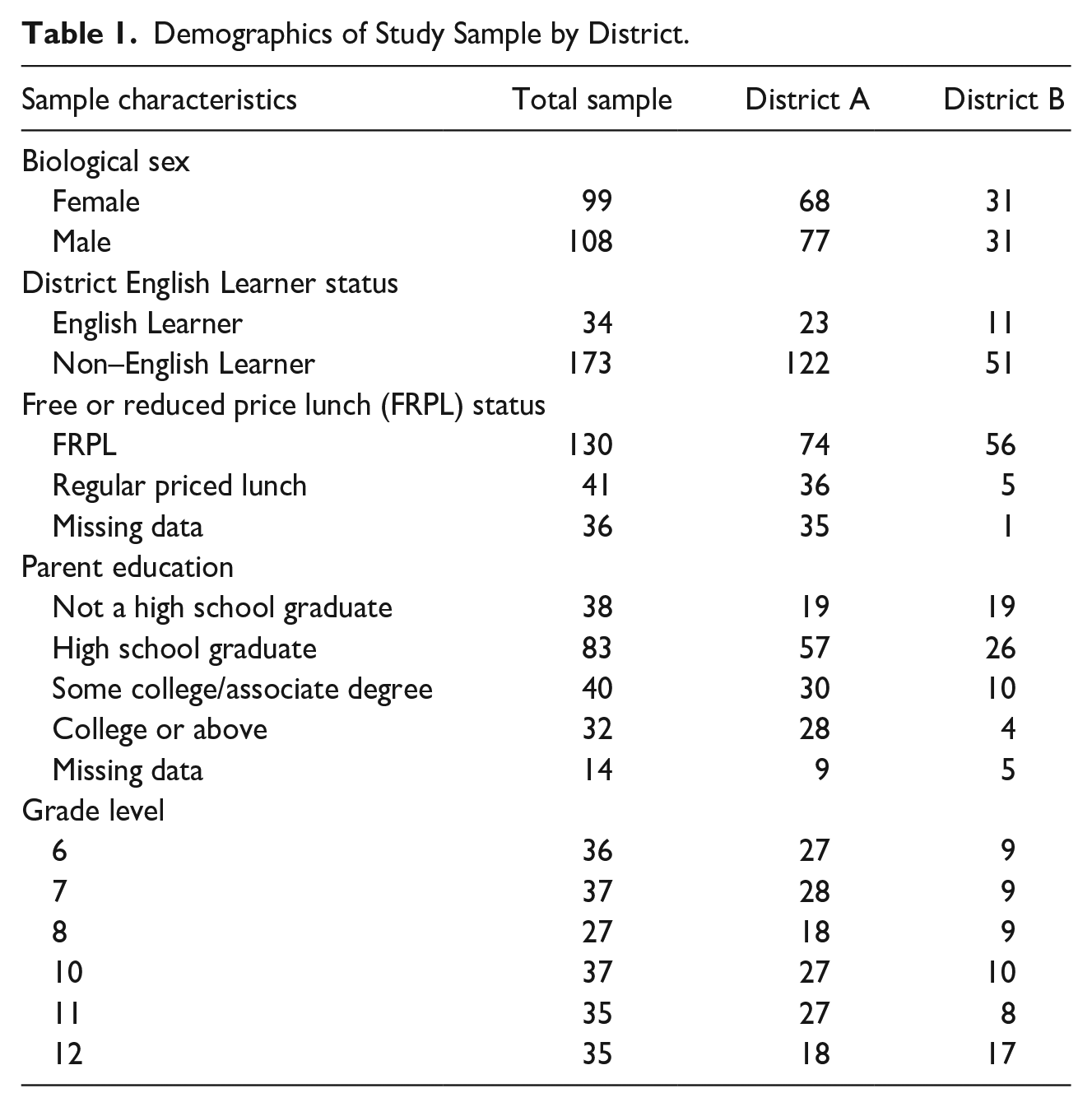

Participants in the study included 207 secondary students from the two urban school districts (District A and District B). The students came from the classrooms of 24 teachers, across Grades 6, 7, 8, 10, 11, and 12, who were recruited to participate in the intervention (no history classes were offered in Grade 9). At each grade level, three teachers in District A and one teacher in District B (4 teachers per grade level) participated. Table 1 shows participants’ characteristics.

Table 1. Demographics of Study Sample by District.

For each of the four teachers at each grade level, researchers used stratified random sampling to select 9 student writing samples for analytic coding, resulting in 36 student writing samples per grade. Sampling procedures first blocked students by gender and then by English language status to ensure that an adequate number of students with varying proficiencies in English were included. The school districts determined English language designations based on U.S. Department of Education testing designations and teacher referrals (California Department of Education, 2024). In the sample, approximately 16% of the students were designated as English Learners (ELs), 27% were designated as Reclassified Fluent English Proficient (RFEP), and 57% were designated as English Only or Initially Fluent English Proficient (IFEP). The percentages of English learners in the sample were similar to districtwide percentages for each district.
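The blocking procedure described above can be illustrated with a minimal Python sketch. It assumes a round-robin draw across gender-by-language-status strata within one classroom; the study does not report its exact allocation rule, so the function `stratified_sample`, the roster fields, and the seed are hypothetical choices made only for illustration.

```python
import random
from collections import defaultdict


def stratified_sample(roster, n_samples=9, seed=0):
    """Draw n_samples students, cycling through gender x EL-status strata.

    The round-robin allocation is an assumption for illustration; the
    study does not report how draws were allocated across blocks.
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    # Block students first by gender, then by English language status.
    for student in roster:
        strata[(student["gender"], student["el_status"])].append(student)
    # Randomize the order of students within each block.
    for members in strata.values():
        rng.shuffle(members)
    selected = []
    keys = sorted(strata)
    # Take one student per block in turn until enough are selected
    # or every block is exhausted.
    while len(selected) < n_samples and any(strata[k] for k in keys):
        for key in keys:
            if strata[key] and len(selected) < n_samples:
                selected.append(strata[key].pop())
    return selected


# Hypothetical classroom roster of 30 students (not study data).
roster = [
    {
        "id": i,
        "gender": "F" if i % 2 == 0 else "M",
        "el_status": ("EL", "RFEP", "EO/IFEP")[i % 3],
    }
    for i in range(30)
]
sample = stratified_sample(roster)
```

Under this assumed rule, every block that exists in a classroom contributes at least one essay whenever the classroom has nine or fewer blocks, which is one way to ensure that students with varying English proficiencies are represented.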

One eighth-grade teacher participating in the pilot study changed teaching assignments before the school year began and did not have her Grade 8 students participate in the intervention. Therefore, the stratified random sampling of 9 students over 23 classrooms resulted in a sample of 207 students used in the present study. Forty-eight percent of the students were female (n = 99). Sixty-three percent of the students (n = 130) were receiving free or reduced-price lunch (FRPL), 20% were not receiving FRPL (n = 41), and 17% of these data were missing (n = 36). One hundred of the students were in middle school, and 107 were in high school. The districts did not provide the racial/ethnic composition of the students. Seventy-three percent of students received FRPL in District A, and 70% of students received FRPL in District B. Approximately 70% of the students in both districts were Hispanic/Latinx. Finally, the variable parent education reported the highest degree attained by either parent. The sample had the following: 18% not a high school graduate, 40% high school graduate, 19% some college, 15% college graduate, and 7% not responding. All data were accessed and used in accordance with the University of California, Irvine Institutional Review Board (2019-5085).

Measures

Source-based argument writing task

Students were randomly assigned at the classroom level to respond to one of two source-based analytical writing prompts. The tasks, topics, and sources were not previously discussed with students. Each prompt asked students to read four sources about a historical topic and write an argument of causal analysis. Students wrote arguments in response to one of the following questions: (1) How did the Montgomery Bus Boycott succeed? (n = 99), or (2) How did the Delano Grape Strike and Boycott succeed? (n = 108). Both prompts emphasized constructing interpretations of the past using multiple primary and secondary sources and reasoning, key writing skills emphasized in history classrooms as well as in the U.S. Common Core State Standards Initiative (CCSSI, 2012; Goldman et al., 2016; Monte-Sano, 2011, 2012; Wiley & Voss, 1996; Wineburg, 1991). Although the questions appear explanatory rather than argumentative, they are aligned with norms for historical reasoning and argumentation (Goldman et al., 2016; Monte-Sano, 2010, 2011; Nokes & De La Paz, 2023; Wiley & Voss, 1996). Monte-Sano and Allen (2019) argued that asking students “What was the most significant cause for the success of the movement?” may be more argumentative but is ahistorical. Sound historical thinking holds that multiple forces of varying influence contribute to change and consequence (Seixas & Morton, 2012). Monte-Sano and Allen thus recommended adding explicit directions to argue to the prompt while maintaining the openness of the inquiry question to increase argumentative reasoning. We adopted these recommendations for the present writing task.

The prompts were designed over the previous year with multiple cycles of testing, analysis of writing samples, and integration of feedback from teachers and subject-matter experts in the fields of writing and history. The prompts featured background information, the essential question, four sources, and the writing prompt. We modified sources by creating distinct boundaries between sources (i.e., placing source text in boxes and on separate pages), eliminating extraneous vocabulary, modifying length, eliminating irrelevant proper nouns, changing syntax, including a headnote at the top of the source with context and background, and including a source note at the bottom of the source with relevant author info, audience, date, and genre to help students evaluate reliability (Britt & Aglinskas, 2002; Wineburg & Martin, 2009). The same text sets and prompts were used for middle and high school students so that a comparison between age levels could be made. The average Lexile score for both prompts (including sources) was 1,000 L-1,200 L (Grade Level Band 8-9). The prompts had 1,205 and 1,240 words respectively. The prompt, “How did the Montgomery Bus Boycott succeed?” was adapted from a similar lesson created by the Stanford History Education Group (Stanford History Education Group, n.d.). See Supplemental Appendix C for the writing prompts.

Classroom teachers administered the writing assessments across two consecutive 50-minute class periods as part of normal classroom instruction. We provided teachers with detailed instructions on how and when to administer the assessment, including not allowing students to work on the assessment outside of class. Students received the prompt and sources on Day 1. If students finished reading on Day 1, they could plan or make an outline for Day 2. On Day 2, students were instructed to read the writing prompts and write an essay using the sources and any notes/outlines they made the day before.

All students responded to the prompt using Google Docs and typed their essays. All students were familiar with using Google Docs and keyboards were provided. Students had an option to hear the sources read aloud. Approximately 6 weeks after completing the writing, teachers received holistic scores for each student’s writing and each student received individualized formative feedback. Teachers shared feedback with students and had them create writing goals as part of the larger intervention.

Holistic scores

Trained evaluators assigned holistic scores to student writing using a holistic scoring rubric. The rubric was developed using rubrics for SBAW in history from the research literature and was shared with subject-matter experts in the field to assess content validity (De La Paz & Monte-Sano, 2012; Monte-Sano, 2010, 2012; NWP, 2005, 2010; NREL, 2011). The rubric used a scale of 1-6, with 1 indicating “no evidence of achievement” and a 6 indicating “exceptional achievement.” The holistic rubric captured all criteria related to proficient SBAW in history. See Supplemental Appendix A for the complete rubric.

During the summer of 2022, we trained 18 raters to use the holistic rubric using anchor papers and discussion of key features of essays representing each of the six score categories (1 through 6). Raters were secondary literacy and history teachers or graduate students majoring in education or history. Raters were recruited so that the large volume of essays in the intervention could be scored efficiently and accurately. Two raters scored each essay. Absolute agreement ICC (using a two-way random effect model) was .923 for the two double-coded scores. The average agreement within 1 point was 89% for the evaluators (De La Paz et al., 2012; Kuhn et al., 2016; MacArthur et al., 2019; Troia et al., 2019). Scores that disagreed by more than 1 point were scored by a third evaluator.
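To make the reliability statistic concrete, the following is a minimal sketch of an absolute-agreement intraclass correlation under a two-way random effects model. It computes the single-rater form, often written ICC(2,1) or ICC(A,1); the study does not state whether single or average measures were reported, so that choice is an assumption, as are the function name `icc_absolute_single` and the example scores.

```python
def icc_absolute_single(scores):
    """ICC(2,1): two-way random effects, absolute agreement, single rater.

    scores: list of rows, one row per essay and one column per rater.
    The single-rater form is assumed here for illustration.
    """
    n = len(scores)          # number of essays
    k = len(scores[0])       # number of raters
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]
    # Partition the total sum of squares into essay, rater, and error parts.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    # Rater mean differences count against absolute agreement.
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Hypothetical holistic scores (1-6) from two raters on six essays.
example_scores = [[4, 4], [2, 3], [5, 5], [3, 3], [6, 5], [1, 1]]
icc_value = icc_absolute_single(example_scores)
```

Because the absolute-agreement form penalizes systematic differences between raters, a rater who consistently scores one point higher than a partner lowers the coefficient even when the two rank essays identically.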

Analytic coding

While holistic scores indicate the overall quality of writing (Schipolowski & Böhme, 2016), for the present study trained coders used an analytic framework to evaluate proficiency in discrete aspects of students’ writing (e.g., the quality of reasoning or how well the writing attributes evidence to sources). Because writing quality may be a multidimensional construct where student performance across various dimensions differs, using an analytic framework can reveal strengths and weaknesses of student writing in a way that is valuable to evaluators, researchers, and teachers (Olson et al., 2023; NREL, 2011; Steiss et al., 2022).

By measuring proficiency across discrete but related skills, we can better understand specific challenges of student writing and tailor instruction to students’ needs. For example, we might find that students in grade 7 are particularly challenged by presenting a strong claim and that the quality of claims is strongly related to other aspects of writing proficiency. Further, an analytic framework can be used to describe the dimensionality of writing in a genre, how these dimensions develop, and how they relate to each other and to holistic scores. Finally, using latent variables consisting of multiple items can decrease measurement error and increase the content validity of the intended construct (DeVellis, 2021).

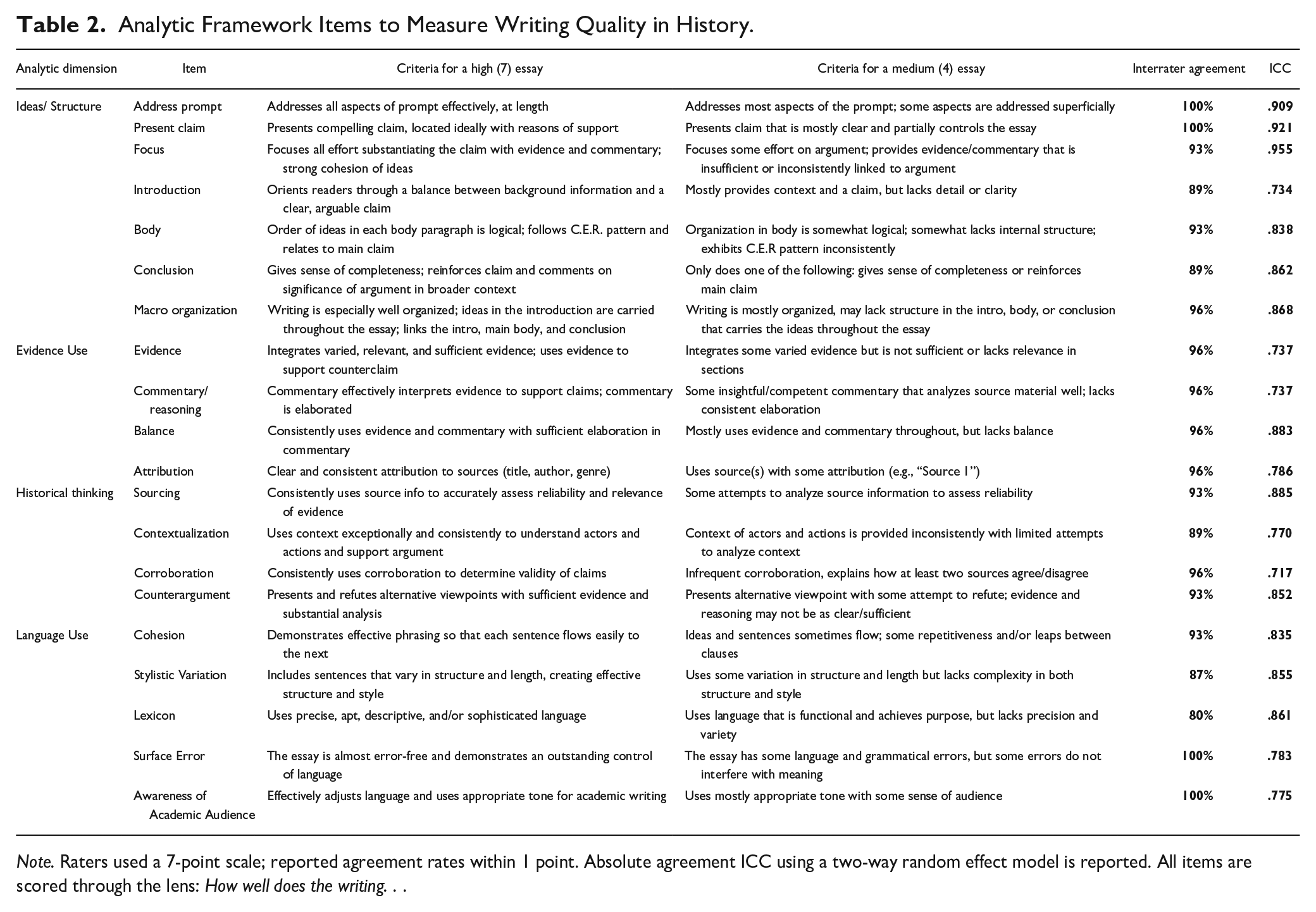

To create a reliable and valid analytic framework, the research team generated a comprehensive list of 20 items to separately measure key aspects of SBAW quality in history as reflected in the research discussed previously and extant writing rubrics evaluating the quality of writing in history (Goldman et al., 2016; Monte-Sano, 2010, 2012; Monte-Sano & De La Paz, 2012; NREL, 2011; NWP, 2005/2010). After several iterations of generating and applying items to student writing, the tool was shared with eight subject-matter experts in the field of secondary writing research who provided critique and feedback on the content validity of the framework (Anastasi & Urbina, 1997).

Individual coders scored each item on a scale of 1-7, with 1 indicating “not evident” and 7 indicating “highly effective” for a specific analytic item. All items were scored through the lens of how well the writing performed in that component of writing. The identities of student writers were blinded. Although the framework included criteria for every score from 1 to 7, we present descriptions for a “4” and “7” for each item in the interest of brevity in the table below.

To achieve suitable rates of interrater agreement, the research team (1) made individuals responsible for coding fewer than five items, (2) engaged in iterative cycles of coding to clearly define coding criteria and improve reliability over several months, (3) generated a list of anchor papers (MacArthur et al., 2019) that described each score (1-7) for every item, and (4) assigned another researcher to monitor each coder’s progress to ensure the content validity of their measurements. A training set of essays was used to calibrate coders before the present study. Then, coders jointly scored a shared set of 32 student writing samples to calculate interrater agreement rates (15% of the sample) (Gallagher et al., 2017). For all analytic items displayed in Table 2, agreement within a score point (on a 7-point scale) was considered acceptable (Bang, 2013; Gallagher et al., 2017; MacArthur et al., 2019; Troia et al., 2019). The average agreement within 1 point for all categorical items was 94%, and all agreement rates were above 80%. ICC ranged from .717 to .955.
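The agreement-within-one-point criterion can be sketched in a few lines of Python. The function name `agreement_within_one` and the scores below are hypothetical and serve only to show the calculation; they are not study data.

```python
def agreement_within_one(rater_a, rater_b):
    """Share of essays on which two coders' scores differ by at most 1 point."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("score lists must be non-empty and of equal length")
    close = sum(1 for a, b in zip(rater_a, rater_b) if abs(a - b) <= 1)
    return close / len(rater_a)


# Hypothetical scores on the 1-7 scale for eight essays on one item.
coder_1 = [4, 5, 2, 7, 3, 6, 1, 4]
coder_2 = [4, 6, 2, 5, 3, 6, 2, 4]
rate = agreement_within_one(coder_1, coder_2)  # 7 of 8 pairs are within 1 point
```

In this illustration only the fourth essay (scores of 7 and 5) falls outside the one-point band, so the rate is 7/8, or 87.5%.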

Table 2. Analytic Framework Items to Measure Writing Quality in History.

Note. Raters used a 7-point scale; reported agreement rates within 1 point. Absolute agreement ICC using a two-way random effect model is reported. All items are scored through the lens: How well does the writing. . .

Analytic Approach

We used multivariate analysis of covariance (MANCOVA) to address the first research question examining differences in student writing across middle and high school. With analytic framework items representing dependent variables and middle school/high school (MS/HS) as the key independent variable, the MANCOVA also controlled for gender, English learner (EL) status, free or reduced-price lunch (FRPL) status, and parent education. Pillai’s trace statistic was used because it is robust to unbalanced group sizes, nonnormality, and heterogeneous variances. Because multiple dependent variables (20 in total) were included in the analyses, the Benjamini-Hochberg procedure was used to control the false discovery rate and limit Type I error (Benjamini & Hochberg, 1995).
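For readers less familiar with the test statistic, Pillai’s trace can be written as follows. This is the standard textbook definition rather than a formula taken from the study; the symbols $\mathbf{H}$, $\mathbf{E}$, $\lambda_i$, and $s$ are notation introduced here for illustration.

$$
V = \operatorname{tr}\left[\mathbf{H}\,(\mathbf{H} + \mathbf{E})^{-1}\right] = \sum_{i=1}^{s} \frac{\lambda_i}{1 + \lambda_i}
$$

Here $\mathbf{H}$ is the hypothesis sums-of-squares-and-cross-products matrix for the MS/HS effect after adjusting for the covariates, $\mathbf{E}$ is the corresponding error matrix, $\lambda_i$ are the nonzero eigenvalues of $\mathbf{E}^{-1}\mathbf{H}$, and $s$ is the smaller of the number of dependent variables and the hypothesis degrees of freedom. Because MS/HS is a two-group factor with one degree of freedom, $s = 1$ and $V$ reduces to $\lambda_1 / (1 + \lambda_1)$. Under the common definition of multivariate partial $\eta^2$ as $V/s$, the effect size then equals Pillai’s trace itself.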

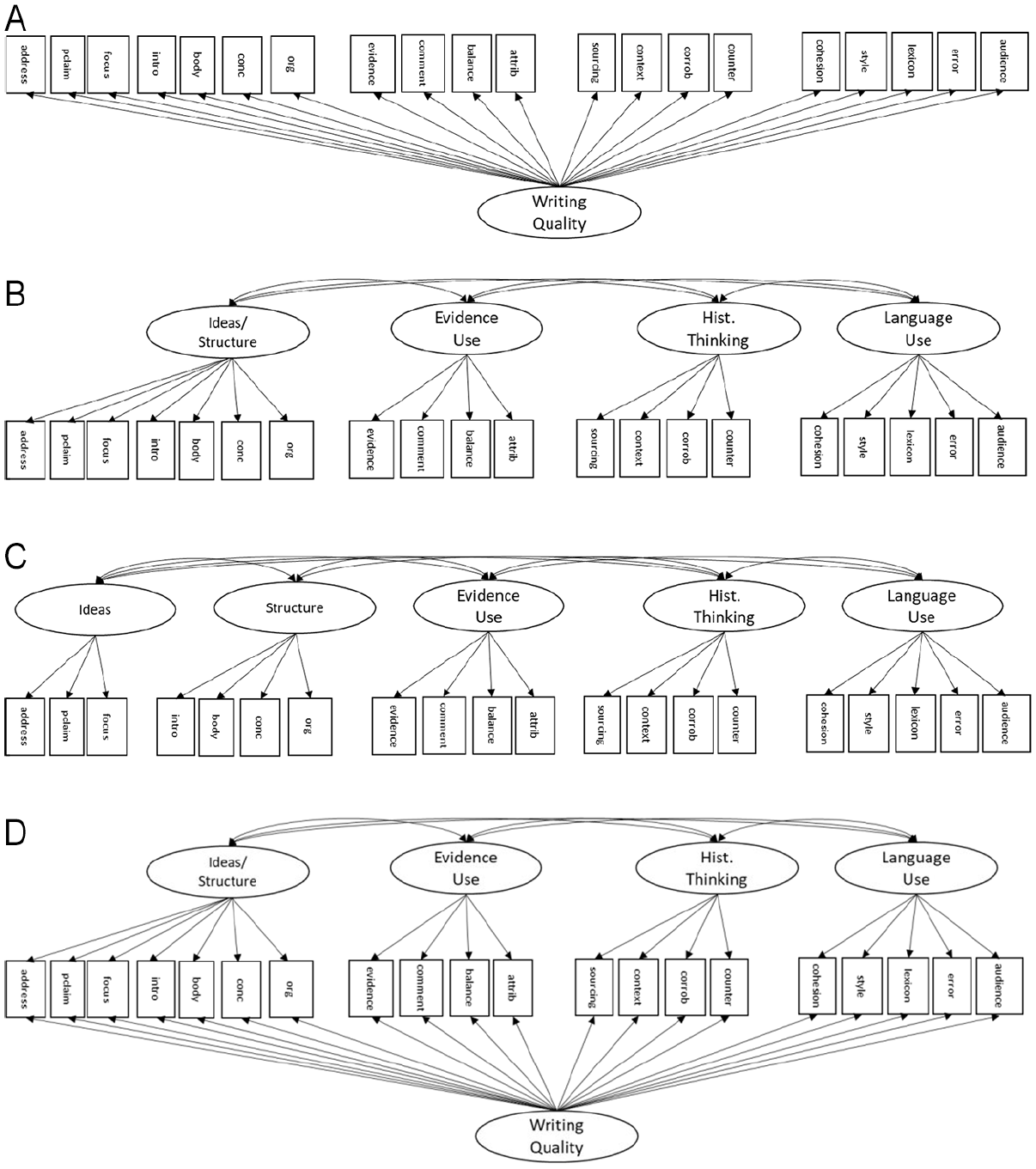

We used confirmatory factor analysis (CFA) to answer the second research question regarding the dimensionality of secondary students’ source-based argument writing (SBAW) in history. CFA was conducted using Mplus 8.4 (Muthén & Muthén, 2017) with the weighted least square mean and variance adjusted (WLSMV) estimator. Four competing alternative confirmatory factor models shown in Figure 1 were fitted to the data, with items from the analytic framework used as indicators for the latent constructs.

Figure 1. Competing models of SBAW in history. The items comprising the latent factors in each model match the items in the analytic coding framework in Table 2.

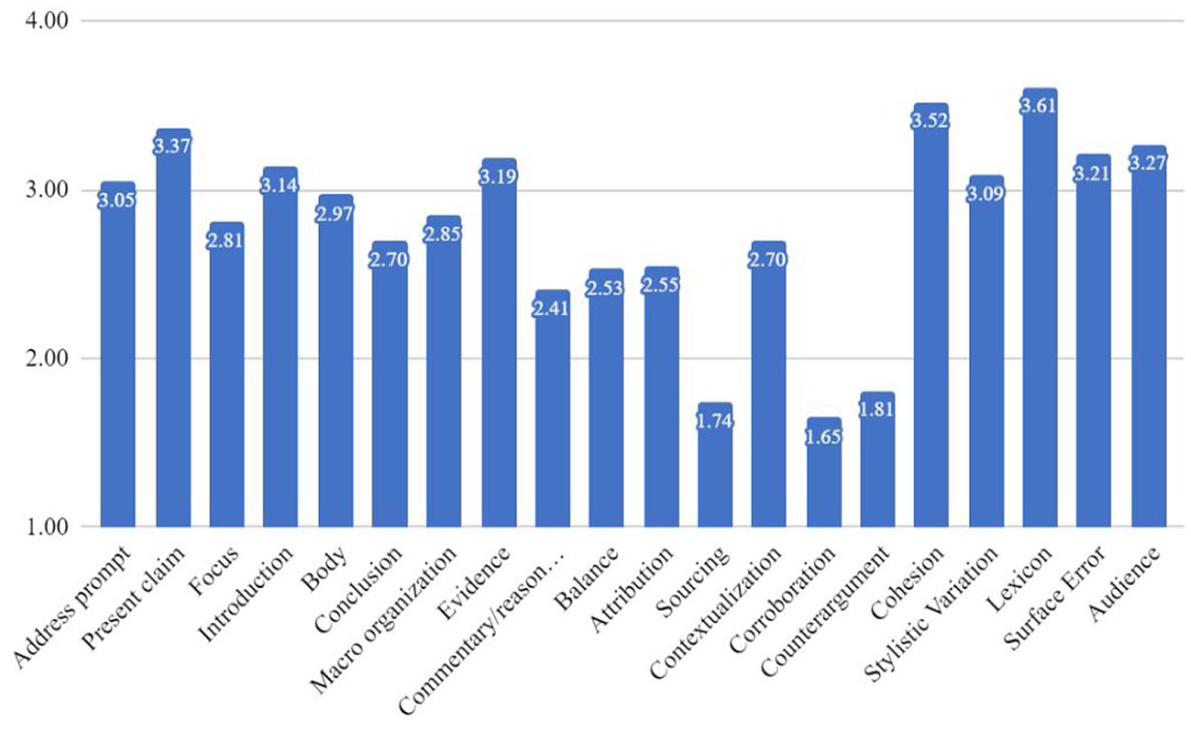

Figure 2. Average performance for analytic framework items for all students.

The first model, a baseline model, was a unidimensional model, where SBAW in history is a single construct that reflects all the items in the analytic framework (Figure 1a). The second model (Figure 1b) tested a four-factor model, where Ideas/Structure, Evidence Use, Historical Thinking, and Language Use are dissociable, but related, dimensions of writing quality. This model posits that the ideas and structure of an argument essay are too closely related to be dissociable constructs. The third model (Figure 1c) also tested the assumption of multidimensionality but represented Ideas and Structure as separate factors.

Finally, we tested a bifactor model (Figure 1d) with a general factor that reflects common variance among all the variables and four or five uncorrelated specific factors, depending on the relative fit of the four- and five-factor models. In a bifactor model, the general factor (overall writing quality) captures common variance across all the indicators while the specific factors, orthogonal to the general factor, help to explain variance that is over and above the general factor (Gibbons & Hedeker, 1992). Because these models were nested, chi-square difference tests (the DIFFTEST option in Mplus, which is required under WLSMV estimation) were used to examine whether model fit differed significantly across the models (Hu & Bentler, 1999; Kline, 2015).

For the third research question, examining how dimensions of SBAW in history relate to holistic scores, the best-fitting model identified in the second research question was used in a structural regression model with writing dimensions predicting the holistic score.
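The bifactor measurement model and the structural regression described above can be sketched in equation form. The notation ($x_i^{*}$, $G$, $S_k$, $\lambda$, $\alpha$, $\beta$, $\zeta$) is introduced here for illustration. It assumes that the analytic items are treated as ordered categorical indicators with underlying continuous responses, as is typical under WLSMV, and it is not taken from the study’s Mplus specification.

$$
x_i^{*} = \lambda_{iG}\,G + \lambda_{iS}\,S_{k(i)} + \varepsilon_i, \qquad \operatorname{Cov}(G, S_k) = 0, \quad \operatorname{Cov}(S_k, S_l) = 0 \ \ (k \neq l)
$$

$$
\text{Holistic} = \alpha + \beta_G\,G + \sum_{k} \beta_k\,S_k + \zeta
$$

Here $x_i^{*}$ is the latent response underlying analytic item $i$, $G$ is the general writing-quality factor on which every item loads, and $S_{k(i)}$ is the one specific factor to which item $i$ is assigned. The zero covariances express the orthogonality of the general and specific factors. In the second equation, $\beta_G$ and $\beta_k$ describe how strongly the general and specific factors predict the holistic score, and $\zeta$ is the residual.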

Findings

Student performance in key aspects of SBAW in history

Descriptive statistics for the full sample show that U.S. students across middle school and high school can advance claims, integrate evidence from sources, and organize their writing more successfully than other aspects of writing, such as sourcing documents, using commentary to interpret evidence, and presenting and refuting counterarguments.

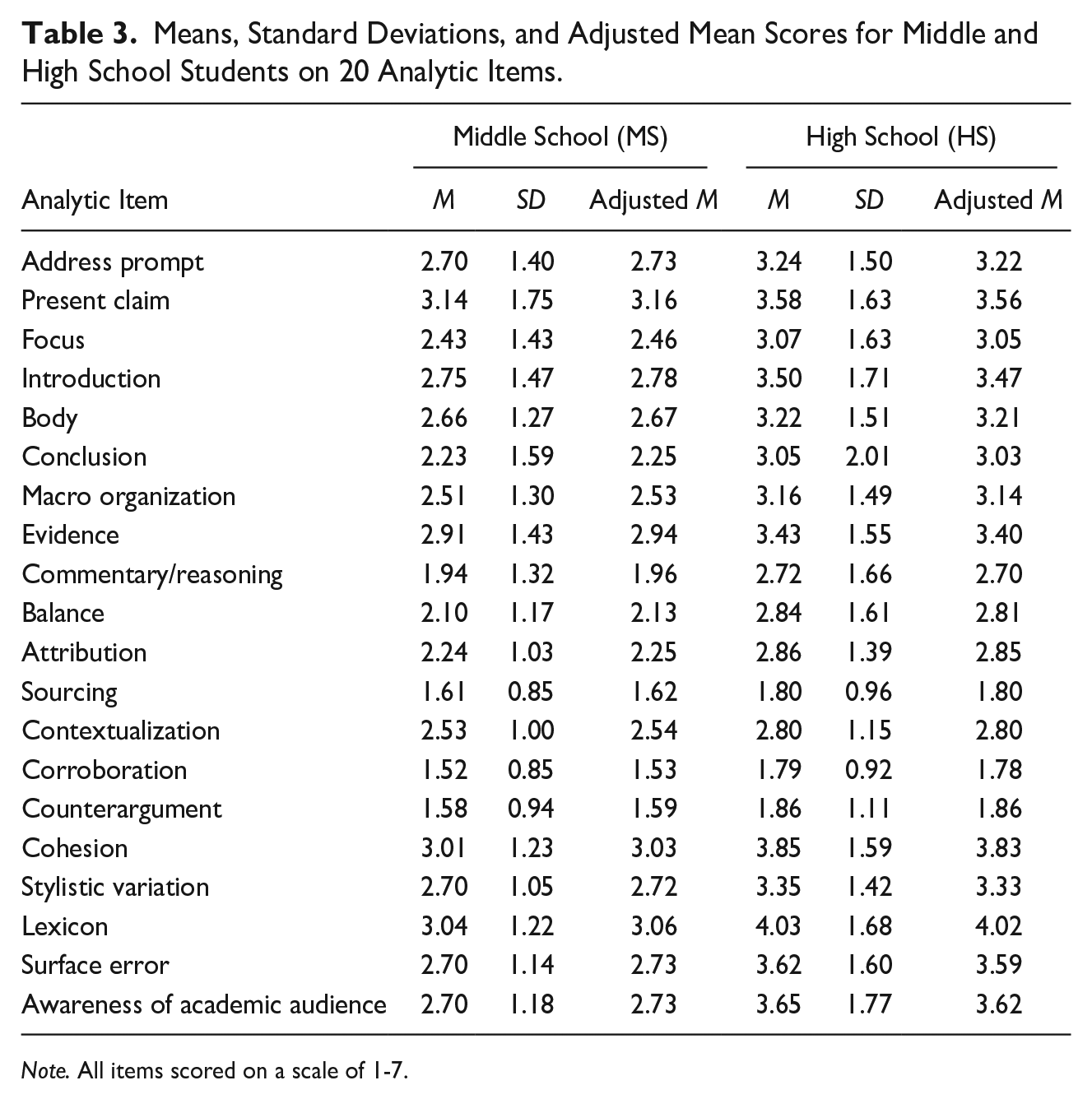

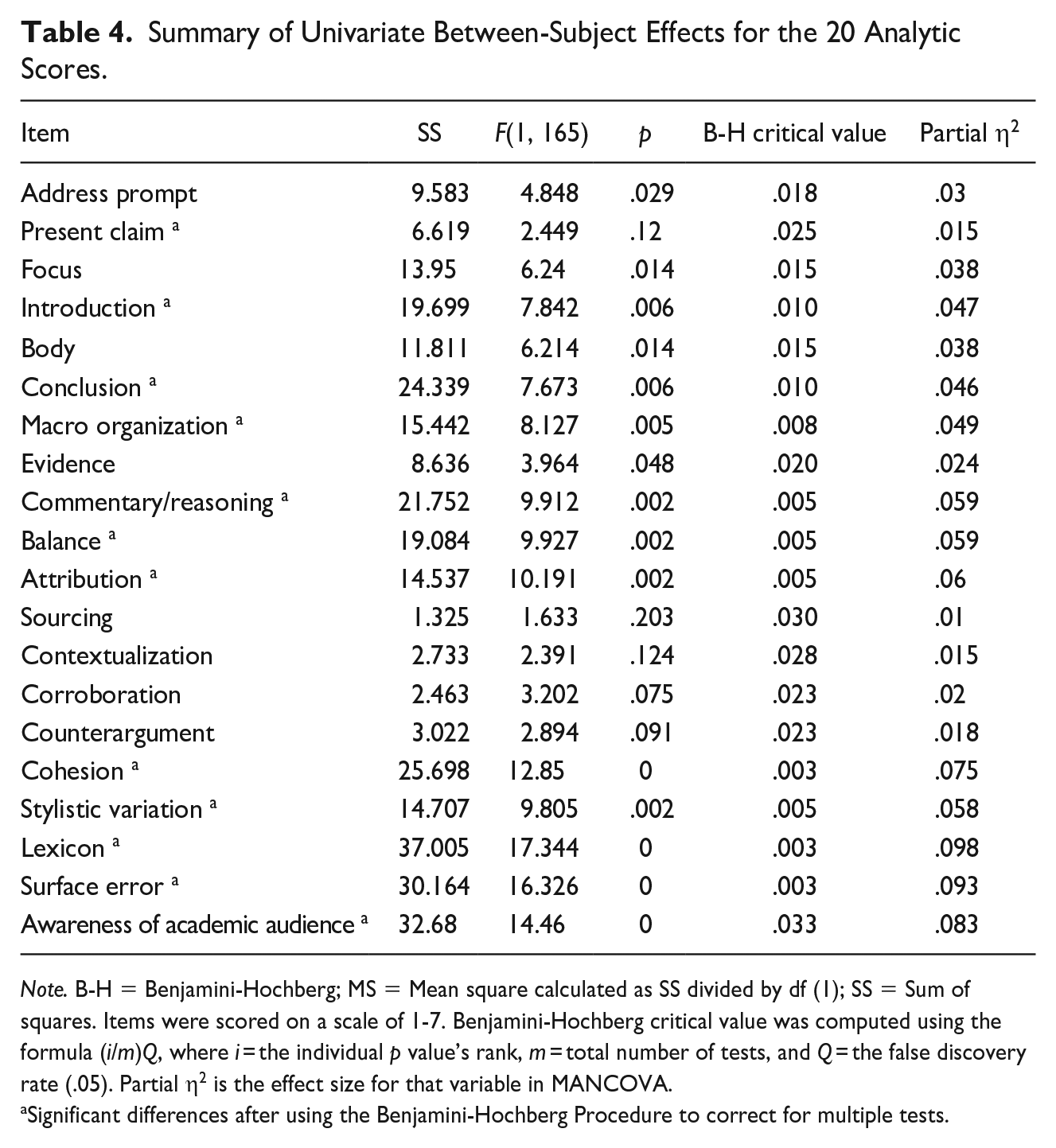

MANCOVA revealed significant differences between middle school and high school students in writing performance controlling for biological sex, EL status, FRPL status, and parent education (Pillai’s trace = .196, F(5, 159) = 1.71, p = .038, partial η² = .196). No significant differences were found between middle school and high school students on the other covariates. Table 3 shows mean scores and adjusted mean scores for each analytic item for middle school students and high school students.

Means, Standard Deviations, and Adjusted Mean Scores for Middle and High School Students on 20 Analytic Items.

Note. All items scored on a scale of 1-7.

Students in HS demonstrated significantly higher scores for items in the analytic framework related to structure, evidence use, and language use. For example, controlling for demographic variables, HS students scored .73 points higher on average than MS students in their commentary and reasoning, t(165) = 3.15, p = .002. We also observed significant differences in scores on how well students balanced summary, evidence, and reasoning, b = .69, t(165) = 3.15, p = .002, and how well students attributed evidence to sources, b = .60, t(165) = 3.19, p = .002.

The analytic items related to structure, except for organization of the “body” of the essay, also differed significantly between MS and HS students, with differences ranging from .48 to .77 on a 7-point scale. Differences on items related to the presentation of ideas (addressing the prompt, presenting a clear claim, and focusing on substantiating a claim) were no longer significant after applying the Benjamini-Hochberg procedure. Table 4 shows the results of MANCOVA for each writing outcome.

Summary of Univariate Between-Subject Effects for the 20 Analytic Scores.

Note. B-H = Benjamini-Hochberg; MS = Mean square calculated as SS divided by df (1); SS = Sum of squares. Items were scored on a scale of 1-7. Benjamini-Hochberg critical value was computed using the formula (i/m)Q, where i = the individual p value’s rank, m = total number of tests, and Q = the false discovery rate (.05). Partial η2 is the effect size for that variable in MANCOVA.

Significant differences after using the Benjamini-Hochberg Procedure to correct for multiple tests.
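The Benjamini-Hochberg critical value described in the table note, (i/m)Q, can be illustrated with a short sketch. The function name and the example p values are hypothetical and are not the values reported in Table 4; the sketch assumes the standard step-up form of the procedure, in which every test ranked at or below the largest rank meeting its critical value is treated as significant.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Return a list of booleans: True where a test is significant under B-H."""
    m = len(p_values)
    # Rank p values from smallest (rank 1) to largest (rank m).
    order = sorted(range(m), key=lambda idx: p_values[idx])
    # Find the largest rank i whose p value is at or below (i / m) * q.
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= (rank / m) * q:
            cutoff_rank = rank
    # Every test ranked at or below that cutoff is declared significant.
    significant = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff_rank:
            significant[idx] = True
    return significant


if __name__ == "__main__":
    # Hypothetical p values for five tests (not taken from the study).
    example = [0.001, 0.012, 0.035, 0.041, 0.300]
    print(benjamini_hochberg(example))
```

With these hypothetical inputs, the third and fourth tests fall below .05 but exceed their rank-based critical values, which mirrors how an item can lose significance once the correction is applied.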

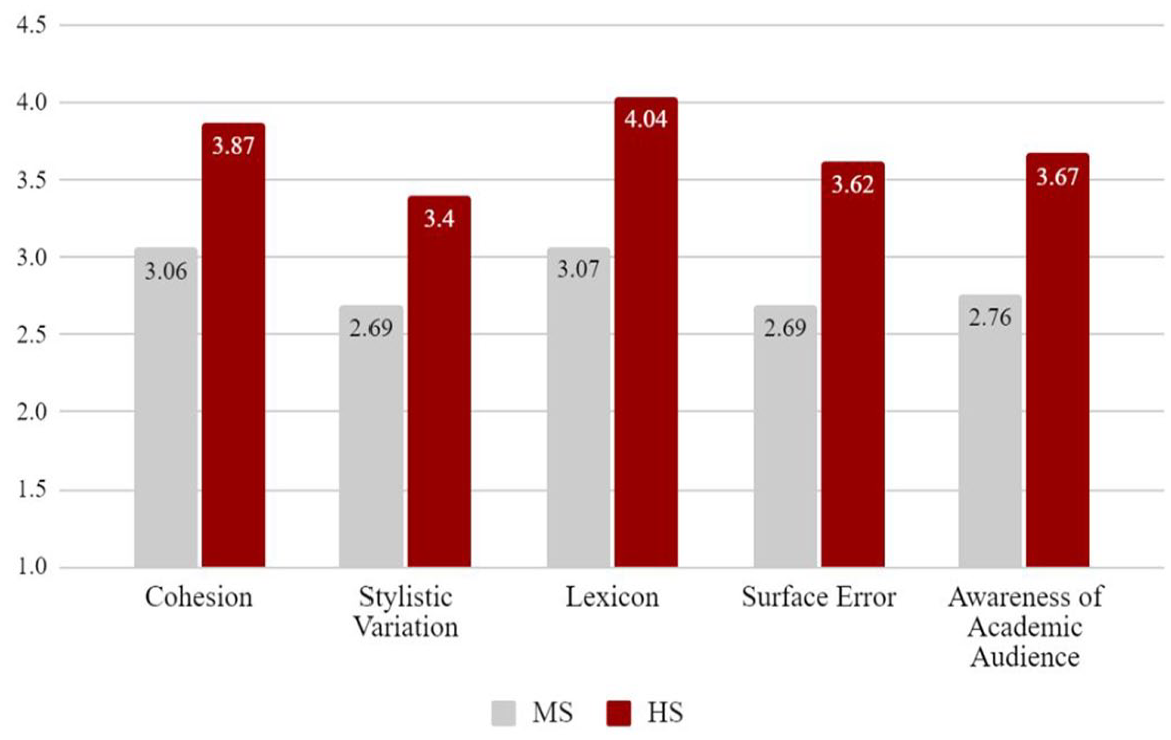

Differences between MS and HS students in language use were significant across all five items and ranged from .59 to .94, controlling for demographic factors. Figure 3 shows the differences between MS and HS students for language items.

Average scores for MS and HS students: language use.

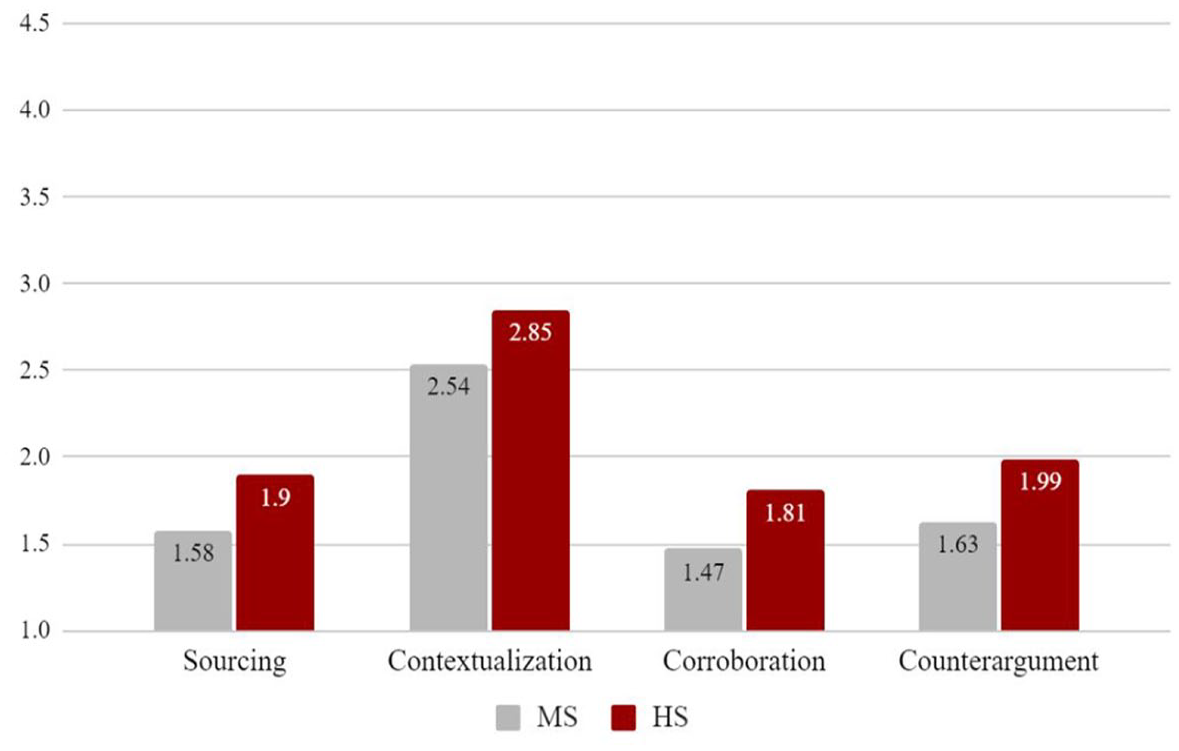

There were no significant differences between MS and HS students in how well students engaged in sourcing, contextualization, corroboration, or presenting and addressing counterarguments, as seen by the similar column heights in Figure 4.

Average scores for MS and HS students: historical thinking.

Dimensions of source-based argument writing in history

Before engaging in CFA, we assessed the normality and distributions of variables in the analytic framework. All variables were moderately or strongly related to each other. The distribution of scores, skewness, and kurtosis were all adequate. Bivariate correlations between variables are presented in Supplemental Appendix B.

At this stage, we made two changes. First, the variable “org,” which measured the overall organization of the writing, was removed from subsequent analyses because it was strongly correlated with many variables, especially “body,” which made the inclusion of “org” in CFA redundant. Substantively, this variable prompted a “holistic” evaluation of writing. Therefore, it was not appropriate to the purpose of the analytic framework.

Second, the variable “body” fit better under the Evidence Use factor. Bivariate correlations as well as discussions with coders using the analytic framework suggested skills related to evidence use were indeed being measured with this item. In the present genre and writing corpus, effectively structuring the body of an essay requires skills in integrating evidence and providing reasoning as these are the substantive components of the body. Therefore, the item “body” was set to load onto Evidence Use in the multidimensional and bifactor models.

After this respecification, difftests indicated that the correlated four-factor model (Figure 1b) fit the data well and fit better than the unidimensional model (Figure 1a) (p < .001). The chi-square difference test also indicated that the correlated five-factor model (Figure 1c) fit better than the four-factor model (p = .003). Despite the superior fit of the five-factor model, the four-factor model was selected for further analysis for two reasons: (1) previous research examining the dimensions of writing of secondary students suggested that Ideas and Structure are a single factor (Steiss et al., 2022); and (2) the Ideas and Structure factors in the five-factor model were correlated at .996, which is so high as to suggest that these are not distinct and dissociable constructs in writing. Lastly, the four-factor model still had a good fit, with standardized root mean square residual (SRMR), comparative fit index (CFI), and Tucker-Lewis index (TLI) values considered ideal and a root mean square error of approximation (RMSEA) value considered good (SRMR = .031; CFI = .994; TLI = .992; RMSEA = .083).

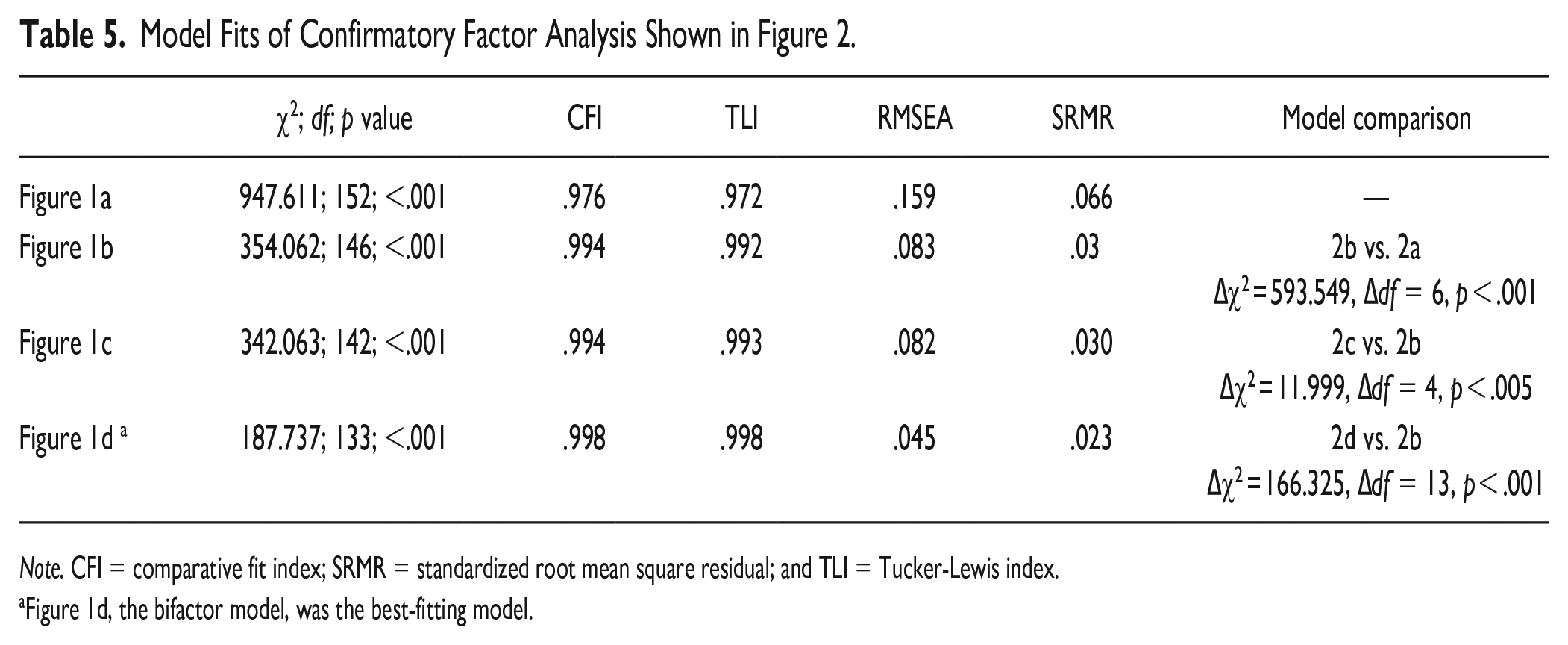

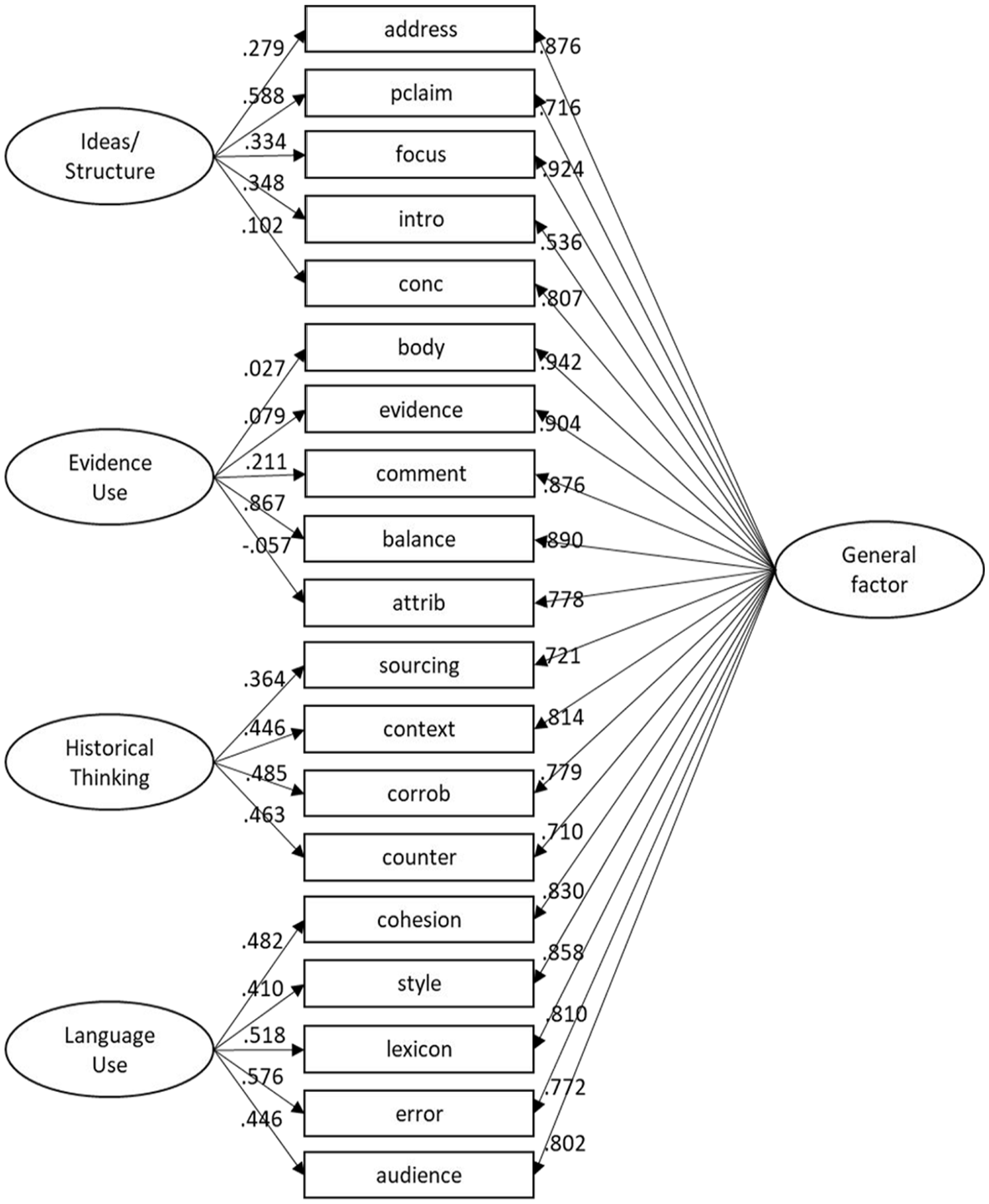

The four-factor model (Figure 1b) was then compared to a bifactor model (Figure 1d) with four specific factors and one general factor. The bifactor model was the best-fitting model overall. Model fits are reported in Table 5.

Model Fits of Confirmatory Factor Analysis Shown in Figure 2.

Note. CFI = comparative fit index; SRMR = standardized root mean square residual; and TLI = Tucker-Lewis index.

Figure 1d, the bifactor model, was the best-fitting model.

Table 5 shows that the fit for the final respecified model was excellent, and the difftest indicated a preference for the bifactor model over the four-factor model (p < .001). Figure 5 shows the final model.

Model of best fit for source-based argument writing in history.

In the final model, all factor loadings from indicators to the general factor were moderate or strong (.536 ≤ λ ≤ .964). Factor loadings from items to their respective specific factors ranged from weak to strong (−.057 ≤ λ ≤ .867). For the bifactor model, factor reliability was determined using coefficient omega (ω) (Reise, 2012). The general factor was very reliable (ω = .943), but all the specific factors, Ideas (ω = .126), Evidence Use (ω = .043), Historical Thinking (ω = .221), and Language Use (ω = .244), showed minimal reliability. Because of this low reliability, the specific factors could not be used in the subsequent structural regression model.
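The text above does not state which omega formula was used. As a hedged illustration, the sketch below computes one common formulation for bifactor models, omega hierarchical for the general factor and its subscale analogue for a specific factor, from standardized loadings. The loadings, factor labels, and function names are hypothetical and are not the estimates reported here.

```python
# Hypothetical standardized loadings, grouped by specific factor.
# Each pair is (general-factor loading, specific-factor loading).
ITEMS = {
    "A": [(0.70, 0.30), (0.75, 0.25), (0.80, 0.20)],
    "B": [(0.65, 0.40), (0.70, 0.35), (0.60, 0.45)],
}


def _unique(lam_g, lam_s):
    """Residual variance of a standardized item with orthogonal factors."""
    return 1.0 - lam_g ** 2 - lam_s ** 2


def omega_general(items):
    """Share of total-score variance attributable to the general factor."""
    sum_g = sum(lg for pairs in items.values() for lg, _ in pairs)
    specific = sum(sum(ls for _, ls in pairs) ** 2 for pairs in items.values())
    unique = sum(_unique(lg, ls) for pairs in items.values() for lg, ls in pairs)
    return sum_g ** 2 / (sum_g ** 2 + specific + unique)


def omega_specific(items, factor):
    """Share of a subscale's variance attributable to its specific factor alone."""
    pairs = items[factor]
    sum_g = sum(lg for lg, _ in pairs)
    sum_s = sum(ls for _, ls in pairs)
    unique = sum(_unique(lg, ls) for lg, ls in pairs)
    return sum_s ** 2 / (sum_g ** 2 + sum_s ** 2 + unique)


if __name__ == "__main__":
    print(round(omega_general(ITEMS), 3))
    for name in ITEMS:
        print(name, round(omega_specific(ITEMS, name), 3))
```

Under this formulation, strong general-factor loadings combined with modest specific-factor loadings produce a high omega for the general factor and low omegas for the specific factors, the same qualitative pattern described above.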

Relations of dimensions to holistic scores

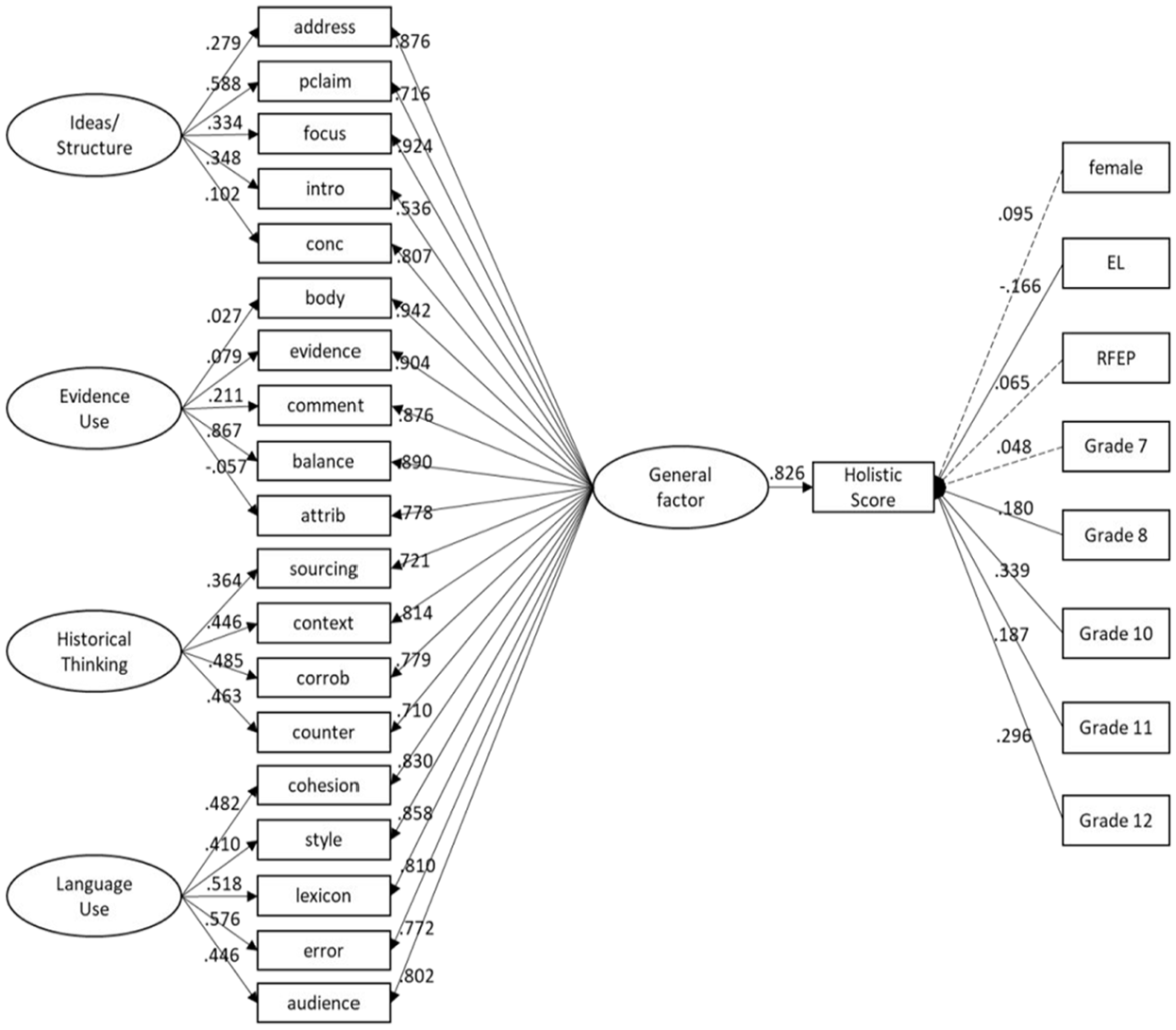

To examine the relations between dimensions of SBAW in history and holistic writing quality, a structural regression model was fitted to the data, controlling for biological sex, EL status, and grade levels (Figure 6).

Dimensions of writing predicting holistic scores while controlling for gender, English Learner (EL) status, and high school (HS).

In this model, the general factor strongly and significantly predicted holistic scores (b = .792, SE = .039, p < .001). Biological sex did not affect holistic scores. Students in grade 8 (b = .279, SE = .089, p = .002), 10 (b = .253, SE = .101, p = .012), and 12 (b = .428, SE = .102, p < .001) earned higher holistic scores than students in grade 6. Being an EL student significantly predicted lower holistic scores compared with other students (b = −.224, SE = .088, p = .011). Parent education did not affect holistic scores, though students in the FRPL program scored significantly lower (b = −.202, SE = .078, p = .009). Overall, the model explained 86.4% of the variance in holistic scores.

Discussion

Since demands on writing knowledge and skills vary depending on measurement characteristics such as task and discipline as well as evaluative methods (Kim & Graham, 2022), this study explored the performance of secondary students and systematically examined the dimensions of SBAW in a history context, as evaluated using an analytic evaluation framework, and their relations to holistic writing scores.

SBAW in History

The relative performance across items in the analytic framework indicates that U.S. middle school students in this study were largely in a knowledge-telling period for source-based history writing—that is, most students were summarizing or restating source material, as opposed to engaging in knowledge transformation to support a claim (Scardamalia & Bereiter, 1987). Given the key role of evidence and commentary/reasoning in this genre and middle school students’ scores, overall writing would improve if instruction could move them from knowledge-telling to knowledge-transformation in this genre (Olson et al., 2023). Instructional strategies such as engaging in dialogue with peers (having to justify or explain their thinking), explicit instruction in key cognitive strategies (e.g., Contextualization: Why did the handouts matter given the historical context?), and engaging students in the revision of their writing to add more reasoning may help develop their writing (Olson et al., 2023; Graham et al., 2016). Additionally, instructional approaches utilizing a C.E.R. (Claim. Evidence. Reasoning.) heuristic for writing the body of an essay seem appropriate given the strong relations between the quality of the body, evidence, and reasoning in the overall sample. High school students’ higher scores for commentary and items related to structure and language use indicate some development in these aspects of writing for older students. However, many high school students were challenged to provide elaborate reasoning.

Across MS and HS, some aspects of general argumentation (e.g., providing reasoning to support claims) and disciplinary thinking (e.g., sourcing and addressing counterarguments) were especially challenging (Goldman et al., 2016; Wineburg, 1991). The lack of significant differences between MS and HS students in their sourcing, contextualization, and corroboration affirms Wineburg’s (1991, 2001) claim that historical thinking is an unnatural act. Students do not enter classrooms with sophisticated disciplinary reading, thinking, and writing practices to make arguments about the past; nor do these skills develop naturally as students progress from MS to HS (De La Paz et al., 2012; Nokes & De La Paz, 2023; Wineburg, 1991).

Further, results provide empirical support for claims made by other researchers that the complex disciplinary reasoning and writing skills central to the discipline are not sufficiently addressed in U.S. secondary school curricula (Applebee & Langer, 2011; Bain, 2006; Breakstone et al., 2013; De La Paz et al., 2021; Monte-Sano, 2010; Nokes, 2017; Pessoa et al., 2019; Wineburg, 1991). Across grades, students need explicit instruction, modeling of key disciplinary practices, and frequent opportunities to practice source-based historical inquiry to develop skills that are key to overall writing quality (De La Paz et al., 2017; Nokes & De La Paz, 2023). Consider the following piece of writing by an HS student: “The letter Cesar Chaver wrote to the people of Los Angeles is another reason that helped the strike succeed. Well, most of the sources are from primary sources and usually have evidence. They are also imprinted in history.”

While this student can attribute evidence to sources and they know sourcing documents is important, their ability to evaluate the reliability of sources is superficial at best. At the classroom level, teachers are justified in targeting these aspects of writing by designing tasks and instructions that help students to construct meaning from documents, think in discipline-specific ways, and use evidence and reasoning to make defensible claims about the past (Monte-Sano, 2011, 2012; Steiss et al., 2024; Van Drie et al., 2021).

If policymakers, researchers, and other stakeholders want students to develop complex disciplinary reasoning, they must also design instructional contexts that explicitly engage and measure students’ progress in reasoning, sourcing documents, presenting and addressing counterarguments, corroboration, and contextualization. Because (1) these skills are challenging to learn (Britt & Aglinskas, 2002), (2) history teachers have received little formal training in literacy instruction (Applebee & Langer, 2011; Tate & Collins, 2022), and (3) these skills strongly predict overall writing quality, policy should emphasize preparing teachers to build robust disciplinary literacy skills. Such efforts are essential to help students meet standards that emphasize sound argumentation through disciplinary inquiry.

Dimensions of SBAW in History

The bifactor model of SBAW in history is inconsistent with previous studies that find writing quality to be unidimensional or argue that analytic scores are too closely correlated to provide additional information about writing quality (Bang, 2013; Crossley et al., 2016). The specific factors in the bifactor model indicate that it is indeed meaningful to consider distinct aspects of writing in instruction and evaluation beyond holistic judgments of writing. Teachers may, for example, devote class time to building students’ skills in writing strong body paragraphs with a Claim, Evidence, Reasoning (C.E.R.) structure if they observe students need additional instruction in this dimension of writing.

While attention to specific aspects of writing may be effective in specific instructional contexts, there is no single skill or aspect of writing that matters most to develop complete writing proficiency. All aspects of writing matter for overall quality, as indicated by the strong general factor with moderate to strong factor loadings for all items. Further, general writing quality and historical thinking are strongly interrelated in our model. General and disciplinary writing proficiency is best viewed as a single construct, with some variation left to be parceled out by specific factors (e.g., Historical Thinking and Language Use) (De La Paz et al., 2017; MacArthur et al., 2019; Monte-Sano, 2010; NCES, 2015). Historical thinking has an integral but not entirely dissociable place in source-based argument writing in history. Therefore, a comprehensive approach to improving students’ historical thinking as they write essay-length arguments about the past should also build literacy skills like structuring the body of an essay and integrating evidence from sources as these are inextricably related to contextualization and sourcing.

The Presentation of Ideas and Structure as a single specific factor is aligned with prior claims that the ideas and structure of an argument are too inextricably bound to be viewed as separate constructs (Steiss et al., 2022). For example, when attending to the quality of an introduction, a scorer thinks about how the introduction organizes key ideas, such as a claim that carries ideas throughout the essay. Additionally, Evidence Use and Language Use as specific factors affirm prior research that identifies these dimensions of writing in other genres (Correnti et al., 2020; Kim et al., 2015; Steiss et al., 2022; Wang et al., 2018), though the primacy of the general factor, which implies these aspects of writing are highly interconnected, should be acknowledged.

Dimensions of Writing Predicting Holistic Scores

The structural regression model shows that the analytic framework and human coding can reliably describe overall writing quality, as the model explained 86% of the variation in students’ holistic scores. Although costly in terms of time, the use of human evaluators to score the quality of discrete aspects of writing has distinct advantages over natural language processing approaches, which do not attend to the quality of linguistic features in a rhetorical situation. Instead, such approaches indicate the frequency of different linguistic indices that may or may not relate to overall essay quality and predict far less variance in holistic scores (MacArthur et al., 2019; Tate et al., 2024).

In the present model, the strong contribution of each analytic item affirms the assumption of holistic scoring that all aspects of writing matter and that discrete skills are strongly related (Hillocks, 2011; McCutchen, 2006; Monte-Sano & De La Paz, 2012). However, this does not necessarily mean targeted instruction on specific skills is unproductive. An intervention may indeed target students’ skills in historical thinking after noticing a lack of contextualization and sourcing in their writing. The development of these skills may augment the development of other key skills later in an instructional sequence. Such questions about the development of the dimensions of writing are important for future research. The model of writing we presented can be used to test the effects of targeting specific aspects of writing on other aspects and to track how certain groups of students differ in their writing development over time.

Limitations

A key limitation of this study is the small number of teachers represented at each grade level. The relatively low scores for grade 10 students indicate that this issue may have affected results. We also lacked measures for reading comprehension and topic knowledge that may explain differences in student writing performance. Reading and writing skills are highly interrelated, especially in source-based genres. Still, districts did not want to devote additional time to measuring students’ reading comprehension or background knowledge beyond the 2-day reading and writing assessment. Similarly, while we had information related to time spent writing in the districts, we did not have information about specific instructional approaches at the district or teacher levels or how many students elected to have the sources read aloud. It is possible that different district-wide approaches to instruction would influence the nature of writing in other settings. Future studies should examine how other student and contextual factors influence writing performance with multiple sources.

Another limitation is that scored writing samples come from only a single type of writing. This prompt may prioritize certain thinking skills over others—namely, a preference for contextualization over sourcing. Advancing a causal explanation of how a movement succeeded more obviously requires putting actors, actions, and events in their temporal and spatial contexts. Future studies should test how different writing prompts influence student writing performance and, thus, the model of writing (Monte-Sano & De La Paz, 2012; Steiss et al., 2024). Similarly, a study using a wider range of writing may also revise our proposed model of source-based argument writing in history. For example, low scores for items related to the specific Historical Thinking factor may have influenced factor structure and the contributions of distinct factors to holistic scores.

Conclusion

The present study presents an analytic picture of U.S. middle school and high school students’ SBAW in history and how students in middle and high school perform across complex and interrelated skills. Specifically, we see major differences in some aspects of writing, such as language use and knowledge transformation, but no differences in historical thinking skills. Educators and interventionists concerned with building robust disciplinary literacy skills for secondary students are warranted in targeting these skills in writing instruction across all grade levels, while also attending to other aspects of student writing, like presenting ideas and using conventions of Academic English (Schleppegrell, 2004).

Our model of source-based argument writing suggests that building students’ argument literacy within and across disciplines requires the coordination of multiple skills concurrently. Students need to learn the skill of contextualization to better understand historical causation and consequences; they need to learn to organize their interpretations around central claims, to integrate evidence using clear signal words, and to write conclusions that emphasize their main arguments. At the same time, the bifactor model of SBAW in history presented here can be used by practitioners and researchers to understand how different groups of students vary in key aspects of writing and how targeting specific aspects of writing can benefit students’ general and disciplinary argument literacy over time. While assigning writing a holistic score captures a great deal of information about that writer, there is indeed more to be learned about their performance in specific aspects of writing. Thus, evaluators and educators may effectively assess and target specific aspects during writing instruction (Van Drie et al., 2021). Any efforts to focus on these discrete aspects should translate into holistic improvement given the strong relations between all aspects of students’ SBAW in history.

Supplemental Material

sj-docx-1-wcx-10.1177_07410883241263549 – Supplemental material for U.S. Secondary Students’ Source-Based Argument Writing in History

Supplemental material, sj-docx-1-wcx-10.1177_07410883241263549 for U.S. Secondary Students’ Source-Based Argument Writing in History by Jacob Steiss, Jiali Wang, Young-Suk Grace Kim and Carol Booth Olson in Written Communication

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Institute of Education Sciences, U.S. Department of Education, through Grant R305C190007 to the University of California, Irvine.

Supplemental Material

Supplemental material for this article is available online.
