Abstract

This intervention study aimed to test the effect of writing process feedback. Sixty-five Grade 10 students received a personal report based on keystroke logging data, including information on several writing process aspects. Participants compared their writing process to exemplar processes of equally scoring (position-setting condition) or higher-scoring students (feed-forward condition). The effect of the feedback on writing performance and process was compared to a national baseline study. Results showed that feed-forward process feedback had an effect on text quality comparable to one grade of regular schooling. The feedback had an effect on production, pausing, revision, and source use, which indicates that it supported participants in self-regulating their writing process. Additionally, we explored the students’ perception of the feedback to get an insight into its strengths and weaknesses. This study shows the potential of writing process feedback and discusses pedagogical implications and options for future research.

Introduction

In current secondary education, the focus is no longer solely on assessing students’ performance (Van der Kaap & Van Silfhout, 2018; Wiliam, 2011). By placing an emphasis on formative learning, educational practice focuses more on the students’ learning process. In this context, feedback plays an important role as it supports students in taking control over their learning (Nicol & MacFarlane-Dick, 2006). While there is a great variety of intervention studies focusing on writing instruction (Graham & Perin, 2007), previous studies on writing feedback are rather scarce. Most of these intervention studies are very much product-oriented, targeting the feedback at product measures. Though these product-oriented writing interventions have resulted in useful insights and effective practices (Graham & Perin, 2007), we argue that process-oriented feedback has a great potential to promote learning processes and should thus be explored. Developments in keystroke logging have opened up possibilities for more process-oriented writing intervention studies.

In this study, we propose a process-oriented feedback approach to improve upper-secondary students’ synthesis writing. Synthesizing information from different sources into a new and meaningful text is an important skill in upper-secondary and higher education. Given the complex nature of such writing tasks, students need guidance in acquiring synthesizing skills (Van Ockenburg et al., 2019). The feedback in our study is based on keystroke logging data, reflection, and comparison with exemplars.

Supporting Students in Learning to Write

Writing is a complex activity, and as such, students need support in their learning process. Meta-studies such as the one by Koster et al. (2015) for primary education and Graham and Perin (2007) for secondary education give an overview of the numerous writing intervention studies that have been set up in the past three decades. These meta-analyses show the variety in methods implemented to improve students’ writing and point out the importance of introducing effective instructional approaches—such as goal-setting, strategy instruction, peer assistance, and feedback—into everyday classroom practice. Nevertheless, studies on writing feedback in L1 are rather limited. Moreover, they are often product-oriented, and feedback is embedded within an instructional approach. We give arguments for the inclusion of process-oriented feedback in the classroom and explain how keystroke logging can play a role in this.

Previous studies on writing feedback in L1

Though the importance of feedback in the writing classroom has long been recognized (Hillocks, 1986), recent studies on effective teacher feedback on students’ individual writing in L1 are not numerous. In addition, previous studies show a considerable amount of variability in the concrete implementation of the feedback.

Studies such as the ones by Duijnhouwer et al. (2012) and Parr and Timperley (2010) examined the effect on students’ writing performance of written comments including improvement strategies added to the writer’s text. Duijnhouwer et al. (2012) found positive effects when the students were prompted to reflect on the teachers’ written comments as this increased students’ engagement. Parr and Timperley (2010) pointed to the importance of the quality of the written comments. To have a positive impact on the students’ writing performance, the feedback had to indicate the students’ position relative to the goal or targeted performance, contain information on the goal, and indicate how to achieve the goal.

Two other types of product-oriented feedback were tested in a study by Lipnevich et al. (2014): a detailed rubric with information on product criteria, and text exemplars (without annotations). To reduce the teachers’ workload, the feedback was nonindividualized as all students received the same feedback information. Both rubric and exemplars proved to have a positive effect on students’ revision of the draft, with the rubric yielding better results as it seemed to provoke more self-reflection.

Some feedback studies included elements focusing on the writing process. Schunk and Swartz (1993), for example, studied the effect of providing students with feedback focusing on strategies. They concluded that providing students with strategies that include a process goal (i.e., goals involving techniques and strategies used by students to learn) resulted in better writing outcomes than providing strategies with a product goal (i.e., goals related to the quality of the work). Moreover, students who also received information on their progress obtained the best results, as this motivated them and showed them their goals were reachable. Also, Zimmerman and Kitsantas (2002) introduced writing strategies in their feedback intervention. They examined the influence of modeling and social feedback on writing revision. The feedback consisted of positive feedback comments by the experiment leader or assistant about the strategy steps the students performed properly. The feedback assisted students, who also observed a coping or mastery model, in acquiring writing and self-regulatory skills.

The gap: Feedback without instruction and a process focus

Two issues are notable when considering the previous studies on writing feedback in L1. First, most feedback studies incorporate a high amount of instruction. By incorporating goal-setting and offering strategies, the feedback resembles strategy instruction. Strategy-focused writing instruction explicitly teaches strategies to facilitate activities taking place during the writing process such as planning, drafting, and revising the text (De La Paz & Graham, 2002; Fidalgo et al., 2015). Feedback often forms part of extensive instructional programs. From a research perspective, investigating the sole effect of feedback devoid of instructional elements would give interesting insights into feedback effects. Moreover, these insights may also guide educational practice.

Secondly, previous writing feedback studies are mainly product-oriented. Students get feedback on, for example, (a selection of) text quality criteria. In some studies, the feedback also targets the writing process (e.g., when writing strategies are provided). However, in those instances, the feedback is still indirectly focused on certain text criteria. For example, comments like “You should plan before you write because your text fails to incorporate all main ideas” address the planning during the process, but via an aspect inferred from the writing product, namely, the lack of sufficient information.

Why process-oriented feedback?

Although process-oriented feedback is almost nonexistent in writing education, we can identify several elements in previous research that point to the potential of process-oriented feedback to support the writing skills of students. In their conceptual analysis of feedback, Hattie and Timperley (2007) identify feedback at the process level as more powerful than feedback at the task level when it comes to deep processing, as process-level feedback focuses on strategies to make an improvement. In this respect, process feedback targets the core of feedback: closing the gap between the current and target performance level. Previous studies have shown that the process influences the quality of the written text (Baaijen & Galbraith, 2018; Breetvelt et al., 1994; Sinharay et al., 2019). Process-oriented feedback can thus help students adapt their writing process so as to move closer to the goal. To reduce the gap between the current and target performance level, it is important that students learn to develop self-regulating skills. Students need support to learn to monitor, direct, and regulate their actions (Graham & Harris, 2018; Hattie & Timperley, 2007; Nicol & MacFarlane-Dick, 2006; Sadler, 1989) as “a great part of skill in writing is the ability to monitor and direct one’s own composing processes” (Hayes & Flower, 1980, p. 39). By targeting the writing process, the feedback encourages students to choose process strategies. They reflect on strategies, and monitor and regulate them, so as to make progress toward goals (Butler & Winne, 1995). In this way, students adopt an active role in processing the feedback, which has been shown to lead to higher feedback engagement (Carless & Boud, 2018). For feedback to be successful, students’ agentic participation is critical (Winstone, 2022). Lastly, by focusing on the process instead of on the final product, the feedback looks beyond the specific assignment and increases the potential to transfer the acquired skills to future tasks (Butler & Winne, 1995; Schunk & Swartz, 1993). Process feedback thus encompasses a significant potential for learning and transfer in writing.

Keystroke logging: Insights into the writing process

Until recently, it was quite complex to observe and collect writing process data. The development of keystroke logging applications in the domain of writing, however, has made it possible to capture the students’ writing process in naturalistic settings and in an unobtrusive way (Lindgren & Sullivan, 2019). Keystroke logging consists of a logging program that is activated on a computer, recording every keystroke, mouse click, or movement during the writing process. The logging data are time-coded, which allows researchers to reconstruct and analyze the writing process dynamics as a function of time and cognitive effort (Leijten & Van Waes, 2013). More precisely, information on specific process aspects such as production, fluency, and revision can be provided at different intervals in the writing process (beginning, middle, and end, for example).
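As a minimal sketch of how time-coded logging data might be summarized per interval, the following Python example splits a log into three equal-time intervals and counts typed characters and long pauses in each. The event format, the name `summarize_intervals`, and the 2-second pause threshold are illustrative assumptions; they are not the data format or settings of any particular keystroke logging program.

```python
from dataclasses import dataclass


@dataclass
class KeyEvent:
    time_s: float  # seconds since the start of the session (hypothetical field)
    char: str      # character produced by the keystroke (hypothetical field)


def summarize_intervals(events, total_time_s, n_intervals=3, pause_threshold_s=2.0):
    """Count typed characters and long pauses per equal-time interval.

    A pause is attributed to the interval in which it ends, which is a
    simplification chosen for this sketch.
    """
    summary = [{"chars": 0, "pauses": 0} for _ in range(n_intervals)]
    interval_len = total_time_s / n_intervals
    prev_time = 0.0
    for ev in sorted(events, key=lambda e: e.time_s):
        # Clamp so that an event at the very end falls in the last interval.
        idx = min(int(ev.time_s // interval_len), n_intervals - 1)
        summary[idx]["chars"] += 1
        if ev.time_s - prev_time >= pause_threshold_s:
            summary[idx]["pauses"] += 1
        prev_time = ev.time_s
    return summary


# Toy log: five keystrokes in a 30-second session.
events = [
    KeyEvent(1.0, "T"),
    KeyEvent(1.2, "h"),
    KeyEvent(5.0, "e"),
    KeyEvent(12.0, " "),
    KeyEvent(25.0, "x"),
]
result = summarize_intervals(events, total_time_s=30.0)
```

In this toy log, three characters fall in the first interval and one in each of the other two, and each interval contains one pause of at least 2 seconds.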

Keystroke logging has been used previously in a limited number of classroom interventions, yielding positive results (Dux Speltz & Chukharev-Hudilainen, 2021; Lindgren et al., 2008; Ranalli et al., 2018). In the study by Lindgren et al. (2008), university students, who worked on a translation task, engaged in peer discussions based on a replay of the writing process provided by a keystroke logging program. The intervention triggered the students to reflect on the task. In addition, students reported that the process-oriented intervention had a positive effect on their writing motivation. Ranalli et al. (2018) used data from keystroke logging with eye-tracking in an intervention to support university students’ L2 writing. The case study included rather time-consuming individual teacher-student discussions in which the student’s text and process, as well as process data of an experienced model, were discussed. The students also received individual instruction from the teacher. This study showed that the process data facilitated the discussion and helped both students and teacher understand where learners are in their learning, where they need to go, and how to best get there. The goal of the intervention study by Dux Speltz and Chukharev-Hudilainen (2021) was to encourage fluency in university students’ L1 writing. Participants received real-time fluency-focused feedback based on keystroke logging data. When a participant paused while writing, the screen slowly faded, becoming transparent and invisible to the participant after 5 seconds, thus prompting the student’s fluent text production. The students in the intervention condition produced more text during the writing process and wrote higher-quality texts than the students in the control condition.

Synthesis Writing

Reading-writing task

Synthesis writing is a specific type of source-based writing that involves an interplay of reading and writing subprocesses. During the writing process, students alternate between reader and writer roles as they read sources, select relevant information from the sources, compare and contrast the information from the different source texts to each other, and write and revise the actual text. Key to synthesis writing is the integration process, which encompasses connecting the ideas from the different source texts by organizing and structuring them around a central theme in the target text (Solé et al., 2013; Spivey & King, 1989). To many students in upper-secondary education, it is still a relatively unexplored task type (Van Ockenburg et al., 2019). Students find it challenging, which is not surprising given the cognitively demanding nature of this task (Martínez et al., 2015; Mateos et al., 2008).

Writing process of synthesis texts

Recent studies have focused on the complex processes taking place when students perform a synthesis task. From these studies emerge some important insights on relevant process variables that could be the subject of process feedback.

A national baseline study (Vandermeulen, De Maeyer, et al., 2020) mapped the writing process of students in the three highest grades of upper-secondary education in the Netherlands. Based on keystroke logging data, various writing process aspects in three process intervals (each writing process was divided into three parts of equal time) were studied. Results indicated that lower-grade students spend more time in the sources during the first and third interval of the process, compared to the older students when writing informative synthesis texts. They also switch less frequently between the synthesis text and the sources in the first interval. The lower-grade students spend less time actively writing their synthesis text in the first interval, and they write less fluently, both in the first and second interval. They spend less time pausing during production in the second and third interval. Compared to the older students, the lower-grade students pause less frequently during production in the last interval, and in the first interval the pause duration is shorter. They also revise less throughout the process.

Another study (Vandermeulen, van den Broek, et al., 2020), exploring the relation between source use during the writing process and text quality, showed that the quality of an informative synthesis text has a positive linear relationship with the number of transitions per minute between the sources in the first interval, a negative linear relationship with the number of transitions per minute between the sources in the third interval, and a positive curvilinear relationship with the proportion of time spent in sources in the third interval.
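To make the two source-use measures concrete, the following Python sketch computes, per interval, the proportion of time spent in sources and the number of source-to-source transitions per minute. The segment format `(start_s, end_s, window)`, the window labels, and the function name `source_use` are hypothetical, and the sketch assumes that a transition is a direct switch from one source to a different source; the cited study may operationalize these measures differently.

```python
def source_use(segments, total_time_s, n_intervals=3):
    """Per-interval source-use measures from window-focus segments.

    segments: chronologically ordered (start_s, end_s, window) tuples, where
    window is "text" for the synthesis text or a source label (assumed format).
    """
    interval_len = total_time_s / n_intervals
    stats = []
    for i in range(n_intervals):
        lo, hi = i * interval_len, (i + 1) * interval_len
        # Time in sources: overlap of each source segment with this interval.
        in_sources = sum(
            max(0.0, min(end, hi) - max(start, lo))
            for start, end, window in segments
            if window != "text"
        )
        # Direct source-to-source switches that occur within this interval.
        transitions = sum(
            1
            for (_, _, prev), (start, _, cur) in zip(segments, segments[1:])
            if prev != "text" and cur != "text" and prev != cur and lo <= start < hi
        )
        stats.append({
            "prop_time_in_sources": in_sources / interval_len,
            "transitions_per_min": transitions / (interval_len / 60.0),
        })
    return stats


# Toy session of 180 seconds, so each interval lasts 60 seconds.
segments = [
    (0.0, 30.0, "source_A"),
    (30.0, 45.0, "source_B"),
    (45.0, 90.0, "text"),
    (90.0, 120.0, "source_A"),
    (120.0, 180.0, "text"),
]
stats = source_use(segments, total_time_s=180.0)
```

In the toy session, the writer spends 45 of the first 60 seconds in sources and switches once between sources, spends 30 seconds of the second interval in a source, and does not consult sources in the third interval.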

These two studies point to important process variables in synthesis writing, namely, source use behavior (time in sources and transitions between sources), pausing, fluency, and revision across different intervals in the writing process. Ideally, these process aspects are captured in feedback aiming to improve students’ synthesis writing.

Feedback and the Use of Exemplars

Reflecting on and taking control over one’s own learning

Feedback can be defined as information about how the student’s present performance relates to the goals of the task and the performance standards (Nicol & MacFarlane-Dick, 2006). The main aim of feedback is to enable students to reduce the gap between the current and the targeted performance level. Students thus have to monitor their learning during actual production (Sadler, 1989). To facilitate monitoring, feedback should be targeted at promoting self-regulated learning (Graham & Harris, 2018). Self-regulation in an educational context refers to students’ ability to monitor and regulate their thinking and actions (Zimmerman, 2002). Key to developing self-regulation in students is providing them with opportunities to reflect on their learning and their work (Nicol & Macfarlane-Dick, 2006). By reflecting on their learning, students become aware of their learning process and may consider alternative strategies (Van Den Boom et al., 2004). Feedback that empowers students to understand the learning goal, judge their learning process, and explore strategies supports students to bridge the gap between the current and desired levels.

Comparison with exemplars

Nicol (2021) argues that “students are making comparisons all the time” and that, for feedback to be powerful, these natural and internal comparisons have to be made more explicit. Integrating examples in feedback makes the feedback more explicit, triggers reflection, and stimulates self-regulatory skills (Scheiter, 2020). Moreover, examples allow learners to abstract from the given task, which may foster transfer of knowledge and skills to other tasks.

A proven effective method of example-based feedback is to work with exemplars. These are key examples of authentic student work that are typical of certain levels of quality or competence (Sadler, 1987). Exemplars have several benefits. First, they help students develop an understanding of the task as they are a concrete representation of task standards (Carless & Chan, 2017; Handley & Williams, 2011). Secondly, exemplars raise awareness of the different ways in which tasks can be addressed (Orsmond et al., 2002). Thirdly, they define an objective standard to which students can compare their work (Hendry et al., 2011). Fourth, exemplars can provide a source of encouragement for students. By seeing work of relatable peers, they can gain confidence that they can achieve a comparable level (Dixon et al., 2020; Hendry et al., 2011).

Research has shown that students benefit when they are encouraged to use exemplars as a point of reference when reflecting on their own work (Dixon et al., 2020). Exemplars can facilitate students’ awareness of their own learning process and performance (Hawe et al., 2019). This will enable them to gain insights into a range of possible strategies and outcomes of a given task. These insights can also be used to revise their own work.

Present Study

Several elements from studies on feedback point to the potential of process-oriented feedback to support learners in a demanding task such as writing a synthesis text. In this study, we examined the effect of two process-oriented approaches to give feedback on students’ synthesis writing. To analyze and interpret the feedback effect, it was compared to the grade effect of a national baseline. Moreover, we surveyed students’ opinions of the feedback, given that students’ perception of feedback is an important factor in enabling successful feedback (Henderson et al., 2019). The way in which students perceive the feedback may have an impact on how they process the feedback and perform after it.

We developed an intervention in which we gave feedback on students’ writing process involving the composition of an informative synthesis text. The feedback was based on an individual process report with keystroke logging data. Moreover, exemplar writing processes were included in the feedback. Two feedback conditions were created, which differ in one aspect: the reference point for the exemplar processes. In the position-setting condition (PS), the exemplars showed writing processes resulting in a text with a similar quality to the quality of the participant’s text; and in the feed-forward condition (FF), the exemplars showed writing processes resulting in a text with a higher quality than the quality of the participant’s text.

The reasoning behind the position-setting condition is that comparison with processes of equal-scoring students may enable students to gain insight into a range of process approaches and strategies adopted by relatable peers (Dixon et al., 2020). This may enable them to establish a dialogue with their own process and to increase understanding of their own strategy. We hypothesized that the feed-forward condition may benefit students’ self-regulation as the examples of higher-scoring students offer a sense of perspective toward the targeted goal. In this way, the exemplar processes scaffold students’ awareness of areas of improvement and motivate them to improve their work (Hawe et al., 2019).

Aims

Previous research on feedback focusing on the writing process and using keystroke logging measures is scarce. As we wanted to explore the potential of this newly developed type of feedback, we approached the study from three angles: product, process, and perceptions. To evaluate the feedback’s potential to improve students’ writing skills, we tested the effect of the feedback on text quality (Research Question 1). Moreover, as process feedback targets the writing process, we expected it to help students to self-regulate their writing process. Therefore, we investigated the effect of the feedback on students’ writing processes (Research Question 2). Additionally, students’ perceptions of the feedback were explored (Research Question 3) as they can provide researchers with important information not only on the validity of the feedback but also on building blocks for future process feedback studies.

Research Questions

We were guided by the following research questions and subquestions:

1. What is the effect of two types of process-oriented feedback on text quality, compared to a national baseline?

2. What is the effect of two types of process-oriented feedback on the writing process, compared to a national baseline?

3. What are students’ perceptions of the process-oriented feedback?

a. How did participants in the two conditions perceive the feedback?

b. Which elements of the process-oriented feedback are positively evaluated by the students and seem to have a positive effect on the participants’ progress?

Method

Participants

A total of 73 Dutch Grade 10 students from four classes of the same school participated in the feedback intervention. Since the aim of our study was to test the effect of the feedback program, only the 65 students who completed the full feedback intervention with three measurement occasions were taken into account (36 female, 29 male; mean age = 15.42 years). Written consent was obtained from all participating students.

The participants were randomly assigned to one of the two feedback conditions: position-setting (PS; n = 32) and feed-forward (FF; n = 33). In PS, participants could compare their own writing process to the process of equal-scoring students (i.e., students with more or less the same score on text quality), while in FF, the participants could compare their writing process to the process of better-scoring students (i.e., students with a higher score on text quality). We elaborate on the exemplar processes below.

Baseline

To assess the effect of the intervention on participants’ text quality and writing process, we make the comparison with a baseline. The baseline consists of a nationally representative sample of students from three grades (10-12) who each wrote several synthesis texts without receiving feedback (Vandermeulen, De Maeyer, et al., 2020). Comparison with the baseline allowed us to compare the feedback effect with the effect of the progress students make over time or over grades.

For this study, three baseline groups were created, based on the complete national baseline sample. The first group contained all the informative synthesis texts and corresponding processes of the Grade 10 students who participated in the national baseline. We refer to this baseline as baseline control grade 10 (BC-g10); it consists of 435 texts and corresponding writing processes of 242 students. Additionally, two other baseline groups, baseline control grade 11 (BC-g11) and baseline control grade 12 (BC-g12), were used, containing the complete sample of informative synthesis texts written by, respectively, Grade 11 students (456 texts, 246 students) and Grade 12 students (190 texts, 103 students).

There are two main reasons to prefer a national baseline over a more traditional control group for this intervention. First, a baseline offers a more representative view of synthesis products and processes than a traditional control group that is more limited in the number of participants and tasks. A second advantage was that all participants could participate in a feedback condition (Graham & Harris, 2014). With a traditional control group, part of the participants would not have received feedback in two instances, and a switching panel design (Bouwer et al., 2018) would be inefficient with regard to invested time, given the three measurement occasions needed for the complete feedback program.

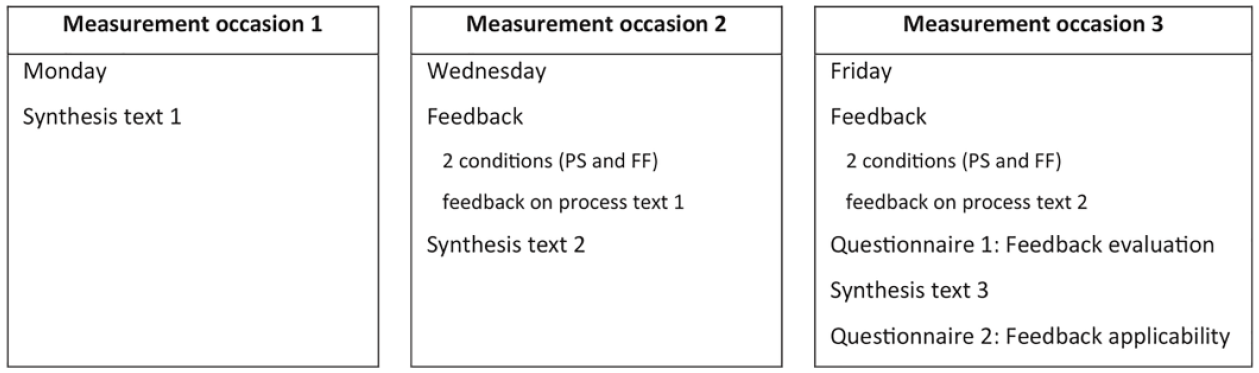

Procedure

The intervention took place at the students’ school and was led by a researcher (first author of this study) and a trained assistant. The feedback program was carried out at three moments in a week’s time. Figure 1 presents an overview of the activities at each measurement occasion (M1, M2, and M3). At each of the three measurement occasions, students wrote an informative synthesis text. They had 50 minutes to carry out the writing task. The processes were logged with the keystroke logging program Inputlog (version 7.1.0.53).

Figure 1. Overview of the activities at each of the three measurement occasions.

The participants received process feedback at M2 and M3, prior to writing a new text. They processed the feedback individually at their own pace, within a time limit of 30 minutes. At M3, participants filled in a survey, consisting of two parts, in which we asked how they evaluated and used the feedback. After completing the feedback intervention program, the participants were rewarded with film tickets.

Feedback

Based on four design principles, we built an online feedback module with several steps. The feedback was designed to encourage students to analyze, understand, and reflect on their own writing process by offering them personal writing process data collected with keystroke logging. Moreover, the feedback showed exemplar processes to which the students could compare their own process. These comparisons should encourage reflection and self-regulation.

Feedback flow and underlying design principles

We provided the feedback in an online flow of six steps. The participants logged on to our website and processed all the steps of the feedback individually at their own pace, albeit within a fixed time limit of 30 minutes. The six feedback steps were as follows:

1. Identification of performance position: positioning on a benchmark rating scale

2. Self-reflection on writing process: questionnaire on writing process approach

3. Insight into the personal writing process: Inputlog writing process report

4. Insight into exemplar writing processes: two annotated exemplar process graphs

5. Active processing of the information: fill-in-the-gaps of a general process description with the information from the individual and personal Inputlog process report

6. Goal-setting for the next task

For examples of Steps 1, 2, 5, and 6, see Appendix A. For an example of Step 3, see Appendix B. Appendix C contains an example of Step 4.

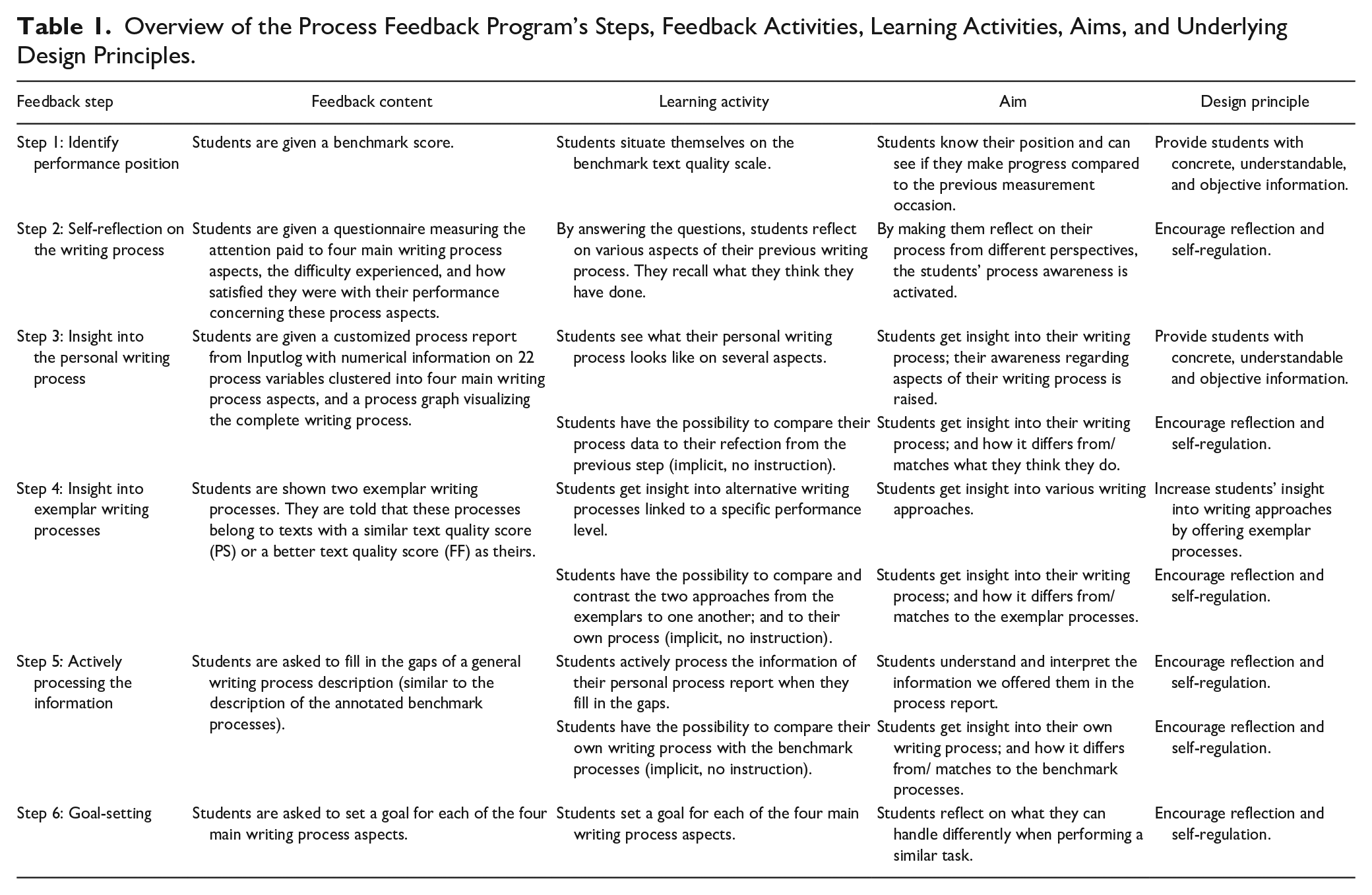

Table 1 gives an overview of the different steps of the feedback, its content (what does the feedback offer?) and learning activities (what are students expected to do?). We also specify the aim of each step and the underlying design principle. For this overview, we have followed the recommendations of Bouwer and De Smedt (2018) and Rijlaarsdam et al. (2018) on reporting interventions.

Table 1. Overview of the Process Feedback Program’s Steps, Feedback Activities, Learning Activities, Aims, and Underlying Design Principles.

Three pedagogically oriented design principles, based on findings of previous studies on effective elements in feedback, were incorporated into the feedback flow. The first and overarching design principle was that the feedback should encourage students to reflect on their own writing with the goal to stimulate self-regulation of their writing process (Graham & Harris, 2018). The second and third design principles support this principle. The second design principle was that the feedback should provide students with concrete, understandable, and objective information on their personal performance (Nicol & MacFarlane-Dick, 2006). Thirdly, exemplar processes were used to show students concrete examples of writing process approaches, and to create an awareness of the synthesis process dimensions. The exemplars showed writing approaches of equally or better-scoring students (depending on the feedback condition), so students get insight into various writing processes linked to a specific performance level. As they raise awareness and provide insights, exemplars can encourage self-regulation. They assist students in taking action to narrow the gap between the current and the desired level (Hawe et al., 2019). These three design principles are mainly inspired by pedagogical guidelines. We also formulated a more methodological design principle. Namely, to assess the sole effect of the feedback, the intervention did not contain any direct instruction.

In short, the feedback was focused on awareness-raising: making students reflect on their writing process, helping them to gain insight into various aspects of their writing approach, and offering them possibilities to self-regulate their process. The Inputlog writing process report gives students concrete information on their writing process. Although this process report provides detailed information on various process aspects, the focus is not on these specifics but rather on raising awareness. Based on the Inputlog report, students reflect on their process. This is especially stimulated by showing them exemplar processes. Reflection and comparison, facilitated by the Inputlog process report and the exemplar processes, should enhance self-regulation.

Inputlog writing process report

To enhance the accessibility of keystroke logging data in an educational context, a new function within the keystroke logging program Inputlog was developed: the Inputlog report function (Vandermeulen, Leijten, et al., 2020). This function makes it possible to automatically generate a user-friendly report based on the logfile. The default report consists of a PDF file that addresses different writing process perspectives by selecting variables from the existing analyses in Inputlog.

For the present intervention study, a custom-made report template was created (Appendix B) that informed the participants about their writing process of the previous writing task. The customized report was adapted to the target audience and the writing task genre under study. In other words, the report had to be understandable for Dutch Grade 10 students and had to give information on process aspects related to the writing of an informative synthesis text.

The process report used in this intervention study contains information on 22 process variables, grouped into four aspects that are important for synthesis writing (Du & List, 2020; Leijten et al., 2019; Lenski & Johns, 1997; Mateos et al., 2008; Vandermeulen, De Maeyer, et al., 2020; Vandermeulen, van den Broek, et al., 2020). The four aspects are (1) use of time (reading, thinking, and writing), (2) production and fluency, (3) revision, and (4) source use and source switches. Each of these aspects is briefly introduced so as to facilitate understanding and interpretation. Moreover, taking into account findings of previous research on the importance of the timing of writing process activities (Breetvelt et al., 1994; Xu & Xia, 2019), the report includes information on the distribution of most of the process variables over three intervals: beginning, middle, and end of the writing process. Besides the numerical data on the 22 variables, the process report also contains a process graph: a graphical representation of the writing process that integrates information on several writing process aspects into one visual.

Exemplar processes

The exemplar processes, to which participants could compare their own writing process, are a crucial part of the process-oriented feedback.

The exemplar processes consisted of annotated process graphs: process graphs with detailed information on the four writing process aspects (see Appendix C for an example). The process graph presents a general overview of the writing process, incorporating several process aspects into one visual (Xu & Xia, 2019). Visualizations are frequently used in education as they support students in dealing with complex and heterogeneous data (Vieira et al., 2018).

The exemplar process graphs are empirically grounded as we selected them from a previously carried out national baseline study (Vandermeulen, De Maeyer, et al., 2020) with 2,310 rated synthesis texts and matching writing processes of 658 students from three grades (Grades 10-12). Each of the selected exemplar processes corresponds to a text that is representative of a certain text quality category, that is, –2 SD, –1 SD, average texts, +1 SD, +2 SD. For each of the five categories, two sets of two exemplar processes were created. A different set was shown at each of the two feedback moments (M2 and M3). In this way, even the students whose text quality score did not change after the first feedback moment received other exemplars during the second feedback moment. Each set contained two divergent exemplar processes as we wanted to reflect the variety in writing process approach. Previous research (Van Ockenburg et al., 2019) has indicated the need to provide students with the freedom to adapt strategies to their personal preferences.

The same exemplar processes were used in both feedback conditions. The difference between the two conditions lies in the reference point of the exemplars. In the case of the PS condition, the participants received a set of exemplar processes corresponding to texts with a text quality score similar to theirs. In the FF condition, the participants were shown two exemplar processes resulting in texts with a higher text quality score than their text: one process of a student scoring 1 SD higher and another process of a student scoring 2 SDs higher.
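The difference between the two reference points can be sketched in a few lines. This is a minimal illustration only: the function name and the category coding are hypothetical, and the handling of students who already score in the upper categories is an assumption, as it is not described here.

```python
# Text quality categories from the baseline, in SD units relative to the mean.
CATEGORIES = [-2, -1, 0, 1, 2]

def exemplar_categories(own_category, condition):
    """Return the quality categories of the two exemplars shown to a student."""
    if own_category not in CATEGORIES:
        raise ValueError(f"unknown category: {own_category}")
    if condition == "PS":
        # Position-setting: both exemplars come from the student's own category.
        return [own_category, own_category]
    if condition == "FF":
        # Feed-forward: one exemplar 1 SD higher, one 2 SDs higher.
        # ASSUMPTION: cap at the top category; the text does not say how
        # students who already scored +1 SD or +2 SD were handled.
        top = CATEGORIES[-1]
        return [min(own_category + 1, top), min(own_category + 2, top)]
    raise ValueError(f"unknown condition: {condition}")

# A student with an average text in each of the two conditions.
average_student_ps = exemplar_categories(0, "PS")
average_student_ff = exemplar_categories(0, "FF")
```

In this sketch, the same pool of exemplars serves both conditions; only the lookup rule differs.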

Synthesis Writing Quality

Writing tasks

Participants wrote three informative synthesis tasks, one at each measurement occasion. The tasks were previously used in a national baseline study (Vandermeulen, De Maeyer, et al., 2020). Because of the time load of the intervention, we opted to only administer one task at each moment, although a student’s writing skill is ideally measured by several tasks at each measurement occasion (Van den Bergh et al., 2012). However, to compensate for this and in order to generalize across task features, several versions of each of the three tasks were created, varying in the relation between the source texts (complementary/contradictory) and the amount of irrelevant information in the sources (low/high). The number of sources remained constant over the different versions within each topic; that is, five sources on the topic “wildlife” for the task at M1, three on the topic “food additives” for the task at M2, and four on the topic “self-driving cars” for the task at M3.

Rating

Benchmark rating was used to assess the quality of the synthesis texts written by the students. The scale with benchmark texts was validated in a previous study (Vandermeulen, De Maeyer, et al., 2020). Ten raters scored the texts. They received training that involved practice of benchmark assessment and discussion of the scoring in small groups of two to three people. The raters were professionally involved in language teaching or language research. They received financial compensation. Each text was rated by three raters (interrater reliability, Cronbach’s α = .75). The average of the three scores was used for analysis.
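As a rough illustration of how such a reliability coefficient and the score used for analysis can be computed, the sketch below treats the three ratings per text as "items" and applies the standard Cronbach's α formula. The scores are invented toy values, not study data, and the function names are illustrative. With ten raters distributed over the texts, the actual computation may have been organized differently.

```python
from statistics import mean, pvariance

def cronbach_alpha(ratings):
    """ratings: one row per text, one column per rating slot."""
    k = len(ratings[0])                       # number of ratings per text
    columns = list(zip(*ratings))             # scores grouped per rating slot
    item_var = sum(pvariance(col) for col in columns)
    total_var = pvariance([sum(row) for row in ratings])
    return k / (k - 1) * (1 - item_var / total_var)

def text_score(row):
    """Score used for analysis: the average of the ratings for one text."""
    return mean(row)

# Toy data (hypothetical): four texts, three ratings each.
toy = [
    [90, 100, 95],
    [110, 105, 120],
    [80, 85, 70],
    [130, 120, 125],
]
alpha = cronbach_alpha(toy)
scores = [text_score(row) for row in toy]
```

Because α depends only on the ratio of the summed rating variances to the variance of the totals, using population or sample variances gives the same value as long as the choice is consistent.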

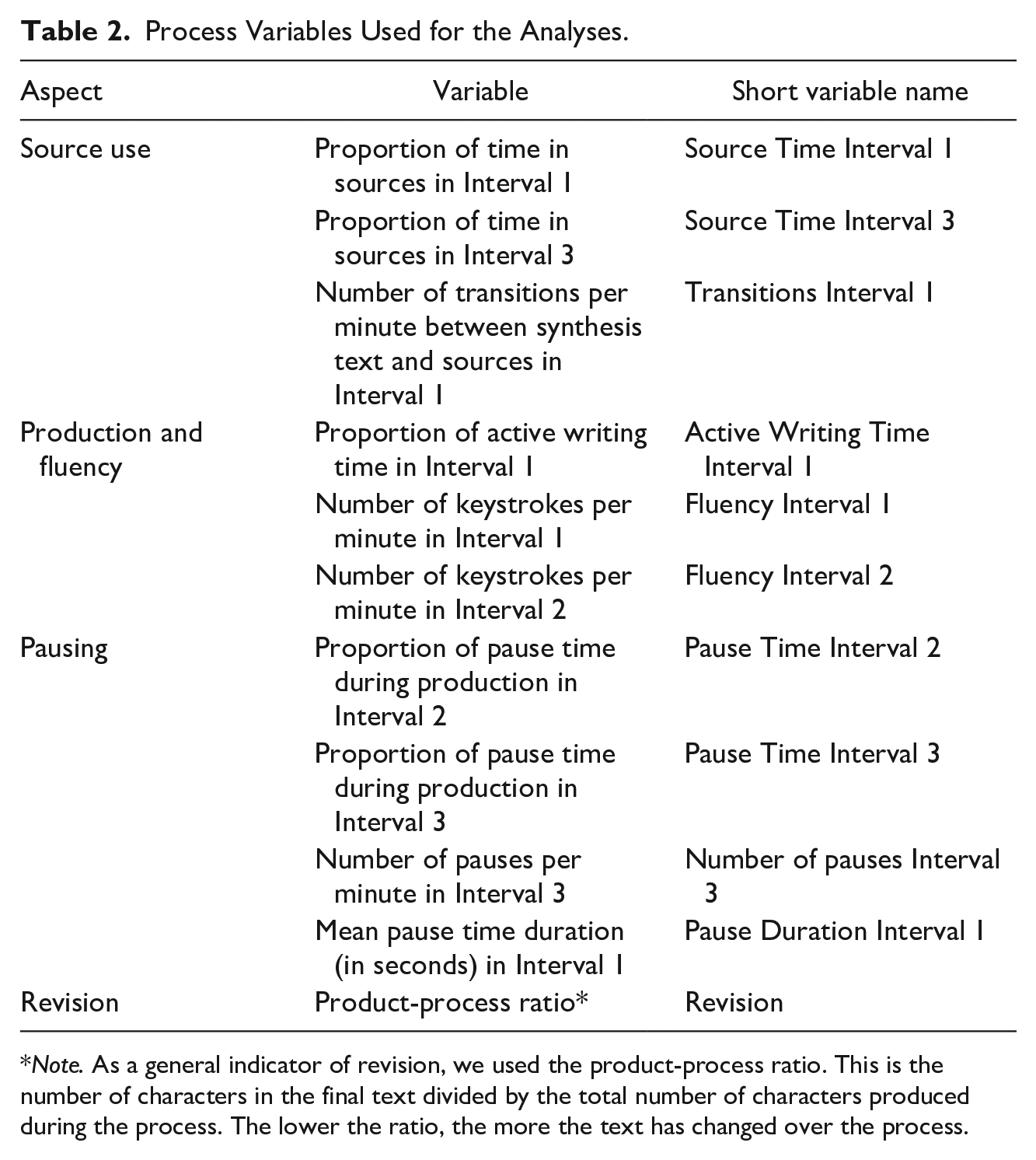

Writing Processes

To explore the effect of feedback on the writing process, we collected data on 11 writing process variables. Table 2 gives an overview of the 11 process variables used in the analyses. The variables are relative in nature, allowing us to compare the different writing processes (as some students finished earlier than the given 50 minutes’ time on task) and to generalize the findings. From here on, we will use the short variable names to refer to the writing process variables.

Process Variables Used for the Analyses.

Note. As a general indicator of revision, we used the product-process ratio. This is the number of characters in the final text divided by the total number of characters produced during the process. The lower the ratio, the more the text has changed over the process.
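The ratio described in the note can be illustrated with a short sketch. The character counts are hypothetical and the function name is ours; the sketch only restates the definition above.

```python
def product_process_ratio(final_text_chars, produced_chars):
    """Characters in the final text divided by all characters produced."""
    if produced_chars <= 0:
        raise ValueError("no characters were produced")
    return final_text_chars / produced_chars

# Two hypothetical writers who both end up with a 2,000-character text.
light_reviser = product_process_ratio(2000, 2200)  # about 200 characters removed
heavy_reviser = product_process_ratio(2000, 4000)  # about 2,000 characters removed
```

The heavy reviser has the lower ratio, which matches the interpretation that a lower ratio indicates a text that changed more over the process.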

The variable selection was based on three criteria. First, these variables are all related to aspects that were incorporated in the personalized Inputlog process report. The rationale is the following: when giving feedback on specific writing process variables, we may expect to find an effect of the feedback on these writing process variables. Secondly, from previous research we know that these process variables are correlated with text quality (see, e.g., Vandermeulen, van den Broek, et al. (2020) for source-related variables and Van Steendam et al. (2022) and Van Waes & Leijten (2015) for fluency-, pausing-, and revision-related variables). For this reason, these variables were also incorporated into the Inputlog writing process report. Thirdly, in a previous study (Vandermeulen, De Maeyer, et al., 2020), we mapped the synthesis writing process of students from Grade 10 to 12 and found significant differences between the grades for these 11 writing process variables, showing that higher-grade students not only write higher-quality texts but also display a different writing process than lower-grade students. This is an important criterion, as it shows that these specific variables vary according to students’ writing experience. The fact that these variables change over grades suggests that they may also be subject to self-regulation. We can thus expect the feedback to have an effect on them. In addition, using this set of variables allows us to compare the feedback effect of the intervention to the grade effect of the baseline.

Questionnaire: Feedback Perception

We administered an online survey (see Appendix D) at M3 to solicit the participants’ opinions of and experiences with the feedback intervention. The first part of the questionnaire was given to the students right after they processed the feedback (and before writing Synthesis Text 3). These questions gathered the students’ opinions about several aspects of the feedback: the exemplar processes (2 items, α = .60), their belief that the feedback can help them make progress on different process aspects (4 items, α = .87), the usefulness of feedback on process aspects (4 items, α = .84), the instructiveness of feedback on process aspects (4 items, α = .83), and the clarity of feedback on process aspects (4 items, α = .92). This first part focused on the evaluation of the feedback. After writing Synthesis Text 3, students were asked to fill in the second part of the survey; this part contained nine individual questions that focused on the applicability of the feedback.

Fidelity of Implementation

To ensure the fidelity of implementation of the intervention (Dumas et al., 2001), four measures were taken. The first measure was related to supervision. The intervention was led by a researcher and a trained assistant. Given that the intervention did not require any direct involvement from them (as the feedback program was offered online), they operated as facilitators, keeping track of time and ensuring that the different phases of the feedback program were followed. Secondly, an independent samples t test was performed on the perception questionnaire data to check equal implementation of the feedback in the two conditions. Results indicated that there were no significant differences. Thirdly, a process check was built into the feedback flow (see Step 5: Handout with fill-in-the-gap exercise). All of the participants filled in the handout. Fourthly, we performed a check on the correctness of the fill-in-the-gaps handout. Out of a sample of 30 handouts, on average 94% of the gaps were filled in correctly, indicating that the students were able to process the feedback.

Analyses

Preparing process data for analysis

Prior to running the analyses, two preparatory steps were taken. First, the Inputlog data were prepared by using the time filter and source recoding functions of Inputlog (Leijten & Van Waes, 2020). Second, as the variable TransSS-Int1 was not normally distributed, a natural log-transformation was performed.

Text quality

The effect of the feedback on the quality of the synthesis texts was analyzed by comparing the feedback conditions with the three baseline control groups (BC-g10, BC-g11, and BC-g12). We compared the feedback effect with the grade effect via a comparison of effect sizes and a visual exploration of graphs. In other words, the progress made from pretest (M1) to posttest (M3) in the intervention was compared to the progress made during 1 year of regular schooling (Grade 10 vs. Grade 11 vs. Grade 12). From the baseline study (Vandermeulen, De Maeyer, et al., 2020), we know that students’ writing performance and writing process differ according to the grade because of regular schooling and development. By contrasting the effect of the intervention with the effect of a year of regular schooling, we were better able to understand and interpret the impact of the feedback.

As this study investigates the effect of the complete feedback program, we focused on comparing M1 and M3 to determine the feedback effect, so comparisons between M1 and M2 or M2 and M3 are of less interest.

Writing process

The effect of the feedback on the various writing process variables was analyzed parallel to the analysis of effect on text quality. So, the feedback effect was compared to the grade effect for each of the 11 process variables based on effect sizes and visual explorations of graphs.

Questionnaire on feedback perception

To explore how the participants evaluated the feedback and used it when performing a new task, descriptive statistics were calculated. We also checked for correlations between the answers on the various questions and the progress the students made between M1 and M3 in order to identify elements of the feedback that might have had a positive effect on the participants’ progress.

Checks prior to the analyses

Before conducting the analyses, we checked for (1) initial differences in writing performance and writing process between the two feedback conditions and the baseline data of Grade 10, and (2) possible task effects on text quality and process variables. Initial differences and task effects were taken into account when interpreting the results.

Preintervention equivalence

At M1, text quality scores of FF differed significantly from those of BC-g10. Grade 10 students in FF scored on average 7.55 points lower than the Grade 10 students of the baseline (p = .012). For several process variables, significant differences were found between the conditions at M1: Pause Time Interval 2 and Number of Pauses Interval 3 were both lower in the baseline than in the two feedback conditions, and Pause Time Interval 3 was lower in the baseline than in PS. The two feedback conditions did not differ significantly from each other at M1 for any of the variables under study.

Task effect

As this study investigates the effect of the complete feedback program, we compare the text quality and writing process of M1 with that of M3. In order to know whether the differences between M1 and M3 are due to the feedback and not to the task itself, we checked for differences between the tasks in the baseline. To detect potential task effects, all the tasks of the baseline study that correspond to the task performed at M1 of the feedback intervention (informative tasks on wildlife topic written by Grade 10 students) were compared to the tasks of the baseline study that correspond to the task performed at M3 of the feedback intervention (informative tasks on self-driving cars topic written by Grade 10 students) for the variables under study. Possible task effects between M1 and M2 and between M2 and M3 are of less interest in interpreting the results as we focused on the feedback effect between M1 and M3. Results indicated that the text quality scores of the students in the baseline for the task performed at M3 did not differ significantly from the scores for the task performed at M1. There was a significant difference for several process variables when comparing the tasks from the baseline, suggesting that the specific task may influence these process variables. Source Time Interval 1 and Source Time Interval 3 were higher in the case of the first task, Active Writing Time Interval 1 was lower for the first task, and Fluency Interval 1 was also lower for the first task.

Results

Effect of Feedback on Text Quality

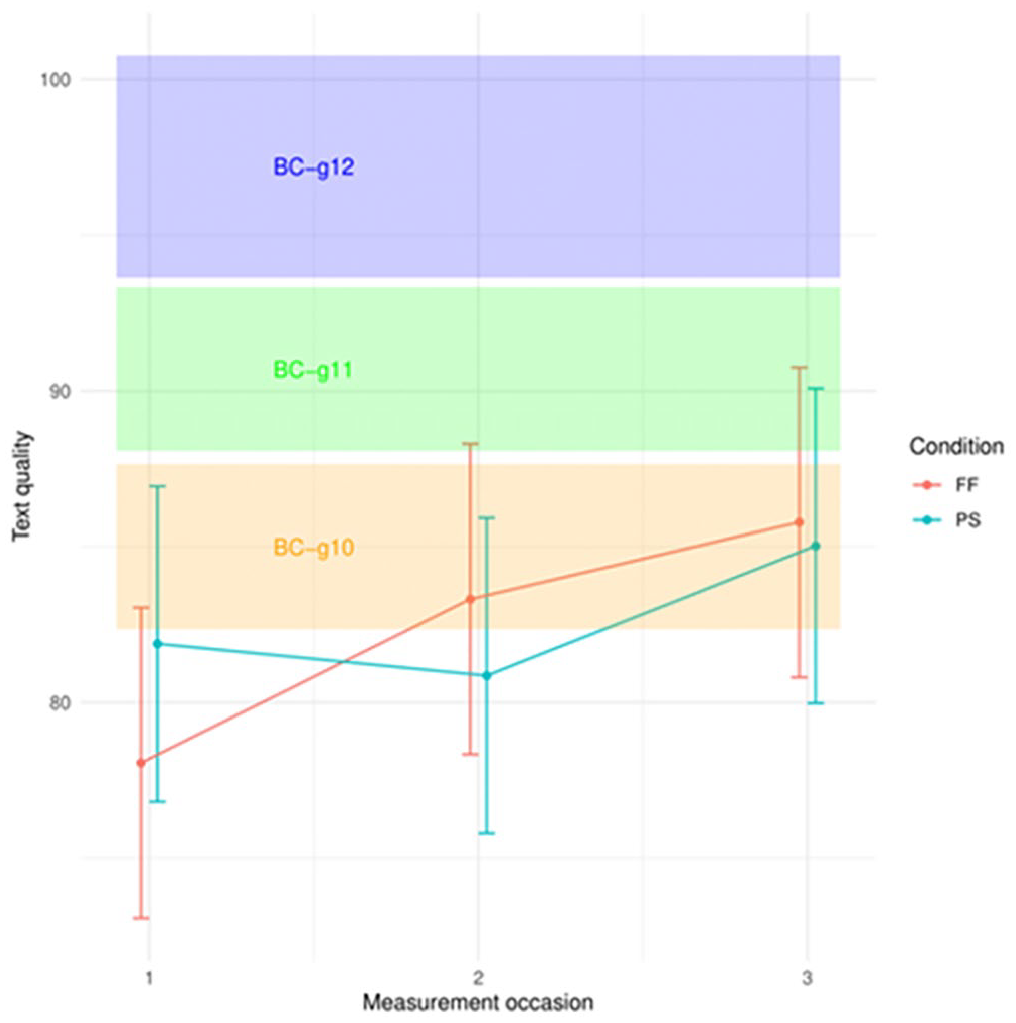

The table in Appendix E reports the means and standard deviations for text quality for the two feedback conditions (PS and FF) and the three baseline control groups (BC-g10, BC-g11, and BC-g12).

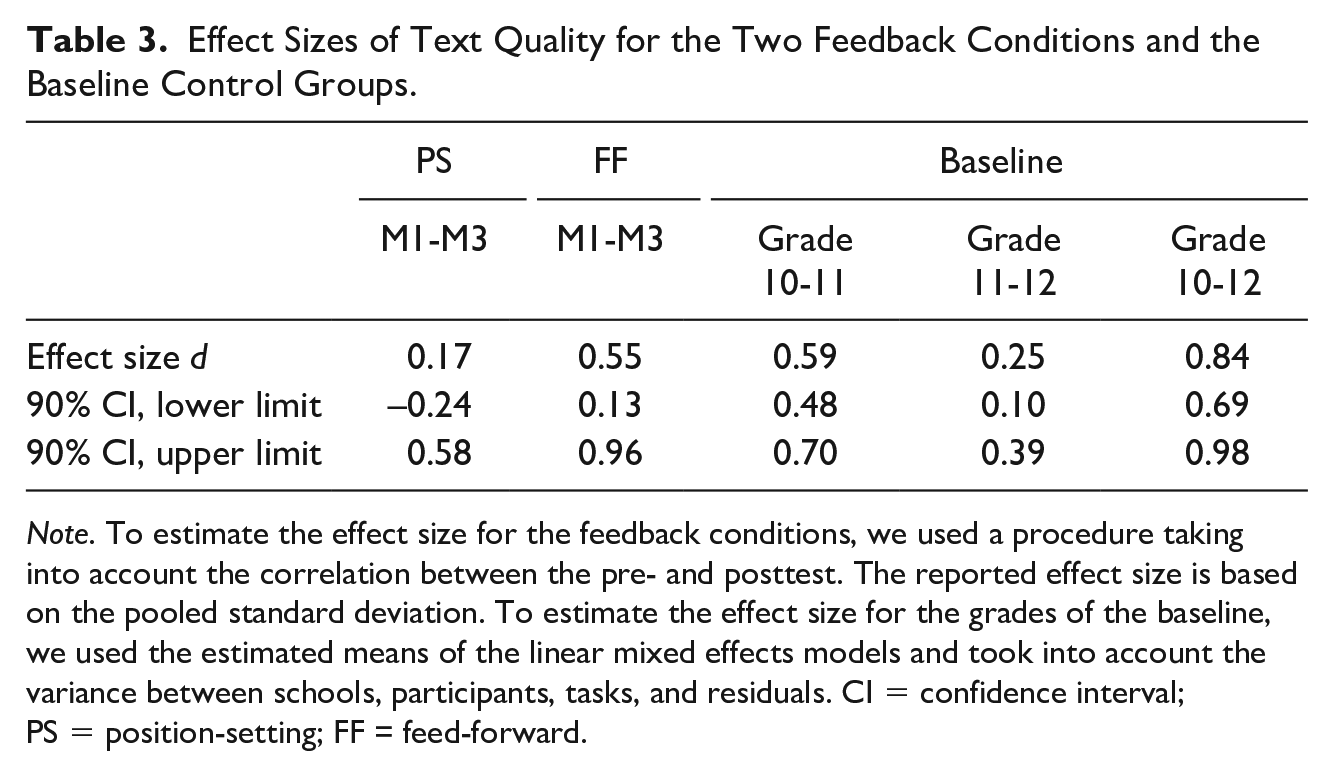

To estimate the effect of the feedback, we compared the feedback effect to the grade effect based on effect sizes. In this way, the effect of the feedback was compared to the progress students make during 1 year of regular schooling. First, we calculated the effect size between M1 and M3 of each of the feedback conditions to estimate the feedback effect. Secondly, we calculated the effect size for the differences between the three grades of the national baseline to estimate the grade effect. Table 3 gives an overview of the various effect sizes.

Effect Sizes of Text Quality for the Two Feedback Conditions and the Baseline Control Groups.

Note. To estimate the effect size for the feedback conditions, we used a procedure taking into account the correlation between the pre- and posttest. The reported effect size is based on the pooled standard deviation. To estimate the effect size for the grades of the baseline, we used the estimated means of the linear mixed effects models and took into account the variance between schools, participants, tasks, and residuals. CI = confidence interval; PS = position-setting; FF = feed-forward.
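To make the first part of this note more concrete, the sketch below shows one common way of computing a pre-post effect size on the pooled standard deviation, with a standard error that takes the pre-post correlation into account. This is an assumption for illustration: the note does not specify the exact procedure, and the scores are invented toy values, not study data.

```python
import math
from statistics import mean, stdev

def pearson_r(x, y):
    """Pearson correlation between two equally long lists of scores."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def paired_effect_size(pre, post):
    """Return (d, standard error) for a pretest-posttest comparison."""
    n = len(pre)
    # Pooled SD of pretest and posttest (same n at both occasions).
    sd_pooled = math.sqrt((stdev(pre) ** 2 + stdev(post) ** 2) / 2)
    d = (mean(post) - mean(pre)) / sd_pooled
    # ASSUMPTION: textbook variance approximation for a paired design;
    # a higher pre-post correlation r gives a smaller standard error.
    r = pearson_r(pre, post)
    var_d = (1 / n + d ** 2 / (2 * n)) * 2 * (1 - r)
    return d, math.sqrt(var_d)

# Toy scores (hypothetical) for five students at M1 and M3.
pre = [90, 100, 95, 105, 110]
post = [98, 104, 101, 115, 112]
d, se = paired_effect_size(pre, post)
```

The correlation does not change d itself in this formulation; it only affects the precision with which d is estimated.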

For the PS condition, the effect size is so small that the effect is negligible (d = 0.17).

For the complete feedback program of FF, the effect size indicates an intermediate effect (d = 0.55). We can then compare the progress made by the Grade 10 participants of the intervention study in the FF condition (i.e., the feedback effect) to the progress made by the Grade 10 participants of the baseline (i.e., the grade effect). Both have an intermediate effect (d = 0.55 for feedback effect; d = 0.59 for grade effect), and there is no significant difference between the two effect sizes (z = –0.13, p = .895). We can thus conclude that the process feedback in the FF condition had an effect comparable to 1 year of regular schooling.
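A comparison of two independent effect sizes of this kind is commonly carried out with a z test on their difference. The sketch below illustrates that general technique; it is not necessarily the exact test used here. The standard errors are placeholders, because they are not reported in the text, so the resulting z and p will not reproduce the reported values exactly.

```python
import math

def compare_effect_sizes(d1, se1, d2, se2):
    """z test for the difference between two independent effect sizes."""
    z = (d1 - d2) / math.sqrt(se1 ** 2 + se2 ** 2)
    return z, two_sided_p(z)

def two_sided_p(z):
    """Two-sided p value under the standard normal distribution."""
    return math.erfc(abs(z) / math.sqrt(2))

# d values from the text; the standard errors are HYPOTHETICAL placeholders.
z, p = compare_effect_sizes(d1=0.55, se1=0.20, d2=0.59, se2=0.22)
```

A negative z indicates that the first effect size (here the feedback effect) is the smaller of the two, and a large p value means that the difference cannot be distinguished from zero.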

Figure 2 shows the average text quality score with standard error bars for the two feedback conditions (PS and FF) at the three measurement occasions. It also visualizes the three baseline groups (BC-g10, BC-g11, and BC-g12), namely, the Grade 10, 11, and 12 students of the baseline, by plotting the average text quality score with error bars for each of the three baseline control groups. The middle of each colored grade block (indicated by the placing of the label) represents the average score. The top and bottom of each colored block represent the end points of the error bars.

Text quality: feedback conditions compared to the baseline of Grades 10, 11, and 12.

A visual exploration of Figure 2 confirms the results of the effect size comparison. The graph shows the progression of the participants in the FF condition. At M1, the range of FF is situated almost completely below the baseline of Grade 10 students; as the preanalysis check showed, the students’ initial performance in this condition was lower than the baseline. However, after two feedback moments, the range of FF shows great overlap with not only BC-g10 but also with BC-g11. The average score in FF at M3 is slightly higher than the average in BC-g10.

Effect of Feedback on Writing Process

The table in Appendix F reports the means and standard deviations for the process variables under study for the two feedback conditions (PS and FF) and the three baseline control groups (BC-g10, BC-g11, and BC-g12).

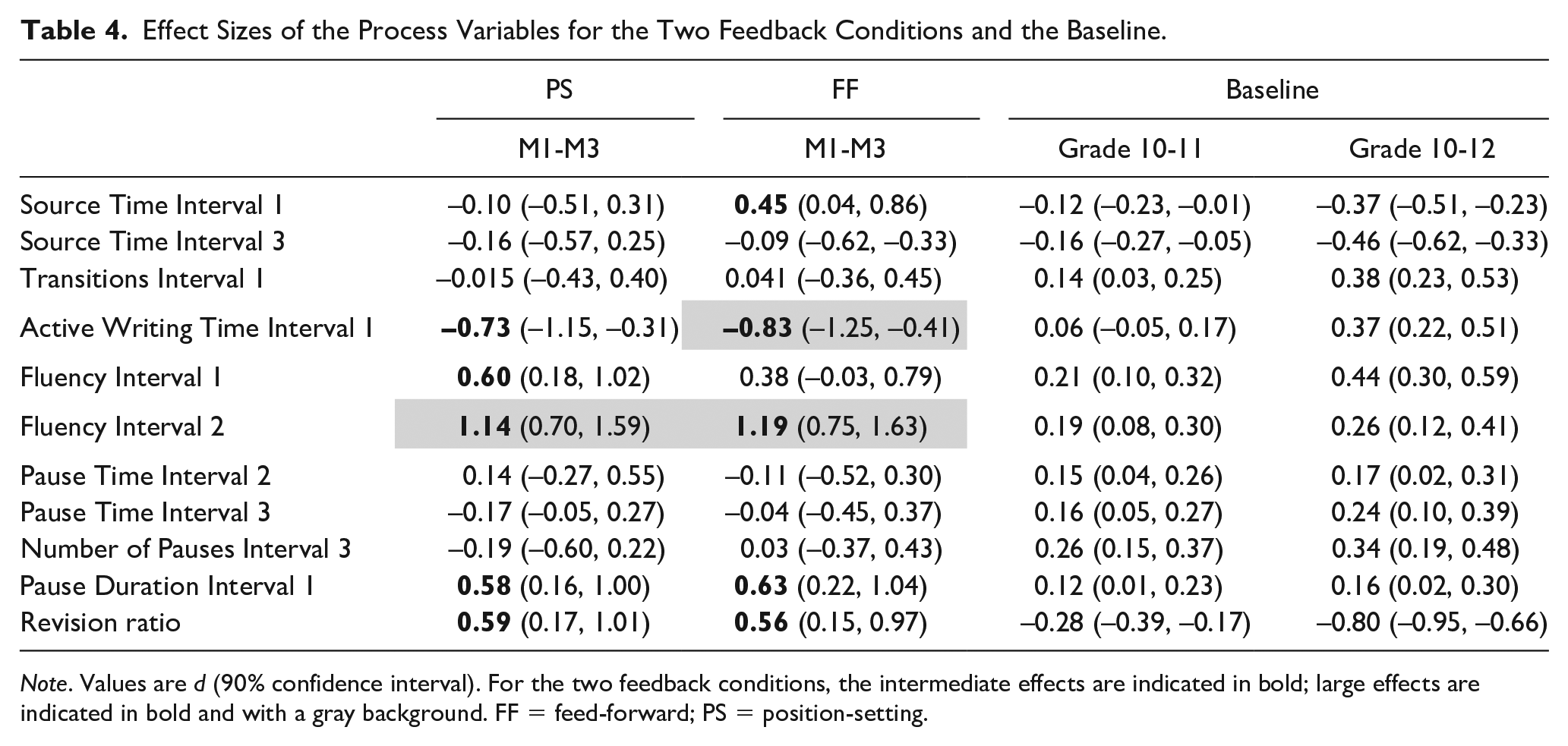

Table 4 shows the effect sizes for the difference between M1 and M3 in the two feedback conditions PS and FF, and for the difference between Grades 10 and 11, and between 10 and 12, from the baseline. We can then compare the feedback effect with the grade effect for all process variables under study. For 6 of the 11 process variables, intermediate or large effects were found in one or both of the feedback conditions.

Effect Sizes of the Process Variables for the Two Feedback Conditions and the Baseline.

Note. Values are d (90% confidence interval). For the two feedback conditions, the intermediate effects are indicated in bold; large effects are indicated in bold and with a gray background. FF = feed-forward; PS = position-setting.

For PS, there are intermediate effects for Active Writing Time Interval 1, Fluency Interval 1, Pause Duration Interval 1, and Revision. For Fluency Interval 2, there is a large effect.

For FF, there are intermediate effects for Source Time Interval 1, Pause Duration Interval 1, and Revision. Large effects were found for Active Writing Time Interval 1 and Fluency Interval 2.

The intermediate and large effect sizes indicate on which process aspects the feedback had an effect. To interpret this effect, we compared the effect of the feedback to the grade effects of the baseline. Parallel to what we did above, this interpretation is based on (1) a comparison of effect sizes of feedback conditions and baseline and (2) a visual exploration of the graphs plotting the two feedback conditions and the three baseline grades. These comparisons lead to a number of observations.

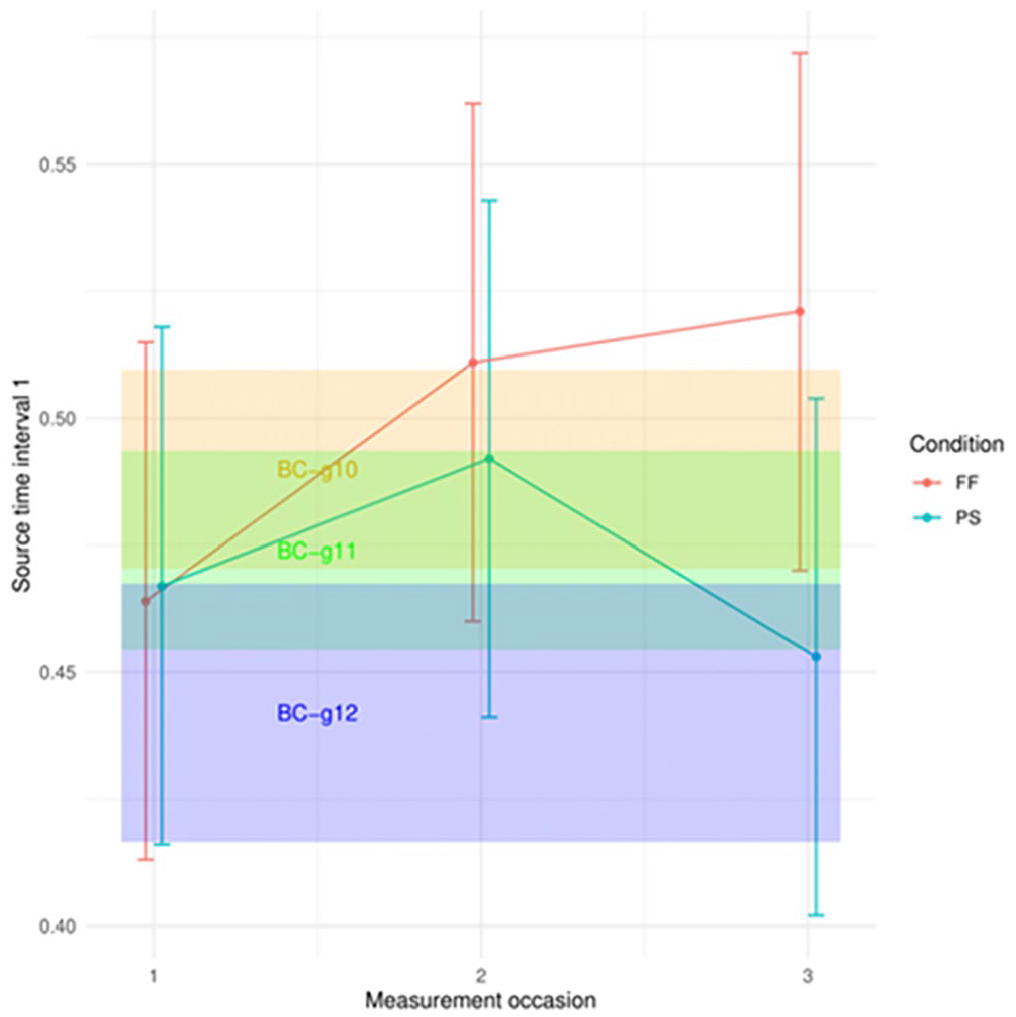

First, while students in FF spent more time in the sources during the first interval after receiving feedback (d = 0.45), the higher-grade (and thus more experienced) students from the baseline spent less time in the sources (d = –0.12 for the difference between Grades 10 and 11, and d = –0.37 for the difference between Grades 10 and 12). The difference between the effect sizes of FF feedback and Grades 10 and 12 is significant (z = 2.44, p = .015). We also observe this difference between the feedback effect and the grade effect in Figure 3. At M1, the average source time in the first interval is lower for FF compared with BC-g10 (though the difference is not significant), but after two feedback instances, it goes beyond the upper limit of the BC-g10 range. While we see a downward trend over the grades (with BC-g12 spending less time in the sources), an upward trend is noticed in the FF condition. Note that preanalysis checks showed a task effect; namely, Source Time Interval 1 was higher for the task used at M1 compared to the task used at M3. The increase in Source Time Interval 1 in the FF condition is thus a clear effect of the feedback.

Source Time Interval 1: feedback and grade effect.

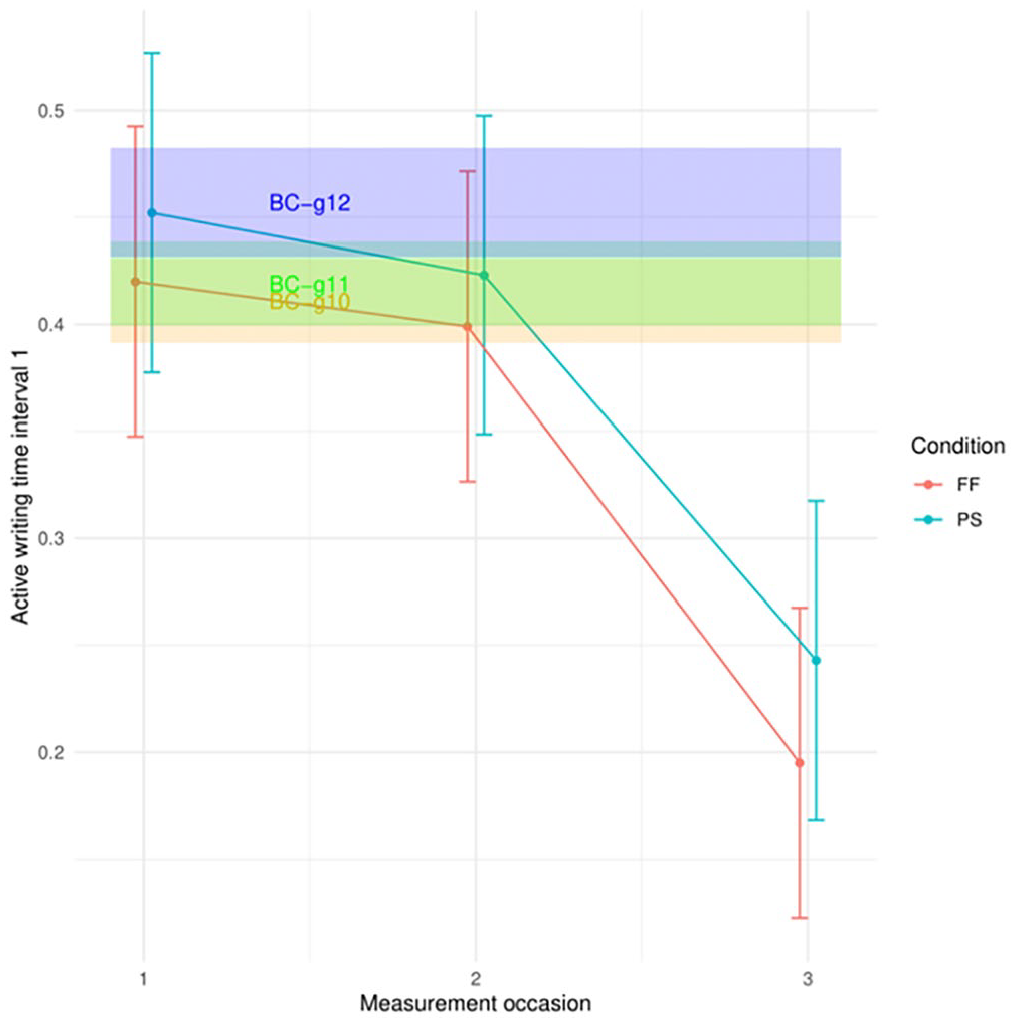

Secondly, in both conditions, the feedback has a considerable effect on the time students are actively writing their text: the effect is intermediate in PS and large in FF. They spent less time on writing during the first interval at M3 compared to M1 (d = –0.73 for PS, and d = –0.83 for FF). One year of regular schooling, however, has no effect; after 2 years, there is a small effect (d = 0.37). Moreover, the grade effect is opposite to the feedback effect as higher-grade students spent more time actively writing their synthesis text in the beginning of the process. The feedback effects differ significantly from the grade effects (z = –2.43, p = .015, for the comparison of PS and Grades 10 and 11; z = –2.75, p = .006, for the comparison of FF and Grades 10 and 11; z = –3.17, p = .002, for the comparison of PS and Grades 10 and 12; and z = –3.48, p < .001, for the comparison of FF and Grades 10 and 12). Figure 4 shows that there is great overlap between BC-g10 and BC-g11; in BC-g12, students tend to spend more time actively writing their synthesis text. At M1, the average active writing time in the first interval of the FF condition is situated within the range of BC-g10 and BC-g11, and the average of the PS condition lies within BC-g12 (though there is no significant difference for this variable between PS at M1 and BC-g10). Figure 4 confirms the effect of the feedback on the active writing time in interval 1 in both conditions. After having received feedback at two moments, the students in PS and FF spent considerably less time on actively writing their text than any of the baseline controls. Note that preanalysis checks showed a task effect; namely, Active Writing Time Interval 1 was lower for the task used at M1 compared to the task used at M3. The decrease in Active Writing Time Interval 1 in the two feedback conditions is thus a clear effect of the feedback.

Active Writing Time Interval 1: feedback and grade effect.

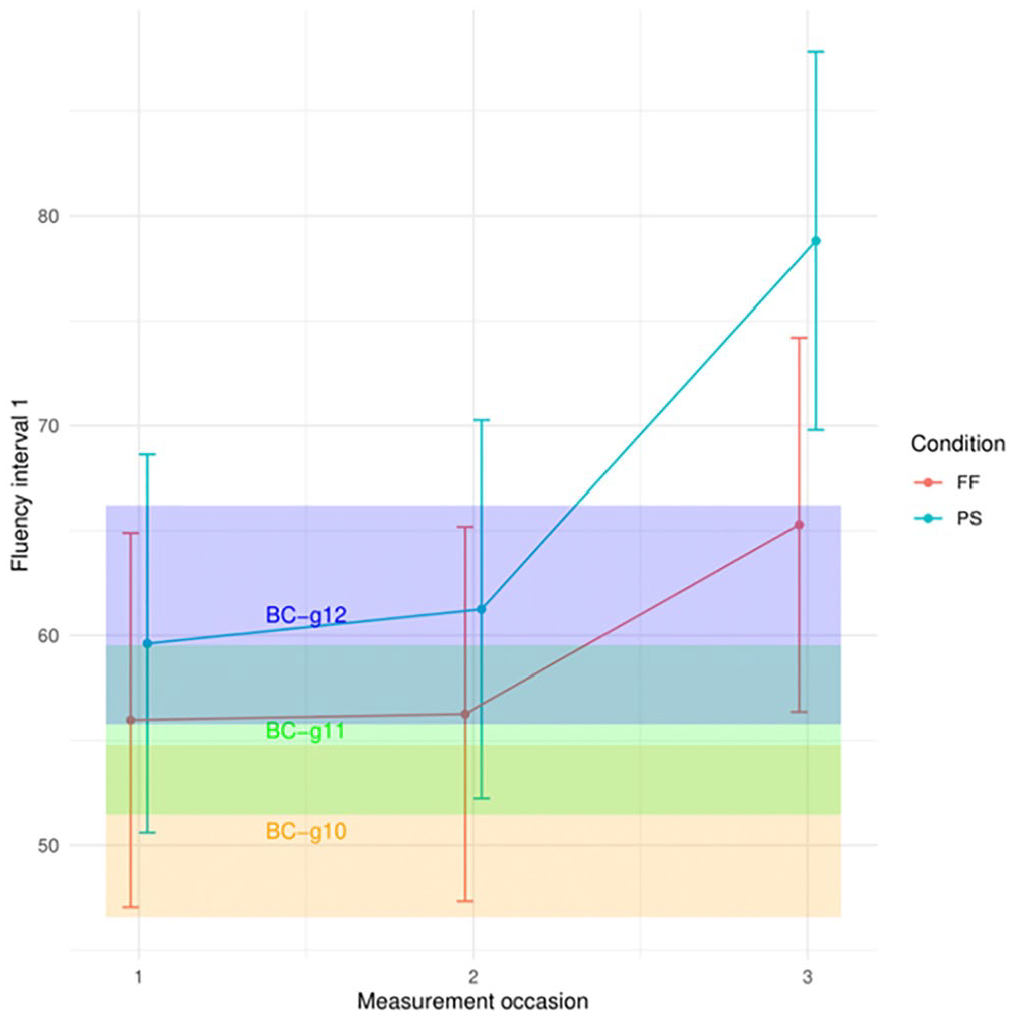

Thirdly, for Fluency Interval 1, there is an intermediate effect for the feedback in the PS condition (d = 0.60), while the grade effect is small (d = 0.21); the difference between the effect sizes is not significant (z = 1.20, p = .229). Students produced more keystrokes per minute during the first interval at M3, after receiving PS feedback, than at M1. The preanalysis checks showed that this effect could be related to the task, as Fluency Interval 1 was lower for the task used at M1. Figure 5, however, suggests that the effect found in the intervention may not only be the consequence of the task, given that the average fluency in interval 1 at M3 is beyond the range of the three grades for PS. Note that while this graph suggests that the Grade 10 students in the two feedback conditions scored higher than the average of BC-g10 at M1, the preanalysis checks above showed no significant initial difference between the feedback conditions and baselines.

Fluency Interval 1: feedback and grade effect.

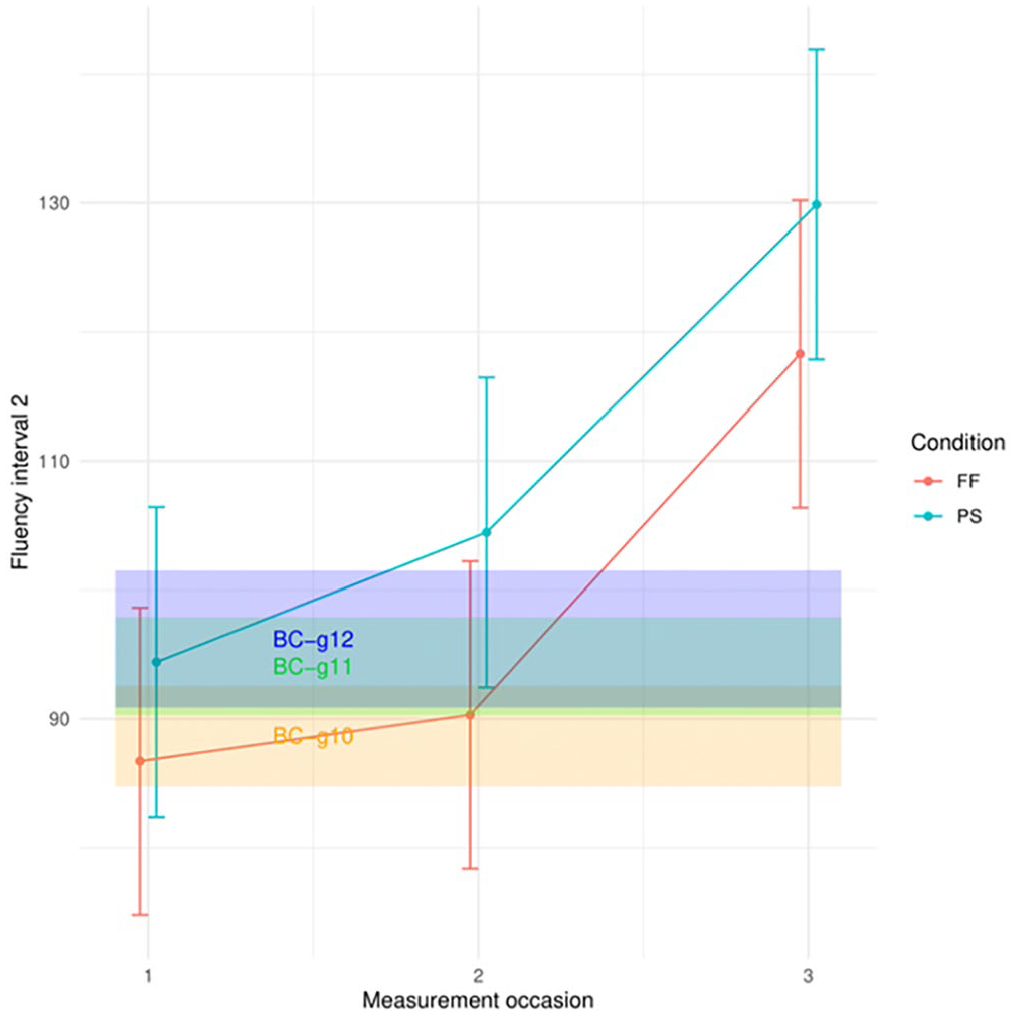

Fourth, in the second interval, there is a large effect of the feedback on production fluency. The effect in both PS (d = 1.14) and FF (d = 1.19) far exceeds the grade effects (d = 0.19 and d = 0.26). The feedback effects significantly differ from the effect of 2 years of regular schooling (z = 2.46, p = .014, for PS, and z = 2.62, p = .009, for FF). Figure 6 illustrates that at M3, both PS and FF exceed the ranges of all three baselines.

Fluency Interval 2: feedback and grade effect.

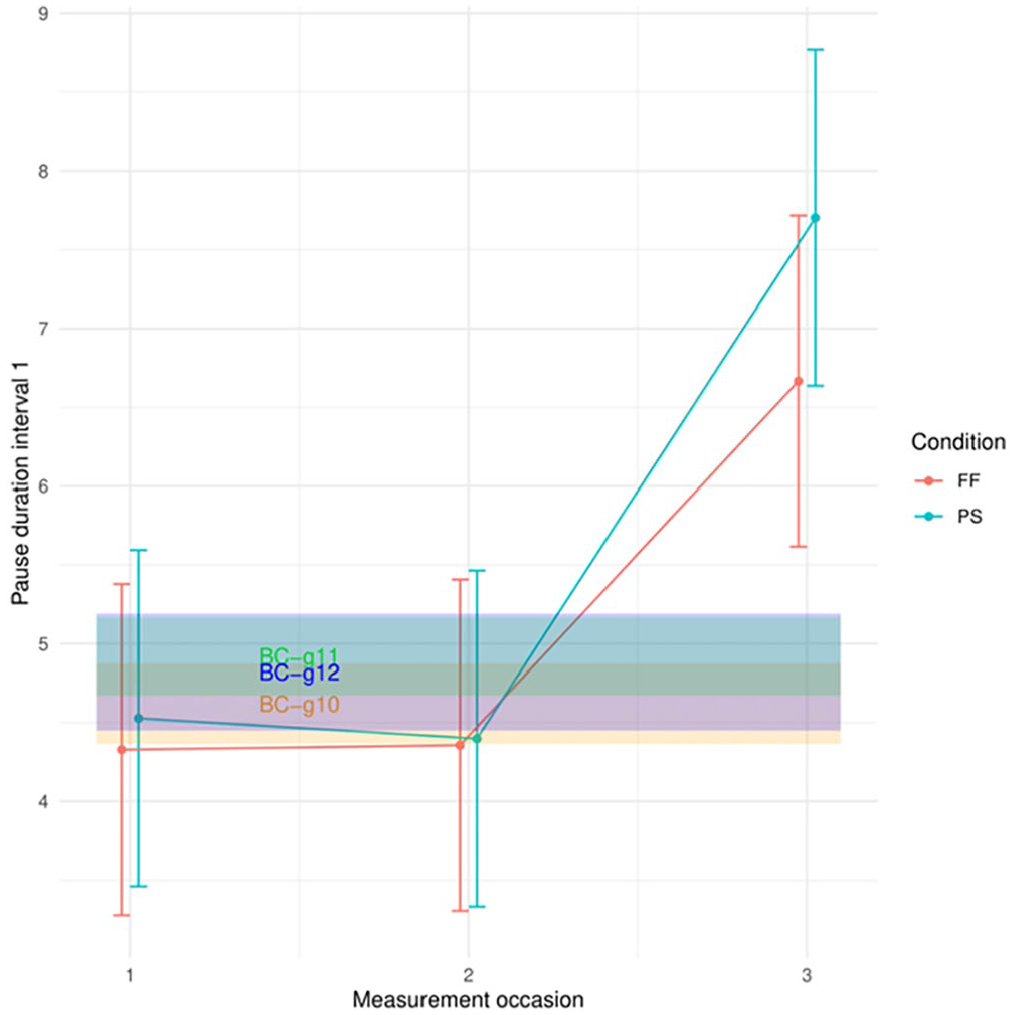

Fifth, for Pause Duration Interval 1, the effect sizes of the grades are small (d = 0.12 and d = 0.16), but we see an intermediate effect in both PS (d = 0.58) and FF (d = 0.63): the average duration of a pause during the first interval was longer at M3 compared to M1. A significance test showed that the feedback effects do not differ significantly from the grade effects. Figure 7 shows that, at M3, the average duration of a pause in the first interval of the process is remarkably higher in the two feedback conditions compared to the three grades of the baseline.

Pause Duration Interval 1: feedback and grade effect.

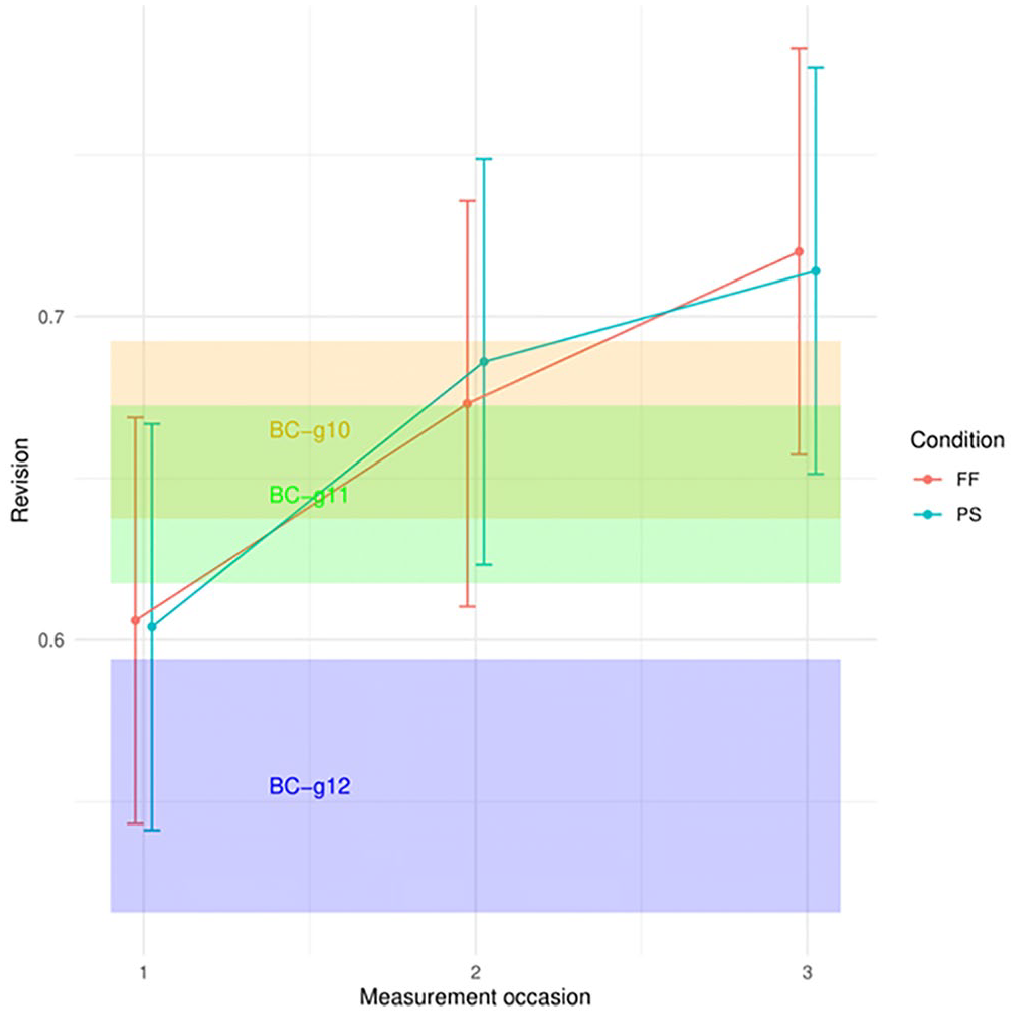

Sixth, Figure 8 shows that the feedback also has an effect on revision. After receiving feedback at two instances, the product-process ratio was higher, implying that more of what was typed during the process ended up in the final text (d = 0.59 for PS and d = 0.56 for FF). In other words, less produced text was deleted throughout the writing process. So, in general, the feedback makes students revise less. When we look at grade effects, we see a small effect size after 1 year (d = –0.28), but after 2 years, there is a large effect (d = –0.80): the product-process ratio is lower in Grade 12 than in Grade 10; thus, Grade 12 students revise more than Grade 10 students. Feedback effects and grade effects differ significantly (z = 4.04, p < .001, for the comparison of PS and Grades 10 and 12; and z = 4.00, p < .001, for the comparison of FF and Grades 10 and 12). Figure 8 illustrates the opposite tendencies when comparing feedback effect to grade effect.

Revision ratio: Feedback and grade effect.

Students’ Perceptions on Process-Oriented Feedback

Descriptive statistics

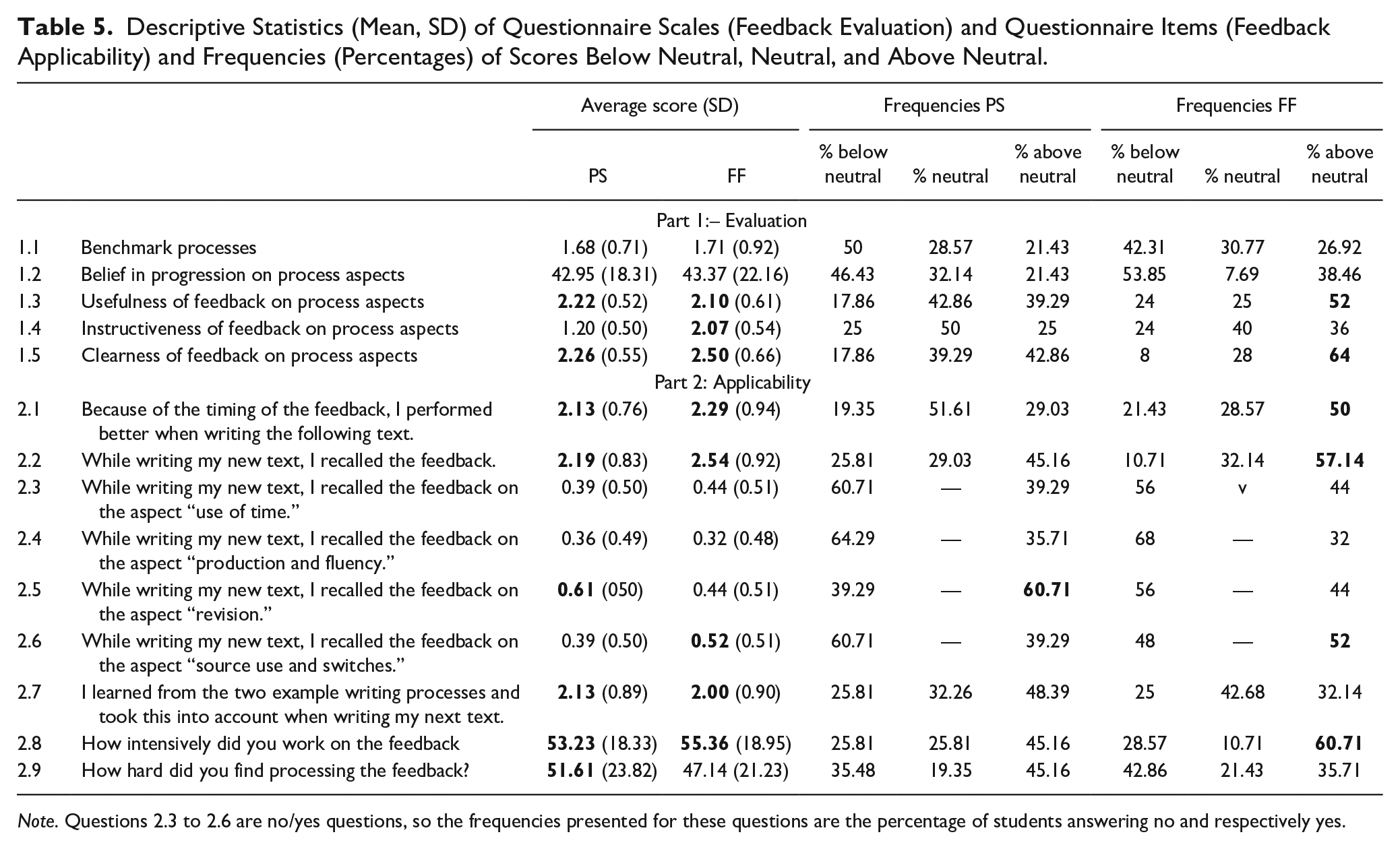

To get insight into the students’ perception of the feedback, we explored the descriptive statistics of the questionnaire scales and items measuring their evaluation and perception of applicability of the feedback. Table 5 presents the averages and standard deviations. Furthermore, Table 5 shows the percentage of participants scoring below neutral, neutral, or above neutral.

Descriptive Statistics (Mean, SD) of Questionnaire Scales (Feedback Evaluation) and Questionnaire Items (Feedback Applicability) and Frequencies (Percentages) of Scores Below Neutral, Neutral, and Above Neutral.

Note. Questions 2.3 to 2.6 are no/yes questions, so the frequencies presented for these questions are the percentages of students answering no and yes, respectively.

Questions 2.3 to 2.6 were no(0)/yes(1) questions. Part of the questions (1.1, 1.3 to 2.2, and 2.7) were 5-point Likert scale questions with answers ranging from 0 (completely disagree) to 4 (completely agree). For the other questions (1.2, 2.8, and 2.9), an 11-point Likert scale was used, ranging from 0 to 100. The scales and items for which the average scores were above neutral (i.e., a score of 2 for the 5-point Likert scale questions, and 50 for the 11-point Likert scale questions) in at least one of the two feedback conditions are indicated in bold in Table 5. Also, scales and items for which at least 50% of the participants scored above neutral are indicated in bold in Table 5.

The feedback on the various process aspects was perceived as moderately useful and clear in both feedback conditions, with evaluation scores slightly above neutral. Only in the FF condition did more than half of the participants agree: 52% of the participants evaluated the feedback as useful, and 64% found it clear. The feedback was also perceived as rather instructive in the case of the FF condition. We see that students in both conditions appreciated, to some extent, the fact that they received feedback after 2 days. On average, students took time to reflect on their writing process in both conditions. They expressed that they recalled the feedback while writing a new text, including the information they got from the exemplar writing processes. The average scores for these two items were only slightly above neutral. However, a more positive evaluation is noticed for the FF condition, with 57% of the participants indicating that they recalled the feedback while writing. Nevertheless, when asked about recalling the feedback for each of the four specific writing process aspects, scores were rather low. Only the feedback on revision (for PS) and source use (for FF) was recalled while writing a new text by more than 50% of the participants. Moreover, we see that students found it rather hard to process the feedback. On average, students put effort into the feedback, with 45% of the students in PS and 60% of the students in FF reporting that they worked intensively on the feedback.

Correlations between feedback perception and performance

The correlations between the progress the students made from pre- to posttest, and the scores on the questions from the feedback perception questionnaire may indicate which elements of the feedback could be decisive for its effect on students’ performance in each of the feedback conditions. The students who made most progress in the PS condition indicated that they recalled the feedback while writing, r(29) = .448, p = .012. For the FF condition, more correlations were found. The students making most progress in the FF condition reported a stronger belief in the possibility to make progress on various process aspects, r(24) = .497, p = .010, and they found the feedback on the process aspects instructive, r(23) = .489, p = .013. They appreciated the timing of the feedback more, r(26) = .423, p = .025. Moreover, they reported that they recalled the feedback on the aspect “use of time” more than the students who made no or little progress, r(23) = .467, p = .019.
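For readers less familiar with the r(df) notation used above, the sketch below shows how such a correlation between progress and a questionnaire item can be computed, and how r and its degrees of freedom (df = n - 2) relate to the t statistic on which the p value is based. The data are invented toy values and the variable names are illustrative.

```python
import math
from statistics import mean

def pearson_r(x, y):
    """Pearson correlation between two equally long lists of scores."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def t_from_r(r, df):
    """t statistic for testing whether a correlation differs from zero."""
    return r * math.sqrt(df / (1 - r ** 2))

# Toy data (hypothetical): progress from M1 to M3 and a 0-4 questionnaire item.
progress = [2, 5, 1, 8, 4, 7]
recall_item = [1, 3, 1, 4, 2, 3]
r = pearson_r(progress, recall_item)
df = len(progress) - 2

# The t statistic that corresponds to a reported value such as r(29) = .448.
t_reported = t_from_r(0.448, 29)
```

The p value then follows from the t distribution with the same df, which requires a statistics package and is therefore left out of this sketch.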

Discussion

This intervention study aimed at testing the effect of a personalized and empirically based way of giving feedback on students’ writing process. Based on keystroke logging data, we offered 65 Grade 10 students insight into several aspects of their writing process. Students were also given exemplar processes relative to their own performance. More concretely, they were shown writing processes of equal-scoring students (in the position-setting condition) or of higher-scoring students (in the feed-forward condition). Exemplars were selected from a previously carried out national baseline study (Vandermeulen, De Maeyer, et al., 2020) and showed a variety of writing process approaches. The intervention took place in 1 week’s time over three measurement occasions (M1, M2, M3). Students received feedback on their previous writing process at two moments (M2, M3), prior to writing a new text.

The aim of this study was three-fold as we wanted to test the effect of the feedback on the students’ writing performance (Aim 1) and writing process (Aim 2). Moreover, we wanted to know how students evaluated the feedback (Aim 3). In this discussion, we not only give an overview of the results, but we also offer interpretations for the effects found on text quality and writing process, and the differences in effects for the two feedback conditions. We embed the study in previous research and highlight its importance. Implications for future research and implementation in education are discussed. We consider limitations of this study, and to address them, offer recommendations for future research.

Regarding the effect of the feedback on the students’ writing performance, results showed that the position-setting process-oriented feedback had no effect on the quality of the students’ texts. Students in this condition did not perform significantly better at M3 compared to M1 as the effect size of the feedback was very small (d = 0.168). The feedback in the feed-forward condition, however, had a positive effect on students’ writing performance. Students in this condition scored higher at M3 compared to M1 (d = 0.546). When comparing the progress made by the Grade 10 participants of the intervention study to the progress made by the Grade 10 participants in the baseline (i.e., the difference between Grades 10 and 11), we concluded that the feed-forward process feedback had an effect comparable to 1 year of regular schooling (d = 0.59).

Regarding the effect of the feedback on the writing process, effect sizes indicated that the feedback had an effect on several aspects of the writing process. In both feedback conditions, feedback effects were found for production-related variables, a pause-related variable, and revision. In the case of the feed-forward condition, the feedback also had an effect on the source use.

These results now raise two questions: (1) Why did the students who received feed-forward feedback perform better at the end of the intervention? and (2) Why did the feedback in the position-setting condition not have a significant effect on the students’ writing performance? To answer these questions thoroughly, more research specifically focusing on these questions is needed. Nevertheless, we can provide some indications based on our study.

The positive effect of the feed-forward process-oriented feedback may be related to the effect it had on the writing process. Students approached the writing process differently after the feed-forward feedback. At the beginning of the writing process (Interval 1), students spent more time in the sources at M3 compared to M1 (d = 0.45). The feedback may have made students more aware of the importance of the sources, resulting in more time dedicated to processing the sources (Beauvais et al., 2011; Bråten et al., 2018). Related to this, students spent less time actively writing their synthesis text in the first part of the writing process (d = –0.83), and when they paused during production in the first part of the process, the average pause duration was longer (d = 0.63). This increased mean pause duration may indicate cognitive effort (Baaijen et al., 2012). When producing text in the middle part of the writing process, the students wrote more fluently, as more keystrokes per minute were produced (d = 1.15). In other words, the feed-forward feedback resulted in faster text production in the middle of the process. For the overall writing process, the product-process ratio (as a general measure of revision) was higher (d = 0.56); in other words, more of what was produced during the process ended up in the final text. So, the feedback resulted in less revision.

To interpret these changes in the writing process, we compared them to the results of a previous study in which we mapped the development of writing process variables in synthesis writing over the three highest grades of upper-secondary education (Vandermeulen, De Maeyer, et al., 2020). We compared the feedback effect to the grade effect: the progression in the condition with feed-forward feedback was compared to the progress students normally make during a school year. For the beginning of the writing process, there is little change between Grades 10 and 11 for two variables: time spent in the sources (d = –0.12) and active writing time (d = 0.06). There is, however, change over 2 years; compared to Grade 10 students, students in Grade 12 generally spend less time in the sources (d = –0.37) and spend more time actively writing their text (d = 0.37) in the first interval. So, the evolution over the grades runs opposite to what happens in the feed-forward condition (d = 0.45 for proportion of time in sources and d = –0.83 for proportion of active writing time). This may be related to the fact that higher-grade students have fewer problems understanding the sources and selecting relevant information; therefore, they do not need to spend as much time in the sources as the younger students. For the Grade 10 students in our intervention study, however, spending more time in the sources was probably necessary to understand the sources better and to look for relevant information. The feedback may have made them more aware of the importance of the sources. Moreover, when the students paused during production at the beginning of the writing process, the pauses were longer. The feedback effect is large (d = 0.63), while the grade effect is very small (d = 0.14).
In the middle of the writing process, the production fluency in the feed-forward condition is higher at M3 than in any of the three baseline grades (d = 1.19 for feedback effect, d = 0.19 for Grade Effect 10 and 11, d = 0.26 for Grade Effect 10 and 12). After focusing on the sources in the first interval, the second interval is dedicated to production. These findings seem to indicate that the participants of the intervention study have developed a more goal-oriented reading-writing strategy (Martínez et al., 2015). They have spent more time in the sources (reading, selecting information, comparing and contrasting), they have thought about what to write (hence, the longer pauses), and thus can produce more fluently. Additionally, we make the comparison between feedback effect and grade effect for revision for the overall process. The revision after feedback in the feed-forward condition is lower than before the feedback (d = 0.56). The low amount of revision may be related to the strategy adopted by the students after processing the feedback, namely, as they focused on the sources before starting to write, they had a more planned text production, so they had to revise less. Adding to this, we know that secondary students in general struggle with revision. This is seen in the baseline data as the effect size for the difference between Grades 10 and 11 is small (d = –0.28). Progression is only seen after 2 years of regular schooling (d = –0.80 for the difference between Grades 10 and 12). Previous research has also shown that not-so-experienced writers usually do not revise at higher-order levels (Myhill & Jones, 2007; Olmanson et al., 2016). We assume that the higher amount of revision at M1 in the feed-forward condition could be related to low-order revisions and deleting previously copy-pasted text blocks from the sources. 
These sorts of revisions occur less often when the sources are first read more carefully and text production is more goal-oriented, as was possibly the case at M3 in the feed-forward condition.

Now that we know what effect the feedback had on the writing process of students in the feed-forward condition, the question arises of how this effect differs from that in the position-setting condition. First, we see that the shift to focus more on the sources is absent in the position-setting condition. The position-setting process-oriented feedback had no effect on the time spent in the sources during the first interval (d = –0.10). As source use is key to synthesis writing (Solé et al., 2013; Vandermeulen, van den Broek, et al., 2020), this may partly explain why the participants in the position-setting condition did not write better texts at M3. Another difference in the effect of the feedback on the writing process between the two conditions was found for fluency in the first interval. In both conditions, the fluency at the beginning of the writing process increased after the feedback. In the case of the feed-forward condition, the effect size was small (d = 0.38), while in the position-setting condition, the feedback had an intermediate effect on the fluency (d = 0.60). After receiving position-setting feedback, the students concentrated on fast text production in the first interval instead of on the sources. Despite the high fluency, active writing time decreased after feedback (d = –0.73). It seems that the combination of three factors at the beginning of the process, namely, little attention to the sources, little attention to active writing, and fast text production, is key to understanding why the position-setting feedback did not have a positive impact on students’ writing performance.

An important remark should be made about the effect of feedback on the writing process so as to avoid misinterpretations. We saw changes in the writing process as a result of the feed-forward feedback (such as more time in the sources and less time actively writing at the beginning of the writing process, and writing more fluently in the middle of the process), and we know that the feed-forward feedback helped students write better texts. This, however, does not imply that a higher amount of source time and a lower amount of active writing time in the first interval and a higher production fluency in the second interval are indicative of effective writing processes. In fact, we see, for example, that source time in the first interval correlates negatively with text quality (see correlation table in Appendix E); this is in line with findings from previous research (Vandermeulen, van den Broek, et al., 2020). More generally, the patterns observed in the writing process of participants in the feed-forward condition contrast with findings from previous research on effective synthesis writing patterns (Vandermeulen, van den Broek, et al., 2020). Thus, we cannot say that the changes provoked by the feedback are indicative of high-quality texts. A more careful conclusion could be that the feed-forward feedback had an impact on the students’ writing approach; it may have made them reflect on what they were doing, which may have caused them to change their writing approach regarding certain process aspects. In other words, the feedback prompted students’ self-regulation of their writing process.

Another possible reason for the difference in effect between the two conditions is related to the main difference between the two feedback conditions, namely, the point of reference of the exemplar processes. Studies on feedback theory have shown that feedback is key in helping students reduce the gap between their current and desired level (Carless & Boud, 2018; Nicol et al., 2014). The exemplar writing processes in the feed-forward condition—by being situated within the students’ zone of proximal development (Vygotsky, 1986)—provide an example of how students could close the gap between their current performance and the objective (Hawe et al., 2019). Based on this insight, feedback that includes feed-forward does indeed seem to have more potential as it shows the process leading to a better performance. It seems plausible that the confrontation with better performing processes evokes more reflection so that the writers become more aware of certain aspects in their writing process.

Previous research has shown that students’ perception and evaluation of feedback are important factors in enabling successful feedback (Henderson et al., 2019). Since only the feed-forward condition had a positive effect on the performance of the students, we expected to see a difference in perception between the two conditions. However, the differences in feedback perception were limited and not particularly pronounced. Unlike in the position-setting condition, the majority of the students in the feed-forward condition recalled the feedback while writing a new text and reported that they worked rather intensively on the feedback. The difference in effect of the two feedback conditions is more noticeable when correlating the students’ perceptions of the feedback with their progress in writing performance. More correlations were found in the case of the feed-forward condition. These concerned aspects related to feedback appreciation, self-efficacy, applicability of the feedback when writing a new text, and the instructiveness of several process aspects. These findings may point to elements of the feedback that could be decisive for its effect on students’ performance.

This study is, to our knowledge, the first to test the effect on both the writing product and the writing process of process-oriented feedback based on a report with keystroke logging data. This study provides us with crucial information about the aspects of the writing process that are influenced by the feedback. The results showed a different effect on the process variables depending on the condition. By comparing the impact of the feedback on the writing process in the successful condition (feed-forward) with the impact on the process in the unsuccessful condition (position-setting), aspects that are likely to be crucial in the learning process of synthesis writing emerge. These insights can be used to further shape process-oriented feedback to be tested in future intervention studies. The results suggest a positive impact of self-regulation aimed at a goal-oriented reading-writing strategy in which dealing with sources in the beginning of the process in combination with fluent text production in the middle is central. Our research may have implications for classroom practice, as it shows the potential of the process report of Inputlog and the exemplar process graphs (examples of better-performing students) when embedded in a flow to stimulate reflection and self-regulation.

There are a few limitations to this study. First, as this is the first time that this type of feedback has been tested in classrooms, it is advisable to replicate the study. Moreover, follow-up studies could help us adapt the feedback materials and procedure by testing several variants. The current study worked with timed, single-version tasks. To use the feedback with other tasks, such as tasks involving multiple text versions produced in several writing sessions (e.g., draft and revision), adaptations to the Inputlog writing process report and the exemplars are needed. In order to focus on the potential of process-oriented feedback based on keystroke logging and exemplar processes, the current study did not include instruction or elements of product-oriented feedback. Future studies could add instruction to the feedback, or combine process-oriented feedback with product-oriented feedback, as both have yielded positive results in a multitude of previous intervention studies. Moreover, a delayed test could be added to the intervention study to examine possible long-term effects. Second, further research is needed to fully grasp how students process the feedback. We gained insight into this by studying the keystroke logging data and examining the feedback effect on the process, but the picture is not yet complete. Keystroke logging data could be combined with questionnaires, interviews, or focus group conversations to further examine the impact of the feedback processing. This could provide us with additional information on how the different aspects of the feedback (such as reflection, the report, the different aspects of the report, the exemplar processes, and the different aspects of the exemplar processes) contributed to the students’ self-regulation. Third, although we gained insight into shifts in the writing process caused by the feedback, it would be interesting to look further into the writing processes.
We mapped the writing process based on a carefully chosen but limited set of writing process variables. The (synthesis) writing process is very complex; many variables, and the interaction between the various variables, are worth investigating. A more in-depth analysis of the writing process could give us more insight into the feedback’s effect on the process.