Abstract

Do students learn from video lessons presented by pedagogical agents of different racial and gender types equivalently to those delivered by a real human instructor? How do the race and gender of these agents impact students’ learning experiences and outcomes? In this between-subjects study, college students were randomly assigned to view one of six versions of a 9-minute video lesson on chemical bonds, presented by pedagogical agents varying in gender (male, female) and race (Asian, Black, White), or to view the original lesson with a real human instructor. In comparing learning with a human instructor versus learning with pedagogical agents of various races and genders, ANOVAs revealed no significant differences in learning outcomes (retention and transfer scores) or learner emotions, but students reported a stronger social connection with the human instructor than with pedagogical agents. Students reported stronger positive emotions and social connections with female agents than with male agents. Additionally, there was limited evidence of a race-matching effect, with White students showing greater positive emotion while learning with pedagogical agents of the same race. These findings highlight the limitations of pedagogical agents compared to human instructors in video lessons, while partially reflecting gender stereotypes and intergroup bias in instructor evaluations.

Introduction

Objective and Rationale

Consider a learning scenario in which a student sits at a computer screen that delivers a video lesson on a science topic. The instructor presenting the lesson on the screen is either a real human instructor or a pedagogical agent of a particular gender (male, female) and race (Asian, Black, White). Do students learn better with the real human instructor than with the pedagogical agents? Can the gender and race of the pedagogical agents affect students’ learning experiences (i.e., affective and social processes) and learning outcomes (i.e., cognitive processes)? The present study addresses these issues.

The motivation for this study arises from the potential influence of the instructor’s race and gender on learners’ emotional, social, and cognitive processing during learning from video lectures. By using pedagogical agents, we can replicate the original lesson delivered by a human instructor, altering only the instructor’s race and gender. This approach has the potential to enhance diversity in online education. Unlike human instructors, whose identities are fixed, pedagogical agents offer flexibility, allowing for customizable appearances and voices that may provide a more inclusive learning experience for students from diverse backgrounds. Therefore, this study aimed to investigate whether pedagogical agents are as effective as, or more effective than, human instructors for students, and whether students react differently to pedagogical agents of certain genders or races.

In terms of theoretical implications, this research also seeks to examine whether theories such as the Media Equation Theory (Reeves & Nass, 1996), the Alliance Hypothesis (Taylor et al., 1978), and the Matching Hypothesis (Kalick & Hamilton, 1996) can be extended to learning with pedagogical virtual agents. The Media Equation Theory suggests that learners interact with pedagogical agents in the same way they do with real humans, indicating that pedagogical agents in video lessons could be just as effective as human instructors, while also being subject to similar racial and gender biases. Theories based on intergroup bias, such as the Alliance Hypothesis and the Matching Hypothesis, suggest that students tend to favor instructors who share their racial or gender characteristics, raising the important question of whether such preferences extend to pedagogical agents. In terms of practical implications, the findings of this investigation are intended to provide insights into the gender and racial design of animated pedagogical agents, underscoring their potential to promote inclusivity and diversity in multimedia learning environments.

Theoretical Background

Media Equation Theory

Media Equation Theory, introduced by Reeves and Nass (1996; 2005), argues that people tend to instinctively respond to computers and media as if they were real human beings. This response is rooted in the human brain’s natural tendency to apply social and cognitive behaviors to non-human entities (Rosenthal-Von Der Putten et al., 2014). As a result, under certain conditions, people could interact with digital agents in virtual settings as if they were interacting with actual people. A range of studies (Lawson et al., 2021a, 2021b, 2021c; Lawson & Mayer, 2021; Nass et al., 1997; Nass & Steuer, 1993; Zhao & Mayer, 2023a, 2023b) has provided support for this theory. These investigations have examined whether behaviors typically seen in human-to-human or human-environment interactions—such as social connections, emotional reactions, and gender-based responses—are similarly reflected in interactions between humans and technology.

For example, one study by Nass and Steuer (1993) explored social connections and emotional reactions in polite interactions with computers. Participants were asked to evaluate a computer’s performance in teaching a lesson, and were randomly assigned to provide feedback either on the same computer they had used for the lesson or on a different computer in another room. Interestingly, those who evaluated on the same computer gave significantly more favorable responses than those who evaluated on a different computer, suggesting a form of politeness toward the machine. This phenomenon was later replicated in both text- and voice-based interactions, indicating that people tend to act politely toward computers, as if avoiding hurting the computer’s “feelings.”

In a more recent study, Zhao and Mayer (2023b) conducted two experiments to explore the effects of lesson design (cartoon vs. original slides) and voice type (machine vs. human) on learning experiences and outcomes. Participants viewed either cartoon-like slides or original line-drawn slides, narrated by either a machine-synthesized voice or a human voice. The findings revealed no significant interaction between lesson design and voice type, suggesting that both types of voices led to similar effects on students’ emotions, social connections with instructors, and learning outcomes.

Another study investigated gender stereotypes by assigning computer voices to topics typically associated with either men or women (Nass et al., 1997). The results showed that participants easily detected gender differences and applied human-like stereotypes to the computer agents, based solely on the voice. This demonstrates that people engage in social interactions with technology, whether through text or voice, and that these behaviors are triggered by subtle cues in the interaction.

Additionally, a more recent study by Zhao et al. (2024) asked participants to observe virtual agents of different races and genders and then identify their characteristics. Although this study did not investigate the effect of those various virtual agents on students’ learning, the findings indicated that individuals could accurately recognize the race and gender of virtual agents, much like they do with real people.

In summary, several studies supporting the Media Equation Theory show that individuals can form social connections with technological entities, such as computers, machine voices, and virtual agents. However, the specific influence of the race and gender of virtual agents on students’ learning remains an area that requires further exploration, particularly in terms of their effectiveness compared to human instructors.

Grounded in the Media Equation Theory, which suggests that people respond to technological entities as they would to real humans, this study explores how pedagogical virtual agents with diverse racial and gender identities influence students’ learning experiences (i.e., affective and social dimensions) and academic performance (i.e., cognitive processes). Specifically, it seeks to expand the theoretical framework by investigating whether learners engage with virtual agents in video-based learning environments in ways that parallel human-to-human interactions. Given the theory’s premise that non-human entities can elicit human-like responses, this study utilizes sophisticated 3D modeling techniques to create pedagogical agents based on an original female human instructor. These agents represent six distinct identities: Asian Female, Asian Male, Black Female, Black Male, White Female, and White Male.

Guided by the Media Equation Theory, this research seeks to determine whether students taught by these different types of pedagogical agents achieve equivalent or superior learning outcomes compared to those taught by a real human instructor. Furthermore, this study examines whether the racial and gender identities of the agents influence students’ learning experiences, including their affective feelings and social connections with instructors, as suggested by the previous findings of human-like social and emotional interactions with media (Lawson et al., 2021a, 2021b, 2021c; Lawson & Mayer, 2021; Zhao & Mayer, 2023a, 2023b). By addressing these questions, the study not only extends the Media Equation Theory into the domain of multimedia education but also provides practical insights into the design and application of diverse pedagogical agents. The findings can potentially promote a more inclusive and equitable learning environment, benefiting students from various cultural and social backgrounds.

Racial and Gender Stereotypes in Instructor Evaluations

The influence of gender and racial stereotypes on instructor evaluations has been widely studied, with evidence suggesting that students’ perceptions of their instructors are deeply shaped by these biases (Eagly & Wood, 2012; Kierstead et al., 1988).

Specifically, Social Role Theory (Eagly & Wood, 2012) posits that gender roles are shaped mainly by the division of labor within society, with women typically perceived as nurturing and emotionally expressive, while men are viewed as assertive, dominant, and competent. These stereotypical perceptions are often extended to the classroom, influencing how students perceive and evaluate their instructors based on gender. A study by Kierstead et al. (1988) explored the impact of gender stereotypes on the evaluations of instructors. Their findings revealed that female instructors who demonstrated warmth—such as by smiling or engaging in informal social interactions with students—received more favorable evaluations. These behaviors reinforced the stereotype of women as nurturing and approachable, suggesting that female instructors are often held to different standards.

In contrast, male instructors’ evaluations were largely unaffected by similar behaviors, highlighting a gender bias in how professional competence is perceived. Female instructors, therefore, may be expected to display warmth to receive positive evaluations, while male instructors are judged more on authority and competence. For example, Renström and colleagues (2021) examined how gender stereotypes influence student evaluations of teaching (SET), showing that female lecturers are evaluated based on adherence to traditional gender roles. Feminine traits (e.g., nurturing) are linked to higher likability, while masculine traits (e.g., assertiveness) are associated with competence. However, research by Khokhlova et al. (2023) challenges earlier findings by showing that male virtual instructors in higher education received significantly higher ratings than female virtual instructors, particularly on traits such as enthusiasm and expressiveness—traits typically associated with female instructors.

In addition to gender, racial stereotypes also heavily influence instructor evaluations by students. For example, a study conducted by Campbell (2023) focused on racial disparities in teacher evaluations within North Carolina’s Teacher Evaluation System (TES), specifically among female teachers. The findings highlight that Black female teachers consistently received lower classroom observation ratings compared to White female teachers, despite exhibiting similar levels of teaching effectiveness. In addition, the intersection of race and gender in student evaluations is further evidenced by Reid (2010), who analyzed ratings from RateMyProfessors.com. Minority faculty, particularly Black and Asian professors, received lower ratings in overall quality, helpfulness, and clarity compared to their White counterparts. These evaluations were often skewed by racial stereotypes, despite the actual effectiveness of the instructor. The results also showed that gender played a less pronounced role overall, but Black male professors were evaluated more harshly than other groups, which highlights the compounded impact of both race and gender in instructor evaluations.

Furthermore, Anderson (2010) examined the double-layered impact of race and gender on student perceptions of professors. The study found that female professors, particularly Latina professors, were rated higher in warmth when teaching traditionally feminine courses such as composition. However, Latina professors faced polarized evaluations depending on their teaching style, a phenomenon known as response amplification. Students either excessively praised or penalized them based on whether their teaching conformed to stereotypical expectations, revealing how both race and gender intersect to influence student perceptions.

As another example, Basow et al. (2013) examined how race and gender stereotypes influence both student evaluations and academic performance. The study found that Black and female professors consistently received lower evaluations compared to their White and male counterparts, despite delivering similar teaching performance. Additionally, female professors were expected to display nurturing behaviors in order to receive positive evaluations, while male professors were rewarded for demonstrating authority and competence. These stereotypes not only skewed evaluations but also affected students’ academic outcomes. Specifically, students performed better when their professors aligned with societal expectations. For example, male or White professors were perceived as more competent and, therefore, more effective in enhancing student performance. In contrast, minority professors, especially Black women, faced biases that undermined their credibility and effectiveness, ultimately negatively impacting student learning outcomes.

In conclusion, while the literature demonstrates that racial and gender stereotypes significantly influence student evaluations of instructors, there is no clear consensus on how these biases affect students’ learning experiences (i.e., affective and social processes) and learning outcomes (i.e., cognitive processes). To address this gap, the present study aims to explore which gender and racial types of pedagogical agents are most preferred by students and result in the best learning experiences and outcomes, contributing to a deeper understanding of the impact of these stereotypes in educational settings.

Expanding on prior research, this study examines the design and implementation of pedagogical agents that vary in both race and gender. Using state-of-the-art 3D modeling, a female human instructor in an instructional video was digitally transformed into six virtual agents, encompassing three racial backgrounds (Asian, Black, and White) and two gender identities (Female and Male). The resulting six agents—Asian Female, Asian Male, Black Female, Black Male, White Female, and White Male—serve as the foundation for analyzing how race and gender differences in virtual instructors may shape students’ learning experiences, including affective and social interactions, as well as cognitive learning outcomes. By incorporating these diverse pedagogical agents, this study builds upon existing work on instructor stereotypes, providing further insight into their influence on students’ perceptions and engagement in digital learning environments.

This study is informed by insights from Social Role Theory and prior research (Eagly & Wood, 2012; Kierstead et al., 1988), which highlight how gender and racial stereotypes influence the perception and evaluation of instructors. By incorporating diverse virtual agents, this study provides a nuanced exploration of whether students’ interactions with these agents also reflect the biases commonly observed in evaluations of human instructors. Furthermore, this approach addresses a critical gap in the existing literature by investigating how virtual agents of varying racial and gender identities may differentially influence the effects of stereotypes in multimedia learning environments, helping us to identify the most preferred and effective pedagogical agent type for enhancing student learning. The broader impact of this research could guide the development of more inclusive and effective multimedia learning environments, tailoring pedagogical agents to meet the diverse needs of students and potentially reducing the negative effects of stereotypes on learning.

Alliance Hypothesis and Intergroup Bias Theory

According to the Alliance Hypothesis, humans are naturally inclined to form alliances with individuals who share similar physical characteristics, such as race and gender, promoting cooperation and collective success (Taylor et al., 1978). This theory argues that racial and gender categorization serves as a visual cue that influences alliance formation, subsequently shaping social behavior and group dynamics (Kurzban et al., 2001; Pietraszewski, 2021; Taylor et al., 1978). Evolutionary theories such as kin selection and reciprocal altruism (Eberhard, 1975; Michod, 1982) provide explanations for the Alliance Hypothesis, which proposes that humans have an innate tendency to align with genetically similar individuals, thereby increasing the likelihood of mutual support and collaboration.

Numerous studies provide support for the Alliance Hypothesis, demonstrating that individuals naturally form groups with clear boundaries based on superficial and context-dependent cues like race, gender, clothing, or speech. For instance, Taylor et al. (1978) showed that participants categorized others based on race and gender while observing a group discussion, making more errors within racial or gender categories than between them, suggesting a tendency to minimize differences within groups and exaggerate differences between them. Similarly, additional research (Cosmides et al., 2003; Pietraszewski, 2009, 2016, 2021) revealed that people can quickly and automatically identify racial and gender categories, often associating these characteristics with alliances.

Pietraszewski’s (2021) study, for example, demonstrated that when participants had no clear team memberships, they defaulted to race as a basis for forming alliances. However, when team affiliations were clear, race categorization was temporarily suppressed, though gender continued to strongly influence alliance formation. Together, these findings emphasize that people tend to minimize within-group differences and exaggerate between-group differences, using race and gender as key alliance markers.

Building on this, Intergroup Bias Theory suggests that these alliance formations can extend into intergroup biases, emphasizing that individuals tend to favor their own group (the in-group) over other groups (the out-group) based on characteristics such as race and gender, particularly when social hierarchies or group statuses are perceived as unstable (Hewstone et al., 2002). For example, Social Dominance Theory explains that men often exhibit higher levels of intergroup bias due to their greater social dominance orientation (SDO). Men, compared to women, are more likely to promote hierarchical distinctions between groups, reflecting stronger gender-based intergroup biases (Sidanius et al., 2000). Similarly, racial biases also emerge, with individuals showing a tendency to favor their racial in-group. Social dominance theorists have found that individuals with high SDO across racial groups tend to support systems that maintain societal hierarchies, which corresponds with heightened racial bias (Hewstone et al., 2002). These findings illustrate how both race and gender influence intergroup bias, shaping social behavior and preferences, especially when group status is perceived as unstable or at risk.

However, previous studies on the Alliance Hypothesis and Intergroup Bias Theory have primarily focused on real human interactions rather than virtual agents, and they have not extensively explored educational contexts. This leaves a gap in research regarding the application of these theories to virtual agents, particularly pedagogical agents in educational settings. Specifically, there is limited understanding of how the tendencies described by these theories influence students’ learning experiences (i.e., affective and social processes) and learning outcomes (i.e., cognitive processes) in multimedia environments taught by pedagogical agents of different racial and gender types. In this study, we intended to extend the scope of the Alliance Hypothesis and Intergroup Bias Theory by investigating whether students exhibit similar tendencies and biases when evaluating instructors who are pedagogical agents with varying racial and gender characteristics. We aimed to explore whether students show more positive emotions and stronger social connections with agents who share their race and/or gender, and if so, whether these tendencies and biases influence their learning outcomes.

Matching Hypothesis

In line with the Alliance Hypothesis and Intergroup Bias Theory, the Matching Hypothesis posits that individuals are inclined to form relationships with others who share similar characteristics, including demographic factors such as gender and race (Berscheid & Reis, 1998; Kalick & Hamilton, 1996; Murstein, 1980). Numerous studies have supported this hypothesis, emphasizing its impact on educational settings. Specifically, when extended to educational contexts, the Matching Hypothesis predicts that students learn better with instructors of the same race and gender as themselves.

Gender-Matching Effect

Research on the gender-matching effect in students’ learning (Makransky et al., 2019; Zhao & Mayer, 2023a) has shown that students tend to perform better and experience stronger social connections when their instructor, including pedagogical agents, matches their gender. For example, a study by Zhao and Mayer (2023a) investigated how the emotional tone (happy vs. sad) and gender (male vs. female) of machine voices influenced learners’ emotions, social connection with the instructor, and learning outcomes in multimedia lessons. The results provided partial evidence for the gender matching effect, demonstrating that female learners responded more positively to and built stronger social connections with female machine voices than male voices. While the gender-matching design of machine voices did not significantly affect learning outcomes, it enhanced learners’ emotional experiences and social connections with instructors of the same gender.

Similarly, in another study by Makransky et al. (2019), middle school students were tasked with learning about laboratory safety in an immersive virtual reality environment. The participants were divided into two groups: one group interacted with a female virtual agent (Marie), and the other with a drone designed to serve as a male role model. The findings revealed that girls performed significantly better with the female agent, achieving higher retention and transfer scores, while boys also performed better when interacting with the male-representing drone. These results suggest that the gender-specific design of pedagogical agents can improve learning outcomes, especially when the agent’s gender aligns with the learner’s gender.

Solanki and Xu (2018) also found that female instructors positively influence female students’ motivation, serving as role models who enhance engagement and foster identity congruence. Specifically, while female students generally underperform compared to male students in STEM courses, the presence of female instructors slightly reduces this performance gap, which helps narrow gender disparities in interest and persistence in STEM fields.

Overall, these studies underscore the potentially important role of the gender-matching effect in educational settings, where gender congruence between students and instructors can enhance both learning experiences (i.e., affective and social processes) and academic performance (i.e., cognitive processes).

Race-Matching Effect

Previous research on the race-matching effect has shown that students, particularly those from minority groups, tend to benefit when their instructors share their racial background (de Albuquerque Rocha et al., 2024; Egalite et al., 2015; Gershenson et al., 2021; Harbatkin, 2021; Joshi & James, 2022). In their book,

In addition, prior research findings have also highlighted the positive impact of race-matching between students and instructors on learning performances. For instance, Egalite et al. (2015) utilized a large dataset from the Florida Department of Education to examine the academic performance of students in grades 3–10. Their findings revealed that students, especially Black and Asian/Pacific Islander students, performed better in both math and reading when their teachers matched their racial or ethnic background. This race congruence was particularly beneficial for minority students in lower-performing schools. Additionally, Harbatkin (2021) investigated the impact of race matching on course grades, demonstrating that Black students, in particular, achieved better academic outcomes when they were taught by teachers of the same race. The effects were especially notable for lower-performing students, suggesting that race matching may help mitigate achievement gaps.

Overall, these studies highlight the positive impact of race-matching between students and instructors, suggesting that a more racially diverse and inclusive teaching workforce could enhance academic outcomes and better meet the needs of students from diverse backgrounds, particularly those from underrepresented groups.

Research Gaps

While previous studies supporting the Matching Hypothesis in educational settings have primarily focused on its impact on cognitive processes measured by students’ learning outcomes, it is equally important to explore how race and gender-matching designs influence students’ learning experiences, indicated by students’ emotional experiences and social connections during learning. To address this gap, the present study includes a supplemental exploratory analysis that investigates not only the effect of gender and race-matching pedagogical agents on students’ learning outcomes but also on their overall learning experiences.

Theory and Predictions

Pedagogical agents, with their flexibility to adapt race, gender, and emotional expressions through facial cues and voice tones, provide a unique opportunity to create more diverse, inclusive, and customizable learning experiences. This flexibility may lead to different learning experiences (i.e., affective and social processes) and learning outcomes (i.e., cognitive processes) when compared to human instructors. Since this study is in its exploratory phase and prior research has shown inconsistent findings regarding the impact of race and gender stereotypes on instructor evaluations and learning outcomes, we have chosen to frame our investigation through research questions rather than specific hypotheses.

These research questions are grounded in the framework of the Cognitive-Affective Model of Learning with Media (Lawson & Mayer, 2021; Mayer, 2022; Moreno & Mayer, 2007; Zhao & Mayer, 2023a, 2023b, 2024). According to this model, meaningful learning with media follows a cascading process that incorporates affective, social, and cognitive processing, ultimately resulting in improved learning outcomes. Specifically, in this study,

Learning Outcomes: Cognitive Processes

Research Question 1

Do students achieve different learning outcomes from a video lesson delivered by pedagogical agents compared to a real human instructor? Specifically, we aimed to assess differences in retention and transfer posttest scores across the seven video lesson conditions (i.e., Asian female agent, Asian male agent, Black female agent, Black male agent, White female agent, White male agent, and the original human instructor). Our goal was to compare the effectiveness of the original human instructor with the various types of pedagogical agents in terms of their impact on students’ learning outcomes. According to the Media Equation Theory, we expected that pedagogical agents could be as effective as, or perhaps even more effective than, human instructors in enhancing students’ learning experiences (i.e., affective and social processes) and learning outcomes (i.e., cognitive processes).

Research Question 2

Are the learners’ learning outcomes affected by the pedagogical agents’ gender and/or race? Specifically, we aimed to explore the race and gender of the pedagogical agents to determine which combination of race and gender is most effective in facilitating student learning.

Learning Experiences: Affective Processes

Research Question 3

Do pedagogical agents elicit different felt emotions in learners than a real human instructor does? Specifically, we aimed to assess differences in students’ ratings of their own positive and negative emotions during learning across the seven video lesson conditions (i.e., Asian female agent, Asian male agent, Black female agent, Black male agent, White female agent, White male agent, and the original human instructor). Our goal was to compare the impact of the original human instructor with that of the various pedagogical agents on students’ emotional experiences during learning.

Research Question 4

Are the learners’ felt emotions affected by pedagogical agents’ gender and race? Specifically, we aimed to investigate the race and gender of the pedagogical agents to determine which combination is most effective in enhancing students’ emotional experiences during learning.

Learning Experiences: Social Processes

Research Question 5

Are the learners’ social connections with the instructor different for pedagogical agents compared with the real human instructor? Our specific goal was to examine differences in students’ ratings of perceived social connections with the instructors across the seven video lesson conditions (i.e., Asian female agent, Asian male agent, Black female agent, Black male agent, White female agent, White male agent, and the original human instructor). We aimed to compare the influence of the original human instructor versus the various pedagogical agents on how socially connected students felt to their instructors. Based on research into racial and gender stereotypes in instructor evaluations, we anticipated that female pedagogical agents may be generally preferred, as female instructors are often perceived as more supportive. Similarly, White pedagogical agents might be favored due to their perceived credibility, which is often rated higher than that of minority instructors.

Research Question 6

Are the learners’ social connections with the instructor affected by the pedagogical agents’ gender and race? Specifically, we aimed to investigate the race and gender of the pedagogical agents to determine which combination best facilitates the development of a strong social connection between students and the agent.

For the exploratory analysis, we further examined the potential impact of gender and race matching on students’ learning experiences (i.e., affective and social processes) and learning outcomes (i.e., cognitive processes) when interacting with pedagogical agents of varying gender and race. Building on the Alliance Hypothesis, Intergroup Bias Theory, and the Matching Hypothesis, we expected students to exhibit a preference for pedagogical agents that share their own race or gender, potentially demonstrating a race and gender matching effect on both learning experiences and outcomes. Additionally, we compared the overall effectiveness of pedagogical agents against a real human instructor by consolidating data from all pedagogical agent groups and comparing it to the data from the original human instructor group.

Method

Participants and Design

The participants were 229 undergraduate students recruited from the psychology subject pool at a university in California, who participated to fulfill a course requirement. Concerning gender, 153 identified as female, 72 identified as male, 3 identified as other gender, and 1 preferred not to say. Concerning race and ethnicity, 60 identified as Asian, 5 identified as Black, 72 identified as Hispanic/Latino, 4 identified as Indian, 49 identified as White, 39 identified as other ethnicity, and 2 preferred not to say. The mean age was 19.25 years (

To ensure the sample size was sufficient for detecting meaningful effects, an a priori power analysis was conducted using G*Power 3.1.9.4. The analysis was based on a one-way ANOVA with seven groups, an alpha level of 0.05, power of 0.80, and a medium effect size (

In a between-subjects design that varied the characteristics of the instructor in a 9-minute video lecture on chemical bonding, participants were randomly assigned to one of seven groups: 33 participants received the original lesson with a real human instructor (original group), 32 viewed the lesson with an Asian female agent, 33 viewed the lesson with an Asian male agent, 33 viewed the lesson with a Black female agent, 32 viewed the lesson with a Black male agent, 34 viewed the lesson with a White female agent, and 32 viewed the lesson with a White male agent. The dependent measures in this study included posttest scores (i.e., retention and transfer scores), ratings of learners’ felt emotions, and ratings of learners’ partnership connections with the instructor.

Materials

The materials included a perceived prior knowledge questionnaire, seven versions of a 9-minute video lesson about chemical bonds (i.e., original lesson, Asian female agent lesson, Asian male agent lesson, Black female agent lesson, Black male agent lesson, White female agent lesson, and White male agent lesson), the Positive and Negative Affect Schedule (PANAS; to measure the students’ felt emotions), the Agent Persona Instrument (API; to assess the social partnership connection of learners with the instructor), and a demographics survey. All research materials were published as a Qualtrics survey and presented on Dell or iMac desktop computers in individual cubicles in a research lab.

Perceived Prior Knowledge Questionnaire

The perceived prior knowledge questionnaire assessed participants’ knowledge of chemistry before taking the video lesson. The first question asked participants to rate how much knowledge they think they have about chemistry (i.e., “Please rate your knowledge of chemistry:”), on a scale of 1 (very little) to 5 (very much). The second question asked participants to check any of nine statements about their experience or understanding of chemistry knowledge that applied to them (i.e., “I have taken a chemistry class before.” or “I know what endothermic means.”). The perceived prior knowledge score was calculated by summing the rating of the first question and the number of items chosen in the second question together. The Cronbach’s alpha showed an acceptable reliability level,
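The scoring rule described above (self-rating plus the number of checked statements) can be sketched in a few lines. This is a minimal illustration, not the authors' scoring script; the function name and the example participant are hypothetical.

```python
from typing import Sequence


def perceived_prior_knowledge(self_rating: int, checked_items: Sequence[bool]) -> int:
    """Sum the 1-5 self-rating and the number of checked statements (out of nine)."""
    if not 1 <= self_rating <= 5:
        raise ValueError("self_rating must be between 1 and 5")
    if len(checked_items) != 9:
        raise ValueError("expected nine checklist statements")
    # True counts as 1 and False as 0, so sum() gives the number of checked items.
    return self_rating + sum(checked_items)


# Hypothetical participant: self-rating of 3, four of the nine statements checked.
example_score = perceived_prior_knowledge(
    3, [True, True, False, True, False, False, True, False, False]
)
```

Under this rule, scores can range from 1 (lowest rating, nothing checked) to 14 (highest rating, all nine statements checked).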

Video Lessons

There were seven versions of a 9-minute video lesson that provided an introduction to three types of chemical bonds and their formation processes. Each of the seven versions of the video involved an instructor standing at a podium next to a series of slides as they lectured. All versions maintained the same script and slides arranged in the same way, ensuring content consistency across the groups.

The original version was excerpted from a UCI Open course lecture on general chemistry available on YouTube (https://youtu.be/6GjYGd-k32U?t=92; Brindley, 2013), spanning from 1:32 to 10:46, with the UCI logo and the professor’s name obscured for this study. The instructor was a White female.

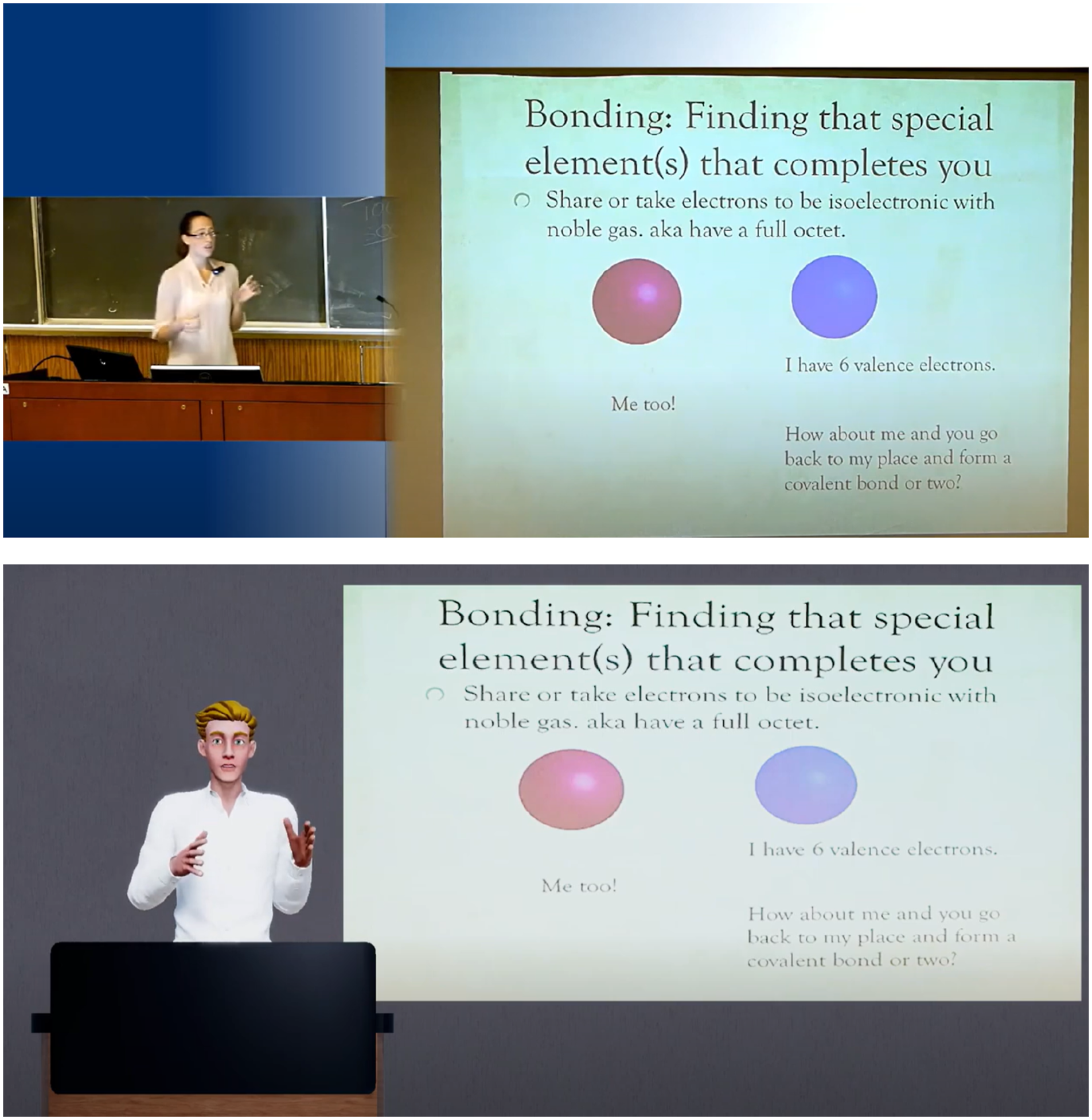

Six modified versions of the original video were created by replacing the human instructor with a 3D animated pedagogical agent that was either Asian female, Asian male, Black female, Black male, White female, or White male. Versions with a female agent used the same voice as in the original version whereas versions with a male agent were given a corresponding synthesized male voice, while the script remained unaltered. Example screenshots of the original video lesson and modified video lesson are shown in Figure 1. Access to the seven versions of the video lectures is available at the following link: https://osf.io/zy2tm/?view_only=9e6e67ec00434d15aa052be8d66ccbc8.

Example screenshots of the original video lesson and a modified video lesson with a pedagogical agent.

The six animated agents were selected based on previous research by Zhao and colleagues (2024), which explored the degree to which people could relate to and recognize the race/ethnicity and gender of animated pedagogical agents intended to represent different races/ethnicities and genders. That research found that participants could identify the agents’ gender (female vs. male) and race (Asian, Black, and White) more accurately than their ethnicity (Indian and Hispanic). Therefore, in this study, we used agents from Zhao and colleagues’ earlier work (2024) that were found to be superior in representing the various racial types (Asian, Black, White) and genders (female and male). Specifically, the six agents selected (Asian female, Asian male, Black female, Black male, White female, and White male) were chosen based on two main criteria: first, the agent supported high accuracy in participants’ identification of its racial and gender type; and second, the agent supported high ratings on scales for human-likeness and likability. In short, within each of the six categories of agents, we selected the one that Zhao and colleagues (2024) found displayed the most recognizable racial and gender characteristics and was rated highest in likability and human-likeness. Example screenshots of the six agents are shown in Figure 2.

Example screenshots of the six agents.

To integrate the pedagogical agents into the video lecture, we processed the original video. For the motion of the pedagogical agents, we extracted the instructor’s movements from the original video and applied them to the pedagogical agents. Specifically, using a deep learning-based video-to-motion extraction method, we obtained the instructor’s motion data and then retargeted it to the pedagogical agents. Additionally, to enhance the realism of the pedagogical agents, we applied eye movements, such as blinking and gaze shifts, and lip-sync animation based on the original lecture’s script. Furthermore, we added motions like pressing a button when the slide image changed to make the pedagogical agents appear more natural. Regarding the voice of the pedagogical agents, we aimed to preserve the instructor’s voice from the original video. To achieve this, we extracted the audio file from the original lecture and used it for female pedagogical agents. For male pedagogical agents, we employed Praat (Boersma, 2011) to convert the audio file to a male voice. We normalized the audio to the recommended −23 loudness units relative to full scale (LUFS) for consistent perceived loudness (EBU–Recommendation, 2011). Moreover, we designed a 3D virtual classroom as the environment for the video lesson. Specifically, we placed a podium in front of the pedagogical agent and positioned a slide screen on the right side of the pedagogical agent.
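The loudness normalization step can be illustrated with a short sketch. It assumes the integrated loudness of a track has already been measured (the measurement itself, which involves K-weighting and gating, is not shown), and it uses the EBU R 128 target of −23 LUFS. The function name and the measured value are hypothetical; this is not the authors' processing pipeline.

```python
from typing import Tuple

TARGET_LUFS = -23.0  # EBU R 128 programme loudness target


def gain_to_target(measured_lufs: float, target_lufs: float = TARGET_LUFS) -> Tuple[float, float]:
    """Return (gain in dB, linear amplitude factor) needed to reach the target loudness."""
    gain_db = target_lufs - measured_lufs
    # A change of 20 dB corresponds to a factor of 10 in amplitude.
    linear_factor = 10 ** (gain_db / 20)
    return gain_db, linear_factor


# Hypothetical track measured at -17 LUFS: it must be attenuated by 6 dB.
gain_db, linear_factor = gain_to_target(-17.0)
```

Multiplying every audio sample by `linear_factor` would bring the hypothetical track to the target level, so that the female-voice and male-voice versions are matched in perceived loudness.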

Positive and Negative Affect Schedule (PANAS)

To measure the positive and negative emotions experienced by students, the study utilized the Positive and Negative Affect Schedule (PANAS), a well-established instrument in psychology for measuring individuals’ emotional states and moods (Plass et al., 2020; Watson et al., 1988). The PANAS comprises 20 emotion-related words that express either positive (e.g., “excited”;

Agent Persona Instrument (API)

The Agent Persona Instrument (API) evaluated participants’ perceptions of their social connection with the instructor, based on a previous study by Ryu and Baylor (2005). This survey aimed to explore whether learners could build a partnership connection (i.e., social connection) with the instructor (i.e., the human instructor vs. the various 3D agents) and which types of instructors were most effective in fostering a stronger partnership connection. The API survey comprised 23 items, categorized into four sub-scales. The first sub-scale encompassed ten items that evaluated the instructor’s ability to facilitate learning (e.g., “The instructor helps me concentrate on the presentation.”;

Posttest

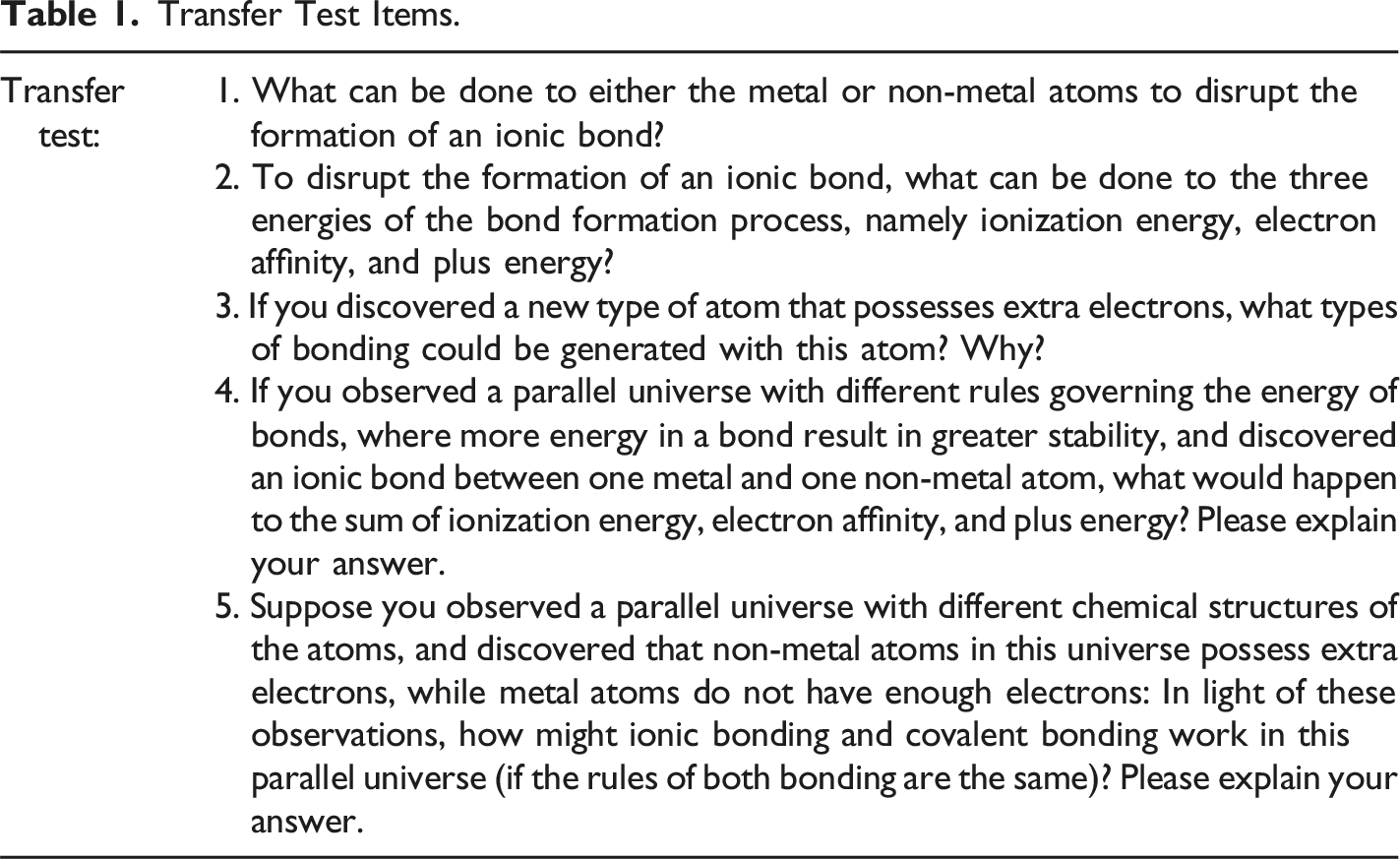

The posttest included both a retention test and a transfer test, with all questions and grading rubrics accessible in the OSF repository: https://osf.io/zy2tm/?view_only=9e6e67ec00434d15aa052be8d66ccbc8. The retention test consisted of 10 multiple-choice questions designed to evaluate students’ ability to recall the conceptual knowledge presented in the lesson, aligning with the cognitive theory of multimedia learning’s selection process (Mayer, 2022). In the retention test, participants selected the correct answer from four options per question, each reflecting the fundamental concepts of chemical bonding discussed in the video lesson. Two example questions from the assessment are “What type of bonding is ionic bonding?” and “What is ionization energy?” The first question is followed by four multiple-choice options: (A) Metal + Metal, (B) Non-Metal + Non-Metal, (C) Metal + Non-Metal, and (D) None of the above. The correct answer is (C), Metal + Non-Metal, which accurately describes the nature of ionic bonding. No time limit was imposed on the retention test. Participants earned one point for each correct answer, yielding a maximum possible score of 10. Cronbach’s alpha was 0.67.
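As an illustration of how retention items are scored and how a reliability coefficient such as Cronbach's alpha is obtained from item-level scores, consider the following minimal sketch. The helper names and the tiny data set are hypothetical and serve only to show the computation; they are not the study's data or analysis code.

```python
from statistics import variance


def score_retention(responses, answer_key):
    """Score each multiple-choice response as 1 (correct) or 0 (incorrect)."""
    return [int(r == k) for r, k in zip(responses, answer_key)]


def cronbach_alpha(item_scores):
    """Cronbach's alpha for rows of participants and columns of item scores."""
    k = len(item_scores[0])  # number of items
    # Variance of each item across participants.
    item_vars = [variance([row[i] for row in item_scores]) for i in range(k)]
    # Variance of the participants' total scores.
    total_var = variance([sum(row) for row in item_scores])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical scores for four participants on three items.
toy_scores = [[1, 1, 0], [1, 0, 0], [0, 0, 0], [1, 1, 1]]
toy_alpha = cronbach_alpha(toy_scores)
```

Alpha rises toward 1 as items covary more strongly, which is why a 10-item test of fairly heterogeneous concepts can yield a moderate value.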

Transfer Test Items.

Demographics Questionnaire

Finally, the demographic questionnaire requested participants to indicate their age, gender, and race/ethnicity (i.e., White/Caucasian, Black/African American, Asian, Hispanic/Latino, Indian, and Other).

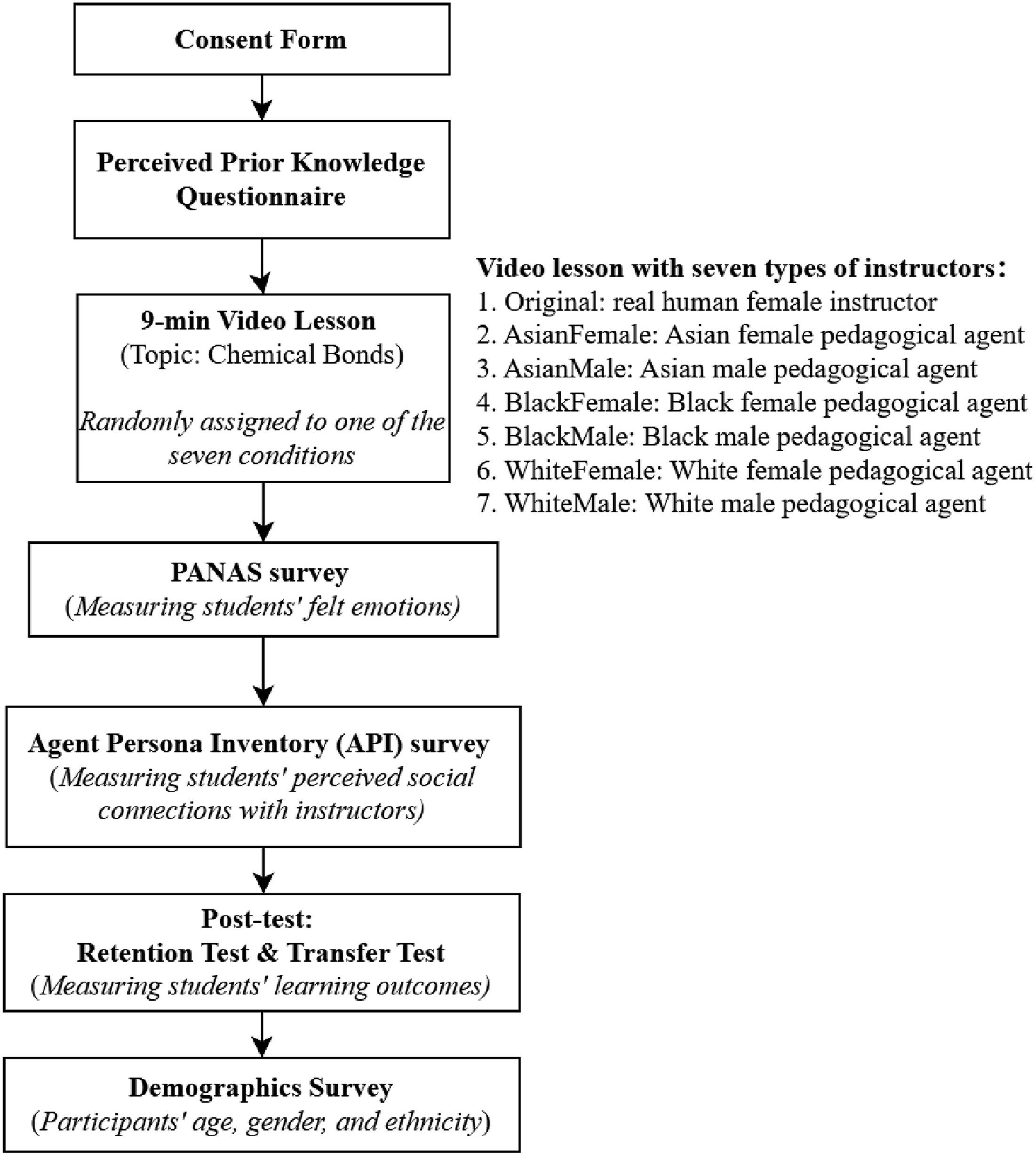

Procedure

Participants were recruited through SONA, a computer-based automated participant scheduling system, with up to four participants scheduled for each session. Each participant was randomly assigned to either the original video lesson or one of the six modified versions. The study took place in a psychology laboratory equipped with five Dell or iMac desktop computers, each stationed within a visually isolated cubicle. The experiment was conducted using Qualtrics to present all materials on the computer screen.

Upon their arrival, participants were directed to a computer displaying the initial Qualtrics survey page, where they signed a consent form outlining the study’s general objectives. Following this, they completed the perceived prior knowledge questionnaire. Then, participants were instructed to attentively watch one of the seven versions of the 9-minute video lesson about chemical bonds corresponding to their treatment group, with instructions that it would be followed by a test. Depending on their assigned group, they viewed either the original video lesson or one of six versions of modified video lessons. These video lessons played without the option to stop or rewind. After watching the video, participants proceeded to fill out the PANAS and API surveys, followed by the posttest. This posttest consisted of 10 multiple-choice questions assessing retention, with no time limit, and five short-answer questions evaluating transfer knowledge, with a three-minute time limit per question. Finally, participants completed a short demographic questionnaire, read a debriefing form describing the study, and were thanked for their contribution. The procedure of this study is illustrated in Figure 3.

Experiment procedure.

We received IRB approval and followed guidelines for research with human subjects.

Results

Did the Groups Differ on Basic Characteristics?

As the initial analysis of participants’ basic characteristics, we found no significant differences among the seven groups in terms of age,

Research Question 1: Did Students Achieve Different Learning Outcomes from a Video Lesson Delivered by Pedagogical Agents Compared to a Real Human Instructor?

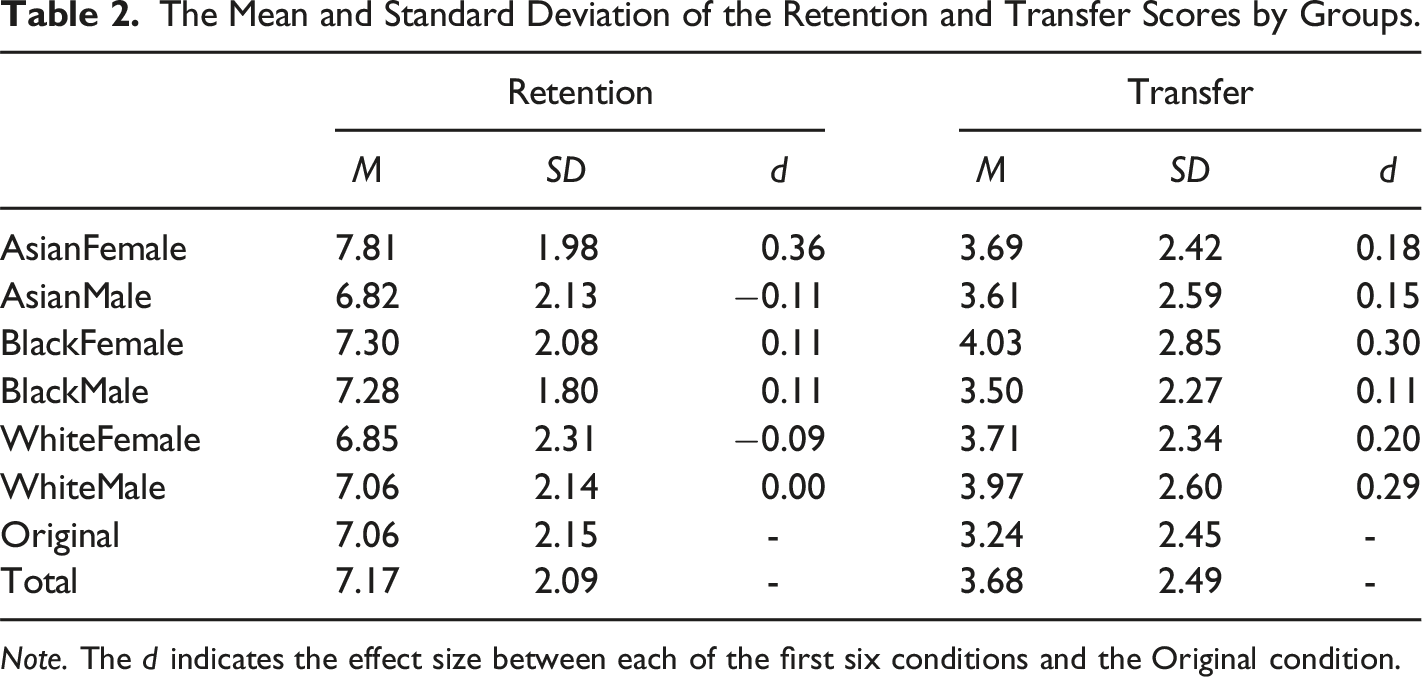

The Mean and Standard Deviation of the Retention and Transfer Scores by Groups.

The analysis revealed no significant differences in retention test scores among the groups,

The findings indicate that video lessons delivered by pedagogical agents did not produce significantly different learning outcomes in terms of retention and transfer compared to lessons delivered by a real human instructor. These results align with the Media Equation Hypothesis, which suggests that learners treat computer-generated characters as they would human instructors, resulting in equivalent educational impacts. While certain groups, such as the Asian female agent group for retention and the Black female agent group for transfer, exhibited slightly higher average scores than the original lesson group, these differences were not statistically significant. This consistency across conditions supports the view that pedagogical agents can serve as effective alternatives to human instructors, at least for the learning outcomes assessed in this context. Moreover, the negligible effect sizes are consistent with this equivalence, suggesting that pedagogical agents could provide scalable instructional solutions without compromising learning efficacy.
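For readers less familiar with the test behind this comparison, a one-way ANOVA partitions score variability into between-group and within-group components. The sketch below computes the F statistic and eta squared from raw scores. It is a minimal illustration with made-up numbers, not the study's data, and the function name is hypothetical.

```python
from statistics import mean


def one_way_anova(groups):
    """Return (F, df_between, df_within, eta_squared) for a list of score lists."""
    all_scores = [x for g in groups for x in g]
    grand_mean = mean(all_scores)
    # Variability of group means around the grand mean, weighted by group size.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variability of individual scores around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    eta_squared = ss_between / (ss_between + ss_within)
    return f_stat, df_between, df_within, eta_squared


# Made-up scores for three small groups (the study itself had seven groups).
f_stat, df_b, df_w, eta_sq = one_way_anova([[1, 2, 3], [2, 3, 4], [3, 4, 5]])
```

With seven groups and 229 participants, the degrees of freedom would be 6 and 222, assuming no exclusions. Obtaining a p-value additionally requires the F distribution (for example, via a statistics package), which is not shown here.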

Research Question 2: Are the Learners’ Learning Outcomes Affected by the Pedagogical Agents’ Gender and/or Race?

To investigate which pedagogical agent was the most effective in facilitating learning for students, we concentrated on data from the six groups exposed to the modified video lessons. We applied 2 (gender: female vs. male) x 3 (race: Asian, Black, and White) ANOVAs to explore the main effects and interactions of the agents’ gender and racial types on students’ outcomes in both retention and transfer tests.

The analysis revealed no significant main effects for the gender,

In conclusion, these findings demonstrated that the learning performance of students, in terms of retention and transfer, was not influenced by agents of different genders or races, nor by the interaction between these factors. This suggests that the effectiveness of pedagogical agents in facilitating learning is consistent across diverse gender and racial representations. These results underscore the potential for using diverse pedagogical agents in educational settings without concerns about adverse impacts on learning outcomes, thereby supporting inclusive representation in instructional design.

Research Question 3: Did Pedagogical Agents Lead to Different Felt Emotions of the Learners Compared with a Real Human Instructor?

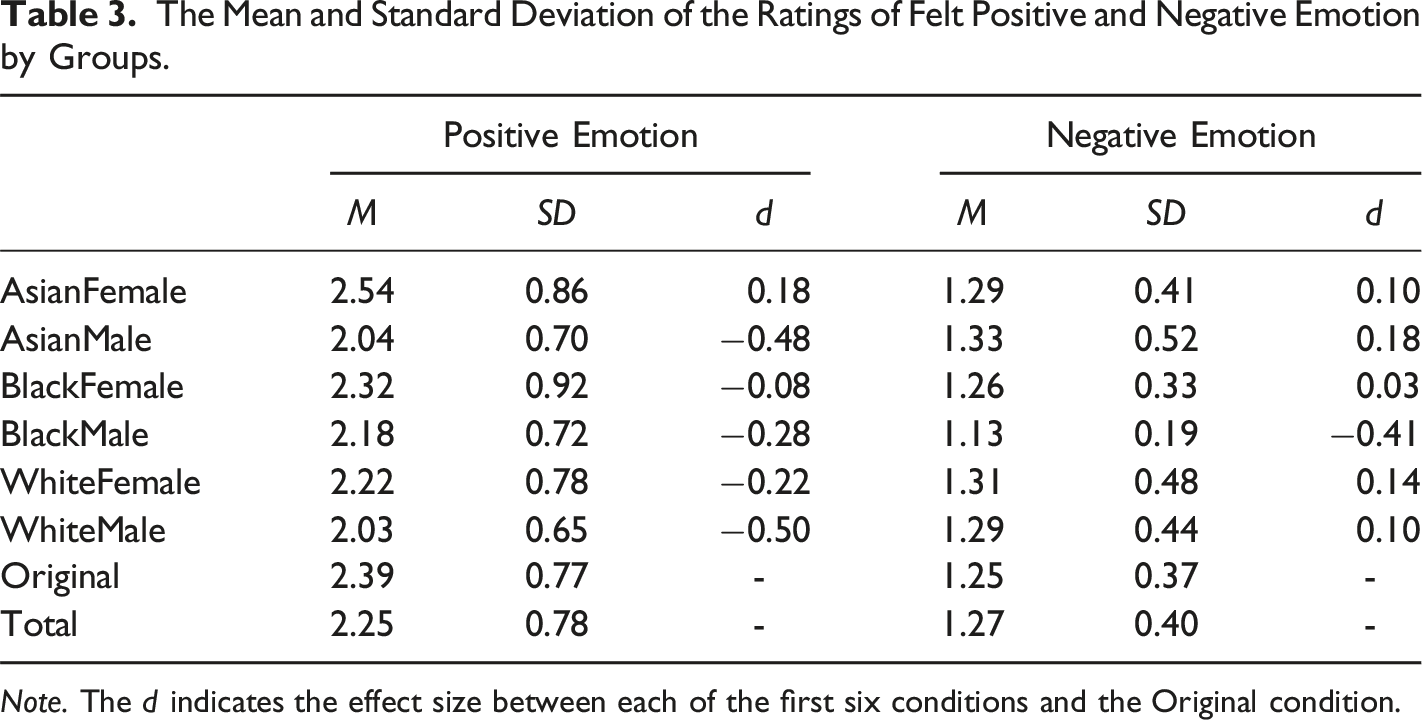

The Mean and Standard Deviation of the Ratings of Felt Positive and Negative Emotion by Groups.

The analysis indicated no significant differences in the ratings of positive emotions across the groups,

In summary, the findings demonstrate that the presence of pedagogical agents did not lead to a significantly different effect on the learners’ emotional experiences compared to a real human instructor. This outcome aligns with the Media Equation Hypothesis, suggesting that participants respond similarly to video lessons irrespective of whether they are taught by pedagogical agents or human instructors. Despite slight variations in mean ratings across conditions, these differences were not statistically significant, suggesting that the emotional impact of the instructional medium remains comparable. These findings provide further evidence that pedagogical agents can effectively replicate the emotional feelings during learning typically associated with human instructors, supporting their viability as scalable, emotionally neutral alternatives in educational settings.

Research Question 4: Are the Learners’ Felt Emotions Affected by Pedagogical Agents’ Gender and Race?

We employed 2 (Gender: Female vs. Male) x 3 (Race: Asian, Black, White) ANOVAs to explore the impact of the gender and race of pedagogical agents on learners’ felt emotions. The analysis for positive emotion ratings revealed a significant main effect for gender,

In summary, the findings indicate that while the variations in gender and race of the pedagogical agents did not significantly influence the students’ negative emotions, female pedagogical agents, regardless of their race, led to more positive felt emotions for learners, compared with male pedagogical agents. This pattern may reflect the influence of gender stereotypes, as Social Role Theory suggests that women are often perceived as more nurturing and emotionally expressive, traits that could foster more positive emotional responses from learners (Eagly & Wood, 2012; Kierstead et al., 1988). However, while gender stereotypes appear to influence affective experiences, the results suggest that racial biases may be less pronounced in this particular context, which contrasts with findings from prior research (Basow et al., 2013; Reid, 2010). The absence of significant effects for race and the interaction between gender and race underscores the intricate interplay between race and gender in shaping learners’ emotional responses.

Research Question 5: Are the Learners’ Social Connections with the Instructor Different for Pedagogical Agents Compared with the Real Human Instructor?

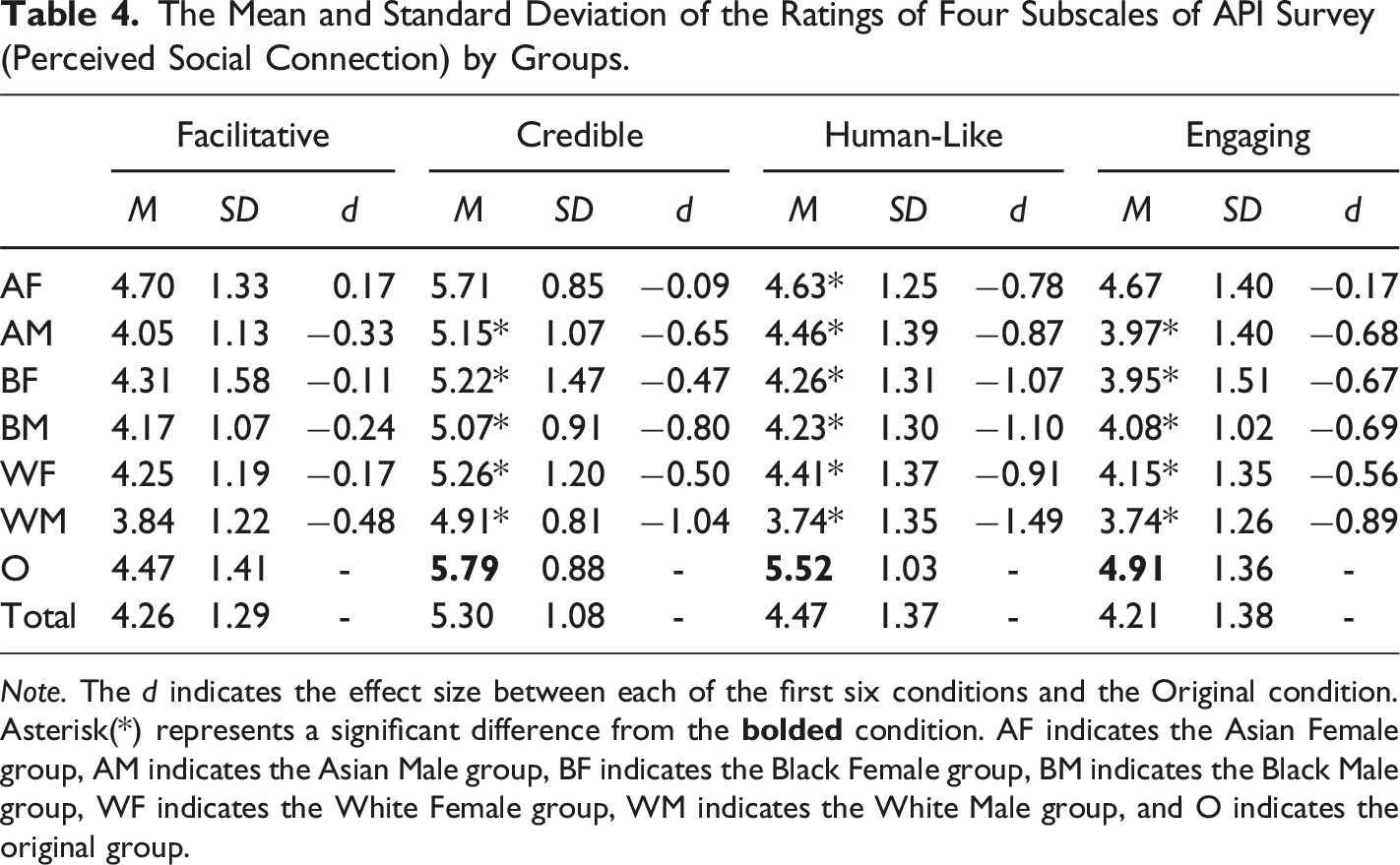

The Mean and Standard Deviation of the Ratings of Four Subscales of API Survey (Perceived Social Connection) by Groups.

Although our analysis did not reveal any significant differences in learners’ perceptions of how well the video lessons facilitated learning across the various groups,

Significant differences were found in the ratings of human-likeness among the seven groups,

For the ratings of how engaging the instructor was, there was a significant difference among the groups,

In conclusion, while students perceived the pedagogical agents in the modified lessons as equally capable of facilitating learning as the real human instructor in the original lesson—consistent with the Media Equation Hypothesis—they consistently rated the human instructor higher in credibility, human-likeness, and engagement. These findings suggest that learners still tend to build a stronger social connection with a human instructor than with a virtual instructor. Notably, the Asian female agent received the highest ratings for credibility, human-likeness, and engagement among all the pedagogical agent groups. This finding suggests that the perceived social connection with the Asian female agent was less negatively impacted compared to other agents, which may be attributed to the interplay of cultural and gender-based stereotypes (Eagly & Wood, 2012; Kierstead et al., 1988).

Research Question 6: Are the Learners’ Social Connections with the Instructor Affected by the Pedagogical Agents’ Gender and Race?

To explore the effect of pedagogical agents’ gender and race on learners’ perceptions of social connection with their instructor, we restricted our analysis to data from the six modified video lesson groups. We applied 2 (Gender: Female vs. Male) x 3 (Race: Asian, Black, White) ANOVAs to determine the interactive effect of different gender and racial types of pedagogical agents on the learners’ perceived social connections. These social connection ratings were assessed by four subscales of the Agent Persona Instrument (API), namely facilitating learning, credibility, human-likeness, and being engaging.

The analysis revealed significant main effects for the influence of the pedagogical agents’ gender on ratings of facilitating learning,

Moreover, for the ratings of being engaging, although there is a marginally significant main effect for gender,

In summary, the findings suggest that learners’ social connections with their instructors were affected by varying the gender of the instructor, with female instructors rated as more facilitative of learning, more credible, and more engaging than their male counterparts. This aligns with Social Role Theory (Eagly & Wood, 2012), which suggests that societal expectations cast women as nurturing and approachable, traits that enhance evaluations of teaching effectiveness (Kierstead et al., 1988). However, learners’ social connections with their instructors were not affected by the varying racial identities of pedagogical agents, unlike in previous studies that highlighted racial biases in instructor evaluations (Basow et al., 2013; Reid, 2010).

Exploratory Analysis 1: Was There a Gender-Matching Effect?

In this exploratory analysis, we aimed to investigate whether there was a gender-matching effect on various dependent variables. To this end, we excluded three participants who identified their gender as “Other” or preferred not to disclose their gender and then conducted a 2 (student gender: female vs. male) x 2 (pedagogical agent gender: female vs. male) ANOVA to explore the interaction effects on students’ felt emotion ratings, social connection ratings, and learning outcome scores. We viewed an interaction as indicating the potential of a gender-matching effect.
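The logic of reading the 2 x 2 interaction as a matching effect can be made concrete with a descriptive contrast on cell means: the average of the matched cells minus the average of the mismatched cells. The sketch below is an illustration only, with hypothetical cell means and a hypothetical function name; it is not a substitute for the ANOVA interaction test.

```python
def matching_contrast(cell_means):
    """cell_means[(student_gender, agent_gender)] -> mean rating.

    Returns the mean of matched cells minus the mean of mismatched cells;
    a positive value favors same-gender pairings.
    """
    matched = cell_means[("female", "female")] + cell_means[("male", "male")]
    mismatched = cell_means[("female", "male")] + cell_means[("male", "female")]
    return (matched - mismatched) / 2


# Hypothetical pattern 1: every student rates female agents higher (main effect only).
main_effect_only = {
    ("female", "female"): 4.0, ("male", "female"): 4.0,
    ("female", "male"): 3.0, ("male", "male"): 3.0,
}
# Hypothetical pattern 2: students rate agents of their own gender higher.
matching_pattern = {
    ("female", "female"): 4.0, ("male", "male"): 4.0,
    ("female", "male"): 3.0, ("male", "female"): 3.0,
}
```

The contrast is zero for the first pattern and positive for the second, which shows why a general preference for female agents is distinct from a gender-matching effect.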

For ratings of felt emotion, we did not find any significant interaction effects between instructors’ gender and students’ gender on students’ felt positive emotion,

In conclusion, our findings did not indicate evidence for a gender-matching effect on students’ learning outcomes, emotional responses, and perceived social connections with instructors. Specifically, students did not respond differently when they received a lesson presented by a pedagogical agent of the same versus different gender as themselves. The lack of significant interaction effects suggests that gender congruence between students and pedagogical agents does not play a meaningful role in shaping learning experiences or outcomes in this context. This finding underscores the robustness of pedagogical agents in supporting diverse learner populations without requiring gender alignment to achieve similar educational outcomes.

Exploratory Analysis 2: Was There a Race-Matching Effect?

In this exploratory analysis, we aimed to explore whether there was a race-matching effect on various dependent variables. Due to the insufficient recruitment of Black participants (

Asian Versus Non-Asian Students

For comparing the race-matching effect between Asian and Non-Asian students, we did not find a significant interaction effect between students’ race and pedagogical agents’ race on students’ felt positive emotion,

However, we did find one marginally significant interaction between students’ race and pedagogical agents’ race on the ratings of being credible,

In summary, although we did not find a race-matching effect between Asian and Non-Asian students on felt emotion, on ratings of facilitating learning, human-likeness, and engagement, or on learning outcomes, the results partially revealed the presence of a race-matching effect among Asian participants on credibility ratings, reflecting a preference for pedagogical agents that share their racial type.

White Versus Non-White Students

For comparing the race-matching effect between White and Non-White students, there was no significant interaction effect between students’ race and pedagogical agents’ race on students’ felt negative emotion,

However, we identified a significant interaction between the students’ racial backgrounds and the racial types of the pedagogical agents affecting the ratings of felt positive emotion,

Moreover, there was also a significant interaction between the students’ racial backgrounds and the racial types of the pedagogical agents affecting the ratings of engagement,

In summary, these findings indicate that the race of pedagogical agents differentially impacted the positive emotions experienced by Non-White and White students. Although White students rated Black pedagogical agents as more engaging, contradicting the race-matching hypothesis, they generally reported feeling most positive when taking a lesson with White pedagogical agents.

Overall, the findings for both White versus Non-White and Asian versus Non-Asian students offer some evidence that the race of pedagogical agents differentially impacts the positive emotions experienced by students from different racial backgrounds. Notably, there is evidence suggesting that students tend to prefer pedagogical agents who share their racial identity, which partially supports the Matching Hypothesis and Intergroup Bias Theory.

Exploratory Analysis 3: Pedagogical Agents Versus Human Instructor

Finally, we consolidated the data from all six pedagogical agent groups into a single category labeled

The results indicated no significant differences between the pedagogical agents’ groups and the original lesson group in terms of students’ felt positive emotion,

However, in terms of perceived social connections with instructors, although there was no significant difference in the ratings of facilitative learning between the two groups,

Overall, the results indicate that pedagogical agents were generally perceived as less credible, less human-like, and less engaging than the real human instructor. This suggests that, inconsistent with the Media Equation Hypothesis, students tend to form stronger social connections with real human instructors than with pedagogical agents, although this preference did not appear to impact their felt emotions or learning outcomes.

Discussion

Empirical Implications

The findings of this study offer insights into the impact of pedagogical agents with varying race and gender types on students’ learning experiences (i.e., affective and social processes) and learning outcomes (cognitive processes), in comparison to a real human instructor. These insights are framed within the Cognitive-Affective Model of E-learning, addressing cognitive, affective, and social processing.

Learning Outcomes: Cognitive Processing

First, regarding the cognitive processing and learning outcomes, the results demonstrated no significant differences in retention and transfer posttest scores between students who learned from pedagogical agents and those who learned from a real human instructor. This finding is consistent with the Media Equation Hypothesis (Reeves & Nass, 1996), suggesting that pedagogical agents can facilitate learning as effectively as real instructors. However, the negligible differences in learning outcomes align with previous research (e.g., Zhao & Mayer, 2023a) suggesting that computer-generated instructors (i.e., machine voice narrators) may not inherently outperform human instructors in promoting cognitive learning. This underscores the need for further exploration of pedagogical agent design to achieve meaningful improvements in cognitive processing.

Furthermore, contrary to the expectations derived from Social Role Theory (Eagly & Wood, 2012), there was no significant impact of the gender or race of the pedagogical agents on students’ retention and transfer learning outcomes, indicating that the agents’ race and gender did not influence students’ cognitive processes. These findings do not align with the previous studies that suggest gender and race stereotypes in educational settings (Basow et al., 2013; Campbell, 2023; Kierstead et al., 1988; Reid, 2010; Renström et al., 2021). For example, Basow et al. (2013) found that Black and female instructors consistently received lower evaluations compared to their White and male counterparts, which in turn impacted students’ academic performance, as students performed better when their instructors aligned with societal expectations. Such discrepancies may stem from the controlled, brief nature of the lessons in this study, suggesting that real-world settings or longer exposure to pedagogical agents might yield different results.

Learning Experiences: Affective Processes

Second, in terms of affective processing, the study found no significant differences in positive or negative emotions between students who learned from various types of pedagogical agents and those taught by a real human instructor. This finding is in line with the Media Equation Hypothesis (Reeves & Nass, 1996). Furthermore, female pedagogical agents elicited more positive emotions compared to male agents, regardless of race. This finding aligns with Social Role Theory (Eagly & Wood, 2012) as well as previous research on gender stereotypes (e.g., Anderson, 2010; Basow et al., 2013; Renström et al., 2021) suggesting that female instructors are often perceived as more nurturing, leading to more positive emotional experiences for students. However, race did not significantly influence emotional responses, nor did the interaction between gender and race, indicating that the affective processing during multimedia learning was largely unaffected by these factors. The absence of significant racial effects on students’ felt emotions might highlight the diminished salience of race in digital education environments compared to face-to-face education settings. Notably, there was also no significant effect on negative emotions, likely due to the emotional neutrality of the video lessons.

Learning Experiences: Social Processes

Third, in terms of social processing, while both pedagogical agents and human instructors were rated similarly for facilitating learning, the real human instructor was perceived as more credible, human-like, and engaging. This finding challenges the Media Equation Hypothesis by showing that real human instructors may still have an advantage in fostering stronger social connections with students, which indicates a gap in the design of pedagogical agents to match the social rapport of human instructors. Among the different race and gender types of pedagogical agents, female agents received higher ratings for facilitating learning, credibility, and engagement than male agents, indicating that students tended to build stronger social connections with female agents. This result echoes prior work on gender stereotypes favoring women in teaching roles (Renström et al., 2021), suggesting that gender stereotypes may influence social dynamics in learning, with female instructors perceived as more approachable and engaging. However, race stereotypes were not supported, as there was no significant main effect of agent race or interaction between agents’ gender and race. Overall, these findings suggest that gender, more than race, shapes social perceptions in virtual learning environments.

Exploratory Insights on Matching Effects

Additionally, exploratory analyses examined the potential effects of gender and race-matching, alongside a comparison of the overall effectiveness of pedagogical agents versus a human instructor. For the exploratory analysis investigating gender-matching effects, the results revealed no significant interactions between students’ gender and the gender of the pedagogical agents across emotional responses, social connections, or learning outcomes. This suggests that gender congruence did not significantly impact students’ experiences or performance, contrasting with prior research highlighting the gender-matching effect in educational settings (e.g., Makransky et al., 2019; Zhao & Mayer, 2023a). A possible explanation for this discrepancy is that the female pedagogical agents in this study may have been designed with features that made them appear more authentic or approachable compared to the male agents. These design characteristics could have led students to prefer the female agents, irrespective of gender congruence, thereby diminishing any potential gender-matching effect.

The exploratory analysis of the race-matching effect provided limited evidence. The results indicate that the race of pedagogical agents had a differential impact on the positive emotions experienced by Non-White and White students. Notably, White students generally reported feeling most positive when taught by White pedagogical agents, which aligns with research on intergroup bias (Taylor et al., 1978). However, they found Black agents to be more engaging, which contradicts the Matching Hypothesis (Kalick & Hamilton, 1996), while Asian students rated Black agents as less credible, which aligns with previous findings on racial stereotypes of minority groups (Basow et al., 2013). Overall, the findings did not consistently support the Matching Hypothesis, underscoring the complexity of the race-matching effect. Specifically, while some students may naturally gravitate toward instructors of similar racial backgrounds, others appear to be more influenced by stereotypes or contextual cues. A potential reason for the limited evidence supporting the race-matching effect could be the straightforward nature of the survey questions used to rate instructors, which may have prompted participants to give responses shaped by social desirability or cultural norms rather than genuine evaluations of the pedagogical agents. These findings highlight the need for further research to untangle the interplay of race, gender, and intergroup biases in shaping learners’ experiences and outcomes with pedagogical agents.

Theoretical Implications

From a theoretical perspective, this study makes contributions by extending the application of key frameworks such as the Media Equation Theory (Reeves & Nass, 1996), the Social Role Theory (Eagly & Wood, 2012), the Alliance Hypothesis (Taylor et al., 1978), and the Matching Hypothesis (Kalick & Hamilton, 1996) to pedagogical agents.

The Media Equation Theory posits that learners interact with virtual agents in ways similar to how they interact with real humans. Our findings partly support this theory, showing that pedagogical agents were as effective as human instructors in terms of cognitive processes (i.e., learning outcomes) and emotional processes (i.e., felt emotions). However, the real human instructor was perceived as more credible and engaging, suggesting that certain aspects of social connection may still be better facilitated by human instructors. This challenges the assumption that virtual agents can fully replicate the social rapport built by real human instructors, emphasizing the need for further research into how pedagogical agents can be optimized to enhance social connections.

In addition, Social Role Theory (Eagly & Wood, 2012) and previous studies of race stereotypes posit that students perceive and evaluate their instructors based on gender and race (Basow et al., 2013; Eagly & Wood, 2012; Kierstead et al., 1988; Reid, 2010). Specifically, female instructors are often perceived as more nurturing and emotionally supportive than male instructors, while male instructors are associated with competence and authority (Eagly & Wood, 2012; Kierstead et al., 1988; Renström et al., 2021). Similarly, racial stereotypes can influence perceptions of credibility and competence, with minority instructors, such as Black instructors, often evaluated less favorably than their White counterparts (Basow et al., 2013; Campbell, 2023; Reid, 2010). These biases can extend to pedagogical agents, as seen in this study, where female agents were rated more favorably than male agents in terms of positive emotions and perceived social connections, reflecting gendered expectations. However, the lack of consistent race effects suggests that racial stereotypes may manifest differently in virtual settings compared to face-to-face interactions, potentially moderated by the digital nature of pedagogical agents. This underscores the importance of designing agents that challenge rather than reinforce societal biases, creating more inclusive and equitable learning environments.

Furthermore, the Alliance Hypothesis (Taylor et al., 1978) and Matching Hypothesis (Kalick & Hamilton, 1996) suggest that learners are likely to favor instructors who share their racial or gender characteristics, raising critical questions about whether these preferences are equally applicable to virtual instructors. Our findings challenge these assumptions: evidence for race- and gender-matching effects was limited, which indicates that pedagogical agents do not consistently benefit from shared characteristics in the way human instructors might. Notably, some findings (e.g., Asian students rating Black agents as less credible) align with the influence of racial stereotypes, implying that implicit biases may also shape learner preferences. This underscores the need for a nuanced interpretation of intergroup dynamics, in which contextual factors, such as the nature of learning tasks and the design of survey instruments, might affect these effects.

Overall, this study not only sheds light on the complexities of applying these theories to pedagogical agents with different racial and gender characteristics but also emphasizes the importance of designing more effective and inclusive educational technologies. The findings related to the Media Equation Theory provide a foundation for understanding the equivalence of affective processes (i.e., felt emotions) and cognitive processes (i.e., learning outcomes) between human and virtual instructors. However, the pattern of students’ evaluations across the various pedagogical agents suggests that learners’ social and emotional responses to agents might be shaped by implicit gender biases. Meanwhile, the limited support for the Alliance Hypothesis and Matching Hypothesis points to the need for a more nuanced understanding of how intergroup dynamics operate in virtual learning environments. These findings underscore the importance of designing pedagogical agents that account for societal biases and stereotypes to foster a more inclusive and equitable learning environment, thereby better supporting students’ affective, social, and cognitive processing during learning. Future research should focus on enhancing the human-like qualities of pedagogical agents and exploring how to mitigate the influence of stereotypes, ultimately bridging the gap between theoretical predictions and practical applications.

Practical Implications

The findings suggest that while pedagogical agents can deliver learning outcomes similar to those of real human instructors, there are still key areas where human instructors outperform virtual agents, especially in fostering social connections. This highlights the need for further refinement in the design of pedagogical agents to enhance their credibility, human-likeness, and engagement, thereby bridging the gap between virtual agents and human instructors.

Additionally, the study reveals that students respond more positively to female pedagogical agents, which indicates the importance of considering gender dynamics when designing virtual instructors in multimedia lessons. Based on the results of the study, we recommend providing a supportive, warm, and emotionally expressive female pedagogical agent in a lesson for a better learning experience (i.e., enhanced affective and social processing) and learning outcomes (i.e., enhanced cognitive processing) for students.

Furthermore, while race-matching effects were not consistently found, the findings still suggest that pedagogical agents should be designed with cultural sensitivity. This approach can prevent the reinforcement of stereotypes and support the development of a more inclusive learning environment. The design process should account for diverse cultural representations while avoiding oversimplified or stereotypical portrayals, ensuring that agents resonate positively with students from varied backgrounds.

Overall, the findings of this study offer valuable guidance for educators and developers, highlighting the importance of optimizing the design of pedagogical agents to foster positive emotions and stronger social connections with students. Additionally, the study emphasizes the need to address potential biases and stereotypes related to instructors’ race and gender, ensuring that these agents foster more inclusive learning environments that meet the needs of students from diverse backgrounds. By doing so, we can create more effective educational tools that better serve a broad range of learners.

Limitations and Future Directions

Due to the limited number of Black students recruited in this study, the analysis of the Matching Hypothesis was restricted to comparisons between Asian and White students and did not include Black students. This limitation affects the generalizability of the findings. Future studies should aim to recruit comparable numbers of participants from each racial group to better understand how race-matching effects manifest across various racial categories, including Black students.

Moreover, although the power analyses suggested a relatively sufficient sample size for this study, the smaller sample size within each experimental group might still limit the statistical power to detect subtle differences between groups. This could potentially reduce the credibility of the findings. Additionally, social desirability bias and individual differences in aesthetic preferences may have influenced participants’ questionnaire responses, potentially affecting the accuracy and reliability of the data. To mitigate these limitations, future studies could expand the sample size and incorporate well-designed qualitative methods, such as participant interviews before and after the experiment, to provide deeper insights and strengthen the interpretation of the results.

In addition, the use of the PANAS scale to measure students’ felt emotions during learning may not have been entirely suitable. The PANAS is better suited to assessing general, everyday emotions than emotions experienced during the learning process. Therefore, the lack of significant effects for positive and negative emotions in this study might be attributed to the inadequacy of the scale for this context. Future research should consider using other standardized emotion measurement tools that were specifically designed for educational settings to more accurately gauge emotional responses during learning.

Moreover, this study employed only one style of virtual pedagogical agent, raising concerns about the generalizability of the findings to other styles of agents. The results may be specific to the particular agent design used in this study and may not apply broadly to all pedagogical agents. To enhance the generalizability of future research, it would be valuable to explore a wider variety of agent designs, such as less realistic or more playful, cartoonish avatars. This would help determine whether different styles of agents produce varying effects on students’ learning experiences and outcomes (in other words, their affective, social, and cognitive processing).

Another limitation is that the posttest in this study was administered immediately following the lesson without evaluating long-term learning performance. Future research should incorporate delayed posttests, conducted days or weeks after the initial lesson, to gain deeper insights into the lasting effects of pedagogical agents on students’ knowledge retention and transfer.

Finally, the lesson in this study was relatively short (i.e., 9 minutes) and focused on a fundamental chemistry topic (i.e., the formation of chemical bonds). The design and effectiveness of a pedagogical agent can be influenced by the type of knowledge content, and brief exposure to the learning materials may not be sufficient to produce a significant impact on students. The brevity of the video and its narrow focus therefore raise concerns about the generalizability of the findings to more formal lectures that cover a broader range of subjects. Future research should explore longer, more complex lessons that better reflect real classroom experiences. Additionally, broadening the scope of the learning materials to include other STEM topics, such as mathematics or biology, as well as non-STEM topics, such as history or social sciences, could offer a more comprehensive understanding of whether and how different types of pedagogical agents uniquely influence learning across a variety of content areas.

Conclusion

In conclusion, this study investigated whether pedagogical agents are as effective as, or more effective than, human instructors for students and whether students show a preference for pedagogical agents of certain genders or races. The findings of this study reveal that while pedagogical agents can deliver comparable learning outcomes to real human instructors, human instructors still foster stronger social connections, challenging the Media Equation Theory. Female agents were more positively received, highlighting gender stereotypes in student-instructor interactions. However, limited evidence was found for the race-matching hypothesis, suggesting the complexity of intergroup biases in learning. These findings emphasize the need for inclusive, well-designed pedagogical agents and call for further research to explore their role in diverse learning environments.

Footnotes

Author Contributions

Fangzheng Zhao and Richard E. Mayer both contributed to developing the design of the study, interpreting the results, and writing the manuscript. Fangzheng Zhao took responsibility for creating the materials, running the participants, and tabulating the data. Nicoletta Adamo-Villani, Christos Mousas, Minsoo Choi, and Klay Hauser contributed to developing the 3D virtual agents, created with Reallusion’s Character Creator, and to transforming the original female human instructor into six distinct types of pedagogical agents.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was supported by Grant 2201019 from the National Science Foundation.