Abstract

Artificial intelligence (AI) has emerged as a prominent topic in K-12 education recently. However, pedagogical design has remained a major challenge, especially among young learners. Guided by the Zone of Proximal Development theory and AI education research literature, this design-based study proposes an analogy-based pedagogical approach to support AI teaching and learning in upper primary education. This pedagogical approach is centered on human–AI comparison, where humans are gradually shifted from an analogue to a contrast to make visible the attributes, mechanisms, and processes of AI. To evaluate its effectiveness, a quasi-experimental study with mixed methods was conducted. The quantitative comparison shows that the participants in the experimental group learning with the analogy-based pedagogical approach significantly outperformed their peers with the conventional direct instructional approach in all three dimensions of AI knowledge, skills, and ethical awareness. Qualitative analyses further reveal its pedagogical benefits, including demystifying AI through relatable and engaging learning, supporting student comprehension and skill mastery, and nurturing critical thinking and attitudes. The analogy-based approach contributes to the field of K-12 AI education with an age-appropriate, child-friendly pedagogical approach. Notably, AI education should prioritize teaching for student understanding, and AI should be recognized as an independent subject with interdisciplinary applications.

Research Background

Teaching and Learning Artificial Intelligence (AI) in the K-12 Context

Artificial intelligence (AI) has become an essential aspect of modern society, and children in this digital age are increasingly encountering AI in their daily lives (Greenwald et al., 2021). Therefore, it is crucial that students develop a comprehensive understanding of this technology from a young age (Touretzky et al., 2019). In particular, early training in the working principles, ethical implications, and societal impacts of AI technology will encourage individuals to use AI more consciously and safely and become informed and responsible AI practitioners (Ng et al., 2021). In response to these calls, various curriculum frameworks and educational programs have been developed globally to equip K-12 students with AI knowledge, skills, and ethics (United Nations Educational, Scientific, and Cultural Organization [UNESCO], 2022).

Educators and researchers urge that the teaching and learning of AI should move beyond “material tools” and playing and emphasize the holistic development of AI literacy (UNESCO, 2022). AI literacy refers to the knowledge and skills necessary to effectively engage with AI, as well as the ability to critically evaluate its economic, ethical, and social implications for various aspects of life (Long & Magerko, 2020). Based on an exploratory review, Ng et al. (2021) conceptualized AI literacy into four dimensions: know and understand AI, use and apply AI, evaluate and create AI, and AI ethics. Correspondingly, a recent global review by UNESCO (2022) showed that education outcomes of government-endorsed AI curricula often include three strands: knowledge, skills, and values and attitudes.

Student understanding plays a critical role in supporting students’ gains in AI knowledge, skills, and values and attitudes. Student understanding is essential for academic success, in general, and fundamental for student development in AI literacy, in particular. Without sufficient understanding, students would lack a solid knowledge base to use, adopt, and develop AI systems effectively (Long & Magerko, 2020). Moreover, a thorough understanding of the working process and mechanism of AI can further support students in making sense of AI ethics and becoming responsible users of AI (UNESCO, 2022). This is especially relevant as the rapid growth and potential impact of AI on all areas of society require ethical and accountable development and deployment.

The emphasis on student understanding of AI echoes a long-lasting tradition in science, technology, engineering, and mathematics (STEM) education: teaching for student understanding (Wiske, 1998). Teaching for understanding is a pedagogical orientation that focuses on helping students understand the concepts and ideas being taught rather than just memorizing facts or procedures (Blythe et al., 1998). It emphasizes the ability to transfer the understanding to new contexts and the development of problem-solving skills (Perkins & Blythe, 1994). It has been found effective in promoting student understanding and skills, academic achievement and retention, and learning motivation and interests (Grouws & Cebulla, 2000; Wonu & Gladys, 2017).

Nevertheless, teaching AI for student understanding is by no means an easy task for teachers, as pedagogical design has been a major challenge in K-12 AI education. Considering the complexity and difficulty of the AI subject matter, K-12 students are often intimidated by AI knowledge (Wong et al., 2020). In addition, young students typically do not possess prior technological knowledge and experiences (e.g., knowledge of computer programming and robotics), especially students in primary education (Yang, 2022). Their limited cognitive development, such as the low level of abstract thinking skills, may pose extra challenges for pedagogical design and complicate the teaching and learning process (Dai, 2023). Therefore, the status quo calls for more empirical research and theory-informed practices to navigate the design of teaching and learning AI in the K-12 context.

Using Humans as a Reference to Teach Artificial Intelligence

Pedagogical design has been a major research topic in the field of K-12 AI education, as researchers and educators navigate effective teaching strategies and methods to facilitate student learning. In a scoping review, Su et al. (2023) identified various teaching methods in AI classrooms, such as inquiry-based learning, project-based learning, and game-based learning. In another review by Yue et al. (2022), direct instruction has remained a most popular pedagogy in delivering AI concepts, whereas hands-on, experiential activities are often incorporated to enhance student engagement. The literature suggests that, while most current pedagogical approaches involve applying established pedagogical frameworks in AI classrooms, there appears to be limited focus on developing domain-specific pedagogy tailored for upper primary students.

Of particular interest to the present study is the use of humans as an analogy, a metaphor, or a reference in teaching AI and Computer Science (CS) concepts. For example, CS instructors often analogize the central processing unit (CPU) to the human brain and the algorithm to the cognitive schema. AI educators use activities like comparing AI devices and AI versus human abilities to enhance primary students’ understanding of the nature of intelligence (Dai et al., 2023b; Heinze et al., 2010; Zimmerman, 2018). With humans as an analogy, students are guided to transform something already known (their understanding of humans) into understanding something new (the intended knowledge).

Using humans as an analogy to teach AI can be justified from several perspectives. First, humans and their life experiences are something most students are familiar with and can relate themselves to. Using humans as an analogy can provide a concrete anchor for students to make sense of and meaning of abstract, complex AI concepts. Second, the human–AI juxtaposition, for example, the competition/war between humans and machines, has long been a popular topic in mass media and popular culture and is well-known to many students. Introducing this popular topic into classrooms can bring fun, excitement, and dynamics into classrooms and create an easy threshold to cross, making AI learning more accessible and inclusive. Furthermore, the analysis of human intelligence has long been a critical source for approaching machine intelligence by AI experts and scientists. In this regard, comparing AI and humans can help students better understand the ideas, attributes, and processes of intelligence and AI (Long & Magerko, 2020).

Meanwhile, some AI researchers and educators warn about the pitfalls of analogizing AI to humans (Luger, 2005; Russell & Norvig, 2021). Although both humans and AI are intelligent agents, they are essentially two distinct domains. The overuse of human–AI juxtaposition may lead to student misconceptions that AI works entirely like humans in a completely autonomous way (Sulmont et al., 2019). The misconceptions may keep students from developing a higher-order understanding (e.g., an engineering, mathematics-oriented perspective to view intelligence as rational agents) and negatively impact their higher studies. The potential pitfalls indicate a need for a well-thought-out pedagogical design to balance the pedagogical value and limitations of using humans as a reference to teach AI.

An Analogical Scaffolding Model

Using humans as a reference to teach AI can be informed by the Zone of Proximal Development (ZPD) theory and scaffolding. The ZPD is a learning theory introduced by Vygotsky that emphasizes the importance of social interactions and learning facilitation in fostering cognitive development (Vygotsky, 1978). The ZPD is the difference between what a learner can achieve independently and what they can achieve with facilitation from others (Vygotsky, 1978). From this perspective, learning is a transformative process: learners start with limited independence and much external facilitation and then gradually gain proficiency and independence while diminishing the facilitation. Based on the ZPD theory, scaffolding refers to the facilitation and guidance offered to students during the learning process (Van de Pol et al., 2010). The goal of scaffolding is to enable students to become independent, self-directed learners as they gradually build their understanding and skills and take on more responsibility for learning (Hogan & Pressley, 1997).

Of particular interest is an analogical scaffolding strategy (e.g., Podolefsky & Finkelstein, 2007; Emig et al., 2014). An analogy rooted in Gentner’s (1983) structure mapping theory is to logically map and compare concepts from a familiar source domain with those in the unfamiliar target domain—“A is like B” (e.g., a database is like a brain). Analogies have long been used to teach difficult, abstract ideas in STEM education (Gogolla & Stevens, 2018). In analogical teaching, teachers can select a familiar, appropriate student-world analogue to assist their explanations of the intended concepts. By analogizing the familiar attributes from the source to target domains, students are guided to enrich or modify their prior or existing understanding toward new, sophisticated understanding (Harrison & Treagust, 2006). In this regard, analogies can serve as scaffolding to support student learning, in which students are gradually transformed from relying on familiar base domains to gaining new, sophisticated understanding.

Despite the benefits, there are potential pitfalls to using analogies in teaching AI. There are two types of similarities between the source and target representations in an analogy: the superficial similarity (e.g., appearance or color) and structural similarity (e.g., the underlying systems of relationships between elements) (Keane et al., 1994). Generally, deep structural similarities are believed to have greater exploratory power and, therefore, are more useful for fostering conceptual change (Gentner, 1989). However, students often struggle to understand the deep systematic process similarities as they require high-level cognitive skills (Glynn, 1990). For example, the analogy that a database is like a brain is based on the similarity that both brains and databases are storage systems that can retrieve and transmit data. However, it may lead to misconceptions, such as viewing the database as a chip as tangible as a human brain. Therefore, it is essential to guide students through a careful process of mapping the deep structural relationship between the source and the target and help them grasp the intended similarity (Duit, 1991).

An opportunity for overcoming the above pitfall lies in the fact that an analogy breaks down eventually. Essentially, an analogy is a simplified comparison between two different domains in which the targeted similarity is focalized and other irrelevant aspects are ignored (Harrison & Treagust, 2000). As the mapping between the two domains broadens, the disparity between them will eventually become substantial enough to break down the analogy. The breakdown can be leveraged as a learning opportunity to reflect on the comparison between the base and the target and to shift the focus from similarity to difference in which the unique attribute of the target domain can be highlighted. In this way, students can be guided to focus on the uniqueness of the target domain, obtain a deeper understanding, and consolidate their conceptual change.

Given the above research gaps, this study adopts a design-based research (DBR) methodology that integrates the development of pedagogical intervention and empirical research (Design-Based Research Collective, 2003). DBR is characterized by a cyclical process of (re)design, implementation, and analysis through which researchers and practitioners collaborate to make and test hypotheses regarding how learning can be best supported within the local context (Anderson & Shattuck, 2012; Fishman et al., 2013; Bryk et al., 2015). In this DBR study, we follow a theory-informed approach to develop a domain-specific pedagogical approach for AI education and conduct a quasi-experimental study to evaluate its impact.

Theory-Informed Design: An Analogy-based Pedagogical Approach

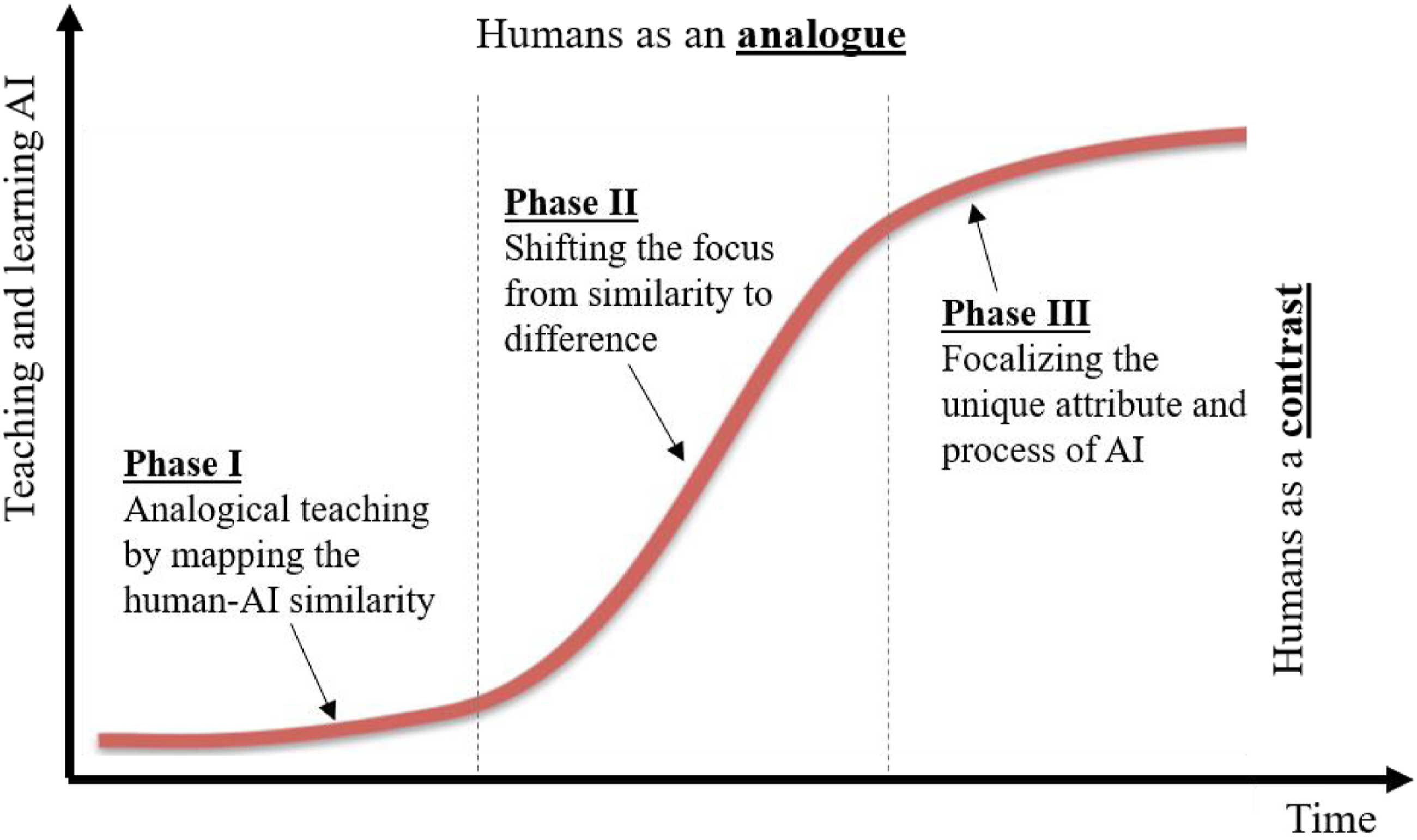

Informed by the ZPD theory and AI education literature, an analogy-based approach to AI pedagogy is proposed to support the teaching and learning of AI in the upper primary education context. This approach largely draws upon the human–AI analogy as the key scaffolding to explain AI concepts and processes, as represented in Figure 1. Overall, the AI teaching and learning process can be seen as an evolutionary process, where students start by relying on the familiar human analogue to make sense of AI and then gradually grasp the unique attributes of AI as an independent domain. Through this process, the role of humans is gradually transformed from an analogy to a contrast. Figure 1 represents the process of gradual transformation, rather than a change at a constant rate (i.e., a straight line).

Figure 1. S curve of the analogy-based approach of teaching and learning AI.

The teaching and learning process guided by the analogy-based pedagogy can be approximately divided into three phases:

• Phase I usually relies on conventional analogical teaching. The human–AI analogy is introduced to explain the intended concept by logically mapping the deep structural similarities between humans and AI, including the processes and mechanisms, working principles, and strategies for how humans and AI perform tasks and make decisions. Analogical teaching can help students build relevance and connection with abstract AI terms and processes. The analogical teaching can also be extended to block-based programming, where students learn how to program and actualize the respective AI terms and processes.
• Phase II is characterized as a time to break down the analogy, where the focus is changed from mapping the similarity to sketching the difference. In this transitional phase, the role of humans is shifted from being an analogue to a contrast. By deliberately disrupting the human–AI analogy, students’ attention is oriented to the fundamental differences between humans and AI to prevent the over-mapping between humans and AI or the associated misconceptions. Block-based programming is constantly referenced to show the computational process of AI as a contrast to the biological and social process of human intelligence.
• Phase III marks the focus on the unique attributes, processes, and principles of AI as an independent domain, with the human analogue being gradually removed. It is the time to demonstrate both the advantages and limitations of AI, such as improved productivity and potential ethical implications. In addition, highlighting the unique, double-edged nature of AI is critical in nurturing reflective, critical, and responsible practices and attitudes.

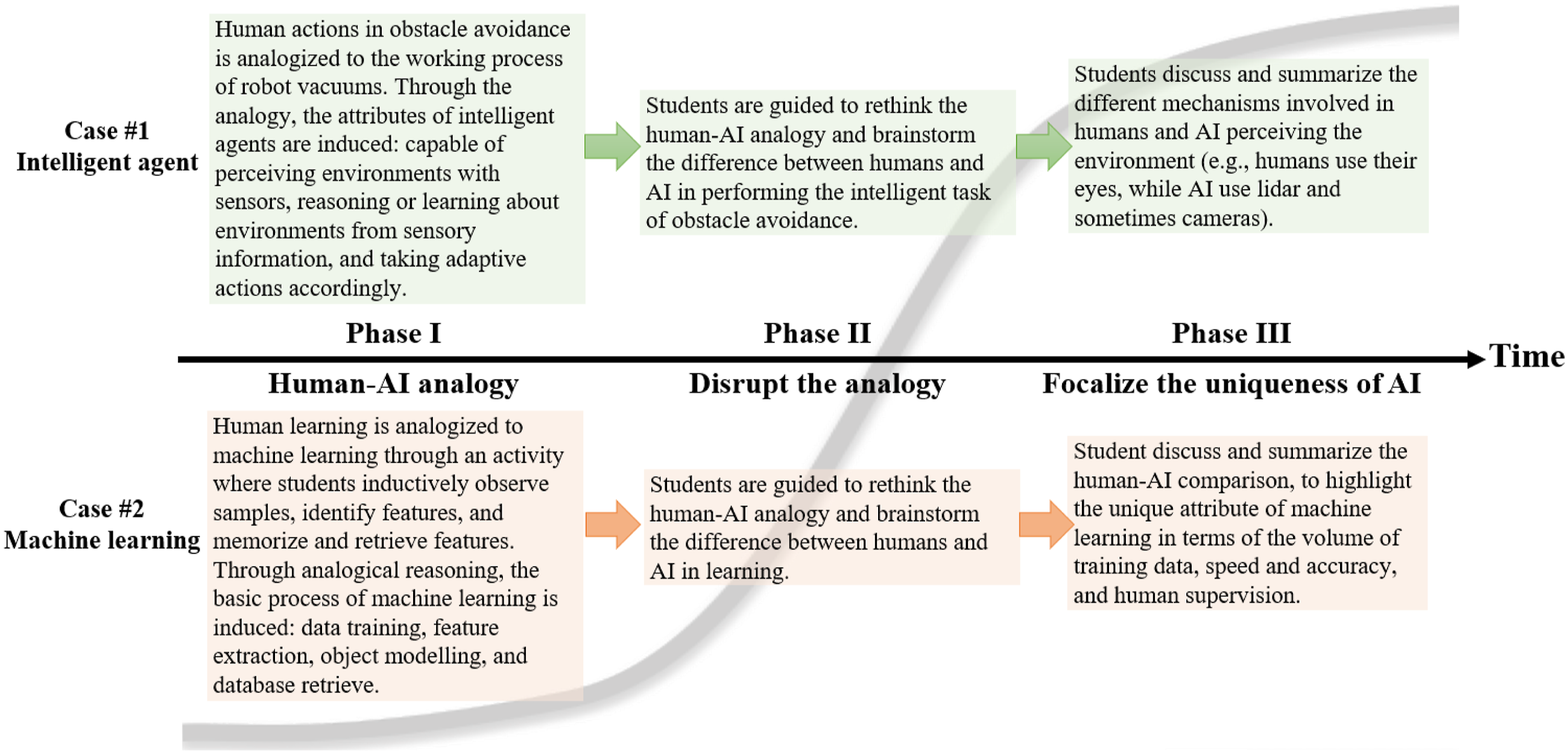

To further illustrate the analogy-based approach, two pedagogical cases are presented for demonstration purposes, as shown in Figure 2. The two cases are centered on the teaching topics of intelligent agents and machine learning: the first represents an introductory topic about the basic attribute of AI (i.e., intelligent agents), and the second represents a more advanced topic about the working process and mechanism behind AI.

Figure 2. Two teaching cases of intelligent agents and machine learning informed by the analogy-based pedagogical approach.

Of particular significance is that the analogy-based approach is not an unplugged, low-tech approach that emphasizes conceptual teaching without computers. Instead, it is more of a domain-specific pedagogical framework that informs the design and organization of instructional activities in AI classrooms. It can integrate unplugged and plugged block-based programming activities and support student growth in conceptual knowledge, skills, and ethical awareness. For example, in both teaching cases in Figure 2, after the idea and meaning of intelligent agents/machine learning are explained through analogical teaching (Phase I), block-based programming activities in Scratch follow, where students learn how to actualize or fulfill the respective intelligent tasks with programming. Afterward, in comparing the difference between humans and AI, the programming activities are turned into a resource for students to analyze and reflect on the strengths and limitations of AI, as well as the human–AI relationship. In this way, students’ sense-making about the social and ethical implications of AI is grounded in their first-hand experiences of programming and developing AI systems rather than second- or third-hand accounts from teachers.

Method

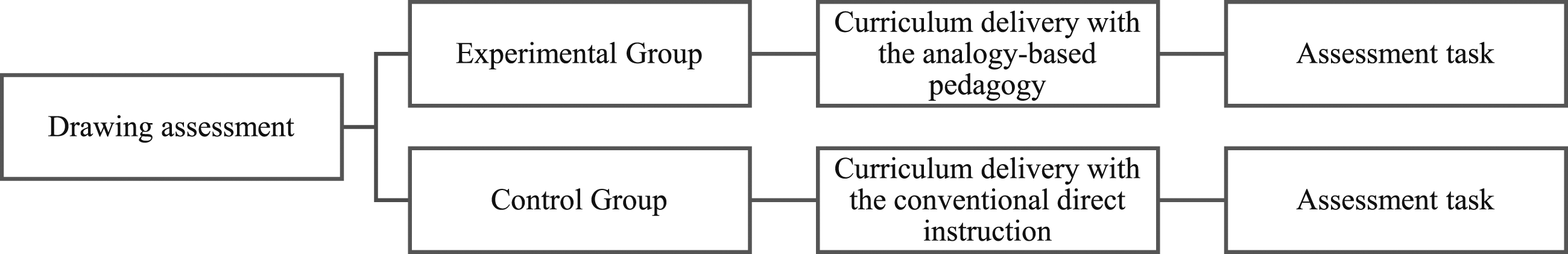

A quasi-experimental study was conducted to evaluate the impact of the specially designed pedagogical approach. A control group and an experimental group were set up to compare the differences in students’ AI learning performances with the analogy-based pedagogical approach and the conventional approach. The study was guided by the following research questions:

• How did the analogy-based pedagogy impact students’ AI learning performance in comparison with the conventional approach?
• How did the students taught by the analogy-based pedagogy perceive this pedagogical approach?

Research Context and Pilot Study

This study was situated in a larger research project between the research team and a school district in China (Dai, Liu, et al., 2023). The school is situated in a middle-class school district with an average socio-economic background, and students are admitted from the school district through a fair banding system. An information technology (IT) teacher from the school participated in the study as a research collaborator. Before the study, she had incorporated AI topics in the IT courses for one year. Her teaching largely followed the conventional direct instruction approach, where the teacher delivered explicit, guided explanations about AI topics to students. The direct instruction approach was taken as the pedagogical condition for the control group.

In this design-based research, the research team and the IT teacher collaborated to develop the pedagogical intervention. While the researchers led the theory-informed design of pedagogical activities and teaching materials, the teacher contributed local knowledge and led the classroom enactment. Once the draft materials were complete, they were sent to two curriculum specialists and two AI scientists for expert review and feedback to ensure age appropriateness, scientific accuracy, and content validity. Two DBR cycles were carried out, in which the teacher and researchers collaborated to iteratively (re)design, enact, and analyze the pedagogical approach. The first cycle served as a pilot study, which was conducted with a cohort of 79 Grade 6 primary school students. From the pilot study, feedback from teachers and students was collected to further revise the teaching materials and research procedure. The experimental study was conducted in the second cycle.

Research Procedure

With the facilitation of the IT teacher, a quasi-experimental study was conducted in Grade 6 of the aforementioned school. The study was enacted in the 430 Curriculum Project, the school-based curriculum taking place between 4:30 p.m. and 6:30 p.m. on school days in China (Jiang, 2021). In the 430 Curriculum Project, a school-based AI curriculum was developed for sixth graders who did not have prior learning experiences in AI and relevant subjects (e.g., block-based programming and robotics). The student selection criteria helped ensure the homogeneity of student backgrounds as beginner learners. In the curriculum, two sessions were chosen as the experimental and control groups. In each session, there were approximately 45 students. A total of 83 students participated and completed the study, including 43 students in the experimental group and 40 students in the control group. Specifically, 55.42% of all the participating students were males and 44.58% were females, with an average age of 11.5 years. The two sessions had a similar gender ratio of about 120:100. Informed consent was obtained from the students and their parents. Figure 3 shows the research procedure.

Figure 3. The research procedure.

The aforementioned IT teacher delivered the school-based AI curriculum to both the experimental and control groups. The curriculum consisted of two modules, AI basics and machine learning, which were organized into eight weekly workshops (each lasting 2 hours). Generally, the initial half of a workshop introduced the AI concepts and topics, often using unplugged activities; the latter half emphasized block-based programming in Scratch. In the experimental group, students were guided to approach the basic AI concepts, processes, and strategies (how AI senses the environment, represents and communicates information, learns and acquires new knowledge, and reasons) by analogizing and comparing them with those of humans.

Both groups attended the workshops in the same multimedia classroom, where each student had access to a desktop for programming activities. Scratch embedded in the Machine Learning for Kids platform (https://machinelearningforkids.co.uk/) was employed. The control group received conventional direct instruction, while the experimental group received the analogy-based approach as the pedagogical intervention. In this way, the two groups were nearly equivalent in terms of class size, gender ratio, teaching contents and facilities, and pre-instructional understandings (elaborated in the findings), with the pedagogical condition as the sole difference.

Data Collection

Drawing Assessment

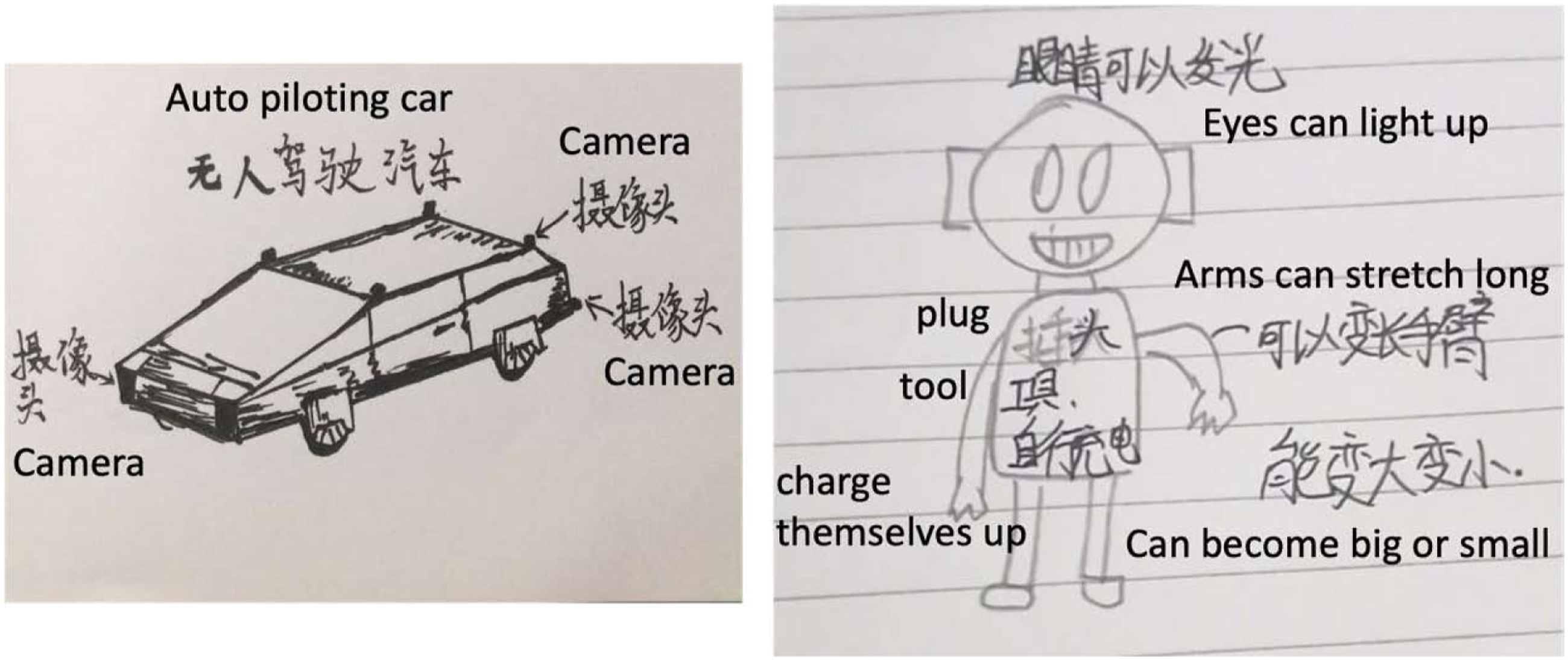

At the beginning of the AI curriculum, a drawing assessment was conducted as the pre-intervention test. Drawing assessment has been widely used in science education to evaluate students’ understanding and cognitive development about certain abstract concepts and processes through drawings or sketches. This form of assessment serves as an alternative or complementary tool to traditional written or oral evaluations, allowing young learners with limited linguistic ability to externalize their thoughts in an inclusive, multimodal way (Chang et al., 2020). In the drawing assessment, students were tasked to draw their perceptions, along with prompt questions to stimulate their thinking: “What do you think AI is? What can AI do? What would you like to use AI for?” Students could also indicate that they did not know much about AI and skip the assessment. Figure 4 shows two pieces of student artefacts collected in the drawing assessment.

Figure 4. Student artefacts collected in the drawing assessment.

Assessment Task

The students’ AI learning performance was measured in the post-intervention test with a collection of assessment tasks. The measurement was set in three dimensions: AI knowledge, skill, and ethical awareness. The knowledge and ethical awareness were assessed with a paper-and-pencil test, while the skill was assessed with Scratch projects. The assessment tasks were either adapted from local textbooks (Qin et al., 2019; Yang, 2020) or constructed by the research team, and they were tested and validated in the school district where the study was situated (Dai et al., work in progress). The assessment tasks in the three dimensions were found to be highly correlated (0.84–0.88), indicating good concurrent validity (Boyle et al., 2015).

The students’ AI knowledge and ethical awareness were measured with multiple-choice question (MCQ) items in a paper-and-pencil test, as shown in the Appendix. Student proficiency in AI knowledge entailed their memory and comprehension of AI terms, concepts, and principles, which comprised the “Remembering” and “Understanding” levels in Bloom’s Taxonomy (Bloom et al., 1971). The MCQ items were organized in two modules: AI Basics (14 items, one point per item) and machine learning (18 items, one point per item). Ethical awareness is concerned with students’ values and attitudes towards AI ethical issues, that is, whether they could identify, evaluate, and respond to these issues appropriately and responsibly (Stahl, 2021). The students’ ethical awareness was measured with scenario-based assessments (SBA), where real-life situations or ethical dilemmas were provided for them to analyze, evaluate, and take action (Romine et al., 2017; Daniel & Mazzurco, 2019). There was a total of 10 points for the 10 MCQ items in the SBA. The Cronbach’s α for the paper-and-pencil test was 0.76, indicating acceptable reliability.
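For readers unfamiliar with the reliability coefficient reported above, the following is a minimal sketch of how Cronbach’s α is commonly computed from a students-by-items score table. The function name `cronbach_alpha` and the example scores are hypothetical and are not the study’s data; the sketch assumes the standard variance-based formula and is not a description of the authors’ actual procedure or software.

```python
from statistics import variance


def cronbach_alpha(scores):
    """Return Cronbach's alpha for a students-by-items score table.

    scores: list of rows, one row per student, one column per test item.
    """
    n_items = len(scores[0])
    if n_items < 2 or len(scores) < 2:
        raise ValueError("need at least two items and two students")
    # Sample variance of each item (column) across students.
    item_variances = [variance(column) for column in zip(*scores)]
    # Sample variance of each student's total score.
    total_variance = variance([sum(row) for row in scores])
    return n_items / (n_items - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical 0/1 scores for five students on four MCQ items (illustration only).
example_scores = [
    [1, 1, 1, 0],
    [1, 0, 1, 1],
    [0, 0, 1, 0],
    [1, 1, 1, 1],
    [0, 0, 0, 0],
]
print(round(cronbach_alpha(example_scores), 2))
```

Values closer to 1 indicate that the items vary together across students, which is the sense in which 0.76 is read as acceptable internal consistency.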

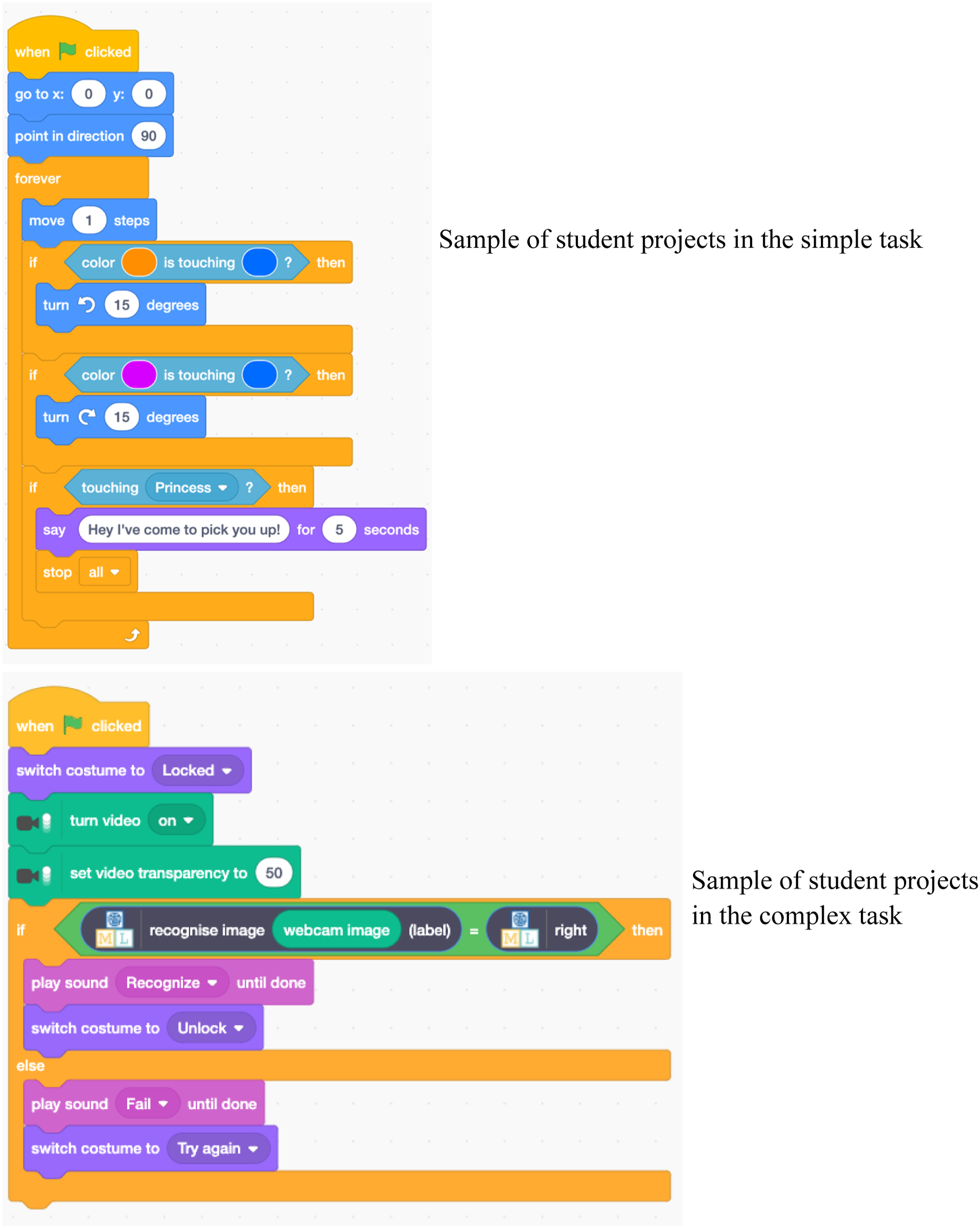

To evaluate the students’ mastery of AI skills, they were instructed to create digital artifacts and solve the given problems in Scratch. This assessment corresponded to the “Applying” and “Creating” levels of Bloom’s Taxonomy (Bloom et al., 1971). According to Dörner and Funke (2017), most technology and engineering problems can be divided into simple and complex problems, depending on whether there is a well-defined problem with a specific solution path. For a comprehensive assessment, one simple-problem task and one complex-problem task were included. Figure 5 shows the student artifacts in the two tasks.

Figure 5. Screenshots of the students’ projects in the two Scratch tasks.

The simple-problem task was to program a given Sprite to autonomously avoid obstacles and navigate directions. The complex-problem task was an open-ended question with a general goal of building an access-control system for classrooms. The students needed to formulate the question and define a solution path before programming, and the task was primarily about machine learning techniques. A grading rubric was used to rate the quality of the students’ projects. For the simple-problem task, the rubric included four aspects: problem decomposition, logical thinking, data representation, and flow control (Moreno-León & Robles, 2015), with each aspect rated on a three-level scale (basic, developing, and proficient), for a total of 12 points. For the complex-problem task, a fifth aspect, namely, originality and creativity, was added to the grading rubric, for a total of 15 points. For the two tasks, Cronbach’s α was 0.87, indicating high reliability.

Focus Group Interviews

Upon completion of the AI course, focus group interviews were conducted in the experimental group to elicit the students’ perspectives and opinions toward the pedagogical approach. Purposive sampling was adopted to invite active, average, and less active students to the semi-structured interviews (Etikan et al., 2016), with four to six students in each group. A total of 21 students participated in four interviews (≈1.5 hours per interview). The students were asked to reflect on their perspectives and experiences in general and on specific learning activities concerning how the activities impacted their learning. Sample questions included the following: “What do you think about the in-class activities?”, “What do you see as the most helpful/difficult activities?”, “How do your views about AI compare before and after the course?”, and “Which activity or content triggered a change in your view?” The interviews were audio-recorded and transcribed.

Data Analysis

Deductive Coding

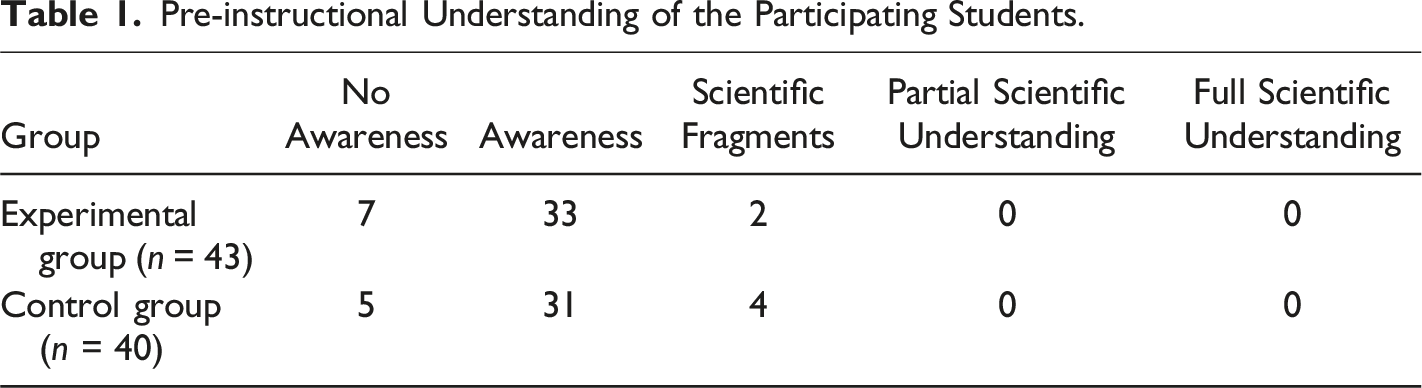

The students’ responses in the drawing task were coded deductively to identify the level of their pre-instructional understanding of AI. The coding was informed by a well-established code list developed by Adadan et al. (2010) and Trundle and Bell (2010), which grades students’ understanding on five levels: no awareness (i.e., having no idea), awareness (i.e., fragmented, irrelevant/incorrect ideas), scientific fragments (i.e., fragmented, scientific ideas), partial scientific understanding (i.e., coherent, complete understanding with certain misconceptions), and full scientific understanding. The five-level code was also treated as an ordinal variable, which made statistical analysis possible. Two postgraduate research assistants independently coded a collection of 15 pieces of student drawings, with an initial interrater agreement of 73.33%. Through discussion, they resolved the disagreement and reached a further agreement in interpreting and operating the code list. They then independently coded the rest of the student drawings, with an interrater agreement of 92.77%. The disagreed items were resolved through discussion until 100% agreement was reached.
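The interrater agreement figures above are simple percent agreement, which can be sketched as follows. The function `percent_agreement` and the two code lists are hypothetical illustrations, with levels numbered 0 (no awareness) to 4 (full scientific understanding) as an assumption. The example is constructed so that 11 of 15 codes match, which is one way a value of 73.33% could arise on 15 drawings; the source does not report the underlying counts.

```python
def percent_agreement(codes_a, codes_b):
    """Return the percentage of items that two raters coded identically."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("both raters must code the same non-empty set of items")
    matches = sum(a == b for a, b in zip(codes_a, codes_b))
    return 100.0 * matches / len(codes_a)


# Hypothetical codes for 15 drawings (illustration only, not the study's data).
rater_a = [0, 1, 1, 2, 0, 1, 3, 2, 1, 0, 1, 2, 1, 0, 1]
rater_b = [0, 1, 2, 2, 0, 1, 2, 2, 1, 1, 1, 2, 0, 0, 1]
print(round(percent_agreement(rater_a, rater_b), 2))
```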

Independent Sample T-test

For the students’ scores in the post-intervention assessment, an independent sample t-test was performed to compare their performances in the three dimensions of AI knowledge, AI skill, and ethical awareness. Before the analysis, data were plotted and examined to check that the assumptions were satisfied. In the stem-and-leaf plots, data in all the variables were distributed with approximately bell shapes. The students’ scores in major variables (e.g., overall performance, knowledge proficiency, and skill mastery) were normally distributed, as assessed by the Shapiro–Wilk test (p > 0.05). Although the students’ scores in some variables failed to pass the Shapiro–Wilk test, these constituted only mild deviations from normality. Given the nearly equal sample size between the two groups, the independent-sample t-test was still robust and applicable (Schmider et al., 2010).
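As an illustration of the comparison described above, the sketch below computes the t statistic and degrees of freedom for two independent groups. It assumes the pooled-variance (Student) form of the test; the source does not state whether that form or a Welch correction was used. The function name `pooled_t_statistic` and the scores are hypothetical. Under the pooled form, groups of 43 and 40 students would give 43 + 40 - 2 = 81 degrees of freedom. Obtaining a p-value additionally requires the t distribution, for example from a statistics package.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t_statistic(group_a, group_b):
    """Return (t, df) for an independent-samples t-test with pooled variance."""
    n_a, n_b = len(group_a), len(group_b)
    if n_a < 2 or n_b < 2:
        raise ValueError("each group needs at least two scores")
    df = n_a + n_b - 2
    # Pooled variance weights each group's sample variance by its degrees of freedom.
    pooled_variance = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / df
    standard_error = sqrt(pooled_variance * (1 / n_a + 1 / n_b))
    t = (mean(group_a) - mean(group_b)) / standard_error
    return t, df


# Hypothetical post-test scores (illustration only, not the study's data).
experimental_scores = [12, 14, 11, 15, 13, 14]
control_scores = [10, 11, 9, 12, 10, 11]
t_value, degrees_of_freedom = pooled_t_statistic(experimental_scores, control_scores)
print(round(t_value, 2), degrees_of_freedom)
```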

Thematic Analysis

The focus group interviews were analyzed with thematic analysis (Braun & Clarke, 2006) to inductively identify, synthesize, and report the themes concerning the affordances of the pedagogical approach as perceived by students. Specifically, thematic analysis allows the researcher to interpret and describe the participants’ perspectives and experienced realities based on their own written or verbal accounts (Braun & Clarke, 2006). Following the convention of thematic analysis, the analytical procedure began with reading all the interview transcripts to become familiar with the data and generate initial codes, followed by an initial search for themes through the collation of the codes. Each theme was then checked or reviewed to ensure the coded extracts were related. This analysis process was iterative and recursive rather than linear: the researchers repeatedly moved back and forth through the data to check the themes for consistency and accuracy and to verify and revise them. Finally, the themes were defined and named to produce the report by relating them back to the research questions. The coding reliability and validity were enhanced through collaborative analysis and interpretation within the research team.

Findings

Statistical Analysis Results

Pre-instructional Understanding of the Participating Students.

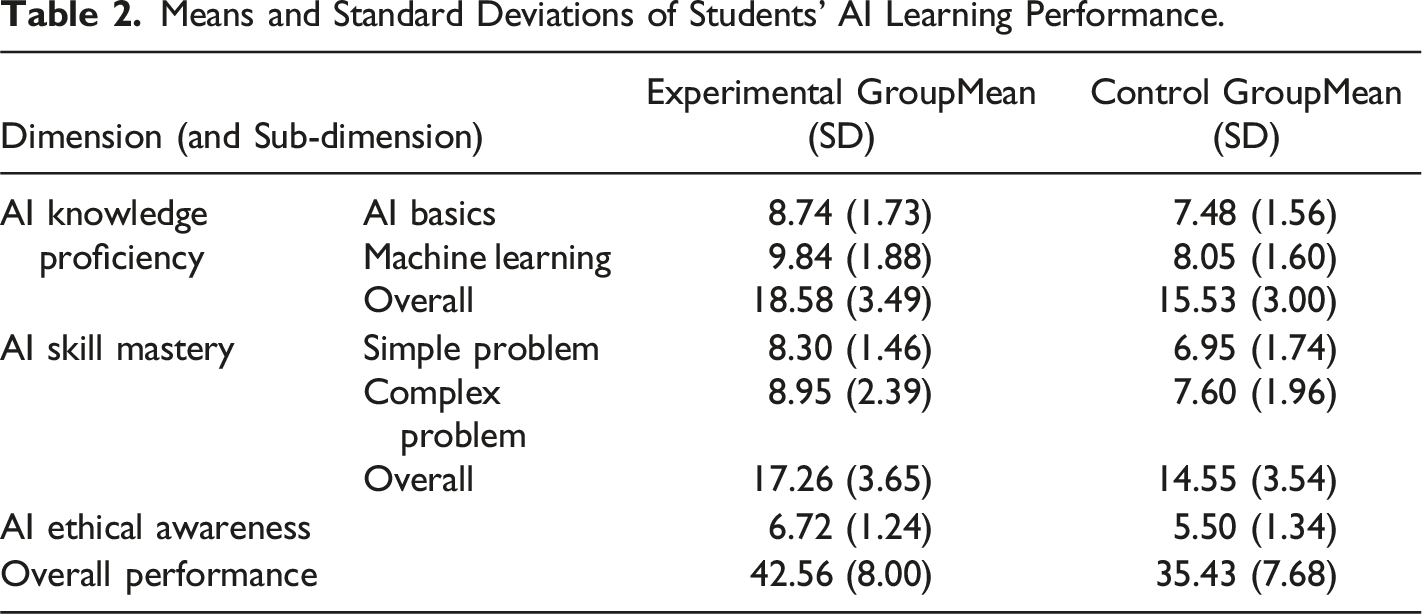

Means and Standard Deviations of Students’ AI Learning Performance.

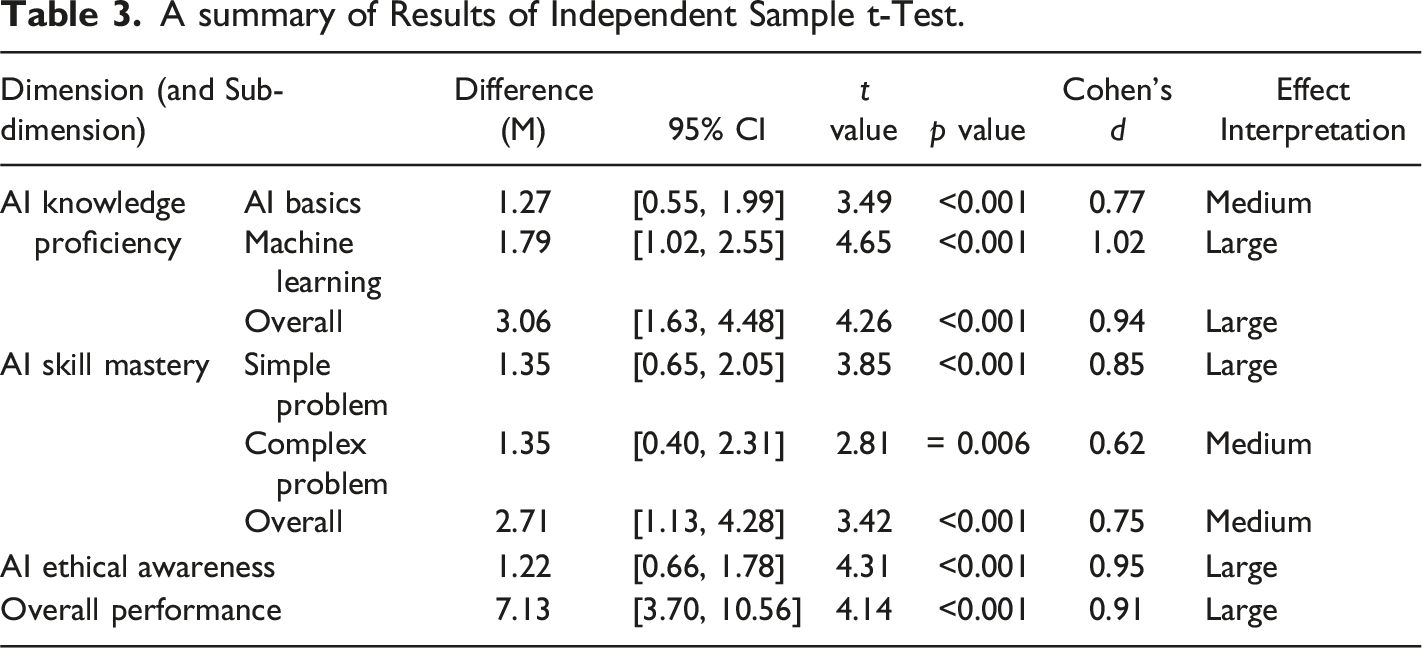

Summary of the Results of the Independent-Samples t-Test.

Student Perceptions of the Pedagogical Approach

Demystifying Artificial Intelligence Through Relatable and Engaging Learning

From the focus-group interviews, a salient theme emerged: the proposed pedagogical approach demystified AI technology and made AI knowledge more accessible to the students. Before the instruction, most of the participating students viewed AI as “complex,” “highly advanced,” and “mysterious and aggressive.” One student recalled that the AI he saw in movies was “much smarter and more capable than humans, and often helped humans perform dangerous tasks, such as exploring caves, magma, and ocean trenches.” Even with a general impression of AI, most of the students did not really understand what AI was or how it worked. For them, AI was “something too far from us and too high above us.” The students’ preconceptions showed that AI appeared to them as a distant, disconnected notion.

After the pedagogical intervention, the students found themselves with a different view of AI—“AI is actually not as complex and difficult as we thought.” They attributed their changed view to the human–AI analogy: using humans as an analogue made the abstract AI concepts less mysterious and more relatable. The human actions and experiences provided a tangible, concrete basis for the students to make sense of intelligence and intelligent agents and further construct their understanding of AI. For example, one student described his learning experiences:

(Now I have) a totally different understanding of AI. Before, whenever AI was mentioned, I was full of questions. I had no idea what it was about. Now, things are much clearer. There are so many connections between humans and AI. I finally know what humans can do and what AI can do.

The interview shows that the student’s understanding of AI improved and became much clearer as a result of the pedagogical intervention. Meanwhile, the student’s reference to “what humans can do and what AI can do” suggests an ability to relate AI to personal experiences and capabilities, which made the topic more personally relevant and engaging. Building on this personal relevance, the student saw many connections between humans and AI, leading to a more comprehensive understanding.

The accessible and relatable learning experience was deemed fun and enjoyable by many students. The human–AI analogy enabled students to engage with AI knowledge in a more lighthearted and age-appropriate way, alleviating the challenge of directly grasping abstract and intricate concepts. In particular, students complained that the content about AI they had encountered before was incomprehensible and stressful, even leading some to fear the subject. In this course, however, the human–AI analogy lowered the threshold of learning and made AI less intimidating for the students while bringing a sense of excitement and fun into the classroom. The positive learning atmosphere greatly encouraged student participation and confidence, and many students expressed interest in attending future AI courses.

Supporting Student Comprehension and Skill Mastery

Beyond superficial or general knowledge of AI, the students were guided to explore the inner workings of AI systems, based on which they acquired a more in-depth understanding of the processes, principles, and mechanisms of AI systems. This advancement was largely enabled by the analogical teaching that emphasized the deep structural similarities between humans and AI: the AI processes and mechanisms were mapped onto those of humans, and how humans interacted with environments and performed cognitive tasks was leveraged as a basis to explain how AI systems do so. This pedagogical affordance was evident in the student interview below:

I have gained a deeper understanding of AI and the actual meaning of the relevant concepts rather than simply knowing their names. In this course, (I’ve learned that) there are many reasons or logic behind AI. AI follows certain logical ways of thinking in doing tasks step by step. It (the course) made the thinking process very clear.

A deep understanding of AI can promote various skills in students, including technical and problem-solving skills, as demonstrated in the Scratch activities. By delving into the mechanisms and processes of AI systems, students can better comprehend the intricacies of these technologies and apply their knowledge to new tasks. Specifically, when the students explored the inner workings of AI systems, they learned about the essential techniques, principles, and strategies required to develop or apply AI tools. Such a comprehensive understanding can help them gain technical skills to work with AI systems, such as coding, designing simple algorithms, and debugging. For example, a student elaborated on what he gained from his Scratch programming experience:

I used to think Scratch was fun. As long as I assemble the blocks following the teacher’s steps, I can have a game to play. However, now I see why I should do these. For example, the game of shooting balloons was like an intelligent agent. (In the game, when) using video to shoot balloons, there is a video sensor inside to sense your actions of hitting. To determine whether you hit the balloon or not, you need to put some codes for recognition and conditions. I am more aware of why I do this, not just copying the teacher’s code.

The student’s description of his experience with Scratch suggests that improved comprehension allowed him to make more informed decisions in programming. In viewing the balloon-shooting game as an intelligent agent, he demonstrated the ability to recognize intelligent agents in various contexts and to apply the relevant AI principle to a new context. His understanding of the principle of intelligent agents further gave him an informed perspective from which to break down a task or problem and devise appropriate solutions. In this regard, programming in Scratch was no longer a merely technical operation or physical manipulation of blocks of code, but an informed process in which the student linked his conceptual understanding with decision making in programming. As such, the enhanced understanding paved the way for technical and problem-solving skills in block-based programming.

Nurturing Critical Thinking and Attitudes

The analyses further revealed that the pedagogical approach did not necessarily lead to student misconceptions. Instead, it appeared to help students clarify their misconceptions and gain a critical understanding of AI. As shown in the interview, many students referenced science fiction movies or mass media content in their prior understanding of AI, in which they viewed AI as humanoid robots. These robots were often portrayed as sentient machines or antagonists whose aim was to overcome or control humans/protagonists. Meanwhile, they tended to hold a one-sided, black-and-white perspective of AI. Some of the students only noticed the convenience and efficiency brought by AI tools (e.g., “AI is a kind of machine that does tasks for us and makes our life easy”). Others displayed a hostile attitude towards AI—“robots who invade the earth and want to dominate human beings.”

After participating in the course, many students reported having clarified some of their misconceptions about AI, acknowledging that “many objects I used to think as AI turned out to be not AI.” One student explained:

I used to think AI were robots that worked like humans. However, now I know AI are not just robots. AI have special abilities like being able to learn and adapt to new things. Not all robots are AI.

Similarly, most students abandoned the preconception that AI resembled humans. The changes in student conceptions were associated with their growing proficiency in AI knowledge, especially through the teaching practice of disrupting the human–AI analogy and highlighting the uniqueness of AI. By shifting the focus from similarities to differences, the teaching strategy helped students resolve and prevent misconceptions arising from analogical reasoning.

Besides the clarified misconceptions, the students also demonstrated critical understanding, as well as more nuanced and informed perspectives of AI. Their critical understanding is evident in their “renewed” thoughts about AI:

In the movies, AI are always fighting with humans. (Now I know) AI can help humans and provide lots of convenience to our lives.

I thought only mobile phones were AI. Later, I found that so many things were AI. Initially, I thought AI could only help us do some simple tasks on phones. However, now, (I’ve learned that) AI can do several tasks that often require a lot of time for humans to complete.

I used to think AI are very smart and can do everything. However, now (I think) AI could be a bit stubborn sometimes and not as flexible as humans. The algorithm bias is the least perfect part of AI. Their bias is different from humans who do people favors due to personal connections. AI discriminate in a very stiff manner.

The student interviews showed their critical reflections on the capabilities and limitations of AI. The first student moved beyond the movie-derived notion that AI is always a threat to humans. His recognition of the positive impacts of AI served as a counterbalance to the common dystopian portrayals of AI in popular culture, implying a more balanced and thorough understanding of AI. The second student demonstrated plurality in her views, as she recognized that AI was not a monolithic entity but encompassed a wide range of technologies and applications. Her view highlights the pervasive nature of AI in our society, as well as the potential of AI to augment and enhance human capacities. As for the third student, he adjusted his expectations of AI capabilities as he recognized the limitations of AI, such as its greater stubbornness and inflexibility compared with humans. His comment on AI being stiff implies his awareness of its potential bias and drawbacks. Overall, the student accounts demonstrated a critical understanding that recognizes the opportunities and challenges posed by this rapidly evolving technology. Their recognition of the challenges and limitations implies their emerging ethical awareness and critical attitudes toward AI.

Discussion

An Artificial Intelligence Pedagogy for Upper Primary Education

This design-based study fulfilled the twofold goal of developing a theory-informed pedagogical approach and constructing empirical evidence to validate its effectiveness. The pedagogical approach is centered on the human–AI comparison as the key scaffolding strategy, which is further enhanced by restructuring analogical teaching practices. The approach was then implemented and evaluated with mixed methods, drawing on both quantitative and qualitative evidence.

The quasi-experiment revealed that the experimental group taught with the analogy-based pedagogy performed significantly better than the control group taught with conventional direct instruction in AI knowledge proficiency, with medium to large effect sizes in AI basics and machine learning knowledge (Cohen’s d = 0.77 and 1.02, respectively). The result shows the strength of the pedagogical approach in developing conceptual understanding and increasing knowledge proficiency. The gap in effect size between AI basics and machine learning knowledge could potentially be attributed to the varying levels of difficulty associated with each module. The module on AI basics, being relatively less challenging, allowed students in the control group to attain a reasonable level of understanding from direct instruction, thus resulting in a less pronounced impact of the analogy-based pedagogy. By contrast, the more complex nature of machine learning knowledge served as a better discriminator between the two pedagogical approaches, which highlights the superior efficacy of the analogy-based pedagogy in facilitating student learning and comprehension.

Similarly, the experimental group displayed a superior understanding and awareness of AI ethics, evidenced by a large effect size (d = 0.95). This finding highlights the potential of analogy-based pedagogy in fostering not only technical knowledge but also ethical considerations about AI. The students’ accounts in the interviews further revealed that a deeper understanding of the underlying principles and working processes of AI systems is crucial for grasping their ethical implications and gaining ethical awareness (UNESCO, 2022). Meanwhile, the human–AI comparison in the analogies may make the ethical issues of AI more relatable for students, as they can link the intended topics with their first-hand experiences.

Regarding AI skill mastery, the experimental group excelled in both simple and complex problem-solving tasks compared with the control group, with large and medium effect sizes, respectively (d = 0.85 and 0.62). The overall skill mastery scores further corroborated the superiority of the experimental group, yielding a medium effect size (d = 0.75). The analogy-based approach appears to favor the training of students’ skills in the simple task: the students could better comprehend the ideas and logic behind their manipulation of blocks in Scratch, which helped improve their programming proficiency and techniques. By contrast, the students’ problem-solving skills in the complex task seemed to be less well addressed. Developing the students’ ability to analyze, formulate, and solve ill-defined problems requires new training approaches and strategies.

In summary, the experimental group’s overall performance was significantly better than that of the control group, as indicated by a large effect size (d = 0.91). These findings underscore the potential benefits of implementing analogy-based pedagogy in AI education, as it appears to enhance students’ knowledge proficiency, skill mastery, and ethical awareness. The present study contributes to the growing body of literature on effective pedagogical approaches in AI education and supports the adoption of analogy-based pedagogy as a viable alternative to conventional direct instruction (Evangelista et al., 2018; Sanusi & Oyelere, 2020).
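
To make the effect-size labels used above easier to check, the sketch below computes Cohen's d with a pooled standard deviation and labels its magnitude. It assumes Cohen's conventional benchmarks (0.2 small, 0.5 medium, 0.8 large) and a pooled-SD formula, neither of which the article states explicitly. The function names are hypothetical, and only the d values in `reported` come from the text.

```python
import math
import statistics


def cohens_d(group_a, group_b):
    """Cohen's d for two independent groups, using the pooled SD (assumed form)."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = (
        (n_a - 1) * statistics.variance(group_a)
        + (n_b - 1) * statistics.variance(group_b)
    ) / (n_a + n_b - 2)
    return (statistics.mean(group_a) - statistics.mean(group_b)) / math.sqrt(pooled_var)


def effect_size_label(d):
    """Label |d| using Cohen's conventional benchmarks (an assumption here)."""
    magnitude = abs(d)
    if magnitude >= 0.8:
        return "large"
    if magnitude >= 0.5:
        return "medium"
    if magnitude >= 0.2:
        return "small"
    return "negligible"


# Effect sizes as reported in the discussion above.
reported = {
    "AI basics knowledge": 0.77,
    "Machine learning knowledge": 1.02,
    "Ethical awareness": 0.95,
    "Simple problem-solving task": 0.85,
    "Complex problem-solving task": 0.62,
    "Overall skill mastery": 0.75,
    "Overall performance": 0.91,
}

for measure, d in reported.items():
    print(f"{measure}: d = {d:.2f} ({effect_size_label(d)})")
```

Under these benchmarks, 0.62, 0.75, and 0.77 fall in the medium band and 0.85, 0.91, 0.95, and 1.02 in the large band, which agrees with the labels given in the discussion.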

Conceptualizing Artificial Intelligence as an Independent Subject with Interdisciplinary Applications

The research offers insights into the position and conceptualization of AI education in the K-12 context. The significant outperformance brought about by the analogy-based approach emphasizes the importance of fostering students’ conceptual understanding of AI terms, mechanisms, and principles before delving into the technical, practical details of building AI systems; that is, AI education in the K-12 context should prioritize teaching AI for student understanding (Dai et al., 2023b; Su et al., 2022). A solid comprehension of AI terms and processes can establish a strong foundation for students’ subsequent development of skills and ethical awareness (Fast & Horvitz, 2017; UNESCO, 2022). Moreover, by cultivating a deep understanding of AI concepts, students are better equipped to grasp the potential implications of AI technologies in various domains (Long & Magerko, 2020; Touretzky et al., 2019). Consequently, AI education that starts with student understanding lays the groundwork for the holistic development of both technical expertise and ethical awareness (Chiu, 2021).

The students’ holistic development in AI knowledge, skills, and ethical awareness further implies that the AI subject is an independent subject that is essentially different from computational thinking and programming education (Wong et al., 2020; Chiu, 2021; Dai, 2023). While computational thinking emphasizes thinking and problem-solving skills like those of computer scientists, programming education focuses on teaching students the syntax and semantics of specific programming languages (Jacob & Warschauer, 2018). Although both computational thinking and programming skills contribute to AI education, AI encompasses a broader range of topics and agendas, such as machine learning, computer vision, and robotics. Furthermore, AI education extends beyond the technical aspects, emphasizing the ethical and social implications of AI technologies (Touretzky et al., 2019). By acknowledging AI as an independent subject, K-12 curricula can provide a more comprehensive and interdisciplinary approach to AI education, incorporating elements from computer science, mathematics, statistics, ethics, and domain-specific knowledge (Akram et al., 2022).

Analogies and Analogical Teaching in Science, Technology, Engineering, and Mathematics Education

This research provides new evidence to expand the discussion of analogies and analogical teaching in the field of STEM education. Previous studies have explored their effectiveness in various subjects, such as physics (Treagust et al., 1996) and mathematics (Richland et al., 2004). As AI is a newly emerging subject matter, this study extends the adoption and application of analogical teaching to a new field. In particular, given the unique nature of AI education, the focal analogy-based approach has restructured conventional analogical teaching and created a new learning configuration by purposefully disrupting the analogies and refocusing on the contrast. This strategy echoes previous research suggesting that students are more likely to develop accurate and lasting knowledge of the target domain when prompted to explicitly compare and contrast two domains (Duit et al., 2001).

However, analogical teaching in AI education differs slightly from that in many other subjects. Rather than merely using the human analogue as a vehicle to learn AI, students’ reflective understanding of humans and human–AI relationships is equally important in the analogical teaching process (Long & Magerko, 2020). Students’ reflective understanding can facilitate their growth in critical thinking and ethical awareness (Heinze et al., 2010). For example, students commonly overlook the human bias in AI decisions and overstate the capacity of AI (Sulmont et al., 2019). In this regard, a mutually enhanced understanding of humans and AI can contribute to a better understanding of AI topics, human–AI relationships, and the social and ethical implications of AI.

Limitations and Future Studies

This study has certain limitations. First, it was conducted in one primary school with students mainly from middle-class families in southern China. Therefore, it may not be appropriate to generalize its findings to other cultural contexts or age groups. Further research is needed to explore the generalizability and transferability of this approach to other student populations. Second, since this research relied on the assessment task to measure students’ learning outcomes, it did not capture the dynamics and complexity of student learning enacted by the analogy-based pedagogy in classrooms, leading to an incomplete understanding of the impact of the pedagogical approach. Future research will qualitatively document and analyze student engagement and interactions in situ to build comprehensive and in-depth accounts of the learning opportunities and constraints enacted by the pedagogical approach.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Chinese University of Hong Kong under the Direct Research Grant Scheme (Reference No. 4058097).

Ethics Statement

This research was granted ethical approval by the Survey and Behavioral Research Ethics Faculty Sub-committee at the Chinese University of Hong Kong, Faculty of Education (Reference No. SBRE-21–0813). Informed consent was obtained from all individual participants included in the research.

Data Availability Statement

The data that support the findings of this study are available from the corresponding author upon request.