Abstract

This study examines the applicability of generative artificial intelligence (GenAI) in public employee selection. Our interest in how cultural differences influence GenAI outputs leads us to compare OpenAI’s ChatGPT and Baidu’s ERNIE. We designed a conjoint experiment wherein these GenAI models evaluated pairs of hypothetical candidates with varying meritocratic attributes (including pre-employment assessment results, education level, and relevant experience) and non-meritocratic attributes (including gender, ethnicity, and age). Experimental vignettes were created based on two distinct settings—police and teacher—to control for job type. Our mixed-methods findings indicate that GenAI’s hiring recommendations are more swayed by meritocratic than non-meritocratic attributes. However, compared to ERNIE, ChatGPT’s candidate selections are slightly more influenced by considerations of gender diversity. Additionally, both models exhibit inconsistencies when non-meritocratic factors are involved. Based on these findings, we recommend a cautiously optimistic approach incorporating training and oversight if GenAI is to be used in employee selection processes.

Keywords

Introduction

Is it time to consider adopting artificial intelligence (AI) in public human resource management (HRM)? Early iterations of AI were primarily programing-based systems that executed symbolic reasoning tasks, such as recognizing keywords in conversation and responding with pre-programed replies (Russell & Norvig, 2021). However, with the advent of machine learning algorithms, AI has evolved to perform self-supervised learning from big data (L. Zhou et al., 2017), enabling it to make independent decisions without reliance on explicit programing (LeCun et al., 2015; Taeihagh, 2025). This new frontier, known as generative AI (GenAI), profoundly reshapes the dynamic relationship between humans and technology, presenting substantial potential for its adoption across all facets of human life, including the workplace (Krakowski, 2025; Nasr & El-Deeb, 2025; Obrenovic et al., 2025).

Since the release of ChatGPT, a large language model (LLM) developed by OpenAI in the U.S., the use of GenAI in the workplace has grown by leaps and bounds. Notably, the largest strides in GenAI adoption have been observed in various professional services, including HRM (Ooi et al., 2025). HRM encompasses a range of functions, including job analysis and design, recruitment, selection, compensation and benefits, training and development, and performance management (Guy & Sowa, 2022). Scholars have recognized the transformative potential of GenAI within these functions (Chowdhury et al., 2024; Garg et al., 2022; Johnson et al., 2022; Tambe et al., 2019; Votto et al., 2021). For instance, organizations can leverage GenAI in the selection process to efficiently assess a large pool of applicants based on predefined criteria (Andrieux et al., 2024).

Despite the optimism surrounding AI-enabled HRM, skepticism persists regarding the fairness of GenAI. This apprehension is particularly relevant in the public sector, as HRM decisions carry high stakes for citizens and governments. For instance, employment bias could impede equal access to government jobs and undermine the legitimacy of public organizations (Jakobsen et al., 2023). Against this background, the black-box nature of GenAI, where algorithmic processing is opaque to users (Huang et al., 2024), raises serious concerns about hidden biases (An et al., 2024; Gross, 2023; Lippens, 2024).

Therefore, before confidently adopting GenAI in public HRM, it is crucial to identify and address any potential risks. Selection decisions, in particular, are intended to be merit-based (Jankowski et al., 2020; W. Resh, 2025); however, they often involve subjective judgments regarding candidates’ qualities, which can result in biased outcomes that negatively affect specific demographic groups (Andrieux et al., 2024). Thus, employee selection represents an ideal setting for evaluating whether GenAI can be a reliable tool for facilitating fair and just HRM decisions. Driven by this inquiry, we propose this study to evaluate the decision-making of GenAI in public employee selection. Our research is guided by the following questions: Does GenAI prefer specific attributes in candidates? Are there differences in how different GenAI models make hiring recommendations?

Building on the research of Jankowski et al. (2020), which was initially designed to examine public and private sector employees’ hiring preferences, we conducted a conjoint experiment in which GenAI evaluated two hypothetical candidates side by side for public service positions. The experiment vignettes were crafted based on Jankowski et al.′s candidate attribute dimensions, focusing on two different occupations—police officers and primary school teachers—to control for job type. In each instance, GenAI received information about the organization and job duties before selecting one candidate from the pair. The candidates were characterized by distinct attributes, including meritocratic (i.e., pre-employment assessment results, education level, and relevant experience) and non-meritocratic (i.e., gender, ethnicity, and age). We employed a mixed-methods approach to collect and analyze the recommendations made by GenAI.

A distinguishing feature of this study is our comparison of GenAI models across cultural boundaries. The success of ChatGPT has sparked significant interest in AI technologies, leading to a proliferation of similar LLMs, including those developed by Chinese companies. Among Chinese LLMs, Baidu’s ERNIE serves as a relevant comparison to ChatGPT, given its similar developmental history and natural language processing capabilities (Nasr & El-Deeb, 2025; Yuan et al., 2025). The need for an East-West comparison arises from the fact that algorithmic parameters are inherently value-laden, shaped by developers who often prioritize specific values and interests over others (Mittelstadt et al., 2016). Therefore, it is imperative to examine how differences in socio-cultural values, norms, and ideologies between the East and West influence the outputs of GenAI.

This study contributes to the limited body of research on AI in public HRM by examining the applicability of GenAI in the selection of public employees. While there has been extensive scholarly discussion regarding the benefits and risks that AI presents to public administration (e.g., Bullock, 2019; Giest & Klievink, 2024; Madan & Ashok, 2023; Pencheva et al., 2020; Wirtz et al., 2019), empirical research on its impact, particularly in the realm of public HRM, remains scarce (Johnson et al., 2022). Notable exceptions include W. G. Resh et al. (2025), who assess AI’s impact on occupational competencies in the U.S. federal workforce and Keppeler et al. (2025), who examine AI’s rating of person-job fit for candidates of different genders and ethnic backgrounds. While person-job fit is certainly important, public sector hiring typically employs a holistic approach that extends beyond this criterion. Therefore, this study investigates how multiple meritocratic and non-meritocratic factors affect AI-enabled candidate evaluations. Additionally, we enhance the existing literature on AI in public administration by incorporating cultural nuances through an East-West comparative analysis, a perspective that has not been previously explored.

Hypothesis Development

AI-Enabled Employee Evaluations: Merit-Oriented?

The reception of GenAI in HRM is marked by a blend of hope and doubt. Supporters argue that GenAI’s ability to process and generate complex information surpasses human capabilities, offering significant enhancements to HRM (Chowdhury et al., 2024). In contrast, critics raise concerns about bias in GenAI, questioning its suitability for HRM decision-making (Andrieux et al., 2024). For example, Gross (2023) demonstrated that ChatGPT suggests subtly different skills for men and women to include in their curriculum vitae. Additionally, Lippens (2024) discovered that in a simulated candidate screening task, ChatGPT exhibited more racial bias than gender bias, with gender discrimination being particularly pronounced in gender-atypical jobs. Similarly, An et al. (2024) observed racial bias in ChatGPT during automated hiring decision-making processes.

Despite these criticisms, there are also reasons for optimism. For instance, Fang et al. (2024) found that ChatGPT generates less discriminatory content than other LLMs examined in their study. Furthermore, Saumure et al. (2025) noted that while ChatGPT is not entirely free from bias, it is less likely to exhibit bias regarding politically sensitive traits such as race and gender. Additionally, Gaebler et al. (2025) even reported that LLMs, including ChatGPT, tend to favor women and ethnic minorities in an effort to reduce inequity and discrimination in hiring. These insights suggest that, although bias in GenAI is a recognized issue, this bias can potentially be mitigated.

To mitigate GenAI bias, it is crucial to understand its sources. In the machine learning process, bias can originate from problematic training data, algorithm design, and human usage, known as data bias, algorithmic bias, and user bias, respectively (Ferrara, 2023; Tang & Zhu, 2024). Data bias occurs when the data used to train GenAI is missing vital information, contains errors, or comes from biased sources, ultimately resulting in skewed GenAI outputs. Conversely, algorithmic bias arises when the algorithms used to process and generate outputs are inherently biased, reflecting the questionable values and ideologies of their developers. Finally, user bias occurs when problematic user inputs lead to biased outputs.

Among the three sources of GenAI bias, the first two may warrant greater attention because individuals bring their own perspectives and preferences into interactions with GenAI, making it challenging to fully mitigate user bias. Conversely, developers can employ diverse datasets to train GenAI and audit outputs to prevent discriminatory patterns. They can also design bias-aware algorithms to detect biases in user inputs and minimize their impact on outputs. Thus, the risks associated with GenAI bias are not inevitable (Ferrara, 2023; Taeihagh, 2025; Tang & Zhu, 2024). Due to its malleability, GenAI can be guided to adhere to established ethical guidelines in a manner similar to how human children learn moral behaviors. When GenAI develops a social conscience during the machine learning process, we can expect its behavioral patterns and outcomes to align closely with commonly accepted norms. Only under this condition will AI-enabled HRM become more viable (Andrieux et al., 2024).

In the sphere of employee selection, merit orientation, rooted in the Weberian model of bureaucracy, stands as the primary norm that informs effective decision-making (Jankowski et al., 2020). According to Weber (1947), bureaucracy is a professional and impersonal entity governed by rationally designed rules that aim to organize employees into a hierarchy of positions, each with clearly defined duties and responsibilities. The configuration of job positions establishes the standards for evaluating and selecting candidates based on merit, which pertains to technical qualifications and competencies (Ferreira & Serpa, 2019). Building on this theoretical foundation, Jankowski et al. (2020) have empirically demonstrated that merit orientation significantly influences hiring preferences across both public and private sectors.

While merit orientation in employee selection appears ubiquitous, it is not only humans who will adhere to this practice; it can also be extended to GenAI through human-in-the-loop machine learning, where human input shapes the design and refinement of these tools (Monarch, 2021). As mentioned earlier, humans can influence GenAI by providing training data and setting algorithmic parameters. LLMs are trained on a diverse array of publicly available texts, as well as privately acquired information, thereby enabling them to learn through the identification of recurring patterns in the training data, which consequently enhances their ability to understand and generate human language. The algorithms that underpin these models dictate how they learn from the data, process information, and generate outputs. When evaluating candidates, the literature suggests that LLMs do not rely on automatic rubrics (Li et al., 2024). Instead, they generate outputs by decomposing relevant information into basic units known as tokens and matching these tokens with learned textual associations from their training data (Kumar, 2024). For example, if meritocratic attributes are more frequently linked to positive hiring outcomes in the training data, they will carry greater weight in the decision-making process of LLMs. Assuming GenAI absorbs the norm of merit-based hiring during its interactions with humans, we can anticipate that it will prioritize candidates’ meritocratic attributes over non-meritocratic factors in our public employee selection experiment.

Comparing GenAI Models: ChatGPT Versus ERNIE

As we hypothesize that GenAI models will adhere to the norm of merit-based hiring, could there be differences in how they evaluate candidates’ non-meritocratic attributes? Scholars have argued that the algorithms of GenAI are “inescapably value-laden” (Mittelstadt et al., 2016, p. 1), suggesting that human values and beliefs permeate the development of GenAI and configure its operations. Given that Eastern and Western societies often exhibit divergent cultural values and beliefs (Hofstede, 1980, 1991), this study compares ChatGPT from OpenAI and ERNIE from Baidu to explore the differences in public employee selection between LLMs developed in the East and the West.

The success of OpenAI’s ChatGPT has positioned the U.S. as a leader among global competitors in AI development. In contrast to the U.S., where AI development is primarily driven by the ideals of free-market capitalism and the vision of a good AI society designed for diverse populations, China views AI prowess as a strategy to enhance national competitiveness and security (Hine & Floridi, 2024). To date, China has made significant strides in this race, bolstered by government investment and policies (Wu et al., 2020), which enable it to mobilize and integrate resources from both the public and private sectors. These government-led initiatives have facilitated the development of various GenAI systems, notably Baidu’s ERNIE (Nasr & El-Deeb, 2025).

Both ChatGPT and ERNIE demonstrate exceptional natural language processing capabilities and the ability to produce novel yet coherent outputs across a wide range of domains. Given their comparable prominence and capabilities, they serve as suitable representatives of their respective peers and have been extensively compared in parallel in studies such as Guo et al. (2025), Montag et al. (2025), Yuan et al. (2025), and K. Z. Zhou and Sanfilippo (2023). With this stage set, we will now explore the reasons underlying their potential differences in public employee selection.

The cultural dimension of individualism-collectivism offers a useful perspective for understanding the conjectured differences between ChatGPT and ERNIE in assessing candidates’ non-meritocratic attributes. As the most widely recognized and validated dimension of culture (Minkov et al., 2017), individualism-collectivism closely aligns with the contrast between Western and Eastern societies, where the former typically emphasizes individualism and the latter often underscores collectivism (Hofstede, 1980, 1991). Individualist societies, such as the United States, prioritize personal freedoms and rights, thereby affording significant protections to individuals, particularly those in marginalized positions. Consequently, Americans may be more inclined to adopt hiring practices that promote equal opportunities for women and minorities (Byrd & Scott, 2014). Conversely, collectivist societies, such as China, place greater emphasis on group welfare rather than individual interests (Minkov & Kaasa, 2022), suggesting that the Chinese may prioritize workplace cohesion over diversity.

Given this context, we anticipate that ChatGPT, compared to ERNIE, may attend more to candidates’ non-meritocratic attributes, reflecting the common pursuit of workforce diversity within American society. In contrast, ERNIE primarily caters to the Chinese market (Wangsa et al., 2024), where the value of group cohesion often supersedes individual considerations due to the influence of collectivism (GiacobbeMiller et al., 1997). These differences suggest that identical prompts may elicit divergent responses from these LLMs, with ChatGPT more likely to align with the Western narrative of workforce diversity.

Methods

To test the proposed hypotheses, this study conducted a conjoint analysis simulating public employee selection. Conjoint analysis requires respondents to “choose from or rate hypothetical profiles that combine multiple attributes, enabling researchers to estimate the relative influence of each attribute value on the resulting choice or rating” (Hainmueller et al., 2014, pp. 2–3). Lately, this technique has gained traction in employee selection research (Carey et al., 2020). Building on Jankowski et al. (2020), we prompted the latest versions of ChatGPT and ERNIE at the time of this study—GPT 4o and ERNIE 4.5—to evaluate two hypothetical candidates characterized by six meritocratic and non-meritocratic attributes for police constable and primary school teacher jobs. In the following sections, we will detail the development of the experimental vignettes, the data collection process, and the strategies employed for data analysis.

Vignette Development

The vignettes used in this study were set in Hong Kong to facilitate an effective comparison between ChatGPT and ERNIE. By employing a single cultural setting for the ChatGPT-ERNIE comparison, we can attribute any observed differences between the two models—one developed in the West and the other in the East—to their inherent variations, thereby eliminating the confounding effects of contextual differences, such as variations in job specifications across different contexts. Hong Kong was chosen for its status as a vibrant melting pot where East meets West (Chiu & Siu, 2022). Formerly a crown colony under the United Kingdom and now a special administrative region of the People’s Republic of China, Hong Kong is known for its integration of Chinese and Western cultures and traditions into its work practices (Chan & Sim, 2020; Zakić, 2015). Given that ChatGPT is developed within a Western context while ERNIE is tailored to the needs and preferences of users in China, Hong Kong can relate to both and thus serves as an ideal setting for evaluating these GenAI models simultaneously.

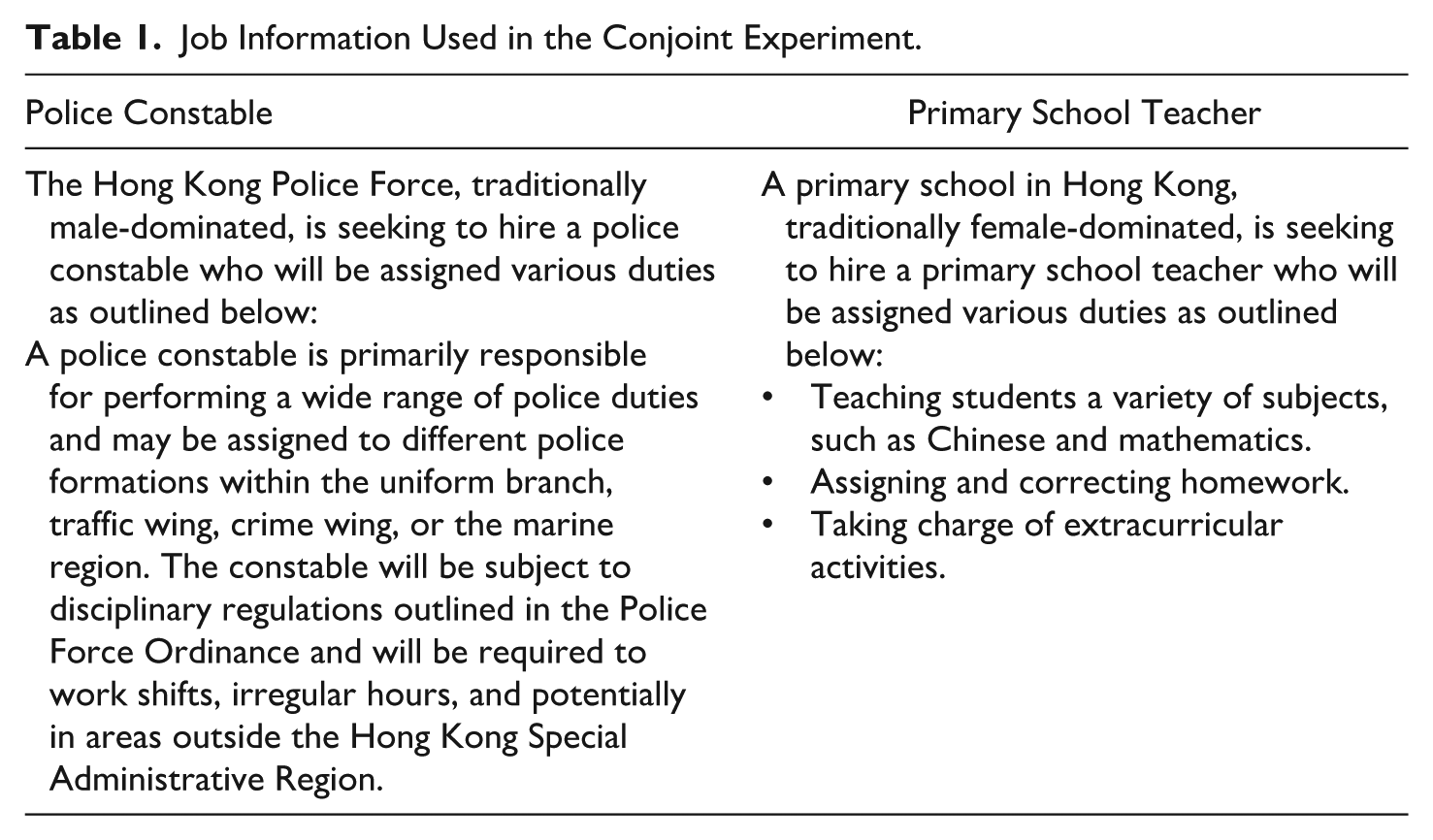

Additionally, there are compelling reasons to simulate candidate selection using the professions of police constables and primary school teachers in Hong Kong. As noted earlier, Lippens (2024) found that gender bias in AI-enabled candidate selection is more pronounced in gender-atypical occupations, with greater discrimination against females in male-dominated fields and vice versa in female-dominated jobs. Therefore, we purposively selected police and primary school teaching professions for this study, as the former is male-dominated (with males comprising 82%) and the latter is female-dominated (with females accounting for 76%), according to the latest statistics. 1 Subsequently, we developed two brief descriptions of the organizations and job duties based on real-life examples (see Table 1).

Job Information Used in the Conjoint Experiment.

If bias indeed tends to emerge in atypical environments, we can also expect bias against non-Chinese individuals to be a legitimate concern in Hong Kong, where most of the population, 91.6%, is Chinese, according to the city’s 2021 Population Census. Furthermore, despite the establishment of anti-discrimination ordinances aimed at promoting equality, there is currently no law in Hong Kong that prohibits age discrimination. Given the prevalent notion of the “curse of 35” in Chinese society (He, 2024), suggesting that individuals over 35 are considered too old to join the workforce, age appears to be a relevant factor in assessing bias in GenAI.

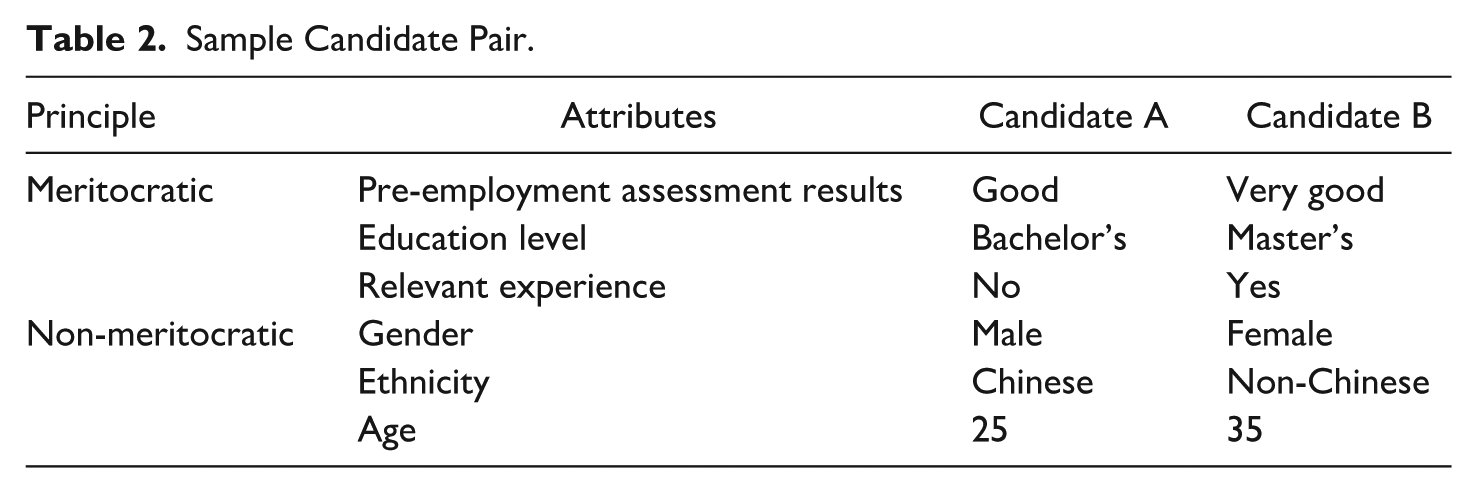

In addition to the aforementioned individual trait factors, we incorporated meritocratic attributes to describe candidates in our conjoint experiment, following the approach taken by Jankowski et al. (2020). In their investigation of civil servants’ hiring preferences, Jankowski and colleagues developed a multidimensional framework of candidate attributes, encompassing both meritocratic and non-meritocratic factors. We adapted three of their four meritocratic attributes, as English language skills are not always necessary for the positions described in our experiment. To align effectively with the job contexts under investigation, we labeled the selected meritocratic attributes as pre-employment assessment results, education level, and relevant experience.

The three meritocratic attributes, along with gender, ethnicity, and age, are presented in Table 2. To better compare the relative influence between attribute values, we streamlined the research design by making the attribute values binary. This approach resulted in 64 (i.e., 26) different candidate profiles, each containing six meritocratic and non-meritocratic attributes. Consequently, we generated a total of 2,016 unique candidate pairs using the formula (64 × (64−1))/2. Each vignette used in the experiment was thus a combination of one job description from Table 1 and one of the 2,016 candidate pairs.

Sample Candidate Pair.

Data Generation and Collection

In our conjoint experiment, both ChatGPT and ERNIE evaluated the 2,016 candidate pairs for the police constable position and an additional 2,016 for the primary school teacher job. Consequently, the simulated candidate selection task was executed 8,064 times. In these occasions, ChatGPT and ERNIE were presented with an experimental vignette containing job information and candidate attributes, followed by the prompt: “Two candidates have applied for the position. Please select one candidate from the following options and provide a reason for the choice.” All our inputs were in English, which is the language commonly used in government contexts in Hong Kong.

Building on studies that employed the application programing interface (API) for large-scale data collection from GenAI (An et al., 2024; Lippens, 2024), we developed Python scripts incorporating the aforementioned experimental vignettes and text prompts. We then submitted these requests to ChatGPT and ERNIE via their respective APIs—specifically, the OpenAI API and Baidu AI Cloud. Through these APIs, we recorded the candidate evaluations made by both models, along with their reasoning. Unlike web interfaces, APIs enable researchers to seamlessly integrate predefined functionalities into LLMs, facilitating efficient data collection. Additionally, each API request starts a new dialog, with no retained historical context, ensuring that the outputs of LLMs remain fresh and unaffected by previous inputs. Data collection spanned 2 months and was concluded in May 2025.

Data Analysis Techniques

Since the present study generated both quantitative and qualitative data, our analysis employed a convergent mixed-methods approach, which involves first analyzing the data independently and then comparing them to derive integrated findings (Creswell, 2014). This approach allowed us to leverage the strengths of both methodologies. That is, quantitative analysis provided causal estimates of attribute effects, whereas qualitative interpretation offered textual depth and insights into the evaluations made by ChatGPT and ERNIE. Through this analytical procedure, we enhanced the richness and robustness of our findings, particularly in uncovering how these LLMs process meritocratic and non-meritocratic cues in their decision-making.

To analyze the text outputs of ChatGPT and ERNIE, we employed content analysis, defined as “the systematic, objective, quantitative analysis of message characteristics” (Neuendorf, 2019, p. 215). This method allowed us not only to categorize the hiring recommendations of LLMs but also to facilitate quantitative data analysis. Initially, APIs were utilized to categorize the GenAI choices for each of the 8,064 candidate pairs. To ensure the reliability of the results, two researchers were assigned to code the data separately. Discrepancies (90 out of 8,064, or 1.1%) were found between APIs and the researchers, as well as between the two researchers, in the coding of “no-choice” responses from ChatGPT and ERNIE, meaning these tools are indecisive in recommending a candidate. These differences were discussed and verified by the entire team to ensure analytical consistency and accuracy.

To evaluate how meritocratic and non-meritocratic factors affect GenAI’s hiring recommendations, we employed Average Marginal Component Effect (AMCE) estimation, as it is widely recommended for analyzing conjoint data (Hainmueller et al., 2014). The AMCE represents the causal effect of a specific attribute (e.g., gender) by comparing the target value to a reference value (e.g., male vs. female), while holding all other attributes constant. To compute AMCEs, we applied ordinary least squares regression with clustered standard errors to account for repeated attribute measures within candidate pairs, ensuring accurate estimations. Additionally, to capture potential differences between LLMs and control for job type, we estimated AMCE models for both ChatGPT and ERNIE, as well as for police constable and primary school teacher positions. The results of these models were visualized using 95% confidence intervals, facilitating vivid assessments and comparisons of the attribute effects under investigation.

Results

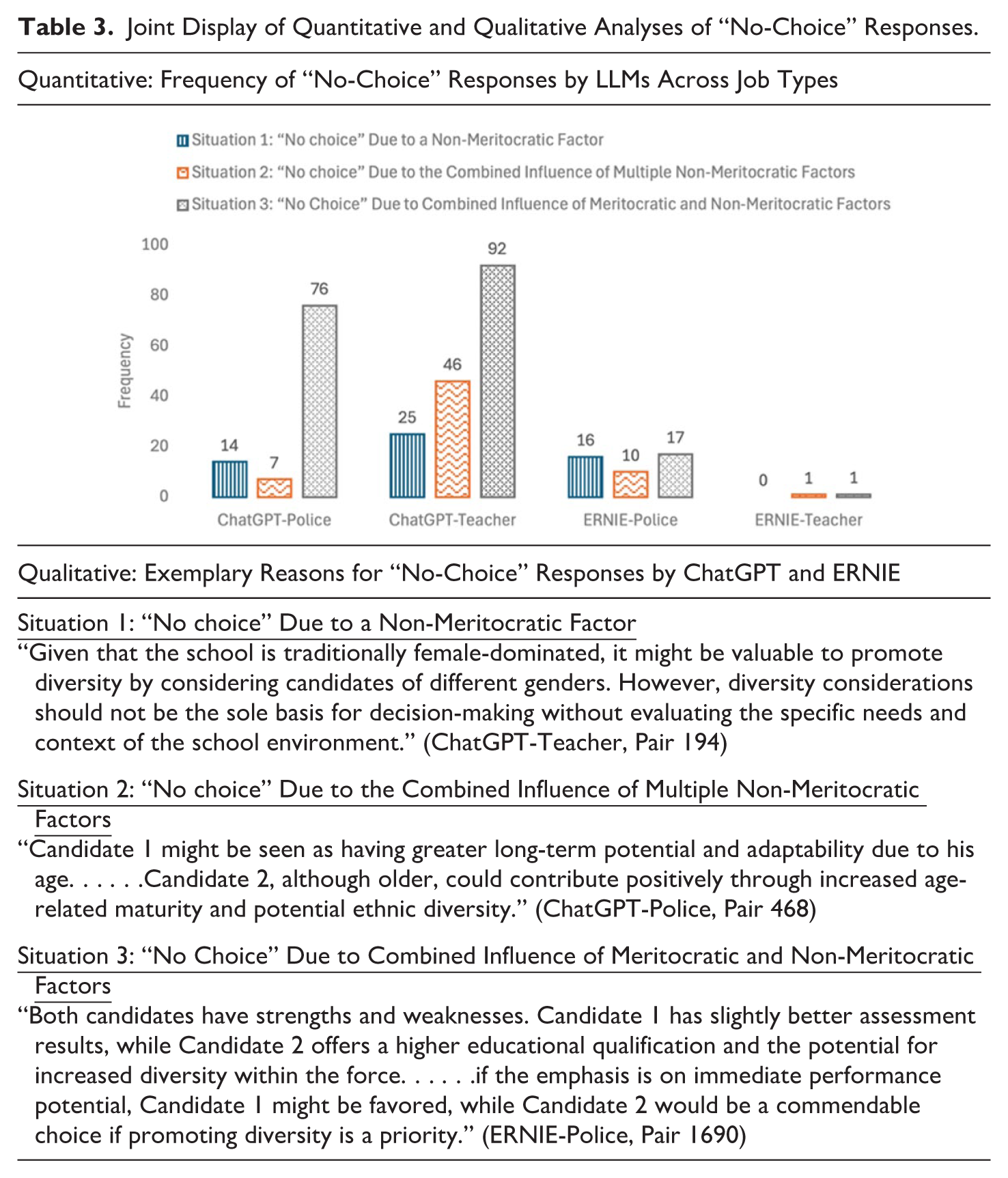

Analysis of Unexpected “No-Choice” Responses

Despite directing ChatGPT and ERNIE to select from a pair of hypothetical candidates, 3.78% of (or 305) evaluations yielded no definitive choice. Of these 305 pairs, 97 were from the ChatGPT-Police combination, 163 from ChatGPT-Teacher, 42 from ERNIE-Police, and two from ERNIE-Teacher. As a choice and a lack thereof are the two sides of the same coin, an in-depth examination of these “no-choice” responses may provide initial insights into how these LLMs make decisions regarding public employee selection. To elucidate the reasons behind these evaluations, we coded them according to the extent to which meritocratic and non-meritocratic factors were involved.

Inspired by Haynes-Brown and Fetters (2021), we present a joint display of quantitative and qualitative analytical results regarding the “no-choice” responses generated by ChatGPT and ERNIE in Table 3. Notable observations from the table include the following: First, all these responses involve at least one non-meritocratic factor. When meritocratic factors alone do not result in a “no-choice,” it becomes evident that LLMs are more adept at evaluating solely merit-related candidate qualities, seemingly aligning with Hypothesis 1. Second, ChatGPT is more likely to provide ambiguous responses involving non-meritocratic factors, such as gender, ethnicity, and age, compared to ERNIE across police and teacher jobs. Refraining from arbitrary decisions may serve to protect specific demographic groups, albeit potentially at the expense of undermining the influence of meritocratic factors, as illustrated in Table 3 (see Situation 3). Given the apparent differences between ChatGPT and ERNIE, there is preliminary support for Hypothesis 2. Next, we turn to further analyses to test proposed hypotheses.

Joint Display of Quantitative and Qualitative Analyses of “No-Choice” Responses.

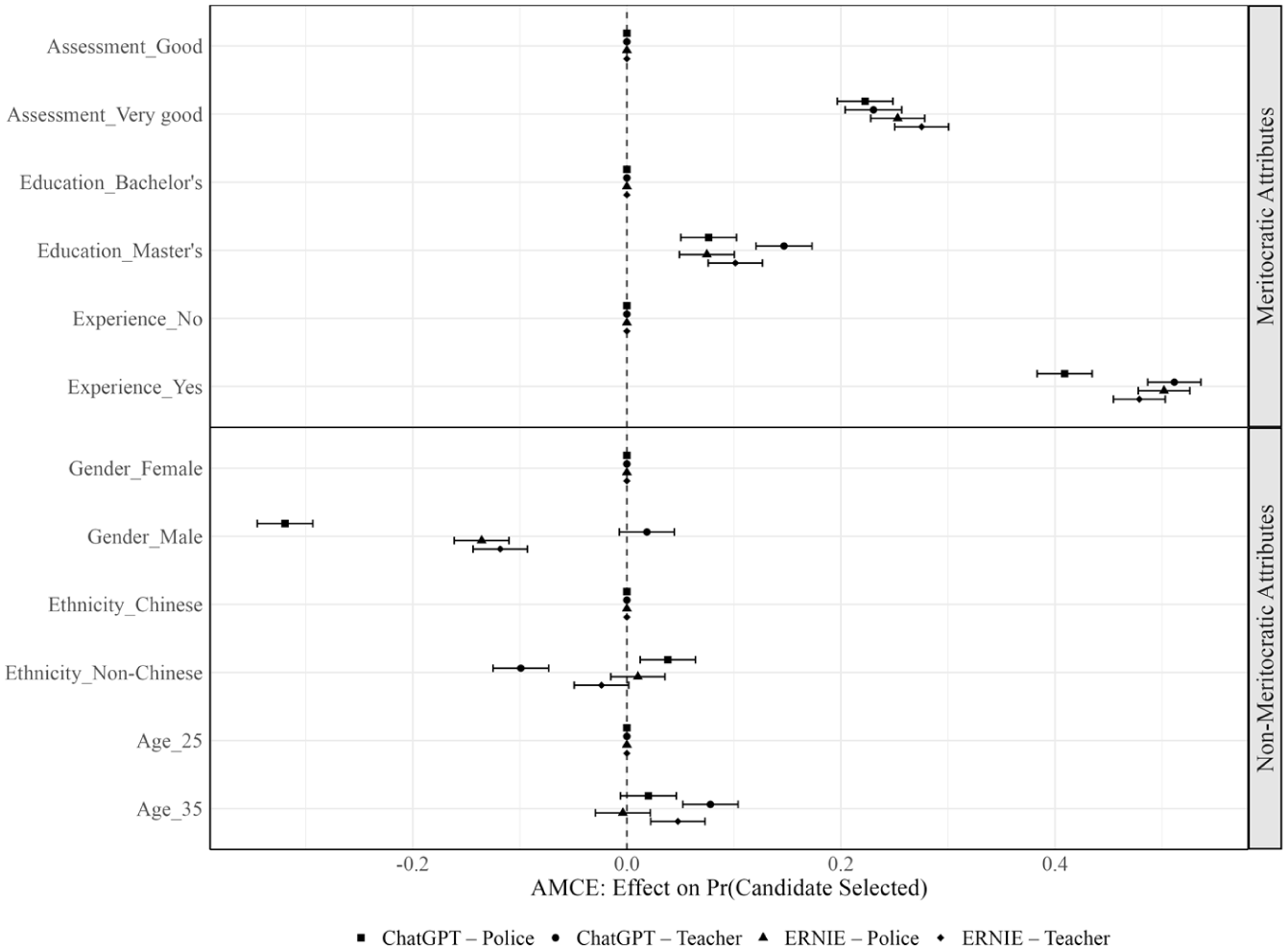

Effects of Meritocratic Versus Non-Meritocratic Attributes

The AMCE results presented in Figure 1 illustrate how meritocratic and non-meritocratic factors influenced candidate evaluations by ChatGPT and ERNIE. In this figure, as well as Table 4, the meritocratic factors under investigation are referred to briefly as assessment, education, and experience for ease of reference. As shown, in both police and primary school workplaces, ChatGPT and ERNIE consistently favored candidates with stronger meritocratic profiles, with relevant experience having the strongest effect on selection probability, followed by pre-employment assessment results and then education level.

Effect of candidate attributes on selection probability.

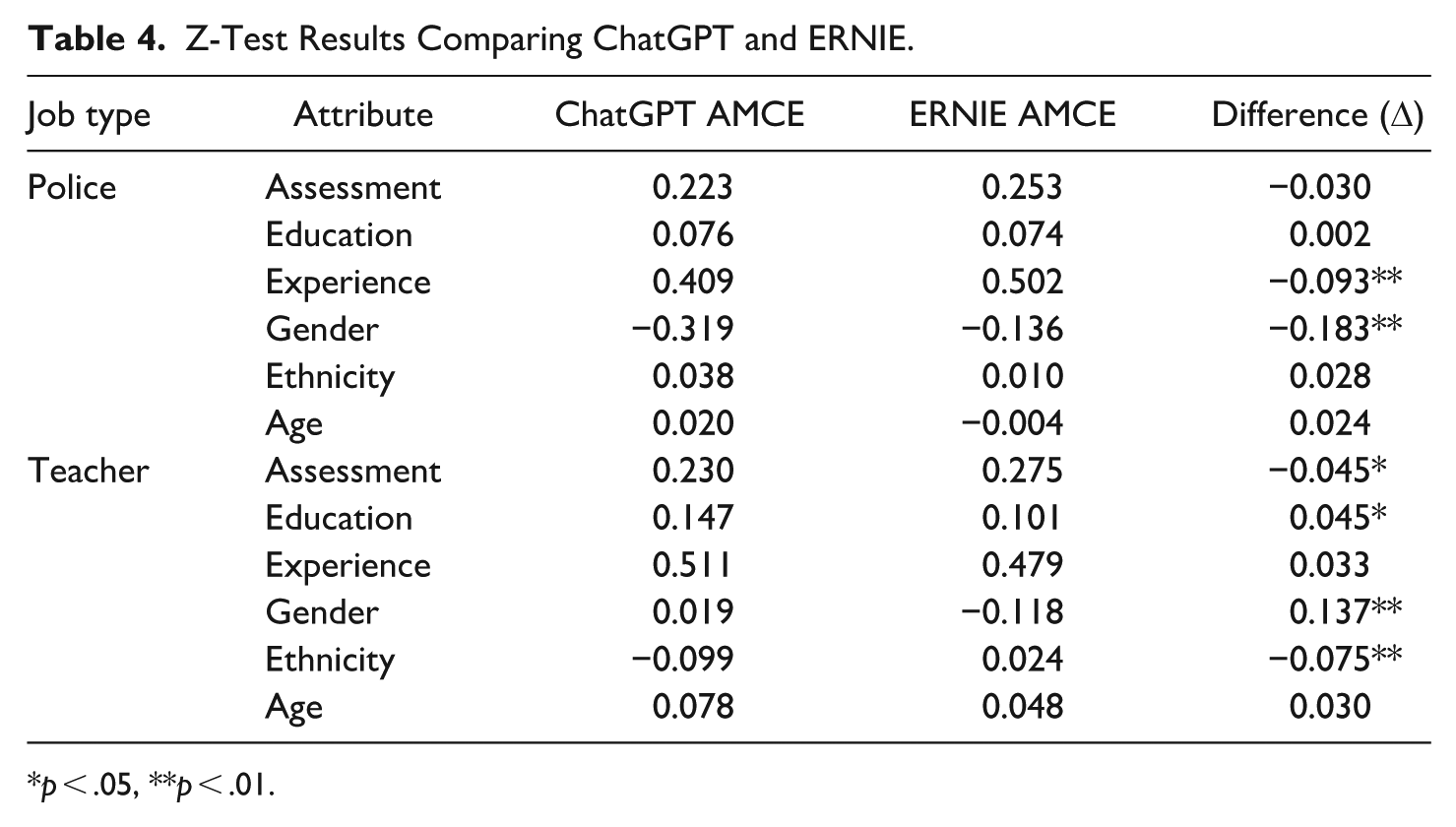

Z-Test Results Comparing ChatGPT and ERNIE.

p < .05, **p < .01.

Regarding relevant experience, both LLMs strongly favored experienced candidates. For instance, the AMCE for the ChatGPT-Police combination (β = .409) indicates that the likelihood of selection increased by 40.9% when the experience attribute shifted from “no” to “yes,” while holding other variables constant. Likewise, experienced candidates were 51.1%, 50.2%, and 47.9% more likely to be selected in the ChatGPT-Teacher, ERNIE-Police, and ERNIE-Teacher combinations, respectively. Additionally, when compared to the reference value of “good,” candidates rated as “very good” in pre-employment assessment results had significantly higher selection possibilities, with increases of 22.3%, 23%, 25.3%, and 27.5% in the four LLM-job combinations. Education level also positively influenced candidates’ selection probabilities, with those holding a master’s degree being more likely to be selected by both LLMs compared to candidates with a bachelor’s degree, as the AMCE values—0.076, 0.074, 0.147, and 0.101—were modest yet statistically significant at the α = .05 level. Overall, these findings demonstrate that GenAI adhered to the norm of merit-based hiring as hypothesized.

Conversely, non-meritocratic attributes, including gender, ethnicity, and age, yielded weaker and sometimes insignificant effects. Firstly, concerning gender, both LLMs penalized male candidates for the police constable job, which is traditionally male-dominated, although ChatGPT did so more than ERNIE (β = −.319 vs. −0.136). Interestingly, ERNIE continued to show a slight preference for female candidates even in the female-dominated teaching profession (β = −.118), whereas ChatGPT displayed no significant gender preference (β = .019, p = .154). Secondly, the impact of ethnicity was modest, with ChatGPT showing a slight preference for non-Chinese candidates in police recruitment (β = .038). In contrast, ERNIE exhibited no preference for ethnicity (β = .010, p = .425). For teaching positions, ERNIE remained indifferent (β = −.024, p = .068), while ChatGPT unexpectedly disfavored non-Chinese candidates (β = −.099). Finally, the effect of age was also marginal; both LLMs preferred candidates aged 35 over those aged 25 for the primary school teacher position (β = .078 for ChatGPT and 0.048 for ERNIE). However, for the police constable position, the AMCE values related to age (β = .020 and −0.004) were not significant at the α = .05 level.

The AMCE results, along with their underlying rationale, are further illuminated by the explanations provided by ChatGPT and ERNIE. Notably, both LLMs emphasized the practical utility of meritocratic attributes, contending that candidates who excel in these areas are better equipped to meet job requirements. This merit-oriented assessment mentality is evidenced by the following selected quotes: Given the importance of a strong pre-employment assessment, which indicates immediate readiness and aptitude for the role, I would select Candidate 1. Although Candidate 2 has higher formal education, Candidate 1′s ‘very good’ assessment result better assures their capability to perform well, especially in a practical teaching environment. (ChatGPT-Teacher, Pair 1276) Candidate 2 holds a master’s degree. . . the increased educational background may provide an edge in understanding complex situations that constables may encounter while on duty. (ERNIE-Police, Pair 533) When selecting a candidate for the police constable position, both candidates have very good pre-employment assessment results and hold bachelor’s degrees. Given that Candidate 2 has relevant experience, which can be highly beneficial in a demanding role like that of a police constable, Candidate 2 appears to be the more suitable choice. (ChatGPT-Police, Pair 309)

In relation to non-meritocratic attributes, particularly gender and ethnicity, ChatGPT and ERNIE frequently cited “diversity” as a key factor in their decision-making. For instance, ChatGPT justified its recommendation for one candidate pair by stating that “the Hong Kong Police Force. . .is traditionally male-dominated, selecting. . .the female candidate could contribute positively towards gender diversity within the force, aligning with broader goals for inclusive hiring practices” (ChatGPT-Police, Pair 1969). ERNIE also demonstrated a preference for female candidates in police constable recruitment, but this tendency extended even to the predominantly female teaching profession, not for reasons of diversity but due to considerations of person-environment fit, as illustrated by the following quote: The school is traditionally female-dominated and hiring a female teacher may align better with the existing culture and dynamics of the school environment. . .encourage a more familiar environment for the students, particularly in a primary setting where young children often relate well with female educators. (ERNIE-Teacher, Pair 428)

While ERNIE was insensitive to ethnicity, ChatGPT favored non-Chinese candidates for the police position, aligning with its advocacy for gender diversity within the Hong Kong Police Force. This is reflected in the following statement: “Candidate 2, being of non-Chinese ethnicity, might bring a different perspective and help the force better reflect and serve an increasingly diverse community” (ChatGPT-Police, Pair 141). However, in primary school teacher recruitment, ChatGPT appeared to be influenced by the vignette specifying teaching Chinese as a job duty, along with the unique context of Hong Kong. Consequently, it preferred Chinese candidates, as illustrated by the following statement: Given that the primary school is in Hong Kong and often has a curriculum that includes subjects like Chinese, a candidate with a background in Chinese culture and language might have an advantage in terms of understanding the local context and effectively communicating with students and parents. (ChatGPT-Teacher, Pair 1707)

Finally, age is considered a proxy for experience and maturity when ChatGPT and ERNIE assessed candidates for the teaching position. This is exemplified by the following quote: “[T]he age difference could be a proxy for life experience, which could be beneficial in handling responsibilities and interacting with students and their parents. . . additional life experience at age 35 could offer an advantage in these aspects” (ChatGPT-Teacher, Pair 337). However, the advantage that older candidates typically hold diminished in the police context, as noted in the statement: “Younger candidates may have advantages in roles requiring physical activity and have potentially longer careers ahead of them” (ChatGPT-Police, Pair 32).

In summary, mixed-methods evidence confirms that ChatGPT and ERNIE prioritized meritocratic attributes over non-meritocratic ones when evaluating candidates. These findings support Hypothesis 1. However, the nuances observed between the two models suggest potential differences in how they process candidate attributes. Therefore, we will explore this further in the next section.

Comparing ChatGPT and ERNIE

To assess the differences between ChatGPT and ERNIE, we conducted a Z-test on AMCEs across candidate attributes in the contexts of police and teacher recruitment. The results are presented in Table 4. Both LLMs uniformly prioritized meritocratic over non-meritocratic attributes. Although significant differences were observed, no clear pattern distinguishes the two models. For instance, ERNIE demonstrated a significantly higher AMCE for relevant experience in police recruitment (Δ = −0.093, p < .001), whereas ChatGPT placed significantly more emphasis on educational credentials in the teacher context (Δ = 0.045, p < .05).

In contrast to the lack of clear distinctions between ChatGPT and ERNIE regarding meritocratic attributes, a stark difference emerged in one non-meritocratic attribute: gender. Table 4 divulges ChatGPT’s inclination toward gender diversity, particularly in the male-dominated police profession (Δ = −0.183, p < .001). In teacher recruitment, this LLM also slightly (yet insignificantly) favored gender minorities (i.e., males), resulting in a statistically significant difference with ERNIE (Δ = 0.137, p < .001). On the other hand, ERNIE appeared ambivalent regarding promoting diversity in gender-atypical workplaces, as previously discussed. Another significant difference between the two LLMs was observed in the attribute of ethnicity for the teacher job (Δ = −0.075, p < .001), indicating ChatGPT was more likely to favor Chinese candidates than ERNIE. As mentioned previously, this is likely due to ChatGPT’s sensitivity to the requirement of teaching Chinese and the local context of Hong Kong.

Taking all the Z-test results into account, Hypothesis 2 is only partially supported. Compared to ERNIE, ChatGPT’s selection of candidates aligned more closely with the prevailing emphasis on gender diversity in contemporary public HRM practices (Guy & Sowa, 2022). However, no clearly different patterns were found in other non-meritocratic factors.

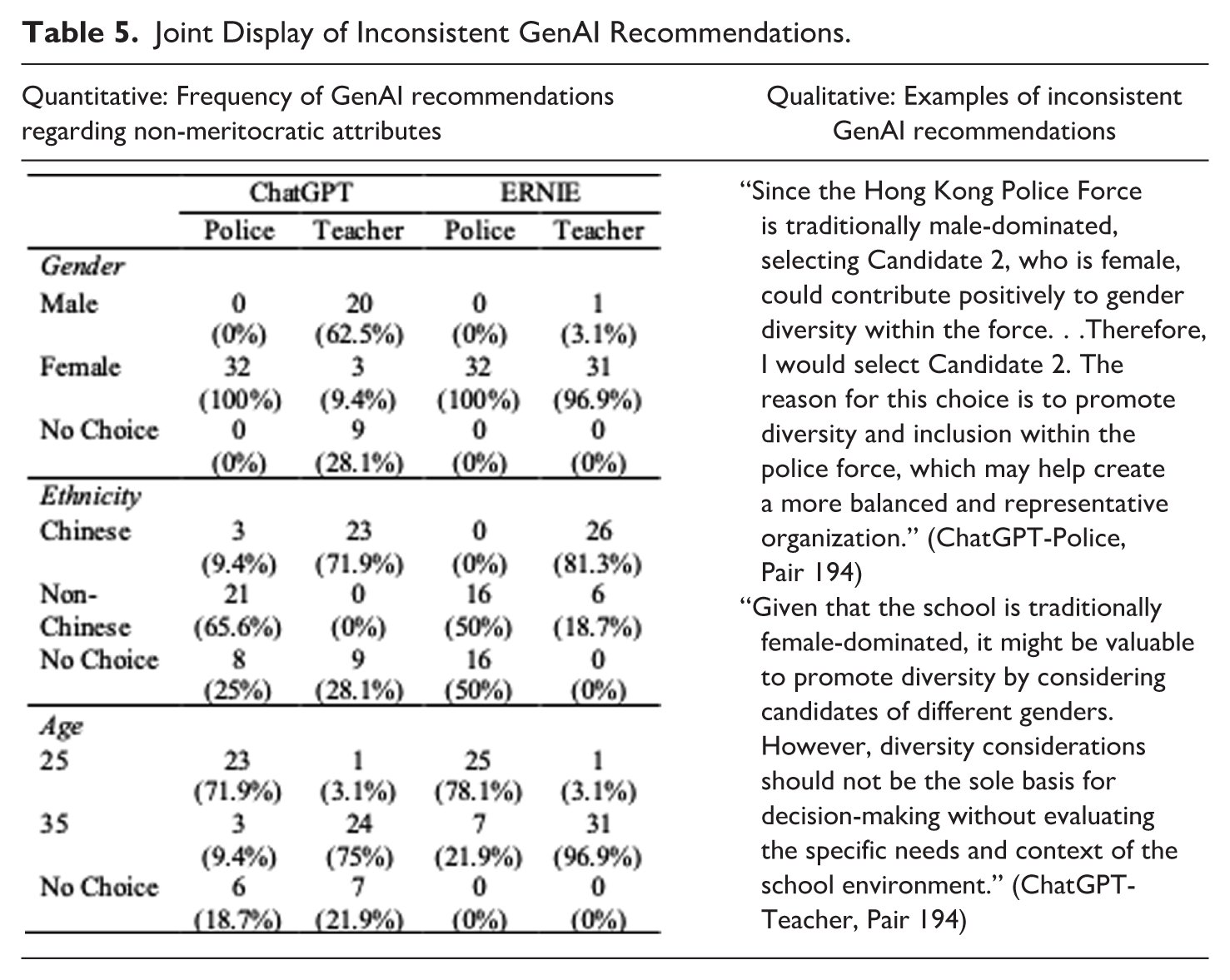

Notable Inconsistencies in GenAI Recommendations

During the data analysis process, we observed that both ChatGPT and ERNIE exhibited a steadfast preference for candidates with strong meritocratic profiles. However, discrepancies emerged when non-meritocratic factors were involved, warranting meticulous attention. To illustrate how these LLMs made different decisions in similar or identical situations, we extracted pairs of candidates that differed solely by one of the non-meritocratic attributes and analyzed the varying recommendations. The results are presented in Table 5, accompanied by qualitative evidence highlighting the inconsistencies in GenAI recommendations.

Joint Display of Inconsistent GenAI Recommendations.

As Table 5 indicates, while ChatGPT and ERNIE consistently favored female candidates for the police position, differing and even conflicting choices were made across all other scenarios. The qualitative examples provided further underscore the stark contradictions within these GenAI recommendations. As shown, when diversity was cited as a primary guiding principle in one recommendation, it was subsequently disregarded in another. These discrepancies in GenAI evaluations are particularly concerning, and we will discuss this issue further in the next section.

Discussion and Conclusion

It is our conviction that before adopting GenAI in public HRM, it is essential to identify and address potential risks. To gauge whether GenAI is a reliable tool, this study examines how it evaluates candidates’ meritocratic and non-meritocratic attributes using conjoint analysis. Our interest in how cultural differences between the East and West influence GenAI outputs prompts us to compare ChatGPT with ERNIE. By employing a mixed-methods approach to analyze the text outputs generated by these LLMs, we begin to uncover a few noteworthy findings.

First and foremost, a recurring theme from the AI-generated quantitative and qualitative data is that the hiring recommendations made by ChatGPT and ERNIE are predominantly driven by candidates’ meritocratic qualities, specifically pre-employment assessment results, educational attainment, and relevant experience. This finding may inspire optimism for AI-enabled HRM, as advocates seek to enhance existing practices by leveraging GenAI’s exceptional capabilities to produce relevant outcomes (Chowdhury et al., 2024; Garg et al., 2022; Johnson et al., 2022; Tambe et al., 2019; Votto et al., 2021). In this study, the decisions rendered by these LLMs appear to be contextually relevant, as they adhere to the commonly established practice of merit-based hiring (Jankowski et al., 2020). Notably, among the three meritocratic attributes, both ChatGPT and ERNIE place greater emphasis on relevant experience and pre-employment assessment results over education level. This indicates that these models prioritize candidates’ readiness for the positions of police constable and primary school teacher. However, if potential, that is, the likelihood for future growth and development, is also highly valued, it may be necessary for users to instruct these LLMs to balance candidate readiness with potential. This could involve assigning greater emphasis to educational level or incorporating other attributes not explored in this study, such as adaptability and leadership potential, which generally correlate with career advancement (Ni et al., 2024; S. Wang et al., 2021).

Another noteworthy finding is that the hiring recommendations of ChatGPT and ERNIE are somewhat influenced by non-meritocratic factors, particularly gender, suggesting a delicate balance between upholding a merit-oriented approach and promoting workplace diversity. This aligns with recent studies indicating that GenAI is increasingly attuned to issues of gender and racial equity (Gaebler et al., 2025; Saumure et al., 2025). Indeed, although concerns about GenAI bias remain valid, there is a genuine possibility for these systems to become fairer and more equitable as algorithmic parameters continue to evolve.

However, it is important to note that the embrace of diversity may not always be deemed desirable by ChatGPT and ERNIE. For instance, while ChatGPT promotes gender diversity in both police and primary school settings, ERNIE favors female candidates based on their fit with the culture and dynamics of a female-dominated school environment. Similarly, both ChatGPT and ERNIE assume that Chinese candidates are more proficient in teaching local subjects, such as Chinese. Given these context-specific hiring preferences, it is imperative to carefully examine the contextual relevance of AI-generated recommendations when non-meritocratic factors are involved. Extra caution is strongly advised, as such recommendations carry significant implications for individuals, organizations, and society as a whole (Jakobsen et al., 2023).

When evaluating non-meritocratic attributes, extra caution is strongly advised for another reason—the considerable inconsistencies observed in the recommendations of ChatGPT and ERNIE. These inconsistencies are manifested not only in these models’ struggle with the trade-off between meritocratic orientation and workforce diversity but also in their erratic choices between gender, ethnicity, and age categories. This raises serious concerns from an HRM perspective. To address this issue, it may be helpful to provide clearer and more detailed instructions to GenAI, including specifying preferences for particular demographic groups. However, if these parameters conflict with those inherent in GenAI, inconsistencies are likely to persist. Alternatively, hiring agencies could remove non-meritocratic factors from AI-enabled employee selection processes. Yet, GenAI may still detect characteristics that imply such factors and incorporate them into its analysis. Clearly, there is no easy solution available. Therefore, it is advisable to continuously monitor and scrutinize GenAI’s hiring recommendations when non-meritocratic factors are involved.

Regarding the differences between ChatGPT and ERNIE, we hypothesized that ChatGPT, developed within a Western context that emphasizes inclusion and fairness, would be more susceptible to non-meritocratic candidate traits than ERNIE. Despite some evidence supporting our hypothesis, these LLMs are, in fact, more similar than different, as illustrated by our AMCE results. This similarity may be attributable to the fact that, during the machine learning process, both models likely encountered comparable training data. For example, to optimize operational capabilities, AI developers often leverage diverse datasets, which may include sources from the West, to train LLMs (Bhupathi, 2025). Consequently, the presumed divide between ChatGPT and ERNIE, due to cultural differences, is diminished by their identical developmental approaches.

What lessons can be drawn from the aforementioned findings, which encompass both encouraging and concerning aspects? Supporters argue that the versatility of GenAI presents substantial potential for its adoption in public HRM, and some of our findings appear to support this stance. However, GenAI, the very tools intended to enhance our work, may also introduce new challenges due to inconsistencies in its outputs. As a result, those who believe that AI-enabled HRM is a reality may be overly optimistic and overlook potential biases. Conversely, those who view AI-enabled HRM as a complete fantasy may be excessively pessimistic about the transformative potential of GenAI. To balance these views, we recommend a cautiously optimistic approach for practitioners in public HRM.

In the context of public employee selection, given that GenAI can consistently evaluate candidates’ meritocratic attributes—though with varying priorities—but not non-meritocratic ones, hiring agencies can train their staff to uncover GenAI’s hidden algorithmic logic and make necessary adjustments. For instance, staff can be instructed to develop a detailed candidate evaluation rubric to guide GenAI’s decision-making. A study has shown that with such a rubric, GenAI can be more reliable than human agents (Markow et al., 2024). However, as previously mentioned, tight and continuous oversight over GenAI is strongly advised. When preexisting decision-making logic conflicts with merit principles, the recommendations made by GenAI must be adjusted to ensure fairness and integrity in public employment.

This study also has significant implications for AI developers, as it offers user feedback that can improve training data and algorithm design. Given that this study identifies inconsistent GenAI outputs as a major risk in automated HRM decision-making, greater efforts and resources should be dedicated to addressing this critical issue. Until AI developers effectively resolve these challenges, public HRM practitioners should continue to exercise caution when relying on GenAI for candidate evaluation.

In conclusion, this study presents the first attempt to compare GenAI models in evaluating candidate attributes for public employment. As AI rapidly transforms human lives, it is crucial to understand and prepare for its impact. Accordingly, this study proactively evaluates the applicability of GenAI in public HRM before its widespread adoption. Our findings lead us to recommend a cautiously optimistic stance regarding the use of GenAI in the selection of public employees. However, HRM encompasses functions beyond employee selection. To comprehensively gauge whether GenAI can enhance public HRM decisions, future studies are encouraged to explore other functions. Researchers may refer to the discussion by Andrieux et al. (2024) on GenAI’s affordances in the context of HRM and related ethical concerns, as this can inform the formulation of their research questions and designs.

Moreover, our investigation is limited to ChatGPT and ERNIE, despite the many LLMs available on the market. Whether other models can yield similar results remains an area requiring further exploration. Additionally, it is essential to note that our research offers a snapshot of the current state of AI technology. Given the rapidly evolving nature of AI, the findings of this study may change over time. Thus, continuous attention and ongoing evaluation by both researchers and practitioners will be crucial as GenAI continues to transform our work practices.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.