Abstract

The increasing use of digital assessment tools in education necessitates rigorous psychometric evaluation to support their equivalence across administration formats. This study investigated the structural validity and measurement invariance of the Mental Health Continuum-Short Form (MHC-SF), a widely used measure of student well-being, across paper-and-pencil and web-based formats. Participants included 1,297 secondary school students (59.75% female; Mage = 14.57, SD = 1.23) from public schools in the Philippines, who answered the MHC-SF administered in English. Using confirmatory factor analysis, we examined competing measurement models and found superior fit for the theoretically derived three-factor structure (emotional, social, and psychological well-being) compared to alternative models. Multigroup invariance testing demonstrated measurement invariance between paper-and-pencil (n = 663) and web-based (n = 634) administration modes, with invariant factor structure (configural), factor loadings (metric), intercepts (scalar), and residual variances (strict). The findings corroborate the internal validity of the MHC-SF and demonstrate its utility for assessing adolescent students’ well-being across different administration formats. This study provides empirical support for the viability of the MHC-SF as a web-based tool for assessing well-being, which is crucial for timely intervention. Critically, the equivalence of the MHC-SF across administration methods has important implications for the growing integration of technology in education, especially in resource-constrained contexts.

Keywords

The increasing integration of technology in educational settings has opened up new possibilities for assessing student well-being and learning outcomes. Online administration of psychological instruments offers potential advantages in terms of efficiency, accessibility, and data management compared to traditional paper-and-pencil methods (Davies et al., 2017; Howell et al., 2010; Lazarova et al., 2021; Van de Looij-Jansen & De Wilde, 2008). However, as assessments transition to digital formats, it is crucial to rigorously examine how different modes of administration may impact the psychometric properties of instruments and measures, as well as the interpretation of scores (Cai et al., 2008; Fouladi et al., 2002; Kim et al., 2022; Meng et al., 2023; Vecchione et al., 2012).

Establishing measurement invariance is essential to ensure that an instrument measures the same constructs in the same way across different groups or conditions, such as paper-and-pencil vs. online administration (Chen, 2007; Maassen et al., 2023; Vecchione et al., 2012). Without evidence of invariance, observed differences between groups or formats could be due to measurement bias rather than true differences in the underlying constructs of interest (Maassen et al., 2023; Millsap, 2011; Vecchione et al., 2012). As educational institutions increasingly rely on technology-based tools to support both student learning and well-being, demonstrating the psychometric equivalence of measures across traditional and virtual contexts is a fundamental imperative (Davies et al., 2017; Kim et al., 2022; Lazarova et al., 2021; Wasil et al., 2020). This study examines the factorial validity and measurement invariance of the Mental Health Continuum-Short Form (MHC-SF; Keyes, 2002), as a measure of positive well-being, in paper-and-pencil and web-based formats among secondary school students in the Philippines.

The Mental Health Continuum-Short Form (MHC-SF)

The Mental Health Continuum-Short Form (MHC-SF; Keyes, 2002) is a widely used instrument that assesses emotional, social, and psychological dimensions of well-being. It has demonstrated robust psychometric properties across diverse cultural contexts. In China, Guo et al. (2015) validated the scale among 5,399 adolescents (ages 12–18), finding excellent fit for the three-factor model (CFI = .936, RMSEA = .086, SRMR = 0.057) with evidence for internal consistency (α = .89–.94). Their findings supported using MHC-SF scores for identifying flourishing and languishing youth in school-based screening programs. Lupano Perugini et al. (2017) established measurement invariance across gender and age groups in Argentina (N = 1,300 adults), demonstrating that MHC-SF scores can validly support cross-group comparisons for research and intervention evaluation. Karaś et al. (2014) validated the Polish adaptation among 2,115 individuals, establishing scalar invariance across gender and educational groups. Żemojtel-Piotrowska et al.’s (2018) 38-nation study (N = 8,066) provided the most comprehensive cross-cultural evidence, demonstrating configural and metric invariance across all countries, indicating that the three-factor structure and factor loadings remain consistent globally. These validation studies collectively support the MHC-SF for: (1) screening and identifying students requiring mental health support, (2) evaluating intervention effectiveness through pre-post comparisons, (3) conducting cross-cultural and developmental research, and (4) monitoring population-level well-being trends.

The MHC-SF is grounded in the dual-continua model of mental health (Keyes, 2005), which posits that positive mental health is not merely the absence of psychopathology but a distinct dimension encompassing emotional, psychological, and social well-being. This model has substantial psychoeducational relevance. The MHC-SF has been shown to distinguish between flourishing and languishing students (Keyes, 2007) and can determine profiles of students who may be at risk for academic difficulties based on their scores in each dimension (Suldo & Shaffer, 2008). MHC-SF profiles have also been shown to predict student engagement and GPA (Antaramian et al., 2010), which has significant implications for educators in addressing the needs of students across all levels of well-being (Chan et al., 2022). This multidimensional perspective on well-being has gained considerable empirical support and has been influential in guiding research and intervention efforts aimed at promoting positive mental health (Keyes, 2007; Lamers et al., 2015; Petrillo et al., 2015). These findings suggest that capturing emotional, social, and psychological dimensions provides valuable information for educational intervention and support beyond what mental health symptom screening alone can offer.

Two important observations arise from the existing literature. First, the MHC-SF has been implemented in both formats, and research supports its reliability, validity, theoretical foundations, and practical applications (e.g., Bernardo et al., 2022; Mendoza et al., 2023; Mendoza & Yan, 2023); however, no published studies have directly examined its measurement invariance across paper-and-pencil and online administration formats (Lamborn et al., 2018; Luijten et al., 2019; Lupano Perugini et al., 2017). Given the increasing reliance on online administration methods, particularly during the COVID-19 pandemic (e.g., Kim et al., 2022; Meng et al., 2023), establishing the equivalence of the MHC-SF across these modes is critical to ensure the validity of research findings and the appropriateness of intervention decisions based on the instrument (see Cockerham et al., 2021).

Second, while the MHC-SF has been validated in various cultural contexts, there is a paucity of research examining its psychometric properties in lower middle-income countries such as the Philippines. The Philippines, with its large population and growing mental health concerns (Lally et al., 2019; Perlas et al., 2022), represents an important context for investigating the applicability and invariance of the MHC-SF. Establishing its measurement equivalence across administration modalities in this setting would not only contribute to the MHC-SF’s cross-cultural validation but also provide valuable insights into the assessment of well-being in resource-limited contexts.

Paper-and-Pencil versus Online Administration and the MHC-SF

Previous investigations of well-being and mental health instruments such as the Satisfaction With Life Scale, Positive and Negative Affect Schedule, Subjective Happiness Scale, and Strengths and Difficulties Questionnaire have shown that the online administration mode can yield similar results to paper-based administration (Van de Looij-Jansen & De Wilde, 2008; Howell et al., 2010). However, other studies have noted potential differences between these two modes of administration. For example, Fouladi et al. (2002) and McCoy et al. (2004) found that online surveys can introduce biases and may affect stability of the participants’ responses, although the magnitude of these effects was generally small. Relatedly, Crawley et al. (2000) noted that online administration of quality-of-life instruments was preferred by both patients and research coordinators as it was found to be more comfortable, efficient, and easier to use.

To date, no published study has directly examined these modes of administration for the MHC-SF, despite its validation across several countries (Żemojtel-Piotrowska et al., 2018) and groups (e.g., Lamborn et al., 2018; Luijten et al., 2019; Lupano Perugini et al., 2017). Given the MHC-SF’s multidimensional structure and its grounding in the dual-continua model of mental health (Keyes, 2005), it is essential to establish the invariance of the scale’s factor structure and psychometric properties across paper-and-pencil and online administration methods. This would provide evidence for the robustness of the underlying theoretical framework and support the valid use of the MHC-SF in various research and educational settings, regardless of the assessment medium.

The Present Study

Building upon the existing literature and addressing the identified research gaps, the present study aims to evaluate the psychometric properties and measurement invariance of the English version of the MHC-SF across paper-and-pencil and online administration methods. We hypothesize that the three-factor structure of emotional, social, and psychological well-being will demonstrate a good fit to the data and show invariance across the two administration modes. Testing this hypothesis will provide evidence for the valid use of the MHC-SF in online formats, with implications for educational research and practice in diverse contexts, particularly in lower middle-income countries like the Philippines.

This study has three main objectives. First, by focusing on a sample of secondary school students, we aim to shed light on the MHC-SF’s utility in assessing well-being among adolescents, a population facing unique mental health challenges (Rapee et al., 2019; Salmela-Aro & Tuominen-Soini, 2010). Establishing measurement invariance across administration methods in this age group would support the MHC-SF’s use in school-based well-being screening and intervention programs that increasingly incorporate online platforms (Dowdy et al., 2015; Solmi et al., 2022). Second, by conducting the study in the Philippines, we seek to expand the cross-cultural applicability of the MHC-SF and its utility in resource-limited settings. The findings may inform well-being assessment and intervention efforts in contexts with limited mental health infrastructure and resources (Lally et al., 2019; Perlas et al., 2022). Finally, we aim to provide evidence for the robustness of the dual-continua model of mental health (Keyes, 2005) by examining the invariance of the MHC-SF’s factor structure and psychometric properties across administration methods. This addresses the need for research on the MHC-SF’s factorial validity across traditional and web-based modes of administration, particularly in the context of a lower middle-income country facing the challenges posed by the COVID-19 pandemic. By addressing these gaps, this study contributes to the understanding of the MHC-SF, its use in diverse contexts and modalities, and its implications for educational research and practice.

Methods

Participants and Procedures

Selection and Recruitment

Data collection occurred during two time periods, with paper-and-pencil administration in January 2020 and online administration in May 2020. In the Philippines, no educational disruptions due to COVID-19 cases had been enforced in January 2020. Nationwide closures began in mid-March, and by May 2020, schools had already transitioned to remote learning. Rather than viewing this as a limitation, we consider it an ecologically valid test of measurement equivalence under naturally occurring conditions. This temporal difference provides an opportunity to examine measurement equivalence under substantially different contexts, testing whether the MHC-SF maintains psychometric integrity across both format and contextual changes.

Schools were selected through stratified random sampling from the Department of Education’s (DepEd) regional database of public secondary schools, stratified by size and urban/rural location. Classrooms within each school were randomly selected for participation. All participating public secondary schools served predominantly lower-income communities. Individual student socioeconomic status was documented through receipt of government assistance: 68.4% of students across both samples (n = 887 of 1,297) were recipients of the Pantawid Pamilyang Pilipino Program (4Ps), the Philippines’ national conditional cash-transfer program targeting households classified as poor or near-poor based on program eligibility criteria tied to the national poverty line (approximately USD215 monthly household income at the time of data collection). Specifically, 67.9% of the paper-and-pencil sample (n = 450 of 663) and 68.9% of the online sample (n = 437 of 634) received government assistance.

Inclusion criteria for participants were: (1) enrollment in Grades 7–10 and (2) sufficient English comprehension as verified by teachers. For participants under 18 years old, written parental consent was obtained via consent forms sent home with students 1 week prior to data collection. Students provided written assent on the day of assessment. For 18-year-old participants, direct informed consent was obtained. No students were excluded based on academic performance, socioeconomic status, or other characteristics.

For paper-and-pencil administration, 796 of 1,200 invited students from one large urban public secondary school provided consent (66.3% response rate). For online administration, 675 of 850 students invited across five public secondary schools within the same district consented to participate (79.4% response rate). The higher online response rate likely reflects different recruitment contexts (single school vs. multiple schools) rather than format preference. Analysis of available administrative data indicated no significant differences between participants and non-participants in age (available for all invited students) or grade level distribution (χ2 = 2.34, p = .504).

Administration Procedures

The MHC-SF was administered in English. All participants were enrolled in English-medium instruction schools where all subjects are taught in English. Participants’ English proficiency was evidenced by successful completion of at least 6 years of English-medium instruction, English language grades of “satisfactory” or above in the previous academic year, and teacher verification of adequate comprehension skills for survey completion.

Paper-and-pencil administration occurred in classroom settings during regular school hours with 25–35 students per session (see Mendoza & Yan, 2021). Trained research assistants provided standardized verbal instructions following a scripted protocol. They responded to student questions but did not assist with item interpretation. No time limits were imposed; completion typically required 12–18 minutes. Online administration was conducted using the Qualtrics platform. Students answered the survey in school computer laboratories during designated class periods. Technical support staff were available for platform-related issues (e.g., login problems) but did not assist with survey content. Completion time ranged from 10 to 15 minutes.

Both formats included: (1) welcome screen/page with study overview, (2) informed consent/assent process, (3) demographic questions (age, gender, grade level), (4) MHC-SF items in fixed order, and (5) closing acknowledgment. Item wording, response scales, and sequencing were identical across formats, although the digital format enabled forced responses to minimize missing data. All consent materials described the study purpose, procedures, voluntary participation, confidentiality protections, and available mental health referral resources. The study was approved by the Human Research Ethics Committee of the first author’s affiliated university (Ref. no.: 2019-2020-0152).

Participants

The paper-and-pencil dataset initially contained 796 responses. Missing data occurred primarily due to incomplete surveys (e.g., skipped items). Analysis revealed that 94 respondents (11.8%) had missing data exceeding 5% of MHC-SF items and were excluded from analysis to maintain data quality. The remaining 702 cases had missing data on 1–4 items (representing 0.5%–3.6% of responses per case). We tested whether data were missing completely at random (MCAR) using Little’s MCAR test (χ2 = 87.34, df = 94, p = .665); the nonsignificant result is consistent with MCAR, indicating no evidence that missingness was systematically related to observed variables. This supports the use of multiple imputation procedures.

Multiple imputation with chained equations (MICE; van Buuren & Groothuis-Oudshoorn, 2011) was performed using the mice package in R (version 3.14.0), generating 20 imputed datasets. The imputation model included all 14 MHC-SF items, with demographic variables (age, gender, grade level) as auxiliary variables. Predictive mean matching was used for continuous variables. Results were pooled across imputations following Rubin’s rules. After outlier removal using the Mahalanobis distance rule (see Bedrick et al., 2000), the final paper-and-pencil sample consisted of 663 cases.

The online dataset (n = 675) contained no missing data due to forced-response format in the Qualtrics platform, which required responses to all items before proceeding. After outlier removal (n = 41), the final online sample consisted of 634 cases.

The final analytical dataset consisted of 663 cases from the paper-and-pencil administration and 634 cases from the online administration (N = 1,297; 59.75% female). The mean age of the respondents was 14.57 years (SD = 1.23). Students in Grade 10 comprised the largest percentage of participants (n = 309; 23.59%), followed by students from Grade 9 (n = 275; 20.99%).
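The sample flow described above can be reconciled with a few lines of arithmetic. The following is a minimal Python sketch, not part of the original analysis; every count is taken from the text except the number of paper-and-pencil outliers, which the text does not state and which is derived here as the difference between the 702 retained cases and the final 663.

```python
# Counts reported in the manuscript for each administration mode.
paper_consented = 796
paper_excluded_missing = 94   # cases with missing data exceeding 5% of items
paper_final = 663

online_consented = 675
online_outliers = 41
online_final = 634

# Paper-and-pencil: exclusions for missing data, then outlier removal.
paper_after_missing = paper_consented - paper_excluded_missing
# Implied value: the text reports only the final n, not the outlier count.
paper_outliers_implied = paper_after_missing - paper_final

# Online: forced responses meant no missing data, so only outliers were removed.
online_after_outliers = online_consented - online_outliers

total_n = paper_final + online_final
pct_excluded_missing = round(100 * paper_excluded_missing / paper_consented, 1)

print(paper_after_missing, paper_outliers_implied, online_after_outliers, total_n)
```

Under these figures, the reported subsample sizes and the total N are internally consistent.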

Instruments

The Mental Health Continuum-Short Form (MHC-SF; Keyes, 2002; e.g., Mendoza & Yan, 2023) is a 14-item measure of general well-being, with items pertaining to emotional (3 items; e.g., “satisfied with life”), social (5 items; e.g., “that you had something important to contribute to society”), and psychological well-being (6 items; e.g., “that your life has a sense of direction or meaning to it”). Respondents rated the items from 1 (never) to 6 (every day). In this study, Cronbach’s alpha values for the emotional, social, and psychological well-being subscales were α = 0.82, α = 0.87, and α = 0.89, respectively. Cronbach’s alpha for the full MHC-SF was α = 0.94.
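For readers less familiar with the reliability coefficient reported above, the following minimal Python sketch shows the standard Cronbach's alpha formula, α = k/(k − 1) × (1 − Σ item variances / variance of total scores). It is an illustration only: the function name and the toy responses are hypothetical, and the sketch is not the software used to obtain the coefficients reported in this study.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for complete data.

    rows: one list of item scores per respondent, all of equal length.
    """
    k = len(rows[0])
    if k < 2 or len(rows) < 2:
        raise ValueError("need at least two items and two respondents")
    # Transpose to obtain one column of scores per item.
    item_columns = list(zip(*rows))
    sum_item_variances = sum(variance(col) for col in item_columns)
    total_score_variance = variance([sum(r) for r in rows])
    return (k / (k - 1)) * (1 - sum_item_variances / total_score_variance)


# Hypothetical responses of five students to a three-item subscale (1-6 scale).
toy_responses = [
    [1, 2, 2],
    [3, 3, 4],
    [4, 5, 5],
    [5, 6, 6],
    [2, 2, 3],
]
alpha = cronbach_alpha(toy_responses)
print(round(alpha, 3))
```

Sample (n − 1) variances are used throughout; because the same denominator appears in the numerator and denominator of the ratio, using population variances would give the same alpha.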

Data Analysis

To determine the best-fitting factor structure of the MHC-SF, we conducted confirmatory factor analysis (CFA) using the lavaan package (Rosseel, 2012). We tested three factor structures: (1) a unidimensional model with all 14 MHC-SF items loading on one general latent factor, (2) a two-factor model with emotional (3 items) and eudaemonic well-being (11 items) as latent variables, and (3) a three-factor model with emotional (3 items), social (5 items), and psychological (6 items) well-being as latent variables. We employed the weighted least squares mean- and variance-adjusted (WLSMV) estimator for all CFA models (e.g., Valdez & Mendoza, 2024). This estimator has demonstrated advantages over both weighted least squares (WLS) and maximum likelihood (ML) methods (Flora & Curran, 2004). We assessed CFA models using the Satorra-Bentler scaled chi-square test statistic (SBχ2) and compared models using chi-square tests. To determine goodness-of-fit, we used several fit indices, including the Comparative Fit Index (CFI), Tucker-Lewis Index (TLI), Root Mean Square Error of Approximation (RMSEA), and Standardized Root Mean Square Residual (SRMR). Following conventional guidelines, models with CFI and TLI values exceeding 0.90 and RMSEA and SRMR values below 0.08 were considered to have good fit to the data (Hu & Bentler, 1999).

Subsequently, to investigate the measurement invariance of the best-fitting model, we conducted multigroup CFA using the equaltestMI package in R (Jiang & Mai, 2020). The equivalence testing required dividing the sample into two groups based on the administration procedures: paper-and-pencil and web-based administration. We first established the initial multigroup CFA model for each group, allowing all factor loadings and residual variances (uniquenesses) to be freely estimated across groups (e.g., Mendoza et al., 2024; Mendoza & Yan, 2025). We then performed configural, metric, scalar, and strict invariance tests by progressively constraining the factor structure, factor loadings, intercepts, and residual variances, respectively. To determine measurement invariance, we followed Chen’s (2007) recommendations for samples greater than 300: a change in CFI (ΔCFI) of .01 or less, supplemented by a change in RMSEA (ΔRMSEA) of .015 or less or a change in SRMR (ΔSRMR) of .03 or less, indicates invariance. All analyses were conducted in R (R Core Team, 2016).
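The stepwise decision rule can be expressed compactly. The minimal Python sketch below compares each model with the preceding, less constrained one; it is an illustration, not the equaltestMI procedure itself. The cutoffs are function arguments whose defaults are Chen's (2007) values of .01 for ΔCFI, .015 for ΔRMSEA, and .03 for ΔSRMR, and the fit values in the example are hypothetical placeholders rather than results from this study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fit:
    name: str
    cfi: float
    rmsea: float
    srmr: float


def invariance_steps(fits, d_cfi=0.01, d_rmsea=0.015, d_srmr=0.03, eps=1e-9):
    """Return (model name, invariance holds?) for each successive comparison.

    fits must be ordered from least to most constrained
    (configural, metric, scalar, strict).
    """
    results = []
    for prev, curr in zip(fits, fits[1:]):
        cfi_drop = prev.cfi - curr.cfi        # worsening = lower CFI
        rmsea_rise = curr.rmsea - prev.rmsea  # worsening = higher RMSEA
        srmr_rise = curr.srmr - prev.srmr     # worsening = higher SRMR
        # eps guards against floating-point noise at the exact cutoff.
        holds = cfi_drop <= d_cfi + eps and (
            rmsea_rise <= d_rmsea + eps or srmr_rise <= d_srmr + eps
        )
        results.append((curr.name, holds))
    return results


# Hypothetical fit indices, chosen only to show one failing step.
example = [
    Fit("configural", 0.962, 0.058, 0.041),
    Fit("metric", 0.960, 0.057, 0.044),
    Fit("scalar", 0.955, 0.059, 0.046),
    Fit("strict", 0.940, 0.080, 0.080),
]
decisions = invariance_steps(example)
print(decisions)
```

In this hypothetical sequence the metric and scalar steps pass, whereas the strict step fails because CFI drops by .015.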

Results

Comparing the Factor Structures of the MHC-SF

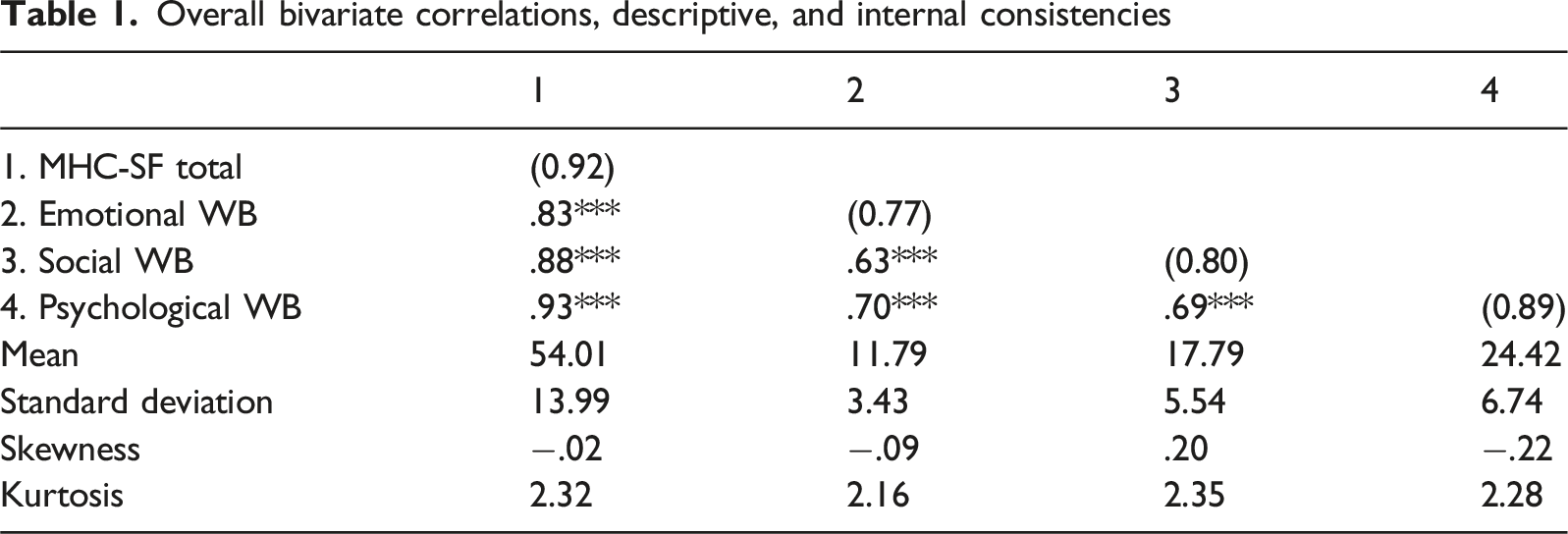

Overall bivariate correlations, descriptive statistics, and internal consistencies

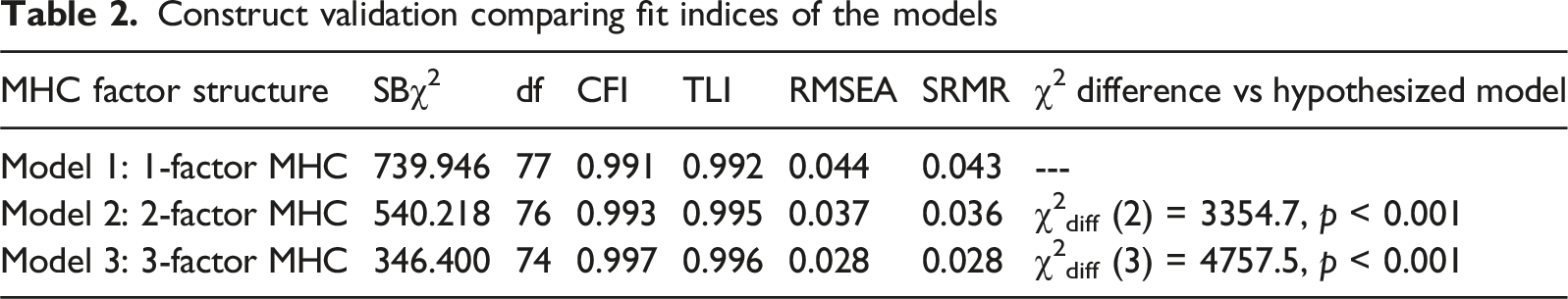

Construct validation comparing fit indices of the models

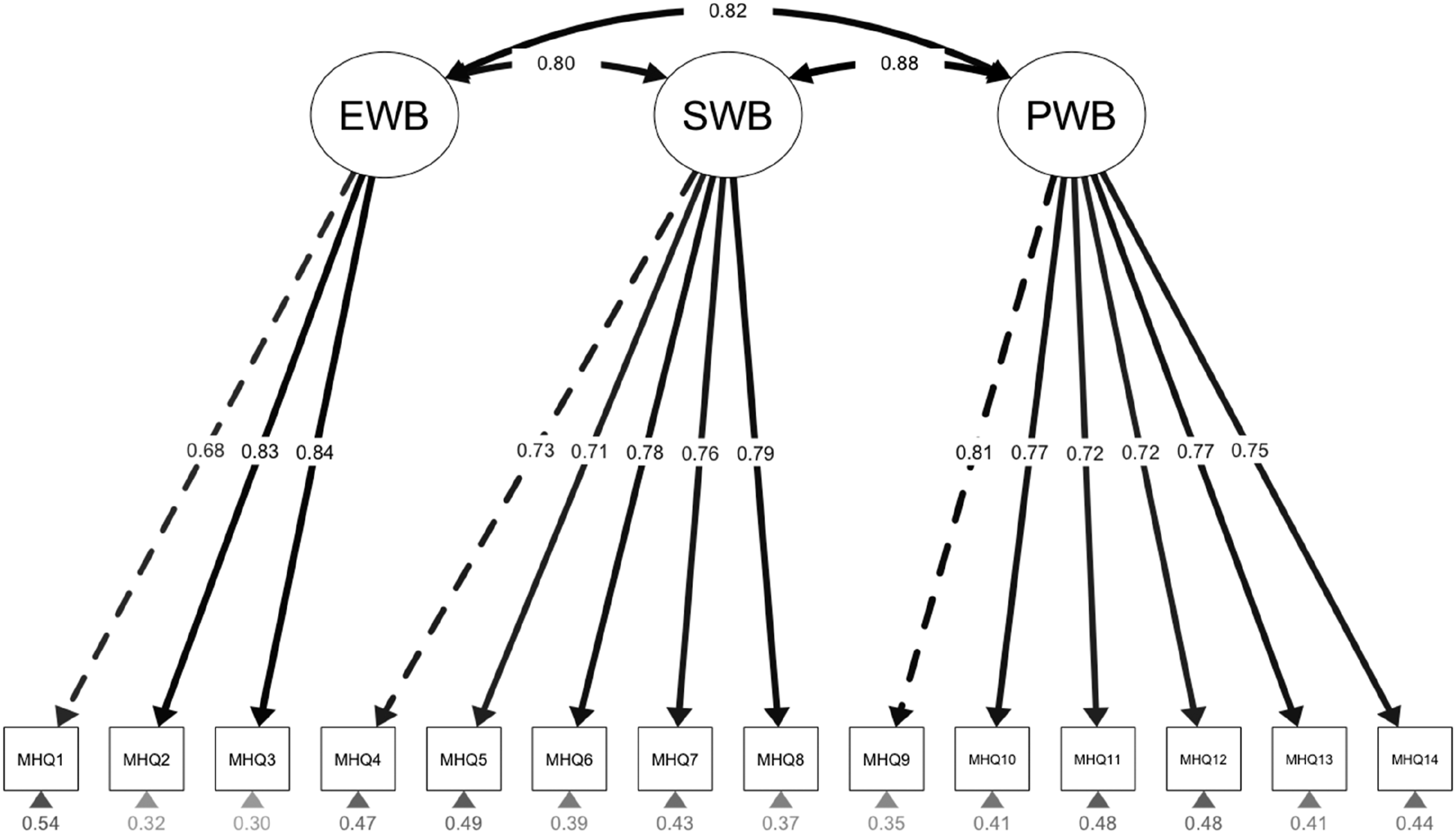

The three-factor structure of the MHC-SF

Administration Invariance of the MHC-SF Through Multigroup CFA

We used the best-fitting model of the MHC-SF (i.e., three-factor model) to test the measurement invariance between two scale administration procedures: paper-and-pencil and online. Preliminary analyses confirmed no significant differences between administration groups on demographic or socioeconomic characteristics: age (t = −1.43, p = .154), gender distribution (χ2 = 0.13, p = .716), grade level distribution (χ2 = 3.21, p = .523), or government assistance receipt (χ2 = 0.84, p = .359). These findings support the interpretation that observed measurement equivalence reflects format robustness rather than sample differences.

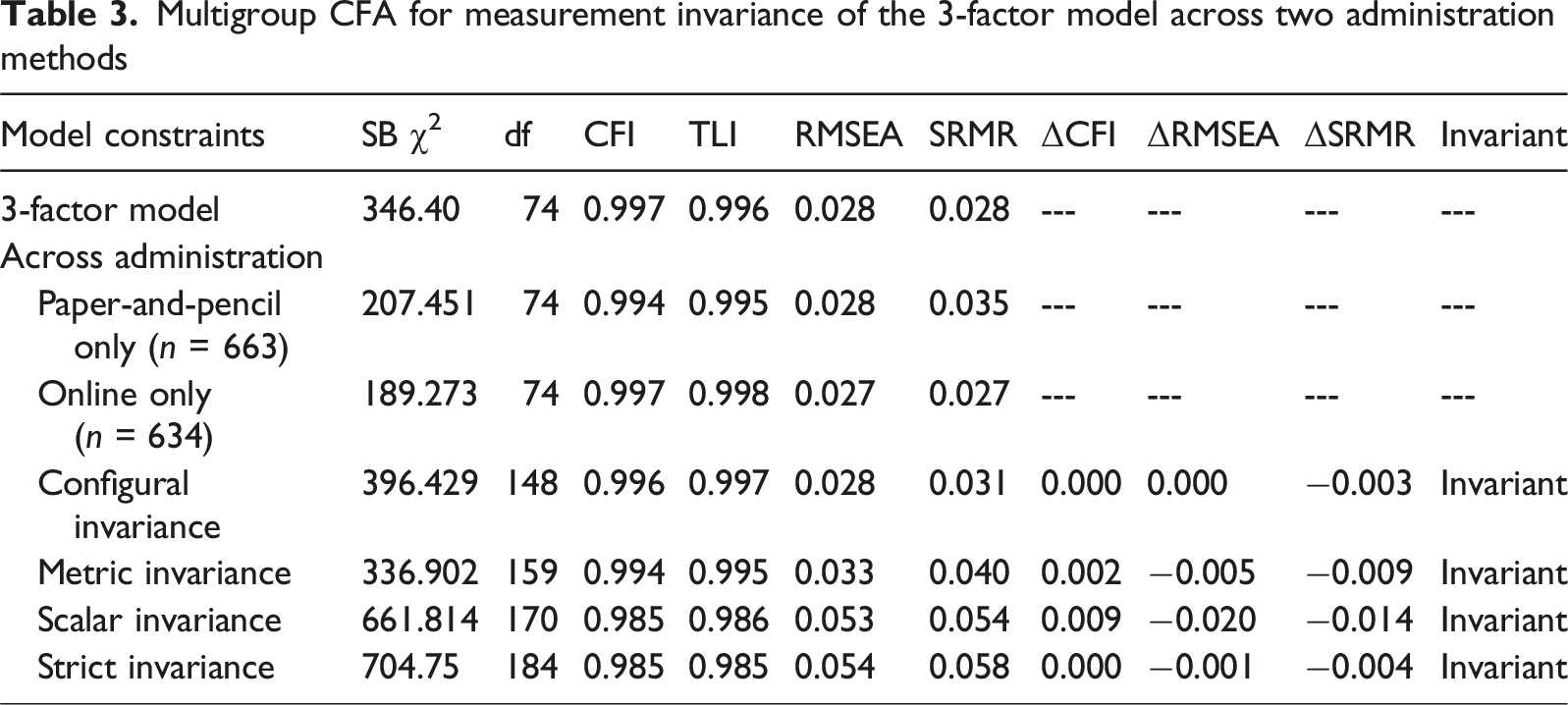

Multigroup CFA for measurement invariance of the 3-factor model across two administration methods

Post-hoc Analysis of Contextual Effects

Following the establishment of strict measurement invariance, we conducted post-hoc analyses to examine whether the temporal context affected mean well-being levels (see Supplemental Table 2). This analysis addresses a distinct question from measurement invariance: while invariance examines whether the measurement model functions equivalently, mean comparisons test whether actual well-being levels differed between contexts. Mean comparisons revealed significant differences across all MHC-SF scales with medium effect sizes (Cohen, 1988).
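For reference, a standardized mean difference of the kind interpreted against Cohen's (1988) benchmarks can be computed as follows. This minimal Python sketch uses the pooled-standard-deviation form of Cohen's d, which is an assumption because the text does not state which variant was used; the function name and scores are hypothetical and are not data from this study.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Cohen's d with a pooled standard deviation (group_a minus group_b)."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_variance = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_variance)


# Hypothetical subscale scores for two small groups.
january_scores = [4, 5, 6, 5, 4]
may_scores = [3, 4, 5, 4, 3]
d = cohens_d(january_scores, may_scores)
print(round(d, 2))
```

A positive value indicates a higher mean in the first group; reversing the argument order flips the sign.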

Item-level descriptive statistics (Supplemental Table 1) show that students in May 2020 reported lower means across all 14 items. These patterns are consistent with documented pandemic impacts on adolescent mental health, including increased anxiety, social isolation, and disrupted routines during early COVID-19 school closures (e.g., Cockerham et al., 2021; Lee, 2020). However, we acknowledge that mean differences may also reflect unmeasured school-level differences in student well-being, as paper-and-pencil administration occurred in one school while online administration occurred across five different schools within the same district.

Discussion

This study evaluated the psychometric properties and measurement invariance of the Mental Health Continuum-Short Form (MHC-SF; Keyes, 2002) administered through paper-and-pencil and online methods among a large sample of Filipino secondary school students. Consistent with previous literature (Franken et al., 2018; Guo et al., 2015; Karaś et al., 2014; Lamers et al., 2011), the findings supported the three-factor structure of the MHC-SF comprising emotional, social, and psychological well-being dimensions. Additionally, the three-factor model exhibited significantly better fit compared to one-factor and two-factor models, showing evidence of construct validity.

The three-factor structure’s robustness across paper-and-pencil and web-based administration procedures suggests that the MHC-SF’s conceptualization of well-being as a multidimensional construct (Keyes, 2002) holds true regardless of the assessment medium. This finding aligns with the dual-continua model of mental health (Keyes, 2005), which posits that positive mental health is not merely the absence of psychopathology but a distinct dimension encompassing emotional, psychological, and social well-being. The invariance of the three-factor structure across administration methods supports the notion that these well-being components are fundamental to the conceptual framework underlying the MHC-SF and are not influenced by measurement administration.

Notably, we found evidence of strict measurement invariance between paper-and-pencil and online administration, indicating comparable reliability and validity of scores across these modes. This finding suggests the MHC-SF can be validly applied through various formats, supporting its use in adapted contexts necessitated by changes in educational practices and environments (Kim et al., 2022; Meng et al., 2023). Our results address the gap noted by Lamborn et al. (2018), Luijten et al. (2019), and Lupano Perugini et al. (2017) by providing the first evidence of the scale’s measurement invariance when transitioning between traditional and virtual administration formats.

Our design presents both a limitation and a strength. We cannot isolate format effects from temporal effects, as data collection occurred during different contexts (pre-COVID vs. during COVID). Post-hoc analyses revealed substantial mean-level differences with medium effect sizes (d = 0.43–0.63) across all well-being dimensions. However, this addresses an important practical question: Does the MHC-SF maintain measurement equivalence when administered under substantially different contextual conditions? Strict measurement invariance, despite these substantial differences, provides particularly robust evidence. Future research employing counterbalanced designs would complement our findings by isolating format effects under controlled conditions.

As educational institutions increasingly rely on technology-based tools to support student learning and well-being, demonstrating the psychometric equivalence of measures across paper-and-pencil and online contexts is a fundamental concern (Davies et al., 2017; Kim et al., 2022; Lazarova et al., 2021; Wasil et al., 2020). Our results suggest the MHC-SF can be used interchangeably between these modes without introducing measurement bias, consistent with some previous studies on the online adaptation of well-being scales (Howell et al., 2010; Van de Looij-Jansen & De Wilde, 2008). Our study extends this research by establishing strict invariance for the multidimensional MHC-SF and sampling students from an under-represented, lower-middle-income country.

Implications

The study’s findings have important theoretical and practical implications. From a theoretical perspective, the invariance of the MHC-SF’s three-factor structure across paper-and-pencil and web-based administration supports the robustness and generalizability of the dual-continua model (Keyes, 2005) and the multidimensional nature of well-being. We demonstrated strict measurement invariance of the MHC-SF across paper-and-pencil and online administration formats. This finding addresses a critical gap in the literature and has three important implications. First, the MHC-SF functions equivalently across formats in that observed scores reflect the same underlying constructs regardless of administration method. Second, educators and researchers can confidently transition between formats or compare scores obtained via different methods without introducing measurement bias. Third, this evidence supports flexible, resource-adaptive assessment strategies where schools can select administration formats based on available infrastructure rather than psychometric concerns.

The robustness of our invariance findings is particularly noteworthy given the substantial contextual differences between samples. Post-hoc analyses revealed that students assessed online in May 2020 (during COVID school closures, from five schools) reported significantly lower well-being than students assessed via paper-and-pencil in January 2020 (pre-COVID, from one school), with medium effect sizes across all subscales (d = 0.43–0.63). These differences may reflect temporal effects related to the pandemic, school-level differences in student well-being, or a combination of both factors. The demonstration of strict measurement invariance despite these meaningful differences (which may stem from temporal, school-level, or combined effects) provides particularly robust evidence for the scale’s psychometric stability: the MHC-SF functioned equivalently even when comparing groups that differ substantially in their actual well-being levels.

Practically, the establishment of strict measurement invariance for the MHC-SF across administration methods has significant implications for educational and psychological research and practice. The findings indicate that the MHC-SF can be confidently used in online formats without sacrificing psychometric integrity, which is particularly valuable in light of the increasing reliance on digital tools in educational and mental health settings (Davies et al., 2017; Lazarova et al., 2021; Wasil et al., 2020). This flexibility allows for the valid assessment of student well-being across various contexts and facilitates the transition to remote learning and psychological service delivery when necessary, as evidenced during the COVID-19 pandemic (Kim et al., 2022; Meng et al., 2023). Moreover, the results, along with previous research highlighting the impact of the pandemic on adolescent well-being and social interactions (Cockerham et al., 2021), underscore the importance of identifying and addressing student needs in online education to foster positive mental health.

These findings extend beyond well-being assessment to inform the broader transition toward digital educational measurement. As schools increasingly implement computer-based testing for achievement and aptitude assessment, establishing measurement equivalence across formats becomes paramount. Our methodological approach (i.e., multigroup confirmatory factor analysis testing configural through strict invariance) provides a framework applicable to other educational measures. The demonstration that a multidimensional psychological instrument maintains psychometric integrity across formats suggests similar robustness may be achievable for cognitive and achievement measures, though empirical verification remains necessary for each instrument. Educational psychologists and assessment specialists should prioritize invariance testing when transitioning established measures to digital formats, ensuring that technological modernization does not compromise measurement validity.

Our findings also illustrate an important methodological principle: measurement invariance testing and mean comparison serve distinct but complementary purposes. Invariance testing examines whether the measurement model (factor structure, loadings, intercepts, and residuals) functions equivalently across groups, while mean comparisons examine whether groups differ on the construct being measured. These are separate questions. The common misconception that groups must have similar means for invariance testing to be valid or meaningful would paradoxically eliminate the primary purpose of invariance methodology, that is, enabling valid cross-group comparisons when groups are expected to differ. Our study illustrates this principle: we first established measurement equivalence, which then provided the psychometric foundation to interpret substantial mean differences as reflecting genuine contextual effects (whether temporal, school-level, or combined) rather than measurement bias introduced by format or assessment conditions.
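The distinction can be stated compactly with the standard multigroup factor model. The notation below is a generic textbook sketch, not notation taken from this article: $x_{ig}$ is the item-response vector for student $i$ in group $g$, $\tau_g$ the item intercepts, $\Lambda_g$ the factor loadings, $\eta_{ig}$ the latent well-being factors with mean $\kappa_g$ and covariance $\Phi_g$, and $\Theta_g$ the residual covariance matrix.

```latex
\begin{align*}
x_{ig} &= \tau_g + \Lambda_g \eta_{ig} + \varepsilon_{ig},
  \qquad \eta_{ig} \sim (\kappa_g, \Phi_g),
  \quad \varepsilon_{ig} \sim (0, \Theta_g) \\
\text{Configural:} &\quad \text{same pattern of zero and nonzero loadings in } \Lambda_g \\
\text{Metric:}     &\quad \Lambda_1 = \Lambda_2 \\
\text{Scalar:}     &\quad \Lambda_1 = \Lambda_2,\ \tau_1 = \tau_2 \\
\text{Strict:}     &\quad \Lambda_1 = \Lambda_2,\ \tau_1 = \tau_2,\ \Theta_1 = \Theta_2 \\
E[x_{ig}] &= \tau_g + \Lambda_g \kappa_g
\end{align*}
```

None of these constraints involves $\kappa_g$, so the latent means remain free to differ. Under scalar invariance, $E[x_{i1}] - E[x_{i2}] = \Lambda(\kappa_1 - \kappa_2)$, which means differences in expected item responses are attributable to latent mean differences alone.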

Lastly, the study’s focus on Filipino secondary school students addresses the need for culturally-sensitive mental health assessment tools in lower middle-income countries. The MHC-SF’s psychometric robustness in this context highlights its potential as a valuable instrument for assessing well-being among adolescents in diverse cultural settings, particularly those that may have limited access to mental health resources. The findings can inform the development and evaluation of interventions aimed at promoting positive mental health and well-being among students in the Philippines and other similar contexts.

Limitations and Future Research Directions

While this study provides evidence for the validity and measurement invariance of the MHC-SF across administration methods, several limitations should be considered.

First, our design confounds administration format with both temporal context and school-level factors, precluding definitive attribution of mean differences to any single source. Paper–pencil administration occurred in one school in January 2020 (pre-COVID), while online administration occurred across five schools in May 2020 (during COVID). Although observed mean differences align with expected pandemic impacts on adolescent well-being, they may also reflect unmeasured school-level differences or the interaction of multiple factors. Importantly, the demonstration of strict measurement invariance remains valid regardless of the source of mean differences, confirming that the MHC-SF functions equivalently across these varied conditions. This represents a particularly stringent test of measurement stability under naturally occurring, real-world conditions.

Demonstrating strict invariance with meaningful mean differences illustrates the fundamental purpose of invariance testing. Without establishing equivalence of the measurement model, we could not determine whether lower well-being scores in May 2020 reflected actual decreases in well-being or measurement artifacts introduced by format changes, temporal instability, or their interaction. Our findings indicate that the observed differences reflect genuine differences in well-being rather than measurement artifacts, although, as noted above, their specific source cannot be isolated. While controlled experimental designs isolating format effects would complement our findings, the co-occurrence of format and substantial contextual differences provides ecologically valid evidence for measurement robustness under real-world implementation conditions where multiple factors inevitably vary.
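The argument above can be made concrete with a small numerical sketch. It assumes a simplified one-factor model with three items, and all loadings, intercepts, and latent means are hypothetical values chosen for illustration, not estimates from this study. It shows that a true latent mean difference and an intercept shift can produce the same gap in observed composite scores, which is why equal intercepts must be established before mean differences are interpreted.

```python
def expected_item_means(intercepts, loadings, latent_mean):
    """Model-implied item means for a one-factor model: tau_j + lambda_j * kappa."""
    return [t + l * latent_mean for t, l in zip(intercepts, loadings)]


def composite(item_means):
    """Unweighted mean of the item means (a simple scale score)."""
    return sum(item_means) / len(item_means)


# Hypothetical measurement parameters for the reference group.
loadings = [0.8, 0.7, 0.9]
intercepts = [3.0, 3.2, 2.9]

reference = composite(expected_item_means(intercepts, loadings, 0.0))

# Case A: scalar invariance holds; the second group has a lower latent mean.
case_a = composite(expected_item_means(intercepts, loadings, -0.5))
gap_true = case_a - reference

# Case B: latent means are equal, but every intercept is shifted downward
# in the second group (a measurement artifact, i.e., non-invariance).
shifted_intercepts = [t - 0.4 for t in intercepts]
case_b = composite(expected_item_means(shifted_intercepts, loadings, 0.0))
gap_artifact = case_b - reference

print(round(gap_true, 3), round(gap_artifact, 3))
```

Both gaps come out at about -0.4 scale points even though only Case A involves a real difference in the latent construct. Observed scores alone cannot separate the two cases; testing the equality of intercepts can.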

Additional limitations include reliance on self-report data, which may be subject to response biases. Multi-informant designs could provide a more comprehensive assessment. The cross-sectional nature of the data limits examination of within-person changes over time. Longitudinal designs would allow investigation of measurement stability and predictive validity across administration modes over multiple time points. Experimental and mixed-methods designs could enable a more nuanced understanding of the factors influencing well-being and inform the development of targeted interventions. Qualitative approaches, such as interviews or focus groups, could provide valuable insights into students’ experiences and perceptions of well-being, complementing the quantitative findings from the MHC-SF. Examining the MHC-SF’s psychometric properties across various administration methods, such as technology-based formats and digital platforms, would further support the scale’s adaptability and validity in the rapidly evolving landscape of psychological service delivery. While our sample represents resource-limited contexts in the Philippines, replication in other lower middle-income countries and diverse cultural contexts would strengthen evidence for cross-cultural applicability. Finally, although our focus was on adolescent well-being, examining invariance across different age groups (children, adults) would provide evidence for the MHC-SF’s developmental applicability across the lifespan.

Conclusion

Our findings advance the psychometric literature on well-being assessment in educational contexts by establishing the MHC-SF’s measurement invariance across administration formats among Filipino secondary school students. While previous validations demonstrated the instrument’s utility across cultural contexts (Franken et al., 2018; Guo et al., 2015; Karaś et al., 2014), this study contributes to psychoeducational assessment research in three specific ways. First, it provides empirical evidence supporting use of the MHC-SF across paper-and-pencil and web-based formats, addressing a key methodological concern in flexible and resource-adaptive assessment implementation. Second, it offers validity evidence from a non-Western educational system, supporting cross-cultural applicability while highlighting context-sensitive measurement considerations. Finally, the findings inform the feasibility of sustainable school-based mental health monitoring that accommodates shifts in assessment modality without compromising measurement precision. These findings suggest that schools can implement hybrid well-being assessment approaches, which are particularly valuable for developing evidence-based well-being support systems across diverse educational contexts. Future research should examine the MHC-SF’s utility within integrated school-based assessment programs, specifically focusing on early identification and intervention planning applications.

Supplemental Material

Supplemental Material for Ensuring the Validity of Assessing Student Well-Being in Paper-and-Pencil and Web-Based Formats: The Measurement Invariance of the MHC-SF by Norman B. Mendoza, Jet U. Buenconsejo, Shen Ba, and Zi Yan in Journal of Psychoeducational Assessment

Footnotes

Acknowledgment

We would like to thank Ms. Jelli Grace C. Luzano, LifeRisksPH, and Dr. Ericson Cabrera for their support in data collection and feedback on this paper.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

All pertinent data, materials, and codes for analysis will be made available by the authors upon written request by any party.

Supplemental Material

Supplemental material for this article is available online.

References
