Abstract

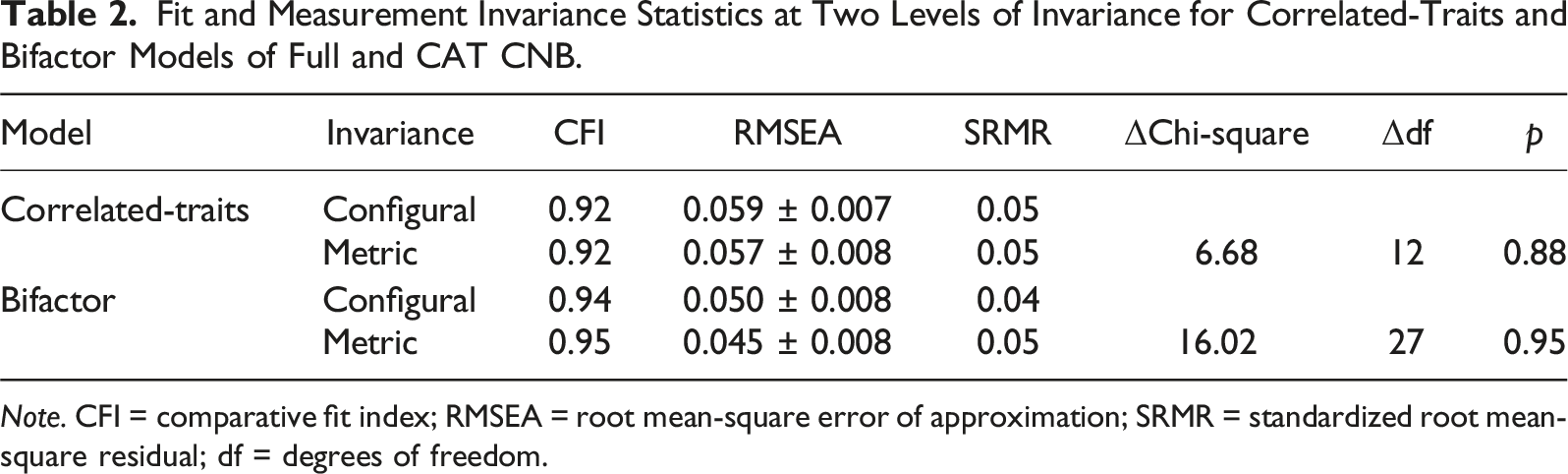

The Penn Computerized Neurocognitive Battery (CNB) is a collection of tests validated using neuroimaging, genetics, and other criteria. An updated version of the CNB (the CAT-CCNB) was constructed in which all tests were converted to either computerized adaptive (CAT) or abbreviated forms. In a mixed community/clinical sample (N = 307; mean age = 25.9 years; 53.7% female), we tested measurement invariance across the Full CNB and the CAT-CCNB in terms of factor configuration and loadings. Multiple-group factor analyses were conducted with appropriate constraints, and results supported the measurement invariance assumption, with non-significant differences in chi-square fit statistics for both correlated-traits (Δχ2 = 6.68; df = 12; p = .88) and bifactor (Δχ2 = 16.02; df = 27; p = .95) configurations. Results indicate that the latent constructs measured by the two versions of the CNB are roughly equivalent and comparable.

The Penn Computerized Neurocognitive Battery (CNB; Gur et al., 1992, 2001, 2010) assesses a comprehensive array of cognitive abilities, including sub-components of executive functioning, memory, complex reasoning, social cognition, and reward processing. It was constructed to optimize links between brain and behavior by using tasks validated with functional neuroimaging (Ragland et al., 1997; Roalf et al., 2014) and by examining correlations with brain parameters (Cui et al., 2020; Roalf et al., 2014; Satterthwaite et al., 2016) and genetics (Greenwood et al., 2011; Gur et al., 2007; Swagerman et al., 2016). Recently, a computerized adaptive (CAT) version of the Penn CNB was developed (Moore et al., 2023) and validated (Di Sandro et al., 2024), and the goal of the present study was to test whether factor models of the Penn CNB are invariant across the Full and CAT forms. The time savings achieved by the CAT and abbreviated versions decrease participant burden and allow augmentation with additional domains, but it is important to confirm that the structure of inter-test correlations remains the same in the updated version (i.e., that measurement invariance is achieved).

Measurement invariance (Cheung & Rensvold, 2002; Van De Schoot et al., 2015) is a standard whereby the psychometric properties of a test or battery (e.g., configuration and factor loadings) are statistically indistinguishable across groups or administrations. Here, the comparison is between two versions of a test battery administered to the same sample of people. Invariance is important to establish (Wicherts, 2016) because violations of measurement invariance imply that a construct is being measured differently across battery forms. The issue of when and how full-form and adaptive scores are comparable has been explored thoroughly since CATs became widely known (Roper et al., 1995; Shapiro & Gebhardt, 2012; Wang & Kolen, 2001), but most of these studies compare full and adaptive scores on a single test to confirm, for example, convergent validity between them or comparable associations with external criteria. The current study goes a step further to assess whether multiple CAT tests relate to each other in the same way that their analogous full forms do (i.e., whether they have the same factor structure). Please see the Supplement for further discussion of invariance, as well as additional background on the Penn CNB.
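As a compact restatement of the two invariance levels examined here, a standard multiple-group factor model can be written as below. The notation (observed scores x, intercepts τ, loadings Λ, latent factors η, residuals ε, and the form index f) is the conventional one and is an assumption of this sketch, not notation taken from the study or its Supplement.

```latex
\begin{align}
\mathbf{x}^{(f)} &= \boldsymbol{\tau}^{(f)} + \boldsymbol{\Lambda}^{(f)} \boldsymbol{\eta}^{(f)} + \boldsymbol{\varepsilon}^{(f)},
  \qquad f \in \{\mathrm{Full}, \mathrm{CAT}\} \\
\text{Configural invariance:}\quad
  & \lambda^{(\mathrm{Full})}_{jk} = 0 \iff \lambda^{(\mathrm{CAT})}_{jk} = 0
  \quad \text{for every test } j \text{ and factor } k \\
\text{Metric invariance:}\quad
  & \boldsymbol{\Lambda}^{(\mathrm{Full})} = \boldsymbol{\Lambda}^{(\mathrm{CAT})}
\end{align}
```

Under this formulation, configural invariance only requires that each test load on the same factor(s) in both forms, while metric invariance additionally requires the loadings themselves to be equal across forms.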

Methods and Results

Participants and Procedures

Participants were recruited by advertising in the community and in specialty clinics. To allow comparison of validity across forms (Di Sandro et al., 2024), the sample was enriched for two psychopathology domains, such that participants with mood disorders (MD), participants on the psychosis spectrum (PS), and community controls (HC) each composed approximately a third of the sample. Participants were eligible for the study if they were 18–35 years old, resided in the U.S., understood study instructions in English, and had no physical limitations for performing the tests. The final sample comprised 307 individuals, with 104 participants in the PS group, 96 participants in the MD group, and 107 participants in the HC group. The sample was 53.7% female, 53.1% White, 27.4% Black, 19.5% other race, and 6.5% Hispanic or Latino/a, with a mean age of 25.9 years (SD = 4.5) and mean education of 15.2 years (SD = 2.2). A more detailed description of the sample, including recruitment and study procedures, is provided in the Supplement. The University of Pennsylvania Institutional Review Board approved this study.

Assessments

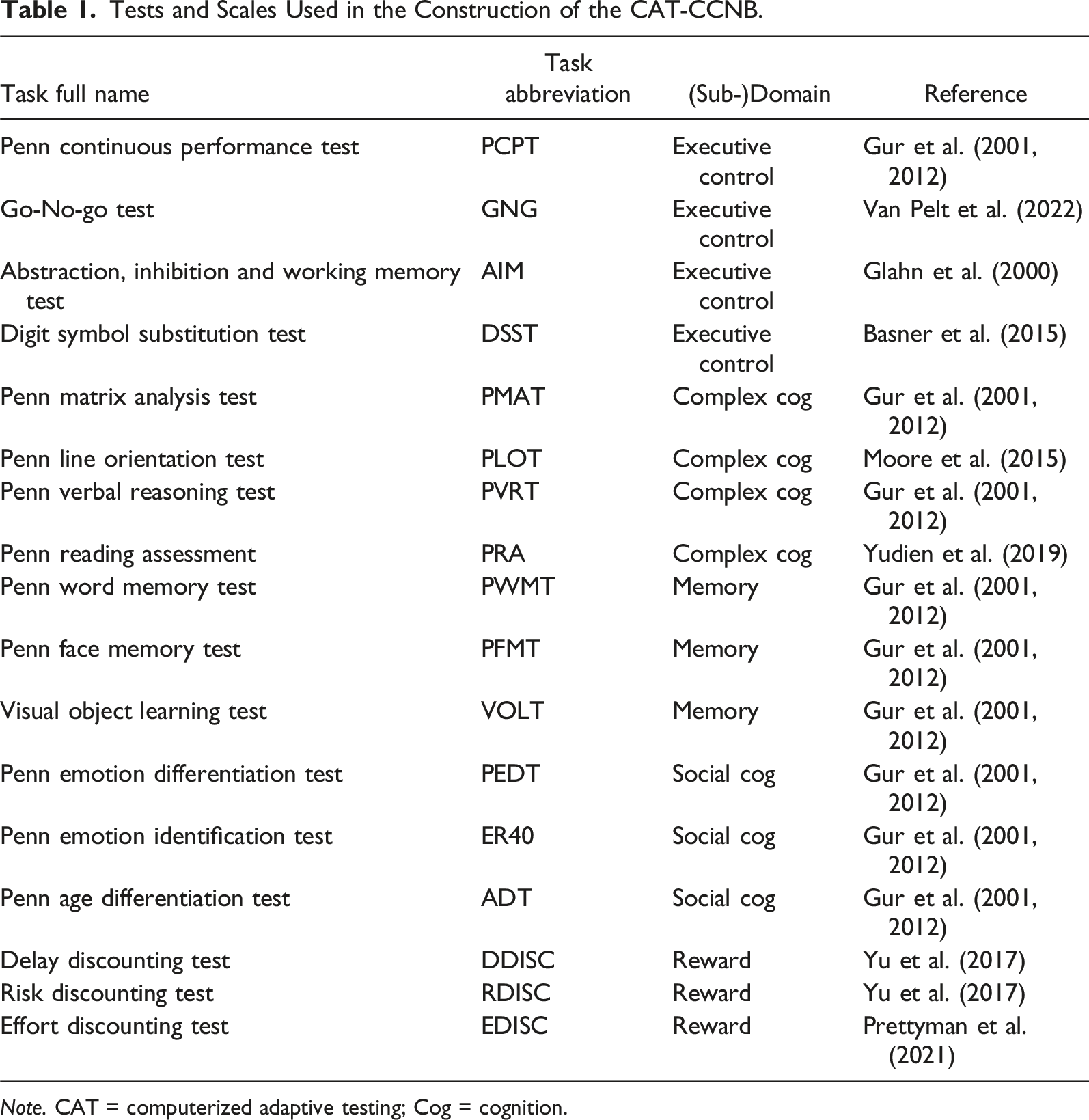

Tests and Scales Used in the Construction of the CAT-CCNB.

Note. CAT = computerized adaptive testing; Cog = cognition.

Assessments were conducted by trained research staff, counterbalancing for order. Participants took an average of 39 minutes to finish the Full CNB (ranging from 25 to 85 minutes), excluding instruction time and practice trials. By comparison, average duration for the CAT CNB was 25 minutes (ranging from 17 to 45 minutes). Testing sessions took place either remotely (N = 295) or in-person (N = 12).

Statistical Analyses

A total of 17 performance efficiency scores were analyzed for each battery. Measurement invariance was tested using “multiple group” confirmatory factor analysis (CFA) estimated using the robust maximum likelihood estimator, where “group” was defined as the test form administered (Full or CAT). Model fit was assessed using the comparative fit index (CFI; ≥0.95 acceptable), root mean-square error of approximation (RMSEA; ≤0.06 acceptable), and standardized root mean-square residual (SRMR; ≤0.08 acceptable) (Hu & Bentler, 1999). Configural invariance was assessed by examining the fit indices of the combined (both forms) model, where the only constraint between forms was that they have the same configuration. CFAs were also estimated for each form separately to determine whether model fit differed substantially between forms (using the same configuration). Metric invariance was tested statistically by comparing the above combined model to a model in which the factor loadings were constrained to be equal across forms. A significant worsening of fit when these constraints are added (that is, a statistically significant change in chi-square or an absolute change in CFI > 0.01; Cheung & Rensvold, 2002) would indicate a metric invariance violation.
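The decision rule described above can be sketched in a few lines of Python. This is an illustrative sketch only: the study's analyses were run in Mplus, and the function names `chi2_sf` and `metric_violation` are hypothetical helpers introduced here. The sketch assumes the Δχ2 passed in is already the appropriate difference statistic (with a robust maximum likelihood estimator, the difference generally needs a scaling correction before it can be referred to a chi-square distribution), and it simply converts a reported Δχ2 and df into a p-value using closed-form expressions for integer degrees of freedom.

```python
import math
from typing import Optional


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability P(X >= x) for a chi-square variable with integer df."""
    if df < 1:
        raise ValueError("df must be a positive integer")
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        # Even df: exp(-x/2) * sum_{k=0}^{df/2 - 1} (x/2)^k / k!
        term, total = 1.0, 1.0
        for k in range(1, df // 2):
            term *= half / k
            total += term
        return math.exp(-half) * total
    # Odd df: erfc(sqrt(x/2)) + 2*phi(sqrt(x)) * sum_r x^((2r-1)/2) / (1*3*...*(2r-1))
    phi = math.exp(-half) / math.sqrt(2.0 * math.pi)  # standard normal density at sqrt(x)
    term, total = math.sqrt(x), 0.0
    for r in range(1, (df - 1) // 2 + 1):
        if r > 1:
            term *= x / (2 * r - 1)
        total += term
    return math.erfc(math.sqrt(half)) + 2.0 * phi * total


def metric_violation(delta_chi2: float, delta_df: int,
                     delta_cfi: Optional[float] = None,
                     alpha: float = 0.05, cfi_cutoff: float = 0.01) -> bool:
    """Flag a violation if the chi-square change is significant or |delta CFI| > cutoff."""
    significant = chi2_sf(delta_chi2, delta_df) < alpha
    cfi_flag = delta_cfi is not None and abs(delta_cfi) > cfi_cutoff
    return significant or cfi_flag


# Chi-square differences reported in the abstract (delta CFI is not passed here).
p_ct = chi2_sf(6.68, 12)   # correlated-traits configuration
p_bf = chi2_sf(16.02, 27)  # bifactor configuration
print(f"correlated-traits p = {p_ct:.3f}; bifactor p = {p_bf:.3f}")
print("violation flagged:", metric_violation(6.68, 12), metric_violation(16.02, 27))
```

With the values reported in the abstract, the computed p-values should land close to the reported p = .88 and p = .95, so neither configuration is flagged under the chi-square criterion.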

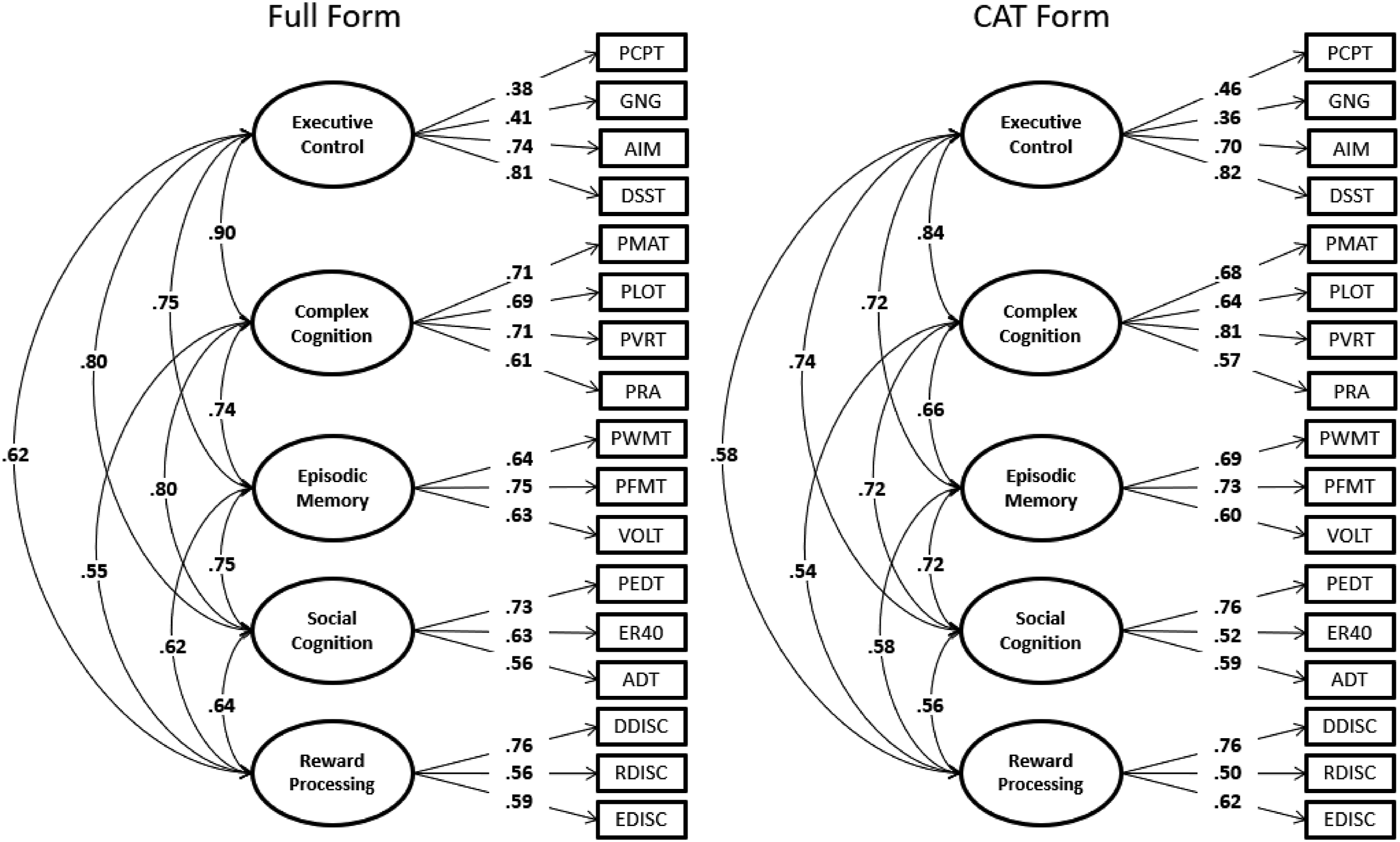

Consistent with Moore and colleagues’ (2015a) methods, the above analyses were performed on both correlated-traits and bifactor configurations (see Reise et al., 2010, for a general comparison of these configurations). While bifactor models generally fit CNB data best, the specific sub-factors in bifactor models rarely have sufficient factor determinacy (Grice, 2001; Rodriguez et al., 2016) for score calculation. Therefore, a bifactor model is generally used to obtain “g” scores, while a correlated-traits model is used to obtain sub-scores. Finally, post hoc exploratory factor analyses (EFAs) were performed, followed by invariance tests (using the above procedure) on the EFA-adjusted models.

All analyses were conducted in Mplus v8.4 (Muthén & Muthén, 1998) and R (R Core Team, 2022). Mplus scripts (.inp files), as well as further details of data preparation and analyses, can be found in the Supplement.

Results

Fit and Measurement Invariance Statistics at Two Levels of Invariance for Correlated-Traits and Bifactor Models of Full and CAT CNB.

Note. CFI = comparative fit index; RMSEA = root mean-square error of approximation; SRMR = standardized root mean-square residual; df = degrees of freedom.

Correlated-Traits Confirmatory Factor Analyses of Full and CAT Forms of the Penn Computerized Neurocognitive Battery.

Note. All loadings significant at p < .05 level; Full-Form model CFI = 0.92; Full-Form model RMSEA = 0.059; Full-Form model SRMR = 0.048; CAT model CFI = 0.91; CAT model RMSEA = 0.060; CAT model SRMR = 0.053; PCPT = Penn Continuous Performance Test; GNG = Go-No-Go Test; AIM = Abstraction, Inhibition and Working Memory Test; DSST = Digit Symbol Substitution Test; PMAT = Penn Matrix Analysis Test; PLOT = Penn Line Orientation Test; PVRT = Penn Verbal Reasoning Test; PRA = Penn Reading Assessment; PWMT = Penn Word Memory Test; PFMT = Penn Face Memory Test; VOLT = Visual Object Learning Test; PEDT = Penn Emotion Differentiation Test; ER40 = Penn Emotion Identification Test; ADT = Penn Age Differentiation Test; DDISC = Delay Discounting Test; RDISC = Risk Discounting Test; EDISC = Effort Discounting Test.

Finally, Supplemental Table S2 shows the results of post hoc EFAs conducted on the full and CAT forms separately. These results suggest that the AIM and DSST might belong in the complex reasoning factor instead of executive, and Figure S2 shows the updated confirmatory model based on the EFA results. Table S3 shows the invariance results using the updated confirmatory model, where, consistent with the main study results, measurement invariance appears to be fulfilled (χ2 difference test p-value = .89; all CFI change < 0.005).

Discussion

Converting a full test battery to abbreviated and computerized adaptive (CAT) forms requires demonstration of measurement invariance to ensure that the composite scores on these forms are comparable. Here, we demonstrate that the factor structure of the Penn CNB is invariant across the Full form and the computerized adaptive (and abbreviated) form (CAT-CCNB) with respect to configuration and factor loadings, and this invariance was maintained even after updating the model based on post hoc EFA. This suggests that the constructs assessed with the two batteries are comparable. When examining metric results loading-by-loading, some possible violations were found, but it is worth noting that all apparent violations at the individual loading level are for tests that are not ultimately recommended for the CAT version of the CNB. Specifically, it was previously recommended (Di Sandro et al., 2024) that the PVRT, ER40, PFMT, and VOLT remain in their full versions rather than using the CAT, due either to questionable convergent validity between Full and CAT forms or to lack of sufficient time savings (e.g., PFMT and VOLT were < 1 minute shorter in their adaptive forms). This concurrence of results from two different methods lends further support to maintaining full forms of the PVRT, ER40, PFMT, and VOLT rather than updating to CAT forms.

The present findings should be considered with some limitations in mind. First, this is a relatively small sample (N = 307), particularly in comparison to the original factor analysis (N ≥ 9000) (Moore et al., 2015), and the age range is limited to 18–35, compared to 18–80 in the calibration study (Moore et al., 2023). To enhance generalizability of the findings, replication of this work in a larger sample with a broader age range is recommended. Second, the present study did not evaluate scalar invariance, whereby intercepts of test-factor relationships are shown to be invariant across forms, due to the difference in scales of the two forms (sum scores or d-prime in Full, vs. z-score metric in CAT). Future work aiming to link the CAT forms with historical administrations of the Full form will require re-scoring historical data in an IRT metric using the same item bank as used in the CAT form.

These limitations notwithstanding, our study demonstrates the psychometric comparability of the Full and CAT forms and encourages adoption of the CAT version, especially for large-scale or longitudinal studies. The CAT version will result in substantial time savings and hence reduced burden on participants and test administrators, yielding neurocognitive performance data with high fidelity.

Supplemental Material


Supplemental Material for Configural and Metric Invariance Across Full-Form and Computerized Adaptive Versions of the Penn Computerized Neurocognitive Battery (CAT-CCNB) by Tyler M. Moore, Katherine C. Lopez, J. Cobb Scott, Jack C. Lennon, Akira Di Sandro, Eirini Zoupou, Alesandra Gorgone, Monica E. Calkins, Daniel H. Wolf, Joseph W. Kable, Kosha Ruparel, Raquel E. Gur and in Journal of Psychoeducational Assessment.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by National Institute of Mental Health grants (MH117014, MH119219).


