Abstract

Collectively, measures created for research use, whether self-report or observational, have contributed to evidence underscoring the importance of ensuring teachers develop knowledge and skills to engage in asset-based pedagogy. Teachers who wish to enhance their practice, however, do not have a way to elicit students’ perspectives of their instruction with a validated instrument designed to do so. Given that student identity is a robust predictor of minoritized students’ academic and non-academic outcomes, this study reflects the development and validation of the Asset-Based Identities Measure, which centers student voice to formatively inform teacher practice. The iterative design of the study included expert educators, students, and a larger validation sample of N = 860 students. Cognitive interviews and focus groups contributed to the refinement of the pilot measure across three identity domains. Factor structures were examined through confirmatory factor analyses, resulting in a robust measure. Use of the measure is discussed.

Scholars have contributed to a substantial body of asset-based pedagogy (ABP) research focused on eliminating racism in the curriculum by centering instruction on the perspectives of minoritized communities (see Aronson & Laughter, 2016; López, 2017). Among the numerous ABP orientations are critical bicultural pedagogy (Darder, 1991, 2012), equity pedagogy (Banks, 1993), culturally relevant pedagogy (Ladson-Billings, 1995a; 1995b), culturally responsive teaching (Gay, 2000), cultural connectedness (Irizarry, 2007), culturally sustaining pedagogies (Paris, 2012), and critical culturally sustaining revitalizing pedagogy (McCarty & Lee, 2014), although this is only a partial listing. These and other distinct conceptualizations of ABP share foundational principles that counter deficit-laden messages about marginalized students’ cultural backgrounds. Teachers who engage in ABP affirm “the legitimacy of cultural heritages of different ethnic groups, both as legacies that affect students’ dispositions, attitudes, and approaches to learning and as worthy content to be taught in the formal curriculum” (Gay, 2000, p. 29). Moreover, teachers who engage in ABP have been shown to affirm marginalized students’ identities in ways that directly influence achievement outcomes (López, 2017; Matthews & López, 2018).

Researchers have advanced this work by creating and validating measures that reflect various dimensions and conceptualizations of ABP. Some measures were developed explicitly to explore the interrelationships between teachers’ asset-based knowledge and practices, and how these in turn promote student outcomes (e.g., Brown & Chu, 2012; Kumar et al., 2021; López, 2017; Matthews & López, 2018). Others are self-report measures of teachers’ self-efficacy in asset-based teaching (e.g., Siwatu, 2007), drawing on the established role of teacher self-efficacy in student motivation (Caprara et al., 2006; Skaalvik & Skaalvik, 2007) and student achievement (Caprara et al., 2006; Klassen & Chiu, 2011; Tschannen-Moran & Hoy, 2001). Still other measures rely partially on self-report (e.g., via self-reflection), focusing on knowledge about asset-based pedagogy (Pohan & Aguilar, 2001) as well as practices (Jensen et al., 2018; Powell et al., 2016). Collectively, measures principally created for research use, whether self-report or observational, have contributed to the evidence underscoring the importance of ensuring teachers develop knowledge and skills to engage in ABP.

Many measures also exist to capture educator practices and pedagogy. These include two widely used classroom observation measures, the Classroom Assessment Scoring System (CLASS; Pianta et al., 2008) and The Framework for Teaching (Danielson, 2013), both of which can be used formatively to improve teacher practice or as summative measures of effectiveness. Although these measures have been updated to include considerations of ABP, they do not consider students’ perspectives of teaching beyond that which is inferred from classroom observations. Student perception surveys fill this need and have become more common due to the requirement of multiple data sources in teacher evaluations (see Geiger & Amrein-Beardsley, 2019). Although student perception surveys provide information directly from students, there is no validated measure of student perception aligned with the ABP goals of affirming students’ belonging, intersectional identities, and competence.

The Need for a Validated Measure of Students’ Perceptions of Asset-Based Pedagogy

Despite the body of knowledge generated on measuring teachers’ asset-based knowledge, self-efficacy, and behaviors, as well as students’ perceptions of instruction, missing are measures that can provide formative feedback to teachers with students’ direct experiences of ABP. To address this gap, we conceptualized, designed, and refined a measure that focused on students’ experiences of ABP in ways that could enhance teachers’ practices. Specifically, the present study reflects the development and validation of an asset-based student identity measure that focuses on students’ academic self-concept, intersectional identities inclusive of disabilities, and student belonging in the context of their instructional experiences. The measure was developed to fill the void of a formative tool that teachers could use in multiple ways to refine their practices. This includes opportunities to deepen their rapport about their instruction with students, as well as elicit how their instruction resonates (or does not) after a particular lesson, unit, or other timeframe of interest.

Development of the Asset-Based Identities Measure

The development of the Asset-Based Identities Measure relies on guidance provided by the Standards (AERA, APA, NCME, 2014) as well as Kane’s (2013) discussion of the argument-based approach to validation that includes (1) an explicit proposed interpretation/use of a measure and (2) a validity argument that evaluates the proposed interpretation/use. In the argument-based approach, validity “depends on how well the evidence supports the proposed interpretation/use” of the resulting scores (Kane, 2013, p. 21). Given that the Asset-Based Identities Measure reflects the theoretical constructs of students’ self-concept, intersectional identities, and belonging that are aims of ABP, “the theory defining the construct would constitute the core of the [interpretation/use argument]” (Kane, 2013, p. 9).

Our proposed use of the Asset-Based Identities Measure is to enhance teachers’ development of ABP by gauging the extent to which students are responding to the aims of ABP instruction (i.e., by affirming identities). As such, validity evidence requires theoretical rationales for the constructs represented in the measure (i.e., evidence based on content and response processes; AERA, APA, NCME, 2014). Additionally, evidence based on internal structure “[indicates] the degree to which the relationships among test items and test components conform to the construct on which the proposed test score interpretations are based” (AERA, APA, NCME, 2014, p. 16). However, the interpretations of scores are intended to respond to instruction that aims to reflect ABP and as such do not assume or require evidence on the extent to which scores generalize over a period of time or occasions (Kane, 2013).

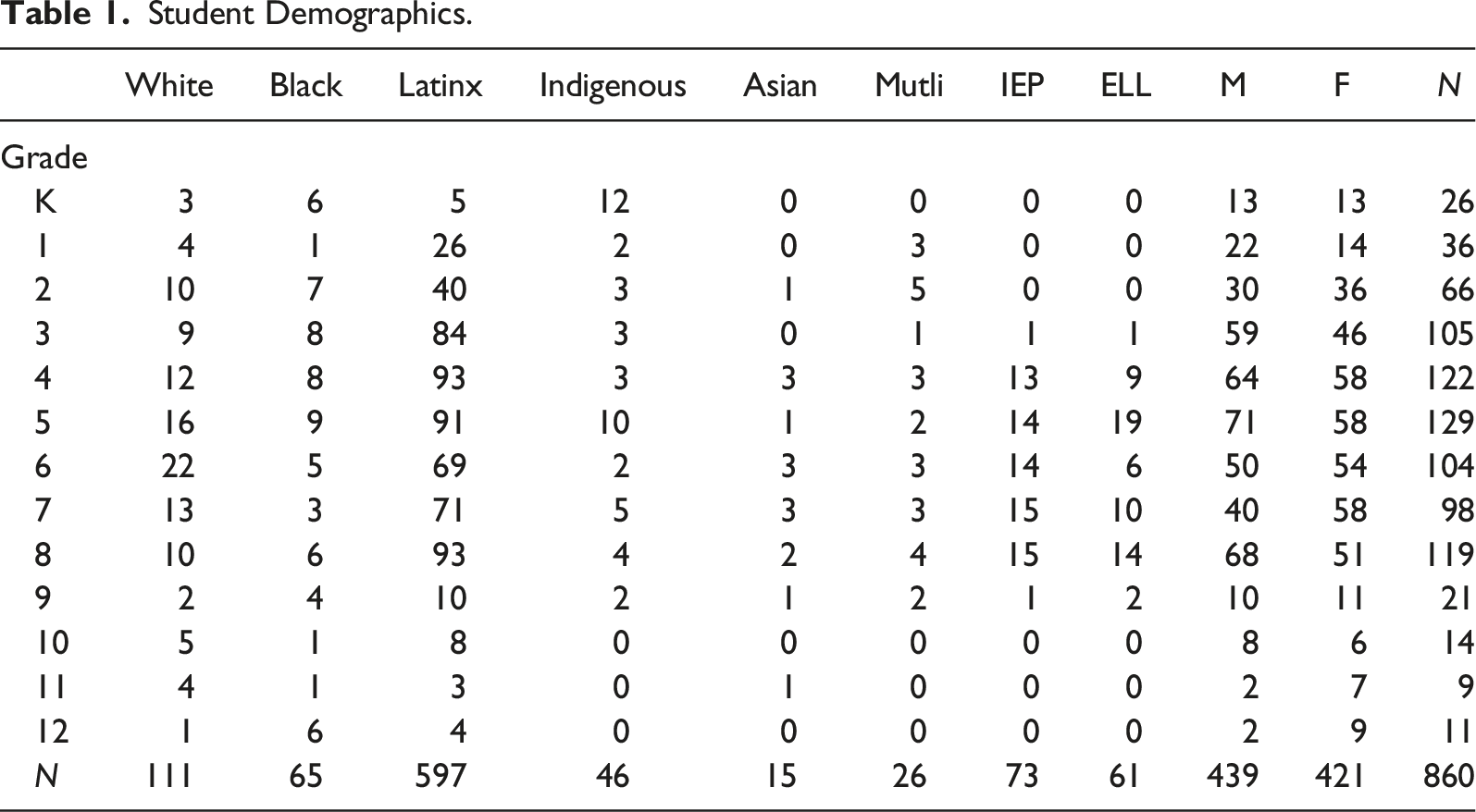

Table 1. Student Demographics.

Asset-Based Pedagogy

Persistent disparities in achievement outcomes for youth living in poverty, most of whom are students of color, are well documented. Attempts to identify remedies that are rooted in deficit perspectives, however, are also well documented. These views reflect a conviction wherein “children succeed in school only if their many deficiencies are corrected and if they are taught to behave in more traditionally mainstream ways in specially designed intervention programs” (see Valdés, 1997, p. 398). The fact is that minoritized youth rarely have exposure to perspectives from their communities except when added “in a ‘contributions’ fashion to the predominantly Euro-American narrative of textbooks” (Sleeter, 2011, p. 2). Moreover, recent political pressures to censor culturally relevant books and teaching about race-related histories exacerbate this lack of exposure (López & Sleeter, 2023).

Challenging racist views in education are scholars who point out that minoritized students “are neither inherently antischool nor oppositional. They oppose a schooling process that disrespects them” (Valenzuela, 1999, p. 5). These scholars have contributed to a substantial body of ABP research focused on eliminating racism in the curriculum by centering instruction on the perspectives of minoritized communities (see Aronson & Laughter, 2016; López, 2017). Scholars involved in the preparation of teachers for historically marginalized students have detailed the importance of developing teachers’ historical, political, and contextualized knowledge (i.e., critical consciousness) to inform their asset-based pedagogy (e.g., Anderson & Stillman, 2013; Banks, 2001; Darling-Hammond, 2000; Darling-Hammond & Bransford, 2005; Gay, 2005; Gay & Kirkland, 2003; Hollins & Torres-Guzman, 2005; King & Ladson-Billings, 1990; Milner, 2010; Morrison et al., 2008; Valenzuela, 2016). This is because a “lack of political and ideological clarity often translates into teachers uncritically accepting the status quo as ‘natural.’ It also leads educators down an assimilationist path to learning and teaching….and perpetuates deficit-based views of low-SES, non-White, and linguistic-minority students” (Bartolomé, 2004, p. 100). Educators who develop critical consciousness, however, engage in behaviors that affirm students’ identities and promote academic outcomes (López, 2017). Indeed, the affirmation of identity is integral to ABP (Aronson & Laughter, 2016). Despite the evidence favoring the development of knowledge that affirms students’ identities, few educators are provided opportunities to develop these skills (López, 2024; Valenzuela, 2016).

Teachers who authentically engage in ABP reflect their deep understanding of students. The lack of opportunities teachers have to develop skills to engage in ABP contributes to the superficial ways it is enacted in classrooms and suggests gauging teachers’ self-efficacy may lead to inaccurate assessments of teachers’ skills (Sleeter, 2012). This is in part due to the fact that ABP is most often understood and applied in simplistic ways that center cultural celebration, trivialize ABP by incorporating student interests as additions to teacher-centered instruction, and essentialize culture as traits shared by particular groups (Sleeter, 2012). Teachers who wish to enhance their ABP practice do not have a way to elicit students’ perspectives of their instruction with a validated instrument designed to do so. Given that the effectiveness of ABP relies on affirming students’ identities, and researchers have documented that ABP promotes competence and ethnic identity (López, 2017), belonging (Gray et al., 2018), and other domains of their intersectional identity (Boveda, 2016), a measure designed to elicit student feedback specifically about these identity factors holds promise to more effectively inform educators’ understandings about their students, which can enhance ABP instruction.

Student Identities

Competence reflects an individual’s discernment of being able to successfully execute a task (Ryan & Deci, 2020). There is an extensive body of research demonstrating the crucial role of academic self-competence in achievement outcomes (e.g., Marsh et al., 2006; Möller et al., 2020; Schunk & Pajares, 2005). Although students need mastery experiences to develop competence (Ryan & Deci, 2020), minoritized students are more likely to be in contexts that lack requisite opportunities for mastery experiences (Ladson-Billings, 2006; López, 2010), as well as experiences that make known the value of academic achievement (Taylor & Graham, 2007).

A sense of belonging at school is consistently predictive of both academic and psychological outcomes (Allen et al., 2018). Whereas belonging at school has been historically conceptualized as a sense of psychological membership with the school environment and defined as acceptance, respect, inclusion, and support (Goodenow, 1993), there are structural realities that students encounter that can support or hinder the opportunities they have to belong inside a classroom environment, particularly among students from historically marginalized backgrounds (Gray et al., 2018). Students from minoritized identity groups are more engaged when educators provide them with interpersonal and instructional opportunities to belong and less engaged when fewer of these opportunities are provided (Matthews et al., 2021). Empirical research also demonstrates that opportunities to belong are dynamic and fluctuate as students transition from one classroom to the next (Gray et al., 2022a). Specific educator practices that support opportunities to belong among culturally and linguistically diverse students include opportunities to receive meaningful feedback (Maloney & Matthews, 2020; Yeager et al., 2014); learn communally (Boykin, 1986; Cooper, 2013; Gray et al., 2022b); make real-world connections (Aronson & Laughter, 2016; Martell, 2013); express emotions (Nasir & Abd Ghani, 2013); receive individualized support (Brooms, 2019; Garza, 2009); and share ideas and opinions (Brown, 2017). The connective tissue among these educator practices is that they contribute to an instructional setting in which students are honored and affirmed for who they are and what they bring to the classroom space.

Conceptualized to examine the simultaneous effects of multiple oppressions, Black feminists such as legal scholar Kimberlé Crenshaw (1989) and sociologist Patricia Hill Collins (1990/2000) originally wrote about intersectionality to explain how Black women and other women of color navigated marginalization. Beyond a recognition of diverse identity categories (e.g., race, ethnicity, disability, linguistic origin, and gender), intersectionality frames the complexities involved in the interactions of multiple markers of difference (McCall, 2005) and the systems of power and oppression entangled with these markers (e.g., racism, sexism, ableism, classism, and nationalism). Since intersectionality first entered the academic lexicon three decades ago, education researchers have found it useful for addressing minoritized students’ school experiences (e.g., Haynes et al., 2020).

Boveda (2016), a special education scholar and teacher educator, drew from intersectionality to develop the intersectional competence measure (ICM). Intersectional competence refers to educators’ preparedness to recognize how schooling is implicated in intersecting systems of oppression, collaborate with relevant stakeholders who themselves may navigate multiple social marginalization, and consider sociocultural differences while making instructional decisions (e.g., planning for English learners who are labeled with a disability). In addition to developing and gathering evidence of validity for the ICM, Boveda and colleagues have developed tools to enhance educators’ intersectional competence and recognition of intersectionality in their practice (e.g., Boveda & Weinberg, 2022). Of relevance to the present study are the intersectional competence indicators concerning (a) identifying sociocultural group categories and markers of difference, (b) understanding the interlocking and simultaneous effects of multiple markers of difference, and (c) understanding the systems of oppression and marginalization that occur at the intersections of multiple markers of difference (Boveda & Aronson, 2019). This study expands on prior validity efforts for the construct by including youth participant perspectives for these indicators.

Many researchers have contributed to the understanding of the role of ethnic identity in predicting positive academic and non-academic (i.e., mental wellbeing; O'Leary & Romero, 2011) outcomes among historically marginalized youth. For youth in hypersegregated contexts, which is increasingly the case for Latinx youth (Massey, 2015), the likelihood of perceived discrimination is higher than in more diverse contexts (Brown & Chu, 2012; Seaton & Yip, 2009) and has been linked to lower achievement (DeGarmo & Martinez, 2006; Umaña-Taylor et al., 2004). Researchers exploring the role of ethnic identity, however, have found that marginalized youth with a strong ethnic identity who are aware of, and seek to overcome, obstacles are more likely to experience positive academic outcomes (Altschul et al., 2008). This is consistent with the finding that teachers who value, rather than ignore, cultural differences are more likely to support positive ethnic identity development among Latinx youth, which has been found to be directly related to academic achievement outcomes (Brown & Chu, 2012).

Taken together, there is a robust body of literature supporting a focus on student identities as outcomes in and of themselves. This underscores the importance of teachers developing an understanding of students’ identities and of ways to enhance their instruction. To enhance teachers’ development of ABP, we address the following research questions as evidence of validity (AERA, APA, NCME, 2014; Kane, 2013):

1. How should existing items from identity measures be modified to capture students’ voice regarding teachers’ instruction?
2. To what extent does centering student voice lead to the item-to-factor structure of the measure?
3. What is the stability of the measure across multiple administrations?
4. What are recommendations from educators for the use and practicality of the measure?

Method

Setting and Sample

The study took place in an urban school district in Arizona where approximately 65% of the students identify as Latinx, 6% as Black, and 4% as Indigenous (Tohono O’odham and Pascua Yaqui), and close to 15% receive special education services. Approximately 46% of the student population in the district qualifies for free or reduced lunch and close to 5% of students are classified as English Learners. In terms of the teacher demographics in the district, 59% are identified as White; 31% Latinx; 4% Black; 4% Asian/Pacific Islander; and 2% Indigenous. The district has an established research–practice partnership between its educators and university-based scholars who have expertise in ABP. The partnership contributed to a strategic plan to align all district efforts with ABP. As a result, the district has provided professional development to all of its teachers and leaders over the past decade. Teachers with more than two years of professional development are eligible to become expert teachers who mentor other teachers engaging in the district’s professional development. Expert teachers receive additional rigorous ongoing professional development that includes a two-day introduction on the foundational elements of teaching ABP. This involves a thorough examination of curricular materials, the history of the courses as developed in the district, theories undergirding the courses, and various strategies used in the classes. The district also provides all teachers with monthly training and updated sessions offered on Saturdays.

Due to the iterative nature of the study, the participants varied in each stage of the study. In the first phase, six expert teachers across grade levels (grades 3 through 8) were identified and recruited in consultation with the district to provide feedback on the developmental appropriateness and cultural relevance of the items for students. The expert teachers were asked to identify students for cognitive interviews of the resulting items who would represent variability in terms of identities. In Phase II, a total of 19 students across classrooms received parent consent and assented to participating. They represented grades 3 (n = 3), 4 (n = 8), and 5 (n = 8) and identified as Latinx (n = 1), African American (n = 3), Multiracial (n = 2), and White (n = 3). In the final phase of the study reported here, N = 860 students across 42 classrooms completed the survey. Student demographic information is presented in Table 1.

Procedure

Phase I

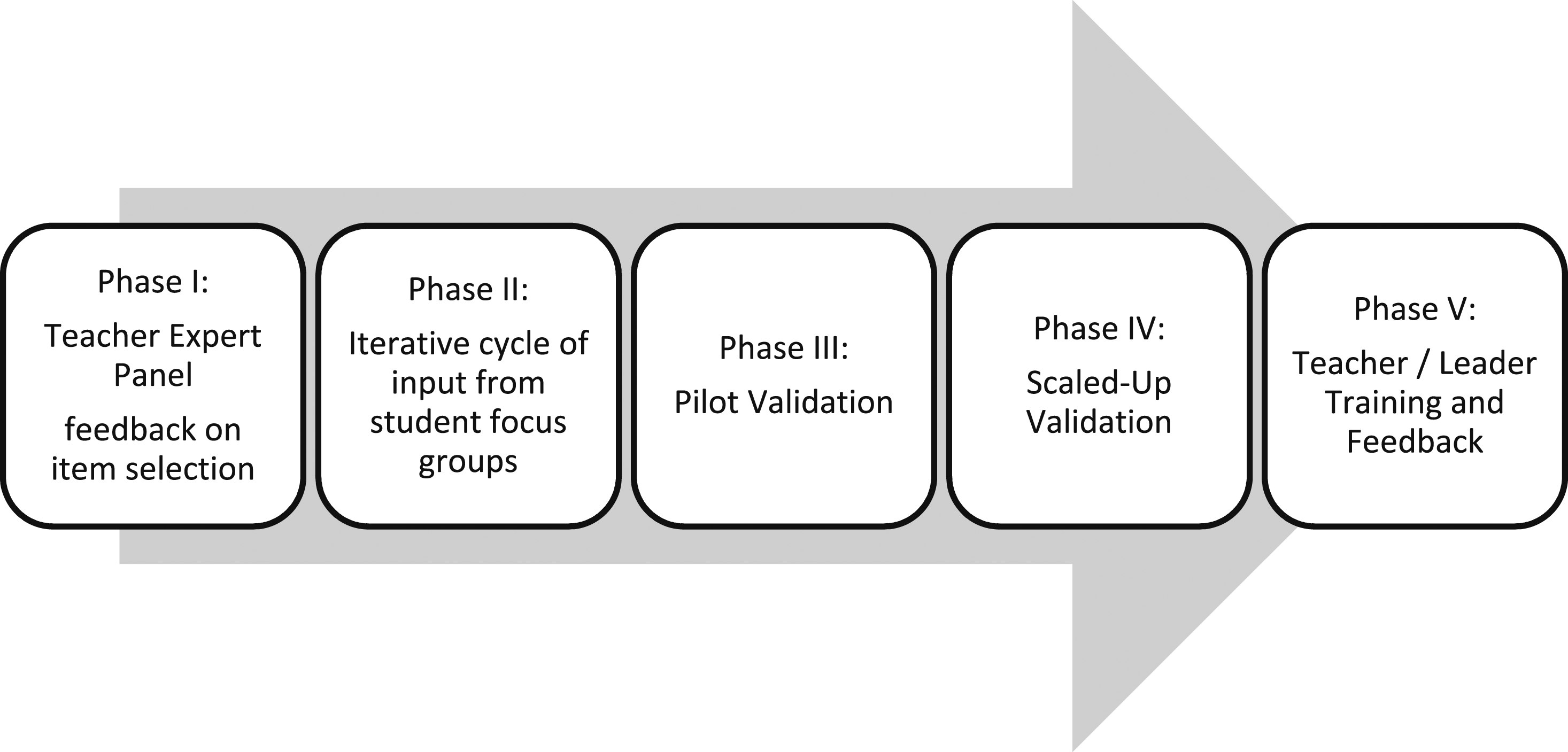

Figure 1. Validation approach.

Prior to engaging in the iterative validation process (see Figure 1), the research team collectively reviewed items from existing validated measures of academic self-competence (Marsh, 1992), ethnic identity (Umaña-Taylor et al., 2004), belonging (Gray et al., 2020), and intersectionality (Boveda, 2016). After identifying and revising the items to reflect a prompt eliciting immediate student feedback based on classroom instruction (e.g., from “I am good at most academic subjects” to “In today’s class, I did well”), the expert panel consisting of expert ABP teachers of grades 3 through 8 (N = 6, 1 per grade level) provided feedback on each of the items. Specifically, following a similar survey development protocol to Gray et al. (2022a), expert teachers were asked to reflect on the following questions: 1. What is this question trying to find out from students? 2. Is the question clear? 3. To what extent (and how) is the item practically relevant for you and your classroom? 4. Is the question redundant?

Based on the teachers’ responses to the questions, the items were edited to be developmentally appropriate and to consider the student population.

Phase II

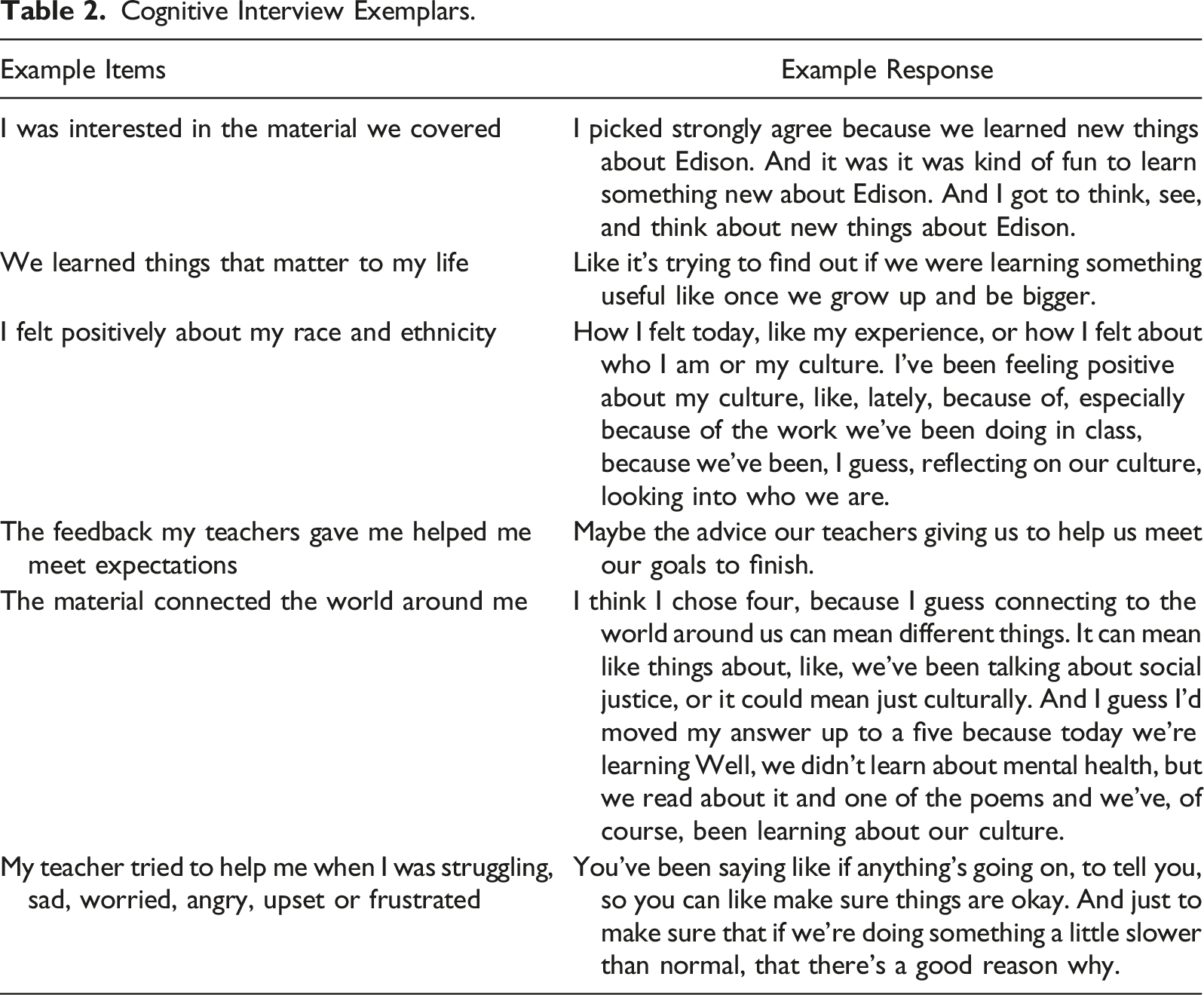

The expert teachers then identified students to engage in the following cognitive interview protocol (Karabenick et al., 2007) for each item of the measure after teachers’ feedback was incorporated: (1) Please read this question out loud; (2) What is this question trying to find out from you? (3) Which answer would you choose as the right answer for you? (4) Can you explain to me why you chose that answer?

Phase III

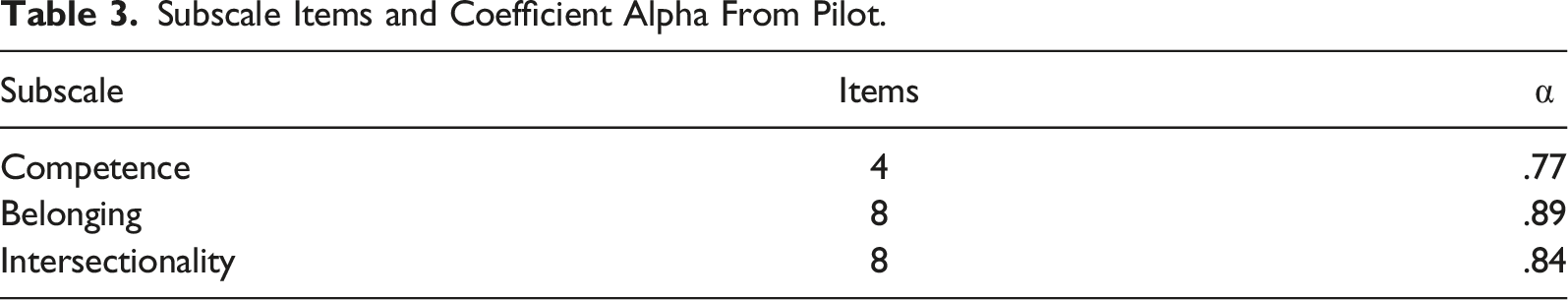

Student responses to the cognitive interviews were transcribed and coded for analysis to determine further edits to the items. Item/scale analyses were carried out to determine internal consistencies for each subscale.

Phase IV

To recruit a larger, representative sample of the district for the next phase of the validation, members of the research team presented information during a district leadership meeting to inform school site leaders about the project and the process required to consent parents/caregivers and students. A follow-up meeting with district personnel identified school sites for recruitment and consenting, which resulted in the identification of 12 schools with identified staff (N = 10) who would lead the oversight of distributing and collecting consent forms. Once student permission was received, administration cycles were scheduled with district guidance to avoid overlap with any other district assessments scheduled.

The district entered the survey items into its assessment platform, which also hosts benchmark assessments (i.e., students are accustomed to taking surveys on the platform). Students responded to the questions on the measure (see Appendix) with the prompt “In today’s class…” using a 9-point Likert-type scale that ranged from −4 “Strongly Disagree” to +4 “Strongly Agree.” Students completed between 1 and 4 administrations over a 6-week period, overseen by the respective classroom teachers. We then carried out analyses of the measure (described in the section entitled Data Analysis below).

Phase V

After carrying out analyses of the administrations of the measures, members of the research team shared findings with N = 40 educators during a district-wide professional development conference and elicited feedback in collaboration with the district. Namely, the district asks all participants of the conference to answer surveys after conference sessions that include Likert-type prompts ranging from 5 = Strongly Agree to 1 = Strongly Disagree. The prompts include “I would like to learn more about this topic” and “The material will inform my instruction and/or understanding.” Additional feedback elicited for the validation of the measure included questions about how educators would use the measure and/or items in their own practice; how often they would envision using the measure; and how the measure could be made more useful.

Data Analysis

Phase I

To modify the identity items, we conducted a qualitative content analysis (Schreier, 2012) of the resulting text from teachers’ feedback. In our analysis, we focused on the extent to which the modifications of the items were consistent with the respective identity domains reflected in our review of the literature. Four members of the research team independently reviewed teachers’ feedback in their entirety and coded items consistent with the identity domains. No coding discrepancies were found (100% agreement). The members of the research team then discussed redundant items and reached consensus on the items to keep for the next phase of validation.
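The agreement figure reported above can be illustrated with a short sketch. It assumes each coder assigns exactly one domain label per item; the function name `percent_agreement` and the example labels are hypothetical and are not taken from the study's coding records.

```python
def percent_agreement(codes_by_coder):
    """Percentage of items on which every coder assigned the same code."""
    n_items = len(codes_by_coder[0])
    if n_items == 0 or any(len(c) != n_items for c in codes_by_coder):
        raise ValueError("every coder must code the same non-empty set of items")
    # zip(*...) regroups the labels item by item across coders
    agreed = sum(1 for labels in zip(*codes_by_coder) if len(set(labels)) == 1)
    return 100.0 * agreed / n_items


# Hypothetical labels from four coders for five items (illustrative only)
one_coder = ["belonging", "competence", "intersectional", "belonging", "competence"]
coders = [list(one_coder) for _ in range(4)]
agreement = percent_agreement(coders)
print(agreement)  # 100.0
```

Simple percent agreement does not correct for chance; it is used here only because the text reports agreement in that form.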

Phase II

To ensure the items reflected the intended factors, expert teachers conducted cognitive interviews with focus groups that were recorded and transcribed. Four members of the research team independently reviewed the focus group discussions in their entirety to identify if any of the items introduced interpretation issues for students. All of the members of the research team agreed that the students’ discussions reflected consistency with the item domains.

Phase III

We examined the internal consistencies of the four subscales using coefficient alpha. We also evaluated individual items for possible removal by examining the alpha-if-item-deleted coefficients, and we reviewed descriptive statistics to determine the extent to which the entire response scale was used.
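As a rough illustration of these two statistics, the sketch below computes coefficient alpha and the alpha-if-item-deleted values for one subscale. The function names and the `pilot` responses are hypothetical; the data are invented for illustration and coded on the −4 to +4 response scale, and the study's own software and data are not shown in the text.

```python
from statistics import variance


def coefficient_alpha(rows):
    """Coefficient alpha; rows holds one list of item scores per respondent."""
    k = len(rows[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    item_vars = [variance([r[i] for r in rows]) for i in range(k)]
    total_var = variance([sum(r) for r in rows])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


def alpha_if_item_deleted(rows):
    """Alpha recomputed with each item left out in turn."""
    k = len(rows[0])
    return [
        coefficient_alpha([[v for j, v in enumerate(r) if j != i] for r in rows])
        for i in range(k)
    ]


# Hypothetical pilot responses: six students, four items (illustrative only)
pilot = [
    [4, 3, 4, 2],
    [2, 2, 3, 1],
    [-1, 0, -2, 3],
    [3, 4, 4, 0],
    [0, 1, -1, 2],
    [-3, -2, -4, 4],
]
print(round(coefficient_alpha(pilot), 2))
print([round(a, 2) for a in alpha_if_item_deleted(pilot)])
```

An item whose removal raises alpha noticeably, as the fourth item does in this invented data set, is a candidate for closer review rather than automatic deletion.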

Phase IV: Scaled-Up Validation

Item-Factor Structure

Given that the items used in the measure originated from existing validated measures reflecting belonging, competence, and intersectionality (including ethnic identity), we conducted a confirmatory factor analysis in Mplus Version 8 to examine the extent to which identity items loaded onto their hypothesized latent variables. Specifically, we tested our hypothesized three-factor model, in which Belonging, Competence, and Intersectional Identities were specified as latent variables, against a single-factor model in which all items loaded onto a single latent variable.
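To make the model specification concrete, the following sketch counts degrees of freedom for a covariance-structure CFA. It assumes simple structure (each item loads on one factor), uncorrelated residuals, and freely correlated factors with their scales fixed. The function name is hypothetical, and the 7/7/7 split of the 21 items is an assumption for illustration only; under these assumptions the count depends only on the total number of items and the number of factors.

```python
def cfa_degrees_of_freedom(items_per_factor):
    """Degrees of freedom for a simple-structure CFA with correlated factors."""
    p = sum(items_per_factor)           # observed indicators
    m = len(items_per_factor)           # latent factors
    unique_moments = p * (p + 1) // 2   # variances and covariances among items
    # Free parameters: p loadings, p residual variances, and m(m-1)/2 factor
    # covariances, with each factor variance fixed to 1 to set its scale.
    free_parameters = p + p + m * (m - 1) // 2
    return unique_moments - free_parameters


# Hypothetical split of the 21 items across the three factors
df_three_factor = cfa_degrees_of_freedom([7, 7, 7])
print(df_three_factor)  # 186
```

The result agrees with the degrees of freedom reported for the three-factor model in the Results.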

Differences Based on Demographic Variables

We carried out analyses of variance with Satterthwaite’s method for the t-tests to examine systematic differences on the basis of demographic variables and the total identity scores as the dependent variable. Student-level administrative data included students’ grade, gender (0 = male, 1 = female), English Learner (EL) status (0 = not EL, 1 = EL), and whether a student had an Individual Education Plan (IEP; 1 = Yes, 0 = No). Also included were students’ race/ethnicity (Hispanic, White Indigenous, African American, Multiple, and White).

Stability

To examine the extent to which items capture both general and personal feelings about the classroom situations, we created total scores for the 21-item scale and examined how they fluctuated across time by grade, fitting linear mixed models by restricted maximum likelihood (REML) with Satterthwaite’s method.
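A simpler stand-in for the mixed-model analysis can convey the idea of within-student stability. The sketch below estimates a one-way intraclass correlation from ANOVA mean squares. It is not the REML procedure described above, and it assumes a balanced design in which every student has the same number of administrations. The function name and the total scores are hypothetical.

```python
from statistics import mean


def icc_oneway(scores_by_student):
    """One-way ICC: share of total-score variance that lies between students."""
    n = len(scores_by_student)
    k = len(scores_by_student[0])
    if n < 2 or k < 2 or any(len(s) != k for s in scores_by_student):
        raise ValueError("needs a balanced design: >= 2 students, >= 2 administrations")
    grand = mean([x for s in scores_by_student for x in s])
    student_means = [mean(s) for s in scores_by_student]
    ms_between = k * sum((sm - grand) ** 2 for sm in student_means) / (n - 1)
    ms_within = sum(
        (x - sm) ** 2 for s, sm in zip(scores_by_student, student_means) for x in s
    ) / (n * (k - 1))
    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)


# Hypothetical 21-item total scores (possible range -84 to +84), four administrations
totals = [
    [40, 44, 38, 42],
    [-10, -6, -12, -8],
    [15, 20, 12, 17],
]
print(round(icc_oneway(totals), 2))
```

Values near 1 indicate that students answer consistently across administrations; lower values indicate more class-to-class fluctuation within students.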

Phase V

As a final step in the validation, we sought out feedback from teachers in the partnering district to determine the practical utility of the measure. Members of the research team examined notes from the discussion with teachers, as well as responses to the surveys.

Results

Phase I: Teacher Feedback

When asked to provide feedback on the original items of the measure, the expert teachers consistently raised concerns about negatively worded items, recommending their removal. The expert teachers provided recommendations for words they believed students may not be familiar with in the context of the items (e.g., beneficial) and also provided suggestions for rewording of items (e.g., original item “I have learned about my ethnicity by doing things such as reading (books, magazines, newspapers), searching the Internet, or keeping up with current events” was modified to “the material taught me about my race/ethnicity”). After the research team carefully reviewed all of the teacher feedback, some items were removed due to redundancy; other items were reworded based on teacher recommendations; negatively worded items were removed; and additional modifications to items (e.g., word choice) were made.

Phase II: Student Cognitive Interviews

Cognitive Interview Exemplars.

Phase III: Item/Scale of Pilot Administration

Subscale Items and Coefficient Alpha From Pilot.

Phase IV: Theory-Driven Approach to Factor Analysis and Reliability

Confirmatory Factor Analysis

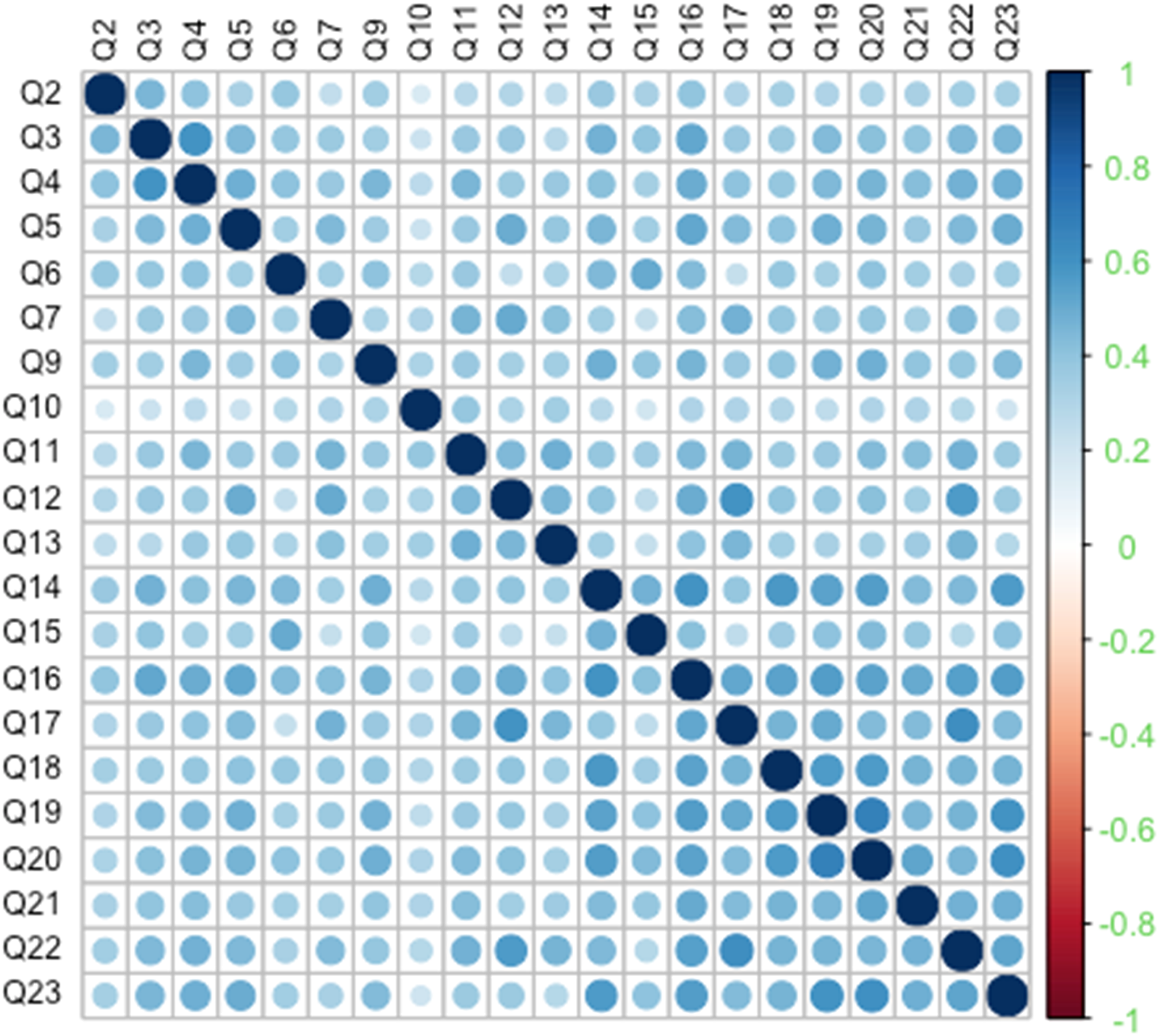

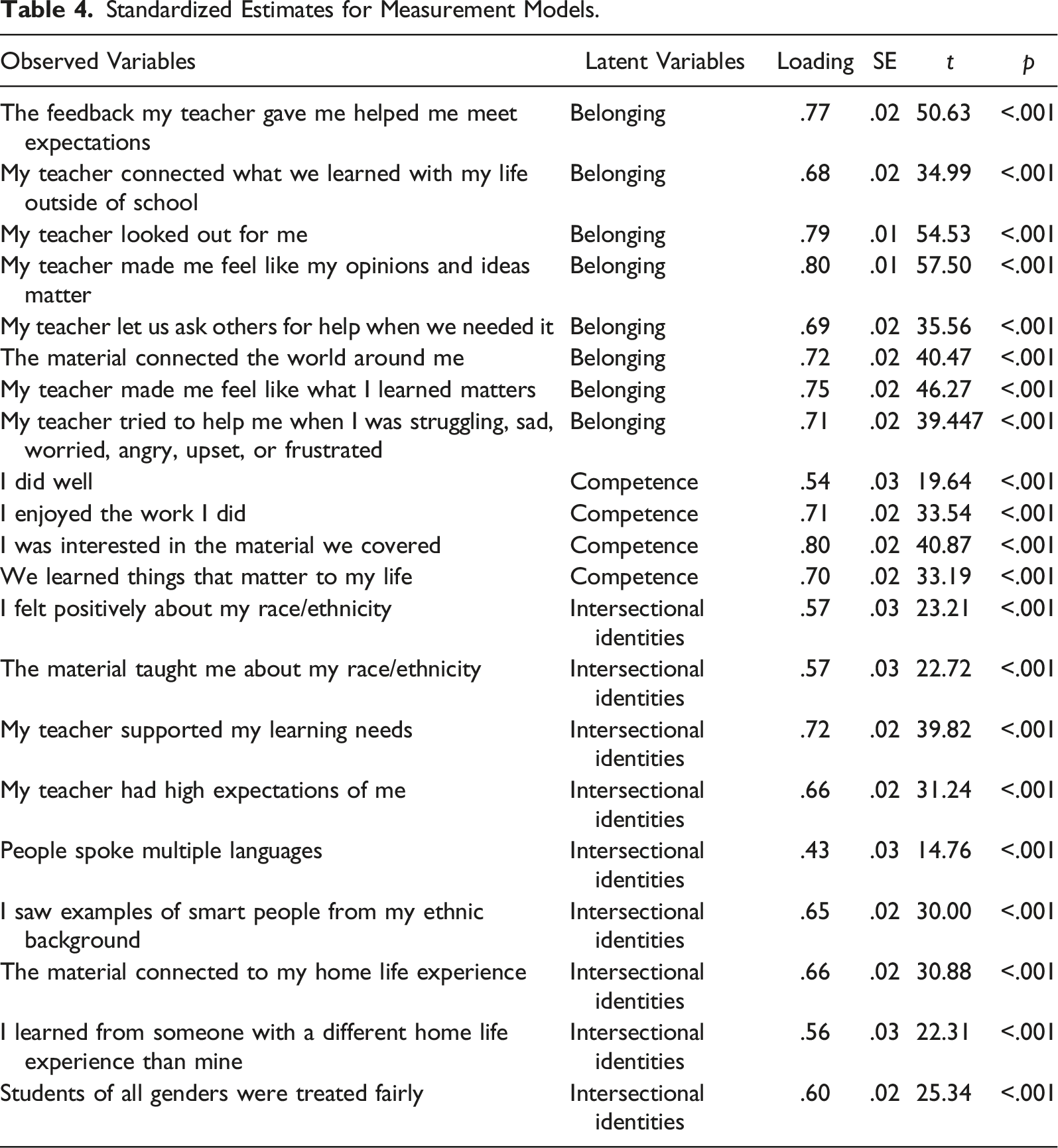

Figure 2. Correlations among items, across all 1585 responses.

Table 4. Standardized Estimates for Measurement Models.

After the iterative collection of measures was completed (we aimed for 4 administrations), a total of 1585 responses were obtained from N = 860 distinct students (see Table 1). The resulting sample size exceeded the minimum number of cases required given the number of factors and respective indicators (Jackson et al., 2013). Correlations among items are presented in Figure 2. In our confirmatory factor analysis to examine the extent to which identity items loaded onto their hypothesized latent variables, we tested our hypothesized three-factor model. Theoretically, the three-factor model should demonstrate superior fit relative to the single-factor model, as demonstrated by a lower chi-square statistic and a lower Akaike Information Criterion (AIC). Results supported this prediction. The three-factor model demonstrated superior fit across several indices (three-factor model: χ² (186) = 1109.18, p < .001, SRMR = .05, RMSEA = .08, CFI = .90, TLI = .88, AIC = 71764.73; one-factor model: χ² (210) = 9195.55, p < .001, SRMR = .38, RMSEA = .19, CFI = .39, TLI = .36, AIC = 76326.85). In addition, the measurement model was statistically supported. As shown in Table 4, all observed variables in the three-factor model loaded significantly on the appropriate latent variables, p < .001.
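The size of the gap between the two models can be read directly from the reported statistics. The sketch below only does the arithmetic on the values given in the text; the dictionary layout and the helper name `fit_differences` are hypothetical, and no significance test is implied.

```python
# Fit statistics copied from the values reported in the text
three_factor = {"chi2": 1109.18, "df": 186, "aic": 71764.73}
one_factor = {"chi2": 9195.55, "df": 210, "aic": 76326.85}


def fit_differences(comparison, hypothesized):
    """Differences in chi-square and AIC (comparison minus hypothesized)."""
    return {
        "delta_chi2": round(comparison["chi2"] - hypothesized["chi2"], 2),
        "delta_aic": round(comparison["aic"] - hypothesized["aic"], 2),
    }


diffs = fit_differences(one_factor, three_factor)
print(diffs)  # {'delta_chi2': 8086.37, 'delta_aic': 4562.12}
```

Positive differences mean the one-factor model has the larger chi-square and AIC, which is the pattern the text describes as favoring the three-factor model.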

Internal Consistency

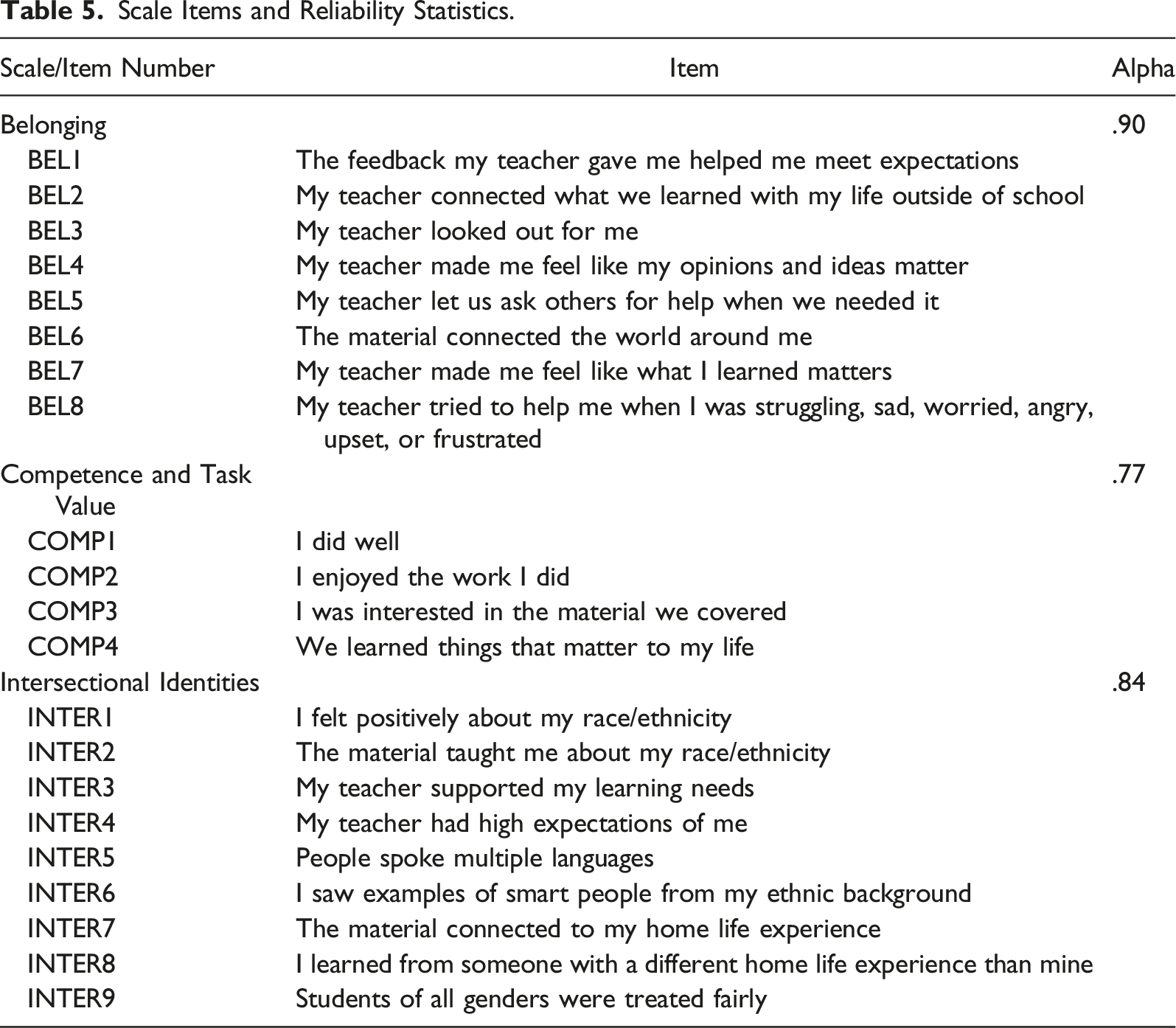

Scale Items and Reliability Statistics.

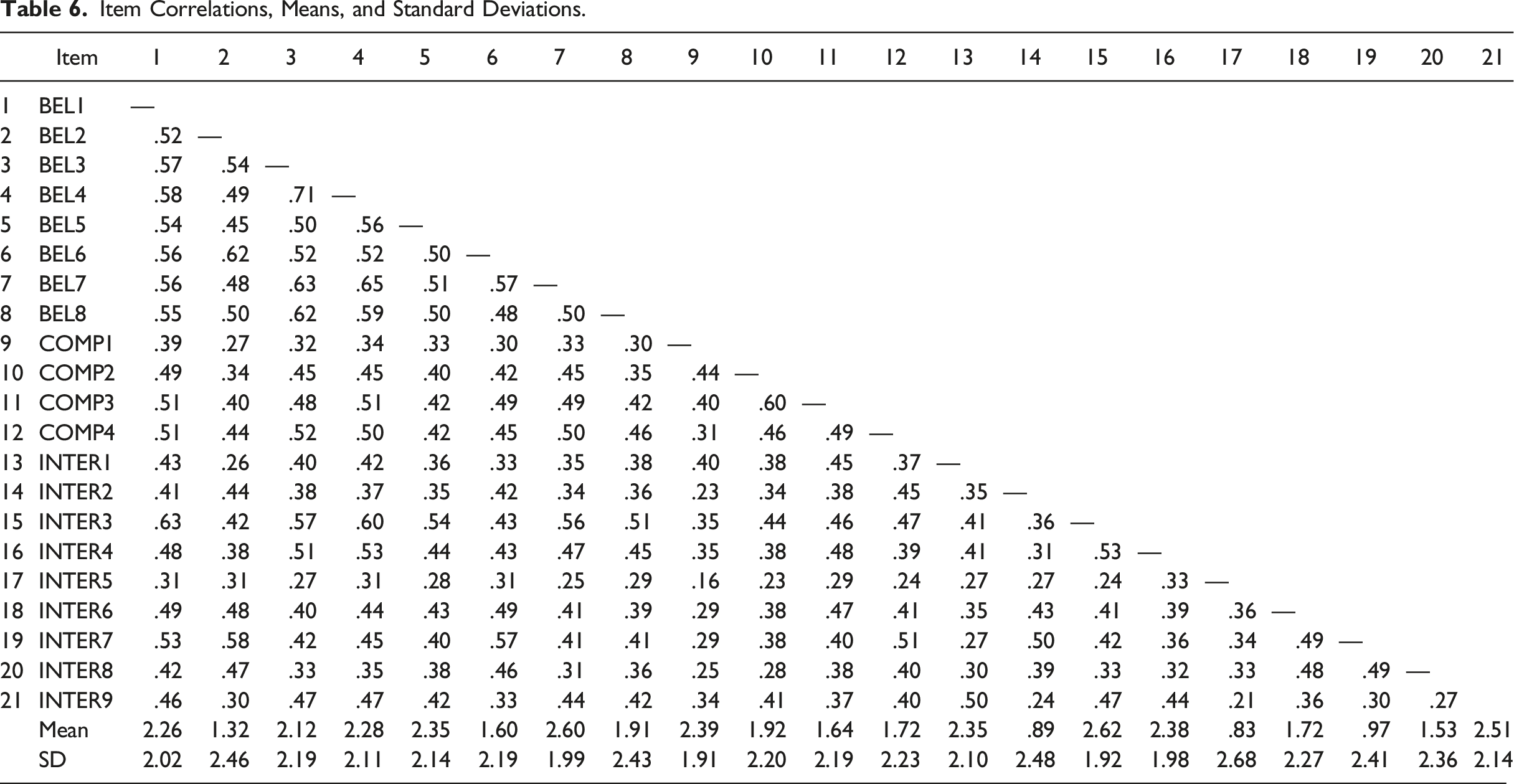

Item Correlations, Means, and Standard Deviations.

Demographic Differences in Item Responses

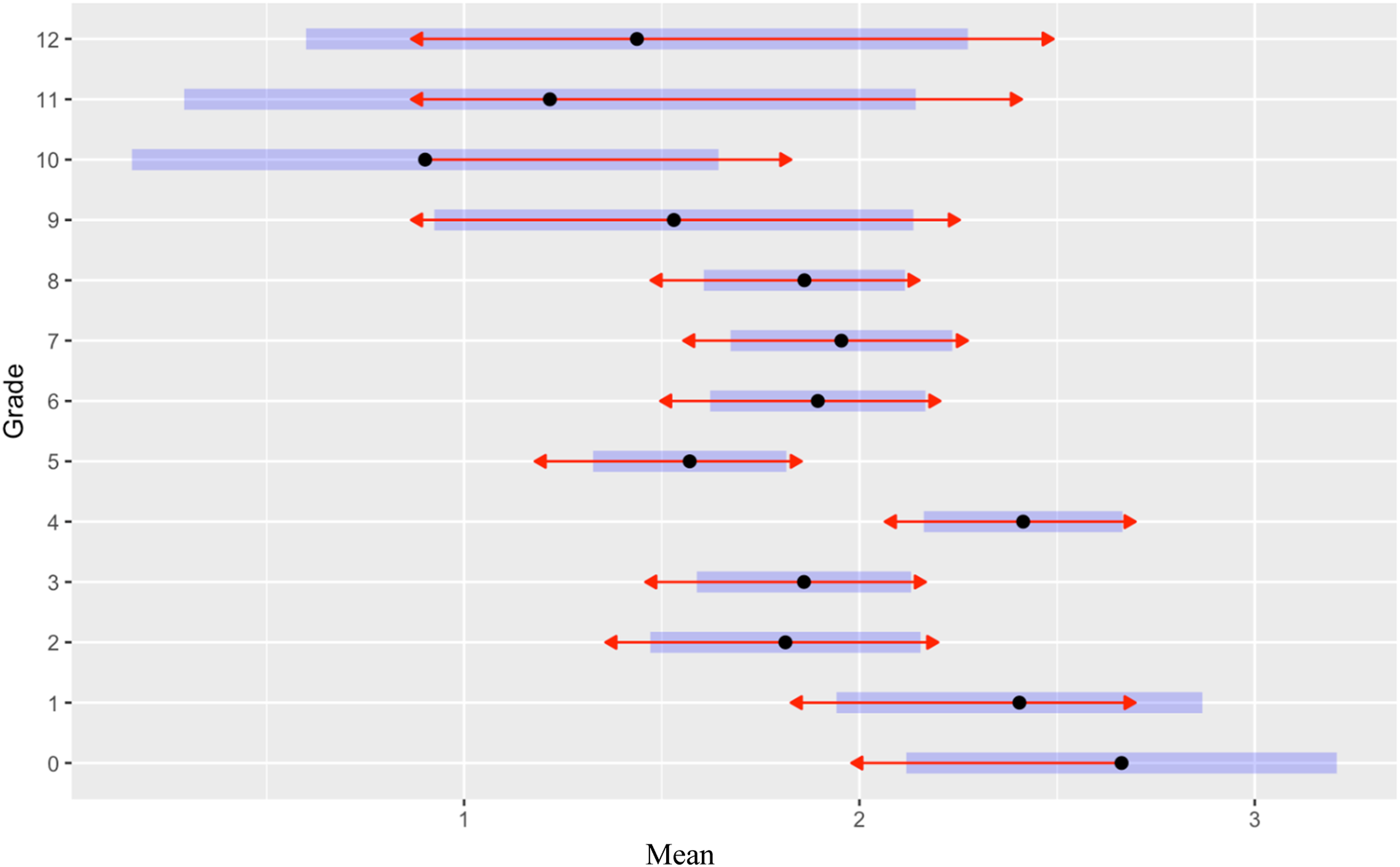

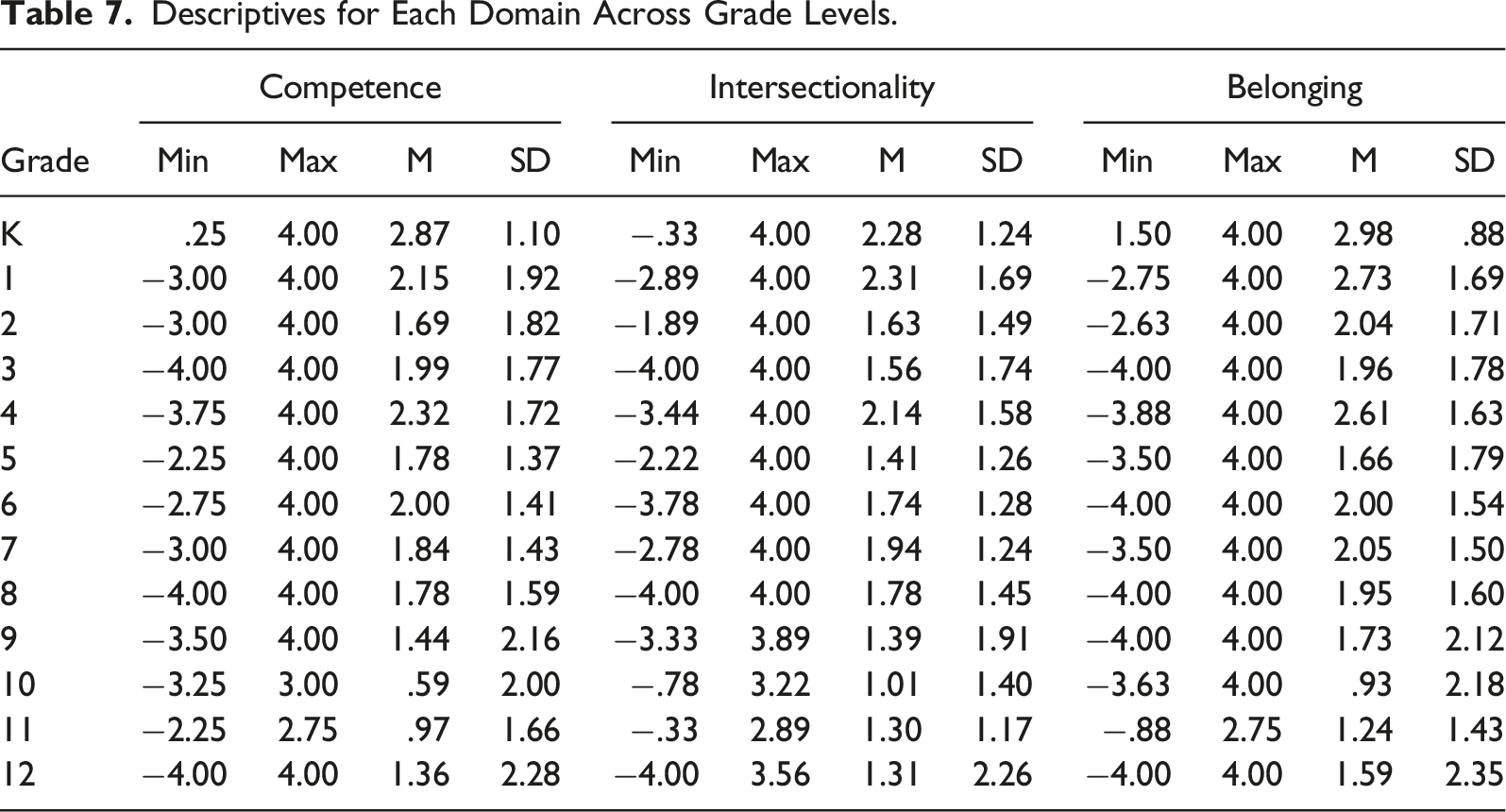

Figure 3. Agreement with items by grade level across 4 administrations.

In line with developmental considerations, the extent of positive agreement with the items decreased systematically with age (F = 13.37, p < .001). That is, students in the early grades generally reported more agreement with the items and students in the later grades were more mixed in their evaluations (see Figure 3). Although there were no systematic gender differences in overall level of agreement with the items (F = .71, p = .40), there were some systematic differences in overall level of agreement related to ethnicity (F = 2.62, p = .02). Latinx and Multiracial students tended to agree a bit more with the items, .40 and .80 points higher on average, respectively, than White students.

Descriptives for Each Domain Across Grade Levels.

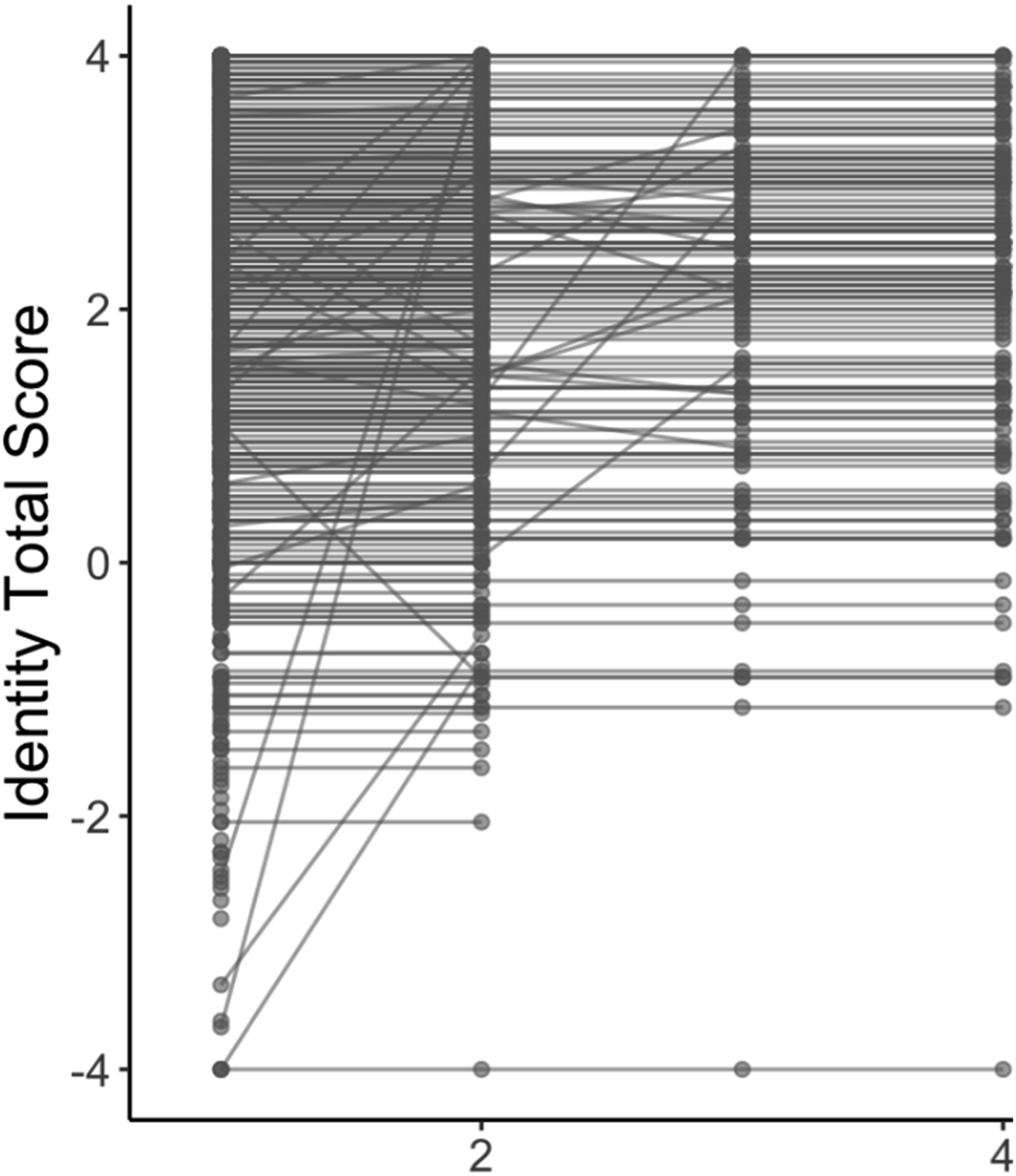

Within-Student Changes Across Administrations

To examine class-to-class variability of responses, teachers of participating classrooms were asked to administer the survey to students on four occasions. Of the N = 860 participating students, 15% (n = 125) had completed all 4 cycles of iterative administrations (after dropping n = 5 that completed 8). We examined patterns of responses for all students in grades K through 12 by conducting a linear mixed model fit by REML by grade. We found that students were generally consistent in their responses despite administrations of the measure occurring one to two weeks apart, accounting for 30% of the variance across administrations. There were very few exceptions to these patterns (see Figure 4).

Figure 4. Within-Student Variation across 4 administrations.

Phase V: Formative Assessment Interpretations From Teachers in Our Partnering School District

The district’s interest in using the measure as a way to address limitations with teacher observation measures that are not aligned with ABP led to an invitation to present the background of the validation and use to teachers during a regularly scheduled district conference. Teachers (N = 40) voluntarily signed up to attend the session. After being provided a summary of the validation process, teachers were asked to provide feedback that should be considered with formative measures on student identity. Educators raised the issue that the district’s formative assessment platform has a negative association due to the large number of assessments students are required to take. The teachers also raised the problem of assessments having become “a box to check,” which has resulted in the failure to use assessments to guide instruction and/or provide students with feedback on their progress. Teachers also lamented that students generally do not see value in completing surveys because they are asked to complete many assessments and surveys but never see action taken when they do provide feedback. The disconnect between participating in surveys to give feedback and the lack of action taken results in students becoming skeptical as to whether it is worth their time to respond to surveys at all.

In addition to issues with assessments writ large, educators expressed appreciation at the positively worded items that were directly connected to the student experience in ways that could enhance their practice. Specifically, the positivity of items (e.g., “I saw people from my background that were smart” and “This was personally meaningful to me”) makes the measure a useful tool to guide instruction and provide opportunities to engage in authentic and personally meaningful conversations with students about the content. When asked how they envision they might use the measure, educators provided various scenarios. Some raised the benefit of being able to use the measure to guide instruction within the school year and others envisioned using it to inform revisions to their curriculum for the following year.

Participants were asked by the district to fill out a survey at the end of the session, with 50% of participants providing written feedback (n = 20). Responses reflected an overwhelmingly positive disposition toward the applicability and use of the measure. To the prompts, “I would rate the session as valuable” and “The content of this session will inform my instruction or understanding,” almost all educators (95%) responded “strongly agree” or “agree” (with 65% “strongly agree”), and one responded “not sure.” Twenty percent of respondents expressed the need for more time to practice using the measure, and 100% expressed a desire to learn more about the topic. In terms of the open-ended feedback provided, educators responded to questions about how they envision applying what they learned, how often they would use the items or measure to inform their practices, what changes would make the measure more useful, and anything else that should be considered.

Consistent with responses provided by educators during the session, educators overall saw the incorporation of the items and measure as very useful and envisioned using it in their own practice. There was, however, substantial variation in how often they would use it, with some respondents stating that they would use the measure at the end of each semester, others 2–3 times per quarter, and still others weekly. Many respondents expressed the need for additional time and practice with the measure. In one salient response to the question “What would make the measure more meaningful to you?”, an educator stated that having information about what students themselves would like to see from a survey would make the measure more meaningful. Another educator suggested the use of the items as journal prompts for students.

Discussion

This study reflects the validation of an asset-based measure of students’ multiple identities as they relate to ethnicity/race, disability, academic (self-concept), and belonging in the context of a district with a comprehensive ABP curriculum. The aims of the validation were to evaluate a measure that could be used formatively to inform educators’ instructional planning in ways that affirm students’ identities. Although the extant research on identity development and ABP supports the validity of the identity factors in the measure, this study reflects additional validation procedures that include teacher feedback and student cognitive interviews; internal consistency of the items; as well as teacher feedback on the feasibility and use of the measure to inform their practice. Below, we discuss the validity evidence generated by the study for the intended use of the measure; implications for formative use; conditions required for the optimal use of the measure; and suggestions for future research.

Validity Evidence

Phases I and II

We relied on guidance provided by the Standards (AERA, APA, NCME, 2014) as well as Kane’s (2013) discussion of the argument-based approach to validation wherein validity “depends on how well the evidence supports the proposed interpretation/use” of the resulting scores (Kane, 2013, p. 21). As part of the validation, the extant literature on ABP and students’ self-concept, intersectional identities, and belonging “constitute the core of the [interpretation/use argument]” (Kane, 2013, p. 9). The present study, however, augments validity evidence by considering teacher and student feedback related to the intended interpretation and use of the measure, consistent with the Standards’ category of evidence based on response processes (AERA, APA, NCME, 2014).

Phases III and IV

The present study also provides validity evidence as it pertains to internal structure and item-factor structure, in addition to the examination of the stability of the measure in the context of educators aiming to provide ABP instruction that affirms student identity, supporting the interpretation of scores (Phases III and IV; AERA, APA, NCME, 2014).

Given that the measure is designed to be used in K–12 contexts, we also carried out analyses to determine developmental differences in terms of agreement with items. Consistent with developmental considerations, we found that students in the early grades generally reported more agreement with the items than students in later grades, who were also more mixed in their evaluations. Another consideration of the intended interpretation and use of the measure is that it aims to gauge identity in the context of ABP. As such, we examined demographic differences in responses among students. The findings that Latinx and Multiracial students tended to agree slightly more with the items are consistent with the theoretical underpinnings of ABP as a pedagogical approach that affirms marginalized students, contributing to the validity evidence of the measure. Moreover, the lack of systematic differences among students who had an Individual Education Plan as well as those who were labeled as English learners (with the exception of higher belonging scores) also contributes to the validation of the measure. That is, in ABP contexts, students who are at risk of increased marginalization responded in ways that suggest they are not marginalized in relation to peers.

Phase V

As a final step in the validation process, we explored the extent to which educators found the measure a practical and useful tool to enhance their instruction consistent with our intended use (AERA, APA, NCME, 2014; Kane, 2013). Overall, feedback from educators familiar with ABP provides additional validity evidence for the intended use of the measure, which we expand on below.

Implications

The educators affirmed the utility of the measure and provided feedback regarding how often and how they might use it. For example, teachers may have students complete the measure after a lesson that centers ABP (that the teacher aims to refine) and carry out a discussion by asking students why they selected their answer on a particular item or set of items, consistent with the procedure in Phase II of the validation process. Teachers could carry out this process multiple times a week in the beginning of the year as a way to establish iterative feedback procedures in the classroom, establish rapport, and develop trust by ensuring that the information elicited results in changes in instruction that affirms students’ comments.

Another way teachers might use the measure is to evaluate factor-level and item-level data for each student, as well as scores disaggregated by race and gender, so that educators can develop a more granular and concrete understanding of their students’ experiences. Teachers might assess items and/or scores on the subscales over several academic subject areas to understand fluctuations in students’ identity affirmation levels across the school day (or across time). When teachers administer the measure several times over the course of an instructional unit, they can evaluate results in ways that provide information about events or curricular experiences that lead to dips and spikes in responses over time, leading to curricular refinement or revised pedagogical moves.
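The kind of tracking described above could be sketched as follows. The record layout, the domain labels, the 1 to 5 score range, and the dip threshold are all hypothetical choices made for illustration; none of them is prescribed by the measure.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical responses: one record per student, domain, and administration.
RESPONSES = [
    {"student": "s1", "domain": "belonging", "administration": 1, "score": 4},
    {"student": "s2", "domain": "belonging", "administration": 1, "score": 5},
    {"student": "s1", "domain": "belonging", "administration": 2, "score": 3},
    {"student": "s2", "domain": "belonging", "administration": 2, "score": 3},
    {"student": "s1", "domain": "belonging", "administration": 3, "score": 4},
    {"student": "s2", "domain": "belonging", "administration": 3, "score": 4},
    {"student": "s1", "domain": "competence", "administration": 1, "score": 4},
    {"student": "s2", "domain": "competence", "administration": 1, "score": 4},
]


def domain_means_by_administration(records, domain):
    """Average the scores for one domain within each administration."""
    buckets = defaultdict(list)
    for rec in records:
        if rec["domain"] == domain:
            buckets[rec["administration"]].append(rec["score"])
    return {adm: mean(scores) for adm, scores in sorted(buckets.items())}


def flag_dips(means_by_adm, threshold=1.0):
    """List administrations whose mean fell by at least `threshold`
    relative to the previous administration."""
    ordered = sorted(means_by_adm.items())
    dips = []
    for (_, previous), (adm, current) in zip(ordered, ordered[1:]):
        if previous - current >= threshold:
            dips.append(adm)
    return dips


belonging_means = domain_means_by_administration(RESPONSES, "belonging")
belonging_dips = flag_dips(belonging_means)
print(belonging_means)
print(f"Administrations with a dip in belonging: {belonging_dips}")
```

A flagged administration is a prompt for a conversation with students about that lesson, not a judgment about the lesson itself. The same grouping function could be keyed on a demographic field to disaggregate scores.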

Conditions Required for Optimal Use

The teacher comments point to various conditions that need to be present to engage students in ways that are conducive to formative assessment for teachers. One of the most salient is that students must see value in answering items from the measure. Mitigating issues with perceptions of low utility might include explicit communication to students about the purpose of the measure; how it will be used; and why students’ voices are important. Once the purpose of answering items on the measure is established, however, students must also see evidence that teachers have acted on their feedback.

There are also considerations that contribute to determining the starting point for the use of the measure. For the measure to be used consistent with the intended use and interpretation of the scores, educators will need to have a requisite understanding of the theoretical underpinnings of belonging, intersectionality, and self-competence as they relate to ABP instructional practices and student outcomes. For teachers who are beginning their trajectory to develop ABP, it may prove useful to develop rapport with students, using the belonging and competence items as a point of entry. The process should be iterative and allow the opportunity for teachers and students to co-construct meaning of the purpose of instruction and the extent to which students identify with the instruction. Teachers who are further along the trajectory of developing ABP may want to use all the items as a way to evaluate the extent to which their aims were received by students as intended. It is important to consider the context of the validation and that teachers in the district are exposed to many opportunities to develop ABP. Accordingly, recommendations such as using the measure only at the end of a unit or semester may not be the ideal practice for teachers who are earlier in their development of ABP.

Future Research

Research on ABP has contributed to our understanding of the important role it has in promoting student academic outcomes (e.g., Aronson & Laughter, 2016; López, 2017). Despite the promise of ABP, there is a paucity of research on how teachers can formatively enhance their skills. The present study is the first attempt (to our knowledge) to validate a measure that is a departure from a reliance on those who are not the intended recipients of instruction, leveraging students’ perspectives of teaching for teachers who wish to enhance ABP practices. To contribute to the argument-based approach (Kane, 2013), future research is needed to add to a comprehensive understanding of how the measure can be used to inform teacher practice, as well as for whom and under what circumstances.

In addition to future work detailing how the measure might enhance ABP instruction, future research is needed with samples that vary in terms of the focus of culturally responsive practices to determine sensitivity to instruction eliciting students’ various and intersecting identities. Namely, the norming sample was in a district with expert teachers who had received robust professional development in ABP aligned with the district mission. To determine whether identities vary across contexts, it would be beneficial to carry out research with teachers who vary in terms of their expertise in ABP, as well as the extent to which the measure is used to inform instruction.

Conclusions

Research has provided promising evidence on the effectiveness of endeavors in preservice teacher preparation, such as coursework focused on social justice, which can reduce implicit biases (e.g., Kumar & Hamer, 2013). Most educators, however, do not receive the kind of preparation that reduces biases and prepares them for asset-based pedagogy (Valenzuela, 2016). Nevertheless, recent studies illustrate that motivation toward being unprejudiced is an important consideration among teachers developing their understanding about asset-based pedagogy (Kumar et al., 2021). Moreover, one of the many pervasive obstacles to ABP is that its understanding and practice are limited to cultural celebrations, trivialization, essentializing, and the absence of political analysis of inequities (Sleeter, 2012). Taken together, providing teachers with a measure that can be used to understand how their practices are received by students, as described here, can be a powerful tool in ameliorating the obstacles to ABP. Teachers can engage in conversations that deepen their knowledge of each student’s lived experience, mitigating trivialization and essentializing. Moreover, an understanding of how lessons that intentionally center students’ experiences are received can also serve to replace superficial cultural celebrations.

We conclude with a note of caution against the use of the measure or items in ways inconsistent with the validation evidence provided here. For example, using scores from the measure in summative ways to evaluate teachers’ practice is inconsistent with the intended use and interpretation of scores. The focus on a formative measure was to provide teachers with a way to center student voice as they refine instruction, intentionally avoiding the promotion of the measure in ways that could reduce teacher agency. The present validation suggests that the measure can provide teachers with numerous ways to enhance their instruction aligned with asset-based practices but does not provide evidence that it can or should be used in ways that deviate from its intended application.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by funding provided by the Assessment for Good Program by the Advanced Education Research and Development Fund.