Abstract

Efficient and intuitive interpretive frameworks for social-emotional learning (SEL) measures are necessary for identifying student needs and informing programming decisions across multitiered systems of support in schools. Though familiar to educators and often used with standardized tests of academic achievement, criterion-referenced frameworks are less common in SEL assessment. As such, the current study examined the psychometric evidence for scores from one such framework, the Competency-Referenced Performance Framework (CRPF), which was developed to inform universal screening decisions based on the SSIS SEL Brief Scales (Elliott et al., 2020). Specifically, we evaluated stability, test-criterion relationships with academic outcomes, and treatment sensitivity of the CRPF using data from an efficacy trial of a universal SEL program. Results provided preliminary supportive evidence for the CRPF.

Social-emotional learning (SEL), the process of developing healthy self-identities, managing emotions, achieving goals, feeling empathy, maintaining relationships, and making caring decisions, is essential to children’s school success (Collaborative for Academic, Social, and Emotional Learning [CASEL], 2022). Social-emotional learning involves knowledge, skills, and attitudes relative to five core competencies (self-awareness, self-management, social awareness, relationship skills, and responsible decision-making), which can be taught via both formal and informal instruction in schools (CASEL, 2022). Intervention programs promoting SEL at the universal level (e.g., all students in a classroom or school) have been shown to foster positive student skills, behaviors, and attitudes and reduce emotional distress in both the short- and long-term (Durlak et al., 2011; Taylor et al., 2017).

In response to growing student mental health needs exacerbated by the COVID-19 global pandemic, the U.S. Department of Education has recommended multi-tiered systems of support (MTSS) as an organizing framework to guide the provision of evidence-based services in schools (U.S. Department of Education, 2021). Such systems emphasize prevention for all students universally (Tier 1) as well as early intervention for those who need additional targeted and individualized support at Tiers 2 and 3 (Hughes et al., 2020). Within such frameworks, students are moved along a continuum of SEL programming (i.e., universal/primary, secondary, and intensive/tertiary) based on skill strengths and needs, responsiveness to intervention, and consideration of risk and protective factors (Hines et al., 2022). To successfully use tiered systems for SEL allocation, schools require psychometrically sound measures to identify students in need of intervention and monitor progress (McKown, 2019). Even though several SEL measures are currently available, few are fully aligned with CASEL’s SEL framework or sufficiently brief for universal screening (Anthony et al., 2021).

Unfortunately, there are considerable challenges to implementing effective MTSS in real-world school settings, including the use and interpretation of assessment data to make valid decisions (Hagermoser Sanetti & Collier-Meek, 2019). In practice, school teams often have difficulty knowing how to employ data to screen for student needs, monitor student progress, and assess intervention effectiveness (Burns et al., 2008). Interpreting the results of screening and progress monitoring instruments and then using the information to align students with services can be a daunting and complex task, and errors in decision making can result in financial and time losses for schools and opportunity costs for students (VanDerHeyden et al., 2018).

Norm-referenced score interpretation (e.g., percentile ranks), which relies on comparing a score to that of a reference group to make sense of performance (American Educational Research Association [AERA], American Psychological Association [APA], & National Council on Measurement in Education [NCME], 2014), is often used in screening and summative assessment of SEL (DiPerna et al., 2020). In contrast, criterion-referenced scores, commonly used in state and other standards-based achievement tests, are interpreted relative to well-defined standards that have been previously established (Clifford, 2016; Neil et al., 1999). Proficiency categories (e.g., basic, proficient, and advanced), defined by performance of specific skills and/or behaviors, can be used by educators to understand an individual’s or group of students’ current level of skill performance, determine need for intervention, and evaluate movement across categories after exposure to evidence-based instruction (Gross et al., 2019). As such, criterion-referenced performance levels tend to be more intuitive to stakeholders and are often preferred when communicating assessment results (e.g., Hart et al., 2020). Because student scores are not referenced to the performance of other students, but instead compared to a clearly defined set of expectations, criterion-referenced assessment can reveal that all students, some students, or no students meet predetermined standards, informing subsequent instructional decision-making (Clifford, 2016). Criterion-referenced scores can therefore provide useful feedback and summative information to students, families, and educators seeking to clearly understand if students are meeting desired learning outcomes and communicate the potential need for intervention (Lok et al., 2016).

In response to the growing demand for efficient and psychometrically sound measures of SEL skills, several brief SEL scales have been published during the past decade. For example, the Social Skills Improvement System–Social Emotional Learning Brief Scales (SSIS SEL Brief Scales; Elliott et al., 2020) are a family of multi-informant brief scales developed to assess skills aligned with the domains of CASEL’s SEL framework (i.e., self-awareness, self-management, social awareness, relationship skills, and responsible decision-making). In addition to norm-referenced score interpretation, the SSIS SEL Brief Scales have a criterion-referenced interpretive framework, the Competency-Referenced Performance Framework (CRPF; Elliott et al., 2020). The CRPF categorizes the total scores of the SSIS SEL Brief Scales into four overall SEL competency levels (Emerging, Developing, Competent, and Advanced) based on frequency (never, seldom, often, and almost always) of performance relative to developmentally appropriate expectations from a learning progression perspective. Specifically, children in the Emerging level receive consistently low frequency ratings (never or seldom) on strength-focused items, and children in the Advanced level have consistently high ratings of almost always. Cut scores corresponding with these proficiency levels were established based on data from a large nationally representative sample of K-12 students as well as reviews by school professionals and SEL experts (Elliott et al., 2020). However, no studies to date have examined psychometric evidence for the CRPF levels using an independent sample.

The Standards for Educational and Psychological Testing (Standards; AERA, APA, & NCME, 2014) specify that evidence of reliability and validity of test scores be provided to support their proposed use. With respect to SEL assessment, the psychometric evidence for a measure should be evaluated with respect to whether the scores it provides will guide intended uses (e.g., screening) and assist in reaching conclusions about student SEL competency levels (Buros Center for Testing, 2020). Screening requires examining broad outcomes to assess levels of risk and identify student needs (Kettler et al., 2014). McKown (2019) suggested that criteria for evaluating SEL assessments include temporal stability for score reliability, correlations with other relevant variables, and evidence that students exposed to high-quality instruction improve more than a control group. Similarly, Gross et al. (2019) described scores from sound screening instruments as demonstrating evidence including reliability, responsiveness to change, and generalized utility. Maintaining technical rigor while maximizing efficiency and utility is an important consideration when developing SEL screening assessments for use in research and practice (Kim et al., 2022).

For criterion-referenced score interpretation, reliability evidence can be established using decision consistency indexes, such as percentage of correct decisions and Cohen’s kappa, across replications of the same testing procedure (Standards, p. 40). One way to replicate the same testing procedure is to repeat administration of the same test to the same group of examinees (i.e., test-retest). Although test-retest stability for total scores from the teacher form of the SSIS SEL Brief Scales (SSIS SELb-T) has been found to be sufficient in previous studies (.78 in Elliott et al., 2020 and .85 in Anthony et al., 2021), stability evidence for the CRPF levels has not been evaluated to date.
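For reference, the two decision consistency indexes named above have standard definitions. The notation is introduced here for illustration and is not taken from the Standards: $p_{ij}$ is the proportion of examinees classified in level $i$ at the first administration and level $j$ at the second, $p_{i+}$ and $p_{+i}$ are the corresponding row and column marginal proportions, and $K$ is the number of levels.

```latex
p_o = \sum_{i=1}^{K} p_{ii}, \qquad
p_e = \sum_{i=1}^{K} p_{i+}\, p_{+i}, \qquad
\kappa = \frac{p_o - p_e}{1 - p_e}
```

Here $p_o$ is the proportion of exact agreement and $p_e$ is the agreement expected by chance from the marginals alone, so $\kappa$ expresses agreement beyond chance as a fraction of the maximum possible agreement beyond chance.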

Validity refers to “the degree to which all the accumulated evidence supports the intended interpretation of test scores for the proposed use” (Standards, p. 14). One relevant and common source of evidence to support the use of criterion-referenced interpretations is test-criterion relations, which can be established using correlational and experimental methods to examine concurrent and predictive evidence (Hambleton et al., 1978). A body of literature exists to support the association between SEL competence and academic outcomes for both elementary and secondary grades (Panayiotou et al., 2019), and the relationships between prosocial behaviors and academic achievement found in previous research have been characterized as moderately positive (see DiPerna et al., 2016).

Given that academic achievement is one of, if not the, primary intended outcomes of the formal schooling process, whether the CRPF levels are related concurrently and predictively to academic outcomes is an important consideration for MTSS decision-making in schools. As one example, correlations between student-reported SEL competence and standardized academic test scores were found to be mediated by mental health difficulties, suggesting important relationships between SEL, academic achievement, and student mental health needs (Panayiotou et al., 2019). Currently, no published studies have examined the test-criterion relationships between the CRPF and academic outcomes, and this important form of validity evidence warrants examination.

Lastly, as the SSIS SEL Brief Scales are intended to be used for universal screening and progress monitoring of SEL skills within MTSS, evidence of treatment sensitivity (which is essentially the experimental method that Hambleton et al., 1978 described) would be vital to support the use of scores for assigning students to tiers of interventions, monitoring intervention responsiveness, and evaluating intervention efficacy. For example, the SSIS-Classwide Intervention Program (SSIS-CIP; Elliott & Gresham, 2007) is a universal SEL program used in schools to promote social skill development and positive behavior. Via 10 core units and 30 lessons delivered by the classroom teacher, the program focuses on instruction of foundational social skills such as listening to others, paying attention to your work, and asking for help. Although previous evaluations have demonstrated that teacher ratings using the SSIS Rating Scale–Teacher form (SSIS RS-T; Gresham & Elliott, 2008) are responsive to the SSIS-CIP intervention (DiPerna et al., 2015; 2018), the sensitivity of the CRPF to skill changes resulting from such universal interventions has not yet been studied.

Thus, the current study is guided by three research questions: (1) How stable are students’ CRPF levels over time (i.e., stability)? (2) Are students’ CRPF levels related to their academic outcomes (i.e., test-criterion relationships with academic outcomes)? (3) Are students’ CRPF levels sensitive to intervention (i.e., treatment sensitivity)?

Although initial reliability and validity evidence support the use of SSIS SELb-T norm-referenced total scores for lower-stakes decisions (Anthony et al., 2021), stability, test-criterion relationships with academic outcomes, and treatment sensitivity of the CRPF levels have not been thoroughly examined to date. Based on previous findings with total scores from the SSIS SELb-T, the CRPF levels are expected to be moderately stable in the absence of intervention (Anthony et al., 2021), positively related to academic outcomes (e.g., Panayiotou et al., 2019), and relatively sensitive to skill change resulting from intervention (e.g., DiPerna et al., 2016; 2018).

Method

To generate psychometric evidence to evaluate the CRPF, we conducted a secondary analysis of data from a previous efficacy trial of a school-based SEL intervention. Specifically, evidence of reliability (test-retest stability) and validity (test-criterion relationships with external criteria as well as treatment sensitivity) appropriate for criterion-referenced score levels was examined.

Sample

Data for this study were drawn from a multi-year efficacy trial of the SSIS-Classwide Intervention Program (SSIS-CIP; Elliott & Gresham, 2007) in the Mid-Atlantic region of the United States (see DiPerna et al., 2015; 2016 for more information). The original study used a multisite cluster randomized controlled trial design in which second-grade classrooms (clusters) were randomly assigned to treatment conditions (intervention or business-as-usual [BAU; standard practices employed by schools when no research study is being conducted]) within seven schools (sites). It included four cohorts of students who were enrolled in participating Grade 2 classrooms across four successive years. Follow-up data were collected for two additional years in the same schools during which schools were free to use the SSIS-CIP program on a voluntary basis.

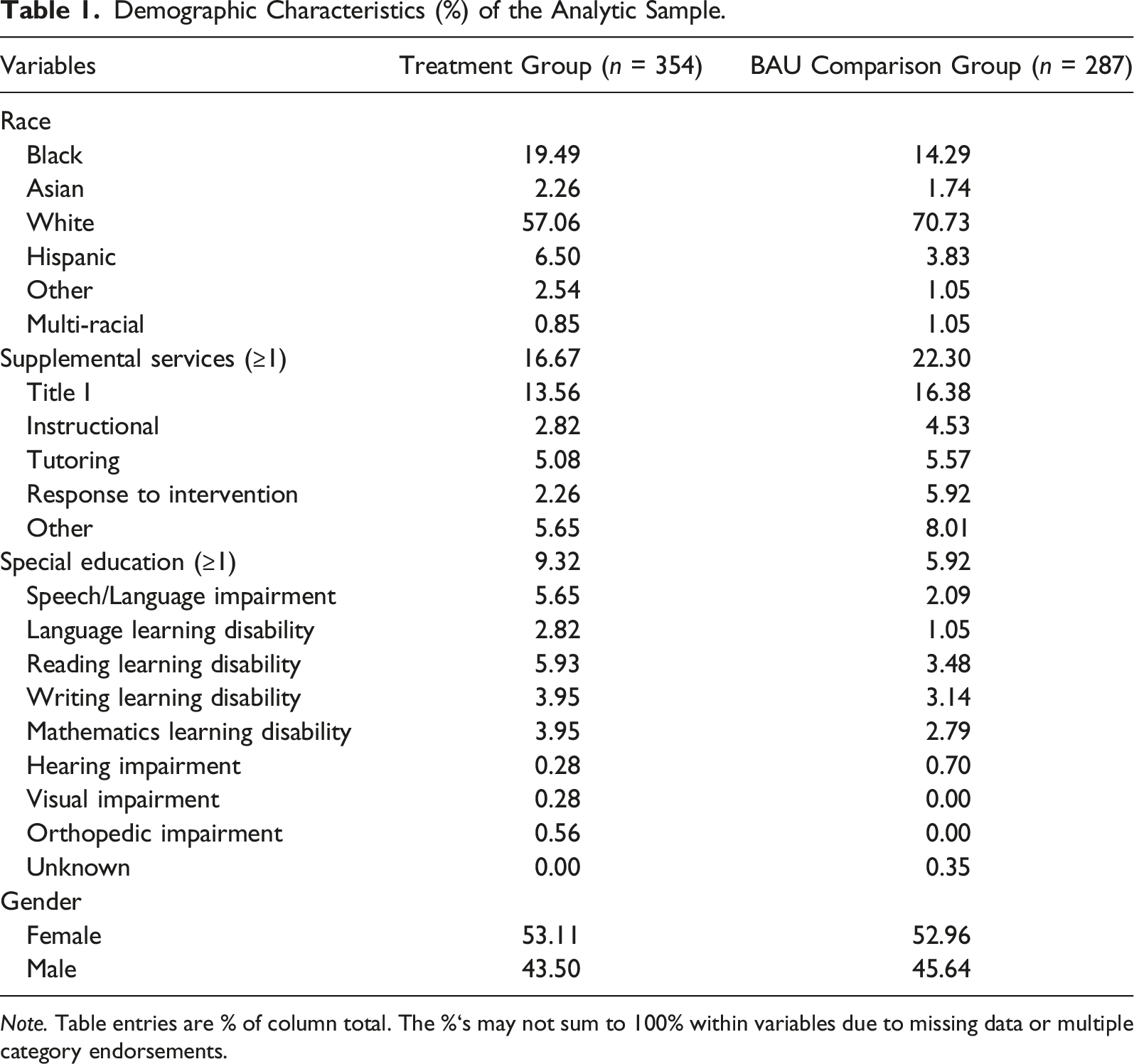

Demographic Characteristics (%) of the Analytic Sample.

Note. Table entries are % of column total. Percentages may not sum to 100% within variables due to missing data or multiple category endorsements.

Measures

Three measures were used in this study, one for SEL skills and two for academic outcomes (reading and math).

SEL skills were measured by items on the SSIS SELb-T (Elliott et al., 2020). The SSIS SELb-T was developed by applying Item Response Theory to the standardization sample of the more extensive and full-length SSIS RS-T and SSIS SEL Rating Forms (Gresham & Elliott, 2017). A set of maximally efficient items was selected that maintained content coverage as well as similar psychometric properties to the original forms (see Anthony et al., 2021 for more information about the development of these brief forms). The published SSIS SELb-T (Elliott et al., 2020) includes 20 items, four for each of the five CASEL domains of self-awareness, self-management, social awareness, relationship skills, and responsible decision-making. Anthony et al. (2021) reported evidence of internal consistency (Cronbach’s

Star Reading (Renaissance Learning, 2010) is a brief, computer adaptive test that assesses students’ reading comprehension and overall reading achievement. It includes 25 items requiring students to construct sentence meaning based on vocabulary knowledge and contextual information. Split-half reliability estimates reported by the publisher were .89 in both Grades 2 and 3. Publisher-reported validity evidence included high correlation coefficients between Star Reading scores and other standardized reading assessment scores (e.g., American College Testing, Scholastic Aptitude Test, and Iowa Test of Basic Skills). Star Reading produces scaled scores, which are transformations of the Rasch ability estimate resulting from the test, that range from 0 to 1400. The scores represent a student’s performance on a vertical developmental scale that spans the K-12 grade levels (Renaissance Learning, 2010). For example, the first to third quartiles (Q1, Q2, and Q3) benchmarked for Grade 2 students in the fall (around baseline assessment for our sample) were 126, 224, and 322, respectively; and the corresponding quartiles for Grade 3 in fall (around the 1-year follow-up assessment for our sample) were 259, 357, and 461 (Renaissance Learning, 2015).

Star Math (Renaissance Learning, 2009) uses multiple-choice questions to assess students’ number concepts, computation, algebraic thinking and other fundamental math skills. Split-half reliability estimates provided by the publisher were .78 in both Grades 2 and 3. High positive correlations with scores from other standardized math assessments provide support for the validity of Star Math scores. Star Math scaled scores range from 0 to 1400. The first to third quartiles benchmarked for Grade 2 students in fall (around baseline assessment for our sample) were 357, 414, and 467, respectively; and the corresponding quartiles for Grade 3 in fall (around the 1-year follow-up assessment for our sample) were 443, 500, and 552 (Renaissance Learning, 2015).

Procedures

Active parent/guardian consent and student assent were obtained for all students participating in the data collection associated with the larger efficacy trial; approximately 52% of students in participating classrooms had parental consent to participate. Classrooms in both intervention and BAU conditions followed the same data collection procedures. Teachers completed an online questionnaire for each participating student that included the SSIS SELb-T items as well as other items from the full-length SSIS teacher form. Teachers also provided student demographic information such as gender, race/ethnicity, and receipt of special education or supplemental services. Trained research assistants administered the Star Reading and Math assessments. Baseline data were collected within a 4-week period (e.g., October–November) before implementation of the SSIS-CIP (Elliott & Gresham, 2007) in classrooms randomly assigned to the treatment condition. Posttest data were collected after the SSIS-CIP implementation (e.g., March–April). Follow-up Star data were collected in the year following the SSIS-CIP implementation in winter/early spring (e.g., February–March).

Teachers assigned to the intervention condition received training in using the SSIS-CIP program (see DiPerna et al., 2015, 2016 for more information). The SSIS-CIP, which focuses on social skills identified by teachers as being important for successful classroom learning, consists of 10 core units that are each taught via three lessons. Program materials include a teacher manual providing scripted lesson plans, brief video examples, and role-play activities for students. Lessons are delivered through direct instruction of skill steps, modeling and practice of steps, and monitoring and generalization activities. Fidelity of implementation of each of the lesson components was monitored via periodic real-time observations in the classroom by trained research assistants as well as weekly self-report questionnaires completed by teachers. Fidelity reported by both trained independent observers and teachers was high (97–98%). APA ethical guidelines for conducting research with human participants were followed, and all procedures were approved by an institutional review board.

Data Analysis

To address the first research question about stability of the SSIS SELb CRPF levels, only data from participants in the BAU condition were used because their posttest scores were not affected by the intervention. Specifically, we cross-tabulated frequencies between baseline and posttest CRPF levels for the BAU group and calculated the percentage of students at each baseline level who fell in each CRPF level at posttest. The association between baseline and posttest CRPF levels was estimated by multiple indexes (including the decision consistency indexes of percentage of agreement and Cohen’s kappa, phi-coefficient, and Spearman’s rho) and tested for statistical significance using chi-square. Stronger association suggests higher stability and less movement across CRPF levels over the approximately 3-month interval.
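The indexes described above can all be derived from the baseline-by-posttest cross-tabulation. The following is a minimal sketch, not the study's SAS code: the function name `stability_indexes` and the toy counts are hypothetical, and phi is computed as the square root of chi-square over N, which is assumed here as the convention for tables larger than 2 × 2. Spearman's rho is omitted for brevity.

```python
from math import sqrt

def stability_indexes(table):
    """table[i][j] = number of students at level i at baseline and level j at posttest."""
    k = len(table)
    n = sum(sum(row) for row in table)
    row_tot = [sum(row) for row in table]
    col_tot = [sum(table[i][j] for i in range(k)) for j in range(k)]

    # Observed agreement: proportion of students on the main diagonal.
    p_o = sum(table[i][i] for i in range(k)) / n
    # Agreement expected by chance from the marginal distributions alone.
    p_e = sum(row_tot[i] * col_tot[i] for i in range(k)) / n**2
    kappa = (p_o - p_e) / (1 - p_e)

    # Pearson chi-square against independence of baseline and posttest level.
    chi2 = 0.0
    for i in range(k):
        for j in range(k):
            expected = row_tot[i] * col_tot[j] / n
            if expected > 0:
                chi2 += (table[i][j] - expected) ** 2 / expected

    # Assumed definition of phi for a general K x K table.
    phi = sqrt(chi2 / n)
    return {"agreement": p_o, "kappa": kappa, "chi2": chi2, "phi": phi}

# Made-up 2 x 2 counts for illustration only (not study data).
toy = [[40, 10],
       [10, 40]]
result = stability_indexes(toy)
```

The same function accepts the 4 × 4 table of CRPF levels; the 2 × 2 toy table is used only because its values are easy to verify by hand.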

To address the second research question and examine test-criterion relationships with academic outcomes for the CRPF levels, we again used participants in the BAU condition who completed the Star measures at baseline for concurrent relationship evidence and at 1-year follow-up for predictive relationship evidence. The CRPF levels defined at baseline were analyzed to provide concurrent and predictive relationship evidence with academic outcomes at baseline and 1-year follow-up, respectively. We first conducted a simple one-way ANOVA test to examine CRPF level differences on each of the standardized Star Math and Star Reading measures at each time point. Pairwise comparisons between CRPF level means were conducted using Tukey’s HSD to control familywise Type I error. Next, we conducted a random-intercept ANOVA model using the mixed procedure in SAS (SAS Institute Inc, 2020a) to account for clustering by classrooms and to test CRPF level mean differences on math and reading at each time point while controlling for participants’ demographic variables, including receipt of special education (1 = yes, 0 = no), receipt of supplemental services (1 = yes, 0 = no), race/ethnicity (1 = White student, 0 = racial-ethnic minority student), and gender (1 = male student, 0 = female student). The Benjamini and Hochberg (1995) correction was applied to control the false discovery rate across multiple comparisons between groups (i.e., 6 pairwise comparisons). Significant differences among CRPF levels on baseline math or reading scores would provide concurrent relationship validity evidence for the CRPF. Significant CRPF level differences on math or reading scores at 1-year follow-up would provide predictive relationship validity evidence for the CRPF.
In addition, we ran a random-intercept ANCOVA model using the mixed procedure in SAS (SAS Institute Inc, 2020a) on each of the follow-up math and reading measures while controlling for participants’ corresponding baseline math or reading scores and demographic variables to investigate whether the CRPF levels could predict relative change over a year in academic outcomes.
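To illustrate the false discovery rate correction mentioned above, the sketch below enumerates the six pairwise contrasts among the four CRPF levels and applies the Benjamini-Hochberg step-up adjustment. It is a plain-Python illustration rather than the SAS procedure used in the study; the function name `benjamini_hochberg` and the raw p-values are hypothetical.

```python
from itertools import combinations

LEVELS = ["Emerging", "Developing", "Competent", "Advanced"]
# Four levels yield 4 * 3 / 2 = 6 pairwise contrasts.
pairs = list(combinations(LEVELS, 2))

def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest to the smallest p-value so that the
    # adjusted values are monotone non-decreasing in the raw p-values.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted

# Hypothetical raw p-values, one per contrast (not study results).
raw = [0.001, 0.008, 0.039, 0.041, 0.042, 0.60]
adj = benjamini_hochberg(raw)
```

A contrast is declared significant when its adjusted p-value falls below the chosen false discovery rate (e.g., .05). In this toy example three raw p-values just under .05 are no longer significant after adjustment.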

For Research Question 3 regarding the sensitivity of CRPF levels to intervention, we used data from the efficacy trial of the SSIS-CIP for second grade participants with both baseline and posttest CRPF levels. First, baseline equivalence between treatment conditions was gauged for baseline CRPF levels and each of the demographic variables using a simple chi-square test of independence. We did not use more advanced methods to assess baseline equivalence as these baseline variables were all included in the analysis model and thereby controlled. Then, we ran a random-component proportional odds model with multinomial distribution and cumulative probit link to test whether students in the SSIS-CIP and BAU comparison conditions had differential probabilities of moving up or down the CRPF levels from baseline to posttest. Participants’ special education status, supplemental service status, race-ethnicity (White student or racial-ethnic minority student), and gender were included as covariates, and clustering by classrooms was accounted for in the model using the glimmix procedure of SAS (SAS Institute Inc, 2020b).
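As a rough illustration of how a cumulative probit model maps a linear predictor onto probabilities for the four ordered CRPF levels, consider the sketch below. It assumes the parameterization P(Y ≤ k) = Φ(τ_k − η), so that a larger η shifts probability toward higher levels; software packages differ in sign conventions, and the classroom random intercept and covariates are omitted. The threshold values, the effect size, and the function names are hypothetical and are not estimates from the study.

```python
from math import erf, sqrt

def normal_cdf(z):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(z / sqrt(2.0)))

def level_probabilities(thresholds, eta):
    """Category probabilities under a cumulative probit model.

    Assumed parameterization: P(Y <= k) = Phi(threshold_k - eta).
    """
    cumulative = [normal_cdf(t - eta) for t in thresholds] + [1.0]
    probs = []
    previous = 0.0
    for c in cumulative:
        # Each category probability is a difference of adjacent cumulative ones.
        probs.append(c - previous)
        previous = c
    return probs

LEVELS = ["Emerging", "Developing", "Competent", "Advanced"]
thresholds = [-1.5, 0.0, 1.2]  # hypothetical cut points on the latent scale
effect = 0.5                   # hypothetical treatment shift in probit units

bau = level_probabilities(thresholds, eta=0.0)
treated = level_probabilities(thresholds, eta=effect)
```

Under this convention a positive treatment coefficient lowers the probability of the lowest level and raises the probability of the highest level, which is the pattern a treatment-sensitive classification would be expected to show.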

Results

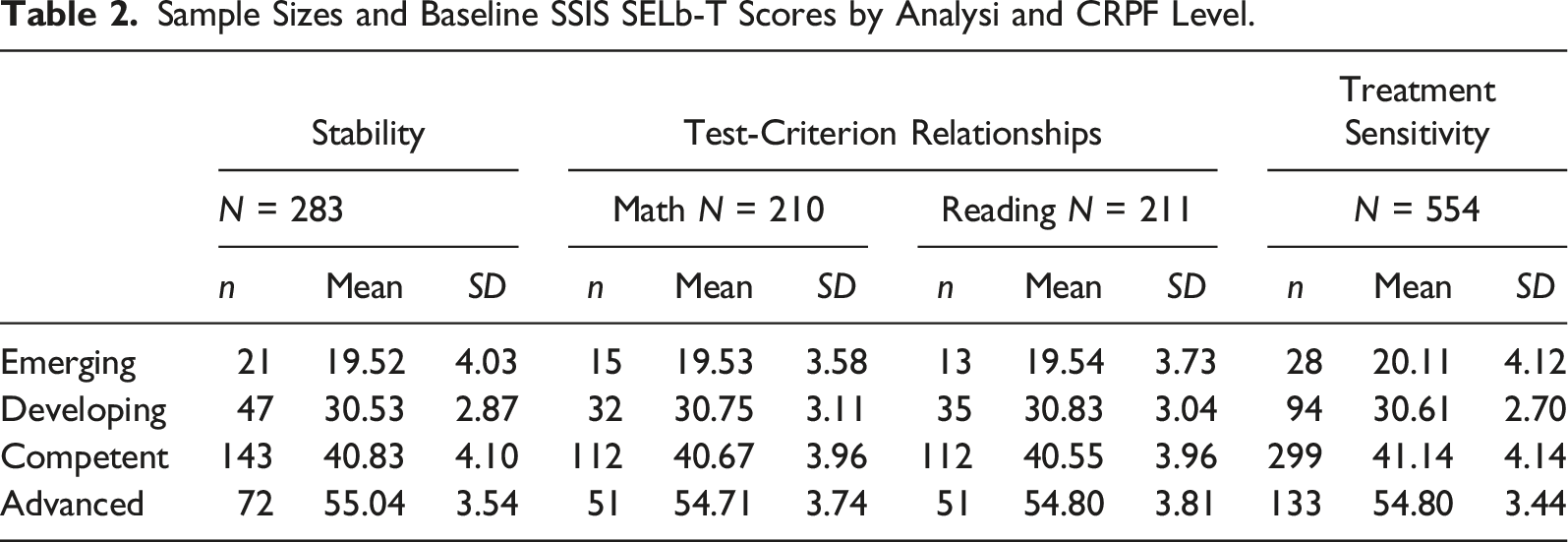

Sample Sizes and Baseline SSIS SELb-T Scores by Analysis and CRPF Level.

As expected, the SSIS SELb-T prorated total scores were different across CRPF levels, by about 10 points between adjacent levels from Emerging to Competent and 15 points between Competent and Advanced. The SSIS SELb-T score variability was quite similar across the CRPF levels. The score pattern was also similar across analysis samples.

Stability Evidence

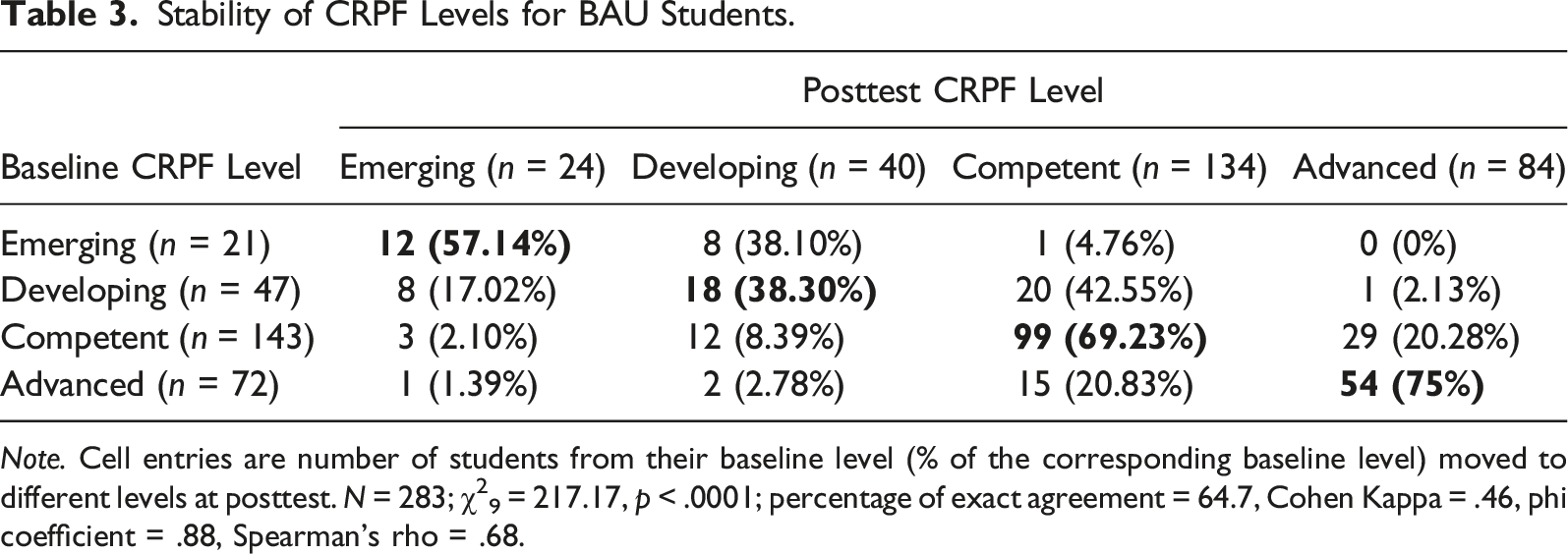

Stability of CRPF Levels for BAU Students.

Note. Cell entries are the number of students from their baseline level (% of the corresponding baseline level) who moved to different levels at posttest. N = 283; χ²(9) = 217.17, p < .0001; percentage of exact agreement = 64.7, Cohen’s kappa = .46, phi coefficient = .88, Spearman’s rho = .68.
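As a consistency check on the indexes reported in the note, the degrees of freedom and the phi coefficient can be recovered from the other reported values. The check assumes that phi for this 4 × 4 table is defined as the square root of chi-square over N, a common convention for tables larger than 2 × 2; the formula is not stated in the source.

```latex
df = (4 - 1)(4 - 1) = 9, \qquad
\varphi = \sqrt{\frac{\chi^2}{N}} = \sqrt{\frac{217.17}{283}} \approx \sqrt{0.7674} \approx .88
```

Both results agree with the reported degrees of freedom and phi coefficient.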

Test-Criterion Relationships Evidence

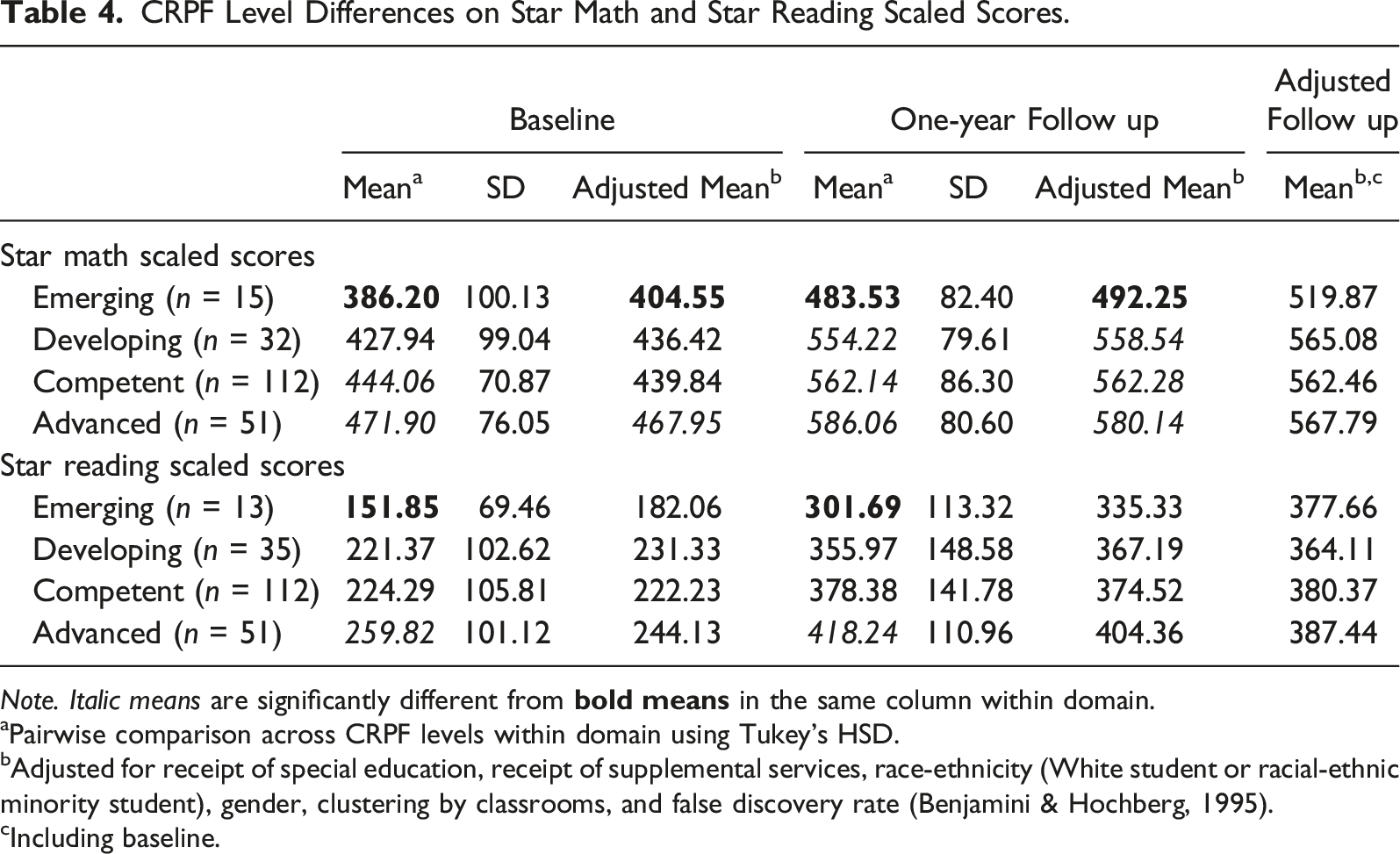

CRPF Level Differences on Star Math and Star Reading Scaled Scores.

Note. Italic means are significantly different from

ᵃ Pairwise comparison across CRPF levels within domain using Tukey’s HSD.

ᵇ Adjusted for receipt of special education, receipt of supplemental services, race-ethnicity (White student or racial-ethnic minority student), gender, clustering by classrooms, and false discovery rate (Benjamini & Hochberg, 1995).

ᶜ Including baseline.

Treatment Sensitivity

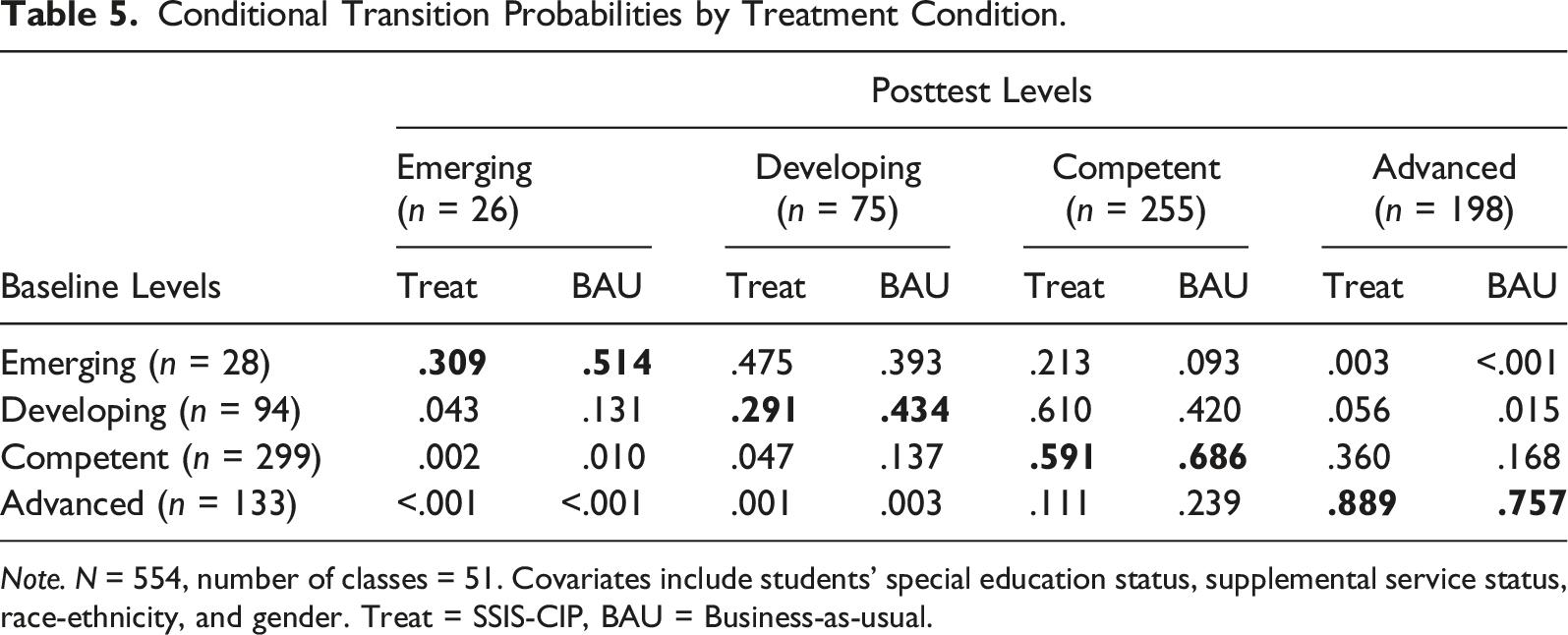

Based on chi-square tests of independence, the two treatment groups did not appear to be greatly unbalanced with respect to baseline CRPF levels (χ²(3) = 7.34, p = .062), special education status (χ²(1) = 2.60, p = .107), receipt of supplemental services (χ²(1) = 2.09, p = .148), or gender (χ²(1) = .05, p = .815). However, the treatment group had a larger proportion of racial-ethnic minority students than the BAU group (35.67% vs. 23.68%; χ²(1) = 9.26, p = .002). Results of the random-component proportional odds model showed that treatment condition was a statistically significant predictor of posttest CRPF levels after accounting for baseline CRPF levels, demographic variables, and clustering (b = .53, SE = .19, p = .007).

Conditional Transition Probabilities by Treatment Condition.

Note. N = 554, number of classes = 51. Covariates include students’ special education status, supplemental service status, race-ethnicity, and gender. Treat = SSIS-CIP, BAU = Business-as-usual.

Discussion

School-based interventions to promote student social-emotional competence have become widespread during the last decade (Bryant et al., 2021). However, the development of sound assessment tools and practices to inform intervention planning has lagged (Gross et al., 2019), and data-based decision making about screening and intervention remains a challenging task for MTSS teams (VanDerHeyden et al., 2018). Efficient SEL measures with practical interpretive frameworks are essential to facilitate SEL screening in schools and inform programming decisions (DiPerna et al., 2020). The Competency-Referenced Performance Framework (CRPF) was developed for the SSIS SEL Brief Scales to facilitate screening decisions (Elliott et al., 2020). However, the psychometric evidence for performance levels yielded from this framework has not been examined with samples beyond the original standardization data. This study examined evidence of stability, test-criterion relationships with academic outcomes, and treatment sensitivity of the CRPF for the SSIS SELb-T using data drawn from a large efficacy trial.

Key Findings

Results of the study showed that the SSIS SELb-T CRPF levels appeared to be relatively stable despite a longer interval between administrations than is typical for stability indices. Kappa appeared somewhat low because it was affected by the unequal distribution of students across CRPF levels. Test-retest stability for the SSIS SELb-T composite scores reported in the manual (Elliott et al., 2020) was .78, presumably for a typical 2-week interval. Stability based on the SSIS SELb-T scores for our BAU sample was comparable at .77. Therefore, the slightly lower estimates for CRPF levels (e.g., Pearson r = .7, Spearman’s rho = .68) appear to be due primarily to categorization of scores rather than to the longer time interval. However, this might not hold for a longer time interval (e.g., from beginning to end of the school year) as changes might occur resulting from teachers naturally correcting social interactions and reinforcing appropriate peer-to-peer engagement in classrooms over time.

The SSIS SELb-T CRPF levels were also related to standardized math achievement scores both concurrently and predictively, with or without adjusting for clustering and demographic differences. However, the CRPF levels did not significantly relate to standardized reading scores after controlling for clustering and demographic differences, nor did they predict relative change on math or reading outcomes. Although the omnibus tests for CRPF level differences on academic outcomes were not statistically significant when baseline differences were adjusted for, the gap in math outcome between the Emerging level and the other CRPF levels was notably larger at 1-year follow-up compared to baseline.

The lack of statistical significance could be due to low statistical power resulting from the smaller sample size for the BAU sample when broken down by CRPF levels (particularly for the Emerging category). However, the relative frequency distributions of students in our samples across the CRPF levels are similar to the distribution of the standardization sample published in the manual and consistent with developmental expectations (Elliott et al., 2020). Specifically, the percentages of students in different CRPF levels are not expected to be equal, and the percentage in the Emerging level is expected to be relatively small. As such, the pattern of between-CRPF-level differences would not likely change dramatically if the total sample size were to increase. Given that the significant differences between groups parallel different benchmark quartiles for the Star scaled scores, we are confident that the findings are not significantly negatively impacted by the relatively small sample size for one group. Nevertheless, the finding should be replicated in future research with larger samples to explore whether students with emergent SEL skills might require special attention beyond the SEL domain.

Previous research has highlighted differences between early math and reading skill development, suggesting that math skill acquisition may rely more heavily on active and quality learning environments in schools (Ginsburg et al., 2008; Rimm-Kaufman et al., 2007), be more highly compromised by behavioral problems in the classroom (Miller et al., 2017), and relate positively to students’ behavioral skills (Ponitz et al., 2009). Similarly, improvements in math skills have been linked to interventions that promote self-regulation (Schmitt et al., 2015) and self-affirmation (Borman et al., 2016). Future work is necessary to further investigate whether SEL constructs are more salient to math than to reading and to examine factors that may mediate such a relationship.

Moreover, the SSIS SELb-T CRPF levels demonstrated sensitivity to the SSIS-CIP intervention, an important condition if the assessment is to be used to evaluate SEL programming outcomes. However, given the alignment between skills assessed by the SSIS SELb-T and skills targeted by the SSIS-CIP lessons, the treatment sensitivity results may not generalize to other SEL programs. As such, future studies are needed to determine if the CRPF is sensitive to SEL skill change resulting from the implementation of other universal SEL programs (e.g., Second Step [Committee for Children, 2016]; Promoting Alternative Thinking Strategies [PATHS; Kusche & Greenberg, 1994]). In addition, female students and those who were not receiving supplemental services had a higher probability of transitioning to a higher CRPF level. In reviewing the demographic characteristics of students reported in a large meta-analysis of universal SEL impact studies, Rowe and Trickett (2018) found that gender was the most analyzed moderator of treatment effect, and 41% of studies that tested treatment-by-gender interactions found significant relationships. However, they noted that there was no consistent pattern in the direction of this moderation and that very few studies reported student disability status or receipt of services. Especially given the mixed results in previous research, there is a need to replicate the current findings with more samples and programs in future studies.

Limitations and Future Directions

In addition to the aforementioned limitations and future directions relative to specific research questions, this study only examined the SSIS SELb Teacher form with Grade 2 students. As such, findings may not generalize to other SSIS SELb forms completed by other informants (students or parents) or to other grade levels. Students’ behaviors might vary in different contexts (e.g., home or community vs. school), and there might be developmental differences across grades or ages (e.g., differences in score variability, in addition to differences in means, that may affect the strength of relationships among variables). It is important to further investigate whether evidence of reliability and validity for CRPF levels is similar for other contexts, grades, or age levels.

In addition, teachers who taught the SSIS-CIP lessons also rated students’ behavior outcomes in this study, an unavoidable limitation for gauging treatment sensitivity of teacher ratings relative to a universal program that is meant to be facilitated by classroom teachers. As such, it is possible that teachers were more primed to notice SEL skills after teaching the lessons. However, given that teachers in both treatment and BAU conditions rated students at baseline using the same rating form, it is possible that both groups could have been primed to notice SEL skills after the first exposure to the rating form. One could also argue that being more primed to notice SEL skills is part of the program impact; that is, the program could have changed teachers’ perceptions about SEL (e.g., Domitrovich et al., 2016). Future investigation could include a qualitative component to better understand the program impact on teachers and their perceptions of student behaviors.

Finally, it is important to acknowledge limitations associated with the items used to obtain the CRPF levels in this study. First, because there were three new items introduced for the Self-Awareness scale of the SSIS SELb measures subsequent to data collection for the efficacy trial, scores for only 17 of the 20 SELb items were available in the current database and prorated to obtain the total scores. This approach implicitly assumes that the missing items would function similarly to the available items, which may or may not be tenable. Second, Self-Awareness was underrepresented because the three unavailable items were all from this subscale. Although the CRPF levels were based on cut scores for the composite rather than subscale scores and Spearman-Brown’s stepped-up reliability was high for the composite, classification of participants might nonetheless be different when all items are completed. As such, it is crucial to replicate the current study with the intact SSIS SELb-T form and larger independent samples.
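
To make the arithmetic behind this limitation concrete, the following is a minimal sketch of one common way to prorate a total score from a subset of items, together with the standard Spearman-Brown formula for projecting reliability to a longer form. The article does not report the exact proration formula used, so the mean-based scaling below is an assumption; the function names and the example values (item ratings, a 17-item reliability of .90) are hypothetical and are not results from this study.

```python
def prorate_total(item_scores, n_items_full):
    """Scale the sum of the available items up to the full-form length.

    Assumption: missing items would, on average, score the same as the
    available items (the same assumption noted in the text above).
    """
    n_available = len(item_scores)
    if n_available == 0:
        raise ValueError("at least one item score is required")
    return sum(item_scores) * n_items_full / n_available


def spearman_brown(reliability, length_factor):
    """Spearman-Brown projection of reliability for a form whose length
    is multiplied by `length_factor` (e.g., 20 / 17)."""
    return length_factor * reliability / (1 + (length_factor - 1) * reliability)


# Hypothetical illustration: 17 available items, each rated 2,
# prorated to a 20-item total.
example_items = [2] * 17
prorated = prorate_total(example_items, n_items_full=20)

# Hypothetical 17-item reliability of .90 stepped up to 20 items.
stepped_up = spearman_brown(0.90, 20 / 17)
```

Because proration rescales the observed sum, it cannot recover information specific to the omitted Self-Awareness items, which is why classification could still differ when all 20 items are administered.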

Implications and Conclusions

As the demand for efficient and informative SEL assessments continues to grow in schools, the availability of criterion-referenced interpretive frameworks such as the SSIS SELb CRPF may help improve the practical utility of scores from SEL assessments. Understanding student functioning relative to a performance level is an approach with which teachers, families, and even students are already familiar. The use of criterion-referenced score interpretation frameworks is common in standardized tests of academic achievement such as those used in statewide student assessment systems. As such, stakeholders are likely to find such scores easier to understand, interpret, and act upon compared to the norm-referenced approaches most commonly used in SEL assessments today. Furthermore, performance level interpretation may assist in making MTSS intervention decision rules that are intuitive, consistently applied, and easy to document and track. When considering adoption of an assessment for universal SEL screening in schools, members of MTSS teams have been encouraged to consider the reliability and validity of the scores yielded by the measure. We further encourage them to consider the type(s) of interpretation framework(s) offered by an SEL measure and how they may be used by school personnel and understood by other stakeholders. In addition, developers of SEL assessments should continue to investigate the potential use of criterion-referenced frameworks to advance efficient, informative, and useful SEL assessment decisions for students.

Results of this study provide initial independent evidence relative to the use of the SSIS SELb-T CRPF for universal SEL screening and monitoring student response to implementation of the SSIS-CIP universal program in schools. Although future investigations with additional samples and intervention programs are needed, the current results complement and expand upon prior findings of reliability and validity evidence for SSIS SELb scores using data drawn from the standardization sample (Anthony et al., 2021).

Statement of Potential Conflicts of Interest: Pui-Wa Lei and James C. DiPerna are authors of the SSIS SEL Brief Scales and receive a royalty from the publisher.

The research reported here was supported by the Institute of Education Sciences, U.S. Department of Education, through Grant R305A090438 to The Pennsylvania State University. The opinions expressed are those of the authors and do not represent the views of the Institute or the U.S. Department of Education.
