Abstract

The current study aimed to examine how video presence in a simulated virtual socialization meeting would affect younger (n = 60) and older adults’ (n = 65) associative and source memory. Participants were instructed to watch a simulated virtual meeting where speakers introduced themselves with a name and an occupation, half with their video on and the other half with their video off. Participants completed a recognition test of intact, rearranged, and new name–occupation pairs. For pairs recognized as old, participants were asked to identify whether the pair was presented with their video on or off. The associative memory accuracy (i.e., hit rate – false alarm rate) results showed a better performance in younger relative to older adults, but both age groups benefited equally from video presence. Source memory (i.e., video-on vs. video-off) results showed a significant benefit of video presence in older but not younger adults.

What this paper adds

• Virtual learning features (i.e., video) can benefit associative memory performance in both younger and older adults and can uniquely benefit source memory performance in older adults.
• Certain features (i.e., video) can be incorporated into online learning settings to improve memory performance and information recognition in younger and older adults.
• Certain features (i.e., video) can be used to enhance older adults’ online socialization experiences, particularly in situations that require meeting and remembering new people.

Introduction

There has been a global increase in video-conference (VC) technology use (e.g., Zoom and Skype) to maintain social connections and education during the COVID-19 pandemic (Branscombe, 2020; Islam et al., 2020). For example, there was a 25–30% increase in global internet traffic in the first month of the pandemic (Branscombe, 2020). Following the pandemic, older adults used more VC than ever before to remain socially connected (Masoud et al., 2021) and reduce loneliness (Airola et al., 2020). The use of VC to maintain social relationships has been especially beneficial in geographically distant locations (Airola et al., 2020; Molyneaux et al., 2012) or when living in senior care institutions (Naudé et al., 2021). Engaging in VC use has effectively reduced social isolation (Airola et al., 2020), loneliness, and depressive status (Tsai et al., 2010) among older adults.

The social engagement aspect of VC may hold cognitive benefits for older adults. Both in-person (Nie et al., 2021) and online (Rafnsson et al., 2021) social engagement could lead to better cognitive functioning among older adults, partially due to the increased cognitive stimulation of social activities (Brown et al., 2016). Older adults with larger social networks performed better on cognitive tasks measuring global cognitive functioning, learning ability, and processing speed (Nie et al., 2021). Older adults with more social activity participation also experienced a reduced rate of cognitive decline over a 5-year period (Pugh et al., 2021). Overall, increased participation in social activities provides older adults with more opportunities to engage in cognitively stimulating activities, which may boost their cognitive functions (Brown et al., 2016; Nie et al., 2021).

Despite the increasing popularity of VC and its well-documented importance in older adults’ social life (e.g., Sixsmith et al., 2022) and cognition (e.g., Nie et al., 2021), it remains unclear how features specific to a VC environment might affect older adults’ memory for critical social information shared in virtual meetings. Yet remembering critical information (e.g., names, faces, and occupations) in a virtual context is becoming increasingly important for older adults’ social efficacy and confidence to stay connected and maintain social satisfaction. Memory changes and forgetfulness are commonly reported among older adults (Bolla et al., 1991), and episodic memory is especially vulnerable to aging-associated memory changes (e.g., Tromp et al., 2015). According to the associative deficit hypothesis (e.g., Naveh-Benjamin, 2000), it becomes more challenging for older adults to remember associations between items (i.e., associative memory) relative to remembering the individual items (i.e., item memory). This associative memory deficit in older adults remains even when they are intentionally instructed to encode associations (Old & Naveh-Benjamin, 2008). Older adults also show greater difficulty in remembering the sources or contexts in which target information is presented. For example, spatial source memory showed a linear decline across the adult lifespan, with 30% of memory variation being explained by age (Cansino et al., 2013). This change in source memory performance experienced by older adults might be best explained by reduced processing resources, which result in more attentional allocation to focal items than to contextual elements (Erngrund et al., 1996).

Despite the aging-related memory changes for associative and source information, older adults tend to preserve their memory for information of high value or personal importance (e.g., Cassidy & Gutchess, 2012; Hargis & Castel, 2017). Hargis and Castel (2017) manipulated perceived social importance of face–name–occupation triplets presented to participants and then tested their memory performance. The results showed greater memory challenges in older relative to younger adults for less important, but not for personally important faces. Past research also suggests that older adults’ source memory challenges could be minimized or even eliminated for affective (TRUE vs. FALSE statements) or socially salient (GOOD vs. EVIL character of the speaker) sources (Rahhal et al., 2002). Taken together, social importance or salience may ameliorate the associative and source memory challenges in older adults. In light of these findings, it is expected that during a virtual social event, video presence of a speaker might offer extra socially meaningful contextual cues (e.g., identity, facial features, postural styles, and subtle emotions) that might inform personally perceived social significance and thus might alleviate the age-related changes in associative or source memory for information that is potentially important for future socialization (e.g., names, occupations, and the associations between the two).

However, no studies have examined the effect of video presence during an online social gathering on older adults’ memory. This is a timely and significant question considering the explosive increase in VC use during and following the pandemic and given the current trends of global digitalization in communication and socialization (e.g., Sixsmith et al., 2022). Furthermore, accurate memory of socially meaningful name–occupation pairs and the associated sources holds significant social implications, as it allows older adults to remain socially active by building new and maintaining existing social networks. For example, in a social gathering, we are more likely to approach and initiate a chat with those whose identities, such as names and/or occupations, we can remember. This might be especially true for older adults.

In this context, the current study took a novel approach to examine the effect of video presence on younger and older adults’ memory for name–occupation associations and the related encoding context/source in a simulated online socialization event. The study addressed three specific research questions: First, does video presence (i.e., “video-on” vs. “video-off” mode) enhance memory for name–occupation associations in younger and older adults? Second, does video presence enhance source memory for name–occupation pairs (i.e., “video-on” or “video-off” context) in younger and older adults? Third, is the associative and source memory benefit, if any, differentially larger for older than younger adults? In light of the literature, we hypothesized that both age groups would show better name–occupation associative and source memory performance in the “video-on” relative to the “video-off” encoding condition, and the benefits would be differentially larger for older than younger adults.

To address these questions, this study employed an associative memory paradigm, combined with an embedded source memory task, wherein younger and older adults joined a Zoom meeting simulating a virtual social party. They first watched a pre-recorded video of a number of Zoom attendees introducing themselves (name and occupation), half with video on and half with video off. After a filler period, they completed an associative memory task in which they recognized name–occupation pairs as old (i.e., intact pairs studied earlier in the video clip) or new (i.e., rearranged or new pairs). For each pair recognized as old, they were also asked to identify its source (i.e., video on vs. video off). Both associative and source memory scores were submitted to a 2 (age: younger vs. older) × 2 (condition: video-on vs. video-off) mixed model ANOVA to examine age differences and the effect of video presence.

Method

Participants

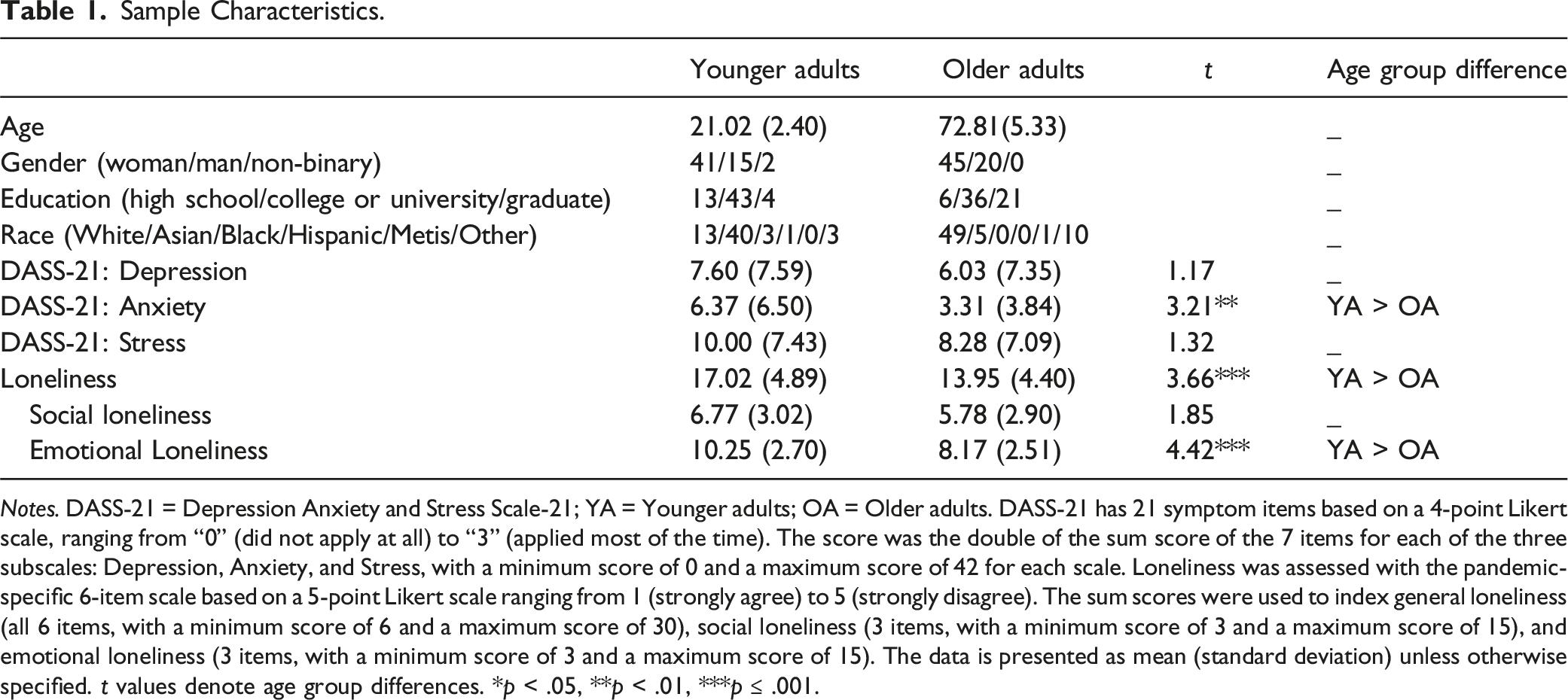

Sample Characteristics.

Notes. DASS-21 = Depression Anxiety and Stress Scale-21; YA = Younger adults; OA = Older adults. DASS-21 has 21 symptom items based on a 4-point Likert scale, ranging from “0” (did not apply at all) to “3” (applied most of the time). The score was the double of the sum score of the 7 items for each of the three subscales: Depression, Anxiety, and Stress, with a minimum score of 0 and a maximum score of 42 for each scale. Loneliness was assessed with the pandemic-specific 6-item scale based on a 5-point Likert scale ranging from 1 (strongly agree) to 5 (strongly disagree). The sum scores were used to index general loneliness (all 6 items, with a minimum score of 6 and a maximum score of 30), social loneliness (3 items, with a minimum score of 3 and a maximum score of 15), and emotional loneliness (3 items, with a minimum score of 3 and a maximum score of 15). The data is presented as mean (standard deviation) unless otherwise specified. t values denote age group differences. *p < .05, **p < .01, ***p ≤ .001.
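The scoring rules described in the note can be sketched in Python as follows. This is an illustration only: the function names are hypothetical, the assignment of items to subscales must come from the published scales, and no reverse scoring is applied because the note does not describe any.

```python
def dass21_subscale_score(item_ratings):
    """Score one DASS-21 subscale: double the sum of its 7 items (each rated 0-3)."""
    if len(item_ratings) != 7:
        raise ValueError("each DASS-21 subscale has exactly 7 items")
    if any(r not in (0, 1, 2, 3) for r in item_ratings):
        raise ValueError("ratings must be integers from 0 to 3")
    return 2 * sum(item_ratings)  # possible range: 0 to 42


def loneliness_scores(social_items, emotional_items):
    """Sum 1-5 ratings into social, emotional, and general loneliness scores."""
    social_items = list(social_items)
    emotional_items = list(emotional_items)
    if len(social_items) != 3 or len(emotional_items) != 3:
        raise ValueError("each loneliness subscale has exactly 3 items")
    if any(r not in (1, 2, 3, 4, 5) for r in social_items + emotional_items):
        raise ValueError("ratings must be integers from 1 to 5")
    social = sum(social_items)        # possible range: 3 to 15
    emotional = sum(emotional_items)  # possible range: 3 to 15
    # General loneliness uses all 6 items, so its range is 6 to 30.
    return {"social": social, "emotional": emotional, "general": social + emotional}
```

For example, `dass21_subscale_score([3] * 7)` gives the scale maximum of 42, and `loneliness_scores([1, 1, 1], [1, 1, 1])["general"]` gives the scale minimum of 6.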

Materials

Pre-Recording of the Stimulus Videos

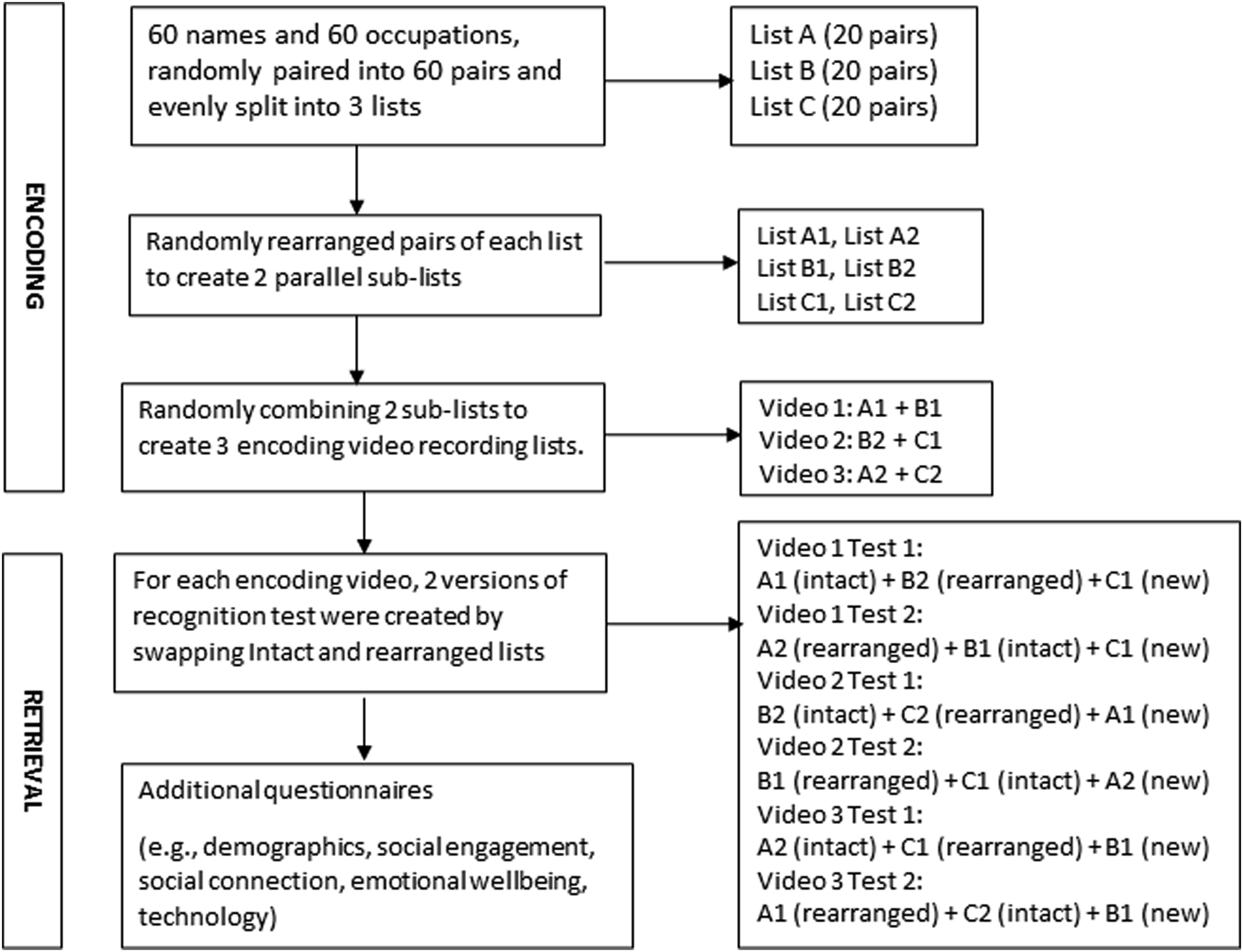

Figure 1 illustrates the procedure for the testing stimulus development. Sixty names and 60 occupations were collected through a Google search and randomly paired into 60 pairs. The names and occupations were split into three initial lists that were then randomly rearranged to create six sublists (i.e., A1, A2, B1, B2, C1, and C2), roughly matched for length of both names and occupations. Two of these 6 sublists were arbitrarily combined and presented in each of the 3 encoding videos (i.e., A1 + B1, B2 + C1, and A2 + C2), administered in a counterbalanced way across participants during encoding. Names were assigned to the speakers in a gender-congruent way, and occupations were randomly assigned independent of typical gender stereotypes. Pairs were pseudorandomized with no more than three pairs of the same characteristics (e.g., age, gender, and video on/off) presented in a row.

Figure 1. Flowchart Illustrating the Development of Encoding and Testing Stimuli.

The online consent form was attached to recruitment emails and posts for the testing stimulus video pre-recording. Participants consented to have their video and audio on during the pre-recording, and each participant’s Zoom participant name was changed to their pre-assigned subject ID. At the pre-recording Zoom sessions, a lead Research Assistant (RA) shared the screen to reveal a slideshow of individual name–occupation pairs, each with an ID number. The participant with the corresponding ID number would unmute themselves to do a self-introduction in the following format: “Hi, my name is [name], and I’m a [occupation]” using the information on the screen. The video was recorded in a side-by-side view using a speaker/slide ratio of 50:50. Participants were recorded presenting 2 separate self-introductions from each stimulus list and kept their video on during the recording; half the clips were edited after recording to create the video-off version. Thus, each participant’s face was only revealed once in each video, but their voice appeared twice. For the video-off version, an image of a gray head figure was added post hoc to mask the image of the speaker for each trial. For the video-on condition, the speaker was shown from the chest up. The recordings were checked and edited for quality and duration for each pair. Individuals with poor quality recordings (e.g., poor video, audio, or lighting) were identified and subsequently replaced. The length of the videos ranged from 3 min 29 s to 3 min 35 s in total. The pre-recording occurred in February–March 2022.

Memory Task

The memory recognition test was built in Qualtrics. There were 6 test versions in total. Each test included one sublist that matched the pairing shown in the video (i.e., intact), one sublist that was rearranged from the video, and one new sublist that was not shown in the video (see Figure 1). The two test versions for each encoding list were administered in a counterbalanced way across participants to minimize list-specific effects. Participants were instructed to respond “OLD” only to intact pairs and “NEW” to both rearranged and new pairs. Pairs were pseudorandomized manually to ensure no more than two pairs of the same characteristics (i.e., video on/off; pair type) were presented in a row. To avoid hyper-binding of pairs presented in temporal or spatial proximity, rearranged pairs were not within 5 pairs of each other in sequence during the encoding. Data for the memory testing were collected in May–September 2022.
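The run-length constraint described above was applied manually in the study; a check of that kind could be automated along the following lines. This is a minimal sketch, not the authors' procedure: the trial representation, the field names, and the reading of "same characteristics" as a separate limit per attribute are all assumptions.

```python
from itertools import groupby


def longest_run(values):
    """Return the length of the longest run of identical consecutive values."""
    return max((sum(1 for _ in group) for _, group in groupby(values)), default=0)


def satisfies_run_limit(trials, attributes, limit):
    """Check that no attribute repeats more than `limit` times in a row.

    `trials` is an ordered list of dicts; `attributes` names the keys to check
    (hypothetical names such as "video" and "pair_type").
    """
    return all(
        longest_run([trial[attr] for trial in trials]) <= limit
        for attr in attributes
    )


# Hypothetical test order with a limit of two identical values in a row.
test_order = [
    {"video": "on", "pair_type": "intact"},
    {"video": "on", "pair_type": "rearranged"},
    {"video": "off", "pair_type": "rearranged"},
    {"video": "off", "pair_type": "new"},
]
order_is_valid = satisfies_run_limit(test_order, ["video", "pair_type"], limit=2)
```

A candidate order that fails the check can simply be reshuffled and checked again until it passes.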

Survey Questions

The survey was built in Qualtrics. It included demographic questions (e.g., gender, age, and ethnicity) and questions on the level of perceived social engagement in general (i.e., how socially engaging you found the video, meeting, and Zoom session overall was?) and in video-on and video-off conditions (i.e., how socially engaging you found the introductions were where the speaker had their camera on/off?), based on a Likert scale of 1 (lowest) to 10 (highest).

The survey also included a number of technology-related questions (e.g., “What device did you use to access the Zoom meeting”; “Did you experience any difficulties with technologies that may have hindered your participation”) to check for any technological issues that may have impacted memory performance. The frequency analysis showed that a majority of the participants used a computer (desktop or laptop) to complete the task (98.40%), used full screen mode during encoding (94.40%), were able to clearly see and hear the video (with comfortable brightness and volume levels, 96.80%), did not experience any technological difficulties that hindered their participation (82.40%), and did not know any of the people in the encoding video in real life (98.40%). These results suggest that participants completed the task in a generally well-controlled testing environment, with proper technological set-up and support.

Importantly, the survey also included standardized scales to assess emotional wellbeing, including the Depression Anxiety and Stress Scale-21 (Henry & Crawford, 2005) and the pandemic-specific 6-Item De Jong Gierveld Loneliness Scale (Gierveld & Tilburg, 2006; Yang et al., 2022). It should be noted that none of the sociodemographic or psychological wellbeing (e.g., depression, anxiety, stress, and loneliness) variables correlated with memory scores (rs ≤ .156, ps > .086).

Memory Task Procedure

Encoding

Memory encoding was completed in online Zoom meetings with small groups of 2–8 participants assigned to the same counterbalance condition. Upon being registered to the study with informed consent through a Qualtrics form, participants were emailed scheduling information for the Zoom meeting and preparation materials (e.g., Zoom installation and set-up protocol, consent form), along with the Zoom meeting information before the session. While in the Zoom meeting waiting room, RAs changed the participants’ Zoom participant names into their assigned subject IDs. All participants were asked to have their camera on throughout the meeting. After a brief consent confirmation and Zoom tutorial, participants were asked to engage in a social check-in activity with the RAs by responding to the question “What is your favorite season and why?” in turn. They were then told a hypothetical socialization scenario (i.e., they have been invited to a virtual Zoom party and will need to remember the information introduced by the other attendees).

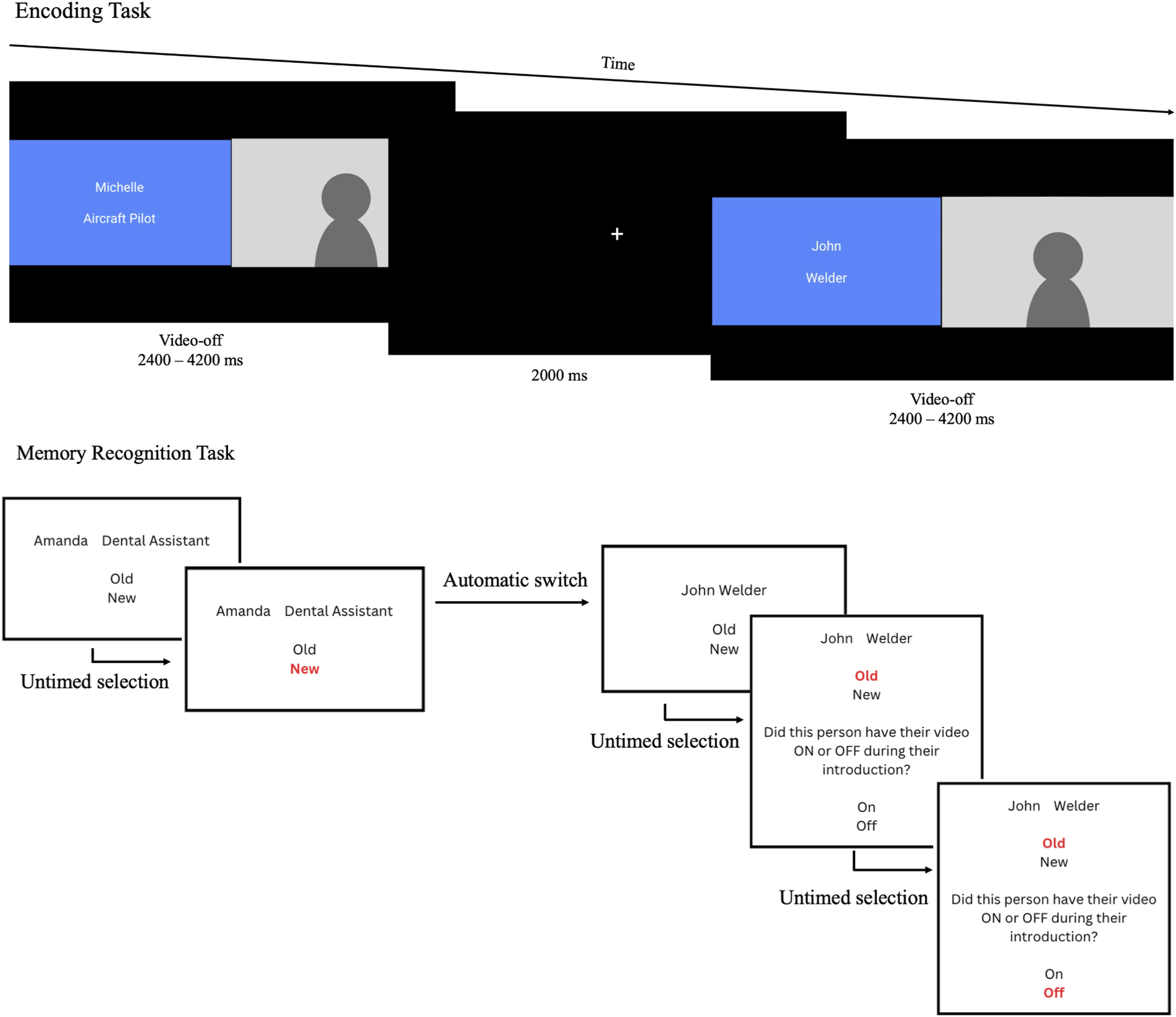

Next, participants watched the pre-recorded stimulus video (i.e., encoding). They were told not to write anything down while watching and that two RAs would be monitoring the session. The encoding video included 40 pre-recorded Zoom attendees, balanced for gender and age, introducing themselves with a name and an occupation (i.e., a name–occupation pair), half with video on and the other half with video off, following a pace of 2.4 s–4.2 s per pair to simulate a natural online social interaction. Between any two consecutive pairs, a 2-s black screen was inserted, with a fixation cross presented at the center. The length of the videos ranged from 3 min 29 s to 3 min 35 s in total. Figure 2 (top panel) illustrates an example pair shown during the encoding phase.

Figure 2. Example Pair Stimuli Presented during the Encoding and Recognition Tasks.

Note. Figure 2 only features the video-off condition for privacy protection purposes.

Following the encoding, participants responded to the second social activity question “What is your favorite movie and why/what is it about?”. These two social engagement check-in questions are typical in a natural socialization scenario and were thus used here to enhance the realism of the virtual socialization simulation. The two social activities were self-paced, and each typically took approximately 3–5 minutes in total to complete.

Recognition

Participants were given a tutorial on how to access the self-paced memory recognition and survey questions and received the test link and password via the Zoom chat. Pairs were sequentially presented as text only (i.e., the videos were not used as cues) during the memory test, and participants were instructed to decide whether each pair was old (i.e., intact) or new (i.e., rearranged, new) by clicking the corresponding option on the screen. For each pair where “OLD” was selected, a second question appeared in which they were asked to identify the source (i.e., whether this pair was introduced by a person with their video on or off during encoding). The task was self-paced. Figure 2 (bottom panel) illustrates the memory recognition task trial procedure. Attendees were muted to complete the online memory recognition task and the survey questions on their own, but were asked to keep their cameras on so RAs could observe and record any major distractions experienced while taking the test. They were instructed to raise their virtual or physical hand or message the RAs through chat to ask questions. Participants who had difficulties using chat were sent to a breakout room where they were individually assisted by an RA.

Data Analysis Approach

The data were analyzed in IBM SPSS 24. The name–occupation associative memory accuracy was indexed by recognition discrimination (i.e., hit rate – false alarm rate, Chalfonte & Johnson, 1996). Hit rate refers to the proportion of intact pairs recognized as old, whereas false alarm rate refers to the proportion of rearranged pairs recognized as old. Source memory was calculated as the proportion of correctly recognized intact pairs (i.e., hits) attributed to the correct sources (i.e., “video-on” or “video-off”). Each of these two dependent variables was submitted to a 2 (age: younger vs. older) × 2 (condition: video on vs. video off) mixed model ANOVA.
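The two dependent variables defined above can be illustrated with a short Python sketch for one participant and one video condition. The trial format and field names are hypothetical, the study itself ran its analyses in SPSS, and how rearranged pairs were assigned to a video condition is not specified here, so that grouping is left to the caller.

```python
def memory_scores(trials):
    """Compute associative and source memory scores from one set of test trials.

    Each trial is a dict with hypothetical keys:
      pair_type       - "intact", "rearranged", or "new"
      response        - "OLD" or "NEW"
      source          - "on" or "off" (video status at encoding; intact pairs)
      source_response - "on", "off", or None if no source judgment was made
    """
    intact = [t for t in trials if t["pair_type"] == "intact"]
    rearranged = [t for t in trials if t["pair_type"] == "rearranged"]
    if not intact or not rearranged:
        raise ValueError("need at least one intact and one rearranged pair")

    hits = [t for t in intact if t["response"] == "OLD"]
    false_alarms = [t for t in rearranged if t["response"] == "OLD"]

    hit_rate = len(hits) / len(intact)
    false_alarm_rate = len(false_alarms) / len(rearranged)

    # Source memory: share of hits attributed to the correct video context.
    correct_source = [t for t in hits if t["source_response"] == t["source"]]
    source_accuracy = len(correct_source) / len(hits) if hits else None

    return {
        "hit_rate": hit_rate,
        "false_alarm_rate": false_alarm_rate,
        "discrimination": hit_rate - false_alarm_rate,
        "source_accuracy": source_accuracy,
    }
```

Under these definitions, new pairs do not enter the false alarm rate, and source accuracy is undefined (returned as `None`) when a participant has no hits in a condition.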

Results

Normality Check

Histograms were used to assess normality. Accuracy was considered normally distributed for both video-on and video-off conditions. Source memory for the two conditions appeared slightly off normality (i.e., video off: skewness = −.775, kurtosis = −.413; video on: skewness = −1.349, kurtosis = 2.504), but these scores were well below the suggested cut-off scores of +/−3 for skewness and +/−10 for kurtosis (Kline, 2015).
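As an illustration of the check described above, the sketch below computes bias-adjusted sample skewness and excess kurtosis and compares them with the cut-offs of 3 and 10. The formulas are the standard adjusted sample estimators; whether they match the exact output the authors used is an assumption, and the function names are hypothetical.

```python
import math


def sample_skewness(values):
    """Bias-adjusted sample skewness (requires at least 3 values)."""
    n = len(values)
    if n < 3:
        raise ValueError("skewness needs at least 3 values")
    mean = sum(values) / n
    sd = math.sqrt(sum((x - mean) ** 2 for x in values) / (n - 1))
    if sd == 0:
        raise ValueError("skewness is undefined for constant data")
    z_cubed = sum(((x - mean) / sd) ** 3 for x in values)
    return n / ((n - 1) * (n - 2)) * z_cubed


def sample_excess_kurtosis(values):
    """Bias-adjusted sample excess kurtosis (requires at least 4 values)."""
    n = len(values)
    if n < 4:
        raise ValueError("kurtosis needs at least 4 values")
    mean = sum(values) / n
    sd = math.sqrt(sum((x - mean) ** 2 for x in values) / (n - 1))
    if sd == 0:
        raise ValueError("kurtosis is undefined for constant data")
    z_fourth = sum(((x - mean) / sd) ** 4 for x in values)
    scale = n * (n + 1) / ((n - 1) * (n - 2) * (n - 3))
    correction = 3 * (n - 1) ** 2 / ((n - 2) * (n - 3))
    return scale * z_fourth - correction


def within_cutoffs(skewness, kurtosis, skew_limit=3.0, kurt_limit=10.0):
    """Return True when both statistics fall inside the stated cut-offs."""
    return abs(skewness) < skew_limit and abs(kurtosis) < kurt_limit
```

Applied to the reported values, `within_cutoffs(-0.775, -0.413)` and `within_cutoffs(-1.349, 2.504)` both return `True`.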

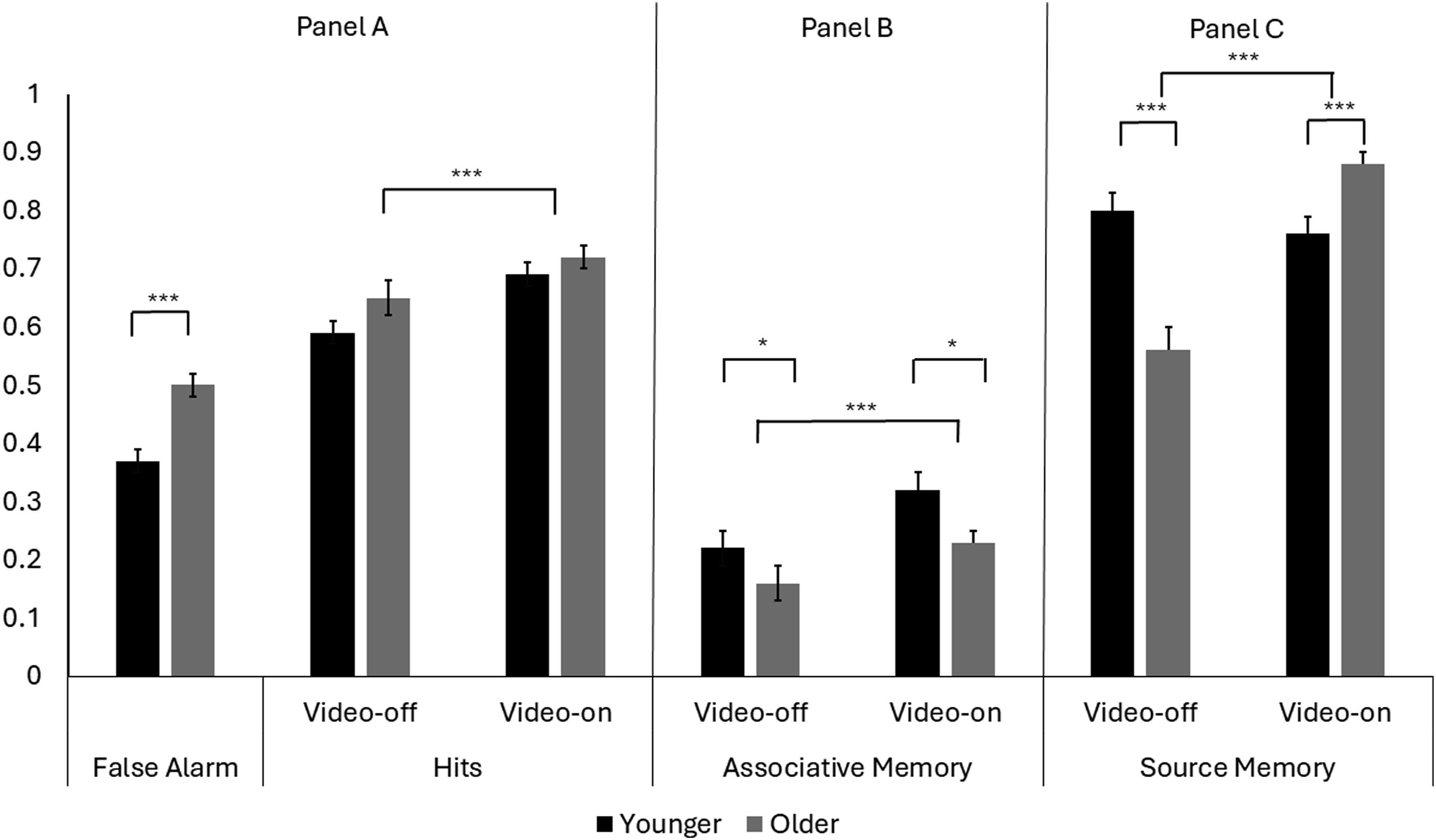

Associative Memory

The ANOVA on the recognition discrimination score revealed significant main effects of age [F(1,123) = 4.98, p = .027, MSE = .077, ηp² = .039] and video [F(1,123) = 16.97, p < .001, MSE = .028, ηp² = .121]. Specifically, younger adults outperformed older adults, and pairs were better recognized in the video-on than video-off condition. The interaction between video and age was not significant (p = .399), suggesting the video benefit was equivalent for younger and older adults. Separate analyses of hit and false alarm rates showed that although older adults showed a higher false alarm rate [F(1,123) = 14.37, p < .001] than younger adults, the two age groups did not differ in hit rate (p = .093). However, there was a main effect of video, with a higher hit rate for the video-on than the video-off condition [F(1,123) = 16.97, p < .001, MSE = .028, ηp² = .121]. Figure 3 displays the hit and false alarm rates (Panel A) and recognition discrimination scores (Panel B) across conditions.

Figure 3. Memory Accuracy Scores of Younger and Older Adults.

Note. Panel A presents false alarm and hit rates, with a significant age difference in false alarm rate (older > younger), and a significant video presence effect (video on better than video off) in hit rate. Panel B shows associative memory accuracy scores (i.e., hit rate – false alarm rate), with significant main effects of age (younger > older) and video presence (video-on > video-off). Panel C shows source memory scores (i.e., proportional scores of correctly recognized sources out of correctly recognized intact pairs), with a significant main effect of video presence qualified by an age by video interaction (younger > older in the video-off, whereas younger < older in the video-on condition). Error bars represent standard errors. *p < .05, **p < .01, ***p ≤ .001.

Source Memory

The ANOVA on the source memory score revealed a significant main effect of video [F(1,123) = 22.57, p < .001, MSE = .055, ηp² = .155], qualified by an age by video interaction [F(1,123) = 38.24, p < .001, MSE = .055, ηp² = .237]. Post-hoc pairwise comparisons with the Bonferroni correction found no effect of video in younger adults (p = .322), but older adults showed better performance in the video-on relative to video-off condition (p < .001). Furthermore, while younger adults (M = .80, SD = .21) outperformed older adults (M = .56, SD = .32) in the video-off condition, p < .001, older adults (M = .88, SD = .15) outperformed younger adults (M = .76, SD = .20) in the video-on condition, p < .001. Figure 3 (Panel C) presents the source memory scores across conditions.
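The reported effect sizes can be cross-checked against the F statistics with the standard identity ηp² = (F × df_effect) / (F × df_effect + df_error). The sketch below is a reader-side consistency check, not part of the authors' analysis, and the function name is hypothetical.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Recover partial eta squared from an F statistic and its degrees of freedom."""
    if f_value < 0 or df_effect <= 0 or df_error <= 0:
        raise ValueError("F must be non-negative and both df must be positive")
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# F values reported in the Results, all with df = (1, 123).
reported = {
    "associative memory, age": 4.98,
    "associative memory, video": 16.97,
    "source memory, video": 22.57,
    "source memory, age x video": 38.24,
}
recomputed = {
    label: round(partial_eta_squared(f, 1, 123), 3) for label, f in reported.items()
}
# recomputed values: .039, .121, .155, and .237, in the order listed above
```

The recomputed values agree with the effect sizes reported for each test.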

Supplementary Analysis

The first set of supplementary analyses examined the age group differences in background variables and the manipulation check. The one-way ANOVA revealed higher loneliness (p < .001) and anxiety scores (p = .002) in younger than older adults, but a higher perceived social engagement in older than younger adults for the video-off condition (p = .005). Consistent with our manipulation prediction, the paired samples t test showed that the video-on introductions (M = 7.16, SD = 2.20) were perceived as more socially engaging than the video-off introductions (M = 4.06, SD = 2.26) based on a Likert scale of 1–10, t(119) = 14.82, p < .001.

The second set of supplementary analyses addressed the possible impact of mental health conditions on the results. First, we reran ANOVAs on associative and source memory after excluding 9 participants (5 younger adults and 4 older adults) who scored in the “extremely severe” category on depression, anxiety, or stress based on their DASS-21 scores. The result pattern remained the same, suggesting that the extremely severe DASS-21 scores did not drive the memory results. Second, we reran ANOVAs on memory performance with loneliness and anxiety as covariates (given the age differences in these scores, Table 1). The main effect of age in associative memory (p = .047) and the age by video interaction in source memory (p < .001) remained significant, suggesting that these variables do not confound the primary results related to age differences.

Discussion

This study examined the effect of video presence on associative and source memory for name–occupation pairs in a simulated virtual socialization scenario among younger and older adults. The results suggest that video presence benefits associative memory in both younger and older adults, but benefits source memory exclusively for older adults. Strikingly, older adults outperformed younger adults in source memory in the video-on condition.

Age-Related Associative Memory Deficit

The results of the current study replicated previous findings that younger adults outperformed older adults in associative memory (e.g., James et al., 2008). This holds true regardless of the video presence conditions. This result can be explained by the associative deficit hypothesis (ADH) as greater difficulty in creating and retrieving links between units of information, resulting in a higher false alarm rate in older than younger adults (Delhaye et al., 2018; Fine et al., 2018; Naveh-Benjamin, 2000). Similarly, the current study revealed a higher level of false alarms in older than younger adults.

Additionally, the lack of an age by video interaction suggests that both age groups showed equal benefits of video presence on associative memory, and the additional contextual information provided by the presence of a face did not counteract the age-related differences in associative memory. This is in line with previous findings where younger and older adults experienced an equivalent benefit in associative memory for high-value relative to low-value information (Castel et al., 2016). The result is also consistent with a previous study that found equivalent benefit from the presence of visual cues on speech perception between younger and older adults (Smayda et al., 2016).

Source Memory

When the speaker’s video was off during the memory encoding, older adults experienced significantly lower performance in source memory relative to younger adults, replicating previous literature (Erngrund et al., 1996). However, when the speaker’s video was on, older adults significantly outperformed younger adults. This striking result suggests that video presence during a virtual meeting or social event is especially beneficial for older adults when monitoring and remembering the sources of the exchanged social identity information. These findings support our original hypothesis about source memory, as well as previous findings that including picture and audio conditions will benefit the memory of older adults when compared to an audio only condition (Smith et al., 2015).

One potential explanation for the source memory advantage of video presence in older but not younger adults is that video adds social context, and subsequently social relevance, as well as vivid visual context that can be particularly helpful for older adults’ memory (Cassidy & Gutchess, 2012). The visual and social salience associated with the presence of video may provide a rich context to encode the pairs by increasing the importance and overall value placed on the specific pair. However, the added video presence context was especially beneficial for older adults’ source memory but did not reduce age differences in associative memory. In support of this, it has been speculated that the age-related changes in source memory may be reduced when attention is explicitly directed toward the sources (Naveh-Benjamin et al., 2005). In the current study, associative memory recognition for the name–occupation pairs is independent of video sources. However, when source memory was assessed, participants were explicitly instructed to directly reactivate and recognize the video context sources in which the pairs were presented. Thus, the explicit direction to the source cue might be critical for reducing or even reversing the age difference in source memory. This speculation aligns with theories about the aging-associated reduction in attentional resources in older adults (e.g., Bastin, 2018), which may lead older adults to strategically allocate attention toward the information explicitly cued or signaled as relevant and important and thereby reduce their source memory deficits (Garlitch & Wahlheim, 2021). However, further testing would be required to verify this speculation.

Alternatively, it is also possible that determining whether a pair had been presented previously with or without a video (i.e., source memory) primarily relies on retrieval based on semantic familiarity, which remains relatively intact among older adults (e.g., Wong et al., 2021). This is because the source recognition does not require participants to retrieve specific face stimuli themselves. In contrast, associative memory tasks may require encoding and retrieval of very specific information and the specific associations. Thus, it is possible that source recognition in the current task is easier for older adults given their intact familiarity-based retrieval. This pattern has been found in previous literature, where memory of socially salient (GOOD vs. EVIL character of the speaker) sources remained intact, whereas memory for perceptual sources (i.e., identifying the speaker who introduced a specific face) declined in older adults (Rahhal et al., 2002).

Finally, as previously speculated, the extra socially meaningful visual contextual cues offered by the video (e.g., facial features, postural styles, and subtle emotions) might inform personally perceived identity and social significance (e.g., Cassidy & Gutchess, 2012). Supplementary analysis of the results showed that participants found the video-on condition to be more socially engaging than the video-off condition. Thus, associative pairs in the “video-on” condition might be perceived as more meaningful, which in turn may have alleviated the age-related challenges in source recognition. This is consistent with the previous finding that older adults’ source memory deficit was reduced for meaningful information (May et al., 2005). Taken together, it is speculated that video presence provides additional meaningful information that is particularly beneficial for older adults to track video-related sources/contexts.

Our findings are particularly relevant to research into virtual rehabilitation, where having a good relationship with the rehabilitator is necessary for treatment compliance (Berton et al., 2020). Our findings suggested that video presence may increase social engagement perception and memorability. Specifically, video presence is very important in virtual intervention or rehabilitation services and programs and has a positive impact on intervention/treatment compliance. Since the outbreak of the COVID-19 pandemic, there was a drastic surge in virtual care use (e.g., telecare, telehealth, and video medical call/visit), particularly among older adults in Canada (Bhatia et al., 2021). This makes it important to identify important factors for the quality and effectiveness of virtual health care. Previous work found both telephone and video communication with healthcare professionals could effectively reduce loneliness among community-dwelling older adults (Mierzwicki et al., 2024), but the addition of video did not have additional benefits when compared to the telephone communication. However, the results of the current study highlight the importance of video presence in information processing and memory among older adults. Taken together, these results suggest that although video presence might have no extra social benefit (i.e., reducing loneliness), it represents an easy but crucial approach to ensure memory efficacy of virtual communication among older adults.

Limitations

There are a few limitations in this study. First, item memory was not assessed, and thus associative memory could not be compared to item-specific memory to assess an associative memory deficit. Additionally, given the restrictive nature of the group virtual testing setting, we did not administer a global cognitive health screening test, which typically requires a one-on-one setting. Although participants self-selected during registration so as to exclude those with any previous neurological conditions, this does not rule out a possible confounding effect of cognitive impairment. Another limitation is that the simulated online meeting of several people did not offer participants the opportunity to respond and introduce themselves. This lack of realism may have reduced participants’ willingness to commit the attentional resources they would have used in a real-life scenario, where subsequent meetings are possible and remembering each person would therefore be imperative. Furthermore, participants were not asked to report their experience with, or frequency of, video-conferencing use. Although the recruitment material required potential participants to be “comfortable with using and have reliable access to Zoom” and a Zoom tutorial was also provided, varying levels of video-conferencing experience may have created additional stress or distraction during encoding. Moreover, the generalizability of our results may be restricted by the limited racial and ethnic diversity in our sample and by the smaller proportion of participants of lower socioeconomic status, owing to the required use of personal electronic devices.

Conclusion and Future Outlook

Despite these limitations, the current study revealed a novel finding: in a virtual social simulation, only older adults’ source memory benefited from video presence, to such a degree that they even outperformed younger adults in the video-on condition. Additionally, despite the lack of an age by video interaction, there is clear evidence for the benefit of video presence on associative memory in both younger and older adults. While it is speculated that video presence may enhance familiarity processes, which are relatively intact in older adults (e.g., Wong et al., 2021), future research may further examine the benefit of video presence on memory for more specific sources, such as features of the faces in the video (e.g., age or gender of stimuli). Future work may also test the effect of direct attentional allocation instructions at encoding and retrieval on older adults’ associative and source memory, and whether the effects generalize to still face images. Finally, future research may compare video-on and video-off conditions in virtual learning and information retention among both instructors and students.

Footnotes

Acknowledgments

We would like to thank Max Marshall, Stephanie Truong, Anastassia Trofimov, Linke Yu, and other research assistants who helped with data collection for this project.

Authors’ Note

Data reported in this article were previously presented at the International Convention of Psychological Science (ICPS) conference.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Natural Sciences and Engineering Research Council of Canada (NSERC) Discovery Grants (RGPIN-2014-06153, RGPIN-2020-04987) awarded to Dr. Lixia Yang.