Abstract

Intergenerational practitioners responding to a 2018 national survey identified a need for evidence-informed evaluation tools to measure program impact. The Best Practices (BP) Checklist, a 14-item (yes/no) measure assessing the extent to which an intergenerational program session maintained effective intergenerational strategies, may help meet this need. Yet, researchers have not validated the measure. In this study, we begin the empirical validation process by completing an exploratory factor analysis (EFA) of the BP Checklist to offer insight into possible item reduction and an underlying latent factor structure. Using BP Checklist data from 132 intergenerational activities, we found a 13-item, 3-factor structure, reflecting dimensions of: (a) pairing intergenerational participants, (b) person-centered strategies (e.g., selecting activities reflecting participants’ interests), and (c) staff knowledge of participants. Our study represents a foundational step toward optimizing intergenerational program evaluation, thereby enhancing programming quality.

Introduction

Intergenerational (IG) programs engage youth (24 years of age and younger) and older adults (50 years of age and older) in purposeful activity for mutual benefit (Generations United, 2018). Programs take many shapes. Some involve older adult mentors in schools (e.g., Experience Corps; Gruenewald et al., 2016). Others involve students visiting older adults in care settings to mitigate social isolation for both groups (Breck et al., 2018). Shared site care programs deliver services concurrently to youth and older adults in communal settings, such as child and adult day programs located in a single building (Butts & Jarrott, 2021). IG program results are generally favorable (Maley et al., 2017) with older adults demonstrating greater levels of generativity (Gruenewald et al., 2016) and young people demonstrating less ageist views of older adults (Gonzales et al., 2010), for example.

Despite demonstrated benefits, IG program guidelines and evaluation methods and tools are highly variable (Galbraith et al., 2015). A review of the literature reveals that most IG program evaluations lack a guiding theoretical framework (Kuehne & Melville, 2014) and involve small sample sizes and pre-post surveys focused on participant attitudes and program satisfaction (Jarrott et al., 2021; Maley et al., 2017). Measures are often developed for individual studies, thereby limiting generalizability. Moreover, contextual factors (e.g., time, training needed) influencing the implementation of IG program sessions are rarely measured and, thus, poorly understood (Galbraith et al., 2015).

A 2018 national survey of IG program providers revealed that major challenges of operating an IG program included (a) the lack of available, high-quality outcome measures and (b) difficulties accessing staff training materials (Jarrott & Lee, 2020). To understand the measurable impact of IG programming and to distinguish their programs from single-generation services, practitioners need evidence-based measures of implementation and outcome, but such measures are in short supply.

Practitioners’ challenges reflect those of researchers interested in valid measures specific to IG settings (Kuehne & Melville, 2014). Over 20 years of reviewing and conducting IG research, we have identified numerous qualitative process and outcome evaluations (e.g., Bernard et al., 2011; Breck et al., 2018; Bunting & Lax, 2019; Heydon et al., 2017). Quantitative, age-specific instruments have also been employed, with results reported for one age group and, occasionally, staff (e.g., Jarrott & Bruno, 2003). For example, a study of a multi-dimensional measure of childcare settings with IG programming identified features that support program quality, such as a consistent schedule (Epstein & Boisvert, 2006); the measure includes items specific to staff practice during IG programming. However, the measure has not been validated, nor have recently published studies used it. Although a recently published toolkit presents validated outcome measures used in IG research (Jarrott, 2019), these measures lack process indicators, leaving a gap in understanding how IG program outcomes are achieved.

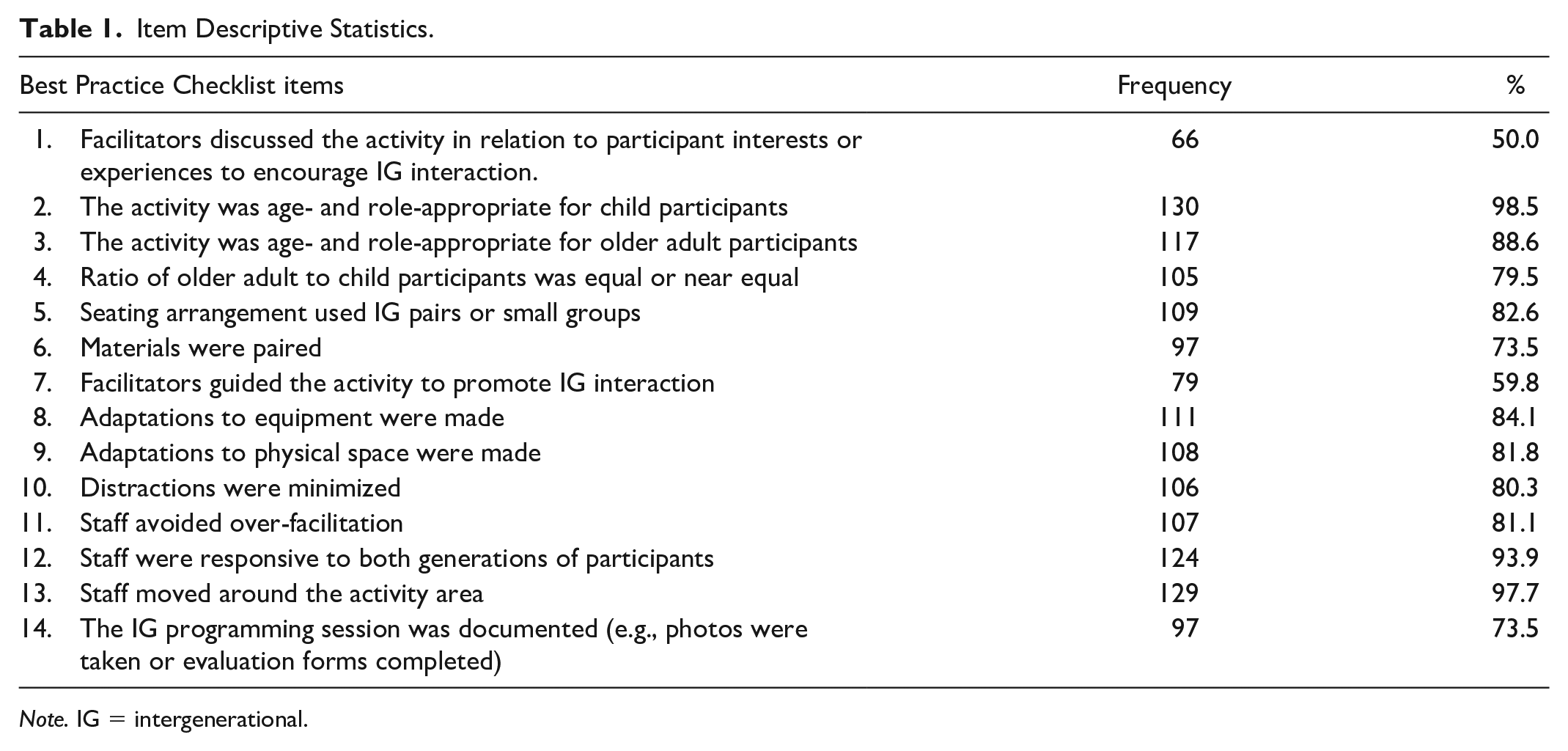

At the heart of IG programs are the program staff who organize IG program sessions and facilitate interaction among participants (Epstein & Boisvert, 2006; Heydon et al., 2017); staff significance is even greater when participants’ abilities are limited (Kitwood & Bredin, 1992; Lawton, 1982), such as with older adults who have memory and/or cognitive impairments. To enhance the replicability of effective staff-led IG programs, the first author led a multi-year effort to develop and test strategies that program leaders can adopt to enhance IG program participant outcomes (see Jarrott et al., 2019 for a detailed description). Investigators developed a framework that drew on evidence and theories of sociocultural learning (Vygotsky, 1978), environmental press (Lawton, 1982), and contact theory (Allport, 1954)—the most widely cited theoretical framework informing IG program research (Martins et al., 2019). Sociocultural learning theory supports the value of IG contact for children. Environmental press and contact theories detail conditions that support positive participant responses and acknowledge the importance of staff and other stakeholders. For example, the theory of environmental press informs practices of adapting a space to suit participants’ abilities and needs; practices related to offering age-appropriate roles and facilitating IG interaction are informed by contact theory. The resulting 14 strategies, herein referred to as IG Best Practices (BPs) (see Table 1), are considered to be the “core components” of IG programming that lead to desired outcomes.

Table 1. Item Descriptive Statistics.

Note. IG = intergenerational.

The first author’s team developed multi-modal training materials (e.g., in-person workshops, online modules, and IG activity guides) to optimize the implementation of these BPs by IG program staff. Of particular relevance for the current study, the BP Checklist was developed to measure the extent to which staff members implemented each BP during individual IG program sessions. When used by IG program staff, the BP Checklist can serve two purposes: (a) to capture implementation of each BP during IG programming and (b) to remind staff of the BPs covered in training materials. Trained outcome assessors who observe live or recorded IG program sessions for research purposes can also complete the BP Checklist, indicating which BPs were implemented by IG program staff. Prior analyses comparing staff to assessor ratings of BP implementation during the same IG program sessions yielded evidence that staff could consistently implement the majority of BPs when facilitating IG program sessions in the community (Juckett et al., 2021).

The BP Checklist shows promise as a tool that IG program staff and trained outcome assessors can use to measure adherence (i.e., fidelity) to IG program recommendations. Used in conjunction with participant-level outcome measures, the BP Checklist has the potential to serve as a critical tool for IG programmers and researchers; however, it has not been psychometrically evaluated. Accordingly, the current study presents an initial empirical evaluation of the BP Checklist. Conducting an exploratory factor analysis (EFA), we aimed to examine a possible underlying factor structure of the BP Checklist that could reflect constructs theoretically hypothesized to positively influence IG programming outcomes. We also aimed to identify individual items that could be removed from the BP Checklist to maximize its ease of implementation in IG program settings.

Method

Setting

At five sites where childcare centers partnered with an older adult program (i.e., adult day services, senior center, or volunteer program), we trained affiliated staff to use evidence-based BPs (see Table 1). Twelve staff had primary responsibility for leading IG programming sessions; ancillary staff or volunteers provided support. All lead facilitators were women, 25% of whom identified as persons of color. They possessed varied levels of education, usually in fields of child development, geriatric nursing, or education. Diverse IG programming sessions were offered at each site 1 to 7 times weekly involving music, movement, literacy, and craft activities, among others.

Instrument

Trained assessors used the BP Checklist (Jarrott, 2019) to document staff use of 14 BPs for each IG program session. Each practice was scored dichotomously (yes/no); items coded yes were present throughout the session (e.g., adaptations to physical space were made) or were noted to occur multiple times during the session (e.g., discussing the activity to reflect participants’ interests). The BP Checklist was completed for 132 live or video-recorded IG programming sessions, with approximately 65% coded from live observation. At each of the five sites, 22 to 32 IG programming sessions were observed between March 2013 and May 2016. Because observations were conducted as a program evaluation, informed consent from staff was deemed unnecessary. All study activities were approved by the Institutional Review Board at Virginia Tech (IRB 11-580).

Analysis

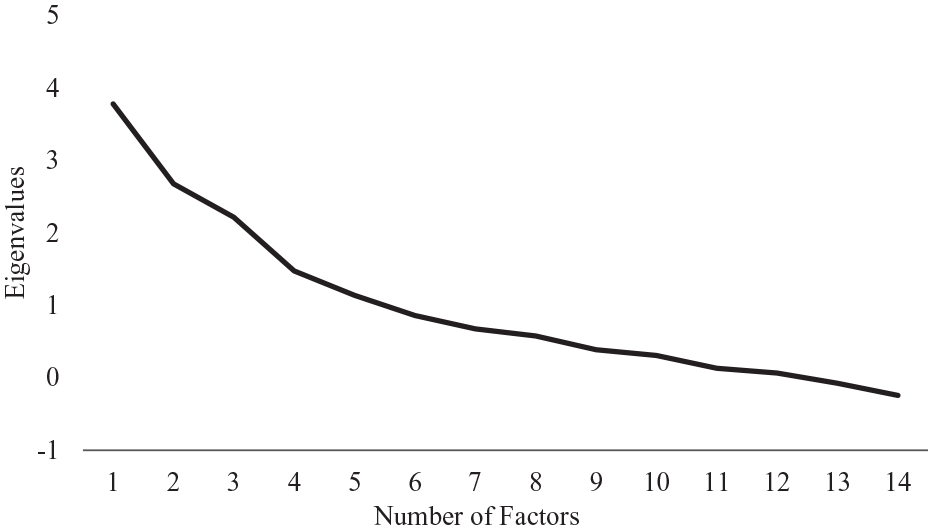

Initially, we generated a scree plot with all 14 items (Figure 1), which was ambiguous and lacked a clear visual indication of the number of factors (Thompson, 2004). Because five factors had an eigenvalue over 1, we began the EFAs with a five-factor solution to inform the factor structure and identify possible items to remove from the checklist. We used oblique rotation (GEOMIN) and weighted least squares mean- and variance-adjusted (WLSMV) estimation, which have been used in EFA of other instruments with dichotomous indicators (Moyo et al., 2018). We assessed global model fit through a chi-square test (with p-values greater than .05 meaning the model was not significantly different from a perfect model; Kline, 2005), the root-mean-square error of approximation (RMSEA; >.08 = poor fit, .05–.08 = acceptable fit, .00–.05 = close fit), the comparative fit index (CFI; <.90 = poor fit, .90–.95 = acceptable fit, >.95 = good fit), and the Tucker–Lewis index (TLI; <.90 = poor fit, .90–.95 = acceptable fit, >.95 = good fit). Where items loaded onto more than one factor at .4 or higher, we placed the item in the factor where it loaded most highly and/or made theoretically informed decisions about which factor was most congruent with the item. We used SPSS Version 27 to determine the extent to which BPs were implemented and to compute the Cronbach’s alphas of the eventual factors. We used Mplus to conduct the EFA.
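The fit cutoffs listed above can be written out as a small lookup. The following Python sketch is illustrative only and is not part of the SPSS or Mplus workflow used in the study; the function names are hypothetical, and assigning an RMSEA of exactly .05 to the "close fit" band is an assumption, since the stated ranges share that boundary.

```python
def classify_rmsea(value: float) -> str:
    """Label an RMSEA value using the cutoffs stated in the Analysis section."""
    if value < 0:
        raise ValueError("RMSEA cannot be negative")
    if value <= 0.05:  # assumption: the shared .05 boundary counts as close fit
        return "close fit"
    if value <= 0.08:
        return "acceptable fit"
    return "poor fit"


def classify_incremental(value: float) -> str:
    """Label a CFI or TLI value using the cutoffs stated in the Analysis section."""
    if value < 0.90:
        return "poor fit"
    if value <= 0.95:
        return "acceptable fit"
    return "good fit"


def chi_square_nonsignificant(p_value: float, alpha: float = 0.05) -> bool:
    """True when the model does not differ significantly from a perfect model."""
    return p_value > alpha


if __name__ == "__main__":
    # Arbitrary illustration values, not results from the study.
    print(classify_rmsea(0.06), classify_incremental(0.93), chi_square_nonsignificant(0.20))
```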

Figure 1. Eigenvalues from the 14-item EFA (n = 132).

Results

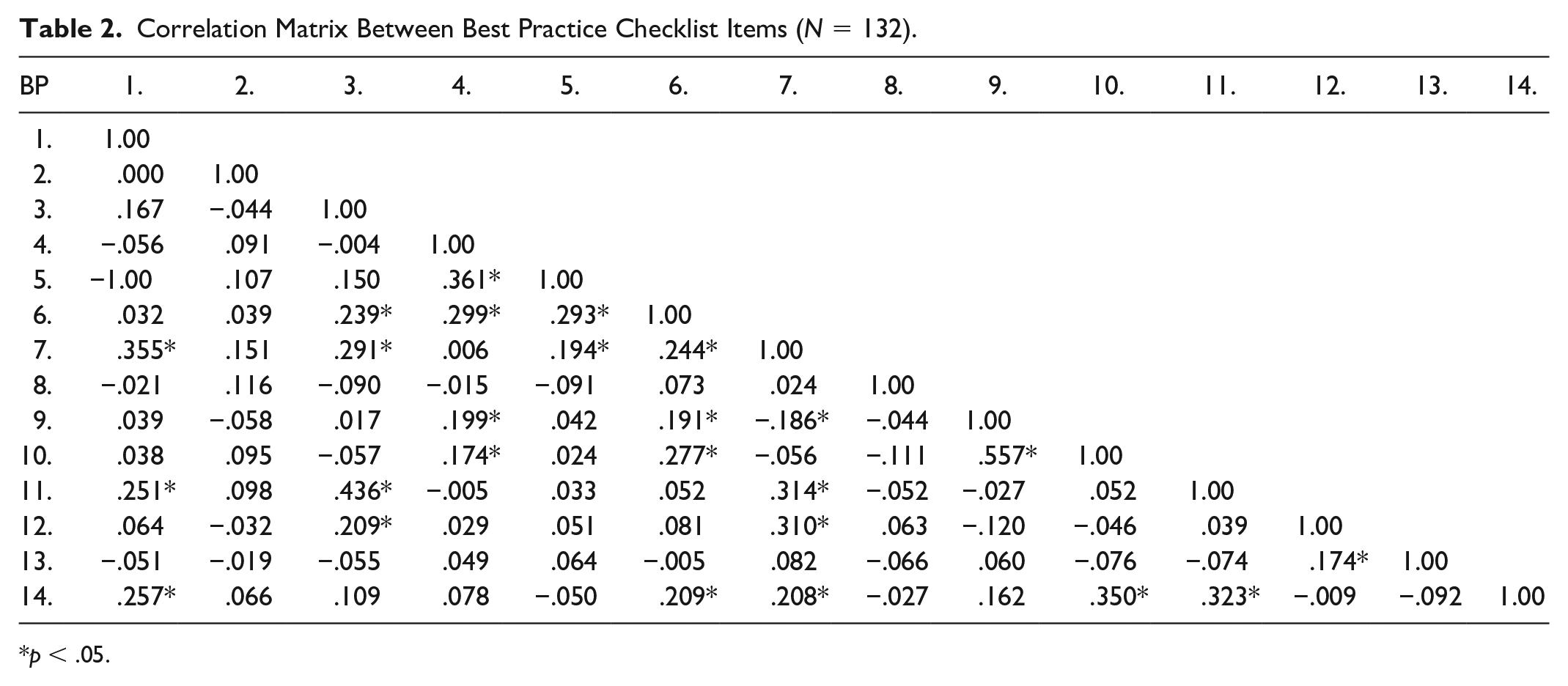

Table 1 indicates the extent to which BPs were implemented in each session, with some items (e.g., 2 and 13) being implemented nearly 100% of the time. Table 2 presents correlations between individual items.

Table 2. Correlation Matrix Between Best Practice Checklist Items (N = 132).

p < .05.

EFA

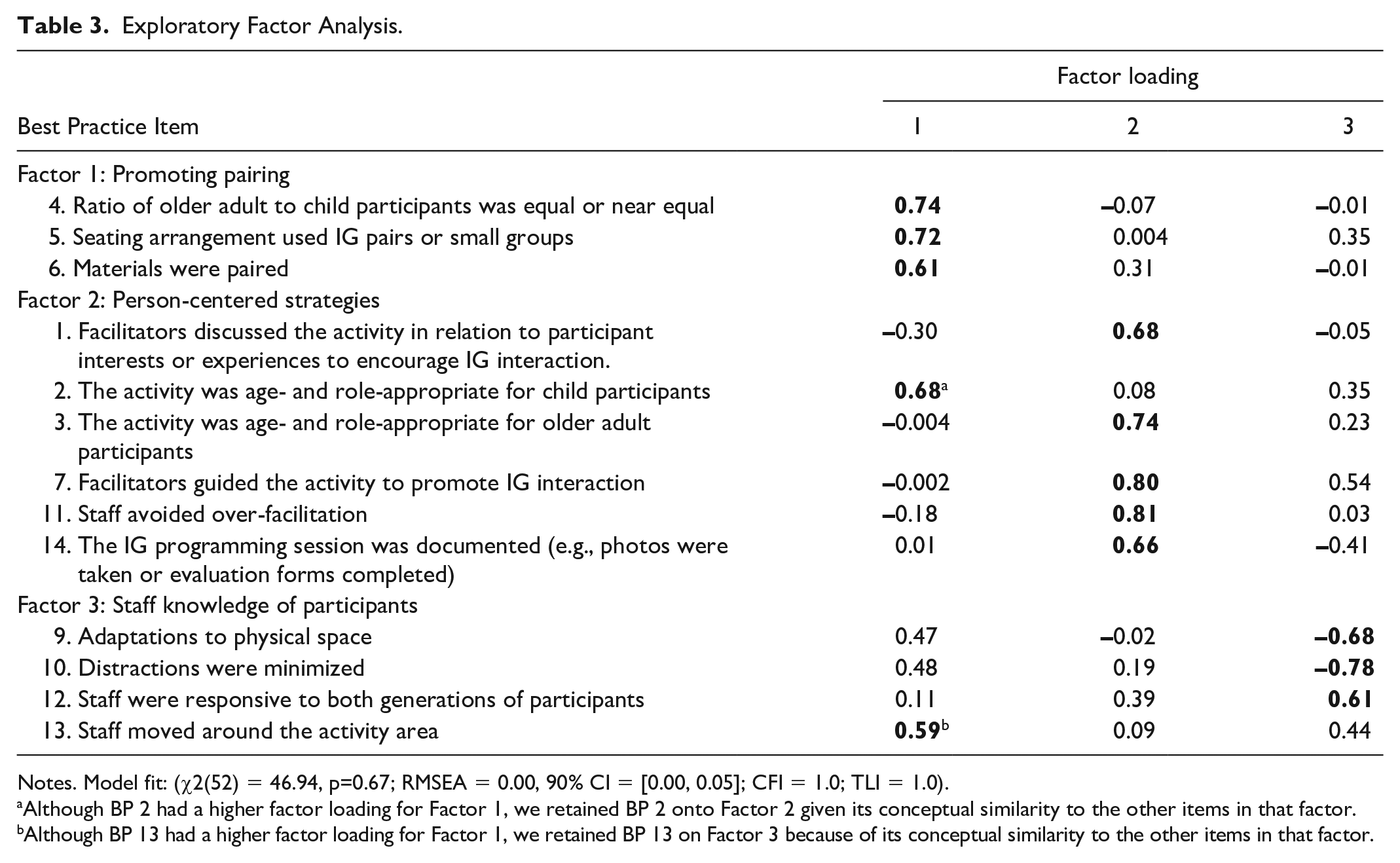

The initial five-factor model had strong model fit (χ2(31) = 26.78, p = .68; RMSEA = 0.00, 90% CI = [0.00, 0.05]; CFI = 1.0; TLI = 1.0). However, the fifth factor had only one item (BP2) that loaded at 0.4 or higher. Thus, we ran a four-factor model, which did not converge. We then ran the three-factor model, which had strong fit (χ2(52) = 46.94, p = .67; RMSEA = 0.00, 90% CI = [0.00, 0.05]; CFI = 1.0; TLI = 1.0), and each factor had at least 3 associated items. In the 3-factor model, BP8 did not load onto a factor. Thus, we concluded that the 13-item, 3-factor solution fit best.
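An RMSEA of 0.00 for both models is what one would expect when the chi-square statistic is smaller than its degrees of freedom. The sketch below uses the common textbook point-estimate formula, sqrt(max(χ2 − df, 0) / (df × (N − 1))), with the values reported above. It is an assumption that this formula approximates what Mplus reports: under WLSMV estimation the software works with an adjusted chi-square, and some implementations divide by N rather than N − 1. The function name is hypothetical.

```python
import math


def rmsea_point_estimate(chi_square: float, df: int, n: int) -> float:
    """Textbook RMSEA point estimate; truncated at zero when chi-square <= df."""
    if df <= 0 or n <= 1:
        raise ValueError("df must be positive and n must exceed 1")
    return math.sqrt(max(chi_square - df, 0.0) / (df * (n - 1)))


# Values reported in the text for the five- and three-factor models (N = 132).
five_factor = rmsea_point_estimate(26.78, 31, 132)
three_factor = rmsea_point_estimate(46.94, 52, 132)

# Both estimates are 0.0 because each chi-square is below its degrees of freedom.
print(five_factor, three_factor)
```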

In presenting the factor structure, we made two theoretically informed adjustments. First, BP2 loaded onto Factor 1 (0.68), but we placed it with Factor 2 (0.08) so that it was grouped with conceptually similar items. Second, though BP13 loaded more strongly onto Factor 1 (0.59) than Factor 3 (0.44), we kept it with Factor 3 so that it, too, was grouped with conceptually similar items. The statistically and theoretically informed solution follows.
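The assignment rule and the two adjustments can be illustrated with a short Python sketch. It is a simplified illustration rather than the procedure run in Mplus: the function name is hypothetical, comparing loadings by absolute value is an assumption, and only the loadings reported in the text are used (all other cells of the loading matrix are omitted).

```python
from typing import Dict, Optional

THRESHOLD = 0.4  # salient-loading cutoff stated in the Analysis section


def assign_item(loadings: Dict[str, float], override: Optional[str] = None) -> Optional[str]:
    """Return the factor for one item, or None if no loading reaches the cutoff.

    An override models a theoretically informed placement that takes
    precedence over the statistical one.
    """
    if override is not None:
        return override
    # Assumption: loadings are compared by absolute value.
    factor, value = max(loadings.items(), key=lambda pair: abs(pair[1]))
    return factor if abs(value) >= THRESHOLD else None


# Loadings reported in the text for the two adjusted items.
bp2 = {"Factor 1": 0.68, "Factor 2": 0.08}
bp13 = {"Factor 1": 0.59, "Factor 3": 0.44}

print("BP2 statistical:", assign_item(bp2))
print("BP2 reported:", assign_item(bp2, override="Factor 2"))
print("BP13 statistical:", assign_item(bp13))
print("BP13 reported:", assign_item(bp13, override="Factor 3"))
```

Keeping the override explicit makes it easy to see which placements rest on loadings and which rest on conceptual judgment.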

Factor 1

Statistically, Factor 1 included Items 4, 5, and 6 (α = 0.575; Table 3). Conceptually, Factor 1 items involve promoting intergenerational pair formation to encourage collaboration. The factor relates to the contact theory tenets of cooperation, a common goal (Allport, 1954), and friendship, such as sharing enjoyable activities (Pettigrew, 1998). For example, paired materials encourage interaction as IG partners collaborate on a shared activity rather than working in parallel to each other.

Table 3. Exploratory Factor Analysis.

Notes. Model fit: χ2(52) = 46.94, p = .67; RMSEA = 0.00, 90% CI = [0.00, 0.05]; CFI = 1.0; TLI = 1.0.

Although BP 2 had a higher factor loading for Factor 1, we retained BP 2 on Factor 2 given its conceptual similarity to the other items in that factor.

Although BP 13 had a higher factor loading for Factor 1, we retained BP 13 on Factor 3 because of its conceptual similarity to the other items in that factor.

Factor 2

Statistically, Factor 2 included Items 1, 3, 7, 11, and 14, with Item 2 added for conceptual congruence (α = 0.621; Table 3). Conceptually, Factor 2 represents person-centered strategies, that is, respect for and interest in the backgrounds, abilities, experiences, and preferences of older adult and youth participants (e.g., Kitwood & Bredin, 1992). To illustrate one practice (the facilitator discussed the activity in relation to participant interests), a staff member facilitated an IG programming session focused on modes of transportation by describing the children’s interest in cars and buses and asking an older adult participant to talk about their previous work as a bus driver.

Factor 3

Statistically, Factor 3 included Items 9, 10, and 12, with Item 13 included for conceptual congruence (α = 0.621; Table 3). Conceptually, Factor 3 represents facilitator knowledge of participants and how to respond to participants in any age group. For example, a skilled facilitator who is knowledgeable about the older adult participants with dementia with whom they work would be able to anticipate what might distract these participants from the focal activity and adapt the environment to minimize distractions.
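The α values reported for each factor are Cronbach’s alphas, which for dichotomous (yes/no) items are equivalent to the Kuder–Richardson 20 coefficient. The Python sketch below shows the standard computation using only the standard library. It is not the SPSS procedure used in the study; the function name and the small data set are hypothetical and invented purely for illustration.

```python
from statistics import variance
from typing import Sequence


def cronbach_alpha(rows: Sequence[Sequence[int]]) -> float:
    """Cronbach's alpha for a sessions-by-items matrix of 0/1 (no/yes) codes."""
    k = len(rows[0])
    if k < 2 or len(rows) < 2:
        raise ValueError("need at least two items and two sessions")
    # Variance of each item across sessions.
    item_variances = [variance([row[j] for row in rows]) for j in range(k)]
    # Variance of the summed score across sessions.
    total_variance = variance([sum(row) for row in rows])
    if total_variance == 0:
        raise ValueError("total scores have zero variance")
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical codes for six sessions on a three-item factor (not study data).
example_sessions = [
    (1, 1, 1),
    (1, 1, 0),
    (0, 0, 0),
    (1, 0, 1),
    (0, 0, 1),
    (1, 1, 1),
]

print(round(cronbach_alpha(example_sessions), 3))
```

Because alpha depends on the number of items as well as their intercorrelations, short factors of three or four items tend to yield modest values even when the items are reasonably related.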

Discussion

Our study represents the first step in validating a measure that captures IG program staff’s adherence to “core components” of effective IG programming. Testing the underlying structure of the BP Checklist, we identified three sets of items reflecting theoretical and empirical constructs that frequently inform IG programming. Further exploration of the BP Checklist is needed, as two items were positioned in factors theoretically but not statistically, and internal consistency is modest. Still, the high rate of BP implementation achieved and our EFA findings encourage additional investigation of the BP Checklist as a resource to practitioners and researchers, both groups having called for reliable, valid methods to evaluate IG program outcomes (Gerritzen et al., 2019; Jarrott & Lee, in press). The BP Checklist can shed light on the effectiveness of BP training and on contextual factors influencing implementation. For example, low BP implementation may suggest that training materials need refining or that other factors (e.g., physical space limitations) are impeding implementation. A recent scoping review (Jarrott et al., 2021) revealed that researchers rarely measure contextual factors, leading to insufficient understanding of how to improve IG programming. Incorporating the BP Checklist can help fill this knowledge gap. Used in tandem with participant-level outcome measures, the BP Checklist may also prove useful for assessing program quality by testing the association between BP implementation and participant outcomes.

A strength of the current study is that data were collected across multiple community settings where staff received training on the BPs. Though insufficient to conduct confirmatory factor analysis, the number of observations was larger than in most IG program studies (Jarrott et al., 2021). With more completed BP Checklists, confirmatory factor analysis may further validate the structure of the BP Checklist. Next steps include testing the psychometric properties of the BP Checklist and the feasibility of implementing it widely in diverse IG program settings.

Conclusion

IG programs are receiving increasing attention, including in the re-authorized Older Americans Act (Supporting Older Americans Act, 2020), for their potential to meet the needs of multiple generations in diverse communities. Validating measures for practitioners and researchers supports their efforts to assess whether and how such programs achieve their goals. The present study serves as a foundational step toward optimizing rigorous IG program evaluation and providing tools to enhance the quality of programming implemented with youth and older adult participants.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the USDA CYFAR Sustainable Community Project mechanism (USDA Award No. 2011-41520-30639).