Abstract

On platforms, workplace rules can be ambiguous and inconsistently enforced. This article examines the labor process within the “grey zone” of platform governance, bridging theories of organizational misbehavior and research on digital labor. We compare two groups of content creators, porn creators and viral entertainers, who earn a living by sharing pictures and videos on social media. Both strategically use sexual imagery to boost their visibility and income. While platforms restrict explicit content, workers perceive blurred lines between what is allowed and what is not, and they look for ways to push those boundaries. Based on 94 interviews and ethnographic research, we identify a three-step process of strategic risk-taking: creators edge against the rules, floodgate successful strategies, and recuperate after receiving sanctions. The “grey zone” allows workers to test and break rules, which ultimately benefits the platform by keeping both users and creators engaged. By conceptualizing platforms as grey zones, we connect the digital labor process to value production in the platform economy and show how grey zones perpetuate the growth and interests of capital.

Attention is a double-edged sword for digital laborers who make a living by posting content on social media platforms. They seek to harness users’ attention, which is the primary scarce resource in the “attention economy” (Goldhaber, 1997). However, the most attention-grabbing content is often prohibited and penalized by platforms. How, then, do online creators navigate the paradox of a pursuit that can easily border on the illicit?

This article outlines the labor process of evading rules in a new workplace, the digital platform. We center our analysis on the case of sexual content, because it is formally prohibited on platforms like Facebook and Instagram, but sex can also be a tool for creators to capture attention, if they can deploy it without crossing the line. As studies of algorithmic management have found, the line between permissible and illicit is blurred, because on platforms, rules are ambiguously worded, constantly changing, and inconsistently enforced (Duffy, 2020; Gillespie, 2018; Vallas & Schor, 2020). As a space with vague rules, platforms are a “grey zone” (Dieuaide & Azaïs, 2020) in which workers learn which rules can be broken, or at least bent. Organizations often deliberately construct grey zones to allow for some amount of misbehavior which can be beneficial to companies (Anteby, 2008). On social media, the grey zone of platform governance allows for some amount of sexual content.

This should come as no surprise, given the centuries-long entanglement of sexuality and commerce. The historian Peter Bailey, writing on Victorian barmaids, observed that bar owners strategically deployed feminine sexuality in a form he called parasexuality: sexuality that is “deployed but contained,” or in common terms, a show of “everything but” actual sex (1990, p. 148). Endemic to capitalism, parasexuality draws attention to a wide range of goods and services for sale, from waitresses in restaurants and nightclubs to flight attendants on airplanes and hotel concierge staff (Mears, 2014). Similarly, social media platforms deploy and contain the display of sex. We demonstrate how content creators produce parasexuality in the grey zone of platform governance.

We study the labor of content creators as they “play in the grey” (Hoang, 2022), that is, as they deploy sex in ways that verge on being illicit. Drawing from ethnographic observations and 94 interviews with content creators, we examine how workers perceive rules and respond to their ambiguity. After a review of literature on grey zones at work, we describe our cases. We leverage a comparison of two types of content creators: high-performing entertainers posting viral videos and sex workers posting pornographic content. Our comparison follows the best practices of paired case comparisons in previous studies of workplaces (Bechky & Okhuysen, 2011; Bechky & O’Mahony, 2015). Sex is our analytical point of comparison across these two types of workers, as it allows us to see common strategies among workers who seek to increase their visibility with prohibited tactics. Our findings unfold with a three-part process of strategic risk taking we outline as edging against the rules, floodgating successful strategies, and recuperating after sanctions. Ultimately, the grey zone allows workers to test and occasionally break the system's rules, and these activities in fact benefit the platform by keeping both users and creators engaged. We conclude with a discussion of how grey zones can advance labor studies in the age of platform capitalism, specifically by linking the digital labor process to rule breaking and the political economy of value in the platform economy.

Literature: From Organizational to Platform Grey Zones

Workplaces, like any modern bureaucracy, have rules. But rules do not always divide behavior into black and white. Rules can create grey zones, where illicit behavior thrives. Rule-breaking is a classic concern in the sociology of work because it is a fulcrum of labor control in capitalism. We extend the concept to the study of contemporary platform labor, in particular, social media content creators.

Grey Zones and Rule Ambiguity at Work

The idea of a “grey zone” describes situations of rule ambiguity, such as the unclear rule of law in geographies between state borders (Mazarr, 2015), financial investments that neither comply with nor break global regulations (Hoang, 2022), and the moral ambiguity between good and evil in a concentration camp (Levi, 1988).

Organizations often deliberately construct grey zones, because ambiguity grants actors leeway for rule-breaking that may be useful and profitable. In workplaces, grey zones occur when rules and norms are ambiguous or not strictly enforced, allowing for advantages to certain workers. Adia Harvey Wingfield, for instance, describes the informal rules at workplaces as “grey areas” which benefit White workers (2023). Furthermore, workers’ misbehaviors – the breaking and bending of rules and the exploiting of loopholes – are rampant. Scholars have identified systemic “organizational misbehavior” like recalcitrance, pilfering, soldiering, and sexual misconduct on the job (Ackroyd & Thompson, 1999; Vardi & Wiener, 1996).

Grey zones are commonly tolerated by management, because they are beneficial (Anteby, 2008; Ditton, 1977; Mars, 1982; Pollert, 1981; Roy, 1959). In manufacturing, managers tolerate some level of theft on the shop floor (Anteby, 2008), or they allow for time wasting like the game of “banana time” described by Donald Roy (1959), because these keep workers engaged. In some cases, workers and employers collude on rule-breaking in order that both may profit. For example, casinos allow card dealers to cheat to increase their tips, which lowers casinos’ labor costs (Sallaz, 2002). As another example, profanity may be tolerated as it reinforces community bonds at work (Fine & Corte, 2024). Non-compliance often smooths business operations. Consider truck drivers: they fudged paper logbooks to appear in compliance with the law while still making deliveries on time. Today, under digital surveillance, some drivers engage in “decoy compliance” by putting up a sticker in the cab to suggest they are following the rules (Levy, 2024).

Because grey zones and their attendant forms of misbehavior are embedded in specific modes of management (Ackroyd & Thompson, 1999), we can expect them to evolve under algorithmic management.

Platform Labor, Algorithmic Management, and Ambiguous Governance

The grey zone is a useful analytic to study platforms, which must manage both workers and consumers. In the framework of surveillance capitalism, platforms extract surplus in the form of users’ data and attention, which they can sell to advertisers (Zuboff, 2019). Social media platforms in particular must incentivize creators to keep posting new and engaging content, in order to keep users coming back to their sites.

On platforms, the rules are often ambiguous and inconsistently enforced by remote-working humans and algorithms. Algorithms are generative rules, that is, rules that exercise power and shape future realities in a mechanistic way, unlike other forms of human-made rules (Schwarz, 2021). In traditional employment, managers directly guided workers’ behaviors. On platforms, workers interpret the rules often with no guidance from human managers (Manriquez, 2019), but by following nudges, rewards, and sanctions from algorithmic management (Cameron, 2024). The lack of human oversight can make it difficult to contest or even understand managerial decisions (Kellogg et al., 2020), giving more room for workers to interpret or perceive rules in various ways.

Workers also experience arbitrary enforcement of rules, which, unlike in traditional factory settings (e.g., Burawoy, 1979), are unstable on platforms (Ravenelle, 2019). Platforms can change rules without notice or explanation, prompting scholars to describe gig work with metaphors of opacity such as “invisible cages” (Rahman, 2021, 2024) and algorithms as “black boxes” (Christin, 2020).

Platforms also change the nature of the employee-employer relationship, which Dieuaide and Azaïs (2020) call a “grey zone” in their study of digital gig workers like housekeepers, whose work takes place inside clients’ homes and where managerial power is unclear (Pulignano & Morgan, 2023).

Faced with ambiguous algorithmic rules, some workers may feel alienated and powerless (Glavin et al., 2021), while others find creative ways to resist (Lei, 2021; Wood et al., 2021), such as by “gaming” algorithms (Cameron, 2024; Curchod et al., 2020; Rahman, 2021). Hence, platforms both afford autonomy to workers and exert control over them (Schüßler et al., 2021). This is especially evident among content creators on social media platforms.

Contradictions of Content Creation on Social Media Platforms

To date, studies of algorithmic management have concentrated on online gig work such as Uber, even though this represents only a small share of the workforce (Piasna et al., 2022). In comparison, social media content creation has grown exponentially, with an estimated 200 million creators worldwide, and the job of “influencer” now ranks among the most desirable careers for young people (Hahn, 2019; Linktree, 2023). Content creators, people who seek to earn money from their social media postings, do not compete for gigs as rideshare and food delivery workers do; instead, they compete for users’ attention. Content creators upload videos and pictures with the goal of attracting views and clicks for direct and indirect profits. Platforms like Facebook and Twitter organize the digital marketplace for user attention by capturing and measuring audiences, and harvesting their data into assets (Helmond et al., 2017). Platforms collect and display for creators their performance metrics, like view counts and user reactions in the form of likes, comments, and emojis. The higher their view counts and followers, the more successful content creators are (Christin & Lewis, 2021; Levina & Arriaga, 2014).

To get attention, creators must make engaging content. Generally people pay more attention to affectively-charged content, such as outrageous or sexually explicit videos (Berger & Milkman, 2012). This means that creators have an incentive to post prohibited imagery, like eye-catching sexual scenes. Social media platforms must manage this key problem: to show users the content that they want, without showing content which the platform doesn’t want (e.g., illegal, unsafe, and offensive content). Many rules are informed by advertisers and the state, because platforms need to appeal to brands and comply with the law.

To manage this problem, platforms develop governance regimes, which include the rules, procedures, and penalties around user behavior and content. Such rules protect the “digital market-fortress” of social media platforms, so that the assetization of attention can run smoothly (Willis, 2023). On Facebook and Instagram, the rules take the form of the Community Standards; on Twitter, the “Twitter Rules.” They are an expansive set of dozens of individual prohibitions spread across six categories including “Violence and Criminal Behavior, Safety, and Objectionable Content.” All told, on Facebook, they include over 50 distinct rules which are frequently updated. Many of the prohibitions are highly contextual and arbitrary. For instance, women's nipples cannot be shown except in moments of breastfeeding or protest, but men's nipples can appear freely (Gillespie, 2018; on TikTok see Biddle et al., 2020).

Platforms use both humans and algorithms to sort through the vast amount of content users post (Gillespie, 2018). Recommender algorithms promote popular content; on YouTube, for example, 70% of what people watch is recommended to them by an algorithm (Popken, 2018). At the same time, moderation algorithms detect, suppress, and flag prohibited content, which then goes into review by human moderators. Algorithms are imperfect detectors; they often confuse categorically distinct things, like satire and misinformation, or, in one study, cockfights and car crashes (Seetharaman et al., 2021). Content made for English-speaking North America and Europe, as in our study, is subject to stricter, more sensitive detection than content made for less dominant countries, where problematic content often goes without penalty (Perrigo, 2019). As such, much platform misbehavior goes undetected, and the rules are inconsistently enforced.

Thus specific grey zones emerge around sex. Since the lines between permissible and prohibited content are blurry, AI is imperfect, and content moderation is not transparent (Gillespie, 2018; Poell et al., 2022), we expect creators will see opportunities to break the rules in pursuit of attention.

To date, studies of content creators have documented strategies to break platform rules. For instance, creators making prohibited far-right content look for weaknesses in algorithmic surveillance when posting (Gibson, 2022; Marwick & Lewis, 2017). Content creators have been described as “gaming the algorithm,” which platforms allow so long as they don’t outright violate major rules (Bishop, 2019; Cotter, 2019; O’Meara, 2019). Other scholars have documented how users seek visibility in ways platforms find objectionable, like influencers who play a “visibility game” to get seen on Instagram (Cotter, 2019), or who use sexy clickbait images to get promoted on YouTube (Bucher, 2018). While the term “grey zone” has been applied to unclear copyright protections on music-sharing platforms (Brøvig-Hanssen & Jones, 2023), these case studies have not yet offered a systematic analysis of labor and misbehavior.

Producing and Policing Sex on Social Media

Sexual content creators are especially policed. Commercial sex and sexual content are prohibited on most social media platforms since the passage of FOSTA-SESTA (The Allow States and Victims to Fight Online Sex Trafficking Act [FOSTA] and the Senate's Stop Enabling Sex Traffickers Act [SESTA]) in the U.S. in 2018. FOSTA-SESTA mandated companies to curb online sex trafficking. The law criminalizes sex work by conflating it with human trafficking. 1 As such, social media platforms are mandated by law to prohibit any content that promotes commercial sex. On Facebook, any content that promotes sexual services is considered “Sexual Solicitation” and prohibited as “Objectionable Content.”

Platforms are quite intolerant of sex workers (e.g., Blunt et al., 2021), yet sex is pervasive in visual culture and on the Internet. Hence, sexual content creators face the central problem of how to “play in the grey,” that is, how to navigate the opaque rules of platforms in order to maximize revenues and minimize risks of getting punished (Stegeman et al., 2024).

Our focus on sex emerged empirically in the data, but sexual misbehavior has been well-documented in other workplaces, since sex is a central if often unacknowledged part of the organization of work (Connell, 2015). Workers often play with the boundaries of sexual propriety, as Pollert (1981) documented in a 1970s British factory in which women flirted and used sexual banter with their bosses. They “knew how far they could go,” Pollert writes, but if it “went too far, the rules of the game could snap” (1981, p. 144).

In the following findings, we show how content creators develop a sense for how far they can go in an algorithmically-managed game of showing the right amount of sex.

Methods and Cases

The first and third authors independently conducted interviews and fieldwork on two cases, entertainers who make viral videos on Facebook (FB) and sex workers who make pornographic content on Instagram (IG) and Twitter (now X). Both porn and viral creators are “digital laborers” who produce original content for social media platforms (Scholz, 2013). Even before the Covid-19 pandemic, entertainers and sex workers were turning to online work in high volumes (Cunningham & Craig, 2019; Poell et al., 2022; Slayden, 2010; van Doorn & Velthuis, 2018). Like other workers in cultural production for whom “influencer creep” is reshaping work, creators must do multi-layered labor, a combination of previously discrete jobs like production, filming, acting, and marketing (Bishop, 2023; Dumont, 2016), as we describe below.

Viral Entertainers on Facebook

Viral creators make original entertainment videos to post to monetized pages mainly on Facebook. In December 2020, first author Ashley Mears gained access to a production company, pseudonymously called “Magic Media Productions.” Known as a “content farm,” Magic Media manages over 150 monetized pages and about 180 creators as independent contractors. The company shares skills and resources in exchange for a commission from the pages’ earnings (akin to a talent agency). Based in Las Vegas, the company also shares professional data analysis, props, and work spaces like a “collab house,” which is also a crash pad for out-of-town creators. The company also has institutional ties to platforms; for instance, it has access to a Facebook representative devoted to assisting top-performing creators.

Magic Media's mainstay is short, scripted videos ranging in length from 3 to 20 minutes, such as prank videos, food hacks, magic tricks, and scripted dramas. Several of their top pages have over 10 million followers; collectively, their pages got between 5 and 7 billion views monthly, and creators’ median monthly earnings per page were $30,000. In mid-2021, over 90% of their pages grossed more than $5,000 per month, and 6% of them earned more than $200,000 a month. Viral creators are clearly outliers, because they have managed to capture exceptionally high attention. They are “winners” in the winner-take-all creator economy which has few well or even decently-paid creators (Jin, 2020).

Having agreed to the terms of the study which included a limited non-disclosure agreement, Mears was granted access to Magic Media's closed Facebook group for observations, including over a dozen hours of training videos on how to make content. She kept systematic notes in a field journal highlighting relevant themes as they emerged inductively. All names of viral creators are anonymized to protect their privacy.

She also interviewed 60 of them (48 on Zoom), in interviews lasting between one and two hours. Included were six viral creators and managers who work at competitor companies. About 80% of the interviewees have professional backgrounds in entertainment as magicians, singers, comedians, filmmakers, and dancers. Mears conducted in-person observations of their work in Las Vegas on six field trips between March 2021 and August 2024, each lasting 5 to 10 days, to film with them, including during key events called “Meet Ups” that involved upwards of 60 creators filming in small teams, in addition to extended conversations at their almost nightly rituals of hanging out and discussing their work.

Porn Creators on Twitter and Instagram

Porn creators upload self-produced pornographic content and short clips of studio-produced content mostly to Twitter and Instagram in order to attract paying customers to other subscription-based sites like OnlyFans. Between January and May of 2019, third author Thao Nguyen interviewed 34 participants in the adult entertainment industry in North America and Europe and conducted five months of ethnographic fieldwork, mostly virtually (on Twitter, Instagram, and in sex workers’ online forums), supplemented by in-person observations at four sex-positive events in Chicago and New York. Nguyen gained access to this hard-to-reach population through email introductions and by posting a recruitment call on social media. The majority of her participants maintained an online presence and spent a significant amount of time on social media for business-related transactions and interactions. She used online participant observation to build rapport, connect with potential interviewees, and stay up-to-date about their work, following research practices of virtual social worlds (Boellstorff, 2012).

The interviews lasted between thirty minutes and two hours. The pool of interviewees includes people with backgrounds as porn directors, producers, performers, public relations professionals, and CEOs and founders, referred to here with the umbrella term “sex workers.” Throughout fieldwork, she kept detailed notes of interactions and events that emerged in the digital space and triangulated that information with her participants during interviews.

Unlike viral creators, porn creators do not have as much financial success, and not all of them are motivated by money alone, because some of them also pursue goals like personal pleasure and social justice. Most of the creators in Nguyen's study work in the indie porn market that is tailored to queer, feminist and non-heteronormative sexual desires. With smaller audiences, they are more structurally disadvantaged than those in mainstream porn.

Paired Case Comparison

Both studies were conducted independently and cleared by our universities’ review boards. Our comparative approach emerged from conversations with second author Elif Birced who identified analytic points of convergence across our cases. A key and surprising point of comparison was the strategic use of sexual content, which appeared in the data as both a resource and a risk. While the creators use sex to varying degrees, both push boundaries around rules in comparable ways.

We began our analysis with this shared theme and uncovered several overlaps and divergences, coding sex as a tool for gaining attention and, like other scholars, deliberately centering sex in the study of platform labor (Rand, 2019). Once we identified sex as an organizing principle, we explored other similarities and differences, following best practices in the matched pair approach (Bechky & O’Mahony, 2015). Both types of creators navigate ambiguous platform rules about showing sexual content, with “how much” and “how” emerging as critical themes.

Through shared transcripts and fieldnotes, we developed co-written analytic memos, identifying differences such as their reliance on sexual content. For porn creators, sexual imagery is central, while viral creators use sex sparingly as one of many tools to attract views. The two groups also monetize differently: viral creators earn directly from the platform and its in-stream ads, earning a share of ad revenue when ads roll on their videos. 2 Porn creators, in contrast, direct customers to subscription sites like OnlyFans, since their explicit content is prohibited on major platforms. Viral creators often receive direct support from platform representatives, while porn creators lack such resources. Both groups developed strategies to navigate ambiguous platform rules.

Findings

Content creators strategize which risks are worth taking through a trial-and-error process. We begin by showing how content creators confront and interpret platform rules as a grey zone. Next, we show a three-part process of risk-taking. First, they innovate new ways to break rules by edging towards the boundary of what is not permitted. Next, they imitate peers’ successful rule violations, leading to new forms of illicit content we call floodgating. Finally, when they or their peers go too far and receive a sanction from the platform, they recuperate by reverting to “PG” content, or by migrating to other platforms.

Part 1: Confronting Rule Ambiguity, Sanctions, and Illicit Incentives

All creators, whether advertising their porn or trying to make viral entertainment videos, talked of rule ambiguity. They saw that platform rules were often vaguely stated, and that their enforcement was inconsistent, yet sanctions could be severe. They also saw incentives for deviance, in particular by posting sexual imagery, if they could get away with it.

Working primarily on Facebook because of its high adshare payments, viral entertainment creators intended to make users “stop the scroll,” as one put it, and to “hook” a viewer into watching their videos. To do so, they sought to arouse affect, which can heighten viewers’ attention, and they drew on a variety of sentiments: curiosity, outrage, sentimentality, and sexual excitement. They made strategic choices on their thumbnails, titles, emojis, hashtags, storylines, and so on. Among their tools was sexual imagery, even just the use of low-cut blouses on women and “butt shots,” like a camera focusing on a person's backside. This aligned with their core assumption that feminine sexiness hooks attention, as Jake, a viral creator, explained: “It's entertainment, and sex is always gold in entertainment.”

But they were also keenly aware that Facebook's Community Standards prohibited sexual content, among many other prohibitions. The viral creators talked about the Community Standards with utmost seriousness. They were the first thing that Mears was assigned to study when she got access to join their company as a creator. In Las Vegas, two creators had printed out the Community Standards in color and put them in a big binder on top of their kitchen counter, full of neon post-it notes sticking out of the sides. Throughout his almost-weekly updates to creators on their closed FB group, the head of Magic Media often exclaimed things like: Re-read the Community Standard Guidelines right now. I know, you think I'm talking to somebody who is not you. But I'm not. I'm talking to every single person. If you get a violation and a page is demonetized, then none of your other work matters. – Ryan, October 2021, FB group post

… we can’t even really talk about sex at all without getting in trouble, like it's in their Terms of Services now. Right now there's actually a lot of censorship on social media like Twitter and Instagram. Instagram, especially just being out right and saying, “We don’t want sex work.” So, for instance, they don't allow nudity…And they’ve never allowed pornographic content.

Since porn creators cannot monetize their prohibited “sexually explicit” content directly on social media, they use platforms as showcases to attract customers to their other income-generating sites like OnlyFans and “clip sites” like ManyVids. Social media is so important that, as one porn creator put it, “Social media is your job, and then porn comes second.”

Furthermore, platforms like Instagram outsource content governance to users; any user can click a “Report User” button on any page or profile to initiate a process of profile scrutiny. Because the community polices itself this way, any profile can be targeted by personal or political enemies, which gives sex workers a heightened sense of vulnerability given the social stigma of their work. This was a concern to LJ, an amateur independent pornographer since 2013, who explained that Instagram is a great way to build a following with content on Stories, a feature of the platform in which “you can highlight something specific at a particular moment in time and catch people's eyes.” Growing a following this way takes time, but losing an account can happen immediately: So Instagram is still a somewhat useful tool, but you have to be careful with it just because of censorship. And so many people are having their accounts either targeted by individuals that are going after them just because they are a sex worker, because they're a pornographer, or I've heard instances of that. …You can’t figure out who the person is that's targeting you, and you can’t really like address anything… it seems like one person can single handedly just like, take down a profile. Without any real investigation into it whatsoever.

However, social media is vital for sex workers such as porn creators to attract paying clients, similar to cultural producers in general who advertise their work on socials (Bishop, 2023). This was summed up by porn creator LK: We're doing digital marketing without most of the tools accessible to people who do digital marketing in any other industry. So we can't market our porn on Facebook. … So there's also a monitoring of your social media presence.

Sanctions for posting sexual content could be severe, and these were highly variable across platforms. Platforms issue penalties depending on the perceived seriousness of the violation and their frequency, following a model of procedural justice, from demonetization of a video to the permanent deletion of an entire page. 3

Within any single platform, there are tiers, or layers, of penalties: some violations bring severe punishment, as does a record of multiple “flags” or “strikes” for perceived smaller offenses (Caplan & Gillespie, 2020). On Facebook, mild violations like subtly sexualized content result in partial or full demonetization of content, that is, the video won’t receive any ad money. More serious offenses, such as perceived violations of human rights, violations of speech or acts against protected classes (e.g., expression of racism, sexism, homophobia or anti-Semitism), or the promotion of sexual trafficking or violence, including depictions of forced touching, can result in a Community Standards violation. This results in suspending monetization of the entire page for a period of time and can even result in permanent closure of the page. In between those two outcomes – demonetized content and page deletion – there is a range of sanctions.

For instance, among the viral entertainment creators, one of their videos was sanctioned on Facebook with limited demonetization because it featured cuddling between two consenting adults in bed, but the page continued to earn money from other videos. But when a page is demonetized for a more serious Community Standards violation, the entire page and all of its earnings are frozen. This sanction can last from a few days to several months, and the reason for that variable length is not always clear.

Porn creators in particular spoke about their fears of severe penalties and “getting into trouble,” as Chulita put it, meaning the many punitive measures that are determined by AI and communicated via automated messages. The worst punishment is account deletion, a blow to a sex worker's livelihood, and perceived as an unjust punishment by Kutie, who worked as a performer, content producer, and cam model: Instagram and Twitter can just take away, delete your account, suspend you, because you may have had a nude, you may have had a nipple slip or somebody reported a picture of you completely. I had a friend who had hundreds of thousands of followers just get deleted, and she lost a bunch of her income. Because if you lose your followers, you lose your regulars, you lose people who may have been interested in that, you lose the people who could have referred you. You lose everything basically. So it's really sad what social media does because we rely so heavily on it. As soon as it's taken away from us, or if we're not, you know, using Instagram for a week, not tweeting for a week, you can see just your content not being sold at all…

Another severe but ambiguous punishment is “shadow banning,” when a profile's reach gets reduced or is no longer visible (Gillespie, 2022). As the term shadow banning implies, creators are not notified of their demotion, but rather the system simply hides their content without disclosing why or if their reach has been deliberately reduced (Cotter, 2021). This leads creators to seek out assistance from online communities and fellow sex workers to cross-check the visibility of their accounts. As Alexis explains: They don’t want us using their platform. But they’re not going to come out and say that. …They’ve been doing a thing called shadow banning, where you're not actually banned from posting anything or using the website anyway. But people won't be able to find you when they search for you. …. Because I used to gain, I would say, 10,000 followers every few months. And now I only have around 80,000 followers. And it's been more or less than that number for a while, like several months. And I didn’t notice immediately, but it definitely has affected my sales. ” an ominous post about surveillance on social media, which received 1,437 likes.

Hence, viral and porn creators have clear incentives to post sexual content to “catch people's eyes,” as LJ put it regarding porn content. As Jake said, “sex is gold” regarding the production of viral content. Sex is a tool to get more attention and more profit for both types of creators. Yet they face severe penalties for posting sexual content. Inside this contradiction, workers were also aware of ambiguity about the rules. Porn creators noted that they could in fact get away with risque content on some platforms, depending on the moment, while viral creators frequently got flagged for videos they thought were fine, or “PG.” Sometimes, a video may have gained millions of views several months ago, but it could be flagged today for a violation, resulting in page suspension. Reasons for getting flagged were often delivered in vague automated messages, or in the case of shadow banning, no explanation at all. This ambiguity made the platform governance regime into a “grey zone” of illicit yet sometimes tolerated behaviors.

Part 2: Edging

Seeing platform governance as a grey zone, creators develop strategies to push against the rules. We call this edging, that is, “pushing against the edges of what the management system [allows],” as Cameron describes in her study of deviant Uber drivers (2024, p. 488). The term edging also comes from a YouTuber who explained that the platform algorithm “rewards you for being an edge lord,” that is, getting as close to the edge of the prohibitions as possible (reported in Caplan & Gillespie, 2020, p. 5).

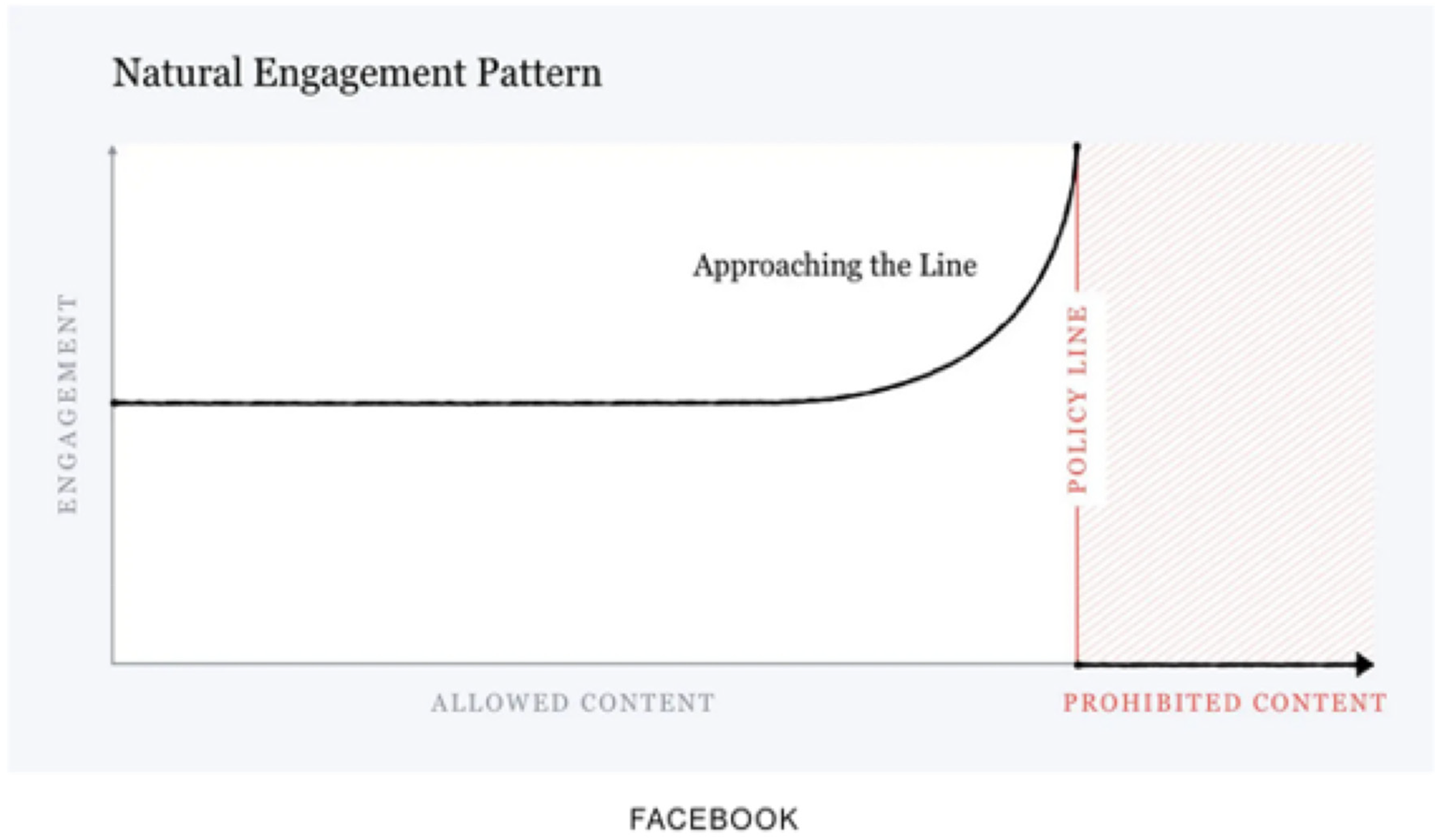

Facebook's own communications with creators highlights how the platform incentivizes edging. The company released a graphic, shown below (Figure 1), depicting how user engagement increases as content approaches the prohibitions (in Gillespie, 2022, p. 5). 4

“Natural Engagement Pattern” from Facebook, 2018.

As a labor strategy among creators, edging takes many forms. One popular strategy among viral entertainers is to take a conventional video genre like “unboxing,” when someone unwraps or opens a package to reveal a surprise inside, and spike it with sexual innuendo or imagery, as in a video of a woman post-plastic surgery unwrapping bandages on her body to “reveal” her new ostensibly bare breasts or butt, which are never actually revealed. Or, they take the genre of physical comedy, like a video of someone falling down, and they film it poolside with the suggestion of nudity. This was the strategy behind one popular video titled “Her BIKINI RIPPED,” viewed 153.6 million times in five months, which filmed a prank of a bikini-clad woman slipping on a banana peel at the pool. In both innovations, the viewers anticipate seeing a naked woman's body, which entices them to watch the video to its titillating end, which is never as promised.

For instance, a viral creator named Margo made one high-performing live stream video of herself getting ready to take a bath titled “She doesn’t know her camera is still on,” suggestive of a spy camera that secretly films women in the bath. In the video, Margo wears a bathrobe, faces the camera, and, pretending not to notice it recording, proceeds to spend the next 18 minutes brushing her hair, putting lotion on her hands, and shaving her face, while the bathtub water runs. The video received over 2,500 comments from (rather frustrated) viewers. As Margo knows, getting undressed and bathing on camera violates the Community Standards, but anticipating these acts is allowed. She explained why she decided to make that video, which was edging towards “Objectionable Content”: [The viewers] think you’re gonna get naked. I don’t know how I feel about it. It's icky… but simply by being a woman with breasts, people wanna watch you. Like even being in a bathing suit, they’re like, “Ooh, what if nipples show?” That's what people are watching for.

To edge towards the prohibited boundaries requires creators to imagine how rule-enforcers, both human and algorithmic, will “see” their videos. For example, during her ethnography, Mears filmed a prank skit in which a man swaps raw chicken into his wife's box of bra pads; a discussion ensued about whether or not to show the box, which displayed a woman wearing only the peach-colored pads. They decided against it when Jude, a fellow content creator, invoked the “proletariat” image of the human moderator (Schwarz, 2019): It's not nudity, by any stretch, but we are not dealing with the law about what is and isn’t nudity. It's some Karen sitting at her computer clicking four videos a minute, at a call center somewhere sitting at her small cubicle, just clicking yes, no, yes, no: It's what she says is nudity that matters.

By envisioning the rules and their enforcement, these creators are highly strategic in identifying how to edge and against which rules. For instance, they show near-nude bodies in the contexts that algorithmic and human detection will likely allow. They film women in revealing outfits like bikinis only in swimming contexts like at the beach or poolside to lower their risks of getting flagged, so an algorithm will better distinguish a bikini (permitted) from underwear (prohibited).

Edging even manifests in seemingly small and innocuous directorial decisions - voyeuristic camera angles, low-cut blouses, seductive body postures - that are intended to heighten viewers’ anticipation of prohibited imagery in otherwise “clean” videos, i.e., those that are not sexual in any other way. On set, one entertainment creator named Alexa helped to film a cooking video of “foot-made” guacamole: a woman sat on top of a kitchen counter with her feet in a bowl of avocados, with the camera pointed at her from below. Alexa approved: “People will think they’re gonna see up her skirt,” but she advised the cameraman, “But don’t actually show [up her skirt].”

Magic Media even circulated data insights about its top performing videos, which had thumbnail images featuring breasts, butts, legs, or mouths, which they called “BBLM” imagery for short. Viral creators were encouraged to limit their use of BBLM imagery, mostly deploying it in “clean” videos, such as in cooking videos, to decrease the risk of a Community Standards violation. This resulted in a style of cooking videos in which women prepared meals in the kitchen wearing low-cut shirts, or filmed from behind while wearing tight yoga pants. Jude, a content creator, explained the editorial choices he made when he finished filming a video: Obviously [when we filmed] we had you with your back facing the camera on purpose… To me it's not porn, it's what's attractive. So how do we make ourselves the most attractive to the audience?

Thus, these creators took selective risks: they weighed the costs and benefits of publishing potentially explicit content. In fact, when one creator uploaded a video that got hit with a sanction, another fellow creator consoled him on their group page with this message: You’ve only taken calculated risks, good ones. Onward and upward!

Porn creators similarly engage in a trial-and-error process of innovating strategies to edge past the boundaries. They too calculate the most eye-catching thumbnails, emojis, and texts they think they can get away with. Careful not to reveal that they are sex workers, these creators signal their commerce in other ways, like with strategic names, euphemisms, and emojis like the 💦 (sweat droplets), 🌶️ (hot pepper), or 🍑 (peach) to convey, respectively, bodily fluids, passion, and buttocks. For instance, Amy Foxxx, a Playboy Model and Producer with more than 130k followers on Instagram, chose not to use her full stage name in her profile but rather calls herself “Amy Fox triple ex.” This means only people with select knowledge can readily find her Instagram. Another top performer in the industry, Asa Suzuki, with 2.3 million followers on Instagram, does not post sexually explicit pictures, but only writes in her bio the following line: “I have an award-winning asshole.” These practices help hide their identity as sex workers from the platform regulators, though they are easily discernible by fans and clients.

In this way, porn creators online face a problem of visibility similar to solicitation in real life. They need to be semi-visible in public to attract clients but invisible to authorities or other hostile people.

This point came out in an interview with Courtney, a queer performer and film company owner: I just pretend I’m not a porn star and skirt around it… But social media is a place where we kind of just have to use code words. Like, wink wink [😉] or peach [🍑] emoji. Hopefully, that doesn’t look like peddling or something. But basically, I feel like sex workers are having some of the same problems on social media that they would have like, in a casino, or a bar or a strip club if they’re being accused of peddling their wares in a public place.

Viral creators, we have seen, infuse sex into conventional genres; among porn creators, the reverse happens. They infuse their sex-themed pages with conventional content to appear more “normal.” Alexis described her posts: “it can be silly pictures, like ‘Here's my morning breath!’” In a so-called “good morning post” like a photo of her lying in bed in sexy pajamas, Alexis asks followers to say good morning back in the comments to increase her page's engagement. Sex workers feel pressure to constantly post about their personal lives to sustain engagement on their pages, ultimately with the goal of directing users to paid websites. Ellie, a former stripper and porn creator in the MILF genre, said: There's promotion where you’re saying okay, here's my porn…here's how much it is and you should buy it. And then there are other types of activities…where it's just exemplifying my personality and getting people to like me and also interacting with people.

Porn creators also use evocative imagery with care. They show skin but often hide their nipples with an X-mark, blurred effects, or emojis. They maneuver around algorithms which flag certain words. Instead of using “sex” or “porn,” they may write “s*x”, “p*rn”, or NSFW (Not Safe For Work), or “sexy” instead of sex. These adaptations constantly change, as platforms frequently update their lists of flagged words. LJ explains his process of self-censorship on Instagram as “surgical:” So you have to be somewhat careful. You can’t just like blatantly market anything on Instagram, but you can still use it in a kind of like a very precise kind of surgical way.

While edging against the rules, both viral entertainment and porn creators try to look as though they are within the boundaries of the rules. In this way, they exemplify parasexuality, the centuries-old strategy in commercial settings of deploying but not “fully releasing” sexuality (Bailey, 1990), in order to maximize attention.

Part 3: Floodgating

Upon finding an opening to edge past the rules, creators then rush to replicate any successful strategy, which leads to new genres and modes of depicting illicit content. We call this “floodgating,” a situation where a small action triggers a large and sudden release of more of the same actions, in this case, new labor strategies to evade the rules.

Imitation is central to this dynamic. When creators see one strategy work, they copy it. Viral entertainment creators are highly attuned to what others are posting, and they are in frequent communication with each other about what works and doesn’t work, for instance on closed group chats.

Consider one discussion in the viral entertainers’ group about whether they could post “fake blow job” videos, also called “road head videos,” which simulate oral sex in public, like on a bus or at a pool, only to be revealed as a misunderstanding by the video's end. For a few weeks, they had been noticing that a top competitor had posted a series of highly successful viral videos in this style. Facebook prohibits the portrayal of oral sex, whether real or simulated, under its “Adult Nudity and Sexual Activity” Community Standards. But it seemed that when filmed as a prank, fake blow job videos could be a route to virality and its financial rewards. So for a few short months, many Magic Media creators leaned in heavily to this genre, posting videos such as “I can’t believe she did THAT on a public bus…,” where a woman leans into the lap of a fellow passenger in a suggestive way, but by the end of the video, people realize she has been petting his dog. The video was watched over 35 million times in its first month. But soon after, the director of Magic Media noticed that other platforms were cracking down on these “PG-13 videos,” and he reasoned Facebook would soon flag them too, so he advised everyone to stop posting in this style. Hence the brief flood of road head videos came to an end.

The road head video trend exemplifies how responsive creators are to other creators’ successes and failures as they floodgate illicit strategies. It also illustrates how the community plays a role in helping workers decide what and how to imitate. Creators are constantly discussing with each other why one video gets flagged when another doesn’t. Or they ask one another, “does this video cross the line?”

For porn creators, similarly, floodgating involves exploiting the permissiveness for sexual content unique to each platform. As they skirt around the rules, porn creators notice that rule enforcement is inconsistent. Some content that seems to be an outright violation could go unpunished, for instance on Twitter. Porn creators look for and exploit such inconsistencies, and they pay close attention to differences in tolerance across platforms. Mahx, a director of a queer and BDSM porn company, said, “Now the most we can hope for is a platform not saying anything and letting things slide,” meaning that they look for which platforms allow leeway for creators to post explicit content.

On platforms where they perceive greater permissiveness, porn creators post their most explicit content, as was the case on Twitter at the time of our study. Some of them even posted outright sex scenes on this platform. Like all platforms, Twitter did not openly allow posting pornographic content. But neither did Twitter impose an outright ban on “sensitive content” except in some highly visible forms like live videos, and images for profiles, headers, and banners (Twitter Help Center, 2024). When a profile was flagged for a potential violation, Twitter notified its viewers, “This profile may include potentially sensitive content” but viewers could still view the profile. Accordingly, sex workers put their more explicit content onto Twitter, while they censored themselves on other platforms, like Instagram, so as to not be seen as “too porny” and avoid the stricter sanctions on Meta-owned companies. Said Alexis: So what happens is we go on [Instagram], and we make our accounts with either just completely PG photos, or more often than not, like censored photographs of ourselves.

There is a sharp contrast in their strategies on Twitter, where the floodgates are more widely open and porn creators exploit their chances to be seen while they can. For example, King, co-owner of a Black-owned production company, explained why he posts explicit sex scenes on Twitter: We have to always look at it from this angle: someone in a decision-making capacity is not going to support us. So you know, from that, nothing lasts forever. You know, when we're on a social media platform and it's working really well, like use the hell out of it until it's no more, you know?

LJ also elaborated on how sex workers maneuver the rules on Twitter more easily in comparison to Instagram: On Twitter, as long as you do mark your profile insensitive, and anything that's publicly visible like your main profile picture or a profile header, as long as there's no nudity in there, you can promote pretty much anything and everything on Twitter.

Like viral creators, porn creators share and consult with each other in online groups such as closed Instagram group chats or through Twitter posts to see which strategies work. Through this interaction, they collectively find ways to adapt and work around rules, fostering a shared learning process for managing platform ambiguity as they engage with both the platform and one another.

Part 4: Recuperating

Finally, when creators or their peers innovate or imitate strategies that are not what the platform wants, they may receive a sanction, at which point, creators must recuperate. Recuperation involves recovering from a penalty and re-aligning to be in compliance with the platform's rules. In the face of demonetization, shadow banning, and account deletion, creators often quickly revert to “PG” content, or they migrate to other pages and platforms.

The problem of recuperation for both entertainment and porn creators often begins with trying to figure out if they have been penalized, and for which violations. This is especially difficult in the case of shadow banning, around which confusion and ambiguity abound, as in porn creator Kutie's experience: I'm not even 100% sure, but a lot of people say that they have been shadow-banned. And it's never happened to me personally. But I know a lot of people don't ever show up on my feed, you know, they just, they don't appear. They post things. But it's not notified to me that, yeah, when you go on to their page, you're like, “Oh, I didn't know you had been posting this whole time. It's not being promoted.”

“Am I shadow banned?” is essentially a question of ambiguous rule enforcement, as creators try to decipher if they are being sanctioned. Like porn creators, entertainment creators sometimes could not tell if their content wasn’t reaching audiences because the platform was restricting their reach, or if their content just wasn’t “good” anymore to reach audiences. For example, two creators, Alexa and Westin, posted an incest-themed video featuring a brother and sister getting married. “Aww, the incest video was my favorite,” Alexa recounted, and though it went viral, they noticed an immediate drop in their reach for weeks afterward, indicative to them that the platform shadow banned them, but they never learned if the platform was actually punishing them. Meta and YouTube have acknowledged, albeit quietly, that they take a “reduction” approach to content moderation by limiting the reach of sensitive content (Gillespie, 2022).

Hence, shadow banning is sometimes called stealth banning or ghost banning because it happens without notification or explanation, leading creators to seek out assistance from online communities of fellow creators to cross-check their visibility (Pezzutto, 2019). For example, Erika, one of the most successful indie porn directors, started a hashtag campaign called #biasedbanning in April 2019 to help fellow creators understand this invisible system of governance.

After receiving a penalty, even an ambiguous one like shadow banning, viral creators and porn creators have different strategies to recover. Viral creators typically try to salvage their Facebook page by easing off of the offending content. They “clean up” the page by posting consistently “PG” content, to restore what they call “page health.” This was Alexa and Westin's strategy after their incest video and their ensuing poor performance. For weeks they tried to build their page's health back up with “clean” content that in no way breaks the rules. It worked, and their high reach eventually resumed.

Porn creators are more likely to migrate to other platforms when they receive a strike. Because they do not get paid directly by a platform as viral creators do, they are more nimble in being able to move to other platforms. Still, migration is a major disruption, as Allie, a porn creator for three years, explains of having to restart everything after her account was deleted on Instagram: It was like having the rug pulled out from under you out of nowhere and just having to like completely rethink and redo everything, my email, my phone number … Watching all your community get kicked off social media, no longer being able to reach clients anymore at all. …I have been robbed since FOSTA-SESTA.

Porn creators were nimble and quick to maneuver rules across platforms, which makes sense as their income does not come directly from ad revenue, as was the case for viral creators on Facebook. From Skype to Tumblr to Instagram to Twitter: when one opportunity closes, porn creators are ready to migrate to another. It was remarkable, in fact, how quickly and casually porn creators listed the rules and strategies to maneuver across various platforms, always ready to shift strategy, or as King put it, to “change at the drop of a dime.” In anticipation of getting deleted, they create backup accounts and mention these in their bios, also listing links to their other sites - ManyVids, OnlyFans, Discord, Twitch, TikTok, Amazon Wish List, Premium Snapchat, iWantClips, and formerly, Switter. Engaging on multiple platforms simultaneously, or multi-homing, ensures greater survival of their online presence, akin to delivery or ride-share platform workers who ‘double dip’ and rely on different platforms to get by financially (Ravenelle, 2019; Schor et al., 2020).

Entertainment creators also engage in a form of diversification, but they do so within the Facebook platform. Given their reliance on Facebook's high pay rates, many establish backup pages as a precaution against potential sanctions. If one page is demonetized, they switch to the backup page, using it as their primary account. This strategy is a common recuperation tactic among creators. Magic Media, for instance, secures multiple pages for its successful creators, posting riskier content on the less valuable pages to offset potential losses.

Because of their success, Magic Media had access to a Facebook representative for troubleshooting, and creators frequently sought help from this “rep” to appeal violations, though often with limited results. In contrast, sex workers lack this access. They rarely have platform support and must rely on AI systems for appeals. Unlike viral creators, they are not part of organized companies and have no direct recourse. Instead, they support each other through advocacy groups like the Adult Performer Advocacy Committee, and connect to each other in private groups on Twitter and Instagram.

These key dynamics of the grey zone – ambiguous rules, edging, floodgating, and recuperating – are illustrated with a final example, that of women's underpants in viral videos. One popular “hook” in the summer of 2021 involved women removing their undergarments in obviously impossible ways, like pulling their underwear up and out from their jeans.

However, the platform flagged one such video in which a woman removed her underwear on an airplane. The removal process took several seconds, and was flagged as self-stimulation, which is a Community Standards violation of “Objectionable Content.” It resulted in her entire page being demonetized for an unspecified amount of time. The following exchange ensued in the creators’ group discussion: … When videos feature removing underwear, that surely dramatically increases the scrutiny that reviewers place on those videos. Any little thing becomes a big thing. … And remember, we have to avoid not only violations, but things that look like violations.

Yet within two months of this exchange, another creator sought advice from the group by asking if it was now okay to post a video that opened with panty removal. She wrote on the forum: Ok, so where are we with panty removal video starts? I know that increases the chances of an actual violation or getting flagged, but if there's no non-consensual touching or any other violations, are we ok?

Discussion

For content creators, the double-edged sword of attention promises profits but also sanctions for those who seek to use attention-grabbing sexual imagery. Sex, we found, like other prohibited content, can bring both risk and reward. Like many industries, social media platforms encourage parasexuality, in which creators see incentives to make content that “deploys but contains” sex (Bailey, 1990).

This paper documents how creators manage this contradiction through strategic risk-taking. Our contribution is unique owing to our ethnographic approach, which allowed us to observe the labor process of working in the grey zone of platform governance. We inductively arrive at a three-step process of strategic risk-taking. Creators first edge against platforms’ rules by introducing slightly provocative elements, often within conventional or “clean” contexts. Next, they floodgate the successful strategies, quickly replicating them as much as possible before sanctions occur. Finally, when penalized, they recuperate by cleaning up their pages or moving to other platforms. Each step in this process builds upon the previous one, forming a continuous loop that enables workers to adapt, innovate, and persist in breaking the platforms’ rules. This labor process is an adaptive and collective effort in response to platforms’ ambiguous rules and their inconsistent enforcement.

Both types of creators develop methods to break rules subtly, consulting each other to refine their tactics. Clearly porn and viral creators diverge in their goals, routes to monetization, and their position: porn creators are more vulnerable, less tolerated, and receive less support from platforms, such as in the form of the Facebook rep whom the viral creators could consult. We should expect that sex workers, members of a historically stigmatized occupation that continues to be policed as a public nuisance today, would face the problem of visibility online, as other research has documented (Stegeman et al., 2024). What is surprising in our comparison is that viral entertainment creators employ parallel strategies.

Conclusion

This article has shown how successful creators deploy parasexuality in the grey zone of platform governance. It holds implications for future research on organizational misbehavior in two directions.

First, unlike in traditional organizations, where in-person managers directly shape the meanings of rules, on platforms, automated systems enforce rules, and this gives workers more room to interpret them (Cameron, 2024; Manriquez, 2019). The fact of algorithmic management heightens rule ambiguity and gives workers novel opportunities for misbehavior in new kinds of workplaces, and beyond. Parallel instances of misbehavior from automated rule enforcement can be seen among job applicants who now use common tactics to “beat the bots” that screen their resumes, and in far-right content creators’ use of “dog whistles” to post undetectable hate speech on social media (Marwick & Lewis, 2017). Because AI is always evolving, and the borders between permissible and prohibited are blurry, research on the future of work should attend to emergent modes of strategic risk-taking. This can tell us about new forms of coordination among online workers, so well-illustrated by both porn and viral creators in our studies. This research agenda should also consider which types of workers are better positioned to break the rules; in our research, both porn and viral creators followed financial incentives to edge against the rules, as did some, but not all, of the Uber drivers whom Cameron (2024) studied. Thus a promising question for future research: how are risk-taking and rule-breaking distributed among different types of online workers?

Second, our study extends a critical analysis of the political economy of platforms. Concerning twentieth century labor relations, scholars understood rule-breaking at work as a fulcrum of labor control in capitalism (Ackroyd & Thompson, 1999). Organizational grey zones were found to activate workers’ sense of agency (Anteby, 2008), and often, cheating at work benefitted organizations by engaging workers, keeping costs low, and yielding greater profits.

Similar dynamics drive platforms. Social media platforms generate revenue by monetizing users’ attention, selling their data and eyeballs to advertisers. The more users a platform attracts, the more profits it generates (Srnicek, 2016). However, platforms face a contradictory incentive: they need to keep users engaged with enticing content, but not content that offends advertisers or violates laws like FOSTA-SESTA. As Willis (2023, p. 1880) notes, platform capitalism is dependent on an economy of protection – designers erect rules, moderators, and algorithmic detection systems to keep out unwanted user behaviors. But new rules bring new opportunities to break them. On social media platforms, the grey zone of governance incentivizes content creators to make illicit content, thus engaging both consumers and the creators to spend more time and attention on these platforms.

Like traditional labor processes, the digital labor we examine on platforms ultimately serves capital. With an incessant supply of content that edges just past the rules, users stay hooked and keep watching, while creators continuously innovate new tactics to increase views. Platforms, by offering vague guidelines on acceptable content, encourage strategic rule-breaking. This ambiguity allows creators to test boundaries, while enforcement ensures that platforms maintain a “PG” and advertiser-friendly image. In this way, the labor process within the grey zone captures the attention of both creators and users, and in turn, the grey zone increases profit in the platform economy.

Footnotes

Acknowledgements

For valuable feedback on earlier versions of this paper, the authors thank members of Boston University's Precarity Lab and members of the “Algorithmic Control” workshop at the Max Planck Institute for the Study of Societies in Cologne, Germany in 2022, especially Kathleen Griesbach, Niels van Dorn, Valeria Pulignano, Georg Rilinger, Greetje Corporaal, and Alex Wood, and members of the “Shady Subjects” workshop at Cornell University in 2025, particularly Noah McClain and Lana Swartz. Finally, thanks for comments from the Kobe de Keere writing group at the University of Amsterdam.

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: The first author, Ashley Mears, declares that she was paid by platforms via a publishing company for the content that she posted as part of the research activities. Neither the platforms nor the publishing company had prior access to her data, analyses, or writings, nor has any entity seen any of these writings prior to publication, beyond fact-checking selected statements. This research has been approved by the Institutional Review Board and cleared by the Conflict of Interest Committee at Boston University.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.