Abstract

Objective

Child and youth mental health problems are often assessed by parent self-completed checklists that produce dimensional scale scores. Little is known about the psychometric properties of these scores, once converted to binary ratings of disorder, in relation to classifications based on lay-administered structured diagnostic interviews. In addition to estimating agreement, our objective is to test for statistical equivalence in the test-retest reliability and construct validity of two instruments used to classify child emotional, behavioural, and attentional disorders: the 25-item, parent-completed Ontario Child Health Study Emotional Behavioural Scales-Brief Version (OCHS-EBS-B) and the Mini International Neuropsychiatric Interview for Children and Adolescents-parent version (MINI-KID-P).

Methods

This study draws on independent samples (n = 452) and uses the confidence interval approach to test for statistical equivalence. Reliability is based on kappa (κ). Construct validity is based on standardized beta coefficients (β) estimated in structural equation models.

Results

The average differences between the MINI-KID-P and OCHS-EBS-B in κ and β were −0.022 and −0.020, respectively. However, in both instances, criteria for statistical equivalence were met in only 5 of 12 comparisons. Based on κ, between-instrument agreement on the classifications of disorder ranged from 0.481 (attentional disorder) to 0.721 (emotional disorder) but was substantially higher (0.731 to 0.895, respectively) when corrected for attenuation due to measurement error.

Conclusions

Although falling short of equivalence, the results suggest on balance that the reliability and validity of the two instruments for classifying child psychiatric disorder assessed by parents are highly comparable. This conclusion is supported by the high levels of agreement between the instruments after correcting for attenuation due to measurement error.

Introduction

In the 1980s, concern about the reliability of psychiatric diagnoses based on clinical judgement led to the development of structured diagnostic interviews (SDIs) to classify child and youth (herein child/ren) psychiatric disorders as present or absent. Accompanying the development of SDIs was a debate on the nature of child psychiatric disorder: was it a true class in the taxonomic sense or an underlying continuum?1,2 No evidence has emerged to indicate that common disorders such as generalized anxiety disorder (GAD) or major depressive disorder (MDD), conduct disorder (CD) or oppositional defiant disorder (ODD), and attention-deficit hyperactivity disorder (ADHD) represent true classes. Angold et al. 3 reported large differences in prevalence for the same individuals across SDIs using identical diagnostic criteria, indicating that classifications of child disorder depend on the way instrument developers convert symptoms into classifications. Despite the challenges of classifying disorder, classifications are needed by clinicians for setting treatment priorities, by administrators for making resource allocation decisions, and by researchers for conducting epidemiological studies. 4

Pickles and Angold 1 have called for instruments that can measure child psychiatric disorder dimensionally

There are theoretical reasons 4 and empirical evidence for expecting classifications of disorders derived from problem checklists to be as reliable and valid as classifications obtained using SDIs. The overall test-retest reliability of SDIs is modest (κ = 0.58), highly variable across studies, 9 and within reach of scale scores converted to classifications. 10 Furthermore, past empirical studies have been unable to identify validity differences between the two approaches.11-14

The objective of this paper is to determine if scale scores from the 25-item, parent-completed Ontario Child Health Study Emotional Behavioural Scales-Brief Version (OCHS-EBS-B), converted to binary classifications of these problems, are as reliable and valid as corresponding disorders classified by the Mini International Neuropsychiatric Interview for Children and Adolescents-parent version (MINI-KID-P). In this study, we focus on lay-administered SDIs used in epidemiological studies and clinical research to classify psychiatric disorder as present or absent. This focus excludes the important role that SDIs can play when used by clinicians as aids to the diagnostic process. We hypothesize that (1) between-instrument differences in test-retest reliability and construct validity will be small and nonsignificant (statistically equivalent); and (2) agreement between instruments in the classifications of disorder will be substantial after correcting for attenuation due to measurement error.

The past empirical studies cited above exhibit one or more of the following limitations: relatively small sample sizes, the absence of reliability comparisons, the use of data “eyeballing” to make inferences, and uncorrected measurement error that compresses associations downwards. We have overcome these limitations by combining independent samples to obtain a relatively large number of participants; comparing the test-retest reliability of the two instruments over the same time interval (1 to 2 weeks); using methods to correct for the attenuating effects of measurement error; and implementing formal empirical tests of measurement equivalence.

Methods

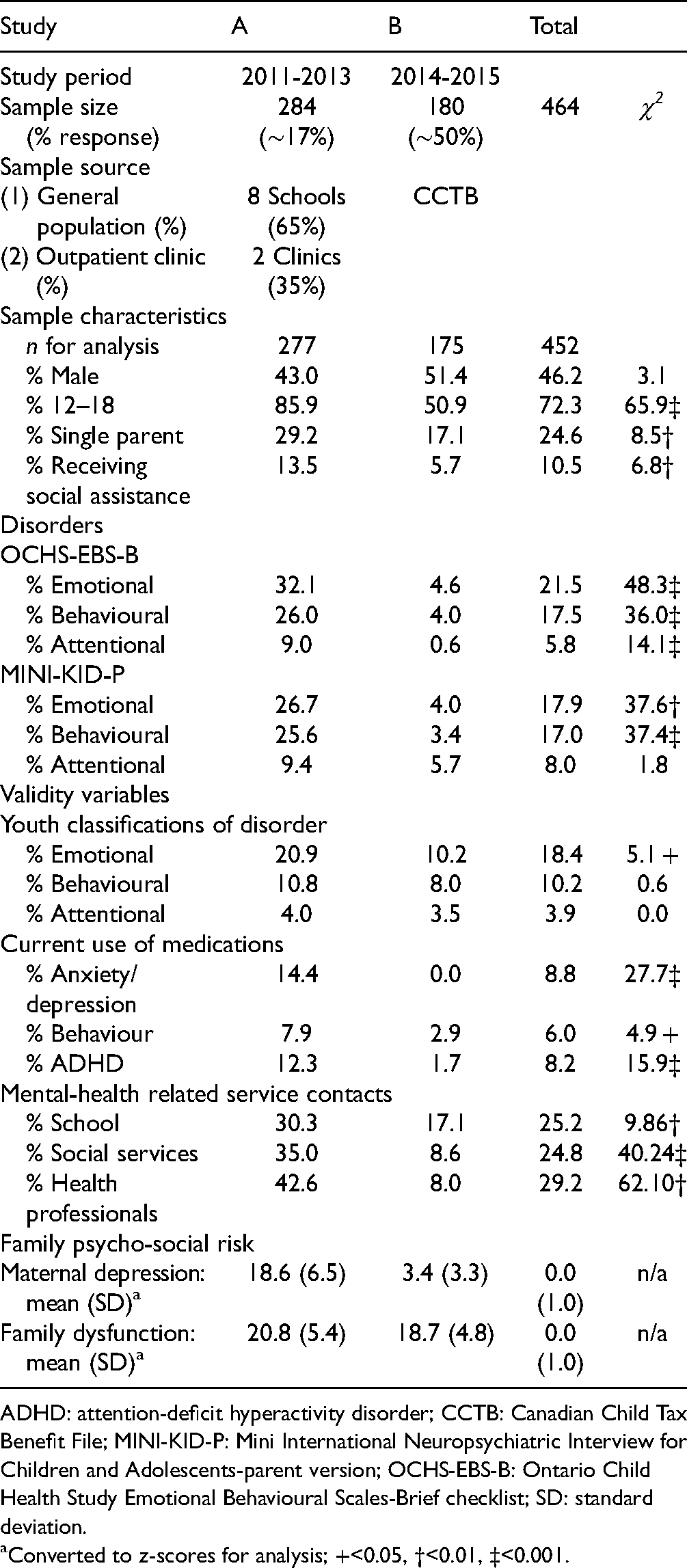

The two studies used in this report are described in Table 1: study A 15 and study B. 16

Study and Sample Characteristics.

ADHD: attention-deficit hyperactivity disorder; CCTB: Canadian Child Tax Benefit File; MINI-KID-P: Mini International Neuropsychiatric Interview for Children and Adolescents-parent version; OCHS-EBS-B: Ontario Child Health Study Emotional Behavioural Scales-Brief checklist; SD: standard deviation.

Converted to z-scores for analysis; +<0.05, †<0.01, ‡<0.001.

Participants

Of the 464 parent participants in the two studies, 452 had complete assessment data on the MINI-KID-P and OCHS-EBS-B on two occasions (Table 1)—meeting the inclusion criterion for our analyses. Net response in study A was 17% and in study B, 50%.

Ethical Considerations

Study A was approved by Research Ethics Committees at McMaster University, the School Boards and participating service providers. Informed written consent was obtained from participants. Study B procedures, including consent and confidentiality requirements, were approved by the Chief Statistician at Statistics Canada and were conducted according to the Statistics Act. Informed verbal consent was obtained.

Mental Health Disorders

Ontario Child Health Study Emotional Behavioural Scales-Brief Version. The OCHS-EBS-B is a 25-item problem checklist for completion by parents/caregivers of children aged 4 to 17 years. It comprises rating scales that measure three types of mental health problems 17 : emotional (8 items), behavioural (10 items), and attentional (7 items). Item response options are 0, 1, 2 (“never or not true,” “sometimes or somewhat true,” and “often or very true”). Raw scores are summed to form scale scores measuring each type of problem. The development and evaluation of the OCHS-EBS-B scales appear in the Appendix.

To convert the OCHS-EBS-B scale scores into classifications of disorder, their frequency distributions were examined in the 2014 Ontario Child Health Study 16 to find the score cut-points which identified children in the top 7.8% (emotional: 6+), 5.7% (behavioural: 6+), and 3.4% (attentional: 10+) that matched the world-wide prevalence of child major depressive or anxiety disorders, CD or ODD, and ADHD. 18 These cut-points were applied in each study to convert the OCHS-EBS-B scale scores into classifications of disorder independent of the MINI-KID-P.
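The conversion from scale scores to binary classifications can be illustrated with a minimal Python sketch. The cut-points (6+, 6+ and 10+) are those reported above; the function name `classify`, the dictionary layout and the example scores are hypothetical and are not part of the study's software or data.

```python
# Cut-points reported in the text: a disorder is classified as present when
# the summed scale score is at or above the threshold for that scale.
CUT_POINTS = {"emotional": 6, "behavioural": 6, "attentional": 10}


def classify(scale_scores):
    """Return 1 (present) or 0 (absent) for each scale in `scale_scores`."""
    return {
        scale: int(score >= CUT_POINTS[scale])
        for scale, score in scale_scores.items()
    }


# Hypothetical child with made-up scale scores (not study data).
example = classify({"emotional": 7, "behavioural": 3, "attentional": 10})
```

Because the thresholds are fixed in advance from the 2014 Ontario Child Health Study distributions, the resulting classifications do not depend on the MINI-KID-P responses.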

Mini International Neuropsychiatric Interview for Children and Adolescents. The MINI-KID-P (parent version) and MINI-KID-Y (youth version) are SDIs that assess DSM-IV-TR disorders in children aged 6 to 17 years with a reference period of 6 months, except for CD, for which it is one year. 19 Respondent-specific test-retest reliability based on kappa (κ 20 ) ranges from 0.67 (MDD) to 0.77 (ADHD) for parents and from 0.46 (ADHD) to 0.64 (MDD) for youth. 15 In our study, MINI-KID-P classifications of disorder were coded as (1) present or (0) absent and grouped as follows: emotional (MDD or GAD), behavioural (CD or ODD) and attentional (ADHD) to match the OCHS-EBS-B problem types.

Construct Validity Variables

Construct validity is a general approach to validation that works from a set of fundamental principles grounded in the philosophy of science to evaluate the meaning (informational content) of a measured construct by examining its strength of association with other variables. 21 We focus on variables expected to be positively associated with the disorders. We test measurement equivalence by comparing the associations of latent variable measures of disorder, based on the MINI-KID-P and OCHS-EBS-B classifications, with (1) latent variable measures of youth-reported classifications of disorder and (2) three related constructs measured as latent variables: current use of prescription medications for child mental health problems; child mental health-related service contacts with schools, service organizations or health professionals; and family psycho-social risk.

Youth Classifications of Disorder. Youth were administered the MINI-KID-Y at times 1 and 2 and classified with disorder as present or absent at each administration using the same groupings created for parents: emotional (MDD or GAD), behavioural (CD or ODD), and attentional (ADHD).

Current use of Prescription Medications for Mental Health Problems. At time 1, a latent variable measure of medication use was based on three binary indicator variables derived from parent interview responses to a stem question “Is <child> currently taking any prescribed medication?” and follow-up “What does <child> take this medication for: (a) hyperactivity, (b) behavioural problems, (c) depression or anxiety?”

Mental Health-related Service Contacts. At time 1, a latent variable measure of mental health-related service contacts was based on three binary indicator variables derived from parent interview responses to the following groups of questions: (1) “In the past 6 months, did <child>, or you see or talk to anyone from school about any emotional or behavioural problems?” (contact with schools); (2) “Has <child> ever been away overnight in: a foster or group home? a detention centre or juvenile centre; a police station or jail?”, “In the past 6 months did <child>, or you see or talk to anyone: from the Children’s Aid Society; from court, probation or other justice services; from some other service for children with emotional or behavioural problems?” (contact with service organizations); (3) “During the past 6 months … did <child>, or you, personally see any of the following about <child> emotional, behavioural or learning problems: a paediatrician or family doctor (general practitioner); a psychiatrist, psychologist or social worker?” (contact with health professionals).

Family Psycho-social Risk. At time 1, a latent variable measure of family psycho-social risk was based on scale score measures of maternal depressed mood and family dysfunction derived from parent responses on a self-completed questionnaire. In study A, maternal depressed mood is measured by the 12-item adaptation of the Center for Epidemiologic Studies Depression (CES-D) Scale 22 and in study B, by the K6. 23 Used often in empirical studies, the characteristics and psychometric properties of both measures are well described. In studies A and B, internal consistency reliabilities (ICR: alpha) are 0.89 (CES-D) and 0.79 (K6).

Family dysfunction is measured in both studies by the 12-item general functioning subscale of the McMaster Family Assessment Device. 24 The items describe family behaviour and relationships in six dimensions: problem-solving, communication, roles, affective responsiveness, affective involvement and behavioural control. Item responses go from (1) strongly agree to (4) strongly disagree. Positive items are reverse-coded; and all items, summed to form scale scores. ICRs were 0.89 (study A) and 0.83 (study B). The scale correlates predictably with alternative measures of family functioning. 25

Analysis

We use the confidence interval (CI) approach to test our hypotheses of statistical equivalence in the reliability and validity of the MINI-KID-P and OCHS-EBS-B. 26 This test computes between-instrument mean differences in the parameters of interest (i.e.,

Test-Retest Reliability

We use κ, a chance-corrected measure of agreement scaled from 0 to 1 to quantify the test-retest reliabilities of the MINI-KID-P and OCHS-EBS-B for classifying the disorders

To determine if the reliabilities of the two instruments are statistically equivalent across studies, recruitment samples (general population vs. clinical), child sex and child age, we extend the WLS approach in separate analyses to estimate and compare, one subgroup variable at a time, the subgroup-specific reliabilities for the MINI-KID-P and OCHS-EBS-B (e.g., males vs. females). In these analyses, to increase statistical power by decreasing the SEs of the estimates, the three disorder types are analysed together rather than one at a time. Each of the 452 participants contributes three data points, one for each disorder type, to the reliability estimates. The number of participants remains the same (n = 452) but the number of observations triples (452 × 3 = 1,356). The nesting or clustering of observations within participants (intra-class correlation) is taken into account in the statistical tests.

Agreement Between Instruments

We use κ to quantify between-instrument agreement in the classification of disorders at times 1 and 2. Based on the instrument test-retest reliabilities observed in our study and the sample correlation method described by Moss, 28 we also show the estimated levels of agreement and their 95% CIs corrected for attenuation due to measurement error.
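As a rough illustration of these two quantities, the Python sketch below computes Cohen's κ for a pair of binary classifications and then applies the classical Spearman correction for attenuation (observed agreement divided by the square root of the product of the two reliabilities). The Spearman formula is an assumption used here for illustration and may differ in detail from the method of Moss cited above; the function names, the ratings and the reliabilities are all made up.

```python
import math


def cohen_kappa(ratings_a, ratings_b):
    """Cohen's kappa for two equal-length sequences of 0/1 classifications."""
    n = len(ratings_a)
    if n == 0 or n != len(ratings_b):
        raise ValueError("ratings must be non-empty and of equal length")
    # Proportion of cases on which the two sets of ratings agree.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    p_a = sum(ratings_a) / n  # prevalence according to the first rating
    p_b = sum(ratings_b) / n  # prevalence according to the second rating
    # Agreement expected by chance given the two marginal prevalences.
    expected = p_a * p_b + (1 - p_a) * (1 - p_b)
    if expected == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (observed - expected) / (1 - expected)


def disattenuate(observed_agreement, reliability_a, reliability_b):
    """Spearman-style correction (assumed form, not necessarily Moss's)."""
    return observed_agreement / math.sqrt(reliability_a * reliability_b)


# Made-up classifications for ten children (1 = disorder present).
mini = [1, 1, 0, 0, 0, 0, 1, 0, 0, 0]
ochs = [1, 0, 0, 0, 0, 0, 1, 0, 0, 1]
kappa = cohen_kappa(mini, ochs)
# Hypothetical test-retest reliabilities for the two instruments.
corrected = disattenuate(kappa, 0.70, 0.75)
```

Under this form of the correction, the corrected value always exceeds the observed value when both reliabilities are below 1, which is why imperfect test-retest reliability places a ceiling on observed between-instrument agreement.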

Construct Validity

We use β coefficients derived from structural equation models (SEMs) to estimate and compare the strength of association of the construct validity variables with the disorders classified by the MINI-KID-P and OCHS-EBS-B. All of the variables in the SEMs are measured as latent variables. A latent variable is unobserved—a prediction based on the pattern of associations among a set of observed indicator variables. The variance associated with a latent variable is free of measurement error in the sense that residual variance associated with the indicator variables, not predictive of the latent variable, is removed.

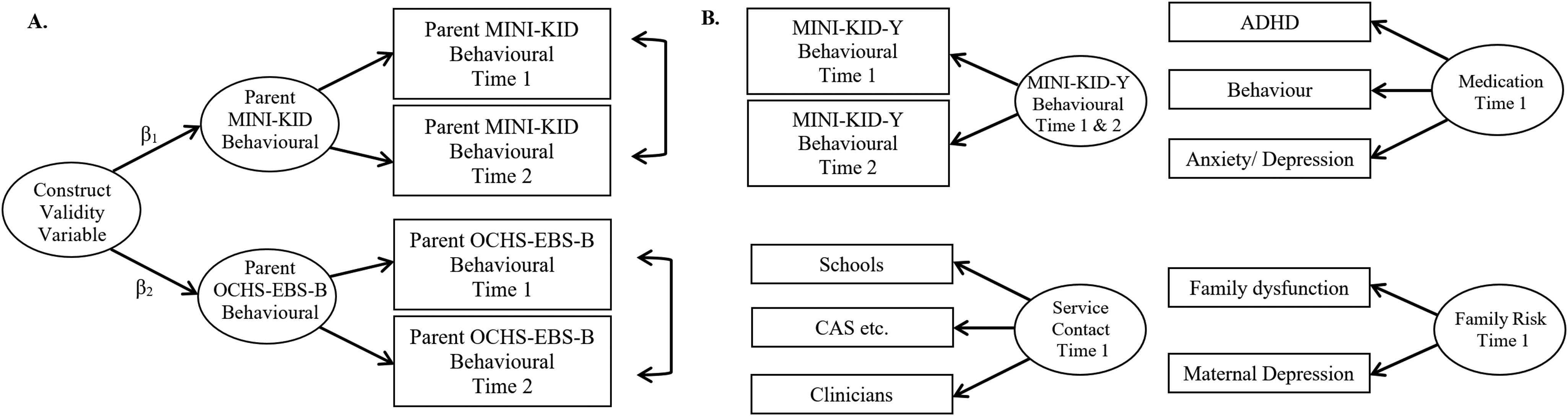

As illustrated in Figure 1A, each disorder (emotional, behavioural and attentional) classified by the MINI-KID-P and OCHS-EBS-B is measured as a latent variable based on its time 1 and 2 classifications (indicator variables). The latent variable measures of disorder based on youth interviews draw on their time 1 and 2 MINI-KID-Y classifications in exactly the same way. As a result, the SEMs involving youth classifications are specific to each disorder. The construct validity variables are also measured as latent variables (Figure 1B). These latent variables draw only on indicator variables assessed at time 1. In the comparison of the MINI-KID-P and OCHS-EBS-B, each disorder is modelled as a function of the same construct validity variable (e.g., current use of prescription medications examined separately for emotional, behavioural and attentional disorders). These three construct validity variables are expected to be associated generally with all of the disorders. Although prescription medications are linked with individual disorders, we created a latent variable measure of prescription medications to reduce the impact of misclassification errors associated with parent responses.

Illustration of latent variable measures developed for SEMs. (A) Parent assessment of behavioural problems based on MINI-KID-P classifications of CD or ODD at times 1 and 2 and OCHS-EBS-B classifications of behavioural problems at times 1 and 2. Construct validity tests if the 90% CI around β1 – β2 falls within −0.20 and +0.20. (B) The construct validity variables include youth assessments of behavioural problems based on MINI-KID-Y classifications of CD or ODD at times 1 and 2 (separate latent variables are developed for emotional and attentional problems); and three construct validity variables: current use of prescribed medications; service contacts with schools, service organizations or health professionals; and family psycho-social risk. CI: confidence interval; MINI-KID-P: Mini International Neuropsychiatric Interview for Children and Adolescents-parent version; OCHS-EBS-B: Ontario Child Health Study Emotional Behavioural Scales-Brief checklist; SEM: structural equation models.

To test for statistical equivalence in the βs, we specify separate SEMs for each disorder. There are three latent variable measures in each SEM: two for the same disorders assessed by each instrument and one for the construct validity variable. We used MPlus 7.4 29 for the analyses. MPlus offers a generalized measurement component applicable to dichotomous and ordered categorical indicator variables. 30 Although the measurement scale is the same for both instruments (0, 1), the prevalences (variances) are different. As a result, we use standardized variables in the SEMs to ensure that the comparisons of βs are made on a commensurate scale. Adequate model fit was defined as values ≥0.98 for the comparative fit index (CFI, range 0 to 1.0) and ≤0.05 for the root mean squared error of approximation (RMSEA).
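The equivalence check applied to each between-instrument difference can be sketched in Python as follows. The boundaries (±0.20 for β and ±0.10 for κ) and the 90% CI come from the figure caption and table notes; the function name `equivalence_ci`, the normal approximation for the interval and the numerical inputs are assumptions made for illustration only.

```python
from statistics import NormalDist


def equivalence_ci(diff, se_diff, bound, level=0.90):
    """Return (lower, upper, equivalent) for a between-instrument difference.

    Equivalence is declared when the whole CI lies inside (-bound, +bound).
    """
    # Two-sided critical value; about 1.645 for a 90% CI.
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)
    lower = diff - z * se_diff
    upper = diff + z * se_diff
    return lower, upper, (-bound < lower and upper < bound)


# Hypothetical difference in standardized betas and its standard error.
lower, upper, equivalent = equivalence_ci(diff=-0.05, se_diff=0.06, bound=0.20)
# The same difference with a larger standard error fails the criterion.
_, _, equivalent_wide = equivalence_ci(diff=-0.05, se_diff=0.15, bound=0.20)
```

The second call shows why a small observed difference is not sufficient on its own: when the standard error is large, the interval extends beyond the boundary and equivalence cannot be claimed.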

Results

The sample characteristics and distribution of variables appear in Table 1. In study A versus B, there are more 12 to 18 year olds, greater levels of socio-economic disadvantage (e.g., single parents) and a higher prevalence of child disorder.

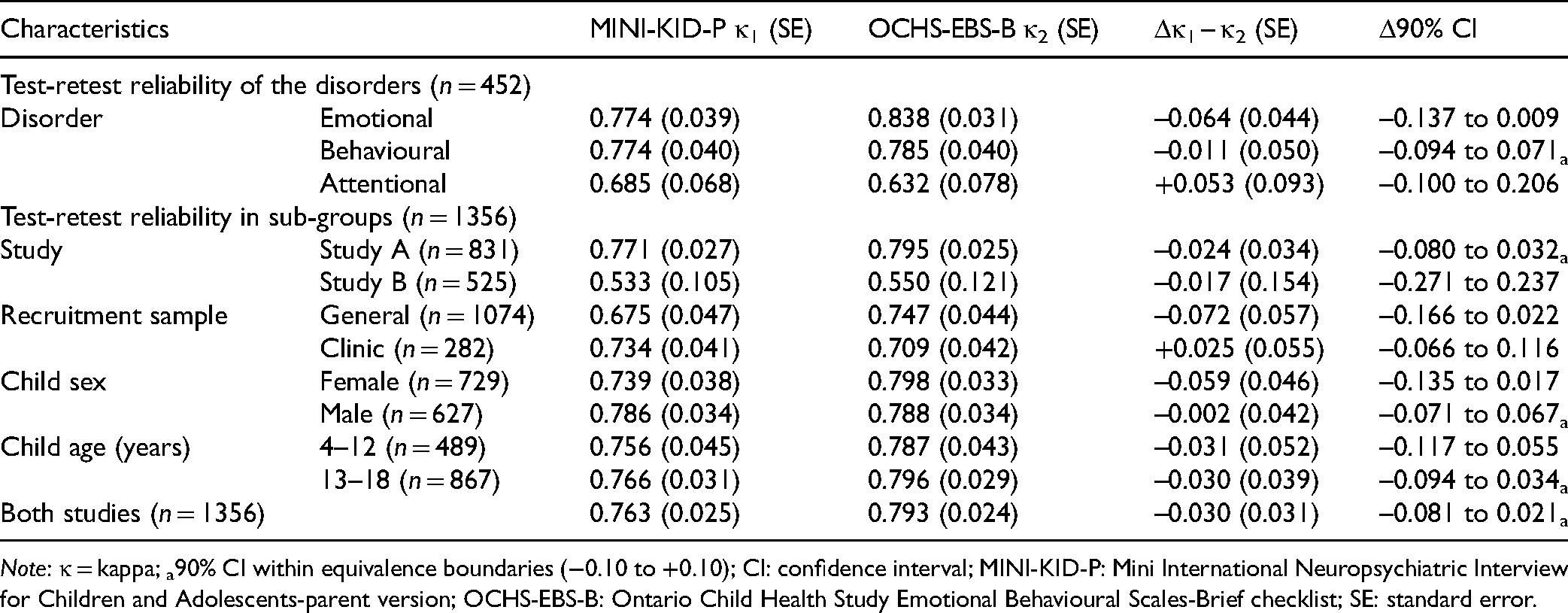

Table 2 shows the test-retest reliability outcomes. Differences between the MINI-KID-P and OCHS-EBS-B (κ1–κ2) in the classification of disorders range from −0.064 (emotional disorder) to +0.053 (attentional disorder), with an average difference of −0.007 (not shown). Only behavioural disorder (−0.041) meets the criteria for statistical equivalence (see note a, Table 2). In the subgroup analyses, the most extreme differences are associated with the recruitment sample: −0.072 (general population) and +0.025 (clinic). The average difference in the subgroup analyses is −0.027. The criteria for equivalence are met for Study A, males, youth aged 13 to 18 years and for both studies combined.

Test-Retest Reliability (κ) of Parent MINI-KID-P and OCHS-EBS-B for Classifying Individual Child Disorders and Combined Disorders Grouped by Study, Recruitment Sample, Child Sex and Age.

Note: κ = kappa; a90% CI within equivalence boundaries (−0.10 to +0.10); CI: confidence interval; MINI-KID-P: Mini International Neuropsychiatric Interview for Children and Adolescents-parent version; OCHS-EBS-B: Ontario Child Health Study Emotional Behavioural Scales-Brief checklist; SE: standard error.

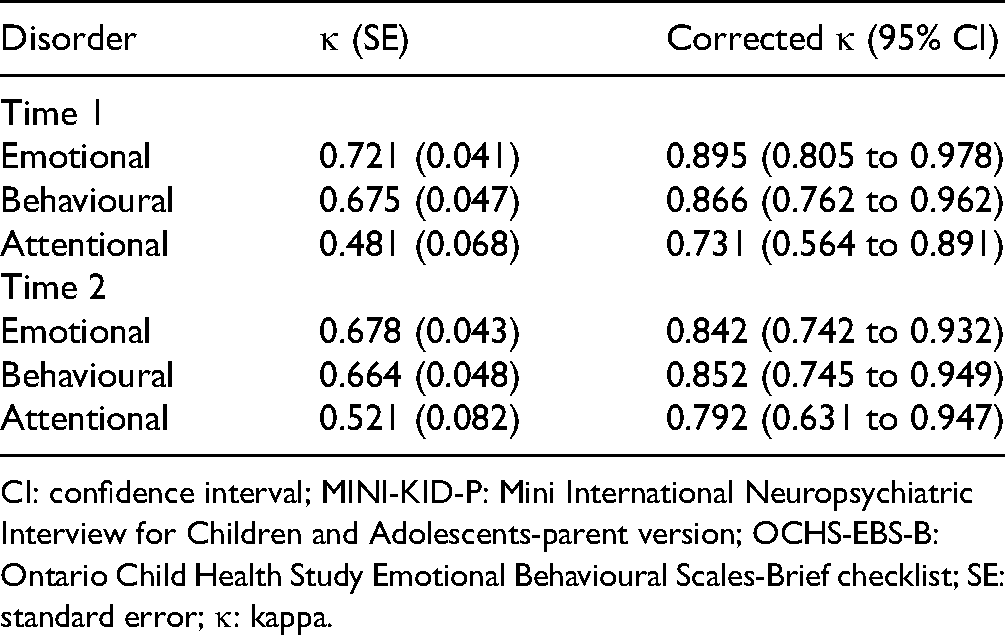

Table 3 shows agreement between the MINI-KID-P and OCHS-EBS-B on the classifications of disorder at times 1 and 2. Observed κ ranges from 0.481 (attentional at time 1) to 0.721 (emotional at time 1). After correcting for attenuation due to measurement error, the corresponding estimates are substantially higher at 0.731 and 0.895, respectively.

Agreement (κ) Between the MINI-KID-P and Parent OCHS-EBS-B on the Classification of Child Disorders: Observed and Corrected for Attenuation due to Measurement Error (n = 452).

CI: confidence interval; MINI-KID-P: Mini International Neuropsychiatric Interview for Children and Adolescents-parent version; OCHS-EBS-B: Ontario Child Health Study Emotional Behavioural Scales-Brief checklist; SE: standard error; κ: kappa.

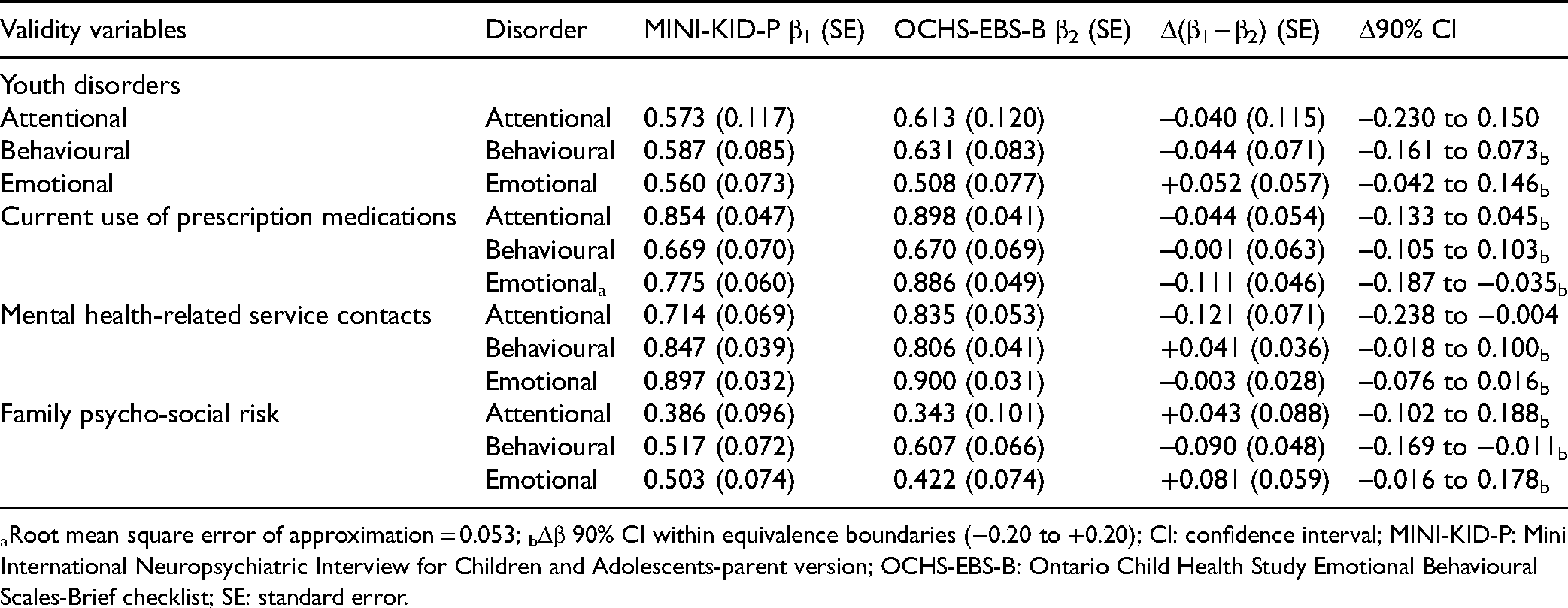

Table 4 shows the βs associated with the MINI-KID-P and OCHS-EBS-B classifications of disorder when modelled as functions of the construct validity variables. Model fit (CFI) is ≥0.99 for all models (not shown). With one exception, the RMSEAs are ≤0.05 (Table 4). The average difference between instruments (β1–β2) is −0.020 (not shown). The criteria for statistical equivalence are met in 5 of 12 comparisons.

Structural Equation Model Regression Results of Parent Classifications of Disorder (MINI-KID-P and Parent Reported OCHS-EBS-B) on Latent Variable Covariates.

aRoot mean square error of approximation = 0.053; bΔβ 90% CI within equivalence boundaries (−0.20 to +0.20); CI: confidence interval; MINI-KID-P: Mini International Neuropsychiatric Interview for Children and Adolescents-parent version; OCHS-EBS-B: Ontario Child Health Study Emotional Behavioural Scales-Brief checklist; SE: standard error.

Discussion

In our study, average between-instrument differences in test-retest reliability (Δκ) were small and favoured the OCHS-EBS-B over the MINI-KID-P. However, only 5 of 12 comparisons met the criteria for statistical equivalence. Correcting for attenuation due to measurement error revealed that between-instrument agreement in the classifications of disorder based on κ was >0.80 for emotional and behavioural disorders and slightly lower for attentional disorder. This very high level of agreement indicates substantial overlap between instruments in their classifications of disorder, a finding that becomes apparent only when adjustments are made for measurement error. Our analyses of construct validity differences between instruments mirrored the pattern of results observed for reliability: on average, Δβ was −0.020, favouring the OCHS-EBS-B over the MINI-KID-P. However, the criteria for statistical equivalence were met in only 5 of 12 comparisons. On balance, these findings suggest that the reliability and validity of the OCHS-EBS-B and MINI-KID-P completed by parents (mostly mothers) are similar, if not equivalent, for classifying disorders in children. However, there are several limitations and challenges associated with this work.

Study Limitations and Challenges

One, nonresponse overall was particularly high in study A and we lack the information to evaluate the representativeness of this sample. In study B, the prevalence of OCHS-EBS-B disorders should be the same as the OCHS survey population but it is lower, suggesting that there was either selective nonresponse or the reliability sample was chosen in a geographical area at lower risk for the disorder. The lower estimates of reliability in study B are the product of low prevalence: κ estimates of reliability are compressed downwards under conditions of low prevalence, particularly <5.0%. Nonresponse could affect estimates overall or exert a differential effect on reliability and validity across instruments. It seems unlikely that nonresponse would have a differential effect on the instruments’ reliability and validity—there is no obvious mechanism.

Two, we are unaware of any studies in psychiatry that have attempted to demonstrate psychometric equivalence between alternative instruments used to classify psychiatric disorder. Accordingly, there are no precedents for identifying the smallest effect size of interest (SESOI). The SESOIs selected in our study are conservative, which has resulted in low statistical power. Doubling the sample size to 900 would make it possible for us to show that the 90% CI for observed between-instrument differences in κ = ±0.04 would fall within an equivalence boundary of ±0.10. To date, there have been no measurement studies of this size in child psychiatry and the prospects for funding such studies are low.
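The link between sample size and the width of the confidence interval can be illustrated with a short Python sketch. It assumes that the standard error of a between-instrument difference shrinks in proportion to 1/√n, which is a common approximation rather than a result from this study; the starting standard error and the function names are hypothetical.

```python
import math
from statistics import NormalDist


def half_width(se, level=0.90):
    """Half-width of a two-sided CI under a normal approximation."""
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)
    return z * se


def rescale_se(se, n_old, n_new):
    """Approximate SE at a new sample size, assuming SE scales as 1/sqrt(n)."""
    return se * math.sqrt(n_old / n_new)


se_now = 0.05  # hypothetical SE of a kappa difference at n = 452
se_900 = rescale_se(se_now, n_old=452, n_new=900)
width_now = half_width(se_now)
width_900 = half_width(se_900)
```

Under this approximation, roughly doubling the sample narrows the interval by a factor of about 0.71, so differences that are inconclusive at n = 452 may fall inside the equivalence boundary at n = 900.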

Three, in testing for construct validity differences between the two instruments, we selected three variables that were “independent” of the specific questions/items and instrumentation approach of the MINI-KID-P and OCHS-EBS-B. We expected that these variables would exhibit relatively large, nonspecific associations with the three disorders classified by the instruments. Although the use of the MINI-KID-Y violates the principle of independence (i.e., relies on the same content and similar instrumentation as the MINI-KID-P which should positively bias its association with the MINI-KID-P), we believe that the absence of between-instrument differences in the βs associated with the MINI-KID-Y classification of emotional disorder represents evidence of equivalence.

Four, the absence of a strong, evidence-based consensus on the variables that might be used to quantify instrument differences in construct validity is a serious challenge. 4 To the best of our knowledge, no studies have compared the construct validity of alternative SDIs. As a result, we are not able to compare our findings with previous work and have little context for interpreting the effect sizes observed in our study.

Five, in this article, we focus exclusively on the measurement objective of classifying child disorders based on the OCHS-EBS-B scale scores converted to binary ratings of disorder and compared with classifications of disorder derived from lay-administered SDIs. In contrast, clinicians may use SDIs as part of the diagnostic process to identify the disorder and its origin and to engage patients and formulate treatment plans. This is an entirely different context beyond the scope of our study.

Conclusions

In the years ahead, we believe that governments will focus on comprehensive approaches to addressing the mental health problems of children. 31 This will require the ability to monitor these problems in both the general population and in those accessing mental health services. A prerequisite for monitoring these problems will be simple, brief, inexpensive measurement instruments that are psychometrically sound, flexible in their administration, and able to measure mental health problems as dimensional phenomena or, converted to binary measures, to classify corresponding disorders as present or absent on a par with SDIs. Importantly, these instruments will need to exhibit near-identical psychometric properties when used in the general population and clinical samples. We believe that the OCHS-EBS-B goes a long way to satisfying these extensive and detailed requirements.

Given the striking differences in cost and burden between SDIs and problem checklists, it is surprising how little research has been directed towards testing the extent to which problem checklists can substitute for SDIs in classifying disorder as present or absent. Additional studies addressing this question are urgently needed to provide clinicians, administrators and researchers with appropriate evidence for making cost-effective decisions about using problem checklists to monitor child mental health needs in the general population and in clinical settings.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Institute of Human Development, Child and Youth Health, research operating grant 125941 from the Canadian Institutes of Health Research (CIHR) and Health Services Research Grant 8-42298 from the Ontario Ministry of Health and Long-Term Care (MOHLTC) and with support from MOHLTC, the Ontario Ministry of Children and Youth Services and the Ontario Ministry of Education. Dr. Duncan is supported by a Research Early Career Award from Hamilton Health Sciences Foundation.

Supplemental Material

Supplemental material for this article is available online.
