Abstract

Objective:

The need for digital tools in mental health is clear, with insufficient access to mental health services. Conversational agents, also known as chatbots or voice assistants, are digital tools capable of holding natural language conversations. Since our last review in 2018, many new conversational agents and research studies have emerged, and we aimed to reassess the conversational agent landscape in this updated systematic review.

Methods:

A systematic literature search was conducted in January 2020 using the PubMed, Embase, PsycINFO, and Cochrane databases. Studies included were those that involved a conversational agent assessing serious mental illness: major depressive disorder, schizophrenia spectrum disorders, bipolar disorder, or anxiety disorder.

Results:

Of the 247 references identified from selected databases, 7 studies met inclusion criteria. Overall, there were generally positive experiences with conversational agents in regard to diagnostic quality, therapeutic efficacy, or acceptability. There continues to be, however, a lack of standard measures that allow ease of comparison of studies in this space. There were several populations that lacked representation such as the pediatric population and those with schizophrenia or bipolar disorder. While comparing 2018 to 2020 research offers useful insight into changes and growth, the high degree of heterogeneity between all studies in this space makes direct comparison challenging.

Conclusions:

This review revealed few but generally positive outcomes regarding conversational agents’ diagnostic quality, therapeutic efficacy, and acceptability, which may augment mental health care. Despite the increase in research activity, there continues to be a lack of standard measures for evaluating conversational agents as well as several neglected populations. We recommend that the standardization of conversational agent studies should include patient adherence and engagement, therapeutic efficacy, and clinician perspectives.

Introduction

The need for digital tools in mental health is clear, with insufficient access to mental health services worldwide and clinical staff increasingly unable to meet rising demand. 1 The World Health Organization (WHO) reports that depression is a leading cause of disability worldwide. 2 In 2013, the economic cost of treatment for mental health and substance abuse disorders in the United States alone was nearly US$200 billion. 3 Contributing to mental health vulnerability and burden, social isolation and loneliness have been quantified as an epidemic associated with an estimated US$6.7 billion in treatment. 4 Response to emergent global situations such as COVID-19 is exacerbating the already-limited access to services, and the urgent need for innovation around access to mental health care has become clear. Innovation offered via artificial intelligence and conversational agents has been proposed as one means to increase access and quality of care. 5,6

Conversational agents, also known as chatbots or voice assistants, are digital tools capable of holding natural language conversations and mimicking human-like behavior in task-oriented dialogue with people. Conversational agents exist in the form of hardware devices such as Amazon Echo or Google Home as well as software apps such as Amazon Alexa, Apple Siri, and Google Assistant. It is estimated that 42% of U.S. adults use digital voice assistants on their smartphone devices, 7 and some industry studies claim that nearly 24% of U.S. adults own at least 1 smart speaker device. 8 With such widespread access to conversational agents, it is understandable that many are interested in their potential and role in health care. Early research has explored the use of conversational agents in a diverse range of clinical settings such as helping with diagnostic decision support, 9 education regarding mental health, 10 and monitoring of chronic conditions. 10 In 2018, the most common condition that conversational agents claimed to cover was related to mental health. 10 Other conditions included hypertension, asthma, type 2 diabetes, obstructive sleep apnea, sexual health, and breast cancer. 10

In today’s evolving landscape of rapidly changing technology, growing global health concerns, and lack of access to high-quality mental health care, the use and evaluation of conversational agents for mental health continue to evolve. Since our team’s 2018 review on the topic, many new conversational agents, products, and research studies have emerged. 11 While conversational agents have been posited to benefit patients and providers, many of their risks, such as possibly disrupting the therapeutic alliance, have not been fully elucidated. 12 As emerging research in suicide prevention evaluates the use of automated processes to detect risk, the need for a clear understanding of the current state of the field is evident. Some conversational agents offer self-help programs to reduce anxiety and depression, 13 while others are continually being assessed for use as diagnostic aids 14 with the goal of entering clinical settings.

Like any digital tool, conversational agents raise concerns and complications surrounding privacy breaches and lack of guidance on regulatory/legal duties. 11 Individuals may be using conversational agents and other digital tools with or without recommendation from their physician or psychiatrist. Clinicians need to be aware of the current evidence for, and actual abilities of, these conversational agents rather than relying on company marketing materials, which may offer a biased and inflated estimation. To understand which conversational agents are effective and their potential uses, as well as their harms and risks, researchers must continue to characterize the effects they may have within and outside of the clinical mental health setting.

Despite this increase in the availability of conversational agents, our prior review found a lack of higher quality evidence for any type of diagnosis, treatment, or therapy in published mental health research using conversational agents. 15 Another review in 2019 reported only a single randomized controlled trial measuring the efficacy of conversational agents in general. 10 It is also important to consider the role of industry-sponsored studies and the potential adverse impacts this may have on study design. 16 Our prior preliminary systematic review of the landscape, performed in 2018, 8 found high heterogeneity in outcomes and reporting metrics for the conversational agents and missing information on crucial factors such as engagement and adverse events. Now, 2 years later and given the advent of new research, products, and claims, we revisit the flourishing mental health conversational agent space in this review, quantifying these factors and suggesting novel or alternative approaches where appropriate.

Methods

For this comparative review, the same terms as defined in our prior systematic review on conversational agents were used; that is, a combination of keywords including “conversational agent” or “chatbot” without other filter parameters, for peer-reviewed published papers (and not conference proceedings, poster abstracts, etc.) in the English language only, published between July 2018 and January 2020. These terms were selected initially as they provided the largest set of relevant articles. This literature search was conducted in January 2020 on the same select databases as before (PubMed, Embase, PsycINFO, and Cochrane); Web of Science and IEEE Xplore were omitted, primarily because our prior review found that they lacked clinically relevant literature. Title, abstract, and full-text screening and full-text data extraction were conducted by 2 authors (A.N.V. and D.W.L.). Disagreements in screening phases were resolved through discussion and majority consensus. Reasons for exclusion were compiled. From included articles, data extraction comprised study characteristics (duration of study, conversational agent name, ability for unconstrained natural language, sample size, mean age, sex), study outcomes and engagement measures, and conversational agent features. Ability for unconstrained natural language was assessed because this feature could pose serious safety risks. 17

Studies were selected that measured the effect of conversational agents on patients with serious mental illness (SMI): major depressive disorder, schizophrenia spectrum disorders, bipolar disorder, or anxiety disorder, either diagnosed or self-reported. SMI was chosen as the primary population of focus due to noticeable trends in these areas, whereas conversational agents in other psychiatric disorders with lower prevalence have not been substantially studied. Notably, substance abuse disorders were included in the prior review and are here excluded in order to narrow our focus, as the use of conversational agents in this population may merit its own in-depth review today. Given that conversational agents take many forms, all authors agreed on every selected study.

Studies were excluded if the study protocol did not measure the direct effect of the use of a conversational agent or did not at all involve the conversational agent in diagnosis, management, or treatment of SMI. Studies were also excluded if the conversational agent used by participants according to the study protocol did not dynamically generate its content through natural language processing; for example, “Wizard of Oz”–style conversational agents that match input dialogue or query to recycled statements from other users did not qualify. Abstracts, reviews, and ongoing clinical trials were excluded. Non-English-language manuscripts were excluded.

Results

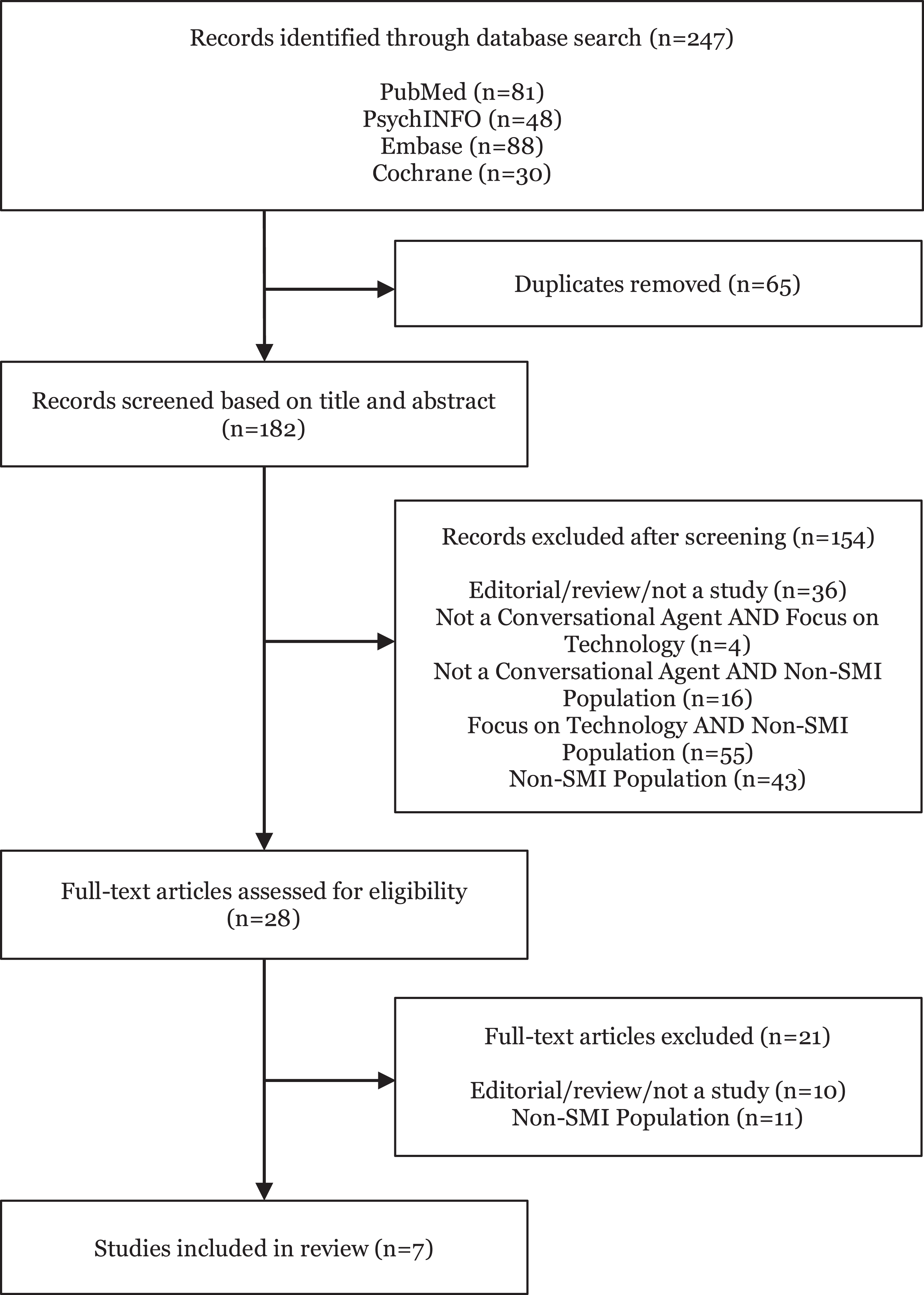

Of the 247 new references identified from search terms applied to selected databases, 65 duplicates were removed and 154 were screened out based on title and abstract. Of the 28 studies identified for full-text screening, 11 were excluded because their study population was not relevant to our aims. Only 7 studies were identified as relevant for the data extraction phase. Figure 1 depicts the detailed Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) diagram.

Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) diagram.
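The screening counts reported above can be checked with simple arithmetic. The following is a minimal sketch and not part of the original review; the function name `prisma_flow` and its field names are hypothetical. It assumes that each stage removes records only from the stage before it. Under that assumption, the reported totals imply that 21 articles were excluded at full-text screening, of which 11 are attributed to study population; the remaining 10 are not itemized in the text and are presumably broken down in Figure 1.

```python
def prisma_flow(identified, duplicates, excluded_on_title_abstract, included):
    """Derive intermediate PRISMA counts from the reported stage totals."""
    after_deduplication = identified - duplicates
    full_text_screened = after_deduplication - excluded_on_title_abstract
    excluded_at_full_text = full_text_screened - included
    return {
        "after_deduplication": after_deduplication,
        "full_text_screened": full_text_screened,
        "excluded_at_full_text": excluded_at_full_text,
        "included": included,
    }


# Counts taken from the Results text.
flow = prisma_flow(
    identified=247,
    duplicates=65,
    excluded_on_title_abstract=154,
    included=7,
)

# The text attributes 11 full-text exclusions to study population.
excluded_for_population = 11
# Derived value (an inference, not a figure stated in the text).
excluded_for_other_reasons = flow["excluded_at_full_text"] - excluded_for_population

print(flow)
print("Full-text exclusions not itemized in the text:", excluded_for_other_reasons)
```

The derived `full_text_screened` value matches the 28 studies the text reports for full-text screening, which suggests the stage totals are internally consistent.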

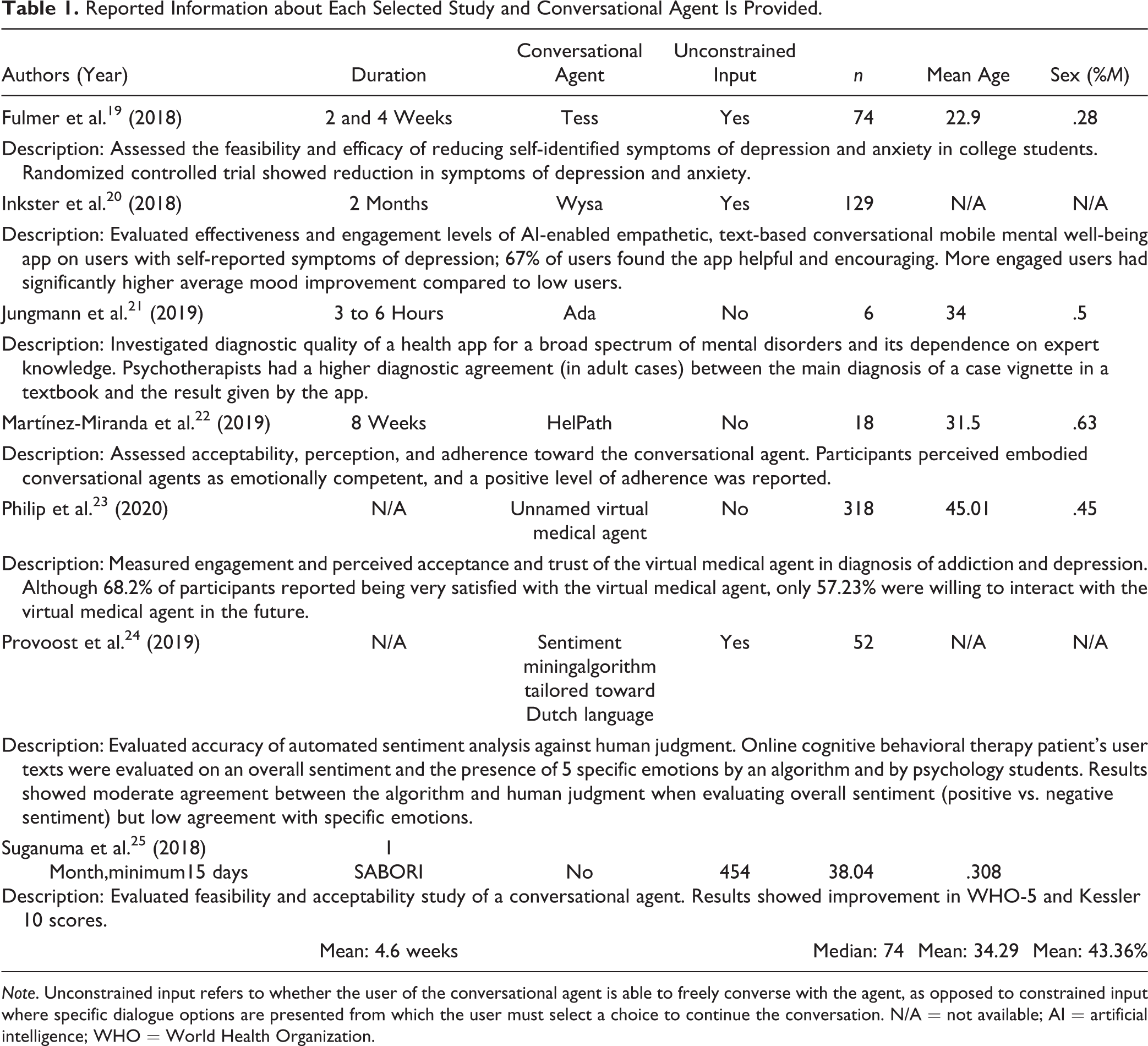

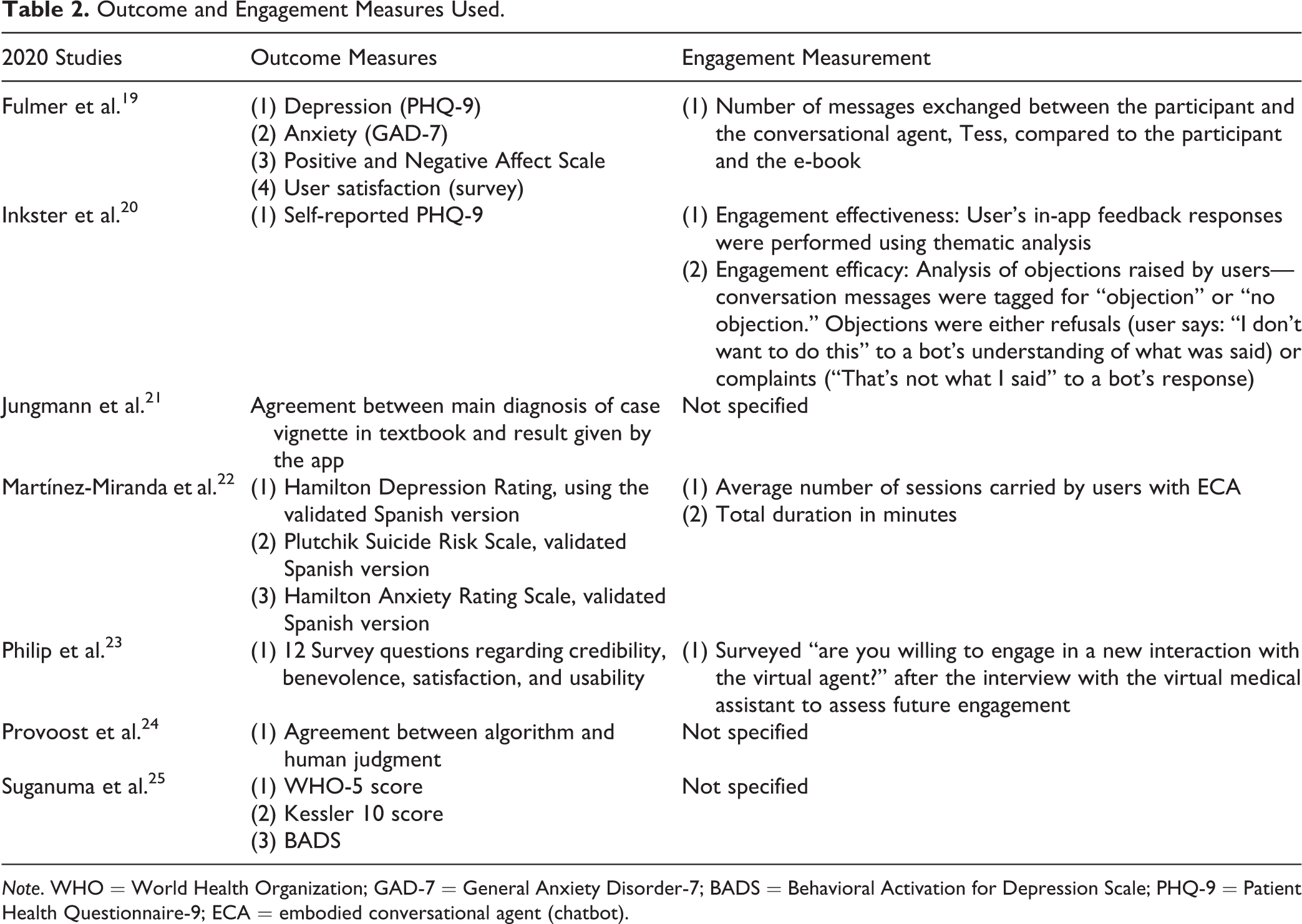

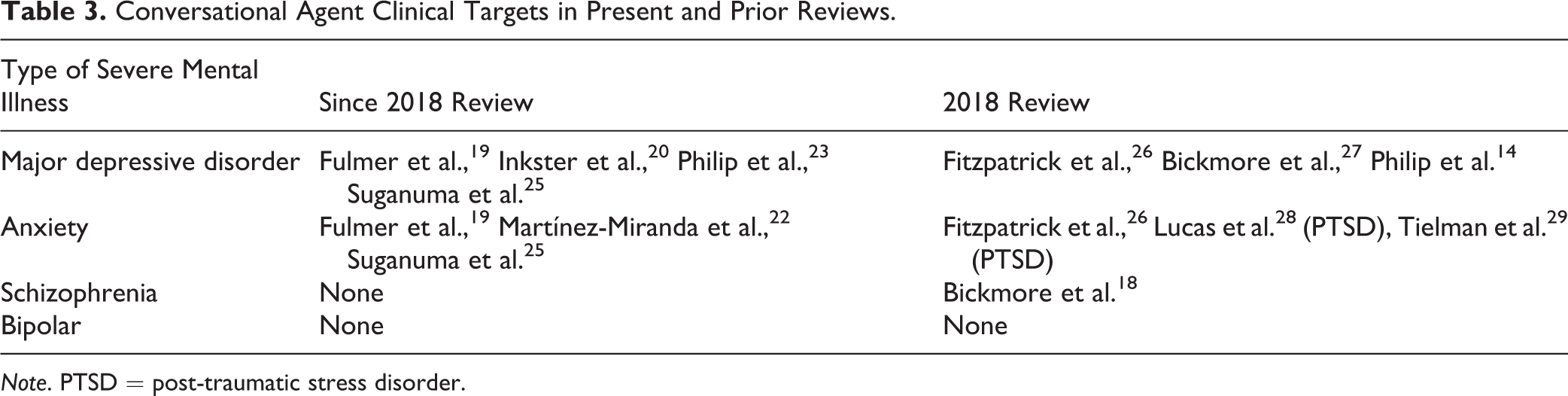

Summary data for the 7 studies in Table 1 are relatively similar to those in our prior review in 2018. The mean age of participants across studies was 34.29 years. The mean number of participants was 74. The mean study duration was 4.6 weeks. Measures examined are included in Table 2. Notably, only 4 of 7 studies included adherence or engagement measures. In comparison, all studies in 2018 had some level of engagement measure. Table 3 shows the clinical targets of each study; 4 studies investigated major depressive disorder, and 3 examined anxiety disorders. While our 2018 review contained a single study that investigated schizophrenia, 18 schizophrenia and bipolar disorders were not examined in any of the studies in this review.

Reported Information about Each Selected Study and Conversational Agent Is Provided.

Outcome and Engagement Measures Used.

Conversational Agent Clinical Targets in Present and Prior Reviews.

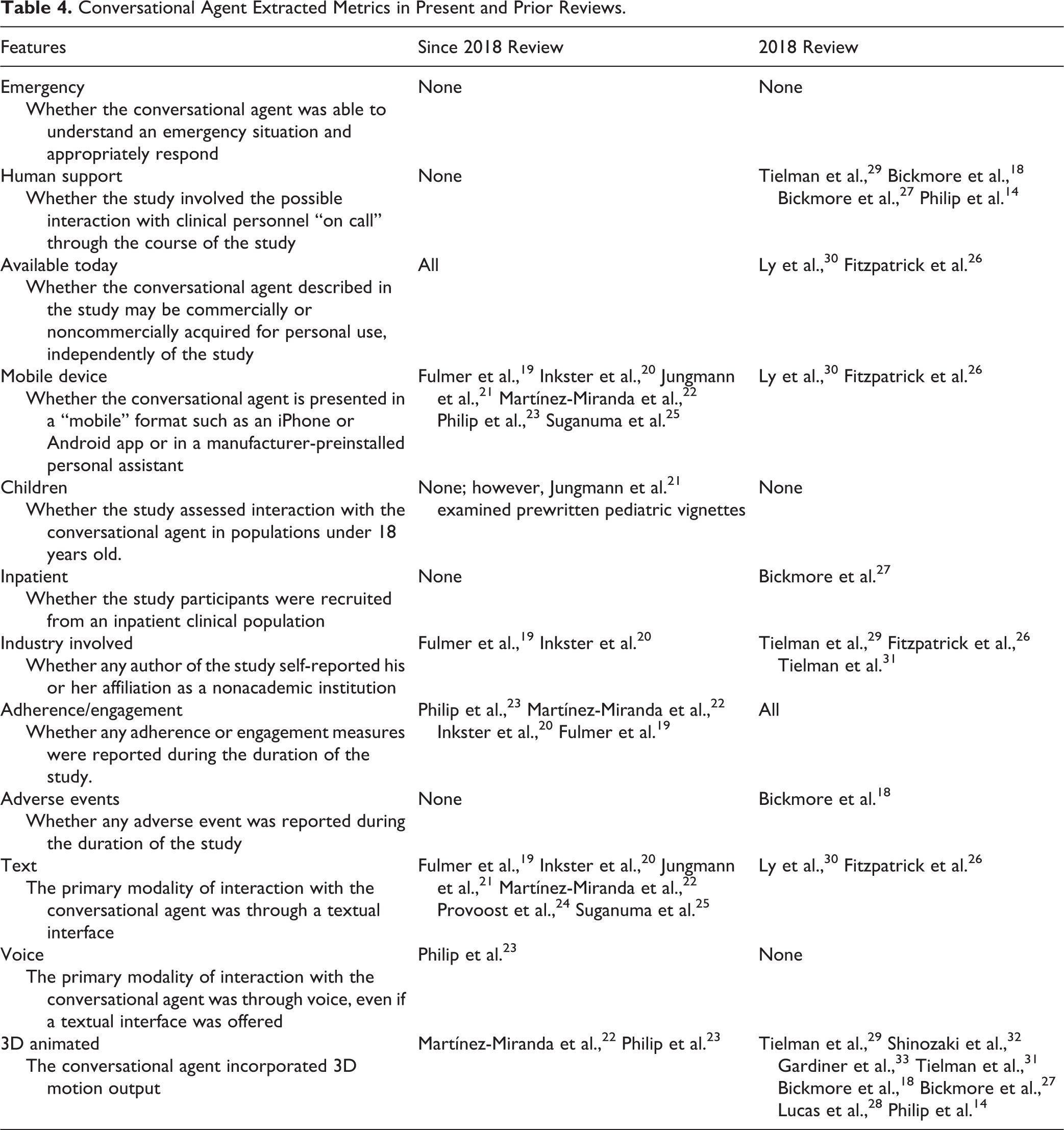

Overall, features of the conversational agents in Table 4 were mostly unchanged in comparison to 2018. Similar to our prior review, there continued to be no inclusion of children or consideration for emergency situations in these studies as well as minimal reporting of adverse effects. Notably, compared with our 2018 review, more conversational agents examined within the past 2 years were available through text and mobile device interfaces rather than other modalities (such as 3D avatars, with or without motion output).

Conversational Agent Extracted Metrics in Present and Prior Reviews.

Discussion

Conversational agents continue to gain interest, given their potential to expand access to mental health care. In this updated review, 7 new studies were included. Two of these studies focused on assessing diagnostic quality, 3 studies examined therapeutic efficacy, and 2 studies evaluated acceptability. Compared to our prior review in 2018, among the 7 new studies, there continues to be no consistent measure to evaluate engagement, yet more conversational agents are now available on mobile devices. Over half of the conversational agents were focused on depression, while schizophrenia and bipolar disorders had no representation in research output in the last 2 years.

Diagnostic Quality, Therapeutic Efficacy, and Acceptability

Two new studies focused on the diagnostic quality of the conversational agents when compared to a gold standard. Jungmann et al. compared the diagnosis of mental disorders in the conversational agent Ada versus psychotherapists, psychology students, and laypersons and concluded that the conversational agent had high diagnostic agreement with psychotherapists and moderate diagnostic agreement with psychology students and laypersons. 21 The conversational agent had lower diagnostic agreement with all participants in child and adolescent cases, which suggests that pediatric cases may be more nuanced. Provoost et al. compared the accuracy of an automated sentiment analysis against human judgment. User texts were evaluated on overall sentiment and the presence of specific emotions detected by an algorithm and psychology students. Results showed moderate agreement between algorithm and human judgment in evaluating overall sentiment (either positive or negative); however, there was low agreement with specific emotions such as pensiveness, annoyance, acceptance, optimism, and serenity. These results suggest that there continues to be room for improvement in the diagnostic quality of these particular conversational agents.
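Agreement between a conversational agent and human raters, as discussed above, is often summarized with a chance-corrected statistic. The sketch below computes Cohen's kappa for two raters and is illustrative only. The text does not state which agreement statistic Jungmann et al. or Provoost et al. used, the function name `cohen_kappa` is hypothetical, and the labels in the example are made-up data rather than study results.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa: agreement between two raters, corrected for chance."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    # Observed proportion of items on which both raters gave the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's label frequencies.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        # Both raters used one identical label throughout; agreement is perfect.
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical sentiment labels for 8 user texts (not data from any study).
algorithm = ["pos", "pos", "neg", "neg", "pos", "neg", "pos", "neg"]
human = ["pos", "neg", "neg", "neg", "pos", "pos", "pos", "neg"]

kappa = cohen_kappa(algorithm, human)
print(f"kappa = {kappa:.2f}")
```

Reporting one shared statistic of this kind across studies would make diagnostic-agreement results easier to compare than qualitative labels such as "moderate" alone.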

Three studies examined the therapeutic efficacy of different conversational agents. Fulmer et al. found that the conversational agent Tess was able to reduce self-identified symptoms of depression and anxiety in college students. 19 Inkster et al. studied the conversational agent Wysa and found users who were more engaged with the conversational agent had significantly higher average mood improvement compared to lower engagement users. 25 Suganuma et al. found that the conversational agent SABORI was effective in improving metrics on WHO-5, a measure of well-being, and Kessler 10, a measure of psychological distress on the anxiety–depression spectrum. 20 While these results show promise, the effect of conversational agents as an adjunct to in-person psychiatric treatment has been understudied. 12 It is unclear whether these results are generalizable and applicable to a broader population, given inadequate participant characterization in the included studies. Further research is required to determine appropriate indications for the use of adjunctive conversational agents.

Two studies specifically evaluated the acceptability of the conversational agents to patients. Martínez-Miranda et al. assessed acceptability, perception, and adherence of users toward HelPath, a conversational agent that is used to detect suicidal behavior. 22 Participants perceived HelPath as emotionally competent and reported a positive level of adherence. Philip et al. found that the majority (68.2%) of patients were very satisfied with the virtual medical agent; 68.2% of patients “totally agreed” that the virtual medical agent was benevolent, and 79.2% rated the virtual medical agent more than 66% for credibility. Interestingly, although nearly 66% of patients were “very satisfied,” only 57.23% were willing to interact with the virtual medical agent again in the future, which highlights the continuing need to measure engagement and adherence in future studies.

Heterogeneity in Reporting Metrics

There were few improvements in the standardization of conversational agent evaluation in this review compared to our prior 2018 review. Across the 7 included studies, heterogeneity of conversational agents and reported metrics persists, and none of the studies measured engagement in the same way. Fulmer et al. measured engagement as the number of messages exchanged between the participant and the conversational agent, 19 Martínez-Miranda et al. used duration of time spent, 22 and Philip et al. surveyed participants regarding engagement. 23 Given the growing literature surrounding the use of conversational agents in health care, the development of standardized methods of collecting and reporting data is imperative; without it, broad critical assessment of such agents and studies remains inconclusive. Reassuringly, although no validated instruments assess adherence and engagement of patients using conversational agents, the research community is using some measures, though none is yet universally agreed upon. Going forward, studies should aim to include assessments of therapeutic efficacy. For example, for depression, the “Severity Measure for Depression—Adult” is adapted from the Patient Health Questionnaire 9 (PHQ-9) and can be used to monitor treatment progress. Finally, to the best of our knowledge, no studies have been conducted regarding the interest among psychiatrists in conversational agents. Strikingly, many psychiatrists considered it unlikely that technology would ever be able to provide empathetic care as well as or better than the average psychiatrist. 34 Clinician engagement is necessary in order to integrate conversational agents into psychiatric practice and should be assessed with a modified engagement metric similar to those used for patients.
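To make the PHQ-9 suggestion concrete, the sketch below scores a 9-item questionnaire and maps the total to the conventional PHQ-9 severity bands (each item scored 0 to 3, total 0 to 27). The function names and the example responses are hypothetical and are not drawn from any included study. Tracking the change in total score between two time points is shown only as one simple way such a measure could be reported.

```python
# Conventional PHQ-9 severity bands as (upper bound of total, label).
PHQ9_BANDS = [
    (4, "none-minimal"),
    (9, "mild"),
    (14, "moderate"),
    (19, "moderately severe"),
    (27, "severe"),
]


def phq9_total(item_scores):
    """Sum the 9 item scores, each of which must be 0, 1, 2, or 3."""
    if len(item_scores) != 9:
        raise ValueError("PHQ-9 requires exactly 9 item scores")
    if any(score not in (0, 1, 2, 3) for score in item_scores):
        raise ValueError("each item score must be 0, 1, 2, or 3")
    return sum(item_scores)


def phq9_severity(total):
    """Map a total score (0 to 27) to its severity band."""
    if not 0 <= total <= 27:
        raise ValueError("total must be between 0 and 27")
    for upper_bound, label in PHQ9_BANDS:
        if total <= upper_bound:
            return label


# Hypothetical responses for one participant at two time points.
baseline = phq9_total([1, 2, 1, 0, 3, 2, 1, 1, 0])
follow_up = phq9_total([1, 1, 0, 0, 1, 1, 0, 1, 0])
change = follow_up - baseline  # negative values indicate fewer symptoms

print(baseline, phq9_severity(baseline))
print(follow_up, phq9_severity(follow_up))
print("change:", change)
```

If studies reported the same instrument at baseline and follow-up in this way, efficacy results could be compared directly across conversational agents.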

Unexplored Areas

Although conversational agent research is expanding, several areas remain understudied, primarily specific illness populations. Most research included in this review was conducted in adults, with an average participant age of 34 years. An estimated 75% of all lifetime mental disorders emerge by age 24, and 50% emerge by age 14. 35 This highlights an important understudied period of intervention in which detection, monitoring, and treatment may have long-standing benefit for the trajectory of these young patients’ lives. While no studies assessing emergency response were discovered, there is emerging work on whether conversational agents are able to understand an emergency situation and appropriately respond. 36,37

Notably, 6 of the 7 conversational agents primarily had a text interface, and only 1 included a voice interface. By design, text is more discreet, which may allow patients to feel more comfortable using conversational agents in public. This is particularly true when sharing personal information regarding their emotions. In 2013, the Pew Research Center reported that of the U.S. adults who use digital voice assistants, 60% said that they use them because “spoken language feels more natural than typing.” 14 To our knowledge, a direct text-to-voice comparison of acceptance and therapeutic efficacy has not yet been conducted. More research is needed into which modality is more effective in certain psychiatric conditions.

These findings align with other reviews, concluding that while conversational agent interventions for mental health problems are promising, more robust experimental design is needed. In a review by Gaffney et al., metrics for engagement and reporting were inconsistent. 38 Another review by Bibault et al. focusing on oncology patients suggests that the scarcity of clinical trials in evaluating conversational agents contrasts with the increasing number of patients poised to use them.

It is important to characterize the use case of conversational agents. At present, conversational agents can potentially augment, but not replace, clinical care. To this end, conversational agents may best serve as a means of increasing access to care, for example by helping patients reach a clinician. They could provide lists of mental health clinicians in the area or recommend that patients speak to their primary care physician regarding specific concerns that the conversational agent may not be capable of handling.

Further, while privacy and security remain a major concern in the use of technology in health-care settings, the sensitive nature of mental health may present a greater risk to patients. Little has been done to understand what steps, if any, are taken by commercially available conversational agents and whether sensitive, private, and vulnerable information shared by patients about their mental health is sufficiently safeguarded.

Limitations

It is important to note that there remain several limitations in evaluating conversational agents in mental health. Our search terms aimed to be inclusive but may have missed some crucial studies, especially as research may be published in journals outside of those with a health-care focus chosen for this literature search. Our search did not include terms such as “voice assistant,” “smart assistant,” and “dialog system” (these were also not included in our prior 2018 study), and these terms may have identified further studies. While comparing research changes between 2018 and 2020 offers useful insight, the high degree of heterogeneity between studies in this space continues to limit direct comparison. As highlighted in our prior review, the heterogeneity of reporting metrics continues to prevent the drawing of firm conclusions around already-limited use cases, and without a standardized metrics reporting framework, these limitations may persist further.

Conclusion

Conversational agents have continued to gain interest across the public health and global health research communities. This review revealed few, but generally positive, outcomes regarding conversational agents’ diagnostic quality, therapeutic efficacy, or acceptability. Despite the increase in research activity, there remains a lack of standard measures for evaluating conversational agents in regard to these qualities. We recommend that standardization of conversational agent studies should include patient adherence and engagement, therapeutic efficacy, and clinician perspectives. Given that patients are able to access a wide range of conversational agents on their mobile devices at any time, clinicians must carefully consider the quality and efficacy of these options, given such heterogeneity of available data.

Footnotes

Authors’ Note

Aditya Nrusimha Vaidyam and Danny Linggonegoro contributed equally to this work.

Acknowledgment

John Torous reports unrelated research support from Otsuka outside the scope of this work.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: National Institute of Mental Health (grant ID: 1K23MH116130-01).