Abstract

In this article, we problematize the notion that the continuously growing use of bibliometric evaluation can be effectively explained by ‘neoliberal’ ideology. A prerequisite for our analysis is an understanding of neoliberalism as denoting either a more limited set of concrete principles for the organization of society (the narrow interpretation) or a hegemonic ideology (the broad interpretation). This conceptual framework, together with a brief history of evaluative bibliometrics, provides the analytical framing for our approach, in which four national research evaluation systems are compared: Norway, Russia, Sweden, and the United Kingdom. On the basis of an analysis of the rationales for implementing these systems, as well as their specific design, we discuss the presence or absence of neoliberal motivations and rationales. Overall, we find that a relatively homogeneous academic landscape, with a high degree of centralization and government steering, appears to be a common feature of countries implementing national evaluation systems that rely on bibliometrics. Such characteristics, we argue, cannot simply be inferred to be neoliberal; rather, they indicate national states exercising strong political steering of their research systems. Consequently, if used without further clarification, ‘neoliberalism’ is a concept too broad and diluted to be useful when analyzing the development of research evaluation and bibliometric measures over the past half-century.

Introduction

‘Neoliberalism’ is a term and concept widely used to describe broad changes to the political and economic systems of the Global North in the past few decades, sometimes with an analytical aim, but usually with a normative and critical ambition. A quick look at the extant literature shows that nearly everything can be pejoratively termed ‘neoliberal’ – from urban slums (Davis, 2006) to dumbed-down reality TV shows (Couldry, 2010), and a lot in between (for a review, see Flew, 2014). One area of governance reform often discussed with reference to an underlying ‘neoliberal’ political agenda is the significant growth in intensity, scope, and importance of research evaluation systems in the past decades, as part of a broader transformation of the governance and funding of academic research and higher education (e.g. Canaan and Shumar, 2008; Fleming, 2021; Mirowski, 2011; Nedeva and Boden, 2006; Slaughter and Rhoades, 2004).

In this article, we problematize the notion that research evaluation is ‘neoliberal’ and that the continuously growing use of bibliometric evaluation as a governance tool for academic research (and teaching) can be effectively explained by ‘neoliberal’ ideology. We do this on the basis of secondary literature and with a chiefly analytical and theoretical aim, attempting to answer three questions: what neoliberalism means in relation to research evaluation as a governance tool for universities; whether, how, and to what extent research evaluation is really ‘neoliberal’; and whether other concepts can better explain what lies behind the current excesses of evaluation in universities. In doing so, we also highlight similarities and differences between national systems for performance-based research evaluation.

The article is structured as follows. In the next section, we discuss the notion of ‘neoliberalism’ and how it has been treated in various scholarly studies of politics, economy, and social change in the past decades, arriving at a tentative understanding of the concept as either very broad and vague, or more distinct and analytically useful. We then test these two alternative understandings of ‘neoliberalism’ against the patterns of change in the governance of universities, discussing both how ‘neoliberalism’ has come to be used in latter-day analyses of such change and how other terms and concepts, such as ‘academic capitalism’, ‘managerialism’, and ‘New Public Management’ (NPM), can complement it. Thereafter, we provide a brief history of bibliometrics and research evaluation in order to demonstrate that many key indicators and measures used in bibliometrics today emerged as part of a scholarly ambition to analyze patterns of collaboration and productivity in science, rather than being invented by evaluators or bureaucrats. In the second half of the article, we chronicle the emergence and implementation of a particular, widespread, and highly debated type of evaluation system, namely national models for performance-based allocation of research funding to universities. The analysis concerns the various forms that such systems have taken in four national contexts: Norway, Russia, Sweden, and the United Kingdom. By analyzing the rationales for implementing these systems, as well as their specific design, we provide a comparative perspective on the influence of ‘neoliberal’ motivations and rationales.

We conclude the article with a discussion that analyzes the four systems with the help of the theoretical understandings of ‘neoliberalism’ developed in the first part of the article. Aiming to provide some conceptual clarity in discussing evaluation and bibliometric measurement in relation to societal and economic development, we return to the two alternative understandings of neoliberalism and discuss the consequences of these for the understanding of the rationales behind evaluation systems and metric use, comparing ‘neoliberalism’ with other concepts discussed in the article and suggesting a modification of the conceptual toolbox for analyzing changes to the governance of academic science.

What is ‘neoliberalism’?

In one of the most renowned works on neoliberalism, David Harvey (2005) identifies it as ‘a theory of political economic practices’ that rests on the fundamental supposition that ‘human well-being can best be advanced by liberating individual entrepreneurial freedoms and skills’, where the role of the state is ‘to create and preserve an institutional framework’ of ‘strong private property rights, free markets, and free trade’ (Harvey, 2005: 2). He places the birth of these ideas in the late 1970s, in particular the Reagan presidency in the United States and the Thatcher era in the United Kingdom, and views their emergence as a direct result of the crisis of overproduction in (post-)industrialized economies in the early 1970s. In his account, neoliberal ideas then gained influence in national contexts as different as China, beginning in 1979, and Sweden during the 1990s (Harvey, 2005: 9). But while this historical argument is plausible and instructive, it still gives very little indication of how ‘neoliberalism’ as a political or ideological doctrine or superstructure plays out in practical policy and governance, let alone whether concrete policies can be causally explained by a rise of ‘neoliberalism’, and if so, how.

This is a general problem. While most scholars seem to share Harvey’s view of the historical emergence and global transmission of neoliberalism, there is less agreement regarding the definition of the concept, and many rather influential authors use the term lavishly and normatively rather than analytically. The term is used on many levels, from a ‘neoliberal era’ to a ‘neoliberal state’ and even ‘neoliberal selves’, which makes it difficult to operationalize analytically. Moreover, as Grealy and Laurie (2017) argue, the ‘strong’ theory of neoliberalism as a universal ideology whose influence cannot be escaped may limit the possibility of nuanced and detailed analysis: if neoliberalism permeates everything and operates through our actions, we are unlikely to be able to use it conceptually to explain or analyze those actions or their consequences.

Both the variation in use and the claims that neoliberalism pervades all of contemporary society make neoliberalism an ‘essentially contested concept’, a notion originally introduced by Gallie (1956). But as Boas and Gans-Morse (2009: 138) argue, ‘neoliberalism’ differs greatly from other such concepts, like ‘democracy’ and ‘liberty’, in that its meaning has hardly ever been explicated and its wide use continues to be surprisingly diverse or even amorphous. Several recent studies have similarly observed insufficient or absent definitional effort in the many academic works that invoke ‘neoliberalism’ as an analytical tool or as an explanatory cause of current phenomena and events (e.g. Dean, 2014; Mudge, 2008; Rose, 2017; Venugopal, 2015). Systematic attempts to bring clarity to the various uses of the concept have yielded different taxonomies. Watts (2022) identifies four common ways of thinking about ‘neoliberalism’: as ‘a set of economic policies’, as a ‘hegemonic ideological project’, as a ‘political rationality and form of governmentality’, and as ‘a specific type of embodied subjectivity’. Watts demonstrates that the first three can have great merit and explanatory value in social science, although one ought to be very careful not to overinterpret them, whereas the fourth is largely unconvincing: it fails to take into account the institutional specificities of neoliberalism, which in turn defeats the purpose of critical social scientific analysis of the phenomenon itself.

Neoliberalism understood as ‘a set of economic policies’ could also be called ‘actually existing neoliberalism’, as it can be empirically identified with a number of economic reforms that were enacted most pronouncedly by the Reagan administration in the United States, and the Thatcher government in the United Kingdom, in the 1980s (Watts, 2022: 3). They included, but were not limited to, privatization of public sector organizations, deregulation of financial markets, elimination of price controls, tax cuts for corporations and wage earners, and fiscal austerity (Boas and Gans-Morse, 2009). This use of the concept of neoliberalism is usually not normative, in contrast to its use to describe a ‘hegemonic ideological project’, where a causal and logical chain is established between a countermovement to the expansion of welfare states in the 20th century, led by the Mont Pèlerin Society and the Chicago School, and the policies actually implemented by, among others, Reagan and Thatcher (e.g. Harvey, 2005; Wacquant, 2010). Neoliberalism is thus viewed as a mission to shrink public sectors and advance the interests of economic elites, usually implemented by a vague but powerful ‘thought collective’ who waited behind the scenes for the right moment to act and found it in the 1970s when Western countries experienced continuous stagflation and a dire need for economic reform (Watts, 2022: 4).

The third form of neoliberalism, understood in the Foucauldian sense as a political rationality or a form of ‘governmentality’, differs in that it does not denote an ideology but rather a form of political rule, a ‘raison d’État’ or ‘art of government’ (Foucault, 2008: 218), that is, the specific ways in which those who govern rationalize, in Watts’s (2022: 6) words, ‘how government is to be exercised and for what purposes’. This Foucauldian understanding of neoliberalism is very close to what some authors have suggested calling ‘economization’ (Berman, 2014; Hallonsten, 2021a: 12–14), a term intended to escape the political left-right axis and to avoid identifying this change in governmentality as a distinctly right-wing project. In contrast to classical liberalism, which sought to establish a balance between the market and other spheres of society (including the state and civil society), neoliberalism, Foucault claims, seeks to replace citizenship and all other forms of human participation in society with the atomistic, self-serving, and profit-maximizing homo economicus, and thus to subsume society and all its parts under the hegemony of the market (see Brown, 2015: 17ff). This trend is almost identical to the process that several scholars have identified as economization: ‘a systemic societal process’ (Wentzlaff, 2019: 58) whose effects are manifest in several pervasive features of contemporary society. These include a reorientation of policy in practically all domains ‘around economic interpretations of issues’ (Smith, 2007: 17; see also Berman, 2022), but also a discursive shift in broader society that reconstructs behaviors, organizations, and institutions as ‘economic’ (Callon, 1998) and makes the economy and its constitutive parts the model for how to organize society as a whole (Hallonsten, 2023).

This overview, while far from giving a complete review of possible ways to define the concept, shows how neoliberalism can be understood both as a more specific and concrete set of ideas and practices, and as a broader almost all-encompassing ideological discourse. The more concrete, or narrow, definition identifies neoliberalism as a particular set of economic policies (including, for example, ideas regarding competition and a free market) as well as ideals of how society should be governed (governmentality). This interpretation may be described as the ‘narrow’ theory of neoliberalism. The wider definition, on the other hand, positions neoliberalism as a ‘hegemonic ideological project’ which affects nearly all aspects of our lives, including our world view and our identities. Such an interpretation may be described as the ‘broad’ theory.

In the following, we will use the narrower definition of neoliberalism, building on two of the four interpretations identified by Watts (2022), namely neoliberalism as a set of economic policies and as a form of governmentality. While such an approach might appear reductive to those arguing for a broader understanding of neoliberalism, it is necessary in order to provide a set of concrete characteristics on which an analysis can be based. We will, however, revisit the analytical potential of the all-encompassing definition of neoliberalism in the concluding section of this article, and there discuss its relevance for analyzing the contemporary university.

Neoliberalism and the university

Having evolved into a ‘catch-all both to describe or explain, and to condemn almost everything one does not like about our present conjuncture’ (Rose, 2017: 318), ‘neoliberalism’ has also come to encompass the recent decades of broad reform of the governance and funding of academic research in Europe and North America. Reforms of the management of academic faculties and departments, the instrumentalization of academic education, and the emergence of various performance-based evaluations of academic research, including bibliometric measures and indicators, are changes to the governance and funding of academic science that are said to have brought about the ‘neo-liberalising’ of science (Nedeva and Boden, 2006: 280), with academia ‘pressured by a set of neoliberal practices and structures’ (Canaan and Shumar, 2008: 3) and the predominant model of academic governance now being ‘the neoliberal university’ (Fleming, 2021: 4).

Berman (2022), who has undertaken one of the most detailed and insightful analyses of the transformation of politics in the United States from the 1970s onward, uses ‘the neoliberal era’ as an overall label for the past four decades, but only very generally and in combination with other, more distinct concepts to explain and analyze processes and events. If the history of science and science policy in the 20th century were to be summarized briefly, it would probably be adequate to have World War II mark the inauguration of the era of broad public support based on the ‘social contract for science’ (Guston, 2000) and the ‘linear model of technological innovation’ (Godin, 2006b), which in combination meant generous funding but little direct governance and steering. The 30-year period during which this arrangement held coincided with the era of unprecedented and spectacular economic growth in the West, but came to a rather abrupt end in the 1970s with the economic downturn and the shift in overarching macroeconomic ideology from Keynesianism to monetarism (Rodgers, 2011), the introduction of microeconomic models of thought in policy (Berman, 2022), and the rise of enterprise culture (Keat and Abercrombie, 1991) and market ideology (Djelic, 2006). If the current era is the neoliberal era, then perhaps the pre-World War II era was the ‘liberal era’, and the 30 years in between the ‘etatist era’ or similar. This postwar model of planning academic knowledge production to serve the public good had roots among Marxist thinkers such as John Desmond Bernal but was not exclusively leftist, since the need for a further organization of science was also evident in the ideas promoted by Vannevar Bush (1945) in his influential Science, the Endless Frontier. It was, however, perhaps anti-liberal. As Beddeleem (2020) argues, ‘early liberals demarcated and defended a liberal science against progressive scientists who promoted science as a midwife of social change’ (p. 22).

Hence, the series of reforms enacted in the United States from the 1970s onward, and their underlying political rationale, emanated from both the left and the right, and were implemented by Democrats and Republicans alike. Berman (2014) suggests ‘economization’ as a far more accurate and useful concept for explaining the changes, showing that the hegemonic status of economic thinking and economic concerns in politics (and broader society) had its origins in leftist and etatist ideology just as much as in the right and ‘neoliberalism’. Hallonsten (2021b) concurs and argues that the core of the problem is a current ‘deadening focus on economic growth at all costs and as everything’s true purpose’ (p. 391), which has led politicians and bureaucrats at all levels of government and in academic organizations to implement economic models and an economic style of thinking. This includes not least quantitative metrics and evaluative practices that are shortsighted, generalized, and ill-suited to academic research and higher education. Economic growth is a very tangible goal for policy, and the realignment of priorities in policymaking and public administration to achieve economic growth amounts to a very concrete set of actions, which makes economization far more precise in character than the general proliferation of ‘neoliberal’ ideology.

There are plausible historical reasons for this development. The post-World War II era was a time of unprecedented growth and unprecedented public (and private) sector enlargement in the West, including a manifold expansion of research and higher education. The social and technological progress of this era, and the political consensus around science’s role in this progress, were, however, halted by the economic downturn of the 1970s and by several reminders of the dark side of technological development and bureaucratic expansion, which ushered in a new era of reflexive modernization and risk awareness (e.g. Beck, 1992; Giddens, 1990). Through a broad change in mindset and frames of reference, science and higher education came to be viewed less as goods in themselves and as means toward broad individual and social enhancement, and more as means toward the increasingly overarching aim of economic growth and strengthened industrial competitiveness (Berman, 2014, 2022; Kleinman and Vallas, 2001; Mirowski, 2011). Universities were gradually reconceived primarily as subcontractors to the economy, which prompted governance reform and new evaluative mechanisms to ensure efficiency and accountability (Hallonsten, 2021a).

Managing large organizations with the main objective of meeting shortsighted productivity goals is as old as the modern corporation. In the early 20th century, the ‘scientific’ management techniques associated with Frederick Winslow Taylor gained popularity, and although they have since been almost unanimously refuted by organization and management scholars, the idea of a supposedly exact and neutral form of managing organizations that can counter the arbitrariness and seeming inefficiencies of human creativity and craftsmanship lives on. Today, critical management scholars have identified managerialism as the widespread and almost ideological belief in measurable efficiency as the primary goal for the management of all organizations, in management itself as a superior collection of techniques for governing organizations, and in management as a force for good not only within the organizations where it is applied but for society at large (Edwards, 1998; Enteman, 1993; Locke and Spender, 2011; Parker, 2002). Klikauer (2013) goes as far as calling this set of ideas ‘hyper-Taylorism’ (p. 49). For public sector organizations, managerialism mainly means the replacement of professional autonomy, collegial governance, and traditional bureaucracy by line management and hierarchical models imported from business contexts. Its kinship with economization (and, much more vaguely, with ‘neoliberalism’) lies in the primacy given to measurable (economic) outcomes as the objective of governance and the replacement of process orientation by result orientation in the management of organizations.

Managerialism is only partially linked to NPM, a set of reforms identified ex post facto that were enacted in Western liberal democracies from the 1980s onward with the aim of making public sector organizations and the provision of welfare services more efficient and customer-oriented. These reforms included, among other things, decentralization, the introduction of line management, tighter financial controls, and systematic and standardized quality appraisal (Hood, 1995; Pollitt and Bouckaert, 2004). The latter, recurring quality and performance appraisal, typically by standardized quantitative means, has been even more distinctly identified and conceptualized under headings such as the audit society (Power, 1997), the evaluation society (Dahler-Larsen, 2012), and metric fixation (Muller, 2018). Common to managerialism, NPM, and the audit society is the belief in the standardization and universalism of ‘neutral’ or ‘scientific’ techniques for improving efficiency and goal attainment in organizations, understood very narrowly but distinctly as economic efficiency or at least efficiency that is quantitatively measurable.

All of this has affected academic research and higher education, to varying degrees in different contexts but with no country, institution, or subject area completely spared, in continuous streams of reform over the past four to five decades. Most conspicuous are the introduction of quantitative performance evaluation as a basis for the distribution of research funding (Whitley and Gläser, 2007; Whitley et al., 2010); the gradual displacement of collegial self-governance by line managers, administrators, and strategic management (Deem et al., 2007; Münch, 2014); and the introduction of ‘excellence’ as a kind of quasi-currency that allows universities to be strategically managed as profit- and revenue-maximizing businesses on global markets, despite the fact that knowledge cannot be traded like a commodity (Hallonsten, 2022: 285). Under such ‘academic capitalism’ (Münch, 2014), it has been shown that core values of university research and education are easily displaced, and that a strong showing on easily comparable but essentially shallow measures of funding attracted, publications produced, citations amassed, and ranking positions achieved comes to replace curiosity, creativity, collaboration, and long-term or deeper relevance, quality, and impact (Collini, 2012; Rolfe, 2013; Watermeyer and Olssen, 2016). Yet a more detailed consideration of the emergence of various research metrics reveals a more complex history, one that begins long before the rise of neoliberalism.

Bibliometrics and research evaluation

The increasing use of performance measures, and specifically bibliometric measures, has been seen as an integral part of the ‘neoliberalization’ of academia. For example, in an influential paper, Burrows (2012) argues that neoliberal rationalities lay the foundation for the ‘co-construction of statistical metrics and social practices within the academy’ (p. 361). Similarly, Hall (2011) suggests that ‘bibliometric exercises are inherently a component of the circuits of cultural capital that are fundamental to neoliberal thinking’ (p. 26). A more recent account by Ma (2022) relies on previous descriptions when positioning ‘neoliberalism and new public management as the driving forces that compel the use of quantitative indicators’ (p. 397). What these accounts have in common is that both ‘neoliberalism’ and ‘metrics’ are often poorly defined and vaguely operationalized, which implies that they subscribe to the strong interpretation of neoliberalism as pervasive and all-encompassing.

The broadness of these claims makes it difficult to draw direct connections between policy and evaluative practice. A brief history of the emergence of bibliometrics, and of its gradual introduction as an evaluative instrument in the governance of academia, will therefore be useful in answering the question in the title of this article: Are bibliometric measures neoliberal? As detailed below, the history of bibliometrics stretches far back, the arguments behind the use of indicators are varied, and indicators have often been introduced within the scientific community before being adopted as policy instruments.

In the broader literature, bibliometrics is often discussed as something emerging during the second half of the 20th century, with the launch of Eugene Garfield’s Science Citation Index (SCI) as a key event. Yet, as detailed by Godin (2006a), many of the ideas and methods of bibliometrics were established much earlier. In the late 19th century, Alphonse de Candolle and Francis Galton both employed what we would today define as bibliometric methods to show how the environment (Candolle) or heredity (Galton) shaped science and scientists. Their focus was on the number of reputed scientists across research fields or countries, or what the creator of the first university ranking, James McKeen Cattell, called ‘eminent men’ (Hammarfelt et al., 2017). However, measuring the output of science in the form of publications soon became a popular method among librarians and among scholars studying their own discipline (Broadus, 1987).

The longer history of bibliometrics as an applied method for evaluating science remains to be written. Yet, if by bibliometrics we mean the counting of publications in order to evaluate scholars, then this practice can be traced as far back as the late 18th century. For example, Josephson (2014) describes how scholars in Germany were evaluated on the basis of their publications in order to estimate their ‘Rhäume’ (esteem). High esteem was important because scholars and universities depended on attracting students, and famous and frequent authors had greater chances in this endeavor. Concretely, a register of academics and their publications, Das gelehrte Deutschland (first published in 1767), was used, and it was consulted when new professors were recruited at German universities. As for a system that directly rewards scholars on the basis of publications, Sokolov (2021) argues that Russia might be one of the earliest examples. He bases this assumption on a study by Galiullina and Ilyina (2013), who describe a system, established as early as 1820, in which professors were ‘obliged to produce journal articles on a yearly basis, with a significant part of their salary being paid as bonuses contingent on the number of papers published’ (Sokolov, 2021: 1007). This might very well be the first formal use of performance-based research funding tied to the production of academic publications.

The systematic use of indicators at the policy level emerged much later. Martin (2011) describes how economic crises during the 1970s resulted in significant cuts in public spending. Consequently, there were growing demands that research funding be spent wisely. At the same time, arguments were made that the decision-making process should be opened up to include a wider public. Achieving this, however, required new data on inputs and outputs, and organizations such as SPRU (Science Policy Research Unit, UK), CWTS (Centre for Science and Technology Studies, the Netherlands), and CHI (Computer Horizons Inc., USA) were subsequently established. These made use of the data provided by the SCI, founded in 1964, supplemented by additional statistics on patents and manpower. Hence, a more systematic measurement – as well as an emerging field of research policy and evaluation 1 – was gradually established during the 1980s.

While it would be convenient to place these initiatives within a ‘neoliberalization’ of research policy and the governance of academia, it is important to remember that many of these actors and organizations emerged from bottom-up initiatives aimed at developing our understanding of science. For example, Eugene Garfield drew on a long tradition in the sociology of science when developing the SCI (Wouters, 1999), while Francis Narin’s (CHI) attempts to provide evidence for the impact of research through patents were part of an effort to save basic science from budget cuts (Hammarfelt, 2021). A strong theory of neoliberalism would hold that these efforts were part of the pervasive and all-encompassing spread of neoliberalism as a ‘hegemonic ideological project’ that nobody can escape. But such an interpretation would end the analysis right there, with little added explanatory value.

Turning instead to economization and managerialism, it can be established that bibliometric measurement came into demand because of a need for measures that could shift the focus of the governance of science from process to outcome, and establish economic criteria and exactitude in performance evaluation as central tools. Meanwhile, the history of bibliometric measurement as such depends on theoretical developments in the sociology of science. Hence, we can conclude that evaluative bibliometrics has a history of its own, starting long before any neoliberal era. While we acknowledge that neoliberal ideas, for example regarding the primacy of the market and the importance of competition, may indeed have been an accelerating factor for the increased use of metrics and performance-based evaluation, this causality is not necessarily best explained by ‘neoliberalism’, especially not without further clarification.

One reason why bibliometrics has become debated and questioned (Benedictus et al., 2016; Hallonsten, 2021a) is its use in national systems for the performance measurement of research. More specifically, such use has been questioned because of unintended effects in the form of goal displacement and narrowed research agendas (De Rijcke et al., 2016). However, as shown below, the design of models for assessing and rewarding research performance varies considerably across countries, as do the degree to which these models use bibliometrics and the extent to which ‘neoliberal’ thinking can be said to have inspired their construction. Importantly, our purpose here is not to find direct, causal explanations but rather to trace possible influences based on a narrow definition of neoliberalism. Therefore, we focus primarily on aspects related to economic policies and changes in the governance of science (governmentality).

Four distinct shapes of national research performance measurement

United Kingdom: Competition and collegiality

The most discussed system for performance-based allocation of research funds is the one employed in the United Kingdom (its international influence is highlighted by Engwall, Edlund, and Wedlin, this volume). The system, known as the Research Assessment Exercise (RAE), was introduced in the United Kingdom in 1986, and later superseded by the Research Excellence Framework (REF) in 2014. Despite the name change, the basic design of the system has remained intact over the years. The main focus of the system is on scholarly publications, which are evaluated in a collegial process of peer review, and funds are allocated to departments and universities on the basis of the results. This evaluation process produces a ranking of departments and universities, which has been described as a kind of ‘champions league’ for academic institutions (Collini, 2012). Over the years, the system has given rise to specific occupational roles, like REF managers and REF administrators, as well as expressions such as ‘being REF-able’ (McCulloch, 2017). Overall, it is a highly visible and influential system, which has been debated and studied intensively. For example, recent studies have argued that the REF has led to a homogenization of research in several fields (Pardo-Guerra, 2022), yet as shown by Wieczorek et al. (this volume), the concrete epistemological effects of the system are difficult to determine.

The RAE was instigated in 1986 under the Thatcher government. At first it was known as the ‘Research Selectivity Exercise’, but in its second round, in 1989, it was renamed the ‘Research Assessment Exercise’. As suggested by Sokolov (2021), the motivation behind the system was in line with the ‘neoliberal’ politics of the time, as its primary aim was to solve the ‘lazy agent’ problem (p. 990). This was not unique to science but part of a general government policy demanding that public spending adhere to the principle of ‘value for money’ (Martin, 2011; Rhodes, 1994). Initially, the assessment focused on a few key publications from each evaluated unit, but after criticism of this selective approach the scheme was extended, and the evaluation has since gradually expanded to include a broader set of outputs. The latest iteration, the REF, incorporates a component that tries to assess the social impact of research through so-called impact reports. As many critics have observed, the cost of the exercise has risen to a point where it can be questioned whether the benefits really exceed the costs. An estimate made as early as 2006 found that the expenses, including the time universities spent preparing for the exercise, amounted to a figure on the order of £100 million (Sastry and Bekhradnia, 2006: 5). The increasing demands and complexity of the assessment have led to it being described as a Frankenstein’s monster (Martin, 2011). Despite these rising costs, the assessment has always relied mainly on collegial evaluation (peer review) exercised by an academic elite. Use of bibliometrics has been rather limited, despite recurring arguments that a bibliometric system would be cheaper and fairer (Butler and McAllister, 2009; Taylor, 2011). Suggestions to replace peer review with cheaper bibliometric methods have not been met favorably by the academic community (Wilsdon et al., 2015).

Several arguments can be made as to why the RAE could be described as a system with distinctively neoliberal characteristics, and a great number of critical discussions of the system have emphasized its ‘neoliberal’ qualities (Burrows, 2012; Watermeyer and Olssen, 2016). Undoubtedly, the RAE/REF has neoliberal features, and the motivations behind it relied on arguments rooted in a rationale of how publicly funded activities should be held accountable. At the same time, the actual assessment is predominantly performed by academics themselves in collegial processes (peer review through panels), and bibliometrics play a rather small role, even in fields where indicators such as citations are a recognized method for estimating the impact of research.

Sweden: Bureaucratic and invisible system

Currently, Sweden does not allocate research funds through a performance-based system. However, between 2008 and 2015, a bibliometric indicator was used to allocate resources between universities, and parts of the model were abandoned only in 2019. The system used citation data from Web of Science, which were normalized and adjusted through various measures to make them applicable across disciplines. Still, rather early on, ‘arbitrary measures’ had to be inserted into the model for it to work across all fields. Moreover, the field delineation used eventually proved rather sensitive to outliers, because highly cited articles in the humanities and social sciences could yield a very large return in terms of research funds. For example, when one social science article with hundreds of citations was excluded due to the time window applied, Stockholm universities lost as much as 5% of their total allocation in the model. 2 Hence, it became evident rather early that the system was very complex, which made it difficult to understand and hard to develop and maintain.

An original ambition with the system was, according to its originator, to further excellence in research, and a key strategy for achieving this was to increase the number of peer-reviewed international publications (Swedish Government, 2007: 1, 394). The goal of ‘changing publication behavior’ was explicitly stated, and part of the background to the proposal was a concern that Sweden was not keeping up with comparable nations in terms of research impact. The bibliometric model was meant to remedy this by providing ‘[. . .] strong incitements to increase activity on the global publication market’ (Swedish Government, 2007: 1, 418). Its focus was therefore solely on English-language articles indexed in Web of Science. The designers of the model claimed that alternatives such as the Norwegian system could reinforce rather than challenge traditional publication practices (e.g. book publications and non-English publications). Other types of systems, such as the REF in the United Kingdom, were considered too resource-intensive, and an explicit strength of the Swedish system was that it did not require the collection of new data but used an already existing data source. An interesting aspect of the Swedish system is that it claimed to measure not only production but also productivity, by using the estimated workforce (academics in each field) as an input.

A center-right government introduced the Swedish model, and it was clearly inspired by ideas about excellence and global competition. As evident from the research bill of 2008, in which the new system for resource allocation was launched, the idea was not only to increase international visibility but also to provide incentives for specialization within Sweden (Swedish Government, 2008: 23). According to the bill, universities should be incentivized to find research areas in which they have a competitive advantage over other institutions. This focus on ‘top research’ was further accentuated by significant funding allocated to ‘centers of excellence’, research groups and institutions deemed able to compete at the very highest international level. The system could be described as rather radical, even from an international perspective, in its sole focus on international journal publishing, yet its introduction was followed by very little political or academic debate. Moreover, it remained largely in place under the social democratic (left) governments in office from 2014 until the system was abandoned in 2019. One reason it was so little discussed is that it had limited influence on the redistribution of funds between universities and fields, while its design was so complex that it was hard to grasp, even for experts on research policy. Hence, the former Swedish system is perhaps best described as a compromise between the need to have a system and the difficulty of finding agreement between actors. These difficulties are also illustrated by Engwall, Edlund, and Wedlin (this volume) in their outline of the local research evaluations at specific Swedish universities.

The complexity of the national system, which made its impact difficult to discern, meant that it was little discussed both in the research literature and in public debate. Compared to the REF, or the Norwegian system described below, it involved no collegial influence either in its construction or in the running of the model. Instead, the system was known in detail only by a limited group of experts. Although radical in its original design, the system mainly became a cheap, invisible, bureaucratic tool whose influence on actual academic practice (such as publishing) was modest. Still, the very existence of bibliometric evaluation at the state level provided an incentive for local systems of evaluation, which in themselves, especially when applied to small groups or individuals, may have had rather direct effects (Hammarfelt et al., 2016). Hence, incentives for bibliometric evaluation trickled down, but their manifestation at the organizational level often followed a different logic from the motives behind the national system. In fact, local systems of evaluation often used, or at least took inspiration from, the Norwegian model (Hammarfelt, 2018), a system that, contrary to the Swedish model, aimed for inclusion and transparency.

Norway: Universal and transparent system

The Norwegian system has become widely known, and the points-based system it introduced has been used, with variations, in several national and local systems of evaluation. The basic idea of the model is that publications are given points based on genre and level of quality. For example, a level 1 journal article receives 1 point, while a level 2 article receives 3. The same principle applies to books, which instead receive 5 or 8 points depending on the level assigned to the publisher. The system relies on disciplinary committees, which decide how different journals and publishers should be rated. To avoid inflation in highly graded channels, only 20% of the total channels in a research area can be graded as level 2 at any given time. The publication points indicator was implemented in 2005, and the model, in whole or in part, has since been exported to other Nordic (Denmark, Finland) and European countries (Poland, Flanders in Belgium). It has also been used locally at several universities in Sweden (Sivertsen, 2018).
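The points arithmetic can be made concrete with a small sketch. The Python below is illustrative only and is not an official implementation of the Norwegian indicator: the names `POINTS`, `publication_points`, and `level2_share_ok` are hypothetical, and the sketch models only the weights and the 20% cap stated above, leaving out co-authorship adjustments and any publication types or later revisions not described here.

```python
# Hypothetical sketch of the publication points arithmetic described above.
# Only the weights stated in the text are modeled; co-authorship adjustments
# and any other publication types or later revisions are deliberately omitted.

# (genre, level) -> points
POINTS = {
    ("article", 1): 1,
    ("article", 2): 3,
    ("book", 1): 5,
    ("book", 2): 8,
}

LEVEL2_CAP_PERCENT = 20  # at most 20% of channels in a research area at level 2


def publication_points(publications):
    """Sum the points for an iterable of (genre, level) pairs."""
    return sum(POINTS[(genre, level)] for genre, level in publications)


def level2_share_ok(n_level2_channels, n_total_channels):
    """Return True if the level 2 channels stay within the 20% cap.

    Integer arithmetic avoids floating-point rounding at the boundary.
    """
    return n_level2_channels * 100 <= LEVEL2_CAP_PERCENT * n_total_channels


if __name__ == "__main__":
    # A hypothetical unit: two level 1 articles, one level 2 article, one level 2 book.
    unit_output = [("article", 1), ("article", 1), ("article", 2), ("book", 2)]
    print(publication_points(unit_output))  # 13 (1 + 1 + 3 + 8)
    print(level2_share_ok(18, 100))  # True
    print(level2_share_ok(25, 100))  # False
```

For the hypothetical unit above, the level 2 article and book contribute 11 of the 13 points; the gap between the level 1 and level 2 weights is where the model's incentive toward higher-graded channels sits.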

The Norwegian system was prepared by a right-wing government coalition but came into actual use in 2006 under social democratic leadership. The gradual development of the Norwegian model began in 2002, when a new national register for publications was developed. Simultaneously, ideas for how to assess research were discussed at the University of Oslo, and the model, also called the ‘tellekant’ 3 model, became the inspiration for a national system presented in 2004. As documented by Nelhans (2013: 249f), the introduction of two levels was a direct consequence of experiences in Australia, where a system giving the same number of ‘points’ for all articles in international journals supposedly led to an increase in publications in low-quality journals rather than incentivizing research of high quality. 4

One of the purposes of the Norwegian model was to incentivize publications for a global English-language audience, and an early criticism, predominantly from humanities scholars, was that the system would lead to Norwegian being abandoned as a research language (Nelhans, 2013: 255f). Still, especially in comparison with the Swedish model, the system was explicitly designed to protect local contexts and languages and to provide a balanced evaluation across fields. In fact, it has been argued that the Norwegian model, and the database of all publications that it uses, strengthens research in the humanities and the social sciences by highlighting output that is seldom registered in international databases such as Web of Science or Scopus. For example, Sīle and de Rijcke (2022) argue that publication databases developed for evaluation, like the Norwegian one, are motivated not only by a market discourse; their rationale also rests on an idea of spreading knowledge with roots stretching back to the Enlightenment. Such an argument also resonates with the origins of the Norwegian system, which started as a registry for overview and control rather than as an evaluative instrument.

The Norwegian system is currently under debate, and at the time of writing its continuation is uncertain. Rather early on, the system was criticized for being biased against co-authored research, and it has also been claimed to incentivize quantitative approaches over qualitative ones (Sætnan et al., 2019). Overall, however, the system has been adjusted, for example in how co-authored publications are counted, so as not to produce major redistributions of resources between fields and institutions. Yet its influence does not depend only on the level at which it is formally implemented: it has also established highly visible and easily comprehensible indicators, which have been adopted in many contexts. Hence, the success of the Norwegian system largely rests on its simplicity and its universalism (it can be applied in many fields). While the motivation for the system was partly an ambition to make Norwegian research more internationally visible, its actual design emphasizes fairness, representativeness, and universalism rather than exclusiveness and fierce competition. This balanced approach is reflected in the grading of outputs into two categories, 1 (normal quality) and 2 (high quality), with no further gradations. Hence, high-quality research is not a category reserved for a select few but an attainable goal for many researchers, with excellence interpreted within an egalitarian system.

Russia: Managerial control and distrust in scientists

Russia’s research policy has undergone several reforms in recent decades. Around 2006, a set of reforms known as the ‘research turn’ was launched, resulting in considerable increases in the resources devoted to academic research. A few years later, in 2012, the Russian Academic Excellence Project, known as the ‘5–100’ program, was initiated. Its concrete aim was to place at least five Russian universities in the top 100 of global rankings (which ranking was not specified). After an evaluation process based on plans for scoring high on the indicators of various rankings, 21 universities were selected as recipients of major grants (Sokolov, 2021). Overall, the striving for international recognition, defined as having top-ranked universities, is an important part of Russian research policy. The policy has resulted in a highly stratified system in which a few elite institutions receive the lion’s share of a research budget that is small by Western standards. Promises of increased funding for research and higher education have been frequent but rarely implemented. Instead, research policy during the Putin years has predominantly focused on appearances in international comparisons and rankings (Balzer, 2021).

The system for allocating resources across universities, in place since 2010, uses bibliometric data from Web of Science. The choice of a renowned international database resonates with the strong emphasis on global reputation in Russian research policy. Recent figures suggest that the emphasis on international outlets has increased the number of publications in international databases, yet, as observed by Balzer (2021), this rise should be interpreted carefully, as it may reflect attempts to game the system rather than actual improvements in research output.

In relation to such widespread gaming of measurements, Sokolov (2021) suggests that the choice of an external data source is explained by a government that does not entrust scientists with the authority to take strategic decisions regarding research funding. Hence, the idea was not primarily to design a system that would motivate scholars toward specific goals (such as international publishing); instead, the overall emphasis is on governmental control without the involvement of researchers themselves. The main goal of the system was not to steer research in specific directions, or to increase production, but to solve the problem of the ‘corrupt knower’. Quantitative indicators are thus used deliberately to keep scientific elites from influencing decisions. The demand for indicators made it possible for a few entrepreneurs in the business of producing metrics to become hugely influential in how assessment systems were designed (Sokolov, 2021). Overall, the Russian system emphasizes managerial control from the very top, within a system where collegial, and general, trust is low.

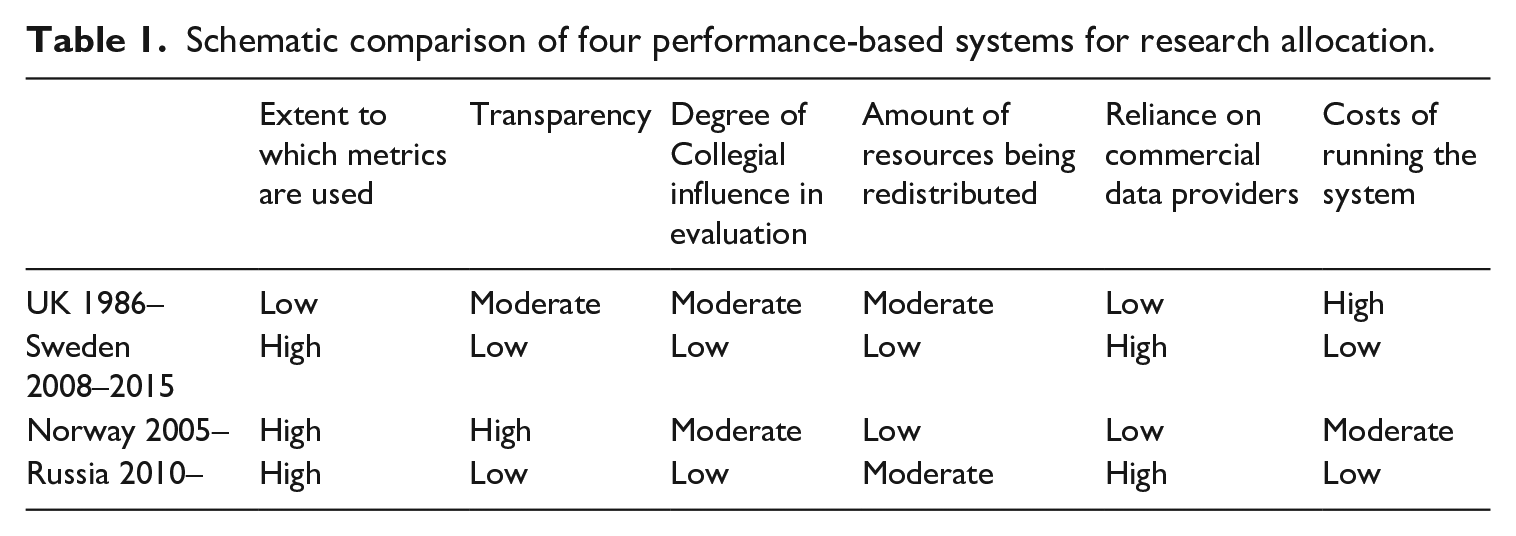

To summarize, we find that the four systems under scrutiny differ considerably in the extent to which metrics are used, in transparency, in collegial influence on evaluation, in reliance on data providers, and in the cost of running the system (Table 1).

Table 1. Schematic comparison of four performance-based systems for research allocation.

The degree to which these characteristics can be interpreted as ‘neoliberal’ of course depends on how the concept is defined. A high dependence on data provided by external companies, as in the Swedish and Russian examples, might be seen as ‘neoliberal’ in its reliance on commercial enterprises. Collegial influence, for example through peer review panels, could be interpreted as being in opposition to neoliberal influences. Furthermore, it might be suggested that the amount of money being reallocated matters when discussing the neoliberal features of performance-based allocation: the more resources are redistributed, the more trust is placed in a logic that emphasizes competition. Under a broader interpretation of neoliberalism, transparency may also be viewed as supportive of a ‘neoliberal hegemony’ (Valdovinos, 2018), yet it might also be linked to ideals originating as far back as the Enlightenment. Hence, what this brief analysis of four national systems shows is that any definite conclusion on the extent of neoliberal influences is hard to reach. This is partly due to the limited scope of the present study, but even more so a consequence of the difficulty of defining and operationalizing neoliberalism. In the concluding section, we therefore take a step back to revisit our earlier discussion of how to, and how not to, define neoliberalism, and what this means for our understanding of research evaluation.

Concluding discussion

A historical and sociological view of the changes to the governance of (academic) science from the last quarter of the 20th century onward yields a rather complex picture. The quick review in a previous section of this article can only give a very sketchy overview; the interested reader should consult the other contributions to this special section and the many prominent works in the same area by other scholars. It is clear, however, that several key events and processes in this development can be traced back to a shift in economic policy and a change in how the public sector is organized and funded, which it might be adequate to attribute to a rise of ‘neoliberalism’ in some shape or form.

This, however, hinges on the definition and operationalization of ‘neoliberalism’. In a previous section, we suggested that neoliberalism can be understood in two ways: either broadly and vaguely, as a set of fundamental ideas and suppositions that permeates all of social life in contemporary society, especially politics (the broad interpretation), or as a set of economic policies connected to a specific form of governmentality that can explain many reforms in many policy areas, including the governance of universities (the narrow interpretation).

Regarding the broad interpretation, we contend that such a definition is hard to argue against, and therefore difficult to operationalize meaningfully in any scholarly analysis. Most of all, the broad interpretation implies that we are all deeply embedded in neoliberal thinking, which makes it difficult to formulate a critique. Moreover, under the broad interpretation, resistance against features described as neoliberal, such as metrics and rankings, appears futile in a social setting dominated by a hegemonic neoliberal ideology from which supposedly nobody can escape. Nothing less than a drastic rebuttal of this dominant worldview, and a dramatic overthrow of the system that upholds it, would suffice to achieve actual change. It is, to say the least, unclear how such an overthrow could be achieved through critical scholarly analysis. Therefore, the first and foremost conclusion of this article is that if ‘neoliberalism’ is used without further clarification, it is a concept too broad and diluted to be useful in any analysis of the development of research evaluation in the past half century. In case this conclusion pushes at an open door, we defend it by noting that it nonetheless seems to need repeating.

A more limited, or narrow, definition of neoliberalism, focusing mainly on its concrete influence on governance, may appear reductive to some. Yet such an approach would allow for a more precise critique of particular practices and their effects on academic knowledge production, and hence for a more rigorous identification of causal relationships. Regarding the latter, Espeland and Sauder (2016: 45), for example, make a compelling case that although neoliberal reforms differed considerably between countries, and were implemented at different speeds and points in time, university governance and funding were often ‘a prime target’ for the multifarious and amorphous policy efforts to shrink government, lower taxes, and create ‘markets’ where none had previously existed. This speaks in favor of interpreting the sweeping but gradual governance reforms in academia in the past decades, including the introduction of bibliometric research evaluation, as neoliberal in character. Meanwhile, the argument can be questioned, not least given the routine identification of neoliberalism as a right-wing political project. The evident variations in policy development over several decades and across countries with very dissimilar institutional setups, and in many cases alternating political majorities, make ‘neoliberalism’ a misplaced label for the political rationales behind these developments.

The same principal argument can be made for bibliometrics: it is not monolithic. Different kinds of measures operate under different rationales and can therefore be more or less closely aligned with neoliberal notions of competition and markets. Citations, for example, which are used in the Russian and the former Swedish system, can to some extent be said to indicate a direct ‘market value’ (how a specific research product, such as an article, is valued by peers), while a tiered journal system, as used in Norway, is less directly related to the idea of a market.

Looking more closely at the four assessment systems examined above, we find that some of them clearly have what could be described as ‘neoliberal’ features, but the relation between the use of bibliometric measures and neoliberal politics is uncertain. The REF/RAE was certainly inspired by neoliberal thinking on ‘competition’ and ‘value for money’. Interestingly, however, bibliometric measures were never an integral part of this system; instead, it is one of the few systems that have not adopted such methods. The now abandoned Swedish system relied heavily on a ‘discourse of excellence’, and its design reflected an ambition to increase international competitiveness as well as competition between universities within Sweden. Yet its design was rather hidden and complicated, and the actual redistribution of funds based on the system was rather modest. Hence, while it might be possible to describe this system theoretically as ‘neoliberal’, it is much more accurate and empirically warranted to characterize it as a bureaucratic budget tool whose influence on university leadership and management, and on individual researchers, remained very limited. International competitiveness was among the inspirations for the Norwegian model, yet it started as an effort to provide complete information on all types of scientific publications. Moreover, its very design stressed that all fields of research should be represented fairly, and it emphasized collegial influence over quality criteria. Finally, the Russian system, like the Swedish one, is citation-based; it aims to control researchers and provides managers with information that can be used for making decisions without collegial influence. Overall, several of the systems presented above display features that could be, and have been, interpreted as ‘neoliberal’.
We do not argue that such descriptions are completely invalid; rather, we suggest that such an account may obscure a more heterogeneous set of motivations and influences behind the implementation of performance measurement in research evaluation. In particular, we find that the relation between neoliberalism and the use of bibliometric measures is less than straightforward, especially as the system most criticized for having neoliberal features, the UK RAE, is the model that relies least on quantitative metrics.

The argument can be extended to national contexts more generally. If ‘neoliberalism’ were indeed a driver of the use of metrics, it would be surprising that documented institutionalized use of metrics is rare in the often-emblematic country of neoliberalism, the United States. Arguably, one reason for this is that the US research system is largely grant-funded; another is the large degree of regional differences and regional/state influence on policy. Germany, although organized very differently, also lacks a central evaluation system, as each federal state has considerable influence on how universities are governed in its region. 5 Thus, it seems that national bibliometric systems for evaluation depend on a strong central state with ambitions to create nationwide bureaucratic systems. Ironically, the strong state, which neoliberal thinking seeks to diminish, appears important for the establishment of national systems of evaluation. Similar reflections can be made in relation to universities within a national system. In Sweden, for example, a vast majority (24 out of 26) of universities use publication indicators in one form or another (Hammarfelt et al., 2016). Interestingly, the two institutions that did not use bibliometric research evaluation, the Stockholm School of Economics and Chalmers University of Technology, are two of the three universities that are independently run (as foundations) rather than under direct state control. Hence, a unified, state-controlled, bureaucratically governed academic sector appears more inclined to use metrics, while more loosely structured contexts with independent institutions are less prone to introduce indicator-based assessment.
This means, in turn, that a relatively homogeneous academic landscape, with a high degree of centralization and government steering, appears to be a common feature of countries implementing national evaluation systems that make use of bibliometric measures. Such characteristics, we argue, may not be inductively understood as ‘neoliberal’ but rather as indications of national states exercising strong political and bureaucratic steering of their research systems.

Looking beyond national evaluation systems, and research evaluation in general, our short recapitulation of the development of bibliometrics in an earlier section of this article shows that its emergence was largely spurred by efforts to further our general understanding of science, its patterns of productivity and collaboration, rather than to provide tools for effective assessment. Put differently, bibliometrics has its own history and lives its own life as a method and research field, but it has gradually been co-opted by a growing need and want for quantitative and purportedly neutral research evaluation techniques, and thus, by extension, by a broader reform agenda that has affected academic research in profound ways. From this we can conclude that bibliometric measures are not neoliberal in themselves but rather constitute a bureaucratic technique that allows for distant steering. Such techniques may be useful in a political system described as neoliberal, but may make even more sense if, for example, attributed to ‘economization’ or ‘managerialism’. These two concepts signal, in turn, a need or want to reconceptualize policy in economic terms (for example, making university research about contributing measurably to innovation and economic growth, and making university education a means to provide the labor market with qualified personnel) and a need or want to exercise tighter and more detailed control over professional activities considered to be afflicted by arbitrariness, inefficiencies, and human error. Bibliometric research evaluation offers a toolbox to accomplish both ends: metrics that allow straightforward comparison and ranking of researchers, groups, departments, institutes, universities, and countries, with little or no direct attention to the content of their activities, give the impression that these are production units and that their outputs are commodities.
Note the paradox: paying little or no attention to the actual content of academic research or education in a qualitative sense means that the possibilities of evaluating their contributions to innovation and economic growth are stymied. However, an economic style of thinking, integral to economization, implies quantifying even the non-quantifiable, or considering all human and social activities as economic in the shortsighted and instrumental sense of profit and productivity maximization, balancing incentives, and competition. Managerialism is similar, but with clearer agency involved. The ‘one-size-fits-all’ approach to the governance and management of organizations implied by managerialism is deeply illiberal in character, as it builds on a view of managers as a specific class of people trained for the task of managing organizations, optimizing their operations by increasing their efficiency, and thus minimizing any influence of what it views as ‘human error’, including arbitrariness and nepotism but also professional judgment, craftsmanship, and collegial decision-making processes. That something is illiberal does not mean it cannot be neoliberal, but there is enough in this seeming play on words to suggest that the interrelations between managerialism and neoliberalism need to be better explicated for either of them to work as a concept for analyzing and explaining evaluative bibliometrics.

Similarly, NPM must be far better explained and problematized, especially in relation to bibliometrics and research evaluation, if it is to enhance rather than diminish the explanatory value of our studies. If NPM is used as an ex post facto label for a bundle of reforms of Western public sectors in the 1980s and 1990s, probably all motivated by a wish to increase efficiency through the introduction of line management, tighter financial controls, and systematic and standardized quality appraisal, then it is probably very similar to neoliberalism understood as ‘a set of economic policies’ or ‘actually existing neoliberalism’ (see above), or at least a subset of it aimed specifically at the reform of public sector organizations. This would make NPM a better conceptual fit for historically explaining the rise of evaluative bibliometrics, in two ways. First, it suffers slightly less than ‘neoliberalism’ from predominantly normative and pejorative use. Second, it concerns reform agendas, and actual reforms in actual organizations (including universities), more clearly and distinctly than ‘neoliberalism’ ever has. It appears that neoliberalism continues to have trouble escaping the conceptual vagueness that we problematized at the beginning of this article.

Our focus here has been on the systematic and institutional application of bibliometrics, and it could be claimed that our argument overlooks how individuals employ such measures. Critical studies of metrics have pointed to how individual measurement practices can be tied to the idea of neoliberal selves and a marketplace of ideas. Such arguments should not be discarded, and there are arguments to be made in relation to the development of an ‘attention economy’ in academia. However, we should be careful not to describe our contemporary obsession with citations, or views, as historically unique. Instead, it could be maintained that modern academia has always, to a degree, been characterized by competition for collegial reputation and recognition. Arguably, services like ResearchGate or Google Scholar profiles have neoliberal features in how they emphasize competition. However, such platforms should, in our view, be seen as an intensification of built-in characteristics of how the research system works (Francke and Hammarfelt, 2022), rather than a neoliberal break with the good old days when scientists were uninterested in status and rewards.

Overall, our argument is not that neoliberalism and the proliferation of bibliometric measures are unrelated phenomena. What we suggest is that using neoliberalism as a central explanation for the emergence of measures and indicators is not very effective analytically, as it might actually hinder detailed studies of their use and effects. Therefore, we propose either using a more distinct definition of neoliberalism or turning to a more detailed set of concepts, such as economization, managerialism, and bureaucratization, in order to depict the social and political hinterland in which the use of bibliometric measures resides.

Footnotes

Acknowledgements

We are grateful for the detailed, rich, and constructive comments by the two anonymous reviewers.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.