Abstract

Quality data are vital to the planning and operation of traffic systems. High occupancy vehicle (HOV) lanes, for instance, must comply with federal performance standards. If an agency fails to meet the standards, the facility is considered to be “degraded” and the agency is required to undertake actions that would return the facility to satisfactory operation. This could include removing exempted vehicles (e.g., low-emission vehicles) or increasing toll prices and passenger occupancy limits. Such policy changes may be costly, and may affect related policy goals, such as promoting clean air vehicles. Owing to constant changes in the system (e.g., roadwork, system upgrades), some of the thousands of HOV sensors in California’s transportation system are misconfigured, such as being labeled as general-purpose lanes. In this situation, HOV lane data may be mistakenly aggregated with general-purpose lane data and vice versa, causing a HOV facility to be erroneously reported as degraded and requiring unnecessary policy action. Detecting these misconfigurations is challenging and labor-intensive to accomplish manually. The purpose of this research was to utilize machine learning techniques to detect sensor misconfigurations and to understand the extent to which they affect performance reporting of HOV lanes. The results for Caltrans District 7 (Los Angeles and Ventura counties) showed that about 5% to 8% of Performance Measurement System HOV sensors are misconfigured. Therefore approximately 27 to 44 mi of HOV lanes are erroneously measured, with approximately 10 to 16 mi (38%) of those reporting an erroneously high degradation rating.

Quality data are the lifeblood of traffic management, research, and policy making. Without accurate and trustworthy data, it is impossible to conduct analyses and make confident decisions. This is true for high occupancy vehicle (HOV) and high occupancy toll (HOT) lanes, which not only utilize traffic data to set demand-based pricing and occupancy minimums but are also required to report HOV lane performance to ensure these federally funded facilities meet performance requirements. Thus, poor-quality data in these reports could have major policy implications.

If a HOV facility fails to meet federal standards, it is considered “degraded”; in this case, the responsible agency is required to undertake appropriate actions, such as increasing HOT lane prices, increasing occupancy limits (e.g., from 2+ to 3+), or modifying the rules for exempted vehicles (e.g., low-emission vehicles). All such actions could have collateral impacts on other policy goals, such as reducing emissions by promoting clean air vehicles. This means that high quality traffic data are paramount to ensuring facilities are operating as intended and to avoid unnecessary, and potentially costly, policy actions.

With over 925 lane miles across 345 HOV facilities, the California Department of Transportation (Caltrans) currently operates the largest inventory of HOV lanes in the United States. Freeways in California are monitored in real time by Caltrans using some 40,000 fixed traffic sensors across the state, the data for which are stored in the Performance Measurement System (PeMS) ( 1 ). PeMS data are used for a variety of operational and reporting purposes, including HOV degradation reporting. Continuous operation of such a large system is inevitably prone to data collection and integrity issues, such as sensor outages, data corruption, or sensor misconfiguration. Misconfigured sensors operate normally but are incorrectly labeled as another sensor, such as a HOV sensor labeled as a general-purpose lane sensor, or vice versa. This is particularly problematic because data from a congested general-purpose mainline freeway lane, when attributed to a HOV lane, could cause a HOV facility to be erroneously reported as degraded.

The purpose of this research was to utilize machine learning techniques to detect sensor misconfigurations and to understand the extent to which they affect HOV lane degradation reporting. This research was conducted in three phases. First, different machine learning models were trained and tested in a controlled environment along a 16-mi corridor of the I-210 in Pasadena, CA, containing 33 sensors. Second, the trained models were tested on a larger scale for the entirety of Caltrans District 7 (Los Angeles and Ventura counties), comprising approximately 870 sensors. In this second phase, the analysis was twofold: a single date-range analysis with a manual review of detection results, and a longer-term multidate analysis across 13 quarters from 2018 to 2021. The aim was to perform both a “deep” analysis with an in-depth manual review of results for a single date, and a “wide” analysis across several dates without a manual review, simply to evaluate how detection rates vary over time. Third, the magnitude of the effect of misconfigured sensors on HOV degradation reporting was evaluated.

Background

With computational advancements and the explosive growth in emerging data sources, there have been a multitude of machine learning techniques proposed and utilized in transportation. Machine learning implementations span a huge range of potential applications, from traffic flow monitoring ( 2 ), to identifying wage inequality in transportation ( 3 ), the routing of snow plows and hazardous substances ( 4 ), and even predicting budgets for transportation research grants ( 5 ). New machine learning techniques and applications are constantly being developed and improved but can generally be organized along a spectrum of data-generating and data-analyzing applications. There are, of course, applications that both generate and analyze data, such as for traffic control and monitoring ( 6 ), or the imputation of missing data ( 7 , 8 ).

Data-generating applications, such as artificial intelligence-based sensors, fundamentally use base data (e.g., trained detection models) to automate the collection of data (e.g., counts) more efficiently or effectively than if done with existing technologies. Applications include collecting traffic counts and trajectory information ( 9 , 10 ), but also functions such as asset management with automated road inspection and maintenance prediction ( 11 , 12 ). These technologies expand the capabilities of transportation agencies, helping them to keep up with their growing needs in light of limited resources. At the other end of the spectrum are the data-analyzing applications of machine learning. These applications analyze and fuse data from multiple sources to extract useful information or predictions that would otherwise be difficult to elicit. For example, traffic flow and incidents can be used to model and even predict future conditions ( 13 – 15 ). Similarly, safety research has used machine learning techniques to uncover hidden outcomes by fusing multiple data sources, such as land use and demographics ( 16 – 18 ).

There is an ever-growing body of research utilizing novel machine learning techniques to improve the quality and applicability of traffic count data. For example, various machine learning techniques have been utilized to forecast traffic patterns ( 19 , 20 ), back-cast missing counts ( 21 , 22 ), or estimate hourly adjustment factors ( 23 ). Recent advances in sensing technology have also created opportunities to improve traffic count data for historically undercounted and difficult to count modes, such as pedestrians and bicycles ( 24 , 25 ). However, the bulk of traffic data machine learning studies typically focus on improving the accuracy and expanding the applicability of data ( 26 – 28 ), and do not necessarily consider the validity and integrity of the data itself.

It is important to clearly distinguish between data “accuracy” and “validity.” Although both terms relate to data quality, accuracy in this article is defined as how close the measured value is to the actual value (e.g., the number of vehicles counted versus the number that actually crossed the sensor), and validity is defined as whether the data record itself is correctly associated with the subject. For example, it is possible to automate the detection of malfunctioning sensors ( 29 ) and even cyberattacks ( 30 , 31 ), but a malfunctioning sensor in this context can still be “valid” even if it is so inaccurate that it cannot be used. In contrast, it is possible for a sensor to be operating normally and accurately but to be labeled incorrectly in a database and, thus, to be invalid yet accurate. It is also possible that a sensor could be both malfunctioning (i.e., inaccurate) and misconfigured (i.e., invalid). The primary objective of this study was therefore to improve the validity of sensor data by detecting misconfigured sensors.

Data validity in this context is a critical aspect of data quality, as both research and industry rely on the assumed accuracy, reliability, and validity of state department of transportation (DOT)-owned permanent continuous counters. A lack of data validity would undermine the results of any analysis, regardless of whether it is machine learning or conventional ( 32 ). As DOTs seek to extrapolate traffic data through supplemental manual counts and third-party mobile data ( 33 , 34 ), it is imperative that data be valid as well as accurate.

This research reviewed and tested a variety of different machine learning approaches for the purpose of detecting anomalous, misconfigured HOV sensors. Anomaly detection is the process of identifying unusual patterns and unexpected observations (i.e., outliers) in a data set. Hawkins defines an outlier as “an observation which deviates so much from other observations as to arouse suspicions that it was generated by a different mechanism” ( 35 ). The conditions generating an outlier differ from those of normal observations; therefore, an outlier contains valuable information about unusual characteristics of a system and its data generation processes ( 36 , 37 ). Analyzing such abnormal characteristics can provide valuable insights and guide the actions that should be performed.

Fundamentally, machine learning approaches can be divided into two basic types: supervised and unsupervised learning ( 38 ). In supervised models, ground truth labels are available, indicating whether an observation is an outlier or not.

Supervised Learning Methods

Among the prominent supervised learning methods, the following approaches were explored in this research:

K-nearest neighbors (KNN)—A nonparametric model that uses stored training data to classify the target variable based on nearest neighboring data points in the training set ( 39 ).

Logistic regression—A probabilistic model that estimates the probability of the target variable belonging to one category or another using a sigmoid function to represent the distribution ( 40 ).

Decision tree—A conjunction of rules to organize the data into a tree structure, where each branch is a binary decision with a threshold for each variable (i.e., belongs to one group or another). Following the branches along each decision point will determine the final classification ( 40 ).

Random forest—An ensemble of decision trees in which a single training data set is randomly resampled into M bootstrap samples, each of which is used to train a separate tree, before the trees' predictions are aggregated (i.e., bagging) into a final combined result ( 41 ).
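To make the simplest of these classifiers concrete, the following is a minimal KNN sketch in plain Python. It is an illustration only, not the study's implementation: the two features per sensor (average nighttime flow and a K-S p-value), the training points, and the labels are all hypothetical.

```python
import math
from collections import Counter

def knn_predict(train_x, train_y, query, k=3):
    """Classify `query` by majority vote among its k nearest training points."""
    # Rank training points by Euclidean distance to the query.
    order = sorted(range(len(train_x)), key=lambda i: math.dist(train_x[i], query))
    votes = Counter(train_y[i] for i in order[:k])
    return votes.most_common(1)[0][0]

# Hypothetical features per HOV sensor: (average nighttime flow, K-S p-value).
train_x = [(0.5, 0.90), (1.0, 0.80), (0.8, 0.85),
           (12.0, 0.01), (15.0, 0.02), (11.0, 0.03)]
train_y = ["ok", "ok", "ok",
           "misconfigured", "misconfigured", "misconfigured"]

print(knn_predict(train_x, train_y, (13.0, 0.02)))
print(knn_predict(train_x, train_y, (0.7, 0.88)))
```

Because KNN is distance based, features on very different scales would normally be standardized first; that step is omitted here for brevity.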

Unsupervised Learning Methods

In unsupervised techniques, labels are not required, and statistical differences must be inferred from the data themselves. As a result, unsupervised methods do not need to be trained and are run directly on the data. The following unsupervised learning methods were explored in this research:

Isolation forest—An unsupervised method utilizing decision trees, but instead of profiling normal values into classifications it attempts to isolate anomalies ( 42 ).

Local outlier factor (LOF) (density-based detection)—Uses KNN to compute local reachability for each point to its nearest neighbors, enabling statistical outliers to be flagged ( 43 – 45 ).

One-class support vector machine (SVM)—SVMs find a decision boundary between points that maximizes the margins, dividing the data into discrete classifications. A one-class SVM is a special case of SVM in which no labels are provided, making it an unsupervised method ( 46 ).

Robust covariance (distance-based detection)—Another variation of KNN that relies on the median distance to the nearest neighbors. The median value, rather than the mean, provides a more robust calculation of distance that is less sensitive to anomalous data ( 39 ).

All methods differ in their approach, with each having advantages and disadvantages. Selecting a model for anomaly detection depends on various factors such as analysis objectives, data size, and data type.
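As an illustration of how a density-based detector scores points without labels, the sketch below computes the local outlier factor in plain Python. It follows the standard LOF definition (k-distance, reachability distance, local reachability density) and is not the implementation used in this research. The sample points and the choice of k are hypothetical, and the code assumes there are no duplicate points.

```python
import math

def local_outlier_factor(points, k=2):
    """Return one LOF score per point; scores well above 1 suggest an outlier."""
    n = len(points)
    dist = [[math.dist(p, q) for q in points] for p in points]

    # k nearest neighbors (excluding the point itself) and the k-distance.
    neighbors, k_distance = [], []
    for i in range(n):
        order = sorted((j for j in range(n) if j != i), key=lambda j: dist[i][j])
        neighbors.append(order[:k])
        k_distance.append(dist[i][order[k - 1]])

    # Local reachability density: inverse of the mean reachability distance.
    lrd = []
    for i in range(n):
        reach = [max(k_distance[j], dist[i][j]) for j in neighbors[i]]
        lrd.append(len(reach) / sum(reach))  # assumes no duplicate points

    # LOF: mean neighbor density relative to the point's own density.
    return [sum(lrd[j] for j in neighbors[i]) / (len(neighbors[i]) * lrd[i])
            for i in range(n)]

# Hypothetical 2-D feature points: four clustered sensors and one far away.
points = [(0, 0), (0, 1), (1, 0), (1, 1), (5, 5)]
scores = local_outlier_factor(points, k=2)
print([round(s, 2) for s in scores])
```

Points inside the cluster score close to 1, while the isolated point scores far higher, which is the signal an unsupervised detector would flag.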

Methods

The following section describes the methodology used to detect and evaluate misconfigured HOV sensors in Caltrans data for District 7. The overall research design was structured as follows:

Model training and selection—The different machine learning models were tested on a small, controlled set of data to determine the most appropriate models. The test data also served as training data for the larger districtwide test.

Districtwide test—Two models were run on the entirety of Caltrans District 7 (Los Angeles and Ventura Counties). This was done at two timescales:

a. Single date-range—One week of traffic data was analyzed and manually evaluated to confirm the results.

b. Multidate range—A week of traffic data was analyzed for 13 quarters from 2018 to 2021 to determine detection variability and frequency.

Erroneous degradation magnitude—The single date-range results were further evaluated to determine the magnitude of erroneous degradation and its impact on annual performance reporting.

The remainder of this section is organized as follows: first is a description of model selection and training, how the traffic sensor data are structured, and the useful features extracted for analysis. Next is a description of the study region and data used in this research, followed by the training results and model selection. Finally, there is an explanation of how the detection results were manually evaluated and how the degradation impact was calculated.

Study Region and Data

All machine learning methods were tested using data from Caltrans District 7, which includes Los Angeles and Ventura Counties. Most traffic sensors in District 7 are 6-ft induction loops embedded in the pavement. Typically, the sensors are single loops, which cannot measure speed directly; every 30 s they physically measure only the vehicle count and the occupancy (i.e., the percentage of time the sensor is occupied by a vehicle). For single-loop sensors, speeds are calculated by the PeMS database using a g-factor algorithm that converts occupancy to density based on a calibrated vehicle length, called the g-factor ( 47 , 48 ). Under normal operation, the speed estimate is expected to be accurate to within 4 mph, and it is typically accurate to within 2 mph ( 49 ). The region contains approximately 870 HOV sensors, but the number varies as sensors are added, removed, and modified over time. Two types of data are used:

5-min traffic counts—Used in the machine learning models to identify potentially misconfigured sensors.

Hourly traffic counts—Used to calculate the degradation status of HOV facilities. Some 180-days’ worth of aggregated hourly counts were used in this calculation.

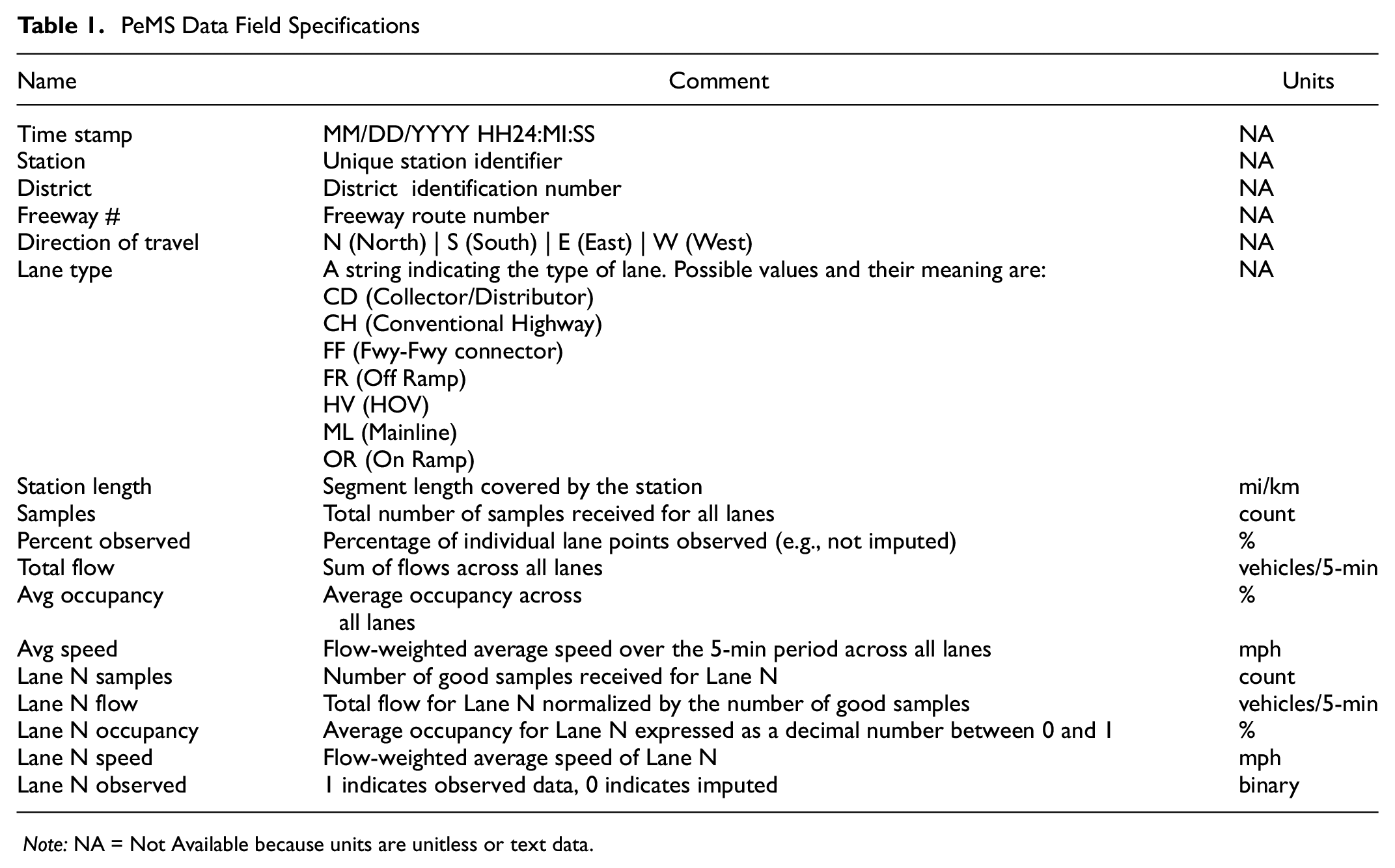

Both the 5-min and hourly data share the field specifications shown in Table 1, the only difference being the time aggregation. Depending on the analysis date, each daily 5-min traffic count data file for District 7 contained more than 4,800 sensors and approximately 1.4 million rows of data. Thus, a weeklong data analysis required approximately 9.8 million rows. However, these data were reduced by about half when only analyzing roadways with HOV sensors and were then further reduced substantially through feature extraction and reduction, described in subsequent sections.

Table 1. PeMS Data Field Specifications

Note: NA = not available because the field is unitless or contains text data.

Although the 16-mi study corridor along the I-210 in Caltrans District 7 had been extensively verified and validated for sensor misconfigurations, not all sensors were currently operational or reliably reporting traffic data. This may have been caused by road maintenance, lane closure, or malfunction, for example. Thus, data were prefiltered by keeping only sensors that met the following criteria:

≥5 total samples per lane,

≥50% “observable” data, and

≥50% of the time stamps reporting flow.

The analysis was then conducted on the remaining sensor data.
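The prefilter above can be sketched as a simple predicate applied per sensor. This is a hedged illustration: the record keys `samples`, `pct_observed`, and `flow` are hypothetical names, and the reading of each criterion (samples summed over the period, percentage observed averaged over the period, flow share taken over time stamps) is an assumption rather than the study's documented procedure.

```python
def keep_sensor(records, min_samples=5, min_observed=50.0, min_flow_share=0.5):
    """Return True if a sensor's records pass all three prefilter criteria.

    `records` is a list of dicts, one per time stamp, with hypothetical keys:
    "samples" (int), "pct_observed" (0-100), and "flow" (number or None).
    """
    if not records:
        return False
    total_samples = sum(r["samples"] for r in records)
    mean_observed = sum(r["pct_observed"] for r in records) / len(records)
    flow_share = sum(1 for r in records if r["flow"] is not None) / len(records)
    return (total_samples >= min_samples
            and mean_observed >= min_observed
            and flow_share >= min_flow_share)

# Hypothetical sensors: one healthy, one mostly imputed with missing flow.
healthy = [{"samples": 10, "pct_observed": 100.0, "flow": 42}] * 12
faulty = ([{"samples": 0, "pct_observed": 0.0, "flow": None}] * 9
          + [{"samples": 10, "pct_observed": 100.0, "flow": 42}] * 3)

print(keep_sensor(healthy), keep_sensor(faulty))
```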

Model Selection and Training

To select the most appropriate machine learning methods for this task, the eight methods identified in the literature were compared using a sample of misconfiguration-free traffic data into which known misconfigurations were randomly introduced. Seven consecutive days of traffic data from December 6 to 12, 2020, were used. The models were then trained (if applicable) and tested on the data. Accuracy was calculated as the total number of correct predictions (true positives plus true negatives) divided by the total number of predictions. Using data with no unknown misconfigurations is critical; otherwise, it would be impossible to determine the true positives and true negatives.
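The source does not specify how the artificial misconfigurations were generated. One plausible way, shown here purely as an assumption, is to swap the HOV label with a randomly chosen mainline label within randomly chosen sensor groups, keeping a record of which groups were altered so that true positives and true negatives are known. The function name, lane labels, and sensor IDs are hypothetical.

```python
import random

def inject_label_swaps(groups, n_swaps, seed=0):
    """Swap the HOV label with a random mainline label in `n_swaps` groups.

    `groups` maps a postmile key to {lane label: sensor ID}.
    Returns (modified copy of groups, set of altered postmile keys).
    """
    rng = random.Random(seed)  # seeded so the experiment is repeatable
    candidates = [pm for pm, lanes in groups.items()
                  if "HOV" in lanes and len(lanes) > 1]
    chosen = rng.sample(candidates, n_swaps)
    swapped = {pm: dict(lanes) for pm, lanes in groups.items()}
    for pm in chosen:
        lanes = swapped[pm]
        other = rng.choice([label for label in lanes if label != "HOV"])
        lanes["HOV"], lanes[other] = lanes[other], lanes["HOV"]
    return swapped, set(chosen)

# Hypothetical verified sensor groups keyed by postmile.
groups = {
    "10.2": {"HOV": 101, "ML1": 102, "ML2": 103},
    "11.0": {"HOV": 201, "ML1": 202},
    "11.9": {"HOV": 301, "ML1": 302, "ML2": 303},
    "12.5": {"HOV": 401, "ML1": 402},
}
swapped, altered = inject_label_swaps(groups, n_swaps=2, seed=1)
print(sorted(altered))
```

The set of altered groups then serves as the ground truth labels against which each model's predictions are scored.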

The test data were from the aforementioned 16-mi corridor along the I-210 freeway in Caltrans District 7, located in the Pasadena, CA, area. This location was selected because the I-210 corridor is part of a large-scale simulation pilot project and had been extensively verified for misconfigurations before this study. Although it is possible that real unknown misconfigurations may persist in the data, there were likely to be few if any at all. This served as the best-case data set in which to introduce artificial misconfigurations for model training and testing in a relatively controlled setting while still using real traffic data.

Data Analysis Structure

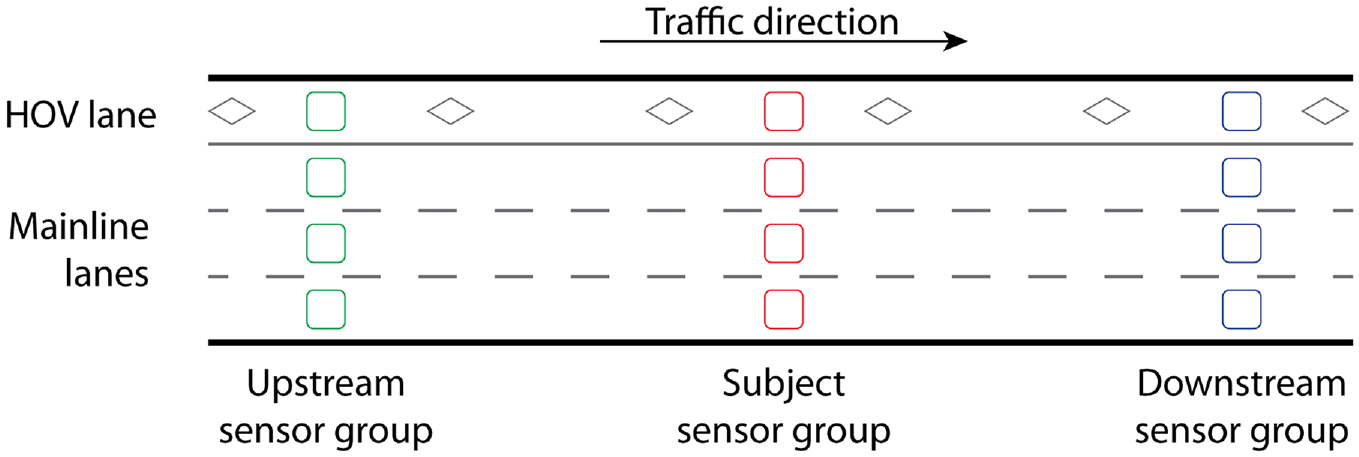

To conduct machine learning on traffic data, it is necessary to reduce the data and extract critical features for the machine learning methods to analyze and use to identify the erroneous labels. The data were first organized into a structure that related the sensor positions to each other in a group, and to nearby groups, in a way that the algorithm could analyze each HOV sensor systematically, shown in Figure 1.

Figure 1. Sensor organizational structure.

A sensor group is composed of sensors that share the same longitudinal position (postmile) along the roadway but have different lateral lane positions (e.g., mainline or HOV lanes). These sensor groups are then further organized into a structure of groups linearly relative to each other as being either upstream or downstream. Upstream sensors precede the subject sensor group relative to traffic flow (i.e., vehicles cross upstream sensors before the subject sensor), and downstream sensors succeed the subject sensor group. In this organizational structure, data from sensors can either be compared laterally between sensors in the subject group (at the same postmile), or longitudinally across upstream and downstream sensors within the same lane.

The machine learning algorithm analyzed each HOV sensor as the subject, comparing its features to the other sensors within the group and to the nearest upstream and downstream sensors. After each HOV analysis, the algorithm moved to the next sensor and repeated the analysis for all HOV sensors in the data set.
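The organizational structure described above can be sketched as a small data structure plus a traversal. This is an illustrative sketch only: the class name, lane labels, and sensor IDs are hypothetical, and it assumes postmile increases in the direction of travel, which would need to be reversed for the opposite direction.

```python
from dataclasses import dataclass

@dataclass
class SensorGroup:
    postmile: float  # longitudinal position along the roadway
    lanes: dict      # lane label (e.g. "HOV", "ML1") -> sensor ID

def hov_comparisons(groups):
    """For each HOV sensor, list its lateral, upstream, and downstream sensors."""
    # Only groups containing an HOV sensor take part in longitudinal comparison.
    hov_groups = sorted((g for g in groups if "HOV" in g.lanes),
                        key=lambda g: g.postmile)
    result = []
    for i, group in enumerate(hov_groups):
        result.append({
            "subject": group.lanes["HOV"],
            "lateral": [sid for label, sid in group.lanes.items() if label != "HOV"],
            "upstream": hov_groups[i - 1].lanes["HOV"] if i > 0 else None,
            "downstream": (hov_groups[i + 1].lanes["HOV"]
                           if i + 1 < len(hov_groups) else None),
        })
    return result

# Hypothetical corridor with three sensor groups.
groups = [
    SensorGroup(10.2, {"HOV": 101, "ML1": 102, "ML2": 103}),
    SensorGroup(11.0, {"HOV": 201, "ML1": 202}),
    SensorGroup(11.9, {"HOV": 301, "ML1": 302, "ML2": 303}),
]
comparisons = hov_comparisons(groups)
print(comparisons[1])
```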

Feature Extraction

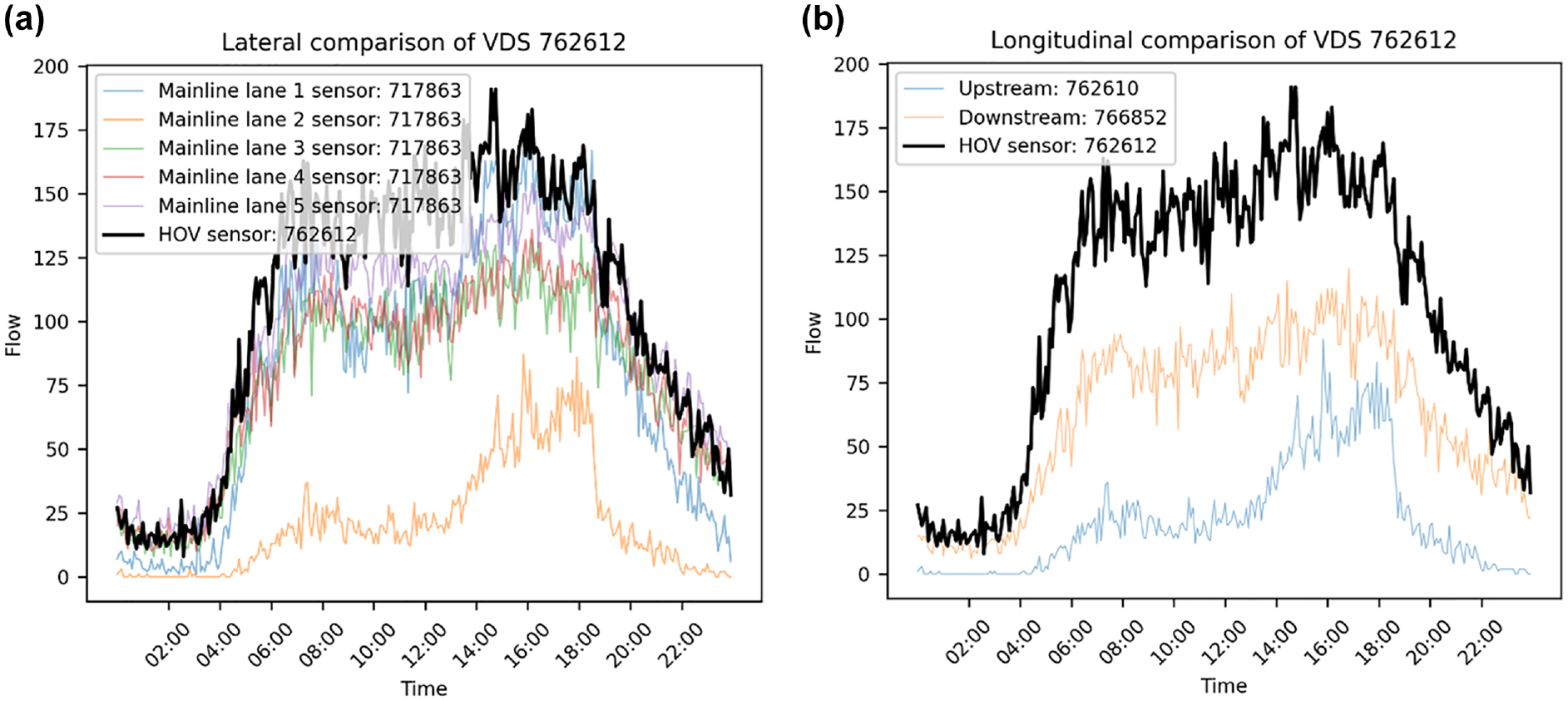

Inductive loop traffic sensors collect two fundamental measurements: flow and occupancy. Flow is a count per unit of time, such as vehicles per hour. Occupancy in this setting is a very different measure from passenger occupancy: it is the proportion of time that the sensor is active, in other words, the amount of time that a vehicle “occupies” the sensor. Although flow and occupancy can serve as useful features to further discriminate for erroneous sensors, a key feature identified by the researchers for indicating whether a HOV lane is misconfigured is the average nighttime traffic flow. It was observed that nighttime traffic flow in HOV lanes tends to be at or near zero. This can be intuitively explained by there being little reason to utilize a HOV lane at night, when traffic congestion is at its lowest.

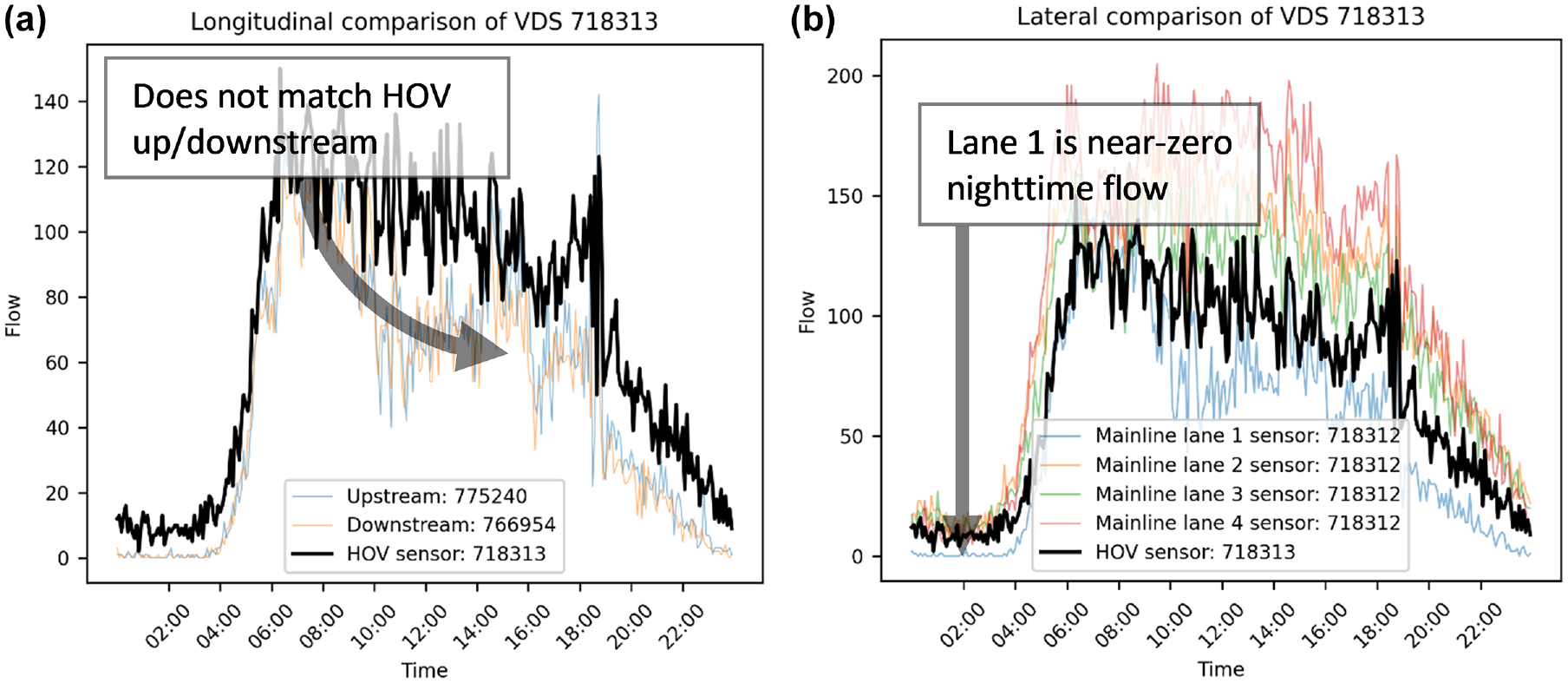

Figure 2 shows a misconfigured HOV lane in dark black where the upstream and downstream HOV lane flows in orange are near zero at night and clearly do not match the subject sensor in either case. In this case, it is likely that this sensor was on a mainline lane and was mislabeled as a HOV lane.

Figure 2. Example comparisons of high occupancy vehicle (HOV) lane sensor versus (a) lateral mainline lanes and (b) longitudinal upstream/downstream HOV sensors located in I-605 Northbound near the intersection of Alondra Boulevard in the city of Cerritos, CA.

This obvious mismatch in nighttime flows makes it a useful feature for identifying erroneous HOV sensor labels. Moreover, calculating the average nighttime flow also provides a single data point per sensor for each 24-h period, essentially reducing the 288 rows per sensor of 5-min count data in each 24-h period to a single row. This is called feature reduction and is useful in machine learning as it not only extracts useful features as in this case, but can substantially reduce the quantity of data, allowing the algorithm to be computationally more efficient.
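The reduction from 288 rows to a single value can be sketched as follows, using the 1:00 to 3:00 a.m. window that the study applies to this feature. The function name, the `(timestamp, flow)` record layout, and the sample flows are hypothetical.

```python
from datetime import datetime, timedelta

def average_night_flow(records, start_hour=1, end_hour=3):
    """Mean flow over [start_hour, end_hour) for one sensor and one day.

    `records` is an iterable of (timestamp, flow) 5-min counts; missing
    flows (None) are skipped. Returns None if no night data are available.
    """
    night = [flow for ts, flow in records
             if start_hour <= ts.hour < end_hour and flow is not None]
    return sum(night) / len(night) if night else None

# Hypothetical day of 5-min counts (288 rows per sensor).
day = datetime(2020, 12, 7)
stamps = [day + timedelta(minutes=5 * i) for i in range(288)]
hov = [(ts, 0 if ts.hour < 5 else 40) for ts in stamps]
mainline = [(ts, 12 if ts.hour < 5 else 90) for ts in stamps]

print(average_night_flow(hov), average_night_flow(mainline))
```

A sensor labeled HOV whose value resembles the mainline result rather than the HOV result would be a candidate for closer inspection.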

Feature Reduction

Although the erroneous HOV sensor label in Figure 2 is easily identifiable to the naked eye, computers require a more analytical and structured statistical comparison to make these determinations. This was achieved by preprocessing the data to reduce and extract flow and occupancy into the following two features:

Average nighttime traffic flow between the hours of 1:00 and 3:00 a.m., and

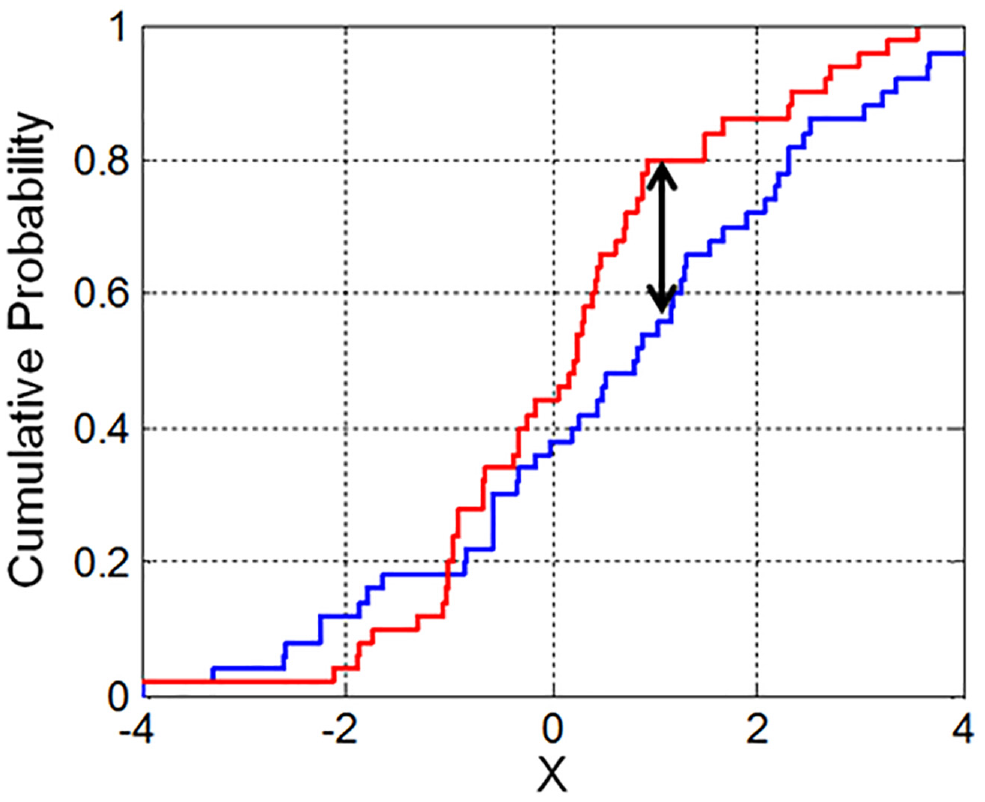

A two-sample Kolmogorov–Smirnov test (K-S test) to indicate the probability that the flow and occupancy profiles between sensors were statistically equal.

A K-S test is conducted by first calculating the empirical cumulative distribution for each of the respective traffic flow time series data. This both normalizes the data and helps smooth out messy time series into a monotonic function. The K-S statistic is then determined as the largest absolute difference between the two distribution functions, as shown in the example in Figure 3. This K-S statistic can then be used for hypothesis testing by checking whether the K-S statistic exceeds the critical value for the desired confidence level (e.g., 95%).
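The calculation described above can be sketched in plain Python. The statistic is the largest gap between the two empirical cumulative distributions, and the critical value uses the standard large-sample approximation for the two-sample test. The function names and sample data are hypothetical, and this is not necessarily how the study computed the test.

```python
import math

def ks_statistic(sample_a, sample_b):
    """Largest absolute difference between the two empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    n, m = len(a), len(b)
    i = j = 0
    d = 0.0
    while i < n and j < m:
        x = min(a[i], b[j])
        # Advance both samples past x, then compare the ECDFs at x.
        while i < n and a[i] <= x:
            i += 1
        while j < m and b[j] <= x:
            j += 1
        d = max(d, abs(i / n - j / m))
    return d

def ks_critical_value(n, m, alpha=0.05):
    """Asymptotic two-sample critical value for significance level alpha."""
    c = math.sqrt(-0.5 * math.log(alpha / 2))
    return c * math.sqrt((n + m) / (n * m))

# Hypothetical flow samples from two sensors.
flows_a = [1, 2, 3, 4]
flows_b = [3, 4, 5, 6]
print(ks_statistic(flows_a, flows_b))
print(round(ks_critical_value(288, 288), 4))
```

If the statistic exceeds the critical value, the hypothesis that the two profiles come from the same distribution is rejected at the chosen level. The approximation is intended for large samples, such as the 288 five-minute counts in one day.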

Figure 3. Example K-S empirical cumulative probability distribution function.

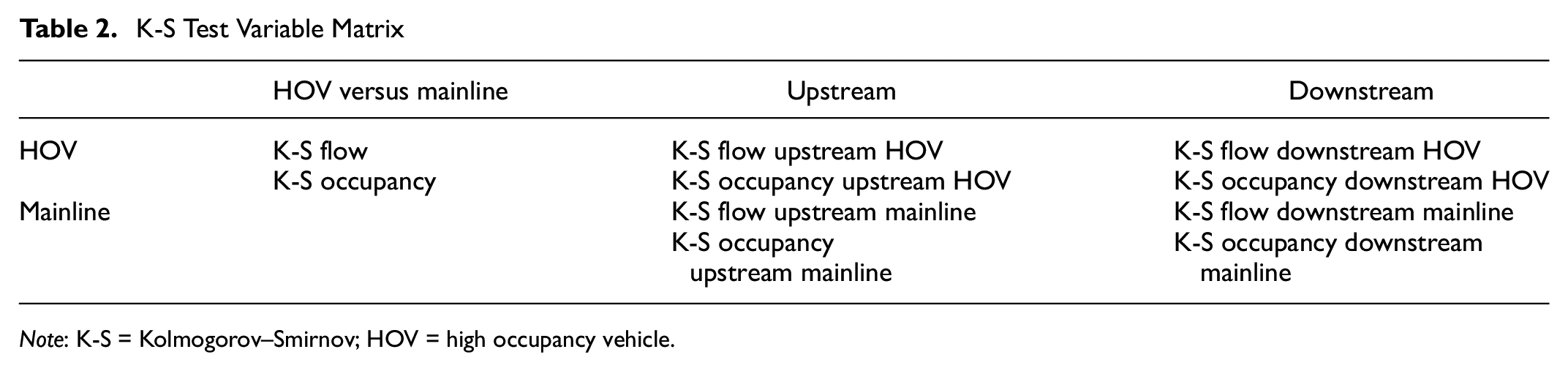

The K-S test was conducted between HOV and mainline lane sensors within a group, and between the subject sensor and the upstream and downstream sensors in the same lane. This was conceptually organized into a variable matrix as shown in Table 2. The result of each test combination is a p-value: the probability of observing a difference at least as large as the measured K-S statistic if the two distributions (i.e., flow or occupancy profiles) were in fact the same. A small p-value therefore indicates that the two profiles are significantly different.

Table 2. K-S Test Variable Matrix

The extracted feature data set was a substantially reduced table in which, instead of multiple rows of data per sensor, there was one row per unique HOV sensor with associated values in columns. These extracted features were then used in the various machine learning methods to predict the binary outcome of whether each HOV sensor was correctly labeled or not.

Model Selection

To determine which technique was most appropriate for this task, the eight methods were compared by testing a sample of the I-210 data with known misconfigurations randomly introduced. The models were then evaluated by comparing their prediction performance. The prediction performance was calculated using several different measures.

The overall accuracy was calculated as the total correct predictions, true positives plus true negatives, divided by the total number of predictions. However, overall accuracy does not measure how many misconfigurations the model missed, or how many were false positives. These other binary prediction performance measures are “precision” and “recall.” Recall, sometimes called sensitivity, is the measure of how many targets were hit out of the total number of targets.

Recall performance does not measure false positives and is useful in applications for which it is more important to hit as many targets as possible, regardless of false positives. Precision, in contrast, is the measure of how many of the predicted positives were true and is useful in applications for which false positives are highly detrimental. An alternative measure of overall accuracy is the F-score, which is essentially the harmonic mean of precision and recall.
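The four measures can be computed directly from the confusion-matrix counts, as in the short sketch below. The counts in the example are illustrative only and are not results from this study.

```python
def binary_metrics(tp, fp, tn, fn):
    """Accuracy, precision, recall, and F1-score from confusion-matrix counts."""
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}

# Illustrative counts: 3 misconfigurations found, 1 missed, 1 false alarm.
metrics = binary_metrics(tp=3, fp=1, tn=28, fn=1)
print({name: round(value, 3) for name, value in metrics.items()})
```

In this example the overall accuracy is about 94% even though one in four misconfigurations was missed, which illustrates why accuracy alone can be misleading when misconfigured sensors are rare.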

To provide a sample of both weekday and weekend traffic patterns, seven consecutive days over December 6 to 12, 2020, of traffic data were used for machine learning analysis. To explore day to day variability and the impact of data quantity, the data were analyzed in two ways:

Separate daily traffic data tests—The machine learning algorithms were run for a single day of traffic data, repeating the run for each of the 7 days independently.

Contiguous daily traffic data tests—The machine learning algorithms were run using all seven consecutive days of traffic data as one input data set.

The separate daily tests were intended to reveal the stability of the results, that is, the extent to which results can vary when a different day of data is used. The contiguous daily test was intended to determine whether providing more data to the algorithm would affect the results. Based on these results, a final selection of one supervised and one unsupervised method was utilized for the full districtwide test.

Manual Evaluation of Results

The machine learning prediction provided a binary outcome for each sensor as being either misconfigured or not. Without manual evaluation, it cannot be confirmed whether it is a true misconfiguration or a false positive. Moreover, the prediction results did not specify what the misconfiguration issue was (e.g., Lane 1 swapped with HOV lane). To validate the prediction results, each of the predicted misconfigurations was manually evaluated to confirm the machine learning results and diagnose the problem. Because of practical resource limitations, only the sensors flagged for misconfiguration were manually evaluated among the entire set of approximately 870 sensors in District 7.

On inspection of the prediction results, not all misconfigurations could be definitively confirmed as a discrete true or false. For example, some sensors appeared misconfigured but the sensor they had been swapped with could not be determined. In some cases, the sensor label was valid and was not swapped but the actual flow and occupancy data appeared corrupted (e.g., a temporary sensor malfunction). To provide some qualitative description for these cases, each sensor flagged by the machine learning algorithm was given one of the following categories based on manual evaluation:

Misconfigured—Lane label swapped, but data appear OK (definite true positive);

Indeterminate—Lane label is likely erroneous, but requires further review (likely true positive);

Corrupted—Data have errors, label may be OK (likely false positive); and

OK—Data and label appear to be fine (definite false positive).

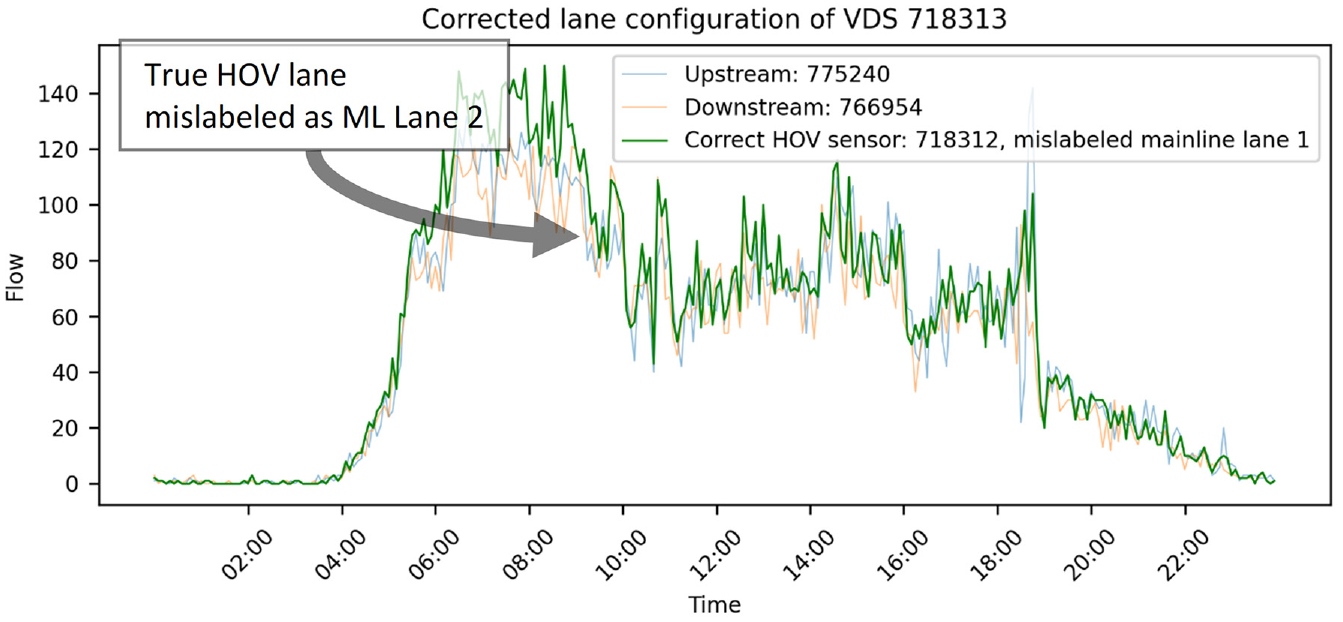

These categories were determined based on a preliminary inspection of the prediction results. It should be emphasized that all sensors diagnosed as “indeterminate” were likely to be misconfigured, but the correct sensor ID could not be definitively identified. Further analysis or physical inspection of the device in the field would be necessary to be 100% conclusive. However, for the sensors diagnosed as “misconfigured” it was possible to select the correct HOV lane just by looking at the data in the adjacent lanes. This is a common situation, when the labels for HOV and mainline sensor data have obviously been swapped. Figure 4 shows one such case in which the HOV sensor label was swapped with what should have been the Lane 1 sensor label.

Figure 4. Example visual diagnosis of misconfigured high occupancy vehicle (HOV) Sensor #718313 located on I-405 Southbound near the intersection of North Sepulveda Boulevard in Los Angeles, CA: (a) longitudinal comparison showing mislabeled HOV sensor (bold black line) not matching upstream (blue line) and downstream (orange line) sensors, and (b) lateral comparison showing mislabeled HOV sensor (dark black line) registering a higher nighttime flow than the mainline Lane 1 sensor (light blue).

The first indication of a problem was in the longitudinal comparison shown in Figure 4a, in which the HOV sensor did not match the flow from either the upstream or downstream sensors. The problem can be diagnosed from Figure 4b, in which the HOV lane flow is not near zero at night, but the Lane 1 flow is, which is highly irregular. Swapping the labels of the HOV and Lane 1 sensors, as shown in Figure 5, confirmed that the newly relabeled HOV lane matched the upstream and downstream sensors. Not all flagged misconfigurations were as easily diagnosable as in the above example, but where possible a suggested diagnosis was given.

Figure 5. Flow profiles for true high occupancy vehicle (HOV) lane (dark green line) closely matching upstream (light blue line) and downstream (light orange line) HOV lanes located on I-405 Southbound near the intersection of North Sepulveda Boulevard in Los Angeles, CA.

Degradation

A degraded facility, as defined by 23 U.S.C. 166(d)(2), is one that does not meet the minimum average operating speed of 45 miles per hour (MPH) for 90 percent of the time over a 180-day monitoring period during morning and evening weekday peak hours (or both), in the case of a HOV facility with a speed limit of 50 MPH or greater; or not more than 10 MPH below the speed limit in the case of a facility with a speed limit of less than 50 MPH.

Caltrans further expands the federal definition of degraded to include four categories of degradation depending on the percentage of time that speeds fall below the threshold: (1) Not degraded (≤10%), (2) Slightly degraded (10% to 49%), (3) Very degraded (50% to 74%), and (4) Extremely degraded (≥75%).

Once potential misconfigured sensors were identified by the machine learning algorithm and verified through manual evaluation, the level of degradation was calculated for each sensor for the weekday peak periods of Monday to Friday, 6:00 to 9:00 a.m. and 3:00 to 6:00 p.m.
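A per-sensor degradation rating along these lines can be sketched as below. This is an assumption-laden illustration: it measures the share of weekday peak hours with speed below 45 mph on an hourly basis, treats values between 49% and 50% as slightly degraded, and uses hypothetical function names and sample data. The official Caltrans calculation may differ in its details.

```python
from datetime import datetime, timedelta

PEAK_HOURS = {6, 7, 8, 15, 16, 17}  # 6:00-9:00 a.m. and 3:00-6:00 p.m.

def degradation_category(hourly_speeds, threshold_mph=45.0):
    """Return (percent of weekday peak hours below threshold, category).

    `hourly_speeds` is an iterable of (timestamp, speed in mph).
    """
    peak = [speed for ts, speed in hourly_speeds
            if ts.weekday() < 5 and ts.hour in PEAK_HOURS and speed is not None]
    if not peak:
        return None, None
    pct_below = 100.0 * sum(1 for s in peak if s < threshold_mph) / len(peak)
    if pct_below <= 10:
        category = "Not degraded"
    elif pct_below < 50:
        category = "Slightly degraded"
    elif pct_below < 75:
        category = "Very degraded"
    else:
        category = "Extremely degraded"
    return pct_below, category

# Hypothetical week of hourly speeds: slow every morning peak, free flow otherwise.
start = datetime(2021, 3, 1)
stamps = [start + timedelta(hours=h) for h in range(7 * 24)]
week = [(ts, 40.0 if ts.hour in (6, 7, 8) else 60.0) for ts in stamps]

pct, category = degradation_category(week)
print(pct, category)
```

In practice the same function would be applied to the roughly 180 days of hourly data described earlier rather than to a single week.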

Results

This section begins by describing the performance and selection of machine learning models for districtwide analysis. It continues with a detailed diagnosis of the misconfigurations flagged in Caltrans District 7. The section concludes by detailing the impact of the misconfigurations on degradation performance.

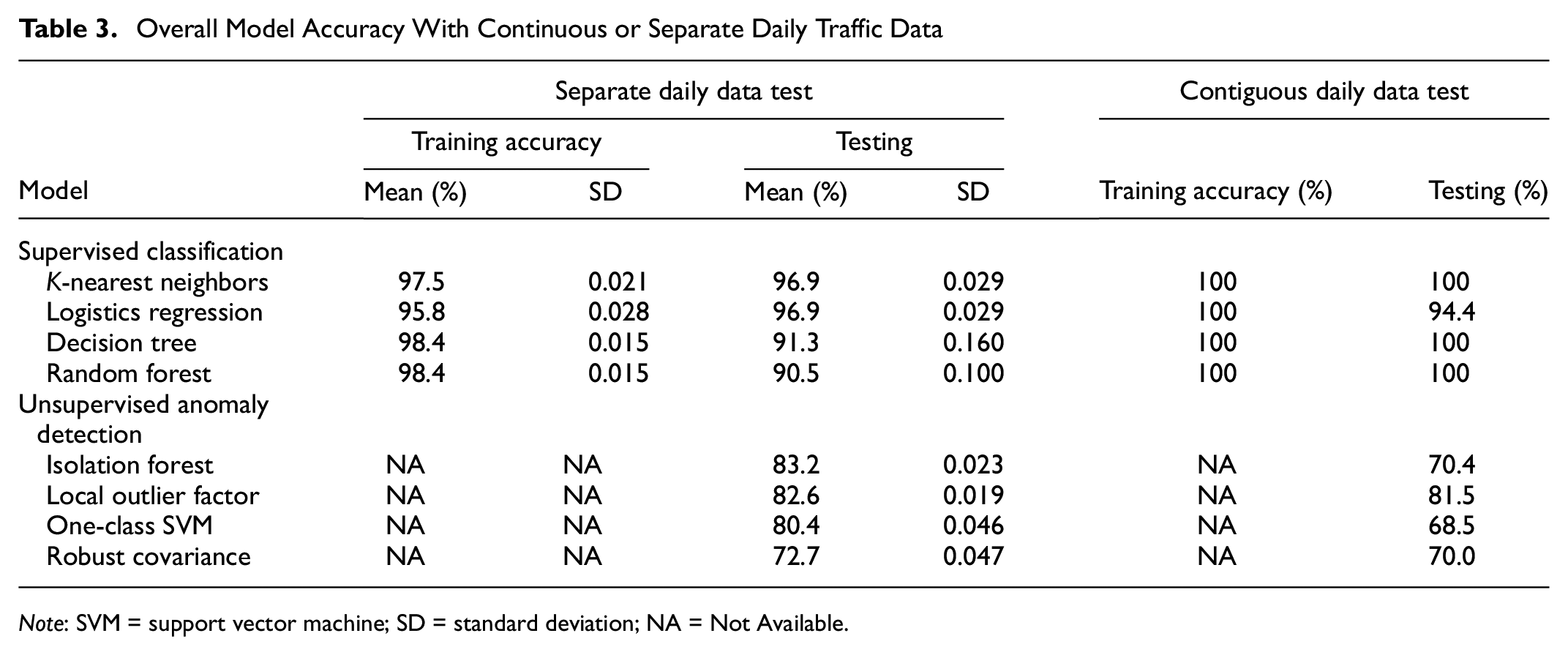

Model Selection and Training

The overall accuracy of each machine learning method using the training data is presented in Table 3. The values in this table present results from both testing paradigms for separate daily and contiguous weeklong tests. The separate daily tests represent the overall aggregated accuracy calculated as the mean and standard deviation of the seven test days. Note that the unsupervised models do not require a training data set and thus do not have an accuracy result in this case.

Table 3. Overall Model Accuracy With Contiguous or Separate Daily Traffic Data

Note: SVM = support vector machine; SD = standard deviation; NA = Not Available.

When the analysis period was extended, the results stabilized very quickly after only a week of data, and accuracy did not improve further with either separate or contiguous daily data. This makes intuitive sense, given that most traffic sensor disturbances (e.g., incidents or debris) are brief and would have dissipated by the next day. Longer-term disturbances (e.g., construction) would be likely to result in a sensor blackout. The data set should also be small to keep the computational time and resource requirements within practical limits. Although it is technically feasible to include additional data in an analysis, the computational resources required to preprocess each 24-h period of 5-min traffic counts would have caused the model to quickly become encumbered with little performance gain. However, a notable result was that the unsupervised methods all performed slightly better using separate daily data compared with their contiguous daily data results. The reason for this is uncertain, but because providing more data yielded worse results, a smaller data set may allow unsupervised methods to more easily detect statistical anomalies that could otherwise be missed among the additional “noise” of larger data sets.

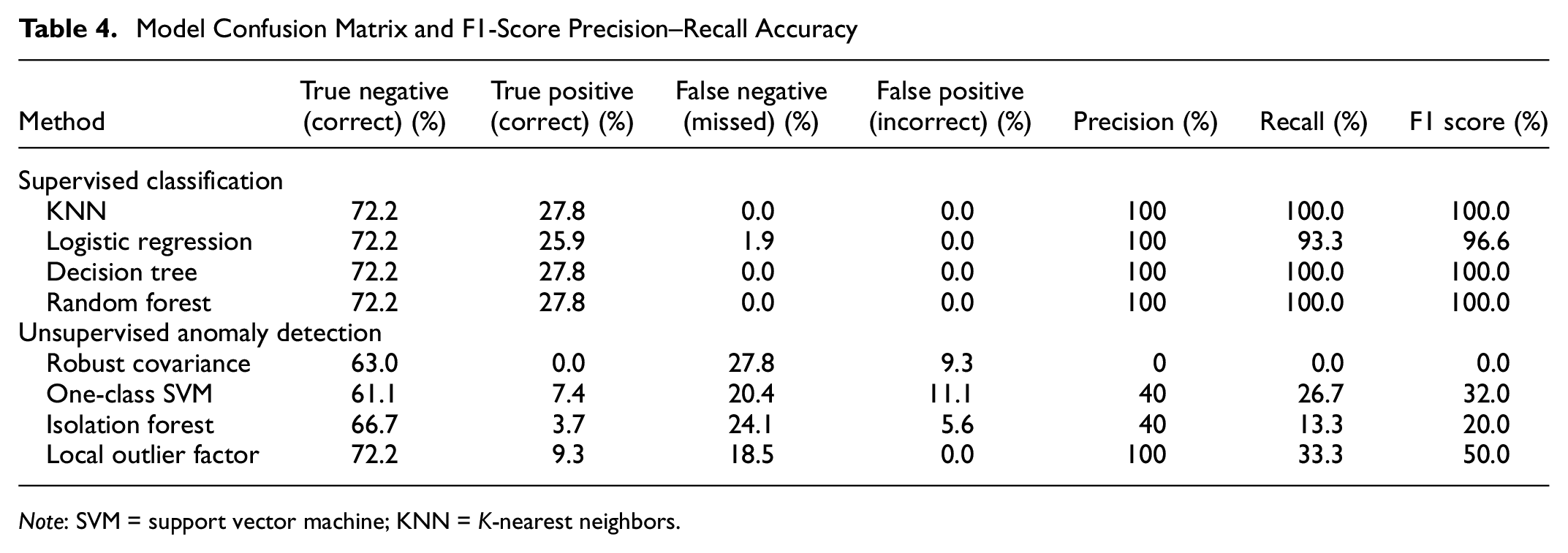

In addition to overall accuracy, the models’ confusion matrices and resulting precision, recall, and F1-scores are shown in Table 4. For supervised learning, most methods achieved very high F1-scores of 100%, except for logistic regression, which yielded 96.6%. The unsupervised methods yielded much lower scores of 0.0%, 32.0%, 20.0%, and 50.0% for SVM, robust covariance, isolation forest, and LOF, respectively. This disparity was not entirely unexpected because the availability of a priori training data enabled the supervised models to be calibrated to a specific target. In contrast, the unsupervised methods merely scanned for statistical outliers. Since traffic data are inconsistent and prone to irregularities on their own, it may be difficult for unsupervised methods to properly identify misconfigurations. However, training data risk being locally biased (i.e., overfitted) and may offer diminished accuracy when applied to regions with substantially different traffic data. Moreover, training data may not be available in all Caltrans districts, making the flexibility of unsupervised methods an attractive feature.
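The precision, recall, and F1-scores discussed here follow from confusion-matrix counts by the standard definitions. The following minimal Python sketch shows the calculation, treating "misconfigured" as the positive class. The function name and the example counts are hypothetical and are not values taken from Table 4.

```python
def classification_scores(tp, fp, fn):
    """Return (precision, recall, f1) from confusion-matrix counts.

    tp: misconfigured sensors that were correctly flagged
    fp: correctly configured sensors that were flagged by mistake
    fn: misconfigured sensors that were missed
    """
    # Guard against empty denominators (e.g., a method that flags nothing).
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical counts, for illustration only.
precision, recall, f1 = classification_scores(tp=8, fp=2, fn=2)
print(f"precision={precision:.1%} recall={recall:.1%} F1={f1:.1%}")
```

Under these definitions, an F1-score of 0.0% results whenever a method produces no true positives, regardless of how many sensors it flags.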

Model Confusion Matrix and F1-Score Precision–Recall Accuracy

Note: SVM = support vector machine; KNN = K-nearest neighbors.

Ultimately, the two methods selected for further use in the overall research were LOF as the unsupervised method and random forest as the supervised method. Whereas LOF clearly outperformed the other unsupervised methods, most of the supervised methods were tied in accuracy. The decision to use random forest over the other methods was therefore based on ease of use and its dissimilarity to LOF. Random forest is a mature, easy to use, and well-established machine learning method. It is essentially an ensemble of multiple decision trees and is often more robust than a single decision tree. Furthermore, it is not a distance-based method, whereas LOF is fundamentally based on a concept of local density by KNN. Thus, to use sufficiently diverse, easy to use, and robust methods, random forest and LOF were chosen to be tested on a districtwide scale.
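To illustrate what "local density by KNN" means for LOF, the sketch below implements the textbook local outlier factor in plain Python. It is not the study's implementation: the two-dimensional points are hypothetical stand-ins for whatever per-sensor features were used, ties among neighbors are broken arbitrarily, and duplicate points are not handled.

```python
import math


def local_outlier_factor(points, k):
    """Return the LOF score of each point; scores well above 1 suggest outliers."""
    n = len(points)
    dist = [[math.dist(p, q) for q in points] for p in points]

    neighbors, k_distance = [], []
    for i in range(n):
        # Indices of the other points, nearest first.
        order = sorted((j for j in range(n) if j != i), key=lambda j: dist[i][j])
        neighbors.append(order[:k])
        k_distance.append(dist[i][order[k - 1]])

    # Local reachability density: inverse of the mean reachability distance.
    lrd = []
    for i in range(n):
        reach = [max(k_distance[j], dist[i][j]) for j in neighbors[i]]
        lrd.append(len(reach) / sum(reach))

    # LOF: mean density of the neighbors relative to the point's own density.
    return [sum(lrd[j] for j in neighbors[i]) / (k * lrd[i]) for i in range(n)]


# Hypothetical feature vectors: five clustered sensors and one far from the rest.
points = [(0, 0), (0, 1), (1, 0), (1, 1), (0.5, 0.5), (5, 5)]
scores = local_outlier_factor(points, k=3)
for point, score in zip(points, scores):
    print(point, round(score, 2))
```

The isolated point receives a score several times larger than the clustered points, whose scores stay close to 1. A random forest, by contrast, splits on feature thresholds rather than distances, which is the dissimilarity referred to above.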

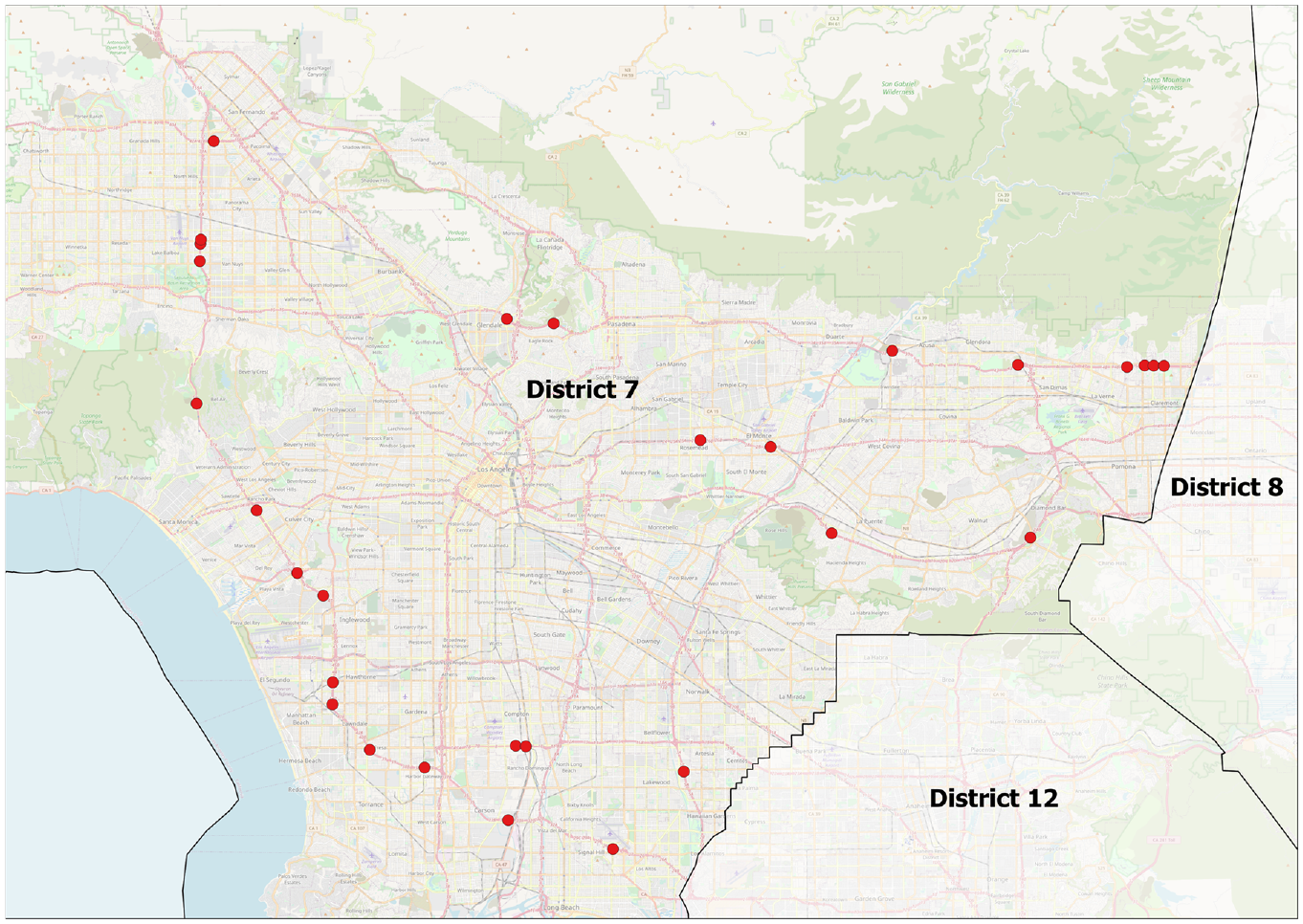

Manual Analysis of Single Date-Range of Flagged Misconfigured Sensors

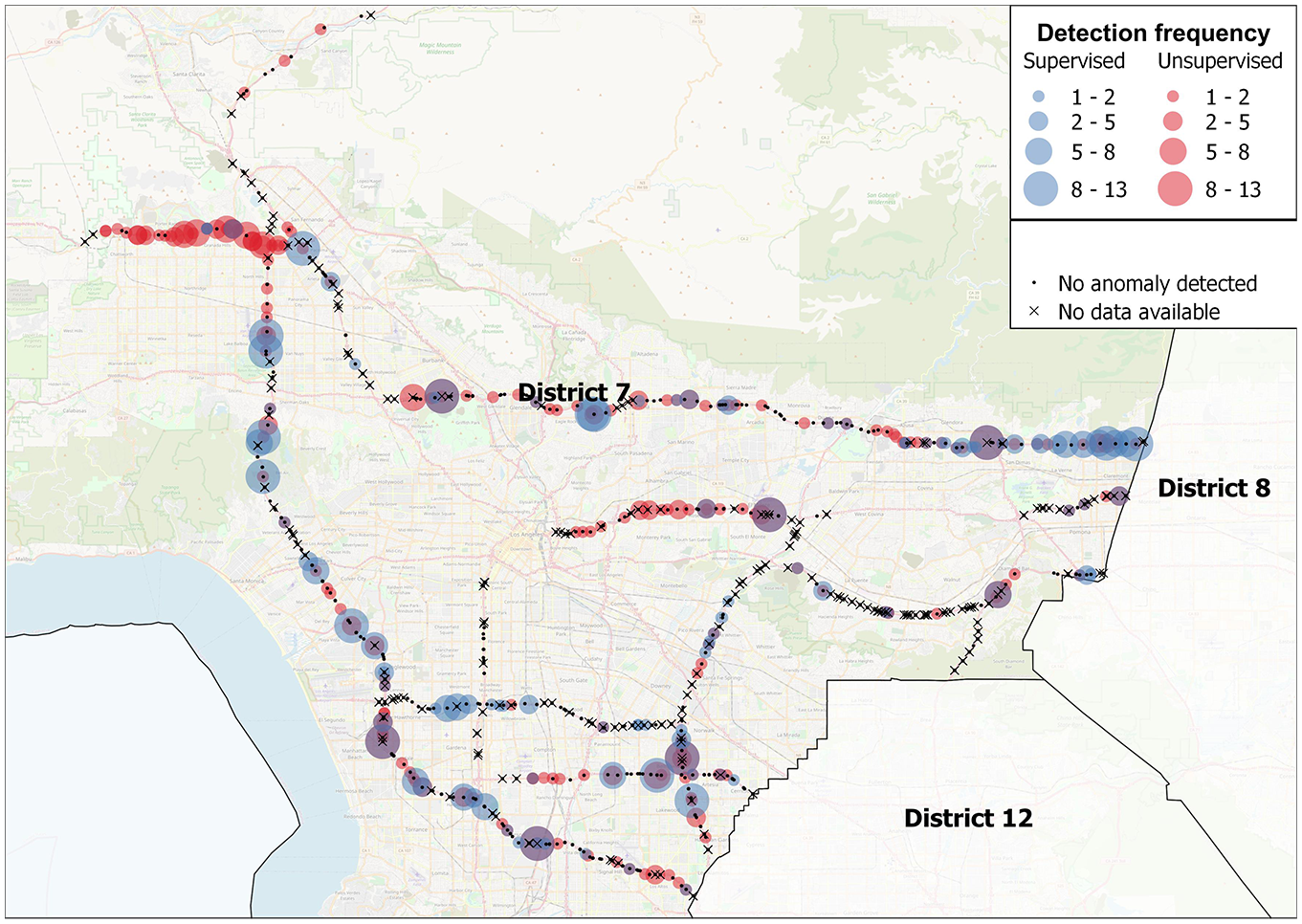

For the manual analysis of a single date-range, 7 days (December 6 to 12, 2020) were used as the traffic input data. Figure 6 shows the approximate location of each sensor flagged by each of the machine learning methods. Of the 314 viable sensors analyzed, a total of 29 (9.2%) sensors were flagged as potentially misconfigured by either supervised or unsupervised learning methods; 17 (5.4%) of those 29 were flagged by both supervised and unsupervised methods, and 12 (3.8%) were flagged by only the unsupervised method. It should be noted that the detections are not mutually exclusive, and an individual sensor can be detected by either or both methods. The outcome can be conceptualized as a Venn diagram, with two overlapping circles representing sensors flagged by either supervised or unsupervised methods and the overlapping portion representing sensors flagged by both. For this analysis period, all sensors flagged by the supervised method were also detected by the unsupervised method, meaning that the supervised circle lay completely inside the larger unsupervised circle. However, such a result is not guaranteed and is likely to have been a coincidental outcome for the date range analyzed.
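The Venn-diagram relationship can be expressed with ordinary set operations. In the sketch below, the sensor IDs are hypothetical placeholders chosen only so that the set sizes match the counts reported above (17 flagged by both methods, 12 by the unsupervised method only, 314 viable sensors).

```python
# Hypothetical sensor IDs; only the set sizes reflect the reported counts.
supervised = set(range(1, 18))     # 17 sensors flagged by the supervised method
unsupervised = set(range(1, 30))   # 29 sensors flagged by the unsupervised method
viable_sensors = 314

flagged_by_both = supervised & unsupervised
unsupervised_only = unsupervised - supervised
flagged_by_either = supervised | unsupervised

# The supervised detections are a subset of the unsupervised detections.
print("supervised inside unsupervised:", supervised <= unsupervised)

for label, group in [
    ("either", flagged_by_either),
    ("both", flagged_by_both),
    ("unsupervised only", unsupervised_only),
]:
    print(f"{label}: {len(group)} ({len(group) / viable_sensors:.1%})")
```

For a different date range the subset check could return `False`, in which case a fourth group (supervised only) would also need to be reported.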

Map of flagged misconfigurations in District 7.

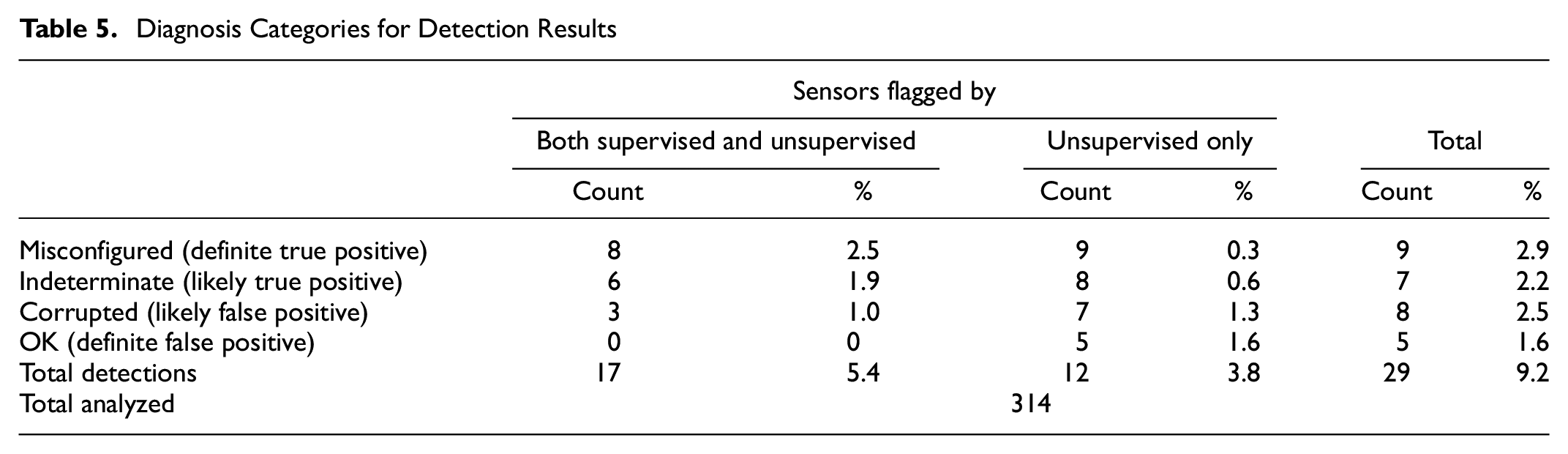

Table 5 provides a breakdown of the manual results, showing by machine learning method how many sensors fell into each diagnosis category (i.e., misconfigured, corrupted, indeterminate, or OK).

Diagnosis Categories for Detection Results

Based on these results, the unsupervised learning method provided a more relaxed detection approach and flagged an additional 12 sensors as being potentially misconfigured beyond those also detected by supervised learning. Of the 12 additional sensors flagged by the unsupervised learning method, five were false positives for which the data appeared “OK.” This showed that the supervised method provided a more conservative result with fewer false positives, but possibly more false negatives (i.e., missed misconfigurations). Unsupervised learning also flagged an additional four sensors with a “corrupted” diagnosis and an additional two with an “indeterminate” diagnosis. Although there does appear to be some amount of trade-off between a more restrictive supervised method and a more inclusive unsupervised method, both machine learning methods performed well. Moreover, the unsupervised method’s relatively good performance would make it useful for locations without reliable training data.

Overall, 9% of the 314 sensors were flagged as potentially misconfigured, including 4% of the 314 that were flagged by only the unsupervised method. This means that the machine learning algorithms successfully cleared the bulk (91% to 95%) of the sensors, reducing the total burden to a handful for which more in-depth manual evaluation was feasible. Of the 9% potential misconfigurations, between 30% and 60% were diagnosed as true misconfigurations, depending on whether the probable misconfigurations (i.e., indeterminate) were included. Based on these results, the overall rate of misconfiguration in Caltrans District 7 was approximately 3% to 5%.
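The percentages in this paragraph can be reproduced from the counts reported elsewhere in the paper: 29 flagged sensors, of which 9 were confirmed as misconfigured and 8 were indeterminate but probable, out of 314 viable sensors. The variable names in this short Python check are illustrative.

```python
viable = 314        # sensors with sufficient data
flagged = 29        # flagged by either method
confirmed = 9       # diagnosed as misconfigured
probable = 8        # diagnosed as indeterminate (probably misconfigured)

# Share of flagged sensors that were true misconfigurations.
share_low = confirmed / flagged
share_high = (confirmed + probable) / flagged

# Overall misconfiguration rate among the viable sensors.
rate_low = confirmed / viable
rate_high = (confirmed + probable) / viable

print(f"share of flagged sensors: {share_low:.0%} to {share_high:.0%}")
print(f"overall rate: {rate_low:.1%} to {rate_high:.1%}")
```

The lower bound counts only the confirmed cases and the upper bound adds the indeterminate ones, which gives roughly 30% to 60% of flagged sensors and roughly 3% to 5% of viable sensors.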

Multidate Extended Analysis

One key goal of this research was to identify misconfigured HOV sensors in the field. However, during the single date-range analysis only 314 out of approximately 870 sensors had sufficient data available. It was observed that the sensor data availability and quality varied substantially over time depending on a multitude of factors, such as power outages, temporary malfunctions, and ongoing maintenance (e.g., roadwork, damage, or sensors added, replaced, and removed).

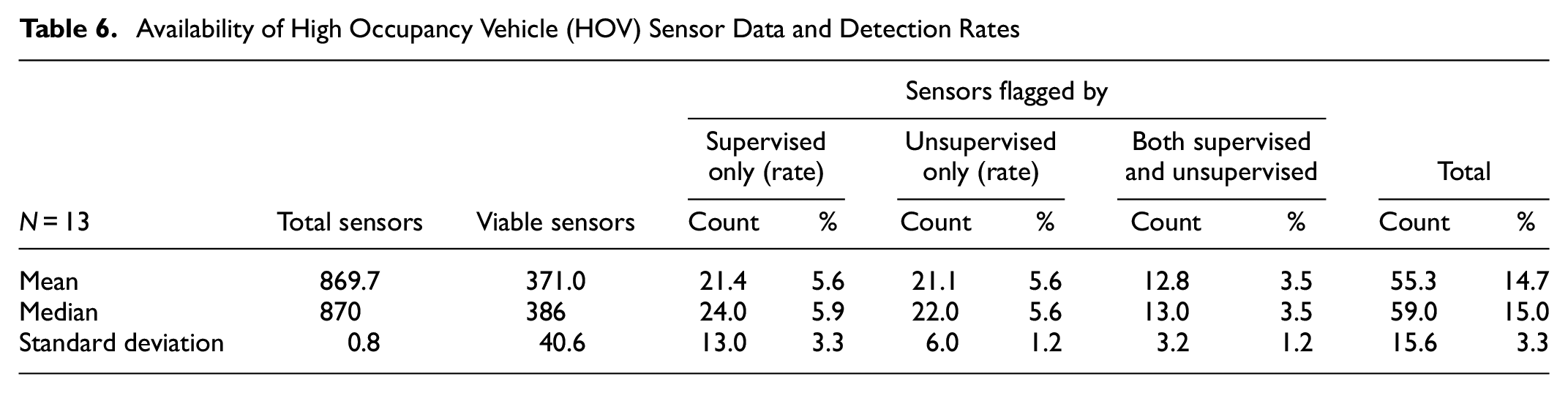

To address this complication, the machine learning model was run repeatedly on a week of data from each of 13 quarters from 2018 to 2021. The analysis dates were chosen systematically as the first full week of weekdays of the last month of each quarter (i.e., starting the first Monday of the last month in each quarter). To ensure consistency, all analyses used the same model, which was trained on data from December 6 to 12, 2020. This date range was used because these data along the I-210 had no unknown misconfigurations and could therefore be relied on for robust training of the model. A summary of results is shown in Table 6.
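The date-selection rule can be sketched in a few lines of Python. This version assumes the 13 quarters run from 2018-Q1 to 2021-Q1 and that each analysis week is the Monday-to-Friday span starting on the first Monday of March, June, September, or December. The function names are illustrative, and the exact day boundaries used in the study may differ (the training week, for example, is reported as December 6 to 12).

```python
from datetime import date, timedelta


def first_monday(year, month):
    """Return the date of the first Monday in the given month."""
    first = date(year, month, 1)
    offset = (7 - first.weekday()) % 7  # date.weekday(): Monday is 0
    return first + timedelta(days=offset)


def quarterly_analysis_weeks(start_year, start_quarter, n_quarters):
    """Return (label, monday, friday) for the last month of each quarter."""
    weeks = []
    year, quarter = start_year, start_quarter
    for _ in range(n_quarters):
        monday = first_monday(year, 3 * quarter)  # months 3, 6, 9, 12
        weeks.append((f"{year}-Q{quarter}", monday, monday + timedelta(days=4)))
        quarter += 1
        if quarter > 4:
            year, quarter = year + 1, 1
    return weeks


for label, monday, friday in quarterly_analysis_weeks(2018, 1, 13):
    print(label, monday.isoformat(), "to", friday.isoformat())
```

Under this rule the 2020-Q4 week begins on Monday, December 7, 2020, which falls inside the December 6 to 12 training week.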

Availability of High Occupancy Vehicle (HOV) Sensor Data and Detection Rates

The rate of data availability among the HOV sensors was consistently below 50%, with an average of 42.7%. The rate of misconfiguration detection was somewhat variable, with an average total rate from both machine learning methods of 14.7%. It is likely that over time different subsets of sensors are operational or nonoperational. As a result, variability in the detection of potential misconfigurations might be partially explained by variability in the available pool of sensors providing data during each analysis date range.

Another important pattern is the frequency of sensor detection over time. Some of the detections occurred as a result of corrupted data specific to the date when the sensor data were analyzed. If a sensor is truly misconfigured, it will be flagged as misconfigured more than once. Figure 7 shows the location of all sensors in District 7 and their detection results. Blue and red dots denote detection by supervised or unsupervised learning, respectively, with the dot size indicating detection frequency. Black dots denote no anomaly detection and black Xs denote sensors with no data available for any of the analysis dates.

Map of misconfiguration detection frequency in District 7 by unsupervised learning (red dots) and supervised learning (blue dots), for which larger dots indicate more detections per sensor.

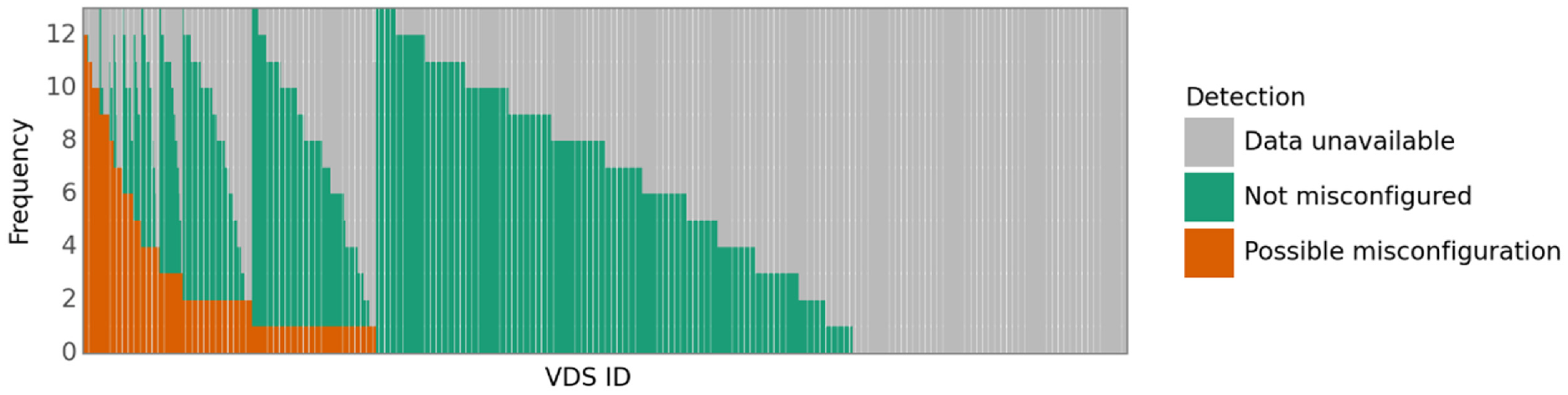

These results can be visualized as the distribution shown in Figure 8, in which sensor detection frequency is plotted along the vertical axis and the sensors are arranged along the horizontal axis. For clarity, the sensor ID labels were removed and the sensors were plotted in order of detection frequency.

Sorted frequency distribution of misconfigured sensors for quarterly analyses from 2018-Q1 to 2021-Q1.

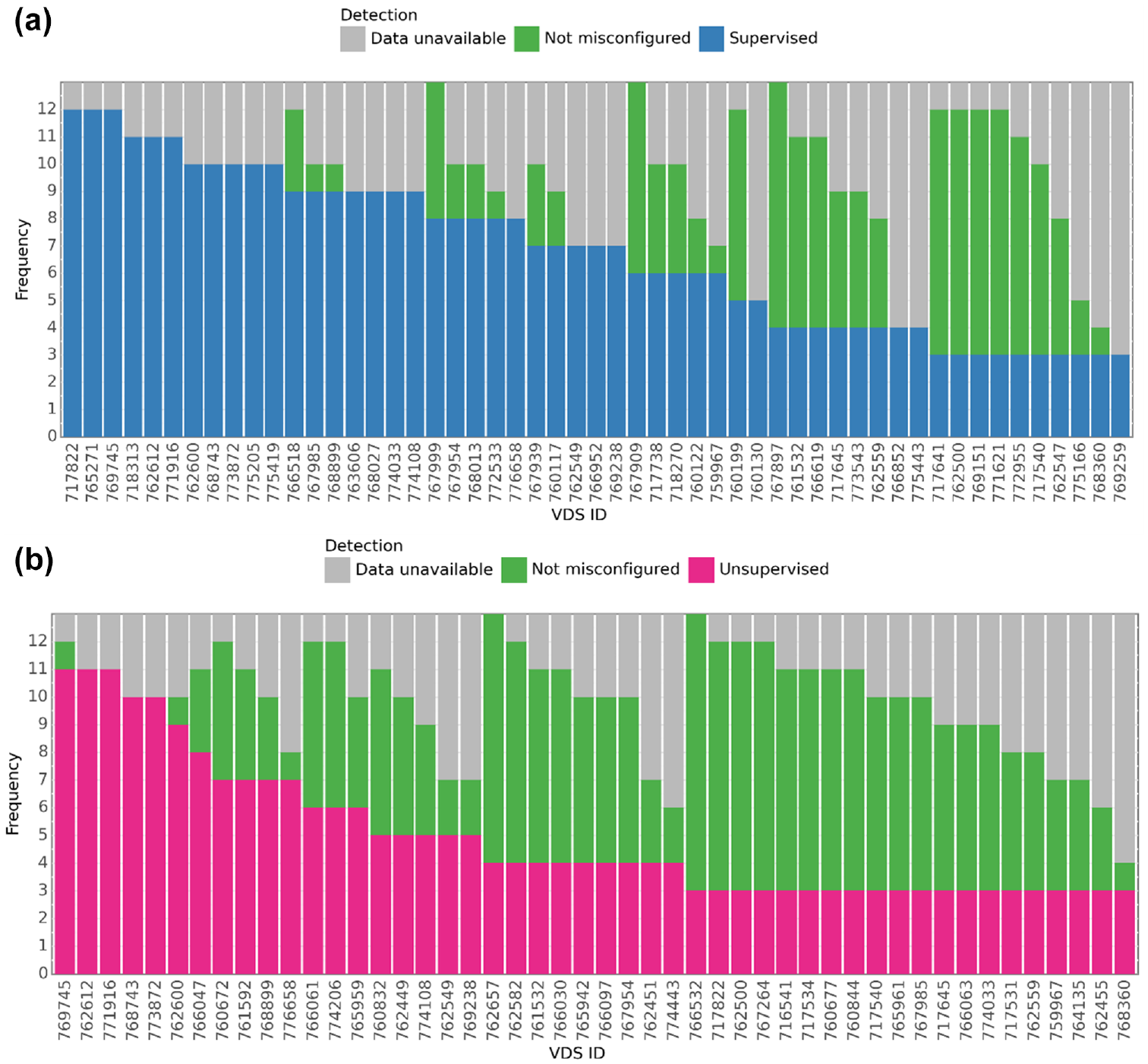

Figure 9 provides a closer view of the same distribution, but with sensor ID labels included, truncating the plot to include only sensors flagged at least three times. Most sensors fell into the category of either not having data available or not being misconfigured; however, nearly a third of sensors were flagged as possibly misconfigured (or at least having some suspicious data characteristics) at some point between 2018-Q1 and 2021-Q1. Most of the anomaly detections occurred only once or twice per sensor, probably the result of some random irregularity in the data (e.g., communication interruption, roadwork). A smaller portion of sensors were flagged much more frequently, a few being flagged nearly every time; when such sensors were not flagged, the main reason was often unavailable data. It is likely that these more frequent detections are true misconfigurations and should be prioritized for manual review.
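A detection-frequency tally of this kind can be sketched with Python's `collections.Counter`. The input structure (a mapping from analysis period to the set of flagged sensor IDs), the function names, and the sensor IDs are hypothetical. The default threshold of three detections mirrors the truncation used for Figure 9.

```python
from collections import Counter


def detection_frequency(flags_by_period):
    """Count in how many analysis periods each sensor was flagged."""
    counts = Counter()
    for flagged in flags_by_period.values():
        counts.update(set(flagged))  # a sensor counts at most once per period
    return counts


def review_priority(counts, min_detections=3):
    """Return (sensor, count) pairs at or above the threshold, most frequent first."""
    frequent = [(sensor, n) for sensor, n in counts.items() if n >= min_detections]
    return sorted(frequent, key=lambda item: (-item[1], item[0]))


# Hypothetical detection log for five analysis periods.
flags = {
    "2018-Q1": {"A", "B"},
    "2018-Q2": {"A"},
    "2018-Q3": {"A", "C"},
    "2018-Q4": {"A", "B"},
    "2019-Q1": {"B"},
}
print(review_priority(detection_frequency(flags)))
```

Sensors flagged once or twice drop out of the list, leaving the repeatedly flagged sensors at the top as candidates for manual review.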

Sorted frequency distribution of misconfigured sensor detections for quarterly analyses from 2018-Q1 to 2021-Q1, truncated to sensors with at least three detections: (a) sorted frequency distribution of sensors flagged by a supervised learning method and (b) sorted frequency distribution of sensors flagged by an unsupervised learning method.

Approximating Average Misconfiguration Rate

The detailed study in this report used data from December 6 to 12, 2020, to manually evaluate and diagnose the sensor results for true misconfigurations and false positives. This corresponded to a week of good data on the I-210 for training the models, but relatively low data availability in other parts of District 7. More data were available for the district at other times from 2018 to 2020, and for those periods the detection rate was much greater, reaching as high as 20%. The exact number of true misconfigurations in those periods was not determined, as it would have required manual review of hundreds of sensors. It is nevertheless possible to approximate the average number of misconfigured sensors based on the overall results.

The single date-range analysis results with manual evaluation for December 2020 showed that 17 out of the 29 sensors (59%) flagged by the algorithms were either definitively or probably misconfigured. Assuming a similar proportion of misconfigured sensors is found across other years, about 5% to 8% of HOV sensors in District 7 are likely to be misconfigured. This is a strong assumption, however, because it rests on a single manually reviewed week. The range depends on whether the 8 indeterminate but probable misconfigurations are included with the 9 confirmed misconfigured sensors, giving a total of 17.

Alternatively, the frequency of detection can be used to approximate an average rate. Sensors frequently detected as misconfigured are more likely to be true misconfigurations than less frequently detected sensors. Based on the frequency distribution in Figure 8, assuming sensors with at least four detections are considered misconfigured, there are 63 sensors out of approximately 870 that lie in this category. This yields a misconfiguration rate of approximately 7.2%, which is within the 5% to 8% range separately determined from the detailed manual analysis.
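The frequency-based estimate amounts to applying a threshold to per-sensor detection counts and dividing by the total number of sensors. In the Python sketch below, the function name is illustrative and the per-sensor counts are hypothetical, constructed only so that 63 of 870 sensors meet the four-detection threshold as reported above.

```python
def rate_from_frequency(detections_per_sensor, min_detections):
    """Share of sensors flagged at least `min_detections` times."""
    frequent = sum(1 for n in detections_per_sensor if n >= min_detections)
    return frequent / len(detections_per_sensor)


# Hypothetical distribution: 63 sensors with five detections, 807 with one.
detections_per_sensor = [5] * 63 + [1] * 807

rate = rate_from_frequency(detections_per_sensor, min_detections=4)
print(f"frequency-based rate: {rate:.1%}")
print("within the 5% to 8% range:", 0.05 <= rate <= 0.08)
```

The result depends on the chosen threshold: a lower threshold admits more one-off detections and raises the estimated rate, while a higher threshold lowers it.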

Degradation Results

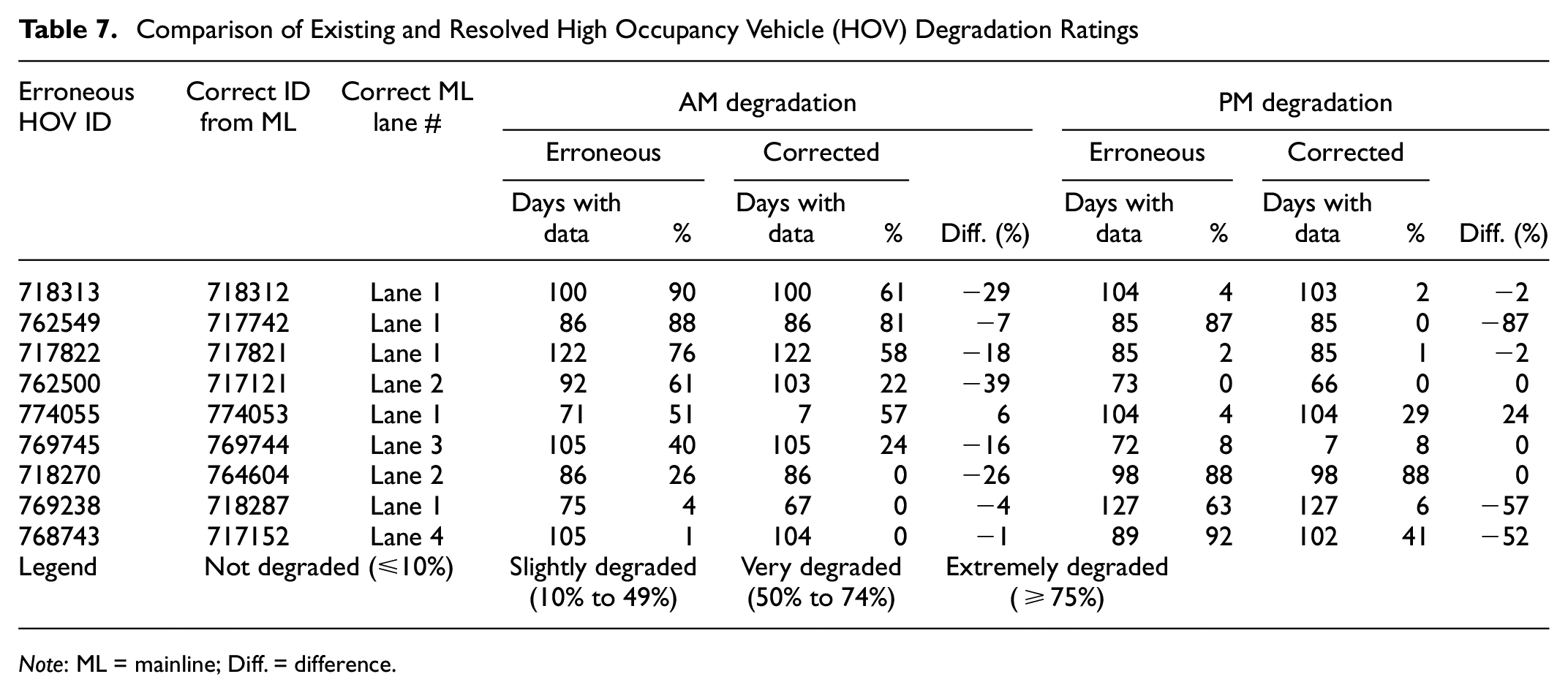

Through manual evaluation, nine sensors were diagnosed as “misconfigured,” meaning that the traffic data themselves appeared to be correct, but the data labels had been swapped. For each of these nine sensors, a proposed correction could be deduced through manual evaluation (e.g., the Lane 1 label appears to have been swapped with the HOV label). In these cases, the proposed correction was made, and the degradation results were recalculated using the data associated with the corrected label. A summary of degradation results is presented in Table 7. These results are presented for the morning (AM) and evening (PM) peaks separately because degradation tended to differ by peak hour (i.e., some were degraded during only one peak hour and not the other).

Comparison of Existing and Resolved High Occupancy Vehicle (HOV) Degradation Ratings

Overall, there was an improvement in percent degradation across nearly all sensors. For example, sensors #768743 and #718270 were no longer degraded during one peak hour, and one sensor, #769238, was no longer degraded at all. This change in degradation performance frequently translated into a change in the discrete degradation status. Only one sensor, #774055, did not improve, changing from “not degraded” to “slightly degraded” in the PM peak. However, data were available for only 7 days for this sensor, which does not meet the minimum 180-day requirement. Moreover, such a small sample is not likely to provide reliable results and will exaggerate errors.

Discussion

The analysis was conducted at two levels: a single date-range analysis with a manual review of the results, and a multidate detection frequency analysis (13 individual weeks across 2018 to 2021). The multidate analysis was conducted owing to the relatively low (i.e., 314 out of approximately 870) and sporadic data availability of sensors over time in District 7. The algorithms in the single date-range analysis flagged 29 sensors as suspicious out of the 314 available. The two machine learning algorithms, supervised and unsupervised, were then applied quarterly for all available HOV sensors located in District 7 from 2018 to 2021. Based on these results, approximately 5% to 8% of PeMS HOV sensors were classified as misconfigured in District 7, depending on time-specific factors.

Each of the 29 sensors identified in the single date-range analysis was then subjected to further manual inspection. The goal was to find each sensor’s partner: another misconfigured sensor in an adjacent general-purpose lane. For nine of the sensors, it was possible to determine precisely which sensors had been swapped. Another eight sensors were diagnosed as being indeterminate, meaning that they were probably misconfigured, but further analysis is required to determine which lane of data corresponds to the actual HOV lane. For the nine corrected misconfigurations, most resulted in an improvement in degradation performance (i.e., the degradation of the HOV lane was less serious than reported). Some sensor degradation ratings improved enough that they were no longer considered degraded in one peak hour, and one sensor was no longer considered degraded at all. However, most sensors overall remained at some degraded level. Further extrapolating the average misconfiguration rate (5% to 8%) by the total HOV lane miles indicated approximately 27 to 44 mi of erroneously measured HOV lanes at any given time, with approximately 10 to 16 mi (38%) of those reporting an erroneously high degradation rating.

Although 5% to 8% may seem small in absolute terms, misconfiguration at this rate could affect traffic management and policy. For example, the district may attempt to mitigate the erroneous degradation status by increasing the HOV occupancy minimum (e.g., from 2+ to 3+) or by disallowing zero and low-emission vehicles in the HOV lanes. This could worsen congestion if fewer cars are eligible to use the HOV lane, and could also undermine the state’s goal of reducing emissions by promoting carpooling and greener vehicles.

Conclusions

HOV lane sensors in PeMS are sometimes misconfigured as general-purpose lanes. In this situation, HOV lane data could be mistakenly aggregated with general-purpose lane data and vice versa. The purpose of this research was to understand how widespread this problem might be and the extent to which it affects performance reporting on the degradation of HOV lanes. This research successfully trained machine learning algorithms to flag potentially misconfigured HOV lane sensors. These algorithms were tested over a well-studied section of the I-210 freeway, achieving approximately 80% and 90% accuracy for unsupervised and supervised methods, respectively.

This study has demonstrated that machine learning can be effectively utilized as a dragnet for detecting misconfigured traffic sensors in a large system. Overall, the results showed that approximately 5% to 8% of HOV sensors are misconfigured at any given time in Caltrans District 7, with about 38% of those erroneously reported as degraded.

This paper highlights three important findings:

Data validity is tied to scalability—Although sensor accuracy is irrelevant to the number of sensors in a system, misconfigurations are an endemic problem that becomes harder to detect manually as the number of traffic sensors in a system grows.

Sensor unavailability is widespread and dynamic—Not only is a large portion of sensors unavailable at any given time in the system (approximately 60%), but which sensors are unavailable is constantly changing. This not only makes it challenging to track down the moving target of malfunctioning sensors but creates further challenges when a consistent block of data is needed for analysis (e.g., for degradation reporting or detecting misconfigured sensors).

Erroneously degraded highways reported to FHWA could have serious policy impacts. Degraded HOV lanes require the state to alter local policies (e.g., revoking exemptions for clean air vehicles or excessively increasing occupancy minimums from 2+ to 3+), possibly causing the state to fail to meet its policy goals (e.g., emissions reduction).

Although this research represents an appropriate use of machine learning technology and yielded satisfactory results, there are aspects that could be improved. First, given the fluctuation of sensor operability over time, research is necessary to develop a sampling strategy that optimizes the selection of analysis dates. An algorithm could be designed to maximize the number of sensors in operation within an analysis time window, or to avoid certain dates (e.g., events and construction dates). Second, the emergence of third-party traffic data from location-equipped devices (e.g., smartphones or commercial telematics) makes it possible to validate sensor data against these third-party data. For example, if the speeds from a fixed-location sensor do not match the third-party data, this may indicate misconfiguration. However, the capabilities of third-party data are not entirely uniform or clear. For example, few third-party mobile-based data sources possess the spatial precision to differentiate between lanes. Further research is necessary to determine what is possible and to develop an appropriate methodology.

This research highlights the need for thorough and ongoing quality control and validation of traffic data. Although manual review of data is best, the vast volume of data being generated makes such a task infeasible. Machine learning techniques offer transportation agencies a substantial labor-saving tool. Although random false positives may be generated, the occurrence of repeated misconfiguration detections could be used to prioritize sensors for manual inspection. Moreover, this research inadvertently revealed the scope of sensor unavailability and the challenges in managing a large sensor network, highlighting the need for data management tools such as this.

Footnotes

Author Contributions

The authors confirm contribution to the paper as follows: study conception and design: A. Patire, Y. Zeinali Farid; data collection: N. Fournier, Y. Zeinali Farid, A. Patire; analysis and interpretation of results: N. Fournier, A. Patire; draft manuscript preparation: N. Fournier. All authors reviewed the results and approved the final version of the manuscript.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the California Department of Transportation (Caltrans), grant number 65A0759, and utilized traffic data in Caltrans’ PeMS database.