Abstract

Over the past few decades, numerous adaptive traffic signal control (ATSC) algorithms have been proposed to alleviate traffic congestion and optimize traffic mobility using real-time traffic data, such as data from connected vehicles (CVs). However, most existing ATSC algorithms do not consider optimizing traffic safety, likely because of the lack of tools to evaluate safety in real time. In this paper, we propose a novel ATSC algorithm for real-time safety optimization. The algorithm combines a traditional reinforcement learning approach (i.e., Q-learning) with recently developed extreme value theory (EVT) real-time crash prediction models. The algorithm was validated using real-world traffic video data collected from two signalized intersections in British Columbia. The results indicated that, compared with an existing fully actuated signal controller, the developed algorithm can significantly reduce the real-time crash risk by 43% to 45% at the intersection approaches, even at low CV market penetration rates.

Keywords

Adaptive traffic signal control (ATSC) systems have been receiving considerable interest in recent years. This interest is expected to grow with the availability of real-time traffic data from emerging connected vehicles (CVs) and advances in sensing technologies. ATSC systems use real-time traffic data to optimize traffic efficiency and minimize traffic delay. The mobility-oriented ATSC techniques have demonstrated considerable benefits in enhancing traffic efficiency at signalized intersections (

There has been some previous work on optimizing the safety of signalized intersections using microsimulation models and the Surrogate Safety Assessment Model (SSAM) (

Recently, prediction models for real-time safety evaluation have been developed and validated (

Acknowledging the above-noted limitations of using traffic conflicts, researchers have proposed use of extreme value theory (EVT) for conflict-based crash-risk estimation (

In this paper, we propose a Q-learning-based adaptive signal control algorithm for real-time safety optimization (QASCS) to minimize crash risk. EVT-based real-time crash prediction models for signalized intersections (

Literature Review

Real-Time Crash Prediction Models

Recently, considerable research has been conducted in modeling real-time crash risk to manage and evaluate traffic safety proactively. For example, Abdel-Aty and Abdalla developed a model to predict daytime crashes on a freeway using real-time roadway geometric features and traffic flow characteristics (

EVT Models

There has been significant interest in using traffic conflicts to estimate crashes and develop crash-risk measures from observable non-extreme frequent events (i.e., conflicts). This can be realized by applying the EVT, in which models can be developed to enable extrapolation from observed levels to unobserved levels of a stochastic phenomenon. The application of EVT for road safety was first proposed by Campbell et al. and Songchitruksa and Tarko (

Recently, the use of EVT models in safety analysis has witnessed considerable advances, including new methods and applications. New applications included the use of EVT models to conduct before–after safety evaluations (

Zheng and Sayed proposed an approach for real-time crash-risk prediction at signalized intersections within the EVT framework (

ATSC Algorithms

ATSC algorithms have recently been implemented in many jurisdictions worldwide to alleviate traffic congestion and reduce delays. The Sydney Coordinated Adaptive Traffic System (SCATS) was the earliest ATSC algorithm (

Traffic Signal Optimization Using CV data

With the increasing emergence of CV technology, numerous traffic signal control algorithms have recently been proposed to optimize traffic efficiency using real-time data from CVs. Some studies, for example, proposed various algorithms to optimize and coordinate traffic movement in road intersections without using any traffic lights, assuming that all vehicles are connected and autonomous (

Use of Reinforcement Learning for Traffic Control

Reinforcement learning (RL) is a machine learning approach that analyzes how agents can take actions to maximize their cumulative reward. RL techniques have been proposed in the literature for signal control because they are suited to stochastic and dynamic traffic environments. These techniques can learn the control policy by interacting with the environment directly, without requiring a model of the traffic environment or human intervention (

Methodology

RL Formulation

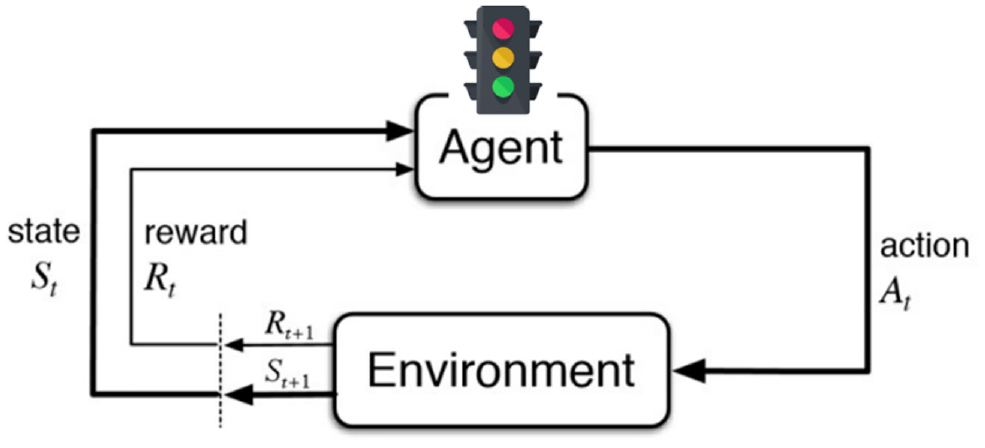

RL is an area of machine learning that analyzes how an agent interacts with the surrounding environment to achieve a goal (

Figure 1. Reinforcement learning framework.

Modeling the Environment

A signalized intersection in Surrey, British Columbia was simulated in this study using the microsimulation platform (VISSIM 7) (

Q-Learning

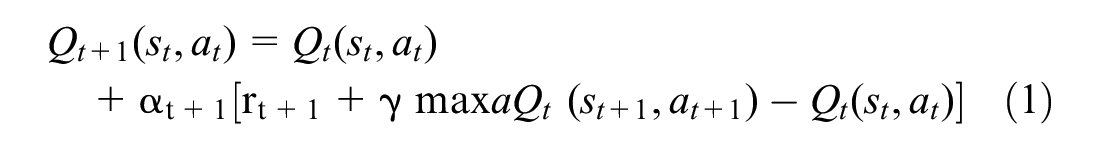

There are three main methods for formulating RL algorithms and learning the optimal policy: dynamic programming (DP), Monte Carlo (MC) techniques, and temporal difference (TD) learning. The three methods share some similarities and differences. For example, the DP method requires a model of the environment to be defined, whereas with the MC and TD methods the RL algorithm can learn directly from interacting with the surrounding environment. Likewise, the DP and TD methods share the advantage of updating the estimates at each time step without waiting for the final outcome, as the MC method must (

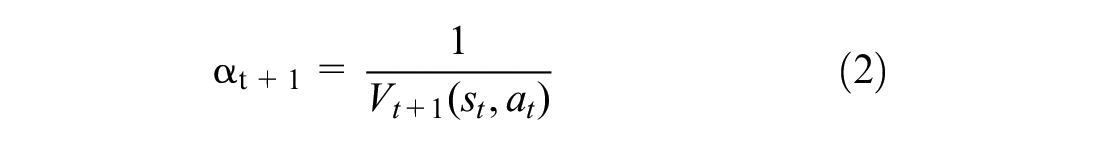

The learning rate (

where

γ: the discount factor; a value of 0.5 was chosen.
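As an illustration of the tabular Q-learning update discussed above, the following minimal sketch applies the standard textbook update rule. The discount factor of 0.5 is the value stated in the text. The learning rate `ALPHA`, the action labels, and the state tuples are hypothetical placeholders, because the learning-rate definition and the update equation of the study are not reproduced here.

```python
from collections import defaultdict

GAMMA = 0.5   # discount factor stated in the text
ALPHA = 0.1   # ASSUMPTION: illustrative learning rate, not the study's value

# ASSUMPTION: labels for the two actions (extend green, switch phase).
ACTIONS = ("extend", "switch")


def q_update(q, state, action, reward, next_state, alpha=ALPHA, gamma=GAMMA):
    """Standard off-policy Q-learning update for one transition.

    q maps (state, action) pairs to Q-values. The reward may be negative
    when it represents a penalty such as crash risk.
    """
    best_next = max(q[(next_state, a)] for a in ACTIONS)
    td_target = reward + gamma * best_next
    q[(state, action)] += alpha * (td_target - q[(state, action)])
    return q[(state, action)]


if __name__ == "__main__":
    q_table = defaultdict(float)      # unseen pairs start at zero
    s, s_next = ("phase1", 3), ("phase1", 4)   # hypothetical state encoding
    print(q_update(q_table, s, "extend", reward=-1.0, next_state=s_next))
```

Because the update bootstraps from the maximum Q-value of the next state rather than from the action actually taken, it is off-policy, which is the property the text attributes to Q-learning.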

State Definition

In Q-learning, a tabular form is usually used to represent all state–action pairs. This approach of storing the states in a look-up table is questionable, especially when RL is applied to stochastic environments that possibly include an infinite number of states. Including many states in the Q-matrix will result in most states not being experienced by the agent. Potentially, a generalization from the states that were visited previously to the ones that have never been experienced (i.e., function approximation) may be helpful to solve this issue. Popular methods of generalization include artificial neural networks and statistical curve fitting (

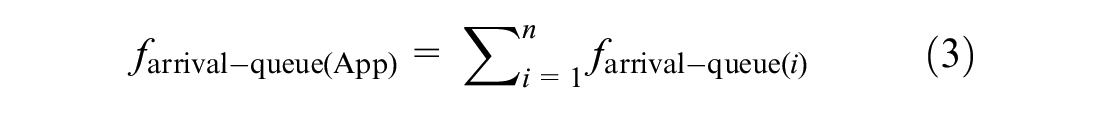

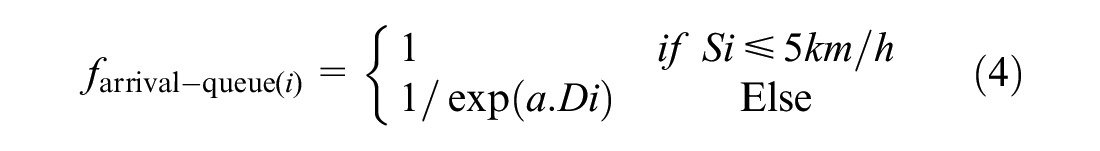

The state is represented by the current green phase and the status of the vehicles within the DSRC range (225 m) upstream of the stop line on the incoming approaches. The overall objective of the proposed algorithm is to optimize traffic safety. Therefore, an arrival-queue factor that reflects the positions and speeds of vehicles, and hence the real-time traffic condition, is introduced. The arrival-queue factor of an approach is a weighted sum of the vehicles present on that approach, in which the weight of each vehicle depends on its position and speed. A vehicle that is stopped or moving at a speed below 5 km/h (i.e., a vehicle in a queue) is counted as one vehicle. Otherwise, it is counted as a fraction between zero and one. The value of this fraction depends on the distance from the vehicle position to the end of the queue or to the stop line, whichever is shorter. To cover most of the possible states of the environment, the value of the arrival-queue factor is divided into 15 ranges to create the Q-matrix. The factor for each approach is calculated as follows:

where
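The arrival-queue factor described in this section can be sketched as follows. The 225 m range, the 5 km/h queue threshold, and the 15 ranges come from the text. The linear distance weighting and the bin width are assumptions made only for illustration, since the original weighting equation is not reproduced here; the `Vehicle` class and function names are likewise hypothetical.

```python
from dataclasses import dataclass

DSRC_RANGE_M = 225.0      # communication range stated in the text
QUEUE_SPEED_KMH = 5.0     # below this speed a vehicle counts as queued
N_BINS = 15               # number of ranges stated in the text


@dataclass
class Vehicle:
    distance_to_stop_line_m: float
    speed_kmh: float


def arrival_queue_factor(vehicles, dsrc_range=DSRC_RANGE_M):
    """Weighted vehicle count for one approach."""
    in_range = [v for v in vehicles
                if 0.0 <= v.distance_to_stop_line_m <= dsrc_range]
    queued = [v for v in in_range if v.speed_kmh < QUEUE_SPEED_KMH]
    # End of queue = farthest queued vehicle (0 m when there is no queue).
    queue_end = max((v.distance_to_stop_line_m for v in queued), default=0.0)

    total = float(len(queued))            # each queued vehicle counts as one
    for v in in_range:
        if v.speed_kmh >= QUEUE_SPEED_KMH:
            d = v.distance_to_stop_line_m
            # Distance to the end of the queue or the stop line, whichever is shorter.
            gap = min(abs(d - queue_end), d)
            # ASSUMPTION: weight decays linearly from 1 to 0 over the DSRC range.
            total += max(0.0, 1.0 - gap / dsrc_range)
    return total


def to_state_bin(factor, bin_width=2.0):
    """Map the factor to one of N_BINS ranges (ASSUMPTION: equal-width bins)."""
    return min(int(factor // bin_width), N_BINS - 1)


if __name__ == "__main__":
    approach = [Vehicle(10, 0), Vehicle(20, 3), Vehicle(65, 40), Vehicle(300, 50)]
    factor = arrival_queue_factor(approach)
    print(factor, to_state_bin(factor))
```

Discretizing the factor keeps the Q-table finite, at the cost of treating all traffic conditions within one range as the same state.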

Action Definition

In RL-ATSC algorithms, defining the next green phase is the action that is taken by the signal controller. The number of possible actions that the signal controller can choose from varies depending on the phasing sequence scheme. Two phasing sequence schemes were defined in the literature: the fixed phasing sequence (

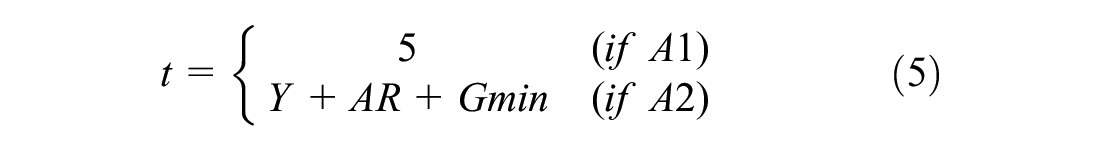

At each time step, the agent implements one of two actions: extending the green time (A1) or switching the green light to the next phase (A2). If the agent selects A1, the current green phase of the through movements is extended by a time (t). If A2 is selected, the green light is switched to the next phase and its minimum green time (

where

Following the standard signal timing manual (

It is worth noting that selecting the update time interval (

Action Selection Strategy

In RL, the agent accumulates reward by exploiting the actions it currently estimates to be the most rewarding. However, it also needs to explore new actions to make better action selections in the future. Exploration enables the agent to visit more state–action pairs and thus converge to the optimal policy (

In this study, the ε-greedy method was employed as the action selection strategy. In this method, the greedy action is selected most of the time, except with probability ε, when an action is selected uniformly at random. At the beginning of the learning process, the rate of exploration is higher than the rate of exploitation, as the agent does not know much about the environment. The agent then exploits progressively more until the end of the learning process as it converges to the optimal policy (

where
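The ε-greedy strategy described in this section can be sketched as below. The selection rule itself is the standard one. The linear decay schedule and its start and end values are assumptions for illustration only, since the exploration-rate equation of the study is not reproduced here; the function names are hypothetical.

```python
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """Pick an action from a dict {action: Q-value}.

    With probability epsilon a uniformly random action is returned
    (exploration); otherwise the action with the highest Q-value is
    returned (exploitation).
    """
    if rng.random() < epsilon:
        return rng.choice(list(q_values))
    return max(q_values, key=q_values.get)


def decayed_epsilon(iteration, total_iterations, eps_start=1.0, eps_end=0.05):
    """ASSUMPTION: linear decay from eps_start to eps_end over training."""
    frac = min(max(iteration / total_iterations, 0.0), 1.0)
    return eps_start + frac * (eps_end - eps_start)


if __name__ == "__main__":
    q_values = {"extend": -0.2, "switch": -0.7}   # hypothetical Q-values
    for it in (0, 250, 500):
        eps = decayed_epsilon(it, 500)
        print(it, round(eps, 3), epsilon_greedy(q_values, eps))
```

A decaying ε reproduces the behavior described above: mostly exploration early in training and mostly exploitation as the policy converges.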

Real-Time Collision Prediction Models

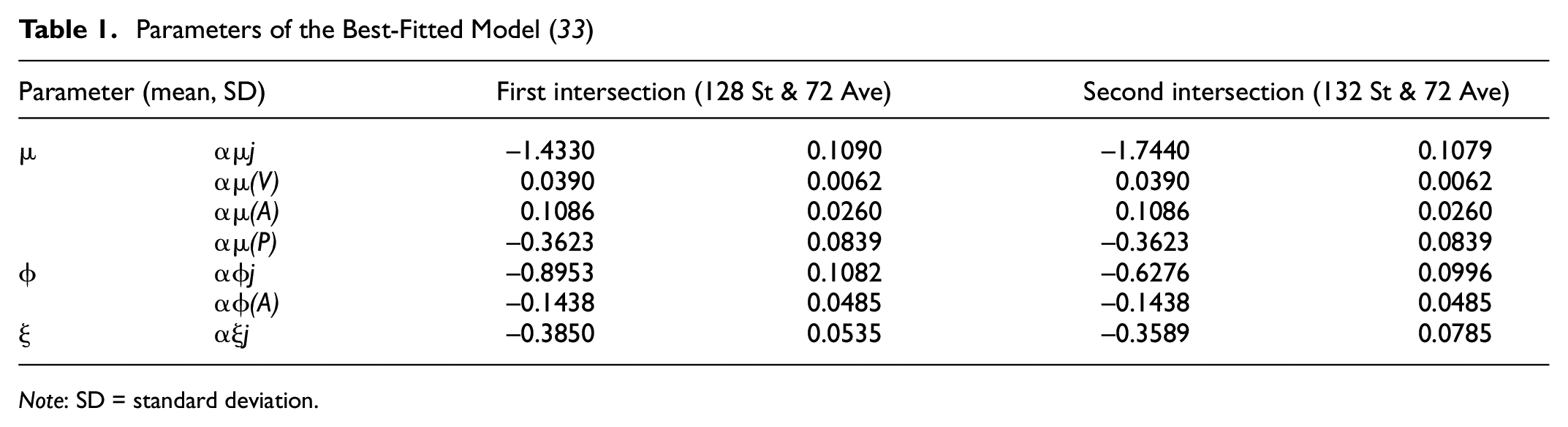

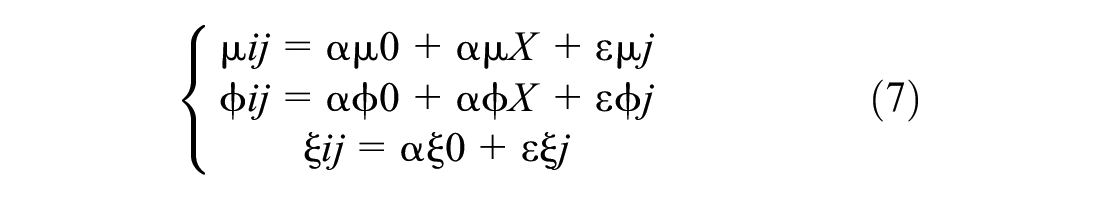

The real-time collision prediction models developed by Zheng and Sayed (

where αμ0, αϕ0 and αξ0 are the three intercept terms corresponding to the three model parameters location, scale, and shape, respectively. εμ

The parameters of the utilized best-fitted model are shown in Table 1.

Table 1. Real-time Bayesian Hierarchical Models (BHM) at the cycle level.

Reward Definition

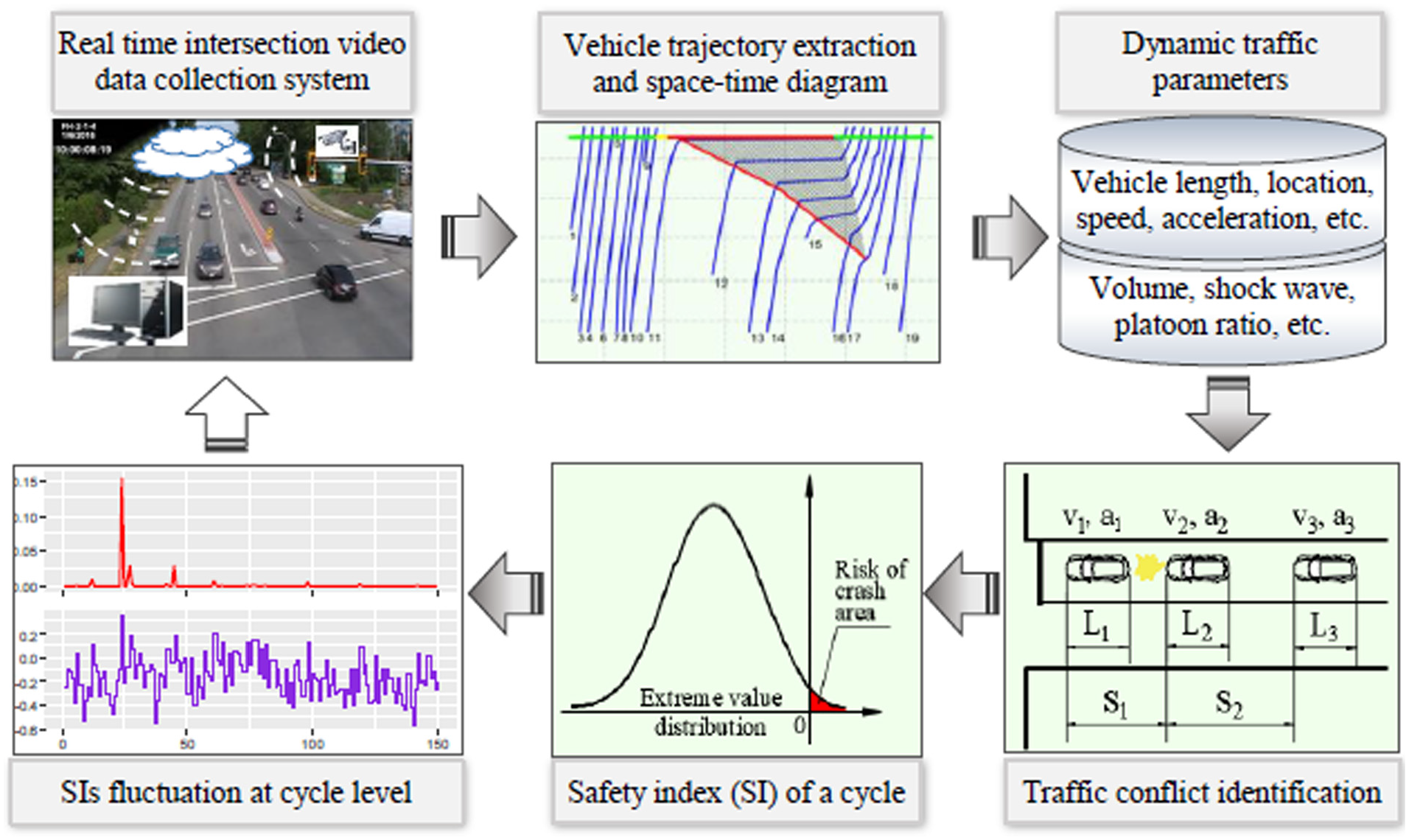

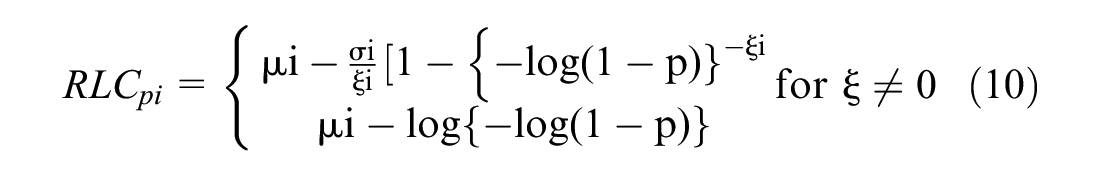

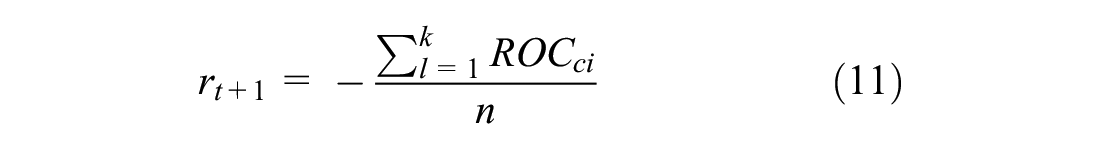

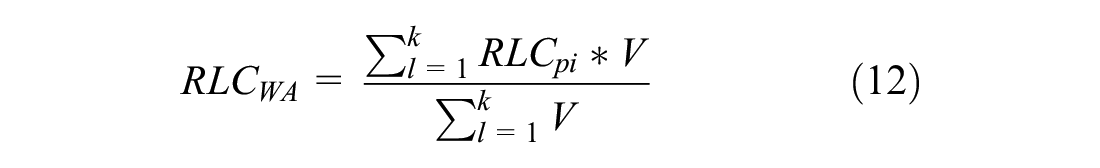

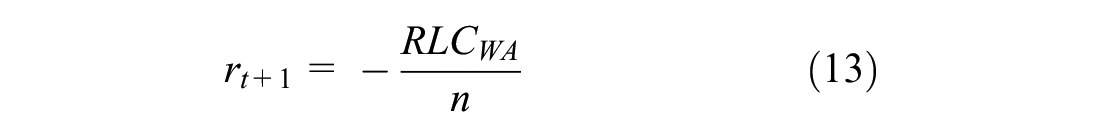

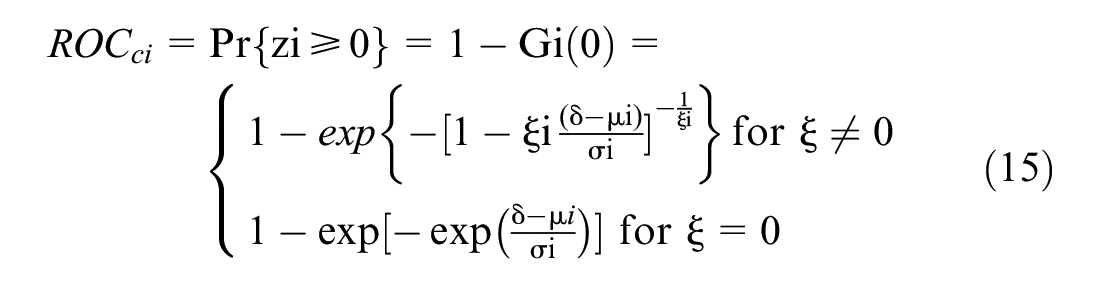

The main objective of this research is to optimize the safety of signalized intersections by minimizing the ROC in real time. Therefore, quantitative measures were needed that reflect the fluctuating safety levels of dynamic traffic conditions cycle by cycle and can serve as the algorithm’s reward or penalty. The ROC and RLC were selected as two RL rewards. In the proposed QASCS algorithm, the ROC and RLC were estimated from real-time collision prediction models (

The ROC is a non-negative indicator. A value of zero indicates a safe cycle with no risk of crash, whereas a ROC greater than zero indicates a positive crash risk. The RLC is a standard prediction in extreme value analysis that also reflects the safety level of a cycle. A value greater than or equal to zero for RLC indicates positive ROC of the cycle, and RLC less than zero implies that no crash risk is predicted. It is worth mentioning that the ROC and RLC are positively correlated (

where

X: the vector of model covariates.
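The relation between the two safety measures can be illustrated with the standard generalized extreme value (GEV) distribution, whose location, scale, and shape parameters correspond to the three model parameters named earlier. The sketch below is not the fitted Bayesian hierarchical model of this study. It assumes that crash risk is the probability that a GEV-distributed cycle maximum exceeds zero, and it treats the return level as the GEV quantile for a chosen exceedance probability `p`. The function names and numeric parameter values are hypothetical.

```python
import math


def gev_cdf(z, mu, sigma, xi):
    """CDF of the GEV distribution (location mu, scale sigma > 0, shape xi)."""
    if abs(xi) < 1e-12:                       # Gumbel limit as xi -> 0
        return math.exp(-math.exp(-(z - mu) / sigma))
    t = 1.0 + xi * (z - mu) / sigma
    if t <= 0.0:                              # z lies outside the support
        return 0.0 if xi > 0 else 1.0
    return math.exp(-t ** (-1.0 / xi))


def exceedance_probability(mu, sigma, xi, threshold=0.0):
    """ASSUMPTION: risk measure = P(maximum > threshold), threshold = 0."""
    return 1.0 - gev_cdf(threshold, mu, sigma, xi)


def return_level(p, mu, sigma, xi):
    """GEV quantile exceeded with probability p (0 < p < 1)."""
    y = -math.log(1.0 - p)
    if abs(xi) < 1e-12:
        return mu - sigma * math.log(y)
    return mu - (sigma / xi) * (1.0 - y ** (-xi))


if __name__ == "__main__":
    # Hypothetical parameters for two cycles.
    print(exceedance_probability(mu=-2.0, sigma=1.0, xi=-0.2))  # risk > 0
    print(exceedance_probability(mu=-5.0, sigma=1.0, xi=-0.5))  # no risk
    print(return_level(0.01, mu=-2.0, sigma=1.0, xi=-0.2))
```

Under these assumptions, a cycle whose distribution has an upper bound below zero has no exceedance probability at all, which mirrors the statement that a negative return level corresponds to a cycle with no predicted crash risk.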

Training the Algorithm

The proposed QASCS algorithm was trained using the simulation platform VISSIM to find the optimal policy. The simulation was run for 500 iterations for each of the two safety indices (i.e., ROC and RLC). Each iteration was divided into a 1,000-s warming-up period, a 500-s cooling-down period, and a 3,600-s (i.e., one hour) training period. The total training time for each safety index was more than two million seconds. It was observed that after 400 iterations, the proposed algorithm converged to the optimal policy. At each time interval (t), as shown in Equation 5, the simulation is paused, a new state of the environment is defined, the agent selects the best action and applies it, and the Q-value is updated. The reward is received at the end of each cycle as a delayed reward and is divided equally among the actions taken during that cycle. For each signal controller, 10 different random seeds were applied, and the results were then averaged. The minimum required number of random seeds to compare the performance measures of the two alternatives (i.e., the proposed QASCS and the ASC benchmark) was estimated, following the methodology provided in Dowling et al. (

Validation of the Proposed Algorithm

Real-world traffic data from two signalized intersections in the city of Surrey, British Columbia, Canada, were used to validate the proposed algorithm. The first intersection is 72nd Avenue and 128 Street, and the second intersection is 72nd Avenue and 132 Street. Figure 3 shows the two signalized intersections and the studied approaches. Both intersections are urban signalized intersections and are controlled by a typical fully actuated signal control (ASC). The trained QASCS algorithm and the existing ASC were both simulated in a VISSIM model for each intersection.

Figure 3. Study intersections and approaches.

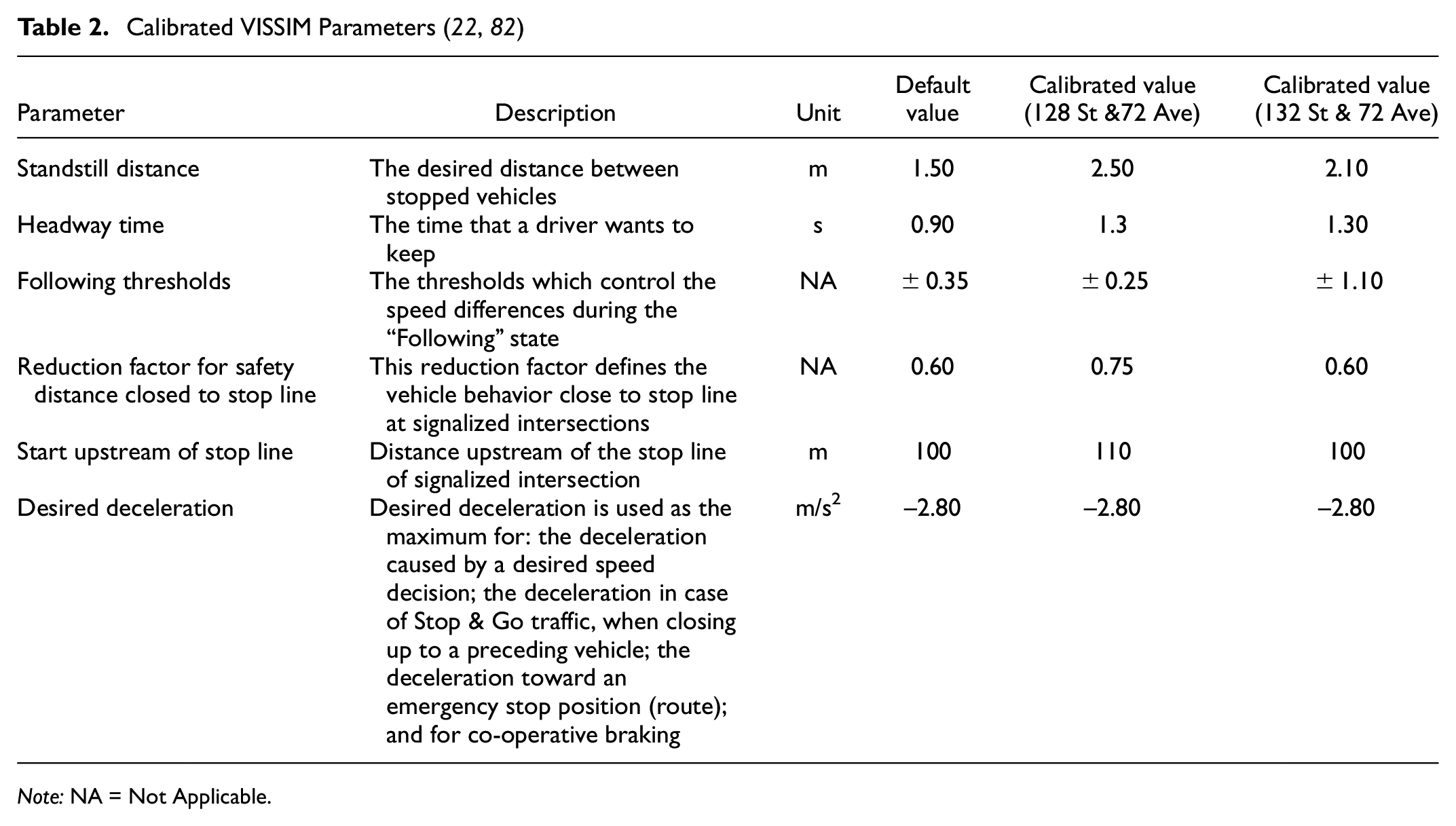

VISSIM models of the two selected intersections came from previous studies (

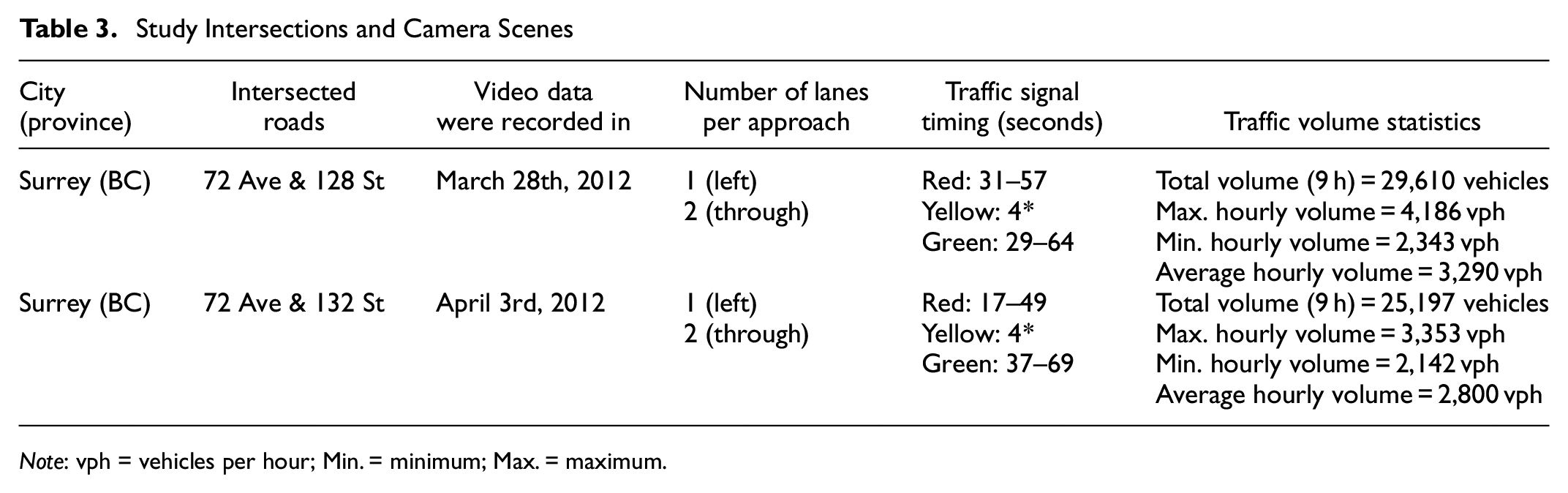

After developing the simulation models for both intersections using ASC and QASCS algorithm, the two measures ROC and RLC were estimated and compared for both signal controllers. The calibrated simulation models were run separately for each signal controller for 9 h (i.e., the available real-world video data are from 9:00 a.m. to 6:00 p.m.). Table 3 shows the location, date of the video data collection, number of lanes, and traffic volume statistics for each intersection. The ASC was simulated using the RBC module, whereas the QASCS algorithm was represented by an external supporting code. Simulated traffic data were constantly extracted and saved for each simulation run, such as position and speed of each vehicle crossing the intersection, the vehicle type (e.g., connected or non-connected), and the indication of all signal heads.

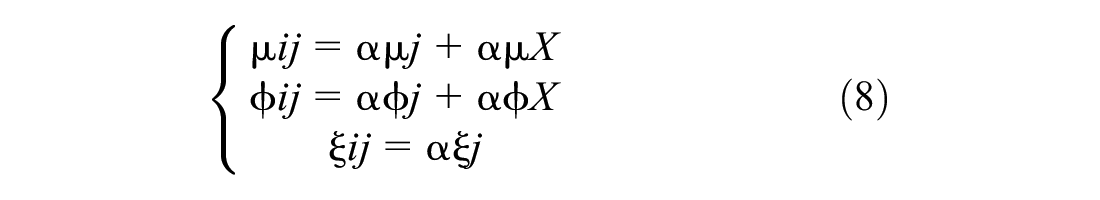

Study Intersections and Camera Scenes

Dynamic traffic parameters were extracted for each signal cycle (e.g., shock wave area, platoon ratio, traffic volume). These dynamic parameters were then used in the EVT model (

where

Validation Results

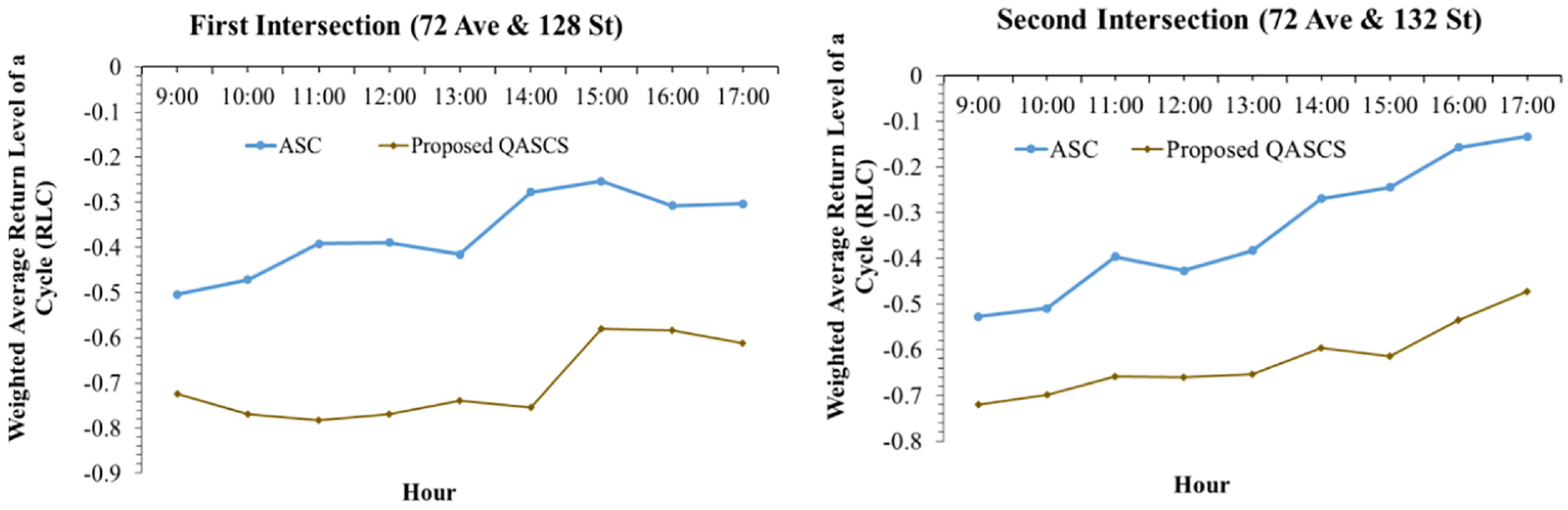

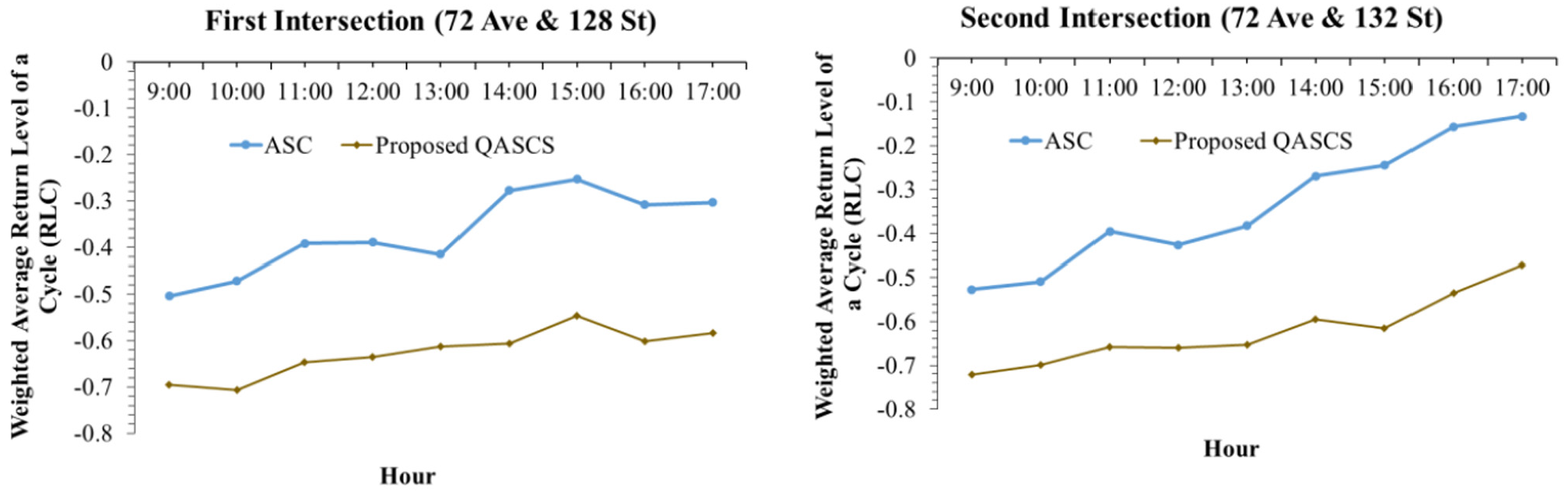

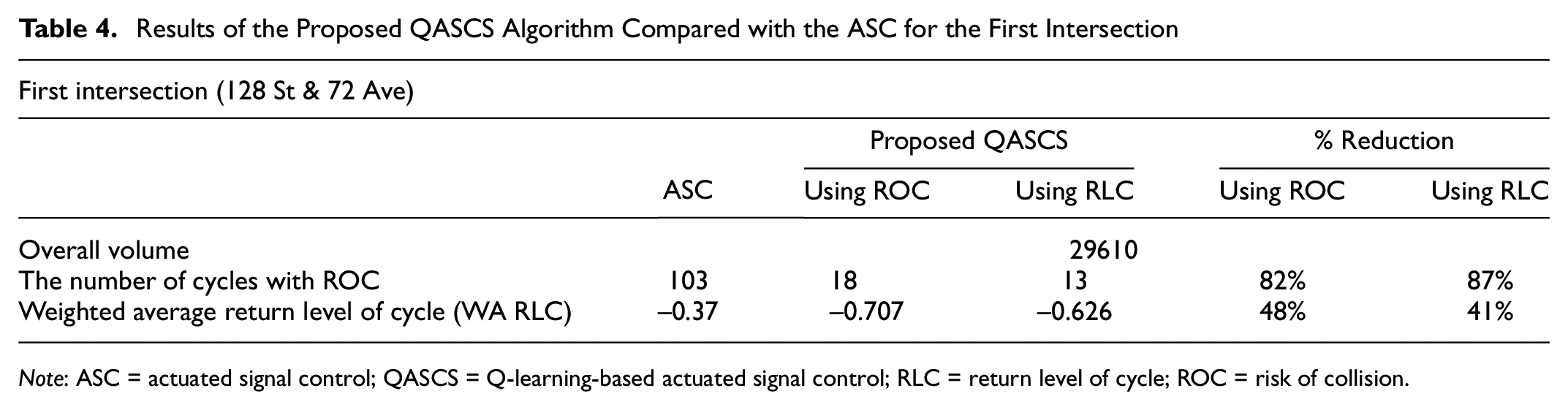

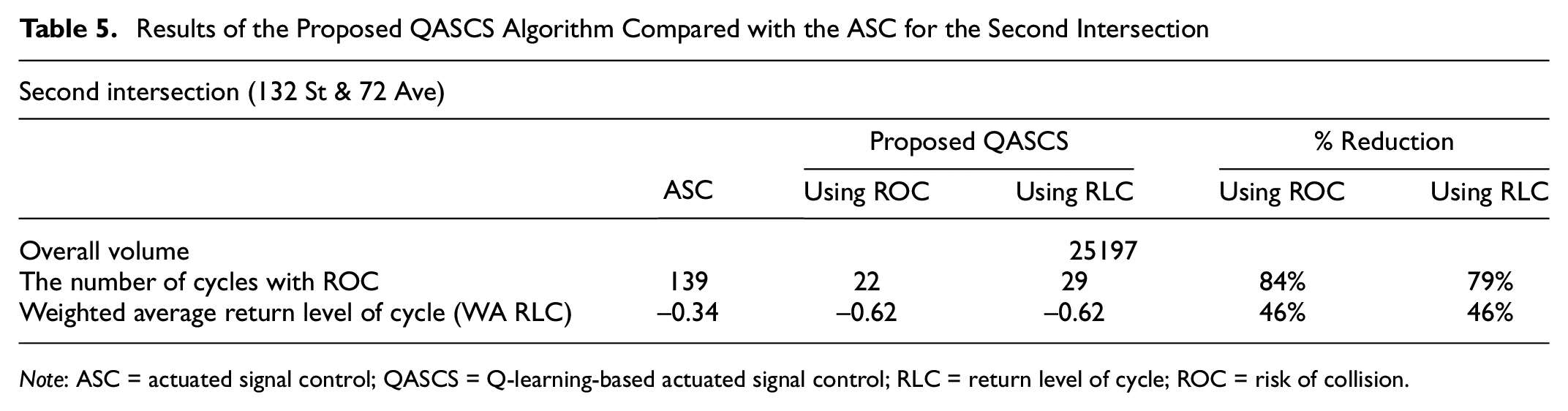

A comparison between the existing real-world ASC and the trained QASCS algorithm was conducted. The results indicated that the proposed algorithm improved traffic safety considerably at both intersections. The number of cycles with ROC as estimated using Equation 9 was reduced from 103 cycles to 18 and 13 cycles for the first intersection using ROC and RLC as reward functions, respectively. For the second intersection, the number of cycles with ROC was reduced from 139 cycles to 22 and 29 cycles using ROC and RLC as reward functions, respectively. Furthermore, taking into consideration the strong correlation between ROC and RLC, the weighted average RLC was estimated using Equation 12 and compared for each hour of the day for the ASC and the proposed QASCS (Figures 4 and 5). Reduction values of 48% and 41% in the weighted average RLC were observed at the first intersection when using ROC and RLC as reward functions, respectively. For the second intersection, 46% reduction was obtained after using both reward functions (i.e., ROC and RLC) separately.
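The cycle counts reported above can be converted to percentage reductions with simple arithmetic. The short sketch below does this for both intersections; the helper name is hypothetical, and the only inputs are the counts quoted in the preceding paragraph.

```python
def percent_reduction(before, after):
    """Percentage reduction from a baseline count to a new count."""
    return 100.0 * (before - after) / before


# Number of cycles with ROC under ASC (before) and QASCS (after),
# as reported in the text.
cases = {
    "Intersection 1, ROC reward": (103, 18),
    "Intersection 1, RLC reward": (103, 13),
    "Intersection 2, ROC reward": (139, 22),
    "Intersection 2, RLC reward": (139, 29),
}

if __name__ == "__main__":
    for label, (before, after) in cases.items():
        print(f"{label}: {percent_reduction(before, after):.1f}%")
```

For the first intersection this gives about 82.5% and 87.4%, in line with the 82% and 87% quoted in the Summary and Conclusions; for the second intersection the same calculation gives about 84.2% and 79.1%.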

Figure 4. Weighted average return level of a cycle (RLC) at the two studied locations before and after implementing the proposed algorithm with risk of collision (ROC) as a reward.

Figure 5. Weighted average return level of a cycle (RLC) at the two studied locations before and after implementing the proposed algorithm with RLC as a reward.

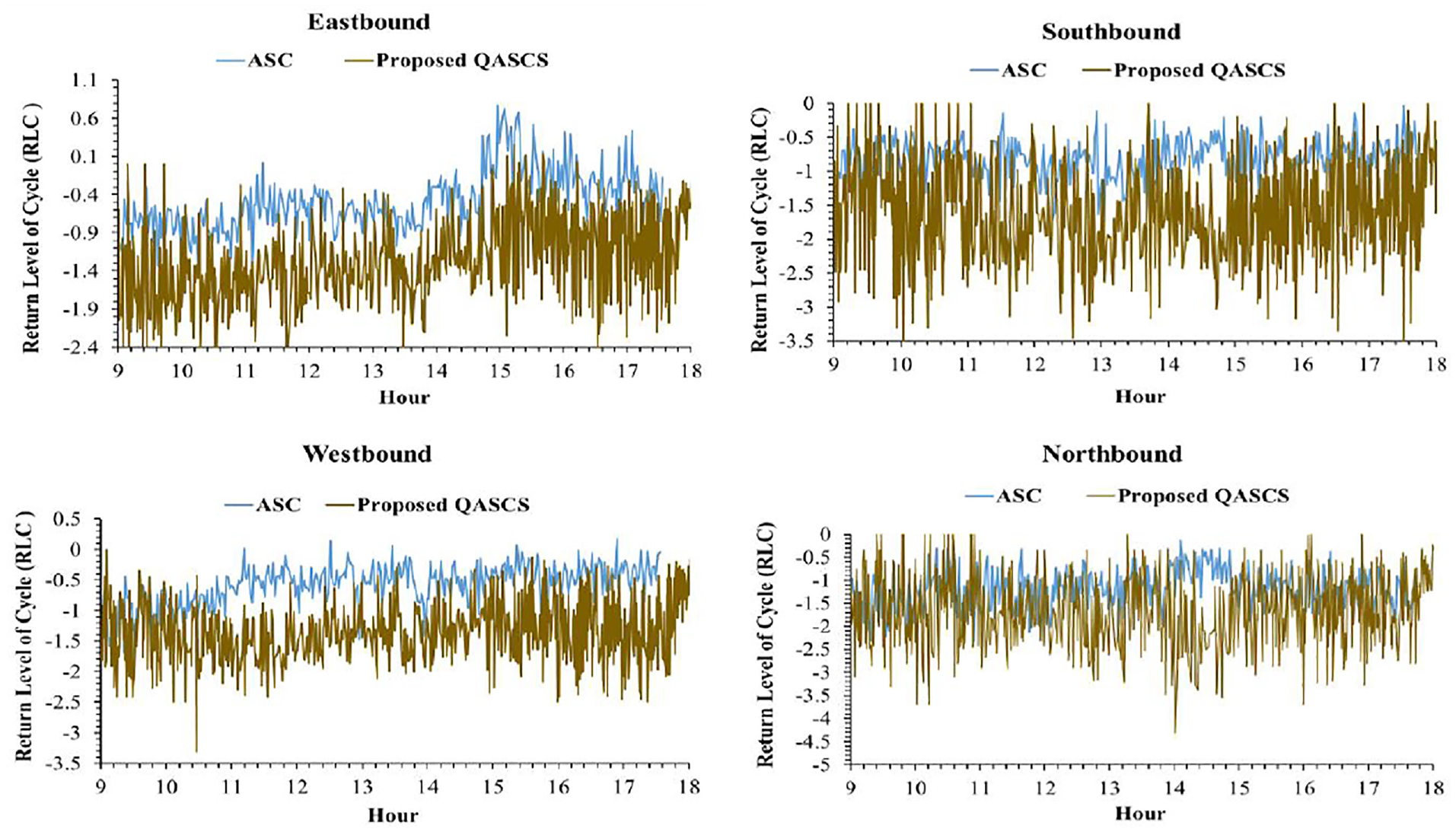

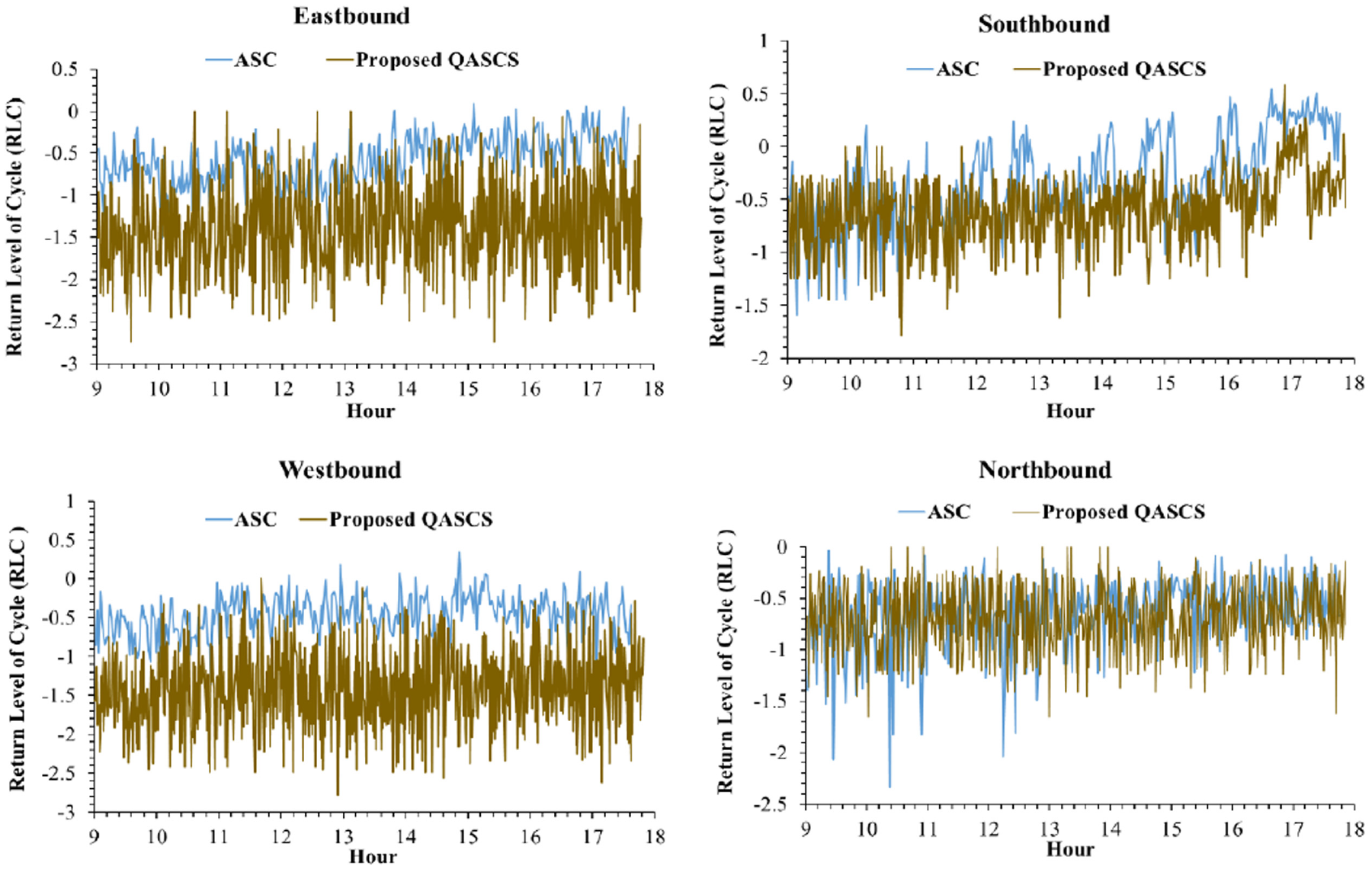

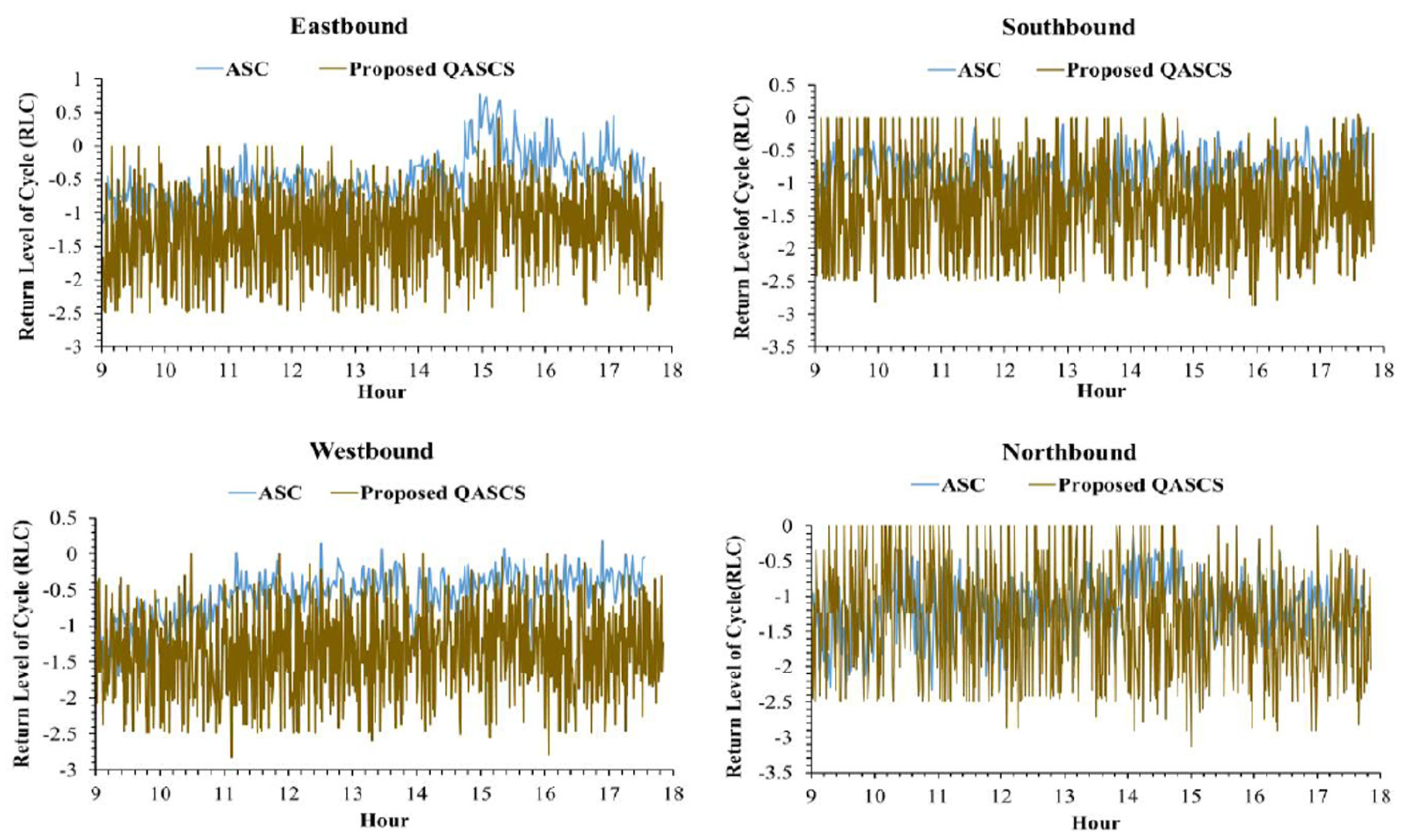

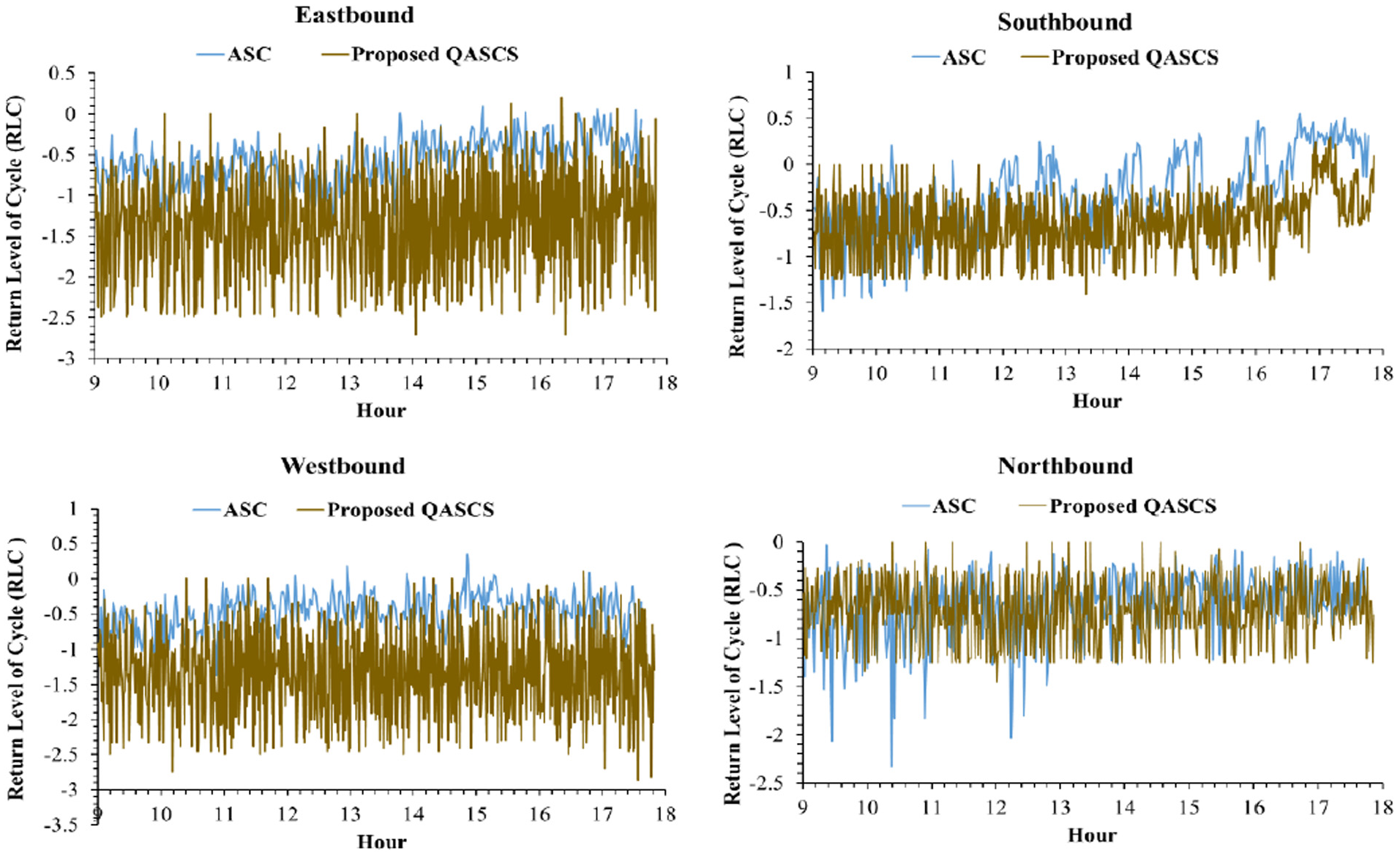

The real-time variation of RLC is shown in Figures 6–9 for both locations and both safety rewards. These values were calculated using Equation 10. Positive RLC values imply that a positive crash frequency is expected, whereas negative RLC values indicate that the cycle is safe; lower values of RLC represent safer signal cycles. As shown in these figures, a reduction in RLC was observed after applying the proposed QASCS algorithm on most of the approaches and for both reward functions. Although the RLC has not improved significantly for some cycles, those cycles are still safe because the RLC remains below zero.

Figure 6. Cycle-by-cycle fluctuation of RLC at each approach of the first intersection (72 Ave and 128 St) before and after implementing the QASCS algorithm using ROC reward.

Figure 7. Cycle-by-cycle fluctuation of RLC at each approach of the second intersection (72 Ave and 132 St) before and after implementing the QASCS algorithm using ROC reward.

Figure 8. Cycle-by-cycle fluctuation of RLC at each approach of the first intersection (72 Ave and 128 St) before and after implementing the QASCS algorithm using RLC reward.

Figure 9. Cycle-by-cycle fluctuation of RLC at each approach of the second intersection (72 Ave and 132 St) before and after implementing the QASCS algorithm using RLC reward.

The results shown in Figures 6–9 indicate that the RLC values of the QASCS generally have higher variability than those of the ASC. The reason is that the two controllers operate on completely different mechanisms. The QASCS utilizes dense and detailed traffic data from CVs, whereas the ASC relies on relatively limited traffic information captured by loop detectors. Thus, the QASCS is more adaptive to the real-time variation in traffic conditions, which results in higher variability among consecutive signal cycles in the optimized signal-timing plan (e.g., cycle length) and, subsequently, in the RLC value.

Tables 4 and 5 summarize the validation results. The number of extreme conflicts and the number of crashes can also be calculated and compared for the QASCS and ASC algorithms. In this case, the results would show reductions in extreme conflicts and crashes of more than 95%. However, given the short time period (limited number of hours), the calculation of these values is subject to very large uncertainty, and they are therefore not reported in Tables 4 and 5.

Table 4. Results of the Proposed QASCS Algorithm Compared with the ASC for the First Intersection

Table 5. Results of the Proposed QASCS Algorithm Compared with the ASC for the Second Intersection

In addition to the safety impact of the proposed algorithm, the algorithm’s effect on mobility was also evaluated. Even though the delay/travel time was not the primary objective function, the proposed algorithm improved mobility and reduced the total travel time at both intersections. The results indicated that the total travel time per vehicle was decreased by an average of 16% after applying QASCS using the ROC as an objective function for the two intersections. A reduction of 7% in the total travel time per vehicle was observed for the two intersections after using the RLC as a reward. Other performance metrics were also improved, including the queue length and the number of stops. Specifically, the maximum queue length, the 95th percentile of queue length, and the number of stops were reduced by 14%, 39%, and 32% using ROC as a reward, and by 16%, 40%, and 10% using RLC as a reward, respectively.

Thus, the proposed algorithm improves both safety and operational performance. In other words, the algorithm optimizes safety (i.e., minimizes ROC and RLC) without deteriorating mobility. Traffic delays are undoubtedly an essential issue, as congestion occurs more frequently and leads to significant economic and environmental costs. Traffic safety is likewise a fundamental issue because of the high collision frequencies and severities at signalized intersections and their enormous associated social and economic costs. Therefore, both traffic safety and mobility are fundamental optimization objectives. As previous research has focused on optimizing delays (i.e., mobility) (

Effect of CV MPRs

The prevalence of CV technology is expected to increase gradually over the coming years. Before the full deployment of this technology, CVs will constitute only a percentage of the total number of vehicles. Therefore, the proposed algorithm should be validated under various market penetration rates (MPRs) of CVs. The performance of the proposed algorithm was evaluated and compared using various MPRs of CVs at both intersections. The investigated MPRs range from 10% to 100%. Various MPRs of CVs were represented in the VISSIM model by creating a new vehicle class called “connected vehicle” and varying the traffic composition percentages at each traffic input point. When implementing the algorithm with a specific MPR value, instantaneous vehicle information was captured only from vehicles of the “connected vehicle” class. The arrival-queue factor of each approach was estimated from CV data. To determine the real-time state for the algorithm, the estimated arrival-queue factor was multiplied by a correction factor (i.e., magnification factor) to represent all vehicle classes (CVs and conventional vehicles). This factor equals the reciprocal of the MPR value. The exact MPR value is estimated in real time, given the number of CVs from the V2I communications and the total traffic counts from the counting detectors upstream of each approach of the intersection.
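The correction for partial market penetration described above can be sketched in a few lines. The reciprocal-of-MPR magnification and the estimation of the MPR from CV counts and detector counts follow the text; the function names and the handling of empty counts are assumptions for illustration.

```python
def estimate_mpr(cv_count, total_count):
    """Estimate the market penetration rate from V2I and detector counts.

    Returns None when no vehicles were counted (ASSUMPTION: the caller
    then falls back to some default behavior).
    """
    if total_count <= 0:
        return None
    return min(cv_count / total_count, 1.0)


def scaled_arrival_queue_factor(cv_factor, mpr):
    """Magnify the CV-only arrival-queue factor by the reciprocal of the MPR."""
    if mpr is None or mpr <= 0.0:
        raise ValueError("MPR must be positive to scale the factor")
    return cv_factor / mpr


if __name__ == "__main__":
    mpr = estimate_mpr(cv_count=5, total_count=20)      # 25% penetration
    print(mpr, scaled_arrival_queue_factor(1.5, mpr))
```

At low MPRs the magnification factor becomes large, so any sampling noise in the CV-only factor is amplified as well, which is one way to read the reduced effectiveness reported at low penetration rates.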

The results showed that the proposed QASCS algorithm can lead to considerable safety improvements even under lower MPRs of CVs. For example, compared with the benchmark ASC, a reduction of 43% in the weighted average RLC was achieved when the QASCS was applied with an MPR of 50%. Generally, the higher the MPR value, the greater the safety effectiveness of the algorithm. It should also be noted that MPR values of less than 20% may not lead to significant safety benefits, as the algorithm cannot define the environment state with reasonable accuracy because of the lack of real-time information on vehicle positions and speeds.

Summary and Conclusions

This study introduces an ATSC algorithm (i.e., QASCS) to optimize traffic safety in real time by directly minimizing crash risk. Reward representation and safety evaluation of the algorithm were based on real-time crash prediction models for signalized intersections developed in a recent study (

The TD RL method (specifically, the off-policy Q-learning method) was used in this study. The environment was simulated using a VISSIM model. The state of the environment was represented by the position and the speed of each vehicle approaching the intersection within the DSRC range (i.e., 225 m). The fixed phasing sequence was adopted for action definition. This includes two actions: extending the green time of the phase in effect or switching to the next phase. Moreover, two real-time crash-risk measures, ROC and RLC, were employed separately to define the reward function as a penalty. Constraints such as the yellow, minimum green, maximum green, and all-red times were considered to ensure the feasibility of implementing the proposed technique in the real world.

The algorithm was trained using a real-world intersection modeled and simulated by VISSIM to learn the optimal policy. Traffic volumes at each intersection were randomized to run the simulation model for 500 iterations for both reward functions (i.e., ROC, RLC). Each iteration was divided into a 1,000-s warming-up period, a 500-s cooling-down period, and a 3,600-s training period. It was observed that after 400 iterations, the proposed algorithm converged to the optimal policy.

Validation of the trained algorithm was investigated using two separate signalized intersections in the city of Surrey, British Columbia, Canada. Additionally, the safety performance of the proposed QASCS algorithm and the field fully actuated traffic signal controller was compared. Important safety performance measures were evaluated for the two algorithms, including the number of cycles with ROC, and the weighted average RLC. Generally, the validation results showed that the proposed QASCS algorithm reduced ROC at the two signalized intersections significantly compared with the existing ASC. A drop of 82% and 87% in the number of cycles with ROC was obtained after implementing the QASCS algorithm at the first intersection using ROC and RLC as reward functions, respectively. For the second intersection, the number of cycles was reduced from 139 cycles to 22 and 29 for ROC and RLC as reward functions, respectively. The findings also illustrated that the weighted average RLC was reduced by 48% using ROC and 41% using RLC for the first intersection, whereas it was reduced by 46% for both reward functions at the second intersection.

Furthermore, the algorithm’s effect on mobility was evaluated at the two intersections. Despite the delay/travel time not being the primary objective function, the proposed algorithm improved mobility and reduced the total travel time at both intersections. The results indicated that the total travel time per vehicle was decreased by an average of 16% after applying QASCS using the ROC as an objective function for the two intersections. When using the RLC as a reward, a reduction of 7% in the total travel time per vehicle was observed for the two intersections. This reduction cannot be considered the optimal outcome for mobility improvement, as the primary objective of the proposed QASCS algorithm is optimizing traffic safety by reducing the ROC.

Additionally, the performance of the proposed algorithm was investigated under various MPRs of CVs. Results indicated that reasonable safety improvements can be realized at MPR values lower than 100%. Approximately 43% reduction in the weighted average RLC was obtained at MPR of 50%, compared with the existing ASC system.

Several areas of future research can improve the effectiveness of the proposed QASCS algorithm and address the study limitations. First, this study used only two intersections for validation. Future studies may consider a larger number of intersections to investigate the safety and mobility performance of the proposed algorithm. Second, future research may consider replacing the discrete Q-table that defines the possible states with a continuous state space, using a deep neural network to describe the infinite possible states of the environment. Third, investigating the sensitivity of the results to the assumed parameters, such as the discount factor, the update time interval, and the V2I DSRC domain, is recommended. Fourth, the improvement in safety performance in this research was achieved by combining CV technology and the RL technique. The separate effect of each deserves future investigation. Fifth, it is suggested to test the algorithm’s performance in other jurisdictions (e.g., developing countries) with different traffic conditions and driving cultures, as well as to compare the algorithm’s performance with other ATSC algorithms. Most importantly, safety and mobility can be considered in the algorithm as two primary objectives for a multi-objective real-time traffic signal optimization.

Footnotes

Author Contributions

The authors confirm contribution to the paper as follows: study conception and design: P. Reyad, T. Sayed; data collection: P. Reyad, M. Essa; analysis and interpretation of results: P. Reyad, M. Essa, L. Zheng; draft manuscript preparation: P. Reyad, T. Sayed. All authors reviewed the results and approved the final version of the manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.